9

The Economy and the Information Age

We live in an era of astounding technological transformation in which change, not stability, has become the norm. All around us are now-familiar technologies whose present state of development—or very existence—would have seemed extraordinary just a generation ago. From wireless telephones and handheld GPS units to video games, DVD, and digital television; to genetic engineering, decoding of the human genome, and combinatorial drug design; to MRIs, CT scans, laser eye surgery, and robotic hip replacement surgery; to polymeric materials for inline skates, tennis rackets, and skis; to superalloys for jet engine turbine blades; to lightweight, fuel-efficient automobiles and aircraft; to computers, the Internet, and the World Wide Web—technology is everywhere and touching all of us in ever more pervasive ways (see sidebar “The World Wide Web”).

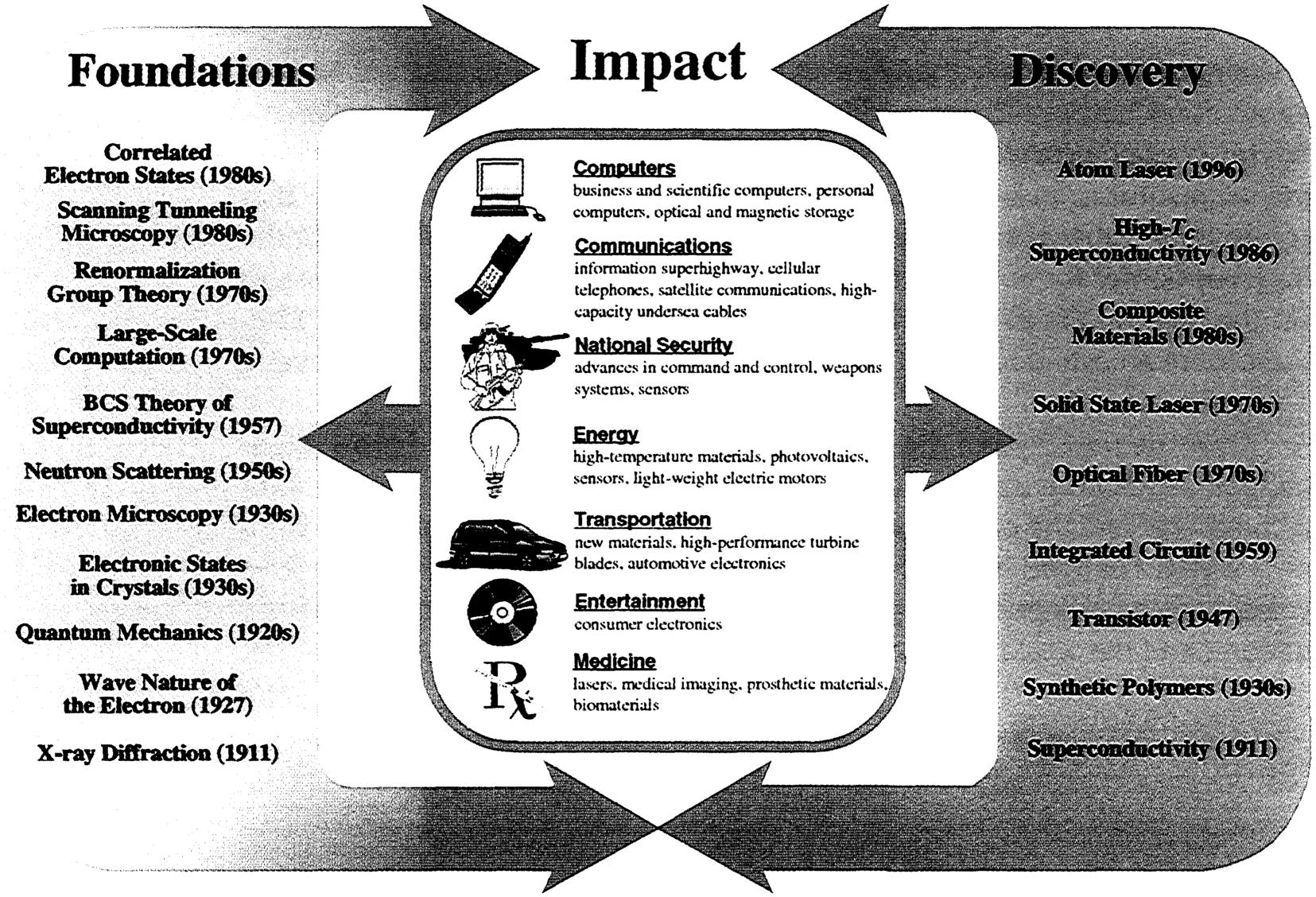

All of this technology does not just happen. It arises from innovation based on decades of research in the underlying basic science, of which physics is a major component in essentially every case. Medicine, entertainment, transportation, energy, national security, communications, computers—the unprecedented advances in all of these areas are built on solid technical foundations resulting from a half-century and more of continuous investment in basic science. It is the investment in basic science that we are making today that will fuel the technological innovations of tomorrow (see Figure 9.1).

At the heart of this age of change is information technology. We are in the midst of an information revolution that is every bit as profound as the two great technological revolutions of the past—the agricultural and industrial revolutions. Information technology sector revenues are estimated to account for 5 to 15 percent of the U.S. GDP, and 40 percent of U.S. industry capital spending today is for information technology.

Scientific understanding of fundamental phenomena has been key to the development of materials for the information age, the carriers and con-

Page 132

THE WORLD WIDE WEB

By now most Americans are well aware of the World Wide Web, and for many it has become an integral part of their business and recreational lives. It is creating new business processes and models, from book sales to airline tickets to banking to vacation planning to stock trades to home and automobile purchases. Few of its users, however, are aware of how it came to be. The Web was born in a high-energy physics laboratory in Switzerland. It came about as a solution to CERN's communications and documentation problems, characteristic of large, complex experiments involving collaborators from around the world. Three key technologies—computer networking, document/information management, and software user interface design—were brought to bear on these problems, and the outcome became the Web. The Internet, initiated by DARPA in the late 1960s, was well entrenched at CERN by the late 1980s. At that time it was expanding explosively worldwide, due in large part to the wide acceptance of e-mail as an effective means of communication. The Internet became the medium in which the Web was created. In the fall of 1990 the proposal for the World Wide Web, including its name, was advanced and acted favorably upon at CERN. Its eight key goals, defining features of the Web, were as follows:

In a little over a year, a complete version of this proposal had become a reality and announced to the high-energy physics community. In the process, HTTP (Hypertext Transfer Protocol), which allows the client and server to communicate, was invented, along with HTML (Hypertext Markup Language, based on SGML), which allows content to be displayed on a client. The initial acceptance of the Web by the high-energy physics community was far from |

Page 133

|

unanimous. It was finally the browser interface to the SPIRES (Stanford Public Information Retrieval System) databases at the Stanford Linear Accelerator Center, containing a wide range of information on high-energy physics experiments, institutes, publications, and particle data, that sold the Web to that community. Subsequently, the Mosaic browser developed in early 1993 at the National Center for Supercomputing Applications at the University of Illinois set the Web on the path to the broad role it plays today. By the end of 1995 there were an estimated 50,000 Web servers worldwide, up by 20-fold over a 1-year period. Over the past 5 years the World Wide Web has continued to grow exponentially. Today there are hundreds of millions of Web servers worldwide, with e-commerce and the proliferation of dot-coms emerging throughout the business world. The Web has had a truly astonishing trajectory over the 10 short years from its inception in a physics laboratory to the front burner of our nation's and the world's economy.

~ enlarge ~ |

Page 134

~ enlarge ~

FIGURE 9.1 The incorporation of scientific advances into new products can take decades and often follows unpredictable paths. The physics discoveries shown in this figure have enabled breakthrough technologies in virtually every sector of the national economy. The most recent fundamental advances leading to new foundations and discoveries have yet to realize their potential.

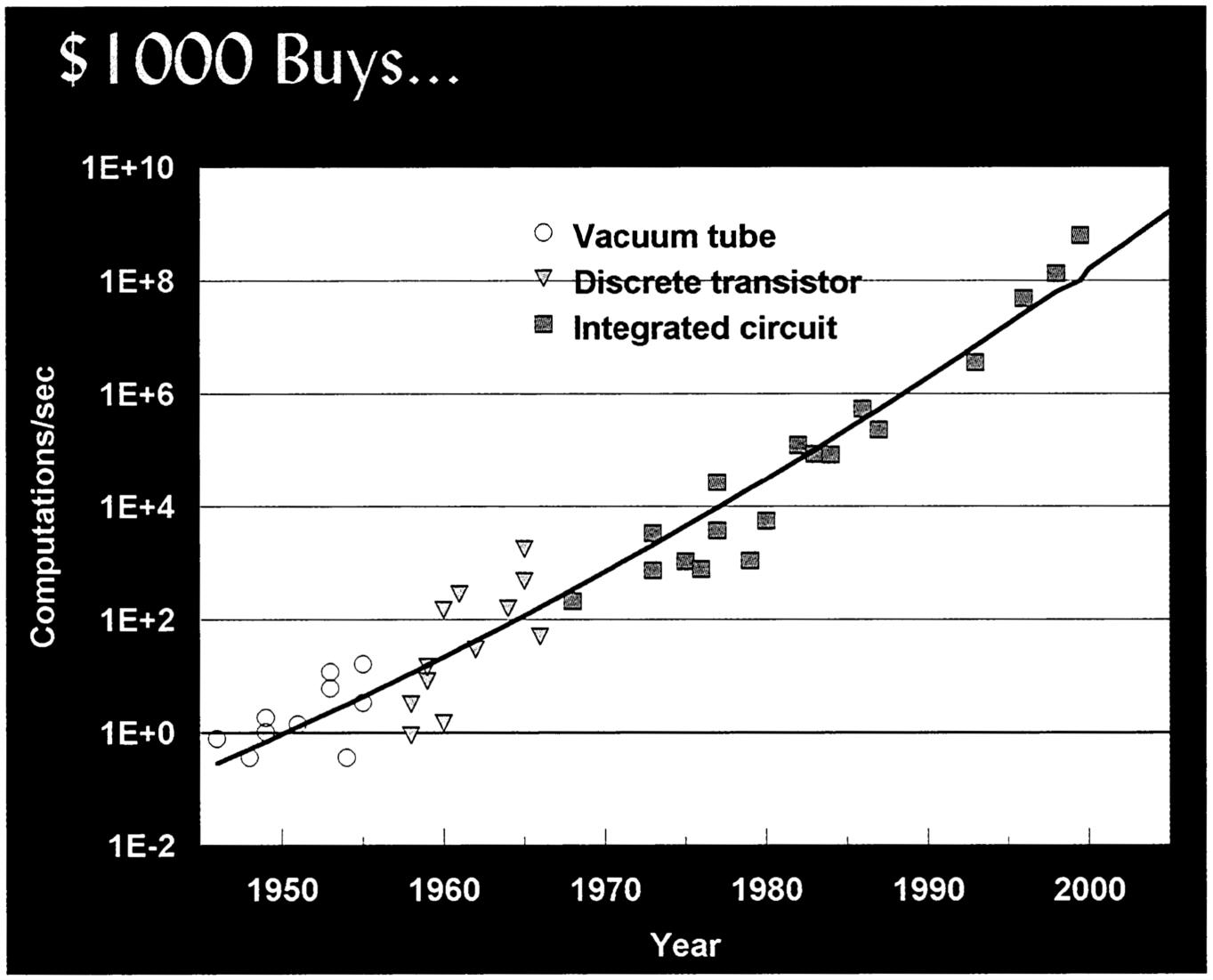

trollers of electrical current, light waves, and magnetic fields. Equally important is a scientific understanding of the processes, because it is such understanding that enables the lower-cost manufacture of devices and systems based on materials used by the rapidly changing electronics and telecommunications industries. This last point cannot be overemphasized. Without the continual decrease in the cost of processing, communicating, and storing bits of information, the information age would never have happened, irrespective of the sophistication and elegance of the technology. Figure 9.2 shows how much computational capability $1000 was able to buy over the past half century, demonstrating faster-than-exponential growth of approximately nine orders of magnitude during that time. Through basic research in physics, the technology itself has changed completely several

Page 135

times during this same 50 years, with the integrated circuit having dominated since the mid-1970s.

INTEGRATED CIRCUITS

Semiconductors have grown into a global industry with year 2000 revenues of about $200 billion, supported by a materials and equipment infrastructure of about $60 billion. Semiconductor technology is also the heart of the $1 trillion global electronics industry and is vital in many other areas of the approximately $33 trillion global economy.

The predominant semiconductor technology today is the silicon-based integrated circuit. The key foundations of the modern silicon semiconductor industry are the discovery of the electron in 1897, the concept of the field-effect transistor (FET) in 1926, the first demonstration of the metal oxide semiconductor FET in 1959, the development of dynamic random access memory (DRAM) in 1967, and the first microprocessor in 1971. In 2000, the Nobel Prize in physics was awarded in part for basic and applied research leading to the integrated circuit.

FIGURE 9.2 Computational power that $1000 has bought over the last 50 years.

SOURCE: Hans Moravec. 1998. “When Will Computer Hardware Match the Human Brain?” Journal of Transhumanism, Vol. 1.

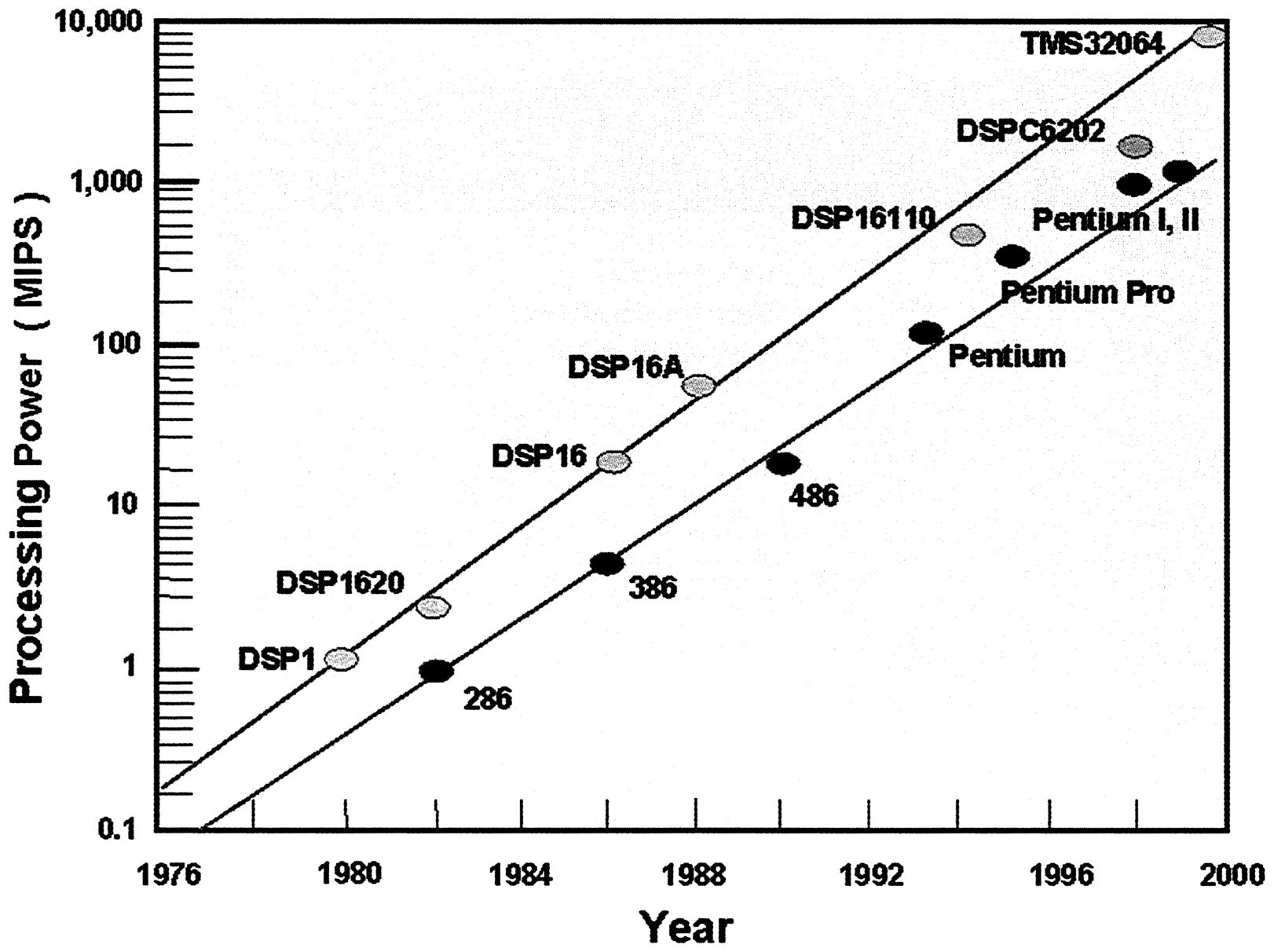

FIGURE 9.3 Computational power versus time for digital signal processors (DSPs, green) and microprocessors (red). Courtesy of Intel and Texas Instruments.

For the past 30 years, semiconductor technology has been described by Moore's law, an empirical observation that the density of transistors on a silicon integrated circuit doubles about every 18 months. Today's computing and communications capability would not have been possible without the phenomenal exponential growth in capability per unit cost since the introduction of the integrated circuit in about 1960. That sustained rate of progress has resulted in high-density DRAMs with 64 million bits on a chip and complex, high-performance logic chips with more than 9 million transistors on a chip. Figure 9.3 illustrates the exponential growth in microprocessor computing power over the past 25 years. The availability of enormous computational power at low cost is having a dramatic impact on our economy. In addition, it feeds back directly into the scientific enterprise and is stimulating a third branch of research in physics, computational physics, which is rapidly taking its place alongside experiment and theory.
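To make the 18-month doubling rule concrete, the following is a minimal sketch of how such a rule compounds over time. The function name `growth_factor` and the idealization of a perfectly steady doubling period are assumptions made for illustration; real transistor densities have not followed so smooth a curve.

```python
def growth_factor(years: float, doubling_months: float = 18.0) -> float:
    """Multiplicative growth after `years` for a quantity that doubles
    every `doubling_months` months (idealized, perfectly steady doubling)."""
    doublings = years * 12.0 / doubling_months
    return 2.0 ** doublings


if __name__ == "__main__":
    # Compare a few time spans under the 18-month rule quoted in the text.
    for years in (10, 20, 30):
        print(f"{years} years -> growth by a factor of {growth_factor(years):,.0f}")
```

Under this idealization, 30 years corresponds to 20 doublings, or roughly a million-fold increase in density.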

Moore's law, first articulated by Gordon Moore of Intel Corporation, is not a physical principle. It is, instead, a statement that industry will perform the R&D necessary and supply the required capital investment at the rate required to achieve this exponential growth rate. While this has certainly been the case so far, there is considerable debate over how the future will play out. Exponential growth cannot continue forever, and at some point, for either technical or economic reasons, the growth will slow. Many daunting scientific and engineering problems must be overcome for industry to continue at the Moore's law rate of progress for the next 15 or even 10 years. For instance, the number of wires needed to connect the transistors grows as a power of the number of transistors. As transistor dimensions are shrunk, integrated circuit manufacturers pack an ever-increasing number of devices into their chips. The complexity of wiring the transistors in these chips may eventually reach the limits of known materials. The cost of manufacturing increasingly layered and complex wiring structures may limit the performance of these systems. Even if solutions to the interconnect problem can be identified, continued scaling of silicon technology will ultimately encounter fundamental limits. For example, metal-oxide semiconductor transistors can be built today with gate lengths of 30 nm (about 150 atoms long) that display high-quality device characteristics. Manufacturing complex circuits that rely on devices with features of this size will require several hundred processing steps with atomic-level control. Moreover, the performance of complex integrated circuits with tens of millions of transistors may be degraded because of nonuniform operating characteristics. In time, continued decreases in device dimensions may result in the information being carried by an ever-decreasing number of charge carriers; ultimately, simple statistical fluctuations will limit the uniformity of device characteristics as the number of charges used to convey information decreases.
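The statistical-fluctuation argument can be illustrated with a short sketch. It assumes, purely for illustration, that the number of charge carriers follows Poisson statistics, so that a signal carried by N charges has a relative spread of about 1/sqrt(N); the function name and the sample values of N are hypothetical and are not taken from the text.

```python
import math


def relative_fluctuation(n_carriers: float) -> float:
    """Relative spread sqrt(N)/N = 1/sqrt(N) of a carrier count,
    assuming Poisson-distributed counts (an illustrative assumption)."""
    if n_carriers <= 0:
        raise ValueError("n_carriers must be positive")
    return 1.0 / math.sqrt(n_carriers)


if __name__ == "__main__":
    # Fewer carriers per bit means proportionally larger device-to-device variation.
    for n in (1_000_000, 10_000, 100, 10):
        print(f"N = {n:>9,}: relative fluctuation ~ {relative_fluctuation(n):.1%}")
```

Under this assumption the variation grows from a fraction of a percent at a million carriers to tens of percent at around ten carriers, which is the sense in which fluctuations eventually limit uniformity.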

To delay the onset of these limits as long as possible, research is under way on new materials with a high dielectric constant appropriate for both memory applications and for limiting the leakage of current in tightly packed integrated circuits. As the understanding of synthesis and processing increases, ferroelectric materials are being introduced for nonvolatile memory applications. Even with these advances, as feature sizes continue to decrease, integrated circuits based on field-effect transistors will eventually encounter fundamental limits such as interconnect delays caused by the ever-increasing number of interconnects, heat generation, or the quantum limits of transistors too small to confine the electrons in the conduction channels. Ultimately, devices that rely on the manipulation of single electrons may play an important role. Today's approach to the design and manufacture of integrated circuits, which already requires processing and control at near single-atom levels, will no longer be extensible to smaller feature sizes and higher densities.

OPTICAL-FIBER COMMUNICATION

If silicon integrated circuits are the engine that powers the computing and communications revolution, optical fibers are the highways for the information age. Roads and highways changed in the last century to accommodate the explosion of cars and trucks. Multiply that by a million and you'll get an idea of the scope and the pace of change of the communications revolution. Today's communications networking industry is one of the most dynamic and rapidly changing industries in the world. It is expected that nearly $1 trillion will be spent in the next 3 years on building the next generation of Internet networks. It took nearly a century to install the world's first 700 million phone lines; 700 million more will be installed in the next 15 years. There are more than 200 million wireless subscribers in the world today; another 700 million will be added in the next 15 years. There are more than 200 million cable TV subscribers in the world today; 300 million more will be added in the next 15 years. All of this would be impossible using the copper wires that were the mainstay of the telecommunications industry only 20 years ago.

Optical-fiber communication is based on a number of developments in basic physics and materials science. They include the purification and processing of tiny pipes of glass, called optical fibers, that guide light with so little absorption or scattering that it can travel for hundreds of kilometers; the invention of the laser and its realization in tiny, efficient semiconductor chips, which gives us a suitable source of the light; and, finally, the invention of the erbium-doped fiber amplifier, which allows efficient amplification of the optical signals.

An erbium fiber amplifier is an optical fiber that has a small amount of exotic material (the element erbium) mixed in with the glass of the fiber. When irradiated by light from very recently invented solid-state lasers, erbium atoms act as amplifiers for the light signals passing through the fiber, boosting the strength of all channels at once to compensate for any losses. This replaces complex, expensive, and relatively unreliable repeater stations, which must separately amplify each channel by absorbing the light from a single channel on a detector, processing the signal, then feeding it to another laser that emits light to send the signal on its way. Since such stations are difficult and expensive to replace (they are often at the bottom of the ocean), the advantages of a simple, highly reliable system of amplification are obvious. These amplifiers would never have been possible without basic research to understand what colors of light are absorbed and emitted by different atoms, how the absorption and emission of light for specific atoms are affected by the insertion of the atoms into the glass host, and how the atoms can be excited by one color of light to act as an amplifier for another.

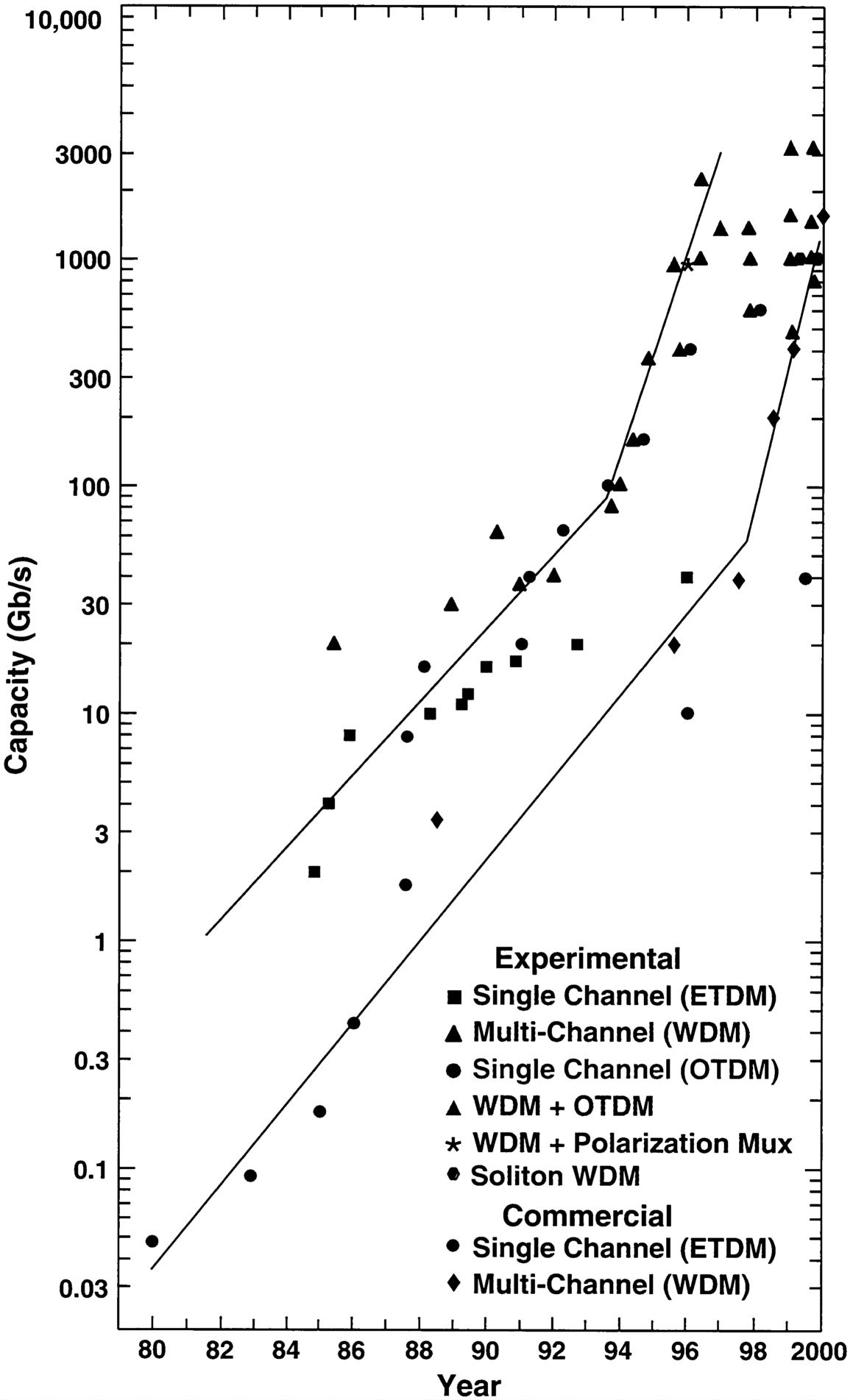

Fiber amplifiers were first used because of their simplicity and reliability. They have also resulted in a major improvement in the amount of information that can be transmitted down a single fiber. This is evident in the sudden change in the slope of the curve for the information capacity of a single fiber in Figure 9.4 (in 1993 and 1997 for experimental and commercial fibers, respectively). Because fiber amplifiers amplify the light without changing it, they amplify different colors of light at the same time. Multiple colors of light can now be sent simultaneously down the same fiber, each carrying its own information, much like the multiple channels of radio and TV signals. This so-called wavelength division multiplexing (WDM) is being used to multiply the effectiveness of currently installed fiber many times over. A few years ago, optical physicists discovered that it was possible to change slightly the properties of glass under particular conditions by irradiating it with ultraviolet light. This has made possible the fiber gratings that are now used to combine and separate the different colors of light that make up the different WDM channels. Also, basic research on atoms and molecules that involved precisely measuring the color of light that they absorb is now used to stabilize the color of light used for each WDM channel, allowing even closer spacing of channels that do not interact, called dense WDM (DWDM).

In part because of the faster than exponential growth of connections to the Internet, optical fiber is being installed worldwide at the rate of more than 20 million km per year, or more than 2000 km every hour. Optical telecommunication was introduced into the market in 1980; today, not only is optical fiber the medium of choice for long-distance voice and data communications, but it is also rapidly growing to be a leading player in the local area network (LAN) market. Optical-fiber manufacturing revenues are predicted to be about $30 billion in 2003.

FIGURE 9.4 Optical-fiber capacity, 1980 to 2000. The development time between the demonstration of a specific experimental system and its commercial deployment has narrowed from about 5 years to just a few months over the last two decades. Courtesy of Bell Laboratories, Lucent Technologies.

The rate of information transmission down a single fiber is increasing exponentially. Transmission at 3 terabits per second (Tbps) has been demonstrated in the research laboratory, and the time lag between laboratory demonstration and commercial system deployment has shortened to several months, as shown in Figure 9.4. What cannot be as easily discerned from the figure is the fact that the analogue of Moore's law for fiber transmission capacity, which serves as a technology roadmap for lightwave systems, is a doubling every 9 months (twice as fast as the rate for transistor density on a silicon chip).

The first undersea optical cable, installed in 1988, had a capacity of about 8000 voice channels per cable at a cost of about $400 per channel. More than 300,000 km of undersea lightwave cable had been installed by the end of 1996, when it cost less than $30 per year per voice channel and had 120,000 voice channels per cable (5 Gbps per fiber). The first large terrestrial lightwave system installed in the United States linked Washington, D.C., and New York City with a capacity of 90 Mbps per fiber in 1983, and a similar system linked New York and Boston in 1984. More than 230 million km of fiber had been installed worldwide, about two-thirds of it in the United States, by the third quarter of 2000. The latest systems incorporate WDM, dispersion-shifted fiber, and optical amplifiers. Currently in deployment are 400-Gbps-per-fiber systems using 40 channels with 10 Gbps per channel. In the next year or two, 3- to 6-Tbps-per-fiber systems will be introduced into the market, with 40 Gbps per channel. The ultimate information theory limit to the amount of data that can be transmitted over a single fiber is estimated to be on the order of 20 Tbps and is currently a subject of intense study by physicists.
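The per-fiber figures quoted above follow from simple WDM arithmetic: total capacity is the number of wavelength channels times the rate per channel. The sketch below restates that arithmetic; the function names are hypothetical, and it ignores practical overheads such as guard bands and error-correction coding.

```python
import math


def fiber_capacity_gbps(channels: int, gbps_per_channel: float) -> float:
    """Aggregate capacity of one fiber carrying `channels` WDM wavelengths."""
    return channels * gbps_per_channel


def channels_needed(target_gbps: float, gbps_per_channel: float) -> int:
    """Smallest number of WDM channels that reaches `target_gbps`."""
    return math.ceil(target_gbps / gbps_per_channel)


if __name__ == "__main__":
    # Systems described in the text as currently in deployment.
    print("40 channels x 10 Gbps =", fiber_capacity_gbps(40, 10), "Gbps")
    # Channel counts implied by the 3 to 6 Tbps systems at 40 Gbps per channel.
    for target_tbps in (3, 6):
        n = channels_needed(target_tbps * 1000, 40)
        print(f"{target_tbps} Tbps at 40 Gbps per channel needs {n} channels")
```

On these numbers, the 3- to 6-Tbps systems would need on the order of 75 to 150 wavelength channels per fiber, which is why closely spaced DWDM channels matter.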

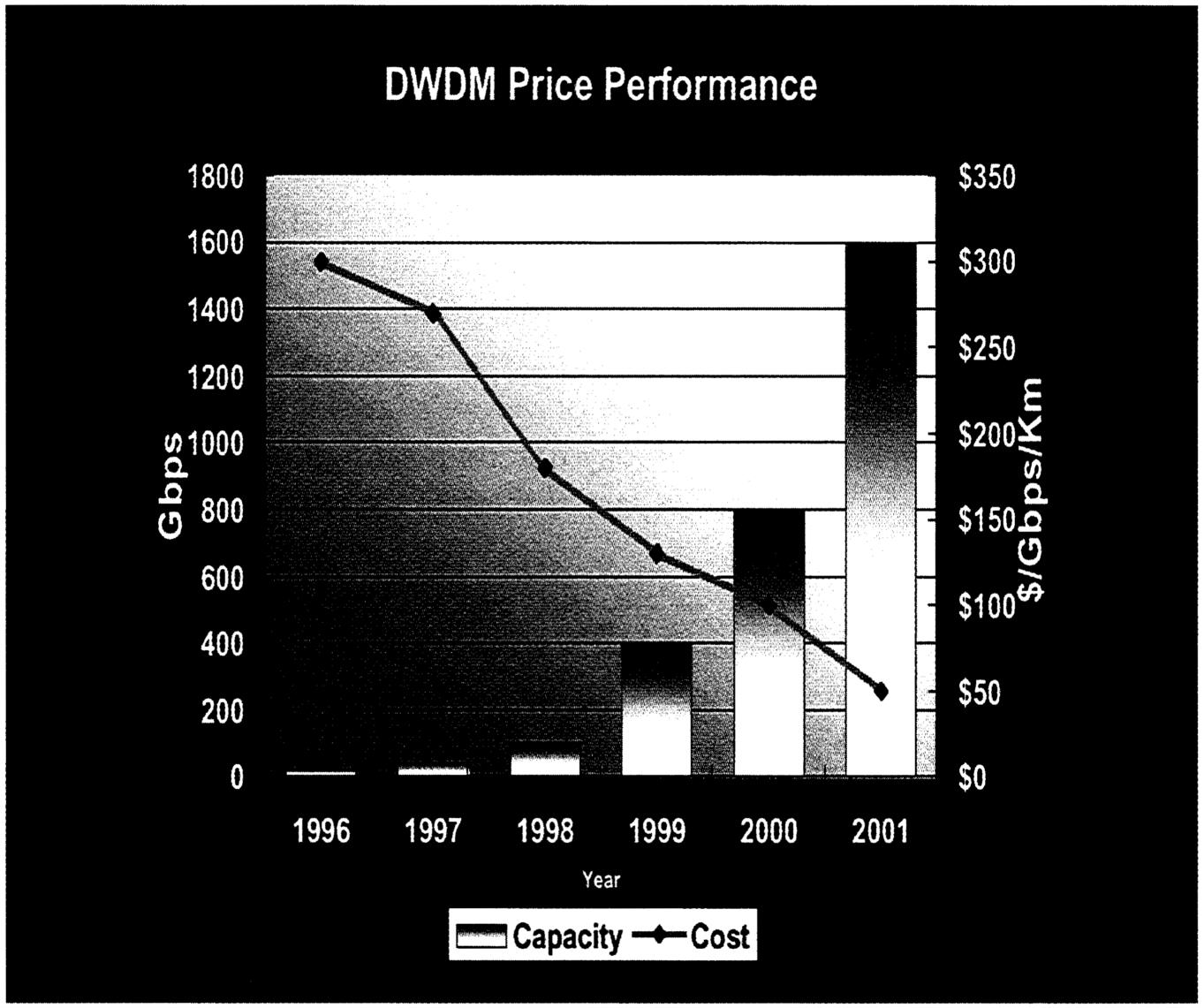

As with silicon technology, it is the dramatic reduction in cost that has driven fiber technology development so rapidly. Figure 9.5 shows the tenfold decrease over the last 5 years in the cost of transmitting data over 1 km of fiber, part of a cost reduction that has continued for two decades. This sustained reduction in the cost of communication has been a key driver of the information revolution in general and is specifically what is fueling the growth of the World Wide Web (see also the sidebar “MEMS for Optical Switching and High-Density Storage”).

The photons that transport information along optical information highways are provided by compound semiconductor diode lasers. Such lasers

Page 142

~ enlarge ~

FIGURE 9.5 Total capacity per fiber is increasing exponentially with time. Over the last 5 years the cost of transmitting a gigabit-per-second signal for 1 km of fiber has decreased by a factor of 10. Courtesy of Bell Laboratories, Lucent Technologies.

are also at the heart of optical storage and compact disc technology. Because compound semiconductors have two or more different atomic constituents, they can be tailored by selecting materials that have the desired optical and electronic properties. Exploiting decades of basic research in materials such as gallium arsenide and indium phosphide, we are now beginning to be able to understand and control all aspects of compound semiconductor structures, from mechanical through electronic to optical, and to grow devices and structures with atomic layer control. This capability allows the manufacture of high-performance, high-reliability lasers to send information over the fiber-optic networks. High-speed, compound-semiconductor-based detectors receive and decode this information. These same materials provide the billions of light-emitting diodes sold annually for lighting displays, free-space or short-range, high-speed communication, and

Page 143

MEMS FOR OPTICAL SWITCHING AND HIGH-DENSITY STORAGE

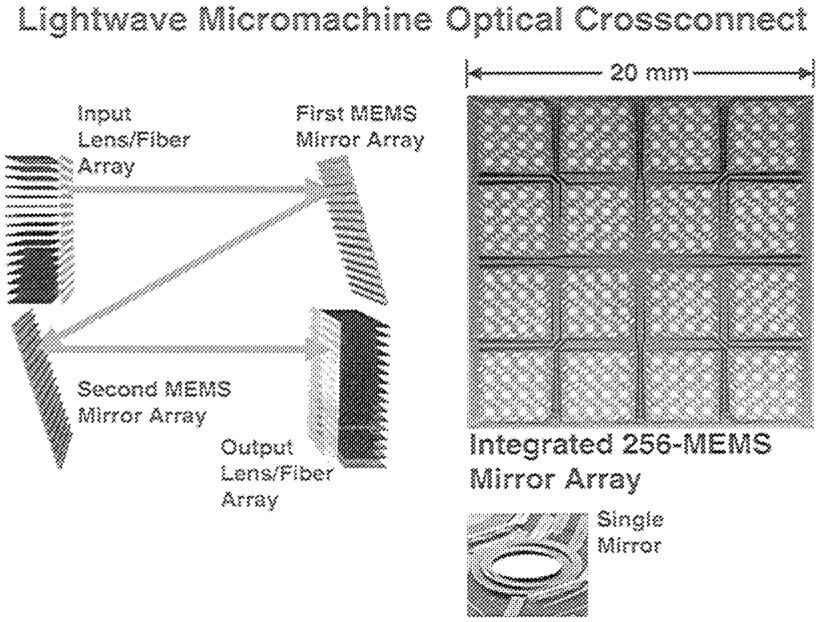

With the advent of microelectromechanical systems (MEMS) micromirror technology for routing light signals, we are entering into the era of all-optical networks. MEMS micromirror arrays such as the one depicted below at the left enable an optical cross-connect configuration setup to switch any light signal in any format at any bit rate coming into any of a thousand input fibers into any of a thousand different output fibers without the costly conversion of optical to electrical and back to optical signals that exists in today's networks. Each tiny gold-coated silicon mirror in the figure is about a millimeter in diameter. Mirrors can rotate along two axes on tiny gimbal mounts, bouncing a light beam that hits the mirror face in any direction. Optical switches, along with optical amplifiers, all-optical repeaters, and wavelength converters, will create the all-optical networks of the future, which will be both more flexible and less expensive for the consumer. These technologies are all expected to be emerging in the near future from the research laboratory after decades of fundamental research in materials science.

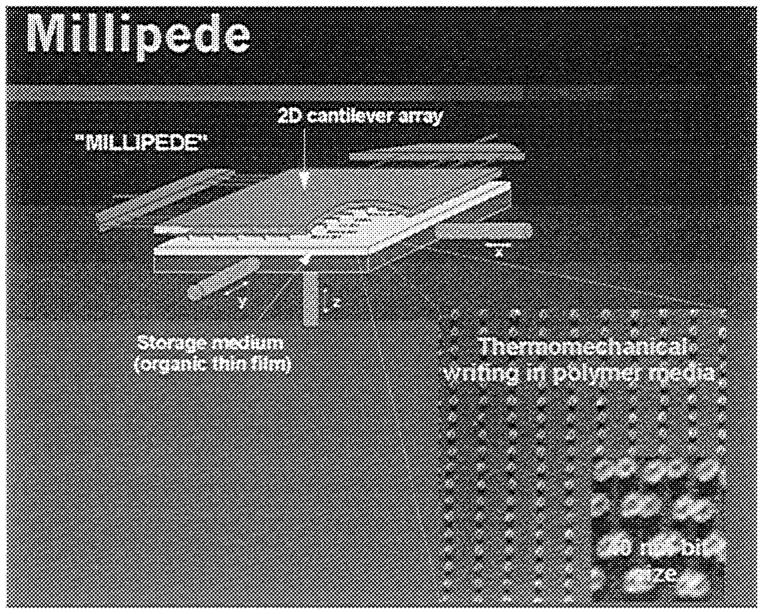

~ enlarge ~ The areal density limit for magnetic storage, as currently practiced, is believed to be about 100 Gbit/in.2, set by thermal stability limitations in the media. To continue the rapid growth in areal density beyond this limit, a number of different approaches are being considered. One approach is based on atomic force microscopy (AFM). The basic idea is to store information in the form of physical structure on a surface, which is read out as ones and zeros with an AFM. In the example below at the right, the structure takes the form of dimples that are thermomechanically written in a film with the same AFM that does the reading. A drawback of this approach is that the read/write bandwidth of a single AFM is low owing to its relatively slow scan rate. To overcome this limitation, an array of 103 or even 106 AFMs, all integrated on a single MEMS chip, is being investigated. The chip is scanned as an array of tiny phonograph needles over the media, with all the AFMs operating in parallel to read out the data. This approach holds promise of achieving storage densities of several terabits per square inch or more with wide bandwidth read/write capability.

~ enlarge ~ |

Page 144

numerous other applications. In addition, very-high-speed, low-power compound semiconductor electronics in materials such as gallium arsenide and—recently—silicon, germanium, and gallium nitride plays a major role in wireless communication, especially for portable units and satellite systems. The compound semiconductor industry has approximately $1 billion in revenues today and is growing at 40 percent per year.

INFORMATION STORAGE

The third key enabler of the information revolution is low-cost, low-power, high-density information storage that keeps pace with the exponential growth of computing and communication capability. While both magnetic and optical storage are in wide use, computer hard disk drives, which use magnetic storage, constitute the single largest market, about $30 billion per year.

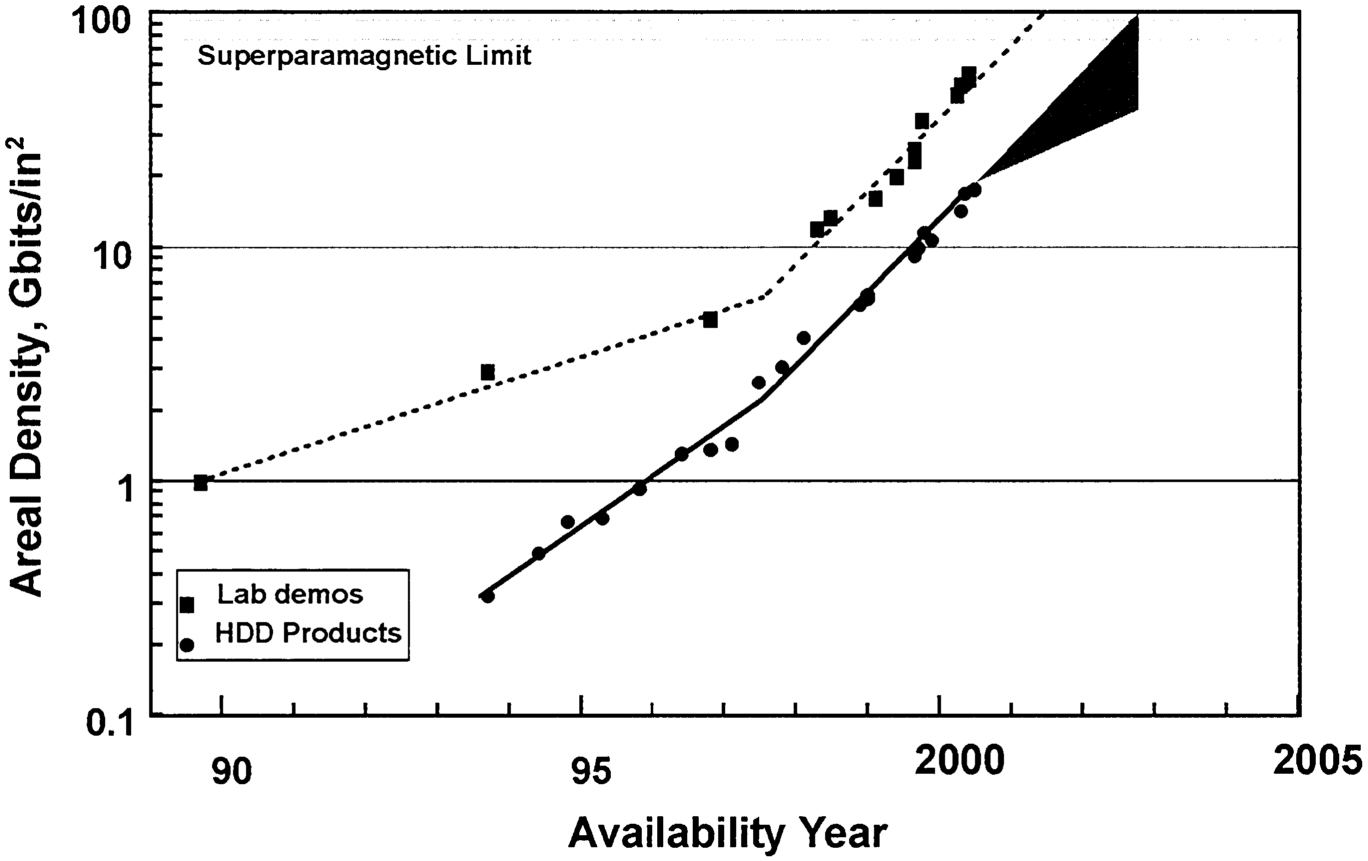

The highest-performance magnetic storage/readout devices have now begun to rely on giant magnetoresistance (GMR), the highly sensitive dependence of the electrical resistivity of certain metallic multilayered structures on magnetic field. Although Lord Kelvin discovered magnetoresistance in 1856, it was not until the early 1990s that commercial products using the technology were introduced. In the past decade, our growing understanding of condensed matter and materials led to important advances in our ability to deposit materials with atomic-level control, enabling production of the GMR heads that were introduced in workstations in late 1997. This increased understanding, made possible by our growing computational ability coupled with atomic-level control of materials, has led to exponential growth in the storage density of magnetic materials. From the mid-1960s through about 1990, the compound growth rate in areal storage density was about 25 percent per year. In about 1990, the rate increased to 60 percent per year, coincident with the introduction of magnetoresistive heads. As shown in Figure 9.6, there has recently been another increase in the rate to 100 percent per year, coincident with the introduction of GMR heads. Also shown in the figure is the reduction in time between initial demonstration and first shipment of the product. This is an unambiguous indication of the urgency and intensity with which information storage is progressing.
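The compound growth rates quoted above can be converted into doubling times, which makes them easier to compare with the doubling rules used elsewhere in this chapter. The sketch below uses the standard compound-growth relation, doubling time = ln 2 / ln(1 + r) for an annual growth rate r; the function name is hypothetical.

```python
import math


def doubling_time_years(annual_growth: float) -> float:
    """Years needed to double at a compound annual growth rate
    `annual_growth`, given as a fraction (0.25 means 25 percent per year)."""
    if annual_growth <= 0:
        raise ValueError("annual_growth must be positive")
    return math.log(2.0) / math.log(1.0 + annual_growth)


if __name__ == "__main__":
    # The three areal-density growth regimes described in the text.
    for label, rate in (("pre-1990", 0.25), ("MR heads", 0.60), ("GMR heads", 1.00)):
        print(f"{label}: {rate:.0%} per year -> doubles every "
              f"{doubling_time_years(rate):.2f} years")
```

The three regimes correspond to doubling roughly every 3.1 years, every 1.5 years, and every year, respectively; the last matches the 12-month doubling in the caption of Figure 9.6.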

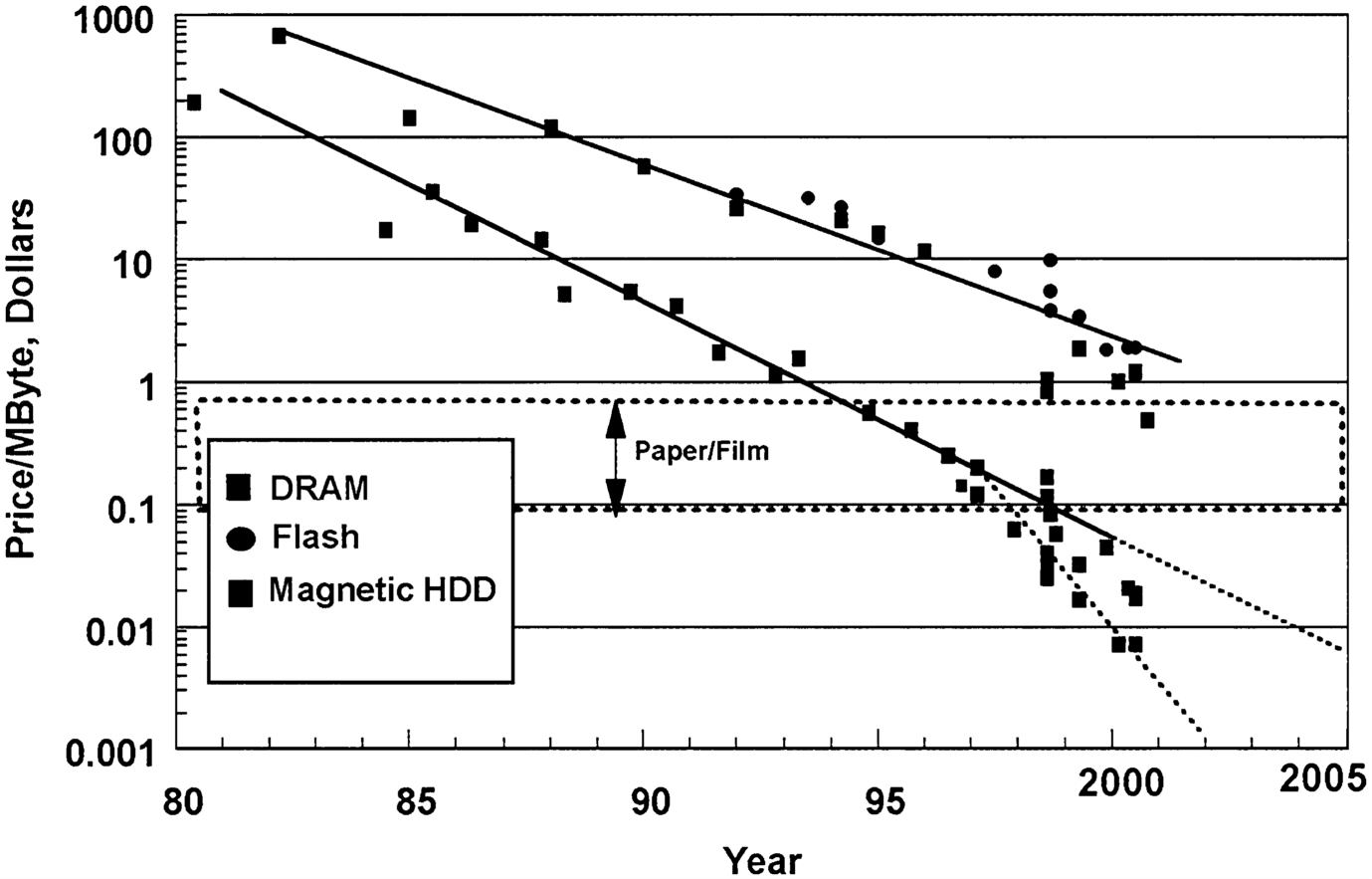

Like the silicon and fiber-optic industries, the storage industry has been driven by remarkable reductions in cost. Figure 9.7 shows the cost of 1 MB of hard disk drive storage capacity over the past 20 years. This cost has gone down by three to four orders of magnitude since 1980. For comparison, the cost of semiconductor memory is also shown.

FIGURE 9.6 Hard disk drive (HDD) areal density is now doubling every 12 months. Courtesy of IBM Research.

FIGURE 9.7 Cost per megabyte of magnetic hard disk drive (HDD) storage, 1980 to 2005 (projected). Semiconductor memory (Flash and DRAM) is shown for comparison. Courtesy of IBM Research.

It is amazing that while magnetic effects drive a $100 billion per year industry, our basic understanding of magnetism, even in a material such as iron, is incomplete. The fundamental limit on the stability of magnetic domains is an important area of basic investigation in magnetism. Materials exhibiting colossal magnetoresistance (CMR), with much more sensitivity to magnetic fields than even GMR, are being actively investigated. The advancing march of magnetic technology makes investigation of these limits inevitable, but these are also some of the most challenging questions for condensed-matter physics and materials science. What is the smallest-size magnetic element that is stable against external perturbations such as temperature fluctuations? Given that quantum mechanics sets bounds on the lifetime of any magnetic state, how do such bounds ultimately establish limits on the size of the smallest possible magnetic entities useful for technological applications?

The applications focus provided by GMR has helped to stimulate and invigorate the search for new magnetic heterostructures and nanostructures and new magnetoresistive materials. Work on magnetic multilayers is stimulating new thinking about novel devices that can be made by integrating magnetic materials with standard semiconductor technology. Spin-polarized tunneling experiments are helping to elucidate novel magnetic properties as well as demonstrate qualities that have considerable potential for use in devices. The first successes in spin-polarized tunneling between two ferromagnets through an insulating tunnel barrier at room temperature occurred only very recently, with resistance changes of greater than 30 percent now demonstrated.

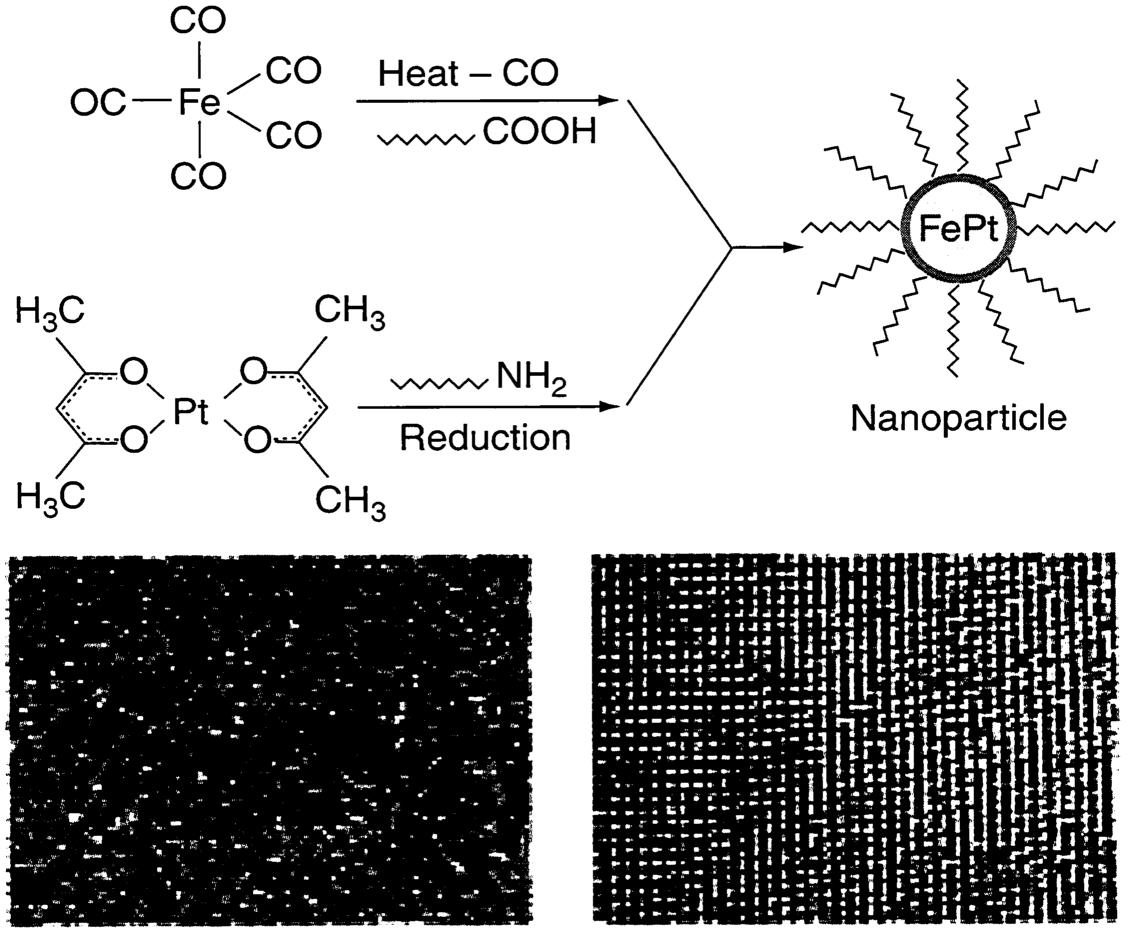

The continued exponential growth of magnetic storage is by no means assured. As with silicon and optical communications technology, technical and economic limits are looming, and fierce competition is driving the technology toward those limits at a frantic rate. In the case of read-head technology, GMR is now being widely exploited, with CMR and magnetic tunnel junctions as possible follow-ons. In the case of magnetic recording media, the maximum areal density is related to thermal stability, which decreases with bit size. Today's products are believed to be less than a factor of 10 away from this limit. However, the science of magnetism at these scales is still far from complete (see sidebar “Nanocrystals: Building with Artificial Atoms”), and there will undoubtedly be many more innovations in both structure and materials.

NANOCRYSTALS: BUILDING WITH ARTIFICIAL ATOMS

Nanoparticles are nanometer-sized fragments of semiconductors, metals, and dielectrics containing 100 to 1 million atoms. They have captured the attention of researchers and the imagination of the public with their fascinating physical properties. A nanoparticle is often referred to as a nanocrystal or a nanocrystallite when the particle core is a single crystal. The observation of discrete properties resulting from confinement effects in these systems has spawned labels like “quantum dots” and “artificial atoms.” Methods of organizing collections of these tailored nanoscale building blocks into new solids that have special optical, electronic, or magnetic properties are being explored. In fact, under the right conditions, these building blocks can assemble themselves (crystallize). When the individual particles are single crystals, the array is often referred to as a nanocrystal superlattice. Their potential value has recently been demonstrated in magnetic recording studies. The lower left panel shows state-of-the-art CoPtCrB magnetic recording media, while the lower right shows a self-assembled FePt nanocrystal superlattice at the same magnification. Each FePt nanocrystal is 4 nm in diameter. Smaller, more uniform grains in the FePt system will enable more detailed studies of the limits of magnetic recording and the production of ultrahigh-density recording media. The schematic shows the basic combination of organoplatinum, iron carbonyl, and surfactant species employed in the production of FePt nanocrystals, which could provide the means for high-density data storage.

SUMMARY

The ability to compute, communicate, and store information is at the heart of the information revolution. Silicon chip technology, fiber-optic communication technology, and magnetic storage technology are currently the key enabling technologies. All three are experiencing exponential growth in capacity and exponential reductions in cost per operation. Research in condensed-matter and materials physics is the foundation on which these technologies are based. Ultimately these growth rates will flatten as physical and/or economic limits are reached. At that point, either information technology will stop growing or—more likely—wholly new technologies will arise from basic research as the new enablers. In storage, for example, various scanning probe techniques have been proposed as follow-ons to hard disk drives, and computer logic gates made of biological entities or even individual molecules are possible. In optical communications, the use of miniaturized silicon technology or microelectromechanical systems to produce tiny arrays of switches will enable new, low-cost optical networks that do not require translation from optical to electronic and back to optical for regeneration.

Whatever the future may hold, clearly it is the role of basic research to provide the innovations to improve today's technologies and to lay the foundations for new technologies. The critical role of basic research in the technologies of our era was highlighted in a recent address by Alan Greenspan, chairman of the Federal Reserve Board:¹

When historians look back at the latter half of the 1990s a decade or two hence, I suspect that they will conclude we are now living through a pivotal period in American economic history. New technologies that evolved from the cumulative innovations of the past half-century have now begun to bring about dramatic changes in the way goods and services are produced and in the way they are distributed to final users. While the process of innovation, of course, is never-ending, the development of the transistor after World War II appears in retrospect to have initiated a special wave of innovative synergies. It brought us the microprocessor, the computer, satellites, and the joining of laser and fiber-optic technologies. By the 1990s, these and a number of lesser but critical innovations had, in turn, fostered an enormous new capacity to capture, analyze, and disseminate information. It is the growing use of information technology throughout the economy that makes the current period unique.

1 Alan Greenspan, “Technology Innovation and Its Economic Impact,” address to the National Technology Forum, St. Louis, Mo., April 7, 2000.