Panel II: A Historical Perspective: Federal Partnerships in Computing and Biotechnology

INTRODUCTION

Patrick Windham

Stanford University

Mr. Windham said that the second panel would try to provide more detail about the historical evolution of, and the present relationship between, government and industry in the biotechnology and computing sectors. In particular, the panel would try to better understand the forces affecting both industries today and consider the forces likely to influence them in the future.

The panel today is very distinguished, consisting of Kenneth Flamm of the University of Texas, who has written extensively on the computing and semiconductor industries; Leon Rosenberg of Princeton University, a distinguished pediatrician who has also served as director of research at Bristol-Myers and as Dean of Yale Medical School; and William Bonvillian, legislative director for Senator Joseph Lieberman (D-Connecticut) and one of the most respected senior staffers on Capitol Hill.

PARTNERSHIPS IN THE COMPUTER INDUSTRY

Kenneth Flamm

University of Texas at Austin

Dr. Flamm said that his remarks would update some of the data he compiled in writing two books in the 1980s on the development of the computer industry in the United States.1 Rather than discuss the history of the computing industry, Dr. Flamm said he would focus on current trends in federal funding for R&D in the computer industry. In light of recent changes in funding levels, as well as the growing importance of information technologies in the economy, updating his data may contribute to this conference’s deliberations, as well as the broader policy debate.

Evolution of the Computer

To set the stage for his discussion of data trends, Dr. Flamm touched on a few topics from the computer industry’s history. Unlike the semiconductor industry, nearly all computer development in the immediate postwar era enjoyed significant federal support. It was not only that government served as the market for computers, but the government also provided substantial funding for computer development. From 1955 to 1965, the commercial market for computers grew rapidly, although the government continued to fund most high-performance computing projects and government-funded computer development projects served to push the leading edge of technology. In the 1965 to 1975 period, the growth of the commercial market for computers accelerated even more, and the government role centered primarily on funding the very high end of computer technology development. During the next fifteen years, from 1975 to 1990, commercial markets grew far larger than government markets and pushed the preponderance of technology development. However, government played an important niche role in funding what might be called “the exotic leading edge” of computer technology development. The period from 1990 to 1997 is marked by some surprising, and in some ways disturbing, trends in computer R&D.

Before turning to describing these trends, Dr. Flamm made an observation about the role of cryptography in the invention of the computer. The ENIAC computer at the University of Pennsylvania is generally credited as being the first electronic computer ever built. However, Dr. Flamm said that a case can be made that the first electronic stored-program digital computer was built in England in 1943 and used by the cryptographic community to break German codes.

In fact, the National Security Agency (NSA) in the United States and its antecedents were the chief funders of much of the advanced computer R&D in the 1950s and into the mid-1960s. The reason for NSA’s interest was cryptography, and indeed the cryptographic community continues to drive innovation in high-end computing as a way to make and break codes for national security purposes.

In addition to NSA, the Defense Advanced Research Projects Agency (DARPA), from the 1960s onward, played a very important role in driving innovation in computing. The well-chronicled contributions to computing funded by DARPA include time-sharing, networking, artificial intelligence, computer graphics, and advanced microelectronics. The Defense Department’s record is not unblemished, Dr. Flamm noted. Projects such as the Ada programming language and the Very High Speed Integrated Circuit (VHSIC) initiative did not pay off.

The Atomic Energy Commission and the Department of Energy have also been major players in advanced computing over the years. Their role has not just been in providing R&D funds, but also in serving as a market for high-end computing machines. The National Aeronautics and Space Administration has played a small role in computer development, but an important one in certain niches, such as computer simulation, image processing, and large-scale system software development.

The National Science Foundation came relatively late to the support of computer research because it was bound by traditional academic disciplines in the 1950s and therefore relatively unreceptive to a new field such as computer science. It was not until the 1970s that computer science was seen as a separate discipline and incorporated into NSF’s grant structure. In the 1990s, NSF began to play a very prominent role in high-performance computing.

At the National Institutes of Health, the role has traditionally been rather modest, but as the remarks of Ed Penhoet show, the need for advanced computer systems to interpret data and aid in diagnostics is growing rapidly.

Trends in Federal R&D Support for Computing

Adding up the contributions of all federal agencies to computer R&D, a picture emerges of a declining federal role in the computer sector, which Dr. Flamm defines as firms in the Office Computer and Automated Machinery (OCAM) sectors. In the 1950s, the federal government funded an estimated 60 percent of computer R&D. As the commercial sector grew in the 1960s, the figure fell to about 33 percent. It fell further to 22 percent in 1975 and 13 percent in 1980, rose slightly to 15 percent in 1984, and dropped to 6 percent in 1990. As businesses invested heavily in computers throughout the 1990s and as the market for personal computers exploded, the federal share of R&D shrank dramatically; in 1995, 0.6 percent of computer R&D was federally supported, a number that fell to 0.4 percent in 1997 (Table 1).

TABLE 1 Shrinking federal role: Federal share of OCAM R&D dollars

| Period | Federal share |
| --- | --- |
| 1950s | 60%* |
| mid-1960s | 33% |
| 1975 | 22% |
| 1980 | 13% |
| 1984 | 15% |
| 1990 | 6% |
| 1995 | 0.6% |
| 1997 | 0.4% |

* estimated

Taking a more expansive look at the federal role, in which funding for math and computer science in universities and expenditures for federally funded research and development centers (FFRDCs) are included, the decline in the federal role is less precipitous. Dr. Flamm presented data showing that, when OCAM and these additional categories are considered, the federal share fluctuated between 16 percent and 26 percent between 1975 and 1995, before falling to 10 percent in 1997 (Table 2). Much of the decrease is attributable to a decrease in funding for math education, an issue policymakers may want to take into account.

TABLE 2 Shrinking federal role: Federal share of OCAM and mathematics and computer science dollars in universities and university FFRDCs

| Year | Federal share |
| --- | --- |
| 1975 | 26% |
| 1980 | 19% |
| 1984 | 21% |
| 1990 | 16% |
| 1995 | 22% |
| 1997 | 10% |

NOTE: Mathematics funding has been sharply reduced recently; see trend toward “targeted” federal research noted below.

Analyzing Trends in Federal R&D Support for Computers

In assessing these figures, Dr. Flamm said that one might view them as simply a natural progression for a maturing industry. There may be some optimal amount of money the government should spend on such research, and as the industry grows and the commercial market expands, it should not be surprising or alarming that the overall federal share declines. In other words, the pie may be expanding so greatly that the federal slice will naturally shrink.

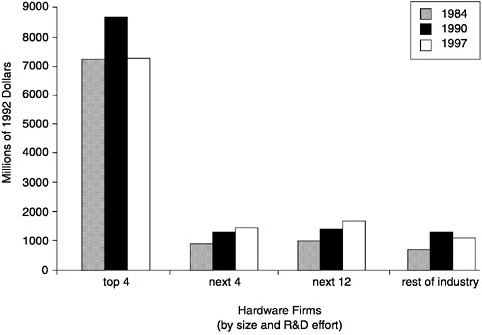

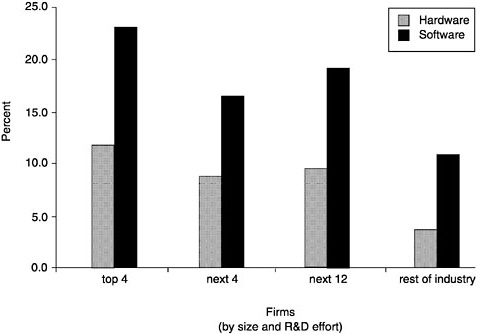

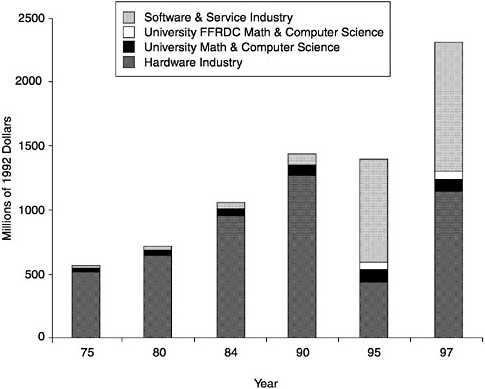

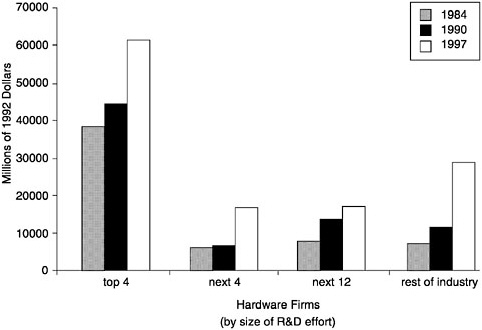

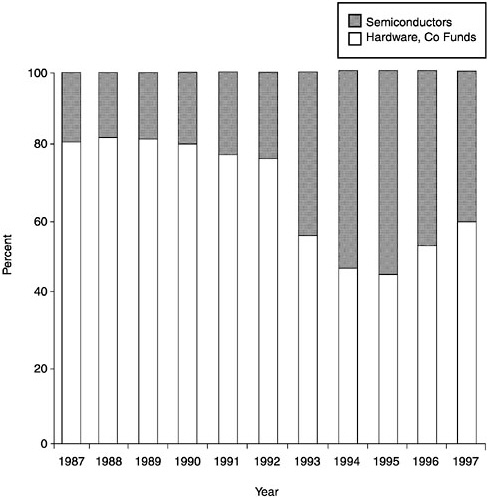

Dr. Flamm said, however, that this is not the case. In presenting a chart that tracked actual expenditures for computer R&D in constant 1992 dollars, Dr. Flamm showed that the amount of R&D for hardware (which is essentially OCAM) has remained more or less constant since 1984 (Figure 1). Dr. Flamm conjectured that the large drop in R&D spending on hardware in 1995 occurred because IBM’s business classification was changed from hardware to software due to greater sales volume of software. The increase in 1997 may be because IBM returned to the hardware category. In sum, the generally flat spending on hardware R&D by industry indicates that the declining federal share in R&D spending in OCAM cannot be explained by growing overall expenditures for computer hardware R&D.

FIGURE 1 Computer R&D.
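The arithmetic behind this conclusion can be sketched briefly. The example below is illustrative only: it uses the federal shares cited in the text (15 percent in 1984, 0.4 percent in 1997) and assumes, as a simplification of "more or less constant," that total hardware R&D was exactly flat over the period. The function name and the flat-total assumption are introduced here for illustration and are not part of Dr. Flamm's presentation.

```python
def federal_dollar_ratio(share_start: float, share_end: float,
                         total_ratio: float = 1.0) -> float:
    """Ratio of end-period to start-period federal dollars.

    Federal dollars = federal share * total R&D, so the ratio is
    (share_end / share_start) * (total_end / total_start).
    total_ratio defaults to 1.0, i.e. the assumed flat total.
    """
    return (share_end / share_start) * total_ratio


# Shares cited in the text for 1984 and 1997.
SHARE_1984 = 0.15
SHARE_1997 = 0.004

# With a flat total, federal dollars in 1997 relative to 1984.
federal_ratio = federal_dollar_ratio(SHARE_1984, SHARE_1997)

# Growth in total R&D that would have been needed for the same share
# decline to occur with federal dollars held constant.
required_growth = SHARE_1984 / SHARE_1997

print(f"Federal dollars, 1997 relative to 1984: {federal_ratio:.3f}")
print(f"Total R&D growth needed to explain the decline: {required_growth:.1f}x")
```

Under these assumptions, federal hardware R&D dollars in 1997 would be under 3 percent of their 1984 level, whereas the "expanding pie" explanation would require total hardware R&D to have grown roughly 37-fold, which the flat trend in Figure 1 rules out.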

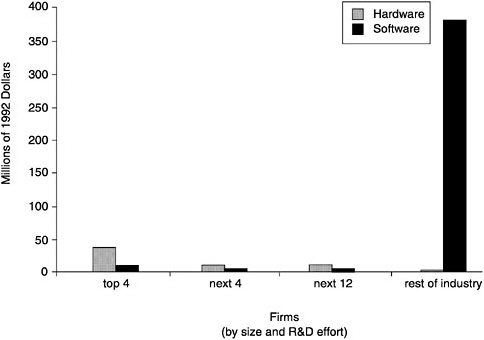

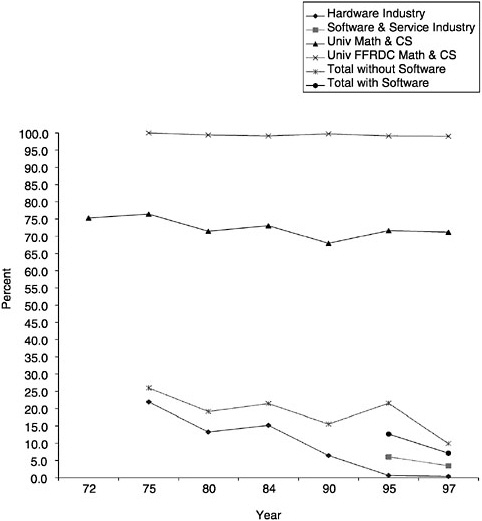

Taking another perspective on the data, Dr. Flamm displayed a chart showing that the federal share in R&D funding for math and computer science in universities and for FFRDCs has remained fairly constant since 1972 (Figure 2). For other categories, such as R&D funding for hardware and software, the federal share has declined markedly. Basically, Dr. Flamm concluded, there is almost no federal R&D being devoted today to computer hardware. This raises the question, in light of major initiatives on high-performance computing, of how these resources are being channeled to industry. Dr. Flamm suggested that procurement is the likely candidate; R&D funds for computer hardware are included in government contracts to develop and purchase high-performance computers for industry.

FIGURE 2 Federal share of computer R&D.

The Distribution of R&D Among Computer Firms

Dr. Flamm then turned to a discussion of the distribution of R&D among firms in the computer hardware industry. With respect to hardware sales among the top 20 R&D performing hardware firms, Dr. Flamm presented snapshots of the sales distributions in 1984, 1990, and 1997. As Figure 3 shows, the industry is concentrated, as the top four firms have about as much revenue as the remaining firms in the sample. In a cross-year comparison, it appears that concentration has declined, as firms that are not among the top 20 R&D performers accounted for relatively more sales in 1997 than in 1984. In terms of total R&D in constant

FIGURE 3 Distribution of hardware sales.

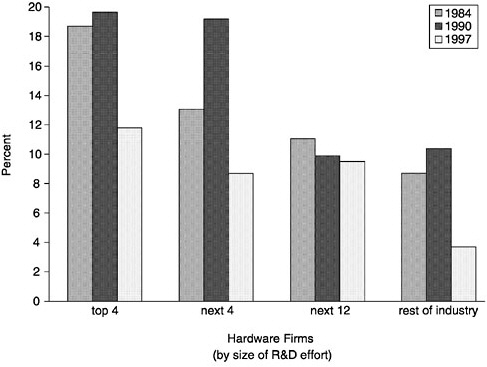

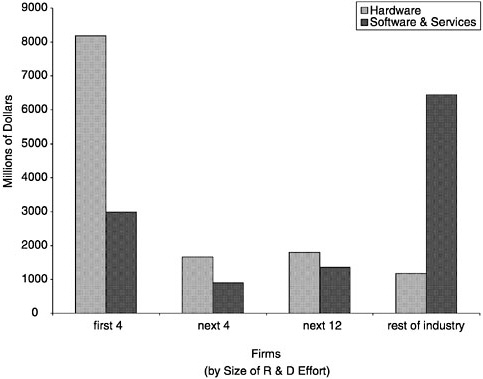

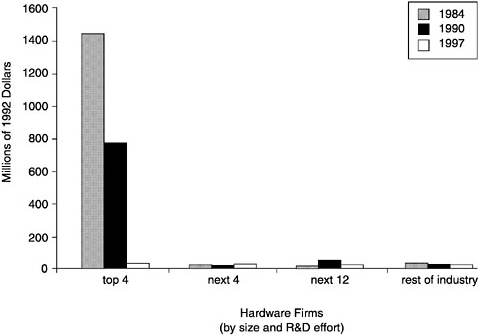

1992 dollars, R&D spending among the top four firms was roughly the same in 1997 as in 1984 and down from 1990 levels (Figure 4). The remainder of the industry shows only modest increases, indicating a flat trend since 1984, and an overall decline since 1990. Turning to R&D as a percentage of sales, Figure 5 shows that this has fallen sharply in recent years, especially among the top four R&D performing companies. Finally, Figure 6 demonstrates that in 1984, federal R&D funds for computer hardware firms were a significant source of funding for the top R&D-performing firms in particular. Federal R&D dollars were never a large source of funds for firms outside the top tier, but by 1997, federal R&D dollars for all segments of the computer hardware industry had ceased to be a meaningful amount.

Hardware versus Software

Comparing R&D across hardware and software firms is still another way to assess the data, Dr. Flamm continued. In 1995, the Bureau of the Census and the National Science Foundation began to collect data specifically on software companies. This new data source creates the opportunity for interesting comparisons. Among the top R&D performers in the industry, hardware firms outpaced software firms in terms of sales, $140 billion to $85 billion in 1997.

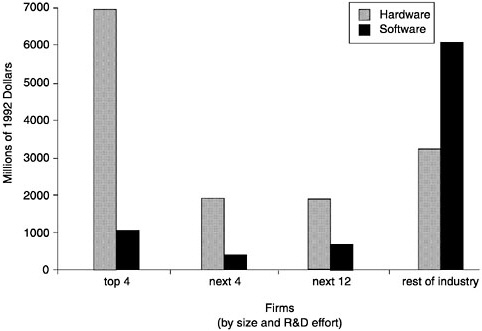

The most notable contrast from the data is in the distribution of sales, total R&D funds, and total R&D as a percentage of sales across the hardware and

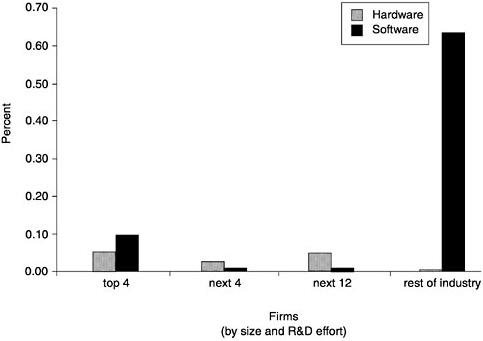

FIGURE 6 Distribution of federal R&D to industry.

software industries. As Figure 7 shows, the top four R&D-performing hardware firms account for a large share of total sales, while for software, the “rest of the industry” (that is, small firms that rank low in terms of total R&D spending) accounts for most of the sales. When the total amount of R&D spending is added across the same categorizations of hardware and software firms, the same pattern emerges (Figure 8). Finally, when examining R&D as a percentage of sales, Figure 9 shows that software companies are more R&D intensive by this measure than hardware firms, and the distribution of R&D intensity for software firms is more even than that for hardware companies.

The distribution of R&D activity in the software industry raises questions, given the size and influence of Microsoft. One conjecture is that some small firms conduct R&D in the hope or anticipation of being acquired by Microsoft, meaning that much of what is counted as small-firm R&D is eventually fed into Microsoft. Dr. Flamm said he knew of at least one example in which “hoped-for acquisition” by Microsoft was an explicit part of the business plan for a small software start-up. As for the higher level of R&D as a percentage of sales in the software industry, Dr. Flamm speculated that a wider range of activities could be counted as R&D in the software industry than in the hardware industry. Anyone writing code, for example, could be seen as engaging in development activity.

In terms of federal funding for software R&D, Figure 10 shows that small software firms receive the vast majority of federal R&D dollars; however, this is still a meager amount of overall software R&D. As Figure 11 shows, for small software firms, federal R&D as a percentage of sales is only 0.65 percent, and much smaller for larger software firms. So federal funding is not very important for software firms.

FIGURE 11 Federal R&D funds as a percentage of sales, 1997.

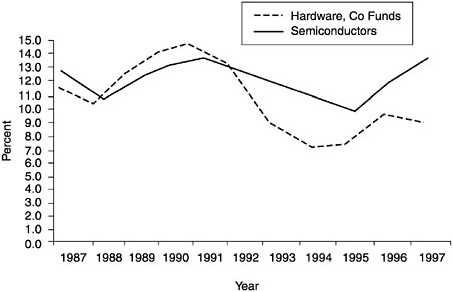

One possible explanation for the decline in R&D in the computer hardware industry is that R&D is migrating from the computer industry into the semiconductor industry. Figure 12 offers support for this notion. Although both sectors saw R&D intensity decline in the early 1990s, the semiconductor industry has witnessed a sharp rebound in R&D intensity since 1995, while the computer industry has experienced a decline in recent years. The distribution of computer versus semiconductor R&D also supports the idea that R&D has shifted to semiconductors from computers. Figure 13 shows that the semiconductor and computer industries now conduct about the same amount of R&D, whereas in the late 1980s computer firms conducted approximately $4 of R&D for every $1 conducted by semiconductor companies.

Summary

The data indicate that industrial R&D in the computer industry is declining, and that federal R&D funding (measured as a percentage of sales) is declining quite sharply. Moreover, fundamental research seems to be bearing the brunt of overall R&D reductions, whereas targeted research, funded both by industry and government, seems to be on the increase. Dr. Flamm pointed out that while it is true that venture capital funding is more plentiful these days, venture capital should not be confused with R&D funding. Finally, a traditional source of fundamental R&D, namely DARPA, is devoting fewer resources to this type of R&D.

FIGURE 12 R&D as a percentage of sales.

A number of reports have highlighted these disturbing trends, most notably one produced by the President’s Information Technology Advisory Committee (PITAC). PITAC has recommended that federal computer R&D support be doubled by 2004 to a total of $1.24 billion, with approximately half going to hardware and half to software. In particular, PITAC recommends that this funding emphasize fundamental R&D as opposed to targeted R&D.

In conclusion, Dr. Flamm said that a strong consensus has developed that information technologies have a substantial impact on the economy. Both government and industry have concluded that the seed corn for future progress in information technologies—long-term research and development—has been underfunded in recent years. Additionally, government and industry have grown to understand that each party should fund different aspects of information technology R&D. However, there is little consensus at this point over the proper level of funding, the proper division between hardware and software, program structures, and overall objectives. Each of these topics is likely to be very controversial and generate vigorous political discussion as Congress considers how to allocate scarce federal resources.

FIGURE 13 Distribution of hardware and semiconductor R&D.

PARTNERSHIPS IN THE BIOTECHNOLOGY INDUSTRY

Leon Rosenberg

Princeton University

Dr. Rosenberg opened his remarks by observing that it is important to have an ongoing conversation on the issues that academics and industrialists face in the biotechnology and computing industries. Looking at the audience assembled, Dr. Rosenberg said he believed that such conversations do not happen often. He saw very few familiar faces in the audience, which surprised him since he spends a good deal of time in Washington and at the National Academy of Sciences. That suggests that these gatherings are rare, but it also bodes well for this conference’s deliberations.

To take full advantage of the opportunity the conference offers, Dr. Rosenberg presented the definitions of several terms used in medical research and biotechnology to give participants a common understanding of key concepts, including the following.

- Medical Research: Science-based inquiry, both basic and applied, whose goal is improvement in health and eradication or mitigation of disease and disability. It is synonymous with health research.

- Biotechnology: A group of techniques and technologies that apply the principles of genetics, immunology, and molecular, cellular, and structural biology to the discovery and development of novel products.

- Biotechnology Industry: This industry is composed of nearly 1,300 private companies that use various biotechnologies to develop products for use in health care, food and agriculture, industrial processes, and environmental cleanup. The subset of companies engaging in health research makes up an important part of the medical research enterprise of this country. It is also important to recognize that most of these 1,300 companies are very small. Two-thirds have fewer than 50 employees. All but 20 of these companies are “unencumbered by revenues.” The biotechnology industry is a very entrepreneurial industry, but one with relatively few commercial successes.

- The Pharmaceutical Industry: This industry is composed of approximately 100 research-based private companies whose purpose is the design, discovery, development, and marketing of new agents for the prevention, treatment, and cure of disease. The term “bio-pharmaceutical industry” is in some ways a more accurate description of the industry, because pharmaceutical companies are today very reliant on biotechnologies.

- Partnership: The state or condition of being associated in some action or endeavor. In the business context, this means the provision of capital by two parties in a common undertaking, or a joint venture with the sharing of risks and profits.

Dr. Rosenberg pointed out that to some people, government-industry partnership is a misnomer, because the two parties have very different missions and roles in society. The term “alliance” might be preferable to many people, or perhaps the more generic term “interaction.”

Medical Research in the United States

The federal government and the private sector account for over 90 percent of research investment for medicine in the United States today. The remainder comes from universities, non-profit organizations, foundations, and voluntary health agencies. Within the private sector share, bio-pharmaceutical firms account for nearly all of the R&D support. In 1999, $45 billion was invested in medical R&D, with about 60 percent from industry, 35 percent from NIH, and 5 percent from other sources. To keep track of medical research, therefore, one must keep an eye on industry and NIH primarily, even though the other sources are important.

The scientists who produce research from these funding sources, not surprisingly, are located mainly in industry and universities, with a small share from NIH’s intramural program and from independent research institutes. Yet the work that is carried out in universities differs from that carried out in industrial research laboratories. Academic scientists still are fundamentally concerned about the frontier, asking questions that may not have utilitarian purposes. Generally, these researchers compete for peer-reviewed funds in the form of grants from NIH. For academic researchers, approximately 90 percent of their grants come from the federal government, with the remainder coming from industry. That ratio is not, at present, changing much. For NIH, 90 percent of its R&D funds are spent in academic laboratories, with the remaining 10 percent spent in the intramural laboratories in Bethesda.

Company revenues, of course, support industry research. Today, in biotechnology, the industry spends between 15 and 20 percent of revenues on R&D, a very high share by the standard of other industrial sectors, and even other high-tech industries. Taking exception to a point mentioned by Dr. Flamm, Dr. Rosenberg said that this percentage is not dropping. The bio-pharmaceutical companies recognize that it is in their interest to maintain a high level of R&D spending.

Across industry, universities, and government, over 200,000 people are engaged in research in the bio-pharmaceutical field. That number continues to grow.

Advocacy for R&D in Medical Research

Dr. Rosenberg noted that little had been mentioned today about the role of advocacy in support of federal funding for R&D in the computer and semiconductor industries, but that he believes it has been crucial to the development of the bio-pharmaceutical industries. Effective lobbying by the Association of American Medical Colleges, Research America, Funded-First, disease groups, and others has been responsible for the growth in support for federally funded medical R&D over the past 30 years, and the past 10 years especially. In 1990, the NIH budget was $8 billion; in 1999 it was $15.6 billion, with continued strong growth likely. That is essentially a doubling of NIH funding over 10 years, and there are many calls for another doubling of NIH’s budget over the next five years.

From 1990 to 1999, private R&D investment in the bio-pharmaceutical industry grew from $10 billion to $24 billion. The bio-pharmaceutical industry has more than kept pace with increases in public R&D budgets as the industry has continued to use platforms developed in academic laboratories to develop new drugs. In general, the division of labor between industry and academia is such that industry conducts most of the applied research, while university researchers carry out most of the basic research. The bridge between basic and applied research (the transitional R&D that positions the research for commercial use) is conducted to varying degrees by all parties.
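The claim that industry has "more than kept pace" with public budgets can be checked by converting the dollar figures cited above into compound annual growth rates. The sketch below is illustrative only: it treats 1990 to 1999 as nine annual steps, ignores inflation, and uses a helper name (`annual_growth`) introduced here purely for illustration.

```python
def annual_growth(start: float, end: float, years: int) -> float:
    """Compound annual growth rate that takes `start` to `end` in `years` steps."""
    return (end / start) ** (1.0 / years) - 1.0


YEARS = 9  # 1990 to 1999, assumed to be nine annual steps

# NIH budget: $8 billion (1990) to $15.6 billion (1999), in nominal dollars.
nih_rate = annual_growth(8.0, 15.6, YEARS)

# Private bio-pharmaceutical R&D: $10 billion (1990) to $24 billion (1999).
industry_rate = annual_growth(10.0, 24.0, YEARS)

# Rate implied by the calls to double the NIH budget again within five years.
doubling_rate = annual_growth(1.0, 2.0, 5)

print(f"NIH budget growth:            {nih_rate:.1%} per year")
print(f"Private R&D growth:           {industry_rate:.1%} per year")
print(f"Five-year doubling requires:  {doubling_rate:.1%} per year")
```

On these assumptions, private R&D grew at roughly 10 percent per year against roughly 8 percent for the NIH budget, and a five-year doubling would require close to 15 percent per year, about twice the pace of the preceding decade.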

The interaction among participants in the medical research enterprise is important. Informal contacts between industry, government, and university researchers take place all the time at scientific conferences. Money does not change hands in these interactions, but these informal collaborations are thought to be among the most fruitful for the advancement of the field.

Partnerships in the Biotechnology Industry

In the bio-pharmaceutical sphere, the discussion of partnerships cannot be limited to government-industry collaboration, simply because university research plays such a critical role. Indeed, universities are virtually always the third party in the bio-pharmaceutical R&D arena. Dr. Rosenberg added that grants and contracts play an important role in partnerships in the bio-pharmaceutical field. These grants and contracts may be from government to academia or industry to academia. If one were to exclude grants and contracts from a discussion of medical R&D, then one would greatly skew one’s understanding of partnerships in the field.

Large companies frequently enter into sponsored research agreements with universities, and for them, exclusive access to the research results is very important. Patents are pursued and defended at every level in such arrangements, and licensing agreements are closely overseen for compliance. From the perspective of universities, grants are the preferred type of collaborative arrangement, because they allow for the maximum amount of academic autonomy in the conduct of R&D and use of its results. Academic scientists likewise find grants more desirable, but contracts and agreements play a significant role in academic science: a total of $1.5 billion worth of contracts and agreements were entered into between the bio-pharmaceutical industry and universities in 1999.

Dr. Rosenberg added that small companies—especially those founded by academic researchers—need all the sources of support that they can muster. Grants and contracts from government, collaborative relationships with government and industry, as well as taking or giving licenses to intellectual property—these are all useful sources of funding for small, academically spawned start-ups.

The Rationale for Partnerships in the Bio-Pharmaceutical Industry

The major participants in collaborative relationships enter into these arrangements for different reasons. Academics and small companies want more money for R&D, while large companies want access to new technologies and intellectual property. The different needs have led to a very healthy relationship among participants and they have led to greater interaction between the university, government, and private sector. All research-intensive academic institutions have a rich network of interaction with industry and government agencies. Government agencies such as NIH have steadily expanded their contacts with universities, established companies, and small start-ups. For example, in 1998 NIH and private companies entered into 166 cooperative research and development agreements (CRADAs), which allow for the sharing of compounds and other research materials and results, as well as for the exchange of funds. This is the largest number of CRADAs ever between NIH and the private sector. Through the Small Business Innovation Research (SBIR) program, NIH awarded $266 million in grants to small firms for medical and bio-pharmaceutical research. It is expected that the SBIR program at NIH will exceed $300 million in 1999. In 1999, the Commerce Department’s Advanced Technology Program (ATP) awarded $29 million to small biotechnology companies. It is hoped that that number will grow.

To demonstrate that the flow of funds is a two-way street, Dr. Rosenberg said that in 1999, NIH received $40 million in royalties from its licenses, and universities received more than $300 million in revenues from licenses for their innovations in health and medical R&D.

Conclusion

Dr. Rosenberg concurred strongly with Dr. Penhoet that continued advances in biotechnology depend on advances in information technology. Examples are numerous, from the human genome, to computational neurobiology, on-line databases, gene profiling, and patient records. For those close to academia and industry, it has become clear that there is a dearth of trained people in bioinformatics. From his experience in government, universities, and industry, Dr. Rosenberg noted the different cultures within these various institutions. Nonetheless, Dr. Rosenberg said incredible advances have been made over the years. Many concerns have arisen over whether academic culture could withstand greater collaboration with industry, and whether academic research would become more applied as a result of interaction with industry.

In fact, Dr. Rosenberg said, academic research has not become more applied, and the culture of academic research in the health sciences has survived nicely. By and large, the worst-case scenarios, whether technological, ethical, or sociological, have simply not played out. Tensions and potential conflicts of interest have emerged, and they must be addressed as government, industry, and academia increase the frequency of collaboration. However, Dr. Rosenberg said that collaboration has benefited all parties in recent decades, resulting in better academic research and a more productive industry, and ultimately a better-off public. This should give us confidence that we can effectively address the challenges facing government-industry-academic collaboration in the next millennium.

TRENDS IN FEDERAL RESEARCH

William Bonvillian

Office of Senator Joseph Lieberman

Mr. Bonvillian began by offering an analogy for federal funding of science and technology. Imagine, Mr. Bonvillian said, a character called “Dr. Joe Science” who is standing in the middle of a 16-lane federal interstate highway, a highway called “the domestic discretionary funding interstate.” Dr. Science is in the middle of it, with cars and trucks speeding by. The interstate has a number of huge 18-wheel trucks on it, and Dr. Science has to dodge one called “Social Security entitlements.” Another 18-wheeler called “Medicare entitlements” approaches, and another truck called the “tax-cut express” careens toward him. The trucks are eating up the road as Dr. Science tries to stay out of harm’s way. Joe Science is definitely stuck on a tough road.

The question is: Will Dr. Science be able to construct his own 18-wheel truck in order to survive on the road? As Dr. Rosenberg described, the life sciences community is busy building an 18-wheeler that seems well positioned to navigate the 16-lane highway. For the physical sciences, however, no one has even begun to assemble a truck. In other words, Mr. Bonvillian said, neither political party has etched science on its stone tablet; the ideologies of each party simply do not include science in any prominent way, at least in the ways in which entitlements and tax cuts have been included. The life sciences community is making efforts to have its agenda carved onto the parties’ stone tablets, but the physical sciences have not been as successful as the life sciences in this enterprise. The different abilities of the two scientific communities to take their messages to policymakers have profound ramifications for the life sciences and physics, particularly as they become more interdependent.

Trends in Federal R&D Spending

Recent trends reveal a steady decline in federal R&D spending as a share of Gross Domestic Product (GDP). From a high of 1.8 percent in the 1960s, Mr. Bonvillian said, federal spending on R&D has fallen to 0.8 percent of GDP today. In the so-called “knowledge economy” of today, financial analysts look increasingly at factors other than earnings to evaluate firms’ future profitability. Certainly, R&D spending is one important non-earnings-related measure of firms’ potential. However, in the context of the federal budget, R&D seems to be taking a back seat. From 1992 to 1997, federal R&D spending declined by 9 percent in real terms, and there was an additional scare this year when House appropriators passed a bill reducing civilian R&D by 10 percent. Subsequently, the House settled on a figure that approximates last year’s spending on civilian R&D. Mr. Bonvillian suggested that this year’s action by the House signals future political problems in the R&D arena.

The Distribution of Reductions in R&D Spending

R&D spending has not been cut equally across all sectors, Mr. Bonvillian said. There have been winners, and Dr. Rosenberg earlier showed that the research budget at NIH has been the beneficiary of effective advocacy by medical schools, patient groups, and other groups. The National Science Foundation has seen its R&D spending increase slightly in recent years, but R&D funding for some of the large agencies, such as the Department of Energy and the Department of Defense, has declined sharply.

Because different agencies emphasize different science fields, certain scientific disciplines become winners while others become losers. Work published by the STEP Board has found that 15 science research fields are declining, while 11 are rising.2 The fault line for winners and losers is life sciences versus physical sciences, with the life sciences, as already noted, doing much better. Defense R&D cuts play a central role in these trends, simply because the Defense R&D budget is so large; in real terms, DoD’s R&D budget is down by about 30 percent over the past six years. At the time of this report, the Clinton Administration is considering another 14 percent reduction in Defense R&D for the next fiscal year. Over the past 50 years, the Defense Department has funded the research of 58 percent of Nobel Prize winners in chemistry and 43 percent of the Nobel Prize winners in physics. The decline in DoD R&D therefore has profound implications for the physical and life sciences community.

Understanding Changing Patterns of R&D Spending

To some extent, one could argue that the shifting pattern of R&D expenditures reflects progress in different fields and new opportunities in them. The shifts may then be seen as natural adaptations to changing circumstances.

Mr. Bonvillian argued, however, that the shifts are largely a result of organizational changes in government. In the immediate post World War II era, Vannevar Bush envisioned a single Department of Science that would rationally allocate funding across competing scientific research needs. Of course, the United States never established such a department; only a small piece of it was incorporated into the National Science Foundation. Perhaps the rich mix of mission agencies and general funding of basic science has served this country better than a single Department of Science would have. With the end of the Cold War and the changing relative institutional strengths of various science-funding agencies, there has been a substantial reallocation of R&D funding. And neither Congress nor the Executive Branch has come to grips with these changes.

Perhaps the best argument for better balance in science and R&D funding across disciplines comes from the preceding discussions—namely that many of the most important scientific advances today come from research with a multidisciplinary component. In biotechnology and computing, future advances are likely to be based on inherently multidisciplinary R&D, so there will be major societal consequences if this research is not funded adequately and in proper proportion.

Challenges for Policymakers

A crucial question for policymakers is how to determine which R&D efforts must be funded to ensure future progress, in other words, “to separate the crown jewels from the paste” in funding certain parts of the overall federal research portfolio. This is a challenging question to say the least, because it is very difficult to project what will be important 20 years from now. In 1969, for example, two great technological developments occurred: the United States landed a man on the moon, and on the last day of the fiscal year, the Advanced Research Projects Agency (ARPA) put ARPANet—the forerunner to the Internet—into operation. At the time, the entire world knew about a man walking on the moon, but only a couple dozen people knew about ARPANet. In retrospect, we need to ask which was more important. Even though it seems obvious to some today that ARPANet has had more impact, the answer to the question is very much open.

“Random disinvestment” in various scientific fields—which is arguably occurring today—is an issue that greatly affects the talent pool for the nation’s overall scientific enterprise. Federal R&D funds not just specific science and technology projects but also graduate education. Such grants to graduate students help them develop not just textbook knowledge but also experiential knowledge on how to become good scientists and engineers. These grants, directly or indirectly, affect industrial innovation.

In confronting these issues, Mr. Bonvillian said that policymakers should try to develop “an early warning system” on the federal R&D portfolio. Policymakers must devise a way to assess when funding in a particular area has fallen below a critical mass level, below which future innovation may be at risk. The issue is not just a matter of funding levels but, as Dr. Rosenberg’s and Dr. Flamm’s presentations indicated, also the balance of R&D funding. Some “alert system” is necessary, but a host of measurement issues arise. How do we measure success and failure in a scientific discipline? Numbers of patents, citations in scientific journals, R&D investment levels, and scientific personnel are all possible measures, but each is imperfect in some way.

Mr. Bonvillian said that Dr. Dolores Etter, the Deputy Undersecretary of Defense for Acquisition and Technology, is concerned about these issues because they affect our defense industrial base. Dr. Etter has asked her staff to develop a “global technology watch” to track trends in worldwide R&D using some of the measures mentioned above. Dr. Etter’s effort will focus in particular on trends in fundamental research. Additionally, a number of private organizations, the Science and Engineering Indicators at the National Science Foundation, the National Critical Technologies panel, and others, track research trends. Mr. Bonvillian suggested that the information from these various efforts could be combined to create an “R&D alert indicator” for policy makers. Developing an alert system does not ensure success in guarding against precipitous drops in R&D spending in some fields; but without at least assembling this information, we are far more likely to be caught off guard.
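The “alert system” idea can be pictured as a simple threshold check on funding histories. The following is a minimal sketch only, not a method proposed at the symposium: the field names, the funding figures, and the 70 percent floor are hypothetical, and a real indicator would have to combine several of the imperfect measures listed above rather than rely on funding levels alone.

```python
from typing import Dict, List


def fields_below_floor(funding: Dict[str, List[float]],
                       floor_ratio: float = 0.7) -> List[str]:
    """Return fields whose latest funding is below floor_ratio of their peak.

    funding maps a field name to a chronological series of funding levels
    in constant (inflation-adjusted) dollars. floor_ratio is a hypothetical
    stand-in for a "critical mass" level; the source gives no such number.
    """
    flagged = []
    for field, series in funding.items():
        if not series:
            continue  # no data, nothing to assess
        peak = max(series)
        if peak > 0 and series[-1] < floor_ratio * peak:
            flagged.append(field)
    return sorted(flagged)


# Made-up illustrative data, not actual budget figures.
sample = {
    "field_a": [100.0, 95.0, 80.0, 65.0],    # steady decline
    "field_b": [100.0, 104.0, 110.0, 115.0],  # steady growth
}

print(fields_below_floor(sample))
```

With these sample numbers only the declining field is flagged. Where to set the floor is exactly the measurement problem raised in the text, so the threshold is left as a parameter.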

Mr. Bonvillian also suggested that the science and engineering community give more thought to how the nation can get more mileage from its R&D investments. The innovation process has been changing, and Mr. Bonvillian mentioned a recent article by Don Kash and Robert Rycroft arguing that innovation in complex technologies is now done by a network of innovators, not the sole researcher. They also note that complex technologies made up 43 percent of exported goods in 1970; in 1995, complex technologies made up 82 percent of exported goods. Moreover, 73 percent of patents filed in the United States in 1997 referred to publicly funded R&D; this indicates the growing importance of public R&D and networks of innovation.

The biotechnology and computing industries are prime examples of how constant interaction between government, industry, and academia has contributed greatly to innovation. The Internet, Mr. Bonvillian noted, evolved largely through the rich, continuous interaction between government, university, and industrial researchers. Indeed, the Internet’s evolution points to the fact that innovation takes place through the exchange of information among networks of scientists, engineers, and users. However, federal R&D resources seem to be allocated on the basis of an earlier model of innovation—the so-called “linear model”—rather than on today’s model, in which networking and multidisciplinary research are crucial. Policymakers must become more attuned to how innovations are actually developed and how federal R&D can support an innovative environment.

Mr. Bonvillian added that networking does not have to be based only on formal institutional arrangements. Companies are increasingly turning to expert software systems to enable them to ask simple questions such as “who knows what” about a particular area. This growing field of “knowledge management” is improving companies’ capacity to maintain awareness of innovation. Federal R&D managers would do well to improve their “knowledge management” to keep pace with developments in the private sector and universities.

Summary

Mr. Bonvillian made the following points in summarizing:

- Science—especially the physical sciences—faces serious funding challenges. Physical scientists must learn from their counterparts in the life sciences how to build political support for R&D funding.
- An “alert system” must be developed to warn policymakers when funding levels for some scientific disciplines drop below critical levels.
- The federal R&D system must be organized to take advantage of burgeoning networks of innovation in the private sector and universities.
- The federal government must take better advantage of “knowledge management” to enhance the efficiency of the federal R&D enterprise.

THE CORNUCOPIA OF THE FUTURE

Daniel S. Goldin

National Aeronautics and Space Administration

I am really pleased the Academy is following up its June report on industrial competitiveness with this week’s events. Science and technology drive the world’s economy and help provide a healthier, safer, and better world. I am also glad you are emphasizing the links between biotechnology and computing. I believe those links will become even stronger as we enter the new millennium—just not in the way you may think. For NASA, that link will be crucial to everything we’re planning to do in the twenty-first century.

Last week, I was flying to a meeting in Norfolk, Virginia. I was looking at the full moon through the airplane window and thought of the Apollo era and people walking on the moon. I thought about what an amazing achievement that was when you consider that the computer on Apollo was less powerful than the one in your car today. Yet it helped take humans to the moon and brought them back safely. And the Apollo era provided an incredible bounty of technological achievements that we are all still using. But that also reminded me of the great paradox we face today.

Today’s electronics are much more advanced than what we used on Apollo missions. Those computers only ran at a few megahertz and cost millions of dollars—about 100 times slower and 10,000 times more costly than today’s desktop machines. And yet, for all that, our software is more and more complex and much less error tolerant. We still interface with computers by typing characters into a keyboard, rather than a more natural interaction in a fully immersive, multi-sensory, and fully interactive environment.

Think about how many people in the course of a business day gather around a desktop computer just to figure out where this interface problem is coming from. I’m sure that has never happened to any of you.

And the basic platforms for aircraft and spacecraft in 1999 are no different than they were in 1969. The 707 was the last leap forward in aviation, and the rockets we fly today are the same technology we had 30 or 40 years ago. That’s a real paradox.

The shuttle represented a major step forward. It opened the first era of reusability. We no longer throw all the hardware away, but we practically rewrite all the software between each and every mission. Your home computer is more powerful than the ones that first went into the shuttle and you can buy better flight simulators at a toy store than NASA had for the Apollo astronauts.

We have come a long way in some areas, but we still require thousands of people to process the shuttle between each mission and hundreds to conduct launch and mission operations. This operational dilemma I’m talking about is not unique to space or the shuttle program. All you need to do is take a look at today’s air traffic control system or large petrochemical facilities or heavy manufacturing, look into the operations and see the price we’re paying for present-day hard, deterministic computation.

Why the paradox? We do too much by brute force.

Then I began to think forward instead of looking backwards. Some things won’t change. Reaching orbital speeds will always be a great accomplishment. However, if “past is prologue” as is written across the cornice of the National Archives, the future is very exciting. The last 350 years have shown the power of science and technology to shape our society. With each new plateau came great accomplishments—not foreseen by even the greatest of visionaries.

Newton’s mathematical formulation of gravity and the laws of force and energy ushered in the era of modern technology. Maxwell’s mastery of electromagnetism in the 1800s brought the Industrial Revolution to full bloom. The discovery of atomic structure, quantum mechanics and Einstein’s theory of relativity in the 1900s gave us an understanding of the universe and the forces that shape it at its largest and smallest scales.

The twenty-first century will bring the age of bioinformatics and biotechnology, and the physical scientists cannot sit back and argue about why not to spend money on biology. Most people don’t understand biology’s potential to dramatically change electronics, computational devices (both hardware and software), sensors, instruments, control systems, and materials—or the new platform concepts and systems architectures that will bring them all together. The terms of the future are biomimetics, bioinformatics and genomics, and they will be as common as transistors and microchips are today.

So far, our ability to emulate biological functions has been limited to what we can do with silicon microchips, space age materials and chemical reactions. This is simply not good enough. In the future we want our systems to be biologically inspired. This means we can mimic biology or embed elements of biology to create hybrid systems, or they can be fully biological and life-like.

The greatest attribute that biological systems have over solid-state systems is the ability to change on their own. These systems will adapt to different operating environments, to accomplish different tasks or to renew and repair themselves. A robot on a distant planetary surface shouldn’t walk off a cliff simply because someone in mission control on Earth pre-sent a code to move it forward 10 paces. Now this is not fiction, because we just had a robot out in the desert to simulate an operation on Mars. We pre-programmed the robot, and it walked right over a dinosaur footprint.

NASA has set its sights on understanding our universe in greater depth. Eventually, we want to send humans to the edges of our solar system and beyond. This requires a dedication to pursuing the unknown. We have to learn as much as possible about our home planet. We need to explore other planets and return the data—or the human explorers—to Earth safely, relatively quickly, and inexpensively. However, we cannot use brute force to achieve these goals. It is too clumsy, too costly, and too slow. What we need are systems that work with people, to enable a few people to do what many people do today. We need to develop new computing systems to take over routine and mundane tasks, to monitor, to analyze, and to advise us. This means they must be capable of more than just following a set of hard, deterministic pre-programmed instructions.

Today’s computers are a lot like moving a ball on a flat table. People decide direction and velocity, and they have to keep maneuvering the ball on the table. That’s how today’s computer coding works. The codes describe an action, computation, or comparison, and the computer simply executes what it is told to in a linear, deterministic order—one step after another.

Today our complex systems contain thousands of microchips controlled by millions of lines of code all written by hand and structured around a systems architecture that has very little fault tolerance. Since we can only write, verify, validate, and qualify a few lines of code per hour, the cost and time for software development is huge. And we need so many software coders—there aren’t enough in America—that it is holding back our progress because we keep sticking with old tools. The software concepts we are using today were basically developed in the 1970s.

Yet this software is so complex that we can never be sure of finding all possible failure modes. We keep patching and patching. In addition, when everything has checked out and been proven flightworthy, a subtle manufacturing change in any one component can introduce a new failure mode that may not be uncovered during check-out and quality control procedures. We are starting to see this problem become more and more serious each year, and it’s costing American industry dearly. It’s costing the space program dearly.

This interdependency between software and hardware implementation has, in effect, built design obsolescence into our products. It is becoming almost impossible to easily and safely upgrade systems as technology advances. This paralyzes the ability of our designers to utilize platforms that are upward-compatible with technological advances.

In our space program, this has resulted in robotic operations that require direct human oversight, whether it is a rover on a planetary surface or an astronaut controlling the shuttle’s robotic arm to move objects in and out of the payload bay. It requires many months of expensive simulation and training to plan and conduct these space operations.

We need to move toward the next era of space robotic operations that places the human into the role of a systems manager, not a real-time controller as they are today. This is a crucial difference.

Levels of intelligence need to be incorporated into our systems so that they can conduct routine tasks autonomously. Ultimately we want “herds” of machines to function as a cohesive, productive team to explore large areas of planets, build structures in space, and perform continuous inspections of our most critical systems.

We also want those robots to be able to express emotion and be able to overcome some of the tremendous challenges we are facing. They can also perform the most dangerous tasks and keep our astronauts out of harm’s way. Within the foreseeable future, intelligent machines will not totally replace people. They simply let people do what they do best—engage in creative thought—in the safest possible environment.

NASA believes one of the most fruitful approaches for getting us there is to look to biology for inspiration. Mother Nature holds all the best patents that already exist. Nothing approaches the inherent intelligence, power efficiency or packing density of a “brain.” “Eyes” can almost respond to a single photon and biological electronics sensors are extraordinarily sensitive. Fireflies convert chemical energy to light with near-perfect efficiency, and living membranes, such as skin, will self-heal when injured. No other technology we know of has a comparable ability to self-organize and reconfigure.

We need to look to nature for solutions.

We cannot have a power cord going all the way to Mars—the space program cannot work that way. So our computers must become thousands of times faster, denser, and less power consuming. We will talk about teraflops per watt, not per megawatt, and sizes in terms of cubic centimeters, not cubic meters. The fastest computer today has a teraflop of speed, but it takes a megawatt of electricity to operate it. The brain is a million times faster and takes a fraction of a watt.
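The gap between “teraflops per megawatt” and “teraflops per watt” can be made concrete with a line of arithmetic. The sketch below uses only the two figures quoted in the remarks (one teraflop of speed, one megawatt of power); the function and variable names are illustrative.

```python
# Figures quoted in the remarks: a one-teraflop machine drawing one megawatt.
TERAFLOP = 10**12   # floating-point operations per second
MEGAWATT = 10**6    # watts


def flops_per_watt(flops: int, watts: int) -> float:
    """Computational efficiency in operations per second per watt."""
    return flops / watts


current = flops_per_watt(TERAFLOP, MEGAWATT)  # a teraflop per megawatt
target = flops_per_watt(TERAFLOP, 1)          # a teraflop per watt
improvement = target / current

print(f"Required efficiency gain: {improvement:,.0f}x")
```

On these figures, moving from a teraflop per megawatt to a teraflop per watt is a millionfold improvement in efficiency, before any gain in raw speed or packing density is counted.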

Think about it.

We will have robots and spacecraft that are self-diagnosing and self-repairing. They will be able to learn and act, respond, adapt, and evolve. They will also be able to reduce vast amounts of raw data to useful information products to assist humans, not replace them.

We will have a new era of human-machine partnerships. But this time, HAL of “2001: A Space Odyssey” won’t get depressed. Nature is clearly superior, so we can use our understanding of cells and systems as guides for our next-generation devices.

The computational systems of the future will be very different. They will not be designed or built anything like today’s computers. They will be more like the brain, with millions to billions of relatively simple but highly networked nodes. These computers will solve problems by absorbing data and being inherently driven to assimilate solutions to our most difficult problems. Our goal is to keep these computational systems focused on what we want them to do. They will work like water flowing downhill, changing directions, flowing around large obstacles, and over or through smaller ones—rapidly finding their own way to solutions. The computer will capture all relevant physics, biology, and real phenomena, including complex transient behavior.

Even more exciting will be the development of hybrid systems that combine the best features of biological processes, including DNA and protein-based processes, with optoelectronics devices and quantum devices. These hybrid systems will be extraordinary in performance and functionality, and not achievable by any one technology. We get the best of both worlds, but only if physicists learn to cross the biological divide.

Just imagine the day when computers behave more like we do. We will communicate with them using all our senses through totally immersive environments including natural language—not simply through characters typed on a keyboard. They will understand our intentions and even sense our physical state.

Sound like science fiction? Researchers at NASA Ames have successfully tested a revolutionary bio-computer interface using electromyographic signals (tiny electrical impulses from forearm muscles and nerves) to fully control the takeoff, flight and landing of a commercial airliner in a high-fidelity simulation. They don’t have keyboards for the computer, just the sensors on their arms and hands. The eventual goal of this research is to develop direct bio-computer interfaces using total human sensory and two-way interaction and communication, including even haptic feel. As strange as it sounds, we will be moving back to the analog computer, except now the analog is the human brain. Biologically inspired computing tools will also enable us to develop intelligent robotic systems.

Before we send humans to explore beyond our planet, we will first send robotic colonies to set up livable systems. They will need to behave just like humans. They will have to adapt automatically to the environment. They will be autonomous, and they will be able to do jobs in non-traditional ways. Like humans, these robots will employ biologically inspired sensors and motor control, anticipate future events, cooperate with other systems, select and depose leaders, and be motivated to explore, repair, and adjust to meet changing needs or to respond to an emergency.

Giving robots and computers the capacity to learn and act requires a move from conventional deterministic software to “soft computing,” which accounts for uncertainty and imprecision. Neural networks—one element of soft computing—could be the basis for the futuristic computers I described earlier. Neural networks assimilate vast amounts of data and extract information—trends, patterns, solutions—the kind of thing we do when we learn and think as humans.

Today we can build systems with hundreds to thousands of neural connections. In the future, they will have millions of connections in a package the size of a sugar cube.

For many applications, neural networks reduce supercomputer time by more than an order of magnitude while providing more accurate analysis than conventional approaches.

We are simulating turbine engines on computers driven by neural nets to help engine manufacturers regain the critical edge they need. But they need biology to do it. NASA also has applied neural networks to the flight controls of an F-15 aircraft. The traditional flight control software system—based on conventional, deterministic software methods—required one million lines of code. We reduced it to about ten thousand. We demonstrated improved performance. We introduced software faults, and the system identified the problem and self-corrected in seconds. We even simulated loss of aircraft control surfaces, and the system adapted and returned aircraft control authority within seconds. Now that is an intelligent system. And we are just getting started.

We will move from data…to information…to knowledge…to intelligence.

When we are ready to send humans deep into space, astronaut health and safety will be our top priority. Biologically inspired technologies will enable a human-machine partnership that is advanced enough to ensure the well-being of astronauts, perhaps on a 2- to 4-year trip to Mars.

If you get appendicitis 50 million miles from Earth and travelling at 25,000 miles an hour, you will not be able to come home. And if you are the only doctor on board, you had better have some of these biologically inspired tools. You cannot take a hospital with you. It is too heavy.

We could develop nano-scale sensors with sensitivities and detection capabilities at the molecular level. They would be like little monitor cells, and they would transmit the information they acquire to other systems outside the body or inside our spacecraft. While we will not sense that such devices are in our bodies, we will certainly know they are there because of the continual stream of information they provide about what they are doing and finding. Such on-board devices will be crucial to health care in space or on the ground. An astronaut’s medical emergency could become a tragedy if we were forced to rely on responses from Earth, which might involve round-trip transmission times of 20 to 40 minutes. Health and safety are much better served with real-time assessments and decisions, which could easily be done with on-board machines. A smart robot, acting as a health-monitoring “buddy” to a human, will free an astronaut from ongoing self-monitoring, leaving much more time for humans to think, create, and experiment.
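The round-trip times mentioned here follow from the finite speed of light. As a rough sketch, assuming a straight-line signal path and ignoring any processing or relay delay, the delay can be computed from distance alone. The 50-million-mile figure is the one used earlier in the remarks; the other two distances are hypothetical sample inputs chosen to bracket the 20- to 40-minute range.

```python
SPEED_OF_LIGHT_M_PER_S = 299_792_458  # exact, by definition of the meter
METERS_PER_MILE = 1609.344            # exact, international mile


def round_trip_minutes(distance_miles: float) -> float:
    """Light-speed round-trip signal time, in minutes, for a one-way distance."""
    one_way_seconds = distance_miles * METERS_PER_MILE / SPEED_OF_LIGHT_M_PER_S
    return 2 * one_way_seconds / 60


# 50 million miles comes from the remarks; the others are illustrative.
for miles in (50e6, 112e6, 224e6):
    minutes = round_trip_minutes(miles)
    print(f"{miles / 1e6:.0f} million miles: {minutes:.1f} minutes round trip")
```

At 50 million miles the round trip takes about nine minutes; delays of 20 to 40 minutes correspond to one-way distances of roughly 112 to 224 million miles.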

Humans will still be the ultimate decision makers, using information from the robots and sensors. However, we will be much more informed decision makers than we are today. The idea of a “one size fits all” cure like “take two tablets every four hours” just won’t do when we send astronauts to Mars. We will have diagnostic and treatment procedures that are adaptive in unknown environments. Our machines will respond to our needs and our moods. By measuring nerve activity on the surface of the skin, machines can determine if a person is calm or agitated. Or we might measure hormone or neurotransmitter levels as an indicator of our emotional state or stress level.

They will also make us more productive and improve safety by alerting us to mistakes before we make them, letting us know when we are showing signs of fatigue—an enormous problem in corporate America today. This is just a sampling of NASA’s vision of the amazing benefits biotechnology will bring us in the future.

And we can use these technologies to improve life on Earth. For instance, the tiny health monitoring sensors I mentioned could give constant health updates and instant diagnoses. We are already working with the National Cancer Institute (NCI) to attempt to develop such sensors. We are bringing our physics-based technologies to researchers in the NIH who need these tools. Science and technology are intertwining. We cannot separate them.

NASA sees great potential for enhancing astronaut health during lengthy missions, and the NCI is obviously interested in using the sensors to detect indications of cancer. The National Cancer Institute would like to detect the first mutations of a cell. We don’t know if we’ll get there, but micro-electric sensing may be the way to do it. This system could also be used to control the delivery of drugs. The sensors could analyze blood chemistry—or other material in vivo. Equally important, the sensors could be placed at specific locations where measurement is optimal or where measurement is critical. We would know that a drug may be accumulating at too high a rate in a sensitive part of the body before there is any adverse response.

We would not wait for the body to tell us there is a problem at the macroscopic level; we would know it beforehand. This sensor suite could monitor all critical bodily functions, account for gender differences, and use this data to guide therapies. Biologically inspired technologies also have incredible potential beyond health-care applications.

Eye-like devices of the future would not just work in the visual. They would work across the entire spectrum. Just imagine how much intelligence that would give our factories, especially “eyes” with single-photon sensitivity. These “eyes” could open up the entire bandwidth of vision and greatly enhance processing and control of products by detecting even the tiniest material defects both on the surface and far below the surface.

We could use “noses” to protect workers in hazardous situations by “sniffing out” potential dangers. This would revolutionize plant safety by providing layered levels of protection and warning, not achievable with today’s mass spectrometry. As I said, the twenty-first century will be the age of biotechnology. It promises a bounty of life-enhancing technologies that will eclipse America’s incredible achievements during the last five decades—the decades of physics.

Let me take you on a futuristic journey. Our entire notion of designing and building systems could change. We would like to have the power to pre-build entire systems in “cyberspace” totally within the computer with geographically distributed teams using heterogeneous systems that use physics and biologically-based calculations to enhance their work.

We could plan and develop every step of every mission from concept to disposal before we ever place a single order or bend a single piece of metal. But in the end, we will still order parts and bend metal unless we have a revolution. Instead of committing 90 percent of our resources early in the process when we only have 10-percent design knowledge (this is why programs overrun in industry and government), we could simulate everything first and focus our resources where they are needed most. When ready, we will have bounded 90 percent of uncertainty and have full confidence in the total system life cycle, cost, and performance.

That is what NASA wants to do a decade from now. However, the ultimate power of biology will be to design the molecules that contain the coding, or blueprint, for complex space systems—that is, spacecraft DNA—that will initially build critical parts, and ultimately, the entire spacecraft. We will simulate the entire process to be sure we have it right, but once we “plant the seed,” spacecraft genetics will take over in our factory of the future. Think of it as hydroponic farming for robots.

I am talking about truly multi-functional capabilities. Machines would fully integrate mechanical, thermal management, power, and electronic systems. They’d be more like humans than simply black boxes and wiring harnesses placed on structures. These biologically based systems may not look exactly like the systems we have today, but they will perform the same basic functions. They will just be better, faster, and cheaper. When I talk of planting the seeds for a new revolution in technology, I mean it in literal terms.

Let’s go another step further.

With the ability to grow spacecraft systems, our craft could grow new parts from raw materials, or better yet, consume the parts it no longer needs to make the parts it does need.

This metamorphosis of single living entities or local ecosystems is taken for granted—it happens in nature every day, every minute, every second, everywhere. To some, it is science fiction to think of spacecraft in this way. But think of the implications upon modern manufacturing and the radical new products we can make on Earth to enhance our society.

As I said, we need to develop machines that will work with our people in the future. But today, we have to work together with a common purpose to make this vision a reality and to make sure America achieves its destiny in the new millennium. Every member of the partnership—government, industry, and academia—needs to contribute to researching and developing revolutionary technologies.

And I want to leave you with this thought. This is not going to happen with wishful thinking. This is not going to happen by scientific cannibalism. This is going to happen because this country must understand that unless it looks to the future and does not take a vacation from long-term R&D, we may have problems beyond the twenty-first century. But I believe without a doubt that the American public will get it. The potential is there. All we have to do is reach out.