BLS is trying, for example, to find an appropriate way to measure the contribution of stock options to national income. He said that the best answers to such challenges would probably be known only in retrospect.

Dr. Price, also of the Department of Commerce, agreed that one of an economist’s greatest challenges for the last 30 years has been to develop new measures for economic activity. For example, traditional means of measuring GDP focus on market activity. Today, many activities captured by market activity, such as child care, elderly care, sick care, and food preparation, used to be done by families. Yet economists call the economy larger when these functions are transferred to “market activity.” At the same time, the department used to record services such as gasoline pumping, which have now moved out of the market. However, because the traditional concept is market activity, economists still take such work into account in looking at welfare over time.

Dr. Price said that similar problems would probably emerge in the future. The amount of self-service education and health care will probably increase, for example, and the Bureau of Labor Statistics has no effective way to measure it. In health care, people are doing more self-education before going to a doctor, which changes the output of health care centers.

RAISING THE SPEED LIMIT: U.S. ECONOMIC GROWTH IN THE INFORMATION AGE

Dale Jorgenson

Harvard University

Professor Jorgenson said he would discuss the “relatively narrow issue” of how to integrate the picture of technology painted by Vint Cerf with the macro picture of the economy described by Robert Shapiro. He added that this task is central to the mission of the Board on Science, Technology, and Economic Policy—a mission for which the National Research Council is positioned to be “ahead rather than behind the rest of the economics profession in this important arena.” The current workshop presents an unusual opportunity because its participants include some of the technologists and economists leading the nation’s effort to understand this complex issue.

Dr. Jorgenson began with recent projections from the Congressional Budget Office. These projections indicated that, as recently as three years earlier, the economic forecasting community had missed the mark by predicting an unending deficit. What has happened since, he said, is causing a sea change in thinking about the economic role of technology (Figure 3).6

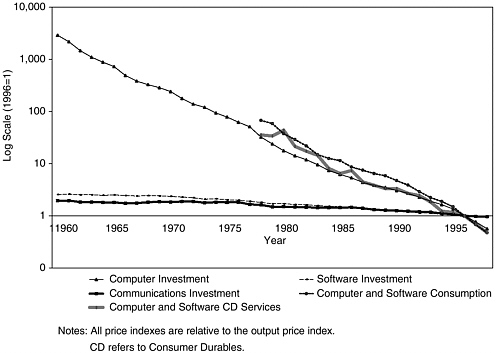

FIGURE 3 Relative Prices of Information Technology Outputs, 1960–1998.

A Sudden Point of Inflection in Prices

The first challenge in understanding this change is to integrate economic and technological information, starting with an examination of prices. Dr. Jorgenson affirmed Dr. Cerf’s insight that “once you commit something to hardware, Moore’s Law kicks in” and showed that the prices of information technology (in terms of computers and computing investments) have declined at a rate basically determined by Moore’s Law. Translated to the portion of computer technology that can be associated with Moore’s Law, this decline would be about 15 percent a year. In 1995, however, the rate of decline in computer prices suddenly doubled in a dramatic “point of inflection” that has only recently been identified.

The Dark Planet

Some of this change could be attributed to improved semiconductor technology, but this abrupt shift seems to be part of a larger trend that reflects important gaps in our information. One of these gaps is an absence of data about communications equipment. Another gap is the role of software, the “dark planet” in our information system. In fact, software investment was not even part of our economic information system until a year or so ago. As a result, we have an incomplete picture of the New Economy. This point of inflection has attracted considerable attention and emphasized the need for more complete data.

Box B: “The continued strength and vitality of the U.S. economy continues to astonish economic forecasters. A consensus is now emerging that something fundamental has changed, with ‘new economy’ proponents pointing to information technology as the causal factor behind the strong performance of the U.S. economy. In this view, technology is profoundly altering the nature of business, leading to permanently higher productivity growth throughout the economy. Skeptics argue that the recent success reflects a series of favorable, but temporary, shocks. . . .”7

Dale Jorgenson, Harvard University and Kevin Stiroh, Federal Reserve Bank of New York

The Initial Skepticism of Economists

Most economists have held the opinion that information technology is important to the economy but no more important than other components. That is, computer chips are no more important than potato chips. In fact, if the food industry were placed on the same dollar-output chart with all components of information technology, it would dwarf the output of the entire information economy.

To counter that skepticism, Dr. Jorgenson compared the growth rate of information technology with the growth of the rest of the economy. The nominal shares of output for computers have been fairly stationary, but prices have declined by about 40 percent a year. That means that the real value of computer output is going up at precisely the same 40 percent rate. Moreover, if one weights the nominal share by the growth rate of output, information technology becomes far more important. In fact, it accounts for about 20 percent of the economic growth that has taken place in the “new era” since 1995, the point of inflection he identified in the price statistics (Figure 4).

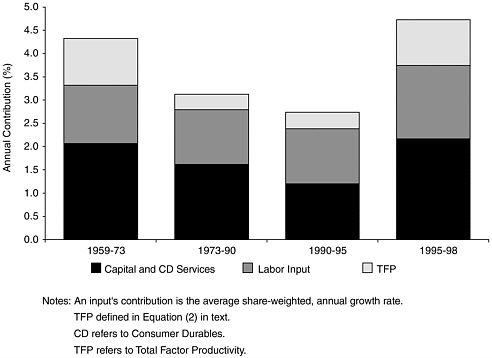

FIGURE 4 Sources of U.S. economic growth, 1959–1998.
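The share-weighting argument above can be illustrated with a minimal sketch. It assumes the simplest reading, in which a component's contribution to aggregate growth is its nominal share multiplied by its real growth rate. Only the 40 percent growth rate comes from the text; the function name, the 2 percent share, and the figures for the rest of the economy are hypothetical placeholders, not data from the workshop.

```python
def growth_contribution(nominal_share: float, real_growth: float) -> float:
    """Share-weighted contribution of one component to aggregate real growth."""
    return nominal_share * real_growth


# The 40 percent real growth rate is taken from the text. Every other
# number below is a hypothetical placeholder chosen only for illustration.
it_share, it_growth = 0.02, 0.40        # hypothetical 2% nominal share
rest_share, rest_growth = 0.98, 0.03    # hypothetical rest of the economy

it_contribution = growth_contribution(it_share, it_growth)
rest_contribution = growth_contribution(rest_share, rest_growth)

# Fraction of total growth attributable to the small, fast-growing component.
it_fraction_of_growth = it_contribution / (it_contribution + rest_contribution)

print(f"IT contribution to growth: {it_contribution:.4f}")
print(f"IT fraction of total growth: {it_fraction_of_growth:.1%}")
```

Under these placeholder inputs, a component with a very small nominal share still accounts for roughly a fifth of total growth, which is the qualitative point of the weighting argument.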

Information Technology in the New Economy

Dr. Jorgenson then traced the history of information technology growth since 1959. Through the slowdown of the 1980s and then the real slowdown of 1990, the New Economy appeared to hold nothing new. “It had been there all along but it was building momentum.” The sudden point of inflection came in 1995, when the New Economy took hold and began to change economic thinking.

New Features

Several new features have emerged. First, in assessing the impact of information technology, one must include not only computers but also communications equipment and software. Another new feature is that the consumer portion of information technology has become quite important since the advent of the personal computer.

The Role of Information Technology in Building Production Capacity

Moving to the role of information technology in building the production capacity of the economy, he noted that information technology behavior was very distinctive, as already emphasized in the data on prices. No other component of the economy shows price declines that approach the rate of information technology. That is the primary reason why the New Economy is indeed new. Information technology has become faster, better, and cheaper, attributes that have formed the mantra of the New Economy.

Some Consequences of Faster, Better, Cheaper

This faster-better-cheaper behavior means that weights applied to information technology investments must be different from weights applied to other investments. First, investments have to pay for the cost of capital, which is about 5 percent. Second, they have to pay for the decline in prices, which represents forgone investment opportunity. The rate of decline was about 15 percent a year before the point of inflection in 1995 and rose to about 30 percent after it. This rate is very large relative to the rest of the economy, where investment prices tend to trend upward rather than downward. Third, investors have to pay for depreciation, because information technology turns over every three or four years, costing another 30 percent. In all, every dollar of investment produces essentially a dollar of cost annually just to maintain its productive capacity and measure its contribution to the input (Figure 5).

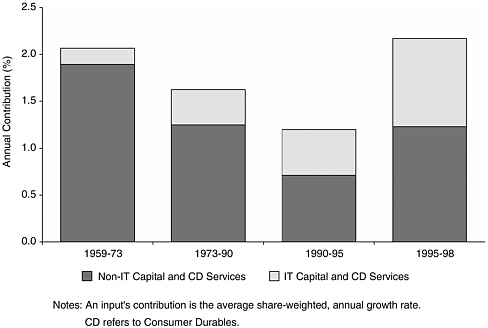

FIGURE 5 Input contribution of information technology, 1959–1998.
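The three cost components listed above can be added up in a minimal sketch. It assumes a simple additive reading (cost of capital plus price decline plus depreciation) and uses the rounded rates quoted in the text. The function name is hypothetical, and the fuller accounting behind Figure 5 may include elements this sketch omits.

```python
def annual_carrying_cost(cost_of_capital: float,
                         price_decline: float,
                         depreciation: float) -> float:
    """Annual cost per dollar of IT investment under a simple additive reading."""
    return cost_of_capital + price_decline + depreciation


# Rounded rates quoted in the text: 5% cost of capital, 30% depreciation,
# and a price decline of 15% a year before 1995 and 30% a year after.
before_1995 = annual_carrying_cost(0.05, 0.15, 0.30)
after_1995 = annual_carrying_cost(0.05, 0.30, 0.30)

print(f"Cost per dollar invested, before 1995: {before_1995:.2f}")
print(f"Cost per dollar invested, after 1995:  {after_1995:.2f}")
```

On these rounded figures the simple sum comes to well over half a dollar per dollar invested each year, far above the cost of capital alone. It is still somewhat below the "essentially a dollar" cited in the text, so the sketch should be read as an order-of-magnitude illustration only.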

Next, Dr. Jorgenson showed the same process from the input side. This is the share of capital as an input, which is approximately 10 percent. When one takes into account the fact that information technology is growing at rates that reflect the drop in prices and the rise in output, the result is the contribution of capital to the U.S. economy. In this picture, information technology contributes almost half the total. He noted that “it’s an extremely important phenomenon and it’s one with which economics must contend.” It is surprising that economists have taken so long to understand this because there is nothing new about the New Economy’s underlying features, which have been developing for years. An examination of information technology growth before 1995 would have produced a picture that was the same in its essential features.

Features of Recent Economic Growth

He then turned to another dimension of change, which is the substantial recent growth of the economy. The basic explanation for this growth is that components of information technology have been substituted for other inputs. These investments were going into sectors that are using information technology and they are displacing workers. We are deepening the capital that is part of the workers’ productive capacity, in regard not only to computers but also to software, communications equipment, and other technology.

Reminding his audience that prices of computers have been declining approximately 30 percent a year, he showed a graph that demonstrated that the growth is not limited to computers but also includes communications equipment and software. Does that mean that the substitution of cheaper computers for workers also obtains for software? He said that the answer is probably “yes.” However, this answer is not supported by statistics, which lack sufficient information about prices for software and telecommunications equipment. “But, I think it’s pretty obvious that that’s going on,” he said, “and that is an important gap in our story.”

He then showed a depiction of the sources of U.S. economic growth (capital, labor, and productivity) and urged care in the use of the term “productivity.” Productivity is often used in the sense of labor productivity, and in the industries that use information technology, labor productivity is rising rapidly. However, total factor productivity, which is the output per unit of all inputs, including capital as well as labor, is not going up in those industries. That is why the growth in total factor productivity is highly, though not exclusively, concentrated in information technology.
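The distinction between labor productivity and total factor productivity can be written in standard growth-accounting notation. The symbols are assumptions introduced here for illustration and do not appear in the workshop text: $Y$ is output, $K$ capital input, $L$ labor input, $A$ total factor productivity, and $v_K$, $v_L$ the income shares of capital and labor, with $v_K + v_L = 1$.

$$
\Delta \ln Y = v_K \,\Delta \ln K + v_L \,\Delta \ln L + \Delta \ln A
$$

Subtracting $\Delta \ln L$ from both sides and using $v_K + v_L = 1$ gives labor productivity growth:

$$
\Delta \ln \frac{Y}{L} = v_K \,\Delta \ln \frac{K}{L} + \Delta \ln A
$$

In this simplified form, labor productivity can rise through capital deepening (the first term) even when $\Delta \ln A$ is zero, which is consistent with the pattern described for industries that use information technology.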

Rapid but Transient Job Growth

Beginning in 1993, the United States experienced one of the most dramatic declines in unemployment in American history, leading to the extraordinary creation of 22 million jobs. This is not unique in historical experience, but it is a very large figure. According to business forecasters, this growth will not persist, because the growth rate of the labor force will be roughly the same as the growth rate of the working age population, which is now about half the growth rate that has obtained since 1993.

The growth story is also transient in the following sense. The level of output will remain high because of the investment opportunities in information technology. However, in the long run, the growth of investments is closely associated with the growth of output. In the absence of another point of inflection—a further acceleration in the decline in information technology prices—output growth will lead to a permanently higher level. The new growth will last only long enough to reach this new higher level and will not affect the long-term growth of the economy.

Rising Total Factor Productivity8 in Information Technology

This leads to the following question: How much of total factor productivity growth in information technology—which could lead to a permanent increase in our economic growth rate—is due to identifiable changes in technology?

Dr. Jorgenson translated the change in prices into increased productivity growth. When this is done, we see an increase in productivity due to information technology from about 2.4 percent to about 4.2 percent between 1990–1995 and 1995–1998. This means there is an increase in total factor productivity due to information technology that is about the equivalent of 0.2 percent per year. This turns out to be a very large number, because the total factor productivity growth from the period 1990–1995 was only 3.7 percent. As a result, this increase in the rate of decline of information technology prices has enormously expanded the opportunities for future growth of the U.S. economy. If that persists, as Dr. Jorgenson predicted, it will continue to raise the economic growth rate for the next decade or so.

Uncertainty About the Future

However, an additional factor represents a large gap in economic understanding. This is the moderate increase in productivity growth in the rest of the economy; “frankly we don’t know a great deal about it,” he said. If we fill in the gaps in the statistical system by imputing the rates of decrease in computer prices to telecommunications equipment and software, then it turns out that all the productivity growth in the period 1995–1998, which represents a permanent increase in the growth rate of the economy, is due to information technology. This demonstrates the wide range of uncertainty about the future of the U.S. economy with which forecasters have to contend.

Dr. Jorgenson explained the contributions of different industries to total factor productivity growth. While electronics and electronic equipment were among the leaders, he noted that the biggest contributor turned out to be the trade sector, followed by agriculture and communications. Thus, “this is not only an information technology story and this represents the challenge to the Board on Science, Technology, and Economic Policy and to all of us here, which is to try to fill in some of the gaps and understand the changes that are taking place.”

Summary

Dr. Jorgenson offered the following summary of his thesis: As recently as 1997 economists were convinced of the validity of the so-called Solow paradox—we see computers everywhere but in the productivity statistics. That has now changed dramatically. A consensus has emerged that the information technology revolution is clearly visible in productivity statistics. The visibility of this revolution has been building gradually for a long time, but something sudden and dramatic happened in 1995, when an accelerated decline in computer prices, pushed by the decline in semiconductor prices that preceded it, made obvious the contribution of information technology to productivity statistics.

Dr. Jorgenson closed by returning to what actually happened in 1995—a change in the product cycle of the semiconductor industry from three years to two years. In addition, new technologies began to emerge, such as wavelength division multiplexing, that represent rates of change that exceed Moore’s Law and have yet to be captured in our statistical system. He suggested that a better understanding of the sustainability of the growth resurgence and the uncertainties that face policy makers should be regarded as a high national priority.

DISCUSSION

Concern About Software Productivity

Dr. Cerf noted that in the telecommunications business the cost of hardware as a fraction of total investment is dropping. That has not helped a great deal because the price of software has risen dramatically in absolute terms and also as a fraction of total investment. He said that productivity in software might turn out to be key for the future productivity of a good fraction of the economy, but he is concerned because of the industry’s limited success in producing large quantities of highly reliable software. Software productivity might have risen by a factor of only three since 1950, seriously lagging hardware advances. As hardware costs continue to fall, he speculated, hardware cost becomes less important while software becomes critical. He posed the question of whether the problem of software productivity and reliability will seriously interfere with the future productivity of the New Economy.

Professor Jorgenson invited an answer from Eric Brynjolfsson, whom he characterized as “probably the world’s leading expert on this.” Dr. Brynjolfsson said that, because software productivity had not been measured nearly as accurately as hardware productivity, he had tried to measure some of the productivity improvements in a paper with Chris Kemerer.9 They found that software productivity had not improved as fast as hardware productivity, but it had still grown impressively (on the order of 10 to 15 percent) compared to the rest of the economy. Their study concerned only packaged software, which has more economies of scale than custom software. The improvements in custom software have been slower, although there has been a steady movement from custom software toward packaged software. He concluded that there is a great deal of unmeasured productivity improvement in software production, but that this is beginning to appear in statistics of the Bureau of Economic Analysis.

The True Costs of Re-engineering a Company

Bill Raduchel commented that because software production is a hardware-intensive process, falling costs of hardware will tend to reduce software costs and raise productivity. This observation was based on his experience of 10 years as a CIO, his role in re-engineering a company three times, and his consulting experience on about 50 other projects. The last re-engineering project cost $175 million, of which information technology expenditures were less than $10 million. The other $165 million appeared over three years as General and Administrative (G&A) expenses for testing, release, data conversion, training, and other activities. He said that productivity would actually decline in some cases because implementation costs show up in the statistics. Once implementation is complete, gross margins and productivity suddenly jump. The changes in business practices actually drive productivity, and these changes are set functions inside the company. Only 5 to 10 percent of the expense of any re-engineering project goes for IT; the other 90 to 95 percent is spent for training and testing and data collection, all of which by accounting rules is booked as G&A. If one looks only at company statistics, one sees an increase in G&A—overhead—rather than an investment to increase productivity.10
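The cost split in the re-engineering example above can be checked with a few lines of arithmetic. This is a minimal sketch using only the dollar figures quoted in the text. Because the text says information technology spending was "less than $10 million," the $10 million used here is an upper bound, and the variable names are hypothetical.

```python
# Dollar figures from the text, in millions of dollars.
total_cost_musd = 175.0
it_cost_upper_bound_musd = 10.0  # text: "less than $10 million"

# Remainder booked as General and Administrative (G&A) expense.
other_cost_musd = total_cost_musd - it_cost_upper_bound_musd

# IT share of the project, as an upper bound.
it_share_upper_bound = it_cost_upper_bound_musd / total_cost_musd

print(f"Non-IT (G&A) cost: ${other_cost_musd:.0f} million")
print(f"IT share of project cost (upper bound): {it_share_upper_bound:.1%}")
```

The resulting share, a little under 6 percent, falls within the 5 to 10 percent range cited for re-engineering projects in general.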

More Concerns About Software

Dr. Aho added that the issue of software is an extremely important one, and that software productivity has always been a matter of concern to software engineers. He also raised several questions: How much software is required to run the global economy? At what rate is it growing? Are there any measures of the volume of software? He raised a related issue: Once software is added to a system, it is difficult to remove it. He expressed concern that in many cases, the software people in a company are younger than the systems they are maintaining. This can create handicaps in trying to upgrade such systems.

Dr. Jorgenson commented that both the preceding observations were germane in describing what we know about software investment. He said that such figures are not available for other countries in comparable form and they exclude the phenomenon described by Dr. Raduchel. We have made some progress in our knowledge about software especially since 1999, the year when the Department of Commerce began to track it. The most significant gap in our knowledge, however, is how to use these numbers to calculate productivity. He ended the session by expressing the hope that the current workshop would shed some light on precisely this issue.