2

Accessibility of Data: The Architecture of the Archives

Over the last two decades, major changes have taken place in the way that NASA’s data are archived and distributed. These changes have resulted in more data being more accessible more rapidly to a larger number of users. Prior to the 1980s, most data were processed and interpreted by principal investigators (PIs), either working individually or as teams. Mailing data tapes to the PIs was slow, and data were sometimes lost because instrument failures were not discovered in a timely manner.1 Other data were lost because PIs had strong incentives to publish, but fewer incentives to archive and distribute the data or to send properly documented data to established archives. Even if data were archived, they were not always in convenient formats or on usable media.2 The primary facility for storing and maintaining data was the National Space Science Data Center (NSSDC), which had been operating since 1966.

The 1980s saw the introduction of data systems that would process and archive data centrally and provide a variety of services. Nevertheless, a 1982 National Research Council (NRC) report found that “the distribution, storage, and communication of data currently limit the efficient extraction of scientific results from space missions.”3 These problems were expected to worsen as data volumes continued to grow exponentially. A 1985 NRC report recommended the establishment of a network of geographically distributed data centers and active archives for dealing with the data.4 Data that require long-term maintenance because of the likelihood of future use would be held in data centers, and data being used intensely in research would be held in active archives. NASA adopted the idea and established 10 active archives by the early 1990s. Today, there are 16 major data archives, data centers, and services (see Table 2.1), which disseminate most of the data from the Earth Science and Space Science Enterprises.5

TABLE 2.1 Earth and Space Science Archives and Data Centers

| Facility | Year Established | Host Institution | Scientific Specialty |
|---|---|---|---|
| Earth Science | | | |
| ASF DAAC | 1990 | Alaska SAR Facility, University of Alaska | Sea ice, polar processes |
| EDC DAAC | 1992 | EROS Data Center, U.S. Geological Survey | Land processes |
| GSFC DAAC | 1993 | Goddard Space Flight Center, NASA | Upper atmosphere, atmospheric dynamics, global biosphere, hydrologic processes |
| LaRC DAAC | 1989 | Langley Research Center, NASA | Radiation budget, aerosols, tropospheric chemistry |
| NSIDC DAAC | 1991 | National Snow and Ice Data Center, University of Colorado | Snow and ice, cryosphere |
| ORNL DAAC | 1993 | Oak Ridge National Laboratory, U.S. Department of Energy | Biogeochemical fluxes and processes |
| PO.DAAC | 1991 | Jet Propulsion Laboratory, NASA-Caltech | Ocean circulation, air-sea interaction |
| SEDAC | 1994 | CIESIN, Columbia University | Socioeconomic data and applications |
| Space Science | | | |
| ADC | 1977 | Goddard Space Flight Center, NASA | Astronomy, astrophysics, photometry, spectroscopy |
| HEASARC | 1990 | Laboratory for High-Energy Astrophysics, Goddard Space Flight Center, NASA | High-energy astrophysics |
| IRSA | 1999 | Infrared Processing and Analysis Center, Caltech | Infrared science |
| MAST | 1997 | Multi-mission Archive, Space Telescope Science Institute | Optical/UV science |
| NED | 1989 | Infrared Processing and Analysis Center, Caltech | Extragalactic astronomy and cosmology |
| NSSDC | 1966 | Office of the Space Science Directorate, Goddard Space Flight Center, NASA | Space physics data and long-term maintenance of all space science data |
| PDS | 1991 | Jet Propulsion Laboratory, NASA-Caltech | Planetary and space science |
| SDAC | 1991 | Goddard Space Flight Center, NASA | Solar and heliospheric physics |

Data have never been as plentiful as they are now. The widespread availability of desktop computing and the ability to transfer data via the Internet have made a wide range of data quickly and easily accessible to all. Both the Earth Science and Space Science Enterprises have policies of full and open access (i.e., data are available without restriction, for no more than the cost of filling a user request), which encourages data use by the broader community.6 Proprietary periods differ by discipline, but in all cases, data are to be made available to the broader community within two years. This policy encourages rapid data processing and publication. Finally, plans for documenting and archiving data are now required of every mission. Of course, compliance with these policies varies, and some data systems and services operate more effectively than others do.

This chapter summarizes strategies for making data available in several earth and space science disciplines and identifies the approaches that appear to be most effective. The space science active archives are discipline-specific and operate independently of one another, using standards and formats developed for their specific holdings. The earth science active archives are also discipline-specific, but they use common standards and formats to permit data from multiple centers to be located and integrated. Such integration is essential for studying complex environmental processes. Space science research problems have traditionally not required the integration of data from multiple centers. However, as described in Chapter 4, this is starting to change in some disciplines.

SPACE SCIENCE DATA SYSTEMS

NASA has supported the creation of a number of data centers for astrophysics, planetary science, and solar science. Information about the active archives, data centers, and data services is summarized in Table 2.2. There is wide disparity in budgets, but it is not the size of the holdings that determines the costs of operating a data center. Instead, cost drivers include (1) the complexity of the holdings and the number of unique data sets that must be acquired, quality controlled, and maintained, with planetary science being a prime example of a discipline that collects very different types of data; (2) the demand for user services compared with automated data delivery; (3) the need to repackage the data in formats suitable for particular types of research; (4) the investment in user interfaces, visualization programs, and querying tools; and (5) the overhead imposed by the host institution.

Astrophysics Data Systems

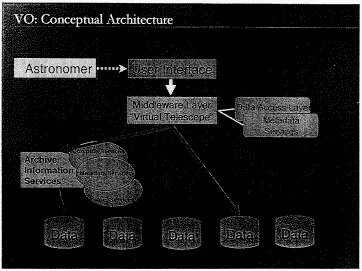

NASA has supported the successful development of an end-to-end system for managing and distributing astrophysics data. The overall architecture of the astrophysics data system is shown in Figure 2.1. Each mission has an associated science center or data facility, which is responsible for the acquisition, characterization, and documentation of the data. In a few cases, the PI may be responsible for data processing. After the initial proprietary period, if there is one, the data are placed in an active archive, from which they can be downloaded by the community. In some cases, the active archives are maintained by the mission-specific science center. In other cases, they are transferred to one of the wavelength-oriented centers: the Multi-mission Archive at Space Telescope (MAST) for optical/ultraviolet data, the Infrared Science Archive (IRSA), or the High Energy Astrophysics Science Archive Research Center (HEASARC). These centers take advantage of the economies of scale associated with providing a common archive and distribution infrastructure, and they maintain staff who are sufficiently knowledgeable about the data to assist community users. Standard algorithms are developed and made available to the community for performing functions such as extracting sources from images and classifying sources to determine whether they are stars or galaxies. Algorithm development is science-driven, with priorities determined by the astrophysics community. Long-term maintenance of the data is the responsibility of the NSSDC.

6 For example, see NASA Earth Science Enterprise Statement on Data Management, April 1999, <http://globalchange.gov/policies/agency/nasa.html>.

TABLE 2.2 Characteristics of Space Science Data Facilities and Services

| Center | Number of Users^a | Budget FY 2000 ($M) | Budget FY 2005 ($M) | Holdings FY 2000 (TB) | Holdings FY 2005 (TB) | Number of Staff |
|---|---|---|---|---|---|---|
| Data Facility | | | | | | |
| HEASARC | 8,887 | 1.5 | 1.8 | 2 | 6 | 14 |
| IRSA | 4,022 | 1.2 | 1.3 | 18 | 23 | 2.5 |
| MAST | 3,300 | 0.6 | 1.1 | 11 | 13 | 4.6 |
| NSSDC | Unknown | 5.9 | 5.9 | 20 | 35 | 58 |
| PDS | 6,000 | 4.8 | 6.1 | 1 | 76 | 27 |
| SDAC | Unknown | 0.6 | 1.1 | 3 | 5–15 | 2.5 |
| Data Service | | | | | | |
| ADC | 59,418 | 0.6 | 0.6 | 18 GB | 23 GB | 5 |
| NED | 18,382 | 0.9 | 1.5 | 0.2 | 2 | 11 |

^a Unique users who received data in FY 2000. “Unknown” indicates that the facility counts only the number of Web site hits.

NOTE: Budgets and holdings for FY 2005 are estimated.

SOURCE: Managers of the data facilities and services (see questionnaire in Appendix C).

The standard policy is to make all data openly available; for some facilities there is an initial, usually brief, proprietary period. For example, Hubble Space Telescope (HST) data become publicly available one year after they are obtained for the investigator who proposed the observations. Support for calibration, documentation, archiving, and distribution makes the policy effective; HST data are used extensively by scientists other than those who submitted the original observing proposals.

The Space Infrared Telescope Facility (SIRTF) Legacy Science program illustrates another approach to providing early and open access to data. SIRTF is a cryogenically cooled telescope with a finite lifetime, probably about three years. The usual sequence followed by an observing program (i.e., submit a proposal, observe, analyze, publish, interpret, and then submit a new proposal based on what was learned) is too long for a short mission, especially one that offers orders-of-magnitude gains in sensitivity and that will undoubtedly discover unexpected phenomena. The Legacy Science program will move data into the public domain immediately in order to guide subsequent proposals from the community.7 Funding is being made available to six science teams prior to launch in order to support the planning of large, coherent SIRTF investigations that will provide data of general and lasting importance to the astronomy community. These science teams will also collect ancillary data if they are required, and will develop postpipeline processing algorithms and software in time to be applied as soon as the SIRTF data become available.

7 The six science teams supported by the Legacy Science program were chosen through peer review. A description of the projects is given at <http://sirtf.jpl.nasa.gov/SSC/A_GenInfo/SSC_A1_Legacy_Selection.html>.

FIGURE 2.1 The architecture of the astrophysics data centers. Data are initially calibrated and stored at mission-specific centers, then transferred to centers organized by wavelength: IRSA for infrared data, MAST for optical/ultraviolet data, and HEASARC for high-energy data. These wavelength centers maintain most of the data online so that they can be readily accessed by the user community. The NSSDC provides long-term maintenance and backup storage of data. In addition, a number of services facilitate access to data. NED, for example, makes it possible to locate data on individual galaxies; SIMBAD (Set of Identifications, Measurements, and Bibliography for Astronomical Data) performs a similar service for stellar data; and the ADS (Astrophysics Data System) provides online access to most of the astronomical literature.

SOURCE: Ethan Schreier, Space Telescope Science Institute.

In addition to collecting data, astronomers have used NASA support to develop a number of integrating services that facilitate research. The Astronomical Data Center (ADC) provides Internet access to bibliographic information and abstracts for most of the published papers in space science and full articles from many journals. For an astronomer looking for relevant material in the published literature, a computer terminal, not a library, is likely to be the first stop. The NASA/Infrared Processing and Analysis Center Extragalactic Database (NED) provides online access to information on galaxies, quasars, and extragalactic radio, X-ray, and infrared sources. The database contains positions, redshifts, photometry, images, other basic data, and associated physical quantities as well as a comprehensive catalog of the published literature. NED has become, according to the most recent senior review (see Box 2.1), “an irreplaceable tool for observational and archival extragalactic research.”8

The active archives maintained by MAST, IRSA, and HEASARC are seeing heavy and growing use for research (see Figure 1.1 in Chapter 1). In the case of long-lived missions such as HST, grant support is available for research that makes use of data stored in the active archives. Awards for both new observations and for use of older data are made through peer review.

NASA’s Astrophysics Senior Review panel, which met in June 2000, found that the astrophysics active archives and data services are generally serving the community well.9 However, the panel recommended that greater attention be paid to increasing interoperability of data sets and active archives. Plans for creating such a system are described in Chapter 4.

Planetary Data Systems

Planetary science receives its data from ground-based telescopes, Earth-orbiting telescopes, and space missions to solar system objects. In addition, some complex and expensive modeling studies are viewed as community resources, and the data from these calculations are made available to the wider community. The structure and character of data from these sources vary greatly. Data from ground-based telescopes are under the control of the PI, who is responsible for data reduction, analysis, interpretation, and dissemination of results. No guidelines exist for making these data available to a wider community. On occasion they are placed in the planetary active archive (i.e., the Planetary Data System, described below), but this is the exception. Data from Earth-orbiting observatories make use of the same facilities as astrophysics data and are handled in the same way. Data from planetary missions are handled in a variety of ways.

BOX 2.1 The Senior Review Process

The senior review, held every 2 years by an ad hoc panel of researchers active in the field being reviewed, is the highest level of peer review within the Space Science Enterprise. Senior review panels consider operating missions, data analysis from current and past missions, and supporting science and data facilities. Scientific merit is the primary evaluation criterion. The panels are chartered to carry out these tasks:

The senior review process has only recently been implemented in each of the space science programs. The astrophysics program has held six senior reviews since 1998, the Sun-Earth connection program held reviews in 1997 and 2001, and the first planetary science review was held in 2001.

SOURCE: G. Riegler, NASA Office of Space Science, white paper on the “Senior Review” Process, January 2002.

Data from early planetary missions were disseminated in an ad hoc manner. No formal archives were kept, standards and formats varied widely, and in-depth and detailed knowledge of instrument and spacecraft operations was often required to use the data. Frequently, a strong working relationship with the instrument team was necessary. Many early planetary missions were exploratory, and the ability to independently browse, examine, and process large and comprehensive data sets was not a priority.

With the advent of modern instruments and the development of missions that obtain comprehensive measurements of solar system bodies, the planetary science community recognized the need for an established data system for archiving and distributing data. The Planetary Data System (PDS) has been in place for approximately 8 years, and data from all current and planned missions are required to be stored there.

The PDS consists of eight distributed discipline nodes, maintained at university or research centers across the country (see Table 2.3). The nodes were chosen by a competitive proposal process. Most of them are headed by a scientist actively working in the subject area of the node, and most have an advisory committee that meets regularly to review performance, goals, and developments in their area. Some of the discipline nodes (e.g., the Small Bodies Node) consist of several subnodes. A central PDS node at the Jet Propulsion Laboratory links the discipline nodes.10

The PDS facilitates access to planetary data from both ongoing and previous planetary missions. For example, users can access either original experimental data records or derived imaging products from the PDS Imaging Node over the Internet. The data can be searched either by spacecraft mission or by planetary target.11 Although the bulk of its inventory consists of spacecraft data, the PDS also stores some ground-based telescope data, and even some theoretical model output.

10 See <http://pds.jpl.nasa.gov> for descriptions and links to individual nodes.

11 See <http://www-pdsimage.jpl.nasa.gov/PDS/public/jukebox.html>.

TABLE 2.3 Planetary Data and Image Facilities

| Facility | Location |
|---|---|
| PDS Nodes | |
| Central Node | Jet Propulsion Laboratory, Pasadena, Calif. |
| Planetary Atmospheres Node | New Mexico State University, Las Cruces, N.Mex. |
| Geosciences Node | Washington University, St. Louis, Mo. |
| Imaging Node | Jet Propulsion Laboratory, Pasadena, Calif., and U.S. Geological Survey, Flagstaff, Ariz. |
| Navigation and Ancillary Information Facility | Jet Propulsion Laboratory, Pasadena, Calif. |
| Planetary Plasma Interactions Node | University of California, Los Angeles, Calif. |
| Rings Node | NASA Ames Research Center, Moffett Field, Calif. |
| Small Bodies Node | University of Maryland, College Park, Md. |
| U.S. RPIFs | |
| Center for Information and Research Services | Lunar and Planetary Institute, Houston, Tex. |
| Northeast Regional Planetary Data Center | Brown University, Providence, R.I. |
| Pacific Regional Planetary Data Center | University of Hawaii, Honolulu, Hawaii |
| Regional Planetary Image Facility | National Air and Space Museum, Washington, D.C. |
| Regional Planetary Image Facility | Washington University, St. Louis, Mo. |
| Regional Planetary Image Facility | Jet Propulsion Laboratory, Pasadena, Calif. |
| Regional Planetary Imaging Facility | U.S. Geological Survey, Flagstaff, Ariz. |
| Space Imagery Center | University of Arizona, Tucson, Ariz. |
| Space Photography Laboratory | Arizona State University, Tempe, Ariz. |
| Spacecraft Planetary Imaging Facility | Cornell University, Ithaca, N.Y. |
| RPIF Centers in Other Countries | |
| Israeli Regional Planetary Image Facility | Ben-Gurion University of the Negev, Beer-Sheva, Israel |
| Phototheque Planetaire | Universite Paris-Sud, Orsay, France |
| Nordic Regional Planetary Image Facility | University of Oulu, Oulu, Finland |
| Planetary and Space Science Centre | University of New Brunswick, Fredericton, Canada |
| Regional Planetary Image Facility | University College London, London, United Kingdom |
| Regional Planetary Image Facility | Institute of Space Sensor Technology and Planetary Exploration, Berlin, Germany |
| Regional Planetary Image Facility | Institute of Space and Astronomical Sciences, Sagamihara-Shi, Kanagawa, Japan |
| Southern Europe Regional Planetary Image Facility | Consiglio Nazionale delle Ricerche, Istituto di Astrofisica Spaziale, Area Ricerca di Roma Tor Vergata, Rome, Italy |

The recent change in NASA’s approach to planetary missions—from large, expensive, and infrequent missions such as Voyager, Galileo, and Cassini, to smaller and more frequent missions such as those in the Mars and Discovery programs—implies that the number of missions contributing data to the PDS will increase substantially in the near future. Moreover, because of advances in instrument technology, the new, smaller missions may yield larger volumes of data than those from the historic flagship missions. For these reasons, demands on the PDS are anticipated to grow exponentially in the near future (see Table 2.2).
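To give a rough sense of scale for the growth implied by Table 2.2, the sketch below converts the FY 2000 and estimated FY 2005 holdings of three facilities into an equivalent yearly growth factor. It assumes, purely for illustration, that holdings grow by the same factor every year; the table itself reports only the two endpoints, and the function and variable names are hypothetical.

```python
# Holdings in terabytes, taken from Table 2.2 (FY 2005 values are estimates).
HOLDINGS_TB = {
    "HEASARC": (2.0, 6.0),
    "NSSDC": (20.0, 35.0),
    "PDS": (1.0, 76.0),
}
YEARS = 5  # FY 2000 to FY 2005


def annual_growth_factor(start_tb: float, end_tb: float, years: int = YEARS) -> float:
    """Constant yearly multiplier that takes start_tb to end_tb in `years` years.

    Assumption: growth is geometric (same factor each year). This is an
    illustrative simplification, not something the table states.
    """
    return (end_tb / start_tb) ** (1.0 / years)


for center, (fy2000, fy2005) in HOLDINGS_TB.items():
    factor = annual_growth_factor(fy2000, fy2005)
    print(f"{center}: holdings multiply by about {factor:.2f} per year")
```

Under this assumption the PDS holdings would more than double each year, a much steeper rise than that of the other two facilities shown.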

The standard policy is to make all planetary data openly available both to scientists and to the public. In general, the proprietary period during which new data are only available to the science team members has decreased with time. The large planetary missions that typified the 1970s (e.g., Viking and Voyager) had proprietary periods of up to 18 months, which often led to considerable frustration among members of the science community who were not part of a flight instrument team. In contrast, the more frequent, smaller planetary missions have short or no proprietary periods. For example, the Mars Global Surveyor (MGS) spacecraft, in orbit around Mars since 1997, has no proprietary period; instead, there is a brief data validation period during which the science teams verify data quality prior to data releases at roughly 6-month intervals. The large quantity of data generated by the MGS instruments is released via the Internet, either at the appropriate PDS nodes or at a dedicated site maintained by the instrument science team. CD-ROMs of the same data are available a few months later from the PDS node. However, the steadily increasing data volumes will soon make it impractical to distribute all planetary data on CD-ROMs (or even DVDs).

The PDS nodes have evolved with the increasingly sophisticated needs of both researchers and the general public and with the rapidly growing volume of planetary data. The distributed nature of the PDS has both advantages and disadvantages. On the one hand, each node is tailored to the specific requirements of its research community and in that sense is highly responsive. Some nodes even distribute ancillary data (for example, absorption cross sections) and software that are particularly useful to their communities. On the other hand, the existence of a large number of nodes does mandate continued oversight to ensure coordination and minimal redundancy.

The PDS has fundamentally changed the manner in which NASA planetary data are distributed. With the advent of this data system, fully calibrated and documented data can be retrieved remotely by researchers who have no relationship with the instrument PI. Moreover, the entire system inventory can be searched to discover data on a particular object or topic. The increase in availability and ease of retrieval provided by the PDS is a substantial benefit. However, along with automated distribution comes a decrease in the degree of guidance and interaction on research questions between data users and senior scientists associated with each spacecraft mission.

Another type of resource available to planetary scientists is the Regional Planetary Image Facility (RPIF). A network of 10 RPIFs was established in the United States in the early 1980s to help scientists obtain planetary data required for their research projects. NASA provides the RPIFs with copies of all planetary imaging data, along with annual support for data storage and maintenance. There are also 8 RPIFs in other countries, which only receive data (Table 2.3). In addition to serving scientists, the RPIFs serve as a resource to the local press, students, teachers, and the general public looking for information on planetary imaging data. Helping interested individuals (both scientists and nonspecialists) to find and obtain data appropriate for their needs is a growing role for the RPIFs. Although these facilities have not yet been reviewed, NASA’s Planetary Geology and Geophysics program has initiated a rotating schedule of reviews that will evaluate the performance of each RPIF every 5 years.

Solar and Space Physics Data Systems

Data related to the Sun and its influence on the interplanetary and Earth environment are managed by a variety of NASA-sponsored organizations, including the Solar Data Analysis Center (SDAC), PI and mission facilities, the National Solar Observatory, and the Stanford Solar and Heliospheric Observatory data center.12 The NSSDC is both the active archive for space physics data (through the Space Physics Data Facility) and the permanent data center for U.S. solar and space physics data.

Data from these organizations as well as from other observatories and facilities around the world are increasingly available via the Internet. Proprietary periods are decreasing, and most solar and space physics data are now available a year or less after they were collected. Some observations, such as images from the Solar and Heliospheric Observatory, are even provided to scientists and the general public in real time.

The use of the Internet to disseminate solar and space physics data has led to a significant improvement in the ability of scientists to access data from different instruments and ground stations. However, many valuable data sets, particularly those held by individual PIs, remain offline. Moreover, as pointed out by a recent NRC report, searching across centers remains problematic, particularly for researchers who need to combine data from several archives.13 Although systems such as the Space Science Data System have been proposed to address this problem,14 the systems have largely lapsed, and users must rely on Web links provided by the individual centers to find data.

Solar Data Analysis Center

The Solar Data Analysis Center at Goddard Space Flight Center is the active archive for solar physics. It serves as the distribution center for a large and growing solar database and provides network access to data and images from such missions as the Solar and Heliospheric Observatory, Yohkoh, and the Transition Region and Coronal Explorer. Much of the data is distributed via network-attached servers with no interactive operating system. According to the archive manager, this approach is necessary for staying within a small budget (see Table 2.2). A senior review held in August 2001 found that the SDAC is an excellent example of a small discipline active archive that operates very cost-effectively and provides major services to the solar physics community.15

12 NOAA centers, such as the National Geophysical Data Center and the World Data Center for Solar Terrestrial Physics, also manage U.S.-collected solar physics data.

13 National Research Council, 1998, Ground-Based Solar Research: An Assessment and Strategy for the Future, National Academy Press, Washington, D.C., 47 pp. + 11 appendixes.

14 Final Report of the Task Group on Science Data Management to the Office of Space Science, NASA, Jeffrey Linsky, chair, October 23, 1996, 61 pp.

15 Senior Review of the Sun Earth Connection Missions Operations and Data Analysis Programs, August 29, 2001, <http://spacescience.nasa.gov/admin/divisions/ss/SECSeniorReview2001.pdf>.

Space Plasma Physics

The PI team historically has been responsible for all aspects of handling space physics data derived from the instrument that it built. In the early years of space science, proprietary rights to the data lasted for 2 years after their receipt, and data analysis funding officially was planned for 2 years after launch. Data quality and level of processing varied greatly across the various PI data nodes. PI teams were encouraged to submit their data to the NSSDC for long-term maintenance. Generally, no time interval, medium, or format was specified for this submission.

Because of NASA encouragement and support in the more than 40 years since the beginning of the space age, more standardization has been introduced into the data management process. Instrument teams remain the focal point of all data-processing requirements and their implementation, and they are responsible for processing the data, developing higher-level data products, storing data, and maintaining accessibility. Not only are the data quality and level of processing now more uniform across PI data nodes, but the data products are also much more refined and sophisticated. PI teams also contribute processed low-resolution, “quick-look” data to missionwide databases or PI Web sites that are accessible in near-real time to the research community. However, high-resolution data, which are needed to study fundamental processes governing space plasmas, are not always widely available, owing to lack of funds. For example, a number of high-resolution data sets from the International Solar Terrestrial Program are available on neither NSSDC nor PI Web sites.16 Investigators are required to submit the full data set to NSSDC, although this requirement has not always been enforced, and resources have generally not been made available to do this job adequately. In some instances, an extended version of quick-look data is held in other archival systems (e.g., Galileo particles and field data are submitted to and held in the PDS).

Although some prelaunch support is available for planning and development of data-handling software, it is generally insufficient to provide fully usable data production immediately after launch. Postlaunch data processing and analysis are usually funded for 2 years after launch. While initial results and discoveries appear during this period, the primary scientific return occurs in the following several years, after confidence has been established in the data-processing software.

EARTH OBSERVING SYSTEM DATA AND INFORMATION SYSTEM

Because many of the important research problems studied by earth scientists are multidisciplinary in nature, the active archives of NASA’s Earth Science Enterprise were designed to be interoperable at the outset. The Earth Observing System (EOS) Data and Information System (EOSDIS) was built to process, disseminate, and archive data from the entire EOS program, with the goal of creating “one-stop shopping” for researchers interested in studying the Earth as a system.17 The objectives of this ambitious program include the following:

- Facilitate the creation of standard data products, thereby permitting the immediate scientific goals of the science teams to be realized.
- Catalyze the preparation of a wide range of secondary data sets and information products that combine information from different satellites and in situ sources, thereby stimulating collaborative, multidisciplinary research.
- Make such products readily accessible to the broader scientific community.
- Preserve data in usable forms for future generations of scientists.

16 For example, high-resolution data sets from the 3DP plasma instrument on the Wind spacecraft, the energetic particles instrument on the Geotail spacecraft, and the Hydra Plasma and Energetic Particles instruments on the Polar spacecraft are not available from NSSDC or PI Web sites. See <http://nssdc.gsfc.nasa.gov/space/>.

17 For a history of EOSDIS, see National Research Council, 1998, Review of NASA’s Distributed Active Archive Centers, National Academy Press, Washington, D.C., 233 pp.

As originally conceived, EOSDIS had two main elements: (1) the EOSDIS Core System (ECS), which was intended to perform a variety of functions—from spacecraft command and control to data acquisition, processing, distribution, and archiving; and (2) a network of eight distributed active archive centers (DAACs) to manage the data and provide user services (see Table 2.1). However, delays in the ECS and problems with the system design led to the adoption of back-up plans for processing data and creating data products. Data from most current Earth Science Enterprise (ESE) missions are being processed by science computing facilities (SCFs) using software designed and implemented for the task at hand, not by the ECS (see Table 2.4).18

The DAAC and SCF components of the system are working well. Users can obtain a wide range of data and products, and the use of common formats and standards permits the integration of different types and scales of data. In general, the production of data sets from all the currently operational missions (e.g., Landsat 7, Terra, the Tropical Rainfall Measuring Mission) is being performed in a timely fashion, including both level 1 and higher data products from each of the instruments. Each day, more than a terabyte (10^12 bytes) of data is added to the EOSDIS archive, and 2 terabytes of products are distributed to the community through the DAACs. In addition to fulfilling the needs of scientific users, the DAACs are producing a variety of data products for use by nonscientists, including farmers and urban planners. These data products have already garnered a large and growing user community (see Table 2.5).

TABLE 2.4 Processing Summary for EOSDIS Instruments

| Mission | Instrument | Level 0 Processing^a | Level 1 Processing^a | Level 2 Processing^a |
|---|---|---|---|---|
| Current Missions | | | | |
| ERBS | ERBS SAGE | LaRC Instrument SCF | LaRC Instrument SCF | LaRC Instrument SCF |
| TOMS-EP | TOMS | Instrument SCF | Instrument SCF | Instrument SCF |
| TOPEX/Poseidon | NASA ALT | Instrument SCF | Instrument SCF | Instrument SCF |
| UARS | All | CDPF | CDPF | CDPF |
| TRMM | TIM | TSDIS | TSDIS | TSDIS |
| | PR | TSDIS | TSDIS | TSDIS |
| | VIRS | TSDIS | TSDIS | TSDIS |
| | CERES | LaTIS | LaTIS | LaTIS |
| | LIS | LIS SCF | LIS SCF | LIS SCF |
| SeaStar | SeaWiFS | Instrument SCF | Instrument SCF | Instrument SCF |
| Landsat 7 | ETM+ | LPGS | LPGS | N/A |
| Terra | MODIS | EDOS | GSFC DAAC/ECS | MODAPS |
| | CERES | EDOS | LaTIS | LaTIS |
| | MOPITT | EDOS | MOPITT SIPS | MOPITT SIPS |
| | MISR | EDOS | LaRC DAAC/ECS | LaRC DAAC/ECS |
| | ASTER | EDOS | ERSDAC Japan | EDC DAAC/ECS |
| ACRIMSat | ACRIM | Instrument SCF | Instrument SCF | Instrument SCF |
| QuikSCAT | Sea Winds | Instrument SCF | Instrument SCF | Instrument SCF |
| Upcoming Missions | | | | |
| Meteor | SAGE III | Instrument SCF | Instrument SCF | Instrument SCF |
| ADEOS II | SeaWinds | Instrument SCF | Instrument SCF | Instrument SCF |
| Jason | Poseidon-2/DORIS/JMR | Instrument SCF | Instrument SCF | Instrument SCF |
| Aqua | MODIS | EDOS | GSFC DAAC/ECS | MODAPS |
| | AIRS, HSB, AMSU | EDOS | GSFC DAAC/ECS | GSFC DAAC/ECS |
| | AMSR-E | EDOS | NASDA | Instrument SCF |
| | CERES | EDOS | LaTIS | LaTIS |
| SORCE | SOLSTICE | Instrument SCF | Instrument SCF | Instrument SCF |
| ICESat | GLAS | EDOS | Instrument SCF | Instrument SCF |

SOURCE: V. Griffen, Science Operations Manager, Goddard Space Flight Center, August 2001.

TABLE 2.5 Characteristics of DAACs

| Center | Number of Users^a | Budget FY 2000 ($M) | Budget FY 2005 ($M) | Holdings FY 2000 (TB) | Holdings FY 2005 (TB) | Number of Staff |
|---|---|---|---|---|---|---|
| ASF | 736 | 13.3 | 6.8 | 239 | 712 | 68 |
| EDC | 20,004 | 4.1 | 11.2 | 74 | 3148 | 87 |
| GSFC | 47,144 | 5.4 | 12.7 | 154 | 1465 | 131 |
| LaRC | 3,570 | 10.5 | 12.5 | 39 | 610 | 105 |
| NSIDC | 1,225 | 3.5 | 5.2 | 5 | 72 | 39 |
| ORNL | 1,973 | 2.4 | 3.0 | 0.3 | 3 | 13 |
| PO.DAAC | 15,657 | 5.4 | 6.1 | 8 | 42 | 30 |
| SEDAC | 17,000 | 3.0 | 4.0 | 0.1 | 0.2 | 27 |

SOURCE: Managers of the DAACs (see questionnaire in Appendix C).

In contrast to the DAAC and SCF components, the capabilities of the ECS component of EOSDIS fall short of those originally envisioned. Early operational problems included the following: (1) processing delays or failures were caused by bit flips in data and by system outages and anomalies; (2) data gaps and missing data files hindered the ability to process the science data routinely; (3) the DAACs and instrument teams promoted new science algorithms, which contributed to the processing backlog; (4) the need for reprocessing was greater than anticipated; and (5) commercial off-the-shelf (COTS) and system tuning issues decreased system stability.19 NASA has worked diligently to correct these issues, but the capacity of EOSDIS to process and distribute data has not been sufficient to meet all of the expectations of the earth science community. As noted by the Office of the Inspector General, “The ECS contract has been problematic with significant delays. The entire ECS as originally envisioned is no longer affordable.”20 Accordingly, Goddard Space Flight Center issued a request for proposal in 1998 to restructure the contract. The restructuring defers and/or eliminates some lower-level data processing functionality; provides less user support; reduces production capacity by 25 percent; discards interim products after 6 months; reduces distribution capacity to users by one-third; reduces timeliness of data distribution; and permits DAACs and SCFs to take on some ECS functions. The increase in the estimated cost of the ECS contract is $98.8 million for 3 years, which includes the reduced requirements; inclusion of a new flight segment approach for Terra; the addition of the control center requirement for Aqua; and the addition of science data management for Aqua, Aura, and ICESat.21 The total award fee to the ECS contractor was decreased 12 percent owing to poor performance in both cost and technical management.

The primary reason for the shortcomings in the ECS capabilities is probably that the ECS software is far too complicated ever to achieve a high degree of reliability. Discussions with DAAC managers and ECS developers suggest that although the ECS software has become increasingly stable over the 22 months since its initial release, it remains fragile. For example, the Moderate Resolution Imaging Spectroradiometer ECS data flow had been running with a 90 to 92 percent uptime prior to the release of the ECS 6A04 software in the summer of 2001.22 After the new software was installed, uptime dropped to only 84 percent, but gradually returned to previous levels as software patches were implemented. Such fragility is symptomatic of a system that is too large (there are currently over 1.2 million lines of code and more than 40 COTS packages) and too complex to be properly tested, maintained, and extended. For instance, testing of the ECS release 6A04 software was incomplete, partly because of the prohibitive expense of testing the performance of the system and partly because of the requirement to rush software to operations to meet the schedule.

It is not clear what should be done with the ECS software in the future. Data streams that are currently captured or processed using the ECS software will continue for several more years, so this software will have to be maintained. On the other hand, a number of tasks handled by the ECS software could possibly be performed more reliably and/or cost-effectively using other existing software.23 Similarly, capabilities not currently part of the ECS could be provided by other software. For example, the Land Rapid Response Project is producing level 1B MODIS products within three to five hours of receiving level 0 granules.24 Since the focus of the data pipeline is to produce level 3 fire products for use by the National Oceanic and Atmospheric Administration (NOAA) and the U.S. Forest Service, level 0 granules corresponding to portions of the Earth covered with water are currently discarded. However, according to a PI on the project, the addition of a small increment of computing capability (a few more nodes) could enable the pipeline to produce level 1B MODIS data sets for the entire Earth with the same degree of delay.25 Further modifying the software to permit receipt of broadcasts directly from the Terra satellite could eventually lead to a worldwide network of sites generating MODIS (and other) products in near real time. These concepts have yet to be tested, and it remains to be seen whether the system architecture and operations plan of the Land Rapid Response Project would be scalable. Nevertheless, systems that grow from small, focused efforts on the part of many individuals and organizations are commonly more successful than top-down, centralized approaches because they are simpler and more flexible.26 A recent NRC report laid out the following principles for creating small, evolvable information systems:27

- Because the analysis of long-term data sets must be supported in an environment of changing technical capability and user requirements, any data system should focus on simplicity and endurance.

- Adaptability and flexibility are essential for any information system if it is to survive in a world of rapidly changing technical capabilities and science requirements.

- Experience with actual data and actual users can be acquired by starting to build small, end-to-end systems early in the process. EOS data are available now for prototyping new data systems and services….

The task group agrees with these principles and encourages NASA to adopt them in future data and information systems.

20 Office of Inspector General, 1999, Performance Evaluation Plan for the Earth Observing System Data and Information System Core System Contract, IG-99–038, September 8.

21 Martha Maiden, Code YF Data Network Manager, personal communication, February 2002. The $100 million was allocated to the ECS contractor and the Science Computing Facilities that wished to process data.

22 Steven Kempler, Manager, Goddard DAAC, personal communication, August 2001 and March 2002.

23 For example, according to the Langley DAAC manager, the LaTIS software, which is already being used to handle data from the Clouds and the Earth’s Radiant Energy System instruments on Terra and TRMM, could have been used for the Multi-angle Imaging Spectroradiometer and Measurements of Pollution in the Troposphere instruments.

24 In contrast, the Goddard DAAC normally requires 24 to 48 hours. See <http://rapidfire.sci.gsfc.nasa.gov/index.html>.

STRATEGIC EVOLUTION OF ESE DATA SYSTEMS

NASA recognizes the problems associated with the ECS and is developing a strategy for the evolution of the network of data systems and service providers that support the Earth Science Enterprise.28 The next-generation system is called SEEDS (Strategic Evolution of ESE Data Systems, formerly known as NewDISS). SEEDS is intended to support all phases of the data management life cycle: (1) acquisition of sensor, ancillary, and ground validation products necessary for processing; (2) processing of data; (3) generation of value-added products via subsetting, format translation, and data mining; (4) archiving and distributing products; and (5) providing search, visualization, subsetting, translation, and order services to assist users in identifying, selecting, and acquiring products of interest. Study teams drawn from the user community are being engaged to identify options, define scope, and establish schedule requirements. It is intended that SEEDS will be managed and implemented as an open and distributed information system architecture under a unifying framework of standards, core interfaces, and levels of service.

SEEDS faces a number of major challenges, including determining how to organize and manage a distributed system and striking a balance between giving science teams appropriate levels of freedom in developing and operating science data systems and maintaining NASA agency accountability for data stewardship and accessibility.

The preformulation phase of SEEDS was initiated in 1998, and the formulation phase is scheduled to conclude in 2003. The SEEDS program has solicited lessons learned from EOSDIS, which NASA summarized for the task group as follows:29

- Information technology outpaces the time required to build large, operational data systems and services. Technology is now changing at such a rapid pace that it is impossible to predict technological solutions even 2 years into the future. And, in contrast with 10 to 15 years ago, government information systems no longer drive the development of hardware and software; NASA is now just another customer trying to capture the attention of the vendors.

- Data systems and services should leverage emerging information technology and not try to drive it. Since NASA can no longer drive commercial hardware and software development, SEEDS must be open to the infusion of new technologies developed by industry. A few years ago many of these industries were completely unassociated with digital information management but are now leaders in the field.30

- A single data system should not attempt to be all things to all users. The ESE research and applications community is extraordinarily diverse, ranging from scientific researchers to for-profit companies, policy makers, government operations, and the general public. The standards and practices governing the acquisition, archiving, documentation, distribution, and analysis of earth science data vary by user group as well as by scientific discipline. SEEDS must recognize and embrace this tapestry of disciplines and subcommunities; there is no one-size-fits-all solution to the myriad data management needs of the community as a whole.

- A single, large design-and-development contract stifles creativity. Given the complexity of the required systems and services, the volatility of the technology, and the potential for changes in scientific priorities, centralized development is too inflexible and increases the risk that large portions of the data system will be vulnerable to single-point failures. Such an approach is also prone to “monopolistic” tendencies and does not encourage the kind of diversity and variety found in a competitive marketplace.

- Future information systems will be distributed and heterogeneous in nature. Management tools and practices must encourage a flexible, distributed, and loosely coupled network of data providers, even if this requires a fundamentally new management approach within the NASA culture.

The task group agrees with these conclusions and notes that many of these “lessons learned” describe the more evolutionary approach implemented successfully in the development to date of the Astrophysics Data System.

NASA had not yet completed its plans for SEEDS prior to completion of this report. Therefore, the task group cannot comment on whether or not SEEDS will meet the needs of the earth science community. Also, no information was available about what role the ECS software will play in the SEEDS effort. Will it be replaced? Evolved? Or simply maintained in its current state? The task group is concerned, however, by the timelines that were provided for the SEEDS effort.31 The timeline specifies five years of planning and seven years of implementation. This extended time for both phases is inconsistent with the rapid timescales for the evolution of relevant technologies and appears inconsistent with the first of the EOSDIS lessons listed above.

Recommendation. The ECS (the EOSDIS Core System) software should be placed in a maintenance mode with no (or very limited) further development until a concrete plan for the follow-on system, SEEDS (Strategic Evolution of ESE Data Systems), has been formulated, its relationship to ECS defined, and the plan reviewed by an external advisory group. This plan should be measured against the lessons learned from EOSDIS and from the experience in other disciplines, and should include provisions for rapid prototyping and an evolutionary and distributed approach to implementing new capabilities, with priorities established by the scientific and other user communities.

LONG-TERM MAINTENANCE OF DATA

The growing body of NASA data is becoming an increasingly powerful tool for identifying and monitoring long-term changes in objects as nearby as rain forests and as distant as supernovae near the edge of the visible universe. Long-term maintenance requires much more than just making sure that the data are preserved and that the storage media are kept up to date.32 In order to ensure that archived data sets can continue to be used in the future, they must be properly documented, stored with data access and processing software, and migrated regularly to new media, operating systems, and so on. Only by continually reprocessing all data sets and data products can one ensure that the data will be viable 50 years from now.
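One routine part of migrating data to new media is confirming that each copy is bit-for-bit identical to the original. The following is a minimal sketch of such a fixity check, not a description of any NASA system: the function names (`sha256_of`, `migrate_with_fixity`, `demo`), the file names, and the choice of SHA-256 are all assumptions made for illustration.

```python
import hashlib
import shutil
import tempfile
from pathlib import Path


def sha256_of(path: Path, chunk_size: int = 1 << 20) -> str:
    """Return the SHA-256 hex digest of a file, reading it in chunks."""
    digest = hashlib.sha256()
    with path.open("rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def migrate_with_fixity(source: Path, destination: Path) -> str:
    """Copy a file to new storage and confirm the copy is bit-identical.

    Hypothetical helper: a real archive would also record the checksum
    alongside the data set's documentation.
    """
    expected = sha256_of(source)
    destination.parent.mkdir(parents=True, exist_ok=True)
    shutil.copy2(source, destination)
    actual = sha256_of(destination)
    if actual != expected:
        raise IOError(f"checksum mismatch after migrating {source.name}")
    return expected


def demo() -> bool:
    """Migrate one made-up file between two temporary directories."""
    with tempfile.TemporaryDirectory() as tmp:
        old = Path(tmp) / "old_media" / "granule.dat"
        old.parent.mkdir()
        old.write_bytes(b"\x00\x01illustrative sample bytes")
        new = Path(tmp) / "new_media" / "granule.dat"
        checksum = migrate_with_fixity(old, new)
        return new.read_bytes() == old.read_bytes() and len(checksum) == 64
```

A check of this kind only establishes that the bits survived the move; it does not address the documentation and software dependencies that the paragraph above identifies as equally necessary.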

NASA data are federal records and thus must comply with standards developed by the National Archives and Records Administration (NARA). NARA provides guidance to federal agencies on the management of records, the retention and disposition of records, and the storage of records in centers from which agencies and their agents can retrieve them.33 In addition, NARA collaborates with other federal agencies and universities to develop new archiving approaches. Examples include the Persistent Archive Initiative and the Methodologies for Preservation and Access of Software-dependent Electronic Records, which are being carried out by the San Diego Supercomputer Center with NARA funding.34 The goal of the Persistent Archive Initiative is to develop an information architecture that can evolve with changes in technologies into the indefinite future. Work on maintaining the ability to discover and access digital objects while the supporting hardware and software systems evolve is of particular importance to NASA, since so many NASA mission data sets are dependent upon software systems. The Methodologies project is concerned with developing software-independent tools for archiving and accessing data.35 Central to this work is the development of criteria for infrastructure-independent representations of electronic documents (including spatial data), which are key to providing access to complex scientific data over time. This project is also contributing to major grid projects such as the National Virtual Observatory (see Chapter 4) and NASA’s Information Power Grid.

31 Briefing to the task group by Steven Wharton, NewDISS program formulation manager, July 30, 2001.

32 A number of NRC reports have discussed the rationale and provided principles for the long-term maintenance of scientific data. For example, see National Research Council, 1982, Data Management and Computation: Volume 1: Issues and Recommendations, National Academy Press, Washington, D.C., 167 pp.; National Research Council, 1995, Preserving Data on Our Physical Universe, National Academy Press, Washington, D.C., 67 pp.; National Research Council, 2000, Ensuring the Climate Record from the NPP and NPOESS Meteorological Satellites, National Academy Press, Washington, D.C., 51 pp.

33 See <http://www.nara.gov/records/>.

34 See <http://www.sdsc.edu/NARA/>.

Attention must also be paid to international standards, because many countries collect data used in U.S. earth and space science studies. An example of such standards is the International Organization for Standardization reference model for long-term maintenance of data sets, the Open Archival Information System (OAIS), which was recently developed by the Consultative Committee for Space Data Systems.36 The members of this committee included representatives from NASA and space agencies in Europe and Japan. A 2000 NRC report found that the OAIS model is “important for digital preservation standards and strategies because it defines the functions and requirements for a digital archive through an international standard that vendors and producers of digital information can reference.”37

The OAIS reference model addresses a full range of archival information-preservation functions, including ingest, archival storage, data management, access, and dissemination.38 It covers the migration of digital information to new media and forms, the data models used to represent the information, the role of software in information preservation, and the exchange of digital information among archives. Both internal and external interfaces to the archive functions are identified, as well as a number of high-level services at these interfaces. Finally, the reference model defines a minimal set of responsibilities that an archive must meet to be called an OAIS, and it describes an optimum archive in order to provide a broad set of useful terms and concepts.
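To make the division of functions concrete, the following toy sketch maps ingest, archival storage, data management, and access and dissemination onto a small in-memory class. It is an illustration only: the class and method names (`ArchivePackage`, `ToyArchive`, `ingest`, `search`, `disseminate`) are hypothetical and are not taken from the reference model, which specifies responsibilities rather than code.

```python
from dataclasses import dataclass, field


@dataclass
class ArchivePackage:
    """Content plus the documentation needed to interpret it later."""
    identifier: str
    content: bytes
    description: str   # what the data are
    format_note: str   # how to read the bytes


@dataclass
class ToyArchive:
    """Hypothetical in-memory archive; every name here is illustrative."""
    storage: dict = field(default_factory=dict)  # archival storage
    catalog: dict = field(default_factory=dict)  # data management

    def ingest(self, package: ArchivePackage) -> None:
        """Accept a package only if it arrives with its documentation."""
        if not package.description or not package.format_note:
            raise ValueError("undocumented data cannot be ingested")
        self.storage[package.identifier] = package
        self.catalog[package.identifier] = package.description

    def search(self, keyword: str) -> list:
        """Access: find identifiers whose description mentions the keyword."""
        needle = keyword.lower()
        return sorted(k for k, text in self.catalog.items() if needle in text.lower())

    def disseminate(self, identifier: str) -> ArchivePackage:
        """Dissemination: hand back the content together with its documentation."""
        return self.storage[identifier]
```

The one design choice worth noting is that `ingest` rejects undocumented content, mirroring the point made throughout this chapter that data without documentation are of limited use to later investigators.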

The earth and space sciences have taken different approaches to long-term maintenance of data. Space science data are maintained indefinitely at the NSSDC. In contrast, earth science data will be transferred to agencies mandated to archive data—the U.S. Geological Survey (USGS) and NOAA—15 years after collection. Both approaches entail a risk to the usefulness of data to future generations of scientists, as detailed below.

Space Science Data and the National Space Science Data Center

The mission of the NSSDC is “to provide data and information from space flight experiments for studies beyond those performed by the principal investigators.”39 The NSSDC acts as the active archive for most space physics data and selected long-wavelength astrophysics data. Much of its current emphasis is on serving the heliospheric, magnetospheric, and ionospheric communities. The NSSDC also serves as the data center for long-term maintenance of data from all other space science missions. It receives data directly from spacecraft project data facilities or their PIs as well as from other space science active archives (e.g., PDS nodes, HEASARC). However, as noted above, only a fraction of data from these missions is actually contributed to the NSSDC; scientifically important data are commonly held by the PIs or active archives. Traditionally, NSSDC has archived only processed data, but it is increasingly being asked to include raw data and software.

35 See <http://www.sdsc.edu/NHPRC>.

36 See <http://www.ccsds.org/>.

37 National Research Council, 2000, LC21: A Digital Strategy for the Library of Congress, National Academy Press, Washington, D.C., pp. 112.

38 See <http://www.ccsds.org/documents/pdf/CCSDS-650.0-R-2.pdf>.

39 NSSDC Charge and Service Policy, <http://nssdc.gsfc.nasa.gov/nssdc/cands_policy.html>.

It has been required since 1993 that every project data management plan specify what data will be maintained in the long term, when they will be sent to the data center, and in what easily usable format.40 Yet some scientific data are not reaching the data center because the PIs do not have the resources to prepare the material for archiving. Those data sets that do reach the NSSDC are not always formatted for convenient use by downstream users.41 For example, data contributed from past space physics missions are typically processed at a low level and are not documented well enough to be used for other purposes or by investigators outside the original project. Planetary science data from previous missions are in a variety of formats, typically with a different formatting scheme and processing software for each instrument, although the PDS has since developed standards for documenting planetary science data.

In the past decade, NSSDC has taken a number of steps to improve both its data center functions and the services it offers to the scientific community. Data are now held in climate-controlled conditions, and back-up copies are stored in commercial facilities that are compliant with NARA standards. However, the recent destruction of thousands of historic images because of water damage42 illustrates the need to devote additional attention to the safety of the holdings, particularly the nondigital records.

The operations of the NSSDC have been addressed by two recent Office of Space Science senior reviews. The 2000 astrophysics senior review concluded that the NSSDC archives data satisfactorily and with apparent care. However, the review recommended that the NSSDC work more closely with other active archives in terms of connectivity and active linking and with the goal of sorting out overlapping functions in order to streamline the agency’s overall data storage, archiving, and handling functions.43 The 2001 senior review of the Sun-Earth Connection program expressed concern about the long-term availability of solar data and recommended that the current informal agreements concerning the transfer of data from SDAC, the active archive, to NSSDC be formalized.44 This review also noted that the NSSDC had incorporated value-added services that have greatly facilitated accessibility and research in space physics. Finally, the senior review encouraged the NSSDC to complete planning for how to archive raw data and software.

NASA has substantially increased its budget for archive activities (including the active archives) over the last 10 to 15 years. However, funding for NSSDC has declined by 6 percent since the late 1990s.45 NSSDC budgets are projected to remain flat or decline further over the next 5 years, even though holdings are projected to increase by 30 to 40 percent, resulting in a substantial decrease in the number of real dollars available for archival activities. Activities meant to serve the general public have been reduced or eliminated to accommodate the budget cuts, but if these trends continue, the NSSDC may not meet the needs of the scientific community in the future.
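The scale of the squeeze can be illustrated with simple arithmetic. The sketch below assumes the most favorable case mentioned above, a flat budget, and computes how funding per unit of holdings changes when holdings grow by 30 to 40 percent. It ignores inflation and any further budget decline, so it understates the effect; the function name is hypothetical.

```python
def funding_per_unit_change(budget_growth: float, holdings_growth: float) -> float:
    """Fractional change in funding available per unit of holdings.

    Both arguments are fractions (0.30 means a 30 percent increase).
    """
    return (1.0 + budget_growth) / (1.0 + holdings_growth) - 1.0


# Assumed scenario: flat budget, holdings up 30 to 40 percent.
low = funding_per_unit_change(0.0, 0.30)
high = funding_per_unit_change(0.0, 0.40)
print(f"{low:.1%} to {high:.1%}")  # prints "-23.1% to -28.6%"
```

Under these assumptions, the funding available per unit of data held would fall by roughly a quarter even before inflation is taken into account.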

Earth Science Data

The earth science active archives are meant to hold data until 15 years after the mission. At that time, responsibility for the data will be transferred to federal agencies with a long-term data maintenance mission: NOAA and the USGS. The USGS has obtained funding for the long-term maintenance of Landsat data, but funding is still not available for archiving the majority of the data at NOAA. The 1989 NASA/NOAA Memorandum of Understanding specifies that NASA will “transfer to NOAA, at a time to be determined, responsibility for active long-term archiving and appropriate science support activities for atmosphere and oceans data.”46 NASA and NOAA are responsible for making “joint presentations to NASA, DOC [U.S. Department of Commerce], NOAA, OMB [Office of Management and Budget], and the Congress, as necessary, to explain the essential role of each organization and funding needs.” These efforts have been largely unsuccessful, although the president’s budget for Fiscal Year 2003 includes $3 million to begin archiving NASA EOS data at NOAA’s National Climatic Data Center. However, as noted by a 2000 NRC report, “even if this work is fully funded by Congress, it should be recognized that substantially greater investments will be required to develop the [data center].”47

The uncertainty over the ultimate fate of EOS data has long been a concern of scientific researchers and science agencies.48 For example, some are concerned that data will be transferred from scientists and data managers who work with the data and thus understand their usefulness and limitations to data managers without similar experience. This is not an issue for the Landsat holdings, which are already collocated with the Landsat data center. A similar solution, in which a NOAA data center is built at Goddard Space Flight Center, is being considered for atmosphere and oceans data. In 1998, NASA and NOAA sponsored a workshop to develop guiding principles for long-term maintenance of Earth observation data and for assessing lessons learned from current and past experience (see Box 2.2). In 2000, an NRC report outlined the initial steps that should be taken to ensure the continuity of the climate record in the transition, including the following:49

- NOAA should begin now to develop and implement the capability to preserve in perpetuity the basic satellite measurements (radiances and brightness temperatures);

- NASA, in cooperation with NOAA, should support the development and evaluation of climate data records, as well as their refinement through data reprocessing;

- NOAA and NASA should define and develop a basic set of user services and tools to meet specific functions for the science community, with NOAA assuming increasing responsibility for this activity as data migrates to the long-term archive; and

- NASA and NOAA should develop and support activities that will enable a blend of distributed and centralized data and information services for climate research.

NASA and NOAA should not address these issues in isolation. A number of efforts underway, such as those sponsored by NARA, are developing technologies and approaches to supporting long-term preservation and access to data. Consultation with NARA should be very useful in planning the transition from NASA to NOAA data centers, once adequate funding is secured.

BOX 2.2 Findings from the Report of a Workshop: Global Change Science Requirements for Long-Term Archiving

According to a 1998 workshop sponsored by NASA and NOAA, data centers should be supported by two guiding principles:

Specific findings include the following:

SOURCE: Adapted from U.S. Global Change Research Program, 1999, Global Change Science Requirements for Long-Term Archiving, Report from a workshop, National Center for Atmospheric Research, Boulder, Colorado, October 28–30, 1998, 78 pp.

Conclusions

NASA should have in place both a strategy and funding for long-term maintenance that will preserve data in usable forms. Since the data are a national resource, their preservation is an appropriate federal responsibility and should not be left solely to contractors or principal investigators. If resources are inadequate for preservation, NASA should establish a process involving the scientific community to examine the priorities between acquiring new data and preserving existing data for ongoing scientific uses.

Recommendation. NASA should assume formal responsibility for maintaining its data sets and ensuring long-term access to them to permit new investigations that will continue to add to our scientific understanding. In some cases, it may be appropriate to transfer this responsibility to other federal agencies, but NASA must continue to maintain the data until adequate resources for preservation and access are available at the agency scheduled to receive the data from NASA.