5

Impact of Scientific and Technological Advances on Partnerships

Advances in science and technology drive the evolution of the weather and climate information system. Scientific, operational, and, increasingly, business requirements determine what observations to make, how the information should be analyzed, and what products to create. The scientific understanding generated by developing and using these data and products, together with improvements in instrumentation and computation, leads to a new set of requirements. The new capabilities that emerge from this evolving system can change what the sectors are doing or want to do, sometimes dramatically, and thus directly affect public, private, and academic partnerships.

Despite sharp declines in the telecommunications industry and Internet start-up companies, new technologies and products continue to be introduced at a rapid rate.1 Rapid technological change, intense competition, and the creation of new markets are expected to continue or even increase in the coming decade. This chapter reviews scientific and technological changes in the weather and climate information system that have the potential to affect partnerships. The committee focuses on how the evolution of technology might alter the balance between the sectors, rather than on specific technologies, which have been the subject of numerous reports.2

The history of technology shows that changes come in two main forms: those that can be reasonably well predicted and those that cannot.3 Predictability falls off rapidly with time, so the focus here is on technological changes in the weather enterprise that are occurring now or that may occur over the next five to eight years. There are many computer and communications technologies that may have an impact on both the weather enterprise and the relationships among the partners. Examples include modeling, networking technologies, visualization, human-computer interfaces, and technologies for storing, structuring, and exchanging data. This report focuses on technologies that were deemed to have particular impact on partnerships. Predictable technological changes will have (somewhat) predictable impacts on public, private, and academic partnerships. However, there will surely be surprises as well, which will place unexpected stresses on existing partnerships and create new opportunities for cooperation. In either case, the weather and climate services offered in 2008 or 2010 will likely be very different from the services offered today. A longer view taken by a previous National Research Council (NRC) committee (Box 5.1) is consistent with the trends described in this report.

CHANGES IN THE SECOND HALF OF THE TWENTIETH CENTURY

Fifty years ago weather observations were made with in situ instruments or by eye or ear and plotted by hand on paper weather maps (Table 1.1). Observations were analyzed subjectively, and forecasts were based largely on the empirical skill of government forecasters. Weather and climate information was disseminated to the public in text format, by radio, or through simple graphical displays on black-and-white television. The weather information system was run almost entirely by the National Weather Service (NWS), with academia focused on basic research and the private sector just beginning to emerge.

BOX 5.1 2025 Vision of Weather and Climate Forecasts

An NRC report, A Vision for the National Weather Service: Road Map for the Future,a looked ahead to the year 2025, to much-improved weather and climate forecasts and to how information derived from these forecasts would be increasingly valuable to society. The report envisions weather forecasts approaching the limits of atmospheric predictability (about two weeks) and new forecasts of chemical and space weather, hydrologic parameters, and other environmental parameters. It describes the use of ensemble forecasts that project nearly all possible future states of weather and climate and how these ensembles can be used in a probabilistic way by a variety of users. It asserts that as the accuracy improves and measures of uncertainty are better defined, the economic value of weather and climate information will increase rapidly as more and more ways are found or created to use information profitably. New markets, such as the weather derivatives market, will be created. Some markets will be strengthened (e.g., forecasting for transportation, energy, and agriculture). Other markets may diminish, such as the role of human forecasters in adding value to numerical forecasts beyond one day or in preparing graphical depictions of traditional weather forecasts.

The atmospheric sciences community has made enormous progress over the past 50 years since the first weather radars and satellites started an era of remote sensing and the first numerical models of the atmosphere generated 24-hour forecasts of 500-mb (~18,000 ft or 5.5 km) circulation patterns. Advances in technology, including remote sensing from satellites, radars, and in situ sensors; computers; information and communication technologies; and numerical modeling, coupled with increased understanding derived from investments in research, have produced a weather and climate information system in the United States that is at the cutting edge of science and technology.

As scientific understanding and computational capabilities improved throughout the second half of the twentieth century, private companies found opportunities to use government data to create value-added products for clients. However, as little as 10 years ago (1992), federal government agencies still collected nearly all of the data and developed and ran the forecast models (Table 1.1). Today the situation is radically different. Declining instrument costs have permitted state and local government agencies, universities, and private companies to deploy Doppler radars and arrays of in situ instruments. Increased computing power4 and bandwidth at rapidly dropping prices have enabled a substantial number of private companies and universities to run their own models or models developed by others. The development of new communications technologies (e.g., Internet, wireless devices) has reduced dissemination costs, increased the availability of weather data, and created new markets for weather and climate information. Indeed, advances in networking have transformed the weather and climate enterprise (Box 5.2). Finally, the widespread availability of visualization tools has made it easier for all sectors to display and better communicate weather information. These changes have made it possible for each of the sectors to provide services that were only recently in the domain of another sector (e.g., examples 4, 5, and 7, Appendix D) and thus have become a source of tension in the weather and climate enterprise.

CURRENT AND NEAR-TERM ADVANCES

Data Collection

Weather and climate phenomena and their impact on society occur on a variety of scales, from flooding in a farmer’s field to global weather patterns affected by changes in the jet stream. Studying these different phenomena and developing products and tools to mitigate their impacts requires data of different spatial coverage and resolution, collected from a mixture of satellite instruments, local arrays, and independent stations. Satellite instruments provide high spatial and temporal resolution global coverage. The satellite observations are complemented by in situ measurements from radiosondes, aircraft, and surface stations. Doppler radars track and monitor small-scale severe storms and precipitation systems. Most instruments collect data continuously, but some are event driven. Examples include the lightning detection network, which is triggered by cloud-to-ground lightning strikes, and reconnaissance aircraft that fly into hurricanes. Other meteorological instruments can be adjusted to collect higher-resolution data for specific events—for example, geostationary satellites and radars, which can scan at a higher rate over areas of severe weather, thereby providing greater temporal resolution (on the order of minutes)

|

BOX 5.2 Network Communications Networking is having an increasing impact on all aspects of the weather and climate enterprise. Advances in network technologies have enabled automated data collection, as well as remote access to specialized computing servers that support models and forecasting. Networking has also dramatically increased the speed at which weather products are available and the number of users they reach. However, networking is not monolithic. The networking required for remote sensors and data collection may be wireless and self-organizing and may or may not have to be high bandwidth. Distributed and remote modeling and forecasting require extremely high bandwidth reliable networks to specific locations. However, excessively high or reliable bandwidths are not required for disseminating weather forecasts, watches, warnings, advisories, and other information products to the public. The advances in networking rely to a large extent on improvements to underlying technologies. Terrestrial and satellite radio technologies provide access to instruments and enable operation in conjunction with ad hoc, self-organizing networks,a in which the sensors on the net may also play a role in the infrastructure of the network itself as routers and forwarders of traffic.b Advances in extremely high speed networks have come not only from improvements in copper-based technologies, but also from enormous strides in optical networking.c Several different advances are having and will continue have an impact on dissemination. First, as computer prices decline, home and office computers are becoming increasingly pervasive. The widespread availability of personal computers made the provision of network services possible, but it was the combination of e-mail, the World Wide Web, and web browsers that made them economically viable. Today, the majority of office workers in the United States have networked workstations on their desks. 
Second, the rise in the wireless cellular telephone and other wireless technologies is enabling people to stay connected while mobile. The combination of computer networks and wireless technologies dramatically increases the avenues for broad, rapid dissemination of urgently important weather information.

|

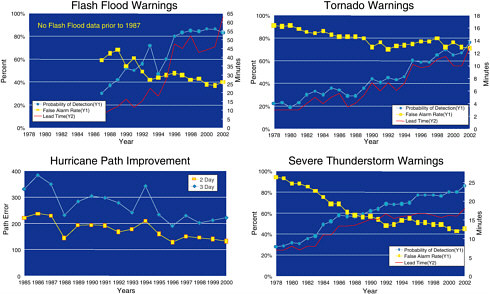

FIGURE 5.1 More frequent and detailed observations will greatly increase the resolution, coverage, and volume of data to be assimilated. Left: Improved resolution provided by upgraded radars. Right: The number and frequency of meteorological observations will increase over the next decade. Although most of this increase will come from new satellites, it also reflects expansions planned for the Cooperative Observer Network and other surface observational networks, additional aircraft reports, and additional radar data. SOURCE: National Weather Service.

than normal for that event. This mixture of observing approaches is also a cost-effective way of meeting the needs of the diverse weather and climate communities.

New observing systems currently being considered are intended to provide better accuracy, resolution, and coverage (Figure 5.1), as well as to maintain continuity with current observing systems. The latter is important not only for weather prediction but also for preserving the continuity of the climate record.5 Over the next five to eight years, existing measuring systems such as rawinsondes and mobile radars must be maintained and upgraded.6 Satellite observations (e.g., hyperspectral remote sounding instruments on geostationary and polar-orbiting satellites, and Global Positioning System [GPS] receivers on low Earth orbiting satellites) and ground-based remote sensing systems such as radars and GPS receivers will continue to be provided by the U.S. government and its international partners.7 Some of these systems (e.g., satellites) are still too expensive for the commercial weather industry to invest in. Sensors that can be deployed on aircraft or on the ground are becoming cheaper, smaller, and more powerful, primarily because of the continued decrease in cost and increase in capability of semiconductors. As a result, universities, state governments, and the private sector can increasingly afford to purchase, install, and maintain low-cost sensors for purposes that would not have been considered in the past (e.g., monitoring ice conditions on individual highway bridges).8 More expensive sensors can also be cost-effective to the private sector if it holds a monopoly on the data (see Chapter 4), as in the case of lightning data.9 It may soon be possible to deploy networks that reconfigure themselves in response to changing situations, thus providing optimal data coverage at relatively low cost.10 Some of these sensor networks can be automated, further reducing operating costs, although automation raises questions about transmission delays and the robustness and reliability of the underlying networks.

The growth of private networks raises both scientific and policy issues. Most data collected by private companies and some data collected by state and local government agencies are proprietary (see Chapter 4). Since proprietary data and the methods by which they were collected cannot be scrutinized, it is difficult to determine whether the sensors were deployed in a scientifically rigorous manner (e.g., rooftop sensors may give unrepresentative temperature readings) or whether the resulting data were handled according to accepted scientific practices (e.g., calibration, validation, and quality control procedures). This uncertainty limits the value of proprietary data to the weather and climate enterprise.

Modeling and Forecasting

The atmosphere-ocean-land system is complex and yields its secrets slowly. Models for understanding the system and for generating forecasts are only as good as the level of scientific knowledge, quality and coverage of input data, and computer-processing capabilities permit. Numerical models incorporate the dynamical equations governing the changing state of the atmosphere and oceans and fill in the spatial and temporal gaps in the global observing system (see Chapter 2 for an overview of weather and climate models). Such models are gradually getting better as the “three legs of the stool” get stronger (i.e., more and better observations, more powerful computers, better scientific understanding). They will continue to do so as very high resolution data and algorithms describing processes such as cloud interactions and land-surface and boundary-layer physics are incorporated.11

Advances in understanding and improved data coverage place increasing demands on processing capabilities. Indeed, one of the primary constraints on the accuracy and quality of forecasts is the computational effort required (1) to process effectively the large volume of observations that are collected and (2) to run numerical weather prediction models with high spatial resolution. For example, a recent NRC report found that ensemble models require 20 Gflops each day for weather prediction and 2.5 Tflops each day for short-term climate prediction.12 Advances in high-performance computing (both hardware and software) will improve the precision and accuracy of weather forecasts. For example, the new NWS supercomputer—an IBM-built massively parallel machine that uses more than 2700 conventional microprocessors—will be able to resolve differences in weather for Manhattan and Queens.13 Japan recently developed the Earth Simulator, a new supercomputer based on specialized hardware that will model climate change.14 Given the rate of progress predicted by Moore’s Law, it will be possible to forecast weather on a half-mile grid by 2015,15 although insufficient observations may limit the usefulness of these models in some situations.
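As a rough illustration of why finer grids depend so heavily on computing power, the sketch below estimates how the cost of a model run grows as horizontal grid spacing shrinks. It rests on assumptions that do not come from this report: a common rule of thumb that halving the grid spacing quadruples the number of grid columns and halves the allowable time step (so cost grows with the cube of the refinement factor, vertical levels held fixed), an 18-month doubling period for computing power, and hypothetical starting and target grid spacings.

```python
import math


def relative_cost(old_spacing_km: float, new_spacing_km: float) -> float:
    """Cost multiplier for refining the horizontal grid.

    Assumption (rule of thumb, not from the report): cost scales with the
    cube of the refinement factor, i.e., two horizontal dimensions plus a
    proportionally shorter time step, with vertical levels unchanged.
    """
    refinement = old_spacing_km / new_spacing_km
    return refinement ** 3


def years_to_afford(cost_multiplier: float,
                    doubling_period_years: float = 1.5) -> float:
    """Years of steady doubling needed to absorb a given cost multiplier.

    The 1.5-year doubling period is an illustrative assumption.
    """
    return math.log2(cost_multiplier) * doubling_period_years


if __name__ == "__main__":
    # Hypothetical example: going from a 12-km grid to about half a mile (0.8 km).
    multiplier = relative_cost(12.0, 0.8)
    print(f"cost multiplier: {multiplier:.0f}x")
    print(f"years of growth needed: {years_to_afford(multiplier):.1f}")
```

The estimate ignores data assimilation costs, added vertical levels, and more elaborate physics, all of which would raise the requirement further.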

11. National Research Council, 1999, A Vision for the National Weather Service: Road Map for the Future, National Academy Press, Washington, D.C., 76 pp. Research opportunities for the atmospheric sciences are also outlined in National Research Council, 1998, The Atmospheric Sciences Entering the Twenty-First Century, National Academy Press, Washington, D.C., 384 pp.

12. National Research Council, 2001, Improving the Effectiveness of U.S. Climate Modeling, National Academy Press, Washington, D.C., 128 pp.

13. IBM Gets Contract for Weather Supercomputer, New York Times, June 1, 2002, <http://www.nytimes.com/2002/06/01/technology/01SUPE.html?pagewanted=print&position=bottom>.

14.

15. IBM Gets Contract for Weather Supercomputer, New York Times, June 1, 2002, <http://www.nytimes.com/2002/06/01/technology/01SUPE.html?pagewanted=print&position=bottom>.

Advances in visualization technology could potentially help to improve the effectiveness of weather forecasts by making it easier for users to interpret large volumes and/or different types of data.16 An example is the virtual geographic information system being used to provide interactive, three-dimensional visualizations of severe weather in northern Georgia.17 The system combines petabyte-sized NEXt generation weather RADar (NEXRAD) and terrain data sets with information on human habitations (e.g., buildings, roads). The visualization grid size can be varied, allowing greater detail to be seen in some parts of the weather system than others. Integrating visualization tools with high-resolution weather models makes it possible to study the real-time development of storms in three dimensions, albeit crudely compared to what will be possible in the near future.

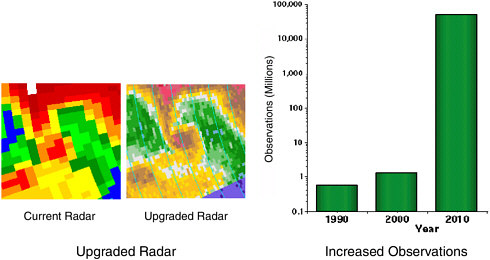

Advances in modeling and the computational and visualization tools that support them can be made by all three sectors, but access to improved models may vary. New or improved models generated by the public or academic sector are likely to be placed in the public domain, whereas models developed by the private sector (or commercialized government agencies in other countries) are likely to be proprietary. Nevertheless, the results of near-term scientific and technological advances are (1) more widespread use of sophisticated models in the academic and private sectors and (2) increasingly accurate forecasts extending further into the future. For example, the average lead time for tornado warnings in the United States increased from 6 minutes in 1991 to 10 minutes in 2001 (Figure 5.2). Four-day forecasts are as accurate today as two-day forecasts were 20 years ago.18 The current limit for scientifically valid deterministic forecasts is 10-14 days, but as the forecast accuracy (Box 5.3) of longer-term events (i.e., seasonal and longer) increases, it opens new avenues of research in the academic sector and new business opportunities in the private sector, especially in agriculture, energy, and insurance.19

16. L.A. Treinish, 2002, Coupling of mesoscale weather models to business applications utilizing visual data fusion, in Third Symposium on Environmental Applications: Facilitating the Use of Environmental Information, American Meteorological Society, Orlando, Fla., pp. 94-101.

17. This partnership between the Georgia Institute of Technology and the University of Oklahoma was funded by the National Science Foundation and the Georgia Emergency Management Agency, and NOAA’s National Severe Storms Laboratory is testing the system. See presentation to the committee by Nick Faust, Georgia Institute of Technology, May 16, 2002, and <http://www.cc.gatech.edu/gvu/datavis/research/weather/weather.html>.

18. National Research Council, Satellite Observations of the Earth—Accelerating the Transition from Research to Operations, National Academy Press, Washington, D.C., in preparation.

19. National Research Council, 1999, A Vision for the National Weather Service: Road Map for the Future, National Academy Press, Washington, D.C., 76 pp.

BOX 5.3 Forecast Accuracy and Skill

Trends in objective, quantitative measures of accuracy and skill are very important in determining how forecasts are improving over time. The accuracy of a forecast is estimated quantitatively by comparing one or more elements or variables of the forecast, such as temperature, pressure, wind, or precipitation, to the corresponding observed value of the same variable or to an analysis of the variable using many observations. Measures of accuracy include mean, absolute, and root-mean-square (RMS) errors, and correlation coefficients between predicted and observed or analyzed variables, among others. Thus, the accuracy of a model’s or a forecaster’s five-day temperature forecast for a season might be expressed as a mean error of 1°C and an RMS error of 3.5°C.

A forecast is said to have skill if it has an accuracy above some simple method of prediction, such as persistence (tomorrow’s forecast variable equals today’s observed variable) or climatology (tomorrow’s forecast is the climatological value of the variable). The accuracies of these simple forecast methods serve as a baseline for the model or human forecast, and the latter forecasts are said to have skill only if they are more accurate than the simple forecast method.

SOURCE: R.A. Anthes, 1983, Regional models of the atmosphere in middle latitudes, Monthly Weather Review, v. 111, p. 1306-1335; Glossary of the American Meteorological Society, 2000, Boston, p. 688-689.
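The measures described in Box 5.3 can be illustrated with a short sketch. The code below computes mean, mean absolute, and RMS errors and then compares a forecast against a persistence baseline. The skill score used here (one minus the ratio of mean-square errors) is one common formulation chosen for illustration, not a definition taken from the box, and the temperature values are invented sample data.

```python
import math


def mean_error(forecast, observed):
    """Average signed error (bias) of the forecast."""
    return sum(f - o for f, o in zip(forecast, observed)) / len(forecast)


def mean_absolute_error(forecast, observed):
    """Average magnitude of the forecast error."""
    return sum(abs(f - o) for f, o in zip(forecast, observed)) / len(forecast)


def rms_error(forecast, observed):
    """Root-mean-square error of the forecast."""
    mse = sum((f - o) ** 2 for f, o in zip(forecast, observed)) / len(forecast)
    return math.sqrt(mse)


def skill_score(forecast, reference, observed):
    """Assumed formulation: 1 - MSE(forecast) / MSE(reference).

    Positive values mean the forecast beats the reference method
    (e.g., persistence or climatology); zero or negative means no skill.
    """
    mse_forecast = rms_error(forecast, observed) ** 2
    mse_reference = rms_error(reference, observed) ** 2
    return 1.0 - mse_forecast / mse_reference


if __name__ == "__main__":
    # Invented daily temperatures (degrees C) for four days.
    observed = [12.0, 15.0, 11.0, 14.0]
    model = [13.0, 14.0, 12.0, 13.0]
    # Persistence: each day's forecast equals the previous day's observation.
    persistence = [10.0, 12.0, 15.0, 11.0]

    print("mean error:", mean_error(model, observed))
    print("RMS error:", rms_error(model, observed))
    print("skill vs. persistence:", skill_score(model, persistence, observed))
```

In this made-up sample the model has no bias but a nonzero RMS error, which shows why the box lists several accuracy measures rather than one.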

Dissemination

The goal of any weather and climate information system is to deliver accurate, timely information to users. Throughout the 1950s weather data and products were transmitted through regional and national teletype lines and printed at rates of 300 words per minute.20 NWS forecast maps drawn from the numerical models were transmitted to both internal and outside users via the National Facsimile Analog Circuits at 120 scan lines per minute. General forecasts and forecast products were delivered to the media by teletype, facsimile, mail, or hand. Weather warnings were broadcast over National Oceanic and Atmospheric Administration (NOAA) Weather Radio as well as television and commercial radio.

The advent of digital communications increased the speed of data transmission and created new avenues for delivering data to users, such as the Internet, cable, satellite television, and wireless devices. The Internet is now widely available21 and is a powerful, low-cost, convenient means for all sectors to disseminate weather and climate products and, in some cases, to allow access to the underlying data. All three sectors embraced the Internet, but e-government initiatives in the 1990s22 gave the NWS added impetus to adopt Internet- and computer-based technologies in its daily operations and in its interactions with the public. Indeed, the NWS earned straight A’s in the Federal Performance Project for above-average communication and the use of information technology to improve the accuracy and timeliness of forecasts and to restructure the agency.23 The NWS now provides weather products in both text and graphical format on its web site, in addition to its traditional dissemination vehicles (e.g., NOAA Weather Radio, printed bulletins). Users can also access certain NWS models (e.g., the Global Forecast System), model analyses, forecasts, and databases from their desktops. Information analysis and search tools allow Internet users to obtain more specialized weather data anywhere, anytime. For example, both NWS and private sector web sites allow users to obtain weather forecasts by zip code.

20. The History of Computing Project (Hardware, Teletype Development), <http://www.thocp.net>.

Another means by which the NWS is improving access to data is the National Digital Forecast Database (NDFD), which will produce experimental digital forecasts over the conterminous United States by June 2003. Prior to 1998, most NWS data were in analog form.24 With the NDFD, NWS forecasts will be in digital form and organized in a database, offering much greater flexibility in the way they can be used (Box 5.4). Users will be able to download only the information they need, and they will be able to combine different data and manipulate them on their own site. For example, suppose a user wants to calculate an hourly wind chill index in a particular town. Such an index cannot be computed from an analog forecast of “sunny, windy, and turning colder, with temperatures falling into the 20s tonight.” Rather, digital hourly temperature and wind speed data for the grid point closest to the town are required. Such data will be available from the digital database. Of course, the deterministic nature of NDFD products may give users an unwarranted sense of the precision of the data, so the database should be used with care.

21. The Internet evolved from a Department of Defense Advanced Research Projects Agency (ARPA) project called Arpanet. Using this foundation, the National Science Foundation (NSF) funded the creation of a network of networks, called the Internet. Backbone speeds increased from 56 kbps in 1986 to 448 kbps over multiplexed T1 links in 1988 to 1544 kbps over nonmultiplexed T1 links in 1989 to 45 Mbps over T3 links in 1992. In 1995 the regional networks became independent of NSF and the commercial Internet service provider structure evolved over the next few years. The development of the Internet is described in National Research Council, 1994, Realizing the Information Future, National Academy Press, Washington, D.C., 285 pp., and National Research Council, 2001, The Internet’s Coming of Age, National Academy Press, Washington, D.C., 236 pp.

22. National Research Council, 2002, Information Technology Research, Innovation, and E-Government, National Academy Press, Washington, D.C., 168 pp.

23. The Federal Performance Project is a partnership between Government Executive magazine and George Washington University’s Department of Public Administration that rates federal agencies’ management abilities. See J. Dean, 2001, Information management: Risking IT, GovExec.com, <http://www.govexec.com/fpp/fpp01/s4.htm>.

24. Presentation to the committee by Bruce Budd, meteorologist-in-charge, Weather Forecast Office-Central Pennsylvania, January 10, 2002. Meteorological services in other countries have been using digital forecast databases for several years. Examples include the Canadian SCRIBE (R. Verret, G. Babin, D. Vigneux, J. Marcoux, J. Boulais, R. Parent, S. Payer, and F. Petrucci, 1995, SCRIBE: An interactive system for composition of meteorological forecasts, 11th International Conference on Interactive Information and Processing Systems for Meteorology, Oceanography, and Hydrology, American Meteorological Society, Dallas, Tex., January 15-20, pp. 56-61), and the U.K. Horace system <http://www.met-office.gov.uk/research/nwp/publications/nwp_gazette/dec99/horace.html>.

BOX 5.4 Database Technology

The NWS has traditionally stored weather data in multiple large data sets. This approach limits the usefulness of NWS data to end users. Programs to use the data must be written by experts who understand the format of each data set. These programs can be executed only on the entire data set; they cannot be executed on a subset of the data or against multiple, merged data sets without planning either in the original programs or additional programming.

A database management system offers a more flexible approach to managing and using data. The database architecture separates the structure of the data from the applications that manipulate them. Hence, end users can develop programs that meet their specific needs, such as new and different functions on the data, or subsetting or merging data in databases. In addition, abstraction of the database to a conceptual model makes it possible to modify the physical organization of the data without disturbing the application software or the users’ logical view of the data. This may be necessary as the amount and kinds of data collected increase or change.

Significant investment in database technology will improve the ability of users to analyze the vast amount of weather information that is being collected.a Ideally, the database or databases should be designed to meet the needs of the weather community as a whole. Current database representations of weather data can contain inconsistencies that lead to erroneous analyses. Integrating databases from multiple sources can improve weather forecasts but remains a significant challenge because of the varied formats, semantics, and precision of each data source.
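To make the wind chill example concrete, the sketch below picks the grid point closest to a town and computes an hourly wind chill series from digital temperature and wind speed values. Several things here are assumptions rather than details from this report: the grid is represented as a plain list of dictionaries with invented values, distance is approximated by squared differences in latitude and longitude, and the index uses the wind chill formula adopted by the NWS in 2001 (temperature in degrees Fahrenheit, wind speed in miles per hour), which the text does not spell out.

```python
def wind_chill_f(temp_f: float, wind_mph: float) -> float:
    """Wind chill (deg F) using the formula the NWS adopted in 2001.

    The formula is defined for temperatures at or below 50 deg F and wind
    speeds above 3 mph; outside that range the air temperature is returned.
    """
    if temp_f > 50.0 or wind_mph <= 3.0:
        return temp_f
    v = wind_mph ** 0.16
    return 35.74 + 0.6215 * temp_f - 35.75 * v + 0.4275 * temp_f * v


def nearest_grid_point(grid, lat: float, lon: float):
    """Return the grid point closest to (lat, lon).

    Uses squared degree differences, a rough approximation that is
    adequate only for picking among nearby points.
    """
    return min(grid, key=lambda p: (p["lat"] - lat) ** 2 + (p["lon"] - lon) ** 2)


def hourly_wind_chill(grid, lat: float, lon: float):
    """Hourly wind chill values for the grid point nearest the town."""
    point = nearest_grid_point(grid, lat, lon)
    return [round(wind_chill_f(t, v), 1)
            for t, v in zip(point["temp_f"], point["wind_mph"])]


if __name__ == "__main__":
    # Hypothetical gridded forecast: two grid points, three hourly values each.
    grid = [
        {"lat": 40.80, "lon": -77.85,
         "temp_f": [30.0, 26.0, 22.0], "wind_mph": [10.0, 15.0, 20.0]},
        {"lat": 40.85, "lon": -77.80,
         "temp_f": [31.0, 27.0, 23.0], "wind_mph": [8.0, 12.0, 18.0]},
    ]
    print(hourly_wind_chill(grid, 40.79, -77.86))
```

None of this is possible from a text forecast alone, which is the point of the example: the calculation needs numeric values tied to a location and a time.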

By providing access to digital data not currently available in standard products, the database will improve the ability of all the sectors to produce high-quality weather services, particularly as temporal and spatial resolution increases. The initial spatial resolution is 5 km and the temporal resolution is 3 hours for 1-3 days and 6 hours for 4-7 days.25 In the future, the NWS plans to increase the spatial and temporal resolution and integrate other information into the database. In 5 to 10 years, the NDFD will include observations; analyses; weather, water, and climate forecasts from the forecast offices and the National Centers for Environmental Prediction; as well as watches, warnings, and advisory information.

These improvements in the NDFD will greatly increase the number of opportunities for the private sector to provide value-added products and create new services. On the other hand, the public at large may well demand more detail in weather forecasts, and not all of this added detail can be expected to come from the private sector. Improvements in the science and technology of weather forecasting and enhanced opportunities for rapid and targeted dissemination will all continue to challenge the partnership.

Making use of the full range of modern database technology can have a much larger impact on the weather enterprise than even the NDFD will have, because the NDFD will initially provide database access only to NWS forecast products. As described in Box 5.4, the use of a database management system for archiving and distributing the underlying data can greatly improve the usability and flexibility of the database. Such flexibility could have a significant economic impact on the whole community by allowing users to extract more value from the data.
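The flexibility described above, and in Box 5.4, comes from letting users express subsetting and merging as queries instead of custom programs tied to one file format. The sketch below shows the idea with Python's built-in sqlite3 module. The table layout, column names, town names, and values are all hypothetical and are not meant to represent the NDFD or any actual NWS schema.

```python
import sqlite3


def build_demo_db() -> sqlite3.Connection:
    """Create a small in-memory database with two hypothetical tables."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE grid (grid_id INTEGER PRIMARY KEY, town TEXT)")
    conn.execute(
        "CREATE TABLE forecast ("
        "grid_id INTEGER, valid_hour INTEGER, temp_f REAL, wind_mph REAL)"
    )
    conn.executemany(
        "INSERT INTO grid VALUES (?, ?)",
        [(1, "Example Town"), (2, "Other Town")],
    )
    conn.executemany(
        "INSERT INTO forecast VALUES (?, ?, ?, ?)",
        [
            (1, 0, 30.0, 10.0),
            (1, 3, 28.0, 12.0),
            (1, 6, 25.0, 15.0),
            (2, 0, 35.0, 5.0),
        ],
    )
    conn.commit()
    return conn


def forecast_for_town(conn: sqlite3.Connection, town: str, max_hour: int):
    """Subset (by town and hour) and merge (grid joined to forecast) in one query."""
    query = (
        "SELECT f.valid_hour, f.temp_f, f.wind_mph "
        "FROM forecast AS f JOIN grid AS g ON g.grid_id = f.grid_id "
        "WHERE g.town = ? AND f.valid_hour <= ? "
        "ORDER BY f.valid_hour"
    )
    return conn.execute(query, (town, max_hour)).fetchall()


if __name__ == "__main__":
    conn = build_demo_db()
    for row in forecast_for_town(conn, "Example Town", 3):
        print(row)
```

Because the query names columns rather than byte offsets in a file, the physical storage could be reorganized without changing this user code, which is the separation of structure from application that the box describes.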

Internet dissemination can be passive (i.e., users find and download copies of the information they want) or active (i.e., data are transmitted selectively to users). Most Internet weather dissemination is passive, but an increasing number of companies are offering services that push real-time weather forecasts and warnings to a user’s workstation. The WeatherBug is one of a number of such products (Box 5.5). This new method of dissemination could reach a vast number of people who spend their day in front of workstations, away from televisions or radios.

BOX 5.5 WeatherBug

The WeatherBug is a web-push product developed by AWS, Inc., to provide real-time weather information to clients. The system is based on the AWS World-wide School Weather Network, which consists of more than 5000 automated weather sites. The WeatherBug and similar products allow users to connect to the site closest to a specified zip code in order to receive weather information, such as temperature, humidity, and heat index, in real time.a In addition, users are immediately notified when the NWS issues a storm watch or warning for the user-specified zip code. The product can be downloaded free with sponsor ads or for a small monthly fee without ads.

There appears to be widespread agreement in the weather community that structuring information to be disseminated has significant benefit. Previously, NWS forecast products were only available as text or maps. By agreeing on an exchange structure (e.g., Extensible Markup Language [XML], Simple Object Access Protocol [SOAP]), the data can be handled and analyzed by programs much more easily and accurately, making them easier for all sectors to use. The NWS is moving toward distributing structured data, either through the web or through other avenues.
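
The benefit of an agreed exchange structure can be sketched with a short Python example that reads a forecast encoded in XML. The element names, attribute names, and values below are invented for illustration and are not an NWS format; the point is only that a program can pull out fields by name rather than by scanning free text.

```python
import xml.etree.ElementTree as ET

# Hypothetical structured forecast; the tag and attribute names are assumptions.
FORECAST_XML = """
<forecast location="Example City">
  <period name="Tonight"><temp units="F">68</temp><pop>20</pop></period>
  <period name="Tomorrow"><temp units="F">91</temp><pop>40</pop></period>
</forecast>
""".strip()


def parse_forecast(xml_text):
    """Return one dict per forecast period with typed numeric fields."""
    root = ET.fromstring(xml_text)
    return [
        {
            "period": period.get("name"),
            "temp_f": int(period.findtext("temp")),
            "pop_percent": int(period.findtext("pop")),
        }
        for period in root.findall("period")
    ]


periods = parse_forecast(FORECAST_XML)
```

Extracting the same values from a plain-text forecast would require parsing rules tuned to the wording of each product, which is more fragile.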

Advances in wireless and semiconductor technologies have created new opportunities for the private sector to deliver weather information to clients via portable wireless devices, such as cell phones, personal digital assistants, and pagers. All standard digital cell phones have a feature called “cell broadcasting” that allows text messages to be pushed to all phones in a given cell at no additional cost.26 Because the message is broadcast from the control channel, the system does not overload. Pushing warnings via cell phone offers two advantages over NOAA Weather Radio: (1) weather warnings can be provided to a specific cell, rather than to an entire county, which reduces the number of irrelevant warnings that users hear, and (2) cell phones can store warnings. However, a number of technical issues must still be resolved before cell phone capabilities can be fully exploited. These include developing priority override, ringer suppression, and better user interfaces (to distinguish, for example, between an advertisement and an emergency alert) and dealing with the diversity of cell phone standards prevalent in the U.S. marketplace.

Some wireless devices take advantage of geographic information and computer graphics to provide more sophisticated weather services. GPS capabilities enable weather services to be tailored for and delivered to precise locations. Improvements in computer graphics enable real-time visualization and display of weather information. For example, Digital Cyclone offers a service that allows mobile users to access local weather forecasts, view animated radar for the user’s location, and receive severe weather updates on a wireless device.27 Such services may reduce people’s vulnerability to severe weather when they are traveling.
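
One simple way a service might tailor information to a user's position is to select the nearest observing site by great-circle distance. The Python sketch below uses the haversine formula with a spherical Earth of radius 6371 km; the station names and coordinates are hypothetical, and this is not a description of how any particular commercial service works.

```python
import math

EARTH_RADIUS_KM = 6371.0  # mean Earth radius, spherical approximation


def haversine_km(lat1, lon1, lat2, lon2):
    """Great-circle distance in kilometers between two points given in degrees."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlambda = math.radians(lon2 - lon1)
    a = (math.sin(dphi / 2) ** 2
         + math.cos(phi1) * math.cos(phi2) * math.sin(dlambda / 2) ** 2)
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(a))


# Hypothetical stations: name -> (latitude, longitude) in degrees.
STATIONS = {
    "north": (40.0, -100.0),
    "south": (35.0, -100.0),
    "east": (35.0, -90.0),
}


def nearest_station(lat, lon, stations):
    """Return the name of the station closest to the given position."""
    return min(stations, key=lambda name: haversine_km(lat, lon, *stations[name]))


closest = nearest_station(35.2, -99.5, STATIONS)
```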

To take advantage of wireless services that reach the public, the NWS need not invest in a new communications infrastructure. Indeed, the NWS has no plans for disseminating weather information via wireless devices.28 However, the NWS can provide watches, warnings, and other weather products in formats and structures that are most useful to companies that are developing the applications. The NWS has provided similar services for industries developing other media (e.g., television) in the past. Of course, there is no guarantee that companies that are currently passing along NWS weather warnings to wireless device users will continue to provide this service in the future. However, weather information is of such interest to the public that regulatory mechanisms may not be required to encourage expansion of this new avenue of dissemination.29

Given the rapid technological advances in both wired and wireless communications, all sectors must constantly evaluate the costs and utility of the various dissemination approaches for meeting customer needs. Cooperation between the NWS and the private sector will greatly facilitate the efficient use of dissemination technologies that serve both the specialized user and the general public.

27 Digital Cyclone combines its proprietary weather forecasting system with NWS data to create localized weather forecasts. Information on Digital Cyclone’s Mobile My-Cast service can be found at <http://www.my-cast.com/mobile/index.jsp>.

28 Presentation to the committee by Ed Johnson, director, NWS Office of Strategic Planning and Policy, February 19, 2002.

29 Regulatory mechanisms have had limited success in enforcing public policy mandates. For example, in 1996 the Federal Communications Commission (FCC) mandated that cell phone carriers transmit the address and phone number of 911 callers to the public safety answering point. However, many carriers have not implemented the E-911 mandate, citing the high cost of compliance. See J.H. Reed, K.J. Krizman, B.D. Woerner, and T.S. Rappaport, 1998, An overview of the challenges and progress in meeting the E-911 requirement for location service, IEEE Communications Magazine, April, p. 30-37.

Data Archiving

Maintaining a long-term archive poses significant challenges for the U.S. weather and climate enterprise. The deployment of a new generation of satellites in the coming decade (National Aeronautics and Space Administration’s [NASA’s] Earth Observing System [EOS], Next Generation Geostationary Operational Environmental Satellite [GOES], and the Department of Defense-NASA-NOAA National Polar-Orbiting Operational Environmental Satellite System [NPOESS]) and the enhancement of NEXRAD present major data management challenges to the National Climatic Data Center (NCDC). Data volumes are projected to increase to 40 petabytes or more by 2010.30 However, the challenge of ingesting data is dwarfed by the challenge of retrieving them.31 Other challenges include managing disparate types of data, developing a rapid-access storage and retrieval system, and providing on-line access to data (in accordance with the federal government’s e-government initiative).32 Fortunately, technological advances will alleviate some of these problems. The cost of storage capacity will likely continue to drop dramatically; 200 Gbyte disks are available for as little as $399,33 although problems in increasing large-scale storage capacity remain. The problems range from understanding the physics of making rotating devices move faster reliably to increasing bandwidth for communicating with the devices. One can predict that significant increases in capabilities and decreases in cost will continue, but the imbalances will remain.
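
To give a sense of scale for the projected archive growth, the short Python calculation below derives the compound annual growth rate implied by moving from the 1.4 petabytes held by NCDC (footnote 30) to 40 petabytes by 2010. The eight-year span is an assumption (it treats the 1.4 petabyte figure as a 2002 value), and the function name is illustrative; under those assumptions the implied growth is on the order of 50 percent per year.

```python
def implied_annual_growth(start_volume, end_volume, years):
    """Compound annual growth rate that takes start_volume to end_volume in `years`."""
    return (end_volume / start_volume) ** (1.0 / years) - 1.0


# Volumes in petabytes; the 8-year span (2002 to 2010) is an assumption.
rate = implied_annual_growth(1.4, 40.0, 8)
print(f"Implied growth: {rate:.0%} per year")
```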

30 NCDC currently holds 1.4 petabytes of data. The EOS satellites are generating about 60 terabytes of data per year, and the NPOESS satellites will generate 200 terabytes of data per year beginning in 2009. These data will be made available on the same terms as current satellite data (i.e., full and open access). See National Research Council, 2000, Ensuring the Climate Record from the NPP and NPOESS Meteorological Satellites, National Academy Press, Washington, D.C., 51 pp.

31 See, for example, National Research Council, 1995, Preserving Data on Our Physical Universe, National Academy Press, Washington, D.C., 167 pp.; National Research Council, 2000, Ensuring the Climate Record from the NPP and NPOESS Meteorological Satellites, National Academy Press, Washington, D.C., 51 pp.; National Research Council, 2002, Assessment of the Usefulness and Availability of NASA’s Earth and Space Science Mission Data, National Academy Press, Washington, D.C., 100 pp.

32 Testimony on NOAA’s FY 2003 budget regarding satellite data utilization and management by Conrad C. Lautenbacher, Jr., Undersecretary of Commerce for Oceans and Atmosphere, before the Subcommittee on Environment, Technology and Standards Committee on Science, U.S. House of Representatives, July 24, 2002.

33 See a story about Western Digital’s “Drivezilla” in ZDNet at <http://zdnet.com.com/2100-1103-946929.html>.

34 A survey of some of these technologies that are relevant to data centers is given in National Research Council, 2003, Government Data Centers: Meeting Increasing Demands, The National Academies Press, Washington, D.C., 56 pp.

Advances in database technology now permit companies to manage very large databases and archives.34 For example, the TerraServer project demonstrated that large volumes of geospatial data (more than 20 terabytes of maps and aerial photographs) could be disseminated on-line by a very small staff.35 The world’s largest database system (BaBar), which runs on 100 servers and distributes data to 75 institutions around the world, has stored more than 668 terabytes of data from the Stanford Linear Accelerator.36 These and other academic and private sector advances in geospatial data management could greatly improve the usefulness of the nation’s weather and climate record to all sectors. Long-term archive issues are not currently a priority for weather companies, but as forecasting skill improves to permit seasonal and longer-term weather predictions, the quality and accessibility of archived weather and climate data will become increasingly important to the private sector, creating a new source of stress on the partnership.

CONCLUSIONS

Advances in science and technology over the last 10 years have drastically changed the capabilities of the three sectors as well as the expectations of their respective users. Barriers to entry have been lowered, eroding previously exclusive roles. For example, data collection and modeling are no longer exclusively the role of the federal government, and visualization techniques are no longer used exclusively by the private and academic sectors. Modeling and forecasts have improved, and new methods of communicating weather and climate information have emerged, creating opportunities for providing new products and serving new user communities. Major shifts include the use of wireless technologies and long-range (climate) forecasts by the private sector, and the implementation of Internet search tools and the National Digital Forecast Database by the NWS.

Prudent public policy must be based on the assumption that rapid advances in scientific understanding and technology will continue. These changes make it inadvisable to define sharp boundaries for what each sector can and cannot do. Indeed, such prescriptions would be obsolete and ineffectual before they could be promulgated. Instead, the public, private, and academic sectors must work diligently to improve the processes and mechanisms by which they will deal with the problems and differences that are certain to arise. Recommendations for these improved processes are discussed in Chapter 6.

35 TerraServer is a test bed for developing advanced database technology. It is operated as a partnership between Microsoft Corporation, the U.S. Geological Survey, the Russian Sovinformsputnik Interbranch Association, and other organizations. See <http://terraserver.homeadvisor.msn.com/About.aspx?n=AboutWhatIs>.

36 <http://www.slac.stanford.edu/BFROOT/www/Public/Computing/Databases/index.shtml>.