The Human Factor

KIM J. VICENTE

Department of Mechanical & Industrial Engineering

University of Toronto

Toronto, Ontario

Many people find technology frustrating and difficult to use in everyday life. In the vast majority of cases, the problem is not that they are technological “dummies” but that the designers of technological systems did not pay sufficient attention to human needs and capabilities. The new BMW 7 series automobile, for example, has an electronic dashboard system, referred to as iDrive, that has between 700 and 800 features (Hopkins, 2001). An article in Car and Driver described it this way: “[it] may…go down as a lunatic attempt to replace intuitive controls with overwrought silicon, an electronic paper clip on a lease plan. One of our senior editors needed 10 minutes just to figure out how to start it” (Robinson, 2002). An editor at Road & Track agreed: “It reminds me of software designers who become so familiar with the workings of their products that they forget actual customers at some point will have to learn how to use them. Bottom line, this system forces the user to think way too much. A good system should do just the opposite” (Bornhop, 2002). As technologies become more complex and the pace of change increases, the situation is likely to get worse.

In everyday situations, overlooking human factors leads to errors, frustration, alienation from technology, and, eventually, a failure to exploit the potential of people and technology. In safety-critical systems, however, such as nuclear power plants, hospitals, and aviation, the consequences can threaten the quality of life of virtually everyone on the planet. In the United States, for example, preventable medical errors are the eighth leading cause of death; in hospitals alone, errors cause 44,000 to 98,000 deaths annually, and patient injuries cost between $17 billion and $29 billion annually (IOM, 1999).

DIAGNOSIS

The root cause of the problem is the separation of the technical sciences from the human sciences. Engineers who have traditionally been trained to focus on technology often have neither the expertise nor the inclination to pay a great deal of attention to human capabilities and limitations. This one-sided view leads to a paradoxical situation. When engineers ignore what is known about the physical world and design a technology that fails, we blame them for professional negligence. When they ignore what is known about human nature and design a technology that fails, we typically blame users for being technologically incompetent. The remedy would be for engineers to begin with a human or social need (rather than a technological possibility) and to focus on the interactions between people and technology (rather than on the technology alone). Technological systems can be designed to match human nature at all scales: physical, psychological, team, organizational, and political (Vicente, in press).

COMPUTER DISPLAYS FOR NUCLEAR POWER PLANTS

People are very good at recognizing graphical patterns. Based on this knowledge, Beltracchi (1987) developed an innovative computer display for monitoring the safety of water-based nuclear power plants. To maintain a safety margin, operators must ensure that the water in the reactor core is in a liquid state. If the water begins to boil, as it did during the Three Mile Island accident, then the fuel can eventually melt, threatening public health and the environment. In traditional control rooms, such as the one shown in Figure 1, operators have to go through a tedious procedure involving steam tables and individual meter readings to monitor the thermodynamic status of the plant. This error-prone procedure requires that operators memorize or record numerical values, perform mental calculations, and execute several steps.
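The manual procedure described above can be sketched in a few lines of code. This is a minimal illustration, not a plant algorithm: the table holds approximate, rounded saturation points for water, the linear interpolation between them is crude (the real saturation curve is nonlinear), and the function names and the term "subcooling margin" are assumptions introduced here for the example.

```python
from bisect import bisect_right

# Approximate saturation points for water (pressure in MPa, temperature in deg C),
# rounded from standard steam tables; illustrative values only.
SATURATION_TABLE = [
    (0.1, 99.6),
    (1.0, 179.9),
    (5.0, 263.9),
    (10.0, 311.0),
    (15.0, 342.2),
]


def saturation_temperature(pressure_mpa: float) -> float:
    """Linearly interpolate the boiling temperature at a given pressure."""
    pressures = [p for p, _ in SATURATION_TABLE]
    if not pressures[0] <= pressure_mpa <= pressures[-1]:
        raise ValueError("pressure outside the range of the table")
    # Index of the upper bracketing row, clamped so the last row keeps a lower neighbour.
    hi = min(bisect_right(pressures, pressure_mpa), len(pressures) - 1)
    (p0, t0), (p1, t1) = SATURATION_TABLE[hi - 1], SATURATION_TABLE[hi]
    return t0 + (t1 - t0) * (pressure_mpa - p0) / (p1 - p0)


def subcooling_margin(temperature_c: float, pressure_mpa: float) -> float:
    """Degrees C by which the water is below boiling; positive means liquid."""
    return saturation_temperature(pressure_mpa) - temperature_c
```

Under these assumptions, a reading of 290 °C at 15 MPa gives `subcooling_margin(290.0, 15.0)` of about 52 °C. Each such check requires a table lookup, an interpolation, and a subtraction, which is the kind of multistep mental work the traditional control room imposes on operators.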

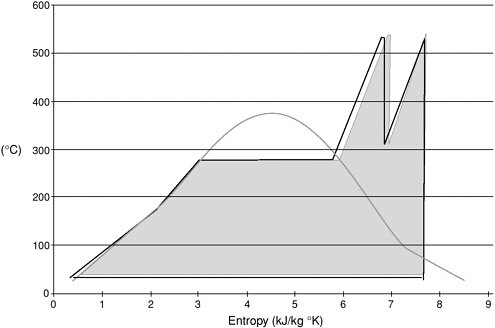

Beltracchi’s display (Figure 2) is based on the temperature-entropy diagram found in thermodynamic textbooks. The saturation properties of water are shown in graphical form as a bell curve rather than in alphanumeric form as in a steam table. Furthermore, the thermodynamic state of the plant can be described as a Rankine cycle, which has a particular graphical form when plotted in temperature-entropy coordinates. By measuring the temperature and pressure at key locations in the plant, it is possible to obtain real-time sensor values that can be plotted in this graphical diagram. The saturation properties of water are presented in a visual form that matches the intrinsic human capability of recognizing graphical patterns easily and effectively. An experimental evaluation of professional nuclear power plant operators showed that this new way of presenting information leads to better interactions between people and technology than the traditional way (Vicente et al., 1996).

FRAMEWORK FOR RISK MANAGEMENT

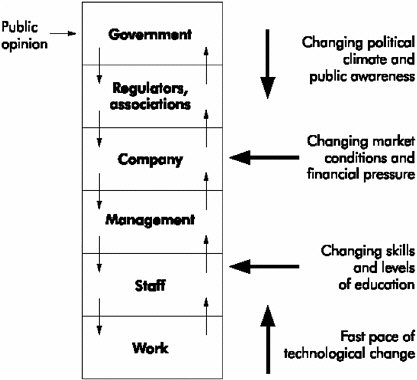

Public policy decisions are necessarily made in a dynamic, even turbulent, social landscape that is continually changing. In the face of these perturbations, complex sociotechnical systems must be robust. Rasmussen (1997) developed an innovative framework for risk management to achieve this goal (Figure 3).

The first element of the framework is a structural hierarchy describing the individuals and organizations in the sociotechnical system. The number of levels and their labels can vary from industry to industry. Take, for example, a structural hierarchy for a nuclear power plant. The lowest level usually describes the behavior associated with the particular (potentially hazardous) process being controlled (e.g., the nuclear power plant). The next level describes the activities of the individual staff members who interact directly with the process being controlled (e.g., control room operators). The third level from the bottom describes the activities of management that supervises the staff. The next level up describes the activities of the company as a whole. The fifth level describes the activities of the regulators or associations responsible for setting limits to the activities of companies in that sector. The top level describes the activities of government (civil servants and elected officials) responsible for setting public policy.

FIGURE 3 Levels of a complex sociotechnical system involved in risk management. Source: Adapted from Rasmussen, 1997.

Decisions at higher levels propagate down the hierarchy, and information about the current state of affairs propagates up the hierarchy. The interdependencies among the levels of the hierarchy are critical to the successful functioning of the system as a whole. If instructions from above are not formulated or not carried out, or if information from below is not collected or not conveyed, then the system may become unstable and start to lose control of the hazardous process it is intended to safeguard.

In this framework, safety is an emergent property of a complex sociotechnical system. Safety is affected by the decisions of all of the actors—politicians, CEOs, managers, safety officers, and work planners—not just front-line workers. Threats to safety or accidents usually result from a loss of control caused by a lack of vertical integration (i.e., mismatches) among the levels of the entire system, rather than from deficiencies at any one level.

Inadequate vertical integration is frequently caused, at least partly, by a lack of feedback from one level to the next. Because actors at each level cannot see how their decisions interact with decisions made by actors at other levels, threats to safety may not be obvious before an accident occurs. Nobody has a global view of the entire system.

The layers of a complex sociotechnical system are increasingly subjected to external forces that stress the system. Examples of perturbations include: the changing political climate and public awareness; changing market conditions and financial pressures; changing competencies and levels of education; and changes in technological complexity. The more dynamic the society, the stronger these external forces are and the more frequently they change.

The second component of the framework deals with dynamic forces that can cause a complex sociotechnical system to modify its structure over time. On the one hand, financial pressures can create a cost gradient that pushes actors in the system to be more fiscally responsible. On the other hand, psychological pressures can create a gradient that pushes actors in the system to work more efficiently, mentally or physically.

Pressure from these two gradients subjects work practices to a kind of “Brownian motion,” an exploratory but systematic migration over time. Just as the force of gravity causes a stream of water to flow down crevices in a mountainside, financial and psychological forces inevitably cause people to find the most economical ways of performing their jobs. Moreover, in a complex sociotechnical system, changes in work practices can migrate from one level to another. Over time, this migration will cause people responding to requests or demands to deviate from accepted procedures and cut corners to be more cost-effective. As a result, the system’s defenses are gradually degraded and eroded.

A degradation in safety may not raise an immediate warning flag for two reasons. First, given the stresses on the system, the migration in work practices may be necessary to get the job done. That is why so-called “work-to-rule” campaigns requiring that people do their jobs strictly by the book usually cause complex sociotechnical systems to come to a grinding halt. Second, the migration in work practices usually does not have immediate visible negative impacts. The safety threat is not obvious because violations of procedures do not lead immediately to catastrophe. At each level in the hierarchy, people may be working hard and striving to respond to cost-effectiveness measures; but they may not realize how their decisions interact with decisions made by actors at other levels of the system. Nevertheless, the sum total of these uncoordinated attempts at adapting to environmental stressors can slowly but surely “prepare the stage for an accident” (Rasmussen, 1997).

Migrations from official work practices can persist and evolve for years without any apparent breaches of safety until the safety threshold is reached and an accident happens. Afterward, workers are likely to wonder what happened because they had not done anything differently than they had in the recent past.
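The migration described in the preceding paragraphs can be illustrated with a toy simulation. This is only a sketch under stated assumptions, not Rasmussen's model: work practice is reduced to a single number, the cost and effort gradients to a constant `drift`, the exploratory variation to uniform random `noise`, and the safety threshold to a fixed `boundary`. All of these names and values are hypothetical.

```python
import random
from typing import Optional


def steps_until_boundary(
    drift: float,
    noise: float,
    boundary: float = 1.0,
    max_steps: int = 10_000,
    seed: int = 0,
) -> Optional[int]:
    """Simulate work practices drifting from 0 (by the book) toward a safety boundary.

    Each step adds a constant drift (standing in for the cost and effort
    gradients) plus a random exploratory move. Returns the step at which the
    boundary is first crossed, or None if it is not reached within max_steps.
    """
    rng = random.Random(seed)  # seeded so that runs are repeatable
    position = 0.0
    for step in range(1, max_steps + 1):
        position += drift + rng.uniform(-noise, noise)
        if position >= boundary:
            return step
    return None
```

In this sketch, even a drift that is small compared with the step-to-step noise eventually carries the system across the boundary, and no single step looks different from the ones before it. That mirrors the observation that workers had not done anything differently than they had in the recent past.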

Rasmussen’s framework makes it possible to manage risk by vertically integrating political, corporate, managerial, worker, and technical considerations into a single integrated system that can adapt to novelty and change.

Case Study

A fatal outbreak of E. coli in the public drinking water system in Walkerton, Ontario, during May 2000 illustrates the structural mechanisms at work in Rasmussen’s framework (O’Connor, 2002). In a town of 4,800 residents, 7 people died and an estimated 2,300 became sick. Some people, especially children, are expected to have lasting health effects. The total cost of the tragedy was estimated to be more than $64.5 million (Canadian).

In the aftermath of the outbreak, people were terrified of using tap water to satisfy their basic needs. People who were infected or who had lost loved ones suffered tremendous psychological trauma; their neighbors, friends, and families were terrorized by anxiety; and people throughout the province were worried about how the fatal event could have happened and whether it could happen again in their towns or cities. Attention-grabbing headlines continued unabated for months in newspapers, on radio, and on television. Eventually, the provincial government appointed an independent commission to conduct a public inquiry into the causes of the disaster and to make recommendations for change. Over the course of nine months, the commission held televised hearings, culminating in the politically devastating interrogation of the premier of Ontario. On January 14, 2002, the Walkerton Inquiry Commission delivered Part I of its report to the attorney general of the province of Ontario (O’Connor, 2002).

The sequence of events revealed a complex interaction among the various levels of a complex sociotechnical system, including strictly physical factors, unsafe practices of individual workers, inadequate oversight and enforcement by local government and a provincial regulatory agency, and budget reductions imposed by the provincial government. In addition, the dynamic forces that led to the accident had been in place for some time (some going back 20 years), but feedback that might have revealed the safety implications of these forces was largely unavailable to the various actors in the system. These findings are consistent with Rasmussen’s predictions and highlight the importance of vertical integration in a complex sociotechnical system.

CONCLUSIONS

We must begin to change our engineering curricula so that graduates understand the importance of designing technologies that work for people performing the full range of human activities, from physical to political activities and everything in between. Corporate design practices must also be modified to focus on producing technological systems that fulfill human needs as opposed to creating overly complex, technically sophisticated systems that are difficult for the average person to use. Finally, public policy decisions must be based on a firm understanding of the relationship between people and technology. One-sided approaches that focus on technology alone often exacerbate rather than solve pressing social problems.

Because the National Academy of Engineering has unparalleled prestige and expertise, it could play a unique role in encouraging educational, corporate, and governmental changes that could lead to the design of technological systems that put human factors where they belong—front and center.

ACKNOWLEDGMENTS

This paper was sponsored in part by the Jerome Clarke Hunsaker Distinguished Visiting Professorship at MIT and by a research grant from the Natural Sciences and Engineering Research Council of Canada.

REFERENCES

Beltracchi, L. 1987. A direct manipulation interface for water-based Rankine cycle heat engines. IEEE Transactions on Systems, Man, and Cybernetics SMC-17: 478-487.

Bornhop, A. 2002. BMW 745I: iDrive? No, you drive, while I fiddle with the controller. Road & Track 53(10): 74-79.

Burns, C.M. 2000. Putting it all together: improving display integration in ecological displays. Human Factors 42: 226-241.

Hopkins, J. 2001. When the devil is in the design. USA Today, December 31, 2001. Available online at: <www.usatoday.com/money/retail/2001-12-31-design.htm>.

IOM (Institute of Medicine). 1999. To Err Is Human: Building a Safer Health System, edited by L.T. Kohn, J.M. Corrigan, and M.S. Donaldson. Washington, D.C.: National Academy Press.

O’Connor, D.R. 2002. Report of the Walkerton Inquiry: The Events of May 2000 and Related Issues. Part One. Toronto: Ontario Ministry of the Attorney General. Available online at: <www.walkertoninquiry.com>.

Rasmussen, J. 1997. Risk management in a dynamic society: a modelling problem. Safety Science 27(2/3): 183-213.

Robinson, A. 2002. BMW 745I: the ultimate interfacing machine. Car and Driver 47(12): 71-75.

Vicente, K.J. In Press. The Human Factor: Revolutionizing the Way We Live with Technology. Toronto: Knopf Canada.

Vicente, K.J., N. Moray, J.D. Lee, J. Rasmussen, B.G. Jones, R. Brock, and T. Djemil. 1996. Evaluation of a Rankine cycle display for nuclear power plant monitoring and diagnosis. Human Factors 38: 506-521.

Human Factors Applications in Surface Transportation

THOMAS A. DINGUS

Virginia Tech Transportation Institute

Virginia Polytechnic Institute and State University

Blacksburg, Virginia

Our multifaceted surface transportation system consists of infrastructure, vehicles, drivers, and pedestrians. The hardware and software engineering sub-systems, however, are generally traditional and not overly complex. In fact, the technology in the overall system is, by design, substantially removed from the “leading edge” to ensure that it meets safety, reliability, and longevity requirements. The complexities of the transportation system are primarily attributable to the human factors of driving, including psychomotor skills, attention, judgment, decision making, and even human behavior in a social context.

Many transportation researchers have come to the realization that human factors are the critical elements in solving the most intransigent problems in surface transportation (e.g., safety and improved mobility). That is, the primary issues and problems to be solved are no longer about asphalt and concrete, or even electronics; instead they are focused on complex issues of driver performance and behavior (ITSA and DOT, 2002).

A substantial effort is under way worldwide to reduce the number of vehicular crashes. Although the crash rate in the United States is substantially lower than it was, crashes continue to kill more than 40,000 Americans annually and injure more than 3,000,000 (NHTSA, 2001). Because “driver error” is a contributing factor in more than 90 percent of these crashes, it is clear that solutions to transportation problems, perhaps more than in any other discipline, must be based on human factors.

One of the hurdles to assessing the human factors issues associated with driving safety is the continuing lack of data that provides a detailed and valid representation of the complex factors that occur in the real-world driving environment. In this paper, I describe some new techniques for filling the data void and present examples of how these techniques are being used to approach important safety problems.

DATA COLLECTION AND ANALYSIS

There are two traditional approaches to collecting and analyzing human factors data related to driving. The first approach is to use data gathered through epidemiological studies (often collected on a national level). These databases, however, lack sufficient detail to be helpful for many applications, such as the development of countermeasure systems or the assessment of interactions between causal and contributing factors that lead to crashes.

The second approach is to use empirical methods, including newer, high-fidelity driving simulators and test tracks. These methods are necessarily contrived and do not always capture the complexities of the driving environment or of natural behavior. For example, test subjects are often more alert and more careful in a simulation environment or when an experimenter is present in a research vehicle than when they are driving alone in their own cars. Thus, although empirical methods are very useful in other contexts, they provide a limited picture of the likelihood of a crash in a given situation or the potential reduction of that likelihood by a given countermeasure. State-of-the-art empirical approaches can only assess the relative safety of various countermeasures or scenarios. They cannot be used to predict the impact of a safety device or policy change on the crash rate.

Advances in sensor, data storage, and communications technology have led to the development of a hybrid approach to data collection and analysis that uses highly capable vehicle-based data collection systems. This method of data collection has been used by some auto manufacturers since the introduction of electronic data recorders (EDRs) several years ago. EDRs collect a variety of vehicular dynamic and state data that can be very useful in analyzing a crash. However, they currently lack sufficient measurement capability to assess many human factors-related issues.

Recently, a handful of efforts have been started to collect empirical data on a very large scale in a “pseudonaturalistic” environment: subjects use the instrumented vehicles for an extended period of time (e.g., up to a year) for their normal driving, with no in-vehicle experimenter or obtrusive equipment. Unlike EDRs, these systems also use unobtrusive video and electronic sensors. The goal is to create a data collection environment that is valid, provides enough detail, and is on a large enough scale to reveal the relationship between human factors (e.g., fatigue, distraction, and driver error) and other factors that contribute to crashes. Although these studies are not naturalistic in the strict sense, evidence collected thus far indicates that they can provide an accurate picture of the myriad factors involved in driving safety.

Three examples of pseudonaturalistic approaches are described below.

The Naturalistic “100 Car” Study

The primary objectives of this study are to develop instrumentation, methods, and analysis techniques to conduct large-scale, pseudonaturalistic investigations. This pilot study will collect continuous, real-time data over a period of one year for 100 high-mileage drivers. The data set will include five channels of video data and electronic data from many sensors. Data-triggering techniques will be used to identify crashes, near crashes, and other critical incidents for further analysis. Thus, the data will provide video and quantitative information associated with any “event” of interest. These could include the use of cellular telephones (triggered via radio-frequency sensors), unplanned lane deviations (detected by lane-position sensors), short time-to-collision situations (detected by radar sensors), or actual crashes (detected by accelerometers), just to name a few. The data can then be analyzed to determine the exact circumstances for each event. Once the database is complete, the information can be used to develop and evaluate concepts for countermeasures of all types, everything from engineering solutions to enforcement practices.
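The data-triggering idea described above can be sketched as a simple threshold scan over sensor samples. This is a hedged illustration only: the `Sample` fields, the function names, and both threshold values are assumptions made for the example and are not taken from the study's instrumentation.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass
class Sample:
    """One time step of (hypothetical) sensor data from an instrumented vehicle."""
    time_s: float
    range_m: Optional[float]      # radar distance to the lead vehicle; None if no target
    closing_speed_mps: float      # positive when the gap is shrinking
    longitudinal_accel_g: float   # accelerometer reading


# Illustrative thresholds, not values from the study.
TTC_THRESHOLD_S = 2.0
CRASH_ACCEL_G = 1.5


def time_to_collision(sample: Sample) -> Optional[float]:
    """Range divided by closing speed; None if there is no target or the gap is not closing."""
    if sample.range_m is None or sample.closing_speed_mps <= 0:
        return None
    return sample.range_m / sample.closing_speed_mps


def find_triggers(samples: List[Sample]) -> List[Tuple[float, str]]:
    """Return (time, label) pairs for samples that cross a trigger threshold."""
    events = []
    for s in samples:
        if abs(s.longitudinal_accel_g) >= CRASH_ACCEL_G:
            events.append((s.time_s, "possible crash"))
            continue
        ttc = time_to_collision(s)
        if ttc is not None and ttc < TTC_THRESHOLD_S:
            events.append((s.time_s, "short time-to-collision"))
    return events
```

In a scheme like this, each flagged timestamp would then be used to pull the surrounding video and sensor channels for manual review, so that analysts examine only the events of interest rather than a full year of continuous data.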

Researchers anticipate that the database created as part of the 100 Car Study will provide a wealth of information, similar to the information provided by a crash database, but with a great deal more detail. Data analysis will be conducted for vehicle-following and reaction time, the effects of distractions (e.g., electronic devices), driver behavior in proximity to heavy trucks, and the quantitative relationship between the frequency of crashes and other critical incidents. However, a primary purpose of the 100 Car Study is to develop instrumentation and data collection and analysis techniques for much larger studies (e.g., a study of 10,000 cars).

Field Operational Tests of Human Factors and Crash Avoidance

Another use of large-scale naturalistic driving data is to evaluate the benefits of engineering-based safety measures. Pseudonaturalistic studies using actual vehicles are currently under way to assess collision-avoidance systems (which use forward-facing radar to warn drivers of an impending crash) as well as lane-position monitoring and driver-alertness monitoring systems for heavy trucks.

Unlike simulator or test-track studies, these studies can test devices in situ and monitor the interactions of all of the environmental factors described above. They can also assess how drivers adapt over time. This is an extremely important aspect of a safety evaluation because, if drivers rely too much on a safety device, the crash rate may actually increase.

Driver Fitness-For-Duty Studies

Fitness-for-duty studies are geared toward helping policy makers assess the effects of fatigue, alcohol, prescription drugs, and other factors that can affect a driver’s behavior. For example, a recently completed pseudonaturalistic study assessed the quality of sleep that truck drivers obtain in a “sleeper berth” truck (Dingus et al., 2002). After epidemiological studies identified fatigue among truck drivers as a significant problem, it became important to determine the causes of the problem and possible ways to address it. Researchers hypothesized that a likely cause was the generally poor quality of sleep on the road. An additional hypothesis was that team drivers who attempted to sleep while the truck was moving would have the poorest quality sleep and, therefore, would be the highest risk drivers. A large-scale instrumented-vehicle study (56 drivers and 250,000 miles of driving data) was undertaken to assess sleep quality, driver alertness, and driver performance on normal revenue-producing trips averaging up to eight days in length. Using this methodology, it was determined that, although team drivers obtained a poorer quality of sleep than single drivers, the poor quality was offset by the efficient use of relief drivers. The results showed that single drivers suffered the worst bouts of fatigue and had the most severe critical incidents (by about 4 to 1). These and other important findings could only have been obtained from pseudonaturalistic studies.

SUMMARY

Significant progress in driving safety will require additional large-scale data from pseudonaturalistic studies that can complement existing data-gathering methods. Eventually, these data may improve our understanding of the causal and contributing factors of crashes so that effective countermeasures can be evaluated and deployed efficiently and quickly.

REFERENCES

Dingus, T., V. Neale, S. Garness, R. Hanowski, A. Keisler, S. Lee, M. Perez, G. Robinson, S. Belz, J. Casali, E. Pace-Schott, R. Stickgold, and J.A. Hobson. 2002. Impact of Sleeper Berth Usage on Driver Fatigue. Washington, D.C.: Federal Motor Carrier Safety Administration, U.S. Department of Transportation.

ITSA (Intelligent Transportation Society of America) and DOT (U.S. Department of Transportation). 2002. National ITS Program Plan: A Ten-Year Vision. Washington, D.C.: Intelligent Transportation Society of America and U.S. Department of Transportation.

NHTSA (National Highway Traffic Safety Administration). 2001. Traffic Safety Facts 2000: A Compilation of Motor Vehicle Crash Data from the Fatality Analysis Reporting System and the General Estimates System. DOT HS 809 337. Washington, D.C.: National Highway Traffic Safety Administration.

Implications of Human Factors Engineering for Novel Software User-Interface Design

MARY CZERWINSKI

Microsoft Research

Redmond, Washington

Human factors engineering (HFE) can help software designers determine if a product is useful, usable, and/or fun. The discipline incorporates a wide variety of methods from psychology, anthropology, marketing, and design research into the development of user-centric approaches to software design. The ultimate goal is to ensure that software products are discoverable, learnable, memorable, and satisfying to use.

HFE is a discipline partly of science and partly of design and technological advancement. The scientific aspects of the discipline primarily bring together principles from psychology and anthropology. HFE professionals require a background in research on human cognitive abilities (e.g., attention, visual perception, memory, learning, time perception, categorization) and on human-computer interaction (HCI) (e.g., task-oriented methods, heuristic evaluations, input, visualization, menu design, and speech research). Although HFE is based on solid principles from basic research, it is constantly evolving in response to improvements in technology and changes in practices and values.

For software products to be successful in terms of ease of use, HFE practices must be incorporated early in the product development cycle. In areas of fierce competition or when innovation is necessary for product success, user-centered design practices have been shown time and again to make or break a product. There are too many principles of HCI and software design to include in this short paper, but many excellent books are available on the subject (e.g., Newman and Lamming, 1995; Preece, 1994; Shneiderman, 1998; Wickens, 1984). However, we can easily summarize the rule for incorporating HFE into the software design process with one golden principle: know thy user(s). HFE professionals must research end users’ tasks, time pressures, working styles, familiarity with user interface (UI) concepts, and so forth. Many tools are available for determining these characteristics of the user base, including field work, laboratory studies, focus groups, e-mail surveys, remote laboratory testing, persona development, paper prototyping, and card sorts. Most, if not all, of these are used at various points in the product life cycle.

RATIONALE FOR USING HUMAN FACTORS ENGINEERING

The most obvious reason for including user-centered design practices during software product development is to ensure that the product will be useful (i.e., solves a real problem experienced by the target market) and usable (i.e., easy to learn and remember and satisfying to use). In addition, cost savings have been well documented (e.g., Bias and Mayhew, 1994; Nielsen, 1993). For example, a human factors engineer spent just one hour redesigning a graphical element for rotary-dial phones for a certain company and ended up saving the company about $1 million by reducing demand on central switches (Nielsen, 1993). A study of website design by Lohse and Spiller (1998) showed that the factor most closely correlated with actual purchases was the ease with which customers could navigate the website. In other words, ease of use is closely correlated with increased sales, especially on the web. Interestingly, the benefits can be measured not just in reduced costs or streamlined system design or sales figures. User-centered design also shortens the time it takes to design a product right the first time, because feedback is collected throughout the process instead of at the end of the process or after products have been shipped when reengineering is very costly. Finally, HFE saves money on the product-support side of the equation, because there are fewer calls for help from customers and fewer products returned. In the end, if users are satisfied with a company’s products, they are likely to buy more products from that company in the future.

User expectations of ease of use have steadily increased, especially in the web domain, where it only takes one click for users to abandon one site for another. In this way, users are spreading the word virtually about their demands for web design. The same is true for packaged software. In addition, several countries (most notably Germany) have adopted standards for ease of use that software companies must demonstrate before they can make international sales. To meet these standards, many companies require the help of HFE professionals.

Last but not least, user-centered design is one of the few ways to ensure that innovative software solves real human problems. The following case study illustrates how one company uses HCI research in the software development process.

CASE STUDY: LARGE DISPLAYS

Microsoft Research has provided HCI researchers with access to very large displays, often 42 inches wide, running at resolutions of 3072 × 768, using triple projections and Microsoft Windows XP support for multiple monitors. When we performed user studies on people using Windows OS software on 120-degree-field-of-view displays, it became immediately apparent that the UI had to be redesigned for these large display surfaces. For instance, the only task bar, which was displayed on the “primary” monitor of the three projections, did not stretch across all three displays. Similarly, there was only one Start menu, and one system tray (where notifications, icons, and instant messages are delivered) located in a far corner of only one of the displays. Even with these design limitations, our studies showed significant improvements in productivity when users performed everyday knowledge worker tasks using the larger displays. Our challenge was to create novel UIs that maintained or furthered the increased productivity but made using software programs easier. In addition, software innovations could not get in the way of what users already understood about working with Windows software, which already had a very large user base.

Following the principle of Know Thy Users, we hired an external research firm to do an ethnographic study of how 16 high-end knowledge workers multitasked and which software elements did or did not support this kind of work. In addition, we performed our own longitudinal diary and field studies in situ with users of large and multiple displays. This intensive research, which took more than a month, exposed a variety of problems and work style/task scenarios that were crucial during the design phase. We were surprised, for instance, to see variations in the way multiple monitors were used by workers in different domains of knowledge: designers and CAD/CAM programmers used one monitor for tools and menus and another for their “palettes”; other workers merely wanted one large display surface. We were also struck by the amount of task switching, and we realized that Windows and Office could provide better support for these users. After these studies had been analyzed and recorded, we had accomplished the following goals:

- We had developed a market profile of end users for the next two to five years and studied their working style, tasks, and preferences.

- We had developed personas (descriptions of key profiles in our user base) based on our research findings and developed design scenarios based on the fieldwork and the observed, real world tasks.

- We had developed prototypes of software UI design that incorporated principles of perception, attention, memory, and motor behavior and solved the real world problems identified in the research.

- We had tested ideas using tasks from real end-user scenarios and benchmarked them against existing software tools, iterated our software product designs, and retested them.

- We had learned a great deal about perception, memory, task switching, and the ability to reinstate context and navigate to high-priority information and content.

The design solution we invented was a success with our target end users. After packaging the software technology for transfer to our colleagues, we moved on to research on creative, next-generation visualizations based on our studies. We will continue to do user studies on our new, more innovative designs to ensure that we remain focused on providing better solutions to real problems for large displays than are provided by UIs with standard software.

CONCLUSION

I have argued that innovation and technology transfer for successful software products must be guided by sound HFE, because customers today expect it and clearly favor usable systems. In addition, sound HFE saves time and money during software development because there are fewer calls to the help desk, fewer product returns, more satisfied and loyal customers, more innovative product solutions, and faster development life cycles.

REFERENCES

Bias, R.G., and D.J. Mayhew. 1994. Cost Justifying Usability. Boston: Academic Press.

Lohse, G., and P. Spiller. 1998. Quantifying the Effect of User Interface Design Features on Cyberstore Traffic and Sales. Pp. 211-218 in Proceedings of CHI 98: Human Factors in Computing Systems. New York: ACM Press/Addison-Wesley Publishing Co.

Nielsen, J. 1993. Usability Engineering. Boston: Academic Press.

Newman, W.M., and M.G. Lamming. 1995. Interactive System Design. Reading, U.K.: Addison-Wesley.

Preece, J. 1994. Human-Computer Interaction. Menlo Park, N.J.: Addison-Wesley.

Shneiderman, B. 1998. Designing the User Interface, 3rd ed. Menlo Park, N.J.: Addison-Wesley.

Wickens, C.D. 1984. Engineering Psychology and Human Performance. Glenview, Ill.: Scott, Foresman & Company.

Frontiers of Human-Computer Interaction: Direct-Brain Interfaces

MELODY M. MOORE

Computer Information Systems Department

Georgia State University

Atlanta, Georgia

A direct-brain interface (DBI), also known as a brain-computer interface (BCI), is a system that detects minute electrophysiological changes in brain signals and uses them to provide a channel that does not depend on muscle movement to control computers and other devices (Wolpaw et al., 2002). In the last 15 years, research has led to the development of DBI systems to assist people with severe physical disabilities. As work in the field continues, mainstream applications for DBIs may emerge, perhaps for people in situations of imposed disability, such as jet pilots experiencing high G-forces during maneuvers, or for people in situations that require hands-free, heads-up interfaces. The DBI field is just beginning to explore the possibilities of real-world applications for brain-signal interfaces.

LOCKED-IN SYNDROME

One of the most debilitating and tragic circumstances that can befall a human being is to become “locked-in,” paralyzed and unable to speak but still intact cognitively. Brainstem strokes, amyotrophic lateral sclerosis, and other progressive diseases can cause locked-in syndrome, leaving a person unable to move or communicate, literally a prisoner in his or her own body. Traditional assistive technologies, such as specialized switches, depend on small but distinct and reliable muscle movement. Therefore, until recently, people with locked-in syndrome had few options but to live in virtual isolation, observing and comprehending the world around them but powerless to interact with it.

DBIs have opened avenues of communication and control for people with severe and aphasic disabilities, and locked-in patients have been the focus of much DBI work. Experiments have shown that people can learn to control their brain signals enough to operate communication devices such as virtual keyboards, operate environmental control systems such as systems that can turn lights and TVs on and off, and even potentially restore motion to paralyzed limbs. Although DBI systems still require expert assistance to operate, they have a significant potential for providing alternate methods of communication and control of devices (Wolpaw et al., 2002).

CONTROL THROUGH DIRECT-BRAIN INTERFACES

Brain control in science fiction is typically characterized as mind reading or telekinesis, implying that thoughts can be interpreted and directly translated to affect or control objects. Most real-world DBIs depend on a person learning to control an aspect of brain signals that can be detected and measured. Some depend on the detection of evoked responses to stimuli to perform control tasks, such as selecting letters from an alphabet. Some categories of brain signals that can be used to implement a DBI are described below.

Field potentials are synchronized activity of large numbers of brain cells that can be detected by extracellular recordings, typically from electrodes placed on the scalp (known as electroencephalography or EEG) (Kandel et al., 2000). Field potentials are characterized by their frequency of occurrence. Studies have shown that people can learn via operant-conditioning methods to increase and decrease the voltage of brain signals (in tens of microvolts) to control a computer or other device (Birbaumer et al., 2000; Wolpaw et al., 2000). DBIs based on processing field potentials have been used to implement binary spellers and even a web browser (Perelmouter and Birbaumer, 2000).

Other brain signals suitable for DBI control are related to movement or the intent to move. These typically include hand and foot movements, tongue protrusion, and vocalization. These event-related potentials have been recorded both from scalp EEGs to implement an asynchronous switch (Birch and Mason, 2000) and from implanted electrodes placed directly on the brain (Levine et al., 2000). Other research has focused on detecting brain signal patterns in imagined movement (Pfurtscheller et al., 2000).

Another aspect of brain signals that can be used for DBI controls is the brain’s responses to stimuli. The P300 response, which occurs when a subject is presented with something familiar or surprising, has been used to implement a speller. The device works by highlighting rows and columns of an alphabet grid and averaging the P300 responses to determine which letter the subject is focusing on (Donchin et al., 2000). P300 responses have also been used to enable a subject to interact with a virtual world by concentrating on a virtual object until it is activated (Bayliss and Ballard, 2000).
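The row-and-column selection described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not the procedure of Donchin et al.: the 6 × 6 grid layout, the function name `select_letter`, and the idea of reducing each flash to a single numeric P300 score are all hypothetical, and the scores in the example are invented.

```python
from statistics import mean

# Hypothetical 6 x 6 alphabet grid; the layout is an assumption for illustration.
GRID = [
    "ABCDEF",
    "GHIJKL",
    "MNOPQR",
    "STUVWX",
    "YZ1234",
    "56789_",
]


def select_letter(row_scores, col_scores, grid=GRID):
    """Return the letter at the row and column with the largest mean score.

    row_scores[i] holds one score per flash of row i, and col_scores[j] holds
    one score per flash of column j. Averaging over repeated flashes is what
    lets a weak response stand out from background activity.
    """
    row_means = [mean(scores) for scores in row_scores]
    col_means = [mean(scores) for scores in col_scores]
    best_row = row_means.index(max(row_means))
    best_col = col_means.index(max(col_means))
    return grid[best_row][best_col]


# Invented scores: every row and column gets a small baseline response,
# except row 2 and column 3, which contain the attended letter.
rows = [[1.0, 1.2, 0.9] for _ in range(6)]
cols = [[1.1, 0.8, 1.0] for _ in range(6)]
rows[2] = [4.8, 5.1, 5.3]
cols[3] = [5.0, 4.7, 5.2]

print(select_letter(rows, cols))  # letter at row 2, column 3 of GRID
```

Averaging several flashes per row and column trades speed for reliability: more repetitions give a cleaner estimate but lengthen the time needed to select each letter.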

Another approach to DBI control is to record from individual neural cells. A tiny hollow glass electrode was implanted in the motor cortices of three locked-in subjects enabling neural firings to be captured and recorded (Kennedy et al., 2000). Subjects control this form of DBI by increasing or decreasing the frequency of neural firings, typically by imagining motions of paralyzed limbs. This DBI has been used to control two-dimensional cursor movement, including iconic-communications programs and virtual keyboards (Moore et al., 2001).

FIGURE 1 General DBI architecture. Source: Mason and Birch, in press.
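As a rough illustration of control by firing frequency, as described for single-neuron recording, the sketch below counts spikes in a time window and maps the resulting rate to a cursor step. It is a simplified assumption and not the method of the cited studies: the function names, the two thresholds (10 Hz and 30 Hz), and the step size are hypothetical values chosen only to make the example concrete.

```python
def firing_rate(spike_times, window_start, window_end):
    """Return spikes per second within [window_start, window_end), in seconds."""
    count = sum(1 for t in spike_times if window_start <= t < window_end)
    return count / (window_end - window_start)


def cursor_step(rate_hz, low=10.0, high=30.0, step=5):
    """Map a firing rate to a horizontal cursor step in pixels.

    The thresholds and step size are hypothetical. A rate at or above `high`
    moves the cursor right, a rate at or below `low` moves it left, and
    anything in between leaves the cursor where it is.
    """
    if rate_hz >= high:
        return step
    if rate_hz <= low:
        return -step
    return 0


# Invented spike trains over a one-second window.
fast_train = [i / 40 for i in range(40)]  # 40 spikes in one second
slow_train = [0.1, 0.5, 0.9]              # 3 spikes in one second

for train in (fast_train, slow_train):
    rate = firing_rate(train, 0.0, 1.0)
    print(rate, cursor_step(rate))
```

The dead band between the two thresholds is a design choice in this sketch: it keeps the cursor still at resting firing rates so that only a deliberate increase or decrease produces movement.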

SYSTEM ARCHITECTURE

DBI system architectures have many common functional aspects. Figure 1 shows a simplified model of a general DBI system design as proposed by Mason and Birch (in press).

Brain signals are captured from the user by an acquisition method, such as EEG scalp electrodes or implanted electrodes. The signals are then processed by a feature extractor that identifies signal changes that could signify intent. A translator then maps the extracted signals to device controls, which control a device, such as a cursor, a television, or a wheelchair.
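The flow from acquisition through feature extraction and translation to device control can be sketched as a small pipeline. The code below is a minimal illustration of that flow only; it is not the Mason and Birch framework. The feature (mean absolute amplitude), the 10-microvolt threshold, the command names, and the `Light` class are all assumptions introduced for the example, and the signal windows are invented.

```python
from typing import List, Sequence


def extract_feature(samples: Sequence[float]) -> float:
    """Feature extractor: mean absolute amplitude of one signal window.

    A placeholder feature; real systems use far richer signal processing.
    """
    return sum(abs(s) for s in samples) / len(samples)


def translate(feature: float, threshold: float = 10.0) -> str:
    """Translator: map a feature value to a device command.

    The threshold (in microvolts) and the command names are hypothetical.
    """
    return "SELECT" if feature > threshold else "IDLE"


class Light:
    """Hypothetical controlled device: a light toggled by SELECT commands."""

    def __init__(self) -> None:
        self.on = False

    def apply(self, command: str) -> None:
        if command == "SELECT":
            self.on = not self.on


def run_pipeline(windows: Sequence[Sequence[float]], device: Light) -> List[str]:
    """Pass each acquired window through the extractor, translator, and device."""
    commands = []
    for window in windows:
        command = translate(extract_feature(window))
        device.apply(command)
        commands.append(command)
    return commands


# Invented signal windows standing in for the acquisition stage.
windows = [
    [2.0, -3.0, 1.0, -2.0],      # low amplitude
    [15.0, -14.0, 16.0, -13.0],  # high amplitude
    [1.0, 1.0, -1.0, -1.0],      # low amplitude
]
light = Light()
print(run_pipeline(windows, light), light.on)
```

Keeping the stages as separate functions mirrors the architecture in the text: the acquisition method, the feature extractor, or the controlled device can each be swapped without changing the others.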

APPLICATIONS

As the DBI field matures, considerable interest has been shown in applications of DBI techniques to real-world problems. The principal goal has been to provide a communication channel for people with severe motor disabilities, but other applications may also be possible. Researchers at the Georgia State University (GSU) BrainLab are focusing on applications for DBI technologies in several critical areas:

Restoring lost communication for a locked-in person is a critical and very difficult problem. Much of the work on DBI technology centers around communication, in the form of virtual keyboards and iconic selection systems, such as TalkAssist (Kennedy et al., 2000). Environmental control is also an important quality-of-life issue; environmental controls include turning a TV to a desired channel and turning lights on and off. The Aware Chair project at GSU is working on incorporating communication and environmental controls into a wheelchair equipped with intelligent, context-based communication that can adapt to the people in the room, the time of day, and the activity history of the user. The Aware Chair also presents context-based environmental control options (for example, if the room is getting dark, the chair provides an option for turning on the lights). Currently, the Aware Chair is being adapted for neural control using EEG scalp electrodes and a mobile DBI system.

The Internet has the potential to greatly enhance the lives of locked-in people. Access to the Internet can provide shopping, entertainment, educational, and sometimes even employment opportunities to people with severe disabilities. Efforts are under way to develop paradigms for DBI interaction with web browsers. The University of Tübingen, GSU, and University of California, Berkeley, have all developed browsers (Mankoff et al., 2002).

Another quality-of-life area lost to people with severe disabilities is the possibility of creating art and music. The GSU Neural Art Project is currently experimenting with ways to translate brain signals directly into a musical instrument device interface (MIDI) to produce sounds and visualizations of brain signals to produce graphic art.

A DBI application with significant implications is the neural prosthesis, or muscle stimulator controlled with brain signals. In effect, a neural prosthesis could reconnect the brain to paralyzed limbs, essentially creating an artificial nervous system. DBI controls could be used to stimulate muscles in paralyzed arms and legs to enable a subject to learn to move them again. Preliminary work on a neurally controlled virtual hand has been reported by Kennedy et al. (2000). DBI control has also been adapted to a hand-grasp neuroprosthesis (Lauer et al., 2000).

Restoring mobility to people with severe disabilities is another area of research. A wheelchair that could be controlled neurally could provide a degree of freedom and greatly improve the quality of life for locked-in people. Researchers are exploring virtual navigation tasks, such as virtual driving and a virtual apartment, as well as maze navigation (Bayliss and Ballard, 2000; Birbaumer et al., 2000).

CONCLUSION

Researchers are just beginning to explore the enormous potential of DBIs. Several areas of study could lead to significant breakthroughs in making DBI systems work. First, a much better understanding of brain signals and patterns, though difficult to achieve, is critical to making DBIs feasible. Invasive techniques, such as implanted electrodes, could provide better control through clearer, more distinct signal acquisition. Noninvasive techniques, such as scalp electrodes, can be improved by reducing noise and incorporating sophisticated filters. Although research to date has focused mainly on controlling output from the brain, future efforts will be focused on input channels (Chapin and Nicolelis, 2002). In addition to improvements in obtaining brain signals, much work remains to be done on using them to solve real-world problems.

REFERENCES

Bayliss, J.D., and D.H. Ballard. 2000. Recognizing evoked potentials in a virtual environment. Advances in Neural Information Processing Systems 12: 3-9.

Birbaumer, N., A. Kubler, N. Ghanayim, T. Hinterberger, J. Perelmouter, J. Kaiser, I. Iversen, B. Kotchoubey, N. Neumann, and H. Flor. 2000. The thought translation device (TTD) for completely paralyzed patients. IEEE Transactions on Rehabilitation Engineering 8(2): 190-193.

Birch, G.E., and S.G. Mason. 2000. Brain-computer interface research at the Neil Squire Foundation. IEEE Transactions on Rehabilitation Engineering 8(2): 193-195.

Chapin, J., and M. Nicolelis. 2002. Closed-Loop Brain-Machine Interfaces. Proceedings of Brain-Computer Interfaces for Communication and Control. Rensselaerville, New York: Wadsworth.

Donchin, E., K. Spencer, and R. Wijesinghe. 2000. The mental prosthesis: assessing the speed of a P300-based brain-computer interface. IEEE Transactions on Rehabilitation Engineering 8(2): 174-179.

Kandel, E., J. Schwartz, and T. Jessell. 2000. Principles of Neural Science, 4th ed. New York: McGraw-Hill Health Professions Division.

Kennedy, P.R., R.A.E. Bakay, M.M. Moore, K. Adams, and J. Goldwaithe. 2000. Direct control of a computer from the human central nervous system. IEEE Transactions on Rehabilitation Engineering 8(2): 198-202.

Lauer, R.T., P.H. Peckham, K.L. Kilgore, and W.J. Heetderks. 2000. Applications of cortical signals to neuroprosthetic control: a critical review. IEEE Transactions on Rehabilitation Engineering 8(2): 205-207.

Levine, S.P., J.E. Huggins, S.L. BeMent, R.K. Kushwaha, L.A. Schuh, M.M. Rohde, E.A. Passaro, P.A. Ross, K.V. Elisevish, and B.J. Smith. 2000. A direct-brain interface based on event-related potentials. IEEE Transactions on Rehabilitation Engineering 8(2): 180-185.

Mankoff, J., A. Dey, M. Moore, and U. Batra. 2002. Web Accessibility for Low Bandwidth Input. Pp. 89-96 in Proceedings of ASSETS 2002. Edinburgh: ACM Press.

Mason, S.G., and G.E. Birch. In press. A general framework for brain-computer interface design. IEEE Transactions on Neural Systems and Rehabilitation Engineering.

Moore, M., J. Mankoff, E. Mynatt, and P. Kennedy. 2001. Nudge and Shove: Frequency Thresholding for Navigation in Direct Brain-Computer Interfaces. Pp. 361-362 in Proceedings of SIG-CHI 2001 Conference on Human Factors in Computing Systems. New York: ACM Press.

Perelmouter, J., and N. Birbaumer. 2000. A binary spelling interface with random errors. IEEE Transactions on Rehabilitation Engineering 8(2): 227-232.

Pfurtscheller, G., C. Neuper, C. Guger, W. Harkam, H. Ramoser, A. Schlögl, B. Obermaier, and M. Pregenzer. 2000. Current trends in Graz brain-computer interface (BCI) research. IEEE Transactions on Rehabilitation Engineering 8(2): 216-218.

Wolpaw, J.R., D.J. McFarland, and T.M. Vaughan. 2000. Brain-computer interface research at the Wadsworth Center. IEEE Transactions on Rehabilitation Engineering 8(2): 222-226.

Wolpaw, J.R., N. Birbaumer, D. McFarland, G. Pfurtscheller, and T. Vaughan. 2002. Brain-computer interfaces for communication and control. Clinical Neurophysiology 113: 767-791.