2

Status of Aviation Weather Forecasting Research

The research community has helped develop many advances in convective weather forecasting. The motivation of this workshop was to bring together members of the operational forecasting, user, and research communities to begin to explore the potential for improving 2- to 6-hour convective forecasts used for flight planning. The second session of the workshop focused on research approaches and strategies to address convective weather forecasting. The discussions in this session were structured around four questions provided by the Federal Aviation Administration (FAA):

- What approaches and strategies will be most effective to get an accurate 2- to 6-hour forecast of areas of convection for aviation use in the next 5 to 10 years? (Accurate means a desired false alarm rate (FAR) of ≤0.20, a desired probability of detection (POD) of ≥0.80, a maximal FAR of 0.30, and a minimal POD of 0.60.)

- What specific scientific enabling capabilities are needed to realize these gains and when will they be available? For example, what improvements, in observations, algorithms, analyses, and numerical modeling, are likely to yield the best results? What are the major gaps in the current research and development activities that need to be addressed?

- What is the most appropriate way to present the forecast in an operational setting?

  - Consider the two main uses are flight planning and traffic flow management.

  - Consider how the forecast will be developed and presented (i.e., purely probabilistic or deterministic).

- How will we know when we are done? What verification scheme makes the most sense from an aviation perspective?

Many workshop participants thought that the 5- to 10-year goals for forecast accuracy set by the FAA in preparation for this workshop (desired FAR ≤0.20, desired POD ≥0.80, maximal FAR of 0.30, minimal POD of 0.60) were unrealistic and, in fact, ill posed. That is, improvement in skill as measured by metrics such as POD and FAR does not necessarily translate into increased value for the end user owing to numerous mitigating influences (e.g., constraints on the overall air traffic system, nonweather impacts, and industry-government politics). Further, such metrics, which are perfectly suited for large-scale weather features, do not apply to spatially irregular and highly intermittent convective phenomena. Because of these concerns, the workshop presenters did not focus specifically on these goals but rather on improving forecasts more generally.
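The FAA targets quoted above can be made concrete with a small sketch. It assumes the contingency-table definitions commonly used in forecast verification, POD = hits / (hits + misses) and FAR = false alarms / (hits + false alarms); the report itself does not spell these out. The class name, the function names, the rating labels, and the sample counts are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Contingency:
    hits: int          # convection forecast and observed
    misses: int        # convection observed but not forecast
    false_alarms: int  # convection forecast but not observed


def pod(c: Contingency) -> float:
    """Probability of detection: share of observed events that were forecast."""
    return c.hits / (c.hits + c.misses)


def far(c: Contingency) -> float:
    """False alarm ratio (assumed definition): share of forecasts that did not verify."""
    return c.false_alarms / (c.hits + c.false_alarms)


def faa_rating(c: Contingency) -> str:
    """Compare POD and FAR with the thresholds quoted in the text."""
    p, f = pod(c), far(c)
    if p >= 0.80 and f <= 0.20:
        return "desired"
    if p >= 0.60 and f <= 0.30:
        return "acceptable"  # hypothetical label for the minimal POD / maximal FAR band
    return "below minimum"


# Illustrative counts only, not data from the workshop.
example = Contingency(hits=70, misses=30, false_alarms=25)
print(round(pod(example), 2), round(far(example), 2), faa_rating(example))
```

The sketch does not guard against empty categories (for example, no observed events), and, as the participants noted, meeting these thresholds says nothing by itself about value to the end user.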

This chapter summarizes the information presented during this session of the workshop. Text boxes for each discussion topic call out key points identified by individual presenters. These key points do not reflect the consensus of the presenters or the committee.

STRATEGIES FOR IMPROVING CONVECTIVE FORECASTS

Accurate prediction of convection in the 2- to 6-hour time range may not be amenable to an “engineered” solution without further research related to improved understanding of convection and the practical limits to its predictability. During the workshop, Richard Carbone of the National Center for Atmospheric Research (NCAR), J. Michael Fritsch of Pennsylvania State University, and Cynthia Mueller of NCAR presented their visions of a 2- to 6-hour forecast strategy. These respective visions follow sequentially below.

Richard Carbone

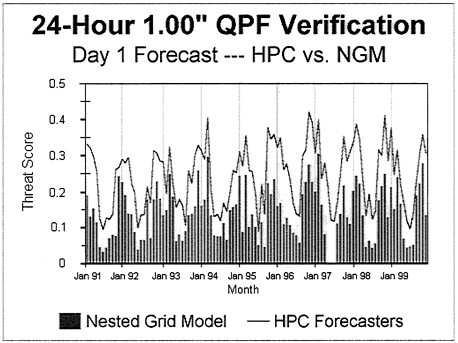

Mr. Carbone noted that traditional numerical weather prediction (NWP), including all operational and mesoscale models that parameterize convection, has not fared well in predicting convection generally, though other presenters noted successes in specific cases. For example, threat score¹ performance for forecasts of at least 1 inch of rain at a 24-hour range exhibits very little skill in summer (see Figure 2–1). Initial condition uncertainties, model physics, and chaotic evolution of convection are among the principal impediments to accurate forecasts.

FIGURE 2–1. Monthly threat scores from NCEP’s Hydrometeorological Prediction Center. Note the midsummer minima in forecast performance.

Nowcasting is at the other end of the spectrum of approaches to forecasting weather. So-called expert systems, mainly using knowledge-based rules, neural networks, and similar types of logic, have made substantial progress in the 0- to 60-minute range and also exhibit some skill out to the 2-hour range. There appears to be a predictability “wall” that resides at a range short of 3 hours for all but the most strongly forced systems, which are usually associated with fronts and cyclones. Nowcasting, as currently implemented, is unlikely to make much headway in skillful forecasts of weakly forced convection during midsummer. This is because the disturbed local environment, created by antecedent convection and other initial condition uncertainties, creates too many degrees of freedom for rule-based estimates of convective evolution, at least at the cell or storm scale. Nowcast systems have recently begun to include information from adjoints of dynamical models. This is an early stage of convergence between simple extrapolation of observations and NWP.

Parameterized convection in NWP models is a principal limitation to skillful predictions for many of the same reasons attributed to nowcasting. Advanced data assimilation techniques, combined with explicit convection-resolving models, hold promise for the future of dynamically based forecasts. Encouraging results can now be obtained from simulations of selected cases. However, routine forecasts from these methods regularly have major “busts.” Advanced variational assimilation of radar and satellite data is needed to keep models on track. Furthermore, trajectories indicated by skillful nowcasts may also influence dynamical model trajectories via data assimilation, blurring the distinction between nowcasting and NWP forecasts.

Using ensembles for probabilistic prediction can serve to quantify forecast uncertainty; however, knowledge is scant about true forecast sensitivities, initial state limitations, and how best to generate or select members of an ensemble. An optimist would attempt to observe initial states at a much higher temporal and spatial resolution, assuming model error per se is a small part of the problem. However, large model error, resulting from sensitivity to poor representation of microphysics, related diabatic heating effects, and deficiencies in representations of boundary and surface layers, appears to be a significant part of the forecast problem. Research to decipher the largest forecast sensitivities is badly needed, as is research on the applicability and effectiveness of advanced data assimilation methodologies such as four-dimensional variational assimilation (4-DVAR) and Ensemble Kalman Filtering.
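As a minimal illustration of how an ensemble can express uncertainty, the sketch below converts a set of member forecasts at one location into an exceedance probability by counting members. The function name, the 40 dBZ threshold, and the member values are hypothetical, and the resulting fraction is only a calibrated probability if the ensemble itself is reliable, which is exactly the open question raised above.

```python
def exceedance_probability(member_values, threshold):
    """Fraction of ensemble members at or above a threshold."""
    if not member_values:
        raise ValueError("ensemble must contain at least one member")
    return sum(v >= threshold for v in member_values) / len(member_values)


# Hypothetical forecast reflectivity (dBZ) at one grid point from eight members.
members_dbz = [22.0, 41.5, 38.0, 45.2, 18.3, 40.0, 36.9, 43.1]
p_storm = exceedance_probability(members_dbz, threshold=40.0)
print(p_storm)  # 4 of the 8 members reach 40 dBZ
```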

Over the past 2 years, research on the climatology of convection over the United States has led to some encouraging findings about the apparent intrinsic predictability of convective events as viewed through a coarse two-dimensional filter. Examining the distribution, diurnal cycle, and autocovariance properties of convection on a continental scale yields strong signals that finer scales of analysis obscure, mainly due to chaotic evolutions at the storm scale. Nearly every day, coherent convective “episodes” span 1,000 km or more and last 20 hours or more, despite the absence of strong forcing at the synoptic scale. This convection often contains the strongest and largest events on a given day as it propagates across the country. The episodes consist of sequences of convective systems, exhibiting recurrent coherent regeneration of convection that spans long distances and time periods. More than 5,400 such events have been observed in the Weather Surveillance Radar 88 Doppler (WSR-88D) data over four warm seasons (1997–2000). The findings suggest that a coarse-grained look at convection may yield substantial statistical predictability, even without the aid of NWP guidance. For example, at 0600 UTC, an event that has existed for 6 hours near 100° W longitude has a 70 percent chance of continuing to propagate in a predictable manner for 6 additional hours. Furthermore, application of NWP guidance to such observations-based predictions should markedly improve probabilistic predictions through the addition of information on forcing at meso-synoptic scales.

Mr. Carbone concluded by advocating a fusion of nowcasting and NWP techniques, that is, statistical-dynamical prediction of secondary convection. This approach would exploit the statistical coherence of convection by combining knowledge of this behavior with knowledge of large-scale and mesoscale forcing that lies in the path ahead of antecedent organized convection. Knowledge of such forcing is a strength of NWP models, and it will improve with better observations and more skillful assimilation schemes. Such methods, however, do not address, except climatologically, the issue of convective initiation (i.e., prediction of primary convection) and will yield probabilistic predictions, not deterministic ones. This is just one of several possible approaches to probabilistic prediction.

J. Michael Fritsch

Dr. Fritsch noted that weather forecasting has traditionally focused on time- and space scales that, for the most part, are longer and larger, respectively, than the needs of the aviation industry. Specifically, forecasters have concentrated on synoptic-scale systems to forecast the “today, tonight, tomorrow” time period. To do this, forecasters depend strongly on synoptic-scale numerical model guidance. However, the aviation industry operates in a mesoscale world and requires more specific and more frequent guidance than that provided by the current NWP-based forecasting system. Moreover, numerical models have inherent limitations when it comes to providing guidance in a timely manner and remain notoriously poor at forecasting cloud-scale and mesoscale phenomena. During the workshop, Drs. Fritsch and Joby Hilliker of Pennsylvania State University described a technique using high-frequency weather observations in nonlinear statistical forecast models to improve forecasts of convection.

The problem of dealing with small time- and space scales is exacerbated in the presence of deep convection. Historically, levels of skill for forecasting convective storms are poor. This is readily evident from cursory inspection of historical records of quantitative precipitation forecasts. Assuming a typical bias of 1.1, the National Oceanic and Atmospheric Administration Hydrometeorological Prediction Center Model is only able to forecast 30 to 40 percent of the observed area of heavy rainfall (a proxy for convective storms) in the “day 1” forecast period (the 24-hour period from 12 to 36 hours after model initialization).
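The figures in this paragraph can be related to the threat score mentioned earlier through a short calculation. The sketch assumes the usual area-based definitions, which the report does not state: bias = forecast area / observed area, and threat score = correctly forecast area / (forecast area + observed area − correctly forecast area). It also assumes that "30 to 40 percent of the observed area" refers to the correctly forecast fraction. The function names are hypothetical, and the results are illustrative arithmetic, not published scores.

```python
def threat_score(observed_area, forecast_area, hit_area):
    """Area-based threat score: overlap divided by the union of forecast and observed areas."""
    return hit_area / (forecast_area + observed_area - hit_area)


def threat_score_from_bias(bias, fraction_detected):
    """Threat score implied by an area bias and the detected fraction of the observed area."""
    observed = 1.0                           # normalize the observed area to 1
    forecast = bias * observed               # bias = forecast area / observed area
    hit_area = fraction_detected * observed  # correctly forecast part of the observed area
    return threat_score(observed, forecast, hit_area)


for fraction in (0.30, 0.40):
    print(fraction, round(threat_score_from_bias(1.1, fraction), 3))
```

Under these assumptions, a bias of 1.1 with 30 to 40 percent of the observed area captured corresponds to a threat score of roughly 0.17 to 0.24.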

Because the aviation industry operates on such short timescales, guidance from numerical models is generally of limited value to air traffic management. Interviews of Terminal Radar Approach Control Traffic Management coordinators conducted by Forman et al. (1999) revealed that the optimal lead time needed to manage current traffic is 30 minutes. Traditional models, such as the Eta Model, are only operationally updated every 6 hours, a time interval longer than the duration of a typical domestic flight (Black, 1994). The latest version of the Rapid Update Cycle model is updated hourly and offers 1-, 2-, and 3-hour forecasts, yet the parameters most critical to aviation (e.g., convective ceiling, thunderstorm cell location) are absent in conventional model output (Benjamin et al., 1998).

Aviation is a decision industry; it needs reliable unbiased guidance to operate at peak efficiency. However, most model guidance is still deterministic, meaning that for a given lead-time a single forecast is produced. The user is left to wonder how much confidence to place in the forecast. Considering the multitude of nonlinearities that exist and interact in the atmosphere, the crude approximations applied in model initialization, and the various parameterization schemes and algorithms applied to output aviation parameters, undoubtedly, there can be large uncertainty and bias. Traffic flow management personnel not only recognize this inherent uncertainty when predicting weather but account for it through careful cost-benefit decision making in an effort to minimize the airlines’ operating costs. For example, Andrews (1993) states that the optimal ground-holding time of an aircraft is dictated by the mean and standard deviation of the flight’s predicted delay. This information is inextricably linked with uncertainties about the onset and duration of an adverse weather event. The uncertainties incorporated into cost-benefit analyses can best be captured, then, using reliable probabilistic forecast guidance. Therefore, it is not enough that a model predicts the occurrence of a given weather condition. It is also necessary to know the likelihood (probability) that the model prediction will be correct. It follows that no matter which model is run, statistical postprocessing is necessary to correct for bias and to obtain an accurate measure of the uncertainty in the forecast.
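To show why both the mean and the standard deviation of the predicted delay matter, the following sketch uses a textbook newsvendor-style cost model. This is not Andrews's (1993) formulation, which is not reproduced in this report. It assumes the delay is normally distributed, that each minute of airborne holding costs `cost_air`, and that each minute of unnecessary ground holding costs `cost_ground`; all names and numbers are hypothetical.

```python
from statistics import NormalDist


def ground_hold_minutes(mean_delay, std_delay, cost_air, cost_ground):
    """Ground hold that minimizes expected cost under the assumptions above.

    Holding too briefly incurs airborne holding cost; holding too long wastes
    ground time. The optimum is the delay quantile at the critical ratio
    cost_air / (cost_air + cost_ground). Requires std_delay > 0.
    """
    critical_ratio = cost_air / (cost_air + cost_ground)
    hold = NormalDist(mean_delay, std_delay).inv_cdf(critical_ratio)
    return max(0.0, hold)  # a negative hold has no operational meaning


# Illustrative numbers: 30-minute mean delay, 10-minute standard deviation,
# airborne holding assumed three times as costly as ground holding.
print(round(ground_hold_minutes(30.0, 10.0, cost_air=3.0, cost_ground=1.0), 1))
```

With equal costs the optimum is simply the mean delay; as the forecast uncertainty (standard deviation) grows, the hold shifts further in the direction of the cheaper error, which is why a reliable measure of uncertainty has direct economic value.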

It is possible that ensembles of high-resolution model forecasts could provide a measure of uncertainty for the aviation system. However, providing an ensemble of high-resolution model forecasts and then postprocessing the output in a timely manner is unlikely in the foreseeable future.

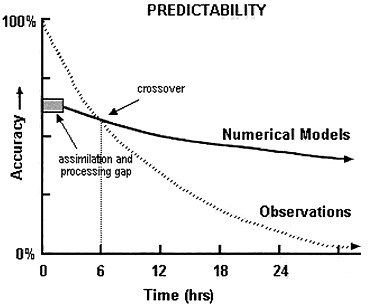

Considering all of the above, Dr. Fritsch asserted that an alternative strategy to simply improving NWP may be necessary to provide the type of guidance needed by the aviation industry. One alternative would be to blend short-term observations-based statistical forecasting techniques with NWP output in a manner that will provide a time continuum of reliable quantitative measures of uncertainty that will fill the short-term gap created by the pre- and postprocessing required by NWP (see Figure 2–2). Observations-based systems run quickly and can be automated every few minutes as new observations become available. These systems will likely prove to be of great utility to the aviation industry.
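One simple way to picture such a blend is a lead-time-dependent weighted average of the two probability sources. The sketch below is an assumption for illustration only, not the presenters' method: the logistic weight, the 3-hour crossover, and the sharpness value are hypothetical, and in practice the weights would be fit to verification statistics.

```python
import math


def blend_probability(p_obs, p_nwp, lead_hours, crossover_hours=3.0, sharpness=1.5):
    """Blend an observations-based probability with an NWP-based one.

    The observations-based forecast dominates at short lead times and the
    NWP-based forecast at long lead times; the two are weighted equally
    at `crossover_hours`.
    """
    w_obs = 1.0 / (1.0 + math.exp(sharpness * (lead_hours - crossover_hours)))
    return w_obs * p_obs + (1.0 - w_obs) * p_nwp


# Hypothetical thunderstorm probabilities from the two sources.
for lead in (0.1, 1.0, 3.0, 6.0):
    print(lead, round(blend_probability(0.8, 0.2, lead), 3))
```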

During their presentations, Drs. Fritsch and Hilliker used a case study to demonstrate a prototype of an observations-based system. Radar images along with the areal distribution of the probabilities of thunderstorms for a 30-minute lead time were shown. They demonstrated how the system can produce forecasts for a suite of lead times ranging from the ultra short term (i.e., 6 minutes) to the traditional short term (i.e., 6 hours). The rapid-update capability of the forecast system also was shown, with 6-minute updates to the suite of forecasts displayed.

Drs. Fritsch and Hilliker also noted that, although traditional nowcasting techniques have demonstrated some success at forecasting well-organized mesoscale convective systems (e.g., squall lines), a large fraction of convective events exhibit chaotic organization and behavior, making traditional nowcasting approaches to forecasting exceedingly difficult. For these chaotic systems, the only reasonable approach is probabilistic guidance.

FIGURE 2–2. The decline in accuracy of forecasts based on numerical models and observations with time. Shaded gray area indicates time gap during which model products are not available due to the time needed to assimilate and process observations.

Cynthia Mueller

Ms. Mueller, from the perspective of an aviation nowcast developer, commented on the predictive skill currently associated with storms, the performance of current nowcast systems, and some recommended areas of research and testing. Forecasts in the 2- to 6-hour range have proven to be quite difficult, and there has been little focus in the research community on this forecast period. Forecast skill is low because storm evolution is rapid and nonlinear. Large areas of convection are more likely to persist and therefore are more predictable than small isolated storms. Experience with isolated storms reveals low predictive skill at ranges greater than 1 hour, but such isolated convective storms are easily circumnavigated in the en-route environment. A multicellular linear storm complex or a mesoscale convective system can last for several hours and exhibit propagation speeds that are reasonably stable and thus enable skillful extrapolation forecasts out to 2 to 3 hours. There is significant predictive skill associated with large-scale linear systems at longer ranges (1 to 6 hours); however, information that is useful to a pilot or dispatcher (e.g., to determine the location where a convective line can either be penetrated or circumnavigated) is often poorly predicted. Low skill is associated with features such as gaps in convection, regions of nonturbulent low storm top heights, and the length of storm lines.

Prediction studies have tended to focus on types of storm systems and structure and not environmental conditions. Large systems that are forced by mesoscale triggers (such as interactions between a gust front, undular bore, or terrain-induced circulations) can be similar in size to organized convection that is commonly associated with a synoptic-scale front. To date, studies have not been performed to quantify predictability of storm initiation, growth, and decay.

Concerning the techniques currently used in nowcasting systems, Ms. Mueller spoke to two methodologies applicable to the 2- to 6-hour range: (1) observations-based systems (also called data fusion or expert systems) and (2) numerical models that assimilate radar and satellite data.

Observations-based systems primarily use current conditions and trends to forecast convection. Examples of such systems include the Aviation Weather Research Program’s Convective Weather Forecasts (Terminal Convective Weather Forecast, Regional Convective Weather Forecast, and National Convective Weather Forecast), the United Kingdom’s Gandolf and Nimrod systems, and the Auto-Nowcast system (ANC). A primary component of the ANC system is its ability to identify and characterize boundary layer convergence lines. “Feature” detection algorithms and the Variational Doppler Radar Assimilation System (Sun and Crook, 2001) are used to monitor and nowcast boundary layer structure. Although the ANC system has shown promising results for near-term (0- to 2-hour) forecasts, it does not have predictive capability beyond 2 hours.

There are several sources of uncertainty and errors in the ANC forecasts. One source of error is poor extrapolation of features, including boundaries (lines of boundary layer convergence), cloud features, and storms. Extrapolation errors, which compound with forecast length, are due to nonlinear motion in the features and algorithm limitations. A second source of error is the inability to nowcast secondary convection. Research has shown that gust fronts play a major role in organizing convection. However, there is no skill in forecasting which storm will produce a gust front that goes on to initiate secondary convection. Another limitation in the forecasts is that the initiation, growth, and dissipation of elevated convection are not captured. Elevated convection often occurs under stable nocturnal boundary layer conditions and also in association with overrunning at stationary and warm fronts. A final limitation is that boundary layer moisture and temperature are not observed on scales thought necessary to nowcast storms. Stability information is obtained by indirect means such as observation of cloud fields, which can introduce errors.

Ms. Mueller also cited several sources of uncertainty in NWP-based forecasts, noting in particular that higher resolution currently does not lead to improved predictive skill. She identified a lack of physical understanding, most notably of convective life cycles and processes associated with secondary convection; initialization deficiencies associated with boundary layer and storm structures, and in-storm microphysics; and grid resolution and domain size limitations. Research most needed to overcome these limitations includes:

- Development of high-resolution boundary layer wind and thermodynamic field analyses: Efforts are needed to determine the multisensor observations most applicable for characterizing the boundary layer and near-storm environment. Candidate sensors and platforms include radars, mesonets, satellites, the Aircraft Communications Addressing and Reporting System, and surface-based profilers.

- Predictability, scale interaction, and climatology studies: Basic research in these areas is necessary both to provide a better understanding of the phenomena and to identify realistic limits associated with forecasts in the 2- to 6-hour range. Currently, explicit forecasts of storms are expected at the same scale as the observations. At longer ranges, however, this is not a well-founded expectation because there is no objective basis to establish the spatial or temporal limitations. Further, there is an emphasis on the use of probabilistic forecasts, but from an aviation user standpoint the most appropriate characteristics of these forecasts are unknown.

- Use of NWP guidance in convective forecasts: Data fusion or expert system techniques need to be developed that combine numerical model forecasts, statistics, algorithmic observations, and human forecasts. For example, data fusion techniques could be developed to determine (1) if confidence values can be assigned to 2- to 6-hour NWP deterministic forecasts of convective storms and (2) whether the NWP explicit forecasts can be improved by applying time and space corrections based on observations available after forecasts are issued or human input is applied. Indeed, it is important to the aviation community that forecasts are updated routinely in a timely manner. For example, extrapolation forecasts at the 0- to 2-hour time period have proven to be useful partially because of their frequent update rate. For a forecast to be of utility and trusted by the aviation community, it must be updated frequently.

- Utility of water vapor: Convection is known to be highly sensitive to boundary layer water vapor; however, the actual distribution and variability of water vapor over continents in summer are poorly understood. The International H2O Project (IHOP), conducted in Oklahoma, Kansas, and Texas in 2002, offers unique datasets to narrow the bounds of uncertainty and to further evaluate forecast sensitivity. Numerical studies associated with the IHOP datasets should be pursued.

Ms. Mueller summarized by saying that the 2- to 6-hour forecast problem will require a combined NWP and expert system/statistical approach. Improvements should be expected in the 5-year time frame for forecasts of multicellular systems that are forced by large-scale features. Improvements in forecasts of systems triggered by mesoscale features or elevated convection are farther down the road. Efforts are needed to define products that are realistic from a science standpoint and to an aviation user. This may best be accomplished through a “testbed” approach.

Key Points Identified by Presenters on Effective Strategies for 2- to 6-Hour Forecasts

RESEARCH GAPS AND NEEDS

Andrew Crook’s presentation focused on approaches and strategies for achieving an accurate 2- to 6-hour forecast of convection. Theoretical studies of turbulent flows suggest that flow predictability is limited to the order of the eddy turnover time. Extending these results to moist convective flows suggests the following predictability timescales for these convective phenomena:

- Large mesoscale convective systems, 3 to 6 hours

- Squall lines, 2 to 3 hours

- Large thunderstorms, 1 to 2 hours

- Single convective cells, 10 to 60 minutes
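The timescales listed above can be encoded as a small lookup that answers which phenomena remain within their suggested predictability limit at a given forecast lead time. Treating the upper end of each range as a hard cutoff is a simplifying assumption made here for illustration; the names in the sketch are hypothetical.

```python
# Predictability ranges in hours, taken from the list above.
PREDICTABILITY_HOURS = {
    "large mesoscale convective system": (3.0, 6.0),
    "squall line": (2.0, 3.0),
    "large thunderstorm": (1.0, 2.0),
    "single convective cell": (10 / 60, 1.0),
}


def within_predictability(lead_hours):
    """Phenomena whose upper predictability limit is at or beyond the lead time."""
    return [
        name
        for name, (_low, high) in PREDICTABILITY_HOURS.items()
        if lead_hours <= high
    ]


for lead in (0.5, 2.0, 4.0):
    print(lead, within_predictability(lead))
```

At a 4-hour lead time only large mesoscale convective systems remain in the list, which is consistent with the view that the 2- to 6-hour goal is attainable for only part of the convective spectrum.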

To examine the predictability of convection initiation, it is necessary to differentiate between cases that are strongly forced by large-scale circulations and those where the forcing is weak. A number of modeling studies have indicated that convection initiation in weakly forced environments is highly sensitive to thermodynamic parameters that are within observational error bounds. This limits the predictability to timescales of less than about an hour or, for example, to times when the first convection is observed. The exception to this result appears to be for convective systems that are strongly forced by large-scale circulations. The initiation of these convective systems appears to have more predictability in the 2- to 6-hour time frame as long as the large-scale circulation pattern is predicted accurately.

Given this limitation, Dr. Crook suggested that the FAA goal of obtaining an accurate forecast of areas of convection in the 2- to 6-hour time frame in the next 5 to 10 years will only be met for a limited number of convective phenomena. These include large mesoscale convective systems and convective systems generated by well-defined large-scale circulation patterns. The rest of the convective spectrum (which probably accounts for 80 percent of the convection observed in the United States) will be difficult to predict in the 2- to 6-hour time frame. Progress will only be made for this portion of the convective spectrum by embracing probabilistic forecasting, possibly using ensemble techniques.

Kelvin Droegemeier of the University of Oklahoma summarized the current state of research and development in the explicit prediction, both deterministic and stochastic, of deep convective storms. To understand the associated challenges, it is important to view this topic in a historical context. Early “synoptic-scale” models (e.g., the National Centers for Environmental Prediction limited fine-mesh model, the nested grid model), which operated at grid spacings of approximately 80 to 150 km, were incapable of explicitly representing convective storms because they utilized a hydrostatic framework, which assumes that vertical accelerations are small. They also were unable to resolve the spatial scales associated with convection and were initialized using observations on spatial scales far larger than those of convective storms. The trend toward increasingly finer grid spacings (the current operational Eta model uses a grid spacing of 12 km), brought about by sustained increases in computer power, posed few scientific challenges because the physical assumptions underlying model formulation remained essentially unchanged: the flow was hydrostatic and clouds could not be represented explicitly.

The move of today’s operational models from resolutions of about 10 down to less than 3 km, however, is entirely different because no clear scale separation exists in this range for convective clouds. Convection generally cannot be resolved explicitly, and closure assumptions regarding its representation as a subgrid-scale phenomenon generally are not applicable (Molinari, 1993). Consequently, the next major step in numerical prediction is likely to come not with the continued extension of today’s models down to grid spacings of a few kilometers, but rather via a jump directly to nonhydrostatic models at grid spacings of approximately 1 km, unless, of course, cumulus parameterization schemes suitable for application at resolutions between about 10 and a few kilometers can be developed.

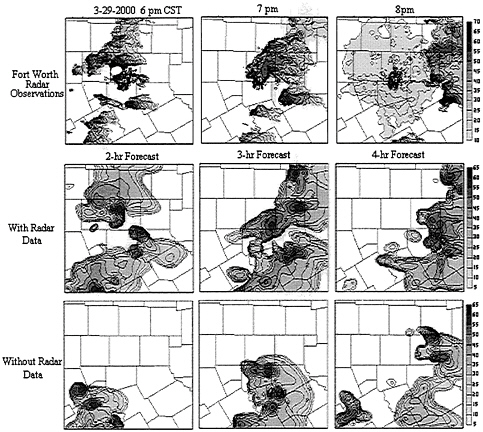

Research conducted during the past several years at the Center for Analysis and Prediction of Storms at the University of Oklahoma (Droegemeier, 1997; Carpenter et al., 1999; Wang et al., 2001; Weygandt et al., 2002; and Xue et al., 2003) has demonstrated considerable skill in the explicit prediction of convective storms, especially for events with moderate to strong meso- or synoptic-scale forcing and in cases where high-resolution Doppler radar observations are available (see Figure 2–3). The prediction of “airmass”-type storms, and general regions of unorganized convection, is a much greater challenge, and forecasting a specific storm in such instances may be impossible.

The above work and that performed by others (Sun and Crook, 1998; Belair and Mailhot, 2001; Mass et al., 2002) reveal the existence of numerous challenges on the path toward reliable operational storm-scale NWP. One such challenge is the tremendous nonlinearity of the small-scale atmosphere as exhibited by its predictive sensitivity to atmospheric, surface, and subsurface properties, particularly as they influence the timing and location of storm initiation and demise. A second challenge is associated with the difficulty of assigning objective skill measures to forecasts of highly intermittent phenomena. For example, a predicted thunderstorm that is correct in every detail yet has a spatial error of 20 miles, or a temporal error of 30 minutes, might represent an amazing feat scientifically and be of great practical value. Yet by traditional statistical measures, it would have zero skill (i.e., zero overlap with observations). A final hurdle is the clear need for probabilistic forecasting via ensemble techniques, and the combination of model output with other information, to create probabilistic products that can be applied directly to cost-benefit and economic decision models.
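The zero-overlap problem can be reproduced on a toy grid. In the sketch below a storm is forecast with exactly the right shape but displaced by six grid cells, so a point-by-point threat score is zero. A neighborhood-tolerant variant, shown here only as one common way of relaxing the point-match requirement and not as a method proposed at the workshop, gives credit when forecast and observed cells fall within a chosen radius. The grid, the displacement, and all function names are hypothetical.

```python
def threat_score(forecast, observed):
    """Point-by-point threat score for two sets of (x, y) storm cells."""
    union = len(forecast | observed)
    return len(forecast & observed) / union if union else 0.0


def shift(cells, dx, dy):
    """Displace every cell by (dx, dy) grid units."""
    return {(x + dx, y + dy) for x, y in cells}


def neighborhood_threat_score(forecast, observed, radius):
    """Threat score in which a match within `radius` cells counts as a hit."""
    def near(cell, others):
        x, y = cell
        return any(abs(x - ox) <= radius and abs(y - oy) <= radius for ox, oy in others)

    hits = sum(near(cell, forecast) for cell in observed)
    misses = len(observed) - hits
    false_alarms = sum(not near(cell, observed) for cell in forecast)
    total = hits + misses + false_alarms
    return hits / total if total else 0.0


observed = {(x, y) for x in range(10, 14) for y in range(10, 14)}  # 4 x 4 storm
forecast = shift(observed, 6, 0)  # same storm, displaced six cells east

print(threat_score(forecast, observed))                         # no overlap
print(neighborhood_threat_score(forecast, observed, radius=6))  # displacement tolerated
```

The choice of radius encodes how much displacement a user can tolerate, which is an operational judgment rather than a purely statistical one.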

Dr. Droegemeier stated that successful storm-scale NWP will depend on the initialization of models with observations of comparable resolution, including three-dimensional wind fields derived from single-Doppler radar data; hydrometeor species retrieved from radar measurements; and moisture fields retrieved from high-density Global Positioning System (GPS), radar, and satellite data. Moreover, an ensemble of model results will be needed to quantify forecast uncertainty given that the state of the atmosphere on the storm scale is highly variable in time and space and is not well sampled. Finally, in contrast to present-day operational models, it is likely that future models will not be operated centrally on fixed schedules or in fixed configurations but rather will be physically distributed, controlled locally, and configured to respond rapidly to the weather itself and to decision-driven inputs from users.

FIGURE 2–3. The top panel shows an hourly sequence of reflectivity images from the Fort Worth, Texas WSR-88D (NEXRAD) radar in association with a series of tornadic storms that moved through the Fort Worth metro area on 29 March 2000. Contour shading indicates precipitation intensity, with higher rates indicated by darker colors. Shown in the middle three panels is the equivalent radar reflectivity from a 3 kilometer-grid forecast using the University of Oklahoma Advanced Regional Prediction System (Xue et al., 2003), initialized at 2300 UTC with Fort Worth NEXRAD radar and other data. The degree of agreement between observations and forecast, even out to 4 hours, is remarkably good. The lower three panels show the same forecast, though without radar data in the model initial conditions. Although sufficient information exists to capture some structure associated with storms to the south of Fort Worth, the tornadic storms to the north are completely absent, thus highlighting the value of radar data in storm-scale NWP.

Given that the research needed to meet the above challenges is broad and significant, Dr. Droegemeier proposed that the most important concerns might be additional developments in three- and four-dimensional data assimilation, including the especially promising ensemble Kalman filter. Indeed, the creation of suitable initial conditions for forecast models is the key element to successful storm-scale NWP. New observing systems, such as the planned phased array radar, along with current GPS water vapor sensing technologies, show great promise in this regard. Especially important is remote sensing of the lowest 3 km of the atmosphere, which has received relatively little attention and where many key processes governing convective initiation occur.
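For readers unfamiliar with ensemble-based assimilation, the sketch below shows the core idea for a single directly observed scalar, such as a boundary layer moisture value. It uses a deterministic square-root form of the update, chosen here so the result is reproducible; operational ensemble Kalman filters are multivariate and considerably more involved. The function name and all numbers are hypothetical.

```python
import math
from statistics import mean, variance


def ensemble_update(members, observation, obs_error_variance):
    """Update a scalar ensemble with one direct observation.

    The Kalman gain is computed from the ensemble's own sample variance; the
    ensemble mean moves toward the observation, and the spread shrinks so that
    the analysis variance equals (1 - gain) * prior variance.
    Requires at least two members and a positive obs_error_variance.
    """
    prior_mean = mean(members)
    prior_var = variance(members)  # sample variance of the prior ensemble
    gain = prior_var / (prior_var + obs_error_variance)
    analysis_mean = prior_mean + gain * (observation - prior_mean)
    scale = math.sqrt(1.0 - gain)  # shrink factor for deviations from the mean
    return [analysis_mean + scale * (m - prior_mean) for m in members]


# Hypothetical mixing-ratio ensemble (g/kg) and one observation of 15 g/kg
# whose error variance equals the prior ensemble variance (gain = 0.5).
prior = [9.0, 11.0, 13.0]
analysis = ensemble_update(prior, observation=15.0, obs_error_variance=4.0)
print([round(a, 3) for a in analysis])
```

Because the gain depends on the ratio of ensemble spread to observation error, the accurate estimation of both error statistics matters as much as the observations themselves.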

Also important are improvements to forecast models, especially their representation of surface and subsurface features and processes, and the coupling of the ground surface to the atmosphere. Cloud physics modeling schemes today are quite sophisticated, but little routinely available observational information exists with which they can be initialized. Dual-polarization Doppler radar holds promise in this area. Dr. Droegemeier proposed that the impact on forecast quality of various types of data, and a determination of the optimal mix of observations, should be assessed, and techniques should be developed for storm-scale ensemble forecasting. Further, accurate estimation of observational and model error statistics, both of which are extremely important for modern data assimilation techniques, is essential, and statistical techniques for forecast verification at the storm scale should be developed since conventional methods are not applicable. Indeed, Dr. Droegemeier indicated that these latter techniques should go beyond traditional skill measures and include elements of value and risk.

It is perhaps appropriate to end by asking whether, after all this work, reliable storm-scale NWP is even theoretically possible. In contrast to the large-scale atmosphere, where pioneering work by Lorenz (1969) continues to define the theoretical limits of predictability, no similar body of work has been undertaken at the storm scale. Interestingly, the practical demonstration of global numerical prediction preceded Lorenz’s theory by roughly a decade, and the same appears to be happening at the storm scale. It is relatively clear, however, that Lorenz’s analysis does not appear to be valid for the storm scale, principally because it is not suitable for highly intermittent flows and because its formulation is inconsistent with the limited-area domains being applied to the small-scale atmosphere.

Alexander MacDonald, of the National Oceanic and Atmospheric Administration’s Forecast Systems Laboratory, concluded the session with a call for sustaining and improving the nation’s meteorological observation capabilities. He indicated that, if the observational network is maintained and improved, significant improvements in convective forecasts are possible (though not to the levels requested by the FAA) in the next 2 to 5 years. Current understanding of model physics is sufficient to provide increasingly accurate convective forecasts if observations of high spatial and temporal resolution are available to be blended with models. In particular, better observations of moisture could allow for significant improvements in model representations of convection. At a minimum, models need to run every hour to provide more accurate information of value to aviation users.

In designing an observational network, Dr. MacDonald stressed the importance of measuring moisture, winds, and temperature from complementary platforms. He noted that a solid plan is in place for satellite observing systems but that plans for other observing platforms could be strengthened in order to provide sufficient complementary data. In particular, wind profilers provide critical information about the vertical structure of winds through deep layers of the atmosphere and of temperature through a few kilometers. Instrumented aircraft provide additional information about the vertical distribution of meteorological variables; however, this dataset is sparse at night and over places infrequently visited by aircraft. Lastly, GPS instruments can be used to determine integrated moisture content between a ground station and satellite. A network of closely positioned GPS receivers could be used to provide vertical information about moisture content in the atmosphere. Determining the optimum mix of different observations for improving convective forecasts is a matter of current research in the area of data assimilation.

Key Points Identified by Presenters on Needed Forecast Capabilities

PRESENTATION OF FORECASTS

Presentation of the forecast for decision support is a critical component for improving the usefulness of the operational convective forecast. During the workshop, John McCarthy of the Naval Research Laboratory discussed techniques for presenting forecasts and potential strategies for the best use of convective forecasts in support of air traffic management. There is a significant disconnect between the language of convective nowcast and forecast capabilities provided by meteorologists and that of the nonmeteorological operational forecasting community. One promising approach for addressing this disconnect is a product development team, similar to those used by the FAA Aviation Weather Research Program, which ensures that scientists, those who develop new technologies, and users of forecast products use the same language to meet operational needs. Such a mechanism should have feedback loops as an integral part of the concept.

Dr. McCarthy identified the need for a four-dimensional weather hazard or, conversely, a weather-free zone concept, to be established to ensure that flight paths are free of danger. Hazard definition is a complex function of weather, aircraft type, and pilot capabilities. To make this concept more realistic, other complexities such as space and time dynamics of weather, traffic flow rates, controller sector acceptance rates, and airport arrival and departure acceptance rates will need to be addressed.

One key forecast product is a tactical system (0- to 2-hour range) that provides some feedback to larger strategic (2- to 6-hour range) decision support tools that are both automatic and human generated. This is an inversion of requirements of the Systems Command Center to make them more consistent with scientific reality. It is likely that these products will combine both deterministic and probabilistic elements of prediction. To this end, much greater inquiry into the human-machine interface is needed to improve interpretation and general overall usefulness of weather products for air traffic management.

Dr. McCarthy also identified some potential problems with promising significant improvements to the 0- to 6-hour forecast in too short a period (3 to 5 years) based on the following points:

-

Auto-Nowcaster has taken nearly 20 years to develop and is still in the research and development stage.

-

Mesoscale convective systems have been under careful study for at least as long, and forecasting them is still problematic.

-

Gains in climatological forecasts of convection are promising but still fully in the research mode; mesoscale and cloud models that utilize data assimilation have made remarkable progress but need much work to be operational in the FAA sense.

-

Validation of products is a difficult matter, even though much progress has been made.

-

Assessing the true impact of weather on the aviation system is a difficult task, and progress has been made only quite recently.

James Evans of the Massachusetts Institute of Technology Lincoln Laboratory elaborated further on the role of forecast presentation and decision support techniques. One key element he emphasized was the importance of having convective forecasts presented both graphically for use by human decision makers and in a numerical form suitable for use by air traffic management decision support tools.

In addition, because highly accurate deterministic forecasts may be difficult to provide operationally a large fraction of the time (e.g., over 50 percent) during the high-delay months of June, July, and August, probabilistic forecasts of convective activity for strategic planning will continue to be a critical support tool for the operational and user communities.

During his presentation, Dr. Evans identified potential techniques for mitigating the impact of convective weather in the near term based on a two-pronged strategy that provides tactical and strategic planning capabilities.

Tactical capabilities should consist of improved convective forecasting in the 0- to 2-hour time frame plus a much better air traffic management system to use these forecasts. Key elements of the air traffic management process are assessing the impact of the forecast weather on air traffic control operations; developing a mitigation plan that considers the traffic flow management and automation implications of possible solutions; expediting the process of choosing between potential mitigation plans including FAA, airline dispatch, and pilot coordination; and making it easier to dynamically reroute planes so as to implement the mitigation plan in real time. The 2- to 6-hour strategic plan should be developed with the tactical capability in mind. Given these elements of air traffic management, Dr. Evans proposed that this would include development of probabilistic forecasts that can meaningfully be used by both humans and automated air traffic management and dispatch algorithms. For example, it would allow the translation of probabilistic forecasts into estimates of airspace and terminal capacity.2 In addition, better strategic mitigation planning capability should be possible by using optimized mitigation plans for cases where convection will only reduce traffic on routes and partially reduce capacities rather than a limited set of predefined options that only consider the very rare case of impenetrable weather.

Probabilistic representations other than the ensemble model sample functions3 that have historically been used for weather forecasts may also be needed to improve decision support. Time and space Markov processes could be attractive both as an input to air traffic management and dispatch decision support tools and as a means of capturing the space and time dependencies of the weather. Explicitly representing the degree of spatial organization for the expected weather, as well as the degree of confidence in the forecasts, could potentially be very important for route and traffic flow decision making.
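As a minimal sketch of the Markov process idea, the following Python fragment models a single route segment as a two-state chain (open or blocked) and propagates the probability of blockage forward in time. The two-state simplification, the 30-minute step, and the transition probabilities are illustrative assumptions, not values presented at the workshop.

```python
import numpy as np

# Hypothetical two-state model for one route segment: 0 = open, 1 = blocked.
# P[i, j] is the assumed probability of moving from state i to state j
# over one 30-minute step; the values are illustrative only.
P = np.array([[0.9, 0.1],
              [0.3, 0.7]])

def state_probabilities(initial, transition, steps):
    """Return the state distribution at step 0 and after each later step."""
    dist = np.asarray(initial, dtype=float)
    history = [dist]
    for _ in range(steps):
        dist = dist @ transition  # one Markov step
        history.append(dist)
    return np.array(history)

# Route currently open; look ahead 12 half-hour steps (6 hours).
probs = state_probabilities([1.0, 0.0], P, steps=12)
for step, (p_open, p_blocked) in enumerate(probs):
    print(f"t+{step * 30:3d} min  P(blocked) = {p_blocked:.3f}")
```

A decision support tool could read such a sequence directly as the likelihood that the segment is unavailable at each lead time. A realistic model would also need spatial dependence between neighboring segments, which this sketch omits.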

Successful 0- to 2-hour tactical forecasts can provide some feedback to 2- to 6-hour strategic planning efforts. Rapid progress is occurring in the development of air traffic management tools that can use the 0- to 2-hour deterministic and probabilistic forecasts to identify opportunities to safely move additional planes. The characteristics of these tools suggest how operational users might utilize highly accurate 2- to 6-hour convective forecasts when they are developed. A departure route availability planning tool (RAPT) that uses the 0- to 60-minute Terminal Convective Weather Forecasts commenced operational evaluation at the New York terminal area and surrounding en-route facilities in August 2002. RAPT examines four-dimensional intersections of planes with forecasted storm locations to determine appropriate departure times from a runway. The RAPT software will utilize the 0- to 2-hour Regional Convective Weather Forecasts at a number of air traffic control facilities in 2003. Direct use of convective forecasts to assist air traffic users in making decisions about traffic routing, such as illustrated by RAPT, has significant implications for the presentation of convective weather forecasts and validation. First, the uncertainty of convective forecasts needs to be expressed in a way that allows tools such as RAPT to provide guidance to operational users as to the likelihood of a route being available for use as a function of time. And second, convective forecast accuracy needs to be verified in the context of operational value to the user, particularly by explicitly addressing the accuracy for route usage decisions.
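The kind of four-dimensional intersection test described for RAPT can be illustrated with a toy example. The sketch below is not the RAPT algorithm; the gridded boolean forecast, the route representation, and the function name `clear_departure_slots` are hypothetical and chosen only to show the idea of checking each candidate departure time against forecast storm locations along the route.

```python
import numpy as np

def clear_departure_slots(storm, route, n_slots):
    """Return departure slots for which no route point meets forecast storm.

    storm: boolean array indexed [time, level, row, col]; True = forecast storm.
    route: list of (steps_after_departure, level, row, col) tuples.
    Route points that fall beyond the forecast horizon are not checked.
    """
    clear = []
    for dep in range(n_slots):
        blocked = False
        for dt, lev, row, col in route:
            t = dep + dt
            if t < storm.shape[0] and storm[t, lev, row, col]:
                blocked = True
                break
        if not blocked:
            clear.append(dep)
    return clear

# Toy forecast: 6 time steps, 2 levels, 4x4 grid.
storm = np.zeros((6, 2, 4, 4), dtype=bool)
storm[1:3, :, 1, 2] = True  # a cell blocks grid point (1, 2) at steps 1 and 2

# Hypothetical departure route: points reached 0, 1, and 2 steps after takeoff.
route = [(0, 0, 0, 0), (1, 1, 1, 2), (2, 1, 2, 3)]

print(clear_departure_slots(storm, route, n_slots=4))  # prints [2, 3]
```

Replacing the boolean forecast with probabilities would let the same loop report the likelihood that a route is available as a function of departure time, which is the form of uncertainty information the text calls for.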

Key Points Identified by Presenters on Ways to Present Forecasts

VERIFICATION SCHEMES

Verifying forecast accuracy is a critical step in providing valuable forecast products for use by the aviation community. Marilyn Wolfson of the Massachusetts Institute of Technology Lincoln Laboratory framed her comments about verification schemes around the question “How will we know when we’re done?” To answer this question, she started by defining the intended use of weather forecasts from an aviation perspective, which is to improve flight planning and thus maintain schedule integrity. Weather forecasts are used to predict the expected capacities in various en-route sectors of airspace as a function of space and time; route availability, including initial routes, alternate routes, miles in trail spacing, blockages, and flow-constrained areas; and terminal impacts, including the availability of alternate airports for landing en-route planes.

Based on these needs of the aviation community, Dr. Wolfson noted that her thoughts on verification are based on postulating the following characteristics for improved 2- to 6-hour forecasts. First, she opined that there is general consensus that probabilistic forecasts are needed and that they should be designed for utility and value to the ultimate user. In addition, the forecasts need a high level of specificity in space and time, particularly in terms of resolving convection. Lastly, forecasts need to be generated automatically, with standardized outputs, low latency, and high reliability. Indeed, providing a continuum of forecasts with different lead times—for example, in granularities of 15 or 30 minutes—would be more valuable to those making flight planning decisions than the currently provided 2-, 4-, and 6-hour products. An automated system may help eliminate bias or equity issues associated with forecasts provided by individual entities. Even with an automated system, forecasters may need to play a role in updating, reviewing, and editing the automated output.

Dr. Wolfson identified several forecast characteristics of particular importance for anticipating how convective weather will impact the national airspace. These characteristics include:

-

Spatial coverage and timing: These can be considered together because forecasts may often trade off accuracy in space and time. Verifications of the temporal and spatial characteristics of convection will likely need to include a probabilistic tolerance for errors that increases as the forecast extends farther into the future.

-

Strength and height of the storm: Many en-route flights avoid storms by flying over them, rather than around them. Accurate vertical information in a forecast will allow for much better planning capabilities to avoid unnecessarily diverting flights around predicted convection.

-

Storm characteristics and orientation: Linearly organized convective storms (or line storms) cause the most problems for flight planning. Knowing that a line of convection exists, its width and length, its vertical extent, and the location of gaps in the line can be of great use. Line storm orientation relative to flight route orientation is also very important. Many routes that parallel a line storm can potentially remain open, whereas most routes perpendicular to a line would be blocked. New growth of convective cells above runways (i.e., “pop-ups”) also causes substantial delays in the summer, making accurate prediction of them desirable (though very difficult).

-

Storm evolution and its impact on terminal and en-route capacity: Accurately representing in forecasts how storms evolve can have a large impact on terminal and en-route capacity. For example, to make a meaningful 4-hour forecast, it is necessary to forecast everything that will happen between now and 4 hours from now, including especially large storm systems whose entire lifetime falls within those 4 hours.
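The first characteristic in the list above, a tolerance for spatial error that grows with lead time, can be sketched as a neighborhood version of POD and FAR. The linear growth rule, the Chebyshev distance, and the function names below are assumptions made for illustration; the workshop did not specify a particular scheme.

```python
import numpy as np

def tolerance_cells(lead_hours, base=1, growth_per_hour=1):
    """Hypothetical rule: allowed displacement (grid cells) grows linearly with lead time."""
    return base + growth_per_hour * int(lead_hours)

def near(points, targets, tol):
    """For each point, True if some target lies within `tol` cells (Chebyshev distance)."""
    if len(targets) == 0:
        return np.zeros(len(points), dtype=bool)
    diff = np.abs(points[:, None, :] - targets[None, :, :]).max(axis=2)
    return (diff <= tol).any(axis=1)

def pod_far(forecast, observed, tol):
    """Neighborhood POD and FAR for two boolean grids of the same shape."""
    f = np.argwhere(forecast)
    o = np.argwhere(observed)
    detected = near(o, f, tol)   # observed cells with forecast storm nearby
    confirmed = near(f, o, tol)  # forecast cells with observed storm nearby
    pod = detected.mean() if len(o) else float("nan")
    far = 1.0 - confirmed.mean() if len(f) else float("nan")
    return pod, far

# Toy case: the forecast storm is displaced three cells from the observed one.
observed = np.zeros((10, 10), dtype=bool)
forecast = np.zeros((10, 10), dtype=bool)
observed[5, 5] = True
forecast[5, 8] = True

for lead in (0, 2):
    pod, far = pod_far(forecast, observed, tolerance_cells(lead))
    print(f"lead {lead} h: POD = {pod:.2f}, FAR = {far:.2f}")
```

With these assumed settings the same three-cell displacement counts as a miss and a false alarm at a 0-hour lead but as a hit at a 2-hour lead, which is the trade-off the tolerance is meant to express.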

How best to visually portray these forecast characteristics and their accuracy for aviation users presents an additional challenge. For example, the presence of spatially intermittent events in an area otherwise clear of severe weather requires special attention. One approach is to map the whole area, recognizing that some conditions have a low probability of convection. A different approach would be to show in some probabilistic sense the forecasted pattern of convection, which will likely be somewhat erroneous. This second approach does evoke a notion of a porous piece of airspace that one could fly through. A third alternative is to score an entire region in a way that characterizes the weather by spatial scale and whether the forecast has captured that spatial scale correctly.

Schemes to verify forecasts must accommodate the meteorological developer’s desire to improve overall forecast quality, the air traffic manager’s interest in having the ability to trade off forecast capabilities, and the user’s need for an easily interpreted measure of forecast quality. Verification schemes also need to allow cross-comparison of different forecasts via a metric that is actually comparable. Given these requirements for a verification scheme, forecast users and evaluators need to work together to determine on what spatial and temporal scales to assess the forecasts and which weather characteristics to consider. These questions can be answered in part by talking to the users and in part by data mining maps of the weather systems, the air traffic systems, and their interaction. Data mining allows the development of quantitative models that relate historical measurements of key air traffic control parameters (such as the capacity of various sectors of airspace as a function of space and time; route availability, including initial routes and alternate routes; miles in trail spacing; and terminal impacts) to storm characteristics. A second challenge is to determine how to assess a probabilistic forecast.

Designing a verification scheme that provides a single score for a forecast is appealing in terms of its simplicity for interpretation by various users. Dr. Wolfson proposed a system in which measured and modeled performance measures, such as storm type, orientation, height, area of coverage, and impact on specific traffic flow, could be combined into a single consolidated score by weighting each individual performance measure proportional to user value. Such a system is not currently available but could be developed through a research effort. Ultimately, the process of developing a single score or set of scores and mapping them in time and space could be part of the automated system providing weather forecast information to the aviation community.
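The arithmetic of such a consolidated score is simple, as the following sketch shows. The measure names, the individual scores, and the user-value weights are invented for illustration; determining real weights is the research effort described above.

```python
def consolidated_score(scores, user_values):
    """Weighted mean of per-measure scores, with weights proportional to user value."""
    total = sum(user_values[name] for name in scores)
    if total <= 0:
        raise ValueError("user values must sum to a positive number")
    return sum(scores[name] * user_values[name] / total for name in scores)

# Hypothetical per-measure scores on a 0-to-1 scale (1 = perfect).
scores = {
    "storm_type": 0.8,
    "orientation": 0.6,
    "height": 0.5,
    "coverage": 0.7,
    "flow_impact": 0.4,
}

# Hypothetical relative value of each measure to a given user.
user_values = {
    "storm_type": 1,
    "orientation": 2,
    "height": 2,
    "coverage": 3,
    "flow_impact": 2,
}

print(f"{consolidated_score(scores, user_values):.2f}")  # prints 0.59
```

Because the weights are normalized, different users could apply their own values to the same set of measured scores and obtain a score on the same scale.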

Michael Prather of the University of California, Irvine, provided additional guidelines for developing verification systems for convective weather forecasts. His first suggestion was to focus on scientifically improving forecast accuracy, rather than concentrating too much on improving the application of the forecast to reducing delays. The suggested emphasis on science derives from the fact that it has a large role to play in developing more accurate forecasts but is only a small component of the collaborative, bureaucratic, and human issues that contribute to air traffic control delays.

In developing a verification scheme, Dr. Prather proposed selecting a range of objective verification scores that reflect a predictable quantity of interest to aviation. Examples of forecast characteristics that could be scored include likelihood and persistence of cells located over terminals, percent coverage and organization of convection in key air traffic control zones, and the presence of convection in space-time averaged windows. As other workshop participants noted, probabilistic forecasts are the key to verification and metrics of success in forecasts because skill measures for a single deterministic forecast are ambiguous. For example, evaluating a single deterministic forecast requires space-time averaging over designated windows to identify a “hit.”
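One way to make these ideas concrete is to define the verified event as "any convection inside a designated space-time window" and to score probabilistic forecasts of that event with the Brier score. The Brier score is a standard measure for probability forecasts but was not named in the presentation; the window layout and the forecast probabilities below are likewise illustrative assumptions.

```python
import numpy as np

def window_event(observed, t0, t1, r0, r1, c0, c1):
    """True if any convection was observed inside the space-time window."""
    return bool(observed[t0:t1, r0:r1, c0:c1].any())

def brier_score(probabilities, outcomes):
    """Mean squared difference between forecast probabilities and 0/1 outcomes."""
    p = np.asarray(probabilities, dtype=float)
    o = np.asarray(outcomes, dtype=float)
    return float(np.mean((p - o) ** 2))

# Toy observations indexed [time, row, col]: one convective cell at time step 2.
observed = np.zeros((4, 6, 6), dtype=bool)
observed[2, 1, 1] = True

# Four hypothetical windows covering the quadrants of the grid over all time steps.
windows = [
    (0, 4, 0, 3, 0, 3),
    (0, 4, 0, 3, 3, 6),
    (0, 4, 3, 6, 0, 3),
    (0, 4, 3, 6, 3, 6),
]
outcomes = [window_event(observed, *w) for w in windows]

# Hypothetical forecast probability of convection in each window.
probabilities = [0.9, 0.2, 0.6, 0.1]

print(outcomes)                                     # [True, False, False, False]
print(round(brier_score(probabilities, outcomes), 3))  # 0.105
```

A lower Brier score is better, with 0 indicating perfect forecasts. A single deterministic forecast is the special case in which every probability is 0 or 1.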

Given the success in combining weather forecasts and air traffic control, Dr. Prather briefly discussed other issues for the airline industry to consider in terms of cost co-benefits. The question of aircraft pollution as it impacts air quality and climate is one topic to consider in this regard. Air traffic is estimated to contribute 2 percent of global carbon dioxide emissions and 3.5 percent of global radiative forcing, which is primarily driven by contrail formation (IPCC, 1999). In global terms the climatic impact of the 10 percent of air traffic leading to contrail occurrence is of the same order of magnitude as the 90 percent of air traffic not leading to contrail occurrence. Contrail formation could be limited using air traffic control and weather knowledge, thereby mitigating the climatic impact of aviation. With the ability to mitigate the climate impacts of aviation may come costs and responsibilities. Indeed, aircraft emissions are already being taxed in the European Union to begin accounting for their environmental cost.

Key Points Identified by Presenters on Verifying Forecast Accuracy