4

Science

Many young children get off to a good start in acquiring knowledge on a variety of scientific topics. U.S. fourth graders score near the top in science—just behind Korea and Japan—among the nations in the Third International Mathematics and Science Study (TIMSS) (National Center for Education Statistics, 1999). However, since little science is taught in the early elementary grades, it is unlikely that these results can be attributed to school science programs. Instead, the high scores probably reflect the many informal science learning opportunities that abound in the United States, including science and technology museums, youth organizations that support science activities, television (e.g., the Discovery Channel; Magic School Bus; 3-2-1 Contact; Bill Nye, the Science Guy), trade books, and children’s science magazines.

When serious science instruction begins, typically in middle school or even later, the advantages of informal learning resources begin to be overtaken by the disadvantages of unfocused curricula and weak teacher knowledge of both science content and pedagogy. At this stage the international comparisons become much less favorable. In fact, the TIMSS results at grade 8 rank the United States 17th out of 26 nations. By grade 12, U.S. scores are lower still, with advanced U.S. students scoring last of the 16 countries compared. Scores on national assessments confirm the bleak TIMSS results. On the 2000 National Assessment of Educational Progress (NAEP), 47 percent of twelfth grade students scored in the lowest category (below basic proficiency), up from 43 percent in 1996 (National Center for Education Statistics, 2003). Clearly, science education is not on a path to improvement.

As in reading and mathematics, there are pockets of research and development in science education that have produced instructional programs with demonstrated student achievement benefits. In physics, for example, a highly productive tradition of research has produced a deep knowledge base with very important implications for educational practice. In contrast, for other areas in science education the research base is not yet developed fully enough to guide or support decisions about instruction. As with reading comprehension, knowledge of the progression of student understanding is relatively sparse and spotty from topic to topic. Moreover, there is very little evidence about how student understanding can develop with instruction over the school years.

The first section of this chapter, as in the chapters on mathematics and reading, addresses an area that has potential for wide impact in the relatively near term: physics. Unlike the other two disciplines, however, this downstream case falls late in the K-12 curriculum. The second section of this chapter addresses science education across the school years, since we are still far upstream in developing a principled organization of science instruction, particularly in the years before high school.

THE TEACHING AND LEARNING OF PHYSICS

The number of students who take courses in physics is relatively small in comparison to the number who take biology or chemistry, as is the number of credentialed high school physics teachers in comparison to other science teachers. Perhaps because the community of educators working on physics is small, it has been possible to pursue a cumulative research agenda on major issues in physics teaching and learning.

STUDENT KNOWLEDGE

The Destination: What Should Students Know and Be Able to Do?

Until relatively recently there was substantial overall agreement regarding what students should know and be able to do in the typical high school or college physics course (the content of the two overlaps substantially). In general, students were expected to understand a range of concepts and laws organized around such domains as force and motion, electricity and magnetism, and waves and optics. Typically, understanding was demonstrated by solving standard types of problems with quantitative methods that required at least algebra. However, over the past several decades, research on student understanding has called into question whether these instructional goals were being achieved.

The Route: Progression of Understanding

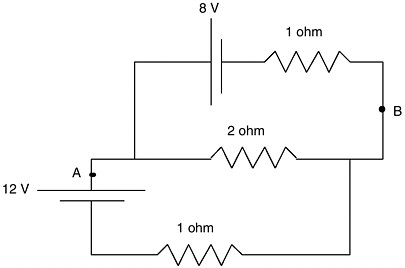

Historically, the implicit assumption in physics instruction has been that novice students could come to understand physics by receiving classroom presentations of what physics experts know. Research, however, has uncovered problems with that assumption. Students’ naïve ideas and conceptions about the physical world are not easily changed and in fact often remain substantially unaffected by typical classroom instruction. In the 1970s and 1980s, research conducted by John Clement (1982), Andrea diSessa (1982), Lillian McDermott (1984), and others revealed that even those students who can recall physics laws and use them to solve textbook problems may not understand much about the implications of these ideas in the world around them. For example, in diSessa’s research, college physics students performed no better than elementary students when asked to strike a moving object so that it will hit a target with minimum force at impact. Students relied on their untrained ideas in this task, ignoring the role of momentum, even when they could precisely reproduce the relevant laws of momentum on a test. Similarly, a study of student solutions to a problem with simple electrical circuits confirmed that students can reproduce scientific knowledge for a test, but revert to everyday ways of thinking when that knowledge is tapped outside the classroom (see Box 4.1).
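The role of momentum that students ignored in diSessa's task can be made concrete with a small calculation. The sketch below is illustrative only: it simplifies the task to aiming a kick so that an already moving object ends up heading toward a target, treating "toward the target" as a direction only. By the impulse form of Newton's second law, the kick must supply the change in momentum, J = m(v_final - v_initial). The mass, velocities, and function names are hypothetical.

```python
import math

def required_impulse(mass, v_initial, v_final):
    """Impulse needed to change velocity: J = m * (v_final - v_initial).

    Vectors are 2-D (x, y) tuples; units are kg, m/s, and N*s.
    """
    return (mass * (v_final[0] - v_initial[0]),
            mass * (v_final[1] - v_initial[1]))

def velocity_after_kick(mass, v_initial, impulse):
    """Velocity after an instantaneous kick: v = v_initial + J / m."""
    return (v_initial[0] + impulse[0] / mass,
            v_initial[1] + impulse[1] / mass)

def direction_deg(vec):
    """Direction of a 2-D vector in degrees, counterclockwise from +x."""
    return math.degrees(math.atan2(vec[1], vec[0]))

# Hypothetical setup: a 1 kg object slides along +x at 3 m/s, and the
# target lies along the +y axis (a direction of 90 degrees).
m, v0 = 1.0, (3.0, 0.0)

# Intuitive strategy: kick straight toward the target.
naive_v = velocity_after_kick(m, v0, (0.0, 4.0))
print(round(direction_deg(naive_v), 1))   # 53.1, so the object misses

# Momentum-based strategy: the kick must also cancel the existing motion.
J = required_impulse(m, v0, (0.0, 4.0))
print(J, round(direction_deg(J), 1))      # (-3.0, 4.0) 126.9
print(round(direction_deg(velocity_after_kick(m, v0, J)), 1))  # 90.0
```

The point of the example is that the correct kick does not point at the target at all; it points partly backward, which is exactly what an untrained "push it where you want it to go" intuition misses.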

Additional work over many years has led to the conclusion that students bring to physics a substantial set of persistent conceptions that are significantly different from those needed to understand aspects of the physics curriculum. By far, the largest amount of work on student conceptions has been in the area of Newtonian mechanics (McDermott and Redish, 1999). Both before and after passing high school and even college physics courses, students often behave as if their conceptual understandings of force and motion are more in line with pre-Newtonian, even Aristotelian conceptions. Although these ideas are misconceptions from a scientific perspective, they are sensible interpretations people construct from their everyday experience with objects and events in the world. Because these intuitions serve quite well to explain and predict many features of everyday phenomena, they are often unexamined and may be difficult to change. Yet instruction often proceeds as if students have no ideas at all, as if the job of teaching is simply to provide the ideas that are scientifically correct. Students’ well-learned and practically useful mental representations for making sense of the world around them need to be actively engaged and built on if instruction is to be successful.

Thinking like a scientist is partly a matter of understanding how scientific principles are embodied in familiar events around us. This is a challenge for students. Like novices in any area, they are often too reliant on surface features when they attempt to interpret and solve physics problems. Students tend to look for clues in the objects featured in the problem. For example, Larkin (1983) found that novices often rely on the objects mentioned in the problem statement, like blocks, inclined planes, and pulleys, to try to construct a basic representation that consists of relations among these explicit objects. From this basic representation, they then try to identify directly a set of equations into which they can plug the values mentioned in the problem.

In contrast, experts first construct an intermediate interpretation that represents the elements of the problem in constructs of the discipline, such as forces, acceleration, mass, momenta, etc. Chi and Bassok (1989) refer to this level of interpretation as a physics representation. They point out that the entities in a physics representation are not directly described but must be inferred. Perhaps because students tend not to identify problems as being members of categories defined by common scientific principles, they often fail to transfer what they “know” in the context of one problem to novel or even analogous problems.

Researchers have focused considerable effort on mapping out what students do understand about a variety of physical phenomena and how that understanding progresses as a consequence of instruction. This work has probed the conceptual understandings of learners from preschool onward. Minstrell’s work with high school students (e.g., Minstrell, 1989; Minstrell and Simpson, 1996), studies pursued by the Physics Education Group at the University of Washington with college students, and the research of many other investigators provide a wealth of information about how students typically think about a range of physical situations and concepts.

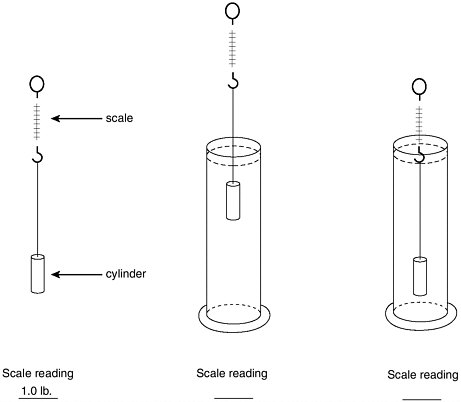

The question in Box 4.2 regarding the relative weight of an object when it is surrounded by air and submerged at two different depths of water produces a predictable range of responses from students when asked before, during, and even after instruction. Those responses can be evaluated for consistency with the various forms of student understanding shown in the box. The different answers, reflections of what Minstrell (1992) refers to as “facets” of thought, can be sequenced with respect to scientific sophistication. They range from acceptable understandings in introductory physics (310), through those representing partial understanding (e.g., 315), to those representing more serious misunderstandings (e.g., 319).

Minstrell (1992) has argued that partially correct understandings frequently arise from formal instruction and may represent over- or undergeneralizations or misapplications of a student’s knowledge. These can result if the set of examples presented to students is too limited or if an appropriate set of contrasting cases is not included to help clarify the conditions under which a concept applies. The task for a student is to come to recognize similar situations and problems as members of a category. Part of the challenge, then, is to understand the range of conditions under which concepts apply. It is important for both the instructor and the student to become aware of the form of the student’s conceptual understanding when instruction begins and to monitor changes in the student’s knowledge as instruction progresses. The goal is to build on what students do know and to help them understand the conditions under which it applies, rather than ignoring students’ current concepts and trying to replace them immediately with scientific reasoning.

Forms of Student Understanding

- 310: pushes from above and below by a surrounding fluid medium lend a slight support (pretest: 3 percent).
- 311: a mathematical formulaic approach (e.g., $\rho g h_1 - \rho g h_2$ = net buoyant pressure).
- 314: surrounding fluids don’t exert any forces or pushes on objects.
- 315: surrounding fluids exert equal pushes all around an object (pretest: 35 percent).
- 316: whichever surface has greater amount of fluid above or below the object has the greater push by the fluid on the surface.
- 317: fluid mediums exert an upward push only (pretest: 13 percent).
- 318: surrounding fluid mediums exert a net downward push (pretest: 29 percent).
- 319: weight of an object is directly proportional to medium pressure on it (pretest: 20 percent).
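Facet 311’s pressure-difference expression can be turned into a short calculation that shows why the target facet (310) is correct. The sketch assumes an idealized setting that the box does not specify: a box-shaped object fully submerged in fresh water (density 1000 kg/m^3), g = 9.8 m/s^2, and gauge pressure only (atmospheric pressure acts on every face and cancels). Multiplying each face’s pressure by its area gives the downward push on the top and the upward push on the bottom; their difference, rho * g * (h_bottom - h_top) * A = rho * g * V, does not depend on how deep the object sits. All names and numbers are hypothetical.

```python
RHO_WATER = 1000.0  # kg/m^3, fresh water (assumed)
G = 9.8             # m/s^2 (assumed)

def fluid_pressure(depth, rho=RHO_WATER, g=G):
    """Gauge pressure at a depth below the surface: rho * g * h."""
    return rho * g * depth

def net_fluid_push(top_depth, height, area, rho=RHO_WATER, g=G):
    """Upward push on the bottom face minus downward push on the top face."""
    bottom_depth = top_depth + height
    push_up = fluid_pressure(bottom_depth, rho, g) * area
    push_down = fluid_pressure(top_depth, rho, g) * area
    return push_up - push_down

def apparent_weight(mass, top_depth, height, area, g=G):
    """Weight minus the fluid's net upward push (the buoyant force)."""
    return mass * g - net_fluid_push(top_depth, height, area, g=g)

# Hypothetical 2 kg cube, 0.1 m on a side (face area 0.01 m^2).
print(round(2.0 * G, 3))  # 19.6 N in air (air's own tiny push ignored)
for depth in (0.5, 2.0):
    print(depth, round(apparent_weight(2.0, depth, 0.1, 0.01), 3))
# 0.5 9.8
# 2.0 9.8  -> same apparent weight at both depths
```

Under these assumptions the object weighs less in water than in air, but the same at both depths, which is the combination of ideas that facets 315 through 319 each miss in a different way.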

The Vehicle: Pedagogy and Curriculum

Along with the research on student understanding have come new approaches to teaching physics that clearly demonstrate the accessibility of the subject for all students, if it is taught in ways that acknowledge what is known about student understanding.

First, instruction needs to be based on the acknowledgment that students are being asked to reformulate category systems that have served them quite well in the past. This entails coming to recognize apparently familiar objects and events as members of novel classes, an accomplishment that develops slowly and only if students receive multiple well-chosen opportunities to experience the relevant range of situations in which a concept applies or does not apply. Second, teachers need to be aware of the range of ways in which students interpret situations and problems and to develop a repertoire of proven strategies for helping them question their assumptions, tune their partly correct conceptions, and understand the boundary conditions for important principles. The goal is to help students understand, which requires knowing how to capitalize on the forms of sense-making that they have available to work with. Third, effective physics instruction is designed to make more transparent what the practice of physics is all about. As Hestenes (1987) has cogently argued, physics is a “modeling game,” but this is far from apparent to students. The emphasis in much physics instruction is on using the products of physics—laws and principles—with little attention to why or how physicists generate and work with these concepts. Students rarely are taught physics as the enterprise of constructing, testing, and revising models of the world, and therefore its primary goals and epistemology are typically invisible. As we explain below, however, new approaches to instruction are emphasizing this aspect, inviting students into the modeling game and making evident the goal of what otherwise may seem a rather mysterious enterprise.

To summarize, we emphasize three characteristics of new approaches to physics instruction that follow from contemporary learning research:

- helping students develop new schemas for recognizing objects and events in the world as members of a category system more like the one used by scientists;
- continually monitoring changes in students’ evolving knowledge to inform choices of just the right experiences, countercases, and challenges to support their next step in knowledge development; and
- introducing students to physics as a modeling game, so that they grasp its epistemology and central goals.

Most of the more fully developed curricula inspired by this research have been targeted at the college level. McDermott and Redish (1999) have identified nine such curricula and more are under development, including a research-based version of the widely used college text by Halliday and Resnick (Cummings et al., 2001). However, the substantial overlap of introductory college and high school physics courses suggests that much in these curricula may also be appropriate for high school use.

Two programs designed specifically for students in middle school and high school have demonstrated improvements in student achievement, particularly with respect to conceptual understanding. In the first of these, the “modeling method” of instruction, students work to develop, evaluate, and apply their own models of the physical behavior of objects (see Box 4.3). The key to this instructional intervention is a series of professional development workshops with teachers, who are supported in effecting a radical shift of their pedagogy. Teachers are encouraged to become modeling coaches, helping students to observe, model, and explain interesting and puzzling phenomena. A 6-year project that provided extensive training and support for 200 teachers in this instructional approach resulted in nearly all of them demonstrating significant improvements in their own understanding, in their teaching, and in their students’ achievement on a highly regarded measure of physics conceptual understanding, the Force Concept Inventory, described below (Hestenes, 2000; for more information, see http://modeling.la.asu.edu/modeling.html). These studies, conducted with large numbers of students in matched comparison groups, were carried out in multiple sites across several years. The research included students in regular and introductory physics classes, honors-level physics, and advanced placement physics. Results repeatedly showed greater pretest-to-posttest gains in physics content knowledge when students taught by the modeling method were compared with (a) physics students of the same teachers in the year before the teachers implemented the program and (b) students in traditional physics classes and alternative reform programs. Students taught with the modeling method exceeded the performance of comparison groups by margins that in some cases were larger than two standard deviations.

BOX 4.3 The modeling method has been developed to address problems with the fragmentation of knowledge in traditional physics instruction and the persistence of naive beliefs about the physical world. It is an approach to high school physics instruction that organizes course content around a small number of basic scientific models as units of coherently structured knowledge. David Hestenes and colleagues at Arizona State University have developed the approach to both instruction and teacher preparation over the past two decades. The program is grounded in the thesis that scientific activity is centered on modeling: the construction, validation, and application of conceptual models to understand and organize the physical world. Instructional activities give students experience in constructing and using models to make sense of a variety of physical problems. A critical feature of the program is the role played by the teacher: “The teacher cultivates student understanding of models and modeling in science by engaging students continually in ‘model-centered discourse’ and presentations.” The program developers argue that “the most important factor in student learning by the modeling method (partly measured by Force Concept Inventory scores) is the teacher’s skill in managing classroom discourse” (Hestenes, 2000, p. 2). The teacher is prepared with a definite agenda for student progress and guides student inquiry and discussion in that direction with Socratic questioning and remarks. The program uses computer models and modeling to develop the content and pedagogical knowledge of high school physics teachers, and it relies heavily on professional development workshops to equip teachers with a teaching methodology. Teachers are trained to develop student abilities to make sense of physical experience, understand scientific claims, articulate coherent opinions of their own, and evaluate evidence in support of justified belief.

Teachers are also equipped with a taxonomy of typical student misconceptions so that they can identify and work with them as they surface. In a sample of 20,000 high school students, gains on the Force Concept Inventory under modeling instruction are reported to be on average double those under traditional instruction, with teachers who implement the program more fully showing higher gains in their students’ scores. All students gained significantly from modeling instruction, but students with the lowest scores before instruction gained most. More information on the modeling method is available on the project’s web site: http://modeling.la.asu.edu/modeling.html.
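A margin “larger than two standard deviations” is a standardized difference: the gap between group means expressed in units of score spread. This chapter does not say which convention the modeling-method studies used, so the sketch below assumes one common choice, dividing the difference in mean gains by a pooled standard deviation (often called Cohen’s d). The gain scores are invented solely to show the arithmetic; they are not data from those studies.

```python
import statistics

def pooled_sd(a, b):
    """Pooled sample standard deviation of two groups."""
    na, nb = len(a), len(b)
    va, vb = statistics.variance(a), statistics.variance(b)
    return (((na - 1) * va + (nb - 1) * vb) / (na + nb - 2)) ** 0.5

def standardized_margin(treatment_gains, comparison_gains):
    """(mean treatment gain - mean comparison gain) / pooled SD."""
    diff = statistics.mean(treatment_gains) - statistics.mean(comparison_gains)
    return diff / pooled_sd(treatment_gains, comparison_gains)

# Invented pretest-to-posttest gains (points), for illustration only.
modeling_gains = [14, 16, 18]
comparison_gains = [10, 12, 14]
print(standardized_margin(modeling_gains, comparison_gains))  # 2.0
```

Here the groups differ by 4 points and the pooled spread is 2 points, so the margin is two standard deviations; real studies would use far larger samples than three students per group.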

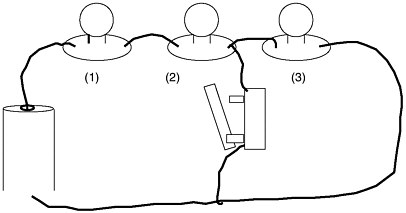

The second program, ThinkerTools, also emphasizes physics as modeling and features both computer simulations and inquiry with physical materials. The curriculum (White, 1993; White and Frederiksen, 1998) teaches the physics of force and motion and is designed to be successful with students in middle school and in urban environments as well as in high school and in suburban environments.

The inquiry-based curriculum engages students’ preconceptions by asking them what they think will happen when certain forces are applied to objects. Students test their ideas in a computer-simulated world and learn when their ideas hold true and when they do not. The observations allow students to directly challenge their ideas and to engage in a search for a theory that can adequately explain what they observe. The class functions as a research community, and students propose competing theories. They test their theories by working in groups to design and carry out experiments using both computer models and real-world materials. Finally, students come together to compare their findings and to try to reach consensus about the laws and causal models that best account for their observations.

Students systematically build conceptual understanding by encountering problems that increase in complexity and difficulty. The problems are based on knowledge about typical forms of student thinking and its progression. Experiences that students encounter support the “conditionalized” kind of knowledge that experts hold, allowing students to detect patterns that novices do not see. ThinkerTools provides multiple experiences with problem solving, but the carefully controlled difficulty of problems is designed to build pattern recognition efficiently.

As in the modeling method, the emphasis is on constructing and revising models and explanations, and modeling ability is acquired in the service of building a conceptual understanding of motion, gravity, friction, and the like. What is distinctive in the ThinkerTools curriculum is the addition of a “reflective assessment” component. In addition to engaging in inquiry learning, students learn to evaluate the quality of their own and others’ inquiry investigations using standards that reflect the culture and the goals of the scientific community.

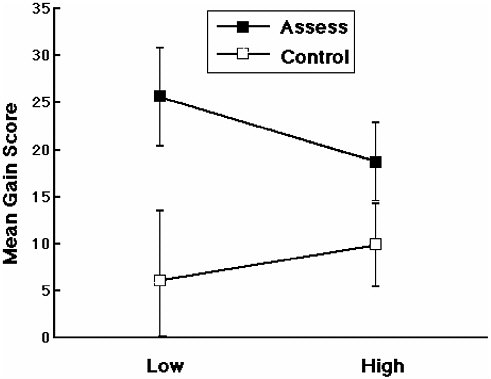

As with the modeling method, student achievement gains with the ThinkerTools curriculum are impressive. Students construct a deeper understanding and are better able to transfer their knowledge to novel problems than students taught with a traditional curriculum. These advantages held even when urban ThinkerTools students were compared with suburban counterparts, and when middle school ThinkerTools students were compared with high school students. Of particular importance is the finding that teaching students to engage in reflective assessment (judging how well they and their colleagues carried out an inquiry investigation) substantially improved learning gains. Not only did students come away with a deeper understanding of the inquiry process, but they also improved their content knowledge of physics. The gains were particularly striking for students who began instruction as low achievers (see Box 4.4).

Checkpoints: Assessment

New forms of instruction pose two fundamental challenges for assessment. First, since the goal of instruction emphasizes conceptual understanding and the ability to transfer knowledge to new situations, assessments are needed that capture these competences. Second, since the instructional goal is to support conceptual change, instructors need assessment tools to continually diagnose student thinking so that instruction can address students’ evolving conceptions.

With respect to the first challenge, physics teachers and researchers alike are increasingly adopting the Force Concept Inventory (see Box 4.5), developed initially by Halloun and Hestenes (1985) and later modified and published with comparison data (Hestenes et al., 1992). This instrument probes beyond the usual focus on students’ capability to solve traditional physics problems, emphasizing instead their conceptual analysis of physical situations. Rather than simply sorting answers into right and wrong, the inventory diagnoses student conceptions: the items and choices in the instrument are based on research about the range of thinking that students typically bring to situations featured in the test. Because it provides feedback about students’ conceptual development, it has persuaded some instructors of the need to make significant changes in their teaching (see, e.g., Mazur, 1997). Several other tests modeled after the Force Concept Inventory are currently available or under development in areas of physics beyond force and motion. One example is the Conceptual Survey of Electricity and Magnetism described by Maloney et al. (2001).

Although it is certainly useful to know how students think about physical situations and concepts at the start and end of instruction, it is also important to monitor changes in these ideas during the course of instruction to address specific student needs and modify instruction accordingly. Incorporating formative assessment practices into ongoing instruction requires quality assessment materials that are closely connected to conceptual models of student understanding, together with effective ways of presenting, scoring, and interpreting the assessment results. Time and efficiency are obviously of central importance: if the feedback is not available when the next instructional decisions need to be made, then important opportunities will be lost. An excellent example of an effort to integrate assessment and instruction is the facets-based instruction and assessment work performed by Minstrell and his colleagues described above (Minstrell, 1992; Hunt and Minstrell, 1994; Levidow et al., 1991). The focus of the research effort has been on identifying facets (mental representations for interpreting physical situations) of student knowledge and understanding for various topics in physics. These facets are incorporated into Diagnoser, a relatively simple-to-use computer program designed to help teachers evaluate the quality and consistency of student reasoning in physical situations.

The Diagnoser program presents sets of carefully designed problems and records student responses and justifications as a means of identifying their understanding. When needed, the program provides instructional prescriptions that are designed to challenge the student’s thinking and address a possible conceptual misunderstanding. The course instructor is provided with information about the range of student understanding in the class and can then adjust lessons accordingly. Minstrell and Hunt (1990) have demonstrated that the facets approach can be successfully adopted by teachers and that it produces better outcomes than instruction that lacks integrated diagnostic assessment.
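The diagnose-then-adjust loop described above can be sketched in a few lines. This is not Diagnoser’s code, whose internals are not described here; it is a hypothetical illustration. It assumes each answer choice on a question has been mapped in advance to one facet (the codes are borrowed from the Box 4.2 list, but the letter-to-facet assignments, the follow-up prompts, and all names are invented), and it treats only facet 310 as the target understanding.

```python
from collections import Counter

# Hypothetical mapping of one question's answer choices to facet codes.
CHOICE_TO_FACET = {"A": 310, "B": 315, "C": 317, "D": 318, "E": 319}
GOAL_FACETS = {310}  # assumption: only 310 counts as the target understanding

# Hypothetical follow-up prompts a teacher might attach to each facet.
PRESCRIPTIONS = {
    315: "Compare the fluid's push on the top and bottom faces.",
    317: "Ask whether the fluid also pushes down on the top face.",
    318: "Weigh an object in air and then under water.",
    319: "Weigh the same object at two different depths.",
}

def diagnose(responses):
    """Map each student's choice to a facet and summarize the class.

    Returns (facet counts for the whole class,
             {student: prompt} for students not yet at a goal facet).
    """
    facets = {name: CHOICE_TO_FACET[choice]
              for name, choice in responses.items()}
    class_profile = Counter(facets.values())
    follow_up = {name: PRESCRIPTIONS[f] for name, f in facets.items()
                 if f not in GOAL_FACETS}
    return class_profile, follow_up

profile, follow_up = diagnose({"Ana": "A", "Ben": "B", "Cy": "E", "Dee": "B"})
print(profile.most_common(1))   # [(315, 2)]
print(sorted(follow_up))        # ['Ben', 'Cy', 'Dee']
```

The class profile is what a teacher would scan to decide the next lesson, while the per-student prompts play the role of the individual prescriptions; a real system would also weigh students’ written justifications, which this sketch ignores.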

TEACHER KNOWLEDGE

Teacher knowledge of physics is typically not a serious concern, since most physics teachers have undergraduate or ad-

|

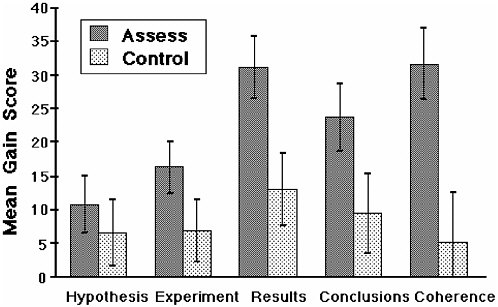

BOX 4.4 ThinkerTools is an inquiry-based curriculum that allows students to explore the physics of motion. The curriculum is designed to engage students’ conceptions, to provide a carefully structured and highly supported computer environment for testing those conceptions, and to steep students in the processes of scientific inquiry. The curriculum has demonstrated impressive gains in students’ conceptual understanding and ability to transfer knowledge to novel problems. White and Frederiksen (1998) designed and tested a reflective assessment component that provides students with a framework for evaluating the quality of an inquiry investigation—their own and others. The assessment categories included understanding the main ideas, understanding the inquiry process, being inventive, being systematic, reasoning carefully, using the tools of research, teamwork, and communicating well. The performance of students who were engaged in reflective assessment was compared with that of matched control students who were taught with ThinkerTools but were asked to comment on what they did and did not like about the curriculum without a guiding framework. Each teacher’s classes were evenly divided between the two treatments. There were no significant differences in students’ initial average standardized test scores (the Comprehensive Test of Basic Skills was used as a measure of prior achievement) between the classes assigned (randomly) to the different treatments. Students in the reflective assessment classes showed higher gains both in understanding the process of scientific inquiry and in understanding the physics content. For example, one of the outcome measures was a written inquiry assessment that was given both before and after the ThinkerTools inquiry curriculum was administered. 
It was a written test in which students were asked to explain how they would investigate a specific research question: “What is the relationship between the weight of an object and the effect that sliding friction has on its motion?” (White and Frederiksen, 2000:22). Students were instructed to propose competing hypotheses, design an experiment (on paper) to test the hypotheses, and pretend to carry out the experiment, making up data. They were then asked to use the data they generated to reason and draw conclusions about their initial hypotheses. Presented below are the gain scores on this challenging assessment for both low- and high-achieving students and for students in the reflective assessment and control classes. Note first that students in the reflective assessment classes gained more on this inquiry assessment. That this was particularly true for the low-achieving students. This is evidence that the metacognitive reflective assessment process is beneficial, particularly for academically disadvantaged students. This finding was further explored by examining the gain scores for each component of the inquiry test. As shown in the figure below, one can see that the effect of reflective assessment is greatest for the more difficult aspects of the test: making up results, analyzing those results, and relating them back to the original hypotheses. In fact, the largest difference in the gain scores is that for a measure termed “coherence,” which reflects the extent to which the experiments the students designed addressed their hypotheses, their made-up results related to their experiments, their conclusions followed from their results, and their conclusions were related back to their original hypotheses. The researchers note that this kind of overall coherence is a particularly important indication of sophistication in inquiry. |

|

BOX 4.5 The Force Concept Inventory (Hestenes et al., 1992) has been widely used to compare student mastery of basic concepts of Newtonian mechanics. The test examines core conceptual understanding of Newtonian mechanics. Sample Question Imagine a head-on collision between a large truck and a small compact car. During the collision:

Correct answer is (E). This question assesses student understanding of Newton’s third law. The distractors (incorrect answers) are adapted from student responses in interviews and open-form questions, revealing various naive conceptions of force as associated with size or effect. Newtonian principles demonstrate that force is an interaction, so the forces are the same, but the effects of the forces (acceleration, damage) differ according to the mass and structure of the object. The inventory is commonly given in a pretest-posttest mode. It is inconceivable to most teachers that a student well trained in mechanics could do poorly on these core concepts on the posttest. Most physics teachers agree that a student with a reasonable understanding of Newtonian mechanics should be able to correctly answer the 30 simple questions on the test, such as the one illustrated above. Indeed, the test seemed so simple that many instructors initially did not think it was worth administering as either a pretest or a posttest. Yet students do poorly on the inventory as a pretest, and a full semester of careful traditional instruction produces little change in student performance: this result has been a major wakeup call to many physics teachers. Such results, which teachers can often replicate with their own classes, have significantly increased the audience for the results of physics education research. |

vanced physics training. Of far greater concern is teachers’ pedagogical content knowledge. As the above discussion suggests, the knowledge and tools are now available to support the latter, including the research base concerning students’ conceptual understanding, assessment tools such as the Force Concept Inventory, and alternative forms of instruction, such as the emphasis on modeling described earlier. It is uncertain what proportion of the 19,000 physics teachers in the United States engage in instructional practices that are aligned with what is known about learning and instruction in physics, or how many make use of research-based curricular materials, assessments, and approaches. Equally uncertain is the source of their knowledge, that is, whether generalized from their own experiences as physics students, acquired during pre-service teacher education, or developed as a result of professional development programs.
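The Newtonian reasoning behind the truck-and-car item in Box 4.5 can be checked with a few lines of arithmetic. The sketch assumes a single constant contact force during the collision and uses hypothetical masses and force; it shows that the two forces are equal in magnitude and opposite in direction, while the accelerations (a = F/m) differ by the ratio of the masses.

```python
def collision_accelerations(contact_force, mass_truck, mass_car):
    """Accelerations of truck and car during a head-on collision.

    Sign convention (assumed): the truck moves toward +x, the car toward -x.
    By Newton's third law the car pushes the truck toward -x with the same
    magnitude of force that the truck pushes the car toward +x.
    """
    force_on_car = +contact_force
    force_on_truck = -contact_force   # equal magnitude, opposite direction
    return force_on_truck / mass_truck, force_on_car / mass_car

# Hypothetical values: 10,000 kg truck, 1,000 kg car, 50,000 N contact force.
a_truck, a_car = collision_accelerations(50_000.0, 10_000.0, 1_000.0)
print(a_truck, a_car)         # -5.0 50.0  (m/s^2)
print(abs(a_car / a_truck))   # 10.0, the same as the mass ratio
```

The equal forces produce a tenfold larger acceleration of the car, which is why the car fares worse even though neither vehicle pushes harder than the other.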

In any given year, the number of physics majors pursuing a secondary education teaching credential is relatively small, and in most institutions of higher education only a few of these students may be simultaneously pursuing a certification program. Variation in program content, student learning experiences, and supervision can be substantial. This is especially problematic with regard to the specifics of how prospective physics teachers acquire knowledge about important characteristics of student learning and the teaching of physics.

In contrast to pre-service teacher education, the professional development of in-service physics teachers is often better organized, especially with regard to regional, state, and national workshops. Many of these opportunities have been supported by federal funding, such as the Eisenhower math-science programs and the National Science Foundation’s teacher enhancement projects. Professional societies have played a role as well. The physics teaching resource agent (PTRA) program, run by the American Association of Physics Teachers, is one model of a sustained teacher enhancement project. First funded in 1985, the PTRA program develops workshop materials, prepares exemplary high school teachers to serve as resource agents, and provides support to those agents to offer workshops in their own regions (Nelson and Bader, 2001). Agents continue to receive education over successive summers to expand their repertoire of workshops.

Approximately 500 outreach teachers have been educated, and more than 300 remain active. From 1985 to 1995, the outreach program offered workshops to about 60,000 teachers, including physics, physical science, middle school, and elementary school science teachers. Since 1995, the project has continued with the urban PTRA project and the rural PTRA project. In these continuations, there is an emphasis on building a continuing relationship with a group of teachers for sustained professional development. The PTRA now serves as a model for the development of outreach programs in other science areas.

The opportunities for sustained teacher learning are better developed in physics than in most fields. Yet even here, relatively little is known about the processes of effective teacher learning. In physics as elsewhere, little is understood about how knowledge of student thinking is bound to practice, that is, how teachers use it to choose specific instructional moves. Little is known about the conditions that optimize and impede the development of the knowledge base of prospective and new teachers or the conditions that assist experienced teachers in understanding and adopting more effective instructional practices. Finally, little is known about the range of teacher characteristics and organizational circumstances that are conducive to adopting the instructional approaches derived from physics education research (like the modeling method or facets-based instruction). Because the knowledge base and tools for teacher learning are better developed in physics than in other areas, physics provides fertile ground for investigating these broadly applicable questions.

RESEARCH AGENDA

Three closely related initiatives for research and development are proposed that would build on the existing, high-quality research and development in physics and support the usefulness of existing knowledge and tools for school decision makers and classroom teachers:

- undertake an effort to identify and differentiate existing research-based physics instruction programs on dimensions of learning outcomes and characteristics of students, teachers, and schools in which the program has been effective;
- study the scalability of the existing programs and develop supports for taking programs to scale;
- study teacher knowledge requirements for effective use, and how that knowledge builds with teacher learning opportunities and experience.

Initiative 1: Differentiating Instructional Programs and Outcomes

The initiative should begin by developing a better characterization of existing programs, as well as the range and scope of their use, for purposes of informing education decision makers. One set of questions concerns what conditions usually accompany success: participation from university or other research partners; electronic or physical proximity to a network of more expert reform teachers; administrative support and resources; aligned policies about assessment, grading, and student promotion.

The analysis should also identify the dimensions on which these research-based programs differ from each other and how the differences affect outcomes. Some of the programs rely heavily on computer simulations and data-gathering tools, and others do not. Some emphasize student reflection more than others. Some are targeted primarily toward students, whereas others are targeted primarily toward changing the practices of teachers. A useful first step, then, would be to provide a systematic catalogue of the current state and scope of available programs, evidence about their outcomes, and analysis of the features that may account for variability in outcomes.

These kinds of comparisons are often difficult to make because the research designs, measures of success, and data collected differ from one program evaluation to the next. A likely required step in this effort would be the design of research that allows questions about relative effectiveness under varying conditions to be answered more robustly. The investigations would be strengthened considerably if researchers worked in schools and conducted cycles of repetition-with-variation of instruction so that critical variations in program features could be explored.

Initiative 2: Scalability

In spite of the promising nature of the findings to date, only a small fraction of physics classes currently use research-based programs. We therefore propose exploring the extent to which promising programs can be taken to scale. This work could be undertaken in a set of SERP field sites that exhibit a range of student, teacher, and school characteristics. Careful study of variation in the success of the program, as well as the characteristics and conditions that can explain that variation, would be the primary research task.

As instructional programs move farther away from the sites and the individuals who generated them, they typically undergo change. As Spillane (2000) and others have shown, reform curricula may look far different in practice than what was intended in the reform design. Very often, policy makers and practitioners generate piecemeal interpretations of new approaches to instruction, preserving some of their surface features while missing their underlying goals, rather like the physics novices described earlier. So, for example, a teacher initially encountering an unfamiliar way to teach physics might incorrectly conclude that the most important feature of a research-based curriculum is its hands-on approach. Of course, students can have their hands on many things, often in ways that fail to promote any meaningful conceptual development. The point is that the more new programs and curricula deviate from currently familiar practices, the more likely they are to be distorted or misunderstood. These misinterpretations, when combined with the need to adapt to local circumstances, can lead to wide variability in the program in practice.

Consequently, instructional programs that successfully build on knowledge from research and that demonstrate effectiveness in experimental studies can show insignificant, or even negative, results when implemented more broadly. Such outcomes undermine the very notion that research can improve practice. If research is to have a positive, widespread impact on student learning, following curriculum use as it spreads into school districts and is adapted by teachers will be critical.

The envisioned work would seek to identify the variability of implementation in the new programs. Are there programs in which fidelity is generally preserved and others that are more frequently distorted? If so, which programmatic features are responsible? Are there adaptations that improve program performance?

These investigations require the kind of longer term study that SERP can support. Moreover, it will be important to learn about the forms and amounts of variability in implementation that programs can sustain (that is, program robustness), and the resulting outcomes that can be expected from typical variations in enactment.

The research effort should be accompanied by an effort to develop supports for effective implementation. If, for example, a specific feature of a program is easily misunderstood, the program itself may need to be revised, or supplemental opportunities for understanding may need to be developed for users. Multiple iterations of research, design, and testing will be required to develop supports that are effective.

Initiative 3: Teacher Learning

Much more research has been devoted to understanding student learning of physics and how to improve it than to corresponding studies of teacher learning and how to improve it. As yet, we know very little about how effective physics teachers use their knowledge of student thinking to decide which move in their instructional repertoire to deploy in a given situation. Some clues do exist. For example, Minstrell has formalized an expert teacher’s diagnostic knowledge into his assessment program, Diagnoser (discussed above). But the instructional move an expert might make to respond to a given facet identified by Diagnoser is still poorly understood. Nor do we have a good sense of how this kind of knowledge develops, that is, at what characteristic rates and under what conditions.

Physics is an excellent topic for supporting a SERP study of the development of teacher knowledge, because so much of the student diagnostic work has already been accomplished. And successful efforts to educate teachers, like that employed by the modeling instruction program described above, provide fertile ground for research. SERP could support longitudinal studies to generate a richer sense of the typical development of teacher knowledge, from pre-service student, through novice, to journeyman teacher. These studies might also help us understand what hampers the continuing development of this form of knowledge and its connection to instructional decision making. On one hand, because student conceptions in physics are so robust, it seems unlikely that novice teachers are entirely unaware that their students hold them. On the other hand, neither teachers’ education nor the forms of teaching they adopt may provide the kinds of feedback that help them develop a systematic catalogue of those conceptions and a repertoire of productive approaches to addressing them. Experiences that provide this kind of information at the appropriate level of detail to guide instruction are particularly important to understand. This research would clearly support efforts to understand teacher knowledge requirements in other subject matter, with very close parallels, for example, to the teacher knowledge agenda in reading comprehension.

SCIENCE EDUCATION ACROSS THE SCHOOL YEARS

It would surely be disturbing if the mathematics instruction in schools followed no plan for increasing students’ knowledge cumulatively across grades of study but instead meandered from topic to topic in an unprincipled way. Yet this is an accurate description of science instruction in elementary schools and in many middle schools. High schools have a more predictable sequence of science subjects rooted in tradition, but the subjects are generally treated separately. Even in high school, there is little effort devoted to drawing connections in the content across subjects or to systematically building an understanding of the discipline.

STUDENT KNOWLEDGE

The Destination: What Should Students Know and Be Able to Do?

Achieving consensus on the content of science education across the K-12 school years has been stymied by a long history of debates and subsequent confusion about the appropriate organizing principles for science education. The debates often swell around the process-content divide. Some have argued that the most important thing for students to learn is the process of scientific reasoning, including the logic of controlling extraneous variables in scientific experimentation, the coordination of theory and evidence, and standards for evaluating evidence. However, these attempts often founder on superficial and fragmented treatments of science content. Too exclusive an emphasis on scientific processes can result in instruction about a bundle of topics that are loosely, if at all, related to each other because the development of scientific reasoning does not depend on the treatment of particular topics. Units on weather, electricity and magnetism, and the rain forest follow each other, in an organization most charitably described as modular. Knowledge accumulated in early grades does not build smoothly toward the scientific ideas that will be encountered in high school study and beyond.

In contrast, those who endorse a content approach seek coherence by emphasizing the integrated development of knowledge within scientific disciplines, like biology or chemistry. In practice this emphasis often leads to a focus on concepts and facts—the products of science—with little attention to how that knowledge was generated. In earlier grades, students receive instruction that jumps from earth sciences to physical sciences to biological sciences. The usual result is superficial or fragmentary understanding (Vosniadou and Brewer, 1989; Pfundt and Duit, 1991).

Neither of these views is well aligned with the vision sketched in national science standards (e.g., those from the American Association for the Advancement of Science [AAAS] and the National Research Council). The standards point toward the big ideas and themes that ought to be the goals of science education. For example, AAAS’s report (1991) Science for All Americans proposes that by the time they graduate, students should understand important scientific themes like systems, models, constancy and change, and scale. AAAS points out that these themes “transcend disciplinary boundaries and prove fruitful in explanation, in theory, in observation, and in design” (p. 165). However, there are few illustrations in practice of what it means to understand these ideas deeply, and few guideposts to help teachers navigate the very extensive list of topics that the standards include so that students will arrive at deep understanding of these themes or organizing big ideas. Overall, very little is known about how the material outlined in the standards is actually attainable over the time course of schooling.

The Route: Progression of Understanding

From a very young age, children begin to impose order on the world they observe, generating ideas about why objects float or sink, about what it means to be alive, about why plants grow, and about temperature and weather. Like the high school physics students described in the preceding section, children are sense-makers, and their theories and ideas are tools that should be encouraged and stretched, not ignored. As children accumulate greater experience with the world, they begin to master the kinds of distinctions that adults and scientists make: dogs are indeed alive and rocks are not, but plants are also alive, even though they do not move from place to place of their own volition.

Early conceptions may evolve somewhat with increased experience. But scientific conceptions generally do not develop without explicit instruction, partly because the epistemological assumptions that underlie them are complex and often invisible. As discussed with regard to physics, many important scientific processes, principles, and laws are at odds with everyday understandings and experiences. Much scientific work requires methods and measurements that are not characteristic of everyday activity, and that rely on instruments that allow the scientist to explore what is not otherwise accessible.

Even more fundamentally, it is by no means self-evident to children what kind of enterprise science is. Experimentation, for example, requires arranging aspects of the world to generate a model of the phenomenon that is of interest. The model, which is taken to stand for the more general class of events, is then systematically perturbed as a way to seek deeper understanding. The history of science suggests that this way of constructing knowledge evolved gradually, as practicing scientists increasingly came to regard their work as the creation of a form of argument, rather than as the unproblematic observation of transparent events in the world (e.g., Bazerman, 1988).

While a scientific approach is not likely to develop in children naturally, this form of thinking can be developed gradually when students have sustained opportunities (as in Sister Hennessey’s classroom, described below) to learn the discipline’s content knowledge (what we know), theory (what we make of what we know and don’t know), and knowledge of the epistemologies of science (how we know). But little is known about the routes or the progression of understanding that characterize effective mastery of science content and reasoning.

What science are children capable of learning at different grade levels? Elementary school children studying marine mammals may be quite capable of understanding that the ancestors of whales lived on land, and they may also be engaged by the story of how that came to be known by scientists. But less is known about their readiness to understand the concepts of distribution and variation that underlie such evolutionary tales. Some evidence suggests that even elementary and middle school students can begin to develop an understanding of these ideas (see, for example, Cobb et al., 2003; Lehrer and Schauble, 2001, 2002; Petrosino et al., 2003). But little systematic research has been done to discern what the majority of children are able to grasp with reasonable instructional effort at different grade levels. Furthermore, we know little about what instruction is required at one level to prepare students for instruction at the next. Most instructional research is conducted over brief periods of time and so does not provide information about the potential for long-term development of knowledge and reasoning.

AAAS Project 2061 published a set of science literacy maps that lay out a progression over grades in the components of knowledge that students should develop for each of the AAAS benchmarks (American Association for the Advancement of Science, 2001). At present, this atlas represents the only comprehensive attempt to establish a developmental course of learning and instruction for students in grades K-12. But it, like the National Research Council science standards and the AAAS benchmarks, lacks a research foundation to support the assumptions about learning and the progression of understanding. They are conjectures that, while reasonable, lack empirical confirmation. Moreover, since they are based on a sense of the way that “typical” children think (that is, under no particular conditions of instruction), they are very likely to be underestimates of children’s capabilities. Also missing is knowledge about how to provide appropriate sequences of instruction, as well as a clear sense of the ways in which the standards and benchmarks map against assessments of students’ knowledge representations and cognitive skills.

The Vehicle: Curriculum and Pedagogy

The knowledge base to support the development of curriculum and pedagogy, we have argued, is characterized by little detail on the instructional implications of teaching the big ideas and little understanding of the progression of student thinking that is possible with instruction both in science content and process. Those weaknesses in the knowledge base are reflected in the K-12 science curriculum.

Analyses of TIMSS science achievement results (Schmidt, 2001; Valverde and Schmidt, 1997) as well as research conducted by other investigators show that in contrast to other countries, elementary and middle school science in the United States emphasizes broad coverage of diverse topics over conceptual development and depth of understanding. For example, eighth grade textbooks in the United States cover an average of more than 65 science topics, in stark contrast to the 25 topics typical of other TIMSS countries. “U.S. eighth-grade science textbooks were 700 or more pages long, hardbound, and resembled encyclopedia volumes. By contrast, many other countries’ textbooks were paperbacks with less than 200 pages” (Valverde and Schmidt, 1997:3). The more recent TIMSS-R follow-up study concluded that the comparatively poor performance of U.S. eighth graders is related to a middle school curriculum that is not coherent and is not as demanding as that found in other countries studied. “We have learned from TIMSS that what is in the curriculum is what children learn” (Schmidt, 2001:1).

Commercially published textbooks are the predominant instructional materials used in science (Weiss et al., 2002). In grades K-4, textbooks are used 65 percent of the time; this increases to 85 percent of the time in grades 5-8, and 96 percent of the time in grades 9-12. Most of these textbooks are seriously flawed. A team at the AAAS reviewed widely used textbooks in middle and high school science and ranked them on a number of criteria, among them the extent to which the major concepts were communicated clearly and students’ preconceptions were addressed. All of the middle school textbooks and most of the high school textbooks were rated poor (Roseman et al., 1999). On the critical dimension of supporting conceptual change, widely used science textbooks at both the middle school and high school level have been judged poor by the AAAS team (Roseman et al., 1999).

In recent years, researcher-practitioner collaborations have begun to generate more systematic approaches to science education. These instructional efforts span several grades and are, in a sense, hypotheses about what children can learn and do at different grade levels. The commitments and design trade-offs vary somewhat from program to program, but they share the following features:

- reformulating the goals and purposes of school science;
- including less content material at greater depth and emphasizing understanding over coverage;
- fostering and studying the long-term development of student knowledge under optimal conditions of teaching and learning;
- sequencing curriculum on the basis of what is being learned about the development of student knowledge; and
- conducting research on what it takes for these forms of teaching and learning to take hold and flourish in new settings.

These programs share the conviction that the central goal of science education should be to develop students’ understanding and appreciation of the forms of knowledge-making that characterize scientific practice. At the same time, however, this goal is pursued in the course of serious and extended investigation of science content. While the program developers have been collecting data on learning outcomes that show promise, these programs have not undergone rigorous, independent testing. Their value to a SERP research agenda lies not in the definitive answers to instructional questions provided by the programs, but in the opportunity they provide to further develop and rigorously test hypotheses about alternative approaches to teaching science to young children. The programs can be differentiated as having one of four foci: methods of empirical inquiry and inference; theory building; modeling; or argumentation.

Methods of Empirical Inquiry Kathleen Metz (2000) at the University of California, Berkeley, has been working for several years with a cadre of elementary grade teachers to reorganize science teaching and learning around the enterprise of (and methodologies for) empirical inquiry (see Box 4.6). Students learn how to pose questions, to think carefully about how those questions could be answered empirically, and to master a repertoire of methods to conduct empirical investigations. Metz is generating longitudinal data, both about students’ evolving content understanding (e.g., concepts about animal behavior, adaptation) and their capabilities to pose interesting questions and investigate them via studies of their own design, as well as to diagnose weaknesses in their own and other students’ investigations.


BOX 4.6 Kathleen Metz has worked with teachers in grades 1 through 5 to engage children in authentic, goal-focused scientific inquiry. As students study a domain in greater depth, they are given increasing responsibility for the inquiry. The program grew out of Metz’s concerns that existing curricula for elementary science assume developmental constraints on the students’ ability to learn science at an early age that are not supported by research. As a result, the content of elementary science curricula is frequently impoverished, and the focus on discrete “science process skills” in many curricula is divorced from the robust context of inquiry that can enrich students’ learning opportunities and interest. Metz’s instructional model uses a combination of empirical investigations, text, video, and case studies to develop content knowledge of the domain, and to introduce students to the big ideas of biology, process knowledge of tools and decision making involved in scientific inquiry, and science as a way of knowing. She has instantiated this instructional model in curriculum prototypes in animal behavior and botany. For example, in the study of animal behavior, young children’s observations of animals are used as a basis to discuss distinctions between observation and inference that children usually conflate into one undifferentiated category of “the way things are.” Eventually, the children develop parallel distinctions between theory and evidence through case study, empirical investigations, and text analysis. In one case, children take on the question animal behaviorist Roger Payne posed to himself of why grey whales migrate. They examine Payne’s analysis of the theories he considered and his evidence for and against them. Analysis of text is also used to deepen their knowledge and support the development of individual interests and questions. The study introduces theory and evidence, in conjunction with the key idea of survival advantage.
In another case, across a series of increasingly complex investigations of cricket behavior, the teacher supports the development of children’s emergent repertoires of methods, ways to analyze data, and ways to represent data in the form of three “menus.” As students become more familiar with the inquiry process, they work in pairs to formulate the question they want to research, develop a plan to investigate the question, implement their study, and represent their work in the form of a research poster. In a research poster conference, the children analyze the surprises and the relations among their studies, and they consider next steps in their research as a whole. Metz’s bet is that scaffolding the content and process knowledge necessary for children to assume control of their own investigation has significant pay-offs from cognitive, epistemological, and motivational perspectives.

Theory Building An instructional approach designed by Sister Mary Gertrude Hennessey emphasizes theory building (see Box 4.7). Across content domains through the elementary years, students repeatedly consider the criteria by which scientific theories are formulated, used, tested, and revised. Sister Hennessey has generated longitudinal data concerning changes in her students’ grasp and application of these criteria, and she has also tracked changes in students’ propensities to reflect about their own thinking. Researchers external to the project have documented impressive performances by these students on standardized interviews concerning the nature of science.

BOX 4.7 Until recently, Sister Mary Gertrude Hennessey, who has Ph.D.s in both science and science education, served as the sole science teacher for students in Grades 1-6 at St. Ann School in Stoughton, Wisconsin (she is now serving as principal). “Hennessey’s curricular approach stands out as an extensive and sustained attempt to teach elementary science from a coherent, constructivist perspective” (Smith et al., 2000:359). Hennessey’s instruction emphasized theory-building, both as the process by which students build their own science understanding and as an object of explicit reflection. Across content domains, students repeatedly considered the criteria by which scientific theories are formulated, used, tested, and revised. This emphasis was consistently maintained across grades of study. In early grades, the focus was on identifying and explicitly stating one’s own beliefs and the alternative beliefs held by classmates. In later grades, Hennessey “raised the ante” by urging students to consider the advantages of adopting additional criteria, such as the intelligibility, plausibility, and extensibility of their beliefs and the beliefs of others. In every case, these issues were explored in the context of sustained investigations of phenomena. Students applied these criteria as they worked toward building deep explanations based on theoretical entities, investigating the implications of their own explanations and alternative explanations proposed by the class. Sixth graders who spent six years under Hennessey’s tutelage showed impressive epistemological development on the nature of science interview developed by Carey and colleagues (Carey et al., 1989; Smith et al., 2000).

Modeling A number of investigators are examining the potential of organizing science instruction around the practice of modeling. This kind of instruction emphasizes developing models of phenomena in the world, testing and revising models to bring them into better accord with observations and data, and, over time, developing a repertoire of powerful models that can be brought to bear on novel problems. Modeling approaches have the advantage of avoiding the content-process debates that have plagued science education over the years. One cannot model without modeling something, so when students are engaged in modeling, reasoning processes and scientific concepts are always deployed together.

Most existing research on modeling has been conducted with units or courses that do not span more than one school grade. (For example, Stewart and colleagues have developed high school courses in evolutionary biology and genetics, and Reiser et al. (2001), White and Frederikson (1998), Raghavan et al. (1995), and Wiser (1995) have developed units for middle school students.) On a longer time scale, Lehrer and Schauble (2000) have initiated and studied a school-based program in which science teaching and learning are organized over grades 1-6 around modeling approaches to science (see Box 4.8). Data from this project include paper-and-pencil “booklet” items administered to intact classes of students, yearly three-hour detailed student interviews, and “modeling tasks” completed by small groups of students. Producing these items was itself a challenging task, since students were learning forms of mathematics not routinely taught in elementary grades. The items that were developed were based on evolving data about children’s understanding of ideas in geometry, measurement, data, and statistics. The student achievement data showed strong student gains; for example, from the first to the second year of the project, effect sizes by grade were 0.56 (Grade 1), 0.94 (Grade 2), 0.43 (Grade 3), 0.54 (Grade 4), and 0.72 (Grade 5).
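Effect sizes like those reported for the Lehrer and Schauble project are commonly expressed as standardized mean differences. The report does not say which estimator was used. The minimal sketch below therefore assumes Cohen's d with a pooled standard deviation, purely for illustration. The function name `cohens_d` and the year-1/year-2 scores are hypothetical and are not project data. The sketch also takes the simple average of the five grade-level values quoted above, which comes to about 0.64.

```python
from statistics import mean, stdev


def cohens_d(group_a, group_b):
    """Standardized mean difference (group_b minus group_a) with a pooled SD.

    Assumption: Cohen's d is shown only as one common estimator; the source
    does not state how its effect sizes were computed.
    """
    n_a, n_b = len(group_a), len(group_b)
    var_a, var_b = stdev(group_a) ** 2, stdev(group_b) ** 2
    # Pool the two sample variances, weighting each by its degrees of freedom.
    pooled_sd = (((n_a - 1) * var_a + (n_b - 1) * var_b) / (n_a + n_b - 2)) ** 0.5
    return (mean(group_b) - mean(group_a)) / pooled_sd


# Hypothetical booklet scores for one grade in two project years (invented numbers).
year1 = [10, 12, 14]
year2 = [13, 15, 17]
print(round(cohens_d(year1, year2), 2))  # 1.5

# Grade-level effect sizes as reported in the text; the unweighted average is illustrative.
reported = {1: 0.56, 2: 0.94, 3: 0.43, 4: 0.54, 5: 0.72}
print(round(mean(reported.values()), 3))  # 0.638
```

By the usual rough conventions, values near 0.5 are read as moderate effects and values near 0.8 or above as large. Those labels are only heuristics and are not claims made by the report.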

Argumentation Bazerman (1988), Lemke (1990), Kuhn (1989), and others have pointed out that science entails mastering and participating in a particular form of argument. That form of argument involves relationships among theories, facts, assertions, and evidence. This characterization explicitly acknowledges that science is not just the mastery of knowledge, skills, and reasoning. It is also participation in a social process that involves values, history, and personal goals. This view informs, for example, the ongoing work of Warren and Rosebery (1996), who focus on classroom discourse organized around argumentation in science (see Box 4.9). Once again, researchers are supplementing their reports of teachers’ professional development with careful measures of student learning. These measures address both the specific content that students are studying in their classrooms (e.g., interviews probing students’ grasp of ideas about motion) and science learning more generally (e.g., changes in the rates of certain patterns of discourse in classroom discussions of science).

BOX 4.8 In Lehrer and Schauble’s (2000) program, researchers work with teachers to reform instruction and, in coordination, to study the development of model-based reasoning in students. The early emphasis is on developing young children’s representational resources, such as drawings, writing, maps, and three-dimensional scale models. Children build these resources as they conduct inquiry into aspects of the world that they find theoretically interesting. For example, first graders studied ripening and rot in three ways:
- drawings to record changes in the color and squishiness of fruit;
- compost columns to investigate rates of decomposition;
- maps of the school to investigate the dispersal of fruit flies from the compost columns to classrooms near and far.

Teachers typically begin modeling with young students by exploring models that literally resemble the phenomena being modeled. For example, first graders cut green paper strips to record changes over time in the height of amaryllis and paperwhite narcissus that they grew in soil and in water. As investigations proceed, these initial models are successively revised to provide greater representational power. As in the history of modern science, the models increasingly incorporate mathematical descriptions of the world. The students explored concepts of measurement as they investigated which of the plants grew tallest (Lehrer and Schauble, 2000). When the teacher shifted the question to “Which plant grew fastest?”, however, attention turned to recording and representing changes in height over time. In subsequent grades, questions about plants expanded to include:
- comparisons of growth rates, with attention to logistic curves as a general model of growth;
- the volume of plant canopies, investigating whether canopies grow in geometrically similar proportions;
- the shapes and other qualities of distributions of plants grown under different conditions, including sampling investigations.
Researchers are investigating which central science themes could support this kind of cumulative modeling approach, including growth and diversity, animal and human behavior, and structure. The objective is to let students’ modeling repertoires grow steadily across the elementary and middle school grades. One focus of research is to identify themes that meet three conditions:
- they are central to later science instruction;
- they offer early entry to young students;
- they provide smooth “lift” (increasing challenge) as students move from grade to grade.

The primary form of professional development in this program is teachers’ collective investigation of how student thinking develops, together with study of what those findings imply for teaching. The research also tracks the professional development of participating teachers. It also documents the institutional conditions required to support these forms of teaching and learning.

BOX 4.9 The Cheche Konnen project, developed by Warren and Rosebery (1996), turns practicing teachers’ attention toward how students make meaning in science, especially students whose first language is not English. Instruction capitalizes on the linguistic and cultural resources that students have developed outside school. Teachers are also encouraged to emphasize that the work of practicing scientists is itself “populated by intentions, those of the speaker and those of others, both past and present” (p. 101). Teachers seek points of contact between their students’ talk and reasoning and the forms of communication observed in communities of professional scientists. By conducting their own extended scientific inquiries, teachers in the Cheche Konnen project come to better understand the social and human basis of the scientific enterprise. Together, teachers closely study student language by analyzing videotapes of classroom discourse. The work assumes that student talk is sensible. On that view, the teacher’s job is to become increasingly skilled at identifying that sense and using it as the foundation for instructional moves.

Checkpoints: Assessment

As in other subjects, quality assessment in science requires, as a starting point, an understanding of what students should know and be able to do. It is perhaps not surprising, then, that the current situation in science assessment outside physics is dire. The broad but shallow coverage of science topics in current texts is mirrored in standardized assessments (including those administered for accountability purposes by the states) that touch briefly on a very wide array of concepts and topics without deeply probing student understanding of any of them. Some assessments include items designed to tap common student misconceptions, but they do not diagnose the developmental level of a student’s thinking about the topic.

Diagnosing student understanding in a way that would make an assessment more useful for instruction would be difficult without narrowing the range of topics. In-depth assessment of the kind provided by the Force Concept Inventory (discussed above) would not be practical in a single assessment that covers so many topics. Current assessments devote no more than a few items to each of several topics, so they do not capture information that teachers can use. Even worse, they may reinforce bad practice by encouraging an instructional emphasis on coverage over conceptual understanding. There have been recent attempts to develop alternative, performance-based assessments in science that address these problems. However, these have not yet overcome the psychometric and logistical challenges (Ruiz-Primo and Shavelson, 1996; Solano-Flores and Shavelson, 1997; Ruiz-Primo et al., 2001; Stecher et al., 2000). Moreover, attempts to change the form of assessment are hampered by a more fundamental problem: forging consensus about what is worth assessing.

TEACHER KNOWLEDGE

Relatively few teachers at the K-8 level feel well qualified to teach life, earth, or physical science. The percentage who do ranges from 18 percent for physical science to 29 percent for life science. K-8 teachers generally lack deep knowledge of science subject matter. Few have an undergraduate major in a science discipline, although most have taken some science coursework in college. Undergraduate science courses, however, do little to help teachers understand the rich conceptual repertoires that children typically bring to scientific situations.

There is little in teacher preparation programs that provides the foundations of pedagogical content knowledge for teaching science. Elementary school teachers are less likely than middle or high school teachers to indicate that they are prepared to support the development of students’ conceptual understanding of science, provide deep coverage of fewer science concepts, or manage a class of students engaged in an extended inquiry project (Weiss et al., 2001). The available evidence does suggest, however, that “teachers who participate in standards-based professional development often report increased preparedness and increased use of standards-based practices, such as taking students’ prior conceptions into account when planning and implementing science instruction. However, classroom observations reveal a wide range of quality of implementation among those teachers” (Horizon Research, 2002:168-169).

RESEARCH AGENDA

International and national test scores highlight the weakness of K-12 science education in the United States. That students in so many other countries perform considerably better suggests that the problems are tractable. There are also some indicators of the path toward improvement. We need to be more thoughtful about supporting a deeper understanding of the big ideas in science curricula. That implies a principled choice of fewer topics, treated in greater depth and with greater coherence. These big ideas must then be the ultimate targets of a SERP agenda that holds promise for improving student learning outcomes.

Promising work, examples of which are described above, has begun to build the knowledge base for a more coherent approach to science education. Teams of researchers are working closely with teachers and other educational practitioners. Together they are systematically exploring the long-term learning potential and technical feasibility of cumulative approaches to science that treat topics in depth. All of these efforts include three components:
- thoughtful consideration of the appropriate goals of early science education;
- investigations of how student knowledge develops;
- research on the professional development and institutional supports required to implement them.

Finally, each of these efforts relies on practitioner-researcher partnerships that extend over a number of years. Such long-term relationships are essential because the targets of the research, forms of student thinking, must first be reliably generated before they can be systematically studied. In all of these respects, these efforts are of the type that we have argued has the potential to improve practice.

So far, each of these efforts has been patched together with the short-term grant awards that are typical in education funding. None has had the support required to evaluate long-term student outcomes rigorously and independently, with a broad range of students across a variety of settings. But they provide a very promising point of departure for a SERP research program.

SERP has a longer time scale and the capacity to plan comparative studies of the trade-offs among different approaches. It could therefore play a critical role in developing, studying, and comparing these models of teaching and learning that build systematically across the grades of schooling. Some of the programs we have mentioned are already yielding longitudinal findings about student learning. Others are investigating the forms of professional development and institutional support that similar programs need in order to flourish more widely. For this work to improve K-12 science education on a large scale, however, a sustained effort will be needed to learn from the range of experiments. What is learned should then inform both the next stages of research and development and the goals and standards set for science learning. To this end, we propose a research agenda built on the initiatives below. We describe them in general terms; in their specifics they will closely resemble the initiatives in reading and mathematics:

- Development and evaluation of integrated learning-instruction models, with component efforts that address curriculum, assessment, and teacher knowledge requirements; and
- Evaluation of standards for science achievement.

Initiative 1: Development and Evaluation of Integrated Learning-Instruction Models

Identifying a productive organizing core for school science across the grades is an important step toward science education that builds from one year to the next. This does not mean that there is a single right vision of what is worth teaching and learning. Alternative visions should be formulated, articulated, and carefully justified, so that instruction in every case is oriented toward valued goals. The challenges and possibilities of alternative commitments become clear only when three conditions are met:
- the details of instruction have been worked out;
- conjectures about fruitful paths for learning have been developed and pursued;
- longitudinal research has been conducted as instruction plays out in classrooms.

There have been several efforts to establish standards (the core). However, these have had minimal impact because they were promulgated without the instructional details required to attain the envisioned goals. Supplying those details will require closely linked work on curriculum and assessment.

Curriculum development and evaluation Existing promising programs like those described above should be further developed and evaluated. The work we propose is not the typical process in which curricula are first invented and then evaluated. Instead, design and research are intimately interleaved, so that initial design decisions have the status of conjectures. Evidence about those conjectures and their consequences for learning would then inform the ongoing shaping and revision of the design. This approach is particularly important for a field like science education. The field does not yet have an organized research base to undergird conjectures about optimal sequences of topics and tasks. SERP’s key role would be to evaluate the range of programs. That evaluation would consolidate an understanding of how programs differ and what those differences imply for student learning outcomes.

The nature of the work to be done here parallels several of the research and development efforts described in previous chapters. The evaluation of curricula for science would be much like the evaluation of curricula in mathematics. The desired outcome here, as there, is not a stamp of success or failure, but a deeper understanding of the learning process and effective avenues to support it, with a goal of continuous learning and improvement.