Ensuring Robust Military Operations and Combating Terrorism Using Accident Precursor Concepts

YACOV Y. HAIMES

Founding Director, Center for Risk Management of Engineering Systems

University of Virginia

Effective quantitative risk assessment and risk management must be based on systems engineering principles, including systems modeling. By exploring the commonalities between accident precursors and terrorist attacks, a unified approach to risk assessment and risk management that addresses both can be developed. In this paper, I discuss the importance of system modeling and the centrality of the state variables to vulnerability, threat, and the entire process of risk modeling, risk assessment, and risk management.

The risk assessment and risk management process is effectively based on answering two sets of triplet questions:

- The first set represents risk assessment (Kaplan and Garrick, 1981). What can go wrong? What is the likelihood? What are the consequences?
- The second set represents risk management (Haimes, 1991). What can be done, and what options are available? What are the trade-offs in terms of costs, benefits, and risks? What are the impacts of current policy decisions on future options?

Answering the first question in each set (What can go wrong? and What can be done, and what options are available?) requires multiperspective modeling that can identify all conceivable sources of risk and all viable risk management options. Several modeling philosophies and methods that address the complexities of large-scale systems and offer various modeling schemas have been developed over the years (Haimes and Horowitz, 2003). In Methodology for Large-Scale Systems, Sage (1977) addresses the “need for value systems which are structurally repeatable and capable of articulation across interdisciplinary fields” for modeling the multiple dimensions of societal problems. Blauberg et al. (1977) point out that, for the understanding and analysis of a large-scale system, the fundamental principles of wholeness (the integrity of the system) and hierarchy (the internal structure of the system) must be supplemented by the principle of “the multiplicity of description for any system.”

To capture the multiple dimensions and perspectives of a system, Haimes (1981) introduced hierarchical holographic modeling (HHM). Haimes argues that “To clarify and document not only the multiple components, objectives, and constraints of a system but also its welter of societal aspects (functional, temporal, geographical, economic, political, legal, environmental, sectoral, institutional, etc.) is quite impossible with a single model analysis and interpretation.”

Recognizing that a system “may be subject to a multiplicity of management, control and design objectives,” Zeigler (1984) addressed modeling complexity in Multifaceted Modeling and Discrete Event Simulation, where he introduces the term multifaceted “to denote an approach to modeling which recognizes the existence of multiplicities of objectives and models as a fact of life.” In Synectics, the Development of Creative Capacity, Gordon (1968) introduces an approach that uses metaphorical thinking as a means of solving complex problems. Hall (1989) develops a theoretical framework, which he calls metasystems methodology, with which to capture a system’s multiple dimensions and perspectives.

Other seminal works in this area include Social Systems: Planning and Complexity by Warfield (1976) and Systems Engineering by Sage (1992). Sage identifies several phases in a systems-engineering life cycle to represent the multiple perspectives of a system—the structural definition, the functional definition, and the purposeful definition. Finally, in the multivolume Systems and Control Encyclopedia: Theory, Technology, Applications, Singh (1987) describes a wide range of theories and methodologies for modeling large-scale, complex systems.

In tracking terrorist activities as precursors to terrorist attacks, we can use HHM results to help determine which information is potentially the most worthwhile for the purposes of analysis. According to Haimes and Horowitz (2003), this information can include data related to four factors, the first three of which are areas of intelligence collection that depend on the fourth—environment:

- the people associated with the potential targets (e.g., employees or members of related organizations)
- potential methods of attack (e.g., specific poisons that might be most effective for a meat poisoning attack, based on detectability, potency, and ability to withstand the impact of cooking)
- the numerous characteristics, detailed subsystems, and processes associated with potential targets (e.g., cybersecurity, physical security, or location)
- environment, which includes information about terrorist organizations (e.g., strategies, funding, skills, cultural values) and the overall geopolitical situation (e.g., terrorist sympathizers, funding sources, training supporters).

THE STATES OF THE SYSTEM IN RISK ANALYSIS

Perrow (1999) defines an accident as “a failure in a subsystem, or the system as a whole, that damages more than one unit and in doing so disrupts the ongoing or future output of the system.” We can broaden Perrow’s definition of accidents to include terrorist attacks. Accidents, natural hazards, and terrorist attacks may all be perceived, or even anticipated, but still may be largely unexpected at any specific time. The causes, or initiating events, may be different for each, but the dire consequences to the system can be the same. In this paper, we use the following definitions and include both accident precursors and terrorism in each term.

State variables, the fundamental elements or building blocks of mathematical models, represent the essence of the system. State variables serve as bridges between a system’s decision variables, random and exogenous variables, input, output, objective functions, and constraints. The two sets of triplet questions are bridged through state variables. Here are some examples. To control steel production, you must understand the state of the steel at any instant (e.g., its temperature, viscosity, and other physical and chemical properties). To know when to irrigate and fertilize a farm to maximize crop yield, a farmer must assess soil moisture and the level of nutrients in the soil. To treat a patient, a physician first must know the patient’s temperature, blood pressure, and other vital states of physical health.

The word system connotes the natural environment, man-made infrastructures, people, organizations, hardware, and software.

In terms of terrorism, vulnerability connotes the manifestation of the inherent states of the system (e.g., physical, technical, organizational, cultural) that can be exploited by an adversary to cause harm or damage. However, in general terms, vulnerability connotes the manifestation of the state of the system so that it could fail or be harmed by an accident-prone initiating event (Haimes and Horowitz, 2003).

In terms of terrorism, the word threat connotes a potential intent to cause harm or damage to the system by adversely changing its states. However, if we generalize to include precursors to accidents, threat connotes an initiating event that can cause a failure to a system or induce harm to it. A threat to a vulnerable system results in risk (Haimes and Horowitz, 2003).

Lowrance (1976) defines risk as a measure of the probability and severity of adverse effects.
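The first triplet and Lowrance's definition can be made concrete with a small data structure. In the Kaplan and Garrick formulation, risk is a set of triplets (scenario, likelihood, consequence). The minimal Python sketch below uses hypothetical scenarios and numbers that do not come from either case study; it summarizes such a set with two measures, the expected consequence and the probability of exceeding a chosen severity threshold. The class and function names are illustrative, not part of any published method.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Scenario:
    """One risk triplet: what can go wrong, how likely it is, and how bad it would be."""
    description: str
    probability: float  # likelihood; scenarios assumed mutually exclusive and exhaustive
    consequence: float  # severity, in whatever damage units the analyst chooses


def expected_consequence(scenarios):
    """Probability-weighted average severity over all scenarios."""
    return sum(s.probability * s.consequence for s in scenarios)


def exceedance_probability(scenarios, threshold):
    """Probability that the consequence is strictly greater than `threshold`."""
    return sum(s.probability for s in scenarios if s.consequence > threshold)


# Hypothetical scenario set, for illustration only.
risk_set = [
    Scenario("no adverse event", 0.90, 0.0),
    Scenario("minor disruption", 0.08, 10.0),
    Scenario("major disruption", 0.02, 100.0),
]

print(round(expected_consequence(risk_set), 6))          # 2.8
print(round(exceedance_probability(risk_set, 50.0), 6))  # 0.02
```

The two numbers answer different questions: the expected value blends frequent small losses with rare large ones, while the exceedance probability isolates the severe tail.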

If we look for the common denominator among all “accidents,” including natural hazards, terrorist attacks, and human, organizational, hardware, and software failures, we find that all of them can be anticipated. However, the likelihood that they will be expected and/or realized depends on their reality and/or on our perception of (1) the state of the system being protected against accidents and (2) the associated initiating event(s).

Based on these definitions, the vulnerability of a system is directly dependent on and correlated to the levels of its state variables. Consider, for example, an electric-power infrastructure. Some of its state variables are reliability, robustness, resiliency, and redundancy (either of the entire system or of subsystems, such as generation or transmission). The task of the officials in charge is to maintain the state variables at their optimal operational level. The task of a terrorist network is to change the state variables drastically and render the system inoperable. These concepts will be discussed further in terms of the risk assessment and risk management process in the case studies that follow.

As another example, the United States is vulnerable to terrorist attacks because it has an open and free society, long borders, accessible modes of communication and transportation, and a democratic system of government. Today, these long-established state variables render it vulnerable to terrorism. A similar statement can be made about the implicit and explicit dependencies of reliability, quality, and risk on the corresponding states of the system. Therefore, a systemic, comprehensive risk assessment methodology is necessary to identify all of the system vulnerabilities; the risks associated with given threats of terrorism or accidents must also be managed through a comprehensive risk-management methodology.

The next section includes synopses of two case studies in which quantitative risk assessment and management processes were used successfully (Haimes, 2004): (1) planning, intelligence gathering, and knowledge management for military operations; and (2) the risk of a cyberattack on a water utility.

CASE STUDIES

The following synopses of two case studies demonstrate the efficacy and centrality of state variables in risk modeling, risk assessment, and risk management. In both cases HHM is used to answer the first question in the risk assessment process (What can go wrong?) and the first question in the risk management process (What can be done, and what options are available?) (Haimes, 1981, 1998). The first case study includes a brief description of HHM.

Risk Assessment and Risk Management to Support Operations Other than War

The requirements for the first line of defense against accidents in military operations include good planning, intelligence, training, and adequate resources in personnel and materiel. The following case study describes a study performed for the U.S. Army for operations other than war (OOTW) (Dombroski et al., 2002; CRMES, 1999). OOTW decision makers include people at all levels of the military, from strategic personnel in the Pentagon to tactical officers in the field. Recent experiences of U.S. forces involved in OOTW in Bosnia, Kosovo, Rwanda, Haiti, and now Afghanistan, Iraq, and elsewhere have dramatized the need to support military planning with information about each country that can be clearly understood. The geopolitical situation and the subject country must be carefully analyzed to support critical initial decisions, such as the nature and extent of operations and the timely marshaling of appropriate resources. Relevant details must be screened and carefully considered to minimize regrettable decisions, as well as wasted resources. Relevant details include: information on existing roads, railways, and shipping lanes; the reliability and security of electric power; communications networks; water supplies and sanitation; disease and health care; languages and cultures; police and military forces; and many others. Interagency and multinational cooperation are essential to OOTW to ensure that ad hoc decision making is done with careful attention to cultural, political, and societal concerns. The study developed an effective, holistic approach to decision support for OOTW that encompasses the diverse concerns affecting decision making in uncertain environments.

Hierarchical Holographic Modeling for System Characterization

There are numerous ways to characterize a country as a potential theater for OOTW. The characterization of state variables, such as technical infrastructure, political climate, society, and environment, is essential for both risk assessment and risk management. Indeed, before U.S. forces plan a deployment into a country for OOTW, the military needs to know practically everything important about that country. By identifying the critical state variables, as well as the state variables of U.S. forces and their allies, the military can identify (1) its own vulnerabilities (accident precursors); (2) threats from unfriendly elements; and (3) risk management options to counter these threats. HHM was the basis for the risk assessment and risk management process in the methodology developed for the Army’s National Ground Intelligence Center (NGIC), with Kosovo as a test bed.

HHM decomposes a large-scale system into a hierarchy of subsystems, thus identifying nearly all system risks and uncertainties. Holography, a well-known photographic technique, shows a multidimensional, holistic view of a subject. The holographic aspect of HHM strives to represent the many levels of the decision-making process and the inherent hierarchies in organizational, institutional, and infrastructural systems.

HHM provides a complete, documented decomposition of large-scale systems (Kaplan et al., 2001). High-level criteria in an HHM are called head-topics; lower level criteria relating to particular head-topics are called subtopics. Criteria, sources of risk, and subtopics all refer to components of the HHM. For the risk assessment process, the developers of the HHM considered the OOTW from a risk perspective, asking what could go wrong. By encapsulating nearly all possible risk scenarios, an HHM can also be perceived as the conceptualization of a “success” scenario. For the risk management process, the developers of the HHM considered the OOTW from a management perspective, asking what could be done, and what options were available.

Four HHMs were developed for OOTW: (1) the Country HHM, which identified a broad range of criteria for characterizing host countries and the demands they place on coalition forces; (2) the United States (US) HHM, which characterized what the United States had to offer countries in need; (3) the Alliance HHM, which characterized forces and organizations other than U.S. forces and organizations, such as multinational alliances and nongovernmental agencies; and (4) the Objectives HHM, which recognized the multiple, varying objectives of potential users of the methodology and coordinated the other three HHMs.

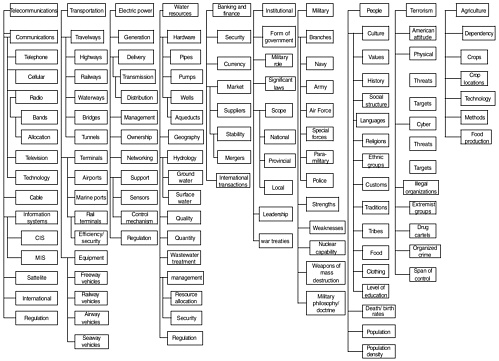

Figure 1 presents a Country HHM (head-topics and subtopics) based on an analysis of OOTW doctrine, case studies of previous operations, and brainstorming. Analytical case-study models from Operation Provide Comfort, Operation Restore Hope, Operation Joint Endeavor, and Operation Allied Force were analyzed to identify important criteria (C520, 1995). For example, decision makers for a typical OOTW need to know about the culture of the people, the economic and political stability of the nation, and the strength and disposition of the country’s military force. For a humanitarian relief operation, they must know about the existing health-care system, as well as about the food, water, and other resources the nation can provide. For a peacekeeping mission, the focus is more on externalities and terrorists who could potentially destabilize the existing situation. In many ways, the Country HHM constitutes a “demand” model; it represents a country’s needs in terms of personnel and materiel.

The US HHM addresses the supply aspect of an OOTW. The United States has a broad range of options available for addressing crisis situations, including diplomatic negotiations, economic assistance, and/or the deployment of troops and equipment. The US HHM is divided into two major areas: (1) Defense Decision-Making Practice; and (2) Defense Infrastructure. The US HHM also provides supply-side information, helping decision makers marshal supplies for an OOTW. The Defense Infrastructure subcriterion documents equipment, assets, and options the United States can offer to an OOTW. Details of the US HHM can be found in Dombroski et al. (2002).

The Alliance HHM is based on the recognition that the international community is more involved in maintaining international security now than it has been at any other time in world history (FM 100-8, 1997). The Alliance HHM documents countries, multinational alliances, and permanent and temporary relief organizations involved in an OOTW, including nongovernmental organizations (NGOs), private volunteer organizations (PVOs), and the United Nations. These organizations stabilize disengagement and ensure the economic, political, and social stability of a region after U.S. military forces leave (CALL, 1993).

Together, the Country HHM, US HHM, and Alliance HHM contain a vast amount of information pertaining to an OOTW, educating decision makers about the situation and helping planners and executors attain their mission goals. However, not all of the information is important to all users at all times. A particular user may be concerned only with a subset of OOTW demands. For example, users of the system who assist in coordinating supply and demand may be interested in a specific subset of total characterizations. The Coordination HHM identifies critical user-objective spaces with predictable information needs, including staff function, policy horizon, outcome valuation, and three decision-making levels: strategic, operational, and tactical. Users at each decision-making level want answers to specific questions pertaining to Country HHM subtopics that facilitate the identification of critical information for each decision maker.

The strategic level includes national-strategic and theater-strategic decision making. Strategic decision makers consider whether or not to enter into an operation. Operational decision makers define the operation’s objectives and plan missions to maintain order and prevent escalation of the situation. Tactical decision makers plan and execute OOTW missions to support higher objectives. Details of the Coordination HHM can be found in Dombroski et al. (2002).

Planners must focus limited resources on the most likely sources of risk, but because of the large number of HHM risk scenarios, they may find it difficult to determine which information is important. Risk filtering, ranking, and management (RFRM) integrates quantitative and qualitative approaches to identify critical scenarios (Haimes et al., 2002). Using four filtering phases, decision makers can sift out the most critical 5 to 15 subtopics from the 265 subtopics in the HHM. Unfortunately, it is beyond the scope of this presentation to describe the RFRM process further.

Risk Management through Comparison Charts

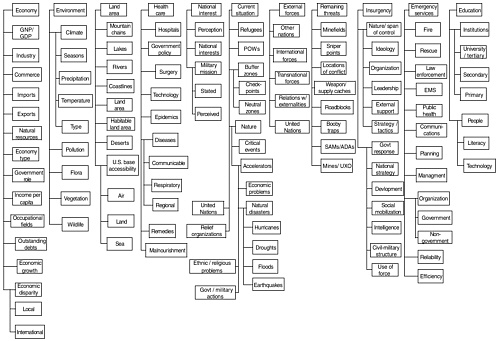

The OOTW undertaken by the United States in the Balkans can illustrate the use of comparison charts, which helped determine the medical supplies needed for the incoming refugees. Officers analyzed health care and disease data for Serbia to get an understanding of the existing conditions in the province of Kosovo. To help staff officers who were not familiar with conditions in Serbia, the data were compared to data from the United States, China, and Croatia.

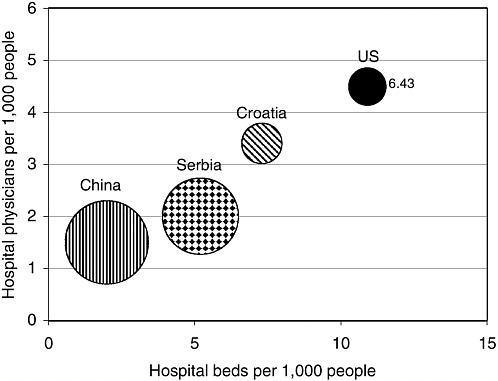

Figure 2 is a bubble chart that displays three health-care metrics: one on the x axis, one on the y axis, and one as the area of each bubble. The figure suggests that Serbia’s health-care system is in a state of disrepair: Serbia has fewer hospital physicians and beds per 1,000 people and higher infant mortality than Croatia (the United States is used as a reference base). Even though Serbia’s health-care system is not as poor as China’s, staff officers inferred that refugees might be in poor health and that a large variety of medical supplies might therefore be required to conduct the operation effectively.

FIGURE 2 Bubble chart comparing health-care metrics for Serbia, Croatia, China, and the United States. Source: CRMES, 1999.

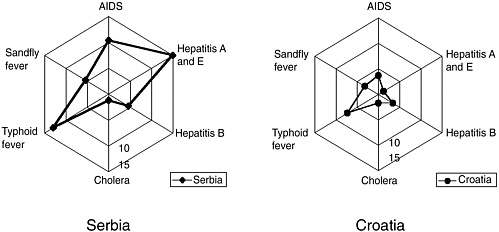

To determine which diseases they might encounter, the staff officers studied Figure 3, which shows the estimated prevalence of certain diseases in Serbia and Croatia. The metrics on the radials of Figure 3 indicate the percentage of the population infected. The comparison shows that the prevalence of AIDS, hepatitis A and E, and typhoid fever is higher in Serbia than in Croatia.

FIGURE 3 Radial chart showing the prevalence of diseases in Serbia and Croatia (numbers show the percentage of the population infected). Source: CRMES, 1999.

Risk of a Cyberattack on a Water Utility’s Supervisory Control and Data Acquisition System

Water systems are increasingly monitored, controlled, and operated remotely through supervisory control and data acquisition (SCADA) systems. The vulnerability of the telecommunications system opens the SCADA system to intrusion by terrorist networks or other threats. This case study addresses the risks of willful threats to water utility SCADA systems (Ezell, 1998; Ezell et al., 2001; Haimes, 2004). As a surrogate for a terrorist network, the case study focuses on a disgruntled employee’s attempt to reduce or eliminate the water flow in a city we’ll call XYZ. The data are based on actual survey results showing that the primary concern of U.S. water-utility managers in XYZ was disgruntled employees.

Identifying Risks through System Decomposition

HHM yielded the following major head-topics and subtopics:

Head-Topic A: Function. The normal functioning of the water distribution system, which is vital to the community, is at major risk from cyberintrusion. This head-topic may be partitioned into three subtopics: A1 gathering, A2 transmitting, and A3 distributing.

Head-Topic B: Hardware. SCADA hardware is vulnerable to tampering in a variety of configurations. There are nine subtopics: B1 master terminal unit (MTU), B2 remote terminal unit (RTU), B3 modems, B4 telephone lines, B5 radio, B6 integrated services digital network (ISDN), B7 satellite, B8 alarms, and B9 sensors. Depending on the tools and skills of an attacker, hardware elements could have a significant impact on water flow for a community.

Head-Topic C: Software. This head-topic, perhaps the most complex, also represents the most dynamic aspects of changes in water utilities. Software has many components that are sources of risk—among them are C1 controlling and C2 communication.

Head-Topic D: Human. There are two major subtopics: D1 employees and D2 attackers. This head-topic decomposes the people who are capable of tampering with a system.

Head-Topic E: Tools. A distinction is made between the various types of tools an intruder may use to tamper with a system. There are six subtopics: E1 user command, E2 script or program, E3 autonomous agent, E4 tool kit, E5 data trap, and E6 high-energy radio frequency (HERF) weapon (Howard, 1997).

Head-Topic F: Access. There are many paths (or vulnerabilities) into a system that an intruder might exploit. There are five subtopics: F1 implementation vulnerability, F2 design vulnerability, F3 configuration vulnerability, F4 unauthorized use, and F5 unauthorized access. Even though a system may be designed to be safe, its installation and use may lead to multiple sources of risk.

Head-Topic G: Geography. Physical proximity is not required for cyberintrusion, because tampering with a SCADA system can originate anywhere in the world. International borders are virtually nonexistent because of the Internet. Four subtopics are identified: G1 international, G2 national, G3 local, and G4 internal.

Head-Topic H: Time. The temporal category shows how present or future decisions affect the system. For example, a decision to replace a legacy SCADA system in 10 years may have to be made today. Therefore, this head-topic addresses the life cycle of the system. There are four subtopics: H1 long term: ≥ 10 years, H2 short term: ≥ 5 years and < 10 years, H3 near term: < 5 years, and H4 today.
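Because the decomposition is a two-level tree, it can be recorded directly as data, which makes it easy to enumerate candidate sources of risk or to cross two perspectives when brainstorming scenarios. The Python sketch below is illustrative only: it transcribes just the subtopics named in the list above (Software, for instance, is said to have more components than the two listed), so its count of 35 is smaller than the full set of sources of risk the HHM identifies for XYZ. The helper functions are hypothetical conveniences, not part of the HHM methodology.

```python
# HHM for the water-utility SCADA example: head-topic -> named subtopics.
# Only the subtopics listed explicitly in the text are transcribed here.
SCADA_HHM = {
    "A Function": ["A1 gathering", "A2 transmitting", "A3 distributing"],
    "B Hardware": [
        "B1 master terminal unit (MTU)", "B2 remote terminal unit (RTU)",
        "B3 modems", "B4 telephone lines", "B5 radio",
        "B6 integrated services digital network (ISDN)", "B7 satellite",
        "B8 alarms", "B9 sensors",
    ],
    "C Software": ["C1 controlling", "C2 communication"],
    "D Human": ["D1 employees", "D2 attackers"],
    "E Tools": [
        "E1 user command", "E2 script or program", "E3 autonomous agent",
        "E4 tool kit", "E5 data trap",
        "E6 high-energy radio frequency (HERF) weapon",
    ],
    "F Access": [
        "F1 implementation vulnerability", "F2 design vulnerability",
        "F3 configuration vulnerability", "F4 unauthorized use",
        "F5 unauthorized access",
    ],
    "G Geography": ["G1 international", "G2 national", "G3 local", "G4 internal"],
    "H Time": [
        "H1 long term (>= 10 years)", "H2 short term (>= 5 and < 10 years)",
        "H3 near term (< 5 years)", "H4 today",
    ],
}


def risk_sources(hhm):
    """Flatten the HHM into (head-topic, subtopic) pairs, one per candidate source of risk."""
    return [(head, sub) for head, subs in hhm.items() for sub in subs]


def cross_perspective(hhm, head_a, head_b):
    """Pair every subtopic of one head-topic with every subtopic of another,
    e.g., who might intrude (Human) x through which path (Access)."""
    return [(a, b) for a in hhm[head_a] for b in hhm[head_b]]


sources = risk_sources(SCADA_HHM)
print(len(sources))  # 35
for pair in cross_perspective(SCADA_HHM, "D Human", "F Access")[:3]:
    print(pair)
```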

Measuring Risk Using the Partitioned, Multiobjective Risk Method

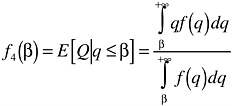

The partitioned, multiobjective risk method (PMRM) was used to quantify and reduce the risks of extreme events (i.e., events with low probability and high consequences) (Asbeck and Haimes, 1984; Haimes, 1998). PMRM generates a conditional expectation, given that the random variable is limited to either a range of probabilities or a range of consequences. Because the cumulative distribution function (CDF) is monotonic, there is a one-to-one relationship between the partitioned probability axis and the corresponding consequences. In this case study, we are interested in the common (unconditional) expected value of risk, denoted by f5, and in the conditional expected value of risk of extreme events (low probability/high consequences), denoted by f4. The function f1 denotes the cost associated with risk management.

Let the random variable Q denote water flow and q denote a realization of Q. Let f(q) denote the probability density function (PDF) of Q and β the partitioning point of water flow. Then, the conditional expected value of risk of water flow below β is:

$$
f_4 = E[Q \mid Q \le \beta] = \frac{\int_{0}^{\beta} q \, f(q) \, dq}{\int_{0}^{\beta} f(q) \, dq} \tag{1}
$$

Thus, for a particular policy option, there is a measure of risk f4, in addition to the common (unconditional) expected value E[Q]:

$$
f_5 = E[Q] = \int_{0}^{\infty} q \, f(q) \, dq \tag{2}
$$
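The two PMRM measures can be evaluated numerically once a density for water flow is available: f5 is the integral of q f(q) over all flows, and f4 is the same integral restricted to q ≤ β, divided by P(Q ≤ β). A minimal sketch, assuming flow is expressed as a percentage of normal on [0, 100] so that the adverse region is the low end; the triangular density is hypothetical, chosen only because the answers are easy to check by hand (E[Q] = 200/3 and, with β = 10, which is the 1-in-100 point for this density, E[Q | Q ≤ 10] = 20/3). It is not data from the case study.

```python
def integrate(g, a, b, n=100_000):
    """Composite trapezoidal rule for the integral of g over [a, b]."""
    h = (b - a) / n
    total = 0.5 * (g(a) + g(b))
    for i in range(1, n):
        total += g(a + i * h)
    return total * h


def pmrm_low_tail(pdf, lower, upper, beta):
    """Return (f5, f4) for a flow-like variable whose adverse extreme is the LOW end.

    f5: unconditional expected value E[Q] over [lower, upper].
    f4: conditional expected value E[Q | Q <= beta].
    """
    f5 = integrate(lambda q: q * pdf(q), lower, upper)
    tail_mass = integrate(pdf, lower, beta)  # P(Q <= beta)
    f4 = integrate(lambda q: q * pdf(q), lower, beta) / tail_mass
    return f5, f4


# Hypothetical triangular density on [0, 100]: f(q) = 2q / 100^2,
# so most of the probability sits near normal flow.
def triangular_pdf(q):
    return 2.0 * q / 100.0**2


f5, f4 = pmrm_low_tail(triangular_pdf, 0.0, 100.0, beta=10.0)
print(round(f5, 3), round(f4, 3))  # 66.667 6.667
```

The gap between the two numbers is the point of the method: an expected flow of two-thirds of normal says little about how bad the worst 1-in-100 outcomes are.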

City XYZ

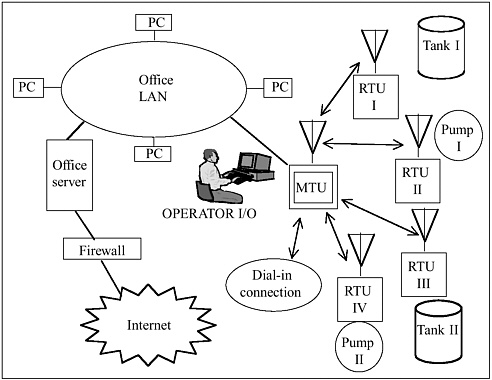

City XYZ, a relatively small city with a population of 10,000, has a water distribution system that accepts processed and treated water “as is” from an adjacent city (Wiese et al., 1997). The water utility of XYZ is primarily responsible for an uninterrupted flow of water to its customers. The SCADA system uses a master-slave relationship, relying on the total control of the SCADA master; the remote terminal units are dumb. There are two tanks and two pumping stations as shown in Figure 4. The first tank serves the majority of customers; the second tank serves fewer customers in a topographically higher area. Tank II is located at a higher topographical point than the highest customer served. The function of the tanks is to provide a buffer and to allow the pumps to be sized lower than peak instantaneous demand.

The tank capacity has two component segments: (1) reserve storage, which allows the tank to operate over a peak week when demand exceeds pumping capacity; and (2) control storage, the portion of the tank between the pump cut-out and cut-in levels. Visually, the control storage is the top portion of the tank. If demand is lower than the pump rate (low-demand periods), the level rises until it reaches the pump cut-out level, at which point the pump stops. When the water level then falls to the cut-in level, the pump starts operating again. If demand is higher than the pump rate, the level continues to fall until it reaches reserve storage. The water level then remains in this area until demand has fallen long enough to allow it to recover. The reserve storage is sized according to demand (e.g., Tank I, with its larger reserve storage, serves more customers).
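The control-storage behavior is a two-threshold (hysteresis) rule: the pump state changes only at the cut-in and cut-out levels. A minimal Python sketch, assuming a single tank, a single pump with a fixed rate, discrete time steps, and hypothetical levels and rates; it does not model reserve-storage sizing, and it represents the altitude valve only by capping the level at capacity.

```python
def simulate_tank(level, demand, pump_rate, cut_in, cut_out, capacity):
    """Step one tank through a demand series under cut-in/cut-out pump control.

    level:     starting water level (same volume units as the rates)
    demand:    customer draw for each time step
    pump_rate: inflow per time step while the pump runs
    cut_in:    level at or below which a stopped pump starts
    cut_out:   level at or above which a running pump stops
    capacity:  level at which the inlet is treated as closed (tank full)
    Returns one (level at end of step, pump ran during step) pair per time step.
    """
    pump_on = False
    history = []
    for draw in demand:
        # Hysteresis: the pump changes state only at the two trigger levels,
        # so under low demand the level cycles within the control-storage band.
        if not pump_on and level <= cut_in:
            pump_on = True
        elif pump_on and level >= cut_out:
            pump_on = False
        inflow = pump_rate if pump_on else 0.0
        # Keep the level within the physical limits of the tank.
        level = min(capacity, max(0.0, level + inflow - draw))
        history.append((level, pump_on))
    return history


# Hypothetical low-demand period: draw (5 per step) is below the pump rate (10 per step).
history = simulate_tank(80.0, [5.0] * 8, pump_rate=10.0,
                        cut_in=70.0, cut_out=90.0, capacity=100.0)
print([level for level, _ in history])
# [75.0, 70.0, 75.0, 80.0, 85.0, 90.0, 85.0, 80.0]
```

With a draw larger than the pump rate, the same loop shows the level sinking below the cut-in level into reserve storage while the pump runs continuously.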

FIGURE 4 Interconnectedness of the SCADA system, local area network, and the Internet. Source: adapted from Ezell, 1998.

The SCADA master communicates directly with pumping stations I and II and signals the units when to start and stop; operating levels are kept in the SCADA master. Pumping Station I boosts the flow of water beyond the rate that can be supplied by gravity. The function of Pumping Station II is to pump water during off-peak hours from Tank I to Tank II. The primary operational goal of both stations is to maximize gravity flow and, as necessary, pump during off-peak hours as much as possible. The pumping stations receive a start command from the SCADA master via the master terminal unit (MTU) and attempt to start the duty pump. Each tank has separate inlets (from the source) and outlets (to the customer); water level and flows in and out are measured at each inlet and outlet. An altitude control valve closes the inlet when the tank is full; the “full” position is above the pump cut-out level so there is no danger of closing the valve during pumping. If something goes wrong and the pump does not shut off, the altitude valve closes, and the pump stops delivery on overpressure to prevent the main from bursting.

The SCADA system is always dependent on the communications network of the MTU and the SCADA master, which regularly polls all remote sites. Remote terminal units (RTUs) respond only when polled to ensure that there is no contention on the communications network. The system operates automatically; the decision to start and stop pumps is made by the SCADA master and not by an operator sitting at the terminal. The system has the capability of contacting operations staff after hours via a paging system in the event of an alarm.
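The master-slave arrangement described above can be illustrated with a polling loop in which the master holds the operating levels, queries each RTU in turn, issues start/stop commands, and pages staff on an alarm. This is a hypothetical Python sketch only: the real SCADA protocols are proprietary, and the class names, the single pair of cut-in/cut-out levels shared by all stations, and the assumption that each station's RTU reports the level of the tank its pumps feed are simplifications introduced here.

```python
from dataclasses import dataclass, field


@dataclass
class RemoteTerminalUnit:
    """A 'dumb' RTU: it reports only when polled and acts only on master commands."""
    name: str
    tank_level: float  # assumed: level of the tank this station's pumps feed
    alarm: bool = False
    pump_on: bool = False

    def respond_to_poll(self):
        return {"name": self.name, "level": self.tank_level, "alarm": self.alarm}

    def apply_command(self, start_pump):
        self.pump_on = start_pump


@dataclass
class ScadaMaster:
    """Hypothetical master that holds the operating levels and polls RTUs one at a time."""
    cut_in: float
    cut_out: float
    pages: list = field(default_factory=list)

    def poll_cycle(self, rtus):
        for rtu in rtus:
            # Sequential polling: only the polled RTU transmits, so there is no contention.
            report = rtu.respond_to_poll()
            if report["alarm"]:
                # Stand-in for contacting operations staff via the after-hours pager.
                self.pages.append(f"ALARM at {report['name']}")
            # Start/stop decisions are made here, not by an operator or by the RTU.
            if report["level"] <= self.cut_in:
                rtu.apply_command(start_pump=True)
            elif report["level"] >= self.cut_out:
                rtu.apply_command(start_pump=False)


rtus = [
    RemoteTerminalUnit("Pumping Station I", tank_level=65.0),
    RemoteTerminalUnit("Pumping Station II", tank_level=95.0, alarm=True, pump_on=True),
]
master = ScadaMaster(cut_in=70.0, cut_out=90.0)
master.poll_cycle(rtus)
print([rtu.pump_on for rtu in rtus])  # [True, False]
print(master.pages)                   # ['ALARM at Pumping Station II']
```

Because every decision flows through the master, whoever controls the master (or the channel used to reach it) controls the pumps, which is why the access paths discussed next matter.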

In the example, the staff has dial-in access, so, if they are contacted, they can dial in from home and diagnose the extent of the problem. The dial-in system has a dedicated PC connected to the Internet and the office’s local area network (LAN). A packet-filter firewall protects the LAN and the SCADA system. The SCADA master commands and controls the entire system, and the communications protocols for SCADA communications are proprietary. The LAN, the connection to the Internet, and the dial-in connection all use Transmission Control Protocol/Internet Protocol (TCP/IP); instructions to the SCADA system are also encapsulated in TCP/IP. Once instructions are received over the LAN, the SCADA master de-encapsulates them from TCP/IP, leaving the proprietary terminal emulation protocols for the SCADA system. The central facility is organized into different access levels for the system, and each operator or technician is given a level of access based on need.

Identifying Risks through System Decomposition

The HHM head-topics and subtopics listed earlier identify 60 sources of risk to the SCADA system of XYZ. The access points for the system are the dial-in connection points and the firewall connecting the utility to the Internet. In this example, the intruder uses the dial-in connection to gain access and control of the system.

The intruder’s most likely course of action is to use a password to access the system and its control devices (Ezell, 1998). Because analog fail-safe devices inherently limit the physical damage to equipment that can be caused through dial-in access, managers conclude that the intruder’s probable goal is to manipulate the system to adversely affect the flow of water to the city. For example, if water hammers are created, they might burst mains and damage customers’ pipes. Or the intruder might shut off valves and pumps to reduce water flow. After discussing the potential threats, the managers conclude that their greatest concern is that a disgruntled employee might tamper with the SCADA system in these ways.

Risk Management Using the Partitioned, Multiobjective Risk Method

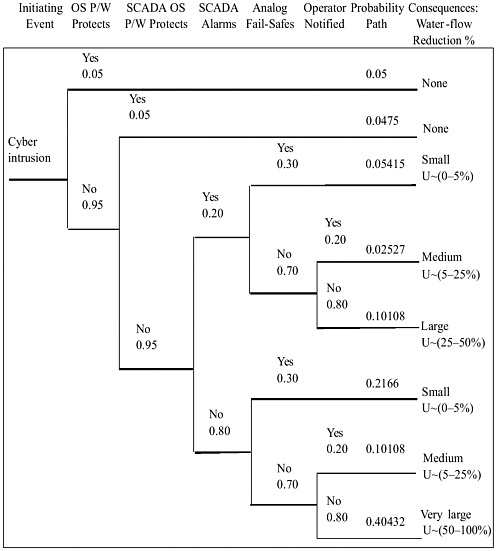

For each alternative, the managers would benefit from knowing both the expected percentage of water-flow reduction and the conditional expected extreme percentage reduction in 1-in-100 outcomes (corresponding to β). The PMRM partitions the framework s1, s2, s3, …, sn on the consequence (damage) axis at β for all alternative risk management policies. For this presentation, we used the assessment of Expert A for the event tree in Figure 5 (A. Nelson, personal communication, 1998). The figure represents the state of performance of the current system based on an expert’s assessment of an intruder’s ability to transition through the mitigating events of the event tree. The initiating event, cyberintrusion, engenders each subsequent event, culminating with the consequences at the end of each path through the event tree. Assuming a uniform distribution of damage for each path through the tree, a composite, or mixed, probability density is generated. The uniform distribution is appropriate because the managers are indifferent beyond the upper and lower bounds.
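The construction just described (a uniform damage density on each event-tree path, mixed by path probability, then partitioned where the exceedance probability is 1-in-100) can be sketched in a few lines of Python. Here damage is the percentage of water-flow reduction, so the extreme region lies above β. The path probabilities and damage ranges are hypothetical placeholders, not Expert A's assessment, and the bisection search for β is simply one convenient way to invert the exceedance curve.

```python
def pmrm_mixture(paths, tail_prob=0.01):
    """PMRM-style summary of damage modeled as a mixture of uniform densities.

    paths: list of (probability, low, high), one per event-tree path; each path
           contributes a uniform damage density on [low, high] (low < high).
    Returns (f5, beta, f4): the unconditional expected damage, the damage level
    whose exceedance probability equals tail_prob, and E[damage | damage > beta].
    """
    if any(hi <= lo for _, lo, hi in paths):
        raise ValueError("each path needs a damage range with low < high")
    total = sum(p for p, _, _ in paths)
    paths = [(p / total, lo, hi) for p, lo, hi in paths]  # normalize path probabilities

    # Unconditional expected value: each uniform path contributes its midpoint.
    f5 = sum(p * (lo + hi) / 2 for p, lo, hi in paths)

    def exceedance(x):
        """P(damage > x) under the mixed density."""
        prob = 0.0
        for p, lo, hi in paths:
            if x < lo:
                prob += p
            elif x < hi:
                prob += p * (hi - x) / (hi - lo)
        return prob

    # Bisection for beta: the exceedance curve is non-increasing in x.
    a = min(lo for _, lo, _ in paths)
    b = max(hi for _, _, hi in paths)
    for _ in range(200):
        mid = (a + b) / 2
        if exceedance(mid) > tail_prob:
            a = mid
        else:
            b = mid
    beta = b

    # Conditional expectation over the worst-case region (damage > beta).
    partial = 0.0
    for p, lo, hi in paths:
        start = max(lo, beta)
        if start < hi:
            partial += p * (hi**2 - start**2) / (2 * (hi - lo))
    f4 = partial / exceedance(beta)
    return f5, beta, f4


# Hypothetical event-tree paths: (probability, min %, max % water-flow reduction).
paths = [(0.90, 0.0, 10.0), (0.09, 10.0, 50.0), (0.01, 50.0, 100.0)]
f5, beta, f4 = pmrm_mixture(paths)
print(round(f5, 2), round(beta, 2), round(f4, 2))  # 7.95 50.0 75.0
```

With these made-up paths, the unconditional expected reduction is under 8 percent, yet the conditional expected reduction in the worst 1-in-100 region is 75 percent. Repeating the calculation for each alternative's event tree yields the (f5, f4) pairs that a trade-off plot compares.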

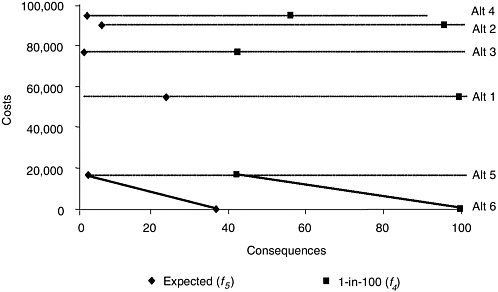

For the current system, partitioning the probability axis at the worst-case exceedance probability of 1-in-100 corresponds to a damage level of β = 98.7 percent water-flow reduction; the conditional expected value of water-flow reduction in this worst-case region is 99.5 percent. Using Equations 1 and 2, five expected values of risk, E(x), and five conditional expected values of risk, f4(β), were generated and plotted (Figure 6).
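Equations 1 and 2 are not reproduced in this section. The sketch below assumes they are the standard PMRM measures: the unconditional expected value, and the conditional expected value of damage beyond the partition point β, where β is the damage level whose exceedance probability equals the chosen partition (0.01 for a 1-in-100 outcome). It applies them to a mixture of uniform densities, one per event-tree path. The function names and all data are hypothetical, and bisection is simply one convenient way to locate β.

```python
"""Sketch (hypothetical names and data): PMRM-style risk measures for a
mixture of uniform damage densities given as (p, lower, upper) tuples."""


def exceedance(beta, paths):
    """Return P(X > beta) for the mixture."""
    total = 0.0
    for p, lo, hi in paths:
        if beta < lo:
            total += p
        elif beta < hi:
            total += p * (hi - beta) / (hi - lo)
    return total


def partition_point(alpha, paths, iters=200):
    """Find the damage level beta with P(X > beta) = alpha by bisection."""
    lo = min(a for _, a, _ in paths)
    hi = max(b for _, _, b in paths)
    for _ in range(iters):
        mid = 0.5 * (lo + hi)
        if exceedance(mid, paths) > alpha:
            lo = mid  # too much probability above mid: move the partition up
        else:
            hi = mid
    return 0.5 * (lo + hi)


def expected_value(paths):
    """Unconditional expected damage, E(x)."""
    return sum(p * (a + b) / 2.0 for p, a, b in paths)


def conditional_expected_value(beta, paths):
    """Conditional expected damage beyond beta, E[X | X > beta] (f4)."""
    partial = 0.0  # integral of x times the density over (beta, upper end)
    for p, a, b in paths:
        start = max(a, beta)
        if start < b:
            partial += p * (b * b - start * start) / (2.0 * (b - a))
    return partial / exceedance(beta, paths)
```

For example, with two hypothetical paths `[(0.9, 0.0, 10.0), (0.1, 50.0, 100.0)]`, `partition_point(0.01, paths)` is approximately 95 and the conditional expected value there is approximately 97.5, whereas `expected_value(paths)` is 12.0. A low expected value can therefore coexist with a much higher conditional expected value in the worst-case region.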

Assessing Risk Using Multiobjective Trade-off Analysis

Figure 6 plots the cost of each alternative on the vertical axis against its consequences on the horizontal axis. In the unconditional expected value of risk region, Alternatives 5 and 6 are efficient; for example, Alternative 5 outperforms Alternative 3 and costs $56,600 less. In the conditional expected value of risk (worst-case) region, only Alternatives 5 and 6 are efficient (Pareto-optimal policies). For the 1-in-100 worst case, Alternative 5 reduces the conditional expected value of water-flow reduction by 57 percent. Note that although Alternative 5 yields a relatively low expected value of risk, its conditional expected value of risk at the partition point β is markedly higher (more than 40 percent). The managers then supplement the results of this analysis with their own judgment to arrive at an acceptable risk management policy.
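The efficiency judgments read from Figure 6 rest on Pareto dominance: an alternative is efficient if no other alternative is at least as good on both cost and risk and strictly better on one. A minimal sketch of that test follows; it would be applied once to the (cost, expected value) pairs and once to the (cost, conditional expected value) pairs. The function name and the sample data are hypothetical and do not reproduce the values in Figure 6.

```python
"""Sketch (hypothetical name and data): identify Pareto-optimal alternatives
when both cost and a risk measure are to be minimized."""


def pareto_efficient(alternatives):
    """Return the names of alternatives not dominated by any other.

    alternatives maps a name to a (cost, risk) pair. Alternative B dominates
    A if B is no worse on both objectives and strictly better on at least one.
    """
    efficient = []
    for name, (cost, risk) in alternatives.items():
        dominated = any(
            c <= cost and r <= risk and (c < cost or r < risk)
            for other, (c, r) in alternatives.items()
            if other != name
        )
        if not dominated:
            efficient.append(name)
    return efficient
```

For example, `pareto_efficient({"A1": (100, 50), "A2": (80, 60), "A3": (120, 55), "A4": (90, 40)})` returns `["A2", "A4"]`: A4 dominates A1 and A3, while A2 remains efficient because it is cheaper than A4, albeit riskier. Choosing between the efficient alternatives is left to the decision makers’ judgment, as in the case study.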

Conclusions

This case study illustrates how risk assessment and risk management can help decision makers determine preferred responses to cyberintrusions. The approach was applied to the water utility of a small city using information elicited from an expert. The approach has three limitations: (1) it relies on expert opinion to estimate probabilities for the event tree; (2) the model is not dynamic, so it does not fully represent changes in the system during a cyberattack; and (3) the event tree produces a probability mass function that must be converted to a density function before the exceedance probability can be partitioned. The underlying assumption that damages are uniformly distributed requires further exploration.

FIGURE 6 Trade-off costs for f5 vs. f4.

REFERENCES

Asbeck, E., and Y.Y. Haimes. 1984. The partitioned multi-objective risk method. Large Scale Systems 6(1): 13–38.

Blauberg, I.V., V.N. Sadovsky, and E.G. Yudin. 1977. Systems Theory: Philosophical and Methodological Problems. New York: Progress Publishers.

C520. 1995. Operations Other Than War. January 2, 1995. Fort Leavenworth, Kansas: U.S. Army Command and General Staff College.

CALL (Center for Army Lessons Learned). 1993. Operations Other Than War. Volume IV: Peace Operations. Newsletter No. 93-8. Fort Leavenworth, Kansas: U.S. Army Combined Arms Command.

CRMES (Center for Risk Management of Engineering Systems). 1999. Application of Hierarchical Holographic Modeling (HHM): Characterization of Support for Operations Other Than War. Charlottesville, Va.: Center for Risk Management of Engineering Systems, University of Virginia.

Dombroski, M., Y.Y. Haimes, J.H. Lambert, K. Schlussel, and M. Sulkoski. 2002. Risk-based methodology for support of operations other than war. Military Operations Research 7(1): 19–38.

Ezell, B. 1998. Risks of Cyber Attack to Supervisory Control and Data Acquisition for Water Supply. Master’s thesis, Department of Systems Engineering, University of Virginia, Charlottesville.

Ezell, B., Y.Y. Haimes, and J.H. Lambert. 2001. Risk of cyber attack to water utility supervisory control and data acquisition systems. Military Operations Research 6(2): 23–33.

FM (Field Manual) 100-8. 1997. The Army in Multinational Operations. Washington, D.C.: Headquarters, Department of the Army.

Gordon, W.J.J. 1968. Synectics: The Development of Creative Capacity. New York: Collier Books.

Haimes, Y.Y. 1981. Hierarchical holographic modeling. IEEE Transactions on Systems, Man, and Cybernetics 11(9): 606–617.

Haimes, Y.Y. 1991. Total risk management. Risk Analysis 11(2): 169–171.

Haimes, Y.Y. 1998. Risk Modeling, Assessment, and Management. New York: John Wiley and Sons.

Haimes, Y.Y. 2004. Risk Modeling, Assessment, and Management, 2nd ed. New York: John Wiley and Sons.

Haimes, Y.Y., S. Kaplan, and J.H. Lambert. 2002. Risk filtering, ranking, and management framework using hierarchical holographic modeling. Risk Analysis 22(2): 383–397.

Haimes, Y.Y., and B. Horowitz. 2003. Adaptive two-player hierarchical holographic modeling game for counterterrorism intelligence analysis. Report 2003-12. Charlottesville, Va.: Center for Risk Management of Engineering Systems, University of Virginia.

Hall, A.D., III. 1989. Metasystems Methodology: A New Synthesis and Unification. New York: Pergamon Press.

Horowitz, B., and Y.Y. Haimes. 2003. Risk-based methodology for scenario tracking for terrorism: a possible new approach for intelligence collection and analysis. Systems Engineering 6(3): 152–169.

Howard, J. 1997. An Analysis of Security Incidents on the Internet. Ph.D. dissertation, Carnegie Mellon University. Available online at http://www.cert.org/research/JHThesis/.

Kaplan, S., and B.J. Garrick. 1981. On the quantitative definition of risk. Risk Analysis 1(1): 11–27.

Kaplan, S., Y.Y. Haimes, and B.J. Garrick. 2001. Fitting hierarchical holographic modeling into the theory of scenario structuring and a resulting refinement to the quantitative definition of risk. Risk Analysis 21(5): 807–819.

Lowrance, W. 1976. Of Acceptable Risk. Los Altos, Calif.: William Kaufmann.

Perrow, C. 1999. Normal Accidents. Princeton, N.J.: Princeton University Press.

Sage, A.P. 1977. Methodology for Large-Scale Systems. New York: McGraw-Hill.

Sage, A.P. 1992. Systems Engineering. New York: John Wiley and Sons.

Singh, M.G., ed. 1987. Systems and Control Encyclopedia: Theory, Technology, Applications. New York: Pergamon Press.

Warfield, J.N. 1976. Social Systems: Planning and Complexity. New York: John Wiley and Sons.

Wiese, I., C. Hillebrand, and B. Ezell. 1997. Scenarios One and Two: Source to No 1 PS to No 1 Tank to No 2 PS to No 2 Tank (High-Level) for a Master-Slave SCADA System. SCADA Consultants, SCADA Mail List. Available online at members.iinet.net.au/nianw/mailst.html.

Zeigler, B.P. 1984. Multifaceted Modeling and Discrete Simulation. New York: Academic Press.