On Signals, Response, and Risk Mitigation

A Probabilistic Approach to the Detection and Analysis of Precursors

ELISABETH PATÉ-CORNELL

Department of Management Science and Engineering

Stanford University

Precursors provide invaluable signals that action has to be taken, sometimes quickly, to prevent an accident. A probabilistic risk analysis coupled with a measure of the quality of the signal (rates of false positives and false negatives) can be a powerful tool for identifying and interpreting meaningful information, provided that an organization is equipped to do so, appropriate channels have been established for accurate communication, and mechanisms are in place for filtering information and reacting to true alerts (Paté-Cornell, 1986). The objective is to find and fix system weaknesses and reduce the risks of failure as much as possible within resource constraints (Paté-Cornell, 2002a).

In this paper, I present several examples of failures and successes in the monitoring of system performance and an analytical framework for optimizing warning thresholds. I then briefly discuss the application of this reasoning to the modeling of terrorist threats and the characteristics of effective organizational warning systems.

THE SPACE SHUTTLE

In 1988, in the wake of the Challenger disaster, the National Aeronautics and Space Administration (NASA) offered me the opportunity to study, as part of my research, some of the subsystems of the space shuttle. Conversations with the head of the mission-safety office at NASA headquarters and with some of the astronauts revealed that they were concerned about the tiles that protected the shuttle from excessive heat during reentry. Therefore, I decided that the tiles

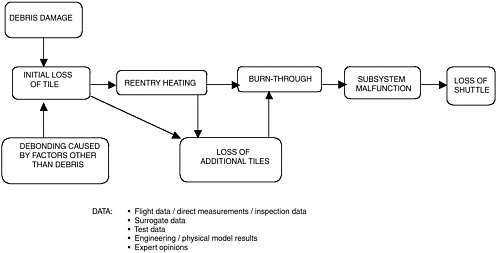

FIGURE 1 Influence diagram for an analysis of the risk of an accident caused by the failure of tiles on the space shuttle. Source: Paté-Cornell and Fischbeck, 1994.

were an appropriate subject for a risk analysis that might reveal some fundamental problems and help avert an accident.

With NASA funding, and with the assistance of one of my graduate students (Paul Fischbeck), I performed such an analysis based on the first 33 flights of the shuttle. The results were published in several places (Paté-Cornell and Fischbeck, 1990, 1993a,b, 1994). I went first to Johnson Space Center (JSC) to get a better understanding of how the tiles worked, what problems might arise, and how the tiles might fail. The study was based on four critical parameters for each tile: (1) the heat load, which is vitally important because, if a tile is lost, the aluminum skin at that location might melt, thus exposing the internal systems to hot gases; (2) aerodynamic forces because, if a tile is lost, the resultant cavity creates a turbulence that could cause the next tile to fail; (3) the density of hits by debris, which might indicate the vulnerability of the tile to this kind of load; and finally (4) the criticality of the subsystems under the skin to determine the consequences of a “burn-through” in various locations of the orbiter’s surface. Based on these four factors, we constructed a risk analysis model (Figure 1) described as an influence diagram.

The pattern of debris hits was intriguing. First, we looked at maps of direct hits the shuttle had experienced during each of its 33 flights (a map of hits had been recorded for each flight). When we superimposed these maps, we found an interesting pattern of damage under the right wing (Paté-Cornell and Fischbeck, 1993a,b). As it turns out, a fuel line runs along the external tank on the right side, and because of the way the foam insulation on the external tank is applied, little pieces of insulation had debonded where the fuel line was attached to the tank.

This observation immediately directed our attention to what was happening with the insulation of the external tank, as well as the system’s performance under regular loads (e.g., vibrations and aerodynamic forces).

The next question we examined was what the consequences would be if the aluminum skin were pierced in different locations of the orbiter’s surface. We found that once one or several tiles were lost, the aluminum skin would be exposed; it would begin to soften at approximately 700°C and would melt shortly above that temperature. In some places, a burn-through would be catastrophic. For example, the loss of the hydraulic lines or the avionics would lead to an accident.

Once it was clear that the tile system was critical, I wanted to understand the factors that affected the capacity of the tiles to withstand the different loads to which they were subjected. I went to Kennedy Space Center (KSC) to talk to the tile technicians and observe their work. In the course of these discussions, I discovered that during maintenance, a few tiles had been found to be very poorly bonded. This could have happened, for example, if the glue had been allowed to dry before pressure was applied, either during the first installation or later during maintenance. Even though poorly bonded tiles could withstand the 10-pounds-per-square-inch pull test, they could be dislodged either by a large debris hit or, perhaps, even by normal loads, such as high levels of vibration. At JSC, I also asked for the potential trajectories of debris that could debond from the insulation of the external tank, both from the top and the center of the tank (Paté-Cornell and Fischbeck, 1990, 1993b). At Mach 1, it seemed that debris debonded from either location would hit the tiles under the wings. These trajectories appear in the original report (Paté-Cornell and Fischbeck, 1990). I must point out, however, that in general I did not look into the reinforced carbon-carbon, including on the edge of the left wing, which seems to have been hit first in the Columbia accident of February 2003.

In December 1990, I delivered a report to NASA pointing out serious problems, both with the foam on the external tank and the weak bonding of some of the tiles (Paté-Cornell and Fischbeck, 1990). One of the findings was that about 15 percent of the tiles were responsible for 85 percent of the probability per flight of a loss of vehicle and crew due to a failure of the tiles. The risk of an accident caused by the tiles was evaluated at that time to be approximately 1/1,000 per flight.

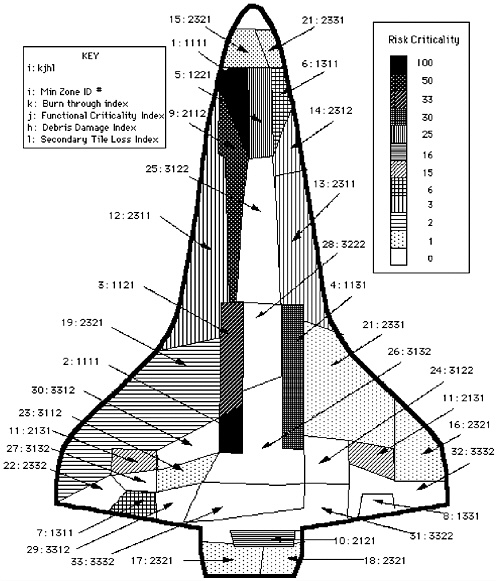

Next, we constructed a map of the underside of the orbiter to show the location of the most risk-critical tiles, so that when NASA (as required by the procedures in place) picked 10 percent of the tiles for detailed inspection before each flight, the technicians would have an idea of where to begin (Figure 2).
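The idea of using a risk-criticality ranking to guide a limited inspection budget can be sketched in a few lines of Python. This is only an illustration, not the model of the original study: the function name `prioritize_inspections`, the tile identifiers, and the risk weights are all hypothetical, chosen so that a small fraction of the tiles carries most of the risk.

```python
def prioritize_inspections(risk_by_tile, fraction):
    """Pick the highest-risk tiles for inspection.

    risk_by_tile: hypothetical mapping of tile id -> relative risk contribution.
    fraction: share of the tiles that can be inspected (e.g., 0.10).
    Returns the selected tile ids and the share of total risk they carry.
    """
    n_inspect = max(1, round(fraction * len(risk_by_tile)))
    # Rank by decreasing risk; break ties by tile id so the result is stable.
    ranked = sorted(risk_by_tile.items(), key=lambda kv: (-kv[1], kv[0]))
    selected = [tile for tile, _ in ranked[:n_inspect]]
    total = sum(risk_by_tile.values())
    covered = sum(risk_by_tile[tile] for tile in selected) / total
    return selected, covered


# Hypothetical weights: 3 of 20 tiles (15 percent) dominate the total risk.
risk_by_tile = {f"T{i}": (28.0 if i < 3 else 1.0) for i in range(20)}

selected, covered = prioritize_inspections(risk_by_tile, 0.15)
print(selected, f"{covered:.0%} of the total risk")
```

With weights of this shape, inspecting the top-ranked tiles first covers a much larger share of the risk than a random sample of the same size would.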

But obviously, most of the risk was the result of the potential for human error, in many cases a direct consequence of management decisions. Therefore, I also looked into some management issues. I learned that tile technicians were paid a bit less than machinists and other technicians, so they tended to move on to other jobs. Therefore, the tile maintenance crews sometimes lost some experienced workers. I also learned that tile technicians at the time were under considerable pressure to finish work on the spacecraft quickly for the next flight. Because of those time constraints, some workers had become creative; for instance, at least one of them had decided to spit into the tile glue to make it cure faster. But the curing of the glue is a catalytic reaction, and adding water to the bond at the time of curing could perhaps cause it to revert to a liquid state sooner than it would otherwise.

FIGURE 2 Map of the risk criticality of the tiles on the space shuttle orbiter as a function of their location. Source: Paté-Cornell and Fischbeck, 1990, 1993a,b, 1994.

The completed study was published in the literature (Paté-Cornell and Fischbeck, 1993a,b). In 1994, we were among the finalists for the Edelman Prize of the Institute for Operations Research and the Management Sciences (INFORMS) for that work (Paté-Cornell and Fischbeck, 1994). We were told by the jury that we were not chosen because we could not “prove” that if NASA implemented our recommendations, it would save the agency some money. That proof, unfortunately, came with the Columbia accident.

Shortly thereafter, the study was revived by Dr. Joseph Fragola, vice president and principal scientist at Science Applications International Corporation (SAIC), who incorporated it into a complete risk analysis of the shuttle orbiter. After that, it seems that the study was essentially forgotten, except for efforts at JSC in recent years to revisit it to try to lower the calculated risks of an accident caused by a tile failure. In any case, NASA lost the report, and, with some embarrassment, asked me for a copy of it on February 2, 2003.

On the morning of the accident (February 1, 2003), I was awakened by a phone call from press services asking for my opinion about what had just happened. I did not immediately conclude that a piece of debris that had struck the left wing at takeoff had been the only cause of the accident as described in one of the scenarios of the 1990 report. But I knew immediately that it could not possibly have helped for the shuttle to have reentered the atmosphere with a gap in the heat shield.

Had NASA implemented the study’s recommendations? In fact, quite a few of the problems noted in the 1990 report about organizational matters had been corrected. For instance, the wages of tile technicians had been raised, eliminating some of the turnover among those workers, and the risk-criticality map had been used at KSC to prioritize tile inspections. But it appears that at JSC, where maintenance procedures are set, management had concluded that the study did not justify modifying current procedures. As a result, unfortunately, several things that should have been done were not. First, no nondestructive method was developed for testing the tile bond. Such tests could have used ultrasound, which would have been expensive but, with sufficient resources, might have been achieved by now. Second, once in orbit, the astronauts were unable to fix gaps in the heat shield. Imagine that you are in orbit looking down to reentry and you realize one or more tiles are missing. To me, this was a real nightmare. At the present time (after the accident), NASA seems to have concluded that the astronauts should have the skills to fix tiles in flight before the space shuttles fly again. That process may be completed as early as December 2003.

NASA might also have looked at the precursors, especially the poorly bonded tiles, and done something about them. Instead, in its risk analyses, NASA redefined the precursors. The 1990 study had concluded that 10 percent of the risk of a shuttle accident could be attributed to the tiles. But apparently, NASA thought this figure was too high because a number of flights had occurred without any tile loss since our study. So they asked a contractor to redo the analysis; the contractor decided to take as a precursor the number of tiles lost in flight (instead of the number of weakly bonded tiles). During the first 68 flights, only one tile had been lost, some of the felt had remained in the cavity, and the lost tile had not caused an accident. Obviously, the new analysis changed the results, and the computed risk went down from 1/1,000 to 1/3,000.

I believe that the contractor focused on the wrong precursor, that is, a phenomenon (the number of tiles that had debonded in flight) for which statistics were insufficient. Indeed, history corrected the new results when two additional tiles were subsequently lost, which brought the risk result back to about 1/1,000. Therefore, I believe that our original study had used a better precursor, because it provided sufficient evidence to show that the capacity of a number of tiles was reduced before they actually debonded or an orbiter was lost.
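The sensitivity of a risk estimate built on a handful of observations can be illustrated with simple arithmetic. The sketch below assumes, purely for illustration, that the contractor's computed risk was proportional to the number of tiles lost in flight; the actual structure of that analysis is not described here, and the helper `rescaled_risk` is hypothetical.

```python
from fractions import Fraction


def rescaled_risk(risk, old_count, new_count):
    """Rescale a risk estimate when the observed precursor count changes.

    ASSUMPTION (not from the contractor's analysis): the computed risk is
    proportional to the number of tiles lost in flight.
    """
    return risk * Fraction(new_count, old_count)


risk_one_loss = Fraction(1, 3000)                       # one tile lost in flight
risk_three_losses = rescaled_risk(risk_one_loss, 1, 3)  # two additional losses
print(risk_three_losses)
```

Under that assumption, two additional events are enough to triple the result, which is the kind of instability one expects when a precursor is observed only once or twice.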

FORD-FIRESTONE

The Ford-Firestone fiasco is another example of precursors being ignored until it was too late. The Firestone tires on Ford Explorer SUVs blew out at a surprisingly high rate, causing accidents that were sometimes deadly. But it took 500 injuries and roughly 150 deaths before Ford reacted. The first signals had been detected by State Farm Insurance in 1998, but nothing was done about the problem in the United States.

Part of the problem was a split warranty system. The car was under warranty by Ford, but the tires were under warranty by Firestone. This created a data-filtering problem that has now been addressed by the TREAD Act passed by Congress in 2000. The TREAD Act mandates the creation of an early warning system; the law requires that even minor problems be reported to the National Highway Traffic Safety Administration (DOT-NHTSA, 2001). Some serious questions, however, have not yet been fully addressed: what data should be collected, how data should be stored, and how the information should be organized and processed. My research team is currently studying these issues.

SUCCESS STORIES

In spite of some visible failures, there have been some real successes in the creation of systems designed to identify and observe precursors. William Runciman, then head of anesthesiology of the medical school in Adelaide, Australia, constructed the Australian Incident Monitoring Study (AIMS) database (Webb et al., 1993). The system requires that every anesthesiologist submit an anonymous report after emerging from the operating room describing problems encountered during the operation. Because anonymity is absolute, the questionnaire is widely used. Indeed, for some, the exercise seems to be almost cathartic. The AIMS reporting system enables the hospital to identify the frequency and probability of various factors that initiate accident sequences that might kill healthy patients under anesthesia (e.g., people undergoing knee surgery). These factors include, for example, incorrect intubation (e.g., in the stomach instead of the lungs) and overdoses of a particular anesthetic.

TABLE 1 Incidence Rates of Initiating Events in Anesthesia Accidents (gathered from the AIMS database)

| Initiating Event | Number of AIMS Reports* | Report Rate | Probability of Initiating Event | Relative Fraction |
| --- | --- | --- | --- | --- |
| Breathing circuit disconnect | 80 | 10% | 7.2 × 10⁻⁴ | 34% |
| Esophageal intubation | 29 | 10% | 2.6 × 10⁻⁴ | 12% |
| Nonventilation | 90 | 10% | 8.1 × 10⁻⁴ | 38% |
| Malignant hyperthermia | n/a | n/a | 1.3 × 10⁻⁵ | 1% |
| Anesthetic overdose | 20 | 10% | 1.8 × 10⁻⁴ | 8% |
| Anaphylactic reaction | 27 | 20% | 1.2 × 10⁻⁴ | 6% |
| Severe hemorrhage | n/a | n/a | 2.5 × 10⁻⁵ | 1% |

*Out of 1,000 total reports in initial AIMS data.

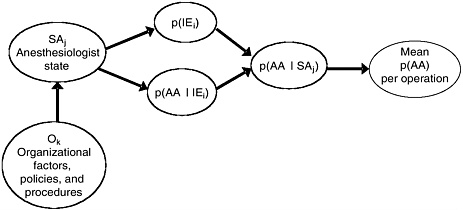

A risk analysis we did with a research group from Stanford based on the AIMS database provided insights into improving safety in anesthesia (Paté-Cornell, 1999; Paté-Cornell et al., 1996). Table 1 shows the kind of data we derived from the AIMS database regarding the probability of an initiating event per operation. Figure 3 shows the structure of the risk analysis model in which these data were used to identify (1) the main accident sequences (and their dynamics), (2) the effect of the “state of the anesthesiologist” (e.g., extreme fatigue) on patient risk, and (3) the effect of management procedures (e.g., restriction on the length of time on duty) on the state of the anesthesiologist.
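The last column of Table 1 can be reproduced directly from the probabilities of the initiating events: each relative fraction is that event's probability divided by the sum over all seven events. The short Python check below uses only the figures printed in the table; the variable names are mine.

```python
# Probability of each initiating event per operation, as listed in Table 1.
probabilities = {
    "Breathing circuit disconnect": 7.2e-4,
    "Esophageal intubation": 2.6e-4,
    "Nonventilation": 8.1e-4,
    "Malignant hyperthermia": 1.3e-5,
    "Anesthetic overdose": 1.8e-4,
    "Anaphylactic reaction": 1.2e-4,
    "Severe hemorrhage": 2.5e-5,
}

# Relative fraction = share of the total initiating-event probability.
total = sum(probabilities.values())
relative_fraction = {event: p / total for event, p in probabilities.items()}

for event, share in relative_fraction.items():
    print(f"{event:30s} {share:.0%}")
```

The computed shares round to the percentages shown in the table, with nonventilation and breathing circuit disconnects together accounting for roughly 70 percent of the total.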

Another success story is airplane maintenance. Some of my students worked, under my supervision, on a project to analyze maintenance data for one of the most popular airplanes in the fleet of a major airline (Sachon and Paté-Cornell, 2000). Their study revealed an intriguing problem. Because of rare errors during maintenance, the flaps and slats of the leading edge of the plane sometimes dropped on one side during flight. There has never been an accident involving these flaps and slats, apparently because the drop never occurred at a critical time. We computed the probability of the drop happening at takeoff, landing, or in bad weather, in other words, the probability of an accident that has not happened yet. Based on our work, the airline decided to modify its maintenance procedure. We hope this work will make a difference in the long run.

FIGURE 3 Influence diagram showing the analysis of patient risk in anesthesia linked to human and organizational factors. Source: Paté-Cornell et al., 1997.

COMBATING TERRORISM

The U.S. intelligence community failed to recognize precursors leading up to the catastrophic terrorist attack of September 11, 2001. This was a very complex situation. Obviously, signals were missed, or were not allowed to surface, within an agency; but there were also legal issues that prevented early actions. There are two distinct problems, both related to the fusion of information: (1) the combination of different pieces and different kinds of evidence; and (2) communication among agencies.

Before September 11, the U.S. intelligence community was aware of the activities of Al-Qaeda but did not recognize (or act upon) precursors to the attack for many reasons. One aspect of the overall failure was the lack of communication among intelligence agencies. This was partly the result of laws passed at the end of the Vietnam War that mandated separate databases for separate entities, which deliberately kept agencies from communicating with each other. To overcome this problem, we need interfaces between some computers and databases. Congress, however, is reluctant to permit the development of such interfaces for a sound reason—the need to protect privacy. One project by the Defense Advanced Research Projects Agency to implement interfaces was terminated because, although it was legal, some members of Congress thought it was getting too close to an invasion of privacy.

Questions of gathering and processing intelligence are particularly interesting. Two kinds of uncertainty problems are involved: (1) a statistical problem of extracting relevant information from background noise; and (2) the problem of identifying and gathering information about possible threats that have been detected but have not been confirmed. The first problem can be enormously difficult, like finding a needle in a haystack hidden by an opponent intent on deception. But even if we have several pieces of intelligence information, some strong and some weak, some independent and some not, the challenge is to determine the probability of an attack of a given type in the next specified time window given what we know today.

Bayesian reasoning involving base rates, as well as the likelihood of the signals given the event, can be extremely helpful in addressing this challenge. Bayesian reasoning allows the computing of probabilities in the absence of a large statistical database; it uses logical reasoning based on the prior probability of an event and on the probabilities of errors (both false positives and false negatives) (Paté-Cornell, 2002a).
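As a minimal sketch of this reasoning, the Python function below applies Bayes' rule to a single binary signal, using the prior probability of the event and the two error rates of the source. All numbers are illustrative assumptions, not intelligence data, and the second update assumes that the two signals are conditionally independent, which real signals often are not.

```python
def posterior(prior, false_negative_rate, false_positive_rate):
    """Probability of the event given that a signal was observed (Bayes' rule).

    prior: base rate of the event before the signal.
    false_negative_rate: P(no signal | event).
    false_positive_rate: P(signal | no event).
    """
    p_signal_given_event = 1.0 - false_negative_rate
    numerator = p_signal_given_event * prior
    evidence = numerator + false_positive_rate * (1.0 - prior)
    return numerator / evidence


# Illustrative (hypothetical) values: 1 percent prior, 20 percent missed
# signals, 5 percent false alerts.
p_after_one = posterior(0.01, 0.20, 0.05)

# A second signal of the same quality, ASSUMED conditionally independent
# of the first, is folded in by using the first posterior as the new prior.
p_after_two = posterior(p_after_one, 0.20, 0.05)

print(round(p_after_one, 3), round(p_after_two, 3))
```

Even a fairly reliable signal leaves the posterior probability modest when the base rate is low, which is why the prior and both error rates have to enter the calculation explicitly.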

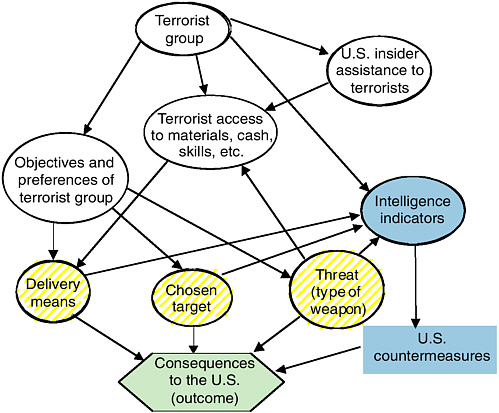

I began doing some risk modeling of terrorism as a member of a small panel of the Air Force Scientific Advisory Board. The panel members included eminent specialists in many fields, from history of the Arab world to weapons design. The topic under study was asymmetric warfare. We had to deal with a great mass of information under a relatively tight deadline. To help set priorities, I constructed for myself a probabilistic risk analysis model in the form of an influence diagram (Figure 4).

For simplicity, I considered only two kinds of terrorist groups—Islamic fundamentalists and disgruntled Americans (Paté-Cornell and Guikema, 2002). Experts then told me that although these groups had different preferences, they had some common characteristics, such as the importance of the symbolism of the target. I then looked more carefully at what we know about the supply chain of different terrorist groups (people and their skills, materials, transportation, communications, and cash). It was clear that both the supply chains and the preferences of the American groups were different from those of Islamist groups. Next, I asked how likely a group was to have U.S. insiders assisting them. I was then able to put the characteristics of an attack scenario into three classes: (1) choice of weapons; (2) choice of targets; and (3) means of delivery. For instance, the weapon might be smallpox or a nuclear warhead. I also considered the possibility of repeated urban attacks.

With the assistance of one of my graduate students, Seth Guikema, I constructed a “game” model involving two diagrams, one for the terrorists and one for the U.S. government (Paté-Cornell and Guikema, 2002). Based on our beliefs about the terrorists’ knowledge and preferences and our perceptions of the preferences of the American people in general, and the administration in particular, we then looked for feedback between the two models to try to set priorities among attack scenarios.

Obviously, a large part of the relevant information is classified, and the published paper contains only illustrative numbers. But, as expected, it appears that one attractive weapon for Islamist groups is likely to be a nuclear warhead, if they can get their hands on one, because of the sheer destruction it would cause. Smallpox was second in the illustrative ranking. Dirty bombs (e.g., spent fuel combined with conventional explosives) were lower on the scale because, although they are scary and easy to make, they may not do as much damage.

FIGURE 4 Influence diagram representing an overarching model for prioritizing threats and countermeasures. Source: Paté-Cornell and Guikema, 2002.

The U.S. Department of Homeland Security’s recent simulation exercise (TOPOFF 2) was also instructive. TOPOFF 2 involved a hypothetical combined attack on Seattle with a dirty bomb and on Chicago O’Hare Airport with biological weapons. One problem faced by the participants was uncertainty about the plume from the dirty bomb. The models constructed by the Nuclear Regulatory Commission and the Environmental Protection Agency, perhaps because they were created for regulatory purposes, turned out to be too conservative to be very helpful in predicting the most likely shape of the plume. Those models are thus unlikely to match actual measurements, which may undermine confidence in the analytical results.

In any case, modeling attack scenarios has to be a dynamic exercise. Technologies change every day, and terrorist groups are constantly evolving, which, of course, makes long-term planning difficult. Therefore, it may be most useful to start by identifying desirable targets. For example, infrastructure is important to the average person, but perhaps not very attractive to terrorists because it lacks symbolic impact. Before these groups strike at infrastructure targets, they may try, given the opportunity, to hit something that looks more appealing in that respect.

So what can we do? Of course, we should identify the weak points in global infrastructure systems (e.g., the electric grid), because they are worth reinforcing in any case. We should also make the protection of symbolic buildings, prominent people, harbors, and borders a high priority.

Monitoring the supply chains of potential terrorist groups (people, their skills, the materials they use, cash, transportation [both of people and materials], and communications) is especially important. A coherent and systematic analysis of the intelligence signals remains difficult and often depends on intuition, but a Bayesian analysis of the precursors and signals would account explicitly for reliability and dependencies (Paté-Cornell, 2002a). But we have a long way to go before a probabilistic analysis will be implemented, because it is not part of a tradition. Currently some interest has been expressed in using such methods, and I hope that this logical, organized approach will eventually be adopted.

ORGANIZATIONAL WARNING SYSTEMS

An effective organizational warning system has to be embedded in an organization’s structure, procedures, and culture. The purpose of a warning system is to watch for potential problems in organizations that construct, operate, or manage complex, critical physical systems.

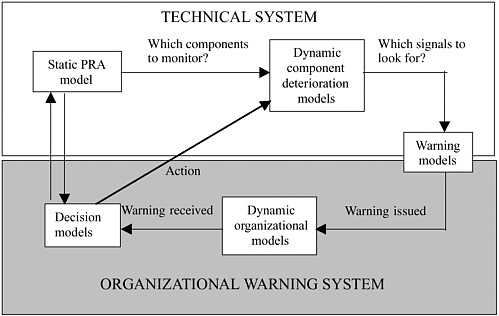

How do we begin to think about an organizational warning system? First, we must analyze, in parallel, the dynamics of the physical system and of the organization. As Figure 5 shows, a good place to start is by identifying the physical weaknesses of a critical system, using a probabilistic risk analysis, for example, as a basis for setting priorities (look at the engines before you look at the coffee pot). The second step is to find corresponding signals and decide what the monitoring priorities should be. The third step is to examine the dynamics of both the organization and the system as it evolves. Once a problem starts, how rapidly does a situation deteriorate, and how soon does a failure occur if something is not done? Finally, part of the analysis is to assess the error rate of the monitoring system to provide filters and to prevent counterproductive clogging of the communication channels.

The next step is to analyze the organizational processing of the message. How is an observed signal communicated? Who is notified? Who can keep the signal from being transmitted? Does the decision maker eventually hear the message (and how soon), or is it lost (or the messenger suppressed) along the way? Finally, how long does it take for a response to be actually implemented? Clearly, no response can be formulated without an explicit value system for weighing costs and benefits, as well as the trade-offs between false positives and false negatives.

FIGURE 5 Elements of a warning system management model. Source: Lakats and Paté-Cornell, 2004.

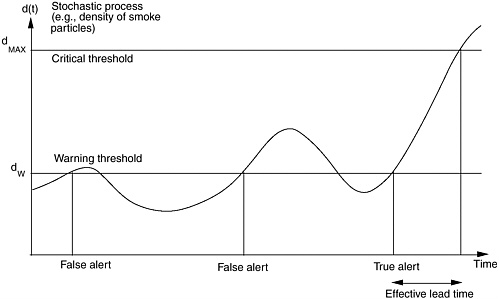

SETTING AN ALERT THRESHOLD

One way of filtering the signal is to optimize the warning threshold based on an explicit stochastic process (Figure 6) that represents the variations over time of the underlying phenomenon to be monitored (Paté-Cornell, 1986). The problem could be, for instance, the density of smoke in a living space as determined by a fire alarm. How sensitive should the system be? If it is too sensitive, people may shut it off because of too many false alerts. If it is not sensitive enough, the situation can deteriorate too much before action is taken. The warning threshold should thus be a level at which people will respond and that gives them enough lead time to react to the signal.

An appropriate alarm level is likely to lead to some false alerts, which have costs. For instance, a false positive indicating a major terrorist attack could lead to costly human risks, such as those incurred in a mass evacuation. A false positive could also cause a loss of confidence that reduces the response to the next alert. False negatives (missed signals) also imply some costs. If no warning is issued when it should be, there is nothing one can do, and losses will be incurred. Another cost of a false negative is loss of trust in the system. To provide a global assessment of a warning system, the results thus have to be examined case by case, but the base rates and the rates of errors must be taken into account.

FIGURE 6 Optimization of the threshold of a warning system based on variations of the underlying stochastic process. Source: Paté-Cornell, 1986.

Given the trade-offs between false positives and false negatives, there is no way to resolve the problem of filtering out undesirable signals without making a value judgment. A balance has to be found between the time necessary for an appropriate response, the corresponding benefits, and the cost of false alerts, in terms of both money and human reaction. Therefore, a simple model of this process must include at least three elements: (1) the underlying phenomenon (in terms of recurrence and consequences); (2) the rate of response given people’s experience with the system’s history of true and false alerts; and (3) the effectiveness of the use of lead time.
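A toy version of such a model can be written in Python to show how the three elements interact. It is a sketch under strong assumptions, not the model of Paté-Cornell (1986): the monitored signal is taken to be normally distributed with a higher mean when an event is developing, the response rate is taken to be the square root of the fraction of alerts that turn out to be true, the effectiveness of the lead time is held constant, and every number is hypothetical.

```python
from statistics import NormalDist

QUIET = NormalDist(mu=0.0, sigma=1.0)    # signal level when nothing is wrong (assumed)
DANGER = NormalDist(mu=3.0, sigma=1.0)   # signal level when an event is developing (assumed)


def expected_loss(threshold, p_event=0.01, loss=1000.0,
                  alert_cost=10.0, effectiveness=0.8):
    """Expected loss per period for a given alert threshold (all values hypothetical)."""
    p_true_alert = 1.0 - DANGER.cdf(threshold)    # 1 - false negative rate
    p_false_alert = 1.0 - QUIET.cdf(threshold)    # false positive rate
    p_alert = p_event * p_true_alert + (1.0 - p_event) * p_false_alert
    # (2) People respond less when most alerts in their experience are false.
    credibility = p_event * p_true_alert / p_alert
    response_rate = credibility ** 0.5
    # (3) Fraction of the loss avoided when an event is detected and acted upon.
    avoided = p_true_alert * response_rate * effectiveness
    # (1) Residual losses from the underlying phenomenon, plus the cost of responding.
    return p_event * loss * (1.0 - avoided) + p_alert * response_rate * alert_cost


# Grid search over candidate thresholds from -3.0 to 6.0.
thresholds = [i * 0.05 - 3.0 for i in range(181)]
best = min(thresholds, key=expected_loss)
print(f"best threshold: {best:.2f}, expected loss: {expected_loss(best):.2f}")
```

In this sketch the minimum falls between the two extremes: a very low threshold floods people with false alerts and erodes their response, whereas a very high one misses most events. Changing the assumed costs or the response function shifts the optimum, which is where the value judgment enters.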

One of the problems that has emerged with the color alert system used by the U.S. Department of Homeland Security is a lack of clarity about what an alert means and what should be done. For the time being, however, it is not clear how the system can be improved. Some have suggested using a numerical scale, for instance, but this would have to be very well thought through to be more meaningful than the color alert system. In any case, determining the cost and benefits of a warning system involves taking into account the base rate and the effects of the events, as well as false positives and false negatives.

SUMMARY

In conclusion, I would like to make several points. First, failure stories do not provide a complete picture. There are many more precursors and signals observed and acted upon than there are accidents. This is not always apparent, however, because when signals are recognized and timely actions taken, the incident is rarely visible, especially, for example, in the domain of intelligence. Therefore, we know when we have failed, but we often do not know when we have succeeded. Second, managing the trade-off between false positives and false negatives in warning systems is difficult because it involves the quality of the information, as well as costs and values. Third, to design an effective organizational warning system, one has to know where to look.

The way people in an organization react depends in part on management. The structure, procedures, and culture of the organization determine the information and the incentives people perceive, as well as the range of their possible responses. It is essential to decide how to filter a message to avoid passing along information that may have many costs and few benefits. In that respect, the quality of communications is essential—both communication to decision makers who must have the relevant facts in hand and communication to the public who must have trust in the system and respond appropriately. And finally, when it comes to risk management, the story is always the same—when it works, no one hears about it…

REFERENCES

DOT-NHTSA (U.S. Department of Transportation-National Highway Traffic Safety Administration). 2001. NPRM: Reporting of Information and Documentation about Potential Defects. Retention of Records that Could Indicate Defects. Pp. 66,190–66,226 in Federal Register 66 (246) Dec. 21, 2001.

Lakats, L.M., and M.E. Paté-Cornell. 2004. Organizational warnings and system safety: a probabilistic analysis. IEEE Transactions on Engineering Management 51(2).

Paté-Cornell, M.E. 1986. Warning systems in risk management. Risk Analysis 5(2): 223–234.

Paté-Cornell, M.E. 1999. Medical application of engineering risk analysis and anesthesia patient risk illustration. American Journal of Therapeutics 6(5): 245–255.

Paté-Cornell, M.E. 2002a. Finding and fixing systems weaknesses: probabilistic methods and applications of engineering risk analysis. Risk Analysis 22(2): 319–334.

Paté-Cornell, M.E. 2002b. Fusion of intelligence information: a Bayesian approach. Risk Analysis 22(3): 445–454.

Paté-Cornell, M.E., and P.S. Fischbeck. 1990. Safety of the Thermal Protection System of the STS Orbiter: Quantitative Analysis and Organizational Factors. Phase 1: The Probabilistic Risk Analysis Model and Preliminary Observations. Research report to NASA, Kennedy Space Center. Washington, D.C.: NASA.

Paté-Cornell, M.E., and P.S. Fischbeck. 1993a. Probabilistic risk analysis and risk-based priority scale for the tiles of the space shuttle. Reliability Engineering and System Safety 40(3): 221–238.

Paté-Cornell, M.E., and P.S. Fischbeck. 1993b. PRA as a management tool: organizational factors and risk-based priorities for the maintenance of the tiles of the space shuttle orbiter. Reliability Engineering and System Safety 40(3): 239–257.

Paté-Cornell, M.E., and P.S. Fischbeck. 1994. Risk management for the tiles of the space shuttle. Interfaces 24: 64–86.

Paté-Cornell, M.E., and S.D. Guikema. 2002. Probabilistic modeling of terrorist threats: a systems analysis approach to setting priorities among countermeasures. Military Operations Research 7(4): 5–23.

Paté-Cornell, M.E., L.M. Lakats, D.M. Murphy, and D.M. Gaba. 1996. Anesthesia patient risk: a quantitative approach to organizational factors and risk management options. Risk Analysis 17(4): 511–523.

Paté-Cornell, M.E., D.M. Murphy, L.M. Lakats, and D.M. Gaba. 1997. Patient risk in anesthesia: probabilistic risk analysis, management effects and improvements. Annals of Operations Research 67(2): 211–233.

Sachon, M., and M.E. Paté-Cornell. 2000. Delays and safety in airline maintenance. Reliability Engineering and System Safety 67: 301–309.

Webb, R.K., M. Currie, C.A. Morgan, J.A. Williamson, P. Mackay, W.J. Russel, and W.B. Runciman. 1993. The Australian Incident Monitoring Study: an analysis of 2000 incident reports. Anaesthesia and Intensive Care 21: 520–528.