Panel II—

Best Practice Examples of Public-Private Partnerships

INTRODUCTION

Arden Bement

National Institute of Standards and Technology

Dr. Bement noted that the first panel had established the basis and rationale for public-private partnerships associated with the nation’s new security challenge. The current panel, he said, would take the issue a step further, to best practices and examples of public-private partnerships.

He noted that NIST was effectively engaged in matters of homeland security, including cyber security, counterterrorism, and critical infrastructure protection. Homeland security had now been designated as one of four strategic thrusts for NIST over the next decade, when the institute expected to increase organizational emphasis and investment.

He said that the two previous speakers had served as models of the kind of cooperation, dedication, and ingenuity necessary to prevail against the threat of terrorism. He praised Gordon Moore as a member of “the nation’s pantheon of technologists,” having “charted the semiconductor industry’s course” in the information revolution and co-founded and led the company “that helped put the revolution into high gear.” All along, he said, Dr. Moore championed the strategic importance of maintaining a strong national platform for innovation, which was now an asset fundamental to the successful response to the challenge of terrorism.

He noted that Congressman Boehlert came from the state that had borne the brunt of the “misery and devastation wrought by the attacks on September 11,” and had become a forceful and effective advocate for policies that view the support of science and engineering research and their application as “investments in a better future.” He said that Mr. Boehlert had been at the forefront of efforts to leverage the nation’s science and technology resources in the fight against terrorism. Leveraging through partnerships and coordination, he said, will be key to how effectively we marshal our technological capabilities to counter the asymmetric threats of terrorism, a threat he called “unprecedented in terms of its dimensions and complexities.”

As an example, he described the physical infrastructure at potential risk—the nation’s collection of utilities, bridges, ports, water systems, airports, hospitals, plants, and factories, some 85 percent of which is privately owned. He pointed as well to our immense information infrastructure and its multitude of vulnerabilities, and to levels of emergency preparedness in 56 states, territories, and possessions, more than 3,000 counties, and tens of thousands of communities where 285 million citizens live. In all, he said, this presented a “systems problem of the highest order.” The number of technical issues and scientific questions aside, he said, we face a gigantic organizational and operational challenge that can best be faced collaboratively.

NIST and Homeland Security

He said that NIST was supporting some 120 projects that address issues of homeland security, many of them characterized by collaboration. Some 75 of those projects, which had begun before 9/11, had been redirected. In the area of radiation standards, for example, NIST had already been developing standards, and had redirected its work to include development of standards for beta radiation. For DNA, the institute had been developing standards for analysis, and it shifted that work to the study of damaged DNA in order to assist in the identification of the victims of the World Trade Center collapse. In addition, NIST’s Advanced Technology Program (ATP) supported companies that were bringing to life “embryonic technologies” through cost-sharing awards. Since 1992, ATP had obligated an estimated $270 million to companies and joint ventures pursuing promising commercial technologies that could be enlisted in the fight against terrorism. That partnership, he said, underscored the dual nature of many of the technologies that were now needed.

Many partners outside NIST were contributing to the homeland security work underway in NIST laboratories, with an emphasis on responding to measurement and standards-related needs. He cited three such needs:

(1) NIST was starting its investigation of the structural failure and progressive collapse of the World Trade Center, bringing together technical experts from industry, academia, and other laboratories and interacting regularly with the professional community, local authorities, and the general public. NIST had also assigned a special liaison to families of first responders and families who had had members in the buildings at the time of the collapse. The investigation was part of a broader NIST response to the World Trade Center disaster.

(2) In concert with the World Trade Center investigation, NIST was conducting a multi-year research and development program that also engaged experts from the private sector, academia, and professional societies. The objective was to apply lessons learned and to use the results of this collaboration to provide a technical basis for improved building and fire codes, standards, and practices.

(3) NIST was also facilitating and supporting an industry-led program to disseminate information and technical assistance. This program was designed to provide practical guidance and tools to better prepare facility owners, contractors, designers, and emergency personnel to respond to future disasters, whether natural or human-caused. One challenge was to convey reliable information to people in the front lines of homeland security. The previous month, for example, NIST had issued the first comprehensive set of basic procedures for decontaminating protective clothing and equipment. This was being provided to personnel who were charged with responding to chemical, biological, radiological, or nuclear attack. The report consolidated recommendations and key information from many authoritative sources, including makers of synthetic fibers and protective equipment, fire departments, and government laboratories. This potentially life-saving reference was the result of collaboration among NIST, local fire marshals, the U.S. Fire Administration, and the chairperson of the National Volunteer Fire Council. The manual was being made available without charge, and could be seen on the NIST web site.

The programs described under (2) and (3) both sought to gain lessons from the events of September 11 and to apply those lessons to new codes and standards.

Dr. Bement said that international collaboration was also critical in strengthening the nation’s and the world’s defenses against terrorism. The advanced encryption standard (AES), for example, was a result of such international cooperation, which also featured rigorous competition. The standard was selected from 15 algorithms submitted by cryptographers from around the world. The winning AES was developed by two cryptographers in Belgium and approved last December as the federal standard for civilian agencies; it was already finding widespread use in the private sector as well. The AES was designed to protect sensitive computerized information and financial transactions. He estimated that millions of people in both the public and private sectors would likely use this standard over its lifetime, which could span one to two decades, or even more. He said that Intel’s chief security architect had described the selection of the encryption standard as a process that “should be held up as the model of industry-academia and government cooperation.”

SEMATECH: ASSESSING THE CONTRIBUTION

Kenneth Flamm

University of Texas at Austin

Dr. Flamm said that he wanted to offer some comments about the contribution and lessons of SEMATECH that were based primarily on his own experience and opinions, rather than on rigorous analysis.

He began by sketching a picture of the semiconductor industry, to explain why economists—and the STEP board—placed so much emphasis on it. The semiconductor industry was now the largest U.S. manufacturing industry, measured by value added—the contribution to national output. He said that it may be a surprise “or even shocking” for some people to learn that it was even larger than the computer industry in the United States. Likewise, because value-added figures are those that relate to value originating within the industry itself, the semiconductor industry was larger than the automobile industry, which uses many components (such as semiconductors) that originate in other industries. As a single manufacturing industry, he said, the semiconductor industry was then approaching 1 percent of GDP; the entire manufacturing sector in the U.S. accounted for 17–18 percent of GDP.

Perhaps more importantly, semiconductors constituted a key input to other important industries—especially across the spectrum of information and communications technology (ICT). Semiconductors were probably the largest and most important input to all of the industries that made up the new realm of ICT. He said that this conclusion grew largely out of the work of Prof. Dale Jorgenson of Harvard, who had performed extensive research on the impact of semiconductors on GDP and ICT.6 He also said that economists had substantially improved the quality of their statistics on the computer and communications industries. “Our understanding of the growth of the U.S. economy over the last two decades has been completely altered,” he said, “by this revisiting of the basic numbers on the sources of productivity growth.”

Price Performance of Semiconductors

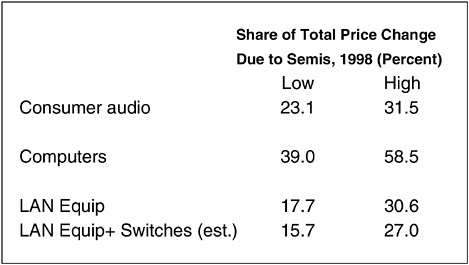

He said that adequate data had made it possible to estimate what portion of the decline of computer and communications equipment prices—a measure of technological progress—was due to the price performance of semiconductors (see Figures 1 and 2). The calculations were laborious, he said, but in summary, he had found that about 40–60 percent of the decline in computer prices in 1998 was due to the decline in the cost of semiconductor functionality. The remaining 40 to 60 percent of the cost decline was caused by innovation. He had made similar calculations for communications equipment, and found that 15 to 30 percent of price declines were due to price declines in semiconductors; for consumer audio, the figure was 20 to 30 percent. He added that the actual measure he had used was a quality-adjusted measure for the decline in price for a particular kind of equipment that made use of semiconductors.7
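The kind of attribution described here can be illustrated with a common growth-accounting approximation, in which an input's contribution to the change in an output price is its share of production cost multiplied by its own price change. The sketch below is not Dr. Flamm's calculation. The function name and every input number are hypothetical, chosen only to show the arithmetic.

```python
def semiconductor_share_of_decline(cost_share, chip_price_change, equipment_price_change):
    """Fraction of an equipment price decline attributable to semiconductor inputs.

    cost_share: semiconductors as a fraction of equipment production cost.
    chip_price_change: annual quality-adjusted price change of chips (negative = decline).
    equipment_price_change: annual quality-adjusted price change of the equipment.
    """
    if equipment_price_change == 0:
        raise ValueError("equipment price did not change; the share is undefined")
    # Contribution of the input = cost share x the input's own price change.
    contribution = cost_share * chip_price_change
    return contribution / equipment_price_change


# Hypothetical inputs (NOT from the source): chips are 30 percent of cost,
# chip prices fall 40 percent a year, equipment prices fall 25 percent a year.
share = semiconductor_share_of_decline(0.30, -0.40, -0.25)
print(f"Share of decline attributed to semiconductors: {share:.0%}")
```

Under this approximation, whatever is not explained by the semiconductor input is left as a residual covering all other sources of price decline.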

Despite the importance of the semiconductor sector, he said, “the data are awful.” Given that this is the largest single manufacturing sector in the U.S., “you’d think that effort would be expended on collecting adequate numbers. We have better numbers on pork bellies and cheese and industrial fasteners than on semiconductors.” He said there were many complicated and legitimate reasons for this, and an important one is the cost of collecting the needed data. Without adequate funding, the government relies on price data sold by market research companies. These data are undoubtedly cheaper, he said, but are not collected by the standards required by economists.

He then reviewed the origins and early years of the semiconductor industry, which “was basically a U.S. industry.” The transistor was invented at Bell Labs in New Jersey, and the integrated circuit was developed at two U.S. laboratories independently. During the early years of the industry, in the 1960s, each of the major competing firms would design and manufacture not only semiconductors, but all the other ingredients needed for integrated circuits. They would develop their own materials, grow their own crystals, slice wafers, and design circuits. They would be engaged in front-end fabrication, the deposition and patterning of silicon wafers, and even the back-end activities of assembly and testing. But as the industry got bigger, it began to specialize. The semiconductor industry was one of the first to send its labor-intensive activities—the assembly and testing of semiconductors—offshore to Hong Kong, Southeast Asia, Japan, and Mexico.

Then in the 1970s, the integrated companies began to spin off their materials and equipment activities to firms that specialized in those activities. The 1980s saw the next step of specialization, most notably the “fabless” firms that did only chip design, leaving the fabrication operations to manufacturing firms downstream.8

With specialization came new kinds of coordination challenges. When all functions were done inside individual large firms, coordination was manageable. Now, most firms found themselves involved in complex technology flows from different groups of vendors in different niches and countries. Only the very largest leader firms had the resources to coordinate the next generation of technology internally. This is a high-cost activity; those who attempt it have to accept that a certain amount of “leakage” will spill over to other firms.

7 Ana Aizcorbe, Kenneth Flamm, and Anjum Khurshid, “The Role of Semiconductor Inputs in IT Hardware Price Decline: Computers vs. Communications,” Federal Reserve Board, Finance and Economics Discussion Series, August 2002, http://www.federalreserve.gov.

8 Taiwan Semiconductor Manufacturing Co. and a few other firms changed the semiconductor market by specializing in the manufacture of custom wafers under contract to chip designers. This freed the designers to concentrate on making and marketing the integrated circuits formed on the wafers to form microchips and helped spark an explosion of “fabless” microchip companies, such as those that populate Silicon Valley and the high-tech zone around Taipei.

A Review of Moore’s Law

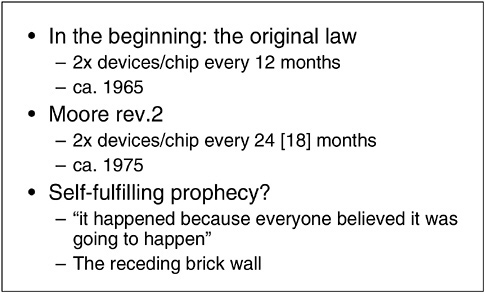

Another important event in the history of the semiconductor was “Moore’s law.” He recalled that Gordon Moore in 1965 published a paper in an IEEE journal suggesting that the density of devices on a chip would approximately double every 12 months for the next several years (see Figure 3). This meant essentially a doubling of the capacity of each integrated circuit. Ten years later, that prediction was still more or less accurate, and it became a de facto benchmark for predicting when the next generation of technology would come around. What started out as an optimistic prediction, said Dr. Flamm, began to take on a life of its own, becoming, by accident, a coordination device for the industry.

Around 1975, the rate of doubling slowed, and Dr. Moore raised his estimated cycle time to 24 months. This figure turned out to be pessimistic, as the doubling time rose to only about 18 months—a “middle ground between Moore One and Moore Two.”
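To make the difference between these doubling times concrete, the sketch below compounds device density over a fixed period for the 12-, 18-, and 24-month cycles mentioned above. It assumes a constant doubling time throughout, which is a simplification; the function name is illustrative and not from the source.

```python
def density_multiple(years: float, doubling_months: float) -> float:
    """Growth factor in devices per chip after `years` at a fixed doubling time."""
    if doubling_months <= 0:
        raise ValueError("doubling time must be positive")
    doublings = years * 12.0 / doubling_months
    return 2.0 ** doublings


# Compare the three doubling times discussed in the text over one decade.
for months in (12, 18, 24):
    factor = density_multiple(10, months)
    print(f"{months}-month doubling: about {factor:,.0f}x in 10 years")
```

Over ten years, a 12-month cycle gives 1,024 times the starting density, a 24-month cycle gives 32 times, and the 18-month cycle falls in between at roughly 100 times, which shows how sensitive long-run progress is to the cycle length.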

FIGURE 3 Moore’s law.

Dr. Flamm pointed out that there was no actual physical basis underlying Moore’s law; the process involved was that “human beings were investing in R&D and inventing new technologies.” Moore’s law was a well-informed prediction. Nonetheless, it became in essence a kind of coordinating device for an increasingly complex and dispersed industry. “Moore’s law worked because everyone believed it was going to work,” he said. “If you wanted to be competitive, you had to bring out the next generation of technology on schedule.”

Another piece of legend, he said, was the history of “brick walls,” technical problems or physical limitations that periodically threatened to slow or even halt the rapid progress of the semiconductor industry and derail Moore’s law. Every time such a brick wall has been described, however, a solution has emerged, and the industry has continued to move ahead as predicted.

By the early 1980s, nearly 20 years after Moore’s law was suggested, the United States no longer dominated the industry. In particular, Japan had invested a major effort in their own semiconductor technology, beginning in the 1950s. Some of their investments had paid off, especially in materials, equipment, and manufacturing technology. They were doing so well that many U.S. firms found themselves falling behind, especially in manufacturing technology, and customers of these firms began to discuss a perceived quality gap in U.S. chips. The industry initially denied it, but customers were doing their own tests on failure rates. In addition, the costs of U.S. firms were too high, and they were falling behind in manufacturing productivity as well.

By the mid-1980s, the industry was also hampered by a host of new trade issues and several national security concerns, including those identified by the National Science Board in 1986. These were based on the supposition during the Cold War that advanced technology, especially semiconductor content, constituted a large component of the nation’s security and should be carefully guarded.

Evidence of Success

In this tense environment, the idea of a government-industry partnership arose as a mechanism to sort through these challenges and propose a unified strategy. Even though President Reagan traditionally opposed public interventions in the free market, the Republican administration took a favorable view of the partnership that became SEMATECH, as did the semiconductor firms themselves.

It is fair to say, according to Dr. Flamm, that SEMATECH became, under the aegis of the 1984 National Cooperative Research Act, the highest-profile government-industry R&D consortium in the United States. Despite that, however, there is a dearth of serious research on its impact. He said that the entire body of empirical literature amounted to three studies “with any pretense of rigor,” none of them with quantitative empirical research that yields reliable proofs. That, he said, was why he was careful to acknowledge at the outset that his remarks were based on his own opinions, information gathered through interviews, and his reviews of published results.

The designers of SEMATECH took some time to plan a strategy, and they decided to emphasize strategies that would improve the equipment and materials used by U.S. producers. In 1990, William Spencer became the director of the consortium, and began to focus on reducing the time between new technology nodes and speeding up the flow of technology. He also promoted the sponsorship of a technology roadmap, which by now had become a fundamental feature of the consortium. The roadmap had been judged a “hugely” important and innovative model for global technology formation, adapted internationally. In the 1990s, the government subsidy ended, and SEMATECH continues today as an international organization.

Although his primary conclusion is “unprovable,” Dr. Flamm said that he found widespread agreement that the partnership played a significant role in the resurgence of the U.S. semiconductor industry. There is little doubt that the industry did come back, he said, and that many U.S. firms today are on the leading edge of manufacturing—a condition that was not true in 1985. It is impossible, he said, to determine the extent to which SEMATECH was responsible. And there are critics in the industry today who ask to be left alone to chart their own course—although, says Dr. Flamm, this request was not heard in the mid-1980s.

Imitation as More Than Flattery

Perhaps a more interesting, and telling, consequence, he said, was that SEMATECH was widely credited in Japan with a considerable accomplishment. The proof of that came in the 1990s, when the Japanese began to copy the structure of SEMATECH in their semiconductor strategy. Another line of evidence, he said, had been the “revealed willingness” of the members of SEMATECH to increase their funding for the consortium to about $140 million a year after the government subsidy disappeared. All of these “data points,” he suggested, showed that the effects of SEMATECH were widely viewed as useful.

Perhaps most importantly, said Dr. Flamm, the advance of productivity in the semiconductor industry accelerated dramatically in the late 1990s, after the major strategies of SEMATECH were installed. He cited Professor Jorgenson’s view that this acceleration was directly linked to a simultaneous upsurge in U.S. productivity. “There was a direct link,” he said, “between this improvement in the pace of introduction of semiconductor technology and the improvement of the aggregate macro-economic performance of the U.S. economy.”

He returned to the evolution of the roadmap, which he said had “never occurred in any global high-tech industry before.” It created the basis for an international framework to coordinate technology development on a global scale. Even more remarkable is that companies that are competing against one another voluntarily assemble every year to try jointly to identify technological obstacles and make plans to overcome them collectively as a global industry. “This is a new and totally unique phenomenon that I think is one of the lasting contributions of SEMATECH,” he said, “a template for a new form of R&D collaboration.”

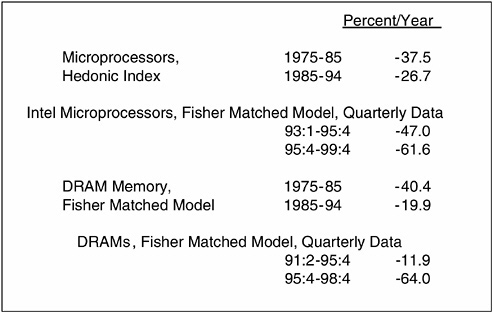

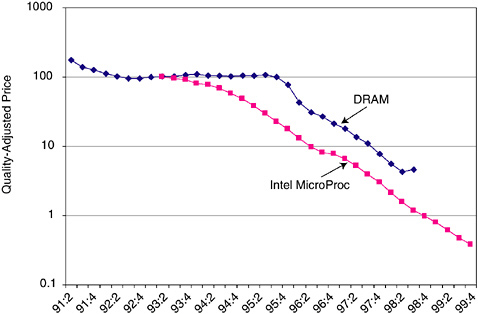

He turned to a slide that illustrated the price decline of semiconductors in the late 1990s, showing acceleration (see Figure 4). “This may not be entirely due to SEMATECH,” he said, “but there certainly has been a pickup in the decline of semiconductor prices, and therefore an economic impact.” He also showed an illustration of prices of memory and microprocessors, which also declined substantially. “Clearly something happened around mid-1990s,” he said.

He concluded that SEMATECH was an interesting and successful experiment, a public-private partnership that operated on legal, financial, and technological levels. “It is widely believed by people who have some inside information to have been useful to the U.S. semiconductor manufacturers,” he concluded, “and I think it has led to a unique new mechanism for international, industrial public-private R&D coordination. In many respects, the partnership is an institutional innovation that will live on long after SEMATECH itself is no longer necessary.”

Dr. Bement added that NIST had had a long partnership with SEMATECH, not only providing inputs for their roadmapping, but also taking NIST projects from the roadmap. In addition, SEMATECH annually critiqued the NIST program in areas related to semiconductors.

FIGURE 4 Change of pace?

PARTNERING FOR PROGRESS: THE ADVANCED TECHNOLOGY PROGRAM

Maryann Feldman

Johns Hopkins University

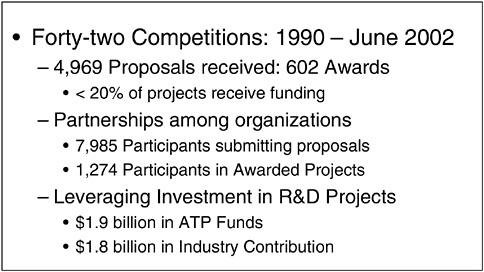

Dr. Feldman presented the results of about 5 years’ work as an independent consultant for the Economic Assessment Office at NIST, where she assembled a profile of facts and figures on the Advanced Technology Program. ATP, a public-private partnership program, had held 42 competitions that generated nearly 5,000 proposals and resulted in 602 “highly competitive” awards; fewer than 20 percent of the proposed projects received funding (see Figure 5). An important component of the ATP program is that it involves partnerships among different types of organizations. Nearly 8,000 organizations participated in the ATP competitions, and 1,300 of them received funding and worked together on projects.

The ATP program provides an award to firms on the condition that they find matching funds. This is considered a critical aspect of the partnership. She reiterated Dr. Bement’s observation that the ATP had funded about $270 million worth of R&D related to national defense. This translates, Dr. Feldman said, into a total investment in the U.S. economy of about half a billion dollars. Beyond that value, her work demonstrated that garnering an ATP award bestowed a “halo effect” that made it easier for participating firms to raise further funding. This created an even larger ripple effect in the U.S. economy.
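The figures quoted above can be checked with a few lines of arithmetic. The sketch below assumes a one-to-one match of private to public funds, which the text does not state explicitly, and it treats “nearly 5,000 proposals” as exactly 5,000; both are assumptions made only for illustration, as are the function and constant names.

```python
ATP_FUNDS_MILLIONS = 270.0   # ATP funding cited in the text, in millions of dollars
AWARDS = 602                 # awards cited in the text
PROPOSALS = 5000             # ASSUMPTION: "nearly 5,000" rounded to 5,000
MATCH_RATIO = 1.0            # ASSUMPTION: one private dollar per public dollar


def total_investment(public_millions: float, match_ratio: float) -> float:
    """Public funds plus the private matching funds they are conditioned on."""
    return public_millions * (1.0 + match_ratio)


def award_rate(awards: int, proposals: int) -> float:
    """Fraction of proposals that received an award."""
    return awards / proposals


print(f"Total investment: about ${total_investment(ATP_FUNDS_MILLIONS, MATCH_RATIO):,.0f} million")
print(f"Award rate: about {award_rate(AWARDS, PROPOSALS):.0%}")
```

Under these assumptions the total comes to roughly $540 million, in line with the “about half a billion dollars” cited, and the award rate is about 12 percent, consistent with fewer than 20 percent of proposals being funded.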

FIGURE 5 Advanced Technology Program (ATP).

Some Causes of Market Failure

Dr. Feldman noted that as an economist she had been accustomed to believing that in most situations the market would naturally lead to an efficient allocation of resources. She learned, however, that market failures give firms several reasons to underinvest in R&D projects, and that these failures can necessitate partnerships. The first—relevant to national defense—is the tendency of firms to avoid research projects that hold promise but have a high chance of failure. Firms prefer not to “get too far ahead of the pack.”

A second reason for under-investment is that invention has become more complex, and many firms lack the in-house capacity to invest effectively in challenging R&D projects. R&D often requires collaboration, but collaboration is difficult and costly. Therefore, firms have developed a bias toward short-term, go-it-alone projects and away from long-term projects that require multi-firm collaborations.

Finally, private incentives may not be sufficient to induce firms to undertake projects when they cannot be sure of appropriating the resulting benefits. This is a classic case of a market failure—when the private rate of return is lower than the public rate of return. The new knowledge or product is available freely to other firms and individuals in the economy, while the firm that created the knowledge or product is not able to price those benefits, or their knowledge spillovers.

These reasons lend support to those who advocate a government role in public-private partnerships—especially projects that promote pre-commercial technological development with high potential social value. To assess whether the ATP program was doing just that, Dr. Feldman undertook a survey of the 1998 ATP applicants. Prior to her survey, it was difficult to discern the net effect of the partnership mechanism. The ATP tracked the subsequent results of the award, but had no way of demonstrating what would have happened to those firms and their research projects in the absence of an award.

Therefore, Dr. Feldman’s study followed all the ATP applicants for 1998—those who won awards and those who did not. The goal was to observe the differences in the two groups: their types of projects, partnerships, and behaviors. Thanks to the rigorous peer review process followed by ATP, the researchers could track the proposals on the basis of technical scores given by reviewers. This allowed them to see if the ATP selected the kinds of high-risk projects that could not proceed without a government subsidy.

The researchers followed the firms for one year after the competition, and then asked each firm if it had pursued its proposed project and if it had been able to secure additional or other sources of funding. The general conclusion, using regression analysis and controlling for firm characteristics and unique factors, was that the ATP was indeed selecting projects that had greater potential to generate substantial public benefits—primarily the riskier, early-stage projects and new partnerships. Also, the projects selected for funding had characteristics associated with knowledge spillovers and the propensity to share research results. It appeared that the ATP program managers examined the broader prospects of research proposals and did not simply select high-profile projects.

Debunking the Myth of “Agency Capture”

Dr. Feldman addressed the assumption that public-private partnerships are commonly involved in “agency capture.” Agency capture occurs when the awarding agency returns repeatedly to the same successful companies, giving multiple awards. By applying controls to the evaluation, she found that agency capture was not in fact happening.

Dr. Feldman found that of the firms that applied and were not selected for funding, the majority of proposed projects—70 percent—did not proceed and were abandoned by the proposing firm; 30 percent did proceed, but at a reduced level. The firms that did proceed were those able to raise money outside the ATP competition. In fact, firms not funded by ATP were more likely to seek external funding. However, the firms that received awards seemed to develop a “halo,” meaning these firms were able to attract three times the funding from venture capital firms and other private-market sources as the control group of firms with the same characteristics. Thus, Dr. Feldman’s group concluded that the ATP program, instead of “crowding out” potentially worthy firms, as proposed in the literature, was actually “crowding in” more investment to the most worthy R&D projects.

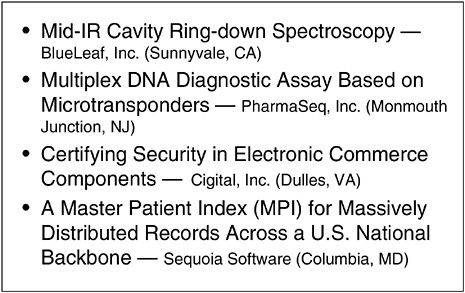

Dr. Feldman further concluded that partnerships funded through ATP have direct relevance to national security concerns (see Figure 6). Two years ago, Johns Hopkins received funding from an anonymous donor for an information security institute, and Dr. Feldman was asked to be the policy director. Subsequently, she reviewed the most promising ways to inject new ideas into the security arena. She saw that ATP, because it relies on commercial firms to propose projects, created the ability for the private sector to clearly see and invest in the kinds of innovative applications that have highest potential to bring new capabilities to bear on national security concerns.

Beyond that, Dr. Feldman said her research results suggest that the ATP offers incentives that tend to increase the efficiency in the overall national system of innovation. The projects proposed to ATP are private-sector solutions—the kinds of high-risk, high-payoff creative ideas that are not likely to proceed without a public-private partnership. The ATP is grounded through a rigorous peer review. This grounding is reinforced by the participation of NIST, the parent agency of ATP, which sets standards for the infrastructure and platforms of national security.

Finally, Dr. Feldman concluded that her research results suggest that the ATP actually increases private-sector R&D in the kinds of activities that it funds. This means that ATP should be considered a program that provides a well-functioning infrastructure of research funding that can aid in finding creative platform solutions related to national security. She closed by pointing to the ATP’s “great Web site,” <www.atp.nist.gov> where visitors can review the technologies and companies that are likely to be relevant to improving national defense.

FIGURE 6 ATP-funded projects with relevance to national security.

UNIVERSITY RESEARCH AND THE MARKET: THE CARNEGIE MELLON EXPERIENCE

Christina Gabriel

Carnegie Mellon University

Referring to the title of her talk, Dr. Gabriel said that to some people, “university research” and “market” did not fit well within the same title. In fact, she said, the two worlds can interact productively in ways that are relevant to partnering against terrorism. She had been impressed by how many academic people at her own institution were looking for ways, in the wake of September 11, to adapt their own research activities to the new and pressing needs of their nation. Just after that event, over 40 faculty members from across the campus attended a meeting convened hastily on a weekend, so that they could strategize with each other about ways they could use their combined expertise to help.

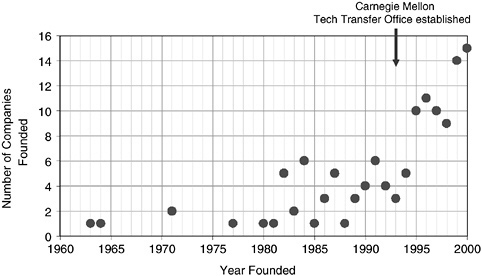

She said that Pittsburgh, where Carnegie Mellon is located, has a rich history of interaction between academia, industry, and the community at large. A strong entrepreneurial economy was created in Pittsburgh by Andrew Carnegie and other well-known industrialists of a century ago—resulting in the dominance of U.S. Steel, Westinghouse, Heinz, Alcoa, and other large manufacturing companies (see Figure 7). Carnegie endowed his new university in 1900 so that it could educate the children of the steelworkers, and the University of Pittsburgh’s gothic skyscraper was designed to be visible from all the working-class communities in the region as an inspiration to them. Then, in the 1970s, when Japan successfully implemented new, lower-cost techniques of steelmaking, Pittsburgh lost most of its steel jobs along with the mills themselves, and it has taken decades to restructure the economy around a more diverse set of industry sectors. Today, the region is looking to its universities and medical research centers as the source of new jobs and new companies to bring back rapid rates of growth.

One benefit Pittsburgh continues to enjoy from the accumulation of wealth by those early entrepreneurs, Dr. Gabriel said, is the availability of more philanthropic foundation dollars per capita than perhaps any other community in the country. In recent years, the Pittsburgh foundations have supported a variety of new programs designed to help the community capitalize on the quality of its universities to revitalize the economy. Connecting university research more closely with the market where jobs can be created is a goal Pittsburgh shares with many regions around the country. With the pace of innovation increasing, research in many fields actually is much closer to the market than it has ever been before. “Because of information technology,” she said, “the pace of change is relentless. If I have an idea and I tell you about it, you’ll probably have a better idea soon. Through the Internet, I can tell millions of people all at once—and then getting the next better idea is a race among those millions—very likely faster than either you or me.” She recalled talking to a friend, Robert Colwell, head of the design team for the Intel Pentium II processor, who described the enormous efforts made by a very large team of people to make that chip the most powerful product on the market. After all that effort, he said, the chip would probably be obsolete after no more than a few years. Had he been an engineer in Caesar’s day, he would have worked on the aqueducts or the Appian Way, which remained in use for centuries. But even though our results may be shorter-lived, the intellectual challenges are more exciting than ever. If we are to be more innovative, we need to become better at working within partnerships of various kinds.

The Importance of a Government Partner

As part of her research and management positions in industry, government, and universities, Dr. Gabriel has observed the characteristics of a variety of effective partnerships. She found that government funding played an important role, especially in the early growth stages of technology companies, “more often than people realize. Government is not just something that sits on the sidelines. Government does play an important role, especially as an early catalyst and in shaping incentives in the marketplace, and we have to make that role as effective as it can be.”

FIGURE 7 Estimated number of new Pittsburgh-region companies started with Carnegie Mellon founders or technology.

She proposed a list of factors that made university-industry partnerships successful. The most important, she said, was strong interpersonal relationships. “It’s really about trust; about people knowing each other well enough to take risks with them.” No matter how well one structures a new program at the policy level, she said, it is “extremely important to make sure the incentives make it work at the operational level” as well.

As an example of a government-supported partnership that led to regional economic development, she cited the NSF Engineering Research Centers. This program was designed to improve engineering education by encouraging stronger working partnerships between universities and industry. One ERC at Carnegie Mellon, the Data Storage Systems Center, partnered with a consortium of companies including Seagate, the largest disk-drive manufacturer in the world. In 1998, Seagate decided to create its first and only research laboratory. Although the company headquarters is in California, the company chose Pittsburgh for its new facility so that the collaboration with Carnegie Mellon’s center could continue. For its first several years, the new lab grew at a rapid pace, hiring on average one PhD per week from over 20 different countries. Collaboration with the university continues to be strong, and Pittsburgh is developing as a hub for the information storage industry, with university spin-off companies also being created as a result of the ERC. Most of the economic activity has been supported by the private

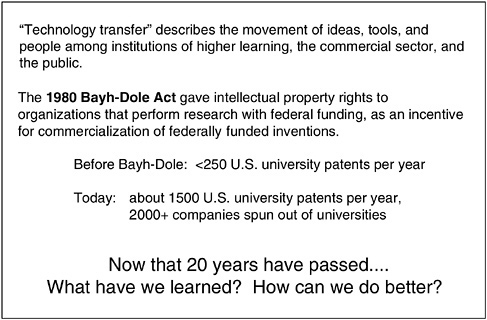

FIGURE 8 Technology transfer.

sector, but the early federal dollars invested in this peer-reviewed center award was the catalyst that made this growth possible.

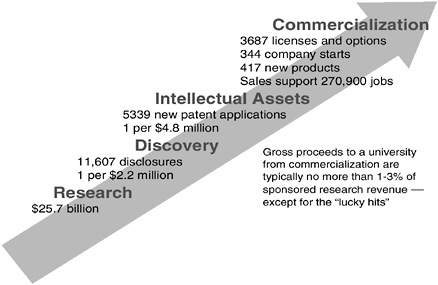

The Learning Curve of Tech Transfer

To stimulate the contributions that Carnegie Mellon technologies can make to economic growth, the university has restructured its technology transfer function. Starting in late 2000, Dr. Gabriel led an exercise, marking the 20th anniversary of the Bayh-Dole Act, that brought together people from across the university “to see how we can do technology transfer better.”9 Before Bayh-Dole, fewer than 250 U.S. university patents were issued each year; today, about 1,500 U.S. university patents are issued each year, and thousands of companies and jobs have been created that are based on university research (see Figure 8).

She said that one result of this increased patenting activity by universities is anger and frustration on the part of many of the private-sector constituencies that the university interacts with. Whether as nonprofit organizations that receive federal funding or as public institutions, universities operate under a set of legal constraints that are not well understood by outsiders. It can seem to industry partners as well as to faculty inventors that the university is overly bureaucratic. People from the business world, investment world, and academic world often seem to speak different languages.

“How do we fix that?” Dr. Gabriel asked. She noted that a national organization, the Association of University Technology Managers (AUTM), exists to help universities share best practices and promote the movement of innovative ideas into the private sector. Each year AUTM compiles data collected from about 200 universities and research institutes to measure the success of technology transfer, such as how many patents are filed, how much royalty revenue is realized, and how much money is spent on legal costs. Such financial metrics alone fall short, however, she said. They say little about social return: how easily new inventions move into broader use, benefits to the region around a university, improvements in faculty attraction and retention, and so on. If the sole focus of technology transfer is on financial returns to the university, she argued, it is difficult to make the case for the university to support a tech transfer mechanism at all. The reality is that a technology transfer office typically costs more to operate on an annual basis than the university realizes in ongoing royalty revenue. The function must be subsidized virtually every year; only occasionally, with luck, does a university spin off a useful product that brings in millions of dollars in revenue, and even then the return comes years after the investment of staff time and legal expenses. There are too few of these “home-run hits,” she said, to justify tech transfer offices for most universities on purely financial grounds (see Figure 9).

FIGURE 9 U.S. university tech transfer. SOURCE: Jim Severson, from Association of University Technology Managers (AUTM) national survey data, FY1999.

Contrary to the dreams of many universities in the early days following Bayh-Dole, tech transfer can be expected to bring in only a small percentage of what the university earns for its federally funded research.

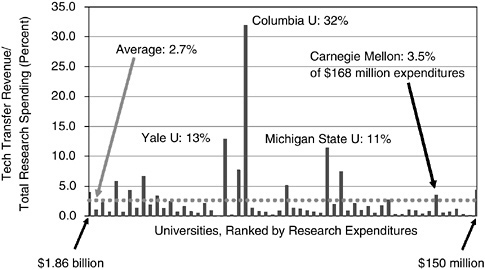

To test that assumption, she plotted technology transfer revenue for one recent year at the largest of the nation’s universities, divided by overall research revenue (see Figure 10). To avoid absolute-dollar comparisons between the giant California system, for example ($1.6 billion of research revenue), and smaller schools like CMU, this ratio roughly scales the universities by size. In general, universities did well if they realized a few percent of the research budget as technology transfer revenue. For a $100 million research budget, bringing in $3 million in royalties and capital gains revenue “should be considered a very good result,” she said. “But don’t hope or expect that you’ll do even that well routinely.”
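The size-normalized ratio described above can be illustrated with a minimal sketch. The function name and structure here are hypothetical, chosen only for illustration; the sole figures used are the $100 million research budget and $3 million in transfer revenue quoted in the talk, and nothing here reproduces the data behind Figure 10.

```python
def tech_transfer_ratio(transfer_revenue: float, research_revenue: float) -> float:
    """Return tech transfer revenue as a fraction of research revenue.

    Hypothetical helper: dividing by research revenue lets universities
    of very different sizes be compared on the same scale.
    """
    if research_revenue <= 0:
        raise ValueError("research_revenue must be positive")
    return transfer_revenue / research_revenue


# Illustrative figures quoted in the talk: a $100 million research budget
# bringing in $3 million in royalties and capital gains revenue.
ratio = tech_transfer_ratio(3_000_000, 100_000_000)
print(f"{ratio:.1%}")  # prints "3.0%"
```

On this scale, the "few percent" described as a very good result corresponds to a ratio of roughly 0.03.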

During Carnegie Mellon’s reassessment of its tech transfer function, the university group first asked a large number of experienced people what they thought was wrong with the process and how it ought to work instead. Tech transfer was being regarded as a bureaucratic function that was added to the university, rather than an integral part of the institution’s mission. The philosophy in the early years was more or less to search for the “diamond in the rough,” focusing all its energy on that “best bet” that might hit the jackpot for the university, while providing little or no attention for ideas that seemed less promising financially. The implicit goal was to maximize revenue while minimizing risk. “But those two goals are mutually exclusive,” she said. “There’s no way you can have zero risk and maximum return. It wasn’t working.” Moreover, since even the best venture capitalists, who are far downstream in the commercialization process, get “home runs” less than 10 percent of the time, the group felt that a university should aim for a higher volume of transactions rather than trying to make these guesses at the research stage.

FIGURE 10 FY 1999 tech transfer revenue as a fraction of total research spending, top spending universities.

Debunking the “Home-Run Strategy”

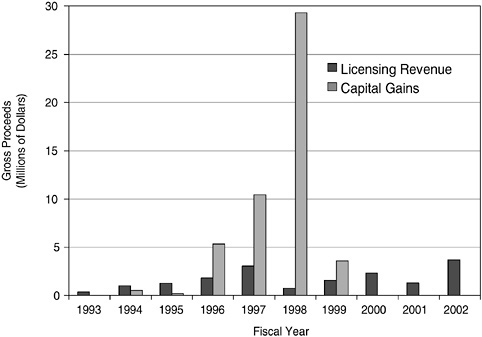

She backed up to say that on one level, it was possible to argue that the strategy was working beautifully: The university had had an income bonanza in 1998 from the Lycos search engine, which was invented at Carnegie Mellon by Michael “Fuzzy” Mauldin and his collaborators (see Figure 11). The university had equity in the company, and when it went public, the university’s half of the capital gains income provided enough money to build a much-needed building, while the other half went back to the inventors. The “home-run strategy” worked in that case, and it was possible to argue in hindsight that the result was good for everybody.

But the Carnegie Mellon task force decided on balance to change the strategy. While Pittsburgh was not the booming economy of Silicon Valley or Boston, it had by then gathered a substantial population of experienced lawyers, accoun-

FIGURE 11 Carnegie Mellon tech transfer revenue.

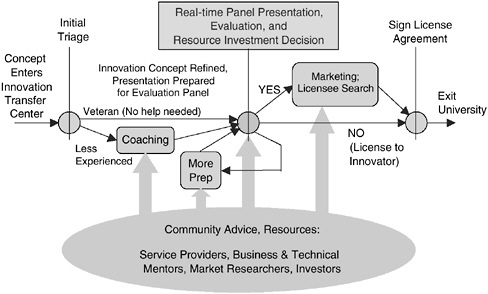

tants, and economic development organizations that could offer competent help for new entrepreneurs. With more expertise in the community, tech transfer could now more effectively be done in close partnership with the external business community. So the university decided to simplify and streamline the transfer process, making it easier for an embryonic company to get out of the university quickly. After that, the company would depend on community resources, with only informal help from the university and its network of spin-off companies.

The Power of Group Process

Perhaps the most important shift in tech transfer design was suggested by the influence that Nobel Laureate Herbert A. Simon has had on Carnegie Mellon. Simon, said Dr. Gabriel, “really understood how to have strong people in different disciplines work together to do amazing things.” Carnegie Mellon is now known for its collaborative, problem-solving culture across the campus, from the fine arts to engineering, computer science, and business. The task force decided to try to apply that gift to their process of innovation transfer. Evaluation of new technology concepts is now done through a collaborative, real-time evaluation of the innovation by a panel of reviewers with complementary expertise. Half or more of the reviewers are chosen from the business community outside the university in order to involve deep experience with commercialization relevant to the innovation to be considered. The group has a conversation about commercialization strategy around a table with people they may not yet have met, who share their interest in the technology but see it from a different perspective. Through this brainstorming session, the university gets high-quality input to help make better decisions about its resource investments. The reviewers get an intellectually stimulating experience and the chance to expand their own personal networks. The creators of the innovation get higher-quality attention and a faster decision from the university about how they should proceed. By making judicious use of appropriate external expertise, the university makes reaching these difficult decisions and carrying out these tasks easier and more likely to be successful.

FIGURE 12 Innovation transfer process.

This interactive approach is designed to bring out issues that might be missed by individual specialist reviewers and to offer a range of perspectives for the university to consider. In addition, since each panel will involve different experts, many of them from the Pittsburgh region, the process will, over time, strengthen the network of technology and business professionals in Pittsburgh and the working relationship between Carnegie Mellon and the regional community. “At the end of a two-hour session,” she said, “you know everyone around the table well enough to ask them anything, anytime. The network of experts that this builds can really help our inventors and their spin-off companies.”

She then discussed how to extend these discoveries about group interaction to a more effective response to the national focus on preventing terrorism. Like others at the workshop, she was optimistic about the ability of public-private partnerships to provide effective structures and to speed up the creation and deployment of useful new innovations. She based this opinion partly on her own experience with the Technology Reinvestment Project10 during her tenure at the National Science Foundation in the early 1990s.

The TRP, she recalled, was politically charged from its first implementation, dividing those who saw an urgent need to use private-sector advances and the “dual-use” concept to strengthen the defense industry sector from those who believed the program was primarily trying to move spending away from defense to commercially directed applications. As the program began, she said, one of the greatest needs was to break down the walls that divided different program offices in different government agencies funding work in similar technology areas. “When I arrived at NSF in 1991,” she said, “I was appalled to find that program officers in my technology field did not even know their counterparts in other agencies, even though we were all working within a few miles of one another.”

The cause of the “walls” was not mysterious, she observed: Each agency had its own mission and everyone was busy. What the TRP did was “to force six agencies with a common pot of money and an important overarching objective to jointly structure the program, review proposals, make decisions, and execute tasks.” The agency representatives deliberated together in intense review sessions for weeks, driven by a requirement that all the money had to be disbursed in a finite time. After that experience, she found that those six people knew and respected each other very well. “We all remember how exciting and important that was. I truly believe that if we did joint program execution like this more often, the government would work better. Business-as-usual has too often been for each agency to create its own new program for each new hot field, not communicating with the others and ignoring themes that require a larger critical mass than any one program can provide. It would be nice to know that whenever there’s an urgent national need, you could get an interagency group like this together to find and fund the best collaborative work quickly, without turf getting in the way.”

She closed by noting the value of other major federal-private partnership programs designed to stimulate technological innovation and economic growth, such as NIST’s Advanced Technology Program and the Small Business Innovation Research (SBIR) program.11 The SBIR set-aside program, for example, she said, has helped a large number of new technology companies create and commercialize innovations much more quickly than could have been done within large companies. Much of this work is done in partnership with universities, and much more could be done this way. The SBIR mechanism could play a major role in seeking out and developing innovations to help the nation address its new technology challenges within the war on terrorism. She suggested that program organizers study the feasibility of adapting SBIR to this need, especially by finding ways to reduce the cycle time for proposal solicitation and review. This, she said, might effectively tap the current energy in universities and companies and make their work quickly responsive to national needs.

DISCUSSANT

Michael Borrus

The Petkevich Group

Mr. Borrus said he had been struck by the parallel between the qualities of a good public-private partnership and those of a long marriage. Each had to navigate the uncertainties of misunderstandings and poor communication, but both had the potential for high achievement over the long term. And when they did fulfill their potential, they could accomplish goals that were beyond the reach of a single person. In the same manner, public-private partnerships can advance the pace of technology in ways that neither federal agencies nor private firms alone can do: by overcoming roadblocks that impede development and innovation; by focusing creative thinking on national needs that would otherwise go unmet; by compensating for market gaps or imperfections; and by producing significant social benefits that an individual firm or sector would be unlikely to achieve.

He noted that the United States had had a long history of public-private collaborations, dating back perhaps to Alexander Hamilton’s Report on Manufactures, and certainly as far as the Morrill Act of 1862, when the government established the land-grant universities and the agricultural extension service to assist private farmers. More recently, partnerships played a significant role in developing such innovations as the jet air frame and jet engine, the transistor and the silicon chip, computer technology and the Internet, and many vaccines and other elements of modern biotechnology. “It’s worth reminding ourselves,” he said, “that if we do this right, these collaborations can work, and we can apply the lessons learned to the extraordinary goal of homeland security.”

Attributes of Successful Partnerships

He said that partnerships are particularly effective at tackling very complex problems, especially those that occur at the intersection of existing disciplines, methodologies, and perspectives. He suggested some key attributes of successful partnerships:

- He agreed with Dr. Gabriel that trust plays a critical role in bringing together people with different knowledge.

- The initial design of partnerships also appeared to matter, he said. One size does not fit all, and each partnership must be designed according to its specific goals. This does not mean, however, that program planners should attempt to pre-determine how to reach those goals. In fact, he said, failure is almost always the result when a group attempts to over-plan.

- Competition is important throughout the process, he said, even among members of the partnership itself.

- Those projects that succeed tend to have funding commensurate with their goals; either too much or too little funding can impede progress.

- All members of a partnership should play the roles for which they are best suited, rather than assuming or being asked to play a role for which they are not institutionally or historically prepared. For example, universities are not naturally suited to play the role of venture capital firms; they are better able to facilitate interaction between the VC industry and university researchers.

- Partnerships should receive frequent, rigorous evaluation throughout their lifetime. Evaluators must have the courage and willingness to point to experimental failure or error. For some partnerships that have failed over the years, including the original Synfuels projects, the fast breeder reactor, and the supersonic transport airplane, evidence of experimental failure was sometimes ignored and yet the program was permitted to continue, and to fail. External input may force the evaluation toward greater objectivity; inbreeding can be fatal to sound assessment.12

- Partnerships must be flexible. He said that virtually no venture capital company had ever funded a company that exactly followed the business plan originally used to secure funding. The key to a successful venture, he said, is to support people who can adjust flexibly to new markets, new technologies, and unexpected experimental results.

The Need for Validation by the Market

A subtext to all public-private ventures, he said, was the need for validation by the market. There must be substantial overlap between what the project is trying to accomplish and where the relevant civilian commercial markets are headed. In past projects that failed, such as the SST, there was substantial divergence between partnership objectives (smaller planes, supersonic speeds) and what the market wanted (wide-bodies and long-haul). As technology development proceeds over time, it becomes ever more difficult and expensive to force the two sets of objectives together if they’ve diverged from the start. Reaping the full social and economic benefits of collaborations requires sensitivity to commercial market demand on the part of all partners.

The fight against bioterrorism in particular, he suggested, heralds a profound shift in U.S. defense. He cited George Poste, former chair of research and development at SmithKline Beecham, who predicted within the next several decades a time in which every cell system in the human body will be understood sufficiently to be manipulated either for good (to cure disease) or for ill (to cause harm). Consequently, said Mr. Borrus, the nation faces no choice but to develop something new: a “true biodefense industry.”

In doing so, he said, we are on the verge of attempting something not done since the post-World War II years: to coordinate public spending, academic research input, research from the national labs, and the activities of private industry to build defense capability. Today this capability will be in biodefense; 50 years ago, it was in conventional and nuclear deterrents. At that time, we made the choice to prevail on the basis of superior technology rather than superior numbers. And today, he said, we have the same choice, and we need to make the same decision. “We need to differentiate our performance in the biodefense realm on the basis of our superior technology, not in any other way.”

He went on to demonstrate that partnerships were essential in developing our defense 50 years ago, and should be so again in developing biological capabilities.

Again, 50 years ago, partnerships brought tremendous technology-transfer benefits to civilian society, in addition to building a superior military capability. Today, he said, partnerships in biology have the potential to deliver benefits of the same order. To detect a biothreat, for example, one needs early warning, preferably at a point of care, so intervention can begin immediately. One also needs early intervention to contain the threat before it can spread. He suggested that such early detection and diagnosis are precisely what civilian society needs to maintain a strong health care system for the United States.

“If we do this right,” he concluded, “by learning lessons from past public-private partnerships and applying them systematically to build the best biodefense capability, we also have the hope of improving our civilian health system and generating commercial leadership across a range of new technologies. We should consider the presentations today, and the panel, to be a modest but essential contribution to getting it right.”

DISCUSSION

Anne Solomon, of the Center for Strategic and International Studies in Washington, commented that the relationship between partnerships and commercial markets was complex, and wondered what kinds of markets there would be for some of the technologies produced. She suggested two possible sources: government acquisition, and the commercial market, including sectors that control technologies, or companies that may want the products. She said that the CSIS had been developing a long-term strategy for bioterrorism countermeasures. The problems they found were enormous, she said, especially those having to do with the market and with the government’s ability to articulate what it wants; a reluctance to talk about vulnerabilities for fear of liability; and competition, in the sense that one company hesitates to invest in protection unless its competitors also invest. How, she asked, would venture capital firms react to a company that invested in R&D that was aimed at counter-bioterrorism products if there was no known commercial market?

Mr. Borrus responded that if the potential reward was commensurate with the risk that needed to be taken, it would be taken. He noted as an aside that liability issues per se had not restrained financial misconduct at Enron Corp. because the potential for gain was so high.

A participant observed that the government may have to play a lead role in stimulating a market for biodefense products. This would not necessarily be bad, she said, especially since “companies almost always misjudge the market in terms of timing.”

Dr. Bement added that the issue of product liability was central to the mission of NIST in the sense that standards and measurements are fundamental to improving reliability and reducing risk. “The whole infrastructure in standards development,” he said, “is pretty much aimed at reducing product liability.” Also, he said, even though public sector intervention is normally intended to address “market failure,” this is a very subjective term. “It’s in the eyes of the beholder,” he said, “and something we don’t know how to deal with very well.”

Dr. Wessner pressed Dr. Flamm about the evidence that SEMATECH was regarded positively—that it had maintained its membership and added international membership, that companies had continued to pay their dues, and that within the industry it was considered to have a positive impact. He asked whether there was any additional “proof of success,” and whether SEMATECH had been the model for the similar European consortia.

The Difficulty of Proving Successes

Dr. Flamm replied that it is difficult to prove success “when the target is so broad,” that is, restoring the competitiveness of manufacturing processes within U.S. companies. So many forces contribute, he said, that the idea of isolating a single causative factor is “basically impossible,” unless one can actually measure inputs and outputs, which is “relatively hard to do for an entire industry.” He argued that the only useful yardstick is the perceptions of the people involved: Those who provided the funding perceived that the program was worth supporting. In addition, Japan had explicitly modeled its program on SEMATECH. “There’s great irony there,” he added, “because to some degree SEMATECH was a response to Japanese programs of the 1980s.” He concluded that most industrialized countries now have some program resembling SEMATECH.

Mr. Borrus agreed, adding that the strongest indication that SEMATECH added value to the industry was the willingness of industry to fund it after government support ended.

David Peyton, of the National Association of Manufacturers, posed a question for Dr. Gabriel. Referring to recent testimony before the Senate Commerce Committee on nanotechnology, he described the opinion of a senior scientist from Hewlett-Packard that U.S. universities had become more difficult to partner with on sponsored research, because they insisted on tougher policies on intellectual property rights. He asked whether in fact leading research universities were trying harder to retain patent ownership, and whether it would be possible to develop a balance that would allow universities to spawn new businesses and major R&D companies to benefit from research done at universities.

Adjusting to the Age of Intellectual Property

Dr. Gabriel said that universities were indeed becoming more savvy than they had been in the early 1980s, and that IP was “a tough domain.” Companies had once approached universities, she said, with the expectation that universities knew little about IP issues. As universities entered this domain with IP offices of their own, they had been “stumbling sometimes” as they moved along the learning curve to navigate the constraints of nonprofit law, IRS rulings, export controls, and the preservation of an open research environment. But she pointed out that companies also find each other hard to deal with, because intellectual property issues are “inherently contentious.” “This is just a fact of life,” she said, “and we’re all going to have to learn from each other about how to make it easier.”

Fred Adler, of WDC USA World-Wide, who identified himself as a former colleague of Dr. Flamm at the Department of Defense, said that he had been in Tokyo during the Sarin nerve gas attack on subway passengers. He asked whether it would be profitable to partner with the Japanese, on the assumption that the Sarin attack had served as a “technology accelerator.” He also asked how public-private partnerships might be arranged with Japan, especially in the context of the planned global disaster information network.

Dr. Flamm agreed that there are strong anti-terrorism resources outside the United States, especially in Europe and Israel, and said he hoped that we are tapping these resources. Mr. Adler added that there is good technology in many places, but that few of them have sufficient funding for investment.

Mr. Borrus said that “we have no choice but to partner; knowledge is too widespread around the world for us to do it ourselves.” He noted that patterns of direct foreign investment already show that private companies know this and are exploiting technical specialization around the globe. He urged efforts to locate partnerships in the U.S. whenever possible so as to gain the most value from knowledge spillovers.

Dr. Bement closed the discussion by observing that NIST partnered with about 40 national metrology institutes around the world, bringing many of their members to NIST as guest scientists. He found that some of them, especially those from Japan, contributed valuable insights through their strengths in analytical chemistry, detection technology, and other fields.