2

A Systems Approach to Assessment

Although the science assessment that is put in place by states specifically to meet the No Child Left Behind (NCLB) requirements will constitute but a small fraction of all of the science assessment that is conducted in schools and classrooms across a state, it will exert a powerful effect on all aspects of science education. The committee concludes that carefully considering the potential nature of this effect up front, as NCLB assessment strategies are being developed, would help states meet the requirements of the law and, at the same time, lessen the unintended and possibly undesired effects on students’ science education. Thus, the committee recommends that states take a systems approach and consider the design of science assessment in its context as part of the larger education system, not as an entity that operates in isolation.

This chapter begins with a discussion of what a system is and what it means to take a systems approach to assessment. It then describes the reasoning that underlies the committee’s conclusion that states should meet NCLB requirements by developing a system of assessment that incorporates multiple measures and a range of assessment strategies. We then discuss the importance of coherence in the science education system and, within it, in the science assessment system. The chapter concludes with summaries of some possible configurations for assessment systems to meet NCLB requirements.

CHARACTERISTICS OF SYSTEMS

The term “system” is used frequently in discussions of education; people speak of educational systems, instructional systems, assessment systems, and others, but it is often unclear what they actually mean by the term. In recommending that states take a systems approach to science assessment, the committee means that assessment must be understood in terms of the ways it works within the education system and the ways in which the parts of the assessment system interact.

Systems have several key characteristics:

- Systems are organized around a specific goal;
- Systems are composed of subsystems, or parts, that each serve their own purposes but also interact with other parts in ways that help the larger system to function as intended;
- The subsystems that comprise the whole must work well both independently and together for the system to function as intended;
- The parts working together can perform functions that individual components cannot perform on their own; and
- A missing or poorly operating part may cause a system to function poorly, or not at all.

Systems and subsystems interact so that changes in one element will necessarily lead to changes in others. Systems must work to strike a balance between stability and change, and they need to have well-developed feedback loops to keep the system from over- or underreacting to changes in a single element. Feedback loops occur whenever part of an output of some system is connected back to one of its inputs. For example, when teachers identify difficulties students are having with a concept and adjust their instructional strategies in response, which in turn causes students to approach the concept in a different way, a feedback loop has worked effectively. As we will discuss in Chapter 8, evaluation and monitoring—which are essentially assessment of the assessment system—provide another source of feedback that can shape the ways in which the assessment system functions within the education system.

THE SCIENCE EDUCATION SYSTEM

The goal of a science education system is to provide all students with the opportunity to acquire the knowledge, understanding, and skills that they will need to become scientifically literate adults (science literacy is discussed further in Chapter 3). In a standards-based education system, the state goals are articulated in the standards. Thus, well-conceived standards are key if the science education system is to achieve its goals; we discuss the nature of high-quality standards in Chapter 4.

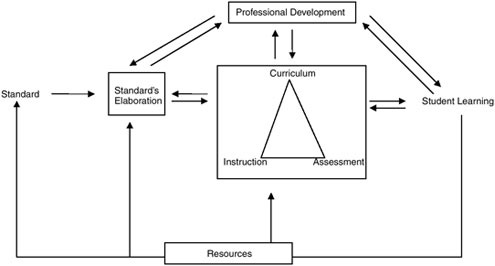

The science education system is part of a larger system of K–12 education and is, itself, comprised of multiple interacting systems. These other systems include science curriculum, which describes what students will be taught; science instruction, which specifies the conditions under which learning should take place; and

FIGURE 2-1 Conceptual scheme of a science education system.

teacher preparation and professional development, which are critical for the proper functioning of many other elements. Each of these systems is also subject to other influences—as for example when teacher preparation policies are influenced by professional societies, accrediting agencies, institutions of higher education, and governmental agencies or when legislators pass laws to influence what is taught in the schools. The committee’s conception of a science education system is illustrated in Figure 2-1.

An additional source of complexity is that science education systems function at multiple levels: classroom, school, school district, state, and national. Moreover, because comparisons among educational priorities and achievement results around the world are often sought, international influences also affect science education. Public reactions to results from international comparisons of educational achievement, such as the Third International Mathematics and Science Study or the Programme for International Student Assessment, highlight the influence of the international community on science education in the United States.1

1 The committee commissioned a paper from Dylan Wiliam, Educational Testing Service, and Paul Black, King’s College, England, to explore the nature of science education in other countries and its effects on science assessment and achievement. The paper, “International Approaches to Science Assessment,” is available at http://www7.nationalacademies.org/bota/Test_Design_K12_Science.html.

A science education system is thus responsive to a variety of influences: some that emanate from the top down, and others that work from the bottom up. States and school districts generally exert considerable influence over science curricula, while classroom teachers have more latitude in instruction. The federal government and states tend to determine policies on assessment for program evaluation and accountability, while teachers have greater influence over assessment for learning. Thus, for the education system to maintain proper balance, adjustments must continually be made among curriculum, instruction, and assessment not only horizontally, within the same level (such as within school districts), but also vertically, through all levels of the system. For example, a change in state standards would require adjustments in assessment and instruction at the classroom, school, and school district levels.

Coherence in the Science Education System

A successful system of standards-based science education is coherent in a variety of ways. It is horizontally coherent in the sense that curriculum, instruction, and assessment are all aligned with the standards, target the same goals for learning, and work together to support students’ developing science literacy. It is vertically coherent in the sense that there is a shared understanding at all levels of the system (classroom, school, school district, and state) of the goals for science education that underlie the standards, as well as consensus about the purposes and uses of assessment. The system is also developmentally coherent, in the sense that it takes into account what is known about how students’ science understanding develops over time and the scientific content knowledge, abilities, and understanding that are needed for learning to progress at each stage of the process.

Coherence is necessary in the interrelationship of all the elements of the system. For example, the preparation of beginning teachers and the ongoing professional development of experienced ones should be guided by the same understanding of what is being attempted in the classroom as should the development of curriculum, the setting of goals for instruction, and the design of assessments. The reporting of assessment results to parents and other stakeholders should reflect these same understandings, as should the evaluations of effectiveness built into all systems. Each student should have an equivalent opportunity to achieve the defined goals, and the allocation of resources should reflect those goals. Each of these issues is addressed in greater detail later in the report, but we mention them here to emphasize that a system of science education and assessment is only as good as the effectiveness and alignment of all of its components.

While state standards should be the basis for coherence, and should serve to establish a target for coordination of action within the system, many state standards are so general that they do not provide sufficient guidance about what is expected. Thus, each teacher, student, and assessment developer is left to decide independently what it means to attain a standard.2 This situation could lead to curriculum, instruction, and assessment working at cross purposes. As will be discussed in Chapter 4, better specified standards can assist states in achieving coherence among curriculum, instruction, and assessment.

THE SCIENCE ASSESSMENT SYSTEM

Science assessment is a primary feedback mechanism in the science education system. It provides information to support decisions and highlight needed adjustments. Assessment-based information, for example, provides students with feedback on how well they are meeting expectations so they can adjust their learning strategies; provides teachers with feedback on how well students are learning so that they can more appropriately target instruction; provides districts with feedback on the effectiveness of their programs so they can abandon ineffective programs and promulgate effective ones; and provides policy makers with feedback on how well policies are working and where resources might best be targeted so appropriate decisions can be made. Assessment practices also communicate what is important and what is valued in science education and, in this way, exert a powerful influence on all other elements in the science education system.

Collecting data about student achievement is very important to states, and the results must be accurate and valid for the specific purposes for which they will be used (American Educational Research Association, American Psychological Association, and National Council on Measurement in Education, 1999). Researchers in educational measurement have established that, in general, a single assessment cannot support valid interpretations for a variety of purposes equally well. Given this reality, multiple assessment strategies, or a single assessment consisting of multiple components, are required to supply the assessment-based information needed within the system. Incorporating multiple assessment strategies into a system is important, but, as Coladarci et al. (2001)3 note, a set of strategies does not necessarily constitute a system any more than a pile of bricks constitutes a house. Just as the science education system must be coherent, the assessment system must be coherent as well.

Coherence in the Science Assessment System

Educators at each level of the education system use a variety of assessment strategies to obtain the information they need, and these strategies can take many forms and can serve both summative and formative purposes. Formative assessment provides diagnostic feedback to teachers and students over the course of instruction and is used to adapt teaching and learning to meet student needs. Formative assessment stands in contrast to summative assessment, which generally takes place after a period of instruction and requires that someone make a judgment about the learning that has occurred.

2 Many state standards are written using terms such as “students will understand” and “students will know” (see Chapter 4 of this report).

3 Downloaded on April 15, 2005 at: http://mainegov-images.informe.org/education/g2000/measured.pdf.

While any assessment can provide valuable information, the multiple forms of assessment that are used within a district or state are typically designed separately and thus do not cohere. This situation can yield conflicting or incomplete information and send confusing messages about student achievement that are difficult to untangle (National Research Council, 2000a). If discrepancies in achievement are evident, it is difficult to determine whether the tests in question are measuring different aspects of student achievement—and are useful as different indicators of student learning—or whether the discrepancy is an artifact of assessment procedures that are not designed to work together. Gaps in the information provided by the assessment system can lead to inaccurate assumptions about the quality of student learning or the effectiveness of schools and teachers.

For a science assessment system to support the goals of the science education system, it must be coherent within itself and with the larger system of which it is a part. Thus, it must be tightly linked to curriculum and instruction so that all three elements are directed toward the same goals. Moreover, assessment should measure what students are being taught, and what is taught should reflect the goals for student learning articulated in the standards. The assessment system must be characterized by the same three kinds of coherence—horizontal, vertical, and developmental—that are needed in the system as a whole.

In a coherent assessment system, assessment strategies that are designed to answer different kinds of questions, and provide different degrees of specificity, can provide results that complement one another. For example, a classroom assessment designed by a teacher might provide immediate feedback on a student’s understanding of a particular concept, while an assessment given throughout the state might address mastery of larger sets of related concepts achieved by all students at a particular grade level. The results might look different in the way they are expressed, but because they would both be linked to the shared goals that underlie the assessment system, they would not cause confusion. In a coherent system, even information that seems contradictory is useful, because it may shed light on an important aspect of achievement not tapped by other measures.

When there is no system to guide the interpretation of assessment results, data from any one assessment can be taken out of context as justification for action. This may cause the education system to over- or underreact to assessment results in ways that are disruptive to the education system and student learning. For example, if the state indicates that it values the results of a particular test and makes known its plans to base important decisions on the results, schools and school districts are likely to adjust their efforts to make sure that scores on that test improve. Teachers and administrators may focus on teaching the knowledge and skills that are assessed while deemphasizing those that are not tested. Similarly, students may focus on mastering those things that will lead to better test performance. The result may be to turn a single, narrowly focused assessment into the de facto curriculum. This situation is undesirable even when the assessment is aligned with the standards, because no single assessment, no matter how well designed, can tap into the complexity of knowledge and skills that are necessary for developing students’ science understanding. Evidence that teaching to a single test has contributed to the narrowing of curricula in many jurisdictions is discussed in Chapter 9. Box 2-1 outlines some important characteristics of a high-quality science assessment system.

MULTIPLE MEASURES AND A RANGE OF MEASUREMENT APPROACHES

The committee recommends that states take a systems approach to science assessment by developing a system of assessment that incorporates multiple measures and a range of assessment strategies. Box 2-2 describes some of the possible approaches states could incorporate into their systems. The list is not meant to be exhaustive, and the committee does not specify which of these strategies would be most useful for states to use; rather, states must determine which strategies are most likely to produce the information that they need to fully assess students’ achievement of the state goals.

NCLB requirements support the committee’s position that multiple assessment strategies should be used (see Box 1-1). Although NCLB advocates the use of “multiple measures” and a “range of measurement approaches,” these terms can mean different things in different contexts. For example, the term multiple measures is used in the context of high-stakes testing to mean that when important decisions about individuals are to be based on test results, they should not be made on the basis of the results of a single test. The concept of multiple measures has expanded over the years and now also refers to the array of assessment approaches included in an assessment program, rather than to the number or kinds of tests that any one student is asked to take.

The committee recommends that states incorporate both multiple measures and a variety of measurement approaches in their science assessment systems for two primary reasons, the second of which has several components. First, as discussed above, different kinds of information are needed at each level of the education system to support the many decisions that educators and policy makers need to make: for example, assessing the status or level of student achievement for the purposes of monitoring progress in the classroom, evaluating programs at the district level, or providing information for accountability purposes at the state level. No one assessment instrument could reasonably supply, for example, long-term trend data regarding the achievement of population subgroups statewide, ongoing feedback to support instruction and learning in the classroom, information about students’ mastery of a topic they have just been taught, and comparative data that could be used to evaluate a new instructional strategy. Yet these are only a few of the types of information needed within the system.

BOX 2-1 The following are characteristics of an assessment system that could provide valid and reliable information to the multiple levels of the education system and support the ongoing development of students’ science understanding:

NCLB requires that assessment-based information be used for multiple purposes. Explicitly, it is to be used both to hold schools and districts accountable for student achievement and to provide interpretive, descriptive, and diagnostic information that parents, teachers, and principals can use to understand and address individual students’ specific academic needs. Tests intended to serve diagnostic functions for individuals are likely to include different kinds of items and tasks than tests designed to monitor a system’s progress toward system goals (Millman and Greene, 1993). Thus, while both individual and group-level information may be needed to achieve the overarching goals of NCLB, it is not likely that a single assessment, consisting of a single component, could provide this information and meet professional technical standards for validity and fairness for each purpose for which the results will be used.

BOX 2-2

SOURCE: Adapted from Science Assessment and Reporting Support Materials, 1997, Department of Education, Victoria. Available: http://www.eduweb.vic.gov.au/curriculumatwork/science/sc_assess.htm.

The second reason is that, as discussed in Chapter 3, science achievement is dependent on students’ developing a broad and diverse set of knowledge and skills that cannot be adequately assessed with a single assessment or a single type of assessment strategy. Indeed, some critical aspects of science, such as the ability to conduct a sustained scientific investigation, cannot be tested using traditional paper-and-pencil tests (Quellmalz and Haertel, 2004; Champagne, Kouba, and Hurley, 2000; Duschl, 2003). Furthermore, learning science requires different kinds of knowledge and applications of knowledge. A growing body of evidence indicates that some types of measurement approaches may be better suited than others for tapping into these different aspects of knowing. For example, creating multiple-choice items that are indicators of the reasoning that students use in arriving at their answers can be very difficult, while this aspect of learning may be measured more easily using other formats.

Another concern raised by the complexity of the science domain relates to the role of assessment in signaling to teachers, students, and the public what is valued in science and what should be the focus of teaching and learning. A single assessment, no matter how well designed, cannot capture the breadth and depth of the science that is included in most state standards. Similarly, it would be difficult for a single assessment to be fully aligned with states’ content and performance standards, as is required by NCLB. There is also some suggestion that a single state standard may be too complicated to assess with a single assessment strategy.

Some states, such as Maryland, California, and Kentucky, tried to develop assessment systems that are more fully aligned with their goals for student learning than previous assessments were. All three used a matrix sample design in which all students take the test, but each student is tested on only a small subset of all of the items. This allows a larger sample of the instructional program to be assessed, which helps states both to align the assessment with the full breadth and depth of their standards and to discourage narrowing of the science curriculum. These states also incorporated multiple measurement strategies, such as performance tasks and collaborative student work, into their systems. However, we note that states that instituted this design have been forced by public pressure for individual scores to abandon these programs. For a similar reason, a model that included only a matrix-sample test could not, by itself, be used by a state to meet NCLB requirements, which call for reporting individual results that are diagnostic, descriptive, and interpretive.
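To make the matrix-sample idea concrete, the following Python sketch splits a hypothetical pool of items into non-overlapping blocks. It rotates those blocks across students so that each student answers only one block, then pools responses to report percent correct for every item. The item and student identifiers, the simple round-robin assignment, and the function names are assumptions made for illustration; operational programs typically rely on more elaborate block designs and statistical scaling. Note what the sketch does not produce: a score for each student on the full set of items. That missing output is why a matrix-sample test alone cannot supply individual results.

```python
from collections import defaultdict


def build_forms(item_ids, n_forms):
    """Split the full item pool into n_forms non-overlapping blocks, one per test form."""
    return [item_ids[i::n_forms] for i in range(n_forms)]


def assign_forms(student_ids, forms):
    """Rotate forms across students so that each student takes only one block."""
    return {s: forms[i % len(forms)] for i, s in enumerate(student_ids)}


def item_percent_correct(assignments, responses):
    """Group-level result: percent correct on each item, pooled over the students who saw it.

    responses maps (student, item) to 1 for a correct answer and 0 otherwise.
    """
    tally = defaultdict(lambda: [0, 0])  # item -> [number correct, number administered]
    for student, items in assignments.items():
        for item in items:
            tally[item][0] += responses[(student, item)]
            tally[item][1] += 1
    return {item: 100.0 * right / given for item, (right, given) in tally.items()}


# Hypothetical example: 12 items split into 4 forms of 3 items each, given to 8 students.
items = [f"Q{n}" for n in range(1, 13)]
students = [f"S{n}" for n in range(1, 9)]
forms = build_forms(items, 4)
assignments = assign_forms(students, forms)
```

In this example, every item is answered by two students and each student sees only 3 of the 12 items. Even so, the pooled results cover the whole pool, which is the property that lets a matrix sample reflect the full breadth of the standards.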

Finally, multiple measures are needed to provide a complete and accurate picture of students’ science achievement. Any one test, task, or assessment situation is an imperfect measure of what students understand and can do. Including different types of measures in the system also can provide opportunities for different types of learners to demonstrate their achievement. In addition, multiple measures directed at the same standard can paint a richer picture of student achievement and can tap into the complexity of each science standard more fully.

THE NEED FOR AN ASSESSMENT SYSTEM TO MEET NCLB REQUIREMENTS

The committee concludes that meeting NCLB requirements will necessitate that states develop either one test with multiple components or a set of assessment strategies that collectively provide the needed assessment-based information. The components necessarily will vary according to state priorities and goals. For example, some states may choose to develop a single hybrid test in which students take an individual core assessment and also participate in a large-scale assessment with a matrix sample design that provides information about groups of students. Other states may choose to combine standardized classroom assessment with a large-scale, matrix-sample assessment. Still others may decide not to develop a statewide test at all and may opt instead for one of many local, district, or mixed models of assessment that combine local, district, and state assessments.

Some states may decide to meet NCLB requirements by allowing districts to develop their own content standards and assessments. In this model, districts adopt or develop challenging academic content standards, develop or use existing assessments that are aligned to these standards, and set achievement standards. Other states may choose a mixed approach, creating mechanisms whereby the state and school districts work together to create and implement assessments that supply the information the state test lacks.

The specific components of a science assessment system will vary for many reasons, including available resources, political influences, the purposes for which the system is being created, and the needs of the audiences that will use the results.

FOUR SAMPLE ASSESSMENT SYSTEM DESIGNS

Recognizing that no one conception of an assessment system would fit every state, the committee commissioned four different groups of science educators and researchers to develop the outlines of assessment systems that would not only meet the criteria laid out in NCLB, but also meet a variety of other criteria. These designs are just a few of the many possible configurations that states could adopt. The charge to these design teams is included in Appendix B. For consistency, each team was instructed to use the National Science Education Standards (NSES) as the basis for its model, though the committee recognizes that state standards are designed to meet somewhat different goals than those that guided the development of the NSES. Appendix B provides lists of the participants in these design teams. We summarize here their key findings.

Instructionally Supportive Accountability Tests

One of the four teams was asked to develop a system with a focus on the application of the 2001 recommendations of the Commission on Instructionally Supportive Assessments. That commission determined that a significant problem with most high-stakes tests is that they are expected to provide information on student mastery of exhaustive science standards—an expectation that is unreasonable and will lead to a variety of serious deficiencies in the assessment. In brief, the commission advocated that tests:

- Measure students’ mastery of only a modest number of extraordinarily important curricular aims,
- Describe what was to be assessed in language that is entirely accessible to teachers, and
- Report results for every assessed curricular aim.

The design team chose to apply these three principles just in the context of the NSES standards for science-as-inquiry in physical science, which could serve as an example for the way it might be done for an entire set of science standards. With guidance from educators, physicists, and chemists, the team developed a set of strategies for implementing the commission’s recommendations. Their strategies were based on the assumption that states would use a 90- to 100-minute assessment once in each grade band to meet the NCLB requirements.

The team found that it could winnow the curricular aims in the NSES related to physical science considerably, and it organized them in a matrix of cognitive skills (such as identifying questions, designing and conducting an investigation, etc.) and significant concepts (such as forces and motion, forms of energy and energy transfer, etc.). The team recognized that some of the elements on the matrix would overlap with those for other science disciplines, such as life sciences, which would help streamline the ultimate results. Each cell in the matrix would include examples of test items or other assessment tactics that could measure the concepts and skills well, in addition to suggested instructional strategies.

Although the interrelationships in the matrix helped the team reduce the total number of critical concepts, the team was not able to winnow the set down to a number that could be assessed with reasonable accuracy using an annual test. Because even this reduced set covered only one aspect of one scientific discipline, a strategy for assessing the “genuinely irreducible” number of key concepts was needed.

The team’s solution was to recommend that the key concepts be rotated on an unpredictable basis, so that each would be eligible for inclusion on the assessment in any given year, though no single assessment would include all of them. In this way, teachers would continue to view all of them as important. The team recognized, however, that student progress toward mastery of the crucial concepts should be monitored in other ways as well, and included optional classroom assessments of key concepts and skills, perhaps standardized instruments developed at the state level, in their model.
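The rotation strategy can be sketched in a few lines of Python. Each year, a fixed number of concepts is drawn at random, without replacement, from the full list of key concepts. Every concept is therefore equally eligible every year, but no single test covers them all. Only the first two concept labels come from the team's matrix described above; the remaining labels, the number drawn per year, and the function names are hypothetical. The design team did not specify a selection procedure, so simple random sampling here is an assumption. A seeded generator is used only so the illustration is reproducible. An operational program would instead keep its selections unpredictable, for example by passing `random.SystemRandom()` as the generator.

```python
import random

# Hypothetical concept list; only the first two labels appear in the team's matrix.
KEY_CONCEPTS = [
    "forces and motion",
    "forms of energy and energy transfer",
    "concept C",
    "concept D",
    "concept E",
    "concept F",
    "concept G",
    "concept H",
]


def draw_test_concepts(concepts, k, rng):
    """Return k distinct concepts for one year's test.

    Every concept has the same chance (k / len(concepts)) of appearing in any
    given year, so none can be safely dropped from instruction.
    """
    if not 0 < k < len(concepts):
        raise ValueError("k must be positive and smaller than the number of concepts")
    return rng.sample(concepts, k)


def years_until_all_tested(concepts, k, rng, max_years=100):
    """Count the years of rotation needed before every concept has appeared at least once."""
    seen = set()
    for year in range(1, max_years + 1):
        seen.update(draw_test_concepts(concepts, k, rng))
        if len(seen) == len(concepts):
            return year
    return None  # not every concept appeared within max_years


rng = random.Random(2005)  # seeded only so this illustration is reproducible
this_year = draw_test_concepts(KEY_CONCEPTS, 3, rng)
```

Running `years_until_all_tested` with different generators shows how many annual administrations may pass before every concept has been tested at least once. This gap is one reason the team paired the rotation with classroom monitoring of the same concepts.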

Other characteristics of the model included an emphasis on the assessment of concepts over skills, a reliance on multiple-choice items supplemented by a small proportion of constructed-response items, and the incorporation of targeted professional development designed to assist teachers in gaining optimal instructional insights from the assessments.

A Classroom-Based Assessment System for Science

Another design team was asked to develop a strategy for building a coherent, instructionally useful, teacher-led assessment program that would meet the NCLB requirements. Their principal goal was that assessment should have the effect of informing instruction and improving student achievement. The team took as its starting point the Nebraska STARS assessment system, which uses classroom-based assessments for accountability purposes. The team identified professional development as the critical component in the system, with the following specific goals:

- Teachers must understand the state content standards and incorporate them into their work,
- Teachers must be able to develop instruments to gather information about their students’ performance relative to the standards at the classroom level, and
- Teachers’ reports of their students’ achievement can be collected and used in meeting NCLB’s accountability requirements.

The model includes criterion-referenced assessments administered in the classroom and developed by teachers, with guidance provided through peer groups and other supports. These assessments, while embedded in regular classroom activities, would yield classifications of students into at least the three categories required by NCLB: basic, proficient, and advanced. Because the results would be reported in terms of these predetermined performance categories, they could be aggregated across the state, and could be disaggregated by student subgroups. The assessments would also yield diagnostic information about individual students that could be immediately useful to students and teachers, as well as other kinds of information needed by stakeholders at each level of the system.
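Because every classroom result is reported in the same predetermined categories, aggregation and disaggregation reduce to counting. The Python sketch below assumes that each teacher report has already been reduced to a record holding a student's performance level plus descriptive fields. The field names (`district`, `subgroup`, `level`) and the records themselves are hypothetical. The sketch does not address the harder problem the team flagged: calibrating what "proficient" means across teachers and districts.

```python
from collections import Counter, defaultdict

LEVELS = ("basic", "proficient", "advanced")  # the three NCLB performance categories


def percent_by_level(records, group_by=None):
    """Percent of students at each level, statewide (group_by=None) or per value of a field."""
    counts = defaultdict(Counter)
    for record in records:
        level = record["level"]
        if level not in LEVELS:
            raise ValueError(f"unknown performance level: {level!r}")
        key = "statewide" if group_by is None else record[group_by]
        counts[key][level] += 1
    return {
        key: {level: 100.0 * c[level] / sum(c.values()) for level in LEVELS}
        for key, c in counts.items()
    }


# Hypothetical teacher-reported classifications from two districts.
reports = [
    {"district": "A", "subgroup": "G1", "level": "basic"},
    {"district": "A", "subgroup": "G2", "level": "proficient"},
    {"district": "B", "subgroup": "G1", "level": "proficient"},
    {"district": "B", "subgroup": "G2", "level": "advanced"},
]
statewide = percent_by_level(reports)
by_subgroup = percent_by_level(reports, group_by="subgroup")
```

Calling the same function with `group_by="district"` yields district-level summaries from the same classroom records.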

The team saw considerable potential benefits to a classroom-based system led by teachers, ranging from the integration of standards, instruction, and assessment to the empowerment of teachers and potential cost savings. However, the team noted challenges as well. Calibrating the expectations for achievement of different districts and teachers is not easily done. Some costs may be reduced, but others, particularly for professional development, will likely be higher. They also acknowledged that this assessment model is not, as they put it, “psychometrically pristine.” They noted that in a system where districts and teachers are given considerable freedom to devise assessment strategies on their own, a system for evaluating and documenting the technical quality, and the content validity, of classroom-based assessments is critical to the integrity of the enterprise. Despite these and other challenges, the team was convinced that such a system could meet NCLB requirements and provide significant other benefits as well.

Models for Multilevel State Science Assessment Systems

A third design team was asked to explore two possible means of meeting the NCLB requirements for science: using collaboration among states to minimize the burden of developing new strategies, and using technology in new ways to streamline and improve work ranging from developing innovative assessment tasks to scoring and data analysis. The team focused on identifying key ways in which interstate collaboration and technology could be harnessed, and went on to develop two models that illustrate different ways of implementing the features they identified.

The team described its plan as a multilevel, articulated science assessment system that would build and draw upon banks of items and tasks designed according to common specifications. States that chose to participate would share resources to build the banks and draw from them to build individual or shared state science assessments. The item and task pools would be aligned with each participating state’s standards, yet represent joint efforts to address standards that the states identified as high priority. Teachers and professional development teams would share responsibility for the pools and work together both to maintain their quality and utility and to engage in a process of ongoing professional development.

Model 1, the State Coalition for Assessment of Learning Environments Using Technology (SCALE Tech), focuses on collective development of means to measure the full range of challenging science standards, and the use of technology throughout the system. Skills and concepts that are particularly difficult to measure could be targeted using strategies—such as simulations and other advanced technologies, tailored reporting of results, and tasks that call on students to design investigations and do other scientific work—that might seem out of reach to a single state on its own.

Model 2, the Classroom Focused Multi-Level Assessment Model, by contrast, focuses on assessments that are embedded in classroom activities. Like Model 1, it draws on collaboration to spread out resources and costs and on technology to increase efficiency, but it allows teachers to use assessment flexibly, as a formative tool. The program would offer modules that teachers could adapt to meet their own instructional needs as well as administrators’ needs for information to support decision making.

The team provided examples of ways technology can support assessment and, for both models, presented ways to implement them incrementally to suit states’ individual needs. The team identified four elements as essential to successful collaboration: a clear, shared mission that meets the needs of each participating state; realistic expectations of what is to be accomplished; a governing board with decision-making authority; and expert advisors to assist in maintaining quality.

Psychometric and Practical Considerations

The fourth design team was asked to consider the design of a science assessment to meet the requirements of NCLB in the context of psychometric and practical considerations that states are likely to face. This team’s job was to think about the choices states would have—and the constraints they would face—in trying to adapt a fairly typical assessment program to the NCLB requirements, while maintaining validity and reliability. The team assumed that the basic elements of the program that could be reconfigured would include content standards, test blueprints, test items, scoring methods, measurement models, scaling and equating procedures, standard-setting methods, and reporting procedures.

After reviewing each of these elements and its implications for the outcome, the team developed a hybrid test design that incorporates a variety of elements in common use. Their aim was to develop a model that would bring simplicity and clarity to a complex domain. While the design calls for innovative items that target significant aspects of science learning, it focuses on collecting summative information of the kind typically used for accountability, with the proviso that formative data would be collected separately, through classroom assessments and other tools.

The design uses a matrix-sampling model similar to that used in the National Assessment of Educational Progress, in which different students take different test forms, each containing a portion of the item pool, so that a broad content domain can be covered without any one student answering every item. This design also allows for the inclusion of sets of items that can be used to compare performance among schools and districts over time. A version of vertical scaling, in which assessments at different grades are linked through items that measure common content, allows growth to be monitored over time. Moreover, different subsets of the test forms could focus on different aspects of the domain; as a result, no single test administration would cover the whole domain, but that fact would not license teachers or schools to neglect content not included in a given year.
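The core bookkeeping of matrix sampling can be sketched in a few lines. The example below is an illustrative assumption, not the design team’s specification or NAEP’s operational procedure, which is considerably more elaborate: a hypothetical item pool is split into six blocks, every pair of blocks becomes one test form, and forms are handed out to students in rotation (often called “spiraling”). Block labels, form size, and student counts are invented for the example.

```python
from itertools import combinations


def build_forms(blocks, blocks_per_form=2):
    """Make one test form from each combination of blocks_per_form blocks.

    With pairs, every block appears alongside every other block exactly once,
    a simple balanced incomplete block arrangement. The block labels and the
    form size are assumptions for illustration.
    """
    return [tuple(combo) for combo in combinations(blocks, blocks_per_form)]


def spiral(students, forms):
    """Assign forms to students in rotation so each form is used about equally."""
    return {student: forms[i % len(forms)] for i, student in enumerate(students)}


def block_coverage(assignment):
    """Count how many students take each block under the given assignment."""
    counts = {}
    for form in assignment.values():
        for block in form:
            counts[block] = counts.get(block, 0) + 1
    return counts


if __name__ == "__main__":
    blocks = [f"B{k}" for k in range(1, 7)]        # six hypothetical item blocks
    forms = build_forms(blocks)                    # 15 two-block forms
    students = [f"student{n}" for n in range(30)]  # 30 hypothetical students
    assignment = spiral(students, forms)
    print(len(forms), "forms")
    print(block_coverage(assignment))
```

With six blocks and 30 students, each student answers two blocks (one third of the pool), while every block is answered by ten students, so the pool as a whole is covered even though no individual sees all of it.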

The team acknowledged that the design is complex and entails demanding statistical analysis procedures, but it believes the design successfully balances the need for broad content coverage with the demand for strict comparability that arises when a significant purpose of the testing is accountability.

INTERNATIONAL EXAMPLES

In addition to the models described above, the committee sought insight from approaches to assessment that have been developed in other countries. In a study of the structure and functioning of science assessment systems in seven countries, Wiliam and Black (2004) point to an almost complete absence of a common pattern among the systems they investigated. In an effort to identify critical design issues, they reviewed assessment systems in Australia (Queensland), France, Germany, Japan, New Zealand, Sweden, and England, a set that illustrates both important differences from practices in the United States and a wide range of practices in general.

Wiliam and Black concluded that while there is no one right assessment system for all jurisdictions, nine major issues provide the greatest insight into a particular assessment system, and merit careful consideration as systems are designed. These issues are:

- Which purposes of assessment are emphasized: accountability, certifying individual achievement, or supporting learning.
- The structure of the assessment system, including the articulation between the assessments taken by students at different ages and the way achievement results are reported.
- The locus of assessment, that is, who creates the assessments, when and where they are administered, and who scores them.
- The extensiveness of assessment, that is, who will be assessed at which times, and on what basis these decisions are made.
- The assessment format, that is, multiple-choice or constructed-response tests, portfolios, or other kinds of assessment.
- Scoring models, that is, the ways in which results are combined, aggregated, reconciled, and reported.
- Issues of quality, including standards for validity, fairness, and reliability.
- The role of teachers in assessment.
- Contextual issues, that is, the relationship between assessment strategies and beliefs and assumptions about learning, education, the value of numerical data, and other matters.

As the four models and the insights from abroad presented above suggest, views of what is fundamental to an assessment system do not vary dramatically, although the forms those systems take are more variable. The models presented in this and other chapters offer many valuable ideas for states to consider.

QUESTIONS FOR STATES

Having laid out the case for taking a systems approach to assessment, the committee proposes two questions for the states to consider.

Question 2-1: Does the state take a systems approach to assessment? Are assessments at various levels of the system (classroom, school district, state) coherent with each other and built around shared goals for science education and the student learning outcomes described in the state standards?

Question 2-2: Does the state have in place mechanisms for maintaining coherence among its standards, assessments, curricula, and instructional practices? For example, does the state have in place a regular cycle for reviewing and revising curriculum materials, instructional practices, and assessments to ensure that they are coherent with each other and with the state science standards, and that they adhere to the principles of learning and teaching outlined in this report? Does the state conduct studies to formally monitor and evaluate the alignment between its standards and assessments?