4

Benefits from Improved Earthquake Hazard Assessment and Forecasting

Remarkable advances in our understanding of earthquakes and their effects have occurred during the past century. These advances have been concentrated in the period since 1960, following the installation of a global network of seismic monitoring stations, the World Wide Standardized Seismograph Network (WWSSN). Although the main purpose of the WWSSN was the monitoring of nuclear explosions, the revolution in earth science produced by the acceptance of the theory of plate tectonics in the 1960s could not have occurred without the seismic information provided by this network (a comprehensive summary of these advances was presented in NRC, 2003a). In the context of these advances, this chapter first provides an overview of the role that seismic monitoring plays in hazard assessments in the United States. These hazards include earthquake ground shaking, tsunamis generated by earthquakes, and volcanic eruptions. It then discusses the central role that seismic monitoring plays in the development of ground motion prediction models, which are a vital input into all aspects of earthquake engineering (see Chapter 6). In this context, the committee focuses on a particularly important application of seismic monitoring—the identification of locations within urban regions that are especially vulnerable to damaging earthquake ground motions (seismic zonation). This is followed by a description of the role of seismic monitoring in earthquake forecasting and prediction.

MONITORING FOR HAZARD ASSESSMENT

Each year, tens of thousands of small earthquakes occur throughout the United States, reflecting the brittle deformation of the North American plate along its edges and within its interior. Although not damaging, these smaller earthquakes provide a wealth of information that enables seismologists and engineers to better assess the distribution, frequency, and severity of seismic hazards throughout the country. Seismograph networks supply earthquake parameter and waveform data that are essential for the real-time evaluation of tectonic activity for public safety (e.g., volcanic eruptions, tsunamis, earthquake mainshocks and aftershocks), the development of earthquake hazard maps and seismic design criteria used in building codes and land-use planning decisions (e.g., characterization of seismic sources, ground failure, strong ground motion attenuation), and basic scientific and engineering research.

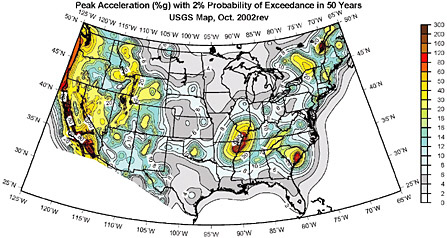

National and regional earthquake hazard maps published by the U.S. Geological Survey (USGS) and state geological surveys involve the collection and integration of seismograph network data with other geologic and geophysical data, including paleoearthquake chronologies, locations of active faults, determinations of three-dimensional velocity and geologic structure, and wave propagation and attenuation parameters. These earthquake hazard data and maps help define the level of earthquake risk throughout the United States and provide input to risk management decisions at both the national and local levels (see Chapter 2). Efforts to reduce the uncertainties in these data help to clarify the level of seismic hazard and risk and to identify the appropriate mitigation and response strategies for different parts of the country.

Earthquake Monitoring

Seismic monitoring provides a wealth of critical information for earthquake hazard assessment and for improved understanding of the earthquake process. The basic product of earthquake monitoring is the seismicity catalog, a listing of all earthquakes, explosions, and other seismic disturbances (both natural and manmade). Parametric data, such as earthquake origin times, locations, and magnitudes, are used to characterize the frequency and size of earthquakes in a particular region and help identify active faults. Earthquake catalogs play a key role in probabilistic seismic hazard assessment, especially in the eastern and central United States where there is generally insufficient detailed information on active faults and their tectonic causes (NRC, 1996).

The importance of earthquake catalogs for seismic hazard assessment underscores the need for consistent and reliable long-term recording and reporting to reduce uncertainties in magnitude, frequency, and location of earthquakes. The level of earthquake catalog completeness and location accuracy varies as a function of time and location throughout the United States. In northern California, for example, regional catalogs are thought to include every earthquake greater than magnitude 5 since about 1850 and every event of magnitude 4 and greater since about 1880. Location accuracies of pre-instrumental earthquakes are within ±50 km, based on newspaper reports and personal accounts. Instrumentally recorded earthquakes of magnitude 3 and larger began appearing in the catalog in the 1940s. With the addition of ~200 U.S. Geological Survey seismographic stations in the greater San Francisco Bay area from the late 1960s to the early 1980s (USGS, 2003a), station coverage and velocity models became sufficient to record earthquakes with magnitudes of 1 to 2, and location and depth uncertainties were reduced to less than 5 km. Currently, relative epicentral locations are estimated to be better than ±0.5 km (depth uncertainty of ±1 km) for earthquakes within the densest portions of seismic monitoring networks in California (Hill et al., 1990).
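The completeness thresholds quoted for northern California can be applied as a simple filter before a catalog is used to estimate earthquake rates. The following is a minimal sketch rather than an established procedure: the function names, the tuple layout, and the exact cutoff years are illustrative assumptions (the text gives only approximate dates such as "about 1850" and "the 1940s"), and the catalog entries are hypothetical.

```python
# Approximate completeness windows for northern California, taken from the
# text. The exact start years are assumptions, not published values.
COMPLETENESS = [
    (1850, 5.0),  # magnitude 5 and larger since about 1850
    (1880, 4.0),  # magnitude 4 and larger since about 1880
    (1940, 3.0),  # instrumental magnitude 3 and larger from the 1940s
]


def min_complete_magnitude(year):
    """Return the smallest magnitude assumed complete in `year`, or None."""
    threshold = None
    for start_year, magnitude in COMPLETENESS:
        if year >= start_year:
            threshold = magnitude
    return threshold


def filter_complete(events):
    """Keep only (year, magnitude) events that fall inside a complete window."""
    kept = []
    for year, magnitude in events:
        threshold = min_complete_magnitude(year)
        if threshold is not None and magnitude >= threshold:
            kept.append((year, magnitude))
    return kept


# Hypothetical catalog entries, for illustration only.
catalog = [(1845, 6.0), (1868, 4.5), (1868, 6.5), (1910, 4.2), (1955, 3.2)]
print(filter_complete(catalog))
```

Events that predate the first window, or that fall below the threshold for their year, are dropped so that rate estimates are not biased by periods of incomplete reporting.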

Elsewhere in the United States, efforts are under way by the USGS, through the Advanced National Seismic System (ANSS) program, to provide uniform coverage in areas not currently monitored by regional networks. Regional network operators throughout the United States have begun to implement ShakeMap capabilities in cities such as Portland, Oregon; Reno, Nevada; and Salt Lake City, Utah. The benefit of this capability was demonstrated in Seattle, Washington, where the first deployment of ANSS instruments was made a few months prior to the 2001 Nisqually earthquake (see Figure 2.1). In areas where sufficient instrumentation is still lacking, such as the central and northeastern United States, it is only possible to issue model rather than observational or empirical ShakeMaps.

Variations in network configurations with time, due to changes in instrumentation and sensitivities as well as changes in procedures for computing earthquake magnitudes and locations, introduce additional uncertainty. Improving the completeness and accuracy of these catalogs is a major objective both of seismic hazard analysis, which often depends on the occurrence of small earthquakes to identify the potential for damaging fault ruptures, and of earthquake physics, which relies on catalogs as the basic space-time record of fault system behavior.

Changes in technology during the last two decades have resulted in improvements in the detail, quality, and usefulness of seismic data through the deployment of three-component digital and strong motion sensors capable of reliable on-scale recordings over a range of earthquake sizes. The majority of strong motion networks in the United States were established prior to the ready availability of digital technology and, although still useful, do not meet the current needs of engineers and emergency management officials. As of 1999, only 6 percent of the operating seismographs in the United States could accurately record both very small and fairly large earthquakes on-scale (USGS, 1999).

FIGURE 4.1 Seismic hazard map for the United States, showing the distribution of ground motions (in %g) with a 2 percent probability of exceedance in 50 years or a 2,475-year return period.

SOURCE: Frankel et al., 2002.
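The equivalence stated in the Figure 4.1 caption, between a 2 percent probability of exceedance in 50 years and a 2,475-year return period, can be checked with a short calculation. The sketch below assumes that exceedances follow a Poisson process in time, which is a common convention but is not stated in the text; the function names are illustrative.

```python
import math


def return_period(prob_exceedance, exposure_years):
    """Return period T such that P = 1 - exp(-t / T) (Poisson assumption)."""
    return -exposure_years / math.log(1.0 - prob_exceedance)


def prob_exceedance(return_period_years, exposure_years):
    """Probability of at least one exceedance during the exposure time."""
    return 1.0 - math.exp(-exposure_years / return_period_years)


print(round(return_period(0.02, 50)))   # 2475
print(prob_exceedance(2475, 50))        # close to 0.02
```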

Digital waveform data, either weak or strong motion, are used to further improve earthquake locations, characterize seismic source and wave propagation effects, measure the state of stress in the brittle crust, and develop ground motion attenuation models. Digital waveform data also have many uses in earthquake engineering, as described in Chapter 6.

Monitoring in the Urban Environment

For close to a century, standard seismological practice has been to site delicate instruments far from urban centers and other sources of noise. Studies of weak ground motions, faint vibrations from earthquakes occurring around the globe, led to important scientific advances during the twentieth century. Recent earthquakes, however, have dramatically demonstrated the vulnerability of the urban environment to earthquake-related damage. Unprecedented growth in urban areas during the last few decades has served to increase the level of earthquake risk faster than our efforts to reduce or mitigate it. Addressing seismic hazard and risk issues in the urban environment has required a change in the standard seismological practice, with the recognition that instruments have to be installed in cities to record ground motions where the earthquake damage is occurring. Recording on-scale ground motions close to active faulting (the near field) and within structures, and obtaining a better understanding of ground response in urban areas, have become critical elements in the national goal of reducing seismic risk. The existing ground motion hazard maps, as illustrated in Figure 4.1, provide information on a national scale, and these will increasingly have to be supplemented by more detailed maps representing seismic zonation for urban areas.

Tsunami Monitoring

Tsunamis are oceanic gravity waves that may be caused by submarine earthquakes or other geologic processes such as volcanic eruptions or landslides. In the United States, tsunamis present a significant (although relatively infrequent) danger to coastal communities in California, Washington, Oregon, Alaska, Hawaii, and Puerto Rico. Seismic monitoring to detect large subduction zone earthquakes around the circum-Pacific and Caribbean regions provides valuable public safety information in advance of tsunami arrivals.

Distant tsunamis and locally generated tsunamis require responses at significantly different time scales. For local tsunamis, the ability to warn coastal communities of a potentially dangerous situation immediately after a large local earthquake is the key to public safety. Locally generated tsunamis can reach the shoreline quickly (within as little as 5 minutes), giving authorities limited time to issue any warnings or evacuations. The 1992 Mw 7.1 Cape Mendocino, California, earthquake generated a small 1-foot tsunami that reached Humboldt Bay 20 minutes after the earthquake occurred. Regional groups throughout the Pacific Northwest (such as the University of Washington, the Oregon Department of Geology and Mineral Industries, and the Bonneville Power Administration) recognize the significant local tsunami hazard posed by the Cascadia subduction zone and have begun installing strong motion instruments for real-time monitoring and warning for coastal communities.

Distant or tele-tsunamis generated from other parts of the circum-Pacific are monitored by the Pacific Tsunami Warning System, which was established in 1948 following the 1946 Aleutian (Unimak Island) tsunami. Tsunami waves travel at speeds of 800 km/h (or 0.2 km/s) at a water depth of 5,000 meters, far slower than seismic waves (3-8 km/s). This difference in wave speed makes it possible to issue tsunami warnings throughout the Pacific basin after an earthquake has been detected, but before the arrival of the tsunami. A Tsunami Watch Bulletin is released when an earthquake occurs with a magnitude of 6.75 or greater on the Richter scale. A Tsunami Warning Bulletin is released when information from tidal stations indicates that a potentially destructive tsunami exists.
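The speed contrast that makes distant tsunami warnings possible can be illustrated numerically. The sketch below uses the standard long-wave approximation, speed = sqrt(g × depth), which is not given in the text but reproduces the quoted figure of about 800 km/h at 5,000 meters. The constant-depth ocean, the 8 km/s seismic wave speed (the upper end of the quoted range), and the function names are simplifying assumptions, and the estimate ignores the time needed to detect and analyze the earthquake.

```python
import math

G = 9.81  # gravitational acceleration, m/s^2


def tsunami_speed_kmh(depth_m):
    """Long-wave (shallow-water) tsunami speed in km/h for a given depth."""
    return math.sqrt(G * depth_m) * 3.6


def warning_lead_time_hours(distance_km, depth_m=5000.0, seismic_speed_kms=8.0):
    """Gap between seismic-wave arrival and tsunami arrival at a distant site.

    Assumes a constant ocean depth along the whole path.
    """
    tsunami_hours = distance_km / tsunami_speed_kmh(depth_m)
    seismic_hours = distance_km / (seismic_speed_kms * 3600.0)
    return tsunami_hours - seismic_hours


print(round(tsunami_speed_kmh(5000.0)))            # roughly 800 km/h
print(round(warning_lead_time_hours(4000.0), 1))   # hours of lead time at 4,000 km
```

For a local tsunami the same calculation yields only minutes, which is why local and distant events call for different warning strategies.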

Although great strides have been made over the past 50 years in tsunami detection and warning, 75 percent of all tsunami warnings issued since 1948 were false alarms and evacuation was not required. The cost of evacuation to the Hawaii Gross State Product is estimated to be ~$58 million (1996 dollars) per day (Iboshi, 1996). Not only are these false alarm evacuations costly, they also erode the credibility of the tsunami warning system. Furthermore, the fear and disruption of a false alarm can itself put a population at physical risk—fatalities and injuries have occurred during an evacuation due to such things as heart attacks and accidents.

As part of the National Tsunami Hazard Mitigation Program, the National Oceanic and Atmospheric Administration (NOAA), in cooperation with the USGS and the States of Alaska, California, Hawaii, Oregon, and Washington, is expanding, integrating, and upgrading the network of seismic stations to improve tsunami warnings, reduce false alarms, and better track the source and type of earthquakes for NOAA’s tsunami warning centers at Ewa Beach, Hawaii, and Palmer, Alaska. Real-time determination of earthquake source parameters by digital seismograph networks enables faster response times for the warning centers and recognition of “tsunami earthquakes”—events that excite tsunamis that are larger than expected for their magnitude (such as the 1946 Aleutian and 1992 Nicaragua tsunamis). Improved seismic data, coupled with information from deep-sea buoys that detect water pressure changes, will enable the accurate determination of tsunami size in real time and eliminate or reduce unnecessary coastal evacuations. The recent provision of funding to enable the USGS to expand seismic instrumentation for tsunami warning and response,1 following the Indian Ocean tsunami of 2004, represents an explicit recognition by Congress of the value of seismic networks for emergency response.

Volcano Monitoring

Nearly every recorded volcanic eruption has been preceded by an increase in earthquake activity beneath or near the volcano. For this reason, seismic monitoring has become one of the most useful tools for eruption forecasting and monitoring (McNutt, 2002). Systematic volcano monitoring enabled the accurate prediction, from hours to even a few weeks in advance, of nearly all the post-May 18, 1980, dome-building eruptions of Mount St. Helens. Real-time and near-real-time seismic monitoring capabilities at numerous volcanoes around the world provide a major advance for identifying and guarding against volcano hazards. In addition to monitoring, the improved ability to locate earthquakes recorded by permanent seismic networks provides three-dimensional images of the magmatic plumbing systems beneath some volcanoes. The increasing use of broadband seismometers has facilitated the complete recording and comprehensive analysis of long-period seismic signals, which have preceded and accompanied a number of eruptions. A more quantitative understanding of long-period seismicity not only refines short-term forecasts of volcano hazards, but also improves our knowledge of magma transport and eruption dynamics.

The economic consequences of volcanism in the United States are wide and varied, ranging from the destruction associated with the May 1980 eruption of Mount St. Helens, Washington (~$1 billion in losses and 57 fatalities), to the impacts on air transportation from high-altitude ash clouds, to fluctuations in real estate values as a societal response to official warnings (e.g., Mammoth Mountain-Long Valley Caldera, California, earthquake swarms in the 1980s).

In the past 30 years, more than 90 jet-powered commercial airplanes worldwide have encountered clouds of volcanic ash and suffered varying amounts of damage as a result (Guffanti and Miller, 2002). The overall economic risk from airborne volcanic ash effects is estimated to be about $70 million per year (Kite-Powell, 2001). More than 10,000 passengers and millions of dollars of cargo fly across the North Pacific region each day, and the area’s aviation traffic is increasing at a rate of 10 percent per year (USGS, 2004). Coordinated observations, using both land- and space-based data, are needed to evaluate volcanic threats in real time. Seismic monitoring coupled with satellite observations and ash-cloud transport models enables the air transportation industry to reroute flights and avoid costly ash-cloud encounters. More than 100 potentially dangerous volcanoes lie under air routes in the North Pacific. Along the Alaska Peninsula and the Aleutian Islands there are more than 41 historically active volcanoes. As of July 2002, the Alaska Volcano Observatory operated networks at 23 of the most dangerous volcanoes in Alaska and had plans to instrument additional volcanoes to achieve the ANSS goal of having all potentially active volcanoes in the United States monitored by at least three seismograph stations within 20 km of the volcano.

MONITORING FOR GROUND MOTION PREDICTION MODELS

Earthquake engineering practice uses ground motion prediction models to estimate ground motion levels for the design of structures. These models predict the level of the ground motion of future earthquakes based on the earthquake magnitude, the distance of the site from the earthquake, and the nature of the shallow surface geology (soil or rock) at the site. In the western United States, ground motion models are based mostly on the recorded ground motions of past earthquakes. In the central and eastern United States, they are based mostly on computer simulations of earthquake ground motions derived using seismological theory (e.g., Abrahamson and Shedlock, 1997).

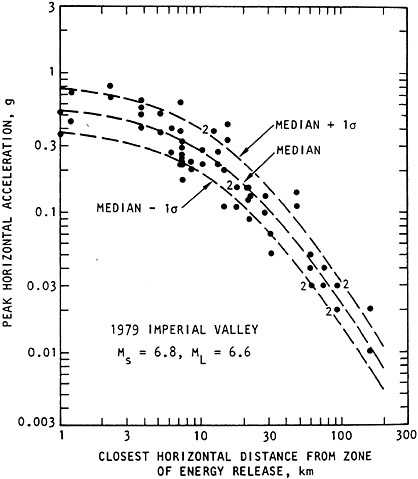

The strength of earthquake ground motion has a large degree of variability from one location to the next, even when these locations are at the same distance from the same earthquake. For this reason, ground motion models specify two measures of the ground motion level—the average value and the variability (standard deviation) about this average value (e.g., Figure 4.2). The standard deviation in ground motion models typically has values that range from a factor of 1.5 to a factor of 2, and the total range of variation can approach a factor of 10. This large degree of variability is reflected in the irregular distribution of both damage and ground shaking intensity patterns observed following earthquakes.

Use of Ground Motion Prediction Models for Building Codes and Seismic Design

Because of the uncertainty in the location and timing of future earthquakes, engineers generally take a probabilistic approach to characterizing the strength of future earthquake ground motions for seismic design at a given site. This probabilistic approach is at the core of current building code and seismic design practice (ICC, 2000; FEMA, 2001b). In this approach, the frequency with which a given ground motion level is expected to occur at a site is calculated based on consideration of the frequency of occurrence of all of the possible earthquakes that could occur on all of the faults that are close enough to affect the site. The probabilistic ground motion calculation also takes into account the variability in the level of the ground motion expected from a given earthquake.
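The probabilistic calculation described here can be sketched as a sum over earthquake scenarios of each scenario's annual rate multiplied by the probability that it produces shaking above a given level. The example below is a toy illustration under stated assumptions, not the procedure used for the national maps: ground motion is taken to be lognormally distributed about a median, and the scenario rates, medians, and the standard deviation of 0.6 in natural-log units (a factor of about 1.8) are hypothetical values.

```python
import math


def prob_exceed(level_g, median_g, sigma_ln):
    """P(ground motion > level) for a lognormal distribution about the median."""
    z = (math.log(level_g) - math.log(median_g)) / sigma_ln
    return 0.5 * math.erfc(z / math.sqrt(2.0))


def annual_exceedance_rate(level_g, scenarios):
    """Sum of (annual rate x exceedance probability) over all scenarios."""
    return sum(
        rate * prob_exceed(level_g, median_g, sigma_ln)
        for rate, median_g, sigma_ln in scenarios
    )


# Hypothetical scenarios: (annual rate, median acceleration at the site in g,
# standard deviation of ln(acceleration)).
scenarios = [
    (1.0 / 250.0, 0.40, 0.6),  # large earthquake on a nearby fault
    (1.0 / 50.0, 0.10, 0.6),   # smaller, more frequent earthquake
]

for level in (0.2, 0.4, 0.8):
    print(level, annual_exceedance_rate(level, scenarios))
```

The design level is then the ground motion at which the summed rate falls to the target annual probability, for example 1/2,500.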

In the FEMA (2001b, 2001c) National Earthquake Hazards Reduction Program (NEHRP) seismic provisions, which form the basis of current building codes, seismic design at most locations is based on the ground motion level that has a 1/2,500 chance per year of being exceeded. In seismically active areas such as the coastal regions of California, earthquakes recur on some faults as frequently as once every few hundred years. Consequently, construction design may have to accommodate the largest ground motion that would be expected from the occurrence of 10 earthquakes on such faults (roughly the number expected in 2,500 years if events recur every 250 years). If there were no variability in the ground motion level caused by a given earthquake, then the largest ground motion level from the occurrence of 10 earthquakes would be the same as that from a single occurrence, namely the average value. However, given the variability in the ground motion level, we would expect the largest ground motion level from the occurrence of 10 earthquakes to be higher than that from one, because with 10 earthquakes there is a higher probability that one of them would produce a ground motion level that is much higher than the average value for that earthquake.

FIGURE 4.2 Recorded peak accelerations of the 1979 Imperial Valley earthquake and an attenuation curve that has been fitted to the data.

SOURCE: Seed and Idriss (1982).
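The argument about the largest ground motion from 10 earthquakes can be made concrete with a short calculation. The sketch below assumes that each earthquake produces an independent, lognormally distributed ground motion with the same median, and it reports the median of the largest of N such values; the function name, the 0.4 g median, and the standard deviation of 0.6 in natural-log units are hypothetical.

```python
import math
from statistics import NormalDist


def median_of_largest(n_events, median_g, sigma_ln):
    """Median of the largest of n independent lognormal ground motions.

    P(max <= x) = P(single <= x) ** n, so the median of the maximum sits
    at the single-event quantile 0.5 ** (1 / n).
    """
    z = NormalDist().inv_cdf(0.5 ** (1.0 / n_events))
    return median_g * math.exp(sigma_ln * z)


print(median_of_largest(1, 0.4, 0.6))    # one earthquake: the median itself
print(median_of_largest(10, 0.4, 0.6))   # ten earthquakes: well above the median
print(median_of_largest(10, 0.4, 0.0))   # no variability: same as one earthquake
```

With no variability the result does not depend on the number of earthquakes, whereas with variability the expected largest value grows with each additional event, which is the effect described in the text.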

The effect of the variability in ground motion is to greatly increase the probabilistic estimates of ground motion levels at annual probabilities of 1/2,500. Near large, active faults in the western United States, the probabilistic ground motion levels are so high that engineers have decided not to use them in building codes and instead apply a cap to the ground motion levels used in the building code (FEMA, 2001b, 2001c). They have done this because the damage observed in earthquakes appears to be less than would be expected if the actual ground motions were as large as the values projected from current ground motion models and structural analysis techniques. This situation results from a fundamental lack of data that can be used to describe the strength of earthquake ground motions. Until the ground motion recordings that can resolve this issue are obtained, the ground motion levels used in building codes will continue to be based more on professional judgment than on real knowledge of ground motion characteristics. This lack of adequate strong motion recordings is hindering the continued development of performance-based design (see Chapter 6), whose goal is to develop reliable procedures for predicting the performance of structures when subjected to earthquake ground motions. This kind of predictive capability is necessary to provide a more rational basis for the cost-effective design of structures.

The Need to Record Damaging Ground Motions

The current seismic monitoring networks in the United States are designed mainly to detect earthquakes and, for that purpose, use very sensitive transducers that can record the smallest possible earthquake magnitudes. When an earthquake occurs that is large enough to be damaging (with accelerations more than about 10 percent of the acceleration of gravity), most of the nearby seismic recording instruments are driven off scale. This results in the loss of information about how strongly the ground was shaken at the sites of damaged structures, making it difficult for engineers to assess building performance relative to actual ground motions. One of the main purposes of the ANSS program is to rectify this situation by installing instruments that will remain on scale during damaging earthquake ground motion. These instruments will be installed both on the ground, to measure the strength of the shaking that enters structures, and within structures, to measure the behavior of the structures in response to ground shaking. The ground instruments will have the capability of recording both strong ground motion (i.e., motions having potentially damaging levels, directly important for earthquake engineering) and weak ground motion (i.e., barely perceptible motions from small or distant earthquakes, directly applicable to earthquake monitoring and earthquake hazard assessment).

Paucity of Design-Level Strong Motion Recordings in the Western United States

There is a large and growing data set of strong motion recordings of earthquakes in the western United States, mostly from California. However, there are still very few nearby recordings of the large-magnitude earthquakes that control the design of structures in the western United States. Consequently, many structures are designed for ground motions that are stronger than any that have been recorded in this country. The development of ground motions for engineering design thus involves extrapolation of ground motion models to larger magnitudes and closer distances than are reliably represented by recorded data. The resulting uncertainty in the levels of ground motions that are suitable for seismic design gives rise to the use of conservative assumptions that result in unnecessary expense.

The strong ground motions recorded during recent large earthquakes in other countries, including Turkey, Taiwan, and Japan—the latter two of which have strong motion recording systems that are vastly superior to those in the United States—all point to the likelihood that our current ground motion models are too conservative (Somerville, 2003). Confirmation of this finding from recordings of earthquakes in the United States is essential. The Mw 7.9 earthquake that occurred in Alaska in 2002 presented such an opportunity, occurring on a well-known active fault (the Denali fault) and causing a rupture length of almost 400 km. However, there was only one strong motion recording near the fault, made by the Alyeska Pipeline Company close to the location where the oil pipeline crosses the fault. If the Denali fault had been adequately monitored with recording instruments, a valuable data set would have been recorded for use in seismic design in the western United States.

Paucity of Strong Motion Recordings in the Eastern and Central United States

At present, there are very few strong motion recordings of earthquakes in the eastern and central United States, both because the level of seismic activity is quite low and because very few strong motion recording instruments are located in this region. When an earthquake does occur in this region, most of the closest recording instruments go off scale, and as a result there are insufficient strong motion recordings to develop ground motion models. Until there is adequate monitoring of strong ground motion in the central and eastern United States, earthquake engineering design in this region will continue to be subject to very large uncertainty and the concomitant economic effects of potential “underdesign” (resulting in high damage levels) or “overdesign” (resulting in needless construction costs).

Because of the paucity of strong motion recordings available in the central and eastern United States, ground motion models for this region are based mostly on computer simulations of earthquake ground motions derived using seismological theory. These theory-based models themselves are based on information on the characteristics of the earthquake source and of seismic wave propagation through the earth. As described earlier, the information about these characteristics is derived from the modeling and analysis of seismograms recorded on seismic networks. To a large extent, these analyses can use information from quite small earthquakes, which occur with sufficient frequency to provide useful results. These small earthquakes do not themselves generate strong motions, but the weak motions that they generate can be used to understand earthquake source and wave propagation characteristics, which can then be extrapolated for the prediction of strong ground motions from larger earthquakes. This means that while we are waiting for strong ground motions to be recorded from future large earthquakes in the central and eastern United States, recordings from smaller earthquakes can be used to improve our current theory-based ground motion models.

SEISMIC ZONATION FOR REDUCING UNCERTAINTY

As noted above, the pattern of damage caused by earthquakes often has a highly irregular distribution, with concentrations of damage in some locations and relatively little damage in others. In communities where strong motion recordings are available, these spatial variations in damage characteristics are usually reflected in a general way by the distribution of recorded ground motions of the mainshock (e.g., as depicted by ShakeMap) and by the spatial variations of weak ground motions recorded during aftershocks. The ability to reliably predict the pattern of ground motion amplification in urban areas, and thus identify locations that are especially vulnerable as well as those that are not, has the potential to significantly reduce earthquake losses and guide rational urban development. Box 4.1 demonstrates how improved information about the location and levels of ground shaking impacts bridge design and construction costs in California. However, the development of this capability is contingent upon the deployment of dense arrays of strong motion recording instruments in urban regions, as planned for ANSS.

|

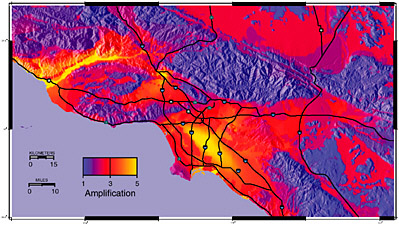

BOX 4.1 Transportation systems are examples of geographically distributed networks. Figure 4.3 shows levels of ground amplification in southern California, where a number of transportation corridors cross areas with the highest levels of ground amplification. Increased specificity (reduced uncertainty) as to both the location and the level of ground shaking enables geographically optimized risk management and mitigation decisions for both the long and short term. Long-term benefits are achieved through improved and more appropriate design and construction. Improved information about ground motion demand impacts $10 million to $60 million of new bridge construction costs each year in California (based on $1 billion spent each year on bridges in California). Figure 4.4 shows that a 10 percent change in ground motion results in a 6 to 15 percent change in construction costs. Short-term benefits are realized during the earthquake response and recovery phase. The California Transportation Department (CalTrans) and (CSMIP) have invested ~$7million in strong motion monitoring of bridges. Information about the severity of ground shaking at specific bridge locations and the capacity of those bridges allows CalTrans to prioritize post-earthquake inspection and repair activities. Reduced traffic delays through efficient rerouting impact both the immediate emergency response and the  FIGURE 4.3 Map showing earthquake ground motion amplification in Southern California. SOURCE: Field (2001). |

FIGURE 4.4 Cost function graph, showing increased construction costs as a function of design ground motions. SOURCE: Ketchum et al. (2004). longer-term recovery phases. Costs associated with loss of access are estimated to be up to $1 million per day per route.a |

Causes of Spatial Variations in Ground Motion Levels

In some cases, the spatial variations in ground motions can be attributed to spatial variations in the near-surface geology. Indeed, building code provisions include the effect of the shallow geology (specifically, Vs, the average shear wave velocity in the upper 30 meters) on ground motion amplitudes. Accordingly, mapping of Vs in urban regions can be used as a first-order method of seismic zonation. As with all methods of seismic zonation, the use of Vs measurements to quantify site amplification requires experimental verification. For example, a major field program (ROSRINE)2 was undertaken following the 1994 Northridge earthquake to measure Vs at some of the sites that recorded the earthquake ground motions, for use in testing methods of predicting site response.
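The average shear wave velocity in the upper 30 meters is commonly computed as a travel-time average over the layers of a measured velocity profile, so that slow layers count for more than their thickness alone would suggest. The sketch below assumes that definition; the function name and the layer values are hypothetical and are not taken from ROSRINE or any other measured profile.

```python
def time_averaged_vs(layers, depth_m=30.0):
    """Travel-time-averaged shear wave velocity over the top `depth_m` meters.

    `layers` is a list of (thickness_m, vs_m_per_s) pairs ordered from the
    surface downward.
    """
    remaining = depth_m
    travel_time = 0.0
    for thickness, vs in layers:
        used = min(thickness, remaining)  # only count material above depth_m
        travel_time += used / vs
        remaining -= used
        if remaining <= 0:
            break
    if remaining > 0:
        raise ValueError("velocity profile is shallower than the target depth")
    return depth_m / travel_time


# Hypothetical profile: 10 m of soft soil over stiffer material.
profile = [(10.0, 200.0), (40.0, 400.0)]
print(time_averaged_vs(profile))  # 300 m/s, up to floating-point rounding
```

In this example the thickness-weighted mean of the top 30 meters would be about 333 m/s; the travel-time average is lower because the wave spends as much time in the 10 m soft layer as in the 20 m of stiffer material beneath it.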

However, in many other cases, there remain large spatial variations in ground motion levels that cannot be explained simply by the shallow geology. The large spatial variations in ground motion level in the Seattle area from the 2001 Nisqually earthquake and its aftershocks, and their correlation with damage (Figure 2.1), have already been noted. Another example is provided by the damage pattern caused by the Northridge earthquake, which was characterized by pockets of localized damage that were not clearly correlated with surficial soil conditions (Hartzell et al., 1997). It appears that—in both the Nisqually and Northridge earthquakes—deeper-lying geological structure may have had as much influence on strong motion patterns as the upper 30 meters that are conventionally used to characterize site response.

Basin-Edge Effects. The 1994 Northridge and 1995 Kobe earthquakes showed that large ground response motions may be influenced by the geological structure of fault-controlled basin edges. The largest ground motions in the Los Angeles basin during the Northridge earthquake were recorded just south of the Santa Monica fault. In this region, the basin-edge geology is controlled by the active strand of the Santa Monica fault (Figure 4.5) (Graves et al., 1998). Despite having similar surface geology, sites to the north of the fault (closest to the earthquake source) were subjected to relatively low amplitudes, whereas more distant sites to the south of the fault exhibited significantly larger amplitudes, with an increase in amplification occurring at the fault scarp. This pattern is dramatically reflected in the damage distribution indicated by red-tagged buildings (Figure 4.5), which shows a large concentration of damage immediately south of the fault scarp in Santa Monica. The strong correlation of the ground motion amplification pattern with the fault location indicates that the underlying basin-edge geology controlled the ground motion response, with the large amplification caused by the constructive interference of direct waves with surface waves generated at the basin edge.

The 1995 Kobe earthquake provided further evidence from recorded strong motion data, supported by wave propagation modeling using basin-edge structures, that ground motions may be particularly large at the edges of fault-controlled basins. The Kobe earthquake caused severe damage to buildings in a zone about 30 km long and 1 km wide, and offset about 1 km southeast of the fault on which the earthquake occurred (Pitarka et al., 1998). The basin-edge effect caused a concentration of damage in a narrow zone running parallel to the faults through Kobe and adjacent cities.

FIGURE 4.5 The surface waves generated in the west Los Angeles basin during the 1994 Northridge earthquake were trapped initially in the shallow sediments north of the ENE-WSW-trending Santa Monica fault. The abrupt deepening of the Los Angeles basin at the Santa Monica fault (shown in light colors on cross section K-K’) caused the basin-edge wave to form a large, long-period pulse of motion that resulted in substantial damage immediately south of the fault, as shown by the distribution of red-tagged buildings. SOURCE: Graves et al. (1998).

Wave Focusing Effects. Although basin-edge effects confirm the role of deep geological structure in causing the local amplification of ground motions, in other locations the reason for the localization of damage and ground motion amplification remains obscure. Without detailed knowledge of the deeper structure (provided in the case of Santa Monica by seismic exploration for oil), it is difficult to predict the spatial variation of ground motion levels due to deeper geological structure. Such deeper structure may include structures in the upper few kilometers of sedimentary basins as well as topographic relief of the sediment-basement interface. These structures may focus energy in spatially restricted areas on the surface, in some cases becoming the dominant factor in the modification of local ground motion amplitudes.

Earthquake Source Effects. Recordings of recent large earthquakes have shown that using only the traditional variables of earthquake magnitude, distance from the fault, and site conditions to predict ground motion levels does not adequately describe the observed spatial variations in ground motions. This has motivated the development of ground motion models that use a more complete description of the earthquake source and wave travel path parameters. For example, the long-period pulse of near-fault ground motion caused by forward rupture directivity of the earthquake source (Somerville, 2003) was responsible, together with the basin-edge effect described above, for the intense damage caused by the 1995 Kobe earthquake. Ground motions recorded on the hanging wall above the fault planes that generated the 1994 Northridge and 1999 Chi-Chi, Taiwan, earthquakes were much stronger than the ground motions on the adjacent foot wall, due to the geometrical effects of proximity to the fault. Analyses of strong motion recordings of recent earthquakes such as these are forming a basis for the capability to predict these effects, but many more recordings are needed to develop a comprehensive understanding of these phenomena.

Seismic Zonation of Urban Regions Using ANSS

In most cases, the only way to identify patterns of ground motion amplification in urban regions before the occurrence of a damaging earthquake is to deploy dense urban arrays of strong motion recorders, as planned for the ANSS program (USGS, 1999). These ANSS instruments will contribute to the seismic zonation of urban regions in three ways:

Identifying Zonation by Recording Damaging Motions. The few strong motion instruments located in urban regions in the United States are insufficient to provide an adequate description of the spatial distribution of ground motion. The proposed ANSS instruments will record damaging earthquakes on scale, providing the data that are needed for understanding the distribution of ground shaking level and its relationship to the distribution of damage (e.g., Figure 4.5).

Identifying Zonation by Recording Weak Motions. In most urban regions, damaging earthquakes occur infrequently. However, ANSS monitoring instruments will continuously record weak ground motions from the more frequent, smaller earthquakes. The pattern of ground motion amplitudes from these small earthquakes can potentially provide useful information about the likely distribution of damaging ground motions that would occur in strong earthquakes. The recording of aftershocks following a large earthquake, often using portable deployments of instruments, is used for the same purpose of mapping the spatial variations in ground motion amplification in urban regions (Figure 2.1).

Identifying Zonation by Developing Predictive Capabilities. The ground motions recorded by seismic monitoring instruments from both small and large earthquakes provide data that can be used to identify the deep geological structure beneath urban regions. The adequacy of crustal structure models can then be tested to assess whether seismological ground motion simulation techniques are able to predict the observed patterns of ground motion amplitudes. If they are, then the models can be used to produce ShakeMaps for future scenario earthquakes. The ground motion amplification patterns in these ShakeMaps can then be used to develop a seismic zonation of the urban region, providing a basis for the prioritization of earthquake risk mitigation activities.

MONITORING FOR EARTHQUAKE FORECASTING, ALERTS, AND PREDICTION

Uncertainties about the timing and magnitude of future earthquakes have led seismologists to adopt a probabilistic approach to describing the likelihood of future damaging events. Forecasts, which may involve low probabilities, are distinguished from predictions, which involve probabilities that are high enough to warrant public response. Accordingly, prediction refers to situations in which the probability of occurrence of an earthquake is much higher than normal in a specified region.

Earthquake alerts can take several forms. Short-term (24-hour) forecasts of earthquake hazard, updated every hour, have recently been implemented throughout California.3 Following a large earthquake, the population may be alerted to the likelihood of aftershocks or the possibility that an even larger earthquake might occur (Jones and Reasenberg, 1989; Reasenberg and Jones, 1994). Alerts may also be issued when increased seismic activity in a seismic “hot spot” is interpreted as a possible precursor to a larger event. In the few seconds to tens of seconds immediately following an earthquake, advanced seismic monitoring systems can provide warning of the imminent occurrence of strong ground shaking—the role of immediate alerts (real-time earthquake warnings) for emergency management is discussed in Chapter 7.

Earthquake prediction is commonly understood to mean specification of the location, time and magnitude of an impending earthquake within specified ranges of uncertainty (Allen, 1976). Earthquake predictions can be divided into short-term predictions (hours to weeks), intermediate-term predictions (1 month to 10 years), long-term predictions or forecasts (10 to 30 years), and long-term potential (>30 years) (Sykes et al., 1999). Since an earthquake prediction might be fulfilled by chance, there is general agreement that probabilistic methods should be used to evaluate the success of any earthquake prediction. The development of a reliable earthquake prediction capability could potentially provide the means to move populations out of harm’s way, prioritize the retrofitting of seismically vulnerable infrastructure, and modify urban planning to minimize earthquake risk. The role that seismic monitoring plays in predictions and forecasts is addressed in the following sections.
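One way to make the "fulfilled by chance" comparison concrete is to ask how likely a qualifying earthquake would be in the prediction window if events simply occurred at the long-term background rate. The sketch below assumes a time-independent (Poisson) background process, which is an assumption made here for illustration; the background rate, the window length, and the claimed probability are all hypothetical.

```python
import math


def chance_probability(annual_rate, window_years):
    """Probability of at least one event in the window for a Poisson process."""
    return 1.0 - math.exp(-annual_rate * window_years)


def probability_gain(predicted_probability, annual_rate, window_years):
    """Ratio of the probability claimed by a prediction to the chance probability."""
    return predicted_probability / chance_probability(annual_rate, window_years)


# Hypothetical numbers: a qualifying earthquake occurs in the target region
# about once every 20 years, and a prediction claims a 50 percent probability
# of such an event within a 1-year window.
background_rate = 0.05
p_chance = chance_probability(background_rate, 1.0)
gain = probability_gain(0.5, background_rate, 1.0)
print(f"chance probability: {p_chance:.3f}, probability gain: {gain:.1f}")
```

A gain close to 1 would mean the prediction says little more than the background rate already implies, which is why a single apparent success is weak evidence unless the window and region are narrow relative to the background seismicity.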

Long- and Intermediate-Term Forecasts and Predictions

H.F. Reid’s (1910) observation that the 1906 San Francisco earthquake was the result of a sudden relaxation of elastic strains through rupture of the San Andreas fault laid the foundation for the development of elastic rebound theory and long-term earthquake forecasting. Large earthquakes are thought to occur more or less regularly in space along major fault systems and, in time, as a result of gradual stress buildup and sudden release by failure. This repetitive cycle of strain accumulation and release, termed the seismic or earthquake cycle (Scholz, 1990), is driven by plate tectonics along the world’s major plate boundaries and fault systems.

The seismic gap method is a frequently used application of this concept for long-term earthquake forecasting and the identification of seismic potential. Along many simple plate boundaries, like the San Andreas Fault, most of the long-term plate motion occurs during infrequent large and great earthquakes. As originally proposed by Kelleher et al. (1973), sections of active plate boundaries that have not been the site of large or damaging earthquakes for more than 30 years are considered the likely site for future events. This approach was successfully applied for several large (Mw >7.5) earthquakes along subduction zones and strike-slip plate boundaries during the 1960s and 1970s (Fedotov, 1965; Mogi, 1968; Kelleher et al., 1973). Application of this method for events with Mw <7.5, or in areas with complex tectonic settings, is more controversial (Jackson and Kagan, 1991, 1993; Nishenko and Sykes, 1993). During the 1980s and 1990s, the seismic gap methodology was extended to include the characteristic earthquake model (similar sized events that occur repeatedly along the same section of a plate boundary or fault zone) in a probabilistic framework (Schwartz and Coppersmith, 1984; Sykes and Nishenko, 1984; WGCEP, 1988, 1990, 1995, 1999; Nishenko, 1991).

The repetitive pattern of strain accumulation and release is also expressed in seismicity patterns, where low levels of seismicity in the first part of the cycle (once aftershocks from the latest event subside) are followed by an increase in regional activity as strain reaccumulates and, ultimately, by the occurrence of another earthquake with its attendant foreshocks and aftershocks. Variations in the historic rate of moderate to large earthquakes in the San Francisco Bay area in the decades before and after the 1906 earthquake (Ellsworth et al., 1981) are similar to those described by Fedotov (1965) and Mogi (1968) for the earthquake cycle associated with great subduction zone earthquakes in Japan, the Kuriles, and Kamchatka.

Three examples of long- and intermediate-term earthquake forecasts in the United States, all based on applications of elastic rebound theory and the seismic gap method, are the Parkfield, California, earthquake prediction experiment; the 1989 Loma Prieta, California, earthquake; and the 2002 San Francisco Bay area earthquake forecast. Experience with long- and intermediate-term predictions based on simplified or basic models of earthquake occurrence, however, has shown that accurate forecasts can be difficult even for plate boundaries that have seemingly regular historical sequences of earthquakes, as the following example demonstrates.

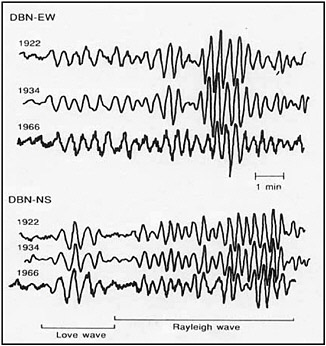

FIGURE 4.6 Comparison of seismograms recorded in DeBilt, Netherlands (DBN), for the 1922, 1934, and 1966 Parkfield, California, earthquakes. SOURCE: Bakun and McEvilly (1984).

Parkfield, California. Moderate-sized (Mw ~6) earthquakes have occurred on the San Andreas Fault near Parkfield, California, in 1922, 1934, and 1966, and earlier events in 1857, 1881, and 1901 were also believed to have occurred in approximately the same area. Similarities in size, location, and even waveforms (see Figure 4.6) led seismologists to believe that these were examples of “characteristic” events that have occurred repeatedly along the same section of the San Andreas Fault. Even though there was significant variation in the times between these events, the relatively short average recurrence time of 22 years suggested that the next characteristic Parkfield earthquake would occur in 1988 (1966 + 22 years) and that the probability of that event occurring before 1993 was 0.95. A focused earthquake prediction experiment (the Parkfield Earthquake Prediction Experiment) began in 1985 (Bakun and McEvilly, 1984; Bakun and Lindh, 1985). The earthquake eventually occurred on September 28, 2004, 38 years after the previous event, without any foreshock or other evident precursory phenomena. Unlike the two previous events, which ruptured from the northwest to the southeast, this event ruptured from the southeast to the northwest, further demonstrating the epistemic and aleatory uncertainties that exist in our understanding of earthquake processes. Nevertheless, valuable strong motion data were recorded by arrays of instruments that had been deployed as part of the prediction experiment in the 1980s.
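The recurrence arithmetic behind the Parkfield forecast can be reproduced from the event years quoted in the text. The sketch below is only illustrative: it uses a plain mean and sample standard deviation of the inter-event times, which is an assumption made here and not the statistical model behind the published 0.95 probability.

```python
from statistics import mean, stdev

# Event years as listed in the text for the Parkfield section.
event_years = [1857, 1881, 1901, 1922, 1934, 1966]

# Inter-event times in years between successive earthquakes.
intervals = [later - earlier for earlier, later in zip(event_years, event_years[1:])]

mean_interval = mean(intervals)      # average recurrence time
interval_spread = stdev(intervals)   # sample standard deviation of the intervals

# Simple extrapolation: last event plus the average recurrence time.
expected_year = event_years[-1] + mean_interval

# What actually happened: the next event came in 2004.
actual_interval = 2004 - event_years[-1]
lateness_in_sd = (actual_interval - mean_interval) / interval_spread

print(f"intervals: {intervals}")
print(f"mean interval: {mean_interval:.1f} years, spread: {interval_spread:.1f} years")
print(f"extrapolated year: {expected_year:.0f}, actual interval: {actual_interval} years")
```

The individual intervals range from 12 to 32 years, so even this short sequence shows considerable scatter about the 22-year average, and the 38-year wait before the 2004 event fell outside anything in the earlier record.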

Loma Prieta, California. The 1989 Loma Prieta earthquake (Mw 6.9) occurred along an area of the San Andreas Fault where long- or intermediate-term forecasts had been made by a number of seismologists (Lindh, 1983; Sykes and Nishenko, 1984; Scholz, 1985). The Loma Prieta earthquake occurred near the southeastern end of the fault rupture of the 1906 San Francisco earthquake, where the relatively small amount of surface slip in 1906 was thought to have been recovered by elastic strain accumulation. This fault segment also exhibited a distinct absence of small earthquakes over a distance of some 40 km. The general region around Loma Prieta was also identified as having increased likelihood of an earthquake based on pattern recognition studies (see next section). While the 1989 earthquake fulfilled these forecasts in a general sense, details about the earthquake suggest that it may not have occurred on the San Andreas Fault, indicating a greater degree of complexity than was previously recognized for this section of the San Andreas (Harris, 1998).

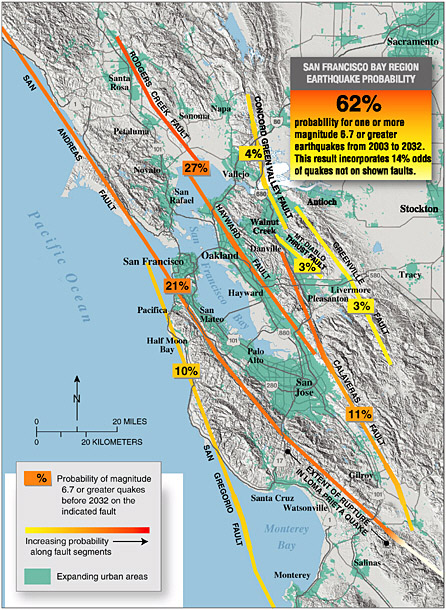

2002 San Francisco Bay Area Forecast. Building on the lessons learned from earlier California Earthquake Probability Working Groups (WGCEP, 1988, 1990), the USGS revised the 1990 forecast for the San Francisco Bay area and issued a new forecast in 2002. This revised forecast indicates a 62 percent chance of a Mw 6.7 or larger earthquake between 2003 and 2032 (USGS, 2003a; see Figure 4.7). These forecasts, like those used in the national seismic hazard maps, are based on the integration of seismograph network data with other geologic and geophysical data, including paleoearthquake chronologies as well as the locations of active faults and their rates of slip. They go beyond the current national seismic hazard maps in also addressing the time-dependent hazard of the region, using information about the time elapsed since the last large earthquake in the region. In contrast, the national seismic hazard maps are time independent, in that they do not change depending on the time from some specific earthquake in the region. These forecasts, coupled with the “wake-up call” from the 1989 Loma Prieta earthquake, have helped to focus earthquake preparedness and mitigation activities throughout the San Francisco Bay area.
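The distinction between time-independent and time-dependent hazard can be illustrated numerically. The sketch below first converts the 62 percent, 30-year probability into the annual rate of an equivalent time-independent (Poisson) process, and then shows how a simple renewal model makes the 30-year probability depend on the time elapsed since the last event. The lognormal recurrence distribution and its parameters are hypothetical choices made for illustration, not the models or values used in the 2002 forecast.

```python
import math


def equivalent_poisson_rate(probability, window_years):
    """Annual rate of a Poisson process giving `probability` of at least one
    event in `window_years`."""
    return -math.log(1.0 - probability) / window_years


def lognormal_cdf(t_years, median_years, sigma):
    """CDF of a lognormal recurrence-time distribution (illustrative choice)."""
    z = (math.log(t_years) - math.log(median_years)) / (sigma * math.sqrt(2.0))
    return 0.5 * (1.0 + math.erf(z))


def conditional_probability(elapsed_years, window_years, median_years, sigma):
    """P(event within the window | no event during the elapsed time)."""
    f_now = lognormal_cdf(elapsed_years, median_years, sigma)
    f_later = lognormal_cdf(elapsed_years + window_years, median_years, sigma)
    return (f_later - f_now) / (1.0 - f_now)


# Time-independent view of the 62 percent, 30-year regional probability.
rate = equivalent_poisson_rate(0.62, 30.0)
print(f"equivalent annual rate: {rate:.4f} per year")

# Time-dependent view for a hypothetical single fault with a 200-year median
# recurrence time: the 30-year probability grows as elapsed time increases.
early = conditional_probability(50.0, 30.0, median_years=200.0, sigma=0.5)
late = conditional_probability(150.0, 30.0, median_years=200.0, sigma=0.5)
print(f"30-year probability after 50 years: {early:.3f}, after 150 years: {late:.3f}")
```

Under the Poisson view the 30-year probability is the same no matter when the last earthquake occurred, whereas the renewal view yields a different answer for each elapsed time, which is the sense in which the regional forecast is time dependent.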

Monitoring for Changes in Seismicity. Many earthquake predictions and forecasts have been based on observations of statistically significant changes in the rates and types of seismic activity over long, intermediate, or short time scales. Variations in the state of stress or strength of the crust may be manifest as spatial and temporal changes in seismicity patterns (e.g., “doughnut” patterns, increases or decreases in seismic activity), changes in earthquake focal mechanisms, and increases in the rate of seismic moment release (Mogi, 1969; Wyss and Habermann, 1979; Jaume and Sykes, 1999). The ability to rigorously evaluate the significance of these changes in activity requires a long-term commitment to the systematic collection and evaluation of seismic monitoring data.

Stress Interactions. In the past two decades, it has been recognized that earthquakes frequently occur in locations where the stress has been increased by the recent occurrence of a neighboring earthquake. An earthquake reduces the average value of shear stress on the fault that slipped and redistributes stresses to the fault tips and surrounding regions. Stress trigger zones and stress shadows are regions where previous earthquakes have increased the stress by loading, or decreased it by unloading. The seismicity rate before and after large earthquakes is not constant, and the changes in rate appear to be correlated with these stress trigger zones and shadows. A dramatic example of stress loading is the progressive triggering of 11 large earthquakes along the Anatolian fault system in Turkey between 1939 and 1999 (Stein et al., 1997).

A dramatic example of stress unloading is the stress shadow generated by the 1906 San Francisco earthquake, which decreased the regional seismicity rates in the San Francisco Bay region for the next 75 years (Ellsworth et al., 1981). Earthquake activity was relatively high during the latter half of the nineteenth century leading up to the occurrence of the 1906 event. After 1906, the level of seismicity decreased and moderate-sized events were absent through the first half of the twentieth century. Since the 1950s, the activity level has again increased in the San Francisco Bay area, with the most recent event being the 1989 Mw 6.9 Loma Prieta earthquake. While the long-term significance of the increase in regional activity is still being evaluated, the change in seismic activity—punctuated by the 1989 Loma Prieta earthquake—has prompted long-term mitigation and preparedness activities in the Bay area.

There is comparable evidence of seismicity changes in southern California, although the historic record is less reliable until about 1890. Along the rupture zone of the great 1857 Fort Tejon earthquake on the San Andreas Fault, available data show a similar period of low activity for several decades following the event. Farther south, along a section of the San Andreas that has not ruptured since 1630, the activity level since the 1880s is reminiscent of the activity in the San Francisco Bay area in the decades prior to the 1906 earthquake. As a possible long-term indicator of seismic potential, the seismicity surrounding the dormant southern section of the San Andreas agrees with independent assessments of the long-term estimates derived from paleoseismology (Ellsworth, 1990; see also Southern California Earthquake Center [SCEC] Southern California earthquake forecast model in WGCEP, 1995).
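The text does not say how the stress changes that define trigger zones and stress shadows are quantified. A measure commonly used for this purpose is the change in Coulomb failure stress on a receiver fault, and the sketch below assumes that measure. The effective friction coefficient, the classification threshold, and the stress values are hypothetical and are not taken from the studies cited above.

```python
def coulomb_stress_change(delta_shear_mpa, delta_normal_mpa, effective_friction=0.4):
    """Change in Coulomb failure stress on a receiver fault, in MPa.

    delta_shear_mpa:  shear stress change resolved in the fault's slip direction.
    delta_normal_mpa: normal stress change, positive when the fault is unclamped.
    effective_friction: assumed friction coefficient including pore-fluid effects.
    """
    return delta_shear_mpa + effective_friction * delta_normal_mpa


def classify_region(delta_cfs_mpa, threshold_mpa=0.01):
    """Label a location by the sign of its stress change (threshold is assumed)."""
    if delta_cfs_mpa > threshold_mpa:
        return "trigger zone"
    if delta_cfs_mpa < -threshold_mpa:
        return "stress shadow"
    return "neutral"


# Hypothetical stress changes at two sites after a nearby large earthquake.
near_fault_tip = coulomb_stress_change(0.05, 0.02)     # loaded and unclamped
beside_rupture = coulomb_stress_change(-0.10, -0.05)   # unloaded and clamped

print(classify_region(near_fault_tip))
print(classify_region(beside_rupture))
```

In this picture, a site near the end of a rupture is brought closer to failure, while a site alongside the rupture is moved away from failure, matching the loading and unloading described for the Anatolian sequence and the post-1906 Bay area seismicity.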

Pattern Recognition. Pattern recognition methods are based on statistical changes such as the rate of earthquake occurrence and the proportion of large to small earthquakes within a region. Specifically, the phenomena that are monitored and analyzed include small earthquakes becoming more frequent in an area that is not necessarily where the impending earthquake will occur; earthquakes becoming more clustered in time and space; earthquakes occurring almost simultaneously over large distances within the seismic region; and an increasing ratio of medium-magnitude to small-magnitude earthquakes (Keilis-Borok, 1996). These methods are used to predict the occurrence of a large earthquake within a specified period of time of months to years, in a specified large circular region having a radius of several hundred kilometers (see Box 4.2). The time period of successive predictions has been decreasing as the development of the method has progressed. The developers claim to have predicted a number of earthquakes that occurred within large circular regions: the 1989 Mw 6.9 Loma Prieta earthquake within a 5-year window, the 1994 Mw 6.7 Northridge earthquake within an 18-month window (although it actually occurred 21 days beyond the end of the window), and the 2003 Mw 6.5 San Simeon earthquake within a 9-month window. The developers predicted that an earthquake of magnitude 6.4 or larger would occur in southeastern California before September 5, 2004, but no such earthquake occurred.

BOX 4.2 Recent research indicates that, by understanding how stress accumulates in the Earth’s crust and how faults interact through the stress changes caused by earthquakes, it may be possible to reduce forecasting times from current intervals of decades to a few years and perhaps even less. Recent scientific developments have stimulated lots of discussions about the feasibility of what we term “intermediate-term” prediction, forecasting earthquakes on time scales of months to years. Several well-respected groups of geophysicists are running intermediate-term prediction algorithms for research purposes. For example, last week [January 6, 2004], UCLA issued a press release that a group led by Dr Keilis-Borok had successfully predicted the 25 Sept 03 Hokkaido earthquake (M 8.1) and the 22 Dec 03 San Simeon earthquake (M 6.5). This release also pointed out that “Keilis-Borok’s team now predicts an earthquake of at least magnitude 6.4 by Sept 5, 2004, in a region that includes the southeastern portion of the Mojave Desert, and in an area south of it.” The zone where this event is predicted … includes the southern section of the San Andreas, most of the Eastern California Shear Zone south of Interstate 15, the San Jacinto, and several other significant faults. Dr Keilis-Borok has emphasized the hypothetical nature of this prediction and stressed that his new methodology has not been fully tested. Moreover, the prediction is incomplete, because the authors have not stated the gain in probability over random chance that such an event will occur. However, other researchers have recognized that the southern San Andreas fault, which lies in the Keilis-Borok prediction zone, is probably late in its seismic cycle and that the seismicity of the region is accelerating.

Despite some discomfort within the scientific community about making predictions that might be misinterpreted by the general public, this type of research must be done in a transparent way and remain open to public scrutiny. Our responsibilities to the public dictate that we maintain up-to-date scientific assessments of earthquake predictability and prediction methodologies, and carefully explore their implications for seismic risk. At the state level, evaluating earthquake predictions from a public policy perspective is the responsibility of the California Earthquake Prediction Evaluation Council, and it is my understanding that the Keilis-Borok et al. prediction will be put in front of CEPEC for its assessment.a There is also the need for the USGS to reinstate the National Earthquake Prediction Evaluation Council to deal with those issues at the national level.

In conclusion, we can firmly state that the era of time-dependent seismic hazard analysis is at hand. While reliable short-term prediction remains an unreached (and perhaps unattainable) goal, we know earthquake occurrence is inadequately described by assuming earthquakes occur at random times. Many scientists now believe earthquake probabilities have a rich space-time structure that can be quantified if adequate knowledge of the fault system can be leveraged from observations.

Excerpt from address by Tom Jordan, director of the Southern California Earthquake Center: “Progress in Earthquake Science Since Northridge,” from Ten Years Since Northridge: A Special Event for “Movers and Shakers,” Jan. 16, 2004.
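Two of the seismicity indicators named for the pattern recognition methods, the rate of earthquake occurrence and the ratio of medium-magnitude to small-magnitude events, can be computed from an earthquake catalog as sketched below. This is a simplified illustration and not the developers' algorithm: the function name, the magnitude bins, the time windows, and the catalog itself are hypothetical.

```python
def window_indicators(catalog, t_start, t_end,
                      small_range=(3.0, 4.0), medium_range=(4.0, 5.5)):
    """Occurrence rate and medium-to-small ratio for events in [t_start, t_end).

    catalog is a list of (time_in_years, magnitude) pairs; the magnitude bins
    are assumed values chosen only for this example.
    """
    magnitudes = [m for t, m in catalog if t_start <= t < t_end]
    n_small = sum(1 for m in magnitudes if small_range[0] <= m < small_range[1])
    n_medium = sum(1 for m in magnitudes if medium_range[0] <= m < medium_range[1])
    rate = len(magnitudes) / (t_end - t_start)
    if n_small:
        ratio = n_medium / n_small
    else:
        ratio = float("inf") if n_medium else 0.0
    return {"rate_per_year": rate, "medium_to_small": ratio}


# Hypothetical 8-year catalog in which activity picks up in the second half.
catalog = [
    (0.2, 3.1), (0.9, 3.4), (1.5, 3.2), (2.3, 4.1), (3.1, 3.3),
    (4.4, 3.6), (5.2, 3.0), (5.6, 4.2), (6.1, 3.5), (6.4, 4.5),
    (6.9, 3.8), (7.3, 4.8), (7.5, 3.2), (7.8, 4.3), (7.9, 5.0),
]

early = window_indicators(catalog, 0.0, 4.0)
late = window_indicators(catalog, 4.0, 8.0)
print("early window:", early)
print("late window:", late)
```

A monitoring scheme along these lines depends on a complete and uniformly processed catalog, since changes in network coverage or magnitude estimation could otherwise be mistaken for changes in the indicators.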

Short-Term Prediction

An ultimate goal of earthquake science is the short-term prediction of the time, location, and size of an earthquake in a time window that is narrow and reliable enough to enable preparation for its effects. The prediction of the Mw 7.3 Haicheng, China, earthquake in 1975, less than 24 hours before its occurrence but in time to allow for evacuation of the population, is credited with saving many lives. However, the prominent foreshocks and hydrological precursors that formed the basis for the prediction were very unusual and were not observed before other earthquakes. Also, many false alarms had been issued, so the possibility of success by chance cannot be ruled out. The limitations of this apparently successful prediction soon became apparent with the occurrence the following year of the Mw 7.8 Tangshan earthquake in a neighboring region of China, where the death toll of at least 240,000 is one of the highest in recorded history. This earthquake was not predicted, despite extensive monitoring.

An Alternative View of Earthquake Prediction

Given the definition of earthquake prediction as the specification of the location, time, and magnitude of an impending earthquake within specified ranges of uncertainty, there does not exist anywhere in the world a tested and operational capability to predict earthquakes. However, research that is aimed at developing such a capability is currently under way in several countries. Moreover, operational programs to predict earthquakes, although untested, have been implemented in several locations (including Parkfield, California, and Tokai, Japan, with the latter still in progress), and the pattern recognition method described above is now in a stage of open operation and evaluation. The former two programs are based on the concept of progressing from a long-term forecast of location and magnitude, having very approximate time constraints, to a precise forecast of the time window as data and understanding permit. Lindh (2003) argues that a justification for pursuing earthquake prediction without a tested scientific basis is that society is willing to accept the uncertainties that come with the learning process in which seismologists are engaged because the potential benefits of predicting an earthquake might greatly outweigh the costs of that uncertainty. According to this alternative view of earthquake prediction, earthquakes present such a dire threat that society expects seismologists to attempt earthquake prediction even in the face of great uncertainties, in much the same way as it expects medical doctors to attempt to treat an illness even if its cause is difficult to diagnose.

Prospects for Earthquake Prediction

The following paragraphs describe three promising approaches to earthquake prediction.

- Stress interactions. The stress transfer model for intermediate-term prediction provides a good retrospective explanation for many earthquake sequences, but it has not yet been implemented as a testable hypothesis because the stress pattern depends on details of the previous earthquake rupture, fault geometry, crustal rheology, fluid flow, and other properties that are difficult to measure in sufficient detail. Monitoring earthquake activity can enhance our knowledge of previous earthquake ruptures, fault geometry, and crustal rheology and thereby enhance the possibility of implementing the stress interaction method as a testable hypothesis for earthquake forecasting.

- Pattern recognition. Pattern recognition methods, which are based on statistical changes in seismic activity within a region, are currently being tested in a real-time mode (see Box 4.2). Seismic monitoring of earthquake activity can enhance the accuracy of the seismicity on which this method is based and provide physical insight into the tectonic processes that contribute to the phenomena. These include changes in earthquake occurrence rates, earthquake clustering, near-simultaneous occurrence of earthquakes over a wide region, and changes in the proportion of moderate- and small-magnitude earthquakes.

- Silent earthquakes. During the past decade, newly installed dense Global Positioning System (GPS) monitoring systems in the Pacific Northwest and Japan have revealed the occurrence of “silent earthquakes” on subduction plate interfaces. These silent earthquakes are similar to ordinary earthquakes in that they involve the relative movement of one side of a fault past the other, but they differ from ordinary earthquakes in that this slip occurs over a time interval of days to weeks, not the seconds to minutes of fault movement that occurs in ordinary earthquakes. These silent earthquakes are not completely silent: they cause muffled rumblings that are detected by seismic monitoring instruments. These new observations may constitute the means to observe the evolution of deformation on the plate interface that precedes the occurrence of an earthquake. For example, a region of the plate interface that has not slipped recently (but lies adjacent to a region that has) may be a candidate for an impending earthquake. Such observations could be the basis for more focused monitoring of particular regions, providing some prospect for the development of an earthquake prediction capability. Regional networks of seismic recording instruments, such as those planned for the ANSS, provide an important component of the earthquake monitoring system needed for the potential development of such a prediction capability.