2

Project Management Performance Measures

CONTROL SYSTEM FOR PROCESS IMPROVEMENT

The purpose of performance measurement is to help organizations understand how decision-making processes or practices led to success or failure in the past and how that understanding can lead to future improvements. Key components of an effective performance measurement system include these:

- Clearly defined, actionable, and measurable goals that cascade from organizational mission to management and program levels;
- Cascading performance measures that can be used to measure how well mission, management, and program goals are being met;
- Established baselines from which progress toward the attainment of goals can be measured;
- Accurate, repeatable, and verifiable data; and
- Feedback systems to support continuous improvement of an organization’s processes, practices, and results (FFC, 2004).

Qualitative and quantitative performance measures are being integrated into existing DOE project management practices and procedures (DOE, 2000). They are used at critical decision points and in internal and external reviews to determine if a project is ready to proceed to the next phase. Project directors and senior managers are using them to assess project progress and determine where additional effort or corrective actions are needed. However, DOE does not receive the full benefit of these measures because there is no benchmarking system to analyze the data to identify trends and successful techniques or compare actual performance with planned outcomes. For long-term process improvement, project performance measures and benchmarking processes should be used as projects are planned and executed as well as after they are completed.

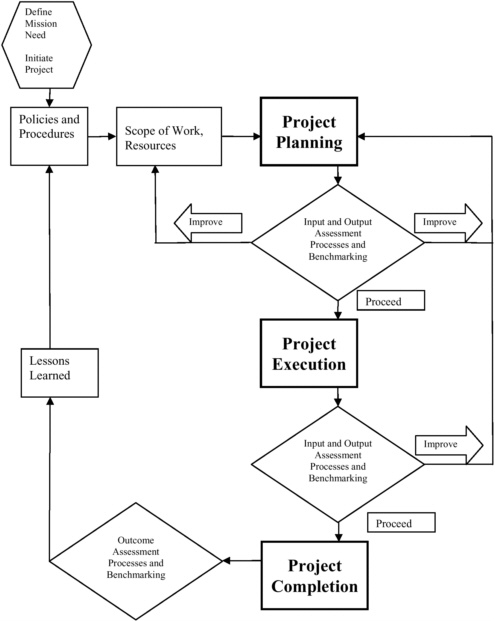

Figure 2.1 describes a project performance control model that can be used to improve current and future projects by identifying trends and closing gaps between targeted and actual performance. Current DOE project and program management procedures such as Energy Systems Acquisition Advisory Board (ESAAB) reviews, Earned Value Management System (EVMS), Project Analysis and Reporting System (PARS), Office of Environmental Management Project Definition Rating Index (EM-PDRI), quarterly assessments, external independent reviews (EIRs), and independent project reviews (IPRs) are integrated into this model and called assessment processes.

In this model, project management processes are applied to inputs such as project resources to generate project plans, and these plans and resources become inputs for project execution. Individual projects are assessed and benchmarked against project targets and the performance of other projects. Output measures are compared with performance targets to identify performance gaps. These gaps are analyzed to identify corrective actions and improve the project as it proceeds. Once a project is completed, an assessment can be made of what worked well and where improvements in processes and project teams are needed for future projects (NRC, 2004c). The project outcomes are assessed to develop

FIGURE 2.1 Model for controlling project performance.

lessons learned, which can be used as a feedback mechanism to improve policies and procedures and may drive changes in decision making and other processes.

INPUT, PROCESS, OUTPUT, AND OUTCOME MEASURES

Although assessment of the results of an internal process, such as project management, is much more straightforward than assessment of the results of public programs, the performance measures used

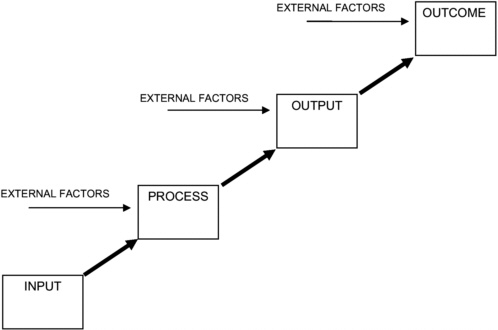

can have intrinsic similarities. Performance measures for public program assessments are generally identified as input, process, output, and outcome (Hatry, 1999). Input is a measure of the resources (money, people, and time) provided for the activity being assessed. Process measures assess activities by comparing what is done with what should be done according to standard procedures or the number of process cycles in a period of time. Output measures assess the quantity and quality of the end product, and outcome measures assess the degree to which the end product achieves the program or project objectives. Assessment becomes more difficult as the target moves from input to outcome because of the influence of factors that are external to the program.

FIGURE 2.2 Performance assessment model.

Following this paradigm, project management is essentially a process; however, project management can be evaluated at both the program and the project level to assess its inputs, processes, outputs, and outcomes (Figure 2.2). At the program level, the input measures include the number of project directors and their training and qualifications. Program process measures relate to policies and procedures and how well they are followed. Program output measures identify how well projects are meeting objectives for cost and schedule performance. Outcome measures focus on how well the final projects support the program’s or department’s mission.

When project management is assessed at the project level, the input measures include the resources available and the quality of project management plans. Project process measures look at how well the plans are executed. Project output measures include cost and schedule variables, while outcome measures include scope, budget, schedule, and safety performance. In the 2003 assessment report (NRC, 2004a), the desired outcome at the program or departmental level was referred to as “doing the right project.” The desired outcome at the project level was “doing it right.” The committee noted that both are required for success.

The committee has identified all four types of measures, combined the two approaches (program and project), and grouped the measures in terms of the primary users (program managers and project managers). This approach separates measures used at the project level from measures used at the program/departmental level and combines input and process measures and output and outcome measures. In this approach, some output/outcome measures at the project level can be aggregated to provide measures at the program/departmental level through an analysis of distributions and trends. The committee believes that this will facilitate the benchmarking process by addressing the needs of the people who provide the data and derive benefits from the process.
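The roll-up of project-level output/outcome measures into program-level distributions can be illustrated with a minimal sketch. It assumes each project reports a single value (here, cost growth as a fraction of baseline) tagged with its program; the program names, sample values, and the `roll_up` helper are hypothetical and are not part of any DOE system.

```python
from statistics import mean, median

# Hypothetical project-level records: (program, cost growth as a fraction of baseline).
projects = [
    ("Program A", 0.05),
    ("Program A", 0.20),
    ("Program A", -0.02),
    ("Program B", 0.10),
    ("Program B", 0.30),
]


def roll_up(records):
    """Group project-level values by program and summarize each distribution."""
    by_program = {}
    for program, value in records:
        by_program.setdefault(program, []).append(value)
    return {
        program: {
            "count": len(values),
            "mean": mean(values),
            "median": median(values),
            "worst": max(values),  # largest cost growth in the program
        }
        for program, values in by_program.items()
    }


summary = roll_up(projects)
for program, stats in summary.items():
    print(program, stats)
```

Repeating the same summary for each reporting period would give the trend data that the aggregation approach relies on.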

SELECTING EFFECTIVE PERFORMANCE MEASURES

The measurement of performance is a tool for both effective management and process improvement. The selection of the right measures depends on a number of factors, including who will use them and what decision they support. For example, the airline industry has used on-time arrivals and lost bags per 1,000 as output measures, but to improve efficiency, procedures and processes are measured and analyzed in more detail by the airport ramp manager. Measures such as the time from arrival and chock in place to cargo door opening, the number of employees required and present for the type of aircraft, and whether the runner at the bottom of the conveyer is in place when the door is opened provide the information needed to improve efficiency and effectiveness.

Over the last several years DOE has improved and implemented new project management procedures and processes in the form of Order 413.3 (DOE, 2000). Efforts to measure and analyze implementation of the order at the project level can drive compliance and provide information for continued improvement. The committee believes that the requirements of Order 413.3 are directly correlated with project success but that performance data that measure the implementation of management plans are needed to support policies and guide future improvements.

The committee provides a set of performance measures in Tables 2.1 through 2.4, but it remains the responsibility of DOE to select and use the measures that work best for project directors, program offices, and the department. DOE should adopt performance measures suggested by the committee or other measures that have the following characteristics (NYSOT, 2003):

- Measurable, objectively or subjectively;
- Reliable and consistent;
- Simple, unambiguous, and understandable;
- Verifiable;
- Timely;
- Minimally affected by external influence;
- Cost-effective;
- Meaningful to users;
- Related to mission outcome; and
- Able to drive effective decisions and process improvement.

The effectiveness of performance measures is also influenced by how well they are integrated into a benchmarking system. The system needs to be both horizontally and vertically integrated. That is, the measures need to be balanced to provide a complete assessment of the management of a project and be combinable across projects to assess the performance of the program and across programs to assess the impact of department-level policies and procedures. If any organizational entity can identify a measure that has meaning and identity throughout an organization, such a measure is very valuable and should be the goal of performance measure development.

The committee’s suggested performance measures are presented in four sets: project-level input/process (Table 2.1), project-level output/outcome (Table 2.2), program- and department-level input/process (Table 2.3), and program- and department-level output/outcome (Table 2.4). Program- and department-level output/outcome measures are also the roll-up data of the project-level output/outcome measures. The program-level measures are also intended to be used throughout DOE. The tables describe the measures, explain how they are calculated, and specify the frequency with which data should be collected or updated.

Tables 2.1 through 2.4 include 30 performance measures. Taken individually, these measures lack robustness, but when they are analyzed as a group they provide a robust assessment of the individual variability and dependency of the performance measures. The adequacy of each performance measure individually is also limited, but combined they provide an assessment of the overall quality of project management for individual projects as well as the overall success of programs and the department. If the metrics are applied consistently over time and used for internal and external benchmarking, as described in Chapter 3, they will provide the information needed for day-to-day management and long-term process improvement.

REFERENCES

DOE (U.S. Department of Energy). 2000. Program and Project Management for Acquisition of Capital Assets (Order O 413.3). Washington, D.C.: U.S. Department of Energy.

EOP (Executive Office of the President). 1998. A Report on the Performance-Based Service Contracting Pilot Project. Washington, D.C.: Executive Office of the President.

FFC (Federal Facilities Council). 2004. Key Performance Indicators for Federal Facilities Portfolios. Washington, D.C.: The National Academies Press.

Hatry, H. 1999. Performance Measurement: Getting Results. Washington, D.C.: Urban Institute Press.

NRC (National Research Council). 2004a. Progress in Improving Project Management at the Department of Energy, 2003 Assessment. Washington, D.C.: The National Academies Press.

NRC. 2004b. Intelligent Sustainment and Renewal of Department of Energy Facilities and Infrastructure. Washington, D.C.: The National Academies Press.

NRC. 2004c. Investments in Federal Facilities: Asset Management Strategies for the 21st Century. Washington, D.C.: The National Academies Press.

NYSOT (New York State Office for Technology). 2003. The New York State Project Management Guide Book, Release 2. Chapter 4, Performance Measures. Available online at http://www.oft.state.ny.us/pmmp/guidebook2/. Accessed March 14, 2005.

Trimble, D. 2001. How to Measure Success: Uncovering the Secrets of Effective Metrics. Loveland, Colo.: Quality Leadership Center, Inc. Available online at http://www.prosci.com/metrics.htm. Accessed April 25, 2005.

TABLE 2.1 Project-Level Input/Process Measures

|  | Project-Level Input/Process Measure | Comments | Calculation | Frequency |
| --- | --- | --- | --- | --- |
| 1. | Implementation of project execution plan | Assumes appropriate plan has been developed and approved per O 413.3. | Assessment scale from 1 (poor) to 5 (excellent). Poor: few elements implemented. Excellent: all elements required to date implemented. | Monthly |
| 2. | Implementation of project management plan | Assumes appropriate plan has been developed and approved per O 413.3. | Assessment scale from 1 (poor) to 5 (excellent). Poor: few elements implemented. Excellent: all elements required to date implemented. | Monthly |
| 3. | Implementation of project risk management plan | Assumes appropriate plan has been developed and approved per O 413.3. | Assessment scale from 1 (poor) to 5 (excellent). Poor: plan not reviewed since approved or few elements implemented. Excellent: plan reviewed and updated monthly and all elements required to date implemented. | Monthly |
| 4. | Project management staffing | Is project staffing adequate in terms of number and qualifications? | Assessment scale from 1 (poor) to 5 (excellent). | Quarterly |
| 5. | Reliability of cost and schedule estimates | This measure is collected for each baseline change, and reports include current and all previous changes. | Number of baseline changes. For each baseline change, (most recent baseline less previous baseline) divided by previous baseline. | Quarterly |
| 6. | Accuracy and stability of scope | Does the current scope match the scope as defined in CD-0? | Assessment of the impact of scope change on project costs and schedule on a scale from 1 (substantial) to 5 (none). Cost impacts of scope changes since CD-0 are … Schedule impacts of scope changes since CD-0 are … | Quarterly |
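The calculation for measure 5 in Table 2.1 (reliability of cost and schedule estimates) can be sketched as follows. This is only an illustration of the stated formula, (most recent baseline less previous baseline) divided by previous baseline, applied to each successive change; the function name and sample figures are hypothetical.

```python
def baseline_change_ratios(baselines):
    """Return the fractional change for each successive baseline revision.

    `baselines` lists approved baseline values in chronological order
    (for example, cost in $ millions or duration in months); the first
    entry is the original baseline.
    """
    if any(b <= 0 for b in baselines):
        raise ValueError("baselines must be positive")
    return [
        (current - previous) / previous
        for previous, current in zip(baselines, baselines[1:])
    ]


# Hypothetical cost baselines in $ millions: original, then two approved changes.
cost_baselines = [100.0, 110.0, 132.0]
ratios = baseline_change_ratios(cost_baselines)
number_of_changes = len(ratios)

print(number_of_changes, ratios)
```

Reporting the full list rather than only the latest ratio matches the table's comment that reports include the current and all previous changes.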

TABLE 2.2 Project-Level Output/Outcome Measures

|  | Project-Level Output/Outcome Measure | Comments | Calculation | Frequency |
| --- | --- | --- | --- | --- |
| 1. | Cost growth |  | (Estimated cost at completion less CD-2 baseline cost) divided by CD-2 baseline cost. | Monthly |
|  |  |  | (Actual cost at completion less CD-2 baseline cost) divided by CD-2 baseline cost. | End of project |
| 2. | Schedule growth |  | (Estimated duration at completion less CD-2 baseline duration) divided by CD-2 baseline duration. | Monthly |
|  |  |  | (Actual duration at completion less CD-2 baseline duration) divided by CD-2 baseline duration. | End of project |
| 3. | Phase cost factors | Use CD phases | Actual project phase cost divided by actual cost at completion. | End of project |
| 4. | Phase schedule factors | Use CD phases | Actual project phase duration divided by total project duration. | End of project |
| 5. | Preliminary engineering and design (PE&D) factor |  | PE&D funds divided by final total estimated cost. | End of project |
| 6. | Cost variance | As currently defined by EVMS | Budgeted cost of work performed less actual cost of work performed. | Monthly |
| 7. | Cost performance index | As currently defined by EVMS | Budgeted cost of work performed divided by actual cost of work performed. | Monthly |
| 8. | Schedule variance | As currently defined by EVMS | Budgeted cost of work performed less budgeted cost of work scheduled. | Monthly |
| 9. | Schedule performance index | As currently defined by EVMS | Budgeted cost of work performed divided by budgeted cost of work scheduled. | Monthly |
| 10. | Safety performance measures | As defined by DOE environment safety and health (ES&H) policies | Total recordable incident rate (TRIR) and days away restricted and transferred (DART). | Monthly |
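The monthly calculations in Table 2.2 (measures 1, 2, and 6 through 9) reduce to a few arithmetic expressions. The sketch below applies them to one hypothetical status record; the `ProjectStatus` class, its field names, and the sample figures are assumptions made for illustration and do not reflect the data layout of PARS or any EVMS tool.

```python
from dataclasses import dataclass


@dataclass
class ProjectStatus:
    """Hypothetical monthly status record for one project."""
    cd2_baseline_cost: float
    estimated_cost_at_completion: float
    cd2_baseline_duration: float
    estimated_duration_at_completion: float
    bcws: float  # budgeted cost of work scheduled
    bcwp: float  # budgeted cost of work performed
    acwp: float  # actual cost of work performed


def growth(estimate, baseline):
    """(Estimate less baseline) divided by baseline."""
    return (estimate - baseline) / baseline


def monthly_measures(p):
    return {
        "cost_growth": growth(p.estimated_cost_at_completion, p.cd2_baseline_cost),
        "schedule_growth": growth(
            p.estimated_duration_at_completion, p.cd2_baseline_duration
        ),
        "cost_variance": p.bcwp - p.acwp,
        "cost_performance_index": p.bcwp / p.acwp,
        "schedule_variance": p.bcwp - p.bcws,
        "schedule_performance_index": p.bcwp / p.bcws,
    }


# Hypothetical figures: costs in $ millions, durations in months.
status = ProjectStatus(
    cd2_baseline_cost=200.0,
    estimated_cost_at_completion=220.0,
    cd2_baseline_duration=48.0,
    estimated_duration_at_completion=54.0,
    bcws=50.0,
    bcwp=45.0,
    acwp=60.0,
)
measures = monthly_measures(status)
print(measures)
```

In this example the negative variances and the indices below 1.0 indicate a project that is both over cost and behind schedule for the work performed to date.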

TABLE 2.3 Program- and Department-Level Input/Process Measures

|  | Program- and Department-Level Input/Process Measure | Comments | Calculation | Frequency |
| --- | --- | --- | --- | --- |
| 1. | Project director staffing. | The number of certified project directors offers a shorthand way to assess the adequacy of senior-level staffing for major projects. In addition, information relating to their certification levels and experience can provide useful indicators over time of the health of the project management development process. | Ratio of project directors to the number of projects over $5 million. Ratio of project directors to the total dollar value of projects. Number and percentage of projects where project directors have the appropriate level of certification. Average years of experience of the project director community. | Quarterly |
| 2. | Project support staffing. | By identifying how many federal staff are assigned to a project and then over time measuring the project’s cost and schedule performance, DOE may develop some useful rules of thumb on optimal levels of staffing. To ensure a consistent count, staff should be formally assigned to the project and spend at least 80% of their time working on it. | Ratio of federal staff to the number of projects over $5 million. Ratio of federal staff to the total dollar value of projects. Mix of skill sets found in the federal project staff. | Quarterly |
| 3. | Senior management involvement. | The commitment of DOE leadership to good project management can be an important factor in ensuring continuing progress. This commitment can most easily be seen in the time that leadership devotes to project management issues and events. Senior leadership’s presence at critical decision and quarterly reviews sends a strong signal of the importance DOE attaches to good project performance. | Frequency of critical decision reviews rescheduled. Effectiveness of reviews. Frequency of substitutes for senior leadership scheduled to conduct the reviews. Assessment scale from 1 (poor) to 5 (excellent): … | Annually |
| 4. | Commitment to project management training. | Ensuring that key project management personnel are adequately prepared to carry out their assignments is critical to project management success. The willingness of DOE to commit resources to ensure that staff are able to fulfill their roles is an important indicator of the department’s support for improving project performance. | Adequacy of data available on project staff training. Correlation over time between levels of project staff training and project success. Comparison of the level and amount of training for DOE federal project staff with that for the staff of other departments (e.g., Defense) and the private sector. | Annually |
| 5. | Identification and use of lessons learned. | By effectively using lessons learned from other projects, the federal project director can avoid the pitfalls experienced by others. | Categories for defining good project management from lessons-learned data. Comparison of factors used by DOE with factors used by the private sector to indicate effective project management performance. Suggested assessment from 1 (ineffective) to 5 (highly effective): … | Annually |
| 6. | Use of independent project reviews (external and internal reviews) and implementation of corrective action plans. | Independent reviews can alert project directors to potential problem areas that will need to be resolved as well as provide suggestions for handling these problems. The varied experience of the independent reviewers offers the project director broad insight that goes beyond that available through his or her project team. | Was an external independent review conducted on the project prior to CD-3? Percent of EIR issues addressed by corrective actions. | Quarterly |
| 7. | Number and dollar value of performance-based contracts. | Performance-based contracting methods improve contractors’ focus on agency mission results. An increase in the number and value of such contracts should lead to improved project performance. A 1998 study conducted by the Office of Federal Procurement Policy found cost savings on the order of 15% and increases in customer satisfaction (up almost 20%) when agencies used performance-based contracts rather than traditional requirements-based procurements (EOP, 1998). Much of the success of a performance-based contracting approach results from effective use of a cross-functional team for identifying desired outcomes and establishing effective performance metrics. | Number of performance-based service contracts. Total dollar value of performance-based contracts. Distribution of performance-based contracts across the various DOE programs. Share of performance-based contracts in all service contracts let by the department (dollar value). Percentage of project management staff who have received performance-based contracting training. Number of contracts that employed performance incentives to guide contractor actions. Number of contracts that were fixed price and the number that were cost plus. | Annually |
| 8. | Project performance as a factor of project size and contract type. Number and dollar value of contracts at the following funding levels: under $5 million; $5 million to $20 million; $20 million to $100 million; over $100 million. | Information on the level and size of contracting actions will assist DOE in determining whether there is significant variance in project management performance depending on the overall dollar value of the project. To make this determination, data are needed on project cost and schedule performance for each project against initial targets. | Variance in project cost and schedule performance based on the funding level of the project. Variance in project performance across the programs of the department. Correlation between use of various contracting techniques and project success. | Annually |
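Measure 8 in Table 2.3 groups projects by funding level before comparing performance. A minimal sketch of that grouping follows, using cost growth as the performance value. The table does not say which band a project exactly on a boundary belongs to, so the sketch assumes boundary values fall in the lower band (except $5 million, which falls in the "$5 million to $20 million" band); the function names and sample data are hypothetical.

```python
from statistics import mean, pvariance


def funding_band(value_millions):
    """Assign a project to one of the four funding levels (boundary rule is an assumption)."""
    if value_millions < 5:
        return "under $5 million"
    if value_millions <= 20:
        return "$5 million to $20 million"
    if value_millions <= 100:
        return "$20 million to $100 million"
    return "over $100 million"


def performance_by_band(projects):
    """Summarize cost growth for each funding band.

    `projects` is a list of (total value in $ millions, cost growth fraction).
    """
    grouped = {}
    for value_millions, cost_growth in projects:
        grouped.setdefault(funding_band(value_millions), []).append(cost_growth)
    return {
        band: {
            "projects": len(values),
            "mean": mean(values),
            "variance": pvariance(values),  # population variance within the band
        }
        for band, values in grouped.items()
    }


# Hypothetical portfolio.
portfolio = [(3.0, 0.02), (12.0, 0.10), (18.0, 0.20), (60.0, 0.05), (250.0, 0.40), (150.0, 0.20)]
by_band = performance_by_band(portfolio)
for band, stats in by_band.items():
    print(band, stats)
```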

TABLE 2.4 Program- and Department-Level Output/Outcome Measures

|  | Program- and Department-Level Output/Outcome Measure | Comments | Calculation | Frequency |
| --- | --- | --- | --- | --- |
| 1. | Assessment of project performance. | DOE uses a dashboard-type approach for identifying to department executives those projects that are within budget and on schedule and those that are experiencing various levels of difficulty. An accounting of the number and percent of projects identified as green (variance within 0.90 to 1.15 of baseline cost and schedule), yellow (variance within 0.85 to 0.90 or 1.15 to 1.25 of baseline cost and schedule), or red (baseline variance of cost and schedule <0.85 or >1.25) offers a quick and simple means for assessing overall project performance across the programs over time. Progress is achieved as fewer projects are found with the yellow and red designations.ᵃ | Number and percentages of projects designated as green, yellow, and red. Change in percentages of projects designated as green, yellow, and red over time. | Quarterly |
| 2. | Assessment of the length of time projects remain designated yellow or red. | This measures the effectiveness of senior management review and the ability of project staff to take corrective action. | Average length of time a project is designated yellow. Average length of time a project is designated red. Change in average length of time a project is designated yellow or red for programs over time. | Quarterly |
| 3. | Mission effectiveness. | This measure is intended to integrate project performance measures with facility performance measures.ᵇ It is a comparative measure of an individual project within the program and how effectively the completed project fulfills its intended purposes and if the program has undertaken the “right project.” | Assessment scale from 1 (poor) to 5 (excellent): … | End of project |

ᵃ DOE’s current dashboard assessment prepared for senior management is basically effective. However, it depends on data in PARS that are not currently verified, and there is no process for collecting and analyzing historical data. Also, the committee believes the effectiveness of the dashboard in assessing project performance would be improved if the values for cost and schedule were differentiated and the expected performance were raised to green (0.95 to 1.05), yellow (0.90 to 0.95 or 1.05 to 1.10), and red (<0.90 or >1.10) for cost variance and to green (0.95 to 1.10), yellow (0.90 to 0.95 or 1.10 to 1.20), and red (<0.90 or >1.20) for schedule variance.

ᵇ See Intelligent Sustainment and Renewal of Department of Energy Facilities and Infrastructure, “Integrated Management Approach” (NRC, 2004b, p. 62).
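The dashboard designations described for measure 1 of Table 2.4 can be expressed as a small classification routine. The sketch below encodes both the current thresholds and the tighter cost and schedule thresholds suggested by the committee. It assumes the "variance" is a ratio of current performance to baseline and that a value exactly on a shared boundary (for example, 0.90) takes the more favorable color; neither point is stated in the source, and all names and sample ratios are hypothetical.

```python
from collections import Counter

# Each tuple: (green_low, green_high, yellow_low, yellow_high).
CURRENT = (0.90, 1.15, 0.85, 1.25)
PROPOSED_COST = (0.95, 1.05, 0.90, 1.10)
PROPOSED_SCHEDULE = (0.95, 1.10, 0.90, 1.20)


def classify(ratio, thresholds):
    """Return 'green', 'yellow', or 'red' for a performance-to-baseline ratio."""
    green_low, green_high, yellow_low, yellow_high = thresholds
    if green_low <= ratio <= green_high:
        return "green"
    if yellow_low <= ratio <= yellow_high:
        return "yellow"
    return "red"


def summarize(ratios, thresholds):
    """Count and percentage of projects in each designation."""
    if not ratios:
        raise ValueError("at least one project is required")
    counts = Counter(classify(r, thresholds) for r in ratios)
    total = len(ratios)
    return {
        color: (counts[color], 100.0 * counts[color] / total)
        for color in ("green", "yellow", "red")
    }


# Hypothetical cost ratios for five projects.
cost_ratios = [1.00, 1.12, 1.20, 0.80, 1.30]
current_summary = summarize(cost_ratios, CURRENT)
proposed_summary = summarize(cost_ratios, PROPOSED_COST)
print(current_summary)
print(proposed_summary)
```

Running both sets of thresholds over the same portfolio shows how many projects would change designation if the expected performance were raised as the committee suggests.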