Burroughs Wellcome Fund Evaluation Strategy

Martin Ionescu-Pioggia and Georgine Pion

Until 1993, the Burroughs Wellcome Fund (BWF; www.bwfund.org) was a small corporate foundation with a $35 million endowment. Its primary focus was on funding clinical pharmacology and toxicology research of interest to its parent pharmaceutical company, the Burroughs Wellcome Company. In 1993, BWF received a $400 million gift from the Wellcome Trust, the stockholder of Wellcome pharmaceutical interests worldwide. This substantial infusion of funding allowed BWF to become a private, independent foundation whose mission was to support both underfunded and undervalued areas of science and the early career development of scientists, with an emphasis on funding people rather than projects.

This influx of money also promoted the rapid growth of BWF programs. In 1995 the BWF Board of Directors undertook its first five-year strategic planning effort to allocate these new funds to areas where BWF could have the most impact. One result of this effort was the creation of a “flagship” program, Career Awards in the Basic Biomedical Sciences (CABS). CABS is a five-year $500,000 bridging award targeted at helping talented postdoctoral scientists obtain tenure-track faculty positions and achieve research independence. The program is highly selective and endeavors to launch the careers of future leaders in science.

Given the large investments in CABS and other new programs, the board wanted to determine whether program funds were well spent, whether its newly created programs were achieving their goals, and whether board-appointed advisory committees were selecting the best applicants as recipients of BWF funding. The board requested that staff develop a strategy for evaluating grants to individuals and, to a lesser extent, project-type grants. During this period, the board made its overall approach to evaluation explicit. This paper describes the overall evaluation strategy, the evaluation efforts that have been conducted, and how the results of these evaluations have affected BWF decisions about both program design and investment in the programs’ continued operation.

OVERVIEW OF BWF’S EVALUATION STRATEGY

In determining what the role of evaluation should be in BWF decision making, the board identified the most important principles to incorporate in its evaluation process. These included the following:

- Expert program review was to be conducted by advisory committees of senior scientists.
- Advisory committee and staff review of program activities and grant recipients’ progress were to serve as BWF’s principal method of evaluation.
- Members of the board also would serve as liaisons to each program, providing a vehicle for communication across levels of BWF. In addition to BWF staff program management, liaisons would help ensure that advisory committee activities (i.e., committee meetings in which grantees are selected and awardee progress is reviewed) would be consistent with program goals as set out by the board.
- Annual awardee meetings were an important opportunity for both the board and the advisory committees to meet with the individuals whom BWF had funded and monitor how well grant recipients were doing.
- Data-based outcome evaluations could be initiated, as appropriate, to help inform the board of programs’ progress toward achieving their stated goals. However, they were not to replace advisory committee and staff review as BWF’s principal method of evaluation.

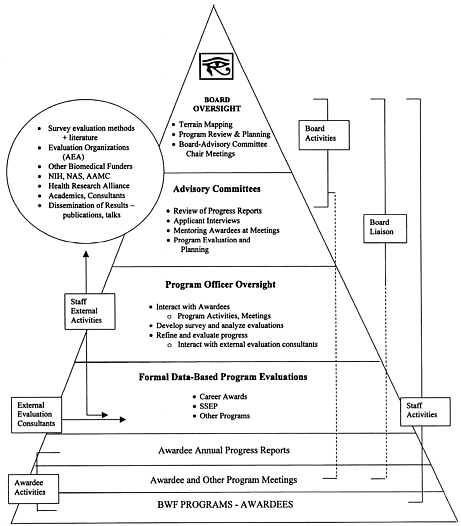

These key elements are illustrated in Figure 1. The figure is pyramid shaped to depict the flow of information and activities upward toward board oversight. The base of the pyramid is comprised of awardees and programs—the principal target for discharging the BWF’s mission of supporting underfunded areas of science as well as the early career development of scientists. The contents of the pyramid correspond to activities or groups involved in the award process. For the three types of BWF participants (board members, board liaisons, and staff) and for awardees, the

FIGURE 1 BWF’s key elements of evaluation.

vertical lines represent lines of activity or communication that link the strata of the pyramid together; solid lines indicate a direct or constant communication or activity, whereas broken lines identify an indirect or intermittent communication or activity. The impact of decision makers increases as the pyramid ascends, and in this regard, the pyramid reflects BWF’s position that advisory committee judgment carries more weight than formal data-based evaluations in the decision-making process. As can be seen, formal data-based evaluation studies form only one of several activities used by BWF to oversee and evaluate its programs.

As BWF’s experience with evaluation has increased, it has added outreach activities to its portfolio of evaluation-related initiatives. Through these activities, BWF shares evaluation methods and results with other funders and policymakers. External staff activities, including interactions with evaluation organizations (e.g., American Evaluation Association, Grantmakers for Effective Organizations) and other biomedical research and training sponsors involved in evaluation, also relay information to BWF, which is then used to guide future plans for evaluation of its programs.

In discussing BWF’s evaluation strategy and how individual evaluation studies would fit into this strategy, the board identified several factors that should guide the conduct of these efforts. That is, BWF should:

- only initiate studies when there is a clear rationale for their conduct;
- conduct outcome evaluations that are prospective (when possible), are empirical, and investigate outcomes relative to major program goals with stated a priori hypotheses;
- employ outside experts to conduct the studies to ensure independence and credibility to findings (such consultants would work closely with staff in designing and executing these studies, and the results of this collaboration should be formally communicated through publications in the literature where appropriate);
- make small but effective dollar investments in evaluation activities; and
- be a “fast follower” in program evaluation rather than investing the capital to become a leader with a separate program evaluation arm.

The following sections summarize the major evaluation activities that were initiated for both “stand-alone” BWF programs and other initiatives that have been supported by BWF through other external organizations. As will be seen, whether awardee surveys were conducted or evaluation technical assistance was provided to awardees, these evaluations were consistent with the board’s guiding principles for assessment of its programs.

Table 1 provides an overview of these efforts and the BWF investment exclusive of staff time and administrative resources. For each program the evaluation activities are identified, along with instances of how the information yielded by these efforts was used by BWF in its oversight of programs. In some instances, uses of BWF evaluations by other organizations are noted. The following sections provide additional detail and discussion.

TABLE 1 A Summary of BWF’s Evaluation Efforts and Their Uses for Five Programs

| BWF Program and Financial Investment | Evaluation Activities | Uses |
| --- | --- | --- |
| Career Award Program in the Biomedical Sciences (CABS): $110 million | | By BWF: By Other Organizations: |
| Student Science Enrichment Program (SSEP): $10.8 million | | By BWF: By Other Organizations: |
| BWF-HHMI 2002 Lab Management Course: BWF portion ~$480,000 | | By BWF: |
| MBL Frontiers in Reproduction (FIR) Course: $1.2 million | | By BWF: |
| American Association of Obstetricians and Gynecologists Foundation (AAOGF) Reproductive Science Fellowships: $1.1 million | | By BWF: |

EVALUATING THE CABS PROGRAM

Description of the Program

The CABS program is modeled on the Markey Charitable Trust Scholars Program, which offered awards until 1991 (the foundation ceased operations in 1998). CABS was aimed at supporting the postdoctoral-to-faculty bridge at a time when large numbers of postdocs stagnated in postdoctoral positions because of the dearth of tenure-track faculty positions at universities. The situation was further exacerbated by the immigration of large numbers of foreign-born postdoctorals to the United States. The lack of tenured faculty positions compared to the eligible pool of candidates remains an issue today (National Academy of Sciences, 2000; National Research Council, 2005).

BWF receives 175 to 200 applications annually for the five-year $500,000 awards that require recipients to devote at least 80 percent of their time to research. With a competitive award rate of 10.7 percent, BWF has made 217 awards, for an investment of approximately $107 million in young scientists’ careers.

Evaluation Efforts

When BWF discussed evaluating the program, there were no similar programs (except for the previously mentioned Markey Charitable Trust program) and no evaluative data from the Markey program to inform its discussion. In addition, few studies had examined the impact of support for dedicated research effort during the postdoctorate or early faculty periods. Tracking evaluations of programs aimed at young scholars did begin to appear shortly thereafter, while data collection for the CABS tracking study was under way (e.g., Armstrong et al., 1997; Willard and O’Neill, 1998).

Tracking Study

Partly because the CABS program was in its early years of operation and partly because systematic data were needed on grantees’ progress toward achieving the program’s intended outcomes, BWF decided to conduct a simple outcome tracking study first. The study design purposively included a small number of critical outcome markers, based on the program’s goals or “markers of success” involved in becoming an independent investigator. Among them were:

- receipt of a tenure-track faculty position at a research-intensive university,
- time elapsed from degree receipt to first faculty appointment and total length of postdoctoral training,
- percentage of time spent in research,
- amount of start-up funding offered by the hiring faculty institution,
- receipt of NIH R01 funding and time to receipt of first R01, and
- number and quality of publications.

Data on these outcomes were collected in an annual survey of CABS recipients, with supplemental information being extracted from two external databases, NIH’s Computerized Retrieval of Information on Scientific Projects (CRISP) and the Institute for Scientific Information’s Web of Knowledge. For the first three CABS classes (1995–1997), data were collected retrospectively on individuals’ activities during previous years of the award; for later cohorts, individuals were surveyed annually throughout the award period. To keep awardee responses free of any bias that might arise when a funder asks recipients to evaluate awards on which they depend, a consultant collected the data independently, and BWF was blinded to the results of the individual surveys. The results were judged against external markers; for example, the average length of CABS postdoctoral study was compared to the average length of postdoctoral training reported by others (e.g., National Academy of Sciences, 2000). Pion and Ionescu-Pioggia published the results of the initial tracking study in 2003.

These data have been updated from the 2003 publication and represent outcomes as of 2003. For the 126 CABS recipients whose award began between 1995 and 1999 (74 Ph.D.s and 52 M.D.s and M.D.-Ph.D.s), the data show that grantees had made substantial strides in developing an independent and productive research career. After spending an average of 1.2 years in BWF-supported postdoctoral training, nearly all had obtained either tenure-track faculty appointments in U.S., Canadian, or European universities (97.6 percent) or tenure-track investigator positions at NIH (1.6 percent). The average length of postdoctoral study at application was approximately 32 months. When combined with the 11-month delay between application and the start of the award and the 1.2 years of BWF-supported postdoctoral training, the total length of postdoctoral study for awardees was approximately 4.8 years. Of those with faculty positions in the United States, 64.0 percent were at universities ranked among the top 25 in terms of NIH funding; the percentage increased to 83.8 percent when the top 50 institutions were considered.
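The total length of postdoctoral study can be reproduced from the intervals reported above. The following is a minimal sketch in Python, assuming that the reported total is the sum of postdoctoral study at application, the delay before the award began, and the average BWF-supported training period; the variable names are illustrative and do not come from the study.

```python
# Intervals reported in the text.
MONTHS_PER_YEAR = 12

postdoc_at_application_months = 32   # average postdoctoral study at application
application_to_award_months = 11     # delay between application and award start
bwf_supported_postdoc_years = 1.2    # average BWF-supported postdoctoral training

# Assumption: the reported total is the sum of all three intervals.
total_months = (
    postdoc_at_application_months
    + application_to_award_months
    + bwf_supported_postdoc_years * MONTHS_PER_YEAR
)
total_years = total_months / MONTHS_PER_YEAR

print(f"Total postdoctoral study: {total_months:.1f} months = {total_years:.1f} years")
```

Under this assumption the three intervals sum to about 57.4 months, which rounds to the 4.8 years cited.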

Approximately 70.4 percent had NIH R01 funding, and this percentage grew to 76.5 percent when only those in U.S. universities—the group most likely to have applied for R01 support—were considered. Their average age at receipt of their R01 was 37.1 compared to 42 nationally (National Research Council, 2005). Examination of detailed data on publications and citations indicated that grantees published an average of 6.0 articles in peer-reviewed journals within the first four years of the award, and approximately half of these articles appeared in top-ranked journals such as Cell, Journal of Biochemistry, Journal of Immunology, Journal of Neuroscience, Molecular and Cellular Biology, Nature, Proceedings of the National Academy of Sciences, and Science.

In 2003 BWF temporarily suspended the annual evaluation survey because the results were not providing new insights into the program. The following year BWF developed an abbreviated version of the evaluation that awardees complete online with their annual progress report, and data are downloaded directly into a database for analysis. Results are shared with grant recipients.

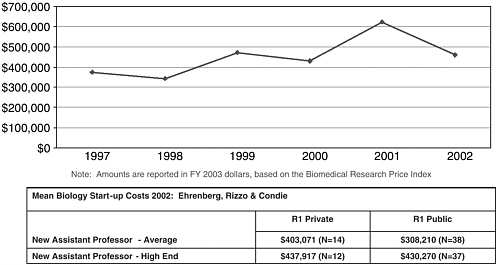

A later analysis compared awardees’ laboratory start-up packages to national averages for newly hired faculty at public and private universities and in average or high-end research areas (see Figure 2). Although direct statistical comparisons were not possible, the data suggest that the average start-up packages for CABS grantees hired in 2002 were higher than the national averages for new assistant professors involved in either “high-end or regular research” at public and private universities (Ehrenberg et al., 2003).

COMPARATIVE STUDY

The success of CABS grantees provides evidence that the CABS program is a sound investment for BWF. At the same time, their success raised the question of program effectiveness. Would they have excelled to the same degree without this award? After BWF completed the initial

FIGURE 2 Median value of start-up packages by year hired as faculty for 1997–2002 CABS grantees compared to results of a national survey.

tracking study, a second study, termed the “comparative study,” was launched to compare the performance of CABS recipients with individuals who had applied to the program but had not been selected to receive an award.

In designing the study, emphasis was placed on examining only those research-related career outcomes for which data were available from existing sources (e.g., NIH grants and publications). Although reliance on extant data has certain limitations (e.g., the restriction of outcomes to only those in available data sets), it also has certain advantages. For example, the data sources used were reasonably complete, resulting in very little, if any, missing data on the outcomes measured and no additional response burden on either CABS recipients or their counterparts who were not chosen for an award. It also was considerably less expensive than surveying award recipients and applicants who did not receive an award. The combined cost of the tracking and comparative studies was $90,000, exclusive of staff time investments, or roughly 0.1 percent of award payout (a fraction of about 0.001). This is far less than national guidelines for investments in evaluation, which range from 2.5 to 10 percent of dollars awarded.
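The evaluation cost share can be checked directly. The sketch below is illustrative only: it assumes that award payout is close to the approximately $107 million CABS investment cited earlier, since the exact payout figure used in the original calculation is not given.

```python
evaluation_cost = 90_000      # tracking + comparative studies, excluding staff time
award_payout = 107_000_000    # assumption: approximate CABS investment cited earlier

share = evaluation_cost / award_payout   # as a fraction
share_percent = share * 100              # as a percentage

# National guideline range quoted in the text, in percent of dollars awarded.
guideline_low_percent, guideline_high_percent = 2.5, 10.0

print(f"Evaluation share: {share:.4f} as a fraction, {share_percent:.2f} percent")
print(f"Below guideline minimum: {share_percent < guideline_low_percent}")
```

Under this assumption the share is a little under 0.1 percent of payout, that is, a fraction of roughly 0.001, which is well below the 2.5 percent lower bound of the guideline range.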

Information was gathered for the 781 individuals who applied to the CABS program between 1996 and 1999. The data included (1) current employment, including type of employer and position; (2) receipt of NIH research grants and career development awards; (3) number of publications, beginning from the year following application through 2003; and (4) the quality of these publications, based on the type of journals in which they appeared and citation counts. Background information also was gathered from the application files, including months of postdoctoral training, gender, race/ethnicity, citizenship, and research intensiveness of the nominating institution. The study includes an analysis of three descending levels of success. The top level is defined as having obtained a faculty position, an NIH R01 grant, and publication in a top-tier journal. The second level consists of having achieved only the faculty position and grant; the third level consists of the faculty position only.
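The three descending levels of success can be expressed as a simple classification rule. The sketch below is one possible reading of the definitions above, not the study’s actual coding scheme; the function name, argument names, and the treatment of a faculty member with a top-tier publication but no R01 are assumptions.

```python
from typing import Optional


def success_level(has_faculty_position: bool,
                  has_nih_r01: bool,
                  has_top_tier_publication: bool) -> Optional[int]:
    """Return 1 (top), 2, or 3 for the three descending levels, else None."""
    if not has_faculty_position:
        return None  # every level requires a faculty position
    if has_nih_r01 and has_top_tier_publication:
        return 1     # faculty position + R01 + top-tier publication
    if has_nih_r01:
        return 2     # faculty position + R01, no top-tier publication
    # Assumption: a faculty position without an R01 counts as level 3,
    # even if the person has a top-tier publication.
    return 3


# Hypothetical applicants, for illustration only.
examples = {
    "applicant_a": (True, True, True),
    "applicant_b": (True, True, False),
    "applicant_c": (True, False, False),
    "applicant_d": (False, False, True),
}
for name, outcomes in examples.items():
    print(name, success_level(*outcomes))
```

Coding the levels this way makes them mutually exclusive, so each applicant in any comparison group falls into at most one level.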

The major comparisons of interest are those that contrast the performance of CABS award recipients on progress toward establishing an independent research career with two groups of applicants to the program: the interviewed-only group, those who were interviewed by the advisory committee but who were not chosen to receive an award, and the disapproved group, those who were not interviewed. Given the differences in research training, research interests, and work responsibilities, analyses are being performed separately for M.D.s and M.D.-Ph.D.s and for Ph.D.s. Because of how the selection process is structured, differences between the CABS grantees and the interviewed-only group are expected to be noticeably smaller than those between the CABS and the disapproved groups.

In any merit-based program, the challenge is to credibly attribute differences in performance that favor CABS recipients to the award itself, rather than to talent or other factors unrelated to the award. Where possible, analyses have been performed to adjust for initial preexisting group differences on such variables as months of postdoctoral training at the time of application and quality of the postdoctoral training institution. Results of the comparative study have been submitted for publication (Pion and Ionescu-Pioggia, in press). A long-term outcome analysis of approximately 60 to 80 CABS awardees who have been reviewed for tenure is planned.

Use of Evaluation Results

The evaluation data gathered from both BWF staff and formal evaluation studies have informed CABS program decisions, helped fine-tune the award to meet the needs of recipients, and validated BWF’s method of advisory committee application review. They also have been used by outside organizations involved with issues related to early-career investigators. Known uses of BWF’s evaluation efforts include the following:

- Based on data from the tracking and comparative studies, as well as information from standard advisory-committee-based reviews of recipients’ progress, the board deemed that CABS was meeting its intended goals and decided to continue support for the program and increase the number of awards.
- Outcome data provided to awardees have helped them gauge their progress relative to their awardee counterparts and are used by BWF to leverage additional university funding if the initial faculty offer is inadequate compared to survey averages.
- Finding that approximately 60 percent of awardees “carry over” more than $100,000 of award funds upon award completion led to implementation of a policy allowing no-cost extensions for three to four years after the award period has ended. This provides an opportunity for the grantee to have research support in the event of a lapse of funding from other research sponsors or to have funding to conduct investigations in other “risky” areas of research that may not be initially fundable through other sources.
- Based on outcome surveys and staff interviews with grantees, the patent policy for CABS recipients was revised to allow patents to either become the property of the investigator or to defer to institutional policy, supplemental grants were developed to support collaborative research and support awardee-initiated symposia at professional meetings, and the required minimum of one additional year of postdoctoral study for grantees who obtained faculty positions between the time of application and the start date of the award was waived.
- Review of grantee progress reports and results of the tracking study revealed that grantees often had other sources of research support. Award policies were revised to allow them to receive awards from multiple private or public sources.

One important aspect of both the CABS outcome tracking study and the CABS comparative study is their value in informing policy recommendations. A 2000 National Academy of Sciences report, Enhancing the Postdoctoral Experience for Scientists and Engineers: A Guide for Postdoctoral Scholars, Advisers, Institutions, Funding Organizations, and Disciplinary Societies, called attention to the difficult training and funding environment for postdoctoral scholars and urged the government and others to act to remedy these issues. Consequently, in 2003, NIH convened a working group to better articulate early-career training and funding issues. BWF was asked to present data from the newly published CABS tracking study.

This working group instigated the formation of a National Research Council committee to review the early career development of biomedical investigators. The committee’s report, Bridges to Independence: Fostering the Independence of New Investigators in Biomedical Research, was issued in 2005 (National Research Council, 2005). Given that BWF’s postdoctoral-faculty bridging award had 200 recipients and the only outcome data available, BWF also was invited to present findings from its evaluations at the initial meeting of the Bridges Committee (Ionescu-Pioggia 2004). Data presented by BWF suggest that bridging awards appear to promote early independence; however, causality cannot confidently be attributed to the award because of the design limitations inherent in the study. Early indicators of program outcomes provided support for one of the major recommendations from the committee:

NIH should establish a program to promote the conduct of innovative research by scientists transitioning into their first independent positions. These research grants, to replace the collection of K22 awards, would provide sufficient funding and resources for promising scientists to initiate an independent research program and allow for increased risk-taking during the final phase of their mentored postdoctoral training and during the initial phase of their independent research effort. The program should make 200 grants available annually of $500,000 each, payable over five years…. The award amount and duration [are] similar to [those] of the Burroughs Wellcome Career Awards [sic], which have shown success at fostering the independence of new investigators. (National Academy of Sciences, 2005, pp. 9–10)

The influence of BWF outcome data on the Bridges Report illustrates that a relatively small dollar investment in evaluation can have a major influence in potentially changing training and funding policies at a national level.

STUDENT SCIENCE ENRICHMENT PROGRAM

The board also recommended during its initial terrain mapping that BWF evaluate its Student Science Enrichment Program (SSEP). Unlike the case for CABS, the impetus to evaluate SSEP came not from the size of the financial investment in the program (i.e., $1 million annually for SSEP versus $6.5 million to $13 million for CABS) but from the fact that science education was a new funding area for BWF and annual evaluations could help program development and fine-tuning.

Description of the Program

The goals of SSEP are to provide enrichment activities to students in grades 6 through 12 for the purpose of enhancing their interest in science. The rationale underlying this effort is that increased interest and competency in science will ultimately lead to the longer-term goal of priming the science pipeline and research talent and capability. SSEP first offered awards in 1996; since then its programs have involved more than 23,000 students, and more than $10.8 million has been awarded to programs at 100 sites. The corresponding investment in evaluation and capacity-building activities for SSEP is 3 percent.

Evaluation Efforts

The general intent of the program is to inculcate or improve attitudes, such as enthusiasm for and learning about science, and to increase interest in participating in other science enrichment programs and, perhaps, choosing a career in science or technology. Some specific short-term measurable goals of the program include generating increased enthusiasm for science, learning about the scientific process, and improving scientific competency. In some ways SSEP is a difficult program to evaluate. Given that the programs provide “small doses” of science education “treatment” (e.g., two-week summer camps) and that longitudinal data from previous evaluative studies are scarce, the extent to which the intended long-term outcomes should occur is not clear. Also, tracking participants (let alone individuals in comparison groups) over an extended period of time to determine whether such outcomes as enrolling in college and majoring in one of the sciences occur is both costly and difficult. As a result, the SSEP evaluation focuses on short-term, postintervention results.

Outcome data on students’ perceptions of their competence and enthusiasm for science, interest in learning science, and overall satisfaction with the program have been collected annually since 1996. Ratings from the evaluators on program success based on student feedback data and observations of each project also suggest the program is accomplishing its goals.

An interesting facet of the SSEP evaluation initiative is that it includes ongoing evaluation capacity building for all sites to strengthen evaluation competency within funded programs and improve the quality of outcome data provided by each site to the summary SSEP outcome database. Capacity building supports major improvements to evaluation capability across sites, helps ensure that the data submitted by each site are valid, and indirectly enhances networking between BWF and individual sites and among program grant recipients through an annual evaluation meeting at which new grant recipients are convened.

Use of Evaluation Results

Based on outcome data and the evaluation team’s observations of project activities, several characteristics appeared to be linked to program success, which led to significant restructuring of the program. Moreover, the success of the SSEP program informed the board’s decision to continue and to expand program funding and create the North Carolina Science, Mathematics and Technology Education Center (http://www.ncsmt.org).

Following a review of the SSEP program and its evaluation, the Kauffman Foundation in Kansas City is developing a program modeled after SSEP—perhaps the most valid indicator of program success.

BWF-HHMI LAB MANAGEMENT COURSE

Description of the Program

BWF and the Howard Hughes Medical Institute (HHMI) conceived and developed a comprehensive 13-section, five-day course in laboratory management for advanced postdocs and new faculty—the first such course of its kind (Cech and Bond, 2004). The impetus for the course arose from survey and interview data that BWF collected on the training needs of CABS awardees; a factor analysis of these data pointed to the need for such a course.

To be successful, new investigators must employ management techniques and interpersonal skills in setting up and running their laboratories, which is very similar to running a small business; however, these skills are not taught in graduate or medical school or during the postdoctorate. Making the course available to awardees is a form of “career insurance” on BWF’s $115 million investment in early-career scientists.

To date, 220 BWF and HHMI postdoctoral fellows and new faculty have taken part in the course. In the 2005 course, 17 “partners” from universities and professional societies who collaborated on the development of the course attended and committed to offering smaller courses at their institutions. At the policy level, the BWF-HHMI laboratory management course provides a national and an international model for training new investigators in nonscientific management topics.

Evaluation Efforts

BWF developed the evaluation for the 2002 course, which involved surveys of course participants after each session, at the completion of the course, and at six months and one year after the course. These data were then used to determine whether to repeat the course and to identify areas that needed improvement. Based on evaluation data, 86 percent of participants said the “course met or exceeded expectations” and 98 percent would recommend the course to a colleague. As a result, the course was revised and offered for a second and final time in 2005. Making the Right Moves: A Practical Guide to Scientific Management for Postdocs and New Faculty was published in 2003, making the core course text available to all postdoctoral fellows and those responsible for postdoctoral training. Approximately 10,000 printed copies have been distributed. The guide is available free at www.hhmi.org/labmanagement and an updated version along with a guide to help institutions develop their own courses will be available on the Web site in early 2006.

Efforts are under way to translate the guide into both Japanese and Chinese. BWF is examining the feasibility of producing an international guide more appropriate for developing countries. Perhaps the best evaluation of the course comes from download statistics for the guide from the HHMI Web site. From its release in March 2003 through April 2005, the guide was downloaded 75,000 times, in addition to nearly 74,000 individual chapter downloads. Table 2 shows chapter downloads from March 2003 through October 2004. The individual chapter downloads are indicative of young investigator training needs across the country. Such large numbers of downloads point to a clear need for laboratory management training in the United States.

Uses of Evaluation Results

Evaluation data from the first course were a critical element in deciding to reinvest in the course a second time and played a significant role in modifying the second version of the course. National interest in the course, evidenced by the number of guide downloads, partially led BWF to create the Partners Program, an effort involving 17 collaborators at universities and professional societies to help make training available to early-career scientists across the country.

TABLE 2 BWF-HHMI Laboratory Management Guide Web Site Downloads by Chapter (October 2004)

| N Downloads | Chapter |
| --- | --- |
| 10,619 | Getting Funded |
| 8,948 | Data Management and Lab Notebooks |
| 7,380 | Obtaining and Negotiating a Faculty Position |
| 4,016 | Lab Leadership—Revised: Staffing Your Laboratory |
| 3,698 | Time Management |
| 3,291 | Mentoring and Being Mentored |
| 3,174 | Getting Published and Increasing Your Visibility |
| 2,814 | Lab Leadership—Revised: Defining and Implementing Your Mission |
| 2,682 | The Investigator Within the University Structure |
| 2,649 | Project Management |
| 2,207 | Collaborations |
| 2,070 | Course Overview and Lessons Learned |
| 1,616 | Tech Transfer |
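The chapter counts in Table 2 can be tallied to see how concentrated the demand is. The following Python sketch uses only the figures reported in the table; the variable names are illustrative.

```python
# Chapter download counts as reported in Table 2 (through October 2004).
chapter_downloads = {
    "Getting Funded": 10_619,
    "Data Management and Lab Notebooks": 8_948,
    "Obtaining and Negotiating a Faculty Position": 7_380,
    "Lab Leadership—Revised: Staffing Your Laboratory": 4_016,
    "Time Management": 3_698,
    "Mentoring and Being Mentored": 3_291,
    "Getting Published and Increasing Your Visibility": 3_174,
    "Lab Leadership—Revised: Defining and Implementing Your Mission": 2_814,
    "The Investigator Within the University Structure": 2_682,
    "Project Management": 2_649,
    "Collaborations": 2_207,
    "Course Overview and Lessons Learned": 2_070,
    "Tech Transfer": 1_616,
}

total = sum(chapter_downloads.values())

# Rank chapters by downloads and compute the share held by the top three.
ranked = sorted(chapter_downloads.items(), key=lambda item: item[1], reverse=True)
top_three = ranked[:3]
top_three_share = sum(count for _, count in top_three) / total

print(f"Total chapter downloads: {total:,}")
for title, count in top_three:
    print(f"{title}: {count:,} ({count / total:.1%})")
print(f"Top three chapters combined: {top_three_share:.1%}")
```

The 13 chapters total 55,164 downloads through October 2004, and the three most-downloaded chapters (funding, data management, and obtaining a faculty position) account for just under half of them. This total is smaller than the nearly 74,000 chapter downloads cited in the text, presumably because that figure runs through April 2005.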

The need for training in laboratory management was another conclusion of the Bridges to Independence Committee:

Postdoctoral scientists should receive … improved skills training. Universities … should broaden educational opportunities for postdoctoral researchers to include, for example, training in laboratory and project management, grant writing and mentoring. (National Academy of Sciences, 2005, p. 6)

Similarly, the need for such training is noted in Sigma Xi’s recently released national survey of postdoctorals and their training needs, Doctors Without Orders (Davis, 2005).

MARINE BIOLOGICAL LABORATORIES: FRONTIERS IN REPRODUCTION: MOLECULAR AND CELLULAR APPROACHES

Description of the Program

BWF first codeveloped and funded this six-week intensive course in 1997 as part of its support for the underfunded and undervalued area of reproductive science, an area with both scientific challenges and distinct manpower development and retention challenges. The course aims to train early-career reproductive researchers in state-of-the-art techniques, to help them publish and obtain funding, and to retain them in the area. BWF’s investment in the course is $1.2 million.

Evaluation of the Course

Following an initial three years of funding and one three-year renewal, BWF wanted to know whether this course was meeting its goals given the $800,000 investment at the second renewal. BWF staff, in conjunction with the evaluation consultant and course directors, designed a tracking study to determine outcomes from the first six years of the course, which may be the first formal outcome study of “small-dose training treatments” like a six-week course.

BWF gave course directors a $10,000 grant to contract the evaluation. The study is complete and the manuscript is currently under review with the Journal of Reproductive Biology. Among other findings, outcomes demonstrate increased research capability, participants’ retention in reproductive research, and increased publications in top-ranked reproductive biology journals (Pion et al., in press). These are impressive findings for a six-week training intervention when one considers the ratio of time spent at the course to the much longer period included in follow-up and a lifetime career.

Uses of the Evaluation

The study was submitted to the board along with other supporting data in 2002, and the board decided to fund the course for an additional three years. The evaluation contributed significantly to the board’s approval of a third three-year grant of $430,000.

AMERICAN ASSOCIATION OF OBSTETRICIANS AND GYNECOLOGISTS FOUNDATION REPRODUCTIVE SCIENCE FELLOWSHIPS

Description of the Program

Again, as part of its earlier efforts in reproductive science, BWF invested approximately $1.1 million over 10 years in an external reproductive science fellowship. The fellowship was designed to provide support for postdoctoral research training of M.D.s in reproductive sciences.

Evaluation Efforts

Board terrain mapping conclusions, combined with CABS outcomes, led BWF to conclude that it receives better results when it manages programs it develops rather than supporting external endeavors. During the terrain mapping exercise, BWF wanted to know whether outcomes of the AAOGF fellowship merited continued support for the fellowship. Staff conducted an informal comparison of the fellowship to CABS data, concluding initially that CABS recipients did significantly better than recipients of the external fellowship in terms of publications and external grant support. The board decided to sunset its support of the fellowship on this basis, giving AAOGF a $20,000 grant to formally evaluate the fellowship with the goal of improving outcomes.

Uses of the Evaluation

Because the more formal evaluation of the fellowship program was conducted after the board’s decision to no longer provide support, there were no direct uses of its results. The original study design utilized survey methods to capture previous awardees’ evaluations of the fellowship with the goal of making constructive changes to the fellowship structure; however, record-keeping problems involving the recipient organization prevented a survey from being fielded. Consequently, an outcome evaluation was completed using external sources, which prevented feedback about the fellowship from being gathered, and a potential restructuring of the fellowship was not completed. Interestingly, the findings from the external study showed better outcomes than the staff evaluation in some areas. In other areas, however, the performance of AAOGF fellows was not equivalent to that of CABS recipients. These discrepancies provide a good warning about using informal evaluations to make program and funding decisions (Pion and Hammond, in press).

Some important evaluation lessons resulted from this experience. First, retrospective evaluations may be impossible to complete in a way that satisfies the initial goal of the evaluation. Second, caution should be exercised when planning to rely on records that are beyond the control of the funding agency. If evaluations of externally funded grants are planned, evaluation capacity building should be done with the recipient from the outset.

COLLABORATIVE EVALUATION ACTIVITIES OF THE HEALTH RESEARCH ALLIANCE

The Health Research Alliance (HRA) fosters collaboration among not-for-profit organizations that support health research with the goal of advancing biomedical science and its rapid translation into applications that improve human health (www.healthra.org). Guiding the development of the HRA have been 16 funding organizations, among them the Doris Duke Charitable Foundation, the Howard Hughes Medical Institute, the American Heart Association, the March of Dimes, the American Cancer Society, and BWF.

Within the area of evaluation, two of HRA’s principal interests are to facilitate the process by which members can conduct collaborative evaluations with other member foundations and relevant groups (e.g., Association of American Medical Colleges [AAMC], NIH) and to raise the level of program evaluation capacity among member foundations.

HRA’s gHRAsp Database (grants in the Health Research Alliance shared portfolio) is a database of health research awards made by nongovernmental funders that is currently under development. Data from gHRAsp can potentially be linked with data from external databases, such as those of AAMC and NIH. gHRAsp by itself has important evaluation potential because it should be possible, using the database, to compare program outcomes across funders. External database linkages exponentially expand such capability. Potential evaluations include cross-funder outcome evaluations, examinations of key program aspects supporting grant recipient success, background characteristics of successful scientists, institutional environments that promote grantee success, long-term funding patterns, and, in particular, the use of member organizations’ outcome data as benchmarks for comparative studies.

HRA is also developing a white paper identifying core outcome variables for physician-scientist career development programs. BWF is active in helping HRA explore the evaluative potential of gHRAsp and helped convene a meeting between member foundations, NIH, and AAMC to mutually explore the evaluative possibilities posed by the database.

BWF is also leading a project for HRA to raise the level of program evaluation capacity by sharing members’ experiences with evaluation in a way that may also reduce evaluation costs for funders. The project will share survey methods and instruments from member organizations and will develop resources for practical evaluation of programs. Shared information leverages resources for evaluation among foundations since many organizations have small or no budgets for evaluation. HRA’s intention is to disseminate this information on the Web and in print as a shared resource for biomedical funders.

There is a pressing need to educate the funding community in evaluation given the reliance of the Bridges Committee on BWF outcome data and the report’s recommendations for assessment:

Ongoing evaluation and assessment are critical…. Ideally, this effort should be carried out in collaboration with foundations that have similar programs in order to obtain comparable data on a core set of outcomes. (p. 5-5)

HRA and gHRAsp have the potential to answer basic funding and grant recipient questions that heretofore have remained unaddressed and to help improve evaluations conducted not only by private biomedical funders but also by important related funding organizations, such as NIH.

SUMMARY

Although very modest, BWF’s investment in monitoring and assessing the outcomes of its programs has supplied useful data to inform the fund’s decisions about program design and continued investment in programs. In developing plans for new studies, staff plan to work closely with the BWF board, as was the case with the first CABS tracking study, to develop evaluations that address the outcomes of most interest and that are acceptable to the board at the outset.

The board had an interesting response to the comparative study that may be of value to share with other foundations whose boards consist of biomedical scientists and corporate executives. Although the comparative study employs only modestly sophisticated methods, the board preferred outcome studies with clear, simple untransformed variables. The board’s reaction, and those of other organizations, to a well-designed study with several transformed or corrected variables (Fang and Meyer, 2003) was to dismiss the findings, even though they were favorable, in preference to expert opinion. These reactions argue for design simplicity and for close collaborations with governance in planning outcome evaluations.

As this paper was being written, the BWF Board of Directors conducted a thorough review of the fund’s evaluation strategy eight years after the original approach was formulated. The board reaffirmed the initial strategy and added several new foci. BWF should:

- Collaborate with organizations to develop a better understanding of the environment for research. (For example: Is the academic job market contracting or expanding? How are foundation awards perceived by academic administrators, and do they provide awardees with any academic career advantage?) Examine the field of interdisciplinary science to characterize the movement to team science, the sorts of interdisciplinary science being conducted, and the career challenges and paths of investigators.

- Review and possibly attempt to improve methods of expert review.

- Conduct evaluations that provide grant recipients with useful information for negotiating the challenges to achieving research independence.

In conclusion, two points are worthy of special emphasis. First, BWF’s most concerted efforts at evaluation were directed at CABS, a program with a high investment and one that could be considered unique or innovative. When CABS was fielded in 1994, only the Markey Trust had provided similar awards through its program for scholars. Although the Markey program had reached the stage where no new awards were being made, there are now several other new (albeit smaller) bridging award programs based on the BWF-Markey model (National Research Council, 2005, pp. 65–72) that were probably created partially because of CABS’s demonstrated success. Thus, for foundations that choose not to devote considerable resources to evaluation, the most reasonable approach, and the one most likely to be beneficial to awardees, the foundation, and the field, is to focus systematic evaluation on unique and innovative programs.

Second, one way to strengthen a foundation’s evaluation capacity is for it to collaborate with other foundations with similar programs and identify common outcome measures, along with feasible ways to measure and collect data on these outcomes. If this collaboration succeeds and data collection is implemented, there will be several benefits. Not only will foundations have data on their own grant recipients that can help them assess whether their programs are achieving their intended goals, but data from similar programs can also be used to construct “benchmarks” for key outcomes, providing context for gauging their recipients’ outcomes against those of similar initiatives. Ideally, this collaborative effort among foundations and other sponsors also can strengthen the ability to implement prospective and more rigorous designs that can provide a better understanding of which programs work best for whom and under what circumstances. Consequently, the design of programs can be enhanced, biomedical research training can be improved, and the research enterprise can be strengthened.

ACKNOWLEDGMENTS

The authors acknowledge Russ Campbell, Rolly Simpson, Glenda Oxendine, Debra Holmes, and Barbara Evans for their assistance with evaluation information and Queta Bond, BWF president, for encouraging and supporting evaluation activities.

REFERENCES

Armstrong, P. W., et al. 1997. Evaluation of the Heart and Stroke Foundation of Canada Research Scholarship Program: Research productivity and impact. Canadian Journal of Cardiology 15(5):507–516.

Burroughs Wellcome Fund and Howard Hughes Medical Institute. 2003. Making the Right Moves: A Practical Guide to Scientific Management for Postdoctorals and New Faculty.

Cech, T., and E. Bond. 2004. Managing your own lab. Science 304:1717.

Davis, G. 2005. Doctors without orders. American Scientist 93(3, Suppl). Available online at http://postdoc.sigmaxi.org/results.

Ehrenberg, R. G., M. J. Rizzo, and S. S. Condie. 2003. Start-up costs in American research universities. Unpublished manuscript. Cornell Higher Education Research Institute.

Fang, D., and R. E. Meyer. 2003. Effect of two Howard Hughes Medical Institute research training programs for medical students on the likelihood of pursuing research careers. Academic Medicine 78(12):1271–1280.

Ionescu-Pioggia, M. 2004. Bridges to Independence: Fostering the Independence of New Investigators. Postdoctoral-Faculty Bridging Awards: Strategy for Evaluation, Outcomes, and Features that Facilitate Success. Presentation at the National Academy of Sciences, June 16. Available online at http://dels.nas.edu/bls/bridges/Ionescu-Pioggia.pdf.

Ionescu-Pioggia, M., and V. McGovern. 2003. ACD Working Group Postdocs: Training and Career Opportunities in the 21st Century. Career Achievement and Needs of Top-Tier Advanced Postdocs and New Faculty. National Academy of Sciences, Oct. 17. [Presentation available on request.]

Millsap, M. A., et al. 1999. Faculty Early Career Development (CAREER) Program: External Evaluation Summary Report. Division of Research, Evaluation, and Communications, National Science Foundation. Washington, D.C.

National Academy of Sciences, Committee on Science, Engineering, and Public Policy. 2000. Enhancing the Postdoctoral Experience for Scientists and Engineers: A Guide for Postdoctoral Scholars, Advisers, Institutions, Funding Organizations, and Disciplinary Societies. Washington, D.C.: National Academy Press.

National Research Council. 2005. Bridges to Independence: Fostering the Independence of New Investigators in Biomedical Research. Washington, D.C.: The National Academies Press.

Pion, G., and M. Ionescu-Pioggia. 2003. Bridging postdoctoral training and a faculty position: Initial outcomes of the Burroughs Wellcome Fund Career Awards in the Biomedical Sciences. Academic Medicine 78:177–186.

Pion, G., and M. Ionescu-Pioggia. In press. Performance of Burroughs Wellcome Fund Career Award in the Biomedical Sciences grantees on research-related outcomes: A comparison with their unsuccessful counterparts. Submitted for publication.

Pion, G. M., M. E. McClure, and A. T. Fazleabas. In press. Outcomes of an intensive course in reproductive biology. Submitted to Biology of Reproduction.

Pion, G. M., and C. Hammond. In press. The American Association of Obstetricians and Gynecologists Foundation Scholars Program: Additional data on research-related outcomes. American Journal of Obstetrics and Gynecology.

Williard, R., and E. O’Neil. 1998. Trends among biomedical investigators at top-tier research institutions: A Study of the Pew Scholars. Academic Medicine 73(7):783–789.