Research Program Evaluation at the American Heart Association

Patricia C. Hinton

Founded in 1926, the American Heart Association (AHA) is a voluntary health agency dedicated to the reduction of disability and death from cardiovascular disease and stroke. The association’s 2010 goal is to reduce coronary heart disease, stroke, and risk by 25 percent compared to the levels in 2000. To accomplish this, the association strives to raise public awareness about healthy lifestyles, enhance the focus on prevention among health care providers, and provide funding to research programs that will enrich the existing pool of evidence-based research and identify new ways to prevent, detect, and treat cardiovascular disease and stroke, the nation’s number one and number three leading causes of death, respectively.

AHA RESEARCH PROGRAM

The research program has two specific strategic goals:

- Increase the capacity of the research community to generate the highest-quality research.

- Identify critical research agendas and increase the understanding of specific cardiovascular-related issues, inclusive of basic, clinical, and population research.

The AHA has spent almost $2.5 billion on research since 1949. The association’s research expenses in 2003–2004 were $129.4 million, about 23.7 percent of its total expenses. The 12 AHA affiliates’ programs accounted for $73.6 million, and the national research program accounted for $55.8 million of that total.
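The figures in the preceding paragraph can be cross-checked with a few lines of arithmetic. The Python sketch below is only an illustration: the variable names are hypothetical, and the implied total for all AHA expenses is derived from the rounded 23.7 percent share, so it is an approximation rather than a figure reported by the association.

```python
# Figures (in millions of dollars) taken from the paragraph above.
affiliate_research = 73.6        # programs of the 12 AHA affiliates
national_research = 55.8         # national research program
reported_research_total = 129.4  # research expenses, 2003-2004
research_share = 0.237           # research as a share of total expenses

# The two components should add up to the reported research total.
research_total = affiliate_research + national_research

# Derived, approximate figure (not stated in the source): total expenses
# implied by the rounded 23.7 percent share.
implied_total_expenses = reported_research_total / research_share

# Share of research spending that flowed through the affiliates.
affiliate_share = affiliate_research / reported_research_total

if __name__ == "__main__":
    print(f"Affiliate + national: ${research_total:.1f} million")
    print(f"Implied total expenses: about ${implied_total_expenses:.0f} million")
    print(f"Affiliate share of research: {affiliate_share:.1%}")
```

The check confirms that the affiliate and national amounts sum to the reported research total; the implied total should be read only as a rough order of magnitude.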

For 2004, the association reviewed 4,554 applications for research funding and activated 1,057 new awards, a 23 percent success rate. Currently, the AHA is funding 2,309 investigators. Much of the annual research funding commitment supports career development awards. The AHA research portfolio includes the following types of programs:

- Predoctoral fellowships. To help students initiate careers in cardiovascular research by providing research assistance and training for predoctoral Ph.D., M.D., and D.O. (or equivalent) students seeking research training with a sponsor/mentor prior to embarking on a research career. Funds are available for up to two years.

- Postdoctoral fellowships. To help a trainee initiate a career in cardiovascular research while obtaining significant research results. Supports individuals before they are ready for some stage of independent research. Requires an M.D., Ph.D., D.O., or equivalent degree at activation. Funded for two or three years.

- Fellow-to-faculty transition award. This award provides funding for beginning physician-scientists with outstanding potential for careers in cardiovascular disease and stroke research. Physicians holding an M.D., M.D.-Ph.D., D.O., or equivalent degree who seek additional research training with a mentor prior to embarking on a career in research are eligible. They must have completed clinical training by award activation but have no more than five years of postdoctoral research training. Funding is available for five years.

- Beginning grant-in-aid. The goal of this award is to promote the independent status of promising beginning scientists. Eligible candidates include M.D., Ph.D., D.O., or equivalent faculty/staff members initiating independent research careers, up to and including assistant professor (or equivalent) at activation. The award is for two years.

- Scientist development grant. This award is designed to help promising beginning scientists move from completion of research training to the status of independent investigators. Faculty/staff members up to and including the assistant professor level (or equivalent) who hold an M.D., Ph.D., D.O., or equivalent degree at application are eligible. At activation, no more than four years should have elapsed since the first full-time faculty/staff appointment at the assistant professor level or equivalent. The award is funded for three or four years.

- Established investigator award. The award supports midterm investigators with unusual promise and an established record of accomplishments. Candidates must have a demonstrated commitment to cardiovascular or cerebrovascular science as indicated by prior publication history and scientific accomplishments. A candidate’s career is expected to be in a rapid growth phase. Faculty/staff members with an M.D., Ph.D., D.O., or equivalent doctoral degree are eligible. At the time of award activation, the investigator must be at least four years but no more than nine years past the first faculty/staff appointment at the assistant professor level or equivalent. The award is funded for five years.

- Grant-in-aid. The purpose of this award is to encourage and fund innovative and meritorious research projects from independent investigators. Eligibility is restricted to full-time faculty/staff of any rank pursuing independent research with M.D., Ph.D., D.O., or equivalent training. It is funded for two or three years.

TABLE 1 American Heart Association New Award Commitments for Fiscal Year 2003–2004

| Program | Dollar Amount | Percentage of Dollars | Percentage of Awards |
|---|---|---|---|
| Predoctoral | $10.1 million | 7 | 23 |
| Postdoctoral | $20.8 million | 15 | 25 |
| Fellow-to-Faculty | $7.0 million | 5 | 1 |
| Beginning Grant-in-Aid | $14.3 million | 10 | 11 |
| Scientist Development Grant | $49.3 million | 34 | 19 |
| Established Investigator | $14.0 million | 10 | 3 |
| Grant-in-Aid | $27.5 million | 19 | 18 |
| Total unrestricted | $143.0 million | 100 | 100 |

Table 1 gives the amount of support that the AHA allocated to each program in 2004. Focused research programs supported by restricted funds are not included.
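The "Percentage of Dollars" column in Table 1 follows directly from the dollar amounts. As a minimal sketch, the Python below recomputes each program's share from the amounts in the table; the dictionary and function names are illustrative and are not part of any AHA system.

```python
# Dollar amounts in millions, copied from Table 1.
commitments = {
    "Predoctoral": 10.1,
    "Postdoctoral": 20.8,
    "Fellow-to-Faculty": 7.0,
    "Beginning Grant-in-Aid": 14.3,
    "Scientist Development Grant": 49.3,
    "Established Investigator": 14.0,
    "Grant-in-Aid": 27.5,
}


def dollar_shares(amounts):
    """Return each program's share of the total dollars, in percent."""
    total = sum(amounts.values())
    return {name: 100.0 * value / total for name, value in amounts.items()}


total = sum(commitments.values())
shares = dollar_shares(commitments)

if __name__ == "__main__":
    print(f"Total unrestricted: ${total:.1f} million")
    for name, pct in shares.items():
        print(f"{name:30s} {pct:5.1f}%")
```

The recomputed shares agree with the whole-number percentages in the table to within rounding, and the amounts sum to the $143.0 million total.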

EVALUATION AND THE SUPPORT OF SCHOLARS

Research program evaluation is an important component of the AHA research program. In reviewing the association’s research program evaluation efforts since 1988, several questions will be considered:

- Why is your organization conducting evaluations or assessments of the scholars or fellows it funds? Is this part of a larger assessment strategy?

- What types and levels of information does your organization obtain from the evaluation or assessment?

- How is your organization conducting its evaluation or assessment of scholars? Of special interest, how have you operationalized the outcomes in your assessments, what time frames do you use in your evaluations, and what level of reporting and/or monitoring is part of the evaluation or assessment?

- How is the information collected through the evaluation used by stakeholders in your organization to guide and/or tailor the funding of scholars?

This paper focuses on those AHA programs whose purpose is to provide mentored training or a bridge to the development of independent investigative careers. These include the postdoctoral fellowships (affiliate postdoctoral fellowships and the fellows of the AHA/Bugher Centers for Molecular Biology in the Cardiovascular System, with awards activated in 1986 and 1991); the Fellow-to-Faculty Transition Award; and the Scientist Development Grant and Beginning Grant-in-Aid.

WHY CONDUCT AN EVALUATION?

The AHA conducts research program evaluations to increase its knowledge of its applicant pool, to determine how well each research program is meeting its objectives, to provide a basis for refining a program’s characteristics, and to ensure that the association is accountable to its supporters. The AHA has used several approaches or evaluation techniques within the umbrella of a larger assessment strategy. The types of evaluation the AHA has undertaken sometimes correspond to “formative” or “progress” evaluations and sometimes to “outcome” or “impact” evaluations, as described by Stryer (2004). Formative or progress evaluation looks at the ongoing activities of a program (program inputs, elements, outputs, and surrogate outcomes) as markers of the program’s success. Outcome or impact evaluation assesses intermediate and long-term benefits. Ultimately, the AHA is interested in the latter: determining the extent to which a research program has met its stated objective. In the case of training and career development programs, that expected outcome is productive investigative careers of cardiovascular and stroke scientists. However, in the early years of a program, when that long-term outcome cannot yet be assessed, formative evaluation is useful to determine whether a program appears to be on the “right track” toward achieving the objective and to determine whether changes in the program’s structure or eligibility criteria are warranted.

Therefore, most of the AHA’s efforts look at adequacy (the extent to which a program is likely to address a problem or need), whether a program has any impact, and whether a program is initially effective and produces a sustainable effect. The association began to develop its original evaluation strategy in 1988. The components of that strategy are described below, including the original components, whether each has been maintained, and the components added more recently.

OVERALL EVALUATION STRATEGY

In 1988 the AHA initiated an effort to develop a plan for evaluating its research programs. At that time the research program represented an annual investment of about $65 million. Since about 1970 the association had been maintaining an electronic record of all national research program applicants and awardees. In the early 1980s, the association began maintaining a record of affiliate research program awards. In the late 1980s the association began to develop a new research management system. All this presented an opportunity to design a new system to support evaluation efforts. An evaluation plan was developed in 1988 and 1989 with the objectives of increasing understanding of the characteristics of the AHA applicant pool and evaluating how well AHA research programs meet their stated objectives. The objectives of most programs were to assist in research career development and/or fund scientific discovery. The original evaluation plan was designed to measure success against specific program objectives, not to evaluate the productivity of individual awards or determine the long-term scientific impact of AHA funding. The AHA Research Program Evaluation Guide (Hinton and Read, 1994) summarized the structure of the original evaluation and provided guidelines and templates for the association’s affiliates and national research program managers to use for evaluation.

The components of the AHA’s original evaluation plan were as follows:

- Applicant/awardee profile: to document trends in the characteristics of the AHA applicant pool, by program type and year of application. These characteristics were included in the application form and so reflect applicant characteristics at the time of application. Such data collection had never before been done systematically, and it provided a much clearer picture of the applicants for AHA funding and of changes in characteristics over time. This falls clearly into the formative evaluation category and includes data on academic position, career stage, tenure status, degree, citizenship, age, gender, ethnicity, and past AHA funding.

- Progress report review: prepared annually based on information collected from active awardees about accomplishments during the funding period. The information is summarized for a specific program, be it postdoctoral fellowship, grant-in-aid, or other. A number of measures were identified and included in a questionnaire distributed with the request for the annual report of progress against proposed research aims. This also falls into the formative evaluation category. Data reviewed include analysis of progress against goals, tenure/promotions received, extramural funding received, productivity, honors/awards, and professional memberships and activities.

- Past applicant survey: a mailed survey distributed to applicants five or more years after the date of award termination. Both funded and unfunded applicants are asked to complete the survey, which is intended to determine the extent to which AHA-funded individuals have established successful research careers and whether applicants whom the AHA selected for funding were significantly more productive than those not funded. Data collected include academic position, number of promotions, tenure status, extramural funding, and percentage of time dedicated to research. This represents an outcomes evaluation, particularly for programs whose objective was career development. It measures the effectiveness of AHA support in encouraging productive research careers five years after the funding ceased.

- Bibliometric analysis: a summary of publications and citations collected from the ISI Science Citation Index (SCI) on each AHA applicant. This method of evaluation provides a measure of research productivity and of the level of use (citation) of articles produced. It provides information that is not dependent on the responses of the applicant, and it supplements the outcomes evaluation of the past applicant survey. Like the past applicant survey, this analysis was conducted on both funded and unfunded applicants to determine if there was a significant difference in publication or citation rates. Data are collected on the number of publications, number of citations, and impact factor. Bibliometric analysis provides surrogate measures for scientific impact, although there are dangers in using citation count alone as a measure of impact, since incorrect or fraudulent papers are sometimes cited frequently as examples of poor science.
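The funded-versus-unfunded comparison that underlies the past applicant survey and the bibliometric analysis can be illustrated with a short sketch. The source does not say which statistical test the AHA used, so the permutation test below is an assumption chosen for illustration, and the function names and the citation counts in the usage example are hypothetical, not AHA data.

```python
import random
from statistics import mean


def mean_difference(funded, unfunded):
    """Difference in group means (funded minus unfunded)."""
    return mean(funded) - mean(unfunded)


def permutation_p_value(funded, unfunded, n_permutations=10_000, seed=0):
    """Two-sided permutation test for a difference in group means.

    Repeatedly reshuffles the pooled values into two groups of the
    original sizes and counts how often the absolute difference in means
    is at least as large as the observed one.
    """
    rng = random.Random(seed)
    observed = abs(mean_difference(funded, unfunded))
    pooled = list(funded) + list(unfunded)
    n_funded = len(funded)
    extreme = 0
    for _ in range(n_permutations):
        rng.shuffle(pooled)
        diff = abs(mean(pooled[:n_funded]) - mean(pooled[n_funded:]))
        if diff >= observed:
            extreme += 1
    # Add-one correction keeps the estimate strictly above zero.
    return (extreme + 1) / (n_permutations + 1)


if __name__ == "__main__":
    # Hypothetical citation counts per applicant (illustrative only).
    funded_citations = [42, 15, 60, 33, 27, 51, 19, 38]
    unfunded_citations = [20, 12, 35, 18, 25, 9, 30, 14]
    diff = mean_difference(funded_citations, unfunded_citations)
    p = permutation_p_value(funded_citations, unfunded_citations)
    print(f"Mean difference in citations: {diff:.1f}")
    print(f"Permutation p-value: {p:.3f}")
```

A permutation test is used here because publication and citation counts are typically skewed, which makes tests that assume normally distributed data less suitable. The same functions could be applied to publication counts or to survey measures such as extramural funding.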

All aspects of the original evaluation strategy were implemented at least once between 1988 and 1990. The programs that were evaluated included the national Clinician-Scientist, Established Investigator, and Grant-in-Aid programs. Based on those assessments, all components appeared feasible to implement routinely. However, the amount of staff time and costs (photocopy, postage, SCI data acquisition) suggested that it would be impractical to repeat all components of the strategy annually for the volume of applications managed by the AHA.

Of the original components of the evaluation plan, the applicant/ awardee profile has become an annual activity and has provided useful

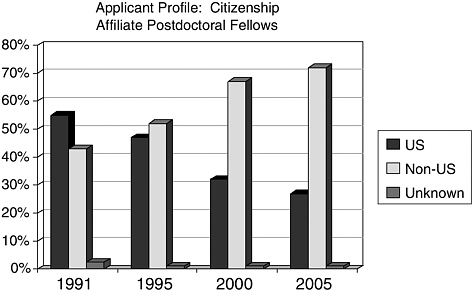

information, particularly via the tracking of trends in applicant characteristics over time. For example, the audience for the Established Investigator shifted from almost exclusively assistant professors (80 percent in 1994) to 51 percent assistant professors and 41 percent associate professors in 2004. As another example, the citizenship of postdoctoral fellows shifted from 43 percent non-U.S. citizens in 1991 to 72 percent in 2004 (see Figure 1).

On the other hand, the progress report assessment has not been implemented other than for the initial testing of the overall evaluation strategy in 1988–1989. The amount of time involved in an annual analysis of progress is prohibitive for over 2,000 awardees. Although scientific progress reports continue to be collected and reviewed annually, the objective information on academic promotion, publications, other funding, and honors has been eliminated from the progress report. The survey at award termination, described later in this paper, provides an alternative.

Past applicant surveys have been repeated for affiliate grants and fellowships. An expanded past applicant survey was conducted for the Clinician-Scientist Award because of concerns about the declination/ resignation rate for that program. Another survey was conducted to determine the long-term career impact on fellows of the AHA-Bugher Centers for Molecular Biology in the Cardiovascular System (Morgan and Paul, 1995; Hinton, 1998). Similarly, bibliometric analyses were

FIGURE 1 Citizenship of AHA postdoctoral awardees, 1991–2005.

conducted separately for affiliate grants and fellowships and for the Clinician-Scientist Award.

In 2001 the AHA began to organize a new evaluation strategy, based on the experience from earlier efforts and on a changing programmatic environment. Whereas the programs of the 1980s and early 1990s were relatively stable, from the mid-1990s onward new programs were introduced more frequently and some old programs were discontinued. There was a greater need for rapid feedback on a program’s relevance and effectiveness. The “luxury” of waiting until five or more years after the termination of awards to evaluate a program was less frequently available. As a result, the AHA added two new components to its evaluation strategy:

- Surveys at award termination: a new component of the evaluation strategy that gives more rapid feedback on the impact of AHA funding. This technique is made practical for annual implementation by the advent of e-mail and Web-based survey tools such as the one used by the AHA (Perseus Survey Solutions, http://www.perseus.com/survey/software/index.html). See Table 3 for measures collected at award termination.

- Progress assessment of new programs: limited assessments of new programs within two to three years of first implementation or to determine whether characteristics of an ongoing program warrant adjustment. The focus of the assessment is one or more characteristics of the program that may be in question such that further information is needed. These assessments are useful for addressing specific concerns about the structure of the program even before the termination of any awards.

EVALUATION RESULTS FOR TRAINING AND CAREER DEVELOPMENT PROGRAMS

The AHA has conducted both formative and outcomes evaluations on its training and career development programs. Results of these evaluations are discussed in the sections that follow.

Postdoctoral Fellowships, Beginning Grants-in-Aid, and Scientist Development Grants

Because the AHA’s affiliate postdoctoral fellowships have existed for many years, both formative and outcomes evaluations have been conducted. Formative analyses have provided a good picture of the participants in the fellowship program (see Table 2), and a 2003 survey of sponsors of AHA fellows provided suggestions as to whether to modify the program. The results informed the following decisions:

- Supported a mandatory $1,000 minimum added to each fellowship to cover health care benefits for fellows.

- Reinforced broad eligibility, including eligibility for non-U.S. citizens.

- Led to a change in the target audience definition to include M.D.s or M.D.-Ph.D.s with clinical responsibilities who hold a title of instructor or a similar title due to their patient care responsibilities but who devote at least 80 percent full-time effort to research training.

TABLE 2 Applicant/Awardee Profile for Affiliate Postdoctoral Fellowship, Affiliate Beginning Grant-in-Aid, and National Scientist Development Grant Applicants (2003)

| Measure | Postdoctoral Fellowships (268 Awards, 907 Applications) | Beginning Grant-in-Aid (141 Awards, 639 Applications) | Scientist Development Grant (27 Awards, 747 Applications) |
|---|---|---|---|
| Percentage with Ph.D. | 55 | 60 | 64 |
| Percentage in academic position: | | | |
| Postdoctoral/Research | 93 | 18 | 25 |
| Assoc/Intern Instructor | 1 | 75 | 65 |
| Asst/Assoc/Prof | 7 | 7 | 9 |
| Percentage U.S. citizens | 28 | 51 | 41 |
| Percentage male | 59 | 67 | 64 |
| Average age | 32.4 | 36.6 | 35.4 |
| Percentage underrepresented minority | 6 | 6 | 8 |

Outcomes assessments have provided intermediate outcomes assessed at award termination and longer-term outcomes via past applicant surveys and bibliometric analyses.

The AHA queried sponsors of postdoctoral fellows in 2003, and 232 sponsors responded. They indicated that a desirable annual stipend for postdoctoral fellows was $34,000 to $45,000. The sponsors found the AHA fellowship attractive because (1) the fellowships are not limited to U.S. citizens, (2) funding levels are higher than those from the National Institutes of Health (NIH), (3) there is rapid turnaround on applications, and (4) it offers fellows an opportunity to begin their research careers. The sponsors said that the most important changes the AHA could make to the fellowship program included (1) increasing the stipend, (2) increasing the award duration, and (3) adding fringe benefits. Most fellows were given titles such as research associate, visiting scholar, instructor, or lecturer. Fellows with clinical responsibilities might be given the title of attending.

The postdoctoral fellows, grant-in-aid recipients, and scientist development grant recipients whose funding ended in June 2004 were surveyed to assess their experience while funded. The response rate for this survey was about 50 percent.

About 30 percent of the postdoctoral fellows had been promoted since receiving the AHA award. In addition, all had received some extramural funding, with most former fellows receiving less than $50,000. Over 90 percent of the former fellows devoted 80 percent or more of their time to research and were moderately productive, with an average of 2.9 publications and 2.0 abstracts since getting the fellowship. Most expected the AHA fellowship to advance their research careers.

The outcomes were similar for the grant-in-aid recipients. Again, about 30 percent had been promoted since receiving the AHA award. Similarly, all had received extramural funding; however, half had received awards of $100,000 or more. About two-thirds of the grant-in-aid recipients devoted 70 percent or more of their time to research. Productivity levels were similar to those of the postdoctoral fellows; grant-in-aid recipients had an average of 2.4 publications and 1.4 abstracts since receiving their awards. Nearly all believed that the award advanced their research careers.

Three-fifths of the recipients of scientist development awards had been promoted since receiving their awards. Moreover, all had received extramural funding; 67 percent had received more than $100,000 in extramural funding. About two-thirds of them devoted 80 percent or more of their time to research. They had an average of 6.4 articles and 5.3 abstracts since receiving the award. Recipients thought the award was tremendously important in advancing their research careers.

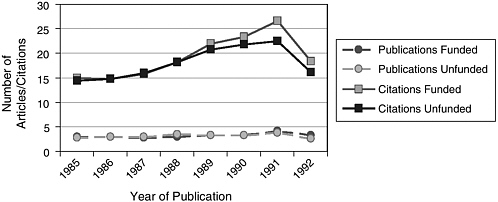

In addition, AHA conducted a bibliographic analysis of postdoctoral fellow applicants who applied for funding during the 1984–1985 funding cycle. Both the average number of publications and the average number of citations were compared for funded and unfunded persons for the 8 years following the funding decision. Results of the bibliographic analysis, shown in Figure 2, indicate that there was no difference between funded and unfunded applicants in the average number of publications but that funded applicants had a higher average number of citations.

Finally, follow-up surveys were conducted of one special group of fellows—those funded through the six AHA-Bugher Centers for Molecular Biology in the Cardiovascular System—to demonstrate the effectiveness of this program, which funded three centers beginning in 1986 and

FIGURE 2 Bibliographic analysis of 1984–1985 AHA postdoctoral applicants: 1985–1992.

three more beginning in 1991. The program was designed to achieve two specific objectives: (1) to stimulate and enhance application of the science of molecular biology to study components of the cardiovascular system and (2) to recruit and train young scientists with medical training to enter research careers in molecular biology of the cardiovascular system (Morgan and Paul, 1995). As of June 1996, 122 paid or honorary trainees had been involved in the six centers, 77 in the first three and 45 in the second three. A follow-up survey of trainees in the first three centers was conducted in 1994. The results suggest that these individuals as a group have continued in research and, more specifically, in molecular biology research. In addition, there is some evidence of impact in terms of increased application volume in molecular biology and increased molecular biology submissions to the AHA scientific sessions. Another survey was conducted in 1998 to update the information given below, and a third follow-up is currently in progress.

Scientist Development Grants

Initiated in 1997, the Scientist Development Grant is a more recent program and is just reaching the point where a follow-up five years after award termination is possible. However, annual applicant profiles provide a good picture of the program’s participants, and surveys at award termination provide information on the intermediate-term impact of the program.

Fellow-to-Faculty Transition Awards

This is the newest of the regular AHA research programs, having its first round of awards activated in 2002. Its objective is to provide funding for beginning physician-scientists with outstanding potential for careers in cardiovascular disease and stroke research. The five-year award supports postdoctoral training and the early years of the first faculty appointment. Applicant profiles and progress assessments after two years are the only evaluations conducted to date. The progress assessment was conducted because of a particularly high declination/resignation rate in the second cycle of the program. In addition, there was a concern that the program was too duplicative of the NIH K08.

Of the original 17 awardees, five received an NIH K08 or K23 award; two left research for private practice. The 15 who stayed in research were surveyed and 11 responded. Although the results suggested that more of those surveyed would choose the NIH award because of its prestige and higher initial salary support, the caliber of the Fellow-to-Faculty Transition Award (FTF) applicants, the fact that several expressed a preference for the FTF, and the positive comments made about the FTF reinforced the association’s commitment to continue the program for at least two additional cycles. Twelve additional awards were activated in July 2004 and nine for July 2005. It was also reassuring to learn that most awardees were declining or resigning to accept NIH funding rather than rejecting research for private practice.

USING THE RESULTS

The results of evaluation efforts have been valuable in several ways. The results identify areas for and support adjustments to program characteristics. For example, when the applicant/awardee profile reports showed a trend of increased participation in the Established Investigator program by more senior investigators, the eligibility criteria were subsequently modified to clarify that applicants could be no more than nine years since the completion of their research training. Subsequent applicant/awardee profile reports showed the effectiveness of this change in criteria. Another value of the applicant/awardee profile has been to allow AHA to document the level of inclusiveness of women and underrepresented minorities in science.

In some cases an evaluation has aided in the decision to continue or end a program. This was a question in the cases of both the Clinician-Scientist Award and Fellow-to-Faculty Transition Award evaluations described above. The results of the Clinician-Scientist Award analysis suggested that some of the concern about resignations and declinations was unwarranted. Similarly, the progress analysis of the FTF reassured the organization that this program was an important step for a number of physician-scientists, even though the “K” award would be the first choice of some who were offered both awards.

Taking a broader perspective, the analyses provide a rationale for the association’s continued support for research and for its emphasis on career development programs. Results of award termination surveys for the Scientist Development Grant have impressed the AHA Research Committee and the AHA Board of Directors with the level of progress that beginning investigators have shown during the tenure of these grants, which are intended to provide a bridge to academic research independence.

WHAT LIES AHEAD?

Since developing its original evaluation strategy, the AHA has had a desire to determine the public health impact of the research it funds. This goes beyond supporting the development of research careers or assessing the number of publications or citations. A methodology does exist, though the investment in implementing it is substantial. The AHA took advantage of the Comroe-Dripps (1978) study, The Top Ten Clinical Advances in Cardiovascular-Pulmonary Medicine and Surgery, 1945–1975, to identify AHA awardees who were on critical paths to major biomedical discoveries. Could a similar study be designed and repeated today?

Also ahead is another round of past applicant surveys and bibliometric analysis to assess the longer-term impact of the Scientist Development grants on research careers. Once the first FTF awardees complete their awards, an exit survey will be initiated. To the extent possible, the AHA wants to routinize and automate its evaluation efforts to make it more likely that they will be continued in a consistent manner.

Finally, the AHA welcomes opportunities, such as that presented by this workshop and by the new Health Research Alliance, to collaborate on the evaluation of programs with career development objectives similar to those of the AHA. Perhaps that collaboration could address some of the questions the AHA struggled with in conducting its evaluations:

- How frequently should ongoing programs be evaluated?

- How does one define the control group in evaluation or the standard against which outcomes are to be measured? Is it the unfunded group? Is it the results from a program funded by another agency?

- Is a sample of all awardees sufficient? If so, how large?

- At what level of detail should one measure? Is a count of publications sufficient, or is the impact factor important? Is the total amount of extramural support sufficient, or is the source critical?

- Should one maintain applicant or awardee contact information or try to locate investigators later?

- What is an acceptable response rate? How can response bias be avoided when comparing awardees to unfunded applicants?

- Are successful outcomes a function of good peer review at the beginning or of the funding?

- How does one evaluate the impact of the program on public health?

REFERENCES

Comroe, J. H., and R. D. Dripps. 1978. The Top Ten Clinical Advances in Cardiovascular-Pulmonary Medicine and Surgery, 1945–1975. U.S. Department of Health, Education, and Welfare, Public Health Service, National Institutes of Health. Washington, D.C.: U.S. Government Printing Office.

Hinton, P. C., and S. Read. 1994. AHA Research Program Evaluation Guide. American Heart Association.

Hinton, P. C. 1998. American Heart Association-Bugher Foundation Centers for Molecular Biology in the Cardiovascular System, Update on Progress. American Heart Association.

Morgan, H. E., and S. R. Paul. 1995. American Heart Association-Bugher Foundation Centers for Molecular Biology in the Cardiovascular System. Circulation 91(2):487–493.

Stryer, D. 2004. Program Evaluation Fundamentals and Best Practices. Invited presentation, Partnering to Advance Health Research: Philanthropy’s Role, March 4.