3

New Challenges and Directions for MS&A

CAPABILITIES NEEDED FOR DEFENSE MS&A

In Chapter 2, the committee identified three overarching themes that must be reflected in DoD’s future development of MS&A: networking, adaptability, and embedded systems. These themes lead the committee to make three recommendations, which will be expanded on in this chapter:

Recommendation 1: DoD should give priority to developing flexible, adaptive, and robust MS&A methods for evaluating military strategies.

Recommendation 2: DoD should ensure that the basic architecture of MS&A systems reflects modern concepts of network-centric warfare.

Recommendation 3: DoD should give special emphasis to the development of MS&A capabilities that are needed within embedded systems.

These recommendations give rise to a set of functional needs. Because the new challenges in defense planning are dominated by uncertainty, solutions should emphasize strategies that are flexible, adaptive, and robust (FAR). That, in turn, requires an approach to MS&A that can help identify candidate FAR strategies and evaluate them. Adaptability is not a characteristic of most legacy systems, which include scripted (predetermined) data entities, strategies, tactics, and behaviors.

To elaborate, if DoD strategies and programs are conceived with branches and other features designed to cope with uncertainty, then the evaluation of options requires models that generate the realistic dynamic circumstances with which the strategic options will have to deal. Such models will need to reflect the learning, adaptive, and sometimes random behaviors of individual groups. They will also need to reflect the possibility of structural changes in the system as coalitions form or dissolve, key leaders emerge or disappear, and physical events change the realities of, say, geography or access. DoD can no longer evaluate its strategies with models conceived in a paradigm of well-defined closed systems. Adaptation can be achieved by drawing on methods derived from operations research, game theory, control theory, and agent-based modeling (see the subsection “Other Methods for Representing Adaptive Systems” in this chapter).
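As one small illustration of the game-theoretic route to adaptation, the sketch below uses fictitious play, a classic learning rule in which each side repeatedly best-responds to the observed frequency of the other side's past choices. Neither side is scripted, yet in zero-sum games the observed mix of choices settles toward the game's equilibrium. The two-option payoff matrix is hypothetical and chosen only for illustration. This is not a method the report prescribes.

```python
import numpy as np


def fictitious_play(payoff, rounds=20000):
    """Fictitious play for a two-sided zero-sum game (illustrative sketch).

    payoff[i, j] is the row side's gain (the column side's loss) when the
    row side picks option i and the column side picks option j.
    Returns each side's empirical mix of choices after `rounds` rounds.
    """
    n_rows, n_cols = payoff.shape
    row_counts = np.zeros(n_rows)
    col_counts = np.zeros(n_cols)
    row_counts[0] = col_counts[0] = 1.0  # arbitrary opening choices
    for _ in range(rounds):
        # Each side best-responds to the other side's observed history.
        row_choice = np.argmax(payoff @ col_counts)
        col_choice = np.argmin(row_counts @ payoff)
        row_counts[row_choice] += 1
        col_counts[col_choice] += 1
    return row_counts / row_counts.sum(), col_counts / col_counts.sum()


# Hypothetical 2x2 game whose equilibrium mix is 0.4/0.6 for both sides.
payoff = np.array([[2.0, -1.0], [-1.0, 1.0]])
row_mix, col_mix = fictitious_play(payoff)
print(row_mix, col_mix)  # both should be close to [0.4, 0.6]
```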

Another functional need is to ensure that people are employed effectively in the use of MS&A. One lesson learned over and over is that people are exceedingly capable at dealing with uncertainty or innovative concepts and at integrating across boundaries such as those associated with DIME and PMESII—indeed, often much more capable than traditional models and simulations. This superiority of human beings is so clear that, in some situations, gaming is a preferred method for operational planners and strategic planners. Gaming, however, has many limitations, including the potential for missing constraints imposed by the physics of the situation or by the real-world capabilities of systems. Further, humans have only limited capability to deal with complex nonlinear phenomena; they may be very creative and sometimes find novel solutions, but rigorous thinking amidst complexity is difficult. At the same time, attempts to be rigorous often oversimplify the problem and obscure important possibilities, such as the diverse reactions of an opponent to one’s own actions. It follows that MS&A should be reconceived broadly to include human gaming and interactive M&S for originality and insight. This applies whether MS&A is used for the support of strategic planning or for training, operations, or acquisition. Although neither gaming nor interactive M&S is rigorous, they have demonstrated the ability to reveal important factors and possibilities often missed by individual analysts or decision makers.

Representing Complex, Dynamic, and Adaptive Systems

Previous generations of MS&A were developed largely with perspectives that we now associate with idealized problems and mass-dominated forms of warfare emphasizing attrition and, sometimes, maneuver. This style reflected the educational background of the model builders and the experience of the United States in two world wars. These were “industrial wars,” and the U.S. style in war was reasonably described as winning through sheer overwhelming force with large military forces and prodigious quantities of aircraft, ships, and tanks (Weigley, 1973). In addition, the individual services fought separately and had clear sectors of responsibility, which simplified deconfliction. Finally, the U.S. military thought about war as combat itself, with relatively little discussion of gray area activities before, during, and after combat.1

Over the last 15-20 years, the shortcomings of that approach to MS&A have increasingly been recognized, and the skyrocketing power of computing has made richer approaches possible. As far back as 1980, a new approach that would be, in today’s terminology, more joint and more integrated with both political and military considerations was being considered by DoD. The Office of Net Assessment sponsored an ambitious undertaking along those lines at RAND. With the end of the cold war, the disappearance of the Soviet threat, and the temporary loss of interest in big models and games, the effort dissipated despite its successes, although leaving behind the improved Joint Integrated Contingency Model (JICM), which is now an important element of analysis in parts of DoD. The effort also stimulated a great deal of research into planning under uncertainty, which has contributed significantly to today’s concepts of capabilities-based planning (CBP) and adaptive planning (the phrase used by DoD when referring to operations planning under uncertainty).

During those same 15-20 years, researchers in a number of scientific disciplines were making progress in modeling complex adaptive systems (CAS). Chemists, physicists, biologists, economists, engineers, and others nurtured and shaped CAS theory as a powerful way to look at much of what goes on in the real world. The approach to modeling CAS includes systems of interacting actors at different levels of organization, actors that have goal-seeking behavior and that can learn, adapt, and interact in ways that sometimes lead to higher-level phenomena (emergent behavior) whose character is not predictable by viewing the individual actors.2 This extended earlier thinking about systems, such as the system dynamics methodology pioneered at MIT by Jay Forrester,3 and added an emphasis on nonlinearities and an increased ability to predict events that were sensitive to initial and subsequent conditions.4

By recognizing this fundamental fact—that is, that the real world is a complex adaptive system—one’s approach to MS&A changes substantially. Table 3.1 displays some of the changes. The first column applies to relatively simplistic views and models; the middle column applies to a few defense models of the 1980s, such as the RAND Strategy Assessment System (RSAS), Eagle, and the Navy Simulation System (NSS); and the last column arguably applies to the future. If the table is roughly correct, then the conclusion is inescapable that representing jointness, DIME/PMESII aspects, and asymmetric strategies requires modeling that is mindful of the paradigm of complex, adaptive, and dynamic systems. Moreover, that conclusion does not depend on whether particular methods of current mainstream CAS research prove enduring.

Table 3.1 might suggest an inexorable movement toward complexity—that is, a move from simple models to something inherently deep and detailed. There may, in some respects, be such a movement, but movement toward complexity is not what this report recommends. Instead, the committee sees the need for families of models and games varying greatly in level of detail and perspective. Perhaps most models should be relatively small, specialized, and readily understandable, with only a few models and simulations used for integrating concepts, as described in the last column of the table. The model-family concept is discussed further in the section titled “Promising Technical Approaches for Attaining the Needed MS&A Capabilities.” The importance of relatively simple models is also discussed there and elsewhere in this chapter.

Features mentioned in the intermediate column were achieved to a significant degree in some 1980s-era systems, such as the RSAS, Eagle, and the NSS, and to some extent in the Joint Warfare System (JWARS), which is currently being tested.

Representing Embedded Systems

A special challenge in representing complex systems arises from the need to develop MS&A capabilities that are part of embedded systems. In such cases, there might be feedback loops, dynamic incorporation of new data, and complex interactions between real (sensed) and simulated data. The state space becomes enormous, yet quality assurance must be high. There are many unresolved challenges as a result.

TABLE 3.1 Levels of Model Sophistication

| Aspect | Simplistic | Intermediate | Advanced |
|---|---|---|---|
| View of war | Continuous “piston-driven” battles. Simple system depictions with air, maritime, and ground components. | Maneuver leading to discrete battles, with attrition and movement affected by material and qualitative considerations. Richer logistics and depiction of political-military systems, with some models representing decision makers’ war plans. | Preparation, combat, and stabilization and reconstruction; substantial political and economic aspects. Allow big shifts of war trajectory as a result of changes in leaders, coalitions, forces, or events. Logistics with just-in-time and responsive aspects. |
| Number of parties | Two sides, with allies folded into the appropriate side. | Plus some explicit modeling of third countries. | Plus nongovernmental organizations and threats. |
| Decision making and strategies | Implicit in data or behavioral algorithms in specialized models. | Top-down decision models; political and military branches and adaptations. | Top-down, bottom-up, and distributed decision making and behavior at all levels, sometimes emergent. |
| Instruments | Physical attrition and targeting. | Plus some mechanisms for escalation, de-escalation, or termination. | Plus nonkinetic attacks and mechanisms of coercion and dissuasion. |
| Attrition and targeting mechanisms | Difference equations with situational coefficients; direct physical destruction. | Plus per-sortie or per-shot kills, breakthrough effects, and other embellishments. | Plus nonkinetic kill mechanisms and effects-based operations. |
| Nature of variables | Only objective variables, such as a side’s firepower. | Plus soft variables such as a side’s fighting effectiveness, affected by morale, leadership, and other factors. | Plus soft variables such as nationalism, ethnic group association, and propensity for brutality and terrorism. |
| Command and control (including information assurance and intelligence) | Assumed perfect. | Plus specialized technical models of communications. Plans predetermine who does what to whom. | Plus network-centric concepts with, e.g., publish-subscribe architectures and capacity for self-synchronization. |
| Intended purpose of model runs | Emphasis on predictive modeling; some sensitivity analysis. | Multiscenario analysis with recognition of great uncertainties. | Exploratory analysis in search of strategies that are flexible, adaptive, and robust. |

Although embedded MS&A capabilities have existed as an integral part of many systems, especially DoD systems, their number and diversity have greatly increased, ranging from handheld devices to spaceships, and these systems therefore vary greatly in their constraints on computational power, memory management, and timing requirements. The projected use of unpiloted ground and air vehicles or swarms of vehicles that can respond autonomously to environmental changes or changes in enemy actions observed by onboard detectors is a prime example of the importance of embedded systems to future military capabilities. To respond effectively to changes, these vehicles need to project autonomously the effect of their courses of action. Despite the widely varying scales and capabilities of embedded systems, most embedded systems have similar implications for MS&A.

Most prominently, in an embedded system, the MS&A has gone from being an offline computational capability for reasoning about the system to being an integral part of a performing system in an online, often real-time capability. This mode of operation for MS&A requires new types of traceability, new ways to provide checkpoints for the system so that it can back up to previous decisions and states within a rapidly changing circumstance, and new ways to evaluate and report partial results amid ongoing computation. It also requires new self-monitoring and self-analysis capabilities that provide, for example, a computationally reflective MS&A system that monitors its own state and acts on and modifies itself.
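The checkpointing requirement can be made concrete with a small sketch: the embedded simulation snapshots its state at labeled decision points and can later restore one of them, discarding everything computed afterward. The class and method names are hypothetical, and deep-copying the whole state is a simplifying assumption; a real embedded system with tight memory limits would likely need incremental or copy-on-write snapshots.

```python
import copy


class CheckpointedSim:
    """Embedded-simulation state with labeled checkpoints (illustrative).

    checkpoint() stores a deep copy of the state under a label; rollback()
    restores the most recent snapshot with that label and discards newer
    snapshots, so the system can back up to an earlier decision point.
    """

    def __init__(self, state):
        self.state = state
        self._snapshots = []  # list of (label, deep-copied state)

    def checkpoint(self, label):
        self._snapshots.append((label, copy.deepcopy(self.state)))

    def rollback(self, label):
        # Search newest to oldest for the requested decision point.
        for i in range(len(self._snapshots) - 1, -1, -1):
            if self._snapshots[i][0] == label:
                self.state = copy.deepcopy(self._snapshots[i][1])
                del self._snapshots[i + 1:]  # later branches are abandoned
                return
        raise KeyError(label)
```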

Because embedded systems must use the currently available data within a fixed amount of time and must therefore sometimes yield intermediate results, we will need advances in methods for evaluating the goodness of the current solution or the computational progress so far—that is, how far the current solution is from the optimal and how much additional value can be obtained by continuing computation.
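A simple case of "evaluating the goodness of the current solution" is an anytime statistical estimate. The sketch below refines a Monte Carlo estimate in batches until a time budget expires. After each batch it reports a confidence half-width, which serves as a rough indication of how far the current answer might be from the converged one, and it projects how much doubling the work would tighten that half-width (about a factor of the square root of 2). The function name and parameters are hypothetical. Optimization problems would instead use bounds, for example the gap between the best solution found and a relaxation bound.

```python
import math
import random
import time


def anytime_mean(sample, deadline_s, batch=100, max_batches=None, z=1.96):
    """Anytime Monte Carlo estimate of the mean of sample() (illustrative).

    Works in batches until the time budget (or an optional batch cap) runs
    out. After each batch it records (n, mean, half_width, half_width_if_doubled),
    where half_width is an approximate 95% confidence half-width.
    """
    start = time.monotonic()
    n, total, total_sq = 0, 0.0, 0.0
    history = []
    while time.monotonic() - start < deadline_s:
        if max_batches is not None and len(history) >= max_batches:
            break
        for _ in range(batch):
            x = sample()
            n += 1
            total += x
            total_sq += x * x
        mean = total / n
        if n > 1:
            var = max(total_sq - n * mean * mean, 0.0) / (n - 1)
            half_width = z * math.sqrt(var / n)
        else:
            half_width = math.inf
        history.append((n, mean, half_width, half_width / math.sqrt(2)))
    return history


random.seed(0)
history = anytime_mean(lambda: random.uniform(0.0, 1.0), deadline_s=0.05, batch=200)
n, mean, hw, hw_doubled = history[-1]
print(f"{n} samples: {mean:.3f} +/- {hw:.3f} (doubling work -> +/- {hw_doubled:.3f})")
```

A caller can stop early when the half-width falls below a mission-specific tolerance, or when the projected improvement is not worth the remaining time.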

M&S embedded within a command and control system exemplifies what is generally known as a dynamic, data-driven application system (DDDAS). Such systems are the target of a major program at the National Science Foundation.5 In such a system, the embedded M&S is expected to control and guide a measurement process, determining when, where, and how to gather additional data. The embedded M&S must operate at both a global level—determining which systems to use to collect more data—and a local level—guiding particular systems as they gather measurements.
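A minimal sketch of model-steered measurement follows, assuming that the model keeps a Gaussian variance for each cell of a hypothetical area of interest. Measuring a cell with prior variance p using a sensor of noise variance r leaves a posterior variance of pr/(p + r), so the expected reduction is p²/(p + r). The outer loop corresponds to the global choice of which sensor to use and the inner loop to the local choice of where that sensor should look. The cell and sensor names are invented for illustration.

```python
def choose_measurement(variances, sensors):
    """Pick the (sensor, cell) pair with the largest expected variance reduction.

    variances: dict cell -> current model variance at that cell.
    sensors:   dict sensor -> (noise_variance, set of cells it can observe).
    """
    best, best_gain = None, 0.0
    for sensor, (r, coverage) in sensors.items():  # global: which sensor
        for cell in coverage:                       # local: where to look
            p = variances[cell]
            gain = p * p / (p + r)  # p - p*r/(p + r)
            if gain > best_gain:
                best, best_gain = (sensor, cell), gain
    return best, best_gain


variances = {"A": 4.0, "B": 1.0, "C": 9.0}
sensors = {"uav": (1.0, {"A", "B"}), "sat": (16.0, {"A", "C"})}
print(choose_measurement(variances, sensors))  # (('sat', 'C'), 3.24)
```

In this hypothetical case the noisier satellite wins because the model is most uncertain about cell C.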

The vision of a DDDAS-style system also includes a second major goal, the incorporation of dynamic data inputs into an executing application in order to have the currently most accurate data available for models and other computational processes. This vision includes the ability of the system to accept and respond to dynamic inputs from live data sources that might include users, computational processes, archival data, or sensors.

One of the hardest challenges in such systems is the reconciliation of the modeled world with a continuous stream of newly measured data. To the extent that this challenge can be met, the advantages are clear for a large number of current MS&A applications, especially in time-critical applications such as route planning. Embedded systems that incorporate simple forms of MS&A are already tackling this hard issue of reconciling and updating their models with newly sensed information. However, the state of the art requires a lot of hand-tuning, and there has been little analysis or validation of these early systems.
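The textbook starting point for reconciling a modeled state with a stream of measurements is the Kalman filter. The one-dimensional sketch below alternates two steps: a prediction from the model, which grows the model's uncertainty, and an update that weights model and measurement by their respective uncertainties. The constant-velocity model and the noise values q and r are assumptions; choosing them well is exactly the hand-tuning the text mentions.

```python
def kalman_track(measurements, x0, p0, q, r, velocity=0.0, dt=1.0):
    """Scalar Kalman filter reconciling a simple model with measurements.

    Model: x <- x + velocity * dt, with process-noise variance q.
    Measurements: noisy observations of x with variance r.
    Returns a list of (estimate, variance) after each measurement.
    """
    x, p = x0, p0
    out = []
    for z in measurements:
        # Predict: advance the modeled world and grow its uncertainty.
        x = x + velocity * dt
        p = p + q
        # Update: blend prediction and measurement by relative confidence.
        k = p / (p + r)
        x = x + k * (z - x)
        p = (1.0 - k) * p
        out.append((x, p))
    return out


print(kalman_track([1.1, 1.9, 3.2, 3.9], x0=0.0, p0=10.0, q=0.1, r=1.0, velocity=1.0))
```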

Representing Networking

Through most of the 1990s, DoD’s models and simulations were largely developed with the technical aspects of command and control taken for granted or, at best, treated by separate communities, such as those dealing with communication issues or space surveillance. Within most combat models, command and control was largely assumed to work, except perhaps for parameters representing delays and hard-wired relationships allowing only some organizations to communicate with others. The mental picture of command and control was often point to point, and if one had a specific problem in mind, such as requiring the presence of a particular surveillance platform, that platform could be added with specific point-to-point links.

The modern concept of networking is quite different from this point-to-point perspective. Some of the capabilities enabled by the increasingly ubiquitous presence of networks and associated services are the following: (1) planners can draw information worldwide without knowing the specific locations where the information resides, (2) operating forces can obtain situational-awareness (sensor and intelligence) information without hard wiring sensor-to-weapon-platform links, and (3) flexible command and control and organizational relationships can be established to meet the needs of immediate contingencies and then to adapt as the situation evolves.
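The difference between hard-wired links and networked access can be illustrated with a topic-based publish-subscribe broker, the pattern named in Table 3.1. In the sketch below, publishers and subscribers share only topic names, so a new sensor or weapon platform can join without anyone adding point-to-point links. The class and topic names are hypothetical. The sketch ignores delivery delays, losses, and security, all of which a network-centric MS&A representation would need to model.

```python
from collections import defaultdict


class Broker:
    """Minimal topic-based publish-subscribe broker (illustrative sketch)."""

    def __init__(self):
        self._subscribers = defaultdict(list)

    def subscribe(self, topic, callback):
        self._subscribers[topic].append(callback)

    def publish(self, topic, message):
        """Deliver message to every current subscriber; return how many."""
        callbacks = self._subscribers.get(topic, [])
        for callback in callbacks:
            callback(message)
        return len(callbacks)


broker = Broker()
broker.subscribe("tracks/air", lambda m: print("shooter 1 got", m))
broker.subscribe("tracks/air", lambda m: print("shooter 2 got", m))
broker.publish("tracks/air", {"track_id": 7, "source": "radar"})
```

With S sensors and W weapon platforms, a point-to-point design may need up to S × W links. Here each participant registers once.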

This type of networked world and the concomitant network-centric operation suggest the need for a new generation of MS&A having basic architectures in tune with modern-day concepts. Such architectures would probably be quite different from the prenetworking architecture, which had a simple overlay of particular platforms for sensing and communication. Some strides are being made, but the committee believes that no clear consensus yet exists for how network-centric operation should be represented in DoD’s MS&A, either as retrofits to legacy models or in future models.

The need for this new MS&A capability is critical. It is required to help develop operational concepts and force structures for U.S. and coalition military forces, allowing them to meet and adapt to the new threats facing them, particularly in light of uncertainty about where and in what manner threats will arise. Furthermore, the DoD investment in developing network-centric capabilities is large—the expenditure estimated for the Global Information Grid (the emerging networking and information infrastructure) through 2011 is $34 billion (GAO, 2006), and analytical means are necessary to optimize this and later investments.

5 Information available online at http://www.dddas.org.

The following examples are representative of the current state of network-centric M&S for the major DoD process areas shown in Figure 2.1.6

- Concept development and capabilities definition (example 1). Agent-based models with simple agents (i.e., few rules governing their behavior) have demonstrated qualitative behaviors of network-centric operation (e.g., self-synchronization of force elements). As such, these models can serve as exploratory tools, but they do not provide the quantitatively supportable results needed for more detailed analysis.

- Concept development and capabilities definition (example 2). Numerous experiments involving live and simulated forces have been conducted to explore network-centric operational concepts. While a variety of models and simulations support these experiments, they themselves do not typically embody network-centric concepts—rather, the network-centric behavior is achieved by connecting the individual models and simulations over physical networks.

- Programming and budgeting. Traditional models (CASTFOREM, VIC, NSS, and others)7 have been used by the military services in making major resource allocation decisions affecting the development of network-centric capabilities (e.g., for the Army’s Future Combat System). While the services indicated they had made significant progress in the course of these analyses, these activities were assessed as being only initial efforts in addressing network-centric operations (MORS, 2004).

- Acquisition and engineering. Network Warfare Simulation (NETWARS) is the Joint Staff’s standard model for measuring and assessing information flow through existing and planned military communications networks. It is intended for analyzing performance resulting from behavior at the physical layer through the network layer, and it provides an extensive capability for doing so. However, its focus on the lower layers of the network precludes it from modeling important technical factors such as information assurance (beyond encryption), higher-layer services (e.g., discovery and collaboration), and ad hoc entry to and exit from networks that are critical to envisioned modes of network-centric operation.

- Training and operations. Agent-based models coupled with the techniques of dynamic network analysis have been applied to address operational problems confronting combatant commands. Examples include assessing and improving the organizational behavior of command and control staffs and characterizing the network structure of terrorist threats and their surrounding social environment. While these examples illustrate how the underlying technologies contribute to operational use, much basic research must be done and empirical data collected for a broad, robust application of the technologies.
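The kind of qualitative self-synchronization cited in the first example can be reproduced with very simple agents. In the sketch below, each agent nudges a value, such as a hypothetical planned time on target, toward the values of its network neighbors, and no agent sees the whole force. This is plain consensus averaging, used here as a stand-in. It is not any specific DoD model. With a connected, undirected network and eps × (maximum degree) < 1, all agents converge to the initial average. Adding a few long-range links (the `chords` option) speeds convergence markedly, a qualitative rather than quantitative result of the kind the text describes.

```python
def consensus(neighbors, values, eps=0.2, steps=50):
    """Leaderless synchronization by local averaging (simple agent rule)."""
    x = list(values)
    for _ in range(steps):
        # Synchronous update: every agent reacts to the previous state.
        x = [xi + eps * sum(x[j] - xi for j in neighbors[i])
             for i, xi in enumerate(x)]
    return x


def ring(n, chords=False):
    """Ring network; optionally link each agent to the one opposite it."""
    nbrs = [set() for _ in range(n)]
    for i in range(n):
        nbrs[i].update({(i + 1) % n, (i - 1) % n})
        if chords:
            j = (i + n // 2) % n
            nbrs[i].add(j)
            nbrs[j].add(i)
    return nbrs


start = [float(i) for i in range(20)]
for label, net in (("ring", ring(20)), ("ring + chords", ring(20, chords=True))):
    final = consensus(net, start)
    print(label, "spread after 50 steps:", round(max(final) - min(final), 3))
```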

In summary, there is activity in each of the four DoD process areas shown in Figure 2.1, but in none of them is there yet a broad conceptual basis for addressing the problems of the area.

Given the need for MS&A to represent network-centric operations and the current state of such representation, as indicated by the examples above, the committee recommends as follows:

Recommendation 4: DoD should establish a comprehensive and systematic approach for developing the MS&A capabilities to represent network-centric operations:

- Enhance and sustain collaborations among the various parties developing network-centric MS&A capabilities.

As it researched the examples given above, the committee found little evidence of significant interaction and cross-fertilization across the application communities associated with each of the examples. Such interaction is necessary to promote innovation and establish broadly based capabilities. The necessary collaboration might be facilitated by a DoD-sponsored series of workshops involving all the communities, leading to a substantive report synthesizing the views of the different communities and identifying opportunities for cross-fertilization.

- Continue and extend the development of existing approaches to modeling network-centric operation.

Since the basic architecture and functioning of traditional models reflect a prenetwork perspective on military operations, those models are not adequate for describing network-centric operation. Still, they cannot be abandoned at this time since no other means exist for supporting certain important quantitative analyses such as resource allocation. Agent-based models have shown some promise, and further development is warranted. Attention should be given to the use of complex agents with sizable rule sets governing behavior to provide quantitative models and to the continued coupling of agent-based models with the techniques of dynamic network analysis (for a fuller discussion of dynamic network analysis, see the subsection “Social Behavioral Networks,” later in this chapter, and see Appendix B). In contrast to bottom-up agent approaches, a top-down architectural approach to describing networked behavior is also desirable, but no good examples of such an approach exist yet.

TABLE 3.2 Important Directions Recommended in This Report for Advancing the Capabilities of Defense MS&A

| Area | Recommended Topic | Page |
|---|---|---|
| Navigation of large state spaces | Exploratory analysis | 23 |
| | Multiresolution modeling | 24 |
| Representing complex adaptive systems | Optimization and agent-based models | 25 |
| | Multiagent systems | 26 |
| | Social behavioral networks | 27 |
| Fundamental scientific issues | Serious games | 28 |
| | Network science | 29 |
| | Embedded MS&A systems | 29 |
| | Expanded concepts of validation | 30 |
| Infrastructure needs | Composability | 32 |
| | Improved data collection for MS&A | 32 |
| | Visualization of high-dimensional data | 35 |

- Establish a new mathematical basis for models describing network-centric operation, drawing on an array of approaches, particularly complex, adaptive systems research.

Just as new mathematics or the new application of existing mathematics has been necessary for advances in science, so too might a new network-based mathematical framework be necessary to realize appropriate models and simulations for network-centric operations. In general, research in the complex adaptive systems community could provide a basis for this framework. Some ideas along these lines have been put forward based on the mathematical structure of networks (Cares, 2006), and the methods underlying dynamic network analysis should also be applicable (Carley, 2003).
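One elementary ingredient of network-based analysis is asking which node's loss most fragments a network. The sketch below removes each node in turn and measures the largest connected component that remains. In the hypothetical example, two cells are joined through a broker node H. H has the lowest degree in the network, yet its removal fragments the network most, which is why structural measures beyond simple link counts matter. Dynamic network analysis, as the text uses the term, goes further and tracks such measures as the network changes over time. This static snapshot is only a starting point.

```python
from collections import deque


def build_adjacency(edges):
    adj = {}
    for a, b in edges:
        adj.setdefault(a, set()).add(b)
        adj.setdefault(b, set()).add(a)
    return adj


def largest_component(adj, removed=frozenset()):
    """Size of the largest connected component, ignoring removed nodes."""
    seen, best = set(removed), 0
    for start in adj:
        if start in seen:
            continue
        size, queue = 0, deque([start])
        seen.add(start)
        while queue:  # breadth-first search of one component
            node = queue.popleft()
            size += 1
            for nbr in adj[node]:
                if nbr not in seen:
                    seen.add(nbr)
                    queue.append(nbr)
        best = max(best, size)
    return best


def most_critical_node(adj):
    """Node whose removal leaves the smallest largest component."""
    return min(adj, key=lambda n: largest_component(adj, frozenset({n})))


edges = [("A", "B"), ("B", "C"), ("A", "C"), ("C", "H"),
         ("H", "D"), ("D", "E"), ("E", "F"), ("D", "F")]
adj = build_adjacency(edges)
print(most_critical_node(adj))  # H
```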

PROMISING TECHNICAL APPROACHES FOR ATTAINING THE NEEDED MS&A CAPABILITIES

To build the capabilities described in the preceding section, progress is needed in four areas of MS&A:

- Tools are needed for navigating intelligently through the very large state spaces characteristic of complex, dynamic networked systems. Some promising directions are described below, in the subsections devoted to exploratory analysis, multiresolution modeling, and families of models and games.

- Methods are needed for representing complex adaptive systems. There has been real progress in this direction through agent-based modeling, other means of representing adaptive systems, serious games, social behavioral networks, and network science.

- Research must be stimulated to address fundamental questions that limit our ability to create scientifically sound and empirically grounded MS&A of the necessary complexity. There are many open questions about the analytical basis for such complex models, and validation is still more of an art than a science.

- New MS&A requirements will call for capabilities built on new infrastructure.

In the rest of this chapter, the committee recommends directions for advancing the capabilities of defense MS&A, as shown in Table 3.2. Many of these topics are already recognized within parts of DoD as logical steps for the evolution of MS&A, and relevant R&D exists or is beginning. Therefore, the table should not be taken to imply that these are new or unappreciated ideas; rather, it demonstrates an interwoven vision of the many important topics in the vanguard of defense MS&A and recommends that DoD and the MS&A community adopt this holistic view for advancing DoD’s capabilities to address the needs identified in Chapter 2.

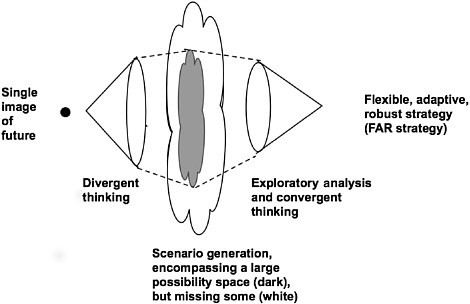

FIGURE 3.1 Divergent and convergent thinking in search of FAR strategies.

Exploratory Analysis

MS&A is needed to assess, among other things, whether an option under consideration is flexible, adaptive, and robust (FAR). The option must be evaluated across as broad a space of scenarios as can be conceived. In addition to having suitable models and games for such an evaluation, one must also have methods for generating cases throughout the space, be able to use M&S to characterize results from each case, and then be able to make sense of the results. Figure 3.1 provides a schematic depiction of the kinds of thinking involved in developing and evaluating FAR strategies.

The sheer dimensionality of the space of possibilities can make FAR strategy development a daunting task. A rich collection of methods for exploratory analysis has developed over the last decade or so.8 These include methods for structuring the initial possibility space, divergent thinking to expand notions about what is possible (to include, for example, the possibility that people and groups will adapt or that events that change the very structure of the system will occur), generating simulations, visualizing outcomes, and applying a variety of tools—some of them derived from data mining or cluster analysis, some from the artificial intelligence and statistics communities, and some from outside-the-box gaming or brainstorming—and then finally converging toward reasonable depictions of alternative strategies and assessment of their merits. Exploratory analysis is very different from traditional sensitivity analysis. Whereas sensitivity analysis typically examines how the outputs of a complex model or simulation change when parameters and inputs deviate slightly from nominal values, exploratory analysis typically attempts much broader coverage of the space of parameters and inputs, using much simpler, quick-and-dirty models. Different versions of exploratory analysis apply to planning and programming, system development, and operations, and the differences between the versions are large.9,10
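The core loop of exploratory analysis (generate cases throughout the space, run a simple model on each, then summarize) can be sketched in a few lines. The sketch below uses a full-factorial sweep, the simplest case generator, and summarizes each strategy by its success rate and its maximum regret, that is, its worst shortfall relative to the best strategy in any scenario. Low maximum regret is one common robustness criterion among several. The strategies, scenario axes, and toy model are all hypothetical.

```python
from itertools import product


def explore(strategies, scenario_axes, outcome):
    """Evaluate every strategy in every scenario of a full-factorial sweep."""
    names = list(scenario_axes)
    scenarios = [dict(zip(names, combo))
                 for combo in product(*scenario_axes.values())]
    results = {s: {"success": 0.0, "max_regret": 0.0} for s in strategies}
    for sc in scenarios:
        scores = {s: outcome(s, sc) for s in strategies}
        best = max(scores.values())
        for s, v in scores.items():
            results[s]["success"] += int(v > 0) / len(scenarios)
            results[s]["max_regret"] = max(results[s]["max_regret"], best - v)
    return results


def toy_outcome(strategy, scenario):
    """Hypothetical toy model (arbitrary units; > 0 means success)."""
    if strategy == "forward_north":
        return 3.0 if scenario["location"] == "north" else -2.0
    return scenario["warning_days"] / 5.0  # "mobile_reserve"


axes = {"location": ["north", "south"], "warning_days": [0, 5, 10]}
print(explore(["forward_north", "mobile_reserve"], axes, toy_outcome))
```

Here the forward posture is best when the threat is where it was expected. The mobile reserve is never best in the north, yet it succeeds more often and has the smaller worst-case regret. That is the kind of trade-off exploratory analysis is meant to surface.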

Some of the challenges associated with exploratory analysis are deeply technical while others have to do with how best to structure collaborative analyses involving both humans and machines and how best to summarize results for decision makers. While some decision makers prefer firm predictions and dislike uncertainty, many have a great interest in understanding uncertainty and how their course of action can both allow for opportunities that may arise and hedge against downside events. The issue becomes how to convey that kind of information clearly and accurately, a topic discussed further in Chapter 4.

Multiresolution Modeling and Families of Models and Games

A multiresolution model (MRM) is a model that can accept inputs and/or perform analyses at varying levels of resolution. True multiresolution modeling is very different from modifying a bottom-up model that requires high-resolution inputs to enable it to display aggregated (low-resolution) outputs. One motivation for MRM is the recognition that people need to reason at different levels of detail. At any given level of decision making, people do most of their reasoning with the natural variables of that level. In addition, they need to be able to zoom to the next more detailed level, so as to understand factors and phenomena underlying these higher-resolution features. Furthermore, they typically need to be able to summarize their reasoning to their own superiors, abstracting it into a form suitable for a lower-resolution level. The need for models at different levels of resolution cannot be addressed by simply applying sufficient computing power to do all modeling at the highest resolution. There are fundamental issues associated with aggregation and drill-down that must be understood and incorporated into models if multiresolution models are to provide effective support to decision makers.
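A deliberately simple sketch of the multiresolution idea follows. The same model accepts either a low-resolution aggregate input or high-resolution unit detail, and it can "zoom" by reporting each unit type's contribution to the aggregate. The unit types, scores, and the purely additive aggregation rule are hypothetical. The hard aggregation problems the text refers to arise precisely where effects are not additive like this.

```python
from dataclasses import dataclass


@dataclass
class UnitType:
    name: str
    count: int
    lethality: float  # hypothetical per-unit score
    readiness: float  # fraction available, 0..1


def effective_strength(aggregate=None, units=None):
    """Accept either a low-resolution aggregate or high-resolution detail."""
    if (aggregate is None) == (units is None):
        raise ValueError("supply exactly one of aggregate or units")
    if aggregate is not None:
        return aggregate
    return sum(u.count * u.lethality * u.readiness for u in units)


def drill_down(units):
    """Zoom in: each unit type's share of the aggregate strength."""
    total = effective_strength(units=units)
    return {u.name: u.count * u.lethality * u.readiness / total for u in units}


units = [UnitType("armor", 10, 3.0, 0.8), UnitType("infantry", 20, 1.0, 0.9)]
print(effective_strength(units=units), drill_down(units))
```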

To some extent, a given model can be designed so that it can be used at different levels of detail. However, because MRM models can become quite complex, at some point it becomes easier and clearer to have an integrated family of models. Sometimes it is adequate to have a family of models that were not designed in an integrated way but that are sufficiently consistent and sufficiently well understood to be able to inform one another. For example, if one has a trusted high-resolution model, it can be used to develop values, value ranges, or even probability distributions for the inputs to a higher-level, more-aggregated model of the family. Further, if one has a trusted low-resolution model, perhaps informed by solid empirical experience (including history), it can be used to inform higher-resolution models. Sometimes simple models reflect considerations such as the morale and fighting effectiveness of a nation’s army, which have usually been assumed away in higher-resolution models.11
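Using a trusted high-resolution model to inform a more aggregated one can be as simple as running the detailed model many times and summarizing the distribution of an output that becomes an input one level up. In the sketch below, a hypothetical shot-by-shot engagement model produces the fraction of targets killed per salvo. Its mean and 10th and 90th percentiles could then serve as the nominal value and range of an attrition parameter in a low-resolution model. The model and all parameter values are illustrative assumptions.

```python
import random
import statistics


def high_res_engagement(rng, n_shooters=10, n_targets=8, p_kill=0.3):
    """Hypothetical high-resolution model: fraction of targets killed in one salvo."""
    alive = n_targets
    for _ in range(n_shooters):
        if alive and rng.random() < p_kill:
            alive -= 1
    return (n_targets - alive) / n_targets


def calibrate(runs=2000, seed=1):
    """Summarize many detailed runs as an input range for an aggregate model."""
    rng = random.Random(seed)
    samples = sorted(high_res_engagement(rng) for _ in range(runs))
    return {
        "mean": statistics.fmean(samples),
        "p10": samples[int(0.10 * runs)],
        "p90": samples[int(0.90 * runs)],
    }


print(calibrate())
```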

Exploratory analysis is arguably best accomplished with a good aggregate-level model that can cover the entire possibility space clearly, albeit at low resolution. Such a model might have 6 to 10 variables. Understanding the outputs of the model over the entire space of values of those variables is quite feasible with modern tools. Further, one can then reason at that level of detail. If one does such a synoptic exploration and finds that only two or three of the variables are particularly important, then with MRM or a suitable family of models, one can zoom to higher resolution on those variables. This provides a straightforward, cognitively natural way of conducting exploratory analysis. In contrast, if one starts with a complex model built bottom-up, the model may have thousands of inputs (especially if one realizes that the individual items in the model’s complex databases are all uncertain). Making sense of that model’s behavior and finding abstracted insights can be exceedingly difficult and treacherous.12 Thus, while MRM is not necessarily essential for exploratory analysis, it is a strong enabler.
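The synoptic step can be illustrated directly. With six variables at three levels each, a full sweep takes only 3⁶ = 729 runs of a fast aggregate model. Ranking the variables by main effect (how much the average outcome moves as each variable ranges over its levels) then points to the few worth zooming in on. The toy model below is hypothetical and is constructed so that only a, b, and c matter much. Main effects can miss pure interactions, so this is a screening aid rather than a complete importance measure.

```python
from itertools import product
from statistics import fmean


def main_effects(model, levels):
    """Full-factorial sweep, then each variable's main-effect range.

    levels: dict variable -> list of values. Returns variables sorted by
    (max - min) of the mean outcome when the variable is held at each level.
    """
    names = list(levels)
    cases = [dict(zip(names, combo)) for combo in product(*levels.values())]
    outputs = [model(**case) for case in cases]
    effects = {}
    for name in names:
        means = [fmean(out for case, out in zip(cases, outputs) if case[name] == v)
                 for v in levels[name]]
        effects[name] = max(means) - min(means)
    return dict(sorted(effects.items(), key=lambda kv: kv[1], reverse=True))


def toy_model(a, b, c, d, e, f):
    """Hypothetical aggregate model; e and f have no effect by construction."""
    return 5 * a + 3 * b * c + 0.1 * d


levels = {name: [0, 1, 2] for name in "abcdef"}
print(main_effects(toy_model, levels))
```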

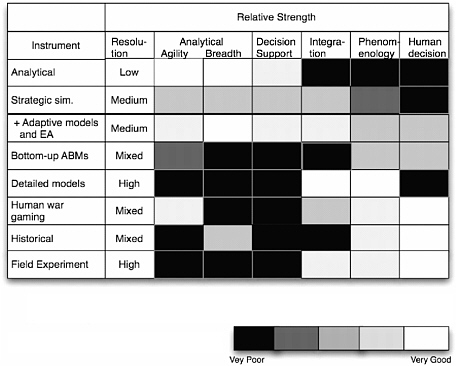

Another enabler for exploratory analysis (again useful for other purposes as well) is having families of models, human games, experiments, and other sources of information (Davis, 2006). Figure 3.2 illustrates this by suggesting the strengths and weaknesses of some of the various instruments that can be brought to bear. Although the cell-by-cell evaluations depend on various assumptions and are only approximate, the story conveyed is valid. For example, relatively simple analytical models and programs (top left) are excellent for agility and breadth of work and for high-level decision support but poor for revealing underlying phenomenology. In contrast, detailed models, bottom-up, agent-based modeling (discussed later), human games, field experiments, and history can be very good at representing and studying phenomenology. Human games and man-machine gaming can also be particularly good for coming up with innovative concepts of operations, clever tactics, and new uses of technology. Strategic-level simulations can be excellent for integration, especially if they have adaptive decision models.

Recommendation 5: DoD’s analytical organizations should take a portfolio approach to designing their analyses and supporting research, investing in a range of methods including diverse models, games, field experiments, and other ways to obtain information.

FIGURE 3.2 Relative strengths for MS&A models.

Such organizations should be cautious about (1) allowing the high-cost MS&A activities to use all of the investment resources, with no groups doing fast, simple, and nimble thinking; and (2) depending entirely on computer models, which may be unrealistic because they lack human involvement and often do not use real-world data, such as lessons-learned information from recent wars.

If exploratory analysis were used by analytical organizations in cooperation with other organizations, something else would probably happen: increased analytical structuring of human games and exercises. Games and exercises are rarely designed with the idea of building a consolidated knowledge base. But they could be, in which case human games would be tailored and analyzed accordingly, as would experiments, resulting in enhancements to a knowledge base and to models. For example, a theater-level model could have sub-models to represent commanders’ decision making. Those, in turn, could be made to address issues arising in human war games (as well as many other issues not arising in the games). In practical terms, this might involve building into the model aspects such as substantial decision delays except in circumstances of prior alert and prior authorization for rapid action. Decision delays might also be explicitly dependent upon the availability and quality of information that is not from satellites or aircraft but from U.S. national and regional intelligence, personal commander-to-commander conversations with allied officers, or special information from, say, a nongovernmental organization aware of circumstances on the ground. That is, human gaming could force MS&A to incorporate variables that are important in the real world of DIME/PMESII but not natural to those building traditional mathematics-based models.
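A commander decision submodel of the kind just described might look like the hypothetical sketch below. The 48-hour base delay, the 2-hour floor, the halving under prior alert, and the rule that the best single information source sets the quality are all invented for illustration. They are not drawn from any fielded model. The design point is simply that delay becomes an explicit function of alert status, authorization, and the quality of non-technical information.

```python
from dataclasses import dataclass

@dataclass
class InfoSources:
    """Hypothetical quality scores in [0, 1] for information not from satellites or aircraft."""
    national_intel: float = 0.0
    commander_to_commander: float = 0.0
    ngo_reports: float = 0.0

def decision_delay_hours(prior_alert: bool, prior_authorization: bool,
                         info: InfoSources, base_delay: float = 48.0,
                         min_delay: float = 2.0) -> float:
    """Hypothetical rule: long delays unless alerted and pre-authorized."""
    if prior_alert and prior_authorization:
        return min_delay
    # Assumption: the best single corroborating source drives confidence.
    quality = max(info.national_intel, info.commander_to_commander, info.ngo_reports)
    delay = base_delay * (1.0 - 0.75 * quality)  # better information shortens deliberation
    if prior_alert:
        delay *= 0.5  # an alerted command deliberates faster even without authorization
    return max(delay, min_delay)
```

A human war game that revealed, say, the importance of allied commander-to-commander contacts would translate here into the weight that source carries.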

Optimization and Agent-Based Modeling

It is often the case that mathematical optimization is needed as a component of a larger simulation or to establish performance bounds on the results of a simulation. While in some cases the simulations required for the challenges cited in Chapter 2 may be too large or complex to optimize in any formal sense and the uncertainties may diminish the utility of optimization, in other cases optimization techniques can be quite useful. It is important to realize that such techniques are available when needed. Many “purposive” military systems are currently modeled as a collection of smart, and arguably rational, decision-making agents that attempt to continuously improve some overall objective function. Although this paradigm has its defenders and critics in the modeling community when used to model some nonmilitary systems, it has recently been shown that the paradigm of self-optimizing via agents can be used to optimize a variety of general large-scale complex systems (Ghate et al., 2005). These need not be systems of antagonistic elements, as is often assumed for military analysis. For example, distributed and/or decentralized control architectures have been studied in artificial intelligence and robotics (Bui et al., 1998). The typical setting involves a group of agents that have (more or less) homogeneous capabilities, share a common objective, and, critically, have access to an ad hoc protocol set for a large number of contingencies and for coordination among themselves. Since these protocols assume assured bilateral agent communication, determining the best protocols to use becomes computationally intractable as the number of contingencies and/or the number of agents increases.

An alternative agent-optimizing approach, known as fictitious play (FP) (Brown, 1951; Robinson, 1951), addresses the challenge of optimizing black-box simulation models that cannot be expected to exhibit the kinds of regularity or convexity properties that conventional nonlinear optimization approaches demand (Bazaraa et al., 1993). The basic idea, inspired by game theory, is to animate the design components or controllable variables of a system by representing them as the decisions of intelligent, goal-seeking agents. These agents attempt to optimize their own selfish responses to an environment created by the behaviors of the other agents/components. This process can be viewed as an iterative game, with the components being players having identical interests—the overall performance of the system. Although in its infancy, this approach has been successfully applied to problems in the private sector (e.g., the joint optimization of plant-level production, capacity planning, and marketing decisions at one of the big three automakers) and the military (e.g., allocating resources and routing messages in mobile ad hoc networks and determining optimal ship-steering policies).

For example, in typical traffic routing in a dynamic network, an individual vehicle has origin and destination locations, an origin departure time, and a finite set of sequences (routes) of road segments joining the origin with the destination. Each vehicle’s route traversal time is influenced by the traffic congestion on each link in the route during the time the vehicle is traveling along the link, so that the route traversal time depends on the choices of routes made by the other vehicles in the network. This system-optimal traffic assignment problem with flow-dependent costs has been studied extensively.13 However, a crucial distinguishing characteristic of this problem—namely, dynamic, time-dependent congestion on the links in the network—has rarely been considered. In this version of the problem, numerical procedures for evaluating transit times are simulation based (down to the individual vehicle) and require significant computational effort. The task of finding the system-optimal routes is therefore inherently complex, and it is the subject of a great deal of research in the fields of intelligent transportation systems and sequential dynamic systems.14 However, an FP algorithm has recently been proven successful in addressing this problem (Garcia et al., 2000; Lambert et al., 2005).
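The coupling between vehicles can be illustrated with a deliberately simplified, static version of the problem. This sketch leaves out the time-dependent congestion the paragraph emphasizes. Link times follow the Bureau of Public Roads (BPR) volume-delay function, with its customary parameters alpha = 0.15 and beta = 4. The network, link parameters, and function names are hypothetical. The brute-force `system_optimal` search grows exponentially in the number of vehicles, which is exactly why scalable methods such as FP are of interest.

```python
import itertools
from collections import Counter

def bpr_time(free_flow: float, capacity: float, flow: float,
             alpha: float = 0.15, beta: int = 4) -> float:
    """BPR volume-delay function: link travel time grows with link flow."""
    return free_flow * (1 + alpha * (flow / capacity) ** beta)

def total_travel_time(choices, routes, links) -> float:
    """Sum of every vehicle's route traversal time for one joint route choice.
    Each vehicle's time depends on every other vehicle's choice via link flows."""
    flows = Counter(link for r in choices for link in routes[r])
    link_time = {l: bpr_time(ff, cap, flows[l]) for l, (ff, cap) in links.items()}
    return sum(sum(link_time[l] for l in routes[r]) for r in choices)

def system_optimal(n_vehicles: int, routes, links):
    """Exhaustively search joint assignments (feasible only for tiny examples)."""
    return min(itertools.product(routes, repeat=n_vehicles),
               key=lambda c: total_travel_time(c, routes, links))
```

With two parallel single-link routes, `links = {"a": (10.0, 2.0), "b": (12.0, 2.0)}` and `routes = {"A": ["a"], "B": ["b"]}`, the system optimum for four vehicles splits them two and two. Sending everyone down the faster free-flow link congests it.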

There are several general computational advantages to FP when trying to analyze models of military complexity:

-

It can be applied to complex optimization problems with a black-box objective function lacking special structure.

-

It updates all decision variables independently and in parallel, making the approach scalable in the number of variables (unlike other global optimization approaches, such as simulated annealing).

-

Convergence to an optimal solution is ensured in the limit (Ghate et al., 2005).

-

In many applications, only one evaluation of the objective function is needed per iteration.

-

In practice, very fast convergence to high-quality solutions is observed.
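The FP idea summarized above can be sketched as follows. This is a minimal illustration, not the specific algorithm of the studies cited. Classic FP (Brown, 1951; Robinson, 1951) has each player best-respond to the empirical frequencies of the others' past play. This sketch instead uses a sampled variant, which is our assumption. Each decision variable is a player that best-responds to one joint action drawn uniformly from the history. All players share the black-box objective. Their updates within an iteration are independent, as the list above notes. This naive best response enumerates each player's entire domain, so it uses many objective evaluations per iteration rather than one.

```python
import random
from typing import Callable, Sequence

def sampled_fictitious_play(
    objective: Callable[[tuple], float],
    domains: Sequence[Sequence],
    iterations: int = 200,
    seed: int = 0,
) -> tuple[tuple, float]:
    """Maximize a black-box objective over a finite product space.

    Each decision variable is a player whose payoff is the shared objective
    (an identical-interest game). Every iteration, each player independently
    samples one past joint action from the history and best-responds to the
    other players' components of that sample; these updates do not depend on
    one another, so they could run in parallel.
    """
    rng = random.Random(seed)
    history = [tuple(rng.choice(list(d)) for d in domains)]
    best, best_val = history[0], objective(history[0])
    for _ in range(iterations):
        joint = []
        for i, domain in enumerate(domains):
            sample = list(rng.choice(history))

            def payoff(v, sample=sample, i=i):
                trial = sample.copy()
                trial[i] = v  # player i tries value v; others play as sampled
                return objective(tuple(trial))

            joint.append(max(domain, key=payoff))
        action = tuple(joint)
        history.append(action)
        value = objective(action)
        if value > best_val:
            best, best_val = action, value
    return best, best_val
```

For example, `sampled_fictitious_play(lambda a: -(a[0] - a[1]) ** 2 - (a[1] - 2) ** 2, [range(5), range(5)])` treats the objective purely as a black box. It never uses gradients or convexity.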

Because of its general nature and its ability to optimize simulation-based models, FP warrants a thorough investigation. The committee has chosen to focus on FP as a particularly promising technique for optimization of highly complex, nonlinear systems. There are, of course, other promising approaches that merit investigation. The broader point is that serious investigation is needed into theories and methods for optimizing the performance of complex, adaptive networked systems.

Other Methods for Representing Adaptive Systems

The use of multiagent systems (MASs) gives developers an often appealingly intuitive and straightforward way of incrementally developing complex systems in a distributed and locally adaptive fashion. These are explored in the subsection “Social Behavioral Networks” in this chapter. However, MASs are not the only way to model adaptive systems, and it is important that DoD continue to actively use, research, and develop other methods for modeling adaptive systems, as well as ways to compare the benefits and limitations of different modeling methods across different classes of problems. Different modeling methods are called for because of variations in the depth and fidelity required for a given application area and because of implementation issues, including efficiency, development time, and the expected operation of different asynchronous and autonomous segments of the system.

Modeling methods that can represent adaptive behavior are needed because in many systems one cannot specify in advance all the conditions that could prevail and all the data that might be obtained. Furthermore, in many large, distributed real-time systems, no central decision-making element is fast enough to respond as needed to locally changing conditions.

The word “agent” has been used for models, however implemented, that can generate solutions in an adaptive manner. Examples include genetic or evolutionary programming methods for solving optimization problems, and game-theoretic, control-theoretic, or rule-based methods for solving decision problems. However, in order to gain the advantages of different MAS methods and other methods for representing adaptive behaviors, it is important to distinguish among the different desirable characteristics of adaptability exhibited by different implementation strategies and to identify the applications for which each is most desirable. It should be noted that these heuristic methods may be superseded in the future by new theoretical developments in the mathematics of optimization or by increased computer power. This report has not focused on techniques of deterministic optimization.

When we talk about modeling methods that allow both local decision making and local individual history in a heterogeneous distributed environment, it is very natural to think in terms of agency. Agency can be implemented with any distributed, object-oriented modeling technique, not just with those explicitly labeled “software agents.” The ability to adapt to specific, time-dependent inputs can also be implemented with decision rules, knowledge bases, and logic-based programming methods.

In a well-characterized solution space, there are mathematical methods to combine or integrate local results, often described using sets of equations. Partial differential equation solvers and other synchronous computational methods are examples of important methods that may be usefully incorporated into an agent-based framework. That is, although equation solvers are sometimes viewed as antithetical to an agent-based solution, it is quite feasible to design an agent-based system in which some agents use inputs from their environment to construct equations, solve them with an equation solver, and pass the results to other agents in the system. Similar remarks obviously apply to optimization, regression, and other methods often placed in opposition to agent-based systems.
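A toy version of such an equation-solving agent is sketched below. Both classes are hypothetical. `TrendAgent` turns its observations into the normal equations for a least-squares straight line. It solves that 2 x 2 system directly and publishes the coefficients to subscribing agents. In a larger system the solver step could just as well be a PDE solver or an optimizer.

```python
from dataclasses import dataclass, field
from typing import List, Optional, Tuple

@dataclass
class PlannerAgent:
    """Hypothetical downstream agent that stores the latest published result."""
    latest_trend: Optional[Tuple[float, float]] = None

    def receive(self, trend: Tuple[float, float]) -> None:
        self.latest_trend = trend

@dataclass
class TrendAgent:
    """Hypothetical agent that builds and solves the normal equations for a
    least-squares line y = a + b*t from its observations, then publishes (a, b)."""
    observations: List[Tuple[float, float]] = field(default_factory=list)
    subscribers: List[PlannerAgent] = field(default_factory=list)

    def observe(self, t: float, y: float) -> None:
        self.observations.append((t, y))

    def step(self) -> Optional[Tuple[float, float]]:
        n = len(self.observations)
        st = sum(t for t, _ in self.observations)
        sy = sum(y for _, y in self.observations)
        stt = sum(t * t for t, _ in self.observations)
        sty = sum(t * y for t, y in self.observations)
        # Normal equations [[n, st], [st, stt]] @ [a, b] = [sy, sty], solved by Cramer's rule.
        det = n * stt - st * st
        if n < 2 or det == 0:
            return None  # not enough independent data to determine a line
        a = (sy * stt - st * sty) / det
        b = (n * sty - st * sy) / det
        for agent in self.subscribers:
            agent.receive((a, b))
        return a, b
```

The agent wrapper owns when and with what data the solver runs. The solver itself stays a conventional, well-understood numerical method.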

Currently deployed adaptive systems use a wide range of strategies for adaptation. Some—for example, control systems for complex electromechanical devices and systems such as UAVs—choose among existing models of the environment in response to results of measurements. The best of these allow for some online model building to help react appropriately in the short term to unexpected behaviors in the environment (usually combined with a call for human intervention). In addition, any amount of self-modeling that can be usefully interpreted allows the system to examine its own capabilities and plan its activities much more effectively, including identifying and reacting more quickly when problems occur and even determining in advance when problems might occur.
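The first strategy above, choosing among stored environment models according to measurements, is sketched below. The function name, the root-mean-square (RMS) error criterion, and the threshold are assumptions for illustration. When no stored model fits within the threshold, the function signals that fact. That is where a real system might begin online model building or call for human intervention.

```python
from typing import Callable, Dict, List, Optional, Tuple

def select_environment_model(
    models: Dict[str, Callable[[float], float]],
    measurements: List[Tuple[float, float]],
    max_rms: float,
) -> Tuple[Optional[str], float]:
    """Pick the stored model whose predictions best match recent measurements.

    Returns (name, rms_error), or (None, rms_error) if even the best model
    misses by more than max_rms.
    """
    best_name, best_rms = None, float("inf")
    for name, predict in models.items():
        sq_errors = [(predict(x) - y) ** 2 for x, y in measurements]
        rms = (sum(sq_errors) / len(sq_errors)) ** 0.5
        if rms < best_rms:
            best_name, best_rms = name, rms
    if best_rms > max_rms:
        return None, best_rms  # unexpected behavior: no stored model fits
    return best_name, best_rms
```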

Social Behavioral Networks

A behavioral model is a model of human activity in which individual or group behaviors are derived from the psychological or social aspects of individuals. Much progress has been made in recent years in this area, and it is of central importance to many questions addressed by MS&A across the DIME space. There are a number of approaches to behavioral modeling. Among these, the key computational approaches that are important from a DoD perspective are social network models and multiagent systems. In this section, the committee briefly describes both approaches and their variants in order to motivate recommendations to improve their utility to DoD. More detail is found in Appendix B.

Social network models represent relationships among individuals, the flow of information among individuals, and other aspects of the ways by which individuals are connected to and interact with each other. These models are based on graph theory, and because of that, traditional operations research flow and network models have been used for analysis. The nodes in such a model are individuals and the arcs are derived from relational data—that is, who knows whom, who works with whom, and so forth. There are three forms of analysis in this area: traditional social network analysis, link analysis, and dynamic network analysis. However, all of these forms of analysis, at their core, involve graph theoretic concepts and computations.

Traditional social network analysis mainly involves statistical analysis to identify the topology of the network, the influential nodes, and the key positions in the network. Link analysis is concerned with pattern recognition in the network and is used to look at the formation of cliques and other relationship groups. Dynamic network analysis adds simulation to traditional social network analysis and link analysis to look at network evolution. These three techniques are used to analyze relational data, that is, data about whether entities of one type relate to entities of another type. Successful analysis is heavily dependent on the existence of reliable data and the availability of computational resources.
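Two of the graph computations mentioned above can be made concrete with a short pure-Python sketch. It computes degree centrality, one simple way to flag influential nodes, and finds triangles, the smallest cliques a link analysis might look for. The edge list stands in for hypothetical "who knows whom" relational data. Production analyses would use a dedicated graph library and richer measures such as betweenness.

```python
from collections import defaultdict
from itertools import combinations

def build_graph(edges):
    """Undirected adjacency sets from (person, person) relational data."""
    adj = defaultdict(set)
    for u, v in edges:
        adj[u].add(v)
        adj[v].add(u)
    return adj

def degree_centrality(adj):
    """Degree divided by (n - 1): the fraction of others each node is tied to."""
    n = len(adj)
    if n < 2:
        return {node: 0.0 for node in adj}
    return {node: len(nbrs) / (n - 1) for node, nbrs in adj.items()}

def triangles(adj):
    """All 3-cliques: groups of three people who are all tied to one another."""
    found = set()
    for node, nbrs in adj.items():
        for a, b in combinations(sorted(nbrs), 2):
            if b in adj[a]:
                found.add(tuple(sorted((node, a, b))))
    return sorted(found)
```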

Social networks are a promising tool for studying many problems of importance to DoD, such as terrorist networks, the spread of infectious agents, flows of information and influence within enemy forces, and others. While considerable progress has been made in recent years, there are a number of weaknesses in the current state of technology for social behavioral networks. A few of the most important limitations are these:

-

Existing visualization techniques do not scale well, and interpretation of the results is dependent on the particular visual representation of the network.

-

There is no agreed-on set of metrics for social behavioral networks, and those that do exist frequently do not correlate well with the property being measured.

-

There are no standard techniques for dealing with missing or erroneous data, and since these networks are data hungry, missing or erroneous data are a common problem.

-

There is insufficient ability to link social networks to other events and locations, which is necessary to ensure that such networks are not used in isolation.

In contrast to social network analysis, multiagent systems can be used to model the way in which social behavior emerges from the actions of a set of agents. Multiagent systems are computer-based simulations of a set of actors, called agents, that take autonomous actions as they interact with one another. The agents can be heterogeneous; for instance, some may represent humans while others represent groups with which the humans interact. There are numerous such systems, which may differ in the number of agents, the type of algorithm used, the cognitive and social sophistication of the agents, and whether or not the agents are constrained to move on a grid.
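A very small example of behavior emerging from local interactions is the bounded-confidence opinion model associated with Deffuant and colleagues. It is chosen here only as an illustration, not as a model the committee endorses. Agents hold opinions in [0, 1]. Two agents interact only if their opinions are already within `epsilon` of each other, and each then moves a fraction `mu` toward the other. Clusters of opinion emerge with no central direction. The parameter defaults are illustrative.

```python
import random

def bounded_confidence(opinions, epsilon=0.2, mu=0.5, steps=10_000, seed=0):
    """Deffuant-style bounded-confidence opinion dynamics.

    Each step a random pair of agents meets; if their opinions differ by less
    than epsilon, each moves a fraction mu (at most 0.5) toward the other.
    """
    rng = random.Random(seed)
    x = list(opinions)
    for _ in range(steps):
        i, j = rng.sample(range(len(x)), 2)
        diff = x[j] - x[i]
        if abs(diff) < epsilon:
            x[i] += mu * diff
            x[j] -= mu * diff
    return x
```

With a small `epsilon`, an initially even spread of opinions typically settles into a few separated clusters. With `epsilon` above 1, every pair interacts and the population converges toward a single consensus value.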

Multiagent systems are also limited given today’s technology:

-

Validation is difficult (and is discussed separately in the subsection “Expanded Concepts of Validation”).

-

The goal, producing multiagent dynamic network systems tied to empirical data, now requires a multiperson, multiyear data collection effort on top of a similar development effort.

Recommendation 6: DoD should devote significant research to social behavioral networks and multiagent systems because both are promising approaches to the difficult modeling challenges it faces.

Serious Games

A video game is a mental contest, constrained by certain rules, played with a computer for amusement, recreation, or winning a stake. A serious game, by contrast, is a video game designed and used to further training, education, health, public policy, or strategic communication objectives. In addition to the story, art, and software aspects they share with video games, serious games are designed to educate, and they have a basis of pedagogical knowledge.

There are strong driving applications to which DoD could apply a science of games if such a science existed. In the health domain, medical personnel could train via games to perform procedures on vital systems. This capability is already evident in the game America’s Army, which contains a full three-lecture series on lifesaving during combat. Training and simulation using game technology and creativity is an obvious option for DoD, but to realize the full potential of this option, DoD should coordinate its efforts better. At present, game development for defense training is being handed off to individual contractors, who are not necessarily tightly coupled to DoD requirements. Those arm’s-length contracted efforts lead to systems that are not well connected to the ever-changing requirements of the military. Additionally, the games are being built with proprietary technologies and resources that cannot be reapplied to other parts of DoD without additional payments.15

To avoid these limitations and to advance its capabilities, DoD might consider forming a university-affiliated research center (UARC) focused completely on game-based training and simulation. Such a UARC should be coupled to universities strong in human performance engineering and in game development. DoD could also license a commercial game engine for any DoD purpose or develop its own open source game engine. Art and other gaming resources built at that UARC should be easily shareable for all DoD game-based training requirements. With such a UARC in place, it would be feasible to produce game-based systems for mission rehearsal deployable by the soldier in minutes rather than months. In addition, the next-generation combat modeling and analysis systems might be accessible through gamelike interfaces rather than complicated menus and submenus. While the committee is impressed with the successes achieved by existing UARCs, it recognizes that, despite the difficulties with commercial development of DoD training games noted above, other approaches are possible, including efforts based at private companies or other nonacademic organizations, or the establishment of a research consortium. The goal is to give DoD adequate assurance of top quality and modern technology with appropriate cost and continuity and without conflict of interest.

To realize the potential of games both serious and for entertainment, it will be necessary to undertake research and development aimed at transforming the production of games from a handcrafted, labor-intensive effort to an effort having shorter, more predictable timelines, with increased complexity and innovation in the produced games and a stronger focus on their pedagogical effectiveness. R&D is needed in a number of areas:

-

Infrastructure. The underlying software and hardware for interactive games, including multiplayer game architectures, game engines, streaming media, next-generation consoles, and new wireless and mobile devices.

-

Cognition. Theories and methods for the modeling and simulation of computer characters, story lines, and human emotions and of innovative play styles.

-

Immersion. Technology for engaging the game player by means of sensory stimulation, including theories of presence and of sensing a player’s physical state and emotions.

-

Serious games. Game evaluation, human performance engineering, and principles common to the different domains in which games may be applied.

Recommendation 7: DoD should form a research center or consortium focused on game-based training and simulation.

A more complete discussion of serious games can be found in Appendix A.

Network Science

The nascent study of networks per se, which would allow understanding them intrinsically rather than through particular instantiations, also shows promise as a foundation for advanced defense MS&A. Society depends on a diversity of complex networks, and this report has emphasized the growing dependence of the military on networks for information dissemination, command and control, and effects-based operations, among others. Despite this dependency, our fundamental knowledge about networks is in its infancy; indeed, there is no body of knowledge that can be called “network science.” A recent Army-sponsored report (NRC, 2006), referred to below as Network Science, is probably the first attempt to define both the need for and the substance of a science of networks. Although the report does not specify a rigid body of knowledge to be incorporated in the new field, it defines network science as consisting of the study of network representations of physical, biological, and social phenomena leading to predictive models of these phenomena.

Network Science identifies research areas of special interest to the Army that also apply more broadly to the entire DoD. One high-priority area is modeling, simulating, testing, and prototyping very large networks. Other aspects of networks that are relevant to MS&A include the impact of networked structures on organizational behavior (see the subsection “Social Behavioral Networks” in this chapter) and on enhanced network-centric mission effectiveness (see the following subsection). In agreement with this report, Network Science concluded that advances in network science can address the threats of greatest importance to the nation’s security.

Recommendation 8: DoD should support and extend initiatives to cooperate with other agencies funding research on networks.

Building the Scientific Base for Embedded MS&A

Present efforts to design and use complex, dynamic models are hindered by major gaps in the theoretical underpinnings of such models. For instance, the mathematical formalisms used in most modeling assume that the system being modeled is closed—that is, that the model output will always fall into a clearly defined space of possible outputs. But this assumption is violated for MS&A embedded in other systems, because the models must account for inputs from a dynamically changing set of sensors.

The NSF has identified a number of advances needed in mathematics and statistics as part of its Dynamic Data-Driven Applications Systems (DDDAS) program, mentioned earlier in this chapter. For example, the DDDAS characteristic of allowing new data to be incorporated into running algorithms raises fundamental questions about the stability of those algorithms and their outputs. NSF’s program has set out the following fundamental analytical challenges for understanding and managing DDDASs:

-

The creation of new mathematical algorithms with stable and robust convergence properties under perturbations induced by dynamic data inputs.

-

Algorithmic stability under dynamic data injection/streaming.

-

Algorithmic tolerance to data perturbations.

-

Multiple scales and model reduction.

-

Enhanced asynchronous algorithms with stable convergence properties.

-

Stochastic algorithms with provable convergence properties under dynamic data inputs.

-

Handling data uncertainty in decision-making/optimization algorithms, especially where decisions can adapt to unfolding scenarios (data paths).
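A familiar, small-scale instance of a running algorithm that absorbs new data is the scalar Kalman filter, sketched below. It assumes the state follows a random walk and is observed with additive noise. These modeling assumptions and the class name are ours. Because the gain always lies strictly between 0 and 1 when both variances are positive, any single new datum can move the estimate only part of the way toward itself. That is a simple instance of the tolerance to data perturbations listed above.

```python
from dataclasses import dataclass

@dataclass
class ScalarKalman:
    """Assumed model: state x_k = x_{k-1} + w (variance process_var),
    measurement z_k = x_k + v (variance meas_var)."""
    estimate: float
    variance: float
    process_var: float
    meas_var: float

    def update(self, z: float) -> float:
        # Predict: uncertainty grows by the process noise between measurements.
        self.variance += self.process_var
        # Correct: blend the prediction with the new datum via the Kalman gain.
        gain = self.variance / (self.variance + self.meas_var)
        self.estimate += gain * (z - self.estimate)
        self.variance *= (1.0 - gain)
        return self.estimate
```

With `process_var=0`, this reduces to a running weighted mean. With `process_var > 0`, the variance settles to a steady value, so the filter never stops listening to new data.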

Embedded MS&A systems must revisit a number of mathematical and statistical issues. These issues are given new prominence because the interaction between models and live data sources may cause small effects to cascade. These issues include the following:

-

Assessment and propagation of measurement error.

-

Combining different types of uncertainties.

-

Adapting to small sample sizes, incomplete data, and extreme events.

-

Evaluation of quantization schemes.

-

Optimization or satisficing within complex solution spaces.

These issues are well known in the optimization community and have been extensively studied, but embedded systems face the additional challenge of adapting to the rapid and unpredictable changes resulting from new data or a new base model of the solution space.
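For the first issue in the list, assessment and propagation of measurement error, the standard first-order (delta-method) approximation shows how input errors carry through a model output y = f(x_1, ..., x_n). It assumes independent errors that are small relative to the curvature of f. The range-and-bearing example and its numbers are illustrative. When errors are large or f is strongly nonlinear, this approximation can understate the propagated error, which is part of why such issues need revisiting for embedded systems.

```latex
\begin{aligned}
\sigma_y^2 &\approx \sum_{i=1}^{n} \left(\frac{\partial f}{\partial x_i}\right)^2 \sigma_i^2 \\
x = r\cos\theta:\quad \sigma_x^2 &\approx \cos^2\theta\,\sigma_r^2 + r^2\sin^2\theta\,\sigma_\theta^2 \\
r = 10\ \text{km},\ \sigma_r = 0.1\ \text{km},\ \theta = 60^\circ,\ \sigma_\theta = 0.01:\quad
\sigma_x^2 &\approx (0.25)(0.01) + (100)(0.75)(10^{-4}) = 0.01\ \text{km}^2,
\quad \sigma_x \approx 0.1\ \text{km}
\end{aligned}
```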

The use of MS&A in embedded situations creates a need for mathematical methods to enable the evaluation of partial or intermediate results. Embedded MS&A systems must be able to reason about how closely they have approached a good-enough solution in order to evaluate the trade-off between better results and the investment of additional sensing and computing resources. This requires measures of goodness that are meaningful in spite of uncertainty about the achievable end state, the means of dynamically adjusting the streams of input to move toward that end state, and the rates of convergence to some quality target. There might also be competing criteria of goodness. A step toward this capability would be the development of new analytic methods that could (1) characterize the solution space so that designers know something about its areas of sensitivity, boundaries, bad areas, and well-behaved areas and (2) characterize (using sensitivity analyses) the impacts of assumptions within the models or simulations that are running. Reasoning about the progress of a given intermediate solution may also be thought of as an online process, where one is asking whether either more data or more time will yield a better solution. New mathematical models of computational processes might be helpful for this.
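One very simple form of "how close is good enough" reasoning is an anytime Monte Carlo estimate with an explicit stopping rule, sketched below. The function name and defaults are assumptions. The normal-approximation confidence half-width serves as the measure of goodness, a deliberately basic choice. Simulation draws continue in batches until either that half-width falls below a tolerance or a resource budget is exhausted. The caller is also told which of the two occurred.

```python
import math
import random
import statistics
from typing import Callable

def anytime_estimate(sample: Callable[[random.Random], float],
                     tolerance: float, budget: int, batch: int = 100,
                     seed: int = 0, z: float = 1.96):
    """Draw simulation outputs in batches until the ~95% confidence
    half-width is <= tolerance or budget samples (budget >= 2) are used.

    Returns (estimate, half_width, samples_used, converged).
    """
    rng = random.Random(seed)
    values: list[float] = []
    half_width = math.inf
    while len(values) < budget:
        n_new = min(batch, budget - len(values))
        values.extend(sample(rng) for _ in range(n_new))
        if len(values) >= 2:
            half_width = z * statistics.stdev(values) / math.sqrt(len(values))
            if half_width <= tolerance:
                return statistics.mean(values), half_width, len(values), True
    return statistics.mean(values), half_width, len(values), False
```

Returning the half-width and a convergence flag along with the estimate lets the surrounding system decide whether more data, more time, or neither is worth spending.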

In addition to the particular problems of embedded systems, there are other areas where mathematical advances would find ready application:

-

The use of structured random search and determination of problems amenable to such an approach.

-

Scalability of mathematical methods, including better partitioning, abstraction, and aggregation methods.

-

Methods for analyzing the results of model composition, interoperability, and resource integration, including methods for combining different formalisms, different definitions of uncertainty, and techniques such as metalogics, which allow one to reason about the characteristics of different logics and knowledge representations and their applicability to a specific problem.

-

Methods for analyzing integrated modeling and data analysis environments. These would include research into the behavior of real-time linkage of models to data streams, perhaps using Bayesian methods to update model parameters with a combination of real and simulated data. Useful methods might also link machine-learning techniques for data extraction with simulation tools for forecasting.
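The Bayesian updating suggested in the last item can be illustrated in its simplest conjugate form. A Beta distribution represents belief about a probability-valued model parameter, such as a hypothetical per-sortie detection probability. Each batch of observed outcomes updates it in closed form. The `weight` argument is our assumption, not a method from the text. It is one simple way, sometimes called power-prior weighting, to let simulated data count for less than real data when the two are combined.

```python
from dataclasses import dataclass

@dataclass
class BetaBelief:
    """Beta(alpha, beta) belief about a probability parameter in a model."""
    alpha: float
    beta: float

    def update(self, successes: int, trials: int, weight: float = 1.0) -> None:
        """Conjugate Beta-binomial update; weight < 1 down-weights a source,
        e.g. simulated rather than real observations (an assumption)."""
        self.alpha += weight * successes
        self.beta += weight * (trials - successes)

    @property
    def mean(self) -> float:
        return self.alpha / (self.alpha + self.beta)
```

For example, starting from `BetaBelief(1, 1)`, 3 real detections in 10 sorties give a posterior mean of 1/3. Adding 50 simulated detections in 100 sorties at weight 0.1 then shifts the mean only to 9/22.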

This report has identified embedded MS&A as an important component of the changing DoD landscape and as an area in need of additional scientific research.

Recommendation 9: DoD should begin cooperative programs of research into embedded systems with other agencies facing similar demands.

Expanded Concepts of Validation

Some M&S is, or could be, solidly based in settled theory or empirical testing. Classical validation methods would then apply, and a model’s predictions could be compared against a trusted reference. In addition, successful analysis requires confidence in the model’s results, or at least an understanding of the limitations of the results. This said, many models and simulations, especially when considered to include the databases with which they will be used, contain a great deal of uncertainty (see Chapter 4 for an extensive discussion of this). If the data for previous events are known, at least retrospectively, then postdiction can be used to assess validity, but even that condition is often not met. The output of complex systems is influenced by one-time occurrences along the way that cannot be identified reliably even after the fact—when, for instance, a soldier is killed while on sentry duty or a surveillance aircraft is shot down without an impending or actual attack ever having been reported.

Even worse, when dealing with complex systems, we often do not even know what the correct structure of a good model should be. We may have reasonable conjectures, but it is hardly unusual for experts to disagree fiercely on such matters. For instance, traditional validation methods might not be applicable to large-scale multiagent models used for examining sociocultural systems because the fundamental underlying laws either do not exist or are unknown. Considerations such as these necessitate a new concept of validation;16 it may be prudent to implement a means of labeling a model, simulation, or game as “valid for the purposes of exploration in a particular context.” This would be a judgment not about the truth of any one prediction but about whether, on balance, the tool was useful.17 Note that the important standard technique of judging face validity does not apply to the kinds of exploratory models and games on which this report focuses. Often the purpose of exploratory work is to uncover possibilities very different from what would usually be expected: system failures when certain odd combinations of events occur, such as long strings of (good or bad) luck; changes in the very structure of a social system due to personalities, deaths, random encounters at special times, and so on. If the model is used to find unexpected outputs, face validity is a poor judge of model validity.

How might one assess validity, even for limited purposes of exploration? The committee is skeptical about the value of bureaucratic processes to assess validity, since they are expensive, time-consuming, and frequently reinforce conventional wisdom and standard databases even when the reality is massive uncertainty.

Nonetheless, validity is an important matter. Several criteria are necessary to establish a model’s validity:

-

The model should be sufficiently consistent with the laws of physics and realities of technology so that the insights apparently obtained are not artifacts of violations of these.18

-

The model (or game) should be comprehensible and explainable, often in a way conducive to explaining its workings with a credible and suitable “story,” thereby helping people to assess in real time whether an insight could be illegitimate or the result of artifact.

-

Models used in exploratory analysis should deal reasonably with all known classes of uncertainty, possibly deep uncertainty, and should at least confront candidly the problem of unknown unknowns,19 with some combination of speculation and explicitly stated assumptions.

-

Models should be falsifiable. As in science, assertions and predictions that are ultimately circular should not be tolerated.

Multiresolution, multiperspective modeling can be very useful for validating troublesome models. One of the most convincing and economical ways to falsify some models is to examine their aggregate-level consequences and compare them with aggregate-level empirical information. For example, if a detailed simulation shows complete military victory and successful stabilization with only a very small offensive force, a low-resolution model reflecting the historical experience that much larger forces were deemed necessary in prior campaigns provides an excellent basis for skepticism (Gordon and Trainor, 2006).
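To make the aggregate-level check concrete, the following Python sketch compares the outcome of a detailed run with a low-resolution requirement model. It assumes, purely for illustration, that the low-resolution model expresses historical experience as a force requirement proportional to population; the class and function names, the `troops_per_1000` ratio, and the 50 percent tolerance are all hypothetical choices, not values drawn from this report or from Gordon and Trainor.

```python
from dataclasses import dataclass


@dataclass
class DetailedRun:
    """Summary of one run of a (hypothetical) high-resolution campaign simulation."""
    offensive_force: int          # troops committed in the run
    population: int               # population of the area to be stabilized
    stabilization_success: bool   # did the detailed model report success?


def aggregate_force_needed(population: int, troops_per_1000: float) -> float:
    """Low-resolution model: the force needed scales with population.

    troops_per_1000 is an assumed input standing in for historical experience;
    the analyst must supply and justify it.
    """
    return population / 1000.0 * troops_per_1000


def flag_for_skepticism(run: DetailedRun, troops_per_1000: float,
                        tolerance: float = 0.5) -> bool:
    """True if the detailed run claims success with a force smaller than
    `tolerance` times what the aggregate model says is needed."""
    if not run.stabilization_success:
        return False
    needed = aggregate_force_needed(run.population, troops_per_1000)
    return run.offensive_force < tolerance * needed


# Illustrative numbers only; they are not drawn from any real campaign.
small_force = DetailedRun(offensive_force=50_000, population=25_000_000,
                          stabilization_success=True)
print(flag_for_skepticism(small_force, troops_per_1000=20.0))  # True
```

A flag here does not prove the detailed model wrong; it marks a result that deserves scrutiny before it is believed.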

Another approach to such validation efforts is to work methodically through the components of a model, such as one used for exploration, examining the validity of the mathematics and logic in each component and checking for the presence of factors known empirically to be significant.20 Such testing is desirable when feasible, but the tester should be aware that the validity of each module of a complex system model does not guarantee the validity of the overall model.
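One way to keep this caution in view during component-wise testing is to record, for each module, the input range over which it was validated and to check those ranges whenever modules are chained. The Python sketch below is a minimal illustration under that assumption; the two modules, their formulas, and their ranges are hypothetical. Each module is "valid" on its own, yet the composed chain drives the second module outside the region in which it was ever tested.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass
class ValidatedModule:
    """A model component plus the input range over which it was tested."""
    name: str
    fn: Callable[[float], float]
    valid_range: Tuple[float, float]


def run_chain(modules: List[ValidatedModule], x: float) -> Tuple[float, List[str]]:
    """Feed x through the modules in order, noting every call made outside
    the range in which that module was individually validated."""
    warnings: List[str] = []
    for m in modules:
        low, high = m.valid_range
        if not low <= x <= high:
            warnings.append(f"{m.name}: input {x:g} outside validated range [{low:g}, {high:g}]")
        x = m.fn(x)
    return x, warnings


# Hypothetical modules: each one passed its own tests within valid_range.
sortie_generation = ValidatedModule("sortie_generation", lambda aircraft: 2.5 * aircraft, (0, 40))
ramp_congestion = ValidatedModule("ramp_congestion", lambda sorties: sorties / (1 + sorties / 60), (0, 60))

result, issues = run_chain([sortie_generation, ramp_congestion], 36)
print(issues)  # ['ramp_congestion: input 90 outside validated range [0, 60]']
```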

Even models of complex adaptive systems can be given the capability to explain results (e.g., by instrumenting the model or saving all relevant data so that a step-by-step replay is possible), although the current state of the art for doing so is poor. If such explanatory capabilities are built in, then conclusions can be evaluated in part by the chain of events leading to a particular result. Of course, a flaw in model logic does not necessarily indicate that the insight was wrong. Nonetheless, this method can be quite powerful when available.
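A minimal sketch of such instrumentation, assuming a simple event log in which each recorded event keeps a pointer to the event that triggered it: with a fixed random seed the run can be replayed exactly, and `explain` walks the cause links back from any outcome. The two-group escalation model and all names here are hypothetical and were chosen only to keep the example short.

```python
import random
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class Event:
    step: int
    actor: str
    action: str
    cause: Optional[int]  # index in the log of the event that triggered this one


@dataclass
class InstrumentedSim:
    """Toy two-group escalation model that logs every event and its cause."""
    seed: int
    log: List[Event] = field(default_factory=list)

    def _record(self, step: int, actor: str, action: str, cause: Optional[int]) -> int:
        self.log.append(Event(step, actor, action, cause))
        return len(self.log) - 1

    def run(self, steps: int) -> None:
        rng = random.Random(self.seed)  # fixed seed: the run can be replayed exactly
        last_a: Optional[int] = None    # group A's latest provocation
        last_b: Optional[int] = None    # group B's latest retaliation
        for t in range(steps):
            r = rng.random()
            if r < 0.3:
                # A provokes, in response to B's latest retaliation if there is one.
                last_a = self._record(t, "group_A", "provoke", last_b)
            elif r < 0.6 and last_a is not None:
                last_b = self._record(t, "group_B", "retaliate", last_a)

    def explain(self, index: int) -> List[Event]:
        """Walk cause links back from an event; return the chain oldest-first."""
        chain = []
        i: Optional[int] = index
        while i is not None:
            chain.append(self.log[i])
            i = self.log[i].cause
        return list(reversed(chain))


sim = InstrumentedSim(seed=7)
sim.run(steps=50)
for ev in sim.explain(len(sim.log) - 1):
    print(ev.step, ev.actor, ev.action)
```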

Finally, sometimes a good way to assess model validity is to compare models and their “predictions” (even those of exploratory analysis) to the predictions of models built by other people, preferably with different mindsets. This is common in examining scientific disputes. The result may be to find important errors or omissions, to note significant differences without being able to evaluate relative correctness, or to find reasonable consistency—at least in a specific problem context.
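Comparing models from different teams is easiest when every model is run on the same scenarios and the differences are tabulated scenario by scenario. The Python sketch below assumes that each model's output reduces to one number per scenario and uses an arbitrary 10 percent relative tolerance as the agreement test; the team names and values are invented for illustration.

```python
from statistics import mean
from typing import Dict, List, Tuple


def compare_predictions(preds: Dict[str, List[float]],
                        rel_tol: float = 0.1) -> List[Tuple[int, float, bool]]:
    """Compare models built by different teams on a shared set of scenarios.

    preds maps a model name to its predictions, one per scenario, in the same
    order. Returns (scenario index, spread, agree) for each scenario, where
    spread = max - min across models and agree means spread <= rel_tol * |mean|.
    """
    names = list(preds)
    n = len(preds[names[0]])
    if any(len(v) != n for v in preds.values()):
        raise ValueError("every model must be run on the same scenarios")
    report = []
    for i in range(n):
        values = [preds[name][i] for name in names]
        center = mean(values)
        spread = max(values) - min(values)
        agree = spread <= rel_tol * abs(center) if center != 0 else spread == 0
        report.append((i, spread, agree))
    return report


# Hypothetical outputs (e.g., days to reach an objective) from two independent teams.
report = compare_predictions({"team_A": [100, 40, 7], "team_B": [104, 70, 7]})
print(report)  # [(0, 4, True), (1, 30, False), (2, 0, True)]
```

Disagreement does not say which model is right, but it tells analysts where to look for errors, omissions, or genuinely different assumptions.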

Games are even more problematic. Games are superb vehicles for revealing factors and considerations that might not otherwise be recognized and for building a “sense of the chessboard” and the moves that can be made. Some games also bring out a range of plausible and revealing human emotions, such as distrust and parochialism, and various misperceptions that are well understood by cognitive psychologists. However, it seldom occurs to anyone who has played a game that the game should be validated. What would validation mean? Only one path through possibility space was traced out, and not everything happening in the game was necessarily realistic.

Nonetheless, games might provide a new opportunity for the validation of social behavioral models, especially if they include cross-cultural players, which present a particularly difficult problem for verification. We currently have no way to monitor humans and their behaviors such that those behaviors could be provided as inputs to a social model that would then produce an output, that is, an action or behavior that a human or group of humans might perform. However, massively multiplayer online games (MMOGs) provide an environment in which such experimentation and testing might be performed. By some estimates, players of online games have already accumulated 180,000 person-years of game playing.21 If these games could be instrumented so that the behavior players exhibit is captured, they could serve as virtual laboratories for the study of social phenomena. Recorded behaviors could then be tested against the outputs of social models.
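If player behavior were captured in this way, one simple test would compare how often players take each kind of action with how often a social model takes the same actions in the same situation. The sketch below uses total variation distance between the two frequency distributions; the action labels and counts are hypothetical, and a real test would also condition on context and account for sampling error.

```python
from collections import Counter
from typing import Dict, Iterable


def action_distribution(actions: Iterable[str]) -> Dict[str, float]:
    """Relative frequency of each action in a behavior log."""
    counts = Counter(actions)
    total = sum(counts.values())
    return {a: c / total for a, c in counts.items()}


def total_variation(p: Dict[str, float], q: Dict[str, float]) -> float:
    """Total variation distance: 0 = identical distributions, 1 = disjoint."""
    keys = set(p) | set(q)
    return 0.5 * sum(abs(p.get(k, 0.0) - q.get(k, 0.0)) for k in keys)


# Hypothetical logs: actions recorded from instrumented players vs. actions
# generated by a social model placed in the same game situation.
observed = ["trade"] * 6 + ["raid"] * 2 + ["ally"] * 2
simulated = ["trade"] * 5 + ["raid"] * 4 + ["ally"] * 1
distance = total_variation(action_distribution(observed), action_distribution(simulated))
print(round(distance, 3))  # 0.2
```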

The difficulty, of course, is that current MMOGs are commercial; their mission is to provide an entertaining and engaging experience to customers/players, not to run experiments of interest to DoD. However, it might be possible for DoD to carefully negotiate the funding of a virtual laboratory that would attach to a commercial MMOG and that could be used by DoD to test its behavioral models and by the game owner as an analysis tool.

The preceding discussion should be read not as suggesting a deemphasis on careful model evaluation but rather as urging recognition that “evaluation” must necessarily be quite different for models dealing with highly complex and uncertain phenomena than, for example, for engineering models. When dealing with issues that are less measurable but relevant to, say, effects-based operations, different methods are called for, and demands for validation in the classical sense are not pragmatic.

19 Deep uncertainty is sometimes said to be uncertainty of the type one has when even the nature of the underlying processes is unknown. A statistician might refer to not knowing the nature of the probability distribution. The Secretary of Defense has referred to “unknown unknowns,” which are behaviors omitted from the modeling entirely because their existence is not recognized.

20 This is not always straightforward. In social science it is not uncommon for some experts to insist that a factor is important, even though there is no empirical basis.

21 Statistics available at http://www.gamasutra.com/gdc2005/features/20050309/postcard-diamante.htm.

INFRASTRUCTURE TO SUPPORT THE NEEDED MS&A CAPABILITIES

The preceding section discussed capabilities needed for DoD’s MS&A in order to address the challenges of Chapter 2 and some promising technical directions to pursue. In practice, DoD’s assessment of new MS&A capabilities will depend heavily on establishing a substantial forward-looking infrastructure. Laying the right infrastructure could have extraordinary benefits over the long run; failure to do so could greatly impede progress and efficiency.

To build the capabilities described so far in this chapter, the committee regards the following issues as the most important with respect to infrastructure:

-

Composability of M&S. The ability to improve efficiency and coherence by constructing higher-level models from lower-level components.

-

Data collection and data farms. The ability to draw quickly on existing databases, whether of input assumptions or previously generated output.

-

Visualization. The ability to visually interpret high-dimensional data.

-

Chains of tools and computational platforms. The ability to use linked chains of tools and platforms.

-

Service-oriented architectures. The ability to develop and use modularized functionality that is available on the network as a service.

-

A definitive repository. The existence of a central virtual repository and clearinghouse for pointers and advice.

-

Cooperation with other entities. The ability to communicate across organizational and cultural boundaries unhindered by stovepiping or bureaucratic or technical obstacles.

This section explores these needs. Chapter 5 explores another important area of infrastructure, the educational background of the MS&A practitioners.

Composability

A recent technical review of model-composability issues (Davis and Anderson, 2004) discussed DoD model composability in some depth. The committee does not attempt to replicate the advice contained in that review except to highlight some of its main points and add commentary and recommendations. Appendix C provides more detail, including citations to the recent literature.

Composability is the capability to select and assemble components in various combinations to satisfy specific user requirements meaningfully. In M&S, the components in question are themselves models and simulations. Composability implies the ability to assemble components readily in various ways for different purposes. It goes further than interoperability, which may be achieved only for a particular configuration, perhaps in an awkward one-time lash-up. To put it differently, composability is associated with modular building blocks.
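The distinction between composability and a one-time lash-up can be illustrated with components that declare which state variables they read and write, so that any proposed assembly can be checked before it is run. This Python sketch is a minimal illustration under that assumption; the `Component` type, the `compose` function, and the three toy components are hypothetical, and they capture only syntactic agreement on variable names, not the deeper semantic consistency that real composability requires.

```python
from dataclasses import dataclass
from typing import Callable, Dict, FrozenSet, List

State = Dict[str, float]


@dataclass(frozen=True)
class Component:
    """A reusable building block that declares what it reads and writes."""
    name: str
    needs: FrozenSet[str]
    provides: FrozenSet[str]
    step: Callable[[State], State]


def compose(parts: List[Component], initial_vars: FrozenSet[str]) -> Callable[[State], State]:
    """Check that each part's inputs are available, then chain the parts."""
    available = set(initial_vars)
    for part in parts:
        missing = part.needs - available
        if missing:
            raise ValueError(f"{part.name} needs {sorted(missing)}, not provided upstream")
        available |= part.provides

    def run(state: State) -> State:
        for part in parts:
            state = part.step(state)
        return state

    return run


# Hypothetical components; a real library would carry far richer semantics.
move = Component("move", frozenset({"distance", "speed"}), frozenset({"distance"}),
                 lambda s: {**s, "distance": max(0.0, s["distance"] - s["speed"])})
detect = Component("detect", frozenset({"distance", "sensor_range"}), frozenset({"detected"}),
                   lambda s: {**s, "detected": 1.0 if s["distance"] <= s["sensor_range"] else 0.0})
engage = Component("engage", frozenset({"detected"}), frozenset({"engaged"}),
                   lambda s: {**s, "engaged": s["detected"]})

start = {"distance": 10.0, "speed": 4.0, "sensor_range": 6.0}
print(compose([move, detect, engage], frozenset(start))(start)["engaged"])  # 1.0
print(compose([detect, move], frozenset(start))(start)["detected"])         # 0.0
```

The same parts yield different composites in different orders, and an assembly whose inputs are not produced upstream is rejected rather than silently run.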

DoD’s experience with composability has been disappointing, despite the considerable priority accorded it and the promise it showed. As discussed in Davis and Anderson, four factors affect model composability:

-

Complexity of the system being modeled.

-

Difficulty in defining when composite M&S will be used.

-

Strength of the underlying science and technology.

-

Human considerations, such as the quality of management, the existence of a community of interest, and the skill and knowledge of the workforce.

Davis and Anderson’s review recommended a number of priorities and actions, which are summarized tersely in Table 3.3.

In addition to the priorities listed in the table, the committee offers the following general guidance on composability:

-

To obtain the highest degree of composability, it must be engineered in. In general, DoD should treat composability as a matter of degree, measured as a function of the time and effort necessary and the flexibility obtained.

-

Distinguishing clearly among (1) conceptual models, (2) implemented models, (3) simulators, and (4) experimental frames can substantially improve the quality of MS&A. There may be alternative ways to implement each of these, but without a clear distinction among them, it is hard to make sound judgments.

-

DoD should continue to support the development of potentially standard ontologies and ontology languages, such as the Web Ontology Language (OWL), under development by the World Wide Web Consortium. These address key semantic and pragmatic issues important to the advancement of composability.

-

Poorly documented legacy code will continue to be a challenge for composability into the indefinite future. DoD should invest in a selective program of retro-documentation.

-

In a few high-leverage cases, DoD should reprogram legacy models that appear to be valuable but that are technologically obsolete or limited.
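The four-way distinction among conceptual model, implemented model, simulator, and experimental frame can be kept visible in code by giving each its own place. The Python sketch below does so for a deliberately trivial detection model; it follows the spirit of the modeling-and-simulation framework associated with Zeigler and colleagues, but the class and function names are hypothetical and it is not an implementation of any particular standard.

```python
import random
from dataclasses import dataclass
from typing import Callable, List

# (1) Conceptual model, stated in prose: each target passing a sensor is
#     detected independently with probability p_detect.


@dataclass
class DetectionModel:
    """(2) Implemented model: the state-transition rule only."""
    p_detect: float

    def detect(self, rng: random.Random) -> bool:
        return rng.random() < self.p_detect


def simulate(model: DetectionModel, n_targets: int, seed: int) -> List[bool]:
    """(3) Simulator: executes the model; it contains no model logic itself."""
    rng = random.Random(seed)
    return [model.detect(rng) for _ in range(n_targets)]


Simulator = Callable[[DetectionModel, int, int], List[bool]]


@dataclass
class ExperimentalFrame:
    """(4) Experimental frame: the inputs applied and outputs observed for one question."""
    n_targets: int
    seeds: List[int]

    def detection_rate(self, model: DetectionModel, simulator: Simulator) -> float:
        rates = [sum(simulator(model, self.n_targets, s)) / self.n_targets for s in self.seeds]
        return sum(rates) / len(rates)


frame = ExperimentalFrame(n_targets=1000, seeds=[1, 2, 3])
print(round(frame.detection_rate(DetectionModel(p_detect=0.7), simulate), 2))
```

Because the simulator and the frame are separate, the same model can be run under a different frame (say, a different number of targets) without touching its logic, and a judgment about one element need not be confused with a judgment about another.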

TABLE 3.3 Recommended Priorities for Improving Model Composability

| Category | Component | Specific Priority Items |
|---|---|---|
| Science and technology | Military science for selected military domains | Capabilities-based planning, effects-based operations, network-centric operations |
| | Science and technology of M&S | Model abstraction (including multiresolution modeling and model families) |
| | | Validation |
| | | Heterogeneous M&S |
| | | Communication: documentation and new methods of transferring models |
| | | Exploration mechanisms |
| | | Intimate man-machine interactions |
| | Standards | Revisit standards, as in the pre-HLA days, but at the same time hurry to realign DoD’s direction with that of the commercial marketplace |
| | Model representation, specification, and documentation | Exploit commercial developments, especially for high-level specification |
| Understanding | | Develop methods to predict difficulty and cost of proposed composability projects |
| | | Commission independent lessons-learned study on experiences from JSIMS, JWARS, and OneSAF |
| Management | | Define requirements and methods for developing first-rate M&S managers |
| Workforce | | Stimulate systematic education, selection, and training of M&S workforce, in cooperation with other agencies, academia, and industry |
| General environment for DoD M&S | | Improve incentives and mechanisms to improve industrial and other bases |
| | | Encourage marketplace of ideas and assure even playing field for competitions |
| | | As part of this, insist on transparency and exchange, reducing the scope of proprietary restrictions |

SOURCE: Adapted from Davis and Anderson (2004).

Improved Data Collection for MS&A

Models of complex systems usually require large amounts of data, both as inputs for determining parameters and for validation. Collecting those data can become a technological challenge. DoD needs to automate, or semiautomate, the collection of data for building and validating new models and simulation systems. Key tools for this more automated approach will be improved data-mining and text-mining techniques. Data-mining and text-mining tools are becoming increasingly important parts of the modern simulationist’s tool kit. Such tools pave the way for more automated collection of the large, time-sensitive data sets that simulation needs now, and will need even more in the future, particularly in the DIME/PMESII areas. To date, however, data-mining and text-mining tools are limited in the following ways:

-

Lack of automated or semiautomated ontology creators to facilitate data sharing and analysis in new areas.

-

Limited ability to handle nontext data such as photographs.

-

Limited ability to extract data from various formats such as PDF and PowerPoint.

-

Lack of good entity extraction algorithms that automatically correct for typos, spelling errors, aliases, and the like.

-

Absence of tools that can work with streaming data.
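The typo and alias problem can be illustrated with a small normalization step applied after names have been extracted from text. The Python sketch below uses an alias table plus the string-similarity matcher in the standard library's `difflib` module; the organization names are hypothetical, the 0.8 cutoff is an arbitrary assumption, and this approach addresses only surface-level variation, not the harder problem of extracting entities from raw text in the first place.

```python
import difflib
from typing import Dict, Optional


def build_lookup(aliases: Dict[str, str]) -> Dict[str, str]:
    """Map normalized surface forms (aliases and canonical names) to canonical names."""
    lookup: Dict[str, str] = {}
    for alias, canonical in aliases.items():
        lookup[" ".join(alias.lower().split())] = canonical
        lookup[" ".join(canonical.lower().split())] = canonical
    return lookup


def resolve(mention: str, lookup: Dict[str, str], cutoff: float = 0.8) -> Optional[str]:
    """Return the canonical entity for a mention, tolerating case, spacing,
    known aliases, and small spelling errors; None if nothing is close enough."""
    key = " ".join(mention.lower().split())
    if key in lookup:
        return lookup[key]
    match = difflib.get_close_matches(key, list(lookup), n=1, cutoff=cutoff)
    return lookup[match[0]] if match else None


# Hypothetical organizations and aliases, for illustration only.
lookup = build_lookup({"EFU": "Eastern Farmers Union",
                       "Coastal Guard Militia": "Coastal Militia"})
print(resolve("efu", lookup))                     # Eastern Farmers Union
print(resolve("Eastern  Farmrs Union", lookup))   # Eastern Farmers Union
```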