Panel IV

Evolution of Technology Partnerships in the United States

Moderator:

Lewis S. Edelheit

General Electric, retired

Dr. Edelheit, GE’s chief technology officer during the 1990s, opened the session with some observations from what he described as an industry perspective. “This symposium,” he said, “is about competitiveness: Some countries are trying to figure out how to get it, others how to keep it, and still others how to get it back. And it’s all about learning how to move fast and win in a brutally competitive economy such as we’ve never seen.”

About a quarter-century before, he recalled, industrial laboratories in the United States had become “very uncompetitive.” No longer relevant to their businesses, they were in a position of having to change, and change a great deal, or else die; meanwhile, the new companies that arose were not forming research labs. The deciding factor was not so much the issue of applied versus basic research, or even the issue of short-term versus long-term research, but the fact that the old model just was not producing results. “Funding a basic researcher in a lab someplace to do something, then hoping that you could get it into production,” he said, “stopped working. It was too slow.”

Many industrial research labs did die, but others changed, and very quickly. Of the numerous ways in which they changed, Dr. Edelheit named two. One was to start partnering with their own businesses much more closely, as the only way they could acquire speed was to move much closer to the marketplace. The second was to start partnering with other companies, with other industries, with government, and with other countries, since even a company as big as GE was unable to move rapidly enough on its own.

UNITED STATES USING OUTDATED RESEARCH MODELS

But, in many cases, the old models remain in use in the United States (within the government, industry, universities, and the National Laboratories), and they are not moving fast enough. Other countries are wrestling with how to speed up the innovation process, especially in such areas as energy, job creation, health care, and the environment, where national needs are not being met with sufficient speed, in part because the models are too slow.

It was to consider these questions that the symposium was convened, Dr. Edelheit said, and the current panel would offer three speakers with interesting perspectives on the issue of partnerships, or the lack thereof, among government, industry, and universities in the United States. The first, Ken Flamm, would talk about the case of supercomputers, a technology that clearly was critically important for a great number of industries.

U.S. POLICY FOR A KEY SECTOR: THE CASE OF SUPERCOMPUTERS

Kenneth Flamm

University of Texas at Austin

Dr. Flamm, thanking Dr. Wessner for the invitation to speak, said he would use his time to “tell a story” about the supercomputer industry. Some of the material he would present has already been published in an earlier version, having formed the basis for recommendations of a 2004 report on the future of supercomputing by a National Academies panel on which he served.14

He would begin with the field’s early history, he said, in order to make sure that his listeners understood what is meant by the term “supercomputer” and where the supercomputer came from. The machines built to decrypt code traffic during World War II were the direct precursors of the modern electronic digital computer, and the famous ENIAC machine, built for other purposes, appeared shortly thereafter.

In the Beginning, All Computers Were “Super”

At the computer industry’s very beginning in the 1940s and 1950s, all computers were essentially supercomputers, every new model being the “biggest, baddest, greatest computer ever built,” and a national-security application, whether bomb design or cryptography, was behind the funding of most computer R&D. It was in the late 1950s that a commercial computer market began to develop, and the term “supercomputer” came into use in the early 1960s. It was probably first applied to the IBM 7030 Stretch, a special-purpose machine designed for two powerful government-mission customers, the National Security Agency and the Department of Energy. The Control Data 6600 was the other model referred to by that label, but in reality predecessors of both had merited it.

While all computers were supercomputers originally, as the commercial market began to develop and differentiate in the early 1960s, the gap separating the capability of the most powerful computer being produced and sold from that of the least powerful approached an order of magnitude. That gap grew to between three and four orders of magnitude by the end of the decade and, by the early 1970s, came to exceed the upper end of that range.

Control Data and Cray Constitute the Industry

From about the mid-1960s to the late 1970s, the entire supercomputer industry basically resided in two U.S. firms, Control Data and Cray, the latter company being a spin-off staffed by ex-employees of the former company and of its predecessor, Engineering Research Associates. Very high performance supercomputers at that time offered very good price performance; doing an excellent job of providing raw computing capability, they were highly competitive with less powerful machines in cost/computing capacity. As a result, they were used by commercial customers after having been pioneered for government users. But in those days all computers were, in fact, “custom” products: For each specific machine, the manufacturer typically designed both a special-purpose processor and a proprietary interconnect system linking that processor to the other components.

It was not until about the mid-1970s that Japanese firms entered the computer market, initially producing IBM-compatibles, then designs of their own, and ultimately machines that were quite competitive with those of Control Data and Cray. Around the same time came the very first wave of technological challenge from microprocessors, which made very small increments of computing power available to the end user in individual personal machines. Cray machines nonetheless remained quite cost-competitive into the 1980s.

Japan’s Industrial Policy Creates Challenge to the United States

But what happened in that decade, a development that brought Dr. Flamm to the day’s theme of innovation policy, was that Japanese technology advanced in a number of areas, including both the semiconductor and computer industries. Japan initiated the Fifth-Generation Computer Project and the Superspeed Computer Project, the latter being at least as important as the former even if less publicized.

For the very first time, Japanese producers were making significant inroads into the high-performance mainframe computer market.

This occasioned some alarm in the United States, particularly within the military and other government agencies that had originally funded computer technology. Given that superiority in information technology was seen as essential to the U.S. goal of having a qualitative technological edge in defense systems, it was of some concern that producers elsewhere were coming on stream with products that were competitive in demanding, high-performance applications.

One reaction was the Defense Advanced Research Projects Agency’s (DARPA) launch in the 1980s of its Strategic Computing Initiative. The move was in part an attempt at responding to the technological challenges of the time, including competitive challenges from foreign companies producing systems that threatened to narrow the gap between U.S. information technology and IT available on the open market overseas.

Emergence of the Commodity-Based Supercomputer

Although DARPA program managers originally focused on custom components and on ways to use parallelism as an alternative design methodology to create new computer architectures, they gradually switched their emphasis to so-called commodity processors over the course of the initiative. The reasoning behind this change, which coincided with the arrival of the microprocessor on the industrial scene, was quite simple: Supercomputers were produced in relatively small quantities. Designing a custom processor to tweak the maximum possible performance, and producing it in relatively small scale, would result in a machine with an enormous price tag because all that R&D would be expensed over a relatively small number of units. But what if the new, microtype commodity processors that were being marketed in the millions, and whose costs were very much lower than those of custom processors, could somehow be harnessed? Even if they were less efficient at doing some of the calculations as individual processors, it might be possible to lash them together into a system and to figure out how to split up problems so that they could be handled by a large ensemble of cheap microprocessors networked together. That would prove a less costly way of getting the problem done, and, if scalable, could be used to solve any problem that was properly partitioned.
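The cost reasoning in this argument can be illustrated with a small calculation. The sketch below is illustrative only: the function names and every dollar figure, unit volume, and speed ratio are hypothetical values chosen for the example, not data from Dr. Flamm's presentation, and the calculation ignores the overhead of splitting a problem across many processors.

```python
def unit_cost(rd_cost, units_sold, marginal_cost):
    """Cost per processor when fixed R&D is spread over every unit sold."""
    return rd_cost / units_sold + marginal_cost


def cost_per_unit_of_work(rd_cost, units_sold, marginal_cost, relative_speed):
    """Cost of one unit of computing power, ignoring parallel overhead."""
    return unit_cost(rd_cost, units_sold, marginal_cost) / relative_speed


# Hypothetical custom processor: large R&D bill, tiny production run, fast chip.
custom = cost_per_unit_of_work(
    rd_cost=100e6, units_sold=100, marginal_cost=1e6, relative_speed=10.0
)

# Hypothetical commodity microprocessor: same R&D bill, sold in the millions,
# assumed to run at one-tenth the speed of the custom part.
commodity = cost_per_unit_of_work(
    rd_cost=100e6, units_sold=1_000_000, marginal_cost=200.0, relative_speed=1.0
)

print(f"custom:    ${custom:,.0f} per unit of work")
print(f"commodity: ${commodity:,.0f} per unit of work")
```

Under these assumed figures the commodity part delivers a unit of computing far more cheaply than the custom part even though each chip is much slower, provided the problem can be partitioned across many processors.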

As a result, there emerged a whole new methodology for competing in supercomputers. Instead of focusing on the very high-performance individual processor, which was going to be enormously expensive to produce, a generic technology would be developed involving massively parallel systems that could run on relatively cheap components. Dr. Flamm characterized the strategy as a “kind of industrial jiu-jitsu”: Rather than meeting directly the threat from “very, very well-done” high-performance processors coming out of Japan, the United States would “shift the terms of the battlefield.” Many ultimately dismissed as a waste of money the $1 billion spent by the Strategic Computing Initiative between 1983 and 1993. Although numerous new firms appeared on the computing scene, many of them, even some that became major players such as Thinking Machines, ended up going out of business.

The other response to the Japanese challenge of the 1980s took the form of trade-policy initiatives, one of those being an attempt to open up Japan’s market through forcing procurement by its government of U.S. supercomputers. In addition, dumping cases were filed in the United States in the mid- to late-1990s. Dr. Flamm pointed out that, between 1986 and 1992, the three major Japanese producers of noncommodity or “vector computers”—NEC, Hitachi, and Fujitsu—whittled the U.S. share of the market from nearly 80 percent to under 60 percent. “This threat was a very real threat,” he remarked.

Federal Investment Transforms the Industry

Having set out the background, Dr. Flamm next discussed how investment by the United States in experimenting with a new set of technologies has ended up altering the competitive dynamics, or “industrial ecology,” of the supercomputer business. For it has indeed done so, and in some very significant ways that he did not believe to be widely appreciated, particularly in Washington. As a measure of industry dynamics he would use what was referred to as “Top 500” data, which described the 500 fastest computers in the world based on the LINPACK performance benchmark.15 Not all computers were tested this way, and, he acknowledged, legitimate questions exist about whether LINPACK really is the best possible measurement of computer performance. Still, the numbers he would use reflected trends in, and broader measures of, the industry, and they were at once very easy to use and very detailed.

Arriving at the present, Dr. Flamm noted that it was with the inauguration of Japan’s Earth Simulator, referenced in Dr. Kahaner’s presentation, that the United States fell out of the world leadership in computing that it had held since about 1950. The United States had assumed the lead at that time from the United Kingdom, which had produced the very first electronic digital computers during World War II and continued in the No. 1 position until it “basically blew it” in the late 1940s. And the Earth Simulator had come on line not only as fastest in the world, but as faster by over a factor of two or three than its closest U.S. competitor. In 2002 many people compared the challenge this event presented to the United States, both technologically and in other respects, to the launch of Sputnik.

15 According to Wikipedia, the LINPACK Benchmark measures how fast a computer solves dense n-by-n systems of linear equations Ax = b, a common task in engineering. The solution is based on Gaussian elimination with partial pivoting, with 2/3·n³ + n² floating point operations. The result is reported in millions of floating point operations per second (Mflop/s). Accessed at <http://en.wikipedia.org/wiki/LINPACK>.
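To make the footnote's description concrete, the following sketch solves a dense system Ax = b by Gaussian elimination with partial pivoting and converts a run time into Mflop/s using the operation count quoted in the footnote. It is a minimal pure-Python illustration, not the LINPACK benchmark code itself; the names `solve_dense`, `nominal_flops`, and `mflops`, the problem size, and the random test matrix are all assumptions made for the example.

```python
import random
import time


def solve_dense(a, b):
    """Solve Ax = b by Gaussian elimination with partial pivoting."""
    n = len(a)
    # Work on an augmented copy so the caller's data is left untouched.
    m = [row[:] + [b[i]] for i, row in enumerate(a)]
    for col in range(n):
        # Partial pivoting: use the row with the largest entry in this column.
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        if m[pivot][col] == 0.0:
            raise ValueError("matrix is singular")
        m[col], m[pivot] = m[pivot], m[col]
        # Eliminate the entries below the pivot.
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    # Back substitution on the resulting upper-triangular system.
    x = [0.0] * n
    for i in range(n - 1, -1, -1):
        s = sum(m[i][j] * x[j] for j in range(i + 1, n))
        x[i] = (m[i][n] - s) / m[i][i]
    return x


def nominal_flops(n):
    """Operation count as quoted in the footnote: 2/3*n^3 + n^2."""
    return (2.0 / 3.0) * n**3 + n**2


def mflops(n, seconds):
    """Millions of floating point operations per second for one solve."""
    return nominal_flops(n) / seconds / 1e6


# Time one solve of a randomly generated system (size chosen arbitrarily).
random.seed(0)
n = 60
a = [[random.random() for _ in range(n)] for _ in range(n)]
b = [random.random() for _ in range(n)]
start = time.perf_counter()
solve_dense(a, b)
elapsed = time.perf_counter() - start
print(f"n={n}: {mflops(n, elapsed):.2f} Mflop/s")
```

The rate printed depends entirely on the machine and interpreter; an interpreted loop like this one will report figures far below what optimized numerical libraries achieve on the same hardware.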

Growth of Industry Purchases Marks a Shift

Dr. Flamm then described a “major shift in the way the market works” that had taken place between June 1995 and December 2004. Historically, around two-thirds of supercomputers sold in the United States went to government, research users, or academic institutions. But, in slightly less than a decade, industry’s share rose to the point that it was buying more than half of the most powerful machines on the market.

Meanwhile, there were no signs of a letup in the pace of computer performance improvement. Improvement was more or less continuous, with leading-edge outputs. In fact, the Earth Simulator, after reigning as the world’s fastest machine for two-and-a-half years, has been displaced by an American machine, the Blue Gene/L.

Dr. Flamm refuted the contention that there is a widening gap between the low and high ends of the Top 500, with the government continuing to acquire the truly fast machines and industry purchasing “shlock.” The dispersion between the two ends, he said, is relatively constant with the exception of a big jump caused by the Earth Simulator, “an exceptional machine.” Evidence does exist of a diminished industrial presence at the very top of the list, however: There has not been an industry-owned machine among the fastest 20 since about 2001.

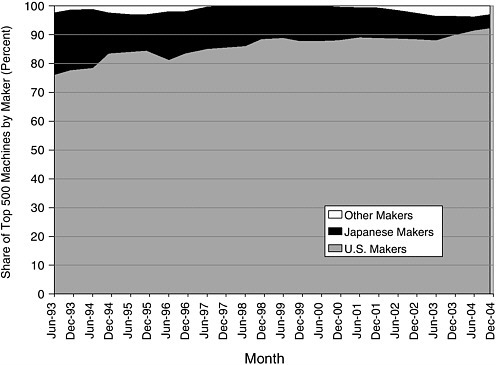

United States Ascends, Japan Declines

Despite other trends over this period, the United States has enjoyed a “huge success story” in supercomputing. U.S. industry has emerged very strong, its share of Top 500 machines sold marching steadily upward while Japan’s share is shrinking (Figure 27). Pointing to the top right-hand corner of his graph, Dr. Flamm specified that the small percentage of non-Japanese, non-U.S. machines indicated there represent products not from Europe but from China and India, the “new industrial powerhouses.”

He added that the picture differs little if looked at from the point of view of total computing capability, which is in fact a better proxy for value. Furthermore, U.S. market share has been increasing not only worldwide but in each individual region of the globe, whether measured by number of machines sold or by total computing capability. Finally, U.S. manufacturers’ share of the 20 fastest machines, after eroding during the mid-1990s, has emerged from the 1993-2004 period only slightly below where it had been at the start. The percentage of the Top 20 machines installed in the United States has followed a similar trajectory.

National Trade, Investment Policies Make an Impact

Evidence exists that national trade and industrial policies have had an effect on market behavior. In 2005 U.S. manufacturers of Top 500 machines controlled 100 percent of their home market, into which only a handful of Japanese machines in the category had been sold since 1998, and none at all since 2000. That contrasts with the situation prior to 1998, indicating that the dumping cases brought against Japanese manufacturers had made an impact. And while the U.S. share of Japan’s market had dipped suddenly after the filing of the dumping cases, it popped back up again once they were settled. “It’s hard not to think there’s some causal connection there,” Dr. Flamm observed.

FIGURE 27 U.S. makers stronger than ever: Share of Top 500 machines (numbers) by maker.

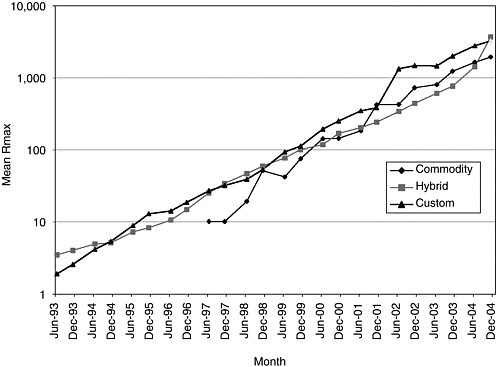

Dr. Flamm next took up the thesis that the government-industry partnership formed to develop alternative methodologies for designing and building supercomputers has been quite a success and has transformed the nature of the supercomputer market over the previous decade. He noted that this is also the thesis of the National Academies report on which he has collaborated with a number of others, including computer scientists familiar with the industry.16 He divided the architectures used in making supercomputers into three categories: “custom,” applied to traditional machines with full-custom processors and full-custom interconnects between those processors; “commodity,” denoting those

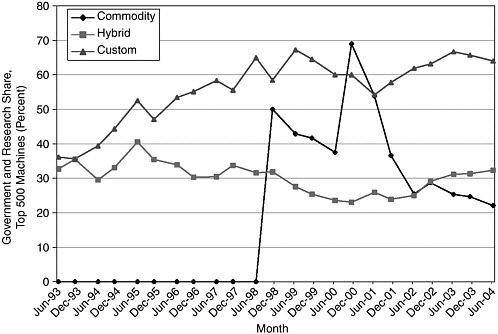

FIGURE 28 System performance by type: Mean Rmax by system type.

made of microprocessors and interconnects available for purchase on the open market from third parties; and “hybrid,” used for machines that generally had commodity processors but custom interconnects. “The very last segment of the computer industry to be transformed by the microprocessor and the PC has indeed been transformed,” he declared, calling this “an extraordinary story.”

Commodity Supercomputers Taking Over

The system performance of the custom and hybrid machines has improved almost in parallel since 1993, with that of commodity architecture tracing a similar slope since its advent in 1997, and there are no sign of any category’s slowing down (Figure 28). Commodity machines’ representation among the Top 500 has skyrocketed; however, from a standing start in December 1998, the commodity sector achieved an 80-percent share of all supercomputers sold by the end of 2004. In this rise, the commodity machines have essentially displaced the hybrids, which between 1993 and 1998 were displacing the full-custom machines. The change is nearly identical when tracked on the basis of capacity

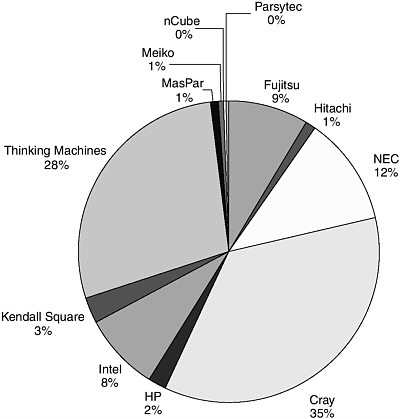

FIGURE 29 Restructuring of product has restructured industry: Top 500 market share (Rmax) by company, June 1993.

rather than units, and differs only slightly when consideration was narrowed to the Top 20 machines.

Transforming a Market Restructures the Industry

This transformation of the supercomputer market has completely restructured the computer industry as a whole. According to a graph illustrating the composition of the market for the Top 500 by computing capacity (Figure 29), the two leaders as of June 1993 were Cray, with 35 percent of the market, and Thinking Machines, another U.S. company, with 28 percent. Japan’s NEC, Hitachi, and Fujitsu combined for a 22-percent share, as compared to the more than 40 percent of the vector-supercomputer market by machines installed that they enjoyed in 1992.

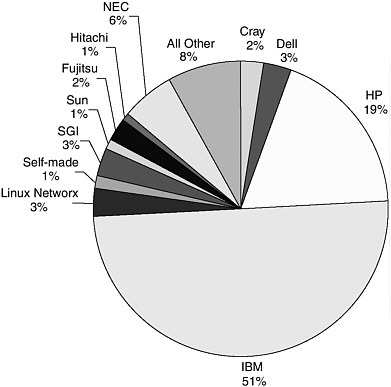

FIGURE 30 A whole new playing field: Top 500 market share (Rmax) by company, June 2004.

By June 2004, the Top 500 market was radically altered (Figure 30). IBM, out of the previous picture entirely, was the leader with more than half of all capacity sold; H-P had climbed into the No. 2 spot, its 19-percent share almost an order of magnitude higher than the 2 percent it had held 11 years earlier. While NEC was the third-largest player at 6 percent, the total share of the Japanese manufacturers was only 9 percent. Cray’s share of the market had plunged to 2 percent.

Government-Only Demand for Traditional Technology

Traditional custom supercomputers, still required by government users for some applications, are showing signs of becoming “a government-only island,” as illustrated by a graph which indicates that around 60 percent of custom machines consistently go to purchasers in the government and research sectors (Figure 31). From the perspective of computing capacity, the figures are even more one-sided: Since 2001, 85 percent of the market for custom systems has been accounted for by government and research users. “A strategy started by the military has become so dramatically successful that some government users (a minority, fortunately) are essentially cut off from this whole new development in computing technology,” observed Dr. Flamm, likening the current market for custom supercomputers to those for submarines and aircraft carriers.

FIGURE 31 Custom supercomputer market becoming a government-only niche: Government and research share, Top 500 machines.

NOTE: Balance is industrial, vendor, academic.

He then presented a number of conclusions:

- The conventional wisdom holding that the government role in computers is much diminished has never been true at the high end and is still not true. Critical government applications have motivated policy in that area.

- The policies implemented in the 1990s in response to the challenge that U.S. government users faced in the previous decade have proved to be a huge success, even though the ultimate game plan has not matched the original. The technological foundations residing in “those dead companies that littered the landscapes” have fueled new ideas and new methods that have led to industrial outcomes that are highly favorable to the United States.

- With considerable evidence available that the custom-supercomputer market is increasingly a government-only niche, the government might be forced to view it the way it views weapons systems and to pay for the full load if its need for the product persists.

- Spillovers from supercomputers seem to have slowed, as has supercomputer R&D, and “spin-on” has become as important as spin-off. This, again, is one of the messages of the National Academies report to which Dr. Flamm has contributed.

- It is hard to see how trade barriers have helped either U.S. supercomputer producers or users.

- The government’s challenge is to maintain whatever capabilities it needs that are outside the commercial mainstream while leveraging developments in the industry it has so successfully transformed in such a way as to make cost-effective solutions linked to the mainstream accessible to government users.

In closing, Dr. Flamm held up the history he had recounted as “an example of a government-industry partnership in technology development that has yielded unforeseen but impressive results as an industrial outcome for the United States.”

Dr. Edelheit, thanking Dr. Flamm and moving on to the next presentation, stated that best practice in government-industry-university collaboration was to be found not abroad but at the U.S. National Institute of Standards and Technology in the form of the Advanced Technology Program (ATP), whose director, Marc Stanley, would follow.

CROSSING THE VALLEY OF DEATH: THE ROLE AND IMPACT OF THE ADVANCED TECHNOLOGY PROGRAM

Marc G. Stanley

National Institute of Standards and Technology

Mr. Stanley, thanking Dr. Edelheit and expressing his pleasure at attending the symposium, remarked that, speaking later in the day, he benefited from having heard the earlier discussion of how countries might profit from public-private partnerships. The observations he would offer, he said, would not necessarily be limited to the ATP.

Conceived by two congressional staff members, one in each house, ATP has as its mission “to accelerate the development of innovative technologies for broad national benefit through partnerships with the private sector.” Mr. Stanley described societal benefit as “a very interesting criterion” for a public-private partnership to use when considering investments in high-risk technology. The key to the process was getting industry to lead it, he said. His answer to one of the questions most frequently asked about ATP, whether government was actually capable of making this kind of investment, was that more than a dozen years of observing the program had taught him that industry knows best where particular market gaps might be.

U.S. Market’s Total Autonomy a “Myth”

While acknowledging that the U.S. market is very open and lacks the regulatory constraints often seen overseas, and that these exigencies both provide strength and act as a motivator, Mr. Stanley likened to a “myth” the belief held both at home and abroad that, in the United States, markets act entirely on their own. Impediments to early-stage, high-risk investment remain a cause for concern in the United States; at the same time, the government’s role in technology development is underappreciated. He would therefore devote some of his time to the question of whether there is a role for government to play in innovation.

To set the stage for this discussion, Mr. Stanley referred to a plaint against Roman rule from Monty Python’s Life of Brian that, for The Economist (May 1, 2004), had evoked criticisms heard from Americans of their government’s role in ushering in major technological innovations: “But what, apart from the roads, the sewers, the medicine, the Forum, the theater, education, public order, irrigation, the fresh-water system and public baths … what have the Romans done for us? (And the wine, don’t forget the wine …).” Placing this debate in a U.S. context, he recalled that Alexander Hamilton had created the nation’s first public-private partnership, a planned industrial center in New Jersey called the Society for Establishing Useful Manufactures (SEUM), as a tool for competing with Great Britain.

Partnerships a Hallowed U.S. Tradition

The history of U.S. partnerships provides ample evidence of government involvement apart from that just supplied by Dr. Flamm in his discussion of the computer industry. To cite a few examples:

- 1798: Congress provides a grant for production of muskets with interchangeable parts to Eli Whitney, who founds first machine-tool industry

- 1842: Samuel Morse receives award to demonstrate feasibility of telegraph

- 1919: RCA founded on initiative of U.S. Navy with commercial and military rationale (patent pooling, antitrust waiver, equity contributions)

- 1969-1990s: Government investments develop the forerunners of the Internet

- Present: The government is currently making major investments in genomic/biomedical research

Mr. Stanley asked the audience to focus on two facts: 1) that deep capital markets exist in the United States, but 2) that some underinvestment in precompetitive technologies nonetheless remains. He would attempt to reconcile these facts and, in the process, demonstrate that, under the proper circumstances, public-private partnerships can play a key role in helping countries anywhere in the world to compete.

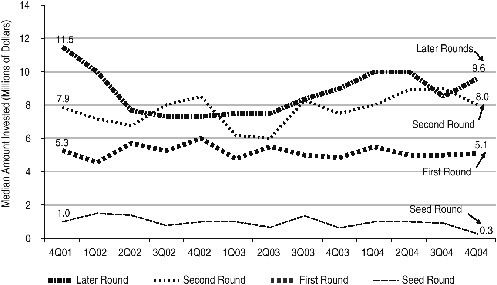

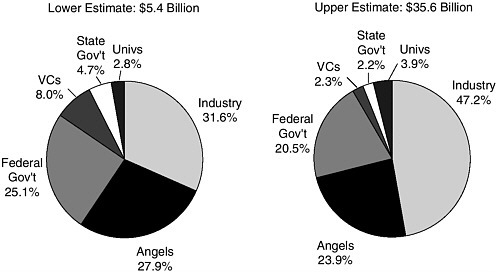

Private Investors Neglect Early Stage

To begin, he raised a question: If the United States has large and well-developed early-stage capital markets (indeed, the world’s best) thanks to broad angel markets and deep venture markets, what is the issue? In answer, he noted that of about $20 billion in rounds of venture-capital investment in 2004, only $105 million represented seed rounds, a proportion that has held steady in recent years. Another graph (Figure 32) showed that the median deal size for early-stage seed-round investments had fallen to $300,000 by the end of 2004 from $1 million at the beginning of 2001. Much more of the private equity community’s money, therefore, is going into later-round investments, which typically fund such later-stage business activities as product development and marketing. In addition, the distribution of venture capital tends to concentrate in a limited number of geographical regions.

Mr. Stanley listed several reasons for what he contended was underinvestment in precompetitive technologies in the United States:

- Markets, although powerful, are imperfect.

- New ideas lack constituencies.

- Venture capitalists tend to invest later in the cycle.

- Firms can’t capture the entire value of some investments when acting alone.

ATP’s Role More Extensive Than Recognized

A review of the funding of early-stage, high-risk research conducted by Lewis Branscomb and Philip Auerswald of Harvard’s Kennedy School under contract to ATP has produced estimates placing investments by venture-capital firms, state governments, and universities at only 8 to 16 percent of the total funding applied to early-stage technology development. The federal government, through ATP and the Small Business Innovation Research (SBIR) program, is estimated to account for between 21 and 25 percent of these moneys (Figure 33). The study’s conclusion is that ATP plays a greater role in financing the development of precompetitive technology than is widely appreciated.

Outside the United States, as suggested both by some of the day’s earlier speakers and by Mr. Stanley’s own travels in member countries of the Organisation for Economic Co-operation and Development (OECD), there has been a “clarion call” for enlisting government involvement as one tool for building a dominant position in certain areas of technology. To illustrate, Mr. Stanley displayed a comment by Elizabeth Downing, an official of the ATP award winner 3D Technology Laboratories: “Why should the government fund the development of enabling technologies? Because enabling technologies have the potential to bring enormous benefits to society as a whole, yet private investors will not adequately support the development of these technologies because profits are too uncertain or too distant.”

FIGURE 32 Early-stage deal size declines: Median amount invested by round class (millions of dollars).

SOURCE: Adapted from Dow Jones Venture One/Ernst & Young.

In a similar vein, the noted venture capitalist David Morgenthaler has remarked that “It does seem that early-stage help by the government in developing platform technologies and financing scientific discoveries is directed exactly at the areas where institutional venture capitalists cannot and will not go.” And Jeffrey Schloss, when speaking on behalf of Dr. Francis Collins of the National Human Genome Research Institute of the National Institutes of Health, has said: “The Advanced Technology Program can stimulate certain sectors like biotechnology where the risk is such that the private-sector investment is ineffective or nonexistent. Because of its synergies across a broad range of technologies, ATP has advanced the research being done in DNA diagnostics tools.”

FIGURE 33 Early-stage technology development: Estimated distribution of funding sources for early-stage technology development, based on restrictive and inclusive criteria.

NOTE: The proportional distribution across the main funding sources for early-stage technology development is similar regardless of the use of restrictive or inclusive definitional criteria.

SOURCE: Lewis M. Branscomb and Philip E. Auerswald, Between Invention and Innovation: An Analysis of Funding for Early-Stage Technology Development, NIST GCR 02–841, Gaithersburg, MD: National Institute of Standards and Technology, November 2002, p. 23.

Market Inefficiencies Make Federal Role “Critical”

On the basis of such statements, as well as other evidence, one might infer that the federal role in dealing with the investment gap affecting early-stage, high-risk technologies is critical, Mr. Stanley remarked. Markets for allocating risk capital to early-stage technology are not efficient, he asserted, due to inadequate information for making investment decisions, the high uncertainty of outcomes, and difficulty in appropriating the benefits of early-stage enabling technologies. As Dr. Branscomb has said in testimony before the U.S. Senate Committee on Commerce, Science, and Transportation, “Entrepreneurs and private equity investors alike consistently state that there exists a financial ‘gap’ facing early-stage technology ventures seeking funding in amounts ranging roughly from $200,000 to $2 million.”

Foreign competitors, meanwhile, have technology-support programs larger than those of the United States, and those programs employ a broad range of measures in such domains as trade, tax, procurement, standards, government equity financing, and regional aids. To illustrate, Mr. Stanley offered a few details from programs discussed earlier in the symposium. Finland’s Tekes runs a program that is similar to ATP but is financed at an annual level of around 387 million euros as compared to ATP’s $140 million. Japan’s ASET program, one of six Japanese semiconductor partnerships under way, received $473 million in the period 1995-2000. In the European Union, JESSI was funded at $3.6 billion for the period 1988-1996; MEDEA+ was receiving 500 million euros annually; and 17.5 billion euros is to be provided under the Framework Program over 5 years. In addition, not only Canada but other nations of the OECD are practicing many forms of public-private partnerships to compete with the United States.

Foreign Competitors’ Concerted Efforts in R&D

Posting a chart that traces over time a number of developed nations’ R&D expenditures as a percentage of GDP, Mr. Stanley remarked that it should not be surprising that the trend has been upward. The data graphed are based on a wide variety of programs, including direct grants, loans, equity investments, and tax deferral; whether the investments represented include both civilian and dual-use technology, or only the former, has not been determined. “Clearly, other countries are increasing their expenditures on R&D and have taken up concerted efforts,” he stated.

Mr. Stanley then turned to ATP’s workings. Unlike SBIR, some of whose funding was agency- or mission-specific, ATP has a collaborative focus and a flexibility under which funding is available to all technologies, enabling industry to address large problems. Cost sharing, which combines private and public funding, and serious commitment to commercialization are requirements. He supplied a list of attributes that he considers “pillars to develop a good public-private partnership,” saying each contributes to success, both within ATP and abroad: These are

- an emphasis on innovation for broad national economic benefit;
- a strong industry leadership in the planning and implementation of projects;
- project selection based on technical and economic merit;
- a demonstrated need for funding;
- a requirement that projects have well-defined goals;
- provisions limiting the funding period;
- a rigorous competition based on peer review;
- encouragement of collaboration among small, medium-sized, and large companies; universities; and clusters and industry parks; and
- evaluations, to be pursued both by presenting results to the granting agency and by establishing a baseline as an aid to proving success.

Measuring ATP’s Success in Societal Benefits

How successful has ATP been? The program was able to show net societal benefits of $17 billion based on the analysis of only 41 of the 736 projects it has funded; the total cost to the federal government over the life of the program was about $2.2 billion. “This is just the beginning,” Mr. Stanley promised. “The rewards are continuing to come in.”

But success is not to be measured exclusively in return on investment. The White House Office of Management and Budget, following a review according to its Performance Assessment Rating Tool (PART) 2 years before, praised ATP’s assessment effort, and a National Academies review had called it “one of the most rigorous and intensive efforts of any U.S. technology program.”17

Evaluation Considered an Integral Component

ATP’s selection process, monitoring, and follow-up on projects are “exceptional,” Mr. Stanley asserted, adding that the program has the ability to identify unsuccessful projects and that those projects are terminated. “You have to terminate companies that are not successfully doing what they say,” he commented. “And then you should be able to speak not only of your successes but of your failures, because there are lessons to be learned from that.”

ATP used a number of methods to measure the activity of its awardees against the program’s mission:

- Inputs: ATP funding; industry cost-share;
- Outputs: R&D partnering; risky, innovative technologies; S&T knowledge;
- Outcomes: acceleration; commercial activity; and
- Impacts: broad national economic benefits.

The program had in the previous year published a book on its measurement tools, and the book had “become a very hot seller overseas.”

To conclude, Mr. Stanley provided examples of the kinds of studies ATP has employed in order, as he put it, “to do the kind of work that we think is essential to maintain fidelity to the taxpayer[s] for the investments that they’ve given us”:

- statistical profiling of applicants, projects, participants, technologies;
- status reports (mini case studies) for all completed projects;
- econometric and statistical studies of innovation and portfolio impacts;
- special-issue studies;
- progress tracking of all projects and participants (business reporting system, other surveys);
- detailed microeconomic case studies of selected projects, programs;
- macroeconomic impact projects from selected microeconomic case studies; and
- development and testing of new assessment models, tools.

The program reports on these extensive studies, he said.

17 According to the Office of Management and Budget, “the PART was developed to assess and improve program performance so that the federal government can achieve better results. A PART review helps identify a program’s strengths and weaknesses to inform funding and management decisions aimed at making the program more effective. The PART therefore looks at all factors that affect and reflect program performance.” Office of Management and Budget Web site, accessed at <http://www.whitehouse.gov/omb/part/>.

Dr. Edelheit thanked Mr. Stanley and introduced Pace VanDevender to talk about how the Department of Energy’s National Laboratories fit into this landscape.

SANDIA NATIONAL LABORATORIES: DOE LABS AND INDUSTRY OUTLOOK

J. Pace VanDevender

Sandia National Laboratories

Expressing his pleasure at participating in the symposium, Dr. VanDevender specified that he would present the legislative basis for the national laboratories’ involvement in technology transfer in light of the fact that their mission was a governmental function (making the United States safe and secure). He said that he would also explain how technology partnerships support DoE in that function, discuss the competitive advantage to industry of collaborative research and licensing and, finally, proffer some closing remarks.

Dr. VanDevender explained that DoE National Laboratories are government-owned, contractor-operated organizations (GOCOs). Under this arrangement, the labs’ property and equipment belong to the government, and their people and reputation are affiliated with a contractor. The labs’ missions, however, are fixed by Congress and range from the pursuit of knowledge to the maintenance of nuclear weapons. In fiscal year 2003, the laboratories received around $6 billion of DoE’s $8.5 billion R&D budget. “It is a lot of money,” he remarked, “and every dollar has its mission.”

DoE’s Tech-Transfer History a Quarter-Century Old

Dr. VanDevender then tracked the policy basis for DoE’s involvement in technology transfer. This began in 1980 with the Stevenson-Wydler Technology Innovation Act, which established technology transfer as a mission for the federal laboratories. Each lab set up an Office of Research and Technology Applications to help disseminate information: “If it wasn’t classified, we were to publish it,” he recalled, “and if it was useful, industry could use it.” This legislation also established a preference for U.S. manufacturers, something that persists to the present, “even though,” as he noted, “globalization has radically changed the nature of the industrial world.”

A second major landmark came in 1984 with the Trademark Clarification Act, which gave the GOCOs licensing and royalty authority for the first time. Then, in 1986, the Federal Technology Transfer Act extended the responsibility for technology transfer to lab employees, so that each individual’s performance evaluation took into consideration fulfillment of this mission. “That did not work at all, as you might imagine,” Dr. VanDevender recalled. “It just didn’t engage the consciousness of the lab employees.”

A turning point was reached with the National Competitiveness Technology Transfer Act of 1989. This act made technology transfer a mission of the DoE weapons labs. It also allowed GOCOs to enter into cooperative research and development agreements (CRADAs): that is, to make deals with industry that involve becoming partners and cofunding R&D activities. Also in 1989, the NIST Authorization Act recognized CRADA intellectual property other than inventions and, thereby, helped resolve a problem that had inhibited technology transfer from the labs. The 1995 National Technology Transfer Act guaranteed to industry the ability to negotiate for rights to CRADA inventions and increased the royalty distribution limit that had been placed on lab inventors, thereby increasing their motivation to invent.

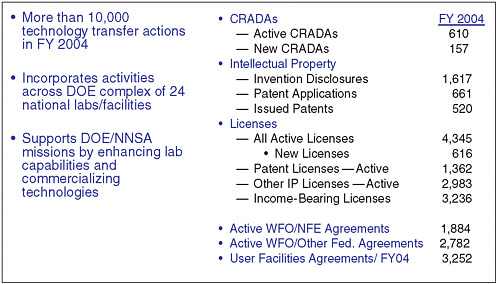

Assessing the Efficacy of Tech-Transfer Policies

How well has this policy basis for GOCOs’ participation in technology transfer worked? “Pretty well,” Dr. VanDevender assessed, posting a tabular account of the DoE labs’ tech-transfer activities for fiscal year 2004 that displayed what he called “some respectable numbers” (Figure 34). However, the approximately 10,000 tech-transfer actions that took place during that year across DoE’s entire complex of 24 National Labs and other facilities were “not at all uniformly distributed.” In fact, about half of the activity, as measured by funds coming in from industry, was attributable to a single laboratory out of the 24. On a lab-to-lab basis, therefore, tech-transfer activity had been “fairly modest.”

Selecting among the figures, Dr. VanDevender noted that there were 610 active CRADAs across DoE in FY2004; based on the fact that 157 had been initiated in that year, he posited an average lifetime for CRADAs of 3 to 4 years. Whether the 520 patents issued in FY2004 amounted to “a lot or a little,” he allowed, “depends on your perspective.” Of 4,345 licenses active in FY2004, 616 were new and 3,236 were income bearing. Agreements classified under “active work for others, nonfederal entities” (WFO/NFE), numbered 1,884, while those classified under “active work for others, other federal agencies” (WFO/Other Fed. Agreements) numbered 2,782. User-facilities agreements came to 3,252 in FY2004.

FIGURE 34 Technology transfer supplements the primary mission of each lab.
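The 3-to-4-year CRADA lifetime quoted above is consistent with a simple stock-over-flow estimate. The sketch below is only an illustration of that arithmetic; it assumes a steady state in which the number of agreements started each year roughly equals the number ending, which the text does not state. The function and variable names are hypothetical.

```python
# Figures quoted in the text for FY2004.
active_cradas = 610   # CRADAs active across DoE
new_cradas = 157      # CRADAs initiated during the year


def steady_state_lifetime(active, started_per_year):
    """Average lifetime in years, ASSUMING a steady state:
    lifetime = stock of active agreements / annual inflow of new ones."""
    return active / started_per_year


crada_lifetime_years = steady_state_lifetime(active_cradas, new_cradas)
print(f"Estimated average CRADA lifetime: {crada_lifetime_years:.1f} years")
```

Because CRADA activity was declining from its mid-1990s peak rather than holding steady, the result is best read as a rough order-of-magnitude check, not a measured lifetime.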

Results Plateauing in Numerous Areas

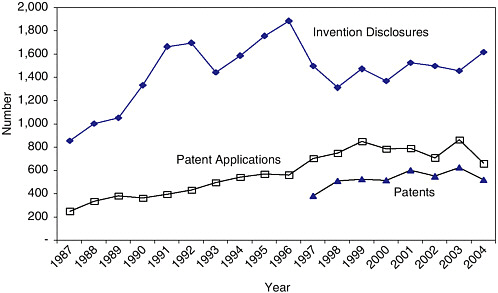

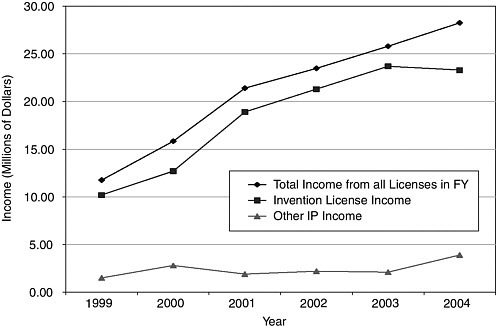

Dr. VanDevender then projected a graph showing that several measures of activity in the intellectual-property domain have plateaued, showing very little change with time, as technology-transfer policy has evolved (Figure 35). Invention disclosures, after growing vigorously in the decade ending in 1997, dipped sharply and then, around the close of the century, hit something of a plateau. Patent applications and patents awarded, moving almost in lockstep with one another, have followed a similar if somewhat steadier course.

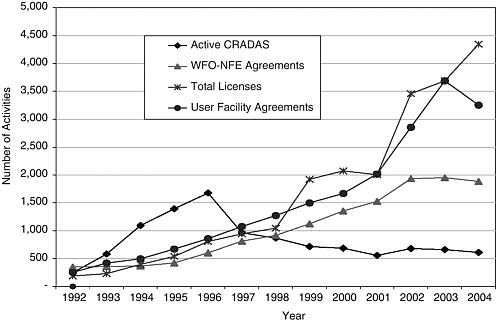

CRADA activity shows a rapid increase from 1992 to 1996, after which it drops off significantly (Figure 36). Dr. VanDevender’s explanation was that when CRADAs came into being, they were cofunded by government and industry, with a 50-50 share being typical. When the government participated as a “funds-producing partner,” CRADAs grew rapidly. “But when the economy recovered and there were other pressing needs for federal money,” he said, “the federal matching dollars went away, and much of the CRADA activity did also.” It has not, however, fallen to zero, because some robust partnerships were established during the years of upward growth and these industry participants provided the funding needed to sustain their CRADAs.

WFO/NFE agreements also reached a plateau in 2002 after many years of sustained growth. However, licenses set a pattern of swift growth that is continuing, and agreements covering user facilities appear to have done likewise until their sharp decline in 2004. “The message from both of those,” Dr. VanDevender reflected, “is that there’s been a lot of growth, a lot of deals were made and relationships built, but it has really plateaued under the current policies.”

Gauging Tech Transfer’s Benefit to the Taxpayer

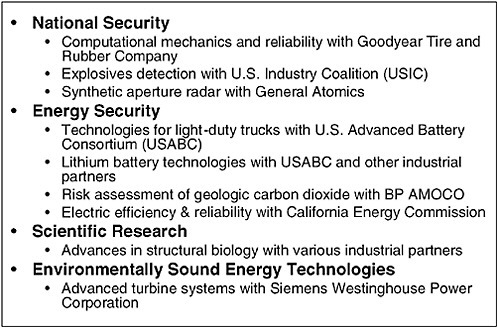

The benefit of this technology-transfer activity to the U.S. taxpayer is substantial, according to Dr. VanDevender. A joint program with Goodyear Tire and Rubber Company in computational mechanics and predictive reliability is an example of a fruitful endeavor for Goodyear and for national security. How could research involving tires be of value to the nuclear weapons program? “If you think of the nose cone of a B-61 crashing into the earth, it is a highly deformable material problem,” he explained. “Tires rotating on your car are continually deformed. Computer codes that were developed at Sandia for the B-61 were extended in partnership with Goodyear.” The resulting benefit for the company is the Assurance tire, which surpassed its 2004 sales projection of one million units by 100 percent; the benefit for DoE is a better finite element code for government applications.

Figure 37 provides examples of CRADA projects that benefit a corporation and “often gets products into the hands of the public to protect us all,” conveying “the gist of dual-use.”

Meanwhile, DoE’s income from licensing increased over time to $27 million in 2004 (Figure 38). Other DoE intellectual property income, from copyrights and other sources, has lagged far behind that. The extra money was “helpful,” Dr. VanDevender said, in that it made DoE “more agile in meeting [its] needs.” About 20 percent of the licensing income typically went to the inventors and the rest was put to a variety of uses, such as:

- upgrading the Advanced Photon Source at Argonne National Laboratory;
- establishing the Technology Maturation Fund used by many of the DoE labs;
- supporting startups through the Center for Entrepreneurial Growth;
- developing the fan airfoil for improved energy efficiency; and
- reinvesting in teams that had developed and licensed the intellectual property.

“Think of [the last use] as very early-stage seed money,” he suggested, noting that it was usually distributed in amounts no larger than $300,000 and often as small as $10,000. Still, such a “microinvestment … gets teams started [and] makes them more credible so they can attract other teams.”

FIGURE 37 “Funds-in” agreements advance DoE/NNSA objectives.

NOTE: Representative examples are cited.

Industry Benefiting Enough to Stay Engaged

Industry, for its part, is gaining sufficient competitive advantage through such collaborative research and licensing activities to stay engaged with DoE, Dr. VanDevender said. He cited a variety of outcomes:

- A major manufacturing company has reduced design time and eliminated the need for multiple prototypes with codeveloped simulation tools.
- Chemical and power companies have improved processes and plant designs using an advanced software toolkit.
- Equipment able to detect radiation from high-speed vehicles (potentially useful in identifying terrorists in possession of nuclear materials) has become available to the public sector through licensed suppliers.
- A new duct-sealing system that enables home and business owners to save energy has been deployed through more than 60 commercial franchises.
- A company has developed cancer treatments based on licensed intellectual property.
- Airfreight containers employing advanced materials developed through collaborative research have gone onto the market.
- A commercially successful family of hydrogen sensors has been licensed to help promote the future hydrogen energy distribution system.

FIGURE 38 Licensing income supplements the $6 billion of federal funding at labs to enhance mission results.

Sandia’s S&T Park Growing Robustly

In contrast to CRADAs, patent applications, and other tech-transfer vehicles whose growth has plateaued, science and technology parks are springing up as a new thrust. Sandia National Labs Science & Technology Park (SS&TP), in its seventh year, had drawn $167 million in investment ($146.6 million private, $20.4 million public) and was still growing. A pedestrian-oriented, campus-style installation located on a tract of land exceeding 200 acres in area, SS&TP in the spring of 2005 housed 19 organizations with 1,098 employees, occupying almost 500,000 square feet. Sandia National Laboratories provides redundant power and state-of-the-art connectivity to the park and helps tenants accelerate city approval processes. Tenants paid in $17 million to Sandia Labs while acquiring contracts from the labs worth $85.6 million; “the government, Sandia, and industry,” observed Dr. VanDevender, “therefore benefit as funds flow both ways.” Projections put SS&TP’s final size at about 2.3 million square feet and its final workforce at between 6,000 and 12,000.

Dr. VanDevender recalled visiting Dr. Chu at ITRI a few months before the symposium and seeing Hsinchu Science Park, which produced about 10 percent of Taiwan’s GDP. “We have 1,000 people, they have 100,000, but they’re 30 years along,” he said. “Obviously, there’s much we can learn from them, and we’re interested in doing that and have begun that relationship.” Contrasting ITRI’s and DoE’s models, he noted that the former is based on a single-purpose mission of technology development and commercialization with relationships, while the main mission of the DoE labs is “national security broadly writ”; for ITRI, therefore, technology transfer is a dedicated mission, whereas for DoE it is a supplementary mission.

Comparing Results of DoE, ITRI Models

The DoE labs receive about ten times as much annual funding as ITRI, or $6 billion versus $600 million. But industrial contributions account for only about $60 million of the DoE labs’ funding, or 1 percent, while around $200 million, or one-third, of ITRI’s funding comes from industry. The latter is “a very powerful statement of support consistent with their mission,” Dr. VanDevender said.

The DoE labs produce around 600 patents per year, half as many as ITRI’s 1,200; this translates to 0.1 patent per $1 million of total funding for the DoE model and two patents per $1 million for the ITRI model. However, the difference in patents per industry dollar is far narrower, and runs the other way (about 10 patents per $1 million from industry for DoE versus six patents per $1 million from industry for ITRI), because industry is leveraging the huge U.S. investment in national security in the DoE model. These two rates are “very comparable,” said Dr. VanDevender, “given the uncertainty in the value of those patents, [and] particularly since many more companies are spun off from ITRI than from DoE labs.” Both models have their strengths and both are valuable, he concluded, suggesting that the comparison raised a question worth considering at the next stage of policy making: “whether or not [the United States] should experiment with a single-mission lab for industrial competitiveness.”
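The ratios in this comparison can be reproduced from the rounded figures quoted in the text. The short sketch below does only that arithmetic; the dictionary layout and the function name `patents_per_million` are illustrative choices, not anything presented at the symposium.

```python
# Approximate annual figures quoted in the text (funding in millions of dollars).
labs = {
    "DoE labs": {"patents": 600, "total_funding_m": 6000, "industry_funding_m": 60},
    "ITRI": {"patents": 1200, "total_funding_m": 600, "industry_funding_m": 200},
}


def patents_per_million(patents, funding_m):
    """Patents produced per $1 million of the given funding stream."""
    return patents / funding_m


rates = {}
for name, d in labs.items():
    rates[name] = {
        # Share of total funding that comes from industry.
        "industry_share": d["industry_funding_m"] / d["total_funding_m"],
        # Patents per $1 million of total funding.
        "per_total": patents_per_million(d["patents"], d["total_funding_m"]),
        # Patents per $1 million of industry funding only.
        "per_industry": patents_per_million(d["patents"], d["industry_funding_m"]),
    }

for name, r in rates.items():
    print(
        f"{name}: industry share {r['industry_share']:.0%}, "
        f"{r['per_total']:.1f} patents per $1M total, "
        f"{r['per_industry']:.0f} patents per $1M from industry"
    )
```

On total funding the two models differ by a factor of 20, while on industry funding alone they differ by less than a factor of 2, which is the sense in which the rates were described as comparable.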

In closing, Dr. VanDevender affirmed that technology partnerships add significantly to the innovation capabilities of the DoE and NNSA labs, as well as to the innovation capabilities of their industrial partners. They expand the R&D capacity for both industry and the labs and contribute to the fulfillment of DoE’s mission and goals while providing competitive advantage to those industry partners that have long-term relationships with the laboratories. Although the effect is very small in percentage terms, the partnerships continue to provide important results for the government’s national security mission (even during very constrained federal budgets) and differentiating benefits for the partnering corporation. Nevertheless, he emphasized that partnership activities have “really plateaued under the current policies and priorities; a wiser and bolder approach is needed to move partnerships to the next level of effectiveness and efficiency.”