2

Diversity of Assessments and Their Potential Contributions

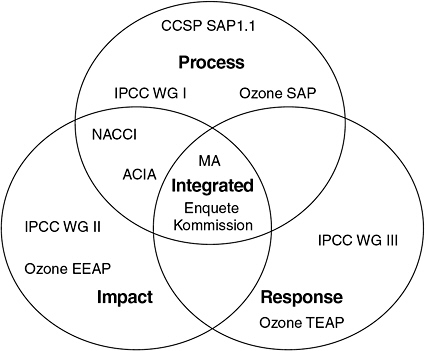

When looking at lessons learned from diverse global change assessments, it is important to consider the science and decision-making context in which each assessment was undertaken and the kind of decisions the assessment was intended to inform. Because the effectiveness of any assessment approach depends on the context and goals of the assessment, the committee describes the role of both in distinguishing various assessment types. The committee groups assessments into four general types: (1) process assessments, (2) impact assessments, (3) response assessments, and (4) integrated assessments. This classification is consistent with and frequently encountered in the assessment literature (Smit et al. 1999; Parson 2003; Farrell et al. 2006; Fussel and Klein 2006; Martello and Iles 2006).

POTENTIAL CONTRIBUTIONS OF ASSESSMENTS TO DECISION MAKING

Any attempt to ascribe a single, general definition of success or effectiveness to assessments has encountered fundamental problems. These problems stem from the diversity of contexts in which assessments are conducted; the diversity of assessment strategies, which results from the variety of goals and potential contributions; and the fact that assessments are evaluated by multiple actors with distinct perspectives and interests. Consequently, when evaluating the effectiveness of a particular assessment, the following questions must be considered: Effective according to whom? Effective in achieving which goals over what time frame?

Despite this diversity, the record of past assessments indicates certain specific categories of contributions that assessments can make to policy debates or to decision making. Illustrative examples of such contributions are given below (Mitchell et al. 2006). While this list is not exhaustive, it captures the most important categories of contributions that are evident in the record of global change assessments of the past 30 years. Note that the ability of an assessment to make any of these types of contributions depends on the state of both the scientific and the policy context in which the assessment is conducted.

- Assessments have the potential to establish the basic significance or importance of an issue and elevate it onto the decision-making agenda. If an issue is not yet on the agenda of decision makers who have the authority and resources to address it, an assessment that assembles a review of evidence can make the case that it is serious or urgent enough to deserve their attention. The stratospheric ozone trends panel (WMO 1990a) and the Villach report (WMO 1986b), for example, exerted a decisive influence over the policy debate by showing the seriousness of ozone depletion and climate change, respectively. In fact, when the policy context for an issue is immature, this may be the only contribution that an assessment can make.

- Assessments have the potential to provide authoritative resolutions of policy-relevant scientific questions. Sometimes particular scientific questions come to be widely perceived as important, perhaps even decisive, for policy decisions. Important examples include the significance of an environmental change (e.g., how much ozone depletion is required to significantly impact the skin cancer rate?), the significance of the human contribution to a naturally occurring change, or discrepancies in data records or observational techniques. If the policy debate on an issue is characterized by controversy or deadlock because conflicting claims are being made about key scientific questions, an assessment can inform and advance the policy debate by authoritatively resolving these questions. Such a contribution requires both that available scientific knowledge is able to support a clear resolution and that there is a policy-making body with the issue on its agenda.

- Assessments have the potential to link actions to consequences. When the policy context is even more advanced (in that a decision forum and agenda have been established, a specific set of options is being considered, and actors broadly agree on the consequences of these choices), assessments can inform decisions by making specific, scientifically founded statements that link alternative trends in human drivers or alternative actions to limit these drivers to specific environmental changes. The Intergovernmental Panel on Climate Change (IPCC) Working Group III on mitigation of climate change (IPCC 2001c) is a good example. Assessments can also provide scientific evaluations of adaptation strategies, such as in the case of the IPCC Working Group II on impacts, adaptation, and vulnerability (IPCC 2001b). As with all these types of contributions, the ability of assessments to effectively link actions and consequences depends both on an adequate base of scientific knowledge and on policy actors willing to support the activity and consider acting on its results.

- Assessments have the potential to help solve recognized, shared technical problems. If most or all members of a relevant decision-making body perceive themselves to have a specific, shared problem, an assessment can make a significant contribution by bringing the principal parties together to find common technology options and solutions that solve their problem. An example is the Technology and Economic Assessment Panel (TEAP), which provides technical advice to the Montreal Protocol regarding alternatives to ozone-depleting substances.

- Assessments have the potential to help identify and clarify key policy-relevant questions or research priorities. If the policy-relevant questions are vague or confused, instruments and models give conflicting results, or there are other serious conflicts in the field, assessments can provide disciplined settings to force confrontations between contending claims, sharpen disagreements, clarify incompatible terminology or concepts, and develop a research agenda to advance knowledge on key policy-relevant questions. Examples of assessments that have made contributions of this sort include IPCC’s Working Group I report (IPCC 2001a) and the Arctic Climate Impact Assessment (ACIA 2004).

- Assessments have the potential to demonstrate that a policy is providing environmental benefits. For example, the process assessment of stratospheric ozone conducted under the Montreal Protocol provided evidence that the new policies were effective in reducing negative impacts on the ozone layer.

SCIENTIFIC AND POLICY CONTEXTS FOR ASSESSMENTS

The context for any global change assessment has two primary components: (1) the scientific context, which concerns the state of relevant knowledge to be assessed; and (2) the policy and political context, which concerns the state of relevant policy debates and decisions that the assessment seeks to inform. When analyzing or evaluating an assessment, it is crucial to consider these two components of the context in which it is conducted. They exert a fundamental influence over when and even whether an assessment can be undertaken, what contributions it can make, which approach holds most promise, and by what criteria it can be evaluated. The effectiveness of any given assessment approach depends on how well the scope of the assessment fits within the scientific and political context; hence, both need to be considered prior to framing the assessment.

The Scientific Context

An important aspect of the scientific context is the maturity, understanding, and degree of consensus in the relevant fields and on the most central questions of concern (Ravetz 1971). In particular, the wealth of evidence and data available to address the science issues plays a crucial role in the type of assessment that can be undertaken. It is important to consider the maturity of the field and whether the relevant knowledge lies within one or several research disciplines. If it spans multiple disciplines, a certain capacity building is required for the experts from various fields to be able to communicate and find agreement on approaches to the questions of concern.

The Policy and Political Context

Together with the science context, the policy and political context determines what an assessment can contribute and, in many ways, what its mandate, goals, and approach ought to be. At an early stage of maturity in the policy context, an issue might not have risen to the importance of being included on the policy agenda because the potential decisions to be considered are not clearly identified (Social Learning Group 2001b). At this early stage in the public attention cycle, the goal of an assessment ought to be to establish the importance of an issue, which might increase the public’s attention and bring the issue onto the decision-making agenda. For example, the Villach report (WMO 1986b) was an assessment that brought the issue of climate change onto the policy agenda.

Once relevant decisions have been identified and have entered the policy agenda, the focus on a global change issue depends on whose agenda it is and how much attention the issue is being given. Often the attention is short-lived as other more pressing issues replace it. In addition, when the issue is being considered in the decision-making process, the ability to make progress becomes a question of (1) the level of disagreement over actions or decisions to be taken and (2) if there is disagreement, the source of that disagreement. In particular, to what extent are actors basing their policy arguments on scientific claims? At this point, assessments will be required that not only advance the consensus in the basic understanding of the process and its impact but also analyze potential policy options and response options.

FOUR TYPES OF ASSESSMENTS AND CONSEQUENCES FOR DESIGN CHOICES

The type of assessment to be undertaken depends largely on the science and policy context, which in turn limits many internal design choices at the inception of the assessment. Therefore, it is important to keep in mind that some key dimensions of an assessment are established by conditions external to the assessment at its inception.

Conditions Established at the Assessment’s Inception

The Institutional Setting and Authorizing Environment

This first dimension concerns issues such as: Who asked for the assessment or gave permission to do it? What organizations have provided official sponsorship and on what terms? Who is funding it? To whom is its output addressed? Although these issues may appear similar to the types of factors that define the assessment’s policy context, it is important to distinguish them. Whereas the policy context of an assessment concerns historical conditions around the relevant issues at the time the assessment is established and conducted, which one cannot choose, the issues presented here are established at the inception of a particular assessment. For example, there are many variants in the institutional setting of assessments, some of which can be identified according to their degree of official connection to international policy-making bodies:

- Assessments conducted under the auspices of an official policy-making body (e.g., World Meteorological Organization stratospheric ozone assessments);

- Assessments sponsored by international or intergovernmental bodies that do not have direct decision-making authority and those sponsored by multiple national bodies (e.g., IPCC assessments);

- Assessments sponsored and authorized by national governmental bodies (e.g., National Assessment of Climate Change Impacts [NACCI]); and

- Independent, ad hoc assessments, with various weaker degrees of linkage to official or decision-making bodies (e.g., Global Biodiversity Assessment).

The Scope and Mandate

Global change assessments can be classified into four categories based on their mandate and goals: (1) process assessments, (2) impact assessments, (3) response assessments, and (4) integrated assessments. This four-part distinction matches the most common usage in the literature, although other terms have been proposed (Smit et al. 1999; Parson 2003; Farrell et al. 2006; Fussel and Klein 2006; Martello and Iles 2006). Just as these categories of assessments differ in the types of questions they answer, they also differ in the complexity of the analysis necessary to answer those questions. As a result, they may diverge in the approach necessary to enhance salience, credibility, and legitimacy.

The committee recognizes that none of the terms used to categorize assessments are wholly satisfactory, that this division does not represent a model that should be applied to all assessments, and that most assessments are hybrids of these ideal types to some degree. The taxonomy simply matches the historical practice of global change assessments. For example, the first three types of assessments roughly mirror the mandates of the IPCC Working Groups (WGs) I, II, and III, and of the Montreal Protocol’s Scientific Assessment Panel, Environmental Effects Assessment Panel, and TEAP. Although this division has sometimes been criticized by academics as being too simplistic and various attempts have been made to reorganize IPCC working groups along different boundaries, there are quite robust differences among the three in how they must be (and have been) organized and what conditions determine their effectiveness. Figure 2.1 illustrates the “space” an assessment can occupy, depending on how much attention it gives to each of the three questions. Integrated assessments attempt to incorporate all three.

Process Assessments: Understanding What Global Changes Are Occurring and What Is Causing Them

The goal of process assessments is to summarize and synthesize scientific knowledge of global change processes, rather than their impacts or responses to global change. Often such process assessments are initiated first due to the need to characterize the extent and the drivers of change. An understanding of environmental processes and their drivers is required to examine impacts and especially responses.

FIGURE 2.1 Three types of global change assessment: process assessment, impact assessment, and response assessment. The fully integrated assessment lies at the intersection of the three types. Examples of assessments are included. ACIA = Arctic Climate Impact Assessment; CCSP SAP 1.1 = U.S. Climate Change Science Program Synthesis and Assessment Product 1.1 on Temperature Trends in the Lower Atmosphere; IPCC WG I, II, and III = Intergovernmental Panel on Climate Change Working Groups I, II, and III; MA = Millennium Ecosystem Assessment; NACCI = U.S. National Assessment of Climate Change Impacts; Ozone EEAP = Environmental Effects Assessment Panel of the stratospheric ozone assessments; Ozone SAP = Scientific Assessment Panel of the stratospheric ozone assessments; Ozone TEAP = Technology and Economic Assessment Panel of the stratospheric ozone assessments.

The most prominent examples of process assessments are the Montreal Protocol Scientific Assessment Panels (WMO 1990a,b, 1992, 1995, 1999, 2003, 2007) and the IPCC WG I (IPCC 1990a, 1995a, 2001a).

To date, process assessments have generally focused on Earth system and ecological processes. Most participants come from the physical, chemical, and biological sciences. Of course, in the history of global change issues one of the key questions is not only whether global change is occurring but also whether it is driven by human action. Thus, while often centered in the natural sciences, these assessments also consider, explicitly or implicitly, human activity. They may describe the status of current and past trends in these processes (e.g., climate trends and patterns of variability), explain the causal mechanisms that determine their dynamics, and, in some cases, include projections of future trends. To date, projections have relied on simple, exogenously specified assumptions about future human perturbations (e.g., specified scenarios of trends in anthropogenic emissions are used for projecting future trends in stratospheric ozone and climate change). Process assessments have typically drawn on knowledge and participation either from one well-defined research community, from a few closely related ones, or, in the case of the most interdisciplinary problems, from most disciplines in the natural sciences.

Process assessments have the potential to make the following types of contributions to policy debates and decision making. They can influence science-policy decisions by clarifying and prioritizing key research questions. In addition, they can demonstrate the accumulation of baseline knowledge and data on an issue, making the initial case for the credibility of the relevant scientific knowledge and the seriousness of the related environmental threat. This contribution typically takes place early in the development of an environmental issue and, once achieved, can make a qualitative change in the subsequent policy debate.

Impact Assessments: Understanding the Consequences of Global Change

Impact assessments seek to characterize, diagnose, and project the risks or impacts of the environmental change on people, communities, economic activities, ecosystems, and valued natural resources. As the history of climate change assessments and of stratospheric ozone assessments indicates, impact assessments often occur once a global change phenomenon has been validated by a process assessment and they often draw on the outputs of the process assessment. The most prominent examples are the IPCC WG II and Millennium Ecosystem Assessment (MA) reports.

Because most impacts play out within specific sectors (e.g., water resources, forestry, agriculture, fisheries) and at regional scales, impact assessments often focus on individual economic sectors or regions. For example, NACCI examined five sectors and nine megaregions in the United States and concluded that the impacts of climate change vary substantially across sectors and regions. Further, the impact on a sector will often differ across regions. In contrast to this context dependence, a process assessment can often do useful work at the global scale. This factor alone makes impact assessments quite complex. Impact assessments must also consider interactions among impacts, so that every impact assessment is to some extent an integrated assessment. Because of their complexity, impact assessments always require scientific input from many disciplines. In addition, the sectoral and regional specificity of impacts requires input from diverse stakeholders who provide critical local and sectoral information.

For these reasons, impact assessments are large and complex endeavors and require resources and organizational structures commensurate with their scale. Yet, their contribution to decision making is fundamental. While a process assessment can identify and characterize an anthropogenic global change, without sound analysis of impacts, decision makers cannot make an informed decision about the importance of responding. Impact assessments answer the “so what?” question and identify key vulnerabilities and potential strategies to enhance resilience.

Response Assessments: Understanding the Options for Responding to Global Change

Response assessments seek to identify and evaluate potential responses that could reduce human contributions or vulnerabilities to the environmental perturbation at issue. They have also been referred to as option assessments (Social Learning Group 2001b). Some response assessments are focused narrowly on technology responses, mitigation, or adaptation and are referred to as such. Other response assessments are broader and may consider a variety of options, including changes in technology, policy, economic incentives, and mitigation or adaptation. The scale at which “what can be done” is considered may be economy-wide or specific to particular industry sectors, production processes, or individual firms. It may also be regional, national, or global. The measures considered certainly include alternative technologies but may also include process, product, managerial, organizational, or institutional changes brought about by either public or private policy. The questions posed may include identifying options (currently available or anticipated); assessing their feasibility, their state of development, and their potential contribution to solving the problem; and assessing their costs and benefits broadly, including monetary costs, effects on factors such as yield, reliability, and product quality, and contributions to other environmental, health, and safety issues.

Again, it is important to remember that the four types of assessments are not perfectly distinct, and many fall on the spectrum between impact and response assessments. In particular, it is usually important to understand the full impacts of a response strategy. The exception may be when the response strategy is confined to technological choices within a sector that have few impacts outside that sector. In fact, because response assessments focus on reducing human drivers of the environmental change or on ways to mitigate their impact, there is a logical coupling between these and process assessments, mediated by scenarios (of emissions or of other anthropogenic perturbations) or models that can be used to drive projections of future environmental change in the process assessments. Process and response assessments together have a logical structure that considers natural and human factors, and potential interventions in terms of the human factors. Therefore, the development of scenarios linking the two assessment types needs rigorous social science engagement to ensure that human factors are represented realistically (Social Learning Group 2001b).

Integrated Assessments: Understanding the Connections

Integrated assessments examine the links among the systems analyzed in process, impact, and response assessments. It is useful to differentiate two types of integrated assessments. Fully integrated assessments develop a common model of the world, generally a computer model. Sometimes this is done by linking models developed for narrower purposes, as in the efforts to link climate models to economic models. Linked assessments are a mix of process, impact, and response assessments in which the assessment teams actively communicate, coordinate, and build as much as possible on each other’s work. Many assessments attempt to integrate the three components through a synthesis report that pulls together (or sometimes merely juxtaposes) results and conclusions. The working groups of the IPCC follow this model. Other assessments are designed from the outset to analyze the individual pieces but with a deliberate strategy and common framework so that the various pieces can be integrated by a team into a synthesis report. This approach was used by the MA and NACCI.

Under common definitions (e.g., Weyant et al. 1996; Parson and Fisher-Vanden 1997), synthesis reports as undertaken by the IPCC or the MA would not qualify as integrated assessments. However, because of the importance of providing integrated information for decision making and the complexities of conducting integrated assessments, the committee believes that consideration of the less stringent forms of integration is also important to the design of assessments.

As noted, timing is a critical issue for linking assessments. A logical sequence would suggest that a process assessment should be completed before impact and response assessments so that the latter can benefit from the most up-to-date understanding of the processes. In turn, the impact and especially the response assessments can inform assumptions about human activities that should be deployed in subsequent process assessments. However, the mandates of many assessments do not allow this phased approach, instead requiring parallel activities. Sequencing activities so that process, impact, and response assessments are conducted on an iterative cycle may be more effective.

Design Choices Made During the Conduct of an Assessment

Those leading and participating in an assessment must operate within constraints imposed by prior decisions defining an assessment’s scope, mandate, and organizational setting. Even so, assessment participants still have the opportunity to decide many aspects of its process, content, and presentation. Within an assessment’s previously defined mandate, participants choose what specific subject areas to include or emphasize, what sources of information to include, what methods or tools to use in integrating information, and what (if any) specific policy-relevant questions to answer. They may decide who participates in the assessment, how they are chosen, how they organize their collective work, how they make decisions (particularly in the case of disagreements), and how to identify and involve stakeholders. They choose how to present results, including the content and strength of conclusions, as well as whether to make interpretive judgments that go beyond the present literature, to employ “if-then” statements that link alternative choices to potential outcomes, or to include explicit recommendations for action. They may decide whether the assessment undergoes public or governmental review in addition to scientific peer review. They also decide the scale, form, and manner of dissemination of reports or other outputs.

Many of these design choices are linked with an assessment’s success in achieving credibility, legitimacy, and salience, although the relationships are both complex and dependent on the assessment’s context. For example, broadening stakeholder participation in an assessment can increase legitimacy but poses risks to credibility to the extent that these participants are perceived as lacking expert standing, thereby diminishing the assessment’s reliance on scientific expertise. In Chapter 3, the committee discusses in greater detail how these mostly internal design choices can be approached to optimally balance all three attributes in achieving an effective assessment.