2

Approaches to Quality Improvement Research

Although many quality improvement strategies exist, Paul Batalden of Dartmouth noted that there is a general lack of understanding of how to connect them in efforts to improve quality. Batalden therefore set forth a framework for connecting the various strategies used in quality improvement and quality improvement research. That framework, Batalden said, rests on three assumptions. First, the overall goal is to achieve better health care. Second, better health care should be based on as much knowledge as possible. And third, improving the quality of health and health care is harder than it first seems (Batalden and Davidoff, 2007).

Batalden defined quality improvement as “the combined and unceasing efforts of everyone—health care professionals, patients and their families, researchers, payers, planners, educators—to make changes that will lead to better patient outcome, better system performance, and better professional development” (Batalden and Davidoff, 2007, p. 2). If quality improvement efforts are to be sustainable, Batalden said, all three goals must be pursued, not just one or two. Unfortunately, he said, in most cases the three goals are pursued independently rather than collectively. For example, efforts are often made to improve patient outcomes and system performance, but little attention has generally been paid to how health care professionals are trained or to how improving the quality of that training could lead to better health care.

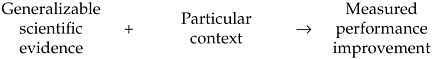

Batalden offered the following formula as a way of thinking about how the various factors of quality improvement fit together:

Generalizable scientific evidence + Particular context → Measured performance improvement

In short, by applying general scientific understanding to a particular context, one should be able to achieve a measurable improvement in performance. Batalden explained that five distinct systems of knowledge underlie this formula. The first relates to generalizable scientific evidence, which Batalden described as understanding how to minimize the effect of context. The second focuses on understanding the specific variables that define a particular context. The third involves measuring the stability of change over time; this system of knowledge underlies measured performance improvement, which is the outcome (the right-hand side) of the formula. Although Batalden stressed the importance of measurement over time, such measures often do not exist; instead, only discrete pre- and post-intervention measurements are frequently taken. The fourth system of knowledge is implied by the plus sign in the formula: it is the knowledge involved in choosing the correct evidence for a particular context. The fifth, which Batalden calls the knowledge of execution, is represented by the formula’s arrow.

Generalizable scientific evidence and particular contexts link together in a cycle that is a form of experiential learning. The cycle begins with testing the implications of concepts in new situations, Batalden explained. These tests lead to concrete experiences, and the observations and reflections drawn from these experiences are then analyzed to form new abstract concepts and generalizations, which can in turn be tested in new situations, beginning another cycle. Without that testing and analysis, however, the cycle produces merely experience. This cycle describes not only how most evidence-based medicine is developed but also how it is largely integrated into practice. In fact, Batalden said, the quality improvement field has been substantially handicapped by the idea that only one method of controlling quality can be used at a time to effect change. While much can be learned from studying the effects of individual quality improvement interventions, much can also be learned experientially from the multitude of efforts to improve health care. Thus, Batalden suggested, experiential learning can be seen as one of the underpinnings of quality improvement.

Jeremy Grimshaw of the Ottawa Health Research Institute offered a different approach to quality improvement research. Research findings are consistently not translated into clinical practice: studies show that 30 to 40 percent of patients do not receive the care they should (McGlynn et al., 2003) and that 20 to 25 percent receive unnecessary or potentially harmful care (Grol, 2001). Overcoming this failure, Grimshaw said, is a major focus of health care quality improvement. One way to address it, he suggested, would be to instill evidence into clinical practice at several levels: the structural, the organizational, the group or team, and the individual health professional (Ferlie and Shortell, 2001). Different interventions would be needed to target the stakeholders at each level, depending on the barriers identified. The challenge, Grimshaw said, will be to equip health care providers with the right tools to deliver evidence-based treatments properly.

Implementation research1 can be described as the study of how to promote the uptake of research findings, Grimshaw explained. It focuses on the challenge of delivering evidence-based care to patients, with the aim of developing a generalizable evidence base that can be used to improve the implementation of research findings and to enhance decision making at the local level. This research is inherently interdisciplinary, involving health care professionals, organizational scientists, engineers, and others, Grimshaw noted.

Workshop attendees debated how much these two views of quality improvement research actually differ. Although some suggested that the two approaches oppose each other, others thought the discussion revealed more similarities than differences. In particular, Batalden and Grimshaw agreed on the purpose of quality improvement research and on the need to develop the evidence base to the point where it can build on itself.

METHODS

Quality improvement is studied using a variety of methods, Batalden said, including systematic reviews, controlled trials, case reports, and hybrid quantitative/qualitative reports, each with different strengths. The workshop discussions focused only on systematic reviews and randomized controlled trials.

Rigorous evaluations add valuable information to the overall knowledge base and provide a solid foundation for further research, Grimshaw said. Most such evaluation approaches today emphasize a diagnostic process that first identifies barriers, then addresses the most important of them with specific interventions, and finally evaluates the effects of those interventions using rigorous evaluation designs.

Randomized controlled trials (RCTs) and other rigorous research methods can provide stronger evidence of effectiveness than other methods for certain questions, Grimshaw argued. RCTs should be used, he said, to evaluate such questions as how likely an intervention is to yield the desired effect, what the direct effects of the intervention and its alternatives will be, under what circumstances the intervention will succeed or fail, and what resources it requires.

Grimshaw described the disagreement in the field over using RCTs, which some consider the “gold standard,” to evaluate the effectiveness and efficiency of interventions. Some are opposed to using RCTs in complex social contexts such as quality improvement, while others believe RCTs are an extremely valuable way to evaluate these interventions. Responding to those who reject RCTs for quality improvement, Grimshaw said that there are many misconceptions about them. Critics often assume, for instance, that all randomized trials follow the methods of explanatory (efficacy-focused) drug trials, with their tight inclusion criteria, that they largely ignore context, and that they are expensive. But these assumptions do not necessarily hold, Grimshaw argued. Randomized trials of quality improvement interventions tend to be pragmatic (effectiveness-focused), asking whether an intervention will be effective in a real-world setting rather than an optimal one. Such RCTs frequently have broad inclusion criteria and can be designed to clarify how context influences the effectiveness of quality improvement interventions and why changes occurred. One way to achieve this, for instance, is to combine observational approaches with data from RCTs to test multilevel hypotheses about which interventions work and which do not.

Quasi-experimental studies are another way to evaluate interventions, Grimshaw said. Uncontrolled before-and-after studies, controlled before-and-after studies, and interrupted time-series analyses are also frequently used alternatives to RCTs. However, many of the criticisms of RCTs apply to these other methods as well. RCTs, Grimshaw added, build on the knowledge generated by observational studies and case studies.

Grimshaw and Batalden agreed that the best method for evaluating a given intervention depends on the specifics of that intervention, and that one should always try to choose the best possible method, or mixture of methods, for the circumstances at hand.

AREAS WHERE MORE KNOWLEDGE IS NEEDED

Under Batalden’s proposed model of quality improvement, there are many areas in which more knowledge is needed to achieve better patient outcomes, system performance, and professional development. Improving patient and population outcomes will require, for example, better outcome measures, greater confidence in those measures, and an understanding of the causes of variation in both outcomes and measurement. Improving system performance will require enhanced measures, a better understanding of the various evaluation methods used, and an understanding of the role of standards in introducing change. Better professional development requires a more comprehensive understanding of such issues as competence, accreditation and licensure, and how to foster cooperation among professionals. Another area of knowledge that needs building, Batalden said, is how employees are trained, developed, and held accountable. Two other areas that are not well understood are the roles that leadership and governing boards play in quality improvement.

Building this body of research knowledge will demand developments in many areas, Grimshaw said. Theoretical developments are needed to provide frameworks and predictive theories that support generalizable research, for example, on how interventions are chosen and interpreted and on how individual and organizational behaviors can be changed. Methodological developments are also required, as are exploratory studies aimed at understanding the experiential learning that takes place in individual settings and organizations. Rigorous evaluations of the effectiveness and efficiency of interventions also need to be undertaken, Grimshaw said, and the resulting bodies of knowledge can then be synthesized to determine how generalizable the findings are.

Finally, Grimshaw noted that partnerships are needed to encourage communication among various stakeholders, such as theorists, researchers, and implementers. Such partnerships are necessary to understand what types of knowledge are needed and how that knowledge can best be developed, but, he said, few such partnerships exist today.