3

Assessing Cost and Technology Readiness

On the second day of the workshop the five panelists at Session 2 examined how surveys could better deal with new project costing, technology readiness, risk, and execution. The questions posed to the panel included these:

- How does a survey committee determine the cost and risk for candidate missions that have not yet been fully defined?
- How does it define an affordable decadal plan?
- Is it feasible or reasonable to expect a survey to be stable for 10 years?
- What similarities and distinctions are there between considerations for NSF ground-based facilities and space missions?

This panel was moderated by Tom Young (Lockheed Martin, retired), and the panel members were Steve Battel (Battel Engineering), John Casani (Jet Propulsion Laboratory), Noel Hinners (University of Colorado), Bruce Marcus (TRW, retired), and George Paulikas (Aerospace Corporation, retired). Following brief remarks by each panelist, the moderator invited all the workshop participants to comment on cost and technology assessment.

Mr. Young opened the session by saying that the prior day’s discussion suggested a bias toward unrealistically low budget estimates, and he asked whether a survey’s priorities might change if the survey committee had better cost estimates. The current process seems to support initiation of a large number of programs, but it fails to support the completion of many of them. He went on to say that if the objective is to complete projects successfully, then the survey committees are not estimating costs correctly, because too many projects later suffer large budget and schedule overruns. Perhaps surveys should aim to start fewer programs and complete most or all of them.

PANEL MEMBER PRESENTATIONS AND DISCUSSION

Steve Battel started by remarking on what he thought were two key problems relevant to cost and technology assessments:

- It is very difficult to match the cost and schedule assumptions of a mission with an uncertain 10-year agency budget outlook.
- The limited opportunities to start a mission in the course of a decade, the competitive nature of the planning process, and very early technical assumptions lead to overoptimism on costs, schedule, and technical readiness and drive mission designs to be quite complicated; that is, there is often a desire to maximize science returns and to do things because we can rather than because they need to be done.

Mr. Battel mentioned that the actual costs of missions had been 1.5 to 4 times the cost estimates in past decadal surveys. He thinks that these low preliminary cost estimates are due to (1) pressures from agency representatives and community members to adopt top-down, cost-capped approaches on missions, (2) inadequate investment up front to reduce technology and design risk, and (3) lack of discipline and tools for system evaluation and cost estimates.

Mr. Battel also believes the sources of real cost growth in projects include the following:

- Mission creep, changes in project management during a project, and unreliable funding;
- Course corrections or management mandates in response to other mission failures;
- Underestimates of technical complexity and maturity;
- Management approaches that simply seek to avoid risk rather than to manage it (risk aversion management deoptimizes a project by cutting cost, capability, or other resources to create an artificial contingency margin);
- Unwillingness on the part of management to “push back” on projects that are in trouble (i.e., to terminate or downsize);
- Uniqueness of aerospace practices, loss of experienced aerospace workforce (failure to share expertise), transition from military to less robust commercial aerospace technologies, and too much simulation in place of actual testing;
- Fewer options for launching smaller missions; and
- Overcommunication and superfluous management processes (“too many meetings”).

Mr. Battel thought that NASA deserved credit for establishing a process for developing and rating technology readiness. He cited both the Space Interferometry Mission and the James Webb Space Telescope as missions with clear technology roadmaps for achieving appropriate technology readiness before entering development. He thinks that smaller missions (e.g., Explorer and Discovery) should be based on existing technology or a controlled extension of existing technology. Mr. Battel believes that decadal surveys need tools to properly rate mission complexity and readiness. He thinks that the most recent surveys in the 2000s have been impacted by the paradigm shift away from faster-better-cheaper at NASA and by major reductions in science planning budgets. Mr. Battel recommended that agencies should

- Continue to develop and improve parametric cost and schedule models based on real data and realistic assumptions,
- Add experts to review mission costs and technology readiness,
- Develop mechanisms to better couple mission planning and the identification of associated technology,
- Work to simplify and optimize the management of small and medium missions to reduce cost and development time, and
- Work to improve the affordability and availability of launches.

Similarly, he noted that survey committees should

- Require that programs are technically ready so they can be accomplished within a decade,
- Ensure that programs are properly scaled in size and have realistic cost numbers vetted through certified modeling processes,
- Provide specific guidance on mission-enabling technologies,
- Establish metrics and methods for creating and maintaining program balance, and
- Work with agencies to find effective advisory mechanisms for obtaining tactical advice from the research community.

The discussion after Mr. Battel’s presentation focused largely on how wasteful it is to design smaller missions with the same approaches as large missions and how hard it is today for universities to undertake even very small missions. Given current constrained agency budgets, universities can no longer afford to maintain the infrastructure or staff to oversee missions.

John Casani started his brief remarks by stating that knowing the scope of a mission is the most important aspect of reliable cost estimating—one must be as certain about performance requirements as about cost before initiating a project. He said that one will never get good cost or technology readiness estimates if the input information about a mission is weak or if there is a lot of uncertainty.

Because his focus was solar system exploration, he mentioned that uncertainties about the environment in which a mission would be operating (e.g., Mars’s surface) were a major factor in cost uncertainty and made costs very difficult to characterize 10 years in advance. Dr. Casani agreed with earlier speakers that industry is under tremendous pressure to come in with low bids and that we do not spend enough time defining requirements. He recalled former NASA mission readiness assessment guidelines as saying that investments in Phase A (requirements definition) should be ~7 percent of the total project cost. Dr. Casani also made the following observations:

- International cooperation helps secure a mission but rarely saves the United States money, and it introduces other risks (organizational communication problems, schedule and technology interdependencies, and politics).
- One can spend a lot of money on risk management and not improve mission success. Fewer mission opportunities encourage even more conservative and wasteful risk management approaches, perhaps in response to fear of failure.
- Cost modeling does not take into account different management approaches and will probably be helpful only for comparing mission costs rather than for giving good estimates for a specific mission.

NASA representatives agreed that cost models are currently best suited for comparing mission costs. Dr. Casani believes that NASA should develop a standard approach to cost estimating that is independent of the approaches used by advocacy groups and that NASA must take the leadership in challenging bad cost estimates. He mentioned that some of the missions he had worked on that did not have overruns used a lot of fixed-price contracting and had highly capable engineers overseeing them. They were able to do this because they invested time in defining the requirements up front, did the needed system engineering, and did not change the contract or requirements. When asked how decadal surveys are going to estimate mission costs when previous missions have been as much as four times more expensive than originally estimated, Dr. Casani said that we must do a better job of realistically assessing mission complexity and the likely cost of attendant risk mitigation. After starting a mission, we must insist on adequate systems evaluation and requirements definition prior to starting development: Spend the money needed to better define requirements, and do not go beyond those requirements.

Panel member Noel Hinners focused on how surveys can produce better cost and risk estimates for missions that are not well defined. He said it is unrealistic to think we can get valid cost estimates for undefined missions unless we develop “ridiculously unambitious” missions. He suggested that survey committees need to look more closely at mission classes, their risk, and their damage potential. Table 3.1 summarizes Dr. Hinners’s ideas on how cost overruns impact the rest of a program’s portfolio, which he called “the mission impact food chain.” For example, paying for a 30 percent overrun on a multi-billion-dollar-class flagship mission can eliminate a New Frontiers-class mission or two Discovery-class missions or three Explorer-class missions. Similarly, covering the cost of a 30 percent overrun on a billion-dollar New Frontiers mission can eliminate a Discovery mission or two Explorers.

TABLE 3.1 Potential Cost Growth in Some Mission Classes

| Mission Class | Characteristics | Mission Cost (billion $) | 30% Overrun (billion $) | Damage Potential |
|---|---|---|---|---|
| Flagship | 5-10 year development cycle, little heritage from prior missions. Examples are Viking, Voyager, Galileo, HST. | 3-5 | 1-1.5 | One New Frontiers or two Discovery or three Explorer missions |
| New Frontiers | ~5 year development cycle, new instrument technology. Examples are SIRTF, GP-B, Juno. | 1-1.5 | 0.3-0.5 | One Discovery mission or two Explorers |
| Discovery | Cost-capped. PI competition drives innovation, financials, and risk. Some system heritage, but challenging instruments. | 0.5-0.7 | 0.15-0.20 | One Explorer mission |
| Explorer | Same as Discovery with small, colocated, experienced project teams. Most risk in instruments. | 0.1-0.3 | 0.03-0.06 | Another Explorer mission or all the research, data analysis, and technology development |
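The arithmetic behind the “food chain” can be sketched in a few lines of Python. The cost ranges below are those listed in Table 3.1; the function names and the choice to report a range (the smallest overrun divided by the most expensive displaced mission, up to the largest overrun divided by the cheapest one) are illustrative assumptions, not part of Dr. Hinners’s presentation. The overruns are computed as 0.30 times the listed cost ranges, so they can differ slightly from the rounded overrun column in the table, and the table’s damage-potential entries are best read as rounded equivalences.

```python
# Mission cost ranges in billions of dollars, as listed in Table 3.1.
MISSION_COST = {
    "Flagship": (3.0, 5.0),
    "New Frontiers": (1.0, 1.5),
    "Discovery": (0.5, 0.7),
    "Explorer": (0.1, 0.3),
}

OVERRUN_FRACTION = 0.30  # the 30 percent overrun used in the table


def overrun_range(mission_class, fraction=OVERRUN_FRACTION):
    """Low and high overrun (billion $) for a mission class."""
    low, high = MISSION_COST[mission_class]
    return fraction * low, fraction * high


def displaced_range(overrun_class, displaced_class, fraction=OVERRUN_FRACTION):
    """Fewest and most displaced-class missions the overrun could have paid for.

    Assumption: fewest = smallest overrun / most expensive displaced mission,
    most = largest overrun / cheapest displaced mission.
    """
    overrun_low, overrun_high = overrun_range(overrun_class, fraction)
    cost_low, cost_high = MISSION_COST[displaced_class]
    return overrun_low / cost_high, overrun_high / cost_low


pairs = [
    ("Flagship", "New Frontiers"),
    ("Flagship", "Discovery"),
    ("Flagship", "Explorer"),
    ("New Frontiers", "Discovery"),
    ("New Frontiers", "Explorer"),
    ("Discovery", "Explorer"),
]
for big, small in pairs:
    fewest, most = displaced_range(big, small)
    print(f"30% overrun on {big}: equivalent to {fewest:.1f}-{most:.1f} {small} missions")
```

The ranges are wide, which is itself a reminder that the damage done by an overrun depends heavily on where in its class each mission falls.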

From this analysis, Dr. Hinners drew the following general conclusions:

- The most expensive missions pose the greatest risk of major cost overruns and cause the most pain: “Beware the Big Vision.”
- The main factor in cost risk for all mission classes is instrument development, which tends to be inadequately assessed in the approval process.
- Competitively selected missions have built-in mechanisms to help restrain cost increases (i.e., caps).
- NASA flight programs are self-insured in that they have no reserve other than delay, cancellation, or postponement of future missions.
- Cost models are not that bad, but one must build only that which is actually costed at the preliminary design review (PDR).

Dr. Hinners’s suggestions for survey cost management included these:

- Decadal survey plans should devote perhaps no more than 30 percent of their 10-year budget to a flagship mission(s).
- Compete the flagship missions and apply cost caps that are established after well-funded Phase A/B studies.
- Develop better capabilities for assessing the costs of new instruments and technology.
- Maintain a program reserve (~20 percent of mission cost) separate from the project manager’s own mission contingency budget.
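Two of these suggestions are numerical and can be checked mechanically against a candidate plan. The following Python sketch is one possible reading: the 30 percent flagship limit and the ~20 percent program reserve come from the suggestions above, while the `Mission` class, the `check_plan` function, the mission names, and every dollar figure in the example are hypothetical.

```python
from dataclasses import dataclass

FLAGSHIP_SHARE_LIMIT = 0.30  # "perhaps no more than 30 percent" of the 10-year budget
PROGRAM_RESERVE_RATE = 0.20  # "~20 percent of mission cost"


@dataclass
class Mission:
    name: str
    mission_class: str
    cost: float  # billions of dollars, excluding the project's own contingency


def check_plan(missions, decadal_budget):
    """Summarize a plan against the flagship limit and the program reserve."""
    total_cost = sum(m.cost for m in missions)
    flagship_cost = sum(m.cost for m in missions if m.mission_class == "Flagship")
    reserve = PROGRAM_RESERVE_RATE * total_cost
    return {
        "flagship_share": flagship_cost / decadal_budget,
        "flagship_within_limit": flagship_cost <= FLAGSHIP_SHARE_LIMIT * decadal_budget,
        "program_reserve": reserve,
        "fits_budget": total_cost + reserve <= decadal_budget,
    }


# Hypothetical plan and budget, for illustration only.
plan = [
    Mission("Flagship A", "Flagship", 3.5),
    Mission("New Frontiers B", "New Frontiers", 1.2),
    Mission("Discovery C", "Discovery", 0.6),
    Mission("Discovery D", "Discovery", 0.6),
    Mission("Explorer E", "Explorer", 0.2),
    Mission("Explorer F", "Explorer", 0.2),
    Mission("Explorer G", "Explorer", 0.2),
]
print(check_plan(plan, decadal_budget=12.0))
```

Holding the reserve at the program level, rather than inside each project, is what keeps it separate from the project manager’s contingency in this sketch.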

In the ensuing discussion participants asked if the ability to assess cost and technology readiness was better or worse today than in the past. The industry is smaller and the infrastructure is older, but the tools are better. However, today’s flight mission systems are much more heavily reliant on software than in the past, when a spacecraft was more likely to be dominated by hardware challenges. For this reason, cost estimates based on traditional spacecraft system parameters such as mass are not as accurate today. Some participants expressed doubts about the ability of survey committees to produce a good estimate of flagship mission costs so early in their conceptual design. Furthermore, principal investigators (PIs) can impose some control on a small mission but not on a flagship mission. The latter is just too challenging, and the technology is well beyond a group of university PIs. Participants added that the missions driven by NASA centers seem to be allowed to have cost growth, but PI missions are not. Finally, participants noted that developing a cost-capped mission with an inexperienced project manager is a very risky business.

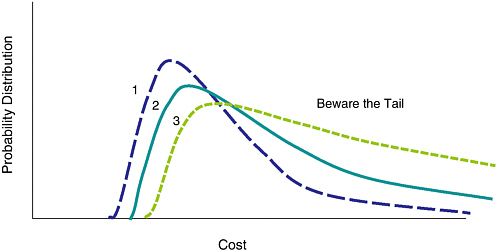

Bruce Marcus made a number of points about cost estimating and cost risk. He noted that a fundamental weakness with cost estimating is the subjectivity of judgments about complexity, technology maturity, and the like. Optimistic cost modeling is ever present when a program is being sold, and everyone is a player in this (scientists, vendors, agencies, Congress). Cost growth is closely related to development risk, increasing nonlinearly with the number of new technologies, and is very sensitive to schedule slips (especially the so-called “marching army” cost of keeping a mission team in place during a delay). Cost risk increases more rapidly than nominal cost with increasing technology development requirements (see Figure 3.1). He also suggested that using multiple-spacecraft platforms (versus larger multisensor platforms) can reduce overall mission cost risk, but generally at an increase in nominal costs (particularly due to launch costs).

Dr. Marcus then applied these observations to lessons for survey committees. He suggested that surveys should employ the same cost model and estimators for all missions so one can evaluate their “relative” costs, and then select a collection of small (<$300 million), medium ($300-$600 million), and large (>$900 million) missions from a single priority list that fit into the overall survey decadal budget.

FIGURE 3.1 Notional illustrations of the cost probability distributions for projects having different levels of requirements for technology development. A project that depends on few new technology developments (Curve 1) will have a lower most probable cost than projects having more technology development requirements (Curves 2 and 3), which will have both higher probable costs and a significantly higher likelihood of costing much more than the most probable cost value. The risk of dramatic cost growth is represented by the area under the tail of the probability distribution. Increasing technology development requirements significantly increases the area under the tail even though the estimated most probable cost increments may be modest. SOURCE: Workshop presentation by Bruce Marcus.
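The qualitative point of Figure 3.1 can be reproduced with a simple numerical sketch. The figure is notional and does not specify a distribution; the Python below assumes a lognormal cost distribution purely because it is right-skewed and has a closed-form mode, and the most probable costs and spread parameters assigned to the three curves are hypothetical. For each curve it computes the probability that the final cost exceeds 1.5 times the most probable cost.

```python
import math

# Hypothetical curves: (most probable cost in arbitrary units, spread sigma).
# More technology development is modeled as a modestly higher mode and a
# larger spread; these numbers are illustrative, not taken from the figure.
CURVES = {
    "Curve 1 (few new technologies)": (1.0, 0.2),
    "Curve 2": (1.1, 0.4),
    "Curve 3 (many new technologies)": (1.2, 0.6),
}


def mu_for_mode(mode, sigma):
    """Location parameter of a lognormal whose density peaks at `mode`.

    For a lognormal distribution, mode = exp(mu - sigma**2).
    """
    return math.log(mode) + sigma ** 2


def prob_exceeds(threshold, mode, sigma):
    """P(cost > threshold) for a lognormal with the given mode and sigma."""
    mu = mu_for_mode(mode, sigma)
    z = (math.log(threshold) - mu) / sigma
    return 0.5 * math.erfc(z / math.sqrt(2.0))  # upper tail of the normal


def tail_risk(mode, sigma, growth_factor=1.5):
    """Probability that cost exceeds growth_factor times the most probable cost."""
    return prob_exceeds(growth_factor * mode, mode, sigma)


for label, (mode, sigma) in CURVES.items():
    print(f"{label}: most probable cost {mode:.1f}, "
          f"P(cost > 1.5 x most probable) = {tail_risk(mode, sigma):.2f}")
```

Under these assumptions the most probable cost rises by only 20 percent from Curve 1 to Curve 3, while the tail probability grows by a much larger factor, which is the behavior the caption describes.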

He added that decadal surveys should prioritize missions based on science value, technology readiness, and benefits to society. He noted that technology readiness is very subjective and that what is deemed to be acceptable development risk depends on mission architecture (how much is at stake). Therefore, better coupling between advanced mission planners and programs like NASA’s New Millennium Program is needed to lower technology risk for missions. Finally, Dr. Marcus noted that because survey committee members are mostly academicians, they are qualified mainly to identify key science questions and scientific mission priorities. This means that survey committees also need to include expertise on cost modeling, technology development, and societal benefit.

Workshop participants noted that the Explorer and Discovery programs have had to solve their own cost growth problems, while flagship programs appear to have “a license to steal” from other programs to fix their problems. Surveys need to have guidelines or rules on how to deal with this. There were also questions about how to optimize investments based on bins of small, medium, and large missions.
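Dr. Marcus’s suggestion of drawing small, medium, and large missions from a single priority list until the decadal budget is exhausted can be sketched as a simple greedy pass. This is only one possible reading of the suggestion: the function names, mission names, costs, and budget are hypothetical, and the sketch does not address the harder question of how to balance investment across bins. The bin thresholds are the ones quoted in his remarks, which leave costs between $600 million and $900 million unassigned; the code flags such missions rather than guessing a bin.

```python
def cost_bin(cost_musd):
    """Assign a cost bin using the thresholds quoted in the text (million $)."""
    if cost_musd < 300:
        return "small"
    if cost_musd <= 600:
        return "medium"
    if cost_musd > 900:
        return "large"
    return "unbinned"  # the quoted thresholds do not cover $600-$900 million


def select_missions(priority_list, budget_musd):
    """Walk the priority list in order, keeping each mission that still fits.

    priority_list: (name, estimated cost in million $), highest priority first.
    Returns the selected missions with their bins and the unspent budget.
    """
    selected = []
    remaining = budget_musd
    for name, cost in priority_list:
        if cost <= remaining:
            selected.append((name, cost_bin(cost), cost))
            remaining -= cost
    return selected, remaining


# Hypothetical priority list, costed with one model so the estimates are comparable.
priorities = [
    ("Mission A", 1200),
    ("Mission B", 450),
    ("Mission C", 250),
    ("Mission D", 1000),
    ("Mission E", 150),
]
chosen, unspent = select_missions(priorities, budget_musd=2000)
for name, size, cost in chosen:
    print(f"{name}: {size}, ${cost} million")
print(f"Unspent: ${unspent} million")
```

Because the same model prices every mission, errors that affect all estimates alike matter less to the ordering than they would to any single absolute cost.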

George Paulikas was the final panel member for Session 2. He said that one never really knows which cost estimates are realistic until the time of a PDR and that it requires a lot of history and experience to get reasonable cost estimates (or to recognize if estimates are bad). He suggested that survey cost bins should be set at a very coarse level. He also suggested that the biggest problems are the following:

- Mission requirements creep,
- Excessive optimism about even modest technology development and underestimation of mission complexity and program management demands,
- Increased risk due to complexity of interagency or international collaboration,
- Software complexity in today’s modern missions,
- Ignoring past mistakes instead of learning from them, and
- Switching launch vehicles during a project.

There was much discussion about how cost models could be used in surveys. Most participants believe they might have limited utility or accuracy since most missions are far short of being ready for PDR at the time of a survey. That said, survey committees need to be educated consumers of cost models and need to have members who have cost modeling expertise. It was also suggested that survey committees should contract out at least two independent cost estimates. It was clear from the discussion that missions (particularly large ones) need to have the technology development well understood or characterized before a cost estimate can be reasonable. It was suggested that a flagship mission should not go forward if its technology is not fully understood and should be recommended only as a candidate for technology development until it is better understood. Participants also noted that cost estimates usually do not take into account other key factors (such as the cost of the “marching army”) if a problem introduces a significant delay in the development schedule. That situation demands that a large reserve be maintained for such problems, and that reserve should be off-limits to other program needs (e.g., technology development). True reserves should be a critical component of any mission budget and should probably be ~20 percent of the total mission cost estimate.
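The interaction between a schedule slip and a ~20 percent reserve can be illustrated with a short calculation. In the Python sketch below, the reserve rate comes from the discussion above; the function names, the mission cost, the monthly cost of keeping the team in place, and the delay lengths are hypothetical values chosen only to show the arithmetic.

```python
RESERVE_RATE = 0.20  # "~20 percent of the total mission cost estimate"


def marching_army_cost(monthly_team_cost, delay_months):
    """Cost of keeping the mission team in place for the length of a delay."""
    return monthly_team_cost * delay_months


def reserve_after_delay(mission_cost, monthly_team_cost, delay_months,
                        reserve_rate=RESERVE_RATE):
    """Reserve left after paying the marching-army cost (negative = shortfall)."""
    reserve = reserve_rate * mission_cost
    return reserve - marching_army_cost(monthly_team_cost, delay_months)


# Hypothetical mission: $600 million estimate, $8 million per month for the team.
for months in (6, 12, 18):
    left = reserve_after_delay(600.0, 8.0, months)
    print(f"{months}-month slip: reserve remaining ${left:.0f} million")
```

With these illustrative numbers a one-year slip consumes most of the reserve and an 18-month slip exceeds it, which is why participants wanted the reserve kept off-limits to other program needs.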

The discussion turned to whether there were also lessons to be learned with respect to how NSF plans and manages large, ground-based projects. Some participants thought that there was some commonality with space missions, but others saw the terrestrial construction industry as quite different from the aerospace industry. Furthermore, the NSF major research equipment and facility construction budget represents only about 5 percent of the total NSF portfolio, and final cost estimates for projects take a long time. The National Science Board signs off on these large projects after an extended definition phase, and the board approves projects with fixed budgets. NSF facility construction projects are also separate from NSF division research budgets. Therefore, while development costs do not affect the research division, the long-term operational costs must be accounted for within the research budget.

There was considerable discussion of the cost risks associated with international collaboration. NASA representatives pointed out that NASA has explored these risks recently and is moving to avoid becoming too dependent on international contributions. Participants cited the contrasting examples of the European Space Agency’s Huygens probe on the Cassini mission and the German propulsion system on the Galileo mission. The Huygens atmospheric entry probe would not have happened without international cooperation, but the probe was separate from the rest of the Cassini mission, and the core mission was not dependent on it. On the other hand, because the German propulsion system was highly integrated into the Galileo spacecraft, it had an impact on the overall mission cost. Others mentioned that outsourcing technology development to other countries diminishes the robustness of the technology coming from our nation’s aerospace community. Some participants, however, reminded their colleagues that one cannot ignore the scientific benefits that can come from international cooperation; such cooperation is not solely a matter of cost.

RECURRING THEMES

Many participants argued that the cost estimates put forward by the most recent series of surveys have been surpassed by revised costs that are as much as four times greater. Some observers noted that these increases have extremely disruptive impacts on the overall survey recommendations and must be addressed. Participants recognized that obtaining an accurate cost estimate is not an easy task because most initiatives recommended by a survey report are in very preliminary stages of design as the survey report is being prepared.

Participants generally viewed the following as important for future survey committees to consider:

- Survey committee members having expertise in cost and technology readiness.
- Use of uniform, independent costing approaches so that costs can be cross-compared.
- Recognition that early cost estimates are better for comparing mission costs than for providing accuracy (since most missions are far short of being ready for a PDR).
- Use of cost bins or ranges rather than specific costs.
- Survey recommendations that provide guidance on risk mitigation via investment in mission-enabling technologies and by moving forward on less complex missions.
- More time invested in defining mission requirements.
- Appreciation of the need to have the technology well understood or characterized before cost estimates for the initiatives (particularly large ones) can be reasonable. It was suggested that a flagship mission should not go forward if its technology development is not fully understood and should be recommended only as a candidate for technology development until it is better understood.
- Establishment of metrics for creating and maintaining a balanced set of large, medium, small, and core research and technology programs in a survey. A flagship program with cost increases can have a substantial impact on a survey, so agencies should seek advice on how to maintain a survey’s programmatic balance if the flagship project’s cost exceeds a certain threshold.
- Consideration of incorporating competitively selected projects as much as possible.
- Recognition that instrument development can trigger cost risk and that a program to reduce risk on instruments is important.
- Prioritization criteria for new initiatives that look at science value, technology readiness, and benefits to society.
- Recognition that interagency and international collaboration can substantially increase risk.

Participants also said that funding agencies rather than survey committees should consider the following things:

- Continued development of parametric cost and schedule models, including models taking into account that, today, software has an impact on cost and schedule that is greater than that of spacecraft mass.
- Simplifying and optimizing management processes on small and medium missions to cut cost and development time. Designing smaller missions under the same management constraints as large missions is viewed as inappropriate and wasteful.
- Maintaining a reserve (equivalent to ~20 percent of the mission cost) separate from the mission budget.
- Holding NASA center missions to the same cost growth criteria as PI-led missions.