Summary

Seven years ago, the Institute of Medicine (IOM) Committee on the Quality of Health Care in America released its first report, To Err Is Human, finding that an estimated 44,000 to 98,000 Americans may die annually due to medical errors. If mortality tables routinely included medical errors as a formal cause of death, they would rank well within the ten leading killers (IOM 2000). Two years later, the Committee released its final report, Crossing the Quality Chasm, underscoring the need for redesigning health care to address the key dimensions on which improvement was most needed: safety, effectiveness, patient centeredness, timeliness, efficiency, and equity (IOM 2001). Although these reports sounded appropriate alerts and have triggered important discussion, as well as a certain level of action, the performance of the healthcare system remains far short of where it should be.

Evidence on what is effective, and under what circumstances, is often lacking, poorly communicated to decision makers, or inadequately applied, and despite significant expenditures on health care for Americans, these investments have not translated to better health. Studies of current practice patterns have consistently shown failures to deliver recommended services, wide geographic variation in the intensity of services without demonstrated advantage (and some degree of risk at the more intensive levels), and waste levels that may approach a third or more of the nation’s $2 trillion in healthcare expenditures (Fisher et al. 2003; McGlynn 2003). In performance on the key vital statistics, the United States ranks below at least two dozen other nations, all of which spend far less for health care.

The planning committee’s role was limited to planning the workshop, and the workshop summary has been prepared by Roundtable staff as a factual summary of what occurred at the workshop.

In part, these problems are related to fragmentation of the delivery system, misplaced patient demand, and responsiveness to legal and economic incentives unrelated to health outcomes. However, to a growing extent, they relate to a structural inability of evidence to keep pace with the need for better information to guide clinical decision making. Also, if current approaches are inadequate, future developments are likely to accentuate the problem. These issues take on added urgency in view of the rapidly shifting landscape of available interventions and scientific knowledge, including the increasing complexity of disease management, the development of new medical technologies, the promise of regenerative medicine, and the growing utility of genomics and proteomics in tailoring disease detection and treatment to each individual. Yet, currently, for example, the share of health expenses devoted to determining what works best is about one-tenth of 1 percent (AcademyHealth September 2005; Moses et al. 2005).

In the face of this changing terrain, the IOM Roundtable on Evidence-Based Medicine (“the Roundtable”) has been convened to marshal senior national leadership from key sectors to explore a wholly different approach to the development and application of evidence for health care. Evidence-based medicine (EBM) emerged in the twentieth century as a methodology for improving care by emphasizing the integration of individual clinical expertise with the best available external evidence (Sackett et al. 1996) and serves as a necessary and valuable foundation for future progress. EBM has resulted in many advances in health care by highlighting the importance of a rigorous scientific base for practice and the important role of physician judgment in delivering individual patient care. However, the increased complexity of health care requires a deepened commitment by all stakeholders to develop a healthcare system engaged in producing the kinds of evidence needed at the point of care for the treatment of individual patients.

Many have asserted that beyond determinations of basic efficacy and safety, the dependence on individually designed, serially constructed, prospective studies to establish relative effectiveness and individual variation in efficacy and safety is simply impractical for most interventions (Rosser 1999; Wilson et al. 2000; Kupersmith et al. 2005; Devereaux et al. 2005; Tunis 2005; McCulloch et al. 2002). Information technology will provide valuable tools to confront these issues by expanding the capability to collect and manage data, but more is needed. A reevaluation of how health care is structured to develop and apply evidence (from health professions training to infrastructure development, patient engagement, payments, and measurement) will be necessary to orient and direct these tools toward the creation of a sustainable system that gets the right care to people when they need it and then captures the results for improvement. The nation needs a healthcare system that learns.

About the Workshop

To explore the central issues in bringing about the changes needed, in July 2006 the IOM Roundtable convened a workshop entitled “The Learning Healthcare System.” This workshop was the first in a series that will focus on various issues important for improving the development and application of evidence in healthcare decision making. During this initial workshop, a broad range of topics and perspectives was considered. The aim was to identify and discuss those issues most central to drawing research closer to clinical practice by building knowledge development and application into each stage of the healthcare delivery process, in a fashion that will not only improve today’s care but improve the prospects of addressing the growing demands in the future. Day 1 was devoted to an overview of the methodologic and institutional issues. Day 2 focused on examples of some approaches by different organizations to foster a stronger learning environment. The workshop agenda can be found in Appendix A, speaker biosketches in Appendix B, and a listing of workshop participants in Appendix C. Synopses follow of the key points from each of the sessions in the two-day workshop.

THE LEARNING HEALTHCARE SYSTEM WORKSHOP

Common Themes

In the course of the workshop discussions, several common themes and issues were identified by participants. A number of current challenges to improving health care were raised, as were a number of uncertainties, and a number of compelling needs for change.

Among challenges heard from participants were the following:

- Missed opportunities, preventable illness, and injury are too often features in health care.
- Inefficiency and waste are too familiar characteristics in much of health care.
- Deficiencies in the quantity, quality, and application of evidence are important contributors to these problems, and improvement requires a stronger system-wide focus on the evidence.
- These challenges are likely to be accentuated by the increasing complexity of intervention options and increasing insights into patient heterogeneity.
- The prevailing approach to generating clinical evidence is inadequate today and may be irrelevant tomorrow, given the pace and complexity of change. The current dependence on the randomized controlled clinical trial (RCT), as useful as it is under the right circumstances, takes too much time, is too expensive, and is fraught with questions of generalizability.
- The current approaches to interpreting the evidence and producing guidelines and recommendations often yield inconsistencies and confusion.
- Promising developments in information technology offer prospects for improvement that will be necessary to deploy, but not sufficient to effect, the broad change needed.

Among the uncertainties participants underscored were some key questions:

- Should we continue to call the RCT the “gold standard”? Although clearly useful and necessary in some circumstances, does this designation overpromise?
- What do we need to do to better characterize the range of alternatives to RCTs and the applications and implications for each?
- What constitutes evidence, and how does it vary by circumstance?
- How much of evidence development and evidence application will ultimately fall outside of even a fully interoperable and universally adopted electronic health record (EHR)? What are the boundaries of a technical approach to improving care?
- What is the best strategy to get to the right standards and interoperability for a clinical record system that can be a fully functioning part of evidence development and application?
- How much can some of the problems of post-marketing surveillance be obviated by the emergence of linked clinical information systems that might allow information about safety and effectiveness to emerge naturally in the course of care?

Among the most pressing needs for change (Box S-1) identified by participants were those related to:

BOX S-1 Needs for the Learning Healthcare System

- Adaptation to the pace of change: continuous learning and a much more dynamic approach to evidence development and application, taking full advantage of developing information technology to match the rate at which new interventions are developed and new insights emerge about individual variation in response to those interventions;
- Stronger synchrony of efforts: better consistency and coordination of efforts to generate, assess, and advise on the results of new knowledge in a way that does not produce conflict or confusion;
- Culture of shared responsibility: to enable the evolution of the learning environment as a common cause of patients, providers, and researchers and better engage all in improved communication about the importance of the nature of evidence and its evolution;
- New clinical research paradigm: drawing clinical research closer to the experience of clinical practice, including the development of new study methodologies adapted to the practice environment and a better understanding of when RCTs are most practical and desirable;
- Clinical decision support systems: to accommodate the reality that although professional judgment will always be vital to shaping care, the amount of information required for any given decision is moving beyond unassisted human capacity;
- Universal electronic health records: comprehensive deployment and effective application of the full capabilities available in EHRs as an essential prerequisite for the evolution of the learning healthcare system;
- Tools for database linkage, mining, and use: advancing the potential for structured, large databases as new sources of evidence, including issues in fostering interoperable platforms and in developing new means of ongoing searching of those databases for patterns and clinical insights;
- Notion of clinical data as a public good: advancement of the notion of the use of clinical data as a central common resource for advancing knowledge and evidence for effective care, including directly addressing current challenges related to the treatment of data as a proprietary good and interpretations of the Health Insurance Portability and Accountability Act (HIPAA) and other patient privacy issues that currently present barriers to knowledge development;
- Incentives aligned for practice-based evidence: encouraging the development and use of evidence by drawing research and practice closer together, and developing the patient records and interoperable platforms necessary to foster more rapid learning and improve care;
- Public engagement: improved communication about the nature of evidence and its development, and the active roles of both patients and healthcare professionals in evidence development and dissemination;
- Trusted scientific broker: an agent or entity with the public and scientific confidence to provide guidance, shape priorities, and foster the shift in the clinical research paradigm; and
- Leadership: to marshal the vision, strategy, and actions necessary to create a learning healthcare system.

PRESENTATION SUMMARIES

Hints of a Different Way—Case Studies in Practice-Based Evidence

Devising innovative methods to generate and apply evidence for healthcare decision making is central to improving the effectiveness of medical care. This workshop took the analysis further by asking how we might create a healthcare system that “learns”: one in which knowledge generation is so embedded into the core of the practice of medicine that it is a natural outgrowth and product of the healthcare delivery process and leads to continual improvement in care. This has been termed by some “practice-based evidence” (Green and Geiger 2006). By emphasizing effectiveness research over efficacy research (see Table S-1), practice-based evidence focuses on the needs of decision makers and on narrowing the research-practice divide. Research questions identified are relevant to clinical practice, and effectiveness research is conducted in typical clinical practice environments with unselected populations to increase generalizability (Clancy 2006 [July 20-21]).

TABLE S-1 Characteristics of Efficacy and Effectiveness Research

| Efficacy | Effectiveness |
|---|---|
| Clinical trials: idealized setting | Clinical practice: everyday setting |
| Treatment vs. placebo | Multiple treatment choices, comparisons |
| Patients with a single diagnosis | Patients with multiple conditions (often excluded from efficacy trials) |
| Exclusions of user groups (e.g., elderly) | Use is generally unlimited |
| Short-term effects measured through surrogate endpoints, biomarkers | Longer-term outcomes measured through clinical improvement, quality of life, disability, death |

SOURCE: Clancy 2006 (July 20-21).

The first panel session of the workshop was devoted to several examples of efforts that illustrate ways to use the healthcare experience as a practical means of both generating and applying evidence for health care. Presentations highlighted approaches that take advantage of current resources through innovative incentives, study methodologies, and study design and demonstrated their impact on decision making.

Coverage with Evidence Development

Provision of Medicare payments for carefully selected interventions in specified groups, in return for their participation in data collection, is beginning to generate information on effectiveness. Peter B. Bach of the Centers for Medicare and Medicaid Services (CMS) discussed Coverage with Evidence Development (CED), a form of National Coverage Decision (NCD) implemented by CMS as an opportunity to develop needed evidence on effectiveness. By conditioning coverage on additional evidence development, CED helps clarify policies and can therefore be seen as a regulatory approach to building a learning healthcare system. Two case studies, one on lung volume reduction surgery (LVRS) for emphysema and another on PET (positron emission tomography) scans for staging cancers, illustrate this approach. To clarify issues of risk and benefit associated with LVRS and to define characteristics of patients most likely to benefit, the National Emphysema Treatment Trial (NETT) was funded by CMS and implemented as a collaborative effort of CMS, the National Institutes of Health (NIH), and the Agency for Healthcare Research and Quality (AHRQ). Trial results enabled CMS to cover the procedure for groups with demonstrated benefit and clarified risks in a manner helpful to patient decisions. From January 2004 to September 2005, only 458 Medicare patients filed a total of $10.5 million in LVRS claims, far lower than estimated. In the case of PET scanning to help diagnose cancer and determine its stage, a registry has been established for recording experience on certain key dimensions, ultimately allowing payers, physicians, researchers, and other stakeholders to construct a follow-on system to evaluate long-term safety and other aspects of real-world effectiveness. This work is in progress.

Use of Large System Databases

With the adoption and use of the full capabilities of EHRs, hypothesis-driven research utilizing existing clinical and administrative databases in large healthcare systems can answer a variety of questions not answered when drugs, devices, and techniques come to market (Trontell 2004). Jed Weissberg of the Permanente Federation described a nested, case-control study on the cardiovascular effects of the COX-2 inhibitor rofecoxib (Vioxx) within Kaiser Permanente’s patient population, identifying increased risk of acute myocardial infarction and sudden cardiac death (Graham 2005). Kaiser’s prescription and dispensing data, as well as longitudinal patient data (demographics, lab, pathology, radiology, diagnosis, and procedures), were essential to conduct the study and contributed to the manufacturer’s decision to withdraw the drug from the marketplace. The case illustrates the potential for well-designed EHRs to generate data as a customary by-product of documented care and to facilitate the detection of rare events as well as provide insights into factors that drive variation. Weissberg also concluded that perhaps the most important requirement for reaping the benefits is that data collection be embedded within a healthcare system that can serve as a “prepared mind,” a culture that seeks learning.

Quasi-Experimental Designs

Randomized controlled trials are often referred to as the “gold standard” in trial design, while other trial designs are noted as “alternatives” to RCTs. Stephen Soumerai of Harvard Pilgrim Health Care argued that this bifurcation is counterproductive. All trial designs have widely differing ranges of applicability and validity, depending on circumstances. Although RCTs, if carefully developed, may produce the most reliable estimates of the outcomes of health services and policies, strong quasi-experimental designs (e.g., interrupted time series) are rigorous and feasible alternative methods, especially for evaluating the effects of sudden changes in health policies occurring in large populations. Because these are natural experiments that use existing data and can be conducted in less time and for less expense than many RCTs, they have great potential for contributing to the evidence base. For example, using interrupted time series to examine the impact of a statewide Medicaid cap on nonessential drugs in New Hampshire revealed that prescriptions filled by Medicaid patients dropped sharply for both essential and nonessential drugs, while nursing home admissions among chronically ill elderly increased (Soumerai et al. 1987). Similar study designs have been used to assess the impact of limitations of drug coverage on the treatment of schizophrenia and the need for acute mental health services (Soumerai et al. 1994), as well as the relationship between cost sharing changes and serious adverse events with associated emergency visits among the adult welfare population (Tamblyn 2001). Soumerai concluded that time series data allow for strong quasi-experimental designs that can address many threats to validity, and because such analyses often produce visible effects, they convey an intuitive understanding of the effects of policy decisions (Soumerai 2006 [July 20-21]).
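An interrupted time series is often analyzed with segmented regression, which estimates the pre-policy level and trend and then the change in level and trend at the policy date. The following is a minimal sketch of that idea, not the analysis used in the cited studies. The function names, the 24-month synthetic series, and the change point at month 12 are all hypothetical and chosen only for illustration.

```python
def solve_linear_system(a, b):
    """Solve a @ x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [b[i]] for i, row in enumerate(a)]  # augmented matrix
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for i in range(n - 1, -1, -1):  # back substitution
        s = sum(m[i][j] * x[j] for j in range(i + 1, n))
        x[i] = (m[i][n] - s) / m[i][i]
    return x


def fit_interrupted_time_series(y, change_point):
    """Ordinary least squares fit of
    y_t = b0 + b1*t + b2*post_t + b3*(t - change_point)*post_t,
    where post_t is 1 from the change point onward and 0 before it."""
    rows = []
    for t in range(len(y)):
        post = 1.0 if t >= change_point else 0.0
        rows.append([1.0, float(t), post, post * (t - change_point)])
    k = 4
    # Normal equations: (X'X) beta = X'y
    xtx = [[sum(r[i] * r[j] for r in rows) for j in range(k)] for i in range(k)]
    xty = [sum(r[i] * yi for r, yi in zip(rows, y)) for i in range(k)]
    b0, b1, b2, b3 = solve_linear_system(xtx, xty)
    return {
        "baseline_level": b0,
        "baseline_trend": b1,
        "level_change": b2,   # immediate shift at the policy date
        "trend_change": b3,   # change in slope after the policy date
    }


# Hypothetical, noise-free monthly series: a policy at month 12 drops the
# level by 30 units and bends the trend downward by 1 unit per month.
series = [100 + 0.5 * t + (-30 - 1.0 * (t - 12) if t >= 12 else 0)
          for t in range(24)]
fit = fit_interrupted_time_series(series, change_point=12)
```

In real data the series would be noisy and often autocorrelated, so a production analysis would also need standard errors and a check for serial correlation, both of which this sketch omits.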

Practical Clinical Trials

Developing valid and useful evidence for decision making requires several steps, including identifying the right questions to ask; selecting the most important questions for study; choosing study designs that are adequate to answer the questions; creating or partnering with organizations that are equipped to implement the studies; and finding sufficient resources to pay for the studies. The successful navigation of these steps is what Sean Tunis of the Health Technology Center calls “decision-based evidence making.” Tunis also discussed pragmatic or practical trials as particularly useful study designs for informing choices between feasible alternatives or two different treatment options. Key features of a practical trial include meaningful comparison groups; broad eligibility criteria with maximum opportunity for generalizability; multiple outcomes including functional status and utilization; conduct in a real-world setting; and minimal intrusion on regular care. A CMS study, PET scan for suspected dementia, was cited as an example of how an appropriately designed practical clinical trial (PCT) could help address a difficult clinical question such as the impact of diagnosis on patient management and outcomes. However, the trial remains unfunded, raising issues about limitations of current organizational capacity and infrastructure to support the needed expansion of such comparative effectiveness research.

Computerized Protocols to Assist Clinical Research

The development of evidence for clinical decision making can also be strengthened by increasing the scientific rigor of evidence generation. Alan Morris noted the lack of tools to drive consistency in clinical trial methodology and discussed the importance of identifying tools to assist in the design and implementation of clinical research. “Adequately explicit methods,” including computer protocols that elicit the same decision from different clinicians when they are faced with the same information, can be used to increase the ability to generate highly reproducible clinical evidence across a variety of research settings and clinical expertise. Pilot studies of computerized protocols have led to reproducible results in different hospitals in different countries. As an example, Morris noted that the use of a computerized protocol (eProtocol-insulin) to direct intravenous (IV) insulin therapy in nearly 2,000 patients led to improved control of blood glucose levels. Morris proposed that in addition to increasing the efficiency of large-scale complex clinical studies, the use of adequately explicit computerized protocols for the translation of research methods into clinical practice could introduce a new way of developing and distributing knowledge.

The Evolving Evidence Base—Methodologic and Policy Challenges

An essential component of the learning healthcare system is the capacity for constant improvement: to take advantage of new tools and methods and to improve approaches to gathering and evaluating evidence. As technology advances and the ability to accumulate large quantities of clinical data increases, new opportunities will emerge to develop evidence on the effectiveness of interventions, including on risks, on the effects of complex patterns of comorbidities, on the effect of genetic variation, and on the improved evaluation of rapidly changing interventions such as devices and procedures. A significant challenge will be piecing together evidence from the full scope of this information to determine what is best for individual patients.

Although considered the standard benchmark, RCTs are of limited use in informing some important aspects of decision making (see papers by Soumerai, Tunis, and Greenfield in Chapters 1 and 2). In part, this is because in clinical research, we tend to think of diseases and conditions in single, linear terms. However, for people with multiple chronic illnesses and those that fall outside standard RCT selection criteria, the evidence base is quite weak (Greenfield and Kravitz 2006 [July 20-21]). In addition, the time and expense of an RCT may be prohibitive for the circumstance. A new clinical research paradigm that takes better advantage of data generated in the course of healthcare delivery would speed and improve the development of evidence for real-world decision making (Califf 2006 [July 20-21]; Soumerai 2006 [July 20-21]). New methodologies such as mathematical modeling, Bayesian statistics, and decision modeling will also expand our capacity to assess interventions.

Finally, engaging the policy issues necessary to expand post-market surveillance, including the use of registries and mediating an appropriate balance between patient privacy and access to clinical data, will make new streams of critical data available for research. Linking data systems and utilizing clinical information systems for expanded post-marketing surveillance have the potential to accelerate the generation of evidence regarding risk and effectiveness of therapies. Furthermore, this could be a powerful source of innovation and refinement of drug development, thereby increasing the value of health care by tailoring therapies and treatments to individual patients and subgroups of risk and benefit (Weisman 2006 [July 20-21]).

Evolving Methods: Alternatives to Large RCTs

All interventions carry a balance of potential benefit and potential risk, and many trial methodologies can reveal important information on these dimensions when the conduct of a large RCT is not feasible. Robert Califf from the Duke Clinical Research Institute discussed some issues associated with RCTs and the trial methodologies that will increasingly be used to supplement the evidence base. Large RCTs are almost impossible to conduct, and Califf supported use of the term practical clinical trial for those in which the size must be large enough to answer the question posed in terms of health outcomes, that is, whether patients live longer or feel better. A well-designed PCT has many characteristics that are frequently missing from current RCT design and is the first alternative to a “classical” RCT. Questions should be framed by those who use the information, and the methodology of design should include decision makers. PCTs, however, are also not feasible for a good portion of the decisions being made every day by administrators and clinicians. To answer some of these questions, nonrandomized analyses are needed. Califf reviewed four methodologies: (1) the cluster randomized trial, which randomizes on a practice level; (2) observational treatment comparisons, for which confounding from multiple sources is an important consideration (but should be aided by the development of National Electronic Clinical Trials and Research (NECTAR), the planned NIH network that will connect practices with interoperable data systems); (3) the interrupted time series, especially for natural experiments such as policy changes; and (4) the use of instrumental variables, or variables unrelated to biology, to produce a contrast in treatment that can be characterized. Califf indicated that such alternative methodologies have a role to play in the development of evidence, but for proper use, we also need to cultivate the expertise that can guide the use of these methods.

Evolving Methods: Evaluating Interventions in a Rapid State of Flux

As the pace of innovation accelerates, methodologic issues will increasingly hamper the straightforward use of clinical data to assess safety and effectiveness. This is particularly relevant to the iterative development process for new medical device interventions. Evaluation of interventions in a rapid state of flux requires new methods. Telba Irony of the Center for Devices and Radiological Health (CDRH) at the Food and Drug Administration (FDA) discussed some new statistical methodologies used at FDA, including Bayesian analysis, adaptive trial design, and formal decision analysis to speed approaches. Because the Bayesian approach allows the use of prior information and the performance of interim analyses, this method is particularly useful to evaluate devices, with the possibility of smaller and shorter trials and increased information for decision making. Formal decision analysis is a mathematical tool that has the potential to enhance the decision-making process by better accounting for the magnitude of advantage compared to the risks of an intervention (see Irony, Chapter 2).
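To make the role of prior information and interim analyses concrete, the sketch below uses a simple Beta-binomial model: a Beta prior on a device's success rate is updated after each interim look, and the trial stops early once the posterior probability that the rate exceeds a target is high enough. This is an illustrative assumption, not a description of FDA's methods. The function names, the 0.95 stopping rule, and all counts are hypothetical.

```python
import math


def beta_pdf(x, a, b):
    """Density of a Beta(a, b) distribution at 0 < x < 1."""
    log_norm = math.lgamma(a + b) - math.lgamma(a) - math.lgamma(b)
    return math.exp(log_norm + (a - 1) * math.log(x) + (b - 1) * math.log(1 - x))


def prob_rate_exceeds(threshold, a, b, steps=20000):
    """P(success rate > threshold) under Beta(a, b), by midpoint-rule integration."""
    width = (1.0 - threshold) / steps
    total = sum(beta_pdf(threshold + (i + 0.5) * width, a, b) for i in range(steps))
    return total * width


def update(a, b, successes, failures):
    """Conjugate update: a Beta prior plus binomial data gives a Beta posterior."""
    return a + successes, b + failures


def run_interim_looks(prior, looks, threshold, stop_at=0.95):
    """Update the posterior after each interim look and stop early once
    P(rate > threshold) reaches stop_at. Returns (looks used, posterior, probability)."""
    a, b = prior
    used = 0
    p = prob_rate_exceeds(threshold, a, b)
    for successes, failures in looks:
        a, b = update(a, b, successes, failures)
        used += 1
        p = prob_rate_exceeds(threshold, a, b)
        if p >= stop_at:
            break
    return used, (a, b), p


# Hypothetical example: a prior equivalent to 8 successes and 2 failures from an
# earlier version of a device, followed by up to three interim looks of
# (successes, failures) in the new trial, with a target success rate of 0.70.
looks_used, posterior, probability = run_interim_looks(
    prior=(8, 2), looks=[(9, 1), (9, 1), (8, 2)], threshold=0.70
)
```

An informative prior lets the posterior reach the stopping threshold with fewer new patients than a flat prior would, which is the sense in which such designs can yield smaller and shorter trials. How much weight prior data should receive is a judgment that this sketch does not address.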

Evolving Methods: Mathematical Models to Help Fill the Gaps in Evidence

Ideally, every important question could be answered with a clinical trial or other equally valid source of empirical observations. Because this is not feasible, an alternative approach is to use mathematical models, which have proven themselves valuable for assessing, as examples, computed tomography (CT) scans and magnetic resonance imaging (MRI), radiation therapy, and EHRs. Working through Kaiser Permanente, David M. Eddy developed a modeling system, Archimedes, that has demonstrated the promise of such systems for developing evidence for clinical decision making. Eddy notes that models will never be able to completely replace clinical trials, which as observations of real events are a fundamental anchor to reality. One step removed, models cannot exist without empirical observations. Thus, if feasible, the preferred approach is to answer a question with a clinical trial. However, in initial work on approaches to diabetes management, the Archimedes model has been validated against trial data with a very close match to the actual results (Eddy and Schlessinger 2003). Eddy maintains that in the future, the quality of models will improve, and as they do, with better data from EHRs, mathematical models can help fill more and more of the gaps in the evidence base for clinical medicine.

Heterogeneity of Treatment Effects: Subgroup Analysis

Heterogeneity of treatment effects (HTE) describes the variation in results from the same treatment in different patients. Sheldon Greenfield notes that HTE, the emerging complexity of the medical system, and the nature of health problems have contributed to the decreasing utility of RCTs. Greenfield presented three evolving phenomena that make RCTs increasingly inadequate for the development of guidelines, for payment, and for creating quality-of-care measures. First, with an aging population, patients now eligible for trials have a broader spectrum of illness severity than previously. Second, due to the changing nature of chronic disease along with increased patient longevity, more patients now suffer from multiple comorbidities. These patients are frequently excluded from clinical trials. Both of these phenomena make the results from RCTs useful to an increasingly small percentage of patients. Third, powerful new genetic and phenotypic markers that can predict patients’ responsiveness to therapy and vulnerability to adverse effects of treatment are now being discovered. In clinical trials, these markers have the potential for identifying patients’ potential for responsiveness to the treatment to be investigated. The current research paradigm underlying evidence-based medicine, guideline development, and quality assessment is therefore fundamentally limited (Greenfield and Kravitz 2006 [July 20-21]). Greenfield notes that to account for HTE, trial designs must include multivariate pretrial risk stratification based on observational studies, and for patients not eligible for trials (e.g., elderly patients with multiple comorbidities), observational studies will be needed.

Heterogeneity of Treatment Effects: Prospects for Pharmacogenetics

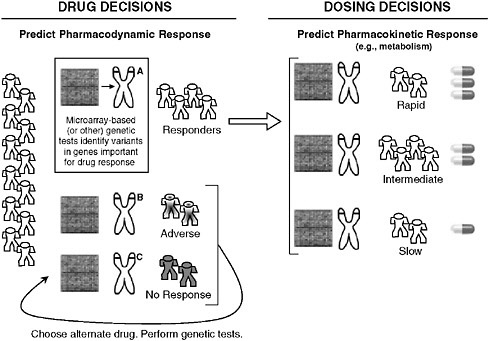

Recent advances in genomics have focused attention on its application to understanding common diseases and identifying new directions for drug or intervention development. David Goldstein of the Duke Institute for Genome Sciences and Policy discussed the potential role of pharmacogenetics in illuminating heterogeneity in responses to treatment and defining subgroups for appropriate care. While pharmacogenetics has previously focused on describing variations in a handful of proteins and genes, it is now possible to assess entire pathways that might be relevant to disease or to drug responses. The clinical relevance of pharmacogenetics will be in the identification of genetic predictors of a patient’s response to treatment with direct diagnostic utility (Need 2005), and the resulting expansion in factors to consider based on an individual’s response to treatment (see Figure S-1) could be substantial. The CATIE trial (Clinical Antipsychotic Trials of Intervention Effectiveness), comparing different antipsychotics, is illustrative.

While no drug was superior with respect to discontinuation of treatment, certain drugs were worse for certain patients in causing adverse reactions, illustrating the clear potential if genetic markers for possible adverse reactions could be used as a diagnostic tool. In addition to helping to specify disease subgroups and treatment effects, pharmacogenetics could benefit drug development. If predictors of adverse events could prevent the exposure of genetically vulnerable patients and preserve even a single drug, the costs of any large-scale research effort in pharmacogenetics could be fully recovered.

FIGURE S-1 Possible implications of pharmacogenetics on clinical decision making. The appropriate drug for an individual could be determined by microarray-based (or other) genetic tests that reveal variants in genes that affect how a drug works (pharmacodynamics) or how the body processes a drug (pharmacokinetics), such as absorption, distribution, metabolism, and excretion. Note that individual metabolic response is commonly more complicated than the simplified case presented here for conceptual clarity.

Broader Post-Marketing Surveillance

Although often thought of as a mechanism to detect rare adverse treatment effects, post-marketing surveillance also has enormous potential for the development of real-world data on the long-term value of new, innovative therapies. Harlan Weisman of Johnson & Johnson noted that the limited generalizability of the RCTs required for product approval means that post-marketing surveillance is the major opportunity to reveal the true value of healthcare innovations for the general population. Electronic health records, embedded as part of a learning healthcare system, would enable the development of such evidence on treatment outcomes and the effects of increasingly complex health states, comorbidities, and multiple indications. These data could also be used toward the conduct of comparative analysis. Weisman also discussed how the landscape of information needed changes rapidly and continuously, and called for the development of transparent methods and guidelines to gather, analyze, and integrate evidence, as well as consideration of how this new form of clinical data will be integrated into policies and treatment paradigms. To ensure that the goals of a learning healthcare system are achieved without jeopardizing patient benefit or medical innovation, Weisman suggested the importance of a road map establishing a common framework for post-marketing surveillance, to include initial evidence evaluation, appropriate and timely reevaluations, and application. Where possible, post-marketing data requirements of different agencies or authorities should be harmonized to reduce costs of collection and unnecessary duplication. He suggested multiple uses of common datasets as a means to accelerate the application of innovation.

Adjusting Evidence Generation to the Scale of the Effects

With new technologies introduced fast on the heels of effective older technologies, the demand for high-quality, timely comparative effectiveness studies is exploding (Lubitz 2005; Bodenheimer 2005). Well-done comparative effectiveness studies identify which technology is more effective or safer, or for which subpopulation and/or clinical situation a therapy is superior. Steven Teutsch and Marc Berger of Merck & Co. advanced their perspective on the importance of developing strategies for generating comparative effectiveness data that improve the use of healthcare resources by considering the magnitude of potential benefits and/or risks related to the clinical intervention. Even when available, most comparative effectiveness studies do not directly provide estimates of absolute benefit or harms applicable to all relevant populations. Because of the impracticality and lack of timeliness of head-to-head trials for more than a few therapeutic alternatives, it is important that other strategies for securing this information be developed. Observational study designs and models can provide perspective on these issues, although the value of the information gleaned must be balanced against potential threats to validity and uncertainty around estimates of benefit. General consensus on the standards of evidence to apply to different clinical recommendations will be important to moving forward. Development of a taxonomy of clinical decision making would help to ensure transparency in decision making.

Linking Patient Records: Protecting Privacy, Promoting Care

Critical medical information is often nearly impossible to access both in emergencies and during routine medical encounters, leading to lost time, increased expenses, adverse outcomes, and medical errors. Having health information available electronically is now a reality and offers the potential for lifesaving measures not only through access to critical information at the point of care but also by providing a wealth of information on how to improve care. However, for many, the potential benefits of a linked health information system are matched in significance by the potential drawbacks such as threats to the privacy and security of people’s most sensitive information. The HIPAA privacy rule challenged decision makers and researchers to grapple with the questions of how to foster a national system of linked health information necessary to provide the highest-quality health care. Janlori Goldman of the Privacy Project and others presented the patient perspective on these issues, supporting the concept of data linkage as a way to improve health care, provided that appropriate precautions are undertaken to ensure the security and privacy of patient data and options are offered to patients with respect to data linkage. Such guarantees are critical to developing the access to clinical information that is important for systematic generation of new insights, and practical approaches are needed to both ensure the public’s confidence and address the regulatory requirement governing clinical information.

Narrowing the Research-Practice Divide—System Considerations

Capturing and utilizing data generated in the course of care offers the opportunity to bring research and practice into closer alignment and propagate a cycle of learning that can enhance both the rigor and the relevance of evidence. Presentations in this session suggest that if healthcare delivery is to play a more fundamental role in the generation and application of evidence on clinical effectiveness, process and analytic changes are needed in the delivery environment. Some considerations included strengthening feedback loops between research and practice to refine research questions and improve study timeliness and relevance, improving the structure and management of clinical data systems both to support better decisions and to provide quality data at the level of the practitioner, facilitating “built-in” study design, defining appropriate levels of evidence needed for clinical decision making and how they might vary by the nature of the intervention and condition, and changes in clinical research that might help accelerate innovation.

Feedback Loops to Expedite Study Timeliness and Relevance

An emerging model of care delivery is one focused around management of care, instead of expertise. Brent James of Intermountain Healthcare discussed the three elements of quality enhancement: quality design, quality improvement, and quality control. Of these, quality control is the key, but underappreciated, factor in developing stringent care delivery models. In this respect, process analysis is important to a care delivery model. Use of process analysis at Intermountain Healthcare revealed that a small percentage of clinical issues accounted for most care delivery shortfalls, and these became the first foci for initiation of a care management system. Although the original intent of this system was to achieve excellence in caregiving, a notable side benefit has been its use as a research tool (see James, Chapter 3). It has allowed for feedback systems, in which clinical questions can be addressed through interdisciplinary evaluation, examination of databases, prospective pilot projects, and finally, broad implementation when shown to be beneficial. Because the data management system is designed for a high degree of flexibility, data content can rapidly be changed around individual clinical scenarios. When a high-priority care process is identified, a flow chart is designed for that process, with tracking of a key targeted outcome for feedback and care management adjustment. The approach actively involves the patient and successively escalates care as needed, in a sort of “care cascade.” Each protocol is developed by a team of knowledge experts that oversee implementation and teaching. Once these systems are established for individual parameters of care, they are utilized in several different ways to generate evidence, such as quasi-experimental designs to evaluate policy decisions using pilot programs. 
Because RCTs are not practical, ethical, feasible, or appropriate to all circumstances, these large data systems with built-in study design and feedback loops allow for investigations that have real rigor, utility, and reliability in large populations.

Use of Electronic Health Records to Bridge the Inference Gap

Clinical decisions are made every day in the context of a certain inference gap: the gap between what is known at the point of care and what evidence is required to make a clinical decision. Physicians and other healthcare providers implicitly or explicitly are required to fill in where knowledge falls short. Walter Stewart of the Geisinger Health System discussed how the electronic health record can help to narrow this gap, increasing real-time access to knowledge in the practice setting and creating evidence relevant to everyday practice needs (see Stewart, Chapter 3). As such, it will bring research and practice into much closer alignment. EHRs can change both evidence and practice through the development of new methods to extract valid evidence from analysis of retrospective longitudinal patient data; the translation of these methods into protocols that can rapidly and automatically evaluate patient data in real time as a component of decision support; and the development of protocols designed to conduct clinical trials as a routine part of care delivery. By linking research seamlessly to practice, EHRs can help address the expanding universe of practice-based questions, with a growing need for solutions that are inexpensive and timely, can meet the daily needs of practice settings, and can help drive incentives to create value in health care.

Standards of Evidence

The anchor element in evidence-based medicine is the clinical information on which determinations are based. However, the choice of evidence standards used for decision making has fundamental implications for decisions about the use of new interventions, the selection of study designs, safety standards, the treatment of individual patients, and population-level decisions regarding insurance coverage. Steven Pearson of America’s Health Insurance Plans discussed the development of standards of evidence, how they must vary by circumstance, and how they must be adjusted when more evidence is drawn from the care process. At the most basic level, confidence in evidence is shaped by both its quality and its strength, and its application is shaped by whether it is being used for a decision about an individual patient or about a population group through policy initiatives and coverage determinations. In part, the current challenge to evidence-based coverage decisions is that good evidence is frequently lacking, and traditional evidence hierarchies also fit poorly for diagnostics and for assessing value in real-world patient populations (see Pearson, Chapter 3). However, advances are also needed in understanding how information is or should be used by decision-making bodies. Using coverage with evidence development (CED) as an example, Pearson discussed how similar practice-based research opportunities inherent to a learning healthcare system could affect the nature of evidence standards and bring into focus certain policy questions.

Implications for Innovation Acceleration

Evidence-based medicine has sometimes been characterized as a possible barrier to innovation, despite its potential as a means of accelerating innovations that add value to health care. Robert Galvin from General Electric discussed this issue, pointing out that employers seek value (the best quality at the most controlled cost) and that their goal is to spend healthcare dollars most intelligently. This has sometimes led employers to ignore innovation in their efforts to control the drivers of cost. There are numerous examples of beneficial innovations whose coverage was long delayed due to lack of evidence, as well as of innovations that, although beneficial to a subset of patients, were overused. A problem in introducing a more rational approach to these decisions is what Galvin terms the “cycle of unaccountability.” Each group in the chain (manufacturers, clinicians, healthcare delivery systems, patients, government regulators, and payers) desires system change but has not, to date, taken on specific responsibilities or been held accountable for roles in instituting change. General Electric has initiated a program, Access to Innovation, as a way to adopt the principles of coverage with evidence development in the private sector. Using a specific investigational intervention, reimbursement for certain procedures is provided in a limited pilot population to allow for the development of evidence in real time and inform a definitive policy on coverage of the intervention. Challenges encountered include content knowledge gaps; the difficulty of engaging purchasers to increase their expenditures, despite discussions of value; finding willing participants; and the growing culture of distrust between manufacturers, payers, purchasers, and patients (see Galvin, Chapter 3). Some commonality is needed on what is meant by evidence and accountability for change within each sector.

Learning Systems in Progress

Incorporation of data generation, analysis, and application into healthcare delivery can be a major force in accelerating understanding of what constitutes “best care.” Many existing efforts to use technology and create research networks to implement evidence-based medicine have produced scattered examples of successful learning systems. This session focused on the experiences of healthcare systems that highlight the opportunities and challenges of integrating the generation and application of evidence for improved care. Premier visions of how systems might effectively be used to realize the benefit of integrated systems of research and practice include the care philosophy and initiative at the Department of Veterans Affairs (VA), the front-line experience of the Practice-Based Research Networks in aligning the resources and organizations to develop learning communities, and initiatives at the AQA (formerly the Ambulatory Care Quality Alliance) to develop consensus on strategies and approaches that promote systems cooperation, data aggregation, accountability, and the use of data to bring research and practice closer together. These examples suggest a vision for a learning healthcare system that builds upon current capacity and initiatives and identifies important elements and steps that can take progress to the next level.

Implementing Evidence-Based Practice at the VA

The Department of Veterans Affairs has made important progress in implementing evidence-based practice, particularly via use of the electronic health record. Elements fostering the development of this learning system, cited by Joel Kupersmith, the chief research and development officer at the Veterans Health Administration, include an environment that values evidence, quality, and accountability through performance measures, the leadership to create and sustain this environment, and the VA’s research culture and infrastructure (see Kupersmith, Chapter 4). Without this appropriate culture and setting, EHRs may simply be a graft onto computerized record systems and will not help to foster evidence-based practice. Kupersmith presented the VA’s work with diabetes as an example demonstrating the range of possibilities in using the EHR for developing and implementing evidence at the point of care. This includes assistance in education and management of patients through automated decision support and evidence-based clinical reminders, as well as advancing research through the Diabetes Epidemiology Cohort (DEpiC). The cohort database consists of longitudinal record data on 600,000 diabetic patients receiving VA care, which is a key resource for a wide range of research projects. In addition, the recent launch of My HealtheVet, a web portal through which veterans will be able to view personal health records and access health information, allows patient-centered care and self-management and the ability to evaluate the effectiveness of these approaches. The result to date has been better control and fewer amputations. There are plans to link genomic information with this database to further expand research capabilities and offer increased insights toward individualized medicine.

Learning Communities and Practice-Based Research Networks

A culture change is necessary in the structure of clinical care if the learning healthcare system is to take hold. Robert Phillips of the American Academy of Family Physicians described the formation of Practice-Based Research Networks (PBRNs) as a response to the disconnect between national biomedical research priorities and questions at the front line of clinical care. Many of the networks formed around collections of clinicians who found that studies of efficacy did not necessarily translate into effectiveness in their practices or that questions arising in their practices were not addressed in the literature. PBRNs began to appear formally more than two decades ago to support better science and fill these gaps, offering many lessons and models to inform the development of learning systems (see Phillips, Chapter 4). Positioned at the point of care, PBRNs integrate research and practice to improve the quality of care. By linking practicing clinicians with investigators experienced in clinical and health services research, PBRNs move quality improvement out of the single practice and into networks, pooling intellectual capital, resources, and motivation and allowing measures to be compared and studied across clinics. The successful learning communities of PBRNs have definite characteristics, including a shared mission and values, a commitment to collective inquiry, collaborative teams, an action orientation that includes experimentation, continuous improvement, and a results orientation.

National Quality Improvement Process and Architecture

While there are many examples of integrated health systems, such as HealthPartners, the VA, Mayo, Kaiser Permanente, and others, a learning healthcare system for the nation requires thinking and working beyond individual organizations toward the larger system of care. George Isham of HealthPartners outlined the national quality improvement process and architecture needed for system-wide, coordinated, and continual gains in healthcare quality. Also discussed was the ongoing work at AQA that has assembled key stakeholders to agree on a strategy for measuring performance at the physician or group level, collecting and aggregating data in the least burdensome way, and reporting meaningful information to consumers, physicians, and stakeholders to inform choices and improve outcomes. The aim is for regional collaboration that can facilitate improved performance at lower cost; improved transparency for consumers and purchasers, involving providers in a culture of quality; buy-in for national standards; reliable and useful information for consumers and providers; quality improvement skills and expertise for local provider practices; and stronger physician-patient partnerships. Initial steps include the development of criteria and performance measures, such as those endorsed by the National Quality Forum, and the design of an approach to aggregate information across the nation through a data-sharing mechanism, directed by an entity such as a national health data stewardship entity that sets standards, rules, and policies for data sharing and aggregation.

Envisioning a Rapid Learning Healthcare System

The pace of evidence development is simply inadequate to begin to meet the need. Lynn Etheredge of George Washington University discussed the need for a national rapid learning system, a new model for developing evidence on clinical effectiveness. Already the world’s highest health expenditure, healthcare costs in the United States continue to grow, largely driven by technology. Short of rationing, any prospect of progress hinges on the development of an evidence base that will help identify the diagnosis and treatment approaches of greatest value for patients (see Etheredge, Chapter 4). Building on current infrastructure and resources, it should be possible to develop a rapid learning health system to close the evidence gaps. Computerized EHR databases enable real-time learning from tens of millions of patients that offers a vital opportunity to rapidly generate and test hypotheses. Currently the greatest capacities lie in the VA and the Kaiser Permanente integrated delivery systems, with more than 8 million EHRs apiece, national research databases, and search software under development. Research networks such as the Health Maintenance Organization (HMO) Research Network (HMORN), the Cancer Research Network at the National Cancer Institute (NCI), and the vaccine safety data link at the Centers for Disease Control and Prevention (CDC) also add substantially to the capacity. Medicaid currently represents the biggest gap, with no state yet using EHRs. An expansion of the infrastructure could be led by the Department of Health and Human Services and the VA, beginning with the use of their standards, regulatory responsibilities, and purchasing power to foster the development of an interconnected national EHR database with accommodating privacy standards. In this way, all EHR research databases could become compatible and multiuse and lead to substantial expansion of the clinical research activities of NIH, AHRQ, CDC, and FDA. In addition, NIH and FDA clinical studies could be integrated into national computer-searchable databases, and Medicare’s evidence development requirements for coverage could be expanded into a national EHR-based model system for evaluating new technologies. Leadership and stable funding are needed as well as a new way of thinking about sharing data.

Developing the Test Bed: Linking Integrated Service Delivery Systems

Many extensive research networks have been established to conduct clinical, basic, and health services research and to facilitate communication between the different efforts. The scale of these networks ranges from local, uptake-driven efforts to wide-ranging efforts to connect vast quantities of clinical and research information. This section explores how various integrated service delivery systems might be better linked to expand our nation’s capacity for structured, real-time learning, in effect developing a test bed to improve development and application of evidence in healthcare decision making. The initiatives of two public and two private organizations serve as examples of the progress in linking research, translational, and clinical systems. A new series of grants and initiatives from NIH and AHRQ (NECTAR and Accelerating Change and Transformation in Organizations and Networks [ACTION], respectively; see below) highlight the growing emphasis on the need to integrate and communicate the results of research endeavors. The ongoing activities of the HMO Research Network and the Permanente Foundation-Council of Accountable Physician Practices demonstrate that there is considerable interest at the interface of public and private organizations to further these goals. For each, there are organizational, logistical, data system, reimbursement, and regulatory considerations.

NIH and Reengineering Clinical Research

The NIH Roadmap for Medical Research (http://nihroadmap.nih.gov/) was developed to identify major opportunities and gaps in biomedical research, to identify needs and roadblocks to the research enterprise, and to increase synergy across NIH in utilizing this information to accelerate the pace of discoveries and their translation. Stephen Katz of the National Institutes of Health explained that a significant aim of this endeavor is to address questions that none of the 27 different institutes or centers that make up the NIH could examine on its own, but that could be addressed collectively. Within this context, there are several initiatives grouped as the Reengineering the Clinical Research Enterprise components of the Roadmap Initiative. They are oriented around translational science, clinical informatics, and clinical research network infrastructure and utilization. A major activity is the Clinical and Translational Science Awards (CTSAs), which represent the largest component of NIH Roadmap funding for medical research. The CTSAs are aimed at creating homes that lower barriers between disciplines, clinicians, and researchers and encourage creative, innovative approaches to solve complex medical problems at the front lines of patient care. A second component is the development of integrated clinical research networks through formation of the National Electronic Clinical Trials and Research (NECTAR) network. This initiative includes an inventory of 250 clinical research networks, as well as pilot projects to bring the NECTAR framework into action for a wide range of disease entities, populations, settings, and information systems.

AHRQ and the Use of Integrated Service Delivery Systems

Large integrated delivery systems are important as test beds not only for generating evidence, but for applying it as well. Cynthia Palmer of the Agency for Healthcare Research and Quality described its program ACTION, designed to foster the dissemination and adoption of best practices through the use of demand-driven, rapid-cycle grants that focus on practical and applied work across a broad range of topics. ACTION is the successor to the Integrated Delivery System Research Network (IDSRN), a five-year implementation initiative that was completed in 2005 and is based on the finding that the organizations that conduct health services research are also the most effective in accelerating its implementation. One report suggested that it may take as long as 17 years to turn some positive research results to the benefit of patient care (Balas and Boren 2000), so working to reduce the lag time between innovation and its implementation is another primary goal of ACTION (see Palmer, Chapter 5). Features among participating organizations include size (the volume it takes to initiate change and assess its implementation), diversity (with regard to payer type, geographic location, and demographic characteristics), database capacity (large, robust databases with nationally recognized academic and field-based researchers), and speed (the ability to go from request for proposal to an award in 9 weeks and average project completion in 15 months).

The Health Maintenance Organization Research Network

Health maintenance organizations represent an important resource for innovative work in testing the effectiveness of new interventions. Eric Larson of Group Health Cooperative discussed HMORN, a consortium of 15 integrated delivery systems assembled to bring together their combined resources for clinical and health services research. Together these systems cover more than 15 million people, and because they are contained systems, natural experiments are going on every day. The formal research programs of HMORN include research centers at each of the participating sites, with a total of approximately 200 researchers and more than 1,500 ongoing research projects. All sites have standardized and validated datasets, and some have become standardized to each other. HMORN’s advantages include the close ties between care delivery, financing, administration, and patients, which align incentives for ongoing improvement of care, as well as shared administrative claims and clinical data, including some degree of electronic health record (Larson 2006 [July 20-21]). The ongoing research initiatives are all public interest, nonproprietary, open-system research projects that include the ability to structure clinical trials with individual or cluster randomization around real-world care as opposed to the idealized world of the RCT. These studies can be formed prospectively, with the potential for longitudinal evaluation. Examples of HMORN’s work toward real-time learning include post-marketing surveillance and drug safety studies, population-based chronic care improvement studies, surveillance of acute diseases including rapid detection of immediate environmental threats, and health services research demonstration projects.

Council of Accountable Physician Practices

Physicians remain the central decision makers in the nation’s medical care enterprise. Michael Mustille of the Permanente Federation described the work of the Council of Accountable Physician Practices (CAPP), organized in 2002 to enhance physician leadership in improving the healthcare delivery system. The organization is made up of 35 multispecialty group practices from all over the United States that share a common vision as learning organizations dedicated to the improvement of clinical care. Their features include physician leadership and governance, dedication to evidence-based care management processes, well-developed quality improvement systems, team-based care, the use of advanced clinical information technology, and the collection, analysis, and distribution of clinical performance information (see Mustille, Chapter 5). The formation of CAPP was initiated because multispecialty medical groups are well-designed learning systems at the forefront of using health information technology and electronic health records to provide advanced systems of care. One of the central organizing principles of CAPP is that physicians are responsible not only to the patient they are currently treating, but also to a group of patients, and to their colleagues, to provide the best care and contribute to the quality of care overall. Mustille described many of the ongoing activities of CAPP, including lending medical group expertise and leadership in the public policy arena, enabling physicians to lead change, facilitating research, and translating research and epidemiology into actual practice at the group setting level.

The Patient as a Catalyst for Change

There is a growing appreciation of the centrality of patient involvement as a contributor to positive healthcare outcomes and as a catalyst for change in healthcare delivery. This session focused on the changing role of the patient in the era of the Internet and the personal health record. It explored the potential for increased patient knowledge and participation in decision making and for expediting improvements in healthcare quality. It examined how patient accessibility to information could be engaged to improve outcomes; the roles and responsibilities that come with increased patient access and use of information in the electronic health record; privacy assurance and patient comfort as the EHR is used for evidence generation; and the accommodation of patient preferences. The types of evidence and decision aids needed for improved shared decision making, and how the communication of evidence might be improved, were also discussed. All of these are key issues in the emergence of a learning healthcare system focused on patient needs and built around the best care.

The Internet, eHealth, and Patient Empowerment

Information technology (IT) has the potential to support a safer, higher-quality, more effective healthcare system. By offering patients and healthcare consumers unprecedented access to information and personal health records, IT will also affect patient knowledge and decision making. Janet Marchibroda, from the eHealth Initiative, offered an overview of federal, state, and business initiatives contributing to the development of a national health information network that aims to empower the patient to be a catalyst for change and drive incentives centered around value and performance. For example, the National Coordinator for Health Information Technology was established to foster development of a nationwide interoperable health information technology (HIT) infrastructure, and about half of the states have either an executive order or a legislative mandate in place that is designed to stimulate the use of HIT. Employers, health plans, and patient groups are also engaged in various cooperative initiatives to develop a standardized minimum data content description for electronic health records, as well as the processing rules and standards required to ensure data consistency, data portability, and EHR interoperability. Most consumers (60 percent according to an eHealth Initiative survey; see Marchibroda, Chapter 6) are interested in the benefits that personal and electronic health records have to offer and would use tools to manage many aspects of their health care. While Marchibroda felt that the United States is not yet at the point of a consumer revolution in shaping health care, it is clear that the patient is an integral part of expediting healthcare improvements and that the Internet and EHR-related tools will facilitate this progress.

Joint Patient-Provider Management of the Electronic Health Record

As patients, family members, other caregivers, and clinicians all begin viewing, using, contributing to, and interacting with information in the personal and electronic health record, new roles and responsibilities emerge. Andrew Barbash of Apractis Solutions noted that moving toward true patient-provider collaboration in health care may be less a data and infrastructure issue than a communication issue. What is needed is not the organization’s view of how to communicate with patients, but the patients’ view of how to communicate with the organization. Personal health records are only a small piece of the consumer’s world; and the technologies, demographics, and knowledge base are constantly changing, creating a very complex dynamic to navigate when making shared and often complex decisions about health care. A first obligation is defining what different users need to know, how best to convey this information to them, and what information models will be most useful. Existing collaboration tools are “web-centric,” but the next step is to leverage the web as a vehicle for becoming “communication-centric.” There is significant potential for the Internet and EHRs to bring about changes in patient-provider communication and collaboration that will require forethought regarding the processes for governing, shared privacy management, liability, and self-education.

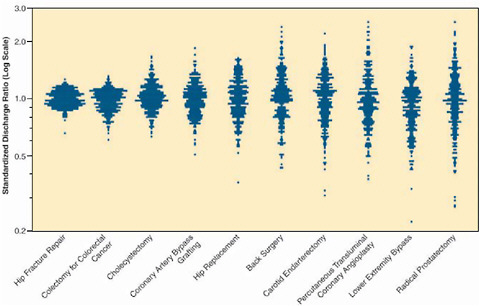

Evidence and Shared Decision Making

When medical evidence is imperfect, and its application must account for preferences, a collaborative approach by providers and patients is essential. James Weinstein of Dartmouth described what has been learned about discerning patient preferences as a part of shared decision making. Variation in care is a common feature of the healthcare system (Figure S-2). In emergency situations, such as hip fracture, patients both understand and desire the need for specific, directed intervention, and the choice to have a specific treatment is all but decided. However, for other conditions such as chronic back pain, early-stage breast or prostate cancer, benign prostatic enlargement, or abnormal uterine bleeding, the decision to have a medical or surgical intervention is less clear and the path of watchful waiting is often an option. When patients delegate their decision making to their physicians, which is generally the case, the decisions often reflect providers’ options rather than patients’. One result is that the likelihood of having a prostatectomy or hysterectomy varies two- to fivefold from one region to another; that is, “geography is destiny” (Wennberg and Cooper 1998; Wennberg et al. 2002). Many of these are “preference-sensitive” decisions, with the best choice depending on a patient’s values or preferences, given the benefits and harms and the scientific uncertainty associated with the treatment options. The Shared Decision Making Center at the Dartmouth-Hitchcock Medical Center seeks to engage the patient in these decisions by better informing patient choice through the use of interactive decision aids. One example given by Weinstein is SPORT (Spine Patient Outcomes Research Trial), a novel practical clinical trial that utilizes shared decision making as part of a generalizable, evidence-based enrollment strategy. Patients are offered interactive information about treatments and then offered enrollment in a clinical trial; those with strong treatment preferences who do not want to enter the RCT are asked to enroll in a cohort study. Shared decision making of this sort can lead to improved patient satisfaction, improved outcomes, and better evidence.

FIGURE S-2 Profiles of variation for 10 common (surgical) procedures.

SOURCE: Dartmouth Atlas Healthcare.

Training the Learning Health Professional

In a system that learns from data collected at the point of care and applies the lessons to patient care improvement, healthcare professionals will continue to be the key components at the front lines, assessing the needs, directing the approaches, ensuring the integrity of the tracking and quality of the outcomes, and leading innovation. However, what these practitioners will need to know and how they learn will change dramatically. Orienting practice around a continually evolving evidence base requires new ways of thinking about how to create and sustain a healthcare workforce that recognizes the role of evidence in decision making and is attuned to lifelong learning. Our current system of health professions education offers minimal integration of the concepts of evidence-based practice into core curricula and relegates continuing medical education to locations and topics distant from the issues encountered at the point of care. Advancements must confront the barriers presented by the current culture of practice and the potential burden to practitioners presented by the continual acquisition and transfer of new knowledge. Opportunities identified by presentations in this session include developing tools and systems that embed evidence into practice workflow, reshaping formal educational curricula for all healthcare practitioners, and shifting to continuing educational approaches that are integrated with care delivery and occur each day as a part of practice.

The Electronic Health Record and Clinical Informatics as Learning Tools

As approaches shift to individualized care, changes will be needed in the roles and nature of the learning process of health professionals. William Stead from Vanderbilt University discussed the use of informatics and the EHR to bring the processes of learning, evidence development, and application into closer alignment by changing the practice ecosystem. Currently, the physician serves as an integrator, aggregating information, recognizing patterns, making decisions, and trying to translate those decisions into action. However, the human mind can handle only about seven facts at a time, and by the end of this decade, there will be an increase of one or two orders of magnitude in the number of facts needed to coordinate any given medical encounter (Stead 2006 [July 20-21]). Future clinical decision making will need not just a personal health record but a personal health knowledge base that is an intelligent integration of information about the individual with evidence related to that individual, presented in a way that lets the provider and the patient make the right decisions. Also necessary is a shift from an educational model in which learning is a just-in-case proposition to one in which it is just-in-time: current, competent, and appropriate to the circumstance. A model for a learning process, continuous learning during performance, details how learning can use targeted curricula to drive competency and outcomes. The potential uses of the EHR to manage information and support learning strategies include data-driven practice improvement, alerts and reminders in clinical workflow, identification of variability in care, patient-specific alerts to change in practice, links to evidence within clinical workflow, detection of unexpected events and identification of safety concerns, and large-scale phenotype-genotype hypothesis generation. These systems will also provide a way to close the loop by identifying relevant order sets, tracking order set utilization, and routinely feeding this performance data back into order set development. Achieving this potential will require a completely new approach, with changes in how we define the roles of health professionals and how the system facilitates their lifelong learning (see Stead, Chapter 7).

Embedding an Evidence Perspective in Health Professions Education

Evidence-based practice allows health professionals to deliver care of high value even within a landscape of finite resources (Mundinger 2006 [July 20-21]). With rapid advances in medical knowledge, teaching health professionals to evaluate and use evidence in clinical decision making becomes one of the most crucial aspects of ensuring efficacy of care and patient safety. To adequately prepare the healthcare workforce, their training must familiarize them with the dynamic nature of evolving evidence and position them to contribute actively to both the generation and the application of evidence through healthcare delivery. Mary Mundinger from the Columbia University School of Nursing presented several examples of curricula currently used at Columbia University by the medical, nursing, and dentistry schools to educate their students about evidence. One successful approach taken by the Columbia Nursing School was to adopt translational research as a guiding principle leading to a continuous cycle in which students and faculty engage in research, implementation, dissemination, and inquiry. This principle extends beyond the traditional linear progression of research from the bench to the bedside and also informs policy and curriculum considerations. Topics emphasized in these curricula included developing the skills needed to become sophisticated readers of the literature; understanding the different levels of evidence; understanding the relationship between design methods and conclusions and recommendations; understanding the science; knowing how care protocols evolve; and knowing when to deviate from protocols because of patient responses. To take advantage of a workforce trained in evidence-based practice, changes are needed in the culture of health care to emphasize the importance of evidence management skills (see Mundinger, Chapter 7).

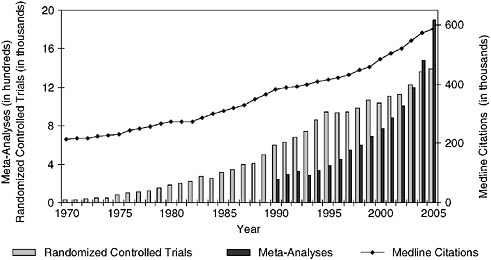

Knowledge Translation: Redefining Continuing Education

Evidence-based practice will require a shift in medical thinking that deemphasizes personal expertise and intuition in favor of the ability to draw upon the best available evidence for the situation, in an environment in which knowledge is very dynamic. Mark Williams from Emory University described the potential role of continuing education in such a transformation. Continuing medical education (CME) seeks to promote lifelong learning in the physician community by providing opportunities to learn current best evidence. However, technology development and the creation of new knowledge have increased dramatically in both volume and pace (Figure S-3), nearly overwhelming practicing clinicians (Williams 2006 [July 20-21]). While CME aims to alleviate this burden, the current format is based on a static model of evidence development that will become increasingly inadequate to support the delivery of timely, up-to-date care. New approaches to CME are being developed to engage these critical dimensions of a learning system. One variation is the knowledge translation approach in which CME is moved to where care is delivered and is targeted at all participants (patients, nurses, pharmacists, and doctors), and the content consists of initiatives to improve health care (Davis et al. 2003). By emphasizing teamwork and pulling physicians out of the autonomous role and into collaborations that are cross-departmental and cross-institutional, new approaches to CME support the necessary culture change and help shift toward practice-based learning that is integrated with care delivery and is ongoing.

FIGURE S-3 Trends in Medline citations and Medline citations for randomized controlled trials and meta-analyses per year.

SOURCE: National Library of Medicine public data, http://www.nlm.nih.gov/bsd/pmresources.html.

Structuring the Incentives for Change

A fundamental reality in the prospects for a learning healthcare system lies in the nature of the incentives for inducing the necessary changes. Incentives are needed to drive the system and culture changes, as well as to establish the collaborations and technological developments necessary to build learning into every healthcare encounter. Public and private insurers, standards organizations such as the National Committee for Quality Assurance (NCQA) and the Joint Commission (formerly JCAHO), and manufacturers have the opportunity to shape policy and practice incentives to accelerate needed changes. Incentives that support and encourage evidence development and application as well as innovation are features of a learning healthcare system. Change can be encouraged through incentives at all layers: giving providers incentives to use established guidelines and drive better outcomes; giving healthcare delivery systems incentives for increased efficiency; giving manufacturers and developers incentives for bringing the safest, most effective and efficient products to market; and giving patients incentives for increased engagement as decision-making participants.

Opportunities for Private Insurers