5

Patient Experience with Drugs over Time1

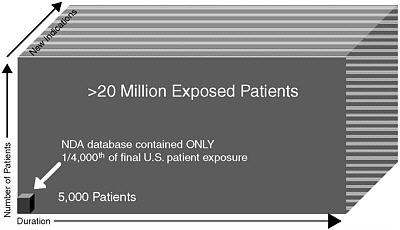

Only after a drug is launched and in use by patients over time does a full understanding of its benefits and risks emerge. However, the system for collecting and analyzing data on patient experiences with drugs, and for integrating that information back into the regulatory process, is seriously flawed, according to many of the workshop speakers and participants. While computerized patient and pharmacy order entry systems and other information technologies have the potential to improve the way we conduct postmarketing surveillance, the comments of participants suggest that these technologies have not yet lived up to that potential. There were repeated calls for integrating postmarketing data into the regulatory component of the life cycle (represented by the box in the lower left corner of Figure 5-1).

LIMITATIONS OF POSTMARKETING SURVEILLANCE

The challenge, according to Dr. Berger, is that once we leave the hypothesis-driven preclinical environment where we have a great deal of certainty about causality, we enter a world of observational studies where it is difficult to conclude with certainty that there may be causality. Several workshop participants commented on the failure to systematically

FIGURE 5-1 Learning about benefits and risks is a continuous process, creating a tremendous challenge with respect to updating benefit–risk assessments as postmarketing data accumulate.

SOURCE: From the presentation of discussion leaders Marc Berger, M.D., and Paul Seligman, M.D., MPH.

collect postmarketing safety data and the lack of confidence by many in the ability to monitor drugs in the postmarketing environment.

Dr. Strom discussed three primary postmarketing surveillance data sources and their limitations:

-

The Adverse Event Reporting System (AERS) has been the primary source of postmarketing information about drugs since the 1950s. Hundreds of thousands of adverse reaction reports are collected and analyzed annually. The system has seen very little improvement over time other than becoming computerized. Its major limitation is that it remains a collection of case reports that can signal problems, but cannot be used to draw conclusions.

-

Computerized claims or medical record systems, which are widely used and have been in existence since the late 1970s, include pharmacy, hospital, and physician claims reports sent to insurance carriers. Common identification numbers are increasingly being used to maximize the use of claims databases for research purposes. The Achilles heel of this type of system is physician reimbursement, which is based on visits rather than diagnosis. While hospitals and pharmacies must include diagnostic or drug identification information in claims forms, there is no incentive for physicians to do so.

-

Data collected de novo include datasets from either randomized trials or large observational studies. The problem with relying on this type of data is that they are expensive and time-consuming to collect. Once a drug reaches the market, the need to respond quickly to accusations about adverse reactions precludes this type of study.

In the discussion that followed Dr. Strom’s presentation, there was a question about common events, such as heart attacks, and whether and how they would be detected through AERS, since that system seems to be more suited to detecting rare events. Dr. Strom replied that more common risks would need to be studied epidemiologically and through more active surveillance.

There was some question as to whether the quality of AERS data improves over time, because there has recently been a push to encourage consumers to send information. Dr. Galson remarked that, while AERS is not perfect, it is all that we have right now in terms of providing a system for patients and physicians to alert the FDA. In the future, with better electronic systems and a more comprehensive national healthcare information infrastructure (e.g., the Medicare system that links health outcomes with prescribing data), our dependence on AERS will decrease. Until that happens, although the quality of the data from consumer reports can be dubious, there is no real substitute for the information collected through AERS.

Dr. Strom agreed that AERS is irreplaceable today but disagreed with Dr. Galson’s forecast that our dependence on it will decrease. He argued that automated data systems have existed for years and our dependence on AERS is no less now than it has been in the past. He further argued that with respect to adding data to AERS—more is not better. Smaller databases can allow for richer data extraction (by getting additional information about each case). In some countries, such as Sweden and Australia, where the number of reports is smaller, regulators have the ability to access much more detailed clinical information, which can be critical for accurately interpreting the results. If anything, increasing the size of the AERS database will result in people resorting to analyses based on the assumption that they are dealing with epidemiological data, which they are not.

There was some discussion about whether and how postmarketing information on risk and benefits can be incorporated into labels or made available in ways that allow physicians and other clinicians to use the information more effectively. Dr. Strom remarked that, first, given how our knowledge of both benefits and risks changes after marketing, comprehensive information isn’t even available in many cases. Once this information does become available, given the mass of information, the

challenge will be to effectively utilize it. That, he argued, is a question of informatics. Our society is beginning to computerize its healthcare system and, in so doing, it is also building the capacity to collect and analyze large amounts of information in a way that will rapidly alert physicians and pharmacists to safety signals.

Finally, there were some comments made about how much of the discussion was focused on postmarket “surveillance” that perhaps ought to be focused on “data collection and analysis”; the issue of comparative effectiveness; the issue of polypharmacy, which is a key factor driving the discrepancy between efficacy and effectiveness but for which there is almost no data from an outcomes point of view; and the dearth of post-marketing data on pediatric drugs in particular. All of these omissions, Dr. Strom noted, relate to “the mission of the search.” That is, how do we improve the use of drugs? He argued that by focusing on rare adverse reactions to new drugs, we are targeting the wrong question. Important public health questions are related to common adverse reactions to older drugs that are not being used optimally either by practitioners or by patients.

POSTMARKETING SURVEILLANCE WORKS: A CASE EXAMPLE

Dr. Overhage argued that both spontaneous reporting and active surveillance must be considered when thinking about how to adjust or modulate understanding of risk. Spontaneous reporting is invaluable because it is filtered (e.g., through astute physicians) and useful for identifying suggestive time relationships and plausible mechanisms. However its signal is low, with only about 10 percent of adverse events detected.2 Active surveillance produces a stronger signal, and its larger numbers allow for relative risk calculations, better precision, and comparisons within or between drugs.

Dr. Overhage described a recent study demonstrating that both automated (computer database) and manual (chart review) active surveillance identify significantly more events than spontaneous reporting. Interestingly, however, there is not much overlap (Jha et al. 1998). While computerized surveillance is better than chart review for detecting drug interactions and other laboratory-based changes, it is not as good at detecting symptom-related mild adverse events. Also, while automated surveillance can reliably and consistently identify signals, only some of those signals are adverse events (Honigman et al. 2001).

He emphasized that the feasibility of utilizing properly collected routine clinical data for surveillance is based on data reuse. Collecting

data is difficult and expensive, so standardizing and employing those data for multiple purposes is important.

Dr. Overhage described his work with the Indiana Network for Patient Care, a regional health information exchange that has been in development for several years. The goal of that program is to develop an operational, sustainable statewide health information exchange that networks across all of the approximately 6,000 physician practices and hospitals in Indiana. The network relies on real-time clinical data augmented by claims data and defined signals that meet specific preset criteria, indicating that an adverse event may have happened. Signals are evaluated through a tiered, computer-assisted human review process. The success of the system thus far demonstrates that it is possible to capture and store population-based data in order to identify adverse events and update risk information on an ongoing basis. The signals can also be used to conduct nonrandomized observational studies designed to test prespecified hypotheses.3

Dr. Overhage was asked about the limitations of using postmarketing observational data to update benefit profiles, as well as risk profiles, for various therapies, compared to data collected from randomized control trials. He replied that the system does not collect a lot of those data and will probably not for some time.

When asked about cost, Dr. Overhage stated that the cost is related to the accuracy desired. If one is willing to be 95 percent accurate, but not perfect, the cost would not be excessive. Perfection, on the other hand, is very expensive. He reemphasized that data reuse is what makes this feasible. The fact that the data are collected not just for Phase IV surveillance but also for public health, quality improvement for payers, and so on, with each stakeholder investing, makes it affordable.

There was a question about whether it was possible to publish comparative evaluations of benefit–risk that would be directly useful to physicians and other front-line providers. It was suggested that if more patient information needs to be collected in order to do this, the pharmaceutical industry could be taxed to support the independent (and credible) organizations that would do the work. Dr. Overhage responded that, first, it was an issue of scale. The program (network) would need to be expanded. Second, many unanswered methodological questions remain as well as many lessons to be learned about how to use observational data (e.g., separating signals from noise). Third, presenting the data to physicians and patients is a challenge, because nobody knows how to organize and synthesize the data in a way that is usable for them.

A comment was made about the necessity of standardizing electronic medical records so that data can be pooled across the country and that

we must be very careful with algorithms—we need to develop and then test them. Dr. Overhage remarked that, yes, every network study involves tuning the algorithms (he called it the “musical model”) for exactly that reason—to validate. He noted that the query tool of his system is very fast—it takes 15 to 20 minutes to query across 1.7 million patients. So the algorithm can be cycled and tuned very quickly. Moreover, the data-rich system can be used to address questions that may not be possible to address with smaller prospective or observational studies.

A comment was made that while the mining of more information may generate better hypotheses, it is not going to replace our need to do good experimentation in order to be really certain about benefit–risk. Others agreed that there are enormous challenges ahead, despite the optimism heard here and at so many other conferences about the future of fully interoperable automated electronic health records. First we need to solve the methodological problems (e.g., teasing apart the signal and noise for both benefit and risk) as well as data entry problems (e.g., nonstandardized coding, coding errors, and incomplete knowledge in many cases about why a drug was even prescribed).

It was noted that earlier in the day, Dr. Leiden had proposed a new paradigm for drug development that depends in large part on our ability to collect robust information in the postmarketing arena and be able to do experimental studies that involve randomization, or in some way classifying individuals into different groups, so that we can continue to collect good efficacy as well as safety information. Yet are the kinds of databases that Dr. Overhage accesses and structures the right venue for doing these kinds of studies? Do we need to think about other kinds of databases that one would have to construct in order to be able to do those rigorous studies? Dr. Overhage responded that if the databases provide 80 percent of the answer and that 80 percent is worthless without the remaining 20 percent, then no, we don’t have the right information. There are no incentives to collect and align that other 20 percent. On the other hand, with carefully selected questions, appropriate things can be done with the data. While doing those things, we can build in that direction, but we need to ask our questions carefully because capturing data is expensive.

VALIDATING BENEFIT–RISK DATA DURING POSTAPPROVAL: LESSONS FROM THE OMB

Dr. Graham noted that, based on his experience at the U.S. Office of Management and Budget (OMB), agencies often have a very strong incentive to make their proposals look as good as possible, in order to get them approved. This leads to analytical practices that are “not always of ideal academic quality.” For example, in deciding on an alternative,

agencies may compare their alternative with only one other option (e.g., a “do-nothing,” or status quo, option). The challenge for OMB, therefore, is to persuade agencies to perform more serious analyses of a next-best alternative or a variant of the preferred alternative. When OMB pushes for these additional analyses, it often experiences pushback from the agencies that express concerns about limited time or resources. Dr. Graham remarked that the FDA probably experiences a similar problem. While manufacturers may present data comparing their proposed therapy to a placebo, the more important clinical questions may relate to whether the proposed therapy is superior to other treatments already on the market. The solution to this problem is not straightforward. If the FDA were to compel manufacturers to generate data on a broader range of alternatives, the cost and delays associated with FDA approval would increase significantly.

A better solution, Dr. Graham argued, would be to stimulate more research and analysis during the postapproval period. Echoing what other presenters had suggested, this postmarketing research should be conducted by a variety of sources. Dr. Graham suggested multiple organizations that compete with each other for the reputation of doing quality work. An expansion of university-based programs in pharmacoepidemiology and pharmacoeconomics, with a mix of government and industry funding, would be a useful step in the right direction.

Dr. Graham then discussed the validation of benefit and risk data after a regulatory decision has been made. In its most recent report to Congress Validating Regulatory Analysis, OMB assembled all 47 published case studies (out of more than 20,000 new regulations since 1981) in which benefit and cost estimates had been validated after the rule was promulgated (OMB 2005). Such a limited sample allows only limited insights, but it is nonetheless interesting to note that federal regulators exaggerated both benefits and costs in most cases. They exaggerated benefit because they wanted their product to look good. They exaggerated cost because they underestimated the creativity of the industry in finding ways to meet regulatory requirements at lower cost. The report highlights the need for a broader literature to allow us to validate preapproval benefit–risk estimates.

With respect to the FDA, are there numeric projections that are falsifiable? Could we perform validation analyses on this process? While it is not obvious that this can be done, the advantage of doing it would be a track record of better estimations of risk and benefit. By documenting systematic errors, it becomes feasible to improve future benefit–risk analyses and identify situations where adjustments need to be made. Ultimately, Dr. Graham’s presentation raised concern about the lack of resources and incentives for following up on regulatory decisions.