3

State of the Science of Quality Improvement Research

To get a better sense of how quality improvement research is being conducted, the workshop convened six panelists from a variety of perspectives to address the following six questions:

- With respect to quality improvement, what kinds of research/evaluation projects have you undertaken/funded/reviewed? In which contexts (e.g., settings, types of patients)? With whom do you work to both study and implement interventions? For what audience?
- How do you/does your organization approach quality improvement research/evaluation? What research designs/methods are employed? What types of measures are needed for evaluation? Are the needed measures available? Is the infrastructure (e.g., information technology) able to support optimal research designs?
- What quality improvement strategies have you identified as effective as a result of your research?
- Do you think the type of evidence required for evaluating quality improvement interventions is fundamentally different from that required for interventions in clinical medicine?
  - If you think the type of evidence required for quality improvement differs from that in the rest of medicine, is it because you think that quality improvement interventions intrinsically require less testing or that the need for action trumps the need for evidence?
  - Does this answer depend on variations in context (e.g., across patients, clinical microsystems, health plans, regions)? Other contextual factors? Which aspects of context, if any, do you measure as part of quality improvement research?
- Do you have suggestions for appropriately matching research approaches to research questions?
- What additional research is needed to help policy makers/practitioners improve quality of care?

Some panelists submitted written responses to these questions; those responses are included in Appendix C.

EVIDENCE-BASED PRACTICE CENTER

Paul Heidenreich of both the Palo Alto Veterans Administration (VA) Hospital and Stanford Evidence-based Practice Center (EPC) presented the EPC’s approach to evaluating quality improvement research. The EPC, a collaborative effort between Stanford and University of California, San Francisco (UCSF), is one of 13 EPCs funded by AHRQ to provide evidence-based reports and disseminate the findings of those reports.1 The Stanford–UCSF EPC has authored a series of reports titled “Closing the Quality Gap: A Critical Analysis of Quality Improvement Strategies.” These reports attempt to provide guidance to those doing quality improvement and assess the effectiveness of various quality improvement strategies under specific circumstances. A secondary goal is to advance review methodology. The series has evaluated a variety of issues in health care, including diabetes and medication management. Each evaluation studies the effects of the same quality improvement strategies, such as provider reminders and techniques to promote self-management (Table 3-1). The studies are all conducted using one of three evaluation designs: randomized trials, concurrent trials, and interrupted time series.

TABLE 3-1 Closing the Quality Gap Series Overview

| Evaluations | Quality Improvement Strategies | Methods Used |
| --- | --- | --- |
| Diabetes | Provider reminders | Randomized trials |
| Hypertension | Facilitated relay of clinical data to providers | Concurrent trials |
| Medication management–antibiotic use | Audit and feedback | Interrupted time series^a |
| Asthma | Provider education | |
| Care coordination | Patient education | |
| Nosocomial infections | Patient reminder systems | |
| Heart failure | Organizational change | |
| | Promotion of self-management | |
| | Disease or case management | |
| | Team or personnel changes | |
| | Changes to medical record systems | |
| | Financial incentives | |

^a These studies collected data at least three times before the intervention and three times after the intervention.

The EPC employs a “strength of evidence” scale to rate studies on three factors: impact, study strength, and effect size. The level of difficulty to implement an intervention is also considered, focusing on cost barriers and complexity. The strength of evidence and difficulty of implementation are used in conjunction during evaluations. Using this scale, the reports were able to glean some findings about which quality improvement strategies work and which do not. For example, nearly all the quality improvement strategies had a positive impact in the hypertension evaluation, with an average reduction of 4.5 mmHg. Specific quality improvement strategies, such as organizational change and patient education, had greater impacts than others, Heidenreich said. The hypertension evaluation also concluded that it was difficult to distinguish the individual effects of components of multicomponent interventions. This is in contrast with the results from the diabetes evaluation, which found that more complex interventions yielded greater effects. Overall, the reports also found consistent results between the randomized controlled trials (RCTs) and the controlled before-and-after study designs.
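To make the interrupted time series design listed in Table 3-1 more concrete, the following is a minimal sketch of how such data might be analyzed. It is not the EPC's method: it assumes equally spaced measurements, fits a separate least-squares line to the pre- and post-intervention periods, and reports the change in level and trend at the intervention. The function names and the sample values are hypothetical; only the "at least three measurements before and after" criterion comes from the table note.

```python
from statistics import mean


def fit_line(times, values):
    """Ordinary least-squares line through the points; returns (intercept, slope)."""
    t_bar, y_bar = mean(times), mean(values)
    sxx = sum((t - t_bar) ** 2 for t in times)
    sxy = sum((t - t_bar) * (y - y_bar) for t, y in zip(times, values))
    slope = sxy / sxx
    return y_bar - slope * t_bar, slope


def interrupted_time_series(pre, post, min_points=3):
    """Estimate the level and trend change at the intervention.

    `pre` and `post` are outcome measurements taken at equally spaced time
    points before and after the intervention (a hypothetical data layout).
    """
    if len(pre) < min_points or len(post) < min_points:
        raise ValueError(
            f"need at least {min_points} measurements before and after"
        )
    pre_t = list(range(len(pre)))
    post_t = list(range(len(pre), len(pre) + len(post)))
    pre_intercept, pre_slope = fit_line(pre_t, pre)
    post_intercept, post_slope = fit_line(post_t, post)
    t0 = post_t[0]  # first post-intervention time point
    # Level change: fitted post value minus the pre-trend extrapolated to t0.
    level_change = (post_intercept + post_slope * t0) - (pre_intercept + pre_slope * t0)
    trend_change = post_slope - pre_slope
    return level_change, trend_change


# Hypothetical adherence rates (percent) at three points before and after.
level, trend = interrupted_time_series([50, 51, 52], [58, 60, 62])
print(f"level change: {level:.1f}, trend change: {trend:.1f}")
```

Extrapolating the pre-intervention trend is what separates this design from a simple before-and-after comparison: an improvement that merely continues an existing upward trend shows up as little or no level change.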

The reports also highlighted some limitations in the literature. For example, study strategies were often not well described, particularly those assessing organizational change and studies using combinations of quality improvement strategies, Heidenreich said. Without being able to identify the key components of a strategy, attribution of effects to specific interventions was difficult.

Heidenreich said he did not believe there was a difference between the evidence required for clinical medicine and that required for quality improvement. The difference, he said, lies in the potential harms and costs of quality improvement interventions compared with those of clinical medicine. For example, the potential harm patients face if not every diabetic patient receives a foot exam may be minimal when compared to the potential harm patients may encounter in drug trials. The need for evidence is fundamentally the same, Heidenreich said.

Areas for further research include the need to separate the components of multidimensional interventions. Additionally, the implementation of interventions should be evaluated.

COCHRANE EPOC

The Cochrane Effective Practice and Organisation of Care Group (EPOC) conducts systematic reviews. EPOC has completed 39 reviews and is developing 39 more, said Jeremy Grimshaw of the University of Ottawa. Through its work, EPOC has identified more than 5,000 randomized studies or well-designed, quasi-experimental studies for evaluating health care quality. Given this body of literature, Grimshaw posed the following challenge to the group: How do we get the most information out of these studies?

Quality improvement and quality improvement research are related but differ in important ways. Quality improvement aims to improve the quality of care delivered in a specific setting, requiring highly contextualized experiential learning. Quality improvement may demonstrate change but does not focus on evaluating causal relationships between the improvement activity and the magnitude of improvement. A common problem with current quality improvement efforts is that potential solutions are often tested before the problem is well understood. In contrast, quality improvement research generates generalizable knowledge by evaluating the effects of and exploring the mechanism of action and potential effect modifiers of different quality improvement interventions across a range of settings. Its goal is to make strong causal inferences, which requires use of a broad range of study designs.

Grimshaw defined quality improvement research as the scientific study of the determinants, processes, and outcomes of quality improvement, including the following:

- synthesis of knowledge (identify knowledge base and various approaches);
- identification of knowledge to action gaps;
- development of methods to assess barriers and facilitators to quality improvement;
- development of methods for optimizing quality improvement strategies;
- evaluations of the effectiveness and efficiency of quality improvement strategies; and
- development of quality improvement theories and research methods.

Quality improvement research should be actionable in a policy sense by being predictive, allowing for conclusions to be drawn, such as “if X intervention is implemented, Y benefits are likely to occur.” Quality improvement research requires use of diverse methods, such as ways to create and appraise clinical practice guidelines and cluster randomized trials in implementation research. Rigorous evaluations, such as RCTs, are needed to provide evidence of effectiveness to ensure that the effects are not a result of secular change. In the end, the methods used depend on the question being asked.

Grimshaw then described an increasingly common approach to quality improvement research, where initial research focuses on an assessment of barriers and supports in the practice environment. This is followed with the identification of strategies to overcome those barriers, leading to improvements in processes and outcomes of care.

The literature generally supports the notion that changing provider behavior and improving quality is possible. Frequently, quality improvement interventions lead to modest results that are still potentially important from a population perspective. For example, the average absolute improvement in compliance with evidence-based recommendations across 118 RCTs of audit-and-feedback is around 5 percent, but this comes with a substantial amount of variability (–2 to +71 percent). Further, there is considerable evidence that low-intensity interventions may be effective in improving quality. The need is not for new methods, Grimshaw argued, but for new approaches.
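The population perspective can be illustrated with simple arithmetic. The sketch below converts an absolute improvement in compliance, in percentage points, into the number of additional patients who would receive recommended care. The population of 100,000 is a hypothetical figure chosen for illustration; the three effect sizes are the average and the range endpoints reported above.

```python
def additional_patients(population, absolute_improvement_pct):
    """Additional patients receiving recommended care for a given
    absolute improvement expressed in percentage points."""
    return population * absolute_improvement_pct / 100


population = 100_000  # hypothetical population size, not from the workshop
for label, pct in [("lowest", -2), ("average", 5), ("highest", 71)]:
    extra = additional_patients(population, pct)
    print(f"{label} effect ({pct:+d} points): {extra:+,.0f} patients")
```

Under these assumptions, the average effect of 5 percentage points corresponds to 5,000 additional patients receiving recommended care, which is why a modest effect can still matter at scale.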

Many questions remain to be answered by quality improvement research, such as the generalizability of interventions and their mechanisms. Additionally, researchers need to better understand the likely confounders of quality improvement in order to balance the unknown confounders. Economic issues, such as the opportunity costs of disseminating ineffective or inefficient interventions, are one such confounder. Quality improvement research should use an interdisciplinary approach and introduce different perspectives and methods from other disciplines (e.g., behavioral and psychological theorists). Priorities for future research include developing methods of barrier identification, optimizing interventions, and evaluating effectiveness and efficiency of different strategies.

MULTIPLE RESEARCH METHODS

Whereas Heidenreich and Grimshaw discussed methods of working with the complexity of and barriers to quality improvement research, Trish Greenhalgh of University College London discussed the problem that quality improvement research is undertheorized. As examples of how theories can be used in quality improvement research, Greenhalgh described two approaches to quality improvement undertaken by her own team: language interpretation services and electronic medical records. (See Appendix C for her submitted responses to the questions.)

In her first example, Greenhalgh said she had worked with a local service provider in London that had identified its biggest problem as the poor state of language interpretation services in primary care. To better understand the problem, Greenhalgh used a qualitative study design, interviewing patients, doctors, interpreters, and managers about their stories of interpreted consultations. The focus of these interviews and subsequent analyses was each person’s own narrative of the interpreted consultation as he or she saw it. Because the narrative serves as a window to the wider organizational context, Greenhalgh’s team was able to use participants’ stories to elucidate organizational routines, defined by organizational sociologist Martha Feldman as “repetitive, recognizable patterns of interdependent actions, carried out by multiple actors” (Feldman and Pentland, 2003). In the study of organizational routines, a useful unit of analysis is often the handover between two people, a common occurrence in contemporary health care. By studying the routines associated with the provision of professional interpreters, Greenhalgh was able to compare organizations having strong routines with those having weak routines. Those organizations with weak routines in this area tended to rarely use interpreting services (or to use them inefficiently) and thus were lower performers. In organizations with well-developed routines, junior administrative staff often were responsible for refining and applying the routines; thus, apparently low-status and “unimportant” staff had a high degree of agency and influence in whether the interpreting service was actually available in the organization. Greenhalgh found that improvement was often driven by the creativity of individual staff in shaping organizational routines.

Greenhalgh’s second example was the research and evaluation of the new Summary Care Record, an online version of the electronic patient record accessible to any United Kingdom health professional. Two parallel studies, funded by separate organizations (the Medical Research Council and the Department of Health), are underway that focus on the research and the service evaluation components of implementing the Summary Care Record. The studies focus on the various parts of a system required to implement electronic patient records, including the receptionists, nurses, and physicians as well as the routines to determine how work is constrained by the electronic records. A particular point of focus is “workarounds,” the informal actions people take to make complex interventions work in practice when there is a mismatch between what is supposed to happen and what is practical or possible. As discussed in the above two examples, research projects with built-in evaluations must be linked with making improvements in quality, Greenhalgh recommended.

A second recommendation was to explore the use of stories to capture the complexity of health care services, including the implementation of interventions, as well as the creativity of staff to stimulate improvement. Finally, Greenhalgh recommended the strengthening of theory to better understand organizational-level data. Although RCTs have a place in quality improvement research, other methods also must be employed to get a full picture.

VETERANS ADMINISTRATION

Brian Mittman of the VA and the journal Implementation Science spoke about his experiences with quality improvement research, in particular the VA’s Quality Enhancement Research Initiative (QUERI). The QUERI program is designed to maximize validity and rigor, while also recognizing practical importance. To achieve this, QUERI focuses on meeting the needs of clinicians, managers, and researchers using a two-pronged approach addressing both practice improvement goals and research goals. An additional goal is to balance internal and external validity. Formal frameworks guide the planning and design of research projects. With respect to the rigor of research design and method, QUERI’s approach stays away from designs that do not adequately account for secular trends, such as single case studies and before-and-after studies. However, the approach does respect context, heterogeneity, and the importance of change processes and mechanisms.

The VA’s highly developed information technology system, its research-supportive management and culture, and its strong levels of funding for research-based quality improvement, Mittman concluded, together make up the VA’s infrastructure, which has been heralded as one of the best prepared systems to support quality improvement research.

In response to a question about the effectiveness of quality improvement strategies identified by the VA, Mittman noted that such strategies do not lead to clear decisions regarding effectiveness. This notion is derived from the idea that a strategy’s effectiveness is highly dependent on the setting and context for implementation. In fact, Mittman said, some researchers believe that what drives change is not the specific quality improvement strategy itself, but the manner in which it is implemented. The actual effects of a quality improvement strategy may not always be as useful as the derived observations of the change processes. Based on this thinking, selecting an improvement strategy based solely on an intervention’s effects in other settings may not be very useful; the organizational and contextual features (e.g., local circumstances, resources, and training) of implementing the strategy must be considered. Echoing sentiments from Grimshaw, Mittman stated that success in quality improvement will likely require multifaceted, multilevel campaigns. However, more effective ways to disseminate knowledge are needed. Although written documentation is necessary, it is not sufficient.

Is the evidence needed for quality improvement fundamentally different from that required for clinical medicine? Mittman said the evidence for quality improvement is very different and that the overreliance on using the methods and approaches from clinical medicine has hindered the advancement of quality improvement research. This is driven largely by the heterogeneity seen in both interventions and contexts. The evidence requirements also differ in that quality improvement focuses more on the data and analyses of processes than on impact and outcomes data. In quality improvement research, the need for implicit knowledge is greater than the need for explicit knowledge, the guiding framework for clinical research as exemplified by RCTs. Although RCTs are important, emphasis on the use of other methods for data collection and analysis is necessary to develop other types of evidence.

Quality improvement research should be supported by social scientists, Mittman said. Additionally, quality improvement researchers should have some minimum level of training in the social and clinical sciences. Researchers need to be trained to understand the nature of the kind of evidence that needs to be produced.

PRACTICE-BASED RESEARCH NETWORKS

Bill Tierney of Indiana University and the Regenstrief Institute reviewed methods used in quality improvement research: RCTs, prospective cohort studies, retrospective cohort studies, and cross-sectional studies. RCTs are often the most desired method, Tierney said, because of their rigor and lower susceptibility to bias. However, RCTs also have drawbacks: they are time consuming, expensive, and difficult to perform. Perhaps most importantly, findings from RCTs may be the least generalizable. Prospective cohort studies are often the next best alternative because they are quicker, cheaper, and easier to conduct. Similar to RCTs, prospective cohorts offer the advantage of capturing the most relevant measures for answering the question at hand. Even easier and less expensive to conduct are retrospective studies that rely on existing measures of everyday care and management. Although retrospective studies are good for understanding patterns of care and generating hypotheses, they are subject to severe observational bias and rarely provide definitive answers. Qualitative studies employ cross-sectional methods that provide data as snapshots in time. Firm conclusions are difficult to draw from such studies because findings often cannot be generalized, but they can help define the problem(s) needing more rigorous study and gauge their extent. However, results from cross-sectional studies are highly actionable in the local environments in which they are conducted. Researchers must use the most appropriate research methods when RCTs are not possible.

In addition to the wide range of methods, a variety of measures are used to study quality improvement. For example, use of health care services, vital signs, and test results are easily derived from electronic medical records. However, aspects of care important to patients—how they feel, their quality of life, functional status, and preferences in care—are more difficult to measure, are not routinely recorded by health care providers, and therefore are often left out of quality improvement efforts. These types of data can only be collected from questionnaires completed by providers or patients.

In turn, collecting data on measures of quality requires adequate infrastructure. One formal structure is the Practice-Based Research Network (PBRN).2 Indiana’s PBRN includes both inner-city community health centers and suburban office practices. The PBRN collects data through a citywide network of hospitals and an electronic medical records system employed by Indiana University for more than 35 years. Information is shared through a health information exchange that gathers information from a great number of hospitals, freestanding labs and x-ray facilities, and physicians in central Indiana to encourage community-based studies. Arguably the most important piece of infrastructure, however, is for clinical leadership to be committed to research in quality improvement and safety.

Tierney presented some of his own research findings based on his background in internal medicine, geriatrics, and informatics. Tierney has performed numerous types of research, including RCTs (e.g., evaluating multidisciplinary care management in geriatrics and internal medicine in areas such as depression, dementia, and computer decision support systems), cohort studies (e.g., assessing weight management in elders), retrospective cohort studies (e.g., comparing electronic medical records with chart audit programs for quality indicators), and cross-sectional studies. To study quality improvement, researchers at Indiana University have also used an approach called appreciative inquiry, a method that allows people to share stories and provide insights into parts of interventions not currently measurable, such as characteristics of organizational context. In comparing these stories, researchers can identify the values, barriers, and facilitators affecting quality of care.

In his research, Tierney has found computer decision support for preventive care to be effective. However, this approach has not been able to reliably yield improvements in chronic disease management. Prevention and chronic disease management are different problems that must be approached in different ways, Tierney said. Qualitative research is also largely underused. To more effectively use qualitative research and to have the greatest impact on provider and patient activities, behavioral scientists need to be more involved in the study of health care quality improvement.

Variability also exists by site. For example, Tierney and Indiana University have established a PBRN in western Kenya that delivers HIV/AIDS care to more than 55,000 patients at 20 sites. Strategies found to be effective in Kenya may work only in Kenya and East Africa and may not generalize to practices in the United States. Additionally, some quality improvement interventions, such as multidisciplinary case management, may be effective in some contexts and diseases, but they may be too costly to implement broadly in resource-constrained practices in the United States or elsewhere. The effectiveness of an intervention is difficult to predict. Interventions have been proven to be effective in areas thought to be difficult, such as keeping frail elders out of institutions and managing dementia. But interventions in other areas originally believed to improve quality were not successful, such as managing chronic renal insufficiency using accepted evidence-based practices.

These findings suggest that more extensive research efforts are needed to establish useful approaches to improving quality and study their effects. For example, it must be determined how to incorporate health-related quality-of-life measures into everyday health care and quality improvement research. Although useful quality-of-life measures exist, using them in everyday care is not widely taught in U.S. medical schools. Another area of research needed is the engineering of clinical decision support tools that physicians will use, particularly in disease management. These tools should become a standard part of care processes and medical records, but must be meaningful and target the correct actor (e.g., develop tools to be used by nurses to support functions nurses handle). People must be able to see improvement tools as methods to help themselves change instead of the system forcing change. Finally, appropriate measures of costs should be developed.

PATIENT SAFETY

Kaveh Shojania of the Ottawa Hospital and the University of Ottawa spoke about quality improvement research from the patient safety perspective. (See Appendix C for responses to questions.) No quality improvement strategies have been proven to be very effective, Shojania said. General improvements have been made, but they are small in magnitude and often cannot be generalized; major breakthroughs are lacking. For example, during a review of 11 various quality improvement interventions for diabetes, hemoglobin A1c levels improved by an average of 0.42 percent across 66 trials, which would result in questionable effects on clinical care (Shojania et al., 2006). Although processes of care are often improved, processes do not usually translate into meaningful improvements in outcomes of care. There are no magic bullets, Shojania concluded.

Many question the model for approaching quality improvement in health care because of its lack of major breakthroughs over the past 20 years of research, but Shojania proposed that the expectations may be too high. The majority of clinical therapies yield mostly small to modest benefits. Improvements of 3 to 4 percent in breast cancer treatments supported by trillions of dollars of research often make headlines. The war on cancer has been fought for more than 30 years with steady, incremental improvements. Compared with the basic science of cancer biology, far less is known about quality improvement, yet comparable investments have not been made. Therefore, Shojania does not believe that quality improvement requires different types of research than clinical research.

Common arguments that quality improvement research should be held to different standards of evidence include the following: the problem is too urgent to wait for evidence; quality improvement is too complex to study; some solutions do not require evidence; evaluating quality improvement interventions is too costly; and the side effects of quality improvement interventions do not cause major problems. Shojania refuted each argument to maintain that quality improvement research should not require separate standards of evidence.

The first argument derives from the notion that too many unnecessary deaths occur and that the problem is too urgent to wait for a large, convincing body of evidence to develop. However, medical errors reportedly were the eighth leading cause of death (IOM, 1999), and should be held to the same standards of evaluation as those applied to the top seven, Shojania said.

A second argument for quality improvement requiring different standards of evidence is that quality improvement is too complex to study adequately with even the most rigorous methods, such as RCTs. The purpose of RCTs, however, is to balance unknown factors between control and intervention subjects. In fact, there are more unknown factors in quality improvement than in clinical science. RCTs are thus well suited to the study of quality improvement and have been found to be very useful in the identification of ineffective strategies (e.g., an RCT of continuous quality improvement combined with the chronic care model found that while the process was successfully implemented, outcomes failed to improve). RCTs can help identify where resources should be concentrated.
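The point that randomization balances unknown factors can be shown with a small simulation. The sketch below is an illustration under stated assumptions, not an analysis presented at the workshop: each simulated subject carries a hidden, unmeasured factor drawn from a standard normal distribution, and assignment to intervention or control is a fair coin flip. The function name, sample size, and seed are hypothetical.

```python
import random
from statistics import mean


def hidden_factor_imbalance(n_subjects=10_000, seed=0):
    """Return the difference in the mean of an unmeasured factor between
    randomly assigned intervention and control groups."""
    rng = random.Random(seed)
    # Hidden factor (e.g., baseline motivation) that no one measures.
    hidden = [rng.gauss(0, 1) for _ in range(n_subjects)]
    # Simple randomization: each subject is assigned by a fair coin flip.
    in_intervention = [rng.random() < 0.5 for _ in range(n_subjects)]
    intervention = [h for h, a in zip(hidden, in_intervention) if a]
    control = [h for h, a in zip(hidden, in_intervention) if not a]
    return mean(intervention) - mean(control)


print(f"imbalance in hidden factor: {hidden_factor_imbalance():.3f}")
```

With thousands of subjects the difference is close to zero even though the factor was never observed, which is the property that lets an RCT attribute outcome differences to the intervention rather than to unknown confounders. With small samples the imbalance can be much larger.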

The third argument refers to the notion that some ideas are so logical that evidence is not needed. A tongue-in-cheek article in the British Medical Journal, a systematic review of RCTs to determine the effectiveness of parachute use to prevent death and trauma, which (not surprisingly) found that no such RCTs had ever been conducted (Smith and Pell, 2003), reflects this view. However, Shojania pointed out that even if efficacy is taken for granted, implementing even the most apparently simple or straightforward intervention can be complex. For example, hand washing represents an incredibly basic patient safety strategy, yet increasing adherence to this widely recommended parachute equivalent tends to be extraordinarily difficult. Although the goal is not questioned, the most effective method for achieving the goal remains unclear.

The fourth argument for having different standards of evidence in quality improvement is that evaluating quality improvement interventions is too costly, citing a cost of more than $1 million for trials of computerized provider order entry systems (CPOE) (Leape et al., 2002). These systems themselves cost more than $20 million each and will have little return on investment if implementation problems occur, if many clinicians do not use the system, or if the system introduces new problems, all examples that have been documented. Given that billions of dollars are at stake for hospitals across the country to implement CPOE, spending several million dollars to confirm effectiveness for various systems and identify optimal implementation strategies seems quite cost-effective, Shojania said. Reducing work hours and mandating medication reconciliations are other examples of costly interventions that have relatively low returns on investment because the strategies for how best to implement them remain unknown.

The fifth argument Shojania rebutted was the idea that the side effects produced by quality improvement and patient safety are not of great consequence. Shojania dismissed that notion as false for two reasons. First, quality improvement interventions generally increase the amount of care people receive, giving rise to the known side effects of medications and other treatments involved. Second, changes in complex systems often result in unintended consequences. This was exemplified in articles reporting new errors in care that were introduced by CPOE, bar coding, work-hour reductions, and infection control isolation protocols. Based on these arguments, Shojania concluded that quality improvement tends to have more in common with the rest of clinical medicine than is generally recognized.

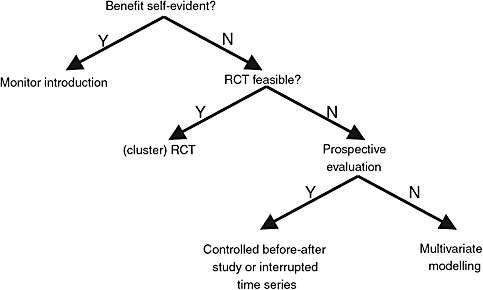

Shojania presented a framework for evaluation (Figure 3-1). Although the benefit of some interventions is self-evident, their implementation should be monitored to ensure no adverse unintended consequences arise. Most interventions, however, are not self-evident. For these interventions, it must be asked if an RCT should be conducted. If an RCT is not feasible, rigorous prospective study designs such as controlled before-and-after studies or multivariate modeling should be used. These evaluative models are used throughout health services research and are appropriate for quality improvement, Shojania said. Lessons from other disciplines, such as cognitive psychology, sociology, and qualitative research, should be employed in studying quality improvement. The need is not for

FIGURE 3-1 Framework for evaluation of interventions.

RCT = randomized controlled trial.

SOURCE: Adapted from Lilford (2005).

new models of evidence in quality improvement research, but for investments comparable to other areas in medicine in terms of time, money, and human resources.
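The decision logic of the framework, as described in the text, can be sketched as a short function. This follows the prose summary only and may omit branches shown in Figure 3-1; the function name, parameter names, and return strings are hypothetical, and the step "conduct an RCT when one is feasible" is an inference from the text rather than a quoted rule.

```python
def choose_evaluation(benefit_self_evident: bool, rct_feasible: bool) -> str:
    """Suggest an evaluation approach for a quality improvement intervention,
    following the prose description of the framework."""
    if benefit_self_evident:
        # Even self-evident interventions need monitoring of implementation.
        return "implement and monitor for unintended consequences"
    if rct_feasible:
        return "randomized controlled trial"
    # Fallback when randomization is not feasible.
    return ("rigorous prospective design, such as a controlled "
            "before-and-after study or multivariate modeling")


for self_evident, feasible in [(True, False), (False, True), (False, False)]:
    print(self_evident, feasible, "->", choose_evaluation(self_evident, feasible))
```

Checking self-evidence first mirrors the order of the questions in the text: feasibility of an RCT only matters once it is established that the benefit of the intervention is not self-evident.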

OPEN DISCUSSION

An open discussion followed the speaker presentations, during which members of the audience were invited to ask the panelists questions. This section summarizes that discussion.

Panel members were asked to discuss the applicability of community-based participatory research methods in quality improvement. Greenhalgh responded that those methods are extremely valuable because community members have high buy-in to studies when they help develop the interventions. The downside, however, is that participants may resist being randomized: the flip side of buy-in is a lack of equipoise, stemming from a firm belief that the locally developed program is useful and that standard care is a lesser option. Mittman noted that similar methods are being used at the VA, likening the VA approach to health system–based participatory research, in which the leadership of the VA health system works in partnership with researchers. Grimshaw said one of his key conclusions from the forum thus far is the need for multiple methods and a good framework. There are various stages for evaluating complex interventions: theory building, modeling approaches, and exploratory trials that are followed by definitive large-scale randomized trials. A range of methods is available for each stage; the challenge is to determine which method is most appropriate in individual circumstances, Grimshaw said.

Another question posed was whether one could identify specific factors and characteristics in people who successfully implement quality improvement interventions, and if so, whether people could be trained to be more successful. Tierney said successful implementation requires collaboration between clinical leadership and multidisciplinary researchers. With respect to whether people could be trained, Tierney said it is possible, but it must occur under an apprenticeship model: doing and showing the trainee effective approaches rather than telling the trainee what to do. Mittman agreed, adding that experience is critical to successfully researching and implementing quality improvement because of its complexity. Grimshaw said that whether better training can accelerate effective leadership remains unknown. The field needs to evaluate whether current leaders are equipped to bring the field forward and whether future leaders will be able to effect change.

Another question was whether quality improvement research should undergo ethics review. Tierney, a member of an institutional review board (IRB),3 said not all quality improvement projects should be considered human subjects research. For example, quality improvement studies aimed at improving care delivery in specific hospitals and practices without generating new knowledge should not need IRB approval. However, as an editor of a medical journal, Tierney acknowledged that most journals require studies to be reviewed by an IRB, and this might drive quality improvement researchers to obtain IRB approval for studies that otherwise might not require it. Many mechanisms exist to address quality improvement research in IRBs, such as expedited and exempt review processes, which counters the common belief that IRB approval requires large investments of time and effort. Tierney encouraged quality improvement researchers to serve on IRBs, both to educate themselves about IRB processes and to educate other IRB members about quality improvement research. Heidenreich agreed with Tierney’s point that many mechanisms exist to attain approval and that if research is being conducted, the study should undergo the IRB process. Greenhalgh said similar issues are being discussed in the United Kingdom, where research ethics application forms are often not designed for quality improvement projects. Greenhalgh recommended that researchers describe the uncertainties and parameters of projects in the notes sections of ethics forms and not send junior staff to defend complex projects. Many panelists noted they had not been subject to major delays or problems in attaining IRB approval.

When asked whether patients themselves should be randomized, Heidenreich said it depended on the intervention, but for the majority of cases, the point of randomization should be the physician or facility. Grimshaw agreed, noting that quality improvement interventions, and thus quality improvement research, often operate at the level of the provider or organization and not the level of individual patients.
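Randomizing at the level of the provider or facility rather than the patient, commonly called cluster randomization, can be illustrated with a minimal Python sketch. The function name `assign_arms_by_cluster`, the patient and facility identifiers, and the simple half-and-half allocation are assumptions made for illustration; they do not describe any panelist's actual study procedure.

```python
import random


def assign_arms_by_cluster(patient_to_cluster, seed=0):
    """Assign study arms per cluster (e.g., facility), not per patient.

    patient_to_cluster maps a patient ID to the ID of that patient's
    physician or facility. Every patient inherits the arm of the cluster.
    """
    rng = random.Random(seed)  # seeded so the allocation is reproducible
    clusters = sorted(set(patient_to_cluster.values()))
    rng.shuffle(clusters)
    half = len(clusters) // 2
    # First half of the shuffled clusters gets the intervention, the rest control.
    arm_of_cluster = {
        cluster: ("intervention" if index < half else "control")
        for index, cluster in enumerate(clusters)
    }
    return {
        patient: arm_of_cluster[cluster]
        for patient, cluster in patient_to_cluster.items()
    }


if __name__ == "__main__":
    # Hypothetical patients attached to four hypothetical facilities.
    patients = {"p1": "A", "p2": "A", "p3": "B", "p4": "C", "p5": "D"}
    for patient, arm in assign_arms_by_cluster(patients, seed=1).items():
        print(patient, arm)
```

Because the arm is drawn once per facility, all patients treated at the same facility fall in the same arm, which matches an intervention that operates on the provider or organization as a whole.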

Panelists were asked what types of research they would like to see going forward. Shojania emphasized the need for qualitative research to generate hypotheses, which should be followed up by empirical studies of effectiveness. Heidenreich called for analyses of the implementation of quality improvement as a form of postmarketing surveillance, as well as RCTs of different implementation methods. Grimshaw said building a common taxonomy was of primary importance and would involve input from a variety of stakeholders, including clinicians, and from researchers in other disciplines (e.g., organizational science and psychology). Grimshaw also said the basic science of quality improvement needs to become better developed, while building on existing knowledge in the social sciences. Mittman hoped for the creation of a road map identifying the theoretical frameworks and foundations for determining the most appropriate sequence of research projects. Greenhalgh hoped to see better publication standards for methods sections (where the details of interventions should be explained), noting that the information currently gleaned from the literature is often limited by journals' restrictions on the length of methods sections. Tierney identified three specific needs: (1) to incorporate qualitative research into rigorous trials (in agreement with Heidenreich); (2) to involve industrial engineers and human factors engineers; and (3) to introduce more health services research into HIV/AIDS care in sub-Saharan Africa, where a large portion of the more than $30 billion investment has been spent inefficiently because of inadequate knowledge of proper care delivery in resource-poor countries.

In summary, there is no magic bullet to improve quality. As many of the speakers suggested, all available research methods are valuable, and researchers must learn which methods are most appropriate for answering different questions. The result will likely be multifaceted, multilevel research approaches that require input from a number of disciplines. Speakers also largely recognized the need to understand not only what works, but also why an intervention does or does not work. This reflects the need for research studies to better discuss the roles of organizational and contextual factors, such as the role of leadership. A better understanding of these factors will help identify which improvements are not specific to an individual hospital or clinic but can be generalized to larger audiences.