1

Introduction

DEFINITIONS OF THE TERM “IT SYSTEM”

The statement of task for this study calls for an examination of the acquisition and the test and evaluation (T&E) processes as specifically applied to information technology (IT) in the Department of Defense (DOD). At the outset of the study, the Committee on Improving Processes and Policies for the Acquisition and Test of Information Technologies in the Department of Defense discovered that the term “IT system” was used in different ways by briefers to the committee as well as among members of the committee itself. Further investigation showed that the DOD provides no specific definition of “IT system” per se (see Box 1.1 for relevant examples). For purposes of this study, the committee decided to consider IT systems to be just those systems that support the DOD “information enterprise” (see definition in Box 1.1), but excluding IT embedded in weapons systems and in DOD-unique hardware. In particular, the term as used by the committee signifies systems expected to run on or interface with existing infrastructure and systems that are user-facing; moreover, “IT system” as used by the committee means systems that are delivered through the acquisition process (and not systems “homegrown” in individual commands).

The committee subdivided IT systems as specified above into two categories that differ in terms of development requirements, technical characteristics, and risk:

- Software development and commercial off-the-shelf integration (SDCI) programs: those that focus on the development of new software to provide new functionality or on the development of software to integrate commercial off-the-shelf (COTS) components, and

- COTS hardware, software, and services (CHSS) programs: those that are focused exclusively on COTS hardware, software, or services without modification for DOD purposes (that is, the capabilities being purchased are determined solely by the marketplace and not by the DOD).

BOX 1.1 Definitions Related to the Term “IT System” in Department of Defense Directives

Department of Defense Instruction (DODI) 5000.2, published most recently in December 2008, defines the authoritative DOD acquisition process. The terms “IT system,” “information technology system,” and “information system” are not explicitly defined in DODI 5000.2, although the term “IT system” is used in several places, as is the term “information system.” An “automated information system (AIS)” is defined as follows:

A system of computer hardware, computer software, data or telecommunications that performs functions such as collecting, processing, storing, transmitting, and displaying information. Excluded are computer resources, both hardware and software, that are:

This definition focuses on characteristics relevant to the matter of who manages acquisition oversight for various types of programs based on the application, funding, or sensitivity of the program. DOD Directive (DODD) 8000 specifies oversight responsibilities for DOD information-management activities and supporting information technology, implementing provisions of the Information Technology Management Reform Act of 1996 (part of the National Defense Authorization Act for Fiscal Year 1996, Public Law 104-106). “Information technology” is defined in the directive as follows:

Any equipment or interconnected system or subsystem of equipment, used in the automatic acquisition, storage, manipulation, management, movement, control, display, switching, interchange, transmission, or reception of data or information by the executive agency, if the equipment is used by the executive agency directly or is used by a contractor under a contract with the executive agency that requires the use of that equipment; or of that equipment to a significant extent in the performance of a service or the furnishing of a product. Information technology includes computers, ancillary equipment, software, firmware and similar procedures, services (including support services), and related resources; but does not include any equipment acquired by a Federal contractor incidental to a Federal contract.2

This definition of information technology has, unfortunately, been too often interpreted as communications hardware-focused, although its scope is clearly broader. As a result, the committee chose as its point of departure for this study the definition of the “DOD information enterprise” provided in the glossary of DODD 8000.1:

Department of Defense Information Enterprise. The DOD information resources, assets, and processes required to achieve an information advantage and share information across the Department of Defense and with mission partners. It includes: (a) the information itself and the Department’s management over the information life cycle; (b) the processes, including risk management, associated with managing information to accomplish the DOD mission and functions; (c) activities related to designing, building, populating, acquiring, managing, operating, protecting, and defending the information enterprise; and (d) related information resources such as personnel, funds, equipment, and IT, including national security systems.3

EFFECTIVE APPROACHES TO INFORMATION TECHNOLOGY IN THE COMMERCIAL SECTOR

The information age has ushered in an era of personalized products and services built on standard, massively replicable platforms: a powerful combination of centrally supported IT and end-user-driven IT (which generally relies on centrally managed IT to provide at least some of the underlying computing, storage, and communications capabilities). The result has been an ever-increasing empowerment of individuals and organizations, giving them the ability to innovate their technical capabilities, their business processes, and their own product and service offerings. Accompanying this empowerment has been a rising set of expectations for performance of the information technology foundations through which these expectations are met. Hence the environment for delivering capability has become increasingly competitive, with emergent, tailored solutions for certain kinds of problems realized in days and months, sometimes by the customers themselves.

How are commercial IT market leaders managing these demands? They are doing so by instituting standardization and discipline at the heart of their respective IT enterprises while enabling agile, customer-led innovation at the edge of these enterprises. Most large IT providers have developed highly reliable, available, and scalable computing environments as the backbone of their product and service offerings. Consider search engines, commodity trading platforms, online auctions, and online marketplaces. All of these are based on commodity hardware and software that have been integrated to provide uninterrupted, extensible computing power, in many cases around the globe. These platforms are defined and their interfaces are exposed, at least internally, with an emphasis on interface stability and longevity.1,2 In some cases, the platform interfaces are exposed and accessed externally.3,4

Exposed, stable interfaces enable customers to apply computing power in new and unanticipated ways without compromising configuration control by the service provider or hindering the overall customer experience. When providers expose robust interface points, customers can select (or build) their own uniquely tailored experiences, thereby enjoying high satisfaction themselves and providing a reliable business base for the supplier.

The perception, and sometimes the reality, is that customer-led innovation is a “free-for-all” at the edge. Indeed, in many cases, consumer-facing providers cannot, or do not seek to, control the edge because their market is so diverse. However, this is not the general case for most enterprises. Many companies are successful at actively pursuing customer-led innovation as a principal means of driving the company’s evolution while doing so in a methodical, managed way. Integrating the customer overtly into the product or service evolution is viewed as essential to success.5,6,7 At the same time, customer-led innovation has not resulted in delivery that is completely customer-driven: commercial developers still have their own rhythms for delivering features and releases, for which customers must wait.

For both centrally defined and edge-defined IT, many successful commercial IT suppliers have organized development around two key principles: (1) portfolio management8 and (2) development by small teams employing agile software-development methods.9,10 Portfolio management is a formal process whereby limited resources are strategically allocated to a subset of possible projects. Project risk, overall objectives, costs, benefits, and project interdependencies are all weighed, and a corporate-level decision is rendered on strategic investments. Implemented properly, portfolio management is an agile management tool that can accept current, real-world data and quickly evaluate and recommend changes to the portfolio.

The use of small teams employing agile methods has many advantages. Among them is the minimal enterprise expense that is incurred prior to the engagement of the first users and to all subsequent releases until a business base is established. If a product or service fails to meet business objectives at any point in its evolution, it can be canceled or redirected, at relatively low cost.11 The agile approach is one specific approach to software development within a larger category known as iterative, incremental development (IID). A survey article12 on the history of IID chronicles a long succession of major technology programs that have successfully used IID, including the X-15 hypersonic aircraft program and the application of IID methods to software projects on NASA’s Project Mercury. By the 1970s, IID was more widely applied to major software projects at selected major prime government contractors, including TRW and IBM. The 1980s and 1990s saw significant evolution in IID approaches, and in 2001 the first text on the subject, Agile Software Development, by Alistair Cockburn, was published.13 A more in-depth discussion of IID is provided in Chapter 3 of this report. This chronology situates agile and related approaches within a broader context and also demonstrates that IID has a long history of being applied successfully for different types and scales of problems both in the DOD and in the commercial sector.

5 Nanette Byrnes, “Xerox Refocuses on Its Customers,” Business Week, April 18, 2007.

6 “Lego Mindstorms Advanced User Tools.” Available at http://mindstorms.lego.com/Overview/NXTreme.aspx; accessed June 26, 2009.

7 “National Instruments’ LabView.” Available at http://zone.ni.com/dzhp/app/main; accessed June 26, 2009.

8 M.W. Dickinson, A.C. Thornton, and S. Graves, “Technology Portfolio Management: Optimizing Interdependent Projects over Multiple Time Periods,” IEEE Transactions on Engineering Management 48(4):518-527, November 2001.

9 Ade Miller and Eric Carter, “Agility and the Inconceivably Large,” pp. 304-308 in Proceedings of the Agile 2007, IEEE Computer Society, Washington, D.C., 2007.

10 Association for Computing Machinery, “A Conversation with Werner Vogels,” 2006.

11 Lan Cao and Balasubramaniam Ramesh, “Agile Requirements Engineering Practices: An Empirical Study,” IEEE Software 25(1):60-67, January/February 2008.

12 Craig Larman and V.R. Basili, “Iterative and Incremental Development: A Brief History,” IEEE Computer 36(6):47-56, June 2003.

THE DEFENSE ACQUISITION SYSTEM

The complex Defense Acquisition System (DAS) has three major components, defined as follows:

- The Joint Capabilities Integration and Development System (JCIDS) is aimed at identifying, assessing, and prioritizing joint military capability needs. The Joint Staff and the Joint Requirements Oversight Council champion it.14

- The Planning, Programming, Budgeting and Execution System (PPBES) allocates resources to capabilities deemed necessary to accomplish the DOD’s missions. The Under Secretary of Defense, Comptroller champions it.15

- The Defense Acquisition Management System (DAMS) establishes the “management framework for translating capability needs and technology opportunities, based on approved capability needs, into stable, affordable, and well-managed acquisition programs that include weapon systems, services, and automated information systems.” The Under Secretary of Defense for Acquisition, Technology and Logistics (USD AT&L) champions the DAMS.16

Each of these components is discussed in more detail in Appendix A.

The inherent difficulties in synchronizing these three DAS components have implications for all types of acquisition programs, including those delivering IT systems. The January 2006 report of the Defense Acquisition Performance Assessment (DAPA) project concluded that “the budget, acquisition and requirements processes [of the Department of Defense] are not connected organizationally at any level below the Deputy Secretary of Defense.”17 The DAPA panel specifically considered the impact of this disconnect on DOD software-related programs and made a number of recommendations aimed at addressing the problems.

13 A. Cockburn, Agile Software Development, Addison-Wesley, Boston, Mass., 2001.

14 Defense Acquisition University, JCIDS Definition. Available at http://www1.dau.mil/; accessed June 2009.

15 Defense Acquisition Guidebook Section 1.2. December 2004. Available at https://akss.dau.mil/dag/guidebook/IG-c1.2.asp; accessed June 2009.

16 DOD Instruction 5000.2, “Operation of the Defense Acquisition System,” 2008, Paragraph 1.b.

The present committee’s report is focused largely on the DAMS component of the DAS. The PPBES is a well-established process, and its demands are largely predictable. The JCIDS requirements are sufficiently general to provide the necessary flexibility, and are integrated with the existing DAMS. The committee believes that the present Defense Acquisition Management System constitutes a significant challenge to the successful acquisition of IT programs and that changing it represents a promising opportunity to improve the performance of these programs. Moreover, the committee believes that these changes can be successfully integrated with the other existing components of the DAS. The DAMS thus constitutes the focus of this report, although the changes proposed by the committee may also have implications for the JCIDS and PPBES components.

RESULTS OF CURRENT ACQUISITION PROCESSES AND PRACTICES FOR INFORMATION TECHNOLOGY SYSTEMS

The committee received a briefing from the Office of the Assistant Secretary of Defense (Networks and Information Integration) (OASD [NII]) regarding the time that a set of major automated information system (MAIS) programs took to progress through the DOD acquisition system. The set was composed of 23 MAIS programs (3 of which were labeled as extensions of existing programs) that were initiated in fiscal year (FY) 1997 or later and that were completed or discontinued by early 2009. The presentation provided summary charts, and the OASD (NII) later provided the committee with the underlying data.18 This data set gives the dates on which each program started and completed the following phases in the acquisition cycle: the analysis of alternatives (AoA), the economic analysis (EA), engineering and manufacturing development (which begins following Milestone B [MS B]), and the achievement of initial operating capability (IOC). Some programs started a phase without completing previous phases, and some programs completed a phase without continuing to the next phase.

In the figures and table in this chapter, those programs that entered the acquisition process at AoA are labeled A to H. (These labels are used rather than the program names because the objective of the analysis was to establish time lines rather than to examine issues associated with individual programs.) The programs that entered EA without first completing an AoA are labeled AA to DD. The programs that started at MS B are labeled AAA to HHH.

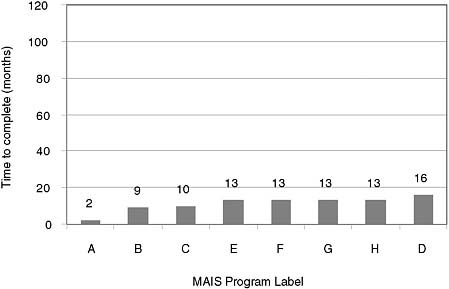

Eight programs started the acquisition process at the AoA phase (pre-Milestone B). Figure 1.1 indicates the time in months for each program to complete its AoA. The average time for these programs to complete their AoA was 11 months; the median was 13 months. Five of these programs (those labeled “A,” “B,” “C,” “D,” and “H”) went beyond AoA completion.

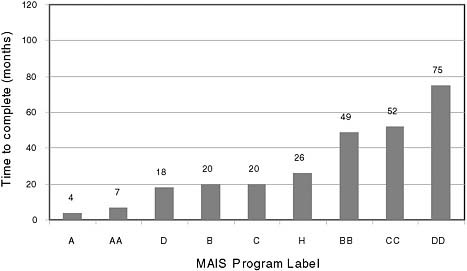

Nine programs in this data set completed their economic analysis (Figure 1.2). Five of these nine were continuations of efforts shown in Figure 1.1. The five programs in common in Figures 1.1 and 1.2 took an average of 28 months and a median of 30 months to complete both phases, roughly 2½ years. The remaining four programs reflected in Figure 1.2 entered EA without first completing an AoA. Overall the average time for programs in this data set to complete the EA was 30 months; the median was 20 months.

FIGURE 1.1 Time taken to complete the analysis of alternatives (AoA) for the eight major automated information system (MAIS) programs that started the acquisition process at the AoA phase. NOTE: See the accompanying text for an explanation of the program labels. SOURCE: Compiled by the committee from data provided by the Department of Defense for 23 MAIS programs initiated in FY 1997 or later and completed or discontinued by early 2009.

FIGURE 1.2 Time taken to complete the economic analysis phase (AoA completion to Milestone B) for major automated information system (MAIS) programs during FY 1997 to early 2009. NOTE: See the accompanying text for an explanation of the program labels. SOURCE: Compiled by the committee from data provided by the Department of Defense.

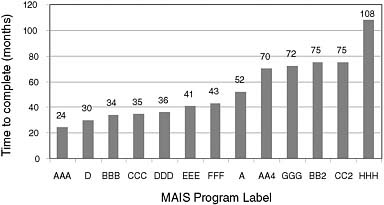

Figure 1.3 shows the time that it took for 13 programs to go from Milestone B to a successful initial operating capability. Most of these programs entered the acquisition process at Milestone B. Two of these programs (labeled “A” and “D”) completed all three phases of the acquisition process and are represented in all three figures. These programs took a total of 58 months (for “A”) and 64 months (for “D”) to reach IOC. Overall the average time for programs in this data set to go from Milestone B to IOC was 53 months; the median was 43 months.

Table 1.1 shows the average and median times required across all three acquisition phases to reach IOC. Although it is not mathematically accurate simply to add the averages or medians shown here, these statistics suggest that 6 to 8 years could be required to complete the entire acquisition process and reach IOC. Note that oversight attention is generally believed to have increased over the period of time represented in this data set and analysis, suggesting that the time to IOC may be even longer for more recent programs (and for programs in the future) than these averages suggest.
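The 6-to-8-year range can be reproduced with simple arithmetic on the values reported in Table 1.1. The following is a minimal sketch only: the dictionary layout and the function names `total_months` and `months_to_years` are illustrative choices, not part of the committee's analysis, and, as the text cautions, adding per-phase averages or medians is a rough approximation rather than a statistically rigorous estimate.

```python
# Phase durations in months, copied from Table 1.1 of this chapter.
# The structure and names below are illustrative assumptions.
PHASES = {
    "AoA completion": {"average": 11, "median": 13},
    "AoA to MS B": {"average": 30, "median": 20},
    "MS B to IOC": {"average": 53, "median": 43},
}


def total_months(statistic):
    """Sum one statistic ("average" or "median") across all three phases.

    This is the simple addition that the text notes is not mathematically
    accurate; it only gives a rough sense of the overall time line.
    """
    return sum(phase[statistic] for phase in PHASES.values())


def months_to_years(months):
    """Convert a duration in months to years."""
    return months / 12


if __name__ == "__main__":
    for statistic in ("median", "average"):
        months = total_months(statistic)
        years = months_to_years(months)
        print(f"{statistic}: {months} months = {years:.1f} years")
```

Summing the medians gives 76 months (about 6.3 years) and summing the averages gives 94 months (about 7.8 years), which brackets the range quoted above.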

FIGURE 1.3 Time taken from Milestone B to initial operating capability for major automated information system (MAIS) programs during FY 1997 to early 2009. NOTE: See the accompanying text for an explanation of the program labels. SOURCE: Compiled by the committee from data provided by the Department of Defense.

TABLE 1.1 Average and Median Times Taken by Major Automated Information System Programs in Acquisition Process Phases Leading to Initial Operating Capability

| Phase | Average (in months) | Median (in months) |
|---|---|---|
| AoA completion | 11 | 13 |
| AoA to MS B | 30 | 20 |
| MS B to IOC | 53 | 43 |

NOTE: See accompanying text for a description of the MAIS programs in the data set; see also Figures 1.1, 1.2, and 1.3. AoA, analysis of alternatives; MS B, Milestone B; IOC, initial operating capability.

One reason for these very long time lines is the burden imposed by the oversight process: the time associated with preparing documentation, scheduling review meetings, and so forth. To illustrate this point, the Business Transformation Agency (BTA) constructed a graph (referred to as “The Big Ugly” and based on one originally constructed by the U.S. Air Force) that shows all of the reviews and documents required to field a program. The BTA also considered the specific case of adding a 200-line program to a business system and projected that it would take more than $1 million and 2 years just for the DOD 5000 acquisition reviews and documentation.19

SCOPE AND CONTEXT OF THIS REPORT

Over the years, numerous reports have made recommendations aimed at reforming defense acquisition. Indeed, multiple recent reports have tackled the question of IT acquisition specifically and have come to conclusions similar to those reached in this report. The committee believes that this general consensus buttresses the points made here. It is not the committee’s purpose, however, to comment specifically on other reports. One distinctive contribution of this report is its discussion of different classes of IT and how such differences merit different acquisition approaches.

The rest of the report examines in more detail the implications of current DOD IT acquisition processes and the committee’s rationales and recommended changes. Chapter 2 explores the cultural backdrop of the defense IT acquisition community and its effects on how IT systems are procured. Chapter 3 examines software and systems engineering practices and proposes a revised acquisition-management approach for IT systems. Chapter 4 considers testing and how the testing and evaluation of IT systems within the acquisition process might be made more effective. Appendix A provides a brief overview of the defense acquisition system for IT, Appendixes B and C respectively provide details of the recommended acquisition process for SDCI and CHSS programs, Appendix D gives examples of programs that have succeeded with nontraditional oversight, Appendix E lists briefings provided to the committee, and Appendix F provides biosketches of the committee members and staff. The acronyms used in the report are defined in Appendix G.