–B–

2010 Census Program of Evaluations and Experiments

Since the issuance of the panel’s letter report in February 2009, some additional detail about the structure of the 2010 Census Program of Evaluations and Experiments (CPEX) has become available. Some revisions to specific CPEX components were indicated in a formal response by the Census Bureau to the panel’s letter report (U.S. Census Bureau, 2009a). Other information and detail on planned CPEX components have also been made available because of the Census Bureau’s filing for general approval of CPEX data collection with the U.S. Office of Management and Budget (OMB). Under law, OMB is responsible for reviewing and approving any information collection activity that will be administered to 10 or more respondents.1 The Census Bureau requested generic clearance of parts of the CPEX program involving original data collection,2 and the request was approved on May 12, 2009; subsequent specific changes and project plans have been submitted in addition to this generic clearance. The package (Information Collection Review [ICR] 200902-0607-007) is accessible through OMB’s RegInfo web site (http://www.reginfo.gov).

Box B-1 Experiments in the 2010 CPEX

SOURCES: Presentations to the panel; “2010 CPEX Information Sheet: 2010 ICP Paid Media Heavy-up Experiment,” shared with panel in May 2008.

B–1

EXPERIMENTS

As presented to the panel in early 2009, the Census Bureau’s 2010 CPEX program included four formal experiments. Since then, a fifth experiment has been added to the ranks. Box B-1 lists the experiments for ease of reference; we provide additional description (and extend commentary from our letter report, as appropriate) on the experiments in the remainder of this section.

B–1.a

Alternative Questionnaire Experiment

An Alternative Questionnaire Experiment (AQE) in which a sample of census respondents receives questionnaires that vary in content, layout, and question ordering and wording has been a staple of census experimentation since the 1950 census. In that census, 10 district offices in Ohio and Michigan were used as “experimental areas” in which, among other things, four census forms were oriented toward households as the unit of analysis and self-response by individuals, as opposed to the person-based ledgers then used by enumerators in conducting their interviews (U.S. Census Bureau, 1955:5). The 2000 census AQE focused heavily on the effect of visual cues and narrative instructions to guide respondents through the census long-form questionnaire. It also included an experimental group that varied the instructions and formatting of the basic residence (household count) question on the census form; the National Research Council (2006:202–203) observed that this single treatment constituted “a bundle of at least 10 changes,” some major and others extremely subtle, that rendered it impossible to ferret out which features were more or less effective than others.

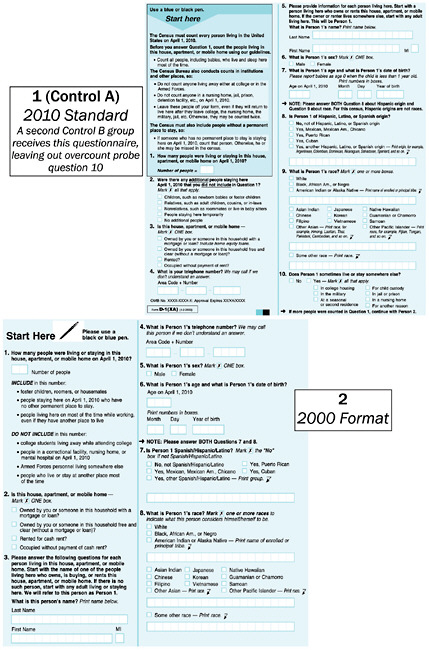

The 2010 AQE is planned to include 19 panels, most of which (15) involve variations to the questions on race and Hispanic origin. The experimental panels are as follows and are numbered in the accompanying figures using the Census Bureau’s scheme:

- Controls: The AQE shares a planned control group of 30,000 housing units with several of the other experiments described below; this group receives the standard 2010 census questionnaire with the only difference being that the phone number listed on the form for respondents to call if they have questions is a special CPEX line. However, the AQE includes a second control group that omits the overcount coverage probe question (i.e., “Does Person 1 sometimes live or stay somewhere else?”) on the 2010 census form because the space requirements of the revised race and Hispanic-origin questions in the other experimental panels precluded the overcount question from fitting on the form.3

- Cumulative Changes from 2000: As shown in Figure B-1, one of the treatment groups uses the format and wording of the 2000 census questionnaire (save that it uses the 2010-standard blue color scheme rather than 2000’s yellow color). The 2000 census form did not include either the overcount or undercount (“Were there any additional people staying here [on] April 1, 2010 that you did not include in Question 1?”) coverage probe questions that have been added for 2010, and so those questions are not included on the 2000-style form. This treatment group is meant for comparison with the control group in order to study the cumulative effect of design and format changes from 2000 to 2010, although it will not be able to shed light on which specific features were more or less effective than others.

-

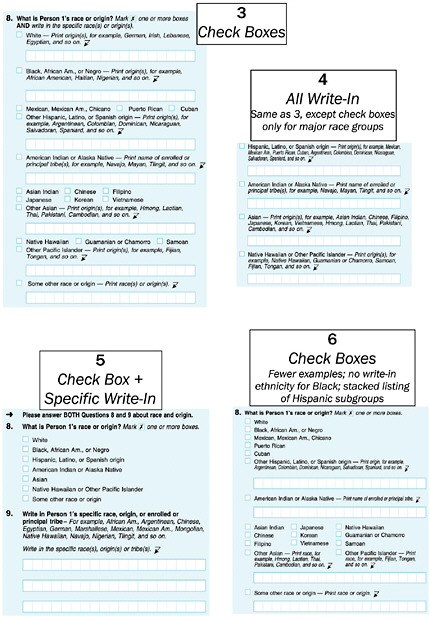

Combined Race and Hispanic-Origin Question: Four panels test possible structures for a “combined” race and Hispanic-origin question that lists Hispanic origin (and related subgroups) in line with traditional race categories, as shown in Figure B-2. Two of the treatments (numbers 4 and 5) limit check-box choices to major race categories (permitting write-in of specific origins, nationalities, or tribal affiliations), and the others add specific check boxes for selected subgroups.

- Variations on the Race Question: As illustrated in Figure B-3, five experimental panels reflect suggestions from the Census Bureau’s Race and Ethnic Advisory Committees, varying the mix of listed examples for Other Asian (e.g., omitting “Thai” and adding “Mongolian”) and Other Pacific Islander (adding “Marshallese”) groups. Two of the treatment groups (17 and 18) omit the word “race” from the wording of Question 9 and from the prefatory note, using the word only in the “Some other race” category.4

-

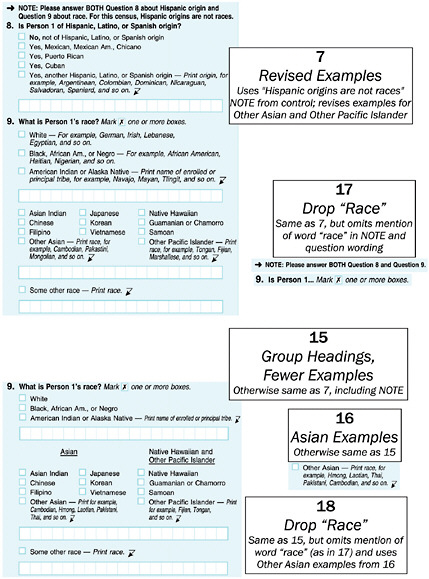

Variations on the Hispanic-Origin Question: Also based on input from the Race and Ethnic Advisory Committee, one treatment group varies the listed examples of Hispanic subgroups (group 8) while another explicitly permits respondents to check more than one Hispanic category, as is permitted for the race question (group 9; see Figure B-4).

- Joint Variations of Race and Hispanic Origin: Also listed in Figure B-4, four treatment groups test combinations of the revised lists of race and Hispanic-origin examples with the “mark one or more” instruction on Hispanic origin.

-

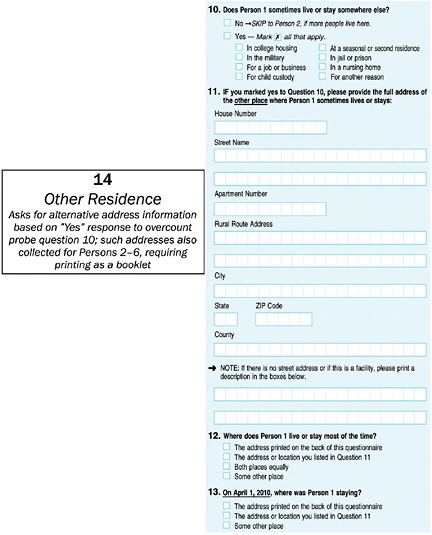

Other Residence Information: As shown in Figure B-5, a final experimental group in the AQE expands on the overcount coverage probe question. If the respondent indicates that a person sometimes lives or stays somewhere else, he or she is prompted to provide address information for that other location. Two follow-up questions then attempt to determine which address is the “usual” residence (“live or stay most of the time”) and which was the “current” residence on Census Day.

The AQE was originally planned for a target sample size of 560,000 households (30,000 per treatment group, except for 20,000 in the 2000-format panel 2), but the exact numbers in each group may vary because of a change in approach to drawing the experimental sample. In previous censuses, experimental groups were drawn once, from strata defined at the national level. However, the Census Bureau notes that the 2010 AQE will be different (ICR 200902-0607-007, individual request for clearance document for the AQE):

For the 2010 processing system development, schedule and time limitations necessitate sampling each Local Census Office (LCO) before moving onto the next, rather than selecting our sample once at the national level. This means that, since we will not have universe totals by stratum during the LCO sample selection, we have to fix our sampling intervals and let sample sizes vary.

As a result of this process, the Census Bureau estimates that the actual sample size of each 30,000-unit panel will be between 23,000 and 40,000 (and 15,000–26,000 for the 2000-format panel). The sample for the race and Hispanic-origin groups will be constructed hierarchically to try to ensure that the smallest demographic groups of interest are in the sample. In the ideal, fixed sample-size case, this would involve first drawing 9,000 households per panel from tracts with 15 percent or more Asian or Pacific Islander people, then 9,000 from tracts with 25 percent or more black people, then from tracts with 40 percent or more Hispanic people, and finally 3,000 from all other tracts. For the other residence information panel, the Bureau indicated to OMB that it will attempt to target tracts with high densities of active-duty military personnel, seniors (possible nursing home residents), college-age students, and “areas more likely to have child custody coverage issues,” although how this will be done is not specified.

Figure B-6 2010 nonresponse follow-up enumerator questionnaire, record of contact box
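To illustrate why fixing the sampling interval lets the sample size vary, the following is a minimal sketch of office-by-office systematic sampling. It is not the Bureau’s actual procedure: the function names, the interval of 100, and the office universe sizes are all hypothetical values chosen only to make the arithmetic easy to follow.

```python
import random

def systematic_sample(units, interval, rng):
    """Take every `interval`-th unit after a random start in [0, interval)."""
    start = rng.randrange(interval)
    return units[start::interval]

def realized_sample_size(office_universe_sizes, interval, seed=0):
    """Sample each office in turn with the SAME fixed interval.

    No national universe total is needed up front, so the realized
    sample size is only known once every office has been processed.
    """
    rng = random.Random(seed)
    return sum(
        len(systematic_sample(range(size), interval, rng))
        for size in office_universe_sizes
    )

# Hypothetical planning assumption: 60 offices of 50,000 eligible units each,
# so an interval of 100 targets 30,000 sampled units.
planned = realized_sample_size([50_000] * 60, interval=100)

# Hypothetical outcome: the stratum turns out smaller than planned,
# and the same interval now yields fewer sampled units.
smaller = realized_sample_size([40_000] * 60, interval=100)

print(planned, smaller)  # 30000 24000
```

Because the interval is set before the universe totals by stratum are known, the realized size is roughly the true universe divided by the interval, which is consistent with the Bureau quoting a range rather than a single figure for each panel.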

The AQE is also a factor in one of the formal evaluations in the CPEX program—a reinterview study—as described below in Section B–2.a.

B–1.b

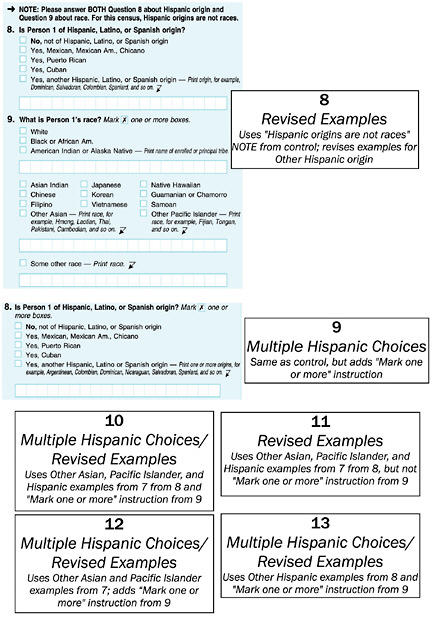

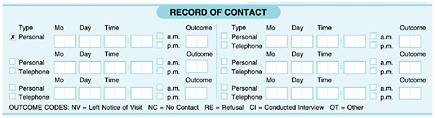

Nonresponse Follow-Up Contact Strategy Experiment

The 2010 census will follow the example of recent censuses by calling for temporary enumerators to attempt up to six contacts with households in the nonresponse follow-up (NRFU) workload. Three of these visits are supposed to be in-person contact attempts, and the other three can be done by telephone if a phone number is available. If no contact is made by the sixth try, then proxy information (i.e., from a landlord or neighbor) can be collected as a last resort. The contact log section of the paper-based enumerator questionnaire planned for use in 2010 is illustrated by Figure B-6.

The Nonresponse Follow-Up Contact Strategy Experiment of the 2010 CPEX proposes to test the effect of reducing the maximum number of contacts to either 4 or 5. As described to the panel in November 2008, the experiment was to take place in three local census offices and a total of about 52,000 housing units; all enumerators would use the same form with space for six contact attempts, but enumerators in sampled crew leader districts would receive different instructions on how many callbacks to make.

The Bureau argued that logistical challenges in varying field procedures (and training for fieldwork) precluded a larger sample of offices or housing units. We criticized the experiment in the letter report, principally on the grounds that it would be extremely difficult to generalize data from only three offices.

Subsequently, the Bureau designed two alternative enumerator questionnaires—each of which uses the same amount of space for the contact log as in Figure B-6 but simply omits one or two entries (and adjusts the layout so that the remaining entries fill the space). As described in the Bureau’s reply to our letter report (U.S. Census Bureau, 2009a) and the Bureau’s filing with OMB, the design now calls for 1.2 million of these experimental enumerator forms (600,000 for each of the 4-contact- and 5-contact-maximum groups) to be randomly inserted with the standard enumerator forms, and hence for the experiment to take place in all 494 local census offices. The 1.2 million sample size is said to be large enough so that, on average, every enumerator will have approximately one experimental questionnaire in each of their assignment areas. Although the sample size is now massive, the redesigned experiment has the drawback that the enumerators will be acutely aware of the maximum number of attempts they can make; arguably, then, the test is less about the effect of simply cutting off NRFU after (say) four attempts than it is about whether enumerators will expend extra effort to try to resolve cases in four tries (in the same way that they may try particularly hard to make contact on the sixth time in normal cases).

B–1.c

Deadline Messaging/Compressed Schedule Experiment

The Deadline Messaging/Compressed Schedule Experiment is intended to see whether mail response (and speed of response) improves when different messages urging a rapid response are included in four mailing pieces:

- the advance letter sent prior to the main questionnaire mailout,

- the envelope containing the census form,

- the cover letter accompanying the census form, and

- the reminder postcard sent after the main mailout but before beginning nonresponse follow-up.

As originally described to the panel in November 2008, the experiment included three types of deadline messages; subsequently, a fourth has been added. The four message types are:

- “Mild,” which simply suggests a date by which the form should be returned;

- “Progressive Urgency,” which casts the date as a deadline for response and reminds the respondent that response is required by law;

- “Avoid NRFU Visit” (or, as the Bureau refers to it, “NRFU Motivation”), which casts the date as a deadline and urges the respondent to avoid the trouble of having a nonresponse follow-up interviewer visit their home; and

- “Cost Savings,” which notes that money is saved by simply mailing the census form rather than having an interviewer come to visit.

It is useful to note that two of these message types repeat language used in 1990 census materials but not in 2000. The “Avoid NRFU Visit” message recalls a statement made directly in the instructions on the 1990 census form: “Avoid the inconvenience of having a census taker visit your home.” Likewise, the “Your Guide to the 1990 U.S. Census Form” brochure distributed with the 1990 census included a statement very similar to the “Cost Savings” message: “If you do not mail back your census form, a census taker will be sent out to assist you. But it saves time and your taxpayer dollars if you fill out the form yourself and mail it back.”5 The manner in which these deadline messages are rendered in the mailed items is shown in Table B-1.

In the experiment, each of the deadline message strategies is used in combination with one of two schedules. Following the normal 2010 census schedule, advance letters are supposed to arrive between March 8 and March 10, the census questionnaire between March 15 and March 17, and the reminder postcards between March 22 and March 24. The alternative, “compressed” schedule shifts these dates by one week, closer to the April 1 Census Day: that is, advance letters arriving March 15–17, questionnaires March 22–24, and postcards March 29–31. Under the compressed schedule, the target or deadline date of April 5 referenced in Table B-1 remains the same.
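The two schedules can be laid out side by side with a short date calculation. This is only an illustrative sketch: the dates come from the paragraph above, while the dictionary layout and function names are assumptions made for the example.

```python
from datetime import date, timedelta

# Standard 2010 delivery windows (first day, last day), as described above.
STANDARD = {
    "advance letter": (date(2010, 3, 8), date(2010, 3, 10)),
    "questionnaire": (date(2010, 3, 15), date(2010, 3, 17)),
    "reminder postcard": (date(2010, 3, 22), date(2010, 3, 24)),
}
DEADLINE = date(2010, 4, 5)  # target date; unchanged under both schedules

def compress(schedule, days=7):
    """Shift every delivery window later by `days` (one week by default)."""
    shift = timedelta(days=days)
    return {piece: (first + shift, last + shift)
            for piece, (first, last) in schedule.items()}

def days_before_deadline(schedule):
    """Days between the end of each delivery window and the deadline."""
    return {piece: (DEADLINE - last).days
            for piece, (_, last) in schedule.items()}

COMPRESSED = compress(STANDARD)

for piece in STANDARD:
    print(piece,
          days_before_deadline(STANDARD)[piece],
          days_before_deadline(COMPRESSED)[piece])
```

Under the compressed schedule the reminder postcard window ends on March 31, leaving 5 days before the April 5 date rather than 12.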

In our letter report, we offered little comment on the Deadline Messaging/Compressed Schedule Experiment, noting only that, as with the other experiments, we were concerned about the lack of an analysis of the statistical power of the proposed test to discriminate between the alternatives. At the time, as presented to us in November 2008, each study panel was to include 10,000 households (drawn from sampling strata of expected high, medium, and low mail response based on the 2000 census). In replying to our letter (U.S. Census Bureau, 2009a), the Census Bureau cited two unpublished Census Bureau internal memoranda (not shared with the panel) as justifying the sample size selection; the reply also indicated that the number of housing units per panel in the deadline experiment would be doubled to 20,000, without any indication of how this level was determined.
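The kind of power analysis at issue can be sketched with a textbook two-proportion calculation. The sketch below is not the Bureau’s analysis (the cited memoranda were not shared with the panel). It assumes simple random sampling, a two-sided test at the 5 percent level, 80 percent power, and a purely illustrative baseline mail response rate of 65 percent; it ignores the stratified design and any adjustment for comparing several panels.

```python
from math import sqrt
from statistics import NormalDist

def min_detectable_diff(n_per_panel, base_rate, alpha=0.05, power=0.80):
    """Approximate smallest difference in response rates between two
    equal-sized panels that a two-sided z-test would detect.

    Uses the normal approximation with both panels' variance evaluated
    at `base_rate` (an assumed, illustrative value).
    """
    z = NormalDist()
    z_alpha = z.inv_cdf(1 - alpha / 2)   # critical value for the test
    z_power = z.inv_cdf(power)           # quantile for the desired power
    se = sqrt(2 * base_rate * (1 - base_rate) / n_per_panel)
    return (z_alpha + z_power) * se

for n in (10_000, 20_000):
    diff = min_detectable_diff(n, base_rate=0.65)
    print(f"n = {n:>6}: about {100 * diff:.1f} percentage points")
```

Under these assumptions, doubling the panel size from 10,000 to 20,000 shrinks the detectable difference by a factor of the square root of 2, from roughly 1.9 to roughly 1.3 percentage points. Whether that is adequate depends on the size of effect the deadline messages are expected to produce, which is the question a formal power analysis would address.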

Table B-1 2010 Deadline Messaging/Compressed Schedule Experiment, deadline message treatments by form type

| Panel | Advance Letter | Initial Mailing Envelope | Cover Letter | Reminder Postcard |
| --- | --- | --- | --- | --- |
| Control (2010 Standard) | When you receive your form, please fill it out and mail it in promptly. | YOUR RESPONSE IS REQUIRED BY LAW | Please complete and mail back the enclosed census form today. | If you have not responded, please provide your information as soon as possible. |
| 1 (Mild) | When you receive your form, please fill it out and mail it in by April 5. | YOUR RESPONSE IS REQUIRED BY LAW ¶ Mail by April 5 | Please complete and mail back the enclosed census form by April 5. | If you have not responded, please provide your information by April 5. |
| 2 (Progressive Urgency) | When you receive your form, please fill it out and mail it in by April 5. | YOUR RESPONSE IS REQUIRED BY LAW ¶ Deadline is April 5 | The deadline to complete and mail back the enclosed census form is April 5. | If you have not responded, the deadline to provide your information is April 5. Your response is required by law. |
| 3 (Avoid NRFU Visit) | When you receive your form, please fill it out and mail it in by April 5. | YOUR RESPONSE IS REQUIRED BY LAW ¶ Mail by April 5 | Please complete and mail back the enclosed census form by April 5 so that you can avoid a personal visit from an interviewer. | If you have not responded, please provide your information by April 5 so that you can avoid a personal visit from an interviewer. |
| 4 (Cost Savings) | When you receive your form, please fill it out and mail it in by April 5. | YOUR RESPONSE IS REQUIRED BY LAW ¶ Mail by April 5 | Please complete and mail back the enclosed census form by April 5. Mailing your census form on time saves money that would otherwise be used to follow up with you. | If you have not responded, please provide your information by April 5. Mailing your census form on time saves money that would otherwise be used to follow up with you. |

B–1.d

Confidentiality/Privacy Notification Experiment

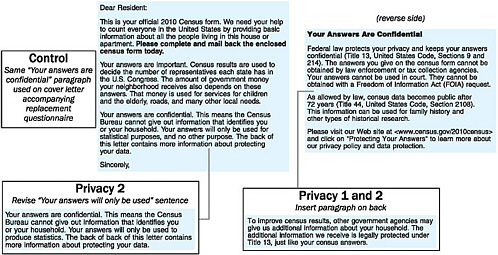

The cover letter accompanying a decennial census questionnaire (often over the signature of the director of the Census Bureau) typically assures respondents that the information they provide is kept confidential and is not disclosed to other government agencies. The planned cover letter for the 2010 census includes a short letter on one side and a short statement on confidentiality on the reverse side (the letter suggests that the document be turned over to read that information). The Confidentiality/Privacy Notification Experiment of the CPEX program involves two experimental treatments that make small changes to both the front and back of the cover letter, as shown in Figure B-7; these small changes are also made in the text of the letter that accompanies the second (replacement) questionnaire that the 2010 census will mail prior to the start of nonresponse follow-up. A control group, shared with the AQE and deadline messaging experiment, receives the standard 2010 cover letter in both the initial and second questionnaire mailings. A relatively small exercise as such census experiments go, the Confidentiality/Privacy Notification Experiment is planned to include 20,000 housing units in each of the two experimental panels.

B–1.e

Heavy-Up Publicity Experiment

In May 2009, the panel was informed that a fifth experiment—a “heavy-up” test of increased media buys for census publicity materials in selected markets—had been added to the CPEX program. The experiment is apparently intended to study the effectiveness of saturated advertising (base messages via local media buys or culturally/ethnically targeted media buys) in selected marketing areas. Because the only information the panel has heard or seen concerning this test is a short “fact sheet,” and the heavy-up experiment is not referenced in the Bureau’s CPEX clearance request to OMB, we cannot provide additional commentary on this experiment akin to what we provided for the other four CPEX experiments in our letter report.

B–2

EVALUATIONS

The Census Bureau’s supporting statement for its OMB submission for CPEX (ICR 200902-0607-007) describes it as including “over 20 evaluations.” Box B-2 lists the evaluation topic areas and the names of individual studies as they were presented to the panel in early 2009. The OMB submission provides detail on four of the proposed evaluations that involve original data collection, as discussed below.

Box B-2 Evaluations in the 2010 CPEX

Coverage Improvement

Coverage Measurement

Field Operations

Language Program

Questionnaire Content

Marketing and Publicity

Privacy and Confidentiality

SOURCES: Presentations and materials shared by the Census Bureau with the panel, particularly Jackson (2008) and Reichert (2009).

B–2.a

Alternative Questionnaire Experiment Reinterview

The principal focus of the AQE is differences in response to revised forms of the census questions on race and Hispanic origin; the Census Bureau plans to conduct a reinterview study with about 4,000 households from each of the 15 race and Hispanic-origin treatment groups in the AQE (as well as from the two control groups) to assess response bias. Plans call for the reinterviews to be conducted exclusively by telephone, so the availability of phone numbers (either provided by respondents on the form or found through directory look-ups) will dictate final sample sizes. Because the interest is in response bias, reinterviews will be conducted with the household members who completed the mail census form whenever possible (accepting proxy responses only as a last resort). The reinterview walks through the other questions on the short-form-only census questionnaire but is meant to be more probing with regard to the race and ethnicity questions (i.e., seeking yes/no verification for every major category and subcategory); it is also meant to be more conversational by including an open-ended question on race and origin perceptions (“I’d like you to think about what you usually say when asked about your race and origin…. Keeping in mind that you can say more than one, what do you usually say when asked about your race and origin?”).

B–2.b

Content Reinterview

On a much smaller scale than the AQE reinterview evaluation, the Census Bureau plans to conduct a general content reinterview study similar to those done in previous censuses. As with the AQE reinterview, plans call for the Content Reinterview to be performed by telephone, with an estimated sample size of 10,000 interview cases (drawn “proportionally across mail return questionnaires, update/enumerate interviews, nonresponse followup interviews, etc.”) in the United States and 860 in Puerto Rico.

B–2.c

Alternative Group Quarters Questionnaire

The Census Bureau’s planned Alternative Group Quarters Questionnaire experiment builds from experience in the 2000 census, but it also threatens to repeat a major lost opportunity from that census.

In 2000, every Individual Census Report questionnaire filled by residents of group quarters (college housing, correctional facilities, health care facilities, etc.) included the question: “What is the address of the place where you live or stay MOST OF THE TIME?” (An instruction at the end of the first page of the form was intended to route respondents who live or stay at the group quarters location “most of the time” past this second address question and to the end of the questionnaire; still, the query for the second address dominated the second page of the form.) The same format (with slightly revised wording to fit the circumstances) held for the Military Census Reports and Shipboard Census Reports used to enumerate on-base military personnel and shipboard personnel. Although this “usual home elsewhere” (UHE) query was included on all group quarters questionnaires, the Census Bureau’s residence rules for the 2000 census considered UHE information from only certain group quarters types to be valid: valid for military personnel and such small group quarters as temporary worker camps, carnival grounds, and monasteries and convents, but invalid for the most sizable of group quarters populations (e.g., the aforementioned college students, prisoners, or persons in nursing homes).

Like the 2000 census, the Individual Census Report for the 2010 census (Form D-20, filed with OMB in ICR 200808-0607-003) asks about a usual home elsewhere regardless of group quarters type: if a respondent answers “no” to the question “Do you live or stay in this facility MOST OF THE TIME?” he or she is asked “What is the full address of the place where you live or stay MOST OF THE TIME?” The Bureau’s planned Alternative Group Quarters Questionnaire takes a different approach by asking for an “any residence elsewhere,” regardless of the respondent’s answer to the question about living or staying at the group quarters facility most of the time. The experimental question asks: “BESIDES THIS FACILITY, what is the full address of a place where you sometimes live or stay?” No follow-up question as to how frequently the person lives or stays at this alternate address is asked.

As of its initial March 2009 filing with OMB, the Bureau planned to use this alternative group quarters questionnaire in only 60,000 cases, administering the experimental questions to whole group quarters facilities rather than mixing normal and experimental questionnaires at individual sites. However, in a July 2009 updated filing, the Census Bureau indicated that the sample size had been increased to 125,000, and that this sample would be conducted in only three local census offices (selected in May 2009 “based on demographics and 2010 geography”).

The National Research Council (2006) recommended that an “any residence elsewhere” question (similar to this experiment’s version but also including a follow-up on frequency of time spent at that address) should be asked of all group quarters residents in 2010 and of a large test sample of non-group-quarters, household respondents. Although this experiment goes a small way toward that recommendation, what is unknown at this time is whether the Bureau will make use of the alternative address information gathered on the standard group quarters form. In 2000, in principle, forms from non-UHE-eligible group quarters types should have been handled separately from those for which UHE was permissible. However, in practice, this prefiltering was not done, and all 2.9 million group quarters forms (including captured other-address responses) went through an initial geocoding operation when only 659,000 should have been eligible. Only when this extra work was done (and the resulting slow-down in other processing noticed) were the UHE-eligible and non-UHE-eligible groups separated, with the other-address information for the non-UHE-eligible group quarters types being discarded. The National Research Council (2006:230) described this situation as a “highly regrettable lost research opportunity,” throwing out what could have been a “trove of information on the nature of potential census duplicates.”

B–2.d

Interactive Voice Response Customer Satisfaction Survey

The Census Bureau plans to use interactive voice response (IVR) technology in its Telephone Questionnaire Assistance (TQA) program in 2010. Respondents seeking clarification or information (e.g., requesting a foreign-language questionnaire) can call a number in the census mailing package, and an automated computer system will administer help based on spoken word commands from the respondent. As one of the formal CPEX evaluations, the Census Bureau plans to conduct a Customer Satisfaction Survey with an approximate 1 percent sample of persons calling the TQA lines to assess satisfaction with the IVR interface. The current plan is for this survey to be done at the end of a TQA call; the IVR system will say:

If that’s all the information you needed, please hold for our Customer Satisfaction Survey. Otherwise, to hear the topic information again say “repeat that” or for help on another general question say “Census information.”

About two-thirds of the total estimated sample size (665,000 callers) will then be administered a five-item satisfaction survey via IVR, while the other third will be transferred to a customer service representative to receive a seven-question survey. Perhaps recognizing that customers generally unsatisfied with speaking to the IVR system will not be inclined to go through a follow-up survey (particularly one administered by IVR), and possibly accounting for people whose inquiries lead them to be diverted out of the IVR to a human operator, the Census Bureau currently projects a 7.6 percent response rate and an estimated 5,016 total respondents.

B–3

ASSESSMENTS

Box B-3 lists what is currently known about the content of the assessments portion of the CPEX program, in which “assessment” refers to operational histories and descriptions akin to what were generally labeled “evaluations” in 2000. Aside from these major headings, we have no more detailed information about research plans for specific assessments.

Box B-3 Assessments in the 2010 CPEX

Assessment Studies, Grouped by Name of Designated Staff Teams

SOURCE: List of assessments shared by U.S. Census Bureau with the panel, May 15, 2009.