5

Current Evaluation Framework and Existing Evaluation Efforts

There is substantial evidence that the National Oceanic and Atmospheric Administration (NOAA) is giving increasing attention to evaluating the education and outreach programs that it supports. Evaluation is more prominent in the 2009-2029 education strategic plan than it was in the 2004 plan. Evaluation is primarily the responsibility of each individual education program, and the Education Council (EC) provides leadership and guidance for education evaluation activities across the agency. The EC has also developed and promoted an implementation framework incorporating program evaluation as an integral part of the management of program delivery. Program officers indicate that these efforts are in part a response to executive and legislative mandates as well as a perceived need to make better use of resources across individual programs and share lessons learned among the programs and their partners.

Evaluation can contribute to sound decisions on how to make strategic use of resources. Common metrics or program performance standards are evaluation tools that can support strategic decisions about resources. Evaluation can assist this type of decision-making process even in the absence of common metrics and performance standards. Programs will need to be compared in terms of two criteria: their strategic importance within the education portfolio and their relative effectiveness in achieving their stated outcomes. Lessons to share among programs and their partners can be derived from both formative and summative evaluation results (see Box 5.1 for definitions).

BOX 5.1 Formative and Summative Evaluation

Formative Evaluation: The purpose of formative evaluation is to provide feedback on the development of a program or project and its implementation. Formative evaluation results are used to make changes to programs during their development and initial implementation. An overarching formative question is “How is the project operating?” The specific questions focus on how the project is being implemented, including specific features of a project or program, such as recruitment strategies, participant attributes, materials, and attendance.

Summative Evaluation: Evaluation of a project’s outcomes, also called summative evaluation, can be designed to address several questions. Summative evaluations are typically done after changes to the program have been made as a result of formative evaluations. They are used to determine whether, and to what extent, a program or project results in the desired outcomes.

This chapter provides a review of evaluation efforts of the EC and individual education programs. Our review is based on presentations by NOAA program managers and key strategic partners as well as an archival review of 18 evaluation reports (10 internal evaluations, 8 external evaluations) of individual education programs (18 were selected from the 47 provided; these 18 included data on participants’ reactions or outcomes and a description of the education program, participants, and data collection methods). In our review we observed considerable variance in the rigor of program evaluations. Most programs have not gone through a full outcome-based summative evaluation process; in fact, many have only recently begun to implement program evaluation at all. Even programs that have been in operation for many years and have significant performance measurement and evaluation procedures, such as Sea Grant, have difficulty in evaluating the scope and impact of their education activities. The norm among existing evaluations is not to measure impact, but instead to focus on such outputs as numbers served and the number of satisfied participants.

NOAA education managers demonstrated strong awareness of the limitations of past evaluation practices and have developed a plan of action in response. Therefore, rather than simply reviewing past evaluations, this analysis takes a forward-looking perspective, identifying the opportunities and challenges of linking existing formative and summative evaluation practice with the new strategies and goals set for NOAA’s education initiatives.

We begin our review by examining the quality of individual program evaluations conducted in recent years. Our review of recent evaluations was assisted by two research papers prepared for this report. One developed a logic model of the education programs offered by NOAA (Clune, 2009). The other is a review of 18 of the more substantive evaluation reports provided by NOAA education programs (Brackett, 2009). We follow this examination with a description of evaluation practices and reports that have achieved high regard within the agency.

Next, we describe the two most visible recent changes designed to improve evaluation practice. First, in 2007 the EC adopted the Bennett Targeting Outcomes of Programs (TOP) model as a framework for all evaluations (described below). In adopting the TOP model the EC is attempting to encourage individual programs to view evaluation as an integral part of program development, delivery, and management rather than an activity used to satisfy external accountability requirements. Second, in 2008 the EC led an agencywide initiative to develop a new strategic plan to guide the organization, coordination, implementation, and evaluation of the many education programs housed in NOAA.

CURRENT AND PAST PRACTICES IN PROGRAM EVALUATION

In this section we review the state of evaluation for education and outreach programs, based on evaluations that NOAA provided. The 2008 strategic plan and the adoption of the TOP model represent developments toward stimulating a stronger evaluation process in the education programs of NOAA. However, much can be learned from reviewing the variety of approaches that current education programs have employed in carrying out evaluations. We describe some general trends in evaluation among NOAA education programs, highlighting both the stronger and weaker practices.

The majority of evaluations tend to focus on local projects and initiatives, and programs have either not yet conducted a programwide evaluation or are in the process of doing so (Brackett, 2009). Table 5.1 summarizes the evidence indicating whether or not evaluations were available for this review. For the programs providing evaluations, the table indicates whether they were programwide evaluations (i.e., the evaluation attempted to collect evidence from actors across all sites or projects) or local assessments of individual projects or sites. The evaluations of six of the programs took a local perspective, examining individual projects or sites, and four took a programwide perspective.

In evaluations that examined activities from a programwide perspective, the evaluation questions tended to focus on specific engagements with participants and their reactions to the experience. In our review of the evaluation reports, we examined the transparency of the reports with regard to the methodology and questions used in the evaluation. By transparency we mean whether the reader can see the questions asked of respondents in an evaluation, the methodologies chosen, and the characteristics of the participants. We also examined the degree to which measures aligned with outcome categories or concepts that serve the strategic needs of NOAA.

TABLE 5.1 Summary of Evidence on Evaluation Practices

In addition to the items or questions in the evaluation metrics, Brackett (2009) notes the importance of transparency with regard to the larger hypotheses or guiding questions. These are “the key organizers of a strong evaluation, dictating the design of the study, the data collection strategies and instruments to be used, and the data analysis. The findings of the evaluation provide answers to these questions and the basis for interpretation of findings and recommendations” (p. 4).

TABLE 5.2 Focus of Evaluation Questions in 18 NOAA Program Evaluation Reports (number of reports)

| Student learning/achievement (8); Student stewardship (6); Student interest in science, careers (3); Student satisfaction with activity (2); Student engagement in learning (1); Student sense of place (1); Student leadership (1) | Teacher learning (4); Teacher confidence in teaching ocean science (4); Teacher satisfaction with professional development (4); Teacher implementation or intent to implement practices, use materials (4); Teacher technology skills (2); Teacher stewardship (1); Teacher sense of place (1) |
| Scientist satisfaction with activities (2); Scientist learning (1); Museum visitor understanding/learning (3); Museum visitor satisfaction (3); Museum visitor suggestions for improvement (3) | Professional development provided, strategies used, program design (5); Professional development evaluation used (2); Program work environment (1) |

SOURCE: Brackett (2009, p. 5).

By the standard of transparency, most of the evaluations reviewed fared well. Over three-quarters of the evaluations either provided the questions used or implied their nature strongly enough for the reader to understand what was being asked. Table 5.2 summarizes the types of evaluation questions used across the 18 reports. Although there is some room for improvement across programs, the norm for NOAA programs is an acceptable level of transparency in evaluation questions. In each quadrant of Table 5.2 the questions are aimed at different stakeholder communities important to the NOAA education mission. The 18 evaluations are aimed at understanding whether students, teachers, and museum visitors are learning and using the new knowledge they have been exposed to in their activities. There are also assessments of the participating scientists and their satisfaction in engaging with an education activity.

We also examined evaluation questions from the perspective of how well they served the strategic interests of NOAA. From this perspective, the evaluations also do good service. It is important to keep in mind that these evaluations were conducted under the guidance of the 2004 strategic plan, which placed greater emphasis on dissemination of NOAA science through the education and outreach programs. Brackett (2009, pp. 5-6) comes to a similar conclusion:

A previous NOAA strategic plan emphasized the importance of getting NOAA science in use through the NOAA education programs. As evident above, none of the program evaluations reviewed indicated an evaluation question or program objective directly focused on use of NOAA science. It should be noted, however, that the use of NOAA scientific research and researchers was an underlying piece of most of the programs evaluated. Specifically, 15 of the 18 reports indicated in some way use of NOAA science and/or researchers as part of the program’s work. Fourteen of the 18 reports provided teacher, student, or scientist satisfaction data concerning provision of the NOAA science research or data, or involvement of scientists in learning activities. Seven of the reports noted measures (self-report, tests, student presentations, or use of NOAA data) of teacher or student learning of NOAA-provided science content. Three of the reports gave no indications of a focus on using NOAA science, although one of these did provide a recommendation to develop a program using a system’s research information.

This assessment offers some optimism that NOAA education programs will adapt to the new mission and develop evaluation questions to serve the needs of the strategic goals for environmental literacy and workforce development. The adoption of the TOP model, described in detail below, requires that evaluations now be guided by a different set of evaluation questions that align with the need to address the 2008 strategic education plan goals.

The design, data collection, and analysis of the evaluation reports reviewed for this study were of mixed quality. In briefings, NOAA program officers indicated that each program is ultimately responsible for monitoring the quality of the evaluations. We observed the result of this approach in the variance in evaluation design and quality. We also observed considerable variance in the reports themselves, with some elements being quite solid and other elements providing a weak foundation for reporting results and impacts. We highlight some of the stronger evaluation studies in the next section. Box 5.2 lists the strengths found in the evaluations, and Box 5.3 lists the issues of concern. NOAA program officers are well aware of the limitations of many of the previous efforts at evaluation and have taken steps to improve evaluation quality now and in the future.

Highly Regarded Evaluation Practices and Reports

NOAA program officers and NOAA documents highlighted examples of evaluation practices and reports that have achieved a high level of regard within the agency. These highly regarded evaluations have influenced NOAA’s internal understanding of evaluation and shaped evaluation practices. These evaluations do not necessarily meet a high standard of evaluation quality, yet examining them in greater detail provided some insight into the views of NOAA officers about effective evaluation practices. We highlight the reports or processes because they represent a range of evaluation strategies and practices and illustrate the need for evaluations to be conducted and communicated in a manner that supports the uptake of evaluation findings.

BOX 5.2 Notable Evaluation Strengths

SOURCE: Brackett (2009, p. 10).

Bay-Watershed Education and Training Program

In 2007, the Bay-Watershed Education and Training (B-WET) Program completed a large, external evaluation of the Chesapeake Bay area training program in Delaware, Maryland, and Virginia that included teacher and student data from many smaller projects in the area. This report is notable for its effort to provide a rigorous programwide assessment of performance and impact among students and teachers. The study provides extensive surveys of educators who partner with B-WET. The report also provides matched comparisons of student performance in classes that have and have not participated in B-WET programs.

The 2007 evaluation is also notable because it is the most rigorous evaluation design employed among the NOAA evaluation programs. During the development of this report pressure was growing in the federal government to incorporate more rigorous designs into all program evaluations. The B-WET evaluation study coincided with the work of the Academic Competitiveness Council and the release of its report advocating rigorous evaluation designs (U.S. Department of Education, 2007). At that time, “rigorous” was narrowly defined as clinical trials or equivalently designed evaluations, rather than as the design most appropriate for the evaluation purpose and questions. In public documents NOAA has touted the B-WET evaluation as an example of the responsiveness of the agency to the national policy initiatives emphasizing rigorous evaluation.

BOX 5.3 Notable Evaluation Weaknesses

Clarity and Focus

Methodology and Instrumentation

Data Analysis and Presentation of Results

Interpretation of Results and Recommendations

SOURCE: Brackett (2009, pp. 10-11).

Sea Grant Program

Sea Grant, the oldest initiative in the EC, submitted 40 reports for our review of evaluation practices, but most were either assessments of a state’s Sea Grant Program (with a description of what education activities occur and who is served) or results from post-program surveys (i.e., the percentage of people who provided each type of response to questions about the activity). Of these reports, three were evaluations of an education activity that included appropriate and sufficient information to warrant review. One consisted of an internal online questionnaire of 46 members of the Sea Grant Network conducted in 2008 to gather information about the programs and their needs. The other two reports provided evaluation information on two separate teacher learning projects: Teacher Education at Stone Laboratory (Ohio, undated) and the Aquatic Invaders in Maine (AIM) Teacher Workshop.

What is most notable about the Sea Grant Program is the extensive formal performance monitoring process that it has developed. The performance monitoring process is a tool used with all Sea Grant projects and activities, including those aimed at educators. Thus, the evaluations of Sea Grant include all research and extension-related activities, which are beyond the purview of this report. Sea Grant leadership indicates that the most common use of evaluations is as a performance management tool that provides Sea Grant program officers with up-to-date information on the state of project implementation. Participants in Sea Grant programs are required as a condition of sponsorship to submit annual reports detailing progress to date.

Sea Grant uses this information every four years as part of a review of individual Sea Grant programs. This process for assessment has become a part of Sea Grant’s standard operating procedures. It is the only process observed that provides a scheduled, project-oriented view of performance. The key criteria for assessing Sea Grant programs consist of ratings for organizing and managing the program, connecting Sea Grant with users, effective and long-range planning, and producing significant results.

Sea Grant leadership reports the following challenges associated with the current evaluation process after two rounds of program assessments. First, program assessments are broadly focused on the entire research program. Consequently, there is relatively little time or talent dedicated to examining the education and outreach programs. Second, program assessments tend to focus on one university program at a time. This means that there are few opportunities for a comparative assessment across programs. However, a strength of the Sea Grant approach to evaluation is that it may create an information infrastructure that can be used to integrate planning, implementation, and evaluation. There is not sufficient evidence to judge whether the information infrastructure has been used in this manner.

Office of National Marine Sanctuaries

The Office of National Marine Sanctuaries (ONMS) is interesting because of the leadership and advocacy that it has been providing in recent years toward improving the quality of evaluations across NOAA education programs. It is through ONMS that the EC was introduced to the TOP model and ultimately adopted this approach across all NOAA education programs.

At the time of our study, there was no ONMS evaluation report available that had fully incorporated the TOP model. ONMS is currently developing a programwide evaluation that employs this approach. What was available was a series of project evaluations detailing the implementation and impacts of specific engagements with students and teachers. Each of these reports evaluated the use of the marine sanctuaries as a living classroom. For example, a 2004 assessment of the Dive into Education program examined the professional development of 62 K-12 teachers in Hawaii and American Samoa as they developed national science education standards-based ocean science activities aimed at stimulating student learning. Evaluations were also conducted to assess participant satisfaction with the LiMPETS (Long-term Monitoring Program and Experiential Training for Students) program, which provides teachers with training in marine science protocols that can be applied in the classroom and the field. LiMPETS also provides students with an ongoing scientific process to monitor natural resources and contribute to databases over time. Other evaluated projects, such as the 2005 Hawaii Field Student, focus even more strongly on conservation and stewardship by following pairs of teachers and students as they interact with coral reefs and larger ocean ecosystems. We highlight these evaluations because the programs are viewed by NOAA as a good model for a program that achieves alignment with standards for teaching. As ONMS continues its implementation of the TOP model, it can test these assumptions.

Overall, it is clear that NOAA is engaged in various types of evaluation, that some programs have conducted evaluations of varying quality, and that the evaluations of higher quality could serve as models for other programs. For example, practices that are worthy of replication include recruiting a comparison group when useful and appropriate; creating an information infrastructure to integrate planning, implementation, and evaluation; collecting information from multiple sources; aligning evaluation questions with program goals; collecting both quantitative and qualitative data; and using literature reviews in early stages to understand best practices in program design and interpret program implementation findings.

Until NOAA articulates measurable goals and outcomes for its education programs, it will be difficult to design evaluation questions that align with goals and outcomes or to produce any summative results at the highest level. Instead, the agency is left primarily with formative results and, in a few cases, localized results showing impacts that serve as tests of the program design. Once a set of overarching measurable goals and outcomes is articulated, it will be possible to assess projects against those goals and outcomes. Such assessments are likely to reveal successes and failures, from which would emerge common metrics, instruments, and practices that could be promoted for use across similar types of programs (e.g., teacher training), providing the needed data for summative evaluation across NOAA programs. Decisions about the goals, outcomes, and assessment metrics should be made by NOAA staff with appropriate experts (program designers, evaluators, education staff from other agencies and institutions, among others).

THE ROLE OF EVALUATION IN THE 2008 STRATEGIC PLAN

While the 2004 strategic plan focused on translating NOAA science into useful knowledge for the education communities, the 2008 strategic plan is a more ambitious articulation of the agency’s goal of addressing the environmental literacy and workforce needs of the nation, in line with authority given to it by the America COMPETES Act. Under each goal the importance of evaluation is stressed with respect to specific strategies for achieving key outcomes. In contrast, the 2004 strategic plan provided no specific mention of evaluation in the goals or strategy statements.

The 2008 strategic goals pose several challenges to existing evaluation practices. Perhaps the most significant challenge is establishing evaluation processes through which NOAA can assess the cumulative impact of education programs toward achieving the strategic national goals. The programs are numerous and relatively small in light of the mandated mission. The 2008 strategic plan articulates several key factors driving variability in the missions of the education programs and, subsequently, in the evaluation strategies pursued. Among these are authorizing legislation for the individual education programs, the diverse body of disciplines related to science, technology, engineering, and mathematics (STEM) on which the programs draw, and the target communities with which the program interacts (e.g., K-12 education, informal education institutions, postsecondary education).

A mismatch is noted between the 2008 strategic goals and the scope and scale of the evaluations conducted to date. Evaluations in NOAA tend to collect self-reported impact data and information about participant satisfaction. Larger evaluation questions about the effective allocation of resources tend not to be addressed, nor do the individual programs report an incentive for this type of assessment. The Office of Education managers explained that one way of addressing these problems is through the EC, which serves as a forum for sharing information across the portfolio of education programs. Current efforts to develop an implementation plan to support the 2008 strategic education plan include formalizing comparative reviews of evaluations as a part of the work of the EC.

While sharing program evaluations is a positive step, it does not address the issue of conducting appropriate evaluations that allow the EC to determine which of the education programs are effective and what parts of these programs contribute to success. It is also difficult to see how sharing the results of evaluations will provide a sufficient foundation of information to guide the strategic allocation of education resources. In order to accomplish this, NOAA would have to weigh questions of value (whether a certain type of essential outcome is being addressed, such as document analysis) with questions of effectiveness and efficiency (such as outcomes-based evaluations that include an appreciation of input variables). Highly effective programs may not address particularly important strategic goals; conversely, programs in need of substantial improvement might be uniquely positioned to address them.

To further illustrate our concern regarding the mismatch of the scope and scale of evaluations, we turn to the paper prepared for the committee by Clune (2009). Its logic model for NOAA education programs (see Table 5.3) notes that the relative weakness of the central governing authority allows the following to occur:

- Redundancy of effort in the development and implementation of programs.

- Overlapping constituencies for programs.

- Barriers to promoting common standards for curriculum materials and pedagogy.

- Barriers to having common cost-benefit standards for determining the effectiveness of programs.

TABLE 5.3 Common Logic Model for NOAA Instructional Programs

| Logic Model Elements | Corresponding NOAA components |
| --- | --- |
| Inputs | |
| | Educational goals in a research agency |
| | provide guidance for: |
| | Educational management |
| | that creates and administers: |
| Activities | |
| | Instructional activities |
| | directed at: |
| | An audience (or audience clusters) |
| | consisting of: |
| | Educational content, instructional materials, pedagogy |
| | delivered at/through: |
| | A geographical site, website, partnership |
| | aimed at producing: |
| Outcomes | |
| | Learning outcomes |
| | knowledge about: |
| Medium- and long-term outcomes and impacts | |
| | Behavioral outcomes, including positive: |
| | Decisions, policies, operations, politics |
| | that lead to: |
| Impacts | |
| | Societal outcomes, including: |
| | Conservation, restoration, sustainable use, and development |

From an evaluation perspective, this logic model raises two questions: (1) Does NOAA have an adequate forum for addressing issues of redundancy, overlapping constituencies, etc.? and (2) Is a sufficient information base being collected to address these types of problems? The evaluations reviewed by the committee do not include these types of issues as a focus of inquiry. This is probably because existing evaluations were aimed at assessing individual projects or events in and of themselves rather than in comparison to one another or the overall program. Some capacity for these types of evaluations is needed for NOAA to be able to convincingly demonstrate that the collection of information on individual programs is bringing about the outcomes related to environmental literacy and workforce development. On that note, NOAA needs to provide intermediary goals that are more in line with available resources. The committee appreciates, for example, that NOAA cannot increase the environmental literacy of the entire U.S. population. That said, however, what exactly would be a realistic goal for which NOAA should be accountable?

A second evaluation challenge growing out of the 2008 strategic plan is the importance placed on partnerships. The plan identifies partnerships across NOAA programs, with other federal and state agencies and with formal and informal education institutions. The 2008 strategic plan further identifies 29 distinct strategies for achieving outcomes aimed at fulfilling the two strategic goals. Partnership and interorganizational collaboration are key components in 19 of these 29 strategies.

Partnerships have been a focal point for evaluations in school-university partnerships (Goodlad and Sirotnik, 1988) and STEM education programs (Scherer, 2008) as well as other policy domains (Brinkerhoff, 2002). However, there is limited evidence that current evaluations conducted by NOAA account for the influence of partnerships in achieving outcomes and impacts. In addition, most evaluations do not attempt to observe the underlying partnership or explore this as a factor in assessment. Given the strategic importance placed on partnerships, greater attention to this topic is needed across NOAA program evaluations.

A related evaluation issue is that the education strategic plan does not specify a role for scientists and engineers and science offices in education, so it will be difficult to formulate, justify, or enforce evaluation metrics to determine if the interplay between agency scientists and engineers and education staff is working. It is critical that NOAA scientists and engineers have a role in the education efforts, because, as discussed in Chapter 3, they are one of the important assets that NOAA can use to address its educational goals and the needs of the nation. Collaborations between education staff and scientists and engineers have the potential to lead to higher quality education programs and resources than would be possible without such collaborations. Thus, just as the contributions of NOAA education staff need to be evaluated, the contributions of its scientists and engineers need to be assessed so that their impact is not overlooked, so that their contributions are appreciated at an institutional level, and so that continual improvement of their connection with education staff is possible.

A third evaluation challenge arising out of the 2008 strategic plan stems from the emphasis placed on the development of consistent performance metrics across programs aimed at improving environmental literacy. Performance metrics are to be applied in formal and informal education programs and for the many disciplines that contribute to environmental literacy. The EC is the suggested forum for sharing knowledge about the development of performance metrics and disseminating effective practices.

As in the challenge posed by partnerships, there is a significant gap between current evaluation practice and the goal of using common performance metrics. NOAA is making important investments in conducting the baseline research for constructing such metrics, as evidenced in the sponsorship of the National Assessment of Environmental Literacy conducted by the North American Association for Environmental Education (see McBeth et al., 2008). However, there is little evidence to date of the use of common metrics across NOAA program evaluations, nor has NOAA conducted a critical analysis to determine the feasibility of common metrics across significantly differing programs. There are enormous and probably prohibitive challenges in designing and applying common metrics in ways that would lead to comparable data and information. Even if theoretically possible and technically feasible, questions remain: Would common metrics be useful and desirable? Or could they lead to a centralization and homogenization of NOAA education programs, which could ultimately threaten the value of place-based, individual, local education efforts?

A fourth challenge is the emphasis of various education initiatives on reaching diverse communities. This emphasis must extend to the evaluation of NOAA’s programs by using evaluations that are sensitive to the existing context of culture and diversity. Such evaluations consider cultural diversity at all stages of the evaluation process: selection of stakeholders, development of evaluation questions, design of the evaluation, data collection and analysis, and communication. Evaluations should be culturally responsive and operate in a manner appropriate for the audiences being served. Some aspects of a culturally responsive evaluation are showing genuine respect for participants and engaging in an ongoing process of awareness of contextualized cultural needs (Mertens and Hopson, 2006). This also includes thoughtful consideration of culturally enforced differential access and resource opportunities. This perspective may substantially affect the timing and conduct of an evaluation and help to uncover basic but unstated assumptions about programming or evaluation findings. Culturally sensitive and appropriate evaluations also may run counter to the ideal of common metrics and comparable evaluation results. The goal of developing culturally appropriate and sensitive evaluations may therefore be in conflict with goals for comparable evaluations based on common metrics.

A fifth challenge in conducting more evaluations, and more rigorous and comprehensive ones, is that evaluation, particularly when it focuses on impact at the program level, can be expensive. Available funding for education programs and evaluations is limited. A rule of thumb for evaluating programs is that at least 5 percent of the total budget should be devoted to summative evaluation. Formative evaluation should be part of program design, and its cost is part of the program. Reports from project managers indicate that this level of funding for evaluation has not been provided. Insufficient funds severely limit the scope and nature of any evaluation. Given limited overall funds, it is critical that NOAA develop a plan for allocating the funds for evaluation.

To achieve the greatest return on limited resources, evaluation of individual projects can be scheduled on a cyclical basis, with high priority given to projects intended to have the greatest impact on environmental literacy and workforce needs and to projects that face important questions about activities, participants, staffing, funding, or organization. Both formative and outcome evaluations can usually be scheduled in advance. For example, reports about program effectiveness may be scheduled on a periodic basis: staff can plan for outcome evaluations in advance over a 4-5 year period, rotating the projects in the portfolio.
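The cyclical scheduling described above can be illustrated with a minimal sketch. The project names, priority scores, and function name below are hypothetical and are not drawn from NOAA documents; the text specifies only that outcome evaluations can be rotated through the portfolio over a 4-5 year period, with high-priority projects evaluated first.

```python
from math import ceil


def rotate_evaluations(priorities, start_year, cycle_years=5):
    """Assign each project one outcome evaluation within a rotation cycle.

    priorities: dict mapping project name -> priority score (higher = sooner).
    Returns a dict mapping calendar year -> list of projects evaluated that year.
    """
    if cycle_years < 1:
        raise ValueError("cycle_years must be at least 1")
    # Highest-priority projects first; ties are broken alphabetically.
    ordered = sorted(priorities, key=lambda name: (-priorities[name], name))
    # Number of evaluations per year needed to cover the portfolio in one cycle.
    per_year = max(1, ceil(len(ordered) / cycle_years))
    schedule = {}
    for position, name in enumerate(ordered):
        year = start_year + position // per_year
        schedule.setdefault(year, []).append(name)
    return schedule


# Hypothetical portfolio of four projects rotated over a four-year cycle.
portfolio = {"Project A": 3, "Project B": 5, "Project C": 1, "Project D": 4}
plan = rotate_evaluations(portfolio, start_year=2010, cycle_years=4)
for year in sorted(plan):
    print(year, plan[year])
```

In practice the priority score would have to reflect the criteria named in the text, such as intended impact on environmental literacy and workforce needs or pending questions about activities, staffing, or funding; how those criteria are weighted is left open here.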

THE TOP MODEL AND EVALUATION

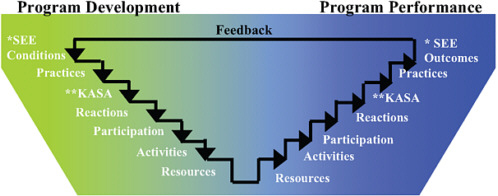

In April 2007 the EC adopted the Bennett TOP model (Bennett and Rockwell, 1995), a specific version of a logic or program model, as a common framework for evaluation (see Figure 5.1).

TOP focuses on outcomes in planning, implementing, and evaluating programs. TOP documentation presents the model as a hierarchy that integrates evaluation into the program development process: a simple framework is used to target specific outcomes, to relate those targets to existing resources and outputs, and then to assess the degree to which the outcome targets are reached. As with most evaluation frameworks, the TOP model does not provide guidance on specific methods or metrics for implementing individual evaluations.

TOP is described as based on a theoretically sound framework that has been tested, revised and refined, and widely used over the past 20 years (Bennett, 1975, 1979; Bennett and Rockwell, 1995). The model consists of seven progressive levels of outcome assessment derived through a systematic process of program development. The seven assessment levels, presented on the TOP website, are briefly defined as follows:

- The Resources (1) level explains the scope of the programming effort in terms of dollars expended, intellectual resources, partnerships, and other assets.

- Progress documented at the Activities (2) and Participation (3) levels is generally referred to as outputs. It indicates the volume of work accomplished and is evidence of program implementation.

- The Reactions (4) level is evidence of participants’ immediate satisfaction.

- Intermediate outcomes at the KASA (knowledge, attitude, skills, and aspirations) (5) level focus on knowledge gained/retained, attitudes changed, skills acquired, and aspirations changed.

- Intermediate outcomes at the Practices/Behavioral (6) level focus on the extent to which practices and behaviors of program participants are influenced. These outcomes can be measured months or years after program implementation; some can also be accomplished in the short term and may be measurable immediately following an intervention.

- Intermediate outcomes lead to longer term social, economic, and environmental (SEE) changes, or impacts of the program or activity. Identifying outcomes at the SEE (7) level (akin to defining impacts) for localities may occur fairly quickly, although state, regional, or national outcomes may take years to assess and may be very expensive.
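Because the seven levels form an ordered hierarchy, they can be represented as an ordered enumeration. The following is a minimal sketch for illustration only; the class and function names are hypothetical and do not come from the TOP documentation, and the groupings simply restate the list above.

```python
from enum import IntEnum


class TopLevel(IntEnum):
    """The seven TOP assessment levels, numbered as in the list above."""
    RESOURCES = 1
    ACTIVITIES = 2
    PARTICIPATION = 3
    REACTIONS = 4
    KASA = 5
    PRACTICES = 6
    SEE = 7


def category(level):
    """Group a level the way the list above describes it."""
    level = TopLevel(level)  # accept either a member or its number
    if level is TopLevel.RESOURCES:
        return "resources"
    if level in (TopLevel.ACTIVITIES, TopLevel.PARTICIPATION):
        return "outputs"
    if level is TopLevel.REACTIONS:
        return "reactions"
    if level in (TopLevel.KASA, TopLevel.PRACTICES):
        return "intermediate outcomes"
    return "long-term impacts"


def highest_level_reached(levels_documented):
    """Return the highest level an evaluation documents, or None if empty."""
    return max(levels_documented, default=None)


# Hypothetical evaluation that reports on participation, reactions, and KASA.
documented = [TopLevel.PARTICIPATION, TopLevel.REACTIONS, TopLevel.KASA]
print(highest_level_reached(documented).name, "->", category(TopLevel.KASA))
```

Ordering the levels numerically makes it straightforward to ask how far up the hierarchy a given evaluation reaches, which is the kind of comparison applied to NOAA evaluation reports later in this chapter.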

The developers say that the strengths of the TOP model are its focus on the educational process and incorporation of a broad range of outcome-based evaluation techniques, as well as the fact that the outcomes are aligned in accordance with theories of behavioral change. At the management level, the educational approaches of individual projects can be compared for their effectiveness in achieving similar outcomes.

Models for evaluation have been developed for many years, and many began in the 1960s with the growth of federal accountability. The purpose of models for evaluation is to provide a mechanism for covering the range of issues involved in programs and mechanisms for determining effectiveness. Each model emphasizes different aspects of educational programming. Along with the development of models has been the development of standards for evaluation. Current evaluation standards from the Joint Committee on Standards for Educational Evaluation (1994)1 include

- Utility Standards: These standards relate to guaranteeing that the evaluation information will be used once it is completed. In order to accomplish this, suggestions about how to engage in the following activities are provided: stakeholder identification, evaluator credibility, information scope and selection, values identification, report clarity, report timeliness and dissemination, and evaluation impact.

- Feasibility Standards: These standards relate to guaranteeing that the evaluation can actually be carried out. Suggestions for how to conduct the following activities related to feasibility are provided: practical procedures, political viability, and cost effectiveness.

- Propriety Standards: These standards relate to guaranteeing that the evaluation is conducted in a fair and equitable manner. To ensure propriety, suggestions on how to conduct the following activities are provided: service orientation, formal agreements, rights of human subjects, human interactions, complete and fair assessment, disclosure of findings, conflict of interest, and fiscal responsibility.

- Accuracy Standards: These standards relate to guaranteeing that the evaluation information is valid, reliable, and analyzed appropriately. To ensure accuracy, suggestions on how to conduct the following activities are provided: program documentation, context analysis, described purposes and procedures, defensible information sources, valid information, reliable information, systematic information, analysis of qualitative information, analysis of quantitative information, justified conclusions, impartial reporting, and meta-evaluation.

The standards are based on the definition of evaluation as the assessment of something’s merit. The standards can be applied to any evaluation plan or evaluation model to determine its quality. In addition to the standards, Daniel Stufflebeam, the first chair of the Joint Committee on Standards for Educational Evaluation, recently provided an assessment of evaluation models using the 30 standards (Stufflebeam and Shinkfield, 2007). Stufflebeam and Shinkfield suggest five categories of program evaluation models or approaches: (1) pseudo-evaluations, (2) question- or methods-oriented, (3) improvement or accountability, (4) social agenda and advocacy, and (5) eclectic.

The TOP model appears to fall into the improvement/accountability category, along with approaches such as the CIPP (Context, Input, Process, and Product) model (Stufflebeam, 2005) or that of Cronbach (1982). The central thrust of this type of evaluation is to foster improvement and accountability by informing and assessing program decisions. Considered in terms of each of the standards, the TOP model appears to be a reasonable model for evaluation but offers limited detail about how to actually implement it, although this detail might be available from a TOP expert or experienced evaluator.

The TOP model connection between evaluation and programming is especially valuable for program development. The far-reaching aspects of social, economic, and environmental changes also fit well with the stewardship goals of NOAA. The needs and opportunity assessments described in the TOP model appear to be quite similar to the context and input portions of the CIPP model. There are also several real-world examples provided on the TOP website to help operationalize the model.

However, the model may not translate well to broader cross-program issues or to programs that are less oriented to participant development. The TOP model does discuss interorganizational issues, offering a five-level approach of networking, cooperation, coordination, coalition, and collaboration. The more outcome-based orientation of TOP might not provide sufficient feedback for program improvement, although the emphasis on program development might compensate for the lack of feedback. In addition, there appears to be less emphasis on the utility standards than could be warranted. The TOP model is strong in terms of the feasibility standards. It is difficult to understand from the material provided how the propriety standards would be met. The accuracy standards are critical to any evaluation, and more emphasis on these standards would improve the TOP model.

Brackett (2009) examined 18 NOAA evaluation reports, assessing their conformity to the TOP model against its seven levels of assessment. Brackett notes that many of the evaluation reports submitted were conducted either before or at about the same time as the adoption of the TOP model by the EC and the issuance of any guidance to the individual programs.

Although only a small proportion of education initiatives have been evaluated, “17 of the 18 [evaluation] reports provided some data concerning Program Activities and Participation, although this information was often spotty in nature. All 18 reports provided information on the Reaction level. Sixteen reports provided data concerning intermediate outcomes in the area of KASA. Nine included information concerning intermediate outcomes in the area of Practices or Behaviors. None of the reports provided information concerning broader SEE changes” (Brackett, 2009, p. 10).
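The counts in the quoted passage can be expressed as shares of the 18 reports. The sketch below uses only the figures quoted from Brackett (2009, p. 10); the variable and function names are illustrative, and the Resources level is omitted because the quoted passage gives no count for it.

```python
TOTAL_REPORTS = 18

# Counts quoted from Brackett (2009, p. 10). Activities and Participation are
# reported together in the source, so they share one entry here.
reports_by_level = {
    "Activities and Participation": 17,
    "Reactions": 18,
    "KASA": 16,
    "Practices or Behaviors": 9,
    "SEE": 0,
}


def coverage_shares(counts, total):
    """Return the fraction of reports that addressed each level."""
    if total <= 0:
        raise ValueError("total must be positive")
    return {level: count / total for level, count in counts.items()}


shares = coverage_shares(reports_by_level, TOTAL_REPORTS)
for level, share in shares.items():
    count = reports_by_level[level]
    print(f"{level:<30}{count:>3} of {TOTAL_REPORTS} ({share:.0%})")
```

Laid out this way, the pattern in the quotation is easy to see: coverage is nearly complete at the output and reaction levels, falls to half at the level of practices or behaviors, and is absent at the SEE level.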

Brackett’s review suggests that the EC has good reasons for promoting TOP as a standard for evaluation by NOAA’s education and outreach programs. Current evaluations tend to focus on the specific forms of engagement by participants in NOAA-sponsored programs as well as evidence of reactions or learning taking place. Application of the TOP model may offer a useful reminder to program officers that they need to stretch the scope of current evaluation practice to include the resource inputs and larger changes in practices and social impacts at the overall program level (not at the individual activity level). At the resource level, “staff time used” must include scientist time as well as educator time; it is not appropriate to expect scientists to squeeze education activities into their spare time while expecting them to carry a full load of scientific responsibilities.

Although the TOP model is not a magic wand for solving the evaluation challenges faced by NOAA, it does serve as important guidance reminding managers that evaluation is not simply an accountability chore on a checklist. With the proper scope, evaluations can provide critical information for program management. However, until there are real objectives in the strategic plan and good strategies for collecting data related to them, summative evaluation across the agency’s diverse and loosely coordinated education portfolio will remain conceptually challenging. This is true for assessing progress toward both the environmental literacy goal and the workforce goal. For example, assessing progress toward the workforce goal would necessitate either long-term longitudinal data or a plan for what to do in the absence of such data. It might also be necessary to develop pipeline metrics that could illustrate whether programs address critical bottlenecks or leaks in the pipeline, especially for individuals from underrepresented groups.

SUMMARY

Evaluation of federally funded education programs is evolving rapidly, and at the same time the expectations of NOAA programs have changed quickly. The agency has responded and in some cases has done exemplary work.

NOAA is increasing its emphasis on evaluation. It is using the Office of Education and the Education Council to coordinate evaluation activity and is adopting the TOP model. The model is a reasonable one.

Although NOAA is conducting evaluations of its educational activities, they are limited in scope and tend to focus on immediate and intermediate outcomes. Nearly all evaluations lacked comparative elements. Most serious is that there is little consideration of evaluation at the portfolio level, such as between different programs or different approaches or in terms of what types of programming might be most effective in meeting NOAA educational goals.

NOAA can improve its evaluation strategy by:

- Increasing the emphasis on high-quality evaluations by using the higher order evaluation suggestions of the TOP model as well as its program development and improvement aspects.

- Incorporating effective practices into any evaluations that are implemented.

- Emphasizing evaluation of the entire portfolio of NOAA activities using consistent data-gathering approaches.

- Evaluating both the education programs and the line offices on how effectively the ideas, insights, knowledge, understanding, and passion of the agency’s scientists and engineers, as well as other scientists and engineers in the relevant disciplines, are incorporated into educational materials and programs.

- Evaluating the appropriateness and effects of their partnerships.