2

Overview of Risk Analysis at DHS

INTRODUCTION

The scope of responsibilities of the Department of Homeland Security (DHS) is substantial. Responsibilities range over most, if not all, aspects of homeland security and support in principle all government and private entities that contribute to homeland security. DHS is directly responsible for the planning for and recovery from nearly any catastrophic disaster, whether human inflicted or naturally occurring. The mission encompasses the following elements:

- Terrorism and natural hazards (e.g., see p. 3 of http://www.dhs.gov/xlibrary/assets/nat_strat_homelandsecurity_2007.pdf; natural hazards were also emphasized by Homeland Security Presidential Directive 5 [HSPD-5], http://www.dhs.gov/xabout/laws/gc_1214592333605.shtm);
- Border patrol and immigration;
- Criminal activities within the jurisdiction of Immigration and Customs Enforcement (ICE), the U.S. Secret Service, and the U.S. Coast Guard (USCG);
- Marine safety and protection of natural resources within the responsibility of the USCG;
- Cyber security (HSPD-7, available online at http://www.dhs.gov/xabout/laws/gc_1214597989952.shtm); and
- Accidental hazards, a term that encompasses industrial and commercial accidents with the potential to cause widespread damage to or disruption of economic and social systems.

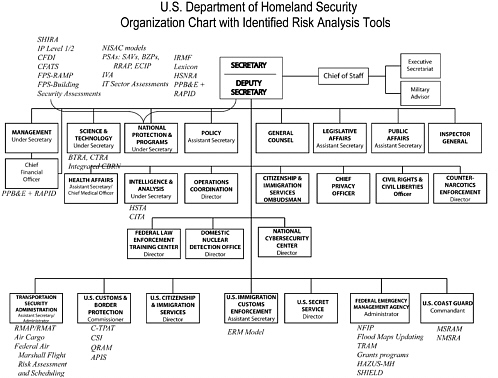

DHS includes 22 major “components,” many of which are well-known and long-standing federal organizations. The DHS organization chart (with some identified risk models and tools by directorate) is shown in Figure 2-1; the risk acronyms are spelled out in Table 2-1. It is clear then that DHS has a complicated responsibility with multiple functions, often only loosely related. This is reflected in DHS’s very broad definition of risk (DHS-RSC, 2008):

The Department of Homeland Security (DHS) defines risk as the potential for an unwanted outcome resulting from an incident, event, or occurrence, as determined by its likelihood and the associated consequences. These risks arise from potential acts of terrorism, natural disasters, and other emergencies and threats to our people and economy, as well as violations of our borders that threaten the lawful flow of trade, travel, and immigration.

It is also clear that risk analysis is an activity that is spread broadly across DHS. This complexity and breadth distinguish DHS from many organizations that have successfully adopted risk analysis to inform decision making.

THE DECISION CONTEXT AT DHS

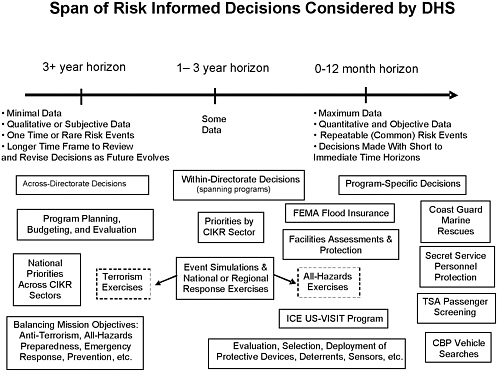

Regarding the types of decisions that effective risk management analysis might support, Figure 2-2 illustrates risk-informed decisions that confront DHS as defined by their time horizons. Decisions on the far left side of the figure are pure policy level decisions, such as how to balance the overall DHS focus among terrorism, law enforcement, infrastructure protection, preparedness-emergency response, and so forth. These address judgments that rely heavily on factors beyond just science and engineering.

The type and volume of data available tend to change from qualitative and subjective to quantitative and objective as one moves from left to right in Figure 2-2, although this is not a hard-and-fast rule. Similarly, the decision time horizon changes from several years, and great uncertainty, to a more immediate time frame with less uncertainty. The uncertainty that may have existed is often removed from consideration as one moves from left to right as a result of previous decisions. For example, what fraction of cargo to inspect is a decision assumed to have a fairly long time scale, and it is followed by more targeted (and perhaps shorter-lived) decisions about how to inspect—does one examine manifests, use some type of detector, or physically open containers? Associated decisions resolve where to set the threshold for triggering an alarm and similar protocols. Clearly, all these levels of decision are interrelated. There is no sense in deciding on a level of inspection that there is no way to implement or that is operationally too expensive.

Some policy level trade-offs must be made in the absence of much or any historical data and rely, instead, perhaps on surveys and formal expert elicitations; it is unfortunate that often the most consequential decisions have the fewest data to support them. The paucity of historical data complicates the analysis of risks associated with different terrorism scenarios. However, there are approaches to developing other types of threat data for use in quantitative models that should be used, when appropriate, by DHS. These include, for example, elicitation of expert judgments, game theory, and Bayesian techniques. While there will be uncertainties associated with these approaches, they are nevertheless important. The shortage of historical data does not obviate the value of carefully crafted and well-documented estimates of risk, with appropriate characterization of the uncertainties.
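As one illustration of how an elicited expert judgment can be combined with sparse data, the following minimal sketch applies a Bayesian beta-binomial update. It is not drawn from any DHS model: the prior mean, the "equivalent observations" used to express expert confidence, the observation counts, and the names `prior_from_elicitation` and `update_beta` are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Beta:
    """Beta distribution over an unknown event probability."""
    alpha: float
    beta: float

    @property
    def mean(self) -> float:
        return self.alpha / (self.alpha + self.beta)

    @property
    def variance(self) -> float:
        n = self.alpha + self.beta
        return self.alpha * self.beta / (n * n * (n + 1))


def prior_from_elicitation(mean: float, equivalent_obs: float) -> Beta:
    """Encode an expert's best estimate and confidence as a Beta prior.

    `equivalent_obs` is a hypothetical way of stating confidence: the number
    of observations the expert's judgment is treated as being worth.
    """
    return Beta(mean * equivalent_obs, (1.0 - mean) * equivalent_obs)


def update_beta(prior: Beta, events: int, trials: int) -> Beta:
    """Conjugate update: add observed events and non-events to the prior."""
    return Beta(prior.alpha + events, prior.beta + trials - events)


# Hypothetical inputs: experts judge the probability to be about 10 percent,
# and the limited record shows 1 event in 30 trials.
prior = prior_from_elicitation(mean=0.10, equivalent_obs=20)
posterior = update_beta(prior, events=1, trials=30)

print(f"prior mean {prior.mean:.3f}, variance {prior.variance:.5f}")
print(f"posterior mean {posterior.mean:.3f}, variance {posterior.variance:.5f}")
```

The posterior variance remains visible alongside the mean, which is one simple way of carrying the characterization of uncertainty through to the estimate.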

TABLE 2-1 Acronym Key and Notes for Risk Models and Processes Shown in Figure 2-1^a

| Acronym (from Figure 2-1) | Full Name | Notes |
| --- | --- | --- |
| HITRAC* | Homeland Infrastructure Threat and Risk Analysis Center | A joint program of the Office of Infrastructure Protection (IP) and the Intelligence and Analysis Directorate (I&A) |
| SHIRA | Strategic Homeland Infrastructure Risk Assessment | A high-level risk assessment of infrastructure elements |
| IP Level 1/2 | Also known as the “Level 1/Level 2” program | A risk-based process for identifying high-risk infrastructure targets |
| CFDI | Critical Foreign Dependencies Initiative | A process for examining supply chains to identify critical vulnerabilities |
| CFATS | Chemical Facility Anti-Terrorism Standards | A risk-based method for identifying which chemical facilities will be regulated by DHS |
| NISAC models* | Models and simulations from the National Infrastructure Simulation and Analysis Center | Most NISAC work informs consequence analyses |
| PSAs | Protective Security Advisors | A program that provides security consultations to owners and operators of critical infrastructure elements |
| SAVs | Site Assistance Visits | Evaluations performed by PSAs |
| BZPP | Buffer Zone Protection Program | A program that identifies, based on analyses of risk, which areas contiguous to critical infrastructure elements merit their own protection |
| RRAP* | Regional Resiliency Assessment Projects | Risk-based assessments of the resiliency of clusters of critical infrastructure and their buffer zones |
| ECIP | Enhanced Critical Infrastructure Protection Initiative | An in-progress effort to improve the method for scoring vulnerabilities of critical infrastructure and key resources |
| IVA | Infrastructure Vulnerability Assessment | A process under development to integrate site-specific vulnerability information with other vulnerability assessments to create a more integrated picture of vulnerabilities to guide risk assessment and management |
| RMA* | Office of Risk Management and Analysis | DHS office charged with coordination of risk analysis across the department |
| IRMF* | Integrated Risk Management Framework | Structure for coordination being developed by RMA and the document that guides that coordination |
| Lexicon | DHS risk lexicon | Defines risk analysis terms |
| HSNRA-QHSR | Homeland Security National Risk Assessment, Quadrennial Homeland Security Review | The QHSR, released February 2010, proposes the development of a capability to perform HSNRAs |
| PPBE + RAPID | Planning, Programming, Budgeting, and Execution; Risk Analysis Process for Informed Decision-Making | PPBE is the process used in DHS’s finance office to build the budget. RAPID is a tool under development to supply risk analysis to inform that process |
| BTRA* | Biological Threat Risk Assessment | A computationally intensive, probabilistic event-tree model for assessing bioterrorism risks |
| CTRA | Chemical Threat Risk Assessment | A computationally intensive, probabilistic event-tree model for assessing chemical terrorism risks |
| Integrated CBRN | Integrated Chemical-Biological-Radiological-Nuclear risk assessment | A computationally intensive, probabilistic event-tree model for developing an integrated assessment of the risk of terrorist attacks using biological, chemical, radiological, or nuclear weapons |
| HSTA | Homeland Security Threat Assessment | An I&A program to develop an understanding of threats |
| CITA | Critical Infrastructure Threat Assessment Division | An I&A unit that produces threat analyses for critical infrastructure and key resources |
| IT Sector Risk Assessment | Information Technology Sector Risk Assessment | A process to assess risks against the IT infrastructure |
| RMAP/RMAT | Risk Management Analysis Process/Tool | RMAT is an agent-based tool under development by Boeing and TSA to evaluate airport vulnerabilities. RMAP is the emerging process to make use of RMAT |
| Air Cargo | | Risk-informed method for selecting targets for screening. Not examined by this study |
| Federal Air Marshals’ Flight Risk Assessment & Scheduling | | Risk-informed method for selecting flights to carry an air marshal. Not examined by this study |
| C-TPAT | Customs-Trade Partnership Against Terrorism | Risk-informed process for examining security across worldwide supply chains. Not examined by this study |
| CSI | Container Security Initiative | CSI uses threat information and automated targeting tools to identify containers for inspection at borders. Not examined by this study |
| QRAM | Quantitative risk assessment model | A general class of models used in part to set inspection levels at borders. Not examined by this study |
| APIS | Advance Passenger Information System | APIS uses threat information to identify passengers who should not be allowed to travel to or leave the United States by aircraft or ship. Not examined by this study |
| ICE ERM Model | Immigration and Customs Enforcement Enterprise Risk Management model | A process, in the early stage of development, through which ICE plans to manage risks holistically across the entire enterprise. Not examined by this study |
| FPS-RAMP | Federal Protective Service-Risk Assessment Management Program | RAMP, which is in the early stage of development, is intended to be a systematic, risk-based means of capturing and evaluating facility information. Not examined by this study |
| FPS-Building Security Assessments | | FPS security assessments of federal buildings |
| NFIP* | National Flood Insurance Program | A risk-based federal insurance program |
| Flood Maps Updating | | Floodplain maps for the United States underpin the NFIP, and ongoing updates improve the precision of the risk analysis underlying the NFIP |
| TRAM* | Terrorism Risk Assessment and Management | A computer-assisted tool to analyze risks primarily in the transportation sector |
| Grants programs* | | FEMA allocates grants to first responders and others through a variety of programs. Some allocations are based on formula, whereas others are based on coarse assessments of risk |
| HAZUS-MH | HAZards U.S.—Multi-hazard | A software tool that uses databases of physical infrastructure to analyze potential losses from floods, hurricane winds, and earthquakes |
| SHIELD | Strategic Hazards Identification and Evaluation for Leadership Decisions | A scenario-based regional risk analysis for the National Capital Region |
| MSRAM | Maritime Security Risk Analysis Model | A computer-assisted tool to analyze risks primarily in the maritime sector |
| NMSRA | National Maritime Strategic Risk Assessment | A process used by the Coast Guard to identify risks to achieving its performance goals and to identify mitigation options. Not examined by this study |

^a Except as noted, the study committee examined each of these. Starred terms in the first column are discussed in some depth in this report.

FIGURE 2-2 Types of risk-informed decisions that DHS faces (in boxes) arrayed roughly according to the decision-making horizon they inform.

Once policy decisions have been made, strategies can be aligned to support each policy tenet.1 For example, it may be that DHS leadership makes the policy decision to apply equal resources to counterterrorism and natural hazards preparedness. Once those allocations are made, strategic decisions must be made about how to apportion resources to address particular natural hazards and particular terrorism threats. Note that this approach implicitly avoids the necessity of comparing the risks of, for example, floods to the risks of nuclear attacks, because a policy decision has already been made to divide resources equally between natural hazards and terrorism. Clearly there are other methods to parse the policy questions, but this illustrates how uncertainty can be removed at the policy level, thus simplifying strategic decisions.

REVIEW OF CURRENT PRACTICES OF RISK ANALYSIS WITHIN DHS

The remainder of this chapter summarizes the current practices of risk analysis within DHS for six illustrative methods: (1) risk analysis for natural hazards; (2) threat, vulnerability, and consequence analyses performed for the protection of Critical Infrastructure and Key Resources (CIKR); (3) risk models used to underpin those DHS grant programs for which allocations are based on risk; (4) the Terrorism Risk Assessment and Management (TRAM) tool; (5) the Biological Threat Risk Assessment (BTRA) methodology; and (6) the Integrated Risk Management Framework (IRMF). The committee does not attempt to document the many other risk models and practices within DHS. Risk analysis for natural hazards is discussed first because it is the most mature of these processes.

Risk Analyses for Natural Hazards

DHS’s natural hazards preparedness mission is addressed principally within the Federal Emergency Management Agency (FEMA). With minor exceptions (e.g., the U.S. Coast Guard), no other DHS component has a significant natural hazard mission. In natural hazards, FEMA is concerned with a variety of threats, such as tornadoes, hurricanes, earthquakes, floods, wildfires, droughts, volcanoes, and tsunamis.

FEMA’s authority for flood hazard resides largely in the National Flood Insurance Program (NFIP), which represents a substantial responsibility. The NFIP is administered by a core staff of employees with support from contractors (i.e., consulting firms with expertise in hydrology, hydraulics, and floodplain studies). FEMA’s role with respect to other natural hazards deals principally with mitigation and response rather than risk analysis and thus is not addressed by this report. For example, the U.S. Geological Survey (USGS) has the primary responsibility for assessing earthquake hazards, while FEMA deals with developing emergency plans for responding to earthquakes and recovering from their effects. Jointly, the USGS and FEMA help inform planning for building codes so as to reduce vulnerabilities and strengthen the nation’s resilience to such hazards. Risk analysis often informs this mitigation and response planning.

FEMA’s risk analysis related to flooding serves as the basis for the creation of NFIP flood insurance rate maps and the setting of flood insurance rates. The risk assessments involve statistical analyses of large historical datasets, obtained primarily from USGS stream gages, and hydraulic computations that produce flood-frequency relations, water surface profiles, and maps showing flood zone delineations. In the context of this program, information on regional hydrology, statistical methods, river hydraulics, and mapping is constantly being improved (largely because these are of broad interest and application within the larger water resources enterprise). FEMA’s risk analyses in support of the NFIP are based on generally good data and mature, well-understood science. Importantly, the analysis of natural hazards and their risks generally proceeds from empirical data. Hundreds of Ph.D. theses and natural events have led to many ways of validating the models for natural hazard risks. For example, one can compare the actual frequency of floods occurring in various flood zones after a flood map has been developed for a community. Over many years, the NFIP has been the subject of much scrutiny and occasional external assessments and reviews by associations, consultants, and others, including the National Research Council (NRC). Recent reports by the NRC (2007a, 2009) provide a good current assessment and recommendations for improving flood risk assessment.
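The kind of validation mentioned above, comparing observed flood frequency with the frequency a map implies, can be sketched with a simple binomial check. This is an illustrative sketch only, not FEMA's procedure: the zone's annual chance, the length of record, and the observed count are hypothetical, and treating years as independent trials with a constant annual chance is a simplifying assumption.

```python
from math import comb


def prob_at_least(k: int, n: int, p: float) -> float:
    """P(X >= k) for X ~ Binomial(n, p), computed from the lower tail."""
    below = sum(comb(n, i) * p**i * (1 - p) ** (n - i) for i in range(k))
    return 1.0 - below


# Hypothetical record for one site mapped in a 1-percent-annual-chance zone.
annual_chance = 0.01
years_observed = 40
floods_observed = 3

expected_floods = annual_chance * years_observed
p_tail = prob_at_least(floods_observed, years_observed, annual_chance)

print(f"expected floods over the record: {expected_floods:.2f}")
print(f"observed floods: {floods_observed}")
print(f"chance of at least that many if the mapped frequency is right: {p_tail:.4f}")
```

A very small tail probability would suggest that the mapped frequency understates the hazard at that site, which is the sort of evidence that empirical records make available for natural hazards.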

Analyses in Support of the Protection of Critical Infrastructure

One of the primary new responsibilities assigned to DHS-IP (2009) when it was established was to develop the National Infrastructure Protection Plan (NIPP), which

provides the coordinated approach that is used to establish national priorities, goals, and requirements for CIKR protection so that Federal resources are applied in the most effective and efficient manner to reduce vulnerability, deter threats, and minimize the consequences of attacks and other incidents. It establishes the overarching concepts relevant to all CIKR sectors identified under the authority of Homeland Security Presidential Directive 7 (HSPD-7), and addresses the physical, cyber, and human considerations required for effective implementation of protective programs and resiliency strategies. [Available online at http://www.dhs.gov/xlibrary/assets/nipp_consolidated_snapshot.pdf.]

DHS’s Office of Infrastructure Protection (IP) has the mandate to produce threat, vulnerability, and consequence analyses to inform priorities for strengthening CIKR assets.

Table 2-2 lists the 18 CIKR sectors and the federal agency or agencies that have the lead responsibility for managing the associated risks. DHS has lead responsibility for 11 of the sectors, and it is to provide supporting tools and analysis for the others, working with the Department of Energy to protect the electrical grid, the Department of Health and Human Services on public health, and the Environmental Protection Agency with respect to the nation’s water supply. DHS works with these agencies to develop sector-specific plans and risk assessments. Maintaining a strong interface between DHS and other federal agencies—in order to share information, tools, and insight—is key to solidifying our nation’s security in those sectors for which responsibility is shared.

TABLE 2-2 CIKR Sectors and Federal Agencies with Lead Responsibility for Managing the Associated Risks

| Sector-Specific Agency | Critical Infrastructure and Key Resources Sector |
| --- | --- |
| Department of Agriculture; Department of Health and Human Services | Agriculture and food |
| Department of Defense | Defense industrial base |
| Department of Energy | Energy |
| Department of Health and Human Services | Health care and public health |
| Department of the Interior | National monuments and icons |
| Department of the Treasury | Banking and finance |
| Environmental Protection Agency | Water |
| Department of Homeland Security, Office of Infrastructure Protection | Chemical; Commercial facilities; Critical manufacturing; Dams; Emergency services; Nuclear reactors, materials, and waste |
| Department of Homeland Security, Office of Cybersecurity and Communications | Information technology; Communications |
| Department of Homeland Security, Transportation Security Administration | Postal and shipping |
| Department of Homeland Security, Transportation Security Administration and U.S. Coast Guard | Transportation systems |
| Department of Homeland Security, Immigration and Customs Enforcement and Federal Protective Service | Government facilities |

SOURCE: DHS-IP (2009, p. 3). Available online at http://www.dhs.gov/xlibrary/assets/NIPP_Plan.pdf. Accessed November 20, 2009.

Threat analyses are facilitated by the Homeland Infrastructure Threat and Risk Analysis Center (HITRAC) program, which is a joint program of IP and DHS’s Office of Intelligence & Analysis (I&A). The latter is DHS’s interface with the intelligence community and provides expertise and threat information. Many of the I&A professional staff have been hired from other intelligence agencies, and they provide DHS with a formal and informal intelligence network.

I&A’s Critical Infrastructure Threat Assessment (CITA) division, working with Argonne National Laboratory, established the process to provide threat information for the 18 CIKR sectors as well as for other DHS needs. CITA determines threat through structured elicitation of subject matter experts (SMEs). Some of the SMEs are staff from within I&A; others are enlisted from elsewhere in the intelligence community. Attack scenarios are developed to represent how SMEs would expect different sorts of terrorist groups (e.g., domestic terrorists, sophisticated Islamic terrorists) to go about attacking particular CIKR assets. The CIKR sectors and I&A work jointly to develop the scenarios. I&A’s inputs include analytic papers and reports on threats affecting particular states and urban areas. About 25 attack scenarios are generated per sector. The same scenarios are used year after year, with modification as needed as more is learned about tactics and techniques. The mix of SMEs often changes, which might limit the consistency of the estimates but also serves to introduce fresh thinking. During elicitation, the SMEs work through a structured process to score the likelihood of the various threats against each type of CIKR asset. Infrastructure vulnerability experts also can be asked to participate. The committee did not examine the elicitation process in detail.

When developing threat estimates with the involvement of uncleared experts, the SMEs are given generic attack scenarios against generic infrastructure assets. Generic attack scenarios allow classified information to be moved to the unclassified level and also provide some consistency in the variables described across scenarios. The attack scenarios are developed by intelligence analysts drawing on experts, previous attacks, and reporting. Each scenario includes descriptions of the mode of attack (e.g., a vehicle-borne improvised explosive device), how the terrorist gains access, the target, the terrorist goal, and the geographical region or location. The process includes training for the SMEs on how to provide expert judgment with the least chance for bias. Such training, for both SMEs and those who perform the elicitation, is critical because it is well known that biases can be introduced in expert elicitation, and there are established methods for lessening this risk.

One major HITRAC product is an annual distillation, based on data from states and from CIKR sector councils, to identify lists of high-risk CIKR assets. These lists are used to guide resource allocation. HITRAC does not rely solely on quantitative analysis; one of its sources of information is red-team exercises, using staff with backgrounds in military special forces to brainstorm CIKR vulnerabilities. Another HITRAC risk product is the Strategic Homeland Infrastructure Risk Assessment (SHIRA). According to the National Infrastructure Protection Plan of 2009,

[T]he SHIRA involves an annual collaborative process conducted in coordination with interested members of the CIKR protection community to assess and analyze the risks to the Nation’s infrastructure from terrorism, as well as natural and manmade hazards. The information derived through the SHIRA process feeds a number of analytic products, including the National Risk Profile, the foundation of the National CIKR Protection Annual Report, as well as individual Sector Risk Profiles. [DHS-IP, 2009, p. 33]

Risk-Informed Grants Programs

Another major DHS responsibility is issuing grants to help build homeland security capabilities at the state and local levels. Most such money is distributed through FEMA grants, of which there are numerous kinds, some with histories dating to the establishment of FEMA in the mid-1970s. In 2008, FEMA awarded more than 6,000 homeland security grants totaling over $7 billion. Five of these programs, covering more than half of FEMA’s grant money—the State Homeland Security Program (SHSP), the Urban Areas Security Initiative (UASI), the Port Security Grant Program (PSGP), the Transit Security Grant Program (TSGP), and the Interoperable Emergency Communications Grant Program (IECGP)—incorporate some form of risk analysis in support of planning and decision making. Two others inherit some risk-based inputs produced by other DHS entities—the Buffer Zone Protection Program, which allocates grants to jurisdictions near critical infrastructure if they are exposed to risk above a certain level as ascertained by IP, and the Operation Stonegarden Grant Program, which provides funding to localities near sections of the U.S. border that have been identified as high risk by Customs and Border Protection. All other FEMA grants are distributed according to formula.

Even for the grant programs that are risk-informed, FEMA has to operate within constraints that are not based on risk. For example, Congress has defined which entities are eligible to apply for grants and, for the program of grants to states, it has specified that every state will be awarded at least a minimum amount of funding. Congress stipulated that risk was to be evaluated as a function of threat, vulnerability, and consequence, and it also stipulated that consequence should be a function of economic effects, presence of military facilities, population, and presence of critical infrastructure or key resources (the 9/11 Act of 2007 (P.L. 110-53), Sec. 2007). However, FEMA is free to create the formula by which it estimates consequences, and it has also set vulnerability equal to 1.0, effectively removing it from consideration. The latter move is in part driven by the difficulty of performing vulnerability analyses for all the entities that might apply to the grants programs. FEMA does not have the staff to do that, and the grant allocation time line set by Congress is too ambitious to allow detailed vulnerability analyses.

DHS also has latitude to define “threat.” In the past, it defined threat for grant making as consisting solely of the threat from foreign terrorist groups or from groups that are inspired by foreign terrorists. That definition means that the threat from narcoterrorism, domestic terrorism, or other such sources was not considered. This decision is being reviewed by the DHS Secretary.

For most grant allocation programs, FEMA weights the threat as contributing 20 percent to overall risk and consequence as contributing 80 percent. For some programs that serve multihazard preparedness, those weights have been adjusted to 10 percent and 90 percent, respectively, in order to lessen the effect that the threat of terrorism has on the prioritizations. Because threat has a small effect on FEMA’s risk analysis, and population is the dominant contributor to the consequence term, the risk analysis formula used for grant making can be construed as one that, to a first approximation, merely uses population as a surrogate for risk. FEMA does not have the time or staff to perform more detailed or specialized consequence modeling, and the committee was told that this coarse approximation is relatively acceptable to the entities supported by the grants programs. It is not clear whether FEMA has ever performed a sensitivity analysis of the weightings involved in these grant allocation formulas or evaluated the ramifications of the (apparently ad hoc) choices of weightings and parameters in the consequence formulas. Such a step would improve the transparency of these crude risk models.
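A sensitivity analysis of the kind suggested here could start from something as simple as the sketch below, which re-ranks a few applicants under different threat weights. It is a hypothetical illustration, not FEMA's formula: the additive weighted form, the normalized threat and consequence indices, and the jurisdiction names are all assumptions made for the example. Vulnerability is fixed at 1.0, as described above, so it drops out.

```python
def risk_score(threat: float, consequence: float, w_threat: float) -> float:
    """Assumed additive form: threat and consequence weights sum to 1."""
    return w_threat * threat + (1.0 - w_threat) * consequence


def rank(jurisdictions: dict, w_threat: float) -> list:
    """Return jurisdiction names ordered from highest to lowest risk score."""
    return sorted(
        jurisdictions,
        key=lambda name: risk_score(*jurisdictions[name], w_threat),
        reverse=True,
    )


# Hypothetical (threat, consequence) indices, each normalized to [0, 1].
jurisdictions = {
    "A": (0.9, 0.30),  # high threat, low consequence
    "B": (0.2, 0.60),  # low threat, high consequence
    "C": (0.5, 0.45),
}

# Sweep the threat weight, including the 10 and 20 percent values in the text.
for w in (0.0, 0.1, 0.2, 0.5):
    print(f"threat weight {w:.1f}: {rank(jurisdictions, w)}")
```

In this toy case the rankings at threat weights of 10 and 20 percent match the ranking by consequence alone, and the order changes only when threat is weighted much more heavily. A sweep of this sort makes explicit how far the weights would have to move before allocations would differ.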

The FEMA grants program is working on an initiative called Cost-to-Capability (C2C). This was begun to emulate the way the Department of Defense analyzes complex processes and drives toward optimal progress. The objective is to identify the information needed to manage homeland security and preparedness grant programs. The C2C model replaces “vulnerability” with “capability,” in a sense replacing a measure of gaps with a measure of hardness against threats. A Target Capabilities List (TCL) identifies 37 capabilities among four core mission areas of prevention, protection, response, and recovery. The TCL includes capabilities ranging from intelligence analysis and production to structural damage assessment. The critical element of C2C is to identify the importance of such capabilities to each of the 15 national planning scenarios used to develop target capabilities. This is intended to open up the possibility of aggregating capabilities to create a macro measure of national “hardness” against homeland security hazards. The C2C initiative is still in a conceptual stage and has been heavily criticized in congressional hearings, but it appears to be a reasonable platform by which the homeland security community can begin charting a better path toward preparedness. A contractor is creating software, now ready for pilot testing, that will allow DHS grantees to perform self-assessments of the value of their preparedness projects, create multiple investment portfolios and rank them, and track portfolio performance.

Risk Analysis in TRAM

The Terrorism Risk Assessment and Management (TRAM) toolkit is a mature software-based method for performing terrorism-related relative risk analysis primarily in the transportation sector. It helps owner-operators and other SMEs identify their most critical assets, the threats and likelihood of certain classes of attacks against those assets, the vulnerability of those assets to attack, the likelihood that a given attack scenario would succeed, and the ultimate impacts of the total loss of the assets on the agency’s mission. TRAM also helps to identify options for risk management and assists with cost-benefit analyses.

Overall, TRAM works through six steps to arrive at a risk assessment:

1. Criticality assessment
2. Threat assessment
3. Vulnerability assessment
4. Response and recovery capabilities assessment
5. Impact assessment
6. Risk assessment

Working through the process, the first step in the overall TRAM risk assessment is evaluation of the criticality of each of the agency’s assets to the mission. This includes a quantification and comparison of assets to identify those that are most critical. In making the determination, factors that the agency most wishes to guard against are identified: for example, loss of life or serious injury; the ability of the agency to communicate and move people effectively; negative impacts on the livelihood, resources, or wealth of individuals and businesses in the area, state, region, or country; or replacement cost of critical assets of the agency.

The TRAM process then guides SMEs through a threat assessment. A potential list of specific types of threats (e.g., attack using small conventional explosives, large conventional explosives, chemical agents, a radiological weapon, or biological agents) is considered, and for each the SMEs are asked to estimate the likelihood of the specific attack type occurring against the agency’s critical assets. The analysis is also informed by general considerations of whether a terrorist group would be capable of such an attack and motivated to carry it out on the asset(s) in question.

Steps 3 to 5 (vulnerability assessment, response and recovery capabilities assessment, and impact assessment) are similarly effected through expert elicitation, drawing largely on the knowledge and experience of agency security experts, engineers, and other experienced professional staff with a strong understanding of their assets and operations.2 The vulnerability assessment component evaluates the vulnerability of the identified critical assets to the specific threat scenarios. In relation to response, the TRAM process calls for local emergency response organizations to weigh in by performing self-assessments of their ability to support the mission of the agency being reviewed. Capabilities, gaps, and shortfalls with respect to aspects such as staffing, training, equipment and systems, planning, exercises, and organizational structure are considered relevant. The recovery assessment reviews the agency’s own functions and capabilities for managing aspects of recovery and business continuity. That assessment addresses elements such as plans and procedures, alternate facilities, operational capacity, communications, records and databases, and training and exercises. Impact assessment is designed to lead to the calculation of consequence measures for each particular threat scenario. This part of the process adds a sensitivity component to the analysis by taking into account not just the worst-case scenario in which there is a total loss of the critical asset, but also less extreme results.

At step 6, risk assessment, the TRAM software is operated in batch mode: the parameters for a particular analysis are specified up front and the model is run offline. A complete set of scenarios, risk results, and a relative risk diagram are the outputs. The two-dimensional risk diagram shows a comparison of risk between scenarios based on their overall ratings of likelihood and consequence.

Work is under way to expand TRAM to multiple hazards beyond terrorism. These might include human-initiated hazards such as sabotage and vandalism; technological hazards such as failure in structures, equipment, or operations; and natural hazards such as hurricanes, earthquakes, and blizzards.
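The comparison of scenarios by likelihood and consequence at step 6 can be illustrated with a minimal sketch. This is not the TRAM algorithm, for which no open-source description exists; it assumes, purely for illustration, that relative risk is the product of a likelihood rating and a consequence rating. The class name, rating scales, and example scenarios are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Scenario:
    asset: str
    threat: str
    likelihood: float   # hypothetical rating on a 0-1 scale
    consequence: float  # hypothetical rating on a 0-100 scale


def relative_risk(s: Scenario) -> float:
    # Assumption: risk is likelihood times consequence.
    # The source only says the ratings are compared, not how they combine.
    return s.likelihood * s.consequence


def rank_scenarios(scenarios):
    """Return scenarios ordered from highest to lowest relative risk."""
    return sorted(scenarios, key=relative_risk, reverse=True)


# Hypothetical asset/threat pairs with made-up ratings.
scenarios = [
    Scenario("Bridge", "large conventional explosive", 0.25, 80.0),
    Scenario("Rail terminal", "chemical agent", 0.5, 60.0),
    Scenario("Control center", "small conventional explosive", 0.5, 20.0),
]

ranked = rank_scenarios(scenarios)
for s in ranked:
    print(f"{s.asset:15s} {s.threat:30s} risk={relative_risk(s):.1f}")
```

Plotting each scenario at its (likelihood, consequence) coordinates would give a two-dimensional diagram of the kind described, with the highest-risk scenarios toward the upper right.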

Biological Threat Risk Assessment

The Biological Threat Risk Assessment (BTRA) tool is a computer-based probabilistic risk analysis (PRA), using a 17-stage event tree, to assess the risk associated with the intentional release of each of 29 biological agents. An NRC committee reviewed the method used to produce the 2006 biological threat risk assessment and found that the basic approach was problematic (NRC, 2008), as explained in Chapter 4. While some changes have been made and more are slated for the future, the same general approach is apparently still in use for assessments of biological threats, chemical threats, and DHS’s integrated chemical, biological, radiological, and nuclear (iCBRN) risks and, in particular, was used to produce biological risk assessments released in January 2008 and January 2010. The best description of the BTRA method is found in Chapter 3 of the NRC review.

It describes the method as follows (NRC, 2008, p. 22):

The process that produced the estimates in the BTRA of 2006 consists of two loosely coupled analyses: (1) a PRA event-tree evaluation and (2) a consequence analysis.

A PRA event tree represents a sequence of random variables, called events, or nodes. Each random-event branching node is followed by the possible random-variable realizations, called outcomes, or arcs, with each arc leading from the branching, predecessor node, to the next, successor-event node (and it can be said without ambiguity that the predecessor event selects this outcome, or, equivalently, selects the successor event). With the exception of the first event, or root node, each event is connected by exactly one outcome of a preceding event …. The path from the root to a particular leaf is called a scenario ….

The 17 stages modeled in BTRA are as follows:

-

Frequency of initiation by terrorist group

-

Target selection

-

Bioagent selection

-

Mode of dissemination (also determines wet or dry dispersal form)

-

Mode of agent acquisition

-

Interdiction during acquisition

-

Location of production and processing

-

Mode of agent production

-

Preprocessing and concentration

-

Drying and processing

-

Additives

-

Interdiction during production and processing

-

Mode of transport and storage

-

Interdiction during transport and storage

-

Interdiction during attack

-

Potential for multiple attacks

-

Event detection
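The event-tree structure quoted above, in which each path from the root to a leaf is a scenario, can be sketched in a few lines. This is a generic illustration, not the BTRA software: it uses only three of the 17 stages, and the branch labels and probabilities are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Node:
    name: str
    # Each outcome is (label, probability, successor Node or None for a leaf).
    outcomes: list = field(default_factory=list)


def enumerate_scenarios(node, path=(), prob=1.0):
    """Yield (path, probability) for every root-to-leaf path."""
    if node is None or not node.outcomes:
        yield path, prob
        return
    for label, p, child in node.outcomes:
        yield from enumerate_scenarios(child, path + ((node.name, label),), prob * p)


# Factory functions build a fresh subtree for each branch so that every
# node has exactly one predecessor, as in a true tree.
def interdiction():
    return Node("Interdiction during attack",
                [("interdicted", 0.25, None), ("not interdicted", 0.75, None)])


def agent_selection():
    return Node("Bioagent selection",
                [("agent A", 0.5, interdiction()), ("agent B", 0.5, interdiction())])


# Hypothetical three-stage tree with made-up probabilities.
root = Node("Target selection",
            [("target 1", 0.5, agent_selection()), ("target 2", 0.5, agent_selection())])

tree_scenarios = list(enumerate_scenarios(root))
for path, prob in tree_scenarios:
    print(" -> ".join(label for _, label in path), f"p={prob}")
```

Because the outcome probabilities at each node sum to 1, the scenario probabilities also sum to 1.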

The evaluation of consequences is performed separately, not as part of the event tree (NRC, 2008, p. 27):

Consequence models characterize the probability distribution of consequences for each scenario. The BTRA employs a mass-release model that assesses the production of each bioagent, beginning with time to grow and produce, preprocess and concentrate, dry, store and transport, and dispense. The net result is a biological agent dose that is input to a consequence model to assess casualties. One equation from the model is produced here to give a flavor of the computations.

where MR is bioagent mass release, MT is target mass, and QFi are factors to explain production, processing, storage, and so on and are random variables conditioned on the scenario whose consequences are being evaluated.

The complete model computes, for an attack with a given agent on a given target, how much agent has been used, how efficiently it has been dispersed (and, for an infectious agent, how far it spreads in the target population), and the potential effects of mitigation efforts. For the BTRA of 2006, all of these factors were assigned values by eliciting opinions of subject-matter experts in the form of subjective discrete probability distributions of likely outcomes, and by some application of information on the spread of infectious agent, atmospheric dispersion, and so on.

The BTRA consequence analysis is qualitatively different from its event-tree analysis. Subject-matter expert opinions are developed much like case studies, and there is less clear dependence on specific events leading to each consequence. Thus, each consequence distribution should be viewed as being dependent on every event leading to its outcome …. A Monte Carlo simulation of 1,000 samples was used to estimate each consequence distribution in the BTRA of 2006.
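The Monte Carlo estimation of a consequence distribution can be sketched as follows. The BTRA equation itself is not reproduced in this text, so the sketch assumes, as an illustration only, that the mass released is the target mass multiplied by a series of factors, each drawn from a discrete distribution of the kind elicited from subject-matter experts. The factor names, values, and weights are hypothetical.

```python
import random


def sample_mass_release(target_mass, factor_distributions, rng):
    """Draw one sample of mass released under the multiplicative assumption."""
    released = target_mass
    for values, weights in factor_distributions:
        # Each factor is drawn from a discrete subjective distribution.
        released *= rng.choices(values, weights=weights, k=1)[0]
    return released


def consequence_distribution(target_mass, factor_distributions, n_samples=1000, seed=0):
    """Return n_samples sorted draws of mass released."""
    rng = random.Random(seed)
    return sorted(
        sample_mass_release(target_mass, factor_distributions, rng)
        for _ in range(n_samples)
    )


# Hypothetical factors (fraction of mass surviving each stage) with made-up weights.
hypothetical_factors = [
    ([0.2, 0.5, 0.9], [0.3, 0.5, 0.2]),  # production
    ([0.4, 0.7, 1.0], [0.2, 0.6, 0.2]),  # processing
    ([0.6, 0.8, 1.0], [0.1, 0.5, 0.4]),  # storage and transport
]

samples = consequence_distribution(100.0, hypothetical_factors, n_samples=1000, seed=1)
mean = sum(samples) / len(samples)
p95 = samples[int(0.95 * len(samples))]
print(f"mean release: {mean:.1f}, 95th percentile: {p95:.1f}")
```

In a fuller model, each sampled release would feed a dose and casualty calculation; here the sorted samples simply stand in for the empirical consequence distribution of one scenario.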

Integrated Risk Management Framework

Recognizing the need for coordinated national-level risk management, on April 1, 2007, DHS created the Office of Risk Management and Analysis (RMA) within the National Protection and Programs Directorate. Serving as DHS’s executive agent in charge of national-level risk analysis standards and metrics, RMA has the broad responsibility to synchronize, integrate, and coordinate risk management and risk analysis approaches throughout DHS (http://www.dhs.gov/xabout/structure/gc_1185203978952.shtm). RMA is leading DHS’s effort to establish a common language and an integrated framework as a general structure for risk analysis and coordination across the complex DHS enterprise.

RMA’s development of the Integrated Risk Management Framework (IRMF) and supporting elements generally follows implementation of Enterprise Risk Management (ERM) in the private sector, most closely aligning with ERM practices in nonfinancial services companies. A brief overview of ERM is provided next to better explain the parallels between ERM as implemented in the private sector and IRMF as developed and implemented by RMA.

Enterprise Risk Management was sparked by concerns in the late 1990s about the “Y2K problem,” the risk that legacy software would fail when presented with dates beginning with “20” rather than “19.” In order for a firm to characterize its risk exposure to this problem, it was necessary to develop processes that enabled top management to identify not only information technology risks within discrete business units, but also those risks that arise or increase due to interactions, synergies, or competition among business units. Building on a base of data analysis and risk modeling, ERM also relies on strong management processes, common terminology and understanding, and high-level governance. ERM is risk management performed and managed across an entire institution (across silos) in a consistent manner wherever possible. This requires some entity with a top-level view of the organization to establish processes for governing risk management across the enterprise, coordinating risk management processes across the enterprise, and working to establish a risk-aware culture. ERM systems do not “own” unit-specific risk management, but they impose some consistency so that those risk management practices are synergistic and any data collected are commensurate. The latter allows for more rational management and resourcing across units. ERM systems also provide steps to aggregate risk analyses and risk management processes up to the top levels of the organization so as to obtain an integrated view of all risks. When viewed through the lens of aggregation, some risks that are of low probability for any given unit are seen to have a medium or high probability of occurring somewhere in the enterprise, and some risks that are of low consequence to any given unit can have a high consequence if they affect multiple units simultaneously.
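The aggregation effect on probability can be made concrete with a short calculation. The sketch assumes that the risk events in different units are independent and equally likely, which is a simplification introduced here; the unit count and per-unit probability are hypothetical.

```python
def prob_somewhere(p_unit: float, n_units: int) -> float:
    """Probability that an event occurs in at least one of n_units units.

    Assumes independent events with the same per-unit probability.
    """
    return 1.0 - (1.0 - p_unit) ** n_units


# A risk with a 5% chance in any single unit, across 20 hypothetical units.
p_enterprise = prob_somewhere(0.05, 20)
print(f"per unit: 0.05, somewhere in the enterprise: {p_enterprise:.2f}")
```

Under these assumptions, a risk that looks unlikely from inside any one unit has roughly a 64 percent chance of occurring somewhere in the enterprise. Positive correlation between units would lower that figure but raise the chance of several units being hit at once.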

More generally, ERM provides an understanding of potential barriers that must be recognized and managed to achieve program and strategic objectives. It also informs decision makers of corporate challenges and mitigation strategies, and it provides a basis for risk-based executive-level decisions. A comprehensive ERM framework strengthens leaders’ ability to anticipate internal and external risks. It allows risk to be addressed early enough to preserve a full range of mitigation options, to plan responses, and generally to reduce surprises and their associated costs.

By and large, RMA appears to be trying to establish the elements commonly accepted as fundamental to ERM: governance, processes, and culture.

-

Governance includes the framework for strategic and analysis-driven decision making, high-level review and reporting, and ongoing strategic assessment of policies, procedures, and processes.

-

Processes include those for identification, assessment, monitoring, and resolution of risks at all levels of the enterprise.

-

Culture includes language, values, and behavior.

An interim draft of the Integrated Risk Management Framework was released in January 2009. The IRMF is intended to provide doctrine and guidelines that enable consistent risk management throughout DHS in order to inform enterprise-level decisions. It is also meant to be of value to risk management at the component level that informs decisions within those components. The objectives of the IRMF are to “[i]mprove the capability for DHS components to utilize risk management to support their missions, while creating mechanisms for aggregating and using component-level risk information across the Department, [to support the] strategic-level decision-making ability of DHS by enabling development of strategic-level analysis and management of homeland security risks, [and to] institutionalize a risk management culture within DHS.”3 “The IRMF outlines a vision, objectives, principles and a process for integrated risk management within DHS, and identifies how the Department will achieve integrated risk management by developing and maturing governance, processes, training, and accountability methods” (DHS-RSC, 2009, p. 1-2). In addition, the IRMF is meant to help institutionalize a risk management culture within DHS (DHS-RSC, 2009, p. 12). The IRMF is gradually being supplemented with analytical guidelines that serve as primers on specific practices of risk management within DHS. Two recent draft guidelines that are adjuncts to the IRMF have addressed risk communication to decision makers and development of scenarios.

Other RMA activities to support IRMF (and, more generally, achieve the vision of ERM) include cataloging of risk models and processes in use across DHS, formation and coordination of a Risk Steering Committee (RSC), development of a risk lexicon, and work on the RAPID process (Risk Analysis Process for Informed Decision-Making) to link risk analysis to internal budgeting.

RMA has catalogued dozens of risk models and processes across DHS (DHS-RMA, 2009). A side benefit of this effort was that it presumably helped to establish an informal network of relationships and technical capabilities among at least some of the component units. Through that network, it is hoped that training, education, outreach, and success stories can migrate from the more risk-mature component units to those with less mature risk management practices.

Additionally, RMA is working to foster a coordinated, collaborative approach to risk-informed decision making by facilitating engagement and information sharing of risk expertise across components of DHS. It does this through meetings of the RSC, which is intended to promote consistent and comparable implementations of risk management across the department. The Under Secretary for National Protection and Programs chairs the RSC, whose members consist of component heads and various key personnel responsible for department-wide risk management efforts.

The DHS Risk Lexicon was released in September 2008 (DHS-RSC, 2008). It was developed by a working group of the RSC, which collected, catalogued, analyzed, vetted, and disseminated risk-related words and terms used throughout DHS.

The RAPID process is being developed to meet the strategic risk information requirements of DHS’s Planning, Programming, Budgeting, and Execution (PPBE) system. It is meant to assess how DHS programs can work together to reduce or manage anticipated risks in attaining Departmental goals and objectives, ensure that decisions about future resource allocations are informed by programs’ potential for risk reduction, and support key DHS decision makers with a standardized assessment process to answer the basic risk management questions “How effectively are DHS programs helping to reduce risk?” and “What should we be doing next?”4 RAPID, which is still at the prototype stage, consists of the following seven steps:

-

Select a representative sample of scenarios.

-

Build “attack paths” for each of the terrorist scenarios, turning the scenarios into a sequence of major activities.

-

For each activity in the attack path, use expert elicitation to estimate (a) the probability that the terrorist chooses or accomplishes the activity, (b) the effectiveness of DHS programs in stopping the activity, and (c) the overall likelihood of the scenario.

-

Estimate the risk of a successful attack in terms of the consequences (lives lost, direct and indirect economic effects).

-

For each DHS program, calculate the risk reduction based on the threat probabilities and that program staff’s judgment of the program effectiveness.

-

Estimate the effectiveness of national (non-DHS) capabilities.

-

Assess risk reduction alternatives.
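The attack-path logic in steps 2 through 5 can be sketched as follows. RAPID was presented to the committee only through charts, so this is not its actual computation. The sketch assumes that activities succeed independently, that each program stops an activity independently with its elicited effectiveness, and that risk is scenario likelihood times consequence. All names and numbers are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Activity:
    name: str
    p_attempt: float  # elicited probability the activity is chosen and accomplished
    stop_prob: dict = field(default_factory=dict)  # program name -> probability it stops the activity


def scenario_likelihood(path, active_programs):
    """Probability the whole attack path succeeds, given the active programs."""
    likelihood = 1.0
    for act in path:
        p_pass = 1.0
        for program, effectiveness in act.stop_prob.items():
            if program in active_programs:
                p_pass *= 1.0 - effectiveness
        likelihood *= act.p_attempt * p_pass
    return likelihood


def risk(path, consequence, active_programs):
    # Assumption: risk is scenario likelihood times consequence.
    return scenario_likelihood(path, active_programs) * consequence


def risk_reduction(path, consequence, programs, program):
    """Risk avoided by one program, holding the other programs in place."""
    return risk(path, consequence, programs - {program}) - risk(path, consequence, programs)


# Hypothetical attack path, programs, and consequence measure.
attack_path = [
    Activity("acquire materials", 0.5, {"Program A": 0.5}),
    Activity("cross border", 0.5, {"Program B": 0.5}),
    Activity("execute attack", 0.5),
]
programs = {"Program A", "Program B"}
consequence = 1000.0

print("risk with all programs:", risk(attack_path, consequence, programs))
for p in sorted(programs):
    print(p, "risk reduction:", risk_reduction(attack_path, consequence, programs, p))
```

Measuring each program's reduction against a baseline with all other programs active is one design choice among several; reductions computed this way are not additive across programs.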

CONCLUDING OBSERVATION

During the course of this study, DHS was very helpful in setting up briefings and site visits. However, the committee’s review of DHS risk analysis was hampered by the absence of documentation of methods and processes. This gap will necessarily hinder internal communication within DHS and any attempt at internal or external review. The risk analysis processes for infrastructure protection, the grants program, and the IRMF were documented mostly through presentations. With the exception of NISAC work, the committee was not told about or shown any document explaining the mathematics of the risk modeling or any expository write-up that could help a newcomer understand exactly how the risk analyses are conducted. For example, there are apparently very detailed checklists to guide CIKR vulnerability assessments, which the committee did not need to examine, but the committee was not given any clear documentation of how the resulting inputs were used in risk analysis. The committee was told in general terms how the grants program calculates risk, but the people with whom the committee interacted did not know the exact formula and could not point to a document. The committee did get to see emerging documentation about some aspects of IRMF, but important components such as the RAPID process for linking risk to budgets were presented only through charts.

The risk assessments done by FEMA to underpin the National Flood Insurance Program are better documented, in part because of their long history and perhaps because they are linked to an academic community. The NRC committee that reviewed the BTRA methodology had difficulty understanding the mathematical model and its instantiation in software, and noted in its report that the classified description produced by DHS lacked essential details. (The current study did not re-examine those materials to determine whether documentation had improved.) The TRAM model is fairly well described in an “official-use-only” document, the Methodology Description dated May 13, 2009, but there is no open-source description.

Because of this lack of documentation, the committee has had to infer details about DHS risk modeling in developing this chapter.