Developing Robust Cloud Applications

YUANYUAN (YY) ZHOU

University of California, San Diego

Despite possible security and privacy risks, cloud computing has become an industry trend, a way of providing dynamically scalable, readily available resources, such as computation, storage, and so forth, as a service to users for deploying their applications and storing their data. No matter what form cloud computing takes—public or private cloud, raw cloud infrastructure, or applications (software) as a service—it provides the benefits of utility-based computing (i.e., computing on a pay-only-for-what-you-use basis).

Cloud computing can provide these services at reduced cost because cloud service is paid for incrementally and scales with demand. It can also support larger-scale computation, in terms of both processing power and data storage, without the configuration and setup hassles of installing and deploying large local clusters. Cloud computing also offers greater mobility, because it provides access wherever the Internet is available. These benefits allow IT users to focus on domain-specific problems and innovations.

More and more applications are being ported or developed to run on clouds. For example, Google News, Google Mail, and Google Docs all run on clouds. Of course, these platforms are also owned and controlled by the application service provider, namely Google, which makes some of the challenges discussed below easier to address.

Many applications, especially those that require low costs and are less sensitive to security issues, have moved to public clouds such as Amazon Elastic Compute Cloud (EC2), which runs applications packaged as Amazon Machine Images (AMIs). Since February 2009, for example, IBM and Amazon Web Services have allowed developers to use Amazon EC2 to build and run a variety of IBM platform technologies. Because developers can use their existing IBM licenses on Amazon EC2, they have been able to build applications based on IBM software within EC2. This new “pay-as-you-go” model provides development and production instances of IBM DB2, Informix Dynamic Server, WebSphere Portal, Lotus Web Content Management, and Novell’s SUSE Linux operating system on EC2.

Along with this new computational paradigm, its cost savings, and its other benefits, cloud computing brings unique challenges to building robust, reliable applications. The first major challenge is adjusting one's mindset to the unique characteristics of deploying and running an application in a cloud (e.g., elasticity of scale, physical devices hidden from the application, unreliable components). The second is developing frameworks and tool sets to support the development, testing, and diagnosis of cloud applications.

In the following sections, I describe how the traditional application development and execution environment has changed, the unique challenges and characteristics of clouds, the implications of cloud computing for application development, and suggestions for easing the move to the new paradigm and developing robust applications for clouds.

DIFFERENCES BETWEEN CLOUDS AND TRADITIONAL PLATFORMS

Although traditional in-house/local-execution platforms and clouds have much in common, some characteristics and challenges are either unique to clouds or more pronounced there. These differences, some of them short-term ones that should disappear as cloud computing matures, are discussed below.

Statelessness and Server Failures

Because one of the major benefits of cloud computing is lower cost, cloud service providers are likely to use cost-effective hardware and software that are less robust and less reliable than what organizations would purchase for in-house/local platforms. Thus, the underlying infrastructure may not be configured to support applications that require very reliable and robust platforms.
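
Because the infrastructure itself may be less reliable, an application running on it generally has to tolerate transient failures on its own. One widely used technique (a general practice, not something this article prescribes) is to retry failed calls with capped exponential backoff and random jitter. The following is a minimal Python sketch; the `TransientError` exception and the `call_with_retries` helper are hypothetical names standing in for whatever error type and client library a given cloud platform provides.

```python
import random
import time
from typing import Callable, TypeVar

T = TypeVar("T")


class TransientError(Exception):
    """Hypothetical stand-in for a recoverable failure (timeout, throttling, etc.)."""


def call_with_retries(
    operation: Callable[[], T],
    max_attempts: int = 5,
    base_delay: float = 0.1,
    max_delay: float = 5.0,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Call `operation`, retrying on TransientError with capped exponential backoff.

    Before retry k (k = 1, 2, ...), wait a random time drawn uniformly from
    [0, min(max_delay, base_delay * 2**(k - 1))] seconds ("full jitter"),
    so that many clients recovering from the same outage do not retry in lockstep.
    """
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    for attempt in range(1, max_attempts + 1):
        try:
            return operation()
        except TransientError:
            if attempt == max_attempts:
                raise  # out of attempts: surface the failure to the caller
            cap = min(max_delay, base_delay * 2 ** (attempt - 1))
            sleep(random.uniform(0, cap))
    raise AssertionError("unreachable")


# Example: an operation that fails twice, then succeeds.
attempts = {"count": 0}


def flaky_read() -> str:
    attempts["count"] += 1
    if attempts["count"] < 3:
        raise TransientError("simulated outage")
    return "data"


print(call_with_retries(flaky_read))  # sleeps briefly, then prints "data"
```

Jitter matters because, after an outage, many clients retrying on the same fixed schedule can overload a service again just as it recovers. Which errors are actually safe to retry depends on the platform and on whether the operation is idempotent.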

In the past two to three years, there have been many service outages in clouds. Some of the most widely known outages have caused major damage, or at least significant inconvenience, to end users. For example, when Google’s Gmail faltered on September 24, 2009, even though the system was down for only a few hours, it was the second outage that month and followed a disturbing sequence of outages for Google’s cloud-based offerings for search, news, and other applications in the past 18 months. Explanations ranged from routing errors to problems with server maintenance. Another example is the outage on Twitter in early August 2009 that lasted throughout the morning and into early afternoon and probably angered serious “twitterers.”

eBay’s PayPal online payment system also failed several times in August 2009; the outages lasted from one to more than four hours, leaving millions of customers unable to complete transactions. A network hardware problem was reported to be the culprit. PayPal lost millions of dollars, and merchants lost unknown amounts. Thomas Wailgum of CIO.com reported in January 2009 that Salesforce.com had suffered a service disruption of about an hour on January 6, when a core network device failed because of memory allocation errors.

Providers of general-purpose cloud services have also experienced outages. For example, Rackspace was forced to pay out $2.5 to $3.5 million in service credits to customers in the wake of a power outage that hit its Dallas data center in late June 2009. Amazon’s S3 storage service was knocked out in February 2008 by an unusually high number of authentication requests and suffered another outage in July 2008.

Lack of Transparency and Control (Virtual vs. Physical)

Because clouds are based on virtualization, applications must be virtualized before they can be moved to a cloud environment. Thus, unlike local platforms, cloud computing imposes a layer of abstraction between applications and physical machines and devices. As a result, many assumptions about, and dependencies on, the underlying physical systems have to be removed, leaving applications with little control over, or even knowledge of, the underlying physical platform or the other applications sharing it.

Network Conflicts with Other Applications

For in-house data grids, it is a good idea to use a separate set of network cards and put them on a dedicated VLAN, or even their own switch, to avoid broadcast traffic between nodes. However, developers of cloud applications may not have this option. To maximize usage of the system, cloud service providers may put many virtual machines on the same physical machine and may design a system architecture that funnels significant amounts of traffic through a single file server, database machine, or load balancer. For example, Amazon does not yet offer an equivalent of network-attached shared storage. In other words, cloud application developers should no longer assume they will have dedicated network channels or storage devices.

Less Individualized Support for Reliability and Robustness

In addition to lacking dedicated network and I/O devices, developers are also unlikely to find cloud platforms that provide individualized guarantees for reliability and robustness. Although some advanced, mature clouds may offer several levels of reliability support in the future, this support will not be fine-grained enough to match the needs of individual applications.

Elasticity and Distributed Bugs

The main driver for the development of cloud computing is the ability of a system to grow as needed while customers pay only for what they use (i.e., elasticity). Therefore, applications that can react dynamically to changes in workload are good candidates for clouds. For such applications, the cost of running on a cloud can be much lower than the cost of buying hardware that may sit idle except during periods of peak demand.
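
The cost argument can be made concrete with a small worked example. The sketch below compares paying for enough servers to cover the peak hour around the clock against paying each hour only for the servers that hour needs. The load profile, per-server capacity, and hourly price are invented for illustration, not measured data, and the model ignores start-up delays, billing granularity, and discounts for reserved capacity.

```python
import math
from typing import Sequence


def servers_needed(load: float, capacity_per_server: float) -> int:
    """Smallest number of servers whose combined capacity covers `load`."""
    return math.ceil(load / capacity_per_server)


def peak_provisioned_server_hours(hourly_load: Sequence[float], capacity_per_server: float) -> int:
    """Server-hours paid when enough servers for the peak hour run every hour."""
    peak_servers = servers_needed(max(hourly_load), capacity_per_server)
    return peak_servers * len(hourly_load)


def elastic_server_hours(hourly_load: Sequence[float], capacity_per_server: float) -> int:
    """Server-hours paid when each hour is billed only for the servers it needs."""
    return sum(servers_needed(load, capacity_per_server) for load in hourly_load)


# Hypothetical day: 20 quiet hours at 100 req/s and 4 peak hours at 1,000 req/s,
# with each server assumed to handle 100 req/s and to cost $0.10 per hour.
day = [100.0] * 20 + [1000.0] * 4
capacity = 100.0
price_per_server_hour = 0.10

static_hours = peak_provisioned_server_hours(day, capacity)
elastic_hours = elastic_server_hours(day, capacity)
print(f"peak-provisioned: {static_hours} server-hours, ${static_hours * price_per_server_hour:.2f}")
print(f"elastic:          {elastic_hours} server-hours, ${elastic_hours * price_per_server_hour:.2f}")
```

With these assumed numbers, peak provisioning pays for 10 servers for 24 hours (240 server-hours), whereas the elastic schedule pays for 20 × 1 + 4 × 10 = 60 server-hours, one quarter as much. The gap shrinks as the load becomes flatter.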

If a good percentage of your workloads have already been virtualized, they are good candidates for clouds. However, if you simply port static images of existing applications to a cloud, you are not taking advantage of cloud computing: your application will be over-provisioned for peak load, and your environment will be poorly utilized. Moving existing enterprise applications to the cloud can be very difficult simply because most of them were not designed to take advantage of the cloud’s elasticity. Moreover, distributed applications are prone to bugs, such as deadlocks and incorrect message ordering, that are difficult to detect, test, and debug.
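
Incorrect message ordering, one of the bug classes mentioned above, is often guarded against by numbering each sender's messages and holding back any message that arrives before its predecessors. The Python sketch below implements this per-sender FIFO delivery. It assumes a hypothetical convention in which every sender numbers its messages 0, 1, 2, and so on; it does not provide causal or total ordering across different senders, which require additional mechanisms such as vector clocks or a sequencer.

```python
from collections import defaultdict
from typing import Dict, List, Tuple


class FifoReceiver:
    """Delivers each sender's messages in sequence-number order.

    Messages that arrive early are held back until the gap before them is
    filled; each early arrival is recorded so tests can check how often
    reordering actually happened.
    """

    def __init__(self) -> None:
        self.next_seq: Dict[str, int] = defaultdict(int)            # next expected number per sender
        self.pending: Dict[str, Dict[int, str]] = defaultdict(dict)  # held-back messages per sender
        self.delivered: List[Tuple[str, str]] = []
        self.reordered: List[Tuple[str, int]] = []

    def receive(self, sender: str, seq: int, payload: str) -> None:
        if seq < self.next_seq[sender] or seq in self.pending[sender]:
            return  # duplicate of a message already seen; drop it
        if seq != self.next_seq[sender]:
            self.reordered.append((sender, seq))  # arrived ahead of a gap
        self.pending[sender][seq] = payload
        # Deliver as many consecutive messages as are now available.
        while self.next_seq[sender] in self.pending[sender]:
            msg = self.pending[sender].pop(self.next_seq[sender])
            self.delivered.append((sender, msg))
            self.next_seq[sender] += 1
```

Recording reordered arrivals, rather than only fixing them, gives a test a way to confirm that a scenario really exercised the out-of-order path.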

Elasticity makes debugging even more challenging. Developers of distributed applications must design them to allocate and reclaim resources dynamically as workloads change, which can easily introduce bugs such as resource leaks or dangling links to reclaimed resources. Addressing this problem will require either software development tools for testing and detecting these types of bugs or new application development models, such as MapReduce, that relieve developers of the need to manage dynamic scaling up and down themselves.
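
One way a test harness might catch the leaks and dangling links described above is to route every allocation and reclamation through a wrapper that records each resource's state. The `TrackedPool` class below is a hypothetical sketch for testing, not the API of any real cloud provider; handles are plain integers, and resource descriptions such as "vm-1" are placeholders.

```python
import itertools
from typing import Dict, Set


class DanglingHandleError(Exception):
    """Raised when code uses a handle whose resource was already reclaimed."""


class TrackedPool:
    """Hypothetical resource pool that records allocation state for testing."""

    def __init__(self) -> None:
        self._ids = itertools.count()
        self._live: Dict[int, str] = {}  # handle -> description of the resource
        self._reclaimed: Set[int] = set()

    def allocate(self, description: str) -> int:
        handle = next(self._ids)
        self._live[handle] = description
        return handle

    def reclaim(self, handle: int) -> None:
        self._check(handle)  # reclaiming twice is also reported as dangling
        del self._live[handle]
        self._reclaimed.add(handle)

    def use(self, handle: int) -> str:
        self._check(handle)
        return self._live[handle]

    def leaks(self) -> Dict[int, str]:
        """Resources still allocated; check after a scale-down to find leaks."""
        return dict(self._live)

    def _check(self, handle: int) -> None:
        if handle in self._reclaimed:
            raise DanglingHandleError(f"handle {handle} was already reclaimed")
        if handle not in self._live:
            raise KeyError(f"unknown handle {handle}")
```

A test could drive the application through a simulated scale-up followed by a scale-down and then assert that `leaks()` is empty; any later `use` of a reclaimed handle then fails loudly instead of silently touching a resource that no longer exists.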

Lack of Development, Execution, Testing, and Diagnostic Support

Finally, one of the most severe, but fortunately short-term, challenges is the lack of development, testing, and diagnostic support. Most of today’s enterprise applications were built with frameworks and technologies that are not ideal for clouds. Thus, an application that works on a local platform may not work well in a cloud environment. In addition, if an application fails or hits a performance bottleneck caused by the physical configuration or layout, which is hidden from it, or by other applications running on the same physical hardware, diagnosing and debugging the problem can be difficult.
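
Diagnosing interference from co-located applications usually starts with noticing that performance has changed. The sketch below is a simple, hypothetical latency monitor: it keeps a sliding window of recent request latencies and flags any request slower than a fixed multiple of the window's median. The window size, the factor of 3, and the minimum sample count are arbitrary assumptions, and a flagged request only signals that something changed; it does not identify the cause.

```python
from collections import deque
from statistics import median
from typing import Deque, List


class LatencyMonitor:
    """Flags requests much slower than the recent median latency."""

    def __init__(self, window: int = 100, factor: float = 3.0, min_samples: int = 10) -> None:
        self.samples: Deque[float] = deque(maxlen=window)  # oldest samples drop off automatically
        self.factor = factor
        self.min_samples = min_samples  # no flags until there is a baseline to compare against
        self.flagged: List[float] = []

    def record(self, latency_ms: float) -> bool:
        """Record one latency; return True if it is flagged as an outlier."""
        is_outlier = (
            len(self.samples) >= self.min_samples
            and latency_ms > self.factor * median(self.samples)
        )
        if is_outlier:
            self.flagged.append(latency_ms)
        self.samples.append(latency_ms)
        return is_outlier
```

The median is used rather than the mean so that a few extreme requests do not drag the baseline upward; a sustained run of flags with no change in the application's own code or load is the kind of signal that would prompt a closer look at the shared platform.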

IMPROVING CLOUDS

Cloud computing is likely to bring transformational change to the IT industry, but this transformation cannot happen overnight—and it certainly cannot happen without a plan. Both application developers and platform providers will have to work hard to develop robust applications for clouds.

Application developers will have to adopt the new paradigm. First, before they can evaluate whether their applications are well suited to clouds, or at least have been revised properly to take advantage of cloud elasticity, they must understand the reasons for, and benefits of, moving to clouds. Second, because each cloud platform may be different, application developers must understand the platform’s elasticity model and dynamic configuration method. They must also keep abreast of the provider’s evolving monitoring services and service level agreements, even to the point of engaging the service provider as an ongoing operations partner to ensure that the demands of the new application can be met.
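
To make the idea of an elasticity model concrete, the sketch below shows one common style of scaling rule, often called target tracking: choose the instance count that would bring average utilization back near a target, clamped between a minimum and a maximum. This is a generic illustration with hypothetical names (`ScalingPolicy`, `desired_instances`), not the rule used by any particular provider, whose policies, metrics, and cool-down behavior must be checked in its own documentation.

```python
import math
from dataclasses import dataclass


@dataclass
class ScalingPolicy:
    """Hypothetical target-tracking policy; real providers define their own."""

    target_utilization: float  # e.g. 0.5 means aim for 50% average utilization
    min_instances: int
    max_instances: int

    def desired_instances(self, current_instances: int, current_utilization: float) -> int:
        """Instance count that would put the current total load near the target."""
        if current_instances <= 0:
            return self.min_instances
        # Total load expressed in "fully busy instance" units.
        load = current_instances * current_utilization
        # Round away tiny floating-point error (e.g. 6.000000000000001) before the ceiling.
        desired = math.ceil(round(load / self.target_utilization, 9))
        return max(self.min_instances, min(self.max_instances, desired))
```

For example, 4 instances at 75% utilization carry 3 instances' worth of work; at a 50% target, the rule asks for 6 instances.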

The most important thing cloud platform providers can do is give application developers testing, deployment, execution, monitoring, and diagnostic support. In particular, it would be useful if application developers had a good local debugging environment, as well as testing platforms that help with programming and debugging programs written for the cloud.

Unfortunately, local debugging environments do not usually simulate real cloud conditions. From my own experience and from conversations with other developers, I have come to realize that most people run into problems when moving code from their local servers to clouds because of behavioral differences such as those described above.
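
One way to bring some cloud-like behavior into a local test environment is fault injection: deliberately making calls fail so that error-handling paths actually run before deployment. The following minimal sketch wraps any zero-argument function so that each call fails with a given probability; `with_fault_injection` and `InjectedFault` are hypothetical names, and a real harness would likely also inject added latency and partial failures.

```python
import random
from typing import Callable, TypeVar

T = TypeVar("T")


class InjectedFault(Exception):
    """Error raised by the fault injector in place of a real cloud failure."""


def with_fault_injection(
    operation: Callable[[], T],
    failure_rate: float,
    rng: random.Random,
) -> Callable[[], T]:
    """Wrap `operation` so that each call fails with probability `failure_rate`."""
    if not 0.0 <= failure_rate <= 1.0:
        raise ValueError("failure_rate must be in [0, 1]")

    def wrapped() -> T:
        if rng.random() < failure_rate:  # rng.random() is in [0, 1)
            raise InjectedFault("injected failure")
        return operation()

    return wrapped


# Example: a local stand-in for a remote read that fails about 30% of the time.
flaky_read = with_fault_injection(lambda: "data", failure_rate=0.3, rng=random.Random(42))
```

Passing a seeded `random.Random` makes a failure sequence reproducible, which helps when a test fails only under a particular pattern of faults.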

CLOUD COMPUTING ADOPTION MODEL

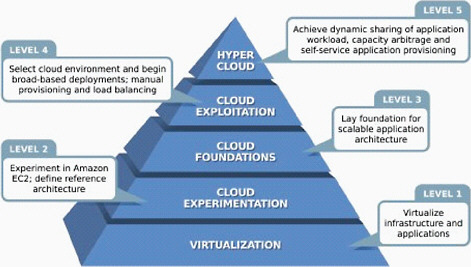

A cloud computing adoption model, proposed by Jake Sorofman in an October 20, 2008, article on the Dr. Dobb’s website (Dr. Dobb’s: The World of Software Development), provides an incremental, pragmatic approach to cloud computing. Loosely based on the Capability Maturity Model (CMM) developed by the Software Engineering Institute (SEI) at Carnegie Mellon University, this Cloud Computing Adoption Model (Figure 1) proposes five steps for adopting the cloud model: (1) Virtualization: leverage hypervisor-based infrastructure and application virtualization technologies for seamless portability of applications and shared server infrastructure; (2) Exploitation: conduct controlled, bounded deployments, using Amazon EC2 as an example of computing capacity and as a reference architecture; (3) Establishment of foundations: determine governance, controls, procedures, policies, and best practices as they begin to form around the development and deployment of cloud applications (in this step, infrastructure for developing, testing, debugging, and diagnosing cloud applications is an essential part of the foundation for making cloud computing mainstream); (4) Advancement: scale up the volume of cloud applications through broad-based deployments in the cloud; and (5) Actualization: balance dynamic workloads across multiple utility clouds.