THE NATIONAL ACADEMIES

Advisers to the Nation on Science, Engineering, and Medicine

Hon. Janet Napolitano

Secretary

Department of Homeland Security

Washington, DC

Dear Secretary Napolitano:

This letter is the abbreviated version of an update of the interim report on testing, evaluation, costs, and benefits of advanced spectroscopic portals (ASPs), issued by the National Academies’ Committee on Advanced Spectroscopic Portals in June 2009 (NRC 2009). This letter incorporates findings the committee has reached since that report was written, and it sharpens and clarifies the messages of the interim report based on the committee’s subsequent investigations of more recent work by the Domestic Nuclear Detection Office (DNDO). The key messages in this letter, which is the committee’s final report, are stated briefly in the synopsis on the next page and described more fully in the sections that follow. The committee first provides the context for this letter and then gives advice on testing, evaluation, assessment of costs and benefits, and deployment of advanced spectroscopic portals. The letter closes with a reiteration of the key points. The letter is abbreviated in that a small amount of information that may not be released publicly for security or law-enforcement reasons has been redacted from the version delivered to you in October 2010; the findings and recommendations remain intact.

CONTEXT

U.S. Customs and Border Protection (CBP) searches for smuggled nuclear and radiological material by scanning1 more than 20 million cargo containers that enter the United States each year. In the initial scanning step used at ports and border crossings today, a truck bearing a cargo container is driven slowly through a polyvinyl toluene (PVT) radiation portal monitor (PVT RPM), which consists of radiation detectors mounted in a tower on each side of the inspection roadway. This step is called primary inspection. For various reasons, some conveyances are selected for additional scrutiny and sent to secondary inspection. In secondary inspection, the truck is driven very slowly through another PVT RPM, and after the truck stops, a CBP officer scans the truck and the container with a handheld radiation detector called a radioisotope identification device (RIID).

DNDO, which holds the government’s primary responsibility for improving CBP’s radiation detection capabilities at the nation’s ports of entry, advocated for development and deployment of better portals to replace the current detectors. The ASPs are intended to address known limitations of the PVT RPMs and the RIIDs, and to reduce the time involved in secondary inspection. DNDO manages the

__________________

1 According to CBP, screening comprises the efforts to identify which containers should be targeted for greater scrutiny, through evaluation of risk factors in the manifest and other information from intelligence and law enforcement. Scanning is physical inspection of the container itself, including use of passive detectors, such as the

SYNOPSIS

This report describes merits, deficiencies, and options for improving testing, evaluation, and analysis of costs and benefits of advanced spectroscopic portals (ASPs). Specifically, the report addresses the Domestic Nuclear Detection Office’s (DNDO’s) 2008 performance tests, its characterization of results of the tests, and the scope and implementation of DNDO’s draft cost-benefit analysis, as well as deployment of ASPs.

Testing

The design and evaluation of DNDO’s 2008 ASP performance tests have shortcomings that impair DHS’ ability to draw reliable conclusions about the ASPs’ likely performance. The physical tests have not been structured as part of a combined effort, using modeling (computer simulations) and physical tests, to build an understanding of the ASPs’ performance against different threats over a wide range of configurations and operating environments, as the committee suggested in its interim report.

Evaluation

In characterizing and evaluating the results of the tests comparing the relative performance of the ASP and the handheld radioisotope identification device (RIID), DNDO’s analysis used a figure of merit that is not technically meaningful and could be misleading. The committee recommends that DNDO use the more particularized results from its report to create a different figure of merit and suggests some options.

Costs and Benefits

The estimated net cost of ASPs exceeds that of the existing polyvinyl toluene radiation portal monitors (PVT RPMs) and RIIDs, so it would make sense to procure ASPs only if the security benefits justify the additional investment. In its draft cost-benefit analysis, DNDO carried out both a breakeven analysis and a capabilities-based plan to account for security benefits from ASPs, but the DNDO draft analyses the committee examined still need substantial improvement to support decision making. Three major problems remain: (1) the strategic justification for the chosen alternative or preferred option was not provided; (2) the set of alternatives analyzed is too narrow; and (3) DNDO used quantitative modeling techniques and therefore quantified factors that could not be justifiably quantified, when the analysis could have been carried out effectively with qualitative reasoning.

With respect to the narrow set of alternatives, DNDO followed a suggestion in the committee’s interim report and examined the effect of using improved software and algorithms with the current handheld RIIDs used in secondary inspection. The results show dramatic improvements: the RIIDs with a state-of-the-art algorithm could outperform the tested ASP systems (2008 hardware and software configurations) in some cases, although they performed worse in other cases. There are drawbacks to using handheld detectors for external screening of cargo containers, but this low-cost option, which substantially increases scanning effectiveness, should be an alternative in the cost-benefit analysis, and it might ultimately prove to be the preferred option.

Deployment

The committee previously recommended an incremental approach to deployment, exploiting the modularity required in the ASP product specifications to match the best hardware with the best data-analysis algorithms and to upgrade as experience is gained with the system. It appears that DNDO has not obtained from the vendors the modularity mandated in the specification. This deficiency should be corrected, and DNDO should encourage a broader effort to improve data-analysis algorithms, engaging experts beyond the very small community of researchers involved to date.

development and acquisition program for ASPs, which, like the PVT RPMs, are portal-mounted detectors but, like the RIIDs, have isotope-identification capabilities. Congress required that the Secretary of Homeland Security certify that the new detectors provide a “significant increase in operational effectiveness” before the Department of Homeland Security (DHS) proceeds with full-scale procurement of the ASPs.

Also at the direction of Congress, in April 2008 your predecessor requested advice from the National Research Council to help bring scientific rigor to the procurement process for ASPs. Specifically, your predecessor requested findings and recommendations on testing, evaluation, and analysis of costs and benefits of the new devices. (See Attachment 1 for the full statement of task.) The ASP testing and evaluation program encountered delays in 2008 and early 2009, which gave the committee the opportunity to offer DHS an interim report recommending a better approach to testing, evaluation, cost-benefit assessment, and deployment of ASPs (NRC 2009; the executive summary can be found in Attachment 2).

The Committee on Advanced Spectroscopic Portals (see Attachment 3), which is conducting the study and wrote the interim report, has reviewed the progress that DNDO has made since the report was issued to DHS and to Congress at the beginning of June 2009. In February 2010, you decided to pursue certification for ASPs in secondary inspection only, because you determined that ASPs as tested do not meet DHS’s criteria for a significant increase in operational effectiveness for primary inspection. Therefore, this report focuses on the analysis of test results as they bear on the ASP’s intended role in secondary inspection. This report is based on the most recent information provided to the committee as of September 2010.

THE DECISION TO FOCUS ON SECONDARY INSPECTION

The committee agrees that the performance of ASPs to date does not support deployment in primary inspection. Test results indicate that the ASPs do not meet DHS’s threshold criteria for further consideration in primary inspection. The ASPs performed better than the PVT RPM and RIID system at detecting “moderately shielded” highly enriched uranium (HEU), and worse than the existing system at producing the correct outcome for masked special nuclear material (SNM).2,3,4 Quantifying the difference in performance between these systems is difficult because of problems with DNDO’s analyses to date, as described below. Those problems are important, but they do not call into question your conclusion about ASPs for primary inspection unless the criteria for acceptance of ASPs change (e.g., by emphasizing shielded HEU over other threats). Even if either the ASP performance or the criteria were to change, one would still confront a question regarding costs and benefits: the ten-year lifecycle cost of the existing unit (a PVT RPM) is approximately $600k, compared with approximately $1,200k for an ASP.5

__________________

2 Masking occurs when radiation from benign radioactive material makes it difficult for a detector system to detect and identify a threat object.

3 ASPs and PVT RPMs perform somewhat different functions in primary inspection. PVT RPMs detect radiation but have only crude capabilities for discriminating among potential sources of that radiation, so conveyances that trigger a radiation alarm in primary inspection are referred to secondary inspection. ASPs have finer discrimination capabilities (energy resolution), so a conveyance that emits radiation may nonetheless be determined to carry a benign radiation source and be released without secondary inspection. Because the detectors’ functions differ, DNDO compared them primarily on the basis of operational outcome, i.e., whether each detector produced the correct operational outcome for its role.

4 Special nuclear material is defined in Title I of the Atomic Energy Act of 1954 to mean “plutonium, uranium enriched in the isotope 233 or in the isotope 235… .” It is the material of greatest use in nuclear explosives.

5 The costs listed here include procurement, deployment, and operation and maintenance, per DNDO’s 2009 analysis (DNDO 2010a). No sunk costs are included because the cost-benefit decision hinges on the future costs. Neither figure includes the cost of a RIID because DNDO’s draft cost-benefit analysis assumes that CBP will need the same number of RIIDs even if ASPs are deployed in secondary inspection.

To support a certification decision for deployment of ASPs in secondary inspection, results of the performance tests, field validation tests, and operational tests taken together would need to indicate a significant improvement over the current PVT RPM and RIID system. The acquisition decision, DNDO informed the committee, is a separate policy decision based on the cost-benefit analysis. Such policy decisions are outside of this study’s scope, so the committee has focused its efforts on evaluating whether the performance of the ASPs has been tested, analyzed, and characterized with scientific rigor, and whether the methods used in the cost-benefit analysis are sound, defensible, and appropriate.

TESTING

To establish how effective ASPs would be at detecting threat objects (i.e., those containing material that could be used to make a nuclear or radiological weapon) and at differentiating them from benign radiation sources in general commerce, DNDO physically loaded truck-borne containers with such objects in a number of cargo configurations, scanned the containers multiple times with the ASP and other detectors being tested, and recorded the detectors’ performance. The containers were then scanned using the handheld radioisotope identification device (RIID).6 For example, for tests of shielding and masking, the runs were repeated with the radiation source in different locations in the shipping container and with increasing increments of shielding material or of masking material (naturally occurring radioactive material, also called NORM) added until the source could not be detected.

As the committee noted in its interim report, DNDO’s 2008 tests were an improvement in scientific rigor over its earlier performance tests. The detectors’ performance was charted across the limits of their abilities to detect and identify radiation sources, which was not the case in earlier tests.

The Recommended Approach: Model-Test-Model

The performance tests are valuable, but by themselves they represent only a small set of possible configurations of threats and cargo in commerce. The set of possible combinations of threats, cargo, and environments is so large and multidimensional that DNDO needs an analytical basis for understanding the performance of its detector systems, not just an empirical basis.7 In other words, DNDO should be able to model and predict accurately the systems’ performance against different configurations and in different environments.

In its interim report, the study committee recommended that DHS use a standard scientific approach in which scientists use computer models to simulate radiation from radioactive material, configurations of cargo, and detector performance; use physical tests to validate and refine the models; and use the models to select key new physical tests that advance understanding of the detector systems, repeating the cycle iteratively. This iterative modeling and testing approach is common scientific practice in the development of high-technology equipment and is essential for building scientific confidence in detector performance over a wide range of circumstances, not all of which can be tested physically.
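To make the iterative cycle concrete, the following Python sketch is a deliberately simplified stand-in, not DNDO’s or the committee’s method. It assumes, hypothetically, that the probability of identification falls off with shield thickness along a two-parameter logistic curve; a real program would use radiation-transport simulations of sources, cargo, and detectors instead. The names `p_identify`, `fit_model`, and `run_physical_test`, the “true” curve parameters, and the starting test plan are all illustrative assumptions. Each pass calibrates the model against every test so far, then places the next physical test where the model is least certain (probability 0.5).

```python
import math

# HYPOTHETICAL stand-in for a physics model: probability of identification
# versus shield thickness x (cm), a logistic curve with midpoint x50 and width w.
def p_identify(x, x50, w):
    return 1.0 / (1.0 + math.exp((x - x50) / w))

def log_likelihood(tests, x50, w):
    """Binomial log-likelihood of tests given as (thickness, successes, runs)."""
    ll = 0.0
    for x, k, n in tests:
        p = min(max(p_identify(x, x50, w), 1e-12), 1 - 1e-12)  # avoid log(0)
        ll += k * math.log(p) + (n - k) * math.log(1 - p)
    return ll

def fit_model(tests):
    """Calibrate (x50, w) to the physical tests by a coarse grid search."""
    grid = [(a / 5, b / 10) for a in range(0, 151) for b in range(5, 101)]
    return max(grid, key=lambda params: log_likelihood(tests, *params))

def run_physical_test(x, runs=9):
    """HYPOTHETICAL test range: deterministic outcomes from an assumed 'true'
    detector (x50 = 18 cm, w = 3 cm) so that the sketch is repeatable."""
    return (x, round(runs * p_identify(x, 18.0, 3.0)), runs)

# Model-test-model loop: start from a coarse test plan, then iterate.
tests = [run_physical_test(x, runs=6) for x in (0, 8, 16, 24)]
for _ in range(3):
    x50, w = fit_model(tests)
    next_x = round(x50 * 2) / 2  # model is least certain where p = 0.5
    tests.append(run_physical_test(next_x))
    print(f"model x50 = {x50:.1f} cm, w = {w:.1f} cm -> next test at {next_x} cm")

x50, w = fit_model(tests)
```

The design choice to test where the model is least certain is one simple way to let the model “select key new physical tests”; other selection rules are equally defensible.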

Modeling has not had a high priority within the ASP project. DNDO has funded a relatively small modeling effort to carry out what are called injection studies. These studies superpose a measured

__________________

6 DHS’s final report on these tests states that “In addition, ORTEC Detective measurements were acquired at the same position as one of the [RIID] positions.” (DNDO 2009) The Detective measurements were conducted at the request of the Department of Energy, so DNDO provided them back to DOE without analyzing them. The committee never learned what was done with the data beyond what is reported here. It might be useful to DNDO to analyze the data collected with the Detective and compare it to other devices.

7 Dennis Slaughter, a scientist at Lawrence Livermore National Laboratory, was commissioned by the DHS Operational Testing and Evaluation organization to evaluate DNDO’s 2008 NTS performance tests for their implications for operational testing (Slaughter 2009). Dr. Slaughter notes the limitations of physical tests, including both the limited set of configurations and the large uncertainties resulting from small sample sizes.

spectrum8,9 from a threat source (again, perhaps a highly enriched uranium source) on a spectrum measured from a benign cargo conveyance. Thus a threat spectrum can be “injected” into stream-of-commerce data. Such an approach provides additional spectra for testing the software that analyzes detector signals, but it does not provide an analytical understanding of the performance of the system or the ability to model threats, cargo, and a wide variety of environments that would fill out DHS’ testing of the possible threats. Neither does it provide the basis for ongoing and continuous improvement of the detector systems, as recommended in the committee’s interim report.
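A minimal Python sketch of the injection idea, assuming both spectra are count histograms recorded with the same energy binning (footnote 8). The `scale` factor is a hypothetical knob standing in for adjustments such as source strength, and the counts are invented for illustration. The docstring marks the limitation described above: the benign cargo spectrum is unchanged by the injected source, so nothing in the result reflects how that particular cargo would shield or scatter the threat’s radiation.

```python
def inject(benign_counts, threat_counts, scale=1.0):
    """Superpose a measured threat spectrum on a measured benign-cargo spectrum.

    Both inputs are per-bin photon counts with identical energy binning.
    `scale` (hypothetical) rescales the threat contribution, e.g. to mimic
    a weaker source. No transport physics is modeled: the cargo behind the
    benign spectrum does not attenuate or scatter the injected threat.
    """
    if len(benign_counts) != len(threat_counts):
        raise ValueError("spectra must share the same energy binning")
    return [b + scale * t for b, t in zip(benign_counts, threat_counts)]

# Invented 6-bin spectra, for illustration only.
benign = [120, 95, 80, 60, 40, 25]   # stream-of-commerce conveyance
threat = [5, 10, 40, 15, 3, 1]       # measured threat-source spectrum
print(inject(benign, threat, scale=0.5))
# [122.5, 100.0, 100.0, 67.5, 41.5, 25.5]
```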

In early 2010, DNDO initiated a 5-week modeling effort to understand results from a reanalysis of handheld radiation detector (RIID) spectra (see Alternatives under the Cost-Benefit Analysis section, below). DNDO contracted with staff at the Naval Research Laboratory and a company called SCA to model the tested configurations of sources, containers (with shielding and masking material), and RIIDs (DNDO 2010b). The two groups used different radiation-transport computer codes but obtained results consistent with each other and with the physical tests. This is a small step in the direction the committee recommended: it used simulation to understand empirical test results. As has been noted, such modeling does not require capabilities beyond the existing tools and expertise available within U.S. government laboratories and some companies today. The committee applauds DNDO for undertaking this work, because it is the kind of study the committee recommended. The committee’s chief complaints about these studies are that (1) they were too limited (scoped around a very narrow question about the RIIDs, not the ASPs), and (2) they were not integrated into a larger plan for iterative empirical and computational testing. The committee encourages DNDO to expand these efforts to include ASPs and other program elements and to make them an integral part of DNDO’s testing and evaluation program.

EVALUATION OF TEST RESULTS

DNDO described the results of its performance testing in its Final Report on 2008 Advanced Spectroscopic Portals Performance Tests (March 2009). The committee has two major concerns about DNDO’s summary of the test results. In the report, DNDO first presents the full results, with plots of the probability of detection or the probability of identification (depending on whether it was a test of primary inspection or secondary inspection) as a function of varying shield thickness or masking-material intensity. DNDO also reported confidence intervals (uncertainties) on these plots, which is the correct representation, in the committee’s view. However, to create a figure of merit that summarizes test results quantitatively for its cost-benefit analysis, DNDO aggregated results across test cases and across scenarios in ways that are incorrect and potentially misleading. Furthermore, uncertainties were not reported in these aggregated results.

DNDO sought to characterize the probability of identification of each threat source across many different configurations, and it chose to do so with a single number: to create its figure of merit, DNDO averaged all of the runs for a given source. Characterizing the performance of the systems is a difficult challenge, and this averaged figure of merit does not meet it. Indeed, this figure of merit is impossible to interpret meaningfully, even as a comparison with other detection systems. A more meaningful figure of merit would characterize the performance difference between the two detector systems for each case.

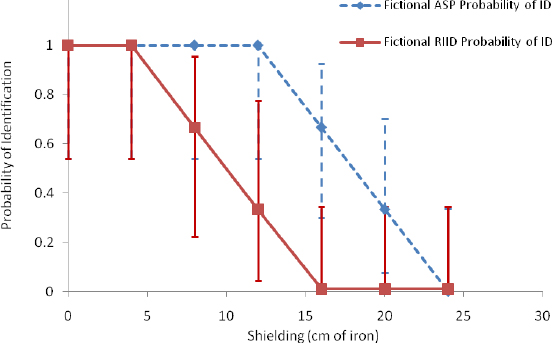

Even if DNDO decided to create a single composite probability by fiat, its method is not technically sound. To illustrate this point, we use a fictional example.

Imagine that DNDO conducted test runs of a uranium threat source with no shield (0 cm) and within iron shields with thicknesses of 4, 8, 12, 16, 20, and 24 centimeters. Now suppose that, to obtain better statistical significance in its tests, DNDO conducted more ASP runs at shield thicknesses where the devices showed neither

__________________

8 A spectrum is the measured signal from a radiation detector showing the counted number of photons at each energy along a continuum of energies. A spectrum may also be generated by a simulation of a radiation source and a detector.

consistently positive results nor consistently negative results (a transition region for detection). This practice is sound. But to characterize ASP performance with a single number, DNDO averaged all of the runs, regardless of thickness and regardless of whether more runs were done at one thickness than at another. The resulting figure of merit depends at least as much on the number of runs at each shield thickness as it does on the performance at that thickness. See, for example, the fictional data listed in Table 1. Calculated DNDO’s way (33 identifications in 51 runs), the figure of merit is 65%. A more mathematically correct evaluation would normalize for the number of runs (averaging the results for each shield thickness) before averaging across shield thicknesses. Normalizing first and then averaging (the mean of the seven per-thickness probabilities, 5/7) yields a figure of merit of 71%. However, the idea of averaging across shield thicknesses (or other distinct cases) is itself fundamentally flawed and obscures the real results of such studies.
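The two calculations can be reproduced from the fictional ASP column of Table 1 with a short Python sketch. The per-thickness counts are read from the table (0.67 and 0.33 at 9 runs correspond to 6 and 3 identifications), exact fractions avoid rounding artifacts, and the function names are illustrative rather than DNDO’s.

```python
from fractions import Fraction

# Table 1 (fictional ASP column): thickness_cm -> (identifications, runs).
ASP = {0: (6, 6), 4: (6, 6), 8: (6, 6), 12: (6, 6),
       16: (6, 9), 20: (3, 9), 24: (0, 9)}

def pooled_fom(results):
    """DNDO-style figure of merit: one average over all runs, so thicknesses
    with more runs carry more weight."""
    hits = sum(k for k, n in results.values())
    runs = sum(n for k, n in results.values())
    return Fraction(hits, runs)

def normalized_fom(results):
    """Average within each thickness first, then weight thicknesses equally."""
    per_case = [Fraction(k, n) for k, n in results.values()]
    return sum(per_case) / len(per_case)

print(f"{float(pooled_fom(ASP)):.0%}")      # 65%
print(f"{float(normalized_fom(ASP)):.0%}")  # 71%
```

The gap between the two numbers comes entirely from how the runs were allocated across thicknesses, not from any change in detector performance.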

Inspection of the fictional data reveals a more meaningful comparison of ASPs with RIIDs, namely (a) equivalent performance at low (0-8 cm) and high (20-24 cm) thicknesses; and (b) possibly improved performance of the ASP over the RIID only at intermediate (8 or 12 to 16 or 20 cm) thicknesses, when uncertainties are factored in.10 The illustration, while hypothetical, demonstrates the problems with drawing inferences from “average performance.”11

Table 1: Fictional data illustrating pitfalls of the DNDO figure of merit.

| Iron Thickness (cm) | Number of Runs | Fictional ASP Probability of ID | Fictional RIID Probability of ID |
|---|---|---|---|
| 0 | 6 | 1.0 | 1.0 |
| 4 | 6 | 1.0 | 1.0 |
| 8 | 6 | 1.0 | 0.67 |
| 12 | 6 | 1.0 | 0.33 |
| 16 | 9 | 0.67 | 0 |
| 20 | 9 | 0.33 | 0 |
| 24 | 9 | 0 | 0 |
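One way to put uncertainties on the per-thickness comparison in Table 1 is sketched below in Python. The committee does not specify an interval method; the Wilson score interval used here is a swapped-in choice, and treating non-overlapping 95% intervals as evidence of a difference is an informal, conservative check rather than a formal hypothesis test. Counts are inferred from the table’s probabilities and numbers of runs.

```python
import math

# Table 1 (fictional): thickness_cm -> (identifications, runs)
ASP = {0: (6, 6), 4: (6, 6), 8: (6, 6), 12: (6, 6),
       16: (6, 9), 20: (3, 9), 24: (0, 9)}
RIID = {0: (6, 6), 4: (6, 6), 8: (4, 6), 12: (2, 6),
        16: (0, 9), 20: (0, 9), 24: (0, 9)}

def wilson_interval(k, n, z=1.96):
    """Approximate 95% Wilson score interval for k successes in n trials."""
    p = k / n
    denom = 1 + z * z / n
    center = (p + z * z / (2 * n)) / denom
    half = (z / denom) * math.sqrt(p * (1 - p) / n + z * z / (4 * n * n))
    return max(0.0, center - half), min(1.0, center + half)

def asp_clearly_better(x):
    """True if the ASP interval lies entirely above the RIID interval."""
    asp_lo, _ = wilson_interval(*ASP[x])
    _, riid_hi = wilson_interval(*RIID[x])
    return asp_lo > riid_hi

for x in sorted(ASP):
    a = wilson_interval(*ASP[x])
    r = wilson_interval(*RIID[x])
    verdict = "ASP clearly better" if asp_clearly_better(x) else "overlap"
    print(f"{x:2d} cm  ASP [{a[0]:.2f}, {a[1]:.2f}]  "
          f"RIID [{r[0]:.2f}, {r[1]:.2f}]  {verdict}")
```

With these fictional counts only the 16 cm case shows fully separated intervals, which fits the hedged reading of “possibly improved” performance at intermediate thicknesses given six to nine runs per case.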

The difference can be characterized in physical terms. For the tested threat object, the fictional new detector system yields the same probability of correct identification as the old system does, but with 8 additional centimeters of iron as shield (see Table 1 and Figure 1). Thus one could say that 8 cm of iron shielding is the difference between the two systems. Translating that difference into a relative probability of identification of a smuggled threat object is still difficult (see the systems analysis section, below), but the figure of merit at least has meaning that can be described in physical terms and understood. Indeed, DNDO uses such a characterization in its performance test report summary, “For [XX] source in its packaging configuration, the detection fall-off for the [tested] system was reached with [YY] less …shielding than for the ASP-C systems” (DNDO 2009a). Unfortunately, DNDO did not use this characterization when putting the performance test results in the cost-benefit analysis.
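The 8 cm figure can be recovered mechanically from Table 1. The Python sketch below slides the ASP curve along the thickness axis and reports the shift that best aligns it with the RIID curve. The function names and the matching criterion (mean absolute difference, candidate shifts in the table’s 4 cm steps, at least three overlapping thicknesses) are illustrative assumptions; with real data, the per-thickness uncertainties discussed above would also need to be carried through.

```python
from fractions import Fraction as F

# Table 1 (fictional) probabilities of identification, as exact fractions.
ASP = {0: F(1), 4: F(1), 8: F(1), 12: F(1), 16: F(2, 3), 20: F(1, 3), 24: F(0)}
RIID = {0: F(1), 4: F(1), 8: F(2, 3), 12: F(1, 3), 16: F(0), 20: F(0), 24: F(0)}

def mismatch(shift_cm):
    """Mean |ASP(x + shift) - RIID(x)| over thicknesses present in both."""
    pairs = [(ASP[x + shift_cm], RIID[x]) for x in RIID if x + shift_cm in ASP]
    return sum(abs(a - r) for a, r in pairs) / len(pairs)

def equivalent_shield_shift(step_cm=4, min_pairs=3):
    """Extra shielding (cm) at which the ASP matches the RIID most closely."""
    candidates = [d for d in range(0, max(ASP) + 1, step_cm)
                  if sum(1 for x in RIID if x + d in ASP) >= min_pairs]
    return min(candidates, key=mismatch)

print(equivalent_shield_shift())  # 8
```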

__________________

10 The uncertainties are large when the sample sizes are small. Slaughter suggests that some data may be aggregated across similar threat objects when the configurations are otherwise nearly the same. “Whether the result is meaningful depends on the extent to which the [threat objects] that are combined represent similar threats with similar screening performance” (Slaughter 2009). The committee agrees and notes that such aggregation must be done with great care. Aggregation may require correction factors to adjust for differences between threat objects and is not a substitute for analyses of the performance of the individual threat objects.

11 The DHS Independent Review Team also cautioned DNDO against over-aggregating results. “…[D]etection and identification probabilities were averaged over five other objects. Averaging in this way is meaningful only if the detection probability for each object is weighted by the relative frequency of encountering the object in the actual stream of commerce. Such weights were not presented; in practice, they would be very difficult to determine.”

Figure 1: Illustration of better presentation of comparison of performance of the ASP and RIID, using fictional data from Table 1.

COST-BENEFIT ANALYSIS

A new acquisition can be justified if it lowers costs or does a better job than the current systems.12 The committee’s examination of DNDO’s draft lifecycle cost estimates suggests that DNDO has accounted for costs and operational improvements reasonably. As is pointed out in DNDO’s draft cost-benefit analysis, ASPs cost more than existing radiation portal monitors, even when gains in operational efficiency provided by the ASPs are taken into account. This means that any justification for deployment of ASPs hinges on improvements in the ASPs’ ability to detect and thus prevent smuggled nuclear or radiological material from reaching destinations in the United States, to deter adversaries from attempting to do so, or to increase the ability to act upon warning or intelligence about smuggling of nuclear materials, i.e., the security benefits.
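A breakeven analysis inverts the usual question: rather than estimating the probability of an attack directly, it asks how large a reduction in that probability the extra spending would have to buy to pay for itself. A minimal Python sketch, assuming undiscounted costs spread evenly over the ten-year life cycle and a single consequence value. The per-unit lifecycle costs (approximately $600k for a PVT RPM and $1,200k for an ASP) are the figures quoted earlier in this letter; the number of units and the consequence value are hypothetical placeholders, not estimates.

```python
def breakeven_risk_reduction(n_units, asp_cost, baseline_cost,
                             lifecycle_years, consequence_cost):
    """Annual reduction in the probability of a successful attack at which
    the expected avoided loss equals the added (undiscounted) cost."""
    added_cost_per_year = n_units * (asp_cost - baseline_cost) / lifecycle_years
    return added_cost_per_year / consequence_cost

# Per-unit ten-year lifecycle costs from the letter; the unit count and the
# consequence value are HYPOTHETICAL placeholders for illustration only.
delta_p = breakeven_risk_reduction(
    n_units=100,
    asp_cost=1_200_000,
    baseline_cost=600_000,
    lifecycle_years=10,
    consequence_cost=1e12,
)
print(f"breakeven annual risk reduction: {delta_p:.1e}")  # 6.0e-06
```

Decision makers can then judge, largely qualitatively, whether ASPs plausibly deliver at least that much risk reduction relative to the alternatives.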

The conclusion of the draft cost-benefit analysis, recommending deployment of ASP-C and ASP-D portals in all secondary scanning lanes that process truck traffic, “is based on the increased performance of the ASP in reducing the threat of a nuclear attack on the homeland” (DNDO 2010a). The mere inclusion of threat reduction in the analysis indicates that DNDO has accepted the recommendation in the committee’s interim report; before that report, DNDO’s analyses did not include these considerations. Furthermore, DNDO clearly paid attention to the suggestions offered in the committee’s interim report on how to analyze security benefits, carrying out both a breakeven analysis and a capabilities-based plan. Each of these initial efforts, however, needs substantial improvement to result in a cost-benefit analysis that supports decision making. Three major problems remain: (1) the strategic justification for the option selected was not provided; (2) the alternatives considered were too narrow and did not include technology and deployment alternatives that might ultimately be preferred; and (3) DNDO

__________________

12 “A better job” may encompass many factors, including higher true positive detection rates, lower false positive (false alarm) rates, reliability, versatility, and a variety of other considerations.

used quantitative modeling techniques and therefore quantified factors that could not be justifiably quantified, when the analysis could have been carried out effectively with qualitative reasoning. These problems are described below.

Strategic Justification

The cost-benefit analysis for ASPs needs to be placed in a larger context of prevention of nuclear terrorism. Some of that context is provided in DNDO’s draft cost-benefit analysis,13 and some can be found in the Joint Annual Interagency Review of the Global Nuclear Detection Architecture (DNDO 2010d). These documents describe missions and goals. DHS needs to establish guiding principles and to apply those principles at a strategic level to achieve appropriate balance more broadly across the architecture. Such principles would enable DHS to make decisions about goals, cost tradeoffs, and priorities among the various programs, and also to better make the case for its conclusions. DHS’ decisions can be supported by a logical narrative, describing in words what measures are meant to address what classes of threats, how the pieces fit together, and how they reinforce each other and cover gaps. It can also be supported with relatively simple systems-level modeling that identifies what parts of the system have the greatest influence on security.

Deterrence or dissuasion is an important factor in the likelihood that a malefactor will decide to try to smuggle a weapon or weapon materials, but there is not yet a widely accepted intellectual framework or method to measure or evaluate this factor, so it is difficult to take into account in planning and evaluation. In its interim report, the committee discussed deterrence and noted the value of exploiting (1) ambiguity in the detection capabilities exhibited to the public; (2) uncertainty on the part of a malefactor about his or her chances of being thwarted or caught; and (3) the likelihood that a malefactor would deem the material or device to be valuable, and therefore would be risk averse. Analysts and decision makers can reason through strategies and tactics based on these factors while exercising caution about the limits of their knowledge. Malefactors with a high-value weapon are likely to choose attacks that they deem to have a high probability of success, so unknown or unpredictable defenses can be a deterrent. However, the malefactors’ goals are unknown; perhaps a detonation in a port is a sufficiently satisfying secondary target. The committee reiterates the value of taking the adversary’s perspective into account in evaluating the effectiveness of different deployment strategies for nuclear detection assets.

Taking a somewhat narrower view, DNDO needs to articulate what is achieved by improvements in detector performance. Drawing again on the fictional example described above, an improved passive detection system may force an adversary wishing to evade detection to place an additional 8 cm of iron around a threat object. The adversary’s action would reduce the probability of successful interdiction using passive detectors. But if this passive detection enhancement forces an adversary to use so much shielding material that the object is easily identified as suspicious when scanned by a technology that can ascertain the amount of shielding in a container, such as a gamma-ray or X-ray radiography device, it could lead to additional security enhancement. Combined with, for example, random radiography of a fraction of conveyances (which would place every conveyance at some risk of being scanned), this yields a coherent strategy with a quantifiable probability of successful interdiction. The example illustrates that DNDO and CBP need a strategy for each configuration. There may be shielding configurations that neither passive detectors nor radiography is likely to detect, in which case only random inspections may have the potential to catch those objects; in any case, a strategy needs to be articulated and applied to create a logical picture of detection and interdiction.
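The arithmetic behind such a layered strategy can be sketched in Python. The sketch assumes, for simplicity, that the passive-detection and radiography layers act independently; all probabilities and the radiography fraction are hypothetical placeholders, not measured values.

```python
def interdiction_probability(p_passive, radiography_fraction, p_radiography_flags):
    """P(interdiction) for one configuration, assuming independent layers:
    the conveyance escapes only if it evades passive detection AND is either
    not selected for random radiography or not flagged when imaged."""
    p_radiography = radiography_fraction * p_radiography_flags
    return 1 - (1 - p_passive) * (1 - p_radiography)

# HYPOTHETICAL configurations:
# (P passive detection, P radiography flags the shielding if imaged)
configs = {
    "lightly shielded": (0.9, 0.2),
    "heavily shielded (+8 cm iron)": (0.1, 0.9),
}
for name, (p_pass, p_rad) in configs.items():
    p = interdiction_probability(p_pass, radiography_fraction=0.1,
                                 p_radiography_flags=p_rad)
    print(f"{name}: {p:.3f}")
```

Under these placeholder numbers, part of what the extra shielding takes away from the passive layer is recovered by the radiography layer; the point is the structure of the reasoning, not the values.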

__________________

13 The DNDO draft cost-benefit analysis describes this context by reference to high-level strategic plans, such as the DHS Strategic Plan Fiscal Years 2008-2010 and the U.S. Customs and Border Protection 2005-2010 Strategic Plan.

Alternatives

The alternatives considered in DNDO’s cost-benefit analysis were not broad enough. For example, improved handheld detectors were dismissed without analysis, based on an assertion that handheld detectors simply are not suitable for external screening of cargo containers. This assertion may prove true depending on the criteria established, but recent analyses within DNDO suggest that handheld detectors, even the hardware currently in use, could be far more effective than DNDO thought possible in identifying threats in cargo.

In its cost-benefit analysis, DNDO compared the ASPs to the currently deployed RIID, which the committee was told is relatively old technology, first deployed several years ago. DNDO concluded that handheld detectors in general are unsuitable for external inspection of cargo containers (DNDO 2010a) and so did not compare ASPs to other handheld detectors, such as the newer sodium iodide and high-purity germanium detectors used by the Department of Energy, or the lanthanum bromide and other detectors in development in the Human Portable Radiation Detection Systems program at DNDO. DNDO did not include an enhanced version of the current RIID in its comparisons, either. (See Sidebar 2.)

In its interim report (NRC 2009), the committee made the following suggestion.

Because some of the improvement in isotope identification offered by the ASPs over the RIIDs is a result of software improvements, the best software package also should be incorporated into improved handheld detectors. Newer RIIDs with better software might significantly improve their performance and expand the range of deployment options available to CBP for cargo screening.

Separate from its cost-benefit analysis, DNDO followed this suggestion, providing data (raw spectra) collected using RIIDs to Sandia National Laboratories and having the laboratory process those spectra through DHSIsotopeID, a template-based gamma-spectrum-analysis program developed there. The results show dramatic improvements of the RIID with improved software over the current RIID system and relative to the ASPs (Feuerbach and McGee 2010).14 DHSIsotopeID ran quickly and improved the performance of the RIIDs substantially, outperforming not only the RIID’s onboard software but also, with less statistical significance, the ASPs in some cases.15
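The Level I “operationally correct” scoring rule described in footnote 14, together with the uncertainty bands mentioned in footnote 15, can be sketched as follows. This is a hypothetical illustration: the response labels, nuclide names, and trial list are invented and do not reflect DNDO’s data format or test results. The interval shown is a Wilson score interval, one standard choice for a binomial proportion; the letter does not say which interval DNDO used.

```python
import math
from typing import Optional

# Hypothetical label: radiation above benign levels, nuclide not identified.
REFERRED = "referred"


def operationally_correct(true_nuclide: str, response: Optional[str]) -> bool:
    """Score one threat spectrum under a Level I style rule (sketch).

    response is a nuclide name, REFERRED, or None (system reported no threat).
    """
    if response == REFERRED:
        return True          # referral leads to further investigation
    if response is None:
        return False         # threat present but reported as absent
    return response == true_nuclide   # named nuclide must be the right one


def wilson_interval(successes: int, n: int, z: float = 1.96) -> tuple[float, float]:
    """Approximate 95% Wilson score interval for a binomial proportion."""
    if n <= 0:
        raise ValueError("need at least one trial")
    p = successes / n
    denom = 1.0 + z * z / n
    centre = (p + z * z / (2 * n)) / denom
    half = z * math.sqrt(p * (1 - p) / n + z * z / (4 * n * n)) / denom
    return centre - half, centre + half


# Invented (true nuclide, system response) pairs.
trials = [("HEU", "HEU"), ("HEU", REFERRED), ("Pu", None), ("Pu", "Pu"), ("Cs-137", "Co-60")]
hits = sum(operationally_correct(t, r) for t, r in trials)
low, high = wilson_interval(hits, len(trials))
print(f"P(detect) = {hits}/{len(trials)}, 95% interval [{low:.2f}, {high:.2f}]")
```

With only five trials the interval spans roughly 0.23 to 0.88, which shows how easily differences between systems can fall within the uncertainty bands when the number of trials per configuration is small.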

__________________

14 DHS evaluated all systems against a Level I operationally-correct “Probability of Detection”. This means that for a given spectrum, the test system was able to identify the radionuclide present (if any) or to correctly refer the spectrum for further analysis. Either of these responses results in an appropriate operational response, i.e., holding the truck for further investigation. For example, if the test system correctly identified the radionuclide present, this was considered an operationally correct identification. In addition, if the system indicated that radiation was present at levels above what would be expected from nonradioactive cargo, but that the source radionuclide could not be identified, this was also considered an operationally correct identification as the spectrum would be investigated further. Inherent in this scoring is the assumption that further investigation would correctly identify the radionuclide. If the test system either incorrectly identified the radionuclide present or incorrectly indicated that no threat object was present, it was scored as an incorrect identification.

15 The DHSIsotopeID probabilities of identification are better than the ASPs in some cases, but most of the differences are within the uncertainty bands for the data. Initial examination of the data suggests that DHSIsotopeID also outperformed CBP’s Laboratory and Scientific Services (LSS). However, upon deeper examination, it is less clear because different criteria were used for scoring their performance. A direct comparison of the full adjudication of spectra using the same criteria to evaluate the full current system (RIID through LSS and secondary reachback) versus alternatives (RIID with DHSIsotopeID, and ASPs) would help inform both CBP and DNDO.

In addition to the scoring criteria, there were differences in what information was provided (DNDO 2010e). DNDO did not provide a RIID measurement of the background radiation during the performance tests, so Sandia created a background spectrum based on an average of the lowest count rates in the files provided. In addition, DNDO sent Sandia nearly 1,200 files from another data collection done in 2005 at an operating port, measuring the stream of commerce. When corrupted files were removed from this set, DHSIsotopeID identified a relatively small number as containing special nuclear material with high confidence. Those alarms might have been false positives or they might have actually been special nuclear material: CBP and DNDO did not save and correlate data from the shipment manifests with the radiation measurements. In retrospect, this correlation might have been a valuable step to take. LSS has access to an array of information on every shipment entering the United States, which enhances and complements the analysis of spectra referred from secondary inspection.

These results have some important implications. DNDO evaluated the lifecycle cost of a RIID of the type currently used at $27k,16 and even next-generation RIIDs are expected to cost only approximately $40k. CBP plans to continue to use RIIDs for in-container inspection, even if ASPs are installed for secondary inspection. DHS already owns the DHSIsotopeID software. As noted above, the lifecycle cost of the PVT radiation portal monitor used in conjunction with the RIID is estimated to be $640k, compared to over $1.2 million for the ASP and RIID. If improved software halves the performance difference between the current RIID and the ASP, and DHS is simply looking for the greatest improvement in detector performance at the least cost, then improved software is a more cost-effective improvement to the current system than replacing it with the ASP.

Based on other factors, DHS could conclude that the improved RIID is still not good enough. For example, as described above, a logical framework for the global nuclear detection architecture that uses radiography or active interrogation to complement passive detection could create a threshold criterion that the passive detectors must meet. DHS could conclude that passive detectors must be sensitive enough to force a smuggler trying to evade detection to use a shield thick enough that it would be readily detected with radiography. For the shielded sources DNDO tested, however, the RIID with DHSIsotopeID appears to perform at least as well as the ASPs. If a similar threshold existed for masking, then the RIID with DHSIsotopeID might or might not meet the criterion. As they are used today, the RIIDs have other deficiencies, too (see Sidebar 2). Absent such a threshold or consideration of other liabilities, the net benefits per unit net cost can be compared directly.

Another reason that the RIID with improved software may not have been considered adequately in the cost-benefit analysis is that the improved RIID is not yet a self-contained system that can be purchased. Today, running these analyses requires that a person take the raw data from the RIID, select a set of peaks in the spectrum to use for calibration of the energy-dependent response of the detector, select a background spectrum, run the software, and decide what to do with cases that did not run properly (e.g., corrupted original data sets; see Footnote 15). DNDO compared complete systems in its performance tests. However, in the committee’s judgment, the adaptations required to make a complete system from the RIID and the best available software for that detector, which right now appears to be DHSIsotopeID, would be neither costly nor time consuming.

- CBP officers would need to record calibration and background spectra periodically during the day. Such recording is already part of standard operating procedure, but refinements and better adherence to the procedures would simplify analysis and improve the accuracy of results.

- The software would need to be automated and made to interface automatically with the RIID data.

- Advances in handheld computing since the current RIIDs were designed may enable the necessary calculations to be done onboard an otherwise identical RIID or on a separate handheld device. The Defense Threat Reduction Agency is currently funding a small effort to establish the feasibility of running software nearly identical to DHSIsotopeID on a personal digital assistant. If a laptop or desktop computer is required instead, the data can be transferred easily from the RIID by several different means.

__________________

16 DNDO’s cost estimates yield two different possible costs to consider for the RIID. The total sunk and future costs for the RIIDs over the next 10 years imply a unit cost of $27k. Looking only at future costs, the figure is $15k. The latter number assumes no acquisition costs because the RIIDs have already been purchased and CBP simply pays a maintenance fee per unit, which includes replacement of the units when they fail. DNDO informed the committee that the cost of that maintenance contract may rise in the near future.

SIDEBAR 2: Difficulties in Comparing RIID and ASP Performance

In its draft cost-benefit analysis and its requirements document for handheld detection systems (DNDO 2009b), DNDO provided scant support for its claim that handheld detectors in general are unsuitable for external inspection of cargo containers. In another report (Appendix 8 of HSI 2008), DNDO disputed the draft findings of the DHS Independent Review Team (IRT), especially that “using the ASP instead of the handheld RIID (Radio-Isotope Identification Device) for Secondary screening would not significantly change the probability of those objects [threats] being allowed to enter the United States.” DNDO countered that the IRT analysis relied on unrealistic, ideal-case assumptions: (1) that the RIID would be placed as close as possible to the source (threat object); (2) that CBP officers in the field would have time to refer all unknowns from the current RIID for further investigation; and (3) that it would be acceptable for further investigation to adjudicate a much larger fraction of the cases referred to secondary inspection.

The basis for DNDO’s first complaint about the RIID is that although the RIID can be placed closer to the container than the ASP detectors are, it is difficult to determine exactly where on the container surface to place the RIID, and it is difficult for the CBP officer to reach some locations (Oxford 2008). This complaint is accurate. Also, the officer may have difficulty identifying the best location to collect data with the RIID. ASPs suffer from neither of these problems: they collect data from the whole container. The IRT ultimately concluded that “RIID localization errors—which are difficult to predict or control—can easily dominate the performance comparison [between RIIDs and ASPs].” This conclusion was based on calculations. The IRT noted that low-cost measures could address some limitations of the current device, and suggested that CBP explore the feasibility of such measures.

As with any detector, greater distances and more shielding or masking material between the threat object and the detector degrade the “signal” quality of the spectrum collected by the RIID, and improved software may not be able to compensate for a given arrangement. The configurations matter. However, the committee notes that the results comparing RIIDs with ASPs were not based on idealized, first-principles calculations, but on data collected by CBP officers operating the RIID as part of the 2008 performance tests in configurations identical to those examined using ASPs. DNDO commissioned the 5-week modeling effort described in this report because of doubts about whether the RIID could acquire sufficient signal to achieve such good results. The simulations yielded spectra similar to those collected with the RIID, the same spectra that, when analyzed with DHSIsotopeID, produced the improved results.

The RIID data from the performance tests may be better than what one would typically get in the field. The same may be said of the ASP data, although for different reasons. It seems likely that the CBP officers carrying out duties under test conditions were more thorough in scanning the containers than their colleagues are at real ports of entry. But no additional measures, such as those suggested by the IRT to assist placement of the RIID, were taken. Likewise, there were artifacts of the test conditions in the ASP tests. For example, trucks passed through ASPs at the speeds specified within the CONOPS, but trucks commonly transit portals at speeds higher than the designated limits. Furthermore, one truck was tested at a time, with no truck following close behind.

The last two points in DNDO’s counterargument to the IRT are addressed in this report: the inclusion of improved software would improve adjudication in the field, which also lowers referral rates. This is not to say that the RIID is or can be superior to the ASP in operation in the field. The ASP is designed to have advantages. But it is inappropriate to dismiss a RIID with enhanced software as an option, particularly in light of the data collected by DNDO since the IRT report.

None of these appears to be a major obstacle, and it only makes sense for the next iteration or generation of RIID to be able to accommodate software upgrades so that it can use the best software version or algorithm available at a given time. In the section on deployment, below, the committee reiterates a central message from the interim report: scientific iteration is a better approach than full-scale deployments.
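The manual steps described earlier in this section (selecting calibration peaks, selecting a background spectrum, running the software, and setting aside corrupted data sets) are the kind of work that can be automated. The sketch below is hypothetical: it assumes a linear channel-to-energy calibration, a live-time-scaled background subtraction, and simple integrity checks, and it takes the identification program as a plug-in function (`identify`) rather than reproducing DHSIsotopeID, whose interface is not described in this letter. Real detectors may need a nonlinear calibration.

```python
from typing import Callable, Sequence

# Hypothetical types: (counts per channel, live time in seconds).
Background = tuple[Sequence[float], float]
Identifier = Callable[[list[float], list[float]], object]


def linear_energy_calibration(peaks: Sequence[tuple[float, float]]) -> tuple[float, float]:
    """Least-squares fit of energy_keV = gain * channel + offset from (channel, keV) pairs."""
    n = len(peaks)
    if n < 2:
        raise ValueError("need at least two calibration peaks")
    mean_c = sum(c for c, _ in peaks) / n
    mean_e = sum(e for _, e in peaks) / n
    sxx = sum((c - mean_c) ** 2 for c, _ in peaks)
    if sxx == 0:
        raise ValueError("calibration peaks must be at distinct channels")
    sxy = sum((c - mean_c) * (e - mean_e) for c, e in peaks)
    gain = sxy / sxx
    return gain, mean_e - gain * mean_c


def is_corrupted(counts: Sequence[float], live_time_s: float, n_channels: int) -> bool:
    """Crude integrity checks standing in for real file validation."""
    return len(counts) != n_channels or live_time_s <= 0 or any(c < 0 for c in counts)


def net_spectrum(counts: Sequence[float], live_time_s: float, background: Background) -> list[float]:
    """Subtract a background spectrum scaled to the same counting time."""
    bg_counts, bg_live_time_s = background
    if bg_live_time_s <= 0 or len(bg_counts) != len(counts):
        raise ValueError("background spectrum is unusable")
    scale = live_time_s / bg_live_time_s
    return [c - scale * b for c, b in zip(counts, bg_counts)]


def analyze(counts, live_time_s, background, peaks, identify: Identifier, n_channels=1024):
    """Calibrate, subtract background, and hand the result to the identifier."""
    if is_corrupted(counts, live_time_s, n_channels):
        return "corrupted: set aside for manual review"
    gain, offset = linear_energy_calibration(peaks)
    energies_kev = [gain * ch + offset for ch in range(n_channels)]
    return identify(energies_kev, net_spectrum(counts, live_time_s, background))


def total_net_counts(energies_kev, net):
    """Hypothetical stand-in for an identification program."""
    return round(sum(net), 6)


result = analyze([10] * 8, 100.0, ([5] * 8, 200.0), [(1, 50.0), (5, 250.0)],
                 total_net_counts, n_channels=8)
print(result)
```

Because the identification step is passed in as a parameter, a newer algorithm could replace the stand-in without changing the rest of the pipeline, which is the kind of upgradability the paragraph above calls for.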

In the committee’s view, the handheld device using better software is a low-cost, high-effectiveness option that should be an alternative in the cost-benefit analysis. It might ultimately prove to be the preferred option. If it is not the preferred option, the cost-benefit analysis should explain why.
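The cost-effectiveness argument made earlier in this letter can be checked with simple arithmetic. This sketch uses the lifecycle costs the letter quotes ($640k for the PVT RPM with RIID; over $1.2 million, taken here as a floor, for the ASP with RIID), treated here as per-installation figures. The performance values and the cost of integrating improved software are hypothetical placeholders, because the letter gives neither.

```python
def gain_per_million(delta_performance: float, delta_cost_usd: float) -> float:
    """Improvement in a performance metric per additional $1M of lifecycle cost."""
    if delta_cost_usd <= 0:
        raise ValueError("incremental cost must be positive")
    return delta_performance / (delta_cost_usd / 1e6)


# Lifecycle costs quoted in the letter (assumed per installation).
COST_PVT_RPM_WITH_RIID = 640e3
COST_ASP_WITH_RIID = 1.2e6        # "over $1.2 million", used here as a lower bound
# Hypothetical cost of automating and integrating improved software.
COST_SOFTWARE_INTEGRATION = 20e3

# Hypothetical probabilities of identification.
p_current, p_asp = 0.50, 0.80
p_improved_riid = p_current + 0.5 * (p_asp - p_current)   # software "halves the difference"

asp = gain_per_million(p_asp - p_current, COST_ASP_WITH_RIID - COST_PVT_RPM_WITH_RIID)
software = gain_per_million(p_improved_riid - p_current, COST_SOFTWARE_INTEGRATION)
print(f"ASP: {asp:.2f} per $1M; improved software: {software:.2f} per $1M")
```

With these placeholders the software upgrade yields roughly 7.5 units of improvement per $1M against about 0.54 for the ASP. Treating the ASP cost as a floor only widens that gap; the comparison is most sensitive to the assumed performance values, which is why a meaningful figure of merit matters.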

Setting aside different technology alternatives, the deployment alternatives considered in DNDO’s cost-benefit analysis do not describe the actual alternatives under consideration: Alternative 1 in DNDO’s draft cost-benefit analysis reflected the maximum possible deployment of ASPs in secondary inspection (over 400), when in fact DNDO and CBP are contemplating fewer such deployments. It is important that the alternatives evaluated include the deployment plans that are really under consideration. Further, rigid adherence to the existing concept of operations (CONOPS) may skew the view of what options are possible. In the committee’s view, it makes sense for DHS to review the CONOPS and seek improvements in inspection both through technology and improved procedures considered in concert.

Quantification

DNDO analyzed cost effectiveness with two economic tools (a breakeven analysis and a capabilities-based plan) that in principle enable the user to identify the “efficient frontier,” i.e., which of the alternatives under consideration yields the greatest increase in performance for a given cost. The inputs to that analysis and the way the analysis was used make the results the committee saw unsound, and therefore they should not be used as the basis for a decision. In addition to relying on the flawed measure of detector-system performance described above, the measure of cost-benefit merit incorporates unjustified quantitative assumptions about the comparative importance of different levels of performance. Conclusions drawn from the resulting numbers in the draft cost-benefit analysis attribute to the analysis a precision that cannot be supported.

The analyses are quantitative, but quantification of some parts of the analysis is difficult to justify, and where quantification is justified, the analyses have unsupportable precision (three significant figures on values that are actually qualitative or, if quantitative, have no more than one significant figure of precision). These large uncertainties are not propagated through the analysis, and the committee questioned whether the analysis revealed any significant differences among the performance of the alternatives considered for deployment of ASPs (the base case, with no ASPs; ASPs in secondary only; ASPs in primary and secondary; and a hybrid ASP deployment) once uncertainties and appropriate precision were factored in.
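Propagating input uncertainty and reporting only justified precision need not be elaborate. The sketch below is a hypothetical Monte Carlo illustration, not DNDO’s model: the two alternatives’ scores are drawn from invented, independent normal distributions, and the question is how often one actually beats the other. A helper also rounds results to a stated number of significant figures.

```python
import math
import random


def round_sig(x: float, sig: int = 1) -> float:
    """Round x to `sig` significant figures."""
    if x == 0:
        return 0.0
    return round(x, sig - 1 - math.floor(math.log10(abs(x))))


def prob_a_beats_b(mean_a: float, sd_a: float, mean_b: float, sd_b: float,
                   draws: int = 20_000, seed: int = 1) -> float:
    """Fraction of Monte Carlo draws in which alternative A scores above B.

    Hypothetical: scores are treated as independent normal random variables.
    """
    rng = random.Random(seed)
    wins = sum(rng.gauss(mean_a, sd_a) > rng.gauss(mean_b, sd_b) for _ in range(draws))
    return wins / draws


# Invented scores reported to three significant figures...
print(round_sig(0.623), round_sig(0.611))        # 0.6 0.6
# ...and how often A really beats B once an invented uncertainty is included.
print(prob_a_beats_b(0.623, 0.1, 0.611, 0.1))
```

Here a gap that looks meaningful at three significant figures leaves A ahead in only a little over half of the draws, i.e., the alternatives are not distinguishable under these assumptions.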

Expert elicitations were used to weight the importance of different results, but the committee is concerned that some of the weighting or value functions used were counterintuitive or lacked a logical foundation. For example, a nonlinear function was used for weighting monetary costs and a linear function for weighting performance. One would expect the weighting of monetary costs to be linear: Money is fungible and the opportunity cost for a marginal unit of money is the same whether that marginal dollar is the one millionth dollar or the ten-millionth dollar.17

__________________

17 Money valuation could be nonlinear for other reasons: If one has only $1M, then a change from $200k to $400k is preferable to a change from $900k to $1.1M. But this is not really applicable to the ASPs, for which funding was already appropriated.

Weighting of performance might be nonlinear: decision makers might care more about an improvement in probability of identification from 45% to 60% than from 0% to 15% (the system is unreliable) or from 80% to 95% (the system is pretty reliable). As described above, the figure of merit used in the analysis is important. Decision makers may care most about the greatest level of shielding or masking that the detection system can see through with 95% probability. In such a case, an improvement from 0 cm to 5 cm of shielding could be quite important, but one from 30 cm to 35 cm could be comparatively unimportant. Hence, a nonlinear weighting function.
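The contrast between linear weighting of cost and nonlinear weighting of performance can be illustrated with two value functions. In this hypothetical sketch, cost is valued linearly against an assumed budget, and probability of identification is valued with an S-shaped (logistic) curve whose midpoint and steepness are invented; neither function is taken from DNDO’s analysis.

```python
import math


def cost_value(cost_usd: float, budget_usd: float) -> float:
    """Linear value of cost: each additional dollar lowers value by the same amount."""
    return 1.0 - cost_usd / budget_usd


def performance_value(p_identify: float, midpoint: float = 0.5, steepness: float = 12.0) -> float:
    """Hypothetical S-shaped value of a probability of identification."""
    return 1.0 / (1.0 + math.exp(-steepness * (p_identify - midpoint)))


def value_gain(value_fn, before: float, after: float) -> float:
    """Change in value when the input moves from `before` to `after`."""
    return value_fn(after) - value_fn(before)


for before, after in [(0.00, 0.15), (0.45, 0.60), (0.80, 0.95)]:
    gain = value_gain(performance_value, before, after)
    print(f"{before:.2f} -> {after:.2f}: value gain {gain:.3f}")
```

Under these assumptions the 45%-to-60% step is worth far more than either end step, matching the example in the text. For a shielding-thickness metric, a curve with diminishing returns would fit better, which is why the figure of merit has to be chosen before the weighting function.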

In DNDO’s draft cost-benefit analysis, weighting factors are applied to the different threat objects to create a composite, value-weighted result. However, such weighting is unjustified unless the weights are understood to represent the probability that a terrorist would attempt to smuggle each type of SNM into the nation. For example, DNDO’s draft cost-benefit analysis states that “The team weighted the performance against Pu at zero because as both systems performed equally and optimally the performance against Pu was not considered to be of any value in discriminating one system from another.” But consider a hypothetical case in which the probability of encounter of an HEU device is small (say 5%) compared with the probability of encounter of shielded plutonium (say 95%). In that case, DNDO’s weighting factors would not merely be irrelevant; they would be incorrect. The weighting factors, as applied by DNDO, amplify differences, when it is just as important to identify whether two systems have similar performance as to show their differences.
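The effect of the weights is easy to see with numbers. The sketch below uses the hypothetical encounter probabilities from the text (5% HEU, 95% shielded plutonium) and invented identification probabilities for two systems that perform equally on plutonium.

```python
def composite(perf: dict[str, float], weights: dict[str, float]) -> float:
    """Weighted average of per-threat performance (weights normalized to sum to 1)."""
    total = sum(weights.values())
    if total <= 0:
        raise ValueError("weights must sum to a positive number")
    return sum(weights[k] * perf[k] for k in weights) / total


system_a = {"HEU": 0.60, "Pu": 0.90}     # hypothetical
system_b = {"HEU": 0.40, "Pu": 0.90}     # hypothetical; equal on Pu

pu_weighted_at_zero = {"HEU": 1.0, "Pu": 0.0}
encounter_weighted = {"HEU": 0.05, "Pu": 0.95}   # hypothetical probabilities of encounter

for label, w in [("Pu weighted at zero", pu_weighted_at_zero),
                 ("encounter-weighted", encounter_weighted)]:
    a, b = composite(system_a, w), composite(system_b, w)
    print(f"{label}: A={a:.3f}, B={b:.3f}, A/B={a / b:.2f}")
```

Zero-weighting the shared plutonium result makes system A look 50% better than B; weighting by the assumed encounter probabilities shows the two systems are nearly indistinguishable, which is equally decision-relevant.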

Finally, any such analysis is subject to skepticism because the results depend strongly on the values selected for variables that are difficult to assess uniquely (such as the costs from a successful domestic nuclear attack), so sensitivity studies are critical to the credibility of such analyses. DNDO’s draft cost-benefit analysis has sensitivity studies of the cost elements of acquiring, deploying, and maintaining ASPs and PVT RPMs. The breakeven analysis isolates variables to find under what assumptions the system cost would equal another cost (here, the cost of the aforementioned nuclear attack). The only variable examined in the breakeven analysis is the probability of encounter, but others are unknown, too.
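A breakeven calculation of this kind reduces to one line of algebra, which makes it easy to vary more than one input. In the hypothetical sketch below, the annual incremental cost of a system equals the expected avoided loss, P(encounter) × Δp(interdiction) × consequence cost, when P(encounter) reaches the breakeven value. This simplified formulation and all numbers are placeholders, not DNDO’s or RAND’s.

```python
def breakeven_encounter_probability(annual_cost_usd: float,
                                    delta_p_interdiction: float,
                                    consequence_usd: float) -> float:
    """Annual probability of encounter at which expected avoided loss equals cost.

    Expected avoided loss per year = p_encounter * delta_p_interdiction * consequence_usd.
    """
    if delta_p_interdiction <= 0 or consequence_usd <= 0:
        raise ValueError("improvement and consequence must be positive")
    return annual_cost_usd / (delta_p_interdiction * consequence_usd)


ANNUAL_COST = 100e6                       # hypothetical incremental cost per year
for consequence in (100e9, 1e12):         # hypothetical attack costs
    for delta_p in (0.01, 0.05, 0.15):    # hypothetical interdiction improvements
        p = breakeven_encounter_probability(ANNUAL_COST, delta_p, consequence)
        print(f"consequence ${consequence:.0e}, delta_p {delta_p:.2f}: breakeven p = {p:.3g}")
```

Across this modest range of invented inputs the breakeven probability changes by a factor of about 150, which illustrates why varying only the probability of encounter understates the uncertainty.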

The RAND study cited in DNDO’s cost-benefit analysis should not be the only benchmark for the effect of cargo screening on the risk of a domestic nuclear detonation. Repeating the calculation for a small set of illustrative examples (e.g., a radiological dispersal device, RDD, in Detroit, a partial nuclear detonation in Washington, a foreign stockpile device in New York) would help the decision maker evaluate the value of cargo screening technologies in preventing the range of threats and attacks against which the nation deploys detectors. For such an analysis, DNDO should continue to use performance data that are relevant to the illustrative examples (e.g., detection probability of cesium-137 for the RDD, plutonium for a stockpile weapon), but use a more meaningful performance metric than the averaged figure discussed in detail in this report.

DEPLOYMENT

In its interim report, the committee recommended an incremental approach to deployment, with upgrades and improvements provided as experience is gained with the equipment. This is sometimes called spiral development. Another way to say this is that DNDO should be building a program around learning and continuous improvement. The ASPs are especially well suited to such an approach because the basic equipment could stay relatively unchanged while upgrades to the algorithms and analysis are developed. The Johns Hopkins Applied Physics Laboratory (APL) has already demonstrated that the impact of such upgrades can be evaluated without rerunning physical tests. Using its Replay Tool, APL has taken raw data streams and reanalyzed them with a variety of assumptions (e.g., that two of three neutron detectors are not providing data; Heimberg 2010). However, even if DHS concludes that it will not go ahead with a large acquisition and deployment of ASPs, DNDO would learn from a limited deployment of ASPs to examine real commerce. Whether that is the best use of funds is a policy decision that should be based in part on how likely DHS thinks a future deployment of ASPs is.
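The replay idea rests on keeping raw data and treating the analysis as a replaceable function. The sketch below is a hypothetical illustration of that pattern, not APL’s Replay Tool: it re-scores stored neutron counts with some detectors masked out, mirroring the example of neutron detectors not providing data. The record layout, the simplified Poisson alarm rule, and all counts are invented.

```python
from dataclasses import dataclass
from typing import Callable, Iterable, Sequence


@dataclass(frozen=True)
class RawRecord:
    truck_id: str
    neutron_counts: tuple[int, ...]     # one entry per neutron detector
    background_per_detector: float      # expected counts per detector with no source


Analysis = Callable[[RawRecord, Sequence[bool]], bool]


def neutron_alarm(rec: RawRecord, active: Sequence[bool], sigma: float = 3.0) -> bool:
    """Alarm if summed counts from active detectors exceed background by `sigma` Poisson SDs."""
    n_active = sum(active)
    if n_active == 0:
        return False
    observed = sum(c for c, on in zip(rec.neutron_counts, active) if on)
    expected = rec.background_per_detector * n_active
    return observed > expected + sigma * expected ** 0.5


def replay(records: Iterable[RawRecord], analysis: Analysis,
           active: Sequence[bool]) -> dict[str, bool]:
    """Re-run an analysis over stored raw data without repeating physical tests."""
    return {r.truck_id: analysis(r, active) for r in records}


records = [RawRecord("T1", (30, 12, 11), 10.0), RawRecord("T2", (11, 9, 10), 10.0)]
print(replay(records, neutron_alarm, (True, True, True)))
print(replay(records, neutron_alarm, (False, True, False)))   # two of three detectors lost
```

In this invented case, losing the detector nearest the source suppresses the alarm on T1, the kind of effect a replay can quantify across a whole data set.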

A tool such as the APL Replay Tool is especially well suited to address a recommendation from the committee’s interim report. The committee recommended that procurement of hardware and software be separated, so that the best data-analysis algorithms could be coupled to the best detector hardware. Such a tool would also enable DNDO to evaluate upgraded software and algorithms by reanalyzing past data collected with the same hardware using the new software. At DNDO’s request, APL tried to inter-compare the vendors’ systems but encountered difficulties. The procurement specifications require that the ASPs generate spectrum output data files in a standardized format to enable offline analysis of the gamma spectra by a separate program (section 7.1.4 of DNDO 2007). Such standardized output ought to result in modularity of the software, enabling interchange of analysis-algorithm modules. Although the ASPs do produce the gamma spectrum output data files, at least one of the vendors uses a different data file containing additional information for its own analyses, so that vendor’s analysis software cannot reproduce its own results through offline analysis of the output data file. As a result, the analysis modules produced to date are not compatible with each other’s detector systems. Within the committee, this raised concerns about procurement: DNDO has not gotten the modularity from the vendors that was mandated in the specification. This deficiency should be corrected.
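The modularity the specification calls for amounts to a shared data format plus a common interface that any algorithm can implement. The sketch below is hypothetical: the `StandardSpectrum` fields and the `IsotopeIdentifier` protocol are invented for illustration and are not the format defined in DNDO 2007, nor the interface of DHSIsotopeID or any vendor’s software. The toy identifier uses simple energy windows around the 662 keV (Cs-137) and 1461 keV (K-40) lines.

```python
from dataclasses import dataclass
from typing import Protocol


@dataclass(frozen=True)
class StandardSpectrum:
    """Hypothetical vendor-neutral spectrum record."""
    counts: tuple[int, ...]
    live_time_s: float
    kev_per_channel: float
    kev_offset: float


class IsotopeIdentifier(Protocol):
    """Any analysis module that reads a StandardSpectrum and names nuclides."""
    name: str

    def identify(self, spectrum: StandardSpectrum) -> list[str]: ...


class PeakWindowIdentifier:
    """Toy identifier: flags a nuclide when counts in its energy window reach a threshold."""

    def __init__(self, name: str, windows: dict[str, tuple[float, float]], min_counts: int):
        self.name = name
        self.windows = windows
        self.min_counts = min_counts

    def identify(self, spectrum: StandardSpectrum) -> list[str]:
        found = []
        for nuclide, (lo_kev, hi_kev) in self.windows.items():
            total = sum(c for ch, c in enumerate(spectrum.counts)
                        if lo_kev <= spectrum.kev_offset + ch * spectrum.kev_per_channel <= hi_kev)
            if total >= self.min_counts:
                found.append(nuclide)
        return found


def compare(spectrum: StandardSpectrum, algorithms: list[IsotopeIdentifier]) -> dict[str, list[str]]:
    """Run every interchangeable algorithm on the same hardware output."""
    return {alg.name: alg.identify(spectrum) for alg in algorithms}


WINDOWS = {"Cs-137": (650.0, 672.0), "K-40": (1450.0, 1470.0)}
counts = [0] * 600
counts[220] = 50                                   # 660 keV at 3 keV per channel
spectrum = StandardSpectrum(tuple(counts), 60.0, 3.0, 0.0)
algorithms = [PeakWindowIdentifier("strict", WINDOWS, 100),
              PeakWindowIdentifier("lenient", WINDOWS, 20)]
print(compare(spectrum, algorithms))
```

Because every module consumes the same record, one detector’s output can be scored by several algorithms side by side, which is the inter-comparison APL attempted.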

Furthermore, DNDO should not limit itself to the vendors’ algorithms. It is possible that the DHSIsotopeID package, developed at Sandia National Laboratories, or another algorithm is superior to those provided by the ASP vendors. Existing isotope identification algorithms have been developed by a very small community of researchers. DNDO should encourage a broader effort to address these challenges, additionally engaging experts outside of nuclear detection to assist in evaluation and modification of the analysis algorithms. Algorithms for spectral and image analysis in complex systems are found in many fields, including astronomy, medical imaging, and atmospheric analysis, and expertise developed in those areas could be applied to all of the spectroscopic detectors, including the ASP system.

CONCLUSION

The committee has identified the merits and shortcomings of the work DNDO has done in testing and evaluating ASPs, and described how to address the shortcomings. Much of the committee’s advice applies regardless of DHS’ chosen path. For example, modeling and simulations should play a larger role in testing and evaluation whether DHS selects ASPs, handheld detectors, both, or another technology. For any acquisition decision, DHS should use figures of merit that reflect the performance of the systems accurately and are meaningful to the decision factors. Regarding cost-benefit analyses, the acquisition decision should be placed within the larger context of strategies and decisions. The analysis should be only as quantitative as the data can support, and conversely a reasoned justification may be more appropriate than a quantitative analysis in some cases. The set of alternatives under consideration should reflect the options the decision maker would want to know about, not just the options fully available at a particular time. It may be that the preferred option is within that broader set. Finally, DHS should be building a program that is structured around learning that leads to continuous improvement of systems to be deployed operationally in the field.

Thank you for the opportunity to provide input to your decisions.

The Committee on Advanced Spectroscopic Portals

| Robert C. Dynes, Chair | John M. Holmes |

| Richard Blahut | Karen Kafadar |

| Robert R. Borchers | C. Michael Lederer |

| Roger L. Hagengruber | Keith W. Marlow |

| Carl N. Henry | John W. Poston, Sr. |