IDR Team Summary 5

How can we extend the domain of adaptive optics and adaptive imaging to new applications, and how can we objectively compare adaptive and non-adaptive approaches to specific imaging problems?

CHALLENGE SUMMARY

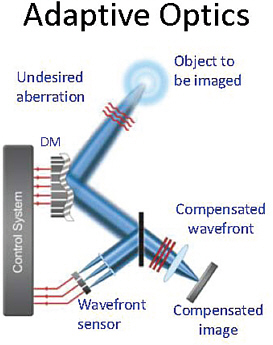

Adaptive optics has revolutionized ground-based optical astronomy, and it has found important applications in ophthalmology, medical ultrasound, optical communications, and other fields where it is necessary to correct for a phase-distorting medium in the propagation path. Most often, an adaptive optics system uses an auxiliary device such as a Shack-Hartmann wavefront sensor to characterize the instantaneous distortion, and it then uses a deformable mirror or other spatial phase modulator to correct the distortion in real time.
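The sense-then-correct loop described above can be illustrated with a toy simulation. This is only a sketch under stated assumptions: the wavefront is reduced to a short list of hypothetical modal coefficients, the sensor is noiseless, the distortion is static, and the controller is a simple integrator with an assumed gain. None of the names below come from the original text.

```python
import math

def rms(values):
    """Root-mean-square of a list of numbers."""
    return math.sqrt(sum(v * v for v in values) / len(values))

def run_ao_loop(aberration, gain=0.5, steps=10):
    """Toy integrator control loop on modal wavefront coefficients.

    aberration: hypothetical list of modal coefficients (e.g. in radians)
                describing a static distortion.
    Returns the residual RMS wavefront error measured at each step.
    """
    correction = [0.0] * len(aberration)  # stand-in for deformable-mirror commands
    history = []
    for _ in range(steps):
        # "Sensor": measure what is left after the mirror's current correction.
        residual = [a - c for a, c in zip(aberration, correction)]
        history.append(rms(residual))
        # "Controller": integrate a fraction of the residual into the command.
        correction = [c + gain * r for c, r in zip(correction, residual)]
    return history

# Made-up aberration coefficients, for illustration only.
history = run_ao_loop([1.0, -0.5, 0.25])
```

With a static aberration and a gain between 0 and 1, the residual shrinks by a factor of (1 - gain) at every iteration. A real system must also contend with sensor noise, actuator limits, and a distortion that changes while the loop is running.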

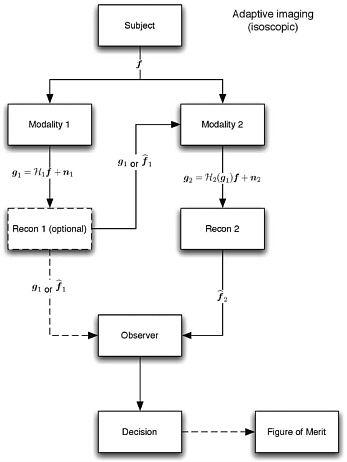

Adaptive imaging is a broader term than adaptive optics. It refers to any autonomous modification of the imaging system to improve its performance, not just correcting for phase distortions and not necessarily relying on auxiliary non-imaging devices such as wavefront sensors. One paradigm is to collect a preliminary image, perhaps with a short exposure time and relatively limited spatial resolution, then to use this information to determine the best hardware configuration and/or data-acquisition protocol for collecting a final image. Alternatively, the process can be repeated iteratively, and the optimum system for any current acquisition can be based on all previous acquisitions. In either case, the imaging information used to control the adaptation can be from the system being adapted or from some other imaging system, and it can even be from an entirely different imaging modality.

An example of the latter paradigm derives from a popular multimodality approach to medical imaging in which a functional imaging modality such as PET (positron emission tomography) is combined with an anatomical modality such as CT (computed tomography); normally the images are either superimposed or read together by the radiologist, but it is also possible to use the information from one of the modalities to control the data acquisition in the other modality (Clarkson et al., 2008; referenced under Reading).
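The scout-then-final paradigm described above can be sketched in a few lines. The example is deliberately simplified and rests on assumptions that are not in the original text: the object is a one-dimensional list of intensities, the preliminary image is a block average, and the adaptation rule (re-sample the brightest block at full resolution) is a hypothetical choice made for illustration.

```python
def block_means(scene, block):
    """Low-resolution scout: average the scene over consecutive blocks."""
    means = []
    for i in range(0, len(scene), block):
        chunk = scene[i:i + block]
        means.append(sum(chunk) / len(chunk))
    return means

def adaptive_acquire(scene, block=4):
    """Two-pass acquisition: coarse scout, then full resolution on one block.

    Returns (scout, start_index, fine_samples).
    """
    scout = block_means(scene, block)
    # Adaptation step: pick the block the scout suggests is most interesting.
    best = max(range(len(scout)), key=lambda k: scout[k])
    start = best * block
    fine = scene[start:start + block]
    return scout, start, fine

# Made-up 1-D "object"; in practice it is unknown and only measured.
scene = [0, 0, 1, 0, 0, 1, 0, 0, 2, 9, 7, 1, 0, 0, 0, 1]
scout, start, fine = adaptive_acquire(scene)
```

Here the two passes use eight measurements (four scout values and four fine samples) instead of sixteen. The iterative variant mentioned above would repeat the adaptation step, basing each choice on all earlier acquisitions.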

The goal of either adaptive optics or the more general adaptive imaging is to improve the quality of the resulting images. Most often the quality has been assessed either in terms of image sharpness or subjective visual impressions, but it is also possible to define image quality rigorously in terms of the scientific or medical information desired from the images, which is often referred to as the task of the imaging system. Typical tasks in medicine include detecting a tumor and estimating its change in size as a result of therapy. In astronomy, the task might be to distinguish a single star from a double star or to detect an exoplanet around a star. The quality of an imaging system, acquisition procedure, or image-processing method is then defined in terms of the performance of some observer on the chosen task, averaged over the images of many different subjects.
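For a detection task, one common way to summarize observer performance is the area under the receiver operating characteristic (ROC) curve. The sketch below estimates it with the Mann-Whitney (Wilcoxon) statistic. It assumes the observer has already reduced each image to a single scalar test statistic; the function name and that framing are illustrative choices, not something prescribed by the text.

```python
def auc(signal_present, signal_absent):
    """Area under the ROC curve via the Mann-Whitney (Wilcoxon) statistic.

    Each argument is a list of scalar test statistics produced by an observer
    on images with and without the signal. Ties count as half a win.
    """
    wins = 0.0
    for s in signal_present:
        for n in signal_absent:
            if s > n:
                wins += 1.0
            elif s == n:
                wins += 0.5
    return wins / (len(signal_present) * len(signal_absent))
```

A value of 0.5 corresponds to guessing and 1.0 to perfect separation of the two classes. Averaging over many subjects, as described above, amounts to computing this figure from test statistics pooled across the ensemble of images.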

The methodology of task-based assessment of image quality is well established in conventional, non-adaptive imaging (although some computational and modeling aspects will be explored under IDR Team Challenge 2), but very little has been done to date on applying the methodology to adaptive systems. Barrett et al. (2006) discuss task-based assessment in adaptive optics, and Barrett et al. (2008) treat the difficult question of how one even defines image quality, normally a statistical average over many subjects, in such a way that it can be optimized for a single subject. Much more research is needed on image quality assessment for all forms of adaptive imaging.

Key Questions

- What imaging problems are most in need of autonomous adaptation? What information, either from the images or from auxiliary sensors, is most likely to be useful for guiding the adaptation in each problem?
- For each of the problems considered under the first question, what are the possible modes of adaptation? That is, what system parameters can be altered in response to initial or ongoing image information?
- Again for each of the problems, how much time is available to analyze the data and implement the adaptation? What new algorithms and computational hardware might be needed?
- What new theoretical insights or mathematical or statistical models are needed to extend the methodology of task-based assessment of image quality to adaptive imaging?

Reading

Barrett HH and Myers KJ. Foundations of Image Science. John Wiley and Sons; Hoboken, NJ, 2004.

Barrett HH, Furenlid LR, Freed M, Hesterman JY, Kupinski MA, Clarkson E, and Whitaker MK. Adaptive SPECT. IEEE Trans Med Imag 2008;27:775-88. Abstract accessed online June 15, 2010.

Barrett HH, Myers KJ, Devaney N, and Dainty JC. Objective assessment of image quality: IV. Application to adaptive optics. J Opt Soc Am A 2006;23:3080-105. Abstract accessed online June 15, 2010.

Clarkson E, Kupinski MA, Barrett HH, and Furenlid L. A task-based approach to adaptive and multimodality imaging. Proc IEEE 2008;96:500-511. Abstract accessed online June 15, 2010.

Roddier F, Ed. Adaptive Optics in Astronomy. Cambridge University Press; Cambridge, U.K., 1999.

Tyson RK. Introduction to Adaptive Optics. SPIE Press; Bellingham, WA, 2000.

IDR TEAM MEMBERS

- Thomas Bifano, Boston University
- Liliana Borcea, Rice University
- Miriam Cohen, University of California, San Diego
- Jason W. Fleischer, Princeton University
- Craig S. Levin, Stanford University
- Teri W. Odom, Northwestern University
- Rafael Piestun, University of Colorado at Boulder
- Hongkai Zhao, University of California, Irvine
- Lori Pindar, University of Georgia

IDR TEAM SUMMARY

Lori Pindar, NAKFI Science Writing Scholar, University of Georgia

From the Heavens to Earth: Adaptive Optics and Adaptive Imaging

From the time Galileo first looked through his telescope to catch a glimpse of the night sky, the quest for how best to see past the visual limitations of the human eye gained a fervor that has yet to lose momentum. In recent history, adaptive optics helped revolutionize the field of astronomy as telescopes became more powerful and better able to image through Earth’s atmosphere to see the celestial bodies in the universe. The benefits of adaptive optics did not stop there: the technique has found practical application in fields such as medical ultrasound and visualization of the retina. Adaptive optics fits within the realm of adaptive imaging, a general term for techniques whose ultimate goal is to improve the quality of the pictures received by those who use the resulting images for research, analysis, and diagnosis.

Therein lies the beauty and challenge of imaging and optics when we seek to make them adaptive. Doing so introduces a set of issues ranging from technology and algorithms to how image quality should be defined for the problem at hand across multiple fields of study. However, imaging science is built on progression, and system performance is key in advancing the field, accruing accurate data, and ensuring that the best methods are being employed to benefit society as a whole.

A Picture Is Worth A Thousand … Pre-Detection Corrections

As IDR team 5 began its task, the first discussion revolved around creating a unified interdisciplinary understanding of what adaptive optics and adaptive imaging mean and what mechanisms and correction technologies are used to improve imaging. The final consensus was that adaptive optics specifically deals with the correction of a wave front in an optical system with feedback and iteration. For this type of imaging system, multiple sources of aberration degrade picture quality by reducing contrast, resolution, or brightness. However, by adjusting for these sources of aberration (or adapting the system), the distortions can be counteracted.

Adaptive imaging, while including adaptive optics, also extends beyond to the realm of optimization. Adaptive imaging, for this challenge’s purpose, can be thought of as improvements to an image, while adaptive optics is concerned with the actions taken to compensate for aberrations that affect the image in the initial capture.

New Applications

How can the domain of adaptive optics and adaptive imaging be extended to new applications? The IDR team immediately saw that the problem does not lie in how the field could be extended but in the limitations of adaptive optics and imaging themselves, as well as in the improvements that various fields can gain by using adaptive optics. Questions regarding increased data correction, measurement, and speed were introduced in order to better assess the applications to which adaptive optics and adaptive imaging are most amenable. For example, when light or other radiation is passed through a biological tissue sample, there needs to be a proper balance so that the tissue can be seen without destroying the integrity of the sample. Thus, certain limitations need to be taken into account depending on the field and on what the observer is looking for. Optimizing for the sharpest image is not always the answer, because the feature of interest could, ironically, be obscured by making the image better.

FIGURE 1. The Classic Application of Adaptive Optics: Correction of wavefronts in an optical system in which medium aberrations are compensated through feedback to a deformable mirror (DM). Image courtesy of T. Bifano.

To practically assess the problem, the team focused primarily on adaptive optics but did give some attention to adaptive imaging. Adaptive optics addresses aberrations that are often unavoidable, as in ground-based telescopes that must compensate for Earth’s atmosphere in order to see into outer space, or retinal imagers that must look through the cornea and lens of the eye. However, because these media and the aberrations they produce are known, the instruments can be set up to adjust continually and produce an accurate reading or measurement of the object of study.

FIGURE 2. Adaptive imaging is an active means of improving performance in an imaging system that includes adaptive optics and task-based control such as auto-focus. Image courtesy of H. H. Barrett.

The usual objective with adaptive optics is to reach the diffraction limit within a linear domain. An optical imaging system such as a microscope or a camera performs only as well as the imperfections in its lenses or alignment allow. For example, a 10 megapixel digital camera with autofocus will only provide images of 10 megapixel quality, which is appropriate if that is all you need but not if you strive for 12 megapixel images. Although the scale of adaptive optics is much larger than that of an ordinary digital camera, the example illuminates the limits that technology reaches when dealing with tools that continuously correct for imperfections within a system. In order to reach the diffraction limit, adaptive optics attempts to correct for such imperfections so that these systems can perform at their best, but this remains difficult to accomplish, unless one is dealing with a space-based telescope that does not have to contend with atmospheric aberrations. However, the team took a novel approach, extending adaptive optics to the nonlinear domain and considering methods of improving resolution, in order to create a step-change outcome in applying adaptive optics to new arenas.
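To give a sense of scale for the diffraction limit mentioned above, the sketch below compares two standard textbook estimates for a ground-based telescope: the Rayleigh criterion for a circular aperture (1.22 λ/D) and the approximate long-exposure seeing width set by the atmosphere (0.98 λ/r0, where r0 is the Fried parameter). The wavelength, aperture, and r0 values are hypothetical and chosen only for illustration.

```python
import math

ARCSEC_PER_RAD = 180.0 * 3600.0 / math.pi

def diffraction_limit_arcsec(wavelength_m, aperture_m):
    """Rayleigh criterion for a circular aperture: 1.22 * lambda / D."""
    return 1.22 * wavelength_m / aperture_m * ARCSEC_PER_RAD

def seeing_limit_arcsec(wavelength_m, fried_r0_m):
    """Approximate long-exposure seeing FWHM: 0.98 * lambda / r0."""
    return 0.98 * wavelength_m / fried_r0_m * ARCSEC_PER_RAD

# Hypothetical numbers: 500 nm light, 8 m aperture, r0 = 10 cm.
diff = diffraction_limit_arcsec(500e-9, 8.0)
seeing = seeing_limit_arcsec(500e-9, 0.10)
```

Under these assumed values the uncorrected atmosphere limits resolution to roughly one arcsecond, while the aperture alone would allow a few hundredths of an arcsecond. That gap is what an adaptive optics system on such a telescope is trying to close.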

Emerging Applications in Adaptive Optics and Adaptive Imaging

- Fixing (intentional) aberrations
  - Physical or algorithmic
  - Cubic phase plates; deliberately put in and then post process to remove so system becomes insensitive to defocus
- Adapting nonlinear systems
  - Volume imaging: In the instance that phase aberrations become amplitude aberrations, use adaptive optics as a diagnostic tool
  - Thick tissue (limits depth)
  - High scattering media
  - Patients
  - Neuroimaging
  - Lungs (use to compensate for motion)
- Employing multiscale adaptive optics
  - Molecular resolution at arbitrary depths in scattering media (current 0.5 mm)
- Getting better (static, background) noise properties
  - Put a deformable mirror in two-photon microscope

The team also addressed the question, “If more was known about the media, how would that change the data acquisition process?” This led to the following ideas:

- Applying adaptive optics to plasmonics to mold dynamically (nanoscale; adaptive imaging)
- Using beam forming as an analog to adaptive optics
  - Focus a bright beam at an object in an effort to make it sharper
- Improving acquisition through optics and other energy sources (acoustic, heat, etc.)
  - Angular dependent measurements, scattering kernel
  - Preliminary probing to get sparse information and then adapting accordingly for higher resolution
  - Foveated imaging. High resolution at point(s) of interest in a wide field image (by sculpting wave front to have tilt with spatial modulator, change focal length of optical elements with translating optics, deformable mirrors)
    - Practical for use in digital cameras, unmanned autonomous vehicles.
- Compressive adaptive imaging
  - Imaging with past measurements in order to increase data speed and create higher resolution images

As adaptive optics and adaptive imaging are extended to new systems, the conceptual list above can be assessed by researchers in various fields, possibly building new interdisciplinary relationships that bring mutual benefit in implementing and using improved adaptive imaging systems.

To Adapt or Not Adapt … Is That the Question?

The second part of the task dealt with how to objectively compare adaptive and non-adaptive approaches to specific imaging problems. To tackle this problem, the group asked how one can compare the solutions produced by adaptive and non-adaptive imaging, noting that even focusing a microscope is adaptive optics on a certain level, despite there being no multi-step system of inputs improving the image. The second question therefore spurred further questions.

Comparing Adaptive and Non-Adaptive Approaches: Further Questions

- Are these fundamentally different imaging systems?
- Do these systems actually require different metrics?
- Does the specific imaging problem depend on the task?
- How would you choose to manipulate data?
- Issue of task-based metric and adaptive systems.
  - Receiver observer based on ensemble of objects but the system becomes completely different under a different operator.
- Are there two different ways of adapting to compare the result?
- Inverse problem; make the problem convex (optimize an intermediate process, but only when prior information is present).
- Issue of creating a phantom that is appropriate to task (e.g., resolution, noise, or signal)
- Correlation between feedback system and task; control metric and imaging metric: are they different? How does the control loop relate to the task? Can a surrogate figure of merit (FOM) be calculated on the basis of individual images? If so, can it be correlated with task performance, and can it be used for on-the-fly adaptive imaging?
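As one concrete instance of a surrogate figure of merit that can be computed from a single image, the sketch below uses a normalized sum of squared intensities, a classic image-sharpness measure. Choosing this particular metric is an assumption made for illustration; whether any such control metric tracks task performance is exactly the open question raised in the list above.

```python
def sharpness(image):
    """Normalized sum of squared intensities: sum(I^2) / (sum I)^2.

    image: list of rows of non-negative pixel values (not all zero).
    The value is larger when the same total intensity is concentrated
    in fewer pixels.
    """
    flat = [p for row in image for p in row]
    total = sum(flat)
    return sum(p * p for p in flat) / (total * total)

# Two made-up 3x3 images with the same total intensity.
focused = [[0, 0, 0], [0, 9, 0], [0, 0, 0]]
blurred = [[1, 1, 1], [1, 1, 1], [1, 1, 1]]
```

Because the metric needs no knowledge of the object ensemble, a control loop could evaluate it on the fly. Relating it to an ensemble-averaged task measure would still require a separate study.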

Key Challenges of Domain Extension with Adaptive Optics and Adaptive Imaging

Taking into consideration the ideas for new applications and the questions that arose from the discussion, the team decided to address two key challenges that pose a foreseeable impediment to implementation of adaptive systems. The first is the problem of using adaptive optics to image in and/or through a 3-D volume. Turbulence is one aspect to contend with, but add a long distance or wider field of view and the problem becomes more layered and difficult to assess. Also, imaging through thicker samples, in biological imaging for example, will induce aberrations that are difficult to contend with because the amount of scatter will be increased. Therefore an inverse problem is introduced in scenarios where feedback may not be readily available to adapt (correct) image capture. For adaptive imaging, the problem involves imaging in and through highly scattering media. This problem is especially relevant in medical imaging through turbid media such as bodily tissue.

This further brings about computational issues such as sensor speeds and how algorithms based on linear models should be adjusted to image in volumes. Also, in a task-based sense, there needs to be an adaptive step to improve figures of merit so that computations are accurate and involve all aspects of alterations and aberrations within a system.

Future Steps: A Glimpse into the Periphery

In order to create a method of implementation to extend adaptive optics and adaptive imaging to new spheres, the team saw the need to begin by analyzing several key components of the adaptive system so that mutual benefit in interdisciplinary fields could be gained for future imaging solutions.

These ideas include solving the inverse problem as well as creating an updated model for adaptive imaging to correct for aberrations or changes within a system. Also, there is benefit in extracting information from 3-D measurements to better know what exists (and thus, what affects the measurement) between the point of interest and the imaging technology. Media also need to be approached differently depending on how turbulent or turbid they are, and physical system corrections need to be implemented. Such implementations are in the future because they are application-dependent, and how to actually correct the physical system is a question in and of itself.

Although improving the quality of images and their systems is an important goal, future tools, systems, algorithms, and other components of adaptive imaging and adaptive optics are yet to be designed and implemented. However, the team envisioned a future that can be brought from the peripheral cusp of ingenuity and into focus for future generations of imaging science applications.