The National Center for Science and Engineering Statistics (NCSES), at the U.S. National Science Foundation, is 1 of 14 major statistical agencies1 in the federal government, of which at least 5 collect relevant information on science, technology, and innovation activities in the United States and abroad. The America COMPETES Reauthorization Act of 2010 (H.R. 5116) expanded and codified NCSES’s role as a U.S. federal statistical agency.2 Important aspects of the agency’s mandate include collecting, acquiring, analyzing, reporting, and disseminating data on (a) research and development (R&D) trends, (b) U.S. competitiveness in science, technology, and research and development, and (c) the condition and progress of U.S. science, technology, engineering, and mathematics (STEM) education. NCSES is also explicitly charged with supporting research on NCSES data and on methodologies related to data collection, analysis, and dissemination. These aspects of the new NCSES mandate are the most relevant for this study.

PANEL CHARGE

In response to a request from NCSES, the Committee on National Statistics, in collaboration with the Board on Science, Technology, and Economic Policy, convened the Panel on Developing Science, Technology, and Innovation Indicators for the Future to examine the status of NCSES’s science, technology, and innovation (STI) indicators. The detailed statement of task to the panel is in Box 1-1.

____________

1There are 14 members of the Office of Management and Budget-chaired Interagency Council on Statistical Policy.

2The act also gave the agency its new name; it had been the Science Resources Statistics Division.

BOX 1-1

Statement of Task

An ad hoc panel, convened under the Committee on National Statistics, in collaboration with the Board on Science, Technology, and Economic Policy, proposes to conduct a study of the status of the science, technology, and innovation indicators that are currently developed and published by the National Science Foundation’s National Center for Science and Engineering Statistics. Specifically, the panel will:

- Assess and provide recommendations regarding the need for revised, refocused, and newly developed indicators designed to better reflect fundamental and rapid changes that are reshaping global science, technology and innovation systems.

- Address indicators development by NCSES in its role as a U.S. federal statistical agency charged with providing balanced, policy relevant but policy-neutral information to the President, federal executive agencies, the National Science Board, the Congress, and the public.

- Assess the utility of STI indicators currently used or under development in the United States and by other governments and international organizations.

- Develop a priority ordering for refining, making more internationally comparable, or developing a set of new STI indicators on which NCSES should focus, along with a discussion of the rationale for the assigned priorities.

- Determine the international scope of STI indicators and the need for developing new indicators that measure developments in innovative activities in the United States and abroad.

- Offer foresight on the types of data, metrics and indicators that will be particularly influential in evidentiary policy decision-making for years to come. The forward-looking aspect of this study is paramount.

- Produce an interim report at the end of the first year of the study indicating its approach to reviewing the needs and priorities for STI indicators and a final report at the end of the study with conclusions and recommendations.

Understanding the interaction between the demand side and the supply side of the indicators enterprise is the major focus of this study. On the demand side, NCSES wants the panel’s assessment of the types of data, metrics, and indicators that will be particularly influential in evidentiary policy and decision making for the long term. NCSES’s indicators program serves researchers, administrators, and policy makers around the world who want high-quality, accessible, and timely observations about the global STI system. It is important that the resulting indicators and underlying conceptual framework have practical resonance with a broad base of the users of NCSES’s indicators in the near, medium, and long term.

On the supply side, NCSES charged the panel to recommend revised, refocused, and new indicators that reflect the fundamental and rapid changes in the global STI system. Although a clear focus of the panel is on recent efforts by NCSES to collect and disseminate measures of innovation in the United States and abroad, the panel is also assessing the need for revising existing indicators on research and development and on human capital. Understanding the network of inputs that currently feed, and should in the future feed, into the indicators production function is within the scope of the study; these inputs include data from NCSES surveys, other federal agencies, international organizations, and the private sector. However, NCSES did not ask the panel to recommend new survey designs or data taxonomies, nor to develop theoretical foundations of measurement for indicators that are derived from web sources or administrative records.3 The panel is also not focusing on NCSES’s dissemination practices, since a recent National Research Council (2011) report has already made recommendations to NCSES on this subject.

ORGANIZING FRAMEWORK

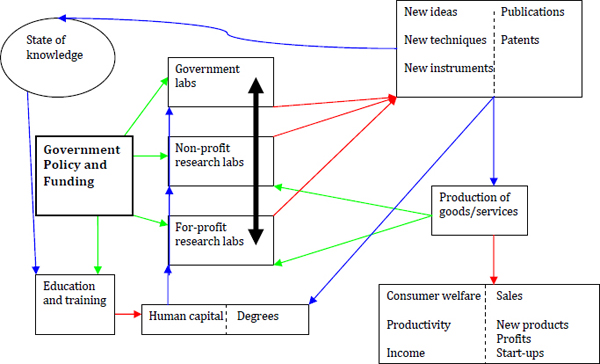

There is an extensive literature on measuring STI activities that informs data gathering and statistical analysis at NCSES. Models of the ecological system of scientific discovery and technological innovation have common themes, relating inputs (state of knowledge, government policy and funding, and education and training) to outputs (new ideas, new techniques, and new instruments as revealed by publications, patents, and new goods and services) and to outcomes (social well-being, such as spillovers to health, environmental, security, and other indicators of economic and social progress). Included in this framework are institutional elements that affect the functioning of the system, including activities at government, nonprofit, and for-profit research laboratories.

Evidence-based science and innovation policies at various geographic scales depend on quantitative measures and qualitative descriptions of the nodes in this model and of the linkages between them. Figure 1-1 illustrates Jaffe’s (2011) conceptualization of this framework. In his paper, Jaffe points out that spillover effects and linkages are important but very difficult to measure.

____________

3It should be noted that Science and Engineering Indicators 2010 (and the new 2012 volume) has an appendix entitled “Methodology and Statistics,” which describes data sources and potential biases and errors that are inherent in the data collected. The Organisation for Economic Co-operation and Development (2005, pp. 22-23), in the “Oslo Manual,” also has an extensive published methodology on the development of indicators. And a previous National Research Council study (2005, pp. 144-151) has a chapter on sampling and measurement errors regarding surveys and the use of federal administrative records. The economics literature is also replete with articles examining the existence of and correction for measurement errors that occur owing to survey methods (e.g., see Griliches, 1974, 1986; Adams and Griliches, 1996). Further study of measurement theory as it relates to STI indicators is beyond the scope of this study. However, as detailed below, we recommend that NCSES fund research to explore biases that are introduced into data that are gathered using web tools or administrative records.

Tassey (2007) and Gallaher and Petrusa (2006) have variants of the STI systems model. They distinguish proprietary technologies from generic technologies that are public goods. A distinguishing feature of the Tassey-Gallaher-Petrusa conceptual framework is that it includes nodes for entrepreneurial, market development, and risk reduction activities within companies. In the past decade, researchers have focused anew on linking investments in innovation to total factor productivity. The growth accounting framework is used for this purpose (see Corrado, Hulten, and Sichel [2005]; Haskel and Wallis [2009]). Researchers and practitioners alike are experimenting with methods to measure the value of intangible assets accurately.

FIGURE 1-1 Schematic overview of innovation system.

SOURCE: Jaffe (2011, p. 194). Permission to reproduce granted by Stanford University Press.

In summary, the theoretical foundations for STI indicators are myriad. Yet, there is agreement on broad categories for which measurement is needed to understand capacity and trends in human capital, R&D, and innovation in the United States, and how the United States compares to other nations in each of these areas. For decades, NCSES has published indicators on human capital and R&D. In 2010 the agency began to publish statistics on innovation as well. These three broad categories guide the panel’s investigation.

WORK TO DATE AND PLANNED

The panel’s findings and recommendations are informed by experts on data extraction, tools development, statistical measurement, and public policy. Commissioned studies will also allow the panel to deliver to NCSES feasible solutions to the issues raised by our charge. To date, the panel has held three meetings, between April and September 2011, and three more meetings are scheduled during the first five months of 2012. Although this is an interim report and the panel is only midway through the deliberative process, we are able to offer some recommendations for near-term activities and indications as to how the panel will proceed on several fronts.

At the outset of the study, the panel members determined that it was important to first ascertain the sponsor’s perspective on specific areas of concern for improving data collection and development of STI statistics and on perceived opportunities for new products and partnerships. It was also important to begin the study by hearing from users of NCSES datasets and publications about what they thought were needs and priorities for STI indicators in the foreseeable future. This was accomplished at our first open meeting, and the members distinguished between near-term and long-term products and processes that NCSES can develop, given resource constraints. It was determined at that time that resource efficiency considerations would be a factor as the panel prioritizes recommendations to NCSES for the development and dissemination of new STI indicators. Given the long list of recommendations for new or revised indicators gleaned from that meeting—as well as new methods that could be used to generate data for indicators—the panel decided to prioritize which tasks could be implemented in the near term given existing resources and which items should be the subject of future research and development.

Since one of the primary goals of this study is to determine how to improve international comparability of STI indicators in the United States and abroad, our second meeting was a workshop of researchers and practitioners from around the world. The workshop was held on July 11 and 12, 2011, in Washington, DC: see Appendix A for the agenda and list of participants. Participants discussed: (1) metrics that have been shown to track changes in national economic growth, productivity and other indicators of social development; (2) frameworks for gathering data on academic inputs to research, development and translation processes toward commercialization of new scientific outputs, with specific subnational outlooks; and (3) next-generation methods for gathering and disseminating data that give snapshot views of scientific research and innovation in sectors, such as biotechnology and information and communications technology (ICT). Presentations and networked discussions focused attention on the policy relevance of redesigned or new indicators. It was evident that there is a worldwide desire for policy-relevant measures of science, technology, and, especially, innovation. However, it was clear that measures of innovation are particularly difficult to obtain directly or to calibrate. There was also much enthusiasm for NCSES and other international statistical organizations to develop STI indicators at different geographical scales.

At its third meeting, the panel focused on establishing the conceptual framework for the metrics and indicators that NCSES should be disseminating; gathering information on data origination to establish which data linkages among federal statistical agencies could improve and expand NCSES’s STI indicators offerings; and deliberating on its findings and recommendations. During the open part of the meeting, experts on industrial organization and economic growth theory presented conceptual frameworks that should guide the data collection processes for STI indicators, with cautions about measurement biases and speculations regarding extensions to the framework to allow measurement of intangible assets and innovation diffusion. Staff from statistical agencies also presented opportunities for linking data among agencies, which could be used to augment existing statistics on innovation activities and human capital in science, technology, engineering, and mathematics (STEM) occupations. During the closed portion of the meeting, the panel agreed on short-term recommendations to NCSES and enumerated items that require further investigation. This interim report conveys the conclusions reached during those discussions.

In addition to information gathered at meetings and the workshop, the panel also commissioned three papers that will inform its final report. In one paper, Andrew Reamer will present foundations for developing subnational STI indicators, primarily focusing on data sources that could augment NCSES’s offerings in that area. Reamer held a Kauffman-sponsored roundtable discussion in June 2011, which was preceded by a short questionnaire in which roundtable participants gave their opinions and insights on data needs regarding R&D, innovation, and the STEM workforce, at the national and subnational levels. Reamer’s paper will include a summary of his findings from the responses to that questionnaire.

The second paper, by Bronwyn Hall and Adam Jaffe, will provide a conceptual framework for STI indicators’ development. It is important for the panel to be informed about what elements are expected to be included in the canonical set of indicators, what is already available, and what needs to be developed. This paper will add a new modeling framework that is more relevant for service-sector R&D. The paper will also map the developments in the European Union regarding measurement of innovation to activities at NCSES.

The third paper, by Sumiye (Sue) Okubo, is on data linkages among federal statistical agencies that can inform measures of international investments in R&D and international trade of R&D services. Okubo’s paper will include an empirical analysis of payments and receipts for R&D services in the United States and abroad, comparing data from the Bureau of Economic Analysis and the Business Research and Development and Innovation Survey.

In the coming months, the panel will carry out the following tasks, with the help of commissioned papers and consultants, as well as members’ own and staff work:

- Conduct gap analyses to determine the strengths, weaknesses, coverage, utility, and timeliness of STI indicators, with a view to developing a set of key national STI indicators. We will also consider the relative strengths and weaknesses of indicators produced by NCSES in comparison with those published by OECD, Eurostat, the United Nations Educational, Scientific and Cultural Organization, the European Union, and other international organizations. At the subnational level, we will also consider the relative strengths and weaknesses of data from private and nonprofit institutions. We will map NCSES’s science and engineering indicators to our policy questions and to indicators that are published by other institutions domestically and abroad. We will identify the gaps where work needs to be done, identify the overlaps where resources might be saved, and prioritize the activities that NCSES should undertake to produce new STI indicators, based on feasibility and the relative importance of the policy issue.

- Conduct performance tests of key STI indicators, including a synthesis of existing research where STI data and indicators are used in empirical analysis. The results of the tests should enable us to determine how reliably certain heavily used indicators track what we expect them to track.

- Investigate further ways to improve measures of such items as open innovation, technological diffusion, values of and expenditures on intangible assets, trade in R&D services, firms’ age and size, length of firms’ lives, entrepreneurial activities, special nuances of the service sector that differ from the manufacturing sector, and STI talent that extends beyond traditional science and engineering fields.

- Explore new data developments at the United States Patent and Trademark Office and international governing bodies for patents, copyrights and trademarks, and make recommendations to NCSES on readily available and reliable data on these indicators of invention and potential innovation.

- Investigate further the use of microdata to develop STI statistics, including data retrieved using web tools and administrative records. The purpose of this task is to discover other occurrences of competitions and prizes that are used in the federal context for data development and to suggest to NCSES the parameters that are necessary for such a competition to be successful. We will also further investigate the methodological issues that could limit the utility of indicators resulting from non-survey methods.

- Investigate further the reliability and opportunity costs of NCSES’s developing new subnational STI indicators. Since NCSES already produces science and engineering indicators at the state level, we will be looking into the production of STI indicators for finer geographic scales, including metropolitan areas. We will also consider whether data from various subnational scales can be aggregated to national levels, although this is typically fraught with problems.

- Investigate further the potential for and complexities of data linking among U.S. statistical agencies and international organizations that have STI data and statistics. The panel expects to offer specific recommendations on how to use such collaborations to produce new and better STI indicators.

- Explore the role of institutions and regulations that inform STI activities. We will also consider what quantitative or qualitative information would inform the public on the role of culture in the innovation system and the public perception of science, technology, and innovation in the U.S. and abroad. The National Science Board’s Science and Engineering Indicators biennial volume contains a chapter on public attitudes and understanding of science and technology (see National Science Board, 2010).4

- Explore further the possibility of a coordination role for NCSES on STI data and statistics. An interagency council or working group on STI statistics could be created to identify potential synergies among datasets at federal statistical agencies. Any coordination activity of the council for NCSES would only relate to STI, and not to other collections pertaining to economic, demographic, or other statistics that are gathered and disseminated at the federal level.

The panel expects to offer recommendations that require longer lead times for data and tool development than those in this report, as well as recommendations on coordination with specific divisions of other statistical agencies in the United States and abroad. We will address the net value added of proposed indicators, and we expect to specify which data activities and indicators can be eliminated by NCSES. The panel’s final report is scheduled to be released in December 2012.

____________

4The National Science Board released the 2012 issue of Science and Engineering Indicators on January 18, 2012. Since that edition was not available to the panel for this interim report, we cite the 2010 version here. In the final report we will cite the 2012 edition.