2 Framework for Examination of Assessment Processes

As a prerequisite for effective assessment, the context within which an assessment of an R&D organization is conducted will be clearly elucidated before the assessment strategy is developed and applied. There is no single formula that works for all organizations, and there is no single way to compare one organization to another.1,2 Assessments can have many purposes, and it is crucial to identify clearly at the outset the purposes of a particular assessment. A self-assessment can be considerably different from one commissioned by a stakeholder and from one performed by an external team of assessors, even though the basic approaches may be similar. Different types of R&D organizations require different approaches to assessment, although there are common basic paradigms to guide the process. Effective assessments will also reflect cognizance of the time horizon identified for the various characteristics being evaluated.

Metrics and quantitative analyses can be valuable tools in assessments of the technical quality and preparedness of an organization, whereas qualitative findings, informed by quantitative metrics, are more valuable for organizational decision makers.3

Elements of an R&D Organization

The context of an assessment includes an articulation of the following elements of the organization: vision, mission, strategic plan, operations plan, and resources.

The vision statement of an R&D organization defines what the organization wants to be and to become and/or the impact that it seeks to have on its stakeholders from a long-term perspective. The vision statement may be inspirational or even passionate. It is usually influenced by the organization’s interpretation of its capabilities for impacting its stakeholders, customers, clients, and funding sources.

A mission statement defines the purpose of the R&D organization. It may serve as a guide for action by the organization, a definition of its goals, a high-level statement of the path by which the organization may reach its goals, and a decision-making guide. A mission statement may be influenced by the organization’s principal stakeholders, customers, clients, and resources. It may also define how an organization provides value to its principal stakeholders, customers, and clients.

An R&D organization’s strategic plan outlines what the organization does, for whom the organization executes its work, and how the organization plans to excel in executing its work. A strategic plan for any organization is best viewed as a dynamic process defined within a time frame typically identified as 3 to 5 to 10 years. A meaningful strategic plan is consistent with the mission statement of the organization. An effective strategic plan defines in detail the path by which the organization will attain its goals.

____________________________

1 J. Turner, 2010. Best Practices in Merit Review. Association of Public and Land-grant Universities, Washington, D.C.

2 M. Kennerley and A. Neely, 2002. A framework of the factors affecting the evolution of performance measurement systems. International Journal of Operations and Production Management 22 (11):1222-1245.

3 J. Stephen Rottler, 2012. “Assessing Sandia Research.” Presentation at the National Research Council’s Workshop on Best Practices in Assessment of Research and Development Organizations, March 19, 2012, Washington, D.C.

An operations plan includes the specific functions required to execute the strategic plan. The operations plan may focus on administrative, managerial, and production processes within the organization that enhance efficiency and effectiveness. An effective operations plan responds to gaps existing between resources and needs, including personnel and facilities. An effective operations plan is situation-sensitive. The operations plan is linked to the business of the parent organization.

The resources of an R&D organization include its personnel, facilities, finances, real estate, and the distribution and interrelationship of these elements. Resources drive the operations plan that binds the strategic plan that enables the mission within the vision.

Appendix B presents a summary of the presentation by Dr. John Sommerer, Head, Space Sector, and Johns Hopkins University Gilman Scholar, Johns Hopkins University Applied Physics Laboratory, delivered March 19, 2012, at the National Research Council’s Workshop on Best Practices in Assessment of Research and Development Organizations.4 The presentation highlighted the importance of alignment between an organization’s vision and its people, especially in an R&D laboratory.

So that an assessment can be appropriately tailored to achieve its purpose for a given organization, it is important to consider the factors relating to different types of R&D organizations, detailed below.

Types of Organization

In setting the context for an assessment, it is helpful to identify four types of R&D organizations (detailed discussion of the different forms of impact expected of these organizational types is presented in Chapter 5). Although no organization is a perfect match with a single type (e.g., federally funded research and development centers [FFRDCs]), the following general statements can be made:

1. Mission-specific organizations (e.g., National Institute of Standards and Technology [NIST], Army Research Laboratory [ARL]): The mission is clearly defined by the stakeholder, who is often responsible for commissioning external assessments that supplement the organization’s own self-assessment practices.

2. Industrial organizations (e.g., IBM, Microsoft Research, Dow Chemical Company), research institutes, and contract organizations: The mission is clearly defined by the organization, and assessment is typically internal.

3. Product-driven organizations (e.g., national laboratories such as Sandia National Laboratories and Los Alamos National Laboratory): The key missions are defined by a stakeholder, with considerable discretion available to the organization’s management to define or seek new mission space. These R&D organizations are typically subject to all types of assessments.

4. University research organizations: Basic research is typically part of the core mission; assessment is typically self-commissioned, conducted by external peers and focused on the longer term.

____________________________

4 National Research Council, 2012. Best Practices in Assessment of Research and Development Organizations: Summary of a Workshop. The National Academies Press, Washington, D.C.

Types of Assessment

Assessments can be grouped in three general categories:

1. Self-assessment typically looks at the effectiveness of the organization’s effort to improve the quality of its workforce and facilities, its preparedness to respond to current and future mission needs, and its impact, as measured, for example, by return on investment.

2. Organization-commissioned external assessment has its context set by the organization; it usually looks at the quality of the work being performed and the strategies for maintaining and developing new core capabilities, as well as the impact of the organization on the broader community.

3. Independent external assessments are commissioned by and report to a stakeholder. The stakeholder sets the context, and frequently the assessment is focused on the impact and the return on investment.

TIMESCALES AND MULTIDIMENSIONALITY OF R&D ORGANIZATIONS

Research and development constitute a multidimensional process. An effective assessment considers all of the dimensions described in this section so that it is complete, valid, meaningful, and useful.

The timescale for the assessment may be short (1 to 3 years), medium (3 to 7 years), or long (7 years or longer). Typically, assessments involving shorter timescales focus more on the research process than on the research results. The nature of an R&D organization may reflect the sector within which it operates: mission-specific (generally government), industrial, national laboratories, or academic. The R&D performed in these different sectors may be done for quite different reasons, and assessment criteria may be different for these four settings.

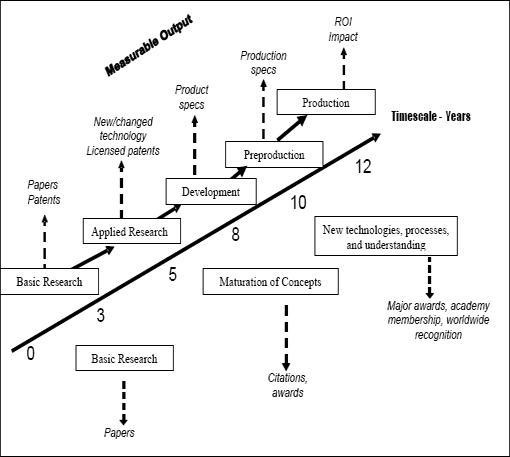

The stages of R&D may be characterized as basic research, applied research, advanced technology development, preproduction, and, at times, production and product fielding. As illustrated in Figure 2-1, different considerations may apply to the assessment of different stages of R&D. Characteristics of the organization include quality, relevance, productivity, and impact as well as the characteristics of its management.

Assessment measures and criteria may be qualitative, quantitative, or anecdotal. Although the traditional demand has been for quantitative metrics for assessment, many characteristics of an organization are not well suited to yielding countable indicators. An assessment may be conducted at the levels of project/task, program, organizational element, laboratory, or overall organization. Collaborations within the organization and extramural collaborations may also be considered. The audience for the assessment findings may include

FIGURE 2-1 Simplified representation of the flow of the research and development (R&D) process. Applied activities are presented above the timescale, and basic activities are presented below the timescale. At different times during the R&D process, different assessment methods and measures may be applied. Notes: (a) In practice, the R&D process is not linear, but, rather, involves numerous feedback loops; (b) in practice, the R&D process is continuous; (c) distinctions between basic and applied activities are often blurred; and (d) timescales may vary considerably across different types of organization.

customers, stakeholders, users of an organization’s products or services, internal management, the scientific and technical community, the general society, or other interested groups.

Figure 2-1 is a graphic representation of a simplified (linear) model of the R&D process, with applied activities shown above the timescale and basic activities shown below it (in practice, feedback loops connect the stages in the process). Figure 2-1 illustrates (without proposing an extensive set of metrics) that at each stage in the R&D process, different metrics can be considered for an assessment. The timescale indicated depends on the technical area considered.

For all timescales, the timeline for innovation spans a continuum of R&D projects, from conception to implementation and finally to sunset. Human and capital resource requirements, and methods for the assignment of such resources, will likely vary greatly over this timeline. Similarly, the time lag from investment to return will vary as a function of program complexity, levels of funding and commitment, and other factors. An effective assessment of a given organization includes exploration of programs at varying stages within their life cycles, because the processes and the outcomes of those processes at each stage are likely to differ. It is important that the efficacy of program selection at the front end of the R&D process be considered on the basis of long-term outcomes, but also that the efficacy of program de-selection be considered, which appropriately occurs at the early phase of projects showing little likelihood of success. Learning also comes from the commercialization of previous research. It is important to cover this landscape thoroughly, because needs and processes will evolve, along with the manner of assessment.5 An effective assessment examines all elements of the organization, with time-appropriate quantitative and qualitative metrics and criteria, in terms of whether they are being integrated effectively. For each timescale, appropriate guidelines will be defined in order to assess the quality, impact, and management of the activities of the organization. This includes an overall evaluation of the research portfolio of the organization.

The assessment of the human and capital resources, workforce development, and quality and relevance of the portfolio will vary for the different timescales defined above.

Short-term assessments (nominally 1-3 years) include factors such as the quality of personnel, customer interactions, and cross-organizational interactions. Quantitative metrics for this timescale include publications, patents, recruiting quality, retention, awards, extramural funding, and partnerships.

Midterm assessments (nominally 3-7 years) include personnel, new product introductions, publications, patents, citations, awards, funded programs, and customer satisfaction. Questions to be addressed include program implementation, project follow-through, financial metrics, and personnel growth and advancement.

Long-term assessments (nominally longer than 7 years) require a retrospective review of the major successes and failures of the organization. They include, where possible, the impact of projects that were launched at least a decade earlier. Questions to be addressed for long-term evaluations include whether programs transitioned from the organization are considered best in class, whether programs have met their financial commitments, whether assets have become or have led to the creation of a self-sustaining organization, and whether client partnerships are maintained.

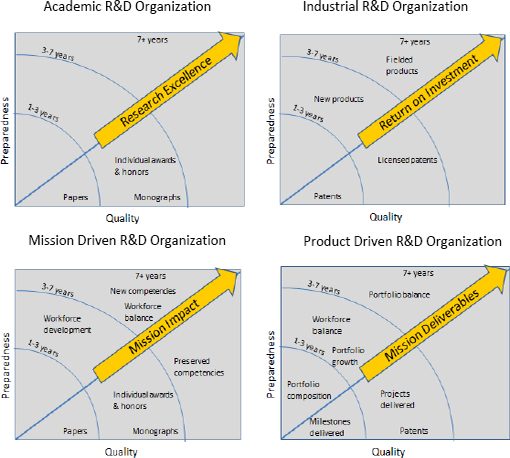

A comprehensive assessment addresses three key factors: (1) management practices (including the maintaining of preparedness to address future challenges), (2) technical quality of the R&D work and products, and (3) impact of the R&D and its products. Figure 2-2 illustrates sample assessment indicators for interactions between technical quality and management (referred to as “preparedness” in Figure 2-2 based on the management function of keeping the organization prepared to respond to changing demands) for four organizational types (academic, mission-driven, industrial, and product-driven). Preparedness is defined as the actions taken by the organization to identify and maintain the resources and strategies necessary to respond flexibly to future challenges.

____________________________

5 U.S. Department of Health and Human Services Office of the Inspector General, 2012. FY 2012 Online Performance Appendix. U.S. Department of Health and Human Services, Washington, D.C.

FIGURE 2-2 Quality and management preparedness are the key axes that drive an assessment of impact, relevance, or return on investment (impact is always dependent on context). Notes: (a) Metrics, outputs, and outcomes included in the figure are illustrative, not intended as an exhaustive set; (b) the time frames identified for outputs and outcomes are approximate.

A research institution may be examined in three phases of its R&D: (1) in the planning stage, (2) during ongoing research, and (3) when the work is completed. In the planning stage, prior to the initiation of research, an organization develops goals, selects strategies and tactics intended to reach those goals, identifies personnel and/or workforce development pathways, and lists metrics for evaluating progress. Planning is done in the context of the organization’s mission and may entail everything from broad capability development to specific, targeted development efforts. Appendix D provides an example of the effective application of peer advice during the planning phase of a research project at the Army Research Laboratory (ARL). The example describes how a set of experts selected from a pool of individuals familiar with the ARL contributed to effective refinement of a request for proposal for two significant ARL programs involving consortia that would examine multiscale modeling of materials relevant to Army R&D.

Ongoing research is the most common subject of review by processes external to the performing organization. The technical content of the R&D being performed is usually the subject of most attention, but an effective assessment also considers all of the context elements identified in the planning stage framework.

Retrospective analyses of programs may be made at various times following the completion of research and/or development activities. Many of the same metrics used to evaluate work in progress are useful in examining an R&D program after its completion. Publications and patent awards to individuals and groups may be indicative of quality, particularly shortly after completion. Evidence of technology transition within the larger research, development, testing and evaluation, and fielding or commercialization system is another metric, although documentation of this metric is more readily obtained after time has passed. Reviews of economic impact and/or increased capability in a military system are examples of retrospective analysis that requires formal study by professionals, sometimes many years following the technical work of the organization. Such studies may be expensive and are most likely to be done on a case-by-case basis that tends to emphasize successes. Nevertheless, such anecdotal reviews after sufficient time has elapsed continue to represent the best evidence for later judgments of the effectiveness of the program.

Findings from assessments from each of the phases provide information to organizational decision makers, who are responsible for maintaining the preparedness of the organization to identify and respond to ongoing and future challenges.

After assessment findings are communicated to the organization, it is important to validate those findings—to assess the assessment itself. Appendix C provides a discussion of considerations pertaining to the validation of assessments, including discussion of various types of validity and reliability of assessment findings, factors relating to the efficiency of an assessment, and evaluation of the impacts of an assessment.

WHAT CONSTITUTES AN EFFECTIVE R&D ORGANIZATION?

Any assessment implicitly assumes a set of attributes that characterize an effective R&D organization. The attributes listed below were suggested in a report to the Secretary of Defense as part of the Base Realignment and Closure decision-making process pertaining to the Defense laboratories in 1991.6 The list does not address the accomplishments of the past or offer a forecast of potential results; however, a positive assessment against these attributes was then considered the best possible indicator of the eventual impact and relevance of the organization and the R&D that it performed.

• A clear and substantive mission,

• A critical mass of assigned work,

• A highly competent and dedicated workforce,

• An inspired, empowered, highly qualified leadership,

• State-of-the-art facilities and equipment,

• An effective two-way partnership with customers,

• A strong foundation in research,

• Management authority and flexibility, and

• A strong linkage to universities, industry, research institutes, and government organizations.

____________________________

6 Federal Advisory Commission on Consolidation and Conversion of Defense Research and Development Laboratories, 1991. Report to the Secretary of Defense. U.S. Department of Defense, Washington, D.C.

Attributes of effective organizations are also proposed in a National Research Council report assessing Department of Defense basic research,7 the NIST Baldrige criteria for performance excellence,8 and reports by Jordan and Binkley that suggest numerous attributes of effective R&D organizations.9,10

SUMMARY OF ASSESSMENT FRAMEWORK CONSIDERATIONS

An effective assessment will include an evaluation of an organization’s management, the quality of its research and development activities, the impact of the effort, and associated interrelationships. Both qualitative and quantitative measures are required to assess management, quality, and impact. The context of the assessment is critically important in designing and carrying out an assessment and will differ depending on the mission and type of organization. Assessments are generally self-assessments, commissioned external assessments requested by the organizations themselves, or independent external assessments commissioned by and reported to stakeholders. Effective assessment of a research organization requires measurements over multiple timescales.

____________________________

7 National Research Council, 2005. Assessment of Department of Defense Basic Research. The National Academies Press, Washington, D.C.

8 National Institute of Standards and Technology, 2011. NIST Baldrige Program: 2011–2012 Criteria for Performance Excellence. National Institute of Standards and Technology, Gaithersburg, Md.

9 G. Jordan and J. Binkley, 1999. Attributes of a Research Environment That Contribute to Excellent Research and Development. Sandia Report SAND99-8519. Sandia National Laboratories, Albuquerque, N. Mex.

10 G. Jordan, L. Streit, and J. Binkley, 2003. Assessing and improving the effectiveness of national research laboratories. IEEE Transactions on Engineering Management 50(2).