4

Prioritization, Option Development, and Decision Formulation

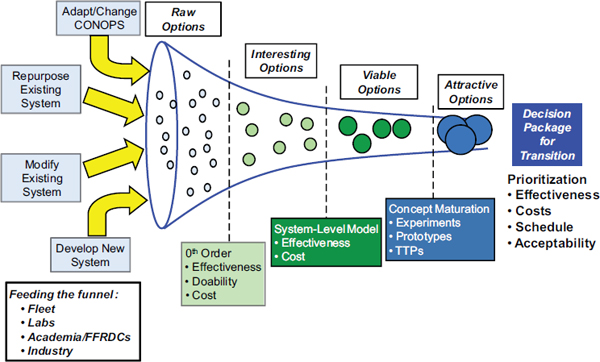

To develop cost-effective solutions to problems that are often complex, time-critical, and unanticipated, a process is needed that generates mitigation options and passes them through a series of screens, reducing the many possibilities to a very few solid and attractive options with credible effectiveness and cost and favorable technical and operational viability metrics. This chapter outlines such a process within the proposed surprise mitigation office. The general process is outlined in Figure 4-1.

CONCEPTUALIZING RAW OPTIONS TO MITIGATE HIGH-RISK SURPRISES

The first step in solving a problem is to conceptualize as many solutions as possible that could be brought to bear on the problem. This spectrum of options should encompass materiel and nonmateriel solutions within the naval forces as well as solutions that could be provided by joint action with other services.

Understanding the Surprise Challenge and Its Potential Impact

Conceptualizing a possible solution must start with fully understanding the problem. At a minimum the conceptualization process needs to ask and answer, “Why would this happen?” “What vulnerability do I have that my adversary is exploiting?” “What are the key actions that he is taking to bring this about?” “What are the consequences of his actions on my ability to operate?” Once this understanding is established, a set of key metrics can be created that capture the essence of both the adversary’s ability to create the surprise as well as our own operational effectiveness in the presence of it. Both sets of metrics are important to establish early because they will form the foundation for measuring the operational effectiveness of each potential solution. The level of detail in both the questions and the metrics will grow as candidate options pass through the screening process shown in the figure and will become more mature, but they must have been thought about and must exist at some level, even at the outset.

FIGURE 4-1 Creating, evaluating, and maturing options to address a high-risk surprise. CONOPS, concept of operations; FFRDC, Federally Funded Research and Development Center; TTPs, tactics, techniques, and procedures.

The Conceptualization of Possible Options to Deal with a Given Surprise

Based on the investigation above (why the adversary’s actions have potential consequences, what those consequences are, what vulnerability the adversary is exploiting, and what actions it is taking to carry out that exploitation, including the vulnerabilities inherent in those actions), options can be conceptualized at a high level to deal with the adversary surprise. There are at least two dimensions to the types of solutions that could be brought to bear on the problem. One dimension is what can be done to eliminate or mitigate the problem. It includes options to

• Avoid the adversary’s ability to exploit our existing vulnerability.

• Interfere with the adversary’s ability to exploit our existing vulnerability by interfering with elements of the adversary’s required effects chain, probably with some offensive action.

• Mitigate or nullify the consequences of its actions by reducing or eliminating our existing vulnerability.

Any of these three actions might involve actions by naval forces alone or in joint operations with other Service forces.

The second dimension involves how things can be done to address any of the three options listed above. It includes the following options:

• Adapting or changing TTPs without the necessity for any materiel changes.

• Repurposing existing systems to suit a new mission without any significant system modifications but perhaps with some minor software modifications (at a scale similar to what was done for Burnt Frost).

• Modifying an existing system to change an existing capability or to create a new one. This could entail the use of existing or new science or technology.

• Developing a new system(s) to create a new capability that is not available through modification of any existing system. As in the above case, this could entail the use of either existing or new science and technology.

As solution options are conceptualized across both dimensions, it is incumbent on whoever is driving this conceptualization process to force the process to think broadly. Trying to create options at many of the intersections of the two dimensions above will certainly help, but in addition there is the notion of “hats” or “flavors” that can be brought to bear. A hat or flavor is a particular way of looking for solutions. Suppose that one is looking for an option with the lowest near-term cost. One would focus on solutions that cost the least during R&D or those that are nonmateriel solutions at the expense of other attributes. Suppose that one is looking for the most capable solution. It is unlikely (although not impossible) that it will also be the least expensive or the quickest to initial operating capability (IOC), but one could force an option to be very high performance at the expense of cost and time. Minimum complexity, minimum ship fit issues, minimum schedule, and minimum risk are exemplars of other flavors that could be used to force the initial conceptualization to be broader than it might otherwise be. Through the combination of specifically addressing the what’s and how’s and then thinking of flavors, a rapid and broad examination of options to avoid, eliminate, or mitigate the effects of a serious surprise can be accomplished.
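The idea of covering the intersections of the two dimensions, and then applying flavors, can be sketched as a simple enumeration. This is only an illustrative brainstorming aid, not part of the committee's process: the short labels, the function names `option_grid` and `brainstorm_prompts`, and the particular subset of flavors are assumptions made for the example.

```python
from itertools import product

# "What" dimension: the three kinds of action listed in the text (labels shortened).
WHAT = ["avoid", "interfere", "mitigate"]

# "How" dimension: the four kinds of solution listed in the text (labels shortened).
HOW = [
    "adapt TTPs",
    "repurpose existing system",
    "modify existing system",
    "develop new system",
]

# A few of the flavors named in the text; the selection is illustrative only.
FLAVORS = ["lowest near-term cost", "most capable", "minimum schedule", "minimum risk"]


def option_grid(whats=WHAT, hows=HOW):
    """Return every (what, how) cell so that no intersection is overlooked."""
    return list(product(whats, hows))


def brainstorm_prompts(flavors=FLAVORS):
    """Return one prompt per (what, how, flavor) combination."""
    return [
        f"{what} / {how} / {flavor}"
        for (what, how), flavor in product(option_grid(), flavors)
    ]


grid = option_grid()
all_prompts = brainstorm_prompts()
print(len(grid), "cells;", len(all_prompts), "prompts")
```

Many cells will have no sensible option; the point of listing them all is only to make a deliberately empty cell distinguishable from one that nobody considered.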

Establishing the Value Proposition for Assessing “Goodness”

Inevitably the options developed above will differ significantly in terms of their important attributes. Some will be lower cost, some will be more effective at either eliminating or mitigating the impact of a given surprise, some will be able to be fielded far more rapidly, some will more readily fit the culture or existing ways of conducting operations, and some will be more mature and represent lower risk, etc. Equally important to the differences in the attributes themselves is the fact that depending on the nature of a given surprise, the relative importance of each attribute will vary. In screening out options that are less desirable than others and ensuring that the better ones survive for further scrutiny and development, it is critical that a value structure be defined that, for a given adversary surprise, captures those solution attributes that are important and assigns a relative weighting to each. This weighting can be qualitative (e.g., critical, very important, less important, not important), but it needs to be established to deal with the fact that for a given problem, all attributes are not created equal. As for the attributes themselves, they are generally easy to define, and at a minimum should include such key attributes as these:

• Effectiveness against the impact of the surprise being countered.

• Robustness to variations in the particulars of the surprise.

• Reliability—particularly in the initial screening this will not be given a quantitative treatment, but there may be some characteristics of an option that make it inherently more or less reliable than other options.

• All elements of cost—development, acquisition (if any), support, and the like. Note that there may be different weightings even within this element, because near-term resources are often far more precious than those in out-years.

• Time to field or procure an initial capability to counter the surprise.

• Risk in achieving this capability: for example, Are there unknowns at this time? Is this dependent on technologies that at this point are immature? Are there ship fit issues that need to be resolved for which there are not ready solutions? Are there uncertainties in the overall impact on the Navy of a recommended change in CONOPS?

• Adaptability and flexibility in assessing a variety of options.

• Other—What is in a particular attribute that will differ from one surprise to another? (There will always be some issue that pertains to another.)
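A value structure of this kind can be recorded in a very small data structure. The sketch below is an assumption-laden illustration: the class name `ValueStructure`, its methods, and the example surprise and weightings are hypothetical. The qualitative weights are the four levels named in the text, and the numeric ordering attached to them is used only to sort attributes, not to score options.

```python
from dataclasses import dataclass, field

# Qualitative weights named in the text. The integers are an assumed ordering
# used only for sorting attributes, not for computing option scores.
WEIGHT_ORDER = {
    "critical": 3,
    "very important": 2,
    "less important": 1,
    "not important": 0,
}


@dataclass
class ValueStructure:
    """Relative importance of solution attributes for one specific surprise."""

    surprise: str
    weights: dict = field(default_factory=dict)  # attribute -> qualitative weight

    def set_weight(self, attribute, weight):
        if weight not in WEIGHT_ORDER:
            raise ValueError(f"unknown weight: {weight!r}")
        self.weights[attribute] = weight

    def by_importance(self):
        """Attributes from most to least important; ties keep insertion order."""
        return sorted(self.weights, key=lambda a: -WEIGHT_ORDER[self.weights[a]])

    def screening_attributes(self):
        """Attributes that matter at all for this surprise."""
        return [a for a in self.by_importance() if self.weights[a] != "not important"]


# Hypothetical weighting for a hypothetical surprise.
vs = ValueStructure("hypothetical surprise A")
vs.set_weight("effectiveness", "critical")
vs.set_weight("cost", "less important")
vs.set_weight("time to field", "critical")
vs.set_weight("robustness", "very important")
vs.set_weight("cultural fit", "not important")
print(vs.by_importance())
```

Keeping one such record per surprise makes explicit that the same attribute can be critical for one problem and unimportant for another.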

There needs to be a structured mechanism to screen the options down to a workable few. By “structured,” however, the committee does not mean that judgment needs to be replaced by a quantitative treatment of attributes. Rather, human judgment pervades the process and should not be replaced by “bean counting.” The structure to be imposed is one that captures the essence of the screening process, retains the important why’s that screen certain options out, and sends others on to more refined analyses, but that does not interfere with the use of good judgment and time-tested experience throughout. This process is described in two steps below.

Initial “0th Order” Evaluation (Many Options to Consider: Weeding Out Those That Are Not Interesting)

This level of evaluation needs to be accomplished at a very high level, based largely on a few subjective or at best semiquantitative measures. The committee focuses on high level and subjectivity because at this stage there are likely to be many options to consider, there will not be a lot of detail defining each one, and there will be many unanswered questions associated with each. The goal is to screen out, rapidly and inexpensively, those options that will never be attractive regardless of how much detail is provided. Experience shows that it is always exceedingly difficult to do this and that success is critically dependent on the judgment of the people involved. Notionally one would set as an objective in this phase to get the surviving options down to a handful or so, because the more detailed examination and maturation activities that follow this phase cannot afford, either in time or resources, to deal with more than a very few. A secondary goal in this phase is to provide the initial insights and articulate the questions to be answered on those options that survive this initial screen.

Nevertheless, however high level and however subjective the criteria for this initial screening, they need to track in some fashion the attributes described above, perhaps focusing at this stage on effectiveness, cost, time, and feasibility. In order to effectively use high-level measures to screen out less attractive options and to resist the ever-present temptation to take just one more look, the recommended surprise mitigation office needs to be populated with at least a few very good and very experienced operations analysis personnel who understand the Navy, understand the systems and technology, think at a systems level, and are comfortable with back-of-the-envelope analysis and judgment when it is called for and more detailed modeling and simulation (M&S) when it is appropriate. One of the biggest challenges in the creation of the office may be to find, attract, maintain, and refresh a critical mass (perhaps a half dozen) such people.
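One way to picture a screen of this kind is as a coarse gate that records why each option was dropped and which questions remain open for the survivors. The sketch below is a bookkeeping aid under stated assumptions, not the committee's method: the three-level rating scale ("poor", "fair", "good"), the rule that a "poor" rating on any gate attribute removes an option, and all names and example ratings are hypothetical. The ratings themselves would come from analysts' judgment.

```python
from dataclasses import dataclass


@dataclass
class Option:
    name: str
    ratings: dict  # attribute -> "poor" | "fair" | "good" (assumed scale)


def screen(options, gates):
    """Split options into survivors and rejects.

    An option is rejected if it is rated "poor" on any gate attribute; the
    failing attributes are kept so the reason for rejection is not lost.
    For survivors, gate attributes with no rating yet are returned as open
    questions to be answered in the next phase.
    """
    survivors, rejects, open_questions = [], {}, {}
    for opt in options:
        failed = [a for a in gates if opt.ratings.get(a) == "poor"]
        if failed:
            rejects[opt.name] = failed
            continue
        survivors.append(opt.name)
        unknown = [a for a in gates if a not in opt.ratings]
        if unknown:
            open_questions[opt.name] = unknown
    return survivors, rejects, open_questions


# Gate attributes suggested in the text for this stage.
gates = ["effectiveness", "cost", "time", "feasibility"]

# Hypothetical options with hypothetical analyst ratings.
options = [
    Option("A", {"effectiveness": "good", "cost": "fair", "time": "good", "feasibility": "fair"}),
    Option("B", {"effectiveness": "poor", "cost": "good", "time": "good", "feasibility": "good"}),
    Option("C", {"effectiveness": "good", "cost": "fair", "feasibility": "fair"}),
    Option("D", {"effectiveness": "fair", "cost": "poor", "time": "poor", "feasibility": "good"}),
]

survivors, rejects, open_questions = screen(options, gates)
print(survivors, rejects, open_questions)
```

The gate rule is deliberately crude; an analyst could override it in either direction, and the recorded reasons are what make such an override reviewable.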

More Detailed Screening (Assume Five Options That Are Interesting)

The few options that survive the initial screening need to be subjected to lower level and more rigorous analyses based on system level cost and effectiveness modeling and the initial treatment of some of the other considerations highlighted above that were not treated in the first-pass screening. Sufficient detail needs to be established for each of the options to enable meaningful modeling of the interaction between the victim of the surprise, the effects chain of the “surpriser,” and whatever solution is being investigated. This needs to be accomplished with sufficient system fidelity to distinguish one option from another and at a sufficient level to highlight broad capability differences and effectiveness. The same is true of the cost modeling in this phase: it must be specific enough to highlight differences and detailed enough to be believable, but not so detailed that it requires inputs that are far beyond what is appropriate at this point of definition. First-order differences should be sufficient and should guide the level of detail asked for and provided. Providing the inputs for the cost analysis will, by definition, allow a first cut based on schedule requirements and an estimate of how long it will take to get an initial capability into the field. Lastly, all of the other important considerations in the value structure established for this problem should also be considered for this second cut. The objective should be to filter down to a smaller number (say, three) of candidates.

CONCEPT REFINEMENT AND PROOF OF PRINCIPLE (ASSUME THREE VIABLE OPTIONS)

Two things are accomplished during this phase, as described below: one deals with residual unknowns in the key enabling elements of each approach, and the other deals with operations related to each approach.

Experiments and/or Prototyping of Key Elements of Each Option

It is likely that a few significant questions may still be unanswered at this point: Can a certain key performance characteristic be achieved? Can some key physical attribute be met? Is the assumed production cost for a high-volume component feasible? Can any one of a variety of other key components be counted on to materialize with a reasonable amount of development effort? Other questions related to field operation of a system may also remain unanswered, such as, Can an element be set up and become operational at the desired performance level within some stressing time line? Will it interfere with (or be interfered with by) other systems in close proximity on the same platform? Is the workload for an operator such that the assumed number of operators in the predicted operations and maintenance (O&M) cost is unrealistic? Still other questions may remain about the interoperability of one of the options with some other system outside the control of the system designer. These and many other potential critical questions can be answered by performing a key experiment or by prototyping a key element or component of the system (by, for example, using three-dimensional printers for parts on demand). If the viability or desirability of a particular concept hangs on one or two issues about which some doubt or ambiguity remains, then the objective of this task is to remove this doubt at far lower cost and in far less time than simply waiting to find out later in the development cycle. The key requirement is to design the experiment or the building of the prototype to maximize learning on the important unknowns rather than as a show-and-tell to hype the best features of the system.

Rethinking the TTPs Enabled or Required by a Given Approach

Given that the system options at this point in the process are down to a small number, it is worth taking a further look at the TTPs for each option. If an option calls for a fundamental change in the standard TTPs associated with a mission, then the impact of that change on doctrine, training, or the conduct of other related missions needs to be considered. There may also be something associated with a system concept that, while not required, would enable a change in TTPs that provides additional warfighting leverage. In this case the added benefit needs to be noted and credit taken for it. Regardless of whether the impact of the system on TTPs is constraining or leveraging, it needs to be understood to avoid major warfighting “surprises” down the road should a given system concept be pursued further. It is this interaction between the technical and the operational, and between the engineers and operators and warfighters, that is as necessary to the selection process as is the technical refinement of a potential materiel solution.

PRIORITIZATION: THREE OPTIONS, WHICH IS THE MOST ATTRACTIVE?

If a more detailed understanding of the key attributes and characteristics of each option has been obtained, no showstoppers have been identified, and all three options have survived, the next question to be answered is, How do the three options compare? What is their rank order of “goodness”? Which one (or in rare circumstances, ones) should be developed? At this point, three steps need to be followed.

Establish Relevant Metrics for Each Option

Earlier in the process a number of high-level measures of effectiveness (MOEs) were established to evaluate and cull the initial concepts that were posited as solutions. These MOEs were at first rough estimates of performance and effectiveness, cost, schedule, and risk. Through the refinement process, more detail is available on each of these measures, particularly on those measures that distinguish one option from another. Further, let us assume that the development program that has been laid out for each option captures the inherent risk of that option in terms of the program’s content, so that the nonrecurring development cost and schedule drive the risk down to an acceptable level. Thus, differences in development risk have been translated to differences in development cost and schedule and do not have to be treated explicitly. What therefore may remain as critical metrics are the following:

• Performance, effectiveness, and robustness;

• Development, acquisition, and life-cycle cost;

• Time-to-field capability;

• “Impedance” or cultural match to naval forces; and

• Other considerations that are deemed important and are not captured in any of the above.

Based on the level of detail available for each option, these measures need to be defined one or two levels below this level and should form the basis for evaluation. Using a set of M&S tools appropriate to the level of detail available, each of the options would then be evaluated based on the metrics listed. As lessons are learned through the evaluation process, such as why a particular option performs less well than another or has a much longer development cycle or is more counterculture to Navy standard practices, they need to be captured and documented, both so that the reasons behind a particular metric are not lost and, even more important, so that the process of ranking the options becomes more transparent.

Establish Relative Attractiveness Based on the Value Proposition or Structure

Once the various metrics have been established to distinguish the various options, it is often tempting to automate the ranking of the options based on some simple arithmetic algorithm combining the metrics and weightings. This temptation needs to be resisted, because human judgment is as much a part of the process as is the quantitative evaluation of MOEs. Instead, using the quantitative measures as guides rather than absolutes, the evaluators should stand back and try to see what high-level messages, if any, are present within the individual assessments. Which highly ranked options best satisfy the criteria on the value structure? Which one or ones have deep holes in some of the measures? Are those holes critical and not easily filled? What about a concept that ranks very high on all of the most important measures but falls short on a few of the others? Is there a work-around for them? It is this kind of questioning, answering, and then resynthesizing that should be spurred by looking at the quantitative MOEs to come up with the best informed basis for the final recommendation.
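Consistent with using the quantitative measures as guides rather than absolutes, a tool at this stage might do no more than surface the "deep holes" for the evaluators to discuss, without producing a rank order. The sketch below is a hypothetical illustration: the 1-to-5 score scale, the `floor` threshold, the function name `find_holes`, and all of the scores and weights are assumptions made for the example.

```python
def find_holes(scores, weights, floor=2):
    """For each option, list the important metrics scored below `floor`.

    "Important" here means weighted "critical" or "very important" in the
    value structure. The function flags items for discussion; it does not
    rank the options.
    """
    important = [m for m, w in weights.items() if w in ("critical", "very important")]
    return {
        name: [m for m in important if option_scores[m] < floor]
        for name, option_scores in scores.items()
    }


# Hypothetical value structure and hypothetical scores on an assumed 1-5 scale.
weights = {
    "effectiveness": "critical",
    "cost": "very important",
    "time to field": "critical",
    "cultural match": "less important",
}
scores = {
    "Option 1": {"effectiveness": 5, "cost": 3, "time to field": 1, "cultural match": 4},
    "Option 2": {"effectiveness": 4, "cost": 4, "time to field": 3, "cultural match": 1},
    "Option 3": {"effectiveness": 3, "cost": 2, "time to field": 4, "cultural match": 5},
}

holes = find_holes(scores, weights)
print(holes)
```

In this made-up case the most effective option has a hole in a critical measure, which is exactly the kind of result that calls for questions (Is the hole critical? Is there a work-around?) rather than an arithmetic verdict.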

DEVELOP TRANSITION DECISION PACKAGE

The final step in the synthesis and evaluation process is to develop a draft briefing package and written report containing at a minimum the background on the problem being addressed, the alternative solutions that were examined, the evaluation process to which they were subjected, the value structure that was used to weigh the various criteria or MOEs, the rank ordering of the alternatives that resulted, and the recommendation for transitioning the concept for further development, testing, prototyping, or fielding, whichever is appropriate. It is also worth noting that the most important reasons are captured in readily available backup should questions arise on any of the important issues covered in the briefing. These same reasons should be part of the discussions in the report.

The team should engage with the organizations that are key to the success and acceptance of the recommended solution or have a major interest in it. Based on the give and take at those discussions, the briefing and report should be updated as necessary to accommodate whatever feedback was obtained and was determined to be valid and useful in terms of the final product.

The final step in the process is to develop an executive-level decision package for whichever office is the final authority for determining whether to proceed with the recommendation. The content and level of detail should be appropriate to the office although the options considered, how they ranked, and the key rationale for the recommendation should all be treated.

For there to be an effective solution, the enterprise as a whole will have to respond in kind. The Navy contractors involved need to be included, and their buy-in will be important for success. This is a tough problem. Traditionally the Navy has relied on its laboratory community—naval centers, university-affiliated research centers (UARCs), and FFRDCs—for objective advice (advice without a profit motive). Also, multiple labs are typically involved in order to remove the possible systematic technical biases of any single expert.

Finding 4: With the recent establishment of the Strategic Capabilities Office within the Office of the Under Secretary of Defense for Acquisition, Technology, and Logistics (USD AT&L), there appears to be an opportunity for a surprise mitigation office to provide naval force component solutions to surprises facing the entire Joint Force. Furthermore, there is an opportunity for both offices to draw on each other by sharing expertise, methodologies (modeling, simulation, analysis, red teaming), and learning.

Recommendation 4: The Chief of Naval Operations (CNO) and the Under Secretary of Defense for Acquisition, Technology, and Logistics (USD AT&L) should encourage their respective “surprise offices” to develop and foster a close working relationship with each other. In particular, the CNO and USD AT&L should direct their surprise offices to share ideas and to collaborate on methodologies (modeling, simulation, analysis, test data, and red teaming) in the interest of efficiency and obtaining consistent and coordinated results. Technical interchange meetings and the frequent exchange of information between the two offices and others that may be eventually established by the other Services should also be encouraged.