Strategic Planning and Metrics

This chapter addresses Task 1, which asks the committee to assess the feasibility of developing performance measures and quantitative metrics against the existing performance goals of the Global Nuclear Detection Architecture (GNDA) strategic plan and, if doing so is infeasible, to recommend alternative approaches (see Appendix B for the full task statement).

This chapter comprises four sections. The first section introduces key terms, definitions, and concepts related to effectiveness measures and metrics. The second section assesses the feasibility of using measures and metrics to evaluate the effectiveness of the GNDA. The third section provides a summary of an analysis of the existing performance goals and strategic plan using the definitions and concepts introduced in the first two sections. The chapter concludes with a finding to address Task 1.

The following documents served as key references for this chapter:

• Government Performance and Results Act (GPRA) of 1993¹ and the GPRA Modernization Act of 2010²

• Office of Management and Budget (OMB) Circular No. A–11, Preparation, Submission, and Execution of the Budget (OMB, 2012)

_____________

1 Pub. L. No. 103-62, § 20, 107 Stat. 285, available at http://www.whitehouse.gov/omb/mgmt-gpra/gplaw2m. Accessed August 1, 2013.

2 Pub. L. No. 111-352, § 1, 124 Stat. 3866, available at http://www.gpo.gov/fdsys/pkg/BILLS-111hr2142enr/pdf/BILLS-111hr2142enr.pdf. Accessed August 1, 2013.

• Global Nuclear Detection Architecture Strategic Plan 2010 (GNDA, 2010*)

• Global Nuclear Detection Architecture Joint Annual Interagency Review (GNDA, 2011*; GNDA, 2012*)

• Department of Homeland Security (DHS) GNDA Implementation Plan 2012 (DHS, 2012*)

3.1 KEY TERMS AND DEFINITIONS

Multiple definitions can be found for the terms commonly used in performance measurement theory. The following terms and definitions are presented to clarify their use in this report for addressing this study’s specific tasks.

Measure: Qualitative or quantitative facts that gauge the progress toward achieving a goal. These facts may be in the form of indicators, statistics, or metrics.

Indicator: A measurable value that is used to track progress toward a goal or target. (See “metric,” below.) “Agencies are encouraged to use outcome indicators … where feasible” (OMB, 2012, Section 200, p. 14).

Metric: Synonymous with “indicator,” the actual quantity that is used to measure progress. Metrics can be quantitative or qualitative. Quantitative metrics may use numerical (e.g., a percentage or number) or constructed (e.g., high, medium, low) scales.

Proxy metric: A metric that does not directly relate to a goal or objective but can be used as an indirect measure, as long as a strong relationship between the metric and its objective can be established. Proxies can be useful and should not be indiscriminately avoided, especially when a direct metric cannot be established. Proxy metrics are also called “indirect metrics.”

An example of a measure and its metric would be the percentage of planned portal monitors that have been deployed at seaports (measure) and the number of portal monitors deployed in the past year (metric). An example of a proxy metric is the number of preexisting memoranda of understanding (MOUs) for sharing equipment and resources between states that are established before a disaster. This proxy has been shown by the Environmental Protection Agency to be directly related to how rapidly adjoining states can respond with additional equipment following disasters and emergencies (Travers, 2012).

_____________

* Not publicly available.

The committee uses “metric” in place of “indicator” and simplifies “measures and metrics” to “metrics.” At a detailed level, there is an important distinction between measures and metrics. However, the simplification allows this report to focus on the analysis and discussion of GNDA metrics rather than the differentiation between measures and metrics and to avoid the repeated use of the term “measures and metrics.”

Goal: A statement of the result or achievement toward which effort is directed. Strategic (or high-level) goals articulate clear statements of what the agency aims to achieve to advance its mission and address relevant national problems, needs, challenges, and opportunities. Such goals are generally outcome-oriented and long-term and focus on major functions and operations of the agency.

Objectives: Objectives directly link to a goal and reflect the outcome or impact the agency is trying to achieve.

Performance Goals: Performance goals link to objectives and are established to help the agency monitor and understand progress. They should be of limited number and explain how they contribute to the strategic objective. “Agencies are strongly encouraged to set outcome-focused performance goals” (OMB, 2012, Section 200, p. 15).

This report does not distinguish between “strategic goals” and “goals” or “strategic objectives” and “objectives.” The use of goals and objectives throughout this report assumes they are strategic (or high level).

Mission Statement: “A brief, easy-to-understand narrative … [that] defines the basic purpose of the agency and is consistent with the agency’s core programs and activities expressed within the broad context of national problems, needs, or challenges” (OMB, 2012, Section 200, p. 13).

Strategic Plan: Presents the long-term objectives an agency hopes to accomplish. It describes general and longer-term goals the agency aims to achieve, what actions the agency will take to realize those goals, and how the agency will deal with the challenges likely to be barriers to achieving the desired result. An agency’s strategic plan should provide the context for decisions about performance goals, priorities, and budget planning, and should provide the framework for the detail provided in agency annual plans and reports (OMB, 2012, Section 200, p. 13).

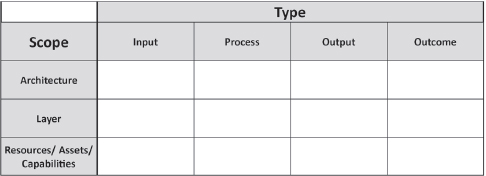

Types: There are different types of goals, objectives, and metrics (OMB, 2012, Section 200, p. 14):

• Input—indicates consumption of resources used (e.g., time, money).

• Process—indicates how well a procedure, process or operation is working.

• Output—describes the level of activity (or product) that will be provided over a period of time.

• Outcome—indicates progress against achieving the intended result.

The committee introduces the concept of the functional scope of a goal, objective, or metric to describe how broadly focused it is.

Scope: The breadth of the focus of a goal, objective, or metric as it relates to the GNDA:

• Architecture—the integrated capability of all three geographic layers and the crosscutting functions of the GNDA;

• Layers—the operational elements and assets in each of the three geographical layers of the GNDA (exterior to the United States, transborder, and interior of the United States); and

• Resources/Assets/Capabilities—budgets, people, assets, and capabilities.

The remainder of this section discusses the characteristics and assessment of metrics that are useful to decision makers.

3.1.1 Characteristics of Metrics Useful to Decision Makers

Metrics are already used to report on the yearly progress of the GNDA. However, these metrics do not provide an assessment of the overall GNDA. This section introduces tools to develop and evaluate metrics in terms of their usefulness (e.g., their ability to provide information on the overall effectiveness of the GNDA).

FIGURE 3-1 A scope-versus-type matrix is formed by combining the different metric types (input, process, output, or outcome) with their scope (full architecture level, layer, and resources). Similar matrices can be used to categorize goals and objectives.

Metrics are developed against particular criteria that are selected on the basis of the application (or objective) and the needs of the customer (or user). Different metrics may be chosen to meet different applications. For example, metrics could be used to assess U.S. security, report on management effectiveness, or gauge interagency cooperation. Customers who require reports on management effectiveness (e.g., Congress) may have a significantly different focus from customers interested in U.S. security assessment (e.g., GNDA federal partners). In fact, the GNDA has several customers for its metrics, for example:

• Congress and the White House (e.g., OMB or the National Security Council),

• Domestic Nuclear Detection Office (DNDO) and DHS management,

• GNDA federal partners, and

• Other GNDA partners (including foreign, state, and local jurisdictions).

Outcome-based metrics are useful to these customers because they report progress made against an intended result or changes in conditions that the customer is attempting to influence. Broadly-based metrics that cover the full scope of the GNDA (i.e., the overall GNDA) will also be of more value to those customers than narrowly-scoped metrics. To guide the development of metrics that are both outcome-based and broadly-scoped,³ the committee introduces a categorization matrix that combines the type of metric (input-, process-, output-, or outcome-based) with its functional scope (architecture, layer, or resources). This matrix can be used to categorize existing or proposed metrics (see Figure 3-1).

_____________

3 Some committee members judged this criterion redundant: if truly outcome-based, a metric (or goal or objective) would naturally be broadly-scoped. Because developing outcome-based strategic plans and metrics is difficult for programs such as the GNDA, the committee determined that this additional criterion may prove helpful in GNDA agency self-assessment of future goals, objectives, and metrics.

Goals, objectives, and metrics that populate the upper-right corner of the matrix (outcome-based and focused on the full architecture) are preferred over those found in the lower-left corner (input-based and focused at the resource level). Such matrices can aid in the development of updated goals, objectives, and metrics that will inherently provide better information to decision makers than those that exist today. The development of outcome-based metrics that focus on the overall effectiveness of the GNDA requires that the higher-level goals and objectives also be outcome-based and focused on the full architecture. This is discussed in more detail later in this chapter.
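The categorization described above can be illustrated with a minimal sketch. It assumes a simple ordering of metric types and scopes in which the preferred cell is outcome-based and architecture-wide. The class names, the function names, and the classification of each example metric are hypothetical illustrations, not committee assessments of actual GNDA metrics.

```python
from dataclasses import dataclass
from enum import IntEnum


class MetricType(IntEnum):
    """Metric types, ordered left to right across the matrix columns."""
    INPUT = 0
    PROCESS = 1
    OUTPUT = 2
    OUTCOME = 3


class Scope(IntEnum):
    """Functional scope, ordered bottom to top along the matrix rows."""
    RESOURCES = 0
    LAYER = 1
    ARCHITECTURE = 2


@dataclass(frozen=True)
class Metric:
    name: str
    type: MetricType
    scope: Scope


def build_matrix(metrics):
    """Group metric names into cells keyed by (scope, type)."""
    cells = {(s, t): [] for s in Scope for t in MetricType}
    for m in metrics:
        cells[(m.scope, m.type)].append(m.name)
    return cells


def is_preferred(metric):
    """True only for the upper-right cell: outcome-based, full architecture."""
    return metric.type == MetricType.OUTCOME and metric.scope == Scope.ARCHITECTURE


# Hypothetical classifications, for illustration only.
examples = [
    Metric("Portal monitors deployed in the past year",
           MetricType.OUTPUT, Scope.RESOURCES),
    Metric("Annual program budget", MetricType.INPUT, Scope.RESOURCES),
    Metric("Placeholder architecture-wide outcome metric",
           MetricType.OUTCOME, Scope.ARCHITECTURE),
]

matrix = build_matrix(examples)
preferred = [m.name for m in examples if is_preferred(m)]
```

In this sketch only the placeholder outcome metric lands in the preferred cell. The same structure could be reused to categorize goals and objectives.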

Metrics that are useful tend to have the following additional characteristics:

• Understandable and transparent, including with respect to uncertainties—for confidence and communication and to enable peer review.

• Reproducible and flexible—to track progress and illustrate trends. When expert elicitation is used for data collection, assumptions and uncertainties should be provided.

• Quantitative, with numerical or constructed (e.g., high/medium/low) scales.

• Verifiable—for credibility, quality control and confidence.

This list of characteristics is consistent with other formulations, such as those provided by Keeney and Gregory (2005)⁴ and for software (Kaner, 2009). Checklists by themselves are not a guaranteed method of constructing meaningful or useful metrics. Foremost, metrics need to report on outcomes that are directly related to goals and objectives. Box 3-1 contains a summary of the characteristics of a useful metric.

_____________

4 The characteristics given by Keeney and Gregory (2005, p. 3) are as follows:

• Unambiguous—A clear relationship exists between consequences and descriptions of consequences using the attribute.

• Comprehensive—The attribute levels cover the range of possible consequences for the corresponding objective and value judgments implicit in the attribute are reasonable.

• Direct—The attribute levels directly describe the consequences of interest.

• Operational—In practice, information to describe consequences can be obtained and value tradeoffs can reasonably be made.

• Understandable—Consequences and value tradeoffs made using the attribute can readily be understood and clearly communicated.

This list of criteria matches reasonably well with that proposed by the committee. The committee lists “understandable and transparent,” which relates to “unambiguous” and to “understandable.” “Reproducible and flexible” relates to “operational.” The committee includes “quantitative,” whereas Keeney and Gregory suggest that the attributes should be “direct” in describing the consequences of interest. “Verifiable” relates to “direct” and “operational.” The concept of a “comprehensive” metric is implicit in the committee’s discussion of the notion of “reproducible and flexible.”

BOX 3-1

Characteristics of Metrics Useful to Decision Makers

Useful metrics have the following characteristics:

1. Defined customers and an understanding of their applications

2. A clear connection to consequences or decision options for the customer by being:

a. Outcome-based and broadly focused

b. Aligned clearly to higher-level outcome-based goals and objectives

c. Understandable/transparent, reproducible, quantifiable, and verifiable

d. Directly linked to objectives and goals when output-, process- or input-based.

Items 2.a and 2.b are more critical characteristics of useful metrics than are 2.c and 2.d.

3.2 FEASIBILITY OF EVALUATING THE GNDA

The focus of Task 1 is to assess the feasibility of developing metrics against existing performance goals of the GNDA strategic plan that can evaluate the overall effectiveness of the GNDA. The remainder of this chapter focuses on the existing GNDA strategic plan and its performance goals and, using the concepts introduced in the first section, on assessing the feasibility of developing metrics against them.

Section 1103 of the Implementing the Recommendations of the 9/11 Commission Act of 2007 (P.L. 110-53) mandates annual interagency reviews of the GNDA. The results of these annual reviews are submitted to the President, Congress, and OMB. Therefore, OMB’s guidance on strategic planning and annual reporting is relevant to the GNDA and its federal partner agencies. Part 6 of Circular A-11 (OMB, 2012) describes the GPRA Modernization Act requirements and the expected approach to performance reporting.⁵ OMB’s strategic planning hierarchy can be found in Figure 3-2.

The guidance from OMB suggests that goals, objectives, performance goals, and metrics be outcome oriented, meaning that they should be focused on progress toward a mission rather than on activities and processes. Figure 3-2 shows the OMB “goals relationship” with some committee modifications: “Performance Goals with Performance Indicators” was reduced to simply “Performance Goals,” and the examples within the “Other Indicators” were replaced with a subset of metric types introduced in the preceding section. The basic hierarchical structure remains the same.

FIGURE 3-2 The Goals Relationship, showing the hierarchy of a single mission statement supported by multiple goals, which in turn are supported by a suite of objectives and performance goals and their associated metrics. The example goals and objectives are for illustration only. This diagram has been modified by the committee to reduce the number of terms that are used, bringing it in line with the text of this report, and to draw parallels with the GNDA strategic plan.

SOURCE: Modified from OMB (2012, Part 6, Section 200).

_____________

5 DNDO and its partner agencies released the GNDA strategic plan in 2010; this guidance from OMB was released in 2012.

In practice, metrics are created to report on progress in meeting the performance goals. The performance goals inform the progress in meeting the objectives which, in turn, inform progress toward meeting the goals. The goals link directly to the mission (Figure 3-2).
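The reporting chain described above can be sketched as a nested data structure in which each metric is traced upward to the mission. This is a minimal illustration that assumes a strict tree (each metric reports against exactly one performance goal). All class names, the `trace_metrics` helper, and the labels are hypothetical placeholders and are not taken from the GNDA strategic plan or from OMB guidance.

```python
from dataclasses import dataclass, field
from typing import Iterator, List, Tuple


@dataclass
class PerformanceGoal:
    statement: str
    metrics: List[str] = field(default_factory=list)


@dataclass
class Objective:
    statement: str
    performance_goals: List[PerformanceGoal] = field(default_factory=list)


@dataclass
class Goal:
    statement: str
    objectives: List[Objective] = field(default_factory=list)


@dataclass
class Mission:
    statement: str
    goals: List[Goal] = field(default_factory=list)


def trace_metrics(mission: Mission) -> Iterator[Tuple[str, ...]]:
    """Yield one (metric, performance goal, objective, goal, mission) chain per metric."""
    for goal in mission.goals:
        for objective in goal.objectives:
            for pg in objective.performance_goals:
                for metric in pg.metrics:
                    yield (metric, pg.statement, objective.statement,
                           goal.statement, mission.statement)


# Placeholder labels only; they do not come from any actual plan.
mission = Mission("Mission M", [
    Goal("Goal 1", [
        Objective("Objective 1.1", [
            PerformanceGoal("Performance goal 1.1.1", ["Metric a", "Metric b"]),
        ]),
    ]),
    Goal("Goal 2", [
        Objective("Objective 2.1", [
            PerformanceGoal("Performance goal 2.1.1", ["Metric c"]),
        ]),
    ]),
])

chains = list(trace_metrics(mission))
```

A metric that cannot be placed in such a chain has no clear link to the mission, which is the kind of gap the analysis in this chapter looks for.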

3.3 ANALYSIS

Is it suitable to develop additional metrics against the existing GNDA performance goals? The committee notes that it is exceedingly difficult to create outcome-based metrics for the GNDA when its higher-level strategic components (goals, objectives, performance goals) are not outcome-based and are not focused on the full architecture. In determining whether a component is outcome-based, it is helpful to ask the question “Why is this being done?” or “Why is this important?” If the component is outcome-based, the answer will be self-evident. If an explanation of “Because … x, y, z,” is needed to answer the question, then the component is not outcome-based.

The committee applied this test to the existing strategic plan. While the existing plan has a hierarchical structure similar to the structure suggested by OMB (see Figure 3-2), the connection between the mission, goals, and objectives was not clear. Furthermore, the committee determined that the majority of the existing goals and objectives are neither outcome-based nor focused on the full architecture. Therefore, developing outcome-based metrics to report on progress of the overall GNDA against the existing plan is not feasible.

3.4 FINDING

FINDING 1.1: It is fundamentally possible to create outcome-based metrics for the GNDA; however, it is not currently feasible to develop outcome-based metrics against the existing strategic plan’s goals, objectives, and performance goals because these components are primarily output- and process-based and are not linked directly to the GNDA’s mission.

Two conditions must be met to use metrics to evaluate the effectiveness of the GNDA:

1. A new strategic plan with outcome-based goals and objectives must be created, and

2. An analysis framework must be developed to enable assessment of outcome-based metrics.

The committee concludes that it is not suitable to develop further metrics against the current strategic plan because they would not be outcome-based.

Through the development of the strategic plan, DNDO and its partners have defined the GNDA from a large and complex set of disparate U.S. government programs. DNDO is using the annual review process as a mechanism to engage its partners in a cooperative effort to evaluate and improve the GNDA. In its present state, the strategic plan and annual review provide an accounting of the preexisting programs but not an assessment of overall performance. While acknowledging potential implementation challenges in Observations 1 and 2, the committee notes that further steps, including an updated strategic plan (see Chapter 4) and development of an analysis framework (see Chapter 5), are needed to transform this initial effort into an integrated analysis and planning capability that can better estimate overall GNDA effectiveness and inform decisions.