As noted in Finding 1.1, two critical components are needed to evaluate the effectiveness of the Global Nuclear Detection Architecture (GNDA): a new strategic plan with outcome-based metrics and an analysis framework to enable assessment of those metrics. A notional strategic plan and notional outcome-based metrics were developed in Chapter 4. The focus of this chapter is on the analysis framework. This chapter is intended to address the second charge of the statement of task (see Appendix B).

This chapter is organized into two sections. The first section provides an overview of current analysis approaches for complex technological systems. The final section introduces the committee’s recommended GNDA analysis framework using the notional metrics described in the preceding chapter. The chapter concludes with two findings and one recommendation.

5.1 EVALUATION OF COMPLEX TECHNOLOGICAL SYSTEMS

The committee was asked to identify relevant examples of analytical risk-based approaches for complex technological systems. Military planning and analysis of defense capabilities provide several examples; water security provides another. These examples are described in this section.

Modeling plays an important role in defense analysis and support to decision makers. Military planning models exist at several levels: component, system, force structure (architectures), and campaigns. The models are used to inform force mission planning and force structure planning decisions.

For example, consider strategic airlift to support a military campaign. Different models have been created to support different planning decisions.

Component models exist of aircraft airframes to estimate drag and fuel consumption. System models exist to calculate the time to load an aircraft and deliver the cargo to a destination. Force structure models exist to calculate the time to deploy a force (people and equipment) for a mission. Finally, campaign models exist to determine the time to achieve the campaign objectives given the force available and potential actions of the adversary. For force structure planning, a variety of models are used to help determine the best mix of aircraft (e.g., tactical and strategic) and an affordable amount of airlift capability given the potential threats on the strategic planning horizon. The models do not make decisions; rather, they inform the analysts, strategic planners, and decision makers. They also analyze the advantages and disadvantages of viable alternatives.

Other organizations have developed analysis frameworks for complex systems that have addressed challenges similar to those faced by the GNDA. These include U.S. strategic nuclear war planning, U.S. ballistic missile defense, and U.S. water security programs.

5.1.1 U.S. Strategic Nuclear War Planning

Strategic nuclear defense is an example of a complex technological system that uses an analysis framework (including modeling) to assess effectiveness. Since the beginning of the Cold War, the United States has relied on nuclear forces as a deterrent to hostile actions by nuclear adversaries. For obvious reasons, the United States cannot conduct a full- or even a partial-scale nuclear war to demonstrate the capability to deter adversaries. As a result, since the 1980s the United States has relied on a combination of modeling, war gaming, component testing, conventional system tests, reliability testing, red teaming, exercises, and technical studies to develop and evaluate the capabilities of its nuclear forces and to maintain a credible nuclear deterrent.

Nuclear force evaluation requires four types of data: adversary capabilities and intent, weapons availability, weapons reliability, and weapons effectiveness. Data on adversary capabilities and intent are obtained from expert elicitation of the intelligence community about adversary capabilities and possible intent. Weapons availability data are reasonably easy to obtain because U.S. military forces are required to collect these data. Reliability and effectiveness data are more difficult to obtain directly. Since the first nuclear test moratorium1 took effect in 1992, the United States no longer fully tests the operation of nuclear warheads. Component, subsystem, and conventional (i.e., nonnuclear) system tests have been used to evaluate the reliability of warhead material properties and control system electronics. In addition, conventional system tests have been used to evaluate the reliability of intercontinental missiles by selecting a missile at random, removing the warhead, shipping it to a test launch site, installing an electronic warhead simulator, and launching the missile to a downrange location in the Pacific. Extensive telemetry is collected during the tests to evaluate missile reliability. Based on the system reliability data obtained from these tests and evaluations, military planning factors have been developed to assess the availability, reliability, and probability of kill against potential adversary targets. Modeling and analysis have been used to develop and evaluate the overall effectiveness of the nuclear war plan and limited nuclear options, including the extensive Stockpile Stewardship Program that certifies nuclear arsenal readiness (NRC, 2012c). Modeling, analysis, and war gaming have also been used to consider and assess the potential actions of nuclear adversaries.

_____________

1 The test ban began in 1992, introduced by the Hatfield-Exon-Mitchell amendment. The test ban moratorium continues to be upheld.
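One way to picture how planning factors such as availability, reliability, and probability of kill might be combined is sketched below. This is an illustration only: the report does not state how the factors are combined, so the multiplication of independent factors, the class and function names, and all numerical values are assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PlanningFactors:
    """Hypothetical planning factors for one weapon type (values are illustrative)."""
    availability: float  # fraction of weapons ready when needed
    reliability: float   # probability an available weapon functions as designed
    p_kill: float        # probability a functioning weapon defeats its target

    def single_weapon_success(self) -> float:
        # Assumption: the three factors are independent, so they multiply.
        return self.availability * self.reliability * self.p_kill


def success_with_n_weapons(factors: PlanningFactors, n: int) -> float:
    """Probability that at least one of n independently assigned weapons succeeds."""
    return 1.0 - (1.0 - factors.single_weapon_success()) ** n


factors = PlanningFactors(availability=0.9, reliability=0.8, p_kill=0.5)
print(round(factors.single_weapon_success(), 4))      # 0.36
print(round(success_with_n_weapons(factors, 2), 4))   # 0.5904
```

Under these assumptions, test and evaluation data would supply the individual factors, and modeling would then combine them to estimate effectiveness that cannot be demonstrated directly.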

5.1.2 U.S. Ballistic Missile Defense

The U.S. National Missile Defense Act of 1999 (P.L. 106-38) states:

It is the policy of the United States to deploy as soon as is technologically possible an effective National Missile Defense system capable of defending the territory of the United States against limited ballistic missile attack (whether accidental, unauthorized, or deliberate) with funding subject to the annual authorization of appropriations and the annual appropriation of funds for National Missile Defense.

Ballistic missile defense (BMD) is managed by the Missile Defense Agency (MDA) in the Department of Defense. The system’s architecture includes:2

• networked sensors (including space-based) and ground- and sea-based radars for target detection and tracking;

• ground- and sea-based interceptor missiles for destroying a ballistic missile using either the force of a direct collision, called “hit-to-kill” technology, or an explosive blast fragmentation warhead;

• and a command, control, battle management, and communications network providing the operational commanders with the needed links between the sensors and interceptor missiles.

Like the GNDA, the BMD is a complex detection architecture composed of a system of systems to defend against intelligent, adaptive adversaries for a high-consequence event that has not yet occurred. And like the GNDA, the BMD cannot be evaluated by the direct use of operational stimuli (Parnell et al., 2001; Garrett et al., 2011; Willis, 2012). BMD also has a critical time challenge for reporting and response due to the short flight times of ballistic missiles. The BMD mission also has a critical deterrence component: The United States wants to deter an adversary from attacking with nuclear ballistic missiles.

_____________

2 See http://www.mda.mil/system/system.html. Accessed August 1, 2013.

The major challenges for analyzing the effectiveness of the BMD architecture involve characterizing adversary objectives, the operational performance of the adversary ballistic missiles and warheads, and the performance of the U.S. BMD architecture. Choices for an optimized command-and-control strategy for allocating defensive assets against adversary offensive assets can then be determined. Again, there is no way to obtain a full operational evaluation of the BMD architecture. One must rely on intelligence data to obtain adversary objectives and testing to provide missile and weapon performance data. For U.S. BMD systems, military planning factors include the assessment of the availability, reliability, and probability of kill against potential BMD targets based on component, subsystem, and limited-engagement ballistic missile test data (one defense system versus one simulated adversary missile). Modeling, analysis, exercises, and red teams are used to develop and evaluate the overall effectiveness of the BMD architecture. Because adversaries will seek to achieve their objectives by exploiting BMD vulnerabilities, these models must explore the full range of potential adversary objectives and attack plans. Again, one must evaluate the full architecture because the improvement of the defense in one region may shift the risk to another region.
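The point that improving the defense in one region may shift risk to another can be illustrated with a toy calculation. The sketch below assumes a hypothetical adversary who always attacks the region with the lowest intercept probability; the region names, probabilities, and function name are invented for illustration and are not drawn from any BMD model.

```python
from typing import Dict, Tuple


def adversary_choice(p_intercept: Dict[str, float]) -> Tuple[str, float]:
    """Return the region an adaptive adversary would attack and its leakage probability.

    Assumption: the adversary knows the intercept probabilities and picks the weakest region.
    """
    region = min(p_intercept, key=p_intercept.get)
    return region, 1.0 - p_intercept[region]


# Hypothetical intercept probabilities by defended region.
baseline = {"region_a": 0.6, "region_b": 0.7, "region_c": 0.8}
upgraded = dict(baseline, region_a=0.9)  # improve only region_a

print(adversary_choice(baseline))  # the adversary targets region_a
print(adversary_choice(upgraded))  # the adversary now targets region_b
```

In this toy case, raising the intercept probability in one region from 0.6 to 0.9 lowers the leakage seen by the adversary only from about 0.4 to about 0.3, because the attack moves to the next-weakest region. This is why the full architecture, rather than a single region, has to be evaluated.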

5.1.3 U.S. Water Security Program

The Environmental Protection Agency’s (EPA’s) Water Security Office works with the Department of Homeland Security (DHS) to protect the U.S. water and wastewater critical infrastructure against all hazards (Travers, 2012). The Water Security Office shares many of the same challenges as the GNDA: it has a complex architecture; it must defend against adaptive adversaries; and the EPA’s Water Security Office lacks regulatory and budgetary authority over national, industrial, state, and local stakeholders. There are approximately 70,000 industrially owned water and wastewater treatment facilities throughout the United States of varying sizes and complexity. In addition to the risk of contamination by a terrorist, environmental hazards must also be included in the evaluation of overall risk. In the United States, major contamination events have not occurred and environmental disasters affecting the water supply are extremely rare; consequently, operational stimuli are rare or nonexistent. Unlike other parts of the EPA, the Water Security Office does not have regulatory authority to enforce security practices of industrial, state, and local stakeholders.

The Water Security Office relies on a combination of models, simulations, and exercises to evaluate the effectiveness of water security programs. The models and simulations are exercised at state and local levels. The results of exercises are used to increase fidelity of the models. Incentives are used to encourage data sharing and involvement of stakeholders. These include developing strong partnerships with associations to gain local trust and establish legitimacy, developing products that all stakeholders can use (e.g., a downloadable, do-it-yourself security risk assessment tool), communicating the importance of managing risk for rare-occurrence, high-consequence events, and highlighting the mutual benefits of implementing water security programs (e.g., contamination detection systems also detect early-stage corrosion problems, which if corrected could allow for cost-effective solutions). The Water Security Office strives to use unclassified and unrestricted information to increase communication and use of products by stakeholders.3 The Water Security Office also recognizes the importance of deterrence through strong security but does not tie performance objectives to this measure.

5.2 PROPOSED GNDA ANALYSIS FRAMEWORK

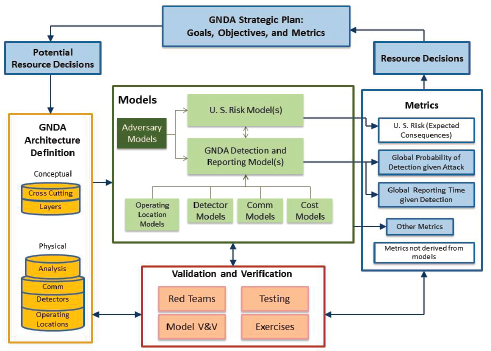

The committee’s proposed GNDA Analysis Framework provides a process for evaluating the notional metrics of the GNDA strategic plan. The framework provides an analytic capability to evaluate GNDA effectiveness and inform cost-effective resource decisions. It is described using the notional strategic plan and metrics examples presented in Chapter 4. Figure 5-1 provides a description of this framework. At the top of this figure are strategic planning, potential resource decisions that need to be made, and decisions that have been made based on analyses of the framework’s metrics. The GNDA Architecture Definition (left side of Figure 5-1) includes conceptual data (e.g., GNDA resources within the layers and crosscutting functions) as well as the physical information (e.g., locations of existing assets, detector types and detector performance, established communications channels, and analysis capabilities). Models include risk models and probabilistic network models but incorporate other models as shown. Metrics (right side of the figure; e.g., notional metrics from Chapter 4) are generated from a set of models for a variety of customers; data for metrics may also be generated from other means (e.g., timelines and budgets). Validation and Verification (V&V; bottom of the figure) is needed for a credible analysis

_____________

3 A copy of the memorandum from Michael H. Shapiro is available on the EPA website at http://water.epa.gov/infrastructure/watersecurity/lawsregs/upload/policytomanageaccesstosensitivedwrelatedinfoApril2005.pdf.

FIGURE 5-1 Proposed GNDA Analysis Framework. This framework has four major components with critical linkages to strategic planning: GNDA Architecture Definition (resource information such as detectors, personnel), Models, Metrics, and Validation and Verification (V&V). This framework guides strategic planning and allows for prioritization of goals and objectives by assessing the impacts of alternative resource allocations.

framework (Figure 5-1). V&V activities can also be used to determine or confirm uncertainties.

5.2.1 Alignment with Strategic Planning and Resource Decisions

One of the key purposes of an analysis framework is to provide iterative assessments to inform the GNDA strategic planning process. Strategic planning is not part of the analysis framework per se but it is connected to it in two ways: the framework can be used to evaluate and prioritize potential resource decisions, and the metrics’ output from the models can be used to demonstrate the effectiveness of previously made resource decisions. Potential resource decisions (e.g., new detectors, expanded training, and changes in budgets) are reflected in the GNDA Architecture Definition (left side of Figure 5-1). GNDA resource decisions also include operational changes (e.g., potential detector resource randomization strategies). The analysis framework evaluates the architecture’s proposed design using metrics; the resulting change in metrics is used to guide resource decisions on potential improvements to meet objectives and goals. The analytical process is iterative and can therefore be used to explore a large number of potential resource decisions. Once a resource decision has been made and implemented, the framework’s metrics evaluate progress made toward meeting the strategic plan’s goals and objectives.
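The iterative loop described above (modify the architecture definition, recompute a metric, compare the change against cost) can be sketched in a few lines. Everything in this sketch is hypothetical: the three-layer architecture, the detection probabilities, the costs, and the simple layered-detection metric are assumptions chosen for illustration and do not represent RNTRA, PEM, or any DNDO model.

```python
from typing import Callable, Dict, List, Tuple

Architecture = Dict[str, float]              # hypothetical: layer name -> detection probability
Candidate = Tuple[str, str, float, float]    # (decision name, layer changed, new probability, cost)


def layered_detection(arch: Architecture) -> float:
    """Illustrative metric: probability that at least one independent layer detects."""
    miss = 1.0
    for p in arch.values():
        miss *= 1.0 - p
    return 1.0 - miss


def rank_decisions(
    arch: Architecture,
    candidates: List[Candidate],
    metric: Callable[[Architecture], float] = layered_detection,
) -> List[Tuple[str, float, float]]:
    """Evaluate each candidate against the baseline; sort by metric gain per unit cost."""
    base = metric(arch)
    results = []
    for name, layer, new_p, cost in candidates:
        trial = dict(arch)      # copy so the baseline architecture is not modified
        trial[layer] = new_p
        gain = metric(trial) - base
        results.append((name, gain, gain / cost))
    return sorted(results, key=lambda r: r[2], reverse=True)


arch = {"exterior": 0.2, "border": 0.5, "interior": 0.3}
candidates = [
    ("new border detectors", "border", 0.7, 10.0),
    ("interior training", "interior", 0.4, 2.0),
]
for name, gain, gain_per_cost in rank_decisions(arch, candidates):
    print(f"{name}: gain={gain:.3f}, gain per unit cost={gain_per_cost:.4f}")
```

With these invented numbers, the border upgrade produces the larger absolute gain (0.112 versus 0.040), but the training option ranks first on gain per unit cost. A real framework would use validated models and several metrics rather than a single ratio.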

5.2.2 GNDA Architecture Definition

The GNDA Architecture Definition is a description of a set of detection and reporting capabilities (or resource allocations) arranged against a specific threat. The definition can be thought of as input data for the models. The purposes of the analysis framework are to evaluate the architecture’s integrated design, assess potential improvements, and identify cost-effective improvements to meet strategic objectives. The GNDA architecture must be defined at the conceptual and physical data levels. There must be a common understanding and definition of existing GNDA architecture data (e.g., detectors, communications links, trained people) to calculate the metrics required for the assessments.

5.2.3 Models

Models are the key components of the proposed analysis framework illustrated in Figure 5-1. The reason for this is simple: as described in Section 5.1, models are essential for understanding the performance of complex systems (see also Appendix D).

Models have several important uses. They integrate data from a wide variety of GNDA-related programs and are used to exercise the GNDA against a variety of adversary stimuli. Models are also used to calculate the metrics based on other architecture data (such as data from tests, historical records, or expert elicitations). The models can be used to evaluate the current architecture, potential improvements, and their costs. Models such as RNTRA and PEM can be used in the committee’s proposed analysis framework. In addition, other models such as adaptive adversary models (see Figure 5-1) will also be needed.

The models may not capture all of the components of the existing GNDA system. In fact, it may not be cost-effective to model all of the resources or programs that are currently listed in the annual reviews. However, the target percentage of GNDA representation should be a conscious decision made by decision makers with input from key stakeholders. DNDO could consider dynamically linking its models to real-time databases maintained in its analysis centers (such as the Joint Analysis Center, JAC, and others) to ensure that these models reflect the architecture as deployed.

5.2.4 Metrics

Metrics are intended to provide information on progress toward meeting objectives and goals of the GNDA strategic plan and evaluating GNDA effectiveness. The framework provides an analytical capability to produce metrics for these purposes. GNDA effectiveness can be characterized using the set of notional GNDA metrics that were introduced in Chapter 4 (see Table 4-1) and other metrics listed in Figure 5-1:

1. U.S. Risk (Expected Consequences). Although not a GNDA responsibility, the proposed analysis framework needs to provide data to support risk analyses. This metric is not listed in Table 4-1 because it does not directly link to the objectives in the notional strategic plan.

2. Effectiveness of Deterrence. As discussed in Chapter 4, measurement of deterrence will be indirect. One could develop a proxy metric by assuming that increased detection capabilities and reduced detection times impose an increased cost on the adversary.

3. Probability of Detecting an Attempt to Bring RN Materials into the United States at or between Ports of Entry. To fully evaluate the GNDA, the architecture must be evaluated against a large number of potential attack plans to identify weaknesses that an adaptive adversary may try to exploit. This is a fundamental effectiveness measure.

4. Detection Alert Time. Threat detections are necessary but not sufficient for assessing GNDA effectiveness. The sensor or sensor operator needs to send threat detection information to key operational command-and-control centers in time for decision makers to analyze, determine, and authorize appropriate actions. Alert times can be modeled in a network model.

As discussed in Chapter 4, in practice each objective would have at least one metric developed against it.
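A minimal sketch of how metrics 3 and 4 might be computed is given below. It rests on several assumptions that are not in the report: each attack pathway crosses a fixed sequence of independent detection layers, an adaptive adversary picks the pathway with the lowest detection probability, and alert time is the fastest route through a small reporting network found with Dijkstra's algorithm. All names, probabilities, and delays (in minutes) are hypothetical.

```python
import heapq
from typing import Dict, List, Tuple


def pathway_detection(layer_p: Dict[str, float], pathway: List[str]) -> float:
    """Probability of at least one detection along a pathway of independent layers."""
    miss = 1.0
    for layer in pathway:
        miss *= 1.0 - layer_p[layer]
    return 1.0 - miss


def weakest_pathway(layer_p: Dict[str, float],
                    pathways: Dict[str, List[str]]) -> Tuple[str, float]:
    """Pathway an adaptive adversary would choose, with its detection probability."""
    scores = {name: pathway_detection(layer_p, layers) for name, layers in pathways.items()}
    weakest = min(scores, key=scores.get)
    return weakest, scores[weakest]


def alert_time(links: Dict[str, List[Tuple[str, float]]], source: str, target: str) -> float:
    """Shortest total delay from source to target (Dijkstra); inf if unreachable."""
    best = {source: 0.0}
    queue = [(0.0, source)]
    while queue:
        elapsed, node = heapq.heappop(queue)
        if node == target:
            return elapsed
        if elapsed > best.get(node, float("inf")):
            continue  # stale queue entry
        for nxt, delay in links.get(node, []):
            candidate = elapsed + delay
            if candidate < best.get(nxt, float("inf")):
                best[nxt] = candidate
                heapq.heappush(queue, (candidate, nxt))
    return float("inf")


layer_p = {"port_of_entry": 0.8, "between_ports": 0.3, "interior": 0.4}
pathways = {
    "through_port": ["port_of_entry", "interior"],
    "between_ports": ["between_ports", "interior"],
}
links = {
    "sensor": [("operator", 2.0)],
    "operator": [("local_ops", 5.0), ("national_center", 20.0)],
    "local_ops": [("national_center", 10.0)],
}
print(weakest_pathway(layer_p, pathways))
print(alert_time(links, "sensor", "national_center"))  # 17.0
```

In this example the pathway between ports of entry is the weakest (detection probability of about 0.58 versus 0.88 through a port), and the fastest alert route runs through the local operations node. A probabilistic network model would replace the fixed delays with distributions and would consider many more attack plans.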

5.2.5 Validation and Verification Activities

V&V play a critical role in the proposed analysis framework: validation ensures that the GNDA models capture the architecture and detection capabilities as they exist and verification confirms that the model is correctly performing the calculations that it claims to be performing. Independent V&V activities are needed to increase the fidelity of and confidence in the models, architecture data, and metrics.4

The following are needed for GNDA V&V:

• A complete technical description of the model and data (NRC, 2008);

• Architecture designs, models and metrics validated by stakeholders to capture existing capabilities and proposed changes; and

• Credible peer review of the model.

Although the GNDA architecture design, models and metrics cannot be operationally evaluated (similar to BMD and strategic nuclear defense), specific parts can be validated using operational and developmental test data, red team assessments, exercises, and pilot activities. Several current examples of these types of activities could be used for validation.

DNDO’s Red Team and Net Assessments (Oliphant, 2012) and the Operations Support Directorates (OSD) (Fisher, 2012) train, exercise and evaluate specific components of the domestic portion of the GNDA.

Other GNDA stakeholders participate in large-scale exercises such as National Level Exercises (NLEs), Alpha Omega or Marble Challenge 2010 and National Nuclear Security Administration’s (NNSA) exercises that secure RDD threats.5 Such exercises are performed regularly to demonstrate federal, state, and local coordination capabilities. Data from these exercises can be incorporated into the analysis framework to validate models and data. The committee notes that some of the nuclear counter-terrorism activities that these exercises evaluate may fall outside of the scope of the GNDA (see Observation 2), but that there is still benefit in these exercises for state and local communication and coordination and standard operating procedures (SOP) development.

Operational data can be used for V&V purposes as well. An example of non-domestic operational data that could be used for validation is data from the Second Line of Defense programs (Leffer, 2012). The committee judges that the results of these activities, which could serve to validate an analysis framework, are not currently incorporated into the GNDA architecture definitions, models, or metrics.

_____________

4 For example, the Transportation Security Administration recently had an independent assessment performed on its analysis tools (Morral et al., 2012).

5 See http://nnsa.energy.gov/mediaroom/pressreleases/bearcatexercise080912. Press release from the DOE’s National Nuclear Security Administration. Accessed April 29, 2013.

5.4 FINDINGS AND RECOMMENDATIONS

The committee produced two findings and one recommendation in response to the second charge of the study.

FINDING 2.1:

A new GNDA Analysis Framework is needed to assess the GNDA effectiveness as shown in Figure 5-1. The critical components of the framework are the following:

1. A GNDA Strategic Plan that contains outcome-oriented, broadly scoped goals, objectives, and metrics and is directly connected to the components listed below;

2. A GNDA Architectural Definition that provides the conceptual and physical descriptions of the GNDA, and that defines needed input data for the models described below;

3. A suite of GNDA models that incorporate potential adversary objectives, accurately represent existing and potential architecture capabilities, and calculate the metrics described below;

4. Metrics that can gauge overall GNDA effectiveness and assess potential GNDA resource decisions to increase GNDA effectiveness; and

5. A Validation and Verification (V&V) program that evaluates the data used in the GNDA architecture definition, models, and metrics. A robust V&V program enhances the credibility of the analysis framework.

FINDING 2.2:

Current DNDO modeling, testing, red teaming, analysis, and training capabilities provide a foundation for evaluating components of the GNDA, but these current capabilities are insufficient for validating and verifying the overall effectiveness of the GNDA. Evaluating the effectiveness of the overall GNDA requires integrated and continuous model-based scenario testing, red teaming, analysis, peer review, and training, supplemented with intelligence awareness.

RECOMMENDATION 2.1:

DNDO should develop a new GNDA Analysis Framework similar to the framework proposed by the committee. This framework defines an analytic process that clarifies the connections among strategic planning, architectural definition, models, metrics, and validation and verification efforts. Such an analysis framework can provide credible assessments of overall GNDA effectiveness.

A new analysis framework can also provide a credible, transparent, and actionable GNDA cost-benefit modeling capability to support resource allocation decision making within GNDA federal agencies and collaborating nations that support the GNDA.