9

Neural Networks

Computational Neuroscience: A Window to Understanding How the Brain Works

"The brain computes!" declared Christof Koch, who explained at the Frontiers of Science symposium how a comparatively new field, computational neuroscience, has crystallized an increasingly coherent way of examining the brain. The term neural network embraces more than computational models. It now may be used to refer to any of a number of phenomena: a functional group of neurons in one's skull, an assembly of nerve tissue dissected in a laboratory, a rough schematic intended to show how certain wiring might provide a gizmo that could accomplish certain feats brains—but not conventional serial computers—are good at, or a chip intended to replicate features of any or all of these using analog or digital very-large-scale integrated circuit technology. Neural networks are more than a collection of pieces: they actually embody a paradigm for looking at the brain and the mind.

Only in the last decade has the power of neural net models been generally acknowledged even among neurophysiologists. The paradigm that now governs the burgeoning field of computational neuroscience has strong roots among both theoreticians and experimentalists, Koch pointed out. With the proviso that neural networks must be designed with some fidelity to neurobiological constraints, and the caveat that their structure is analogous to the brain's in only the very broadest sense, more and more traditional neuroscientists are finding their own research questions enhanced and provoked by neural net modeling. Their principal motivation, continued Koch, "is the realization that while biophysical, anatomical, and physiological data are necessary to understand the brain, they are, unfortunately, not sufficient."

What distinguishes the collection of models and systems called neural networks from "the enchanted loom" of neurons in the human brain? (Pioneer neuroscientist Sir Charles Sherrington coined this elegant metaphor about half a century ago.) That pivotal question will not be answered with one global discovery, but rather by the steady accumulation of experimental revelations and the theoretical insights they suggest.

Koch exemplifies the computational neuroscientist. Trained as a physicist rather than as an experimental neurobiologist, he might be found at his computer keyboard creating code, in the laboratory prodding analog very-large-scale integrated (VLSI) chips mimicking part of the nerve tissue, or sitting at his desk devising schema and diagrams to explain how the brain might work. He addressed the Frontiers symposium on the topic "Visual Motion: From Computational Analysis to Neural Networks and Perception," and described to his assembled colleagues some of the "theories and experiments I believe crucial for understanding how information is processed within the nervous system." His enthusiasm was manifest and his speculations about how the brain might work provocative: "What is most exciting about this field is that it is highly interdisciplinary, involving areas as diverse as mathematics, physics, computer science, biophysics, neurophysiology, psychophysics, and psychology. The Holy Grail is to understand how we perceive and act in this world—in other words, to try to understand our brains and our minds."

Among those who have taken up the quest are the scientists who gathered for the Frontiers symposium session on neural networks, most of whom share a background of exploring the brain by looking at the visual system. Terrence Sejnowski, an investigator with the Howard Hughes Medical Institute at the Salk Institute and University of California, San Diego, has worked on several pioneering neural networks and has also explored many of the complexities of human vision. Shimon Ullman at the Massachusetts Institute of Technology concentrates on deciphering the computations used by the visual system to solve the problems of vision. He wrote an early treatise on the subject over a decade ago and worked with one of the pioneers in the field, David Marr. He uses computers in the search but stated his firm belief that such techniques need to "take into account the known psychological and biological data." Anatomists, physiologists, and other neuroscientists have developed a broad body of knowledge about how the brain is wired together with its interconnected neurons and how those neurons communicate the brain's fundamental currency of electricity. Regardless of their apparent success, models of how we think that are not consistent with this knowledge engender skepticism among neuroscientists.

Fidelity to biology has long been a flashpoint in the debate over the usefulness of neural nets. Philosophers, some psychologists, and many in the artificial intelligence community tend to devise and to favor "top-down" theories of the mind, whereas working neuroscientists who experiment with brain tissue approach the question of how the brain works from the "bottom up." The limited nature of top-down models, and the success of neuroscientists in teasing out important insights by direct experiments on real brain tissue, have swung the balance over the last three decades, such that most modelers now pay much more than lip service to what they call "the biological constraints." Also at the Frontiers symposium was William Newsome. Koch described him as an experimental neurophysiologist who embodies this latter approach and whose "recent work on the brains of monkeys has suggested some fascinating new links between the way nerve cells behave and the perception they generate."

Increasingly, modelers are trying to mimic what is understood about the brain's structure. Said James Bower, a session participant who is an authority on olfaction and an experimentalist and modeler also working at the California Institute of Technology, "The brain is such an exceedingly complicated structure that the only way we are really going to be able to understand it is to let the structure itself tell us what is going on." The session's final member, Paul Adams, an investigator with the Howard Hughes Medical Institute at SUNY, Stony Brook, is another neuroscientist in the bottom-up vanguard looking for messages from the brain itself, trying to decipher exactly how individual nerve cells generate the electrical signals that have been called the universal currency of the brain. On occasion, Adams collaborates with Koch to make detailed computer models of cell electrical behavior.

Together the session's participants embodied a spectrum of today's neuroscientists—Koch, Sejnowski, and Ullman working as theoreticians who are all vitally concerned with the insights from neurobiology provided by their experimentalist colleagues, such as Bower and Adams. From these diverse viewpoints, they touched on many of the elements of neural nets—primarily using studies of vision as a context—and provided an overview of the entire subject: its genesis as spurred by the promise of the computer, how traditional neuroscientists at first resisted and have slowly become more open to the promise of neural networks, how the delicate and at times hotly debated interplay of neuroscience and computer modeling works, and where the neural net revolution seems headed.

COMPUTATION AND THE STUDY OF THE NERVOUS SYSTEM

Over the last 40 years, the question has often been asked: Is the brain a computer? Koch and his colleagues in one sense say no, lest their interlocutors assume that by computer is meant a serial digital machine based on a von Neumann architecture, which clearly the brain is not. In a different sense they say yes, however, because most tasks the brain performs meet virtually any definition of what a computer must do, including the famous Turing test. English mathematician Alan Turing, one of the pioneers in the early days of computation, developed an analysis of what idealized computers were doing, generically. Turing's concept employed an endless piece of tape containing symbols that its creators could program to either erase or print. What came to be called the Turing machine, wrote physicist Heinz Pagels, reduces logic "to its completely formal kernel. . . . If something can be calculated at all, it can be calculated on a Turing machine" (Pagels, 1988, p. 294).
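The tape-and-symbols idea can be made concrete with a very small simulator. The following is a minimal sketch, not Turing's own formulation: the function name `run_turing_machine`, the rule-table layout, the `"start"` and `"halt"` state names, and the `_` blank symbol are all assumptions chosen for illustration.

```python
def run_turing_machine(rules, tape, state="start", blank="_", max_steps=1000):
    """Run a one-tape machine and return the final tape contents.

    rules maps (state, symbol) -> (symbol_to_write, move, next_state),
    where move is -1 (left) or +1 (right). The run stops in the state
    "halt", when no rule applies, or after max_steps steps.
    """
    cells = dict(enumerate(tape))    # sparse tape: position -> symbol
    head = 0
    for _ in range(max_steps):
        if state == "halt":
            break
        symbol = cells.get(head, blank)
        if (state, symbol) not in rules:
            break                    # no applicable rule: stop
        write, move, state = rules[(state, symbol)]
        cells[head] = write          # print (or erase, by writing the blank)
        head += move
    if not cells:
        return ""
    span = range(min(cells), max(cells) + 1)
    return "".join(cells.get(i, blank) for i in span).strip(blank)


# Hypothetical example machine: add one to a number written in unary.
INCREMENT = {
    ("start", "1"): ("1", +1, "start"),   # skip over the existing marks
    ("start", "_"): ("1", +1, "halt"),    # print one more mark, then stop
}

print(run_turing_machine(INCREMENT, "111"))   # prints 1111
```

The whole machine is the rule table; changing the table changes the computation, while the read, write, and move loop stays the same.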

Koch provided a more specific, but still generic, definition: a computing system maps a physical system (such as beads on an abacus) to a more abstract system (such as the natural numbers); then, when presented with data, it responds on the basis of the representations according to some algorithm, usually transforming the original representations. By this definition the brain, indubitably, is a computer. The world is represented to us through the medium of our senses, and, through a series of physical events in the nervous system, brain states emerge that we take to be a representation of the world. That these states are not identical with the world becomes more manifest the further up the ladder of higher thought we climb. Thus there is clearly a transformation. Whether the algorithm that describes how it comes about will ever be fully specified remains for the future of neuroscience. That the brain is performing what Koch calls "the biophysics of computation," however, seems indisputable.

Computational neuroscience has developed such a momentum of intriguing and impressive insights and models that the formerly lively debate (Churchland and Churchland, 1990; Searle, 1990) over whether or not the brain is a computer is beginning to seem somewhat academic and sterile. Metaphorically and literally, computers represent, process, and store information. The brain does all of these things, and with a skill and overall speed that almost always surpass even the most powerful computers produced thus far.

Koch has phrased it more dramatically: "Over the past 600 million years, biology has solved the problem of processing massive amounts of noisy and highly redundant information in a constantly changing environment by evolving networks of billions of highly interconnected nerve cells." Because these information processing systems have evolved, Koch challenged, "it is the task of scientists to understand the principles underlying information processing in these complex structures." The pursuit of this challenge, as he suggested, necessarily involves multidisciplinary perspectives not hitherto embraced cleanly under the field of neuroscience. "A new field is emerging," he continued: "the study of how computations can be carried out in extensive networks of heavily interconnected processing elements, whether these networks are carbon- or silicon-based."

NEURAL NETWORKS OF THE BRAIN

In the 19th century, anatomists looking at the human brain were struck with its complexity (the common 20th-century metaphor is its wiring). Some of the most exquisite anatomical drawings were those by the Spanish anatomist Ramón y Cajal, whose use of Golgi staining revealed under the microscope the intricate branching and myriad connections of the brain's constituent parts. These elongated cells came to be called neurons. Throughout the history of neuroscience, the spotlight has swung back and forth, onto and away from these connections and the network they constitute. The question is how instrumental the wiring is to the brain states that develop. On one level, for decades, neuroscientists have studied the neuronal networks of sea slugs and worms up through cats and monkeys. This sort of analysis often leads to traditional, biological hypothesizing about the exigencies of adaptation, survival, and evolutionary success. But in humans, where neuronal networks are more difficult to isolate and study, another level of question must be addressed. Could Mozart's music, Galileo's insight, and Einstein's genius, to mention but a few examples, be explained by brains "wired together" in ways subtly but importantly different from those of other humans?

The newest emphasis on this stupendously elaborate tapestry of nerve junctures is often referred to as connectionism, distinguished by its credo that deciphering the wiring diagram of the brain, and understanding what biophysical computations are accomplished at the junctions, should lead to dramatic insights into how the overall

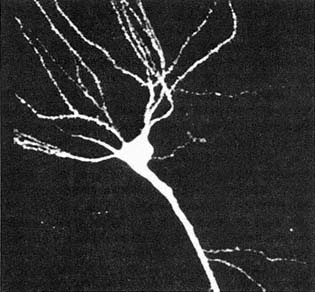

Figure 9.1 Nerve cell from the hippocampus, reconstituted by confocal microscopy. (Courtesy of P. Adams.)

system works (Hinton and Anderson, 1981). How elaborate is it? Estimates place the number of neurons in the central nervous system at between 1010 and 1011; on average, said Koch, "each cell in the brain is connected with between 1,000 and 10,000 others." A good working estimate of the number of these connections, called synapses, is a hundred million million, or 1014.
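As a rough consistency check on these figures, and assuming only that the total number of connections is the number of neurons multiplied by the connections per neuron, the quoted ranges bracket the working estimate:

$$
10^{10} \times 10^{3} = 10^{13}
\qquad\text{to}\qquad
10^{11} \times 10^{4} = 10^{15},
$$

with "a hundred million million" falling in the middle of that range:

$$
10^{2} \times 10^{6} \times 10^{6} = 10^{14}.
$$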

Adams, a biophysicist, designs models and conducts experiments to explore the details of how the basic electrical currency of the brain is minted in each individual neuron (Figure 9.1). He has no qualms referring to it as a bottom-up approach, since it has become highly relevant to computational neuroscience ever since it became appreciated "that neurons do not function merely as simple logical units" or on-off switches as in a digital computer. Rather, he explained, a large number of biophysical mechanisms interact in a highly complex manner that can be thought of as computing a nonlinear function of a neuron's inputs. Furthermore, depending on the state of the cell, the same neuron can compute several quite different functions of its inputs. The "state" Adams referred to can be regulated both by chemical modulators impinging on the cell and by the cell's past history.

To demonstrate another catalog of reasons why the brain-as-digital-computer metaphor breaks down, Adams described three types of electrical activity that distinguish nerve cells and their connections from passive wires. One type of activity, synaptic activity, occurs at the connections between neurons. A second type, called the action potential, represents a discrete logical pulse that travels in a kind of chain reaction along a linear path down the cell's axon (see Box 9.1). The third type of activity, subthreshold activity, provides the links between the other two.

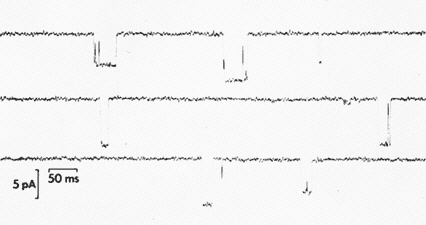

Each type of electrical activity is caused by "special protein molecules, called ion channels, scattered throughout the membrane of the nerve cells," said Adams. These molecules act as a sort of tiny molecular faucet, he explained, which, when turned on, "allows a stream of ions to enter or leave the cell (Figure 9.2). The molecular faucet causing the action potential is a sodium channel. When this channel is open, sodium streams into the cell," making its voltage positive. This voltage change causes sodium faucets further down the line to open in turn, leading to a positive voltage pulse that travels along the neuron's axon to the synapses that represent that cell's connections to other neurons in the brain. This view of "the action potential," he said, "was established 30 years ago and is the best-founded theory in neurobiology."

The other types of activity, synaptic and subthreshold, "are more complex and less well understood," he said, but it is clear that they, too, involve ion channels. These ion channels are of two types, which either allow calcium to stream into the cell or allow potassium to leave it. Adams has explored the mechanisms in both types of channel. Calcium entering a neuron can trigger a variety of important effects, causing chemical transmitters to be released to the synapses, or triggering potassium channels to open that initiate other subthreshold activity, or producing the long-term changes at synapses that underlie memory and learning.

The subcellular processes that govern whether—and how rapidly—a neuron will fire occur on a time scale of milliseconds but can be visualized using a scanning laser microscope. Activated by additional channels that allow double-charged calcium ions (Ca++) to rapidly enter the cell are two types of potassium channels. One of these channels quickly terminates the sodium inrush that is fueling the action potential. The other one responds much more slowly, making it more difficult for the cell to fire spikes in quick succession. Yet other types of potassium channels are triggered to open, not by calcium, but rather in response to subthreshold voltage changes. Called "M" and "D," these channels can either delay or temporarily prevent

|

BOX 9.1 HOW THE BRAIN TRANSMITS ITS SIGNALS

The Source and Circuitry of the Brain's Electricity

Where does the brain's network of neurons come from? Is it specified by DNA, or does it grow into a unique albeit human pattern that depends on each person's early experiences? These alternative ways of framing the question echo a debate that has raged throughout the philosophical history of science since early in the 19th century. Often labelled nature vs. nurture, the controversy finds perhaps its most vibrant platform in considering the human brain. How much the DNA can be responsible for the brain's wiring has an inherent limit. Since there are many more synaptic connections—"choice points"—in a human brain network during its early development than there are genes on human chromosomes, at the very least genes for wiring must specify not synapse-by-synapse connections, but larger patterns. The evolution of the nervous system over millions of years began with but a single cell sensing phenomena near its edge, and progressed to the major step that proved to be the pivotal event leading to the modern human brain—the development of the cerebral cortex. Koch described the cortex as a "highly convoluted, 2-millimeter-thick sheet of neurons. If unfolded, it would be roughly the size of a medium pizza, about 1500 square centimeters." It is the cerebral cortex that separates the higher primates from the rest of living creatures, because most thinking involves the associative areas located in its major lobes. A cross section of this sheet reveals up to six layers, in each of which certain types of neurons predominate. The density of neurons per square millimeter of cortical surface Koch put at about 100,000. "These are the individual computational elements, fundamentally the stuff we think with," he explained. Although there are many different types of neurons, they all function similarly. 
And while certain areas of the brain and certain networks of neurons have been identified with particular functions, it is the basic activity of each of the brain's 10 billion neurons that constitutes the working brain. Neurons either fire or they do not, and an electrical circuit is thereby completed or interrupted. Thus, how a single neuron works constitutes the basic foundation of neuroscience. The cell body (or soma) seems to be the ultimate destination of the brain's electrical signals, although the signals first arrive at the thousands of dendrites that branch off from each cell's soma. The nerve cell sends its signals through a different branch, called an axon. Only one main axon comes from each cell body, although it may branch many times into collaterals in order to reach all of the cells to which it is connected for the purpose of sending its electrical signal. |

|

Electricity throughout the nervous system flows in only one direction: when a cell fires its "spike" of electricity, it is said to emit an action potential that travels at speeds up to about 100 miles per hour. A small electric current begins at the soma and travels down the axon, branching without any loss of signal as the axon branches. The axon that is carrying the electric charge can run directly to a target cell's soma, but most often it is the dendrites branching from the target cell that meet the transmitting axon and pick up its signal. These dendrites then communicate the signal down to their own soma, and a computation inside the target cell takes place. Only when the sum of the inputs from the hundreds or thousands of the target cell's dendrites achieves a threshold inherent to that particular cell will the cell fire. These inputs, or spikes, are bursts of current that vary only in their pulse frequency, not their amplitude. Each time a given nerve cell fires, its signal is transmitted down its axon to the same array of cells. Every one of the $10^{15}$ connections in the nervous system is known as a synapse, whether joining axon to dendrite or axon to cell body. One final generalization about the brain's electricity: when a cell fires and transmits its characteristic (amplitude) electrical message, it may vary in two ways. First, it can emit a more frequent pulse. Second, when the action potential reaches the synaptic connections that the axon makes with other cells, it can either excite the target cell's electrical activity or inhibit it. Every cell has its own inherent threshold, which must be achieved in order for the cell to spike and send its message to all of the other cells to which it is connected. Whether that threshold is reached depends on the cumulative summation of all of the electrical signals—both excitatory and inhibitory—that a cell receives in a finite amount of time. 
Simple Chemistry Provides the Brain's Electricity

The fluid surrounding a neuron resembles dilute seawater, with an abundance of sodium and chloride ions, and dashes of calcium and magnesium. The cytoplasm inside the cell is rich in potassium ions, and many charged organic molecules. The neuron membrane is a selective barrier separating these two milieus. In the resting state only potassium can trickle through, which as it escapes creates a negative charge on the cytoplasm. The potential energy represented by this difference in polarity for each cell is referred to as its resting potential and can be measured at about a tenth of a volt. Twenty such cells match the electrical energy in a size-D flashlight battery. On closer inspection, the cell membrane actually consists of myriad channels, each of which has a molecular structure configured to |

|

let only one particular ion substance pass through its gate. When the nerve is in its resting state, most of these gates are closed. The nerve is triggered to fire when a critical number of its excitatory synapses receive neurotransmitter signals that tip the balance of the cell's interior charge, causing the gates on the sodium channels to open. Sodium ions near these gates rush in for simple chemical reasons, as their single positive charge left by the missing outer-shell electron is attracted to the negative ions inside the cell. This event always begins near the cell body. However, as soon as the inrushing sodium ions make the polarity more positive in that local region, nearby sodium gates further down the axon are likewise triggered to open. This process of depolarization, which constitutes the electrical action potential, is self-perpetuating. No matter how long the journey down the axon—and in some nerve cells it can be more than a meter—the signal strength is maintained. Even when a damaged region of the cell interrupts the chemical chain reaction, the signal resumes its full power as it continues down the axon beyond the compromised region. The duration of the event is only about 0.001 second, as the system quickly returns to equilibrium. The foregoing sketch of the chemistry explains the excitatory phase and why cells do fire. Conversely, many synapses are inhibitory and instead of decreasing the cell's negative polarity actually increase it, in part by opening chloride gates. This explains, in part, why cells do not fire. This picture of how neurons fire leads to the explanation of why a given cell will begin the action-potential chain reaction. Only when its polarity changes sufficiently to open enough of the right kind of gates to depolarize it further will the process continue. 
The necessary threshold is achieved by a summation of impulses, which occur for either or both of two reasons: either the sending neuron fires rapidly in succession or a sufficiently large number of excitatory neurons are firing. The result is the same in either case: when the charge inside the cell decreases to a certain characteristic value, the sodium gates start to open and the neuron depolarizes further. Once begun, this sequence continues throughout the axon and all of its branches, and thereby transforms the receiving nerve cell into a transmitting one, whose inherent electrical signal continues to all of the other cells connected to it. Thus, from one neuron to another, a signal is transmitted and the path of the electricity defines a circuit. SOURCES: Changeux, 1986, pp. 80ff.; Bloom and Lazerson, 1988, pp. 36–40. |

Figure 9.2 Single ion channels in a membrane opening and closing. (Courtesy of P. Adams.)

the cell's firing that active synapses would otherwise cause. Adams and his colleagues have shown that many of these calcium and potassium channels are regulated by "neuromodulators," chemicals released by active synapses.
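The effect of the slow potassium channel, which makes it harder for the cell to fire spikes in quick succession, can be illustrated with a toy simulation. This is a minimal sketch under strong simplifying assumptions, not Adams's model: it uses a leaky integrate-and-fire unit rather than real ion-channel kinetics, and the function name and every parameter value (`tau_v`, `tau_k`, `k_jump`, `threshold`) are hypothetical, in arbitrary units.

```python
def simulate_adapting_neuron(input_current, steps=1000, dt=1.0,
                             tau_v=20.0, tau_k=200.0, k_jump=0.3,
                             threshold=1.0):
    """Leaky integrate-and-fire unit with a slow potassium-like brake.

    dt and the tau values are in milliseconds; everything else is in
    arbitrary units. Returns the list of spike times in milliseconds.
    """
    v = 0.0      # membrane voltage relative to rest
    k = 0.0      # slow adaptation current (stands in for the slow K channel)
    spikes = []
    for step in range(steps):
        # Voltage leaks toward rest, is driven up by the input and down by k.
        v += dt * (-v + input_current - k) / tau_v
        # The adaptation current decays slowly between spikes.
        k += dt * (-k / tau_k)
        if v >= threshold:
            spikes.append(step * dt)
            v = 0.0          # abrupt reset, standing in for the fast K channel
            k += k_jump      # each spike strengthens the slow brake
    return spikes


spikes = simulate_adapting_neuron(input_current=2.0)
first_gap = spikes[1] - spikes[0]
last_gap = spikes[-1] - spikes[-2]
print(f"first interval: {first_gap} ms, last interval: {last_gap} ms")
```

With a constant input the intervals between spikes lengthen as the slow current builds up, which is the qualitative behavior described above. Setting `k_jump=0.0` removes the brake and gives evenly spaced spikes.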

Working energetically from the bottom up, "the cellular or molecular neurobiologist may appear to be preoccupied by irrelevant details" that shed no light on high-level functions, Adams conceded. "Knowing the composition of the varnish on a Stradivarius is irrelevant to the beauty of a chaconne that is played with it. Nevertheless," he asserted, "it is clear that unless such details are just right, disaster will ensue." Any more global theory of the brain or the higher or emergent functions it expresses must encompass theories that capture these lower-level processes. Adams concluded by giving "our current view of the brain" as seen from the perspective of an experimental biophysicist: "a massively parallel analog electrochemical computer, implementing algorithms through biophysical processes at the ion-channel level."

FROM COMPUTERS TO ARTIFICIAL INTELLIGENCE TO CONNECTIONISM

Models—Attempts to Enhance Understanding

The complexity of the brain's structure and its neurochemical firing could well stop a neural net modeler in his or her tracks. If the factors influencing whether just one neuron will fire are so complex, how can a model remain "faithful to the biological constraints," as many neuroscientists insist it must, and yet still prove useful? This very pragmatic question actually provides a lens to examine the development of neuroscience throughout the computational era since the late 1940s, for the advent of computers ignited a heated controversy fueled by the similarities—and the differences—between brains and computers, between thinking and information processing. Adams believes that neuroscience is destined for such skirmishes and growing pains because—relative to the other sciences—it is very young. "Really it is only since World War II that people have had any real notion about what goes on there at all," he pointed out.

Throughout this history, an underlying issue persists: what is a model, and what purpose does it serve? Much of the criticism of computer models throughout the computational era has included the general complaint that models do not reflect the brain's complexity fairly, and current neural networkers are sensitive to this issue. "It remains an interesting question," said Ullman, "whether your model represents a realistic simplification or whether you might really be throwing away the essential features for the sake of a simplified model." Koch and his colleague Idan Segev, however, preferred to reframe the question, addressing not whether it is desirable to simplify, but whether it is possible to avoid doing so (they say it is not) and—even if it were—to ask what useful purpose it would serve. Their conclusion was that "even with the remarkable increase in computer power during the last decade, modeling networks of neurons that incorporate all the known biophysical details is out of the question. Simplifying assumptions are unavoidable" (Koch and Segev, 1989, p. 2).

They believe that simplifications are the essence of all scientific models. "If modeling is a strategy developed precisely because the brain's complexity is an obstacle to understanding the principles governing brain function, then an argument insisting on complete biological fidelity is self-defeating," they maintained. In particular, the brain's complexity beggars duplication in a model, even while it begs for a metaphorical, analogical treatment that might yield further scientific insight. (Metaphors have laced the history of neuroscience, from Sherrington's enchanted loom to British philosopher Gilbert Ryle's ghost in the machine, a construct often employed in the effort to embody emergent properties of mind by those little concerned with the corporeal nature of the ghost that produced them.) But Koch and his colleagues "hope that realistic models of the cerebral cortex do not need to incorporate explicit information about such minutiae as the various ionic channels [that Adams is studying]." The style of simplifying assumptions they suggest could be useful "[might be analogous to] . . . Boyle's gas law, where no mention is made of the $10^{23}$ or so molecules making up the gas." Physics, chemistry, and other sciences concerned with minute levels of quantum detail, or with the location of planets and stars in the universe, have experienced the benefit of simplified, yet still accurate, theories.

Writing with Sejnowski and the Canadian neurophilosopher Patricia Churchland, Koch has made the point that scientists have already imposed a certain intellectual structure onto the study of the brain, one common in scientific inquiry: analysis at different levels (Sejnowski et al., 1988). In neuroscience, this has meant the study of systems as small as the ionic channels (on the molecular scale, measured in nanometers) or as large as working systems made up of networks and the maps they contain (on the systems level, which may extend over 10 centimeters). Spanning this many orders of magnitude requires that the modeler adapt his or her viewpoint to the scale in question. Evidence suggests that the algorithms that are uncovered are likely to bridge adjacent levels. Further, an algorithm may be driven by the modeler's approach. Implementing a specific computational task at a particular level is not necessarily the same as asking what function is performed at that level. The former focuses on the physical substrate that is accomplishing the computation, the latter on what functional role the system is accomplishing at that level. In either case, one can look "up" toward emergent brain states, or "down" toward the biophysics of the working brain. Regardless of level or viewpoint, it is the computer—both as a tool and as a concept—that often enables the modeler to proceed.

Computation and Computers

Shortly after the early computers began to crunch their arrays of numbers and Turing's insights began to provoke theorists, Norbert Wiener's book Cybernetics was published, and the debate was joined over minds vs. machines, and the respective province of each. Most of the current neural net modelers developed their outlooks under the paradigm suggested above; to wit, the brain's complexity is best approached not by searching for some abstract unifying algorithm that will provide a comprehensive theory, but rather by devising models inspired by how the brain works—in particular, how it is wired together, and what happens at the synapses. Pagels included this movement, called connectionism, among his new "sciences of complexity": scientific fields that he believed use the computer to view and model the world in revolutionary and essential ways (Pagels, 1988). He dated the schism between what he called computationalists and connectionists back to the advent of the computer (though these terms have only assumed their current meaning within the last decade).

At the bottom of any given revival of this debate was often a core belief as to whether the brain's structure was essential to thought—whether, as the connectionists believed, intelligence is a property of the design of a network. Computationalists wanted to believe that their increasingly powerful serial computers could manipulate symbols with a dexterity and subtlety that would be indistinguishable from those associated with humans, hence the appeal of the Turing test for intelligence and the birth of the term (and the field) artificial intelligence. A seminal paper by Warren McCulloch and Walter H. Pitts in 1943 demonstrated that networks of simple McCulloch-Pitts neurons are Turing-universal: anything that can be computed can be computed with such networks (Allman, 1989). Canadian neuroscientist Donald Hebb in 1949 produced a major study on learning and memory that suggested that the connections between neurons in the brain actually change—strengthening through repeated use—and therefore a network configuration could "learn," that is, be enhanced for future use.
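The two ideas in the preceding paragraph can be made concrete with a small sketch. The code below is an illustration only, not a reconstruction of the 1943 or 1949 formulations: the function names (`mp_neuron`, `hebb_update`), the hand-chosen weights and thresholds, and the learning rate are all assumptions introduced here. It shows threshold units acting as logic gates, and a simple Hebbian rule in which a connection strengthens each time the cells on both sides of it are active together.

```python
def mp_neuron(inputs, weights, threshold):
    """Threshold unit: output 1 if the weighted input sum reaches the threshold."""
    total = sum(w * x for w, x in zip(weights, inputs))
    return 1 if total >= threshold else 0


# Logic gates built from single threshold units (weights chosen by hand).
def gate_and(a, b):
    return mp_neuron([a, b], [1, 1], threshold=2)


def gate_or(a, b):
    return mp_neuron([a, b], [1, 1], threshold=1)


def gate_not(a):
    return mp_neuron([a], [-1], threshold=0)


def hebb_update(weight, pre, post, rate=0.1):
    """Illustrative Hebbian rule: the connection grows when both cells are active."""
    return weight + rate * pre * post


if __name__ == "__main__":
    for a in (0, 1):
        for b in (0, 1):
            print(a, b, "AND:", gate_and(a, b), "OR:", gate_or(a, b))
    w = 0.0
    for _ in range(5):  # five paired activations of the two cells
        w = hebb_update(w, pre=1, post=1)
    print("weight after repeated use:", round(w, 3))
```

Because AND, OR, and NOT suffice to build any Boolean circuit, units of this kind can in principle be wired into arbitrarily complex logical machinery, which is the intuition behind the universality claim.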

Throughout the 1950s the competition for funding and converts continued between those who thought fidelity to the brain's architecture was essential for successful neural net models and those who believed artificial intelligence need not be so shackled. In 1962, in Frank Rosenblatt's Principles of Neurodynamics, a neural net model conceptualizing something called perceptrons won much favor and attention (Allman, 1989). When Marvin Minsky and Seymour Papert published a repudiation of perceptrons in 1969, the artificial intelligence community forged ahead of the neural net modelers. The Turing machine idea empowered the development of increasingly abstract models of thought, where the black box of the mind was considered more a badge of honor than a concession to ignorance of the functioning nervous system. Nearly a decade passed before it was widely recognized that the valid criticism of perceptrons did not generalize to more sophisticated neural net models. Meanwhile one of Koch's colleagues, computer scientist Tomaso Poggio, at the Massachusetts Institute of Technology, was collaborating with David Marr to develop a very compelling model of stereoscopic depth perception that was clearly connectionist in spirit. "Marr died about 10 years ago," said Koch, but left a strong legacy for the field. Although he was advocating the top-down approach, his procedures were "so tied to biology" that he helped to establish what was to become a more unified approach.
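The point about perceptrons can be illustrated with the textbook exclusive-or (XOR) example. The sketch below is an assumption-laden illustration, not code from Rosenblatt or from Minsky and Papert: `train_perceptron` is a plain single-layer perceptron learning rule, and `two_layer_xor` is a hand-wired network with one hidden layer whose weights were picked by hand for this example. The single-layer unit learns AND but cannot settle on XOR, while the slightly richer network computes XOR without difficulty.

```python
def step(x):
    return 1 if x > 0 else 0


def train_perceptron(samples, epochs=50, rate=1.0):
    """Single-layer perceptron rule; returns (weights, bias, converged)."""
    w = [0.0, 0.0]
    b = 0.0
    for _ in range(epochs):
        errors = 0
        for (x1, x2), target in samples:
            out = step(w[0] * x1 + w[1] * x2 + b)
            delta = target - out
            if delta != 0:
                w[0] += rate * delta * x1
                w[1] += rate * delta * x2
                b += rate * delta
                errors += 1
        if errors == 0:  # a full pass with no mistakes: training has converged
            return w, b, True
    return w, b, False


def two_layer_xor(x1, x2):
    """Hand-wired network with one hidden layer (weights are illustrative)."""
    h_or = step(x1 + x2 - 0.5)    # hidden unit that fires for "x1 or x2"
    h_and = step(x1 + x2 - 1.5)   # hidden unit that fires for "x1 and x2"
    return step(h_or - h_and - 0.5)


AND_DATA = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]
XOR_DATA = [((0, 0), 0), ((0, 1), 1), ((1, 0), 1), ((1, 1), 0)]

if __name__ == "__main__":
    print("AND learned:", train_perceptron(AND_DATA)[2])
    print("XOR learned:", train_perceptron(XOR_DATA)[2])
    print("two-layer XOR:", [two_layer_xor(a, b) for (a, b), _ in XOR_DATA])
```

XOR is not linearly separable, so no choice of weights for a single threshold unit can get all four cases right; adding a hidden layer removes that limitation.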

Turning Top-down and Bottom-up Inside Out

Although always concerned about the details of neurobiology, Marr was strongly influenced by the theory of computation as it developed during the heyday of artificial intelligence. According to Churchland et al. (1990), Marr characterized three levels: "(1) the computational level of abstract problem analysis . . .; (2) the level of the algorithm . . .; and (3) the level of physical implementation of the computation" (pp. 50–51). Marr's approach represented an improvement on a pure artificial intelligence approach, but his theory has not proven durable as a way of analyzing how the brain functions. As Sejnowski and Churchland have pointed out, "'When we measure Marr's three levels of analysis against levels of organization in the nervous system, the fit is poor and confusing'" (quoted in Churchland et al., 1990, p. 52). His scheme can be seen as another in a long line of conceptualizations too literally influenced by a too limited view of the computer. As a metaphor, it provides a convenient means of classification. As a way to understand the brain, it fails.

Inadequacy of the Computer as a Blueprint for How the Brain Works

Nobelist and pioneering molecular biologist Francis Crick, together with Koch, has written about how this limited view has constrained the development of more fruitful models (Crick and Koch, 1990). "A major handicap is the pernicious influence of the paradigm of the von Neumann digital computer. It is a painful business to try to translate the various boxes and labels, 'files,' 'CPU,' 'character buffer,' and so on occurring in psychological models—each with its special methods of processing—into the language of neuronal activity and interaction," they pointed out. In the history of thinking about the mind, metaphors have often been useful, but at times misleading. "The analogy between the brain and a serial digital computer is an exceedingly poor one in most salient respects, and the failures of similarity between digital computers and nervous systems are striking," wrote Churchland et al. (1990, p. 47).

Some of the distinctions between brains and computers: the von Neumann computer relies on an enormous landscape of nonetheless very specific places, called addresses, where data (memory) is stored. The computer has its own version of a "brain," a central processing unit (CPU) where the programmer's instructions are codified and carried out. Since the entire process of programming relies on logic, as does the architecture of the circuitry where the addresses can be found, the basic process generic to most digital computers can be described as a series of exactly specified steps. In fact this is the definition of a computer algorithm, according to Churchland. "You take this and do that. Then take that and do this, and so on. This is exactly what computers happen to be great at," said Koch. The computer's CPU uses a set of logical instructions to reply to any problem it was programmed to anticipate, essentially mapping out a step-by-step path through the myriad addresses to where the desired answer ultimately resides. The address for a particular answer usually is created by a series of operations or steps that the algorithm specifies, and some part of the algorithm further provides instructions to retrieve it for the microprocessor to work with. A von Neumann computer may itself be specified by an algorithm—as Turing proved—but its great use as a scientific tool resides in its powerful hardware.

Scientists trying to model perception eschew the serial computer as a blueprint, favoring instead network designs more like that of the brain itself. Perhaps the most significant difference between the two is how literal and precise the serial computer is. By its very definition, that literalness forbids the solution of problems where no precise answer exists. Sejnowski has collaborated on ideas and model networks with many of the leaders in the new movement, among them Churchland. A practicing academician and philosopher, Churchland decided to attend medical school to better appreciate the misgivings working neuroscientists have developed about theories that seem to leave the functioning anatomy behind. In her book Neurophilosophy: Toward a Unified Science of the Mind/Brain, she recognized a connection between neural networks and the mind: "'Our decision making is much more complicated and messy and sophisticated—and powerful—than logic . . . [and] may turn out to be much more like the way neural networks function: The neurons in the network interact with each other, and the system as a whole evolves to an answer. Then, introspectively, we say to ourselves: I've decided'" (Allman, 1989, p. 55).

The Computer as a Tool for Modeling Neural Systems

Critiques like Crick's of the misleading influence the serial computer has had on thinking about the brain do not generalize to other types of computers, nor to the usefulness of the von Neumann machines as a tool for neural networkers. Koch and Segev wrote that in fact "computers are the conditio sine qua non for studying the behavior of the model[s being developed] for all but the most trivial cases" (Koch and Segev, 1989, p. 3). They elaborated on the theme raised by Pagels, about the changes computation has wrought in the very fabric of modern science: "Computational neuroscience shares this total dependence on computers with a number of other fields, such as hydrodynamics, high-energy physics, and meteorology, to name but a few. In fact, over the last decades we have witnessed a profound change in the nature of the scientific enterprise" (p. 3). The tradition in Western science, they explained, has been a cycle that runs from hypothesis to prediction, to experimental test and analysis, a cycle that is repeated over and again and that has led, for example, "to the spectacular successes of physics and astronomy. The best theories, for instance Maxwell's equations in electrodynamics, have been founded on simple principles that can be relatively simply expressed and solved" (p. 3).

"However, the major scientific problems facing us today probably do not have theories expressible in easy-to-solve equations," they asserted (p. 3). "Deriving the properties of the proton from quantum chromodynamics, solving Schroedinger's equation for anything other than the hydrogen molecule, predicting the three-dimensional shape of proteins from their amino-acid sequence or the temperature of earth's atmosphere in the face of increasing carbon dioxide levels—or understanding the brain—are all instances of theories requiring massive computer power" (p. 3). As firmly stated by Pagels in his treatise, and as echoed throughout most of the sessions at the Frontiers symposium, "the traditional Baconian dyad of theory and experiment must be modified to include computation," said Koch and Segev. "Computations are required for making predictions as well as for evaluating the data. This new triad of theory, computation and experiment leads in turn to a new [methodological] cycle: mathematical theory, simulation of theory and prediction, experimental test, and analysis" (p. 3).

Accounting for Complexity in Constructing Successful Models

Another of the traditional ways of thinking about brain science has been influenced by the conceptual advances now embodied in computational neuroscience. Earlier the brain-mind debate was framed in terms of the sorts of evidence each side preferred to use. The psychologists trying to stake out the terrain of "the mind" took an approach that was necessarily top-down, dominated by a view known as general problem solving (GPS), by which they meant that, as in a Turing machine, the software possessed the answers, and what they sometimes referred to as the "wetware" was largely irrelevant, if not immaterial. This did not prevent their constructing models, however, said Koch. "If you open a book on perception, you see things like 'input buffer,' 'CPU,' and the like, and they use language that suggests you see an object in the real world and open a computer file in the brain to identify it."

Neurophysiologists in particular have preferred to attend to the details and complexities rather than grasping at theories and patterns that sometimes seem to sail off into wonderland. "As a neuroscientist I have to ask," said Koch, "Where is this input buffer? What does it mean to 'open a file in the brain?'"

Biological systems are quintessentially dynamic, under the paradigm of neo-Darwinian evolution. Evolution designed the brain one step at a time, as it were. At each random, morphological change, the test of survival was met not by an overall plan submitted to conscious introspection, but rather by an organism that had evolved a specific set of traits. "Evolution cannot start from scratch," wrote Churchland et al. (1990), "even when the optimal design would require that course. As Jacob [1982] has remarked, evolution is a tinkerer, and it fashions its modification out of available materials, limited by earlier decisions. Moreover, any given capacity . . . may look like a wonderfully smart design, but in fact it may not integrate at all well with the wider system and may be incompatible with the general design of the nervous system" (p. 47). Thus, they conclude, in agreement with Adams, "neurobiological constraints have come to be recognized as essential to the project" (p. 47).

If a brain does possess something analogous to computer programs, they are clearly embedded in the scheme of connections and within the billions of cells of which that brain is composed, the same place where its memory must be stored. That place is a terrain that Adams has explored in molecular detail. "I really don't believe that we're dealing with a software-hardware relationship, where the brain possesses a transparent program that will work equally well on any type of computer. There's such an intimate connection," he stressed, "between how the brain does the computation and the computations that it does, that you can't understand one without the other." Almost all modern neuroscientists concur.

The Parallel, Distributed Route to New Insights

By the time Marr died in 1981, artificial intelligence had failed to deliver very compelling models about global brain function (in general, and memory in particular), and two essential conclusions were becoming apparent. First, the brain is nothing like a serial computer, in structure or function. Second, since memory could not be assigned a specific location, it must be distributed throughout the brain and must bear some important relationship to the networks through which it was apparently laid down and retrieved.

It was these and other distinctions between brains and serial computers that led physicist John Hopfield to publish what was to prove a seminal paper (Hopfield, 1982). The Hopfield network is, in a sense, the prototypical neural net because of its mathematical purity and simplicity, but still reflects Hopfield's long years of studying the brain's real networks. "I was trying to create something that had the essence of neurobiology. My network is rich enough to be interesting, yet simple enough so that there are some mathematical handles on it," he wrote (p. 81). Koch agreed: "There were other neural networks around similar to Hopfield's, but his perspective as a physicist" turned out to be pivotal. Hopfield described his network, said Koch, "in a very elegant manner, in the language of physics. He used clear and meaningful terms, like 'state space,' and 'variational minimum,' and 'Lyapunov function.' Hopfield's network was very elegant and simple, with the irrelevancies blown away." Its effect was galvanic, and suddenly neural networks began to experience a new respectability.
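To give the terms "state space" and "Lyapunov function" some concrete footing, the sketch below builds a very small network of the Hopfield type. It is an illustration under stated assumptions, not Hopfield's published code: the two stored patterns, the Hebbian outer-product storage rule as written here, and the helper names (`train_hopfield`, `energy`, `recall`) are choices made for this example. A corrupted pattern is presented, units are updated one at a time, and the energy, which plays the role of the Lyapunov function, never increases as the state settles into the stored memory.

```python
def train_hopfield(patterns):
    """Hebbian outer-product storage with symmetric weights and zero diagonal."""
    n = len(patterns[0])
    w = [[0.0] * n for _ in range(n)]
    for p in patterns:
        for i in range(n):
            for j in range(n):
                if i != j:
                    w[i][j] += p[i] * p[j]
    return w


def energy(w, s):
    """Network energy E = -1/2 * sum_ij w_ij * s_i * s_j."""
    n = len(s)
    return -0.5 * sum(w[i][j] * s[i] * s[j] for i in range(n) for j in range(n))


def recall(w, state, max_sweeps=10):
    """Asynchronous updates; returns the final state and the energy after each sweep."""
    s = list(state)
    energies = [energy(w, s)]
    for _ in range(max_sweeps):
        changed = False
        for i in range(len(s)):
            h = sum(w[i][j] * s[j] for j in range(len(s)))
            new = s[i] if h == 0 else (1 if h > 0 else -1)
            if new != s[i]:
                s[i] = new
                changed = True
        energies.append(energy(w, s))
        if not changed:  # a full sweep with no flips: the state is a fixed point
            break
    return s, energies


if __name__ == "__main__":
    p1 = [1, 1, 1, 1, -1, -1, -1, -1]
    p2 = [1, -1, 1, -1, 1, -1, 1, -1]
    weights = train_hopfield([p1, p2])
    noisy = [-1] + p1[1:]  # first element flipped
    final, trace = recall(weights, noisy)
    print("recovered stored pattern:", final == p1)
    print("energy per sweep:", trace)
```

The memory here is not kept at any address; it is spread across all of the connection weights, which is the sense in which such a network is a distributed, content-addressable store.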

David Rumelhart of Stanford University, according to Koch, "was the guiding influence" behind what was to prove a seminal collection of insights that appeared in 1986. Parallel Distributed Processing: Explorations in the Microstructure of Cognition (Rumelhart and McClelland, 1986) included the work of a score of contributing authors styled as the PDP research group, among whose number were Crick and Sejnowski. As the editors said in their introduction, "One of the great joys of science lies in the moment of shared discovery. One person's half-baked suggestion resonates in the mind of another and suddenly takes on a definite shape. An insightful critique of one way of thinking about a problem leads to another, better understanding. An incomprehensible simulation result suddenly makes sense as two people try to understand it together. This book grew out of many such moments" (p. ix). For years, various combinations of these people, later augmented by Churchland and others, have met regularly at the University of California, San Diego, where Sejnowski now works.

This two-volume collection contains virtually all of the major ideas then current about what neural networks can and might do. The field is progressing rapidly, and new ideas and models continue to appear in journals such as Network and Neural Computation. As a generalization, neural networks are designed to try to capture some of the abilities brains demonstrate when they think, rather than the logic-based rules and deductive reasoning computers embody when they process information. The goal of much work in neural networks is to develop one or more of the abilities usually believed to require human judgment and insight, for example, the abilities to recognize patterns, make inferences, form categories, arrive at a consensus "best guess" among numerous choices and competing influences, forget when necessary and appropriate, learn from mistakes, learn by training, modify decisions and derive meaning according to context, match patterns, and make connections from imperfect data.

VISION MODELS OPEN A WINDOW ON THE BRAIN

Koch's presentation on unravelling the complexities of vision suggested the basic experimental approach in computational neuroscience: Questions are posed about brain states and functions, and the answers are sought by pursuing a continually criss-crossing path from brain anatomy to network behavior to mathematical algorithm to computer model and back again.

One begins to narrow to a target by developing an algorithm and a physical model to implement it, and by testing its results against the brain's actual performance. The model may then be refined until it appears able to match the real brains that are put to the same experimental tests. If a model is fully specified by one's network, the model's structure then assumes greater significance as a fortified guess about how the brain works. The real promise of neural networks is manifest when a model is established to adjust to and learn from the experimental inputs and the environment; one then examines how the network physically modified itself and "learned" to compute competitively with the benchmark brain.

Motion Detectors Deep in the Brain

As Koch explained to the symposium scientists: "All animals with eyes can detect at least some rudimentary aspects of visual motion. . . . Although this seems a simple enough operation to understand, it turns out to be an enormously difficult problem: What are the computations underlying this operation, and how are they implemented in the brain?" Specifically, in the case of motion, the aim is to compute the optical flow field, that is, to "attach" to every moving location in the image a vector whose length is proportional to the speed of the point and whose direction corresponds to the direction of the moving object (Figure 9.3). As used here, the term computation carries two meanings. First, the network of neuronal circuitry projecting from the eye back into the brain can be viewed as having an input in the form of light rays projecting an image on the retina, and an output in terms of perception—what the brain makes of the input. Human subjects can report on many of their brain states, but experiments with other species raise the problem of the scientist having to interpret, rather than merely record, the data. Koch models the behavior of a species of monkey, Macaca mulatta, which has a visual system whose structure is very close to that found in humans and which is very susceptible to training. Although it is not possible to inquire directly of the animal what it sees, some very strong inferences can be made from its behavior. The second sense of computation involves the extent to which the input-output circuit Koch described can be thought of as instantiating a computation, i.e., an information-processing task.

Before a simulation progresses to the stage that an analog network prototype is physically constructed and experiments are run to see how it will perform, the scientist searches for an algorithm that seems to capture the observable data. "Computational theory provides two broad classes of algorithms computing the optical flow associated with changing image brightness," said Koch, who has chosen and refined the one he believes best explains the experimental data. He established some of the basic neuroanatomy for the symposium's scientists to provide a physical context in which to explain his model.

In the monkey's brain nearly 50 percent of the cortex (more than in the human brain) is related to vision, and about 15 percent of that constitutes the primary visual area (V1) at the back of the head, the first place, in moving through the monkey brain's visual circuitry, where cells are encountered that seem inherently sensitive to the direction of a perceived motion in the environment. "In connectionists' parlance, they are called place or unit coded, because they respond to motion only in a particular—their preferred—direction," Koch explained. At the next step in the circuit, the middle temporal area (MT), visual information clearly undergoes analysis for motion cues, since, he said, "80 percent of all cells in the MT are directionally selective and tuned for the speed of the stimulus."

One hurdle to overcome for effectively computing optical flow is the so-called aperture problem, which arises because each cortical cell only "sees" a very limited part of the visual field, and thus "only the component of velocity normal to the contour can be measured, while the tangential component is invisible," Koch said. This problem is inherent to any computation of optical flow and essentially presents the inquirer with infinitely many solutions. Research into

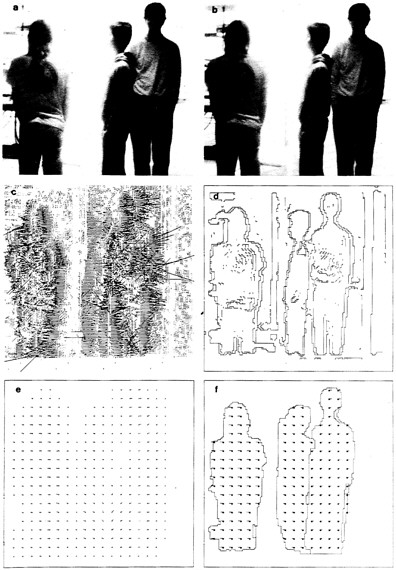

Figure 9.3

Optical flow associated with several moving people. (a) and (b) Two 128- by 128-pixel images captured by a video camera. The two people on the left move toward the left, while the rightward person moves toward the right. (c) Initial velocity data. Since each "neuron" sees only a limited part of the visual scene, the initial output of these local motion sensors is highly variable from one location to the next. (d) Threshold edges (zero-crossings of the Laplacian of a Gaussian) associated with one of the images. (e) The smooth optical flow after the initial velocity measurements (in c) have been integrated and averaged (smoothed) many times. Notice that the flow field is zero between the two rightmost people, since the use of the smoothness constraint acts similarly to a spatial average and the two opposing local motion components average out. The velocity field is indicated by small needles at each point, whose amplitude indicates the speed and whose direction indicates the direction of motion at that point. (f) The final piecewise smooth optical flow after 13 complete cycles shows a dramatic improvement in the optical flow. Motion discontinuities are constrained to be co-localized with intensity edges. The velocity field is subsampled to improve visibility. (Reprinted with modifications from Hutchinson et al., 1988.)

vision has produced a solution in the form of the smoothness constraint, "which follows not from a mathematical analysis of the problem, but derives rather from the physics of the real world," Koch said, since most objects in motion move smoothly along their smooth trajectory (i.e., they do not generally stop, speed up, or slow down) and nearby points on the same object move roughly at the same velocity. "In fact, the smoothness constraint is something that can be learned from the environment," according to Koch, and therefore might well be represented in the visual processing system of the brain, when it is considered as a product of evolution. Ullman clarified that the smoothness assumption "concerns smoothness in space, not in time, that nearby points on the same object have similar velocities.''
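The aperture problem and the smoothness constraint can both be stated in a few lines of arithmetic. The sketch below is a generic illustration, assuming the standard brightness-constancy relation Ix·u + Iy·v + It = 0 and a simple quadratic smoothness penalty; it is not claimed to be the formulation used in Koch's network, and the names `normal_flow` and `smooth_flow` and the weight `lam` are introduced here for the example. The first function recovers only the velocity component along the brightness gradient; the second repeatedly averages noisy local estimates with their neighbors, in the spirit of panel (e) of Figure 9.3.

```python
def normal_flow(ix, iy, it):
    """Velocity component along the brightness gradient, from Ix*u + Iy*v + It = 0."""
    g2 = ix * ix + iy * iy
    if g2 == 0:
        return (0.0, 0.0)  # no local contrast, so no local motion information
    scale = -it / g2
    return (scale * ix, scale * iy)


def satisfies_constraint(ix, iy, it, u, v, tol=1e-9):
    """True if velocity (u, v) is consistent with the local measurement."""
    return abs(ix * u + iy * v + it) < tol


def smooth_flow(data, lam=1.0, iterations=200):
    """Relaxation for: sum (u_i - d_i)^2 + lam * sum (u_{i+1} - u_i)^2 (1-D sketch)."""
    u = list(data)
    n = len(u)
    for _ in range(iterations):
        for i in range(n):
            neighbors = [u[j] for j in (i - 1, i + 1) if 0 <= j < n]
            u[i] = (data[i] + lam * sum(neighbors)) / (1.0 + lam * len(neighbors))
    return u


if __name__ == "__main__":
    # A vertical edge (gradient along x only) moving with true velocity (2, 5).
    ix, iy, it = 2.0, 0.0, -4.0
    print("measured normal flow:", normal_flow(ix, iy, it))
    # Both the normal flow and the true velocity fit the same local data,
    # so the tangential component cannot be recovered through the aperture.
    print(satisfies_constraint(ix, iy, it, 2.0, 0.0),
          satisfies_constraint(ix, iy, it, 2.0, 5.0))
    noisy = [0.2, 1.8, 0.9, 1.4, 0.7]  # hypothetical local speed estimates
    print("smoothed:", [round(x, 3) for x in smooth_flow(noisy)])
```

A larger `lam` trusts the neighbors more and the local data less. As the figure caption notes, averaging of this kind also blurs across true motion boundaries unless discontinuities are handled separately.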

Equipped with these assumptions and modeling tools—the aperture problem, the smoothness constraint, and a generic family of motion algorithms—Koch and his colleagues developed what he described as a "two-stage recipe for computing optical flow, involving a local motion registration stage, followed by a more global integration stage." His first major task was to duplicate "an elegant psychophysical and electrophysiological experiment" that supported the breakdown of visual processing for motion into these two stages and established a baseline against which he could measure the value of his own model. To summarize that experiment (Adelson and Movshon, 1982): When two square gratings were projected in motion onto a screen under certain provisos, human test subjects (as well as monkeys trained to indicate what they saw) failed to see them independently, but rather integrated them. "Under certain conditions you see these two gratings penetrate past each other. But under the right conditions," said Koch, "you don't see them moving independently. Rather you see one moving plaid," suggesting that the brain was combining the normal components of motion received by the retina into a coherent, unified pattern. While this perception may be of use from evolution's point of view, it does not reflect reality accurately at what might be called, from a computational perspective, the level of raw data. The monkeys performed while electrodes in their brains confirmed what was strongly suspected: the presence of motion-detecting neurons in the V1 and MT cortex regions.
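One common way to formalize how two normal components can be combined into a single pattern velocity is to treat each grating as a linear constraint and solve the pair together, an approach usually called intersection of constraints. The sketch below illustrates that idea only; it makes no claim about how the brain or Koch's network actually performs the integration, and the grating orientations, the test velocity, and the function names are assumptions made for the example.

```python
import math


def grating_constraint(normal_deg, true_velocity):
    """What a local detector could measure: the grating's unit normal and normal speed."""
    a = math.radians(normal_deg)
    n = (math.cos(a), math.sin(a))
    speed = n[0] * true_velocity[0] + n[1] * true_velocity[1]
    return n, speed


def intersect_constraints(c1, c2):
    """Solve v . n1 = s1 and v . n2 = s2 for the single velocity v (Cramer's rule)."""
    (n1, s1), (n2, s2) = c1, c2
    det = n1[0] * n2[1] - n1[1] * n2[0]
    if abs(det) < 1e-12:
        raise ValueError("parallel gratings: the pattern velocity is not determined")
    vx = (s1 * n2[1] - s2 * n1[1]) / det
    vy = (n1[0] * s2 - n2[0] * s1) / det
    return vx, vy


if __name__ == "__main__":
    plaid_velocity = (3.0, 1.0)  # hypothetical motion of the whole plaid
    c1 = grating_constraint(30.0, plaid_velocity)
    c2 = grating_constraint(120.0, plaid_velocity)
    print("normal speeds seen locally:", round(c1[1], 3), round(c2[1], 3))
    print("recovered plaid velocity:", intersect_constraints(c1, c2))
```

Neither local measurement alone determines the motion, but two gratings with different orientations pin it down uniquely, which is consistent with observers reporting a single moving plaid.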

It was in this context that Koch and his colleagues applied their own neural network algorithm for computing motion. Specifically, they tackled the question of why "any one particular cell acquires direction selectivity, such that it only fires for motion in its preferred direction, not responding to motion in most other directions," Koch explained. Among other features, their model employed population coding, which essentially summates all of the neurons under examination. Koch, often influenced by his respect for evolution's brain, believes this is important because while "such a representation is expensive in terms of cells, it is very robust to noise or to the deletion of individual cells, an important concern in the brain," which loses thousands of neurons a day to attrition. The network, when presented with the same essential input data as were the test subjects, mimicked the behavior of the real brains.
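The robustness Koch attributes to population coding can be seen in a toy example. The sketch below is a generic population-vector decoder, not the coding scheme of Koch's model: the tuning curve (a baseline plus a cosine of the angle between stimulus and preferred direction), the number of cells, and the function names are all assumptions made for illustration. Each cell "votes" for its preferred direction in proportion to its response, and the direction of the summed vote is the estimate.

```python
import math


def make_population(n_cells):
    """Preferred directions spaced evenly around the circle (radians)."""
    return [2 * math.pi * k / n_cells for k in range(n_cells)]


def responses(stimulus, preferred):
    """Hypothetical tuning: baseline plus cosine of the offset from the preferred direction."""
    return [1.0 + math.cos(stimulus - p) for p in preferred]


def decode(rates, preferred):
    """Population vector: sum each cell's preferred direction weighted by its response."""
    x = sum(r * math.cos(p) for r, p in zip(rates, preferred))
    y = sum(r * math.sin(p) for r, p in zip(rates, preferred))
    return math.atan2(y, x)


def angle_error_deg(a, b):
    """Absolute angular difference in degrees, accounting for wraparound."""
    d = (a - b + math.pi) % (2 * math.pi) - math.pi
    return abs(math.degrees(d))


def delete_cells(values, dead):
    """Drop the entries whose indices are in the set of dead cells."""
    return [v for i, v in enumerate(values) if i not in dead]


if __name__ == "__main__":
    prefs = make_population(36)
    stim = math.radians(40.0)
    rates = responses(stim, prefs)
    print("error, all cells (deg):", angle_error_deg(decode(rates, prefs), stim))
    dead = {5, 17, 30}  # arbitrary cells lost to attrition
    est = decode(delete_cells(rates, dead), delete_cells(prefs, dead))
    print("error, three cells deleted (deg):", angle_error_deg(est, stim))
```

The estimate degrades gradually rather than failing outright when cells are removed, at the cost of using many cells to represent a single quantity.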

To further test the correspondence of their network model to how real brains detect motion, Koch and his colleagues decided to exploit the class of perceptual phenomena called illusions (defined by Koch as those circumstances when "our perception does not correspond to what is really out there"). Motion capture is one such illusion that probably involves visual phenomena related to those they were interested in. In an experiment generally interpreted as demonstrating that the smoothness constraint—or something very like it—is operating in the brains of test subjects, Koch's model, with its designed constraint, was "fooled" in the same way as were the test subjects. Even when illusions were tested that appeared not to invoke the smoothness constraint, the model mimicked the biological brain. Yet another experiment involved exposing the subject to a stationary figure, which appeared and disappeared very quickly from the viewing screen. Brains see such a figure appear with the apparent motion of expansion, and disappear with apparent contraction, although "out there" it has remained uniform in size. Koch's network registered the same illusory perception.

Armed with this series of affirmations, Koch asked, "What have we learned from implementing our neuronal network?" Probably, he continued, that the brain does have some sort of procedure for imposing a smoothness constraint, and that it is probably located in the MT area. Koch, cautious about further imposing the mathematical nature of his own algorithm onto a hypothesis about brain function, said, "The exact type of constraint we use is very unlikely" to function exactly like that probably used by the brain. Rather than limiting his inferences, however, this series of experiments reveals "something about the way the brain processes information in general, not only related to motion. In brief," he continued, "I would argue that any reasonable model concerned with biological information processing has to take account of some basic constraints."

"First, there are the anatomical and biophysical limits: one needs to know the structure of V1 and MT, and that action potentials proceed at certain inherent speeds. In order to understand the brain, I must take account of these [physical realities]," he continued. But Koch thinks it useful to tease these considerations apart from others that he classifies as "computational constraints. This other consideration says that, in order to do this problem at all, on any machine or nervous system, I need to incorporate something like smoothness." He distinguished between the two by referring to the illusion experiments just described, which—in effect—reveal a world where smoothness does not really apply. However, since our brains are fooled by this anomaly, a neural network that makes the same mistake is probably doing a fairly good job of capturing a smoothness constraint that is essential for computing these sorts of problems. Further, the algorithm has to rapidly converge to the perceived solution, usually in less than a fifth of a second. Finally, another important computational constraint that "brain-like" models need to obey is robustness (to hardware damage, say) and simplicity—the simpler the mathematical series of operations required to find a solution, the more plausible the algorithm, even if the solution is not always correct.

Brain Algorithms Help to Enhance Vision in a Messy World

For years, Ullman explained, his quest has been to "understand the visual system," usually taking the form of a question: what computations are performed in solving a particular visual problem? He reinforced Koch's admonition to the symposium's scientists about "how easy it is to underestimate the difficulties and complexities involved in biological computation. This becomes apparent when you try to actually implement some of these computations artificially outside the human brain." It was, he continued, "truly surprising [to find] that even some of the relatively low-level computations . . . like combining the information from the two eyes into binocular vision; color computation; motion computation; edge detection; and so on are all incredibly complicated. We still cannot perform these tasks at a satisfactory level outside the human brain."

Ullman described to the symposium scientists experiments that point to some fairly astounding computational algorithms that the brain, it must be inferred, seems to have mastered over the course of evolution. Once again, the actual speed at which the brain's component parts, the neurons, fire their messages is many orders of magnitude slower than the logic gates in a serial computer. Ullman asked the symposium to think about the following example of the brain overcoming such apparent limitations. Much slower, for example, than the shutter speed of a camera is the 100 milliseconds it takes the brain to integrate a visual image. Ullman elaborated, "Now, think about what happens if you take a photograph of, for example, people moving down the street, with the camera shutter open for 0.1 second. You will get just a complete blur, because even if the image is moving very slowly, during that time it will move 2 degrees and cover at least 25 pixels of the image." Ullman reported that evidence has shown that the image on the surface of the retina is in fact blurred, but the brain at higher levels obviously sees a sharp image.

Ullman cited the implications of another experiment on illusion as illustrating the brain's ingenuity in deriving from its visual input—basically the simple two-dimensional optical flow fields Koch was describing—the physical shape and structure of an object in space. Shading is obviously an important clue to building the necessary perspective for computing a three-dimensional world from the two-dimensional images our retina has to work with. But also crucial is motion. Ullman used the computer to simulate a revealing illusory phenomenon—by painting random dots on two transparent cylinders (same height, different diameter) placed one within the other, and then sending light through the array so it would be projected onto a screen. "The projection of the moving dots was computed and presented on the screen," he continued, and the "sum" of these two random but stationary patterns was itself random, to the human eye. But when Ullman began to rotate the cylinders around their axis, the formerly flat array of dots suddenly acquired depth, and "the full three-dimensional shape of the cylinders became perceivable."

In this experiment, the brain, according to Ullman, is facing the problem of trying to figure out the apparently random motion of the dots. It seems to look for fixed rigid shapes and, based on general principles, recovers a shape from the changing projection. "The dots are moving in a complex pattern, but can this complex motion be the projection of a simple object, moving rigidly in space? Such an object indeed exists. I now see," said Ullman, speaking for the hypothetical brain, "what its shape and motion are." He emphasized how impressive this feat actually is: "The brain does this on the fly, very rapidly, gives you the results, and that is simply what you see. You don't even realize you have gone through these complex computations."
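The geometry behind the rotating-cylinder display can be illustrated with a small sketch. It is not Ullman's rigidity-based recovery scheme: it assumes an orthographic projection, rotation about the vertical axis, and a rotation rate `omega` that is already known, none of which the brain is handed in advance. The function names and dot counts are hypothetical. Under those assumptions, a single frame carries no depth information, but a dot's screen position together with its screen velocity is enough to recover which cylinder it sits on.

```python
import math
import random

def make_dots(n, radius, height, rng):
    """Scatter n dots at random angles and heights on a cylinder surface."""
    return [(radius, rng.uniform(0.0, 2.0 * math.pi), rng.uniform(0.0, height))
            for _ in range(n)]

def project(dots, angle):
    """Orthographic projection onto the screen: keep (x, y), discard depth."""
    return [(r * math.cos(phi + angle), y) for r, phi, y in dots]

def recover_radii(dots, omega, dt=1e-3):
    """Estimate each dot's radius from screen position and screen velocity.

    For rotation about the vertical axis, x = r*cos(a) and
    dx/dt = -r*omega*sin(a), so r = sqrt(x**2 + (dx/dt / omega)**2).
    omega is assumed known here (an illustrative simplification).
    """
    now = project(dots, 0.0)
    before = project(dots, -omega * dt)
    after = project(dots, +omega * dt)
    radii = []
    for (x0, _), (xa, _), (xb, _) in zip(now, before, after):
        vx = (xb - xa) / (2.0 * dt)  # central-difference screen velocity
        radii.append(math.sqrt(x0 ** 2 + (vx / omega) ** 2))
    return radii

rng = random.Random(0)
# Inner cylinder of radius 1 and outer cylinder of radius 2, same height.
dots = make_dots(50, 1.0, 2.0, rng) + make_dots(50, 2.0, 2.0, rng)
estimated = recover_radii(dots, omega=1.0)
```

With the cylinders stationary, `project(dots, 0.0)` is just a flat scatter of points; once velocity is available, the estimates separate cleanly into the two radii.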

"'Nature is more ingenious than we are,'" wrote Sejnowski and Churchland recently (Allman, 1989, p. 11). "'The point is, evolution has already done it, so why not learn how that stupendous machine, our brain, actually works?'" (p. 11). All of the participants in the session on neural networks conveyed this sense of the brain's majesty and how it far surpasses our ability to fully understand it. Sejnowski reinforced the point as he described his latest collaboration with electrophysiologist Stephen Lisberger from the University of California, San Francisco, in looking at the vestibulo-ocular reflex system: "Steve and many other physiologists and anatomists have worked out most of the wiring diagram, but they still argue about how it works." That is to say, even though the route of synaptic connections from one neuron to another throughout the particular network that contains the reflex is mapped, the organism produces effects that somehow seem more than the sum of its parts. "'We're going to have to broaden our notions of what an explanation is. We will be able to solve these problems, but the solutions won't look like the neat equations we're used to,'" Sejnowski has said (Allman, 1989, p. 89), referring to the emergent properties of the brain that may well be a function of its complexity.

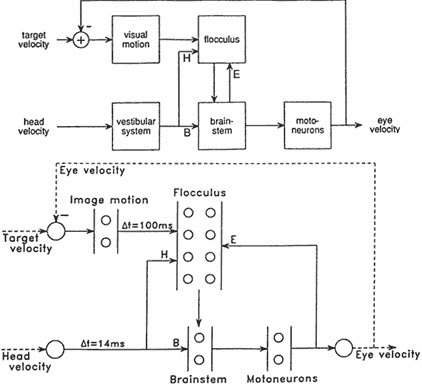

To try to fathom how two of the brain's related but distinct visual subsystems may interact, Sejnowski and Lisberger constructed a neural network to model the smooth tracking and the image stabilization systems (Figure 9.4). "Primates," said Sejnowski, "are particularly good at tracking things with their eyes. But the ability to stabilize images on the retina goes way back to the early vertebrates—if you are a hunter this could be useful information for zeroing in on your prey; if you are the prey, equally useful for noticing there is a predator out there." Like Ullman, he wanted to model the state of an image on the retina and explore how the image is stabilized. The

Figure 9.4 Diagram of the basic oculomotor circuits used for the vestibulo-ocular reflex (VOR) and smooth pursuit (top) and the architecture for a dynamical network model of the system (bottom). The vestibulary system senses head velocity and provides inputs to neurons in the brain stem (B) and the cerebellar flocculus (H). The output neurons of the flocculus make inhibitory synapses on neurons in the brain stem. The output of the brain stem projects directly to motoneurons, which drive the eye muscles, and also project back to the flocculus (E). This positive feedback loop has an "efference copy" of the motor commands. The flocculus also receives visual information from the retina, delayed for 100 milliseconds by the visual processing in the retina and visual cortex. The image velocity, which is the difference between the target velocity and the eye velocity, is used in a negative feedback loop to maintain smooth tracking of moving targets. The model (below) incorporates model neurons that have integration time constants and time delays. The arrows indicate connections between the populations of units in each cluster, indicated by small circles. The dotted line between the eye velocity and the summing junction with the target velocity indicates a linkage between the output of the model and the input. (Courtesy of S. Lisberger.)

vestibulo-ocular reflex (VOR) is "incredibly important," according to Sejnowski, "because it stabilizes the object on the retina, and our visual system is very good at analyzing stationary images for meaningful patterns." In the hurly-burly of life, objects do not often remain quietly still at a fixed spot on the retina. An object can move very rapidly or irregularly, and the head in which the visual system is located can also move back and forth, trying to establish a consistent relationship with the object. One of the brain's tricks, said Sejnowski, ''is to connect vestibular inputs almost directly to the motor neurons that control where the eye will be directed." Thus, he explained, in only 14 milliseconds those muscles can compensate for a quick head movement with an eye movement in the opposite direction to try to stabilize the image. Somehow the VOR computes this and other reactions to produce a continuous stability of visual input that a camera mounted on our head would never see.

The second brain skill Sejnowski and Lisberger wanted to include in their analysis was the pursuit of visual targets, which involves the interesting feature of feedback information. "In tracking a moving object," Sejnowski explained, "the visual system must provide the oculomotor system with the velocity of the image on the retina." A visual monitoring, feedback, and analysis process can be highly effective but proceeds only as fast as all of the neurons involved can fire, one after another. The event begins with photons of light hitting the rods and cones in the retina; the resulting signals are relayed through visual system pathways to the cerebral cortex and thence to a part of the cerebellum called the flocculus, and eventually down to the motor neurons, the so-called output, whose function is to command the eye's response by moving the necessary muscles to pursue in the right direction. "But there is a problem," Sejnowski said. The "visual system is very slow, and in monkeys takes about 100 milliseconds" to process a signal all the way through; a delay that long should, in principle, produce confusing feedback oscillations during eye tracking. And, he observed, [there is] "a second problem [that] has to do with integrating these two systems, the VOR and the pursuit tracking."
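Why a 100-millisecond delay threatens a feedback loop with oscillation can be seen in a toy simulation. The sketch below is not the Lisberger and Sejnowski network: it assumes a single hypothetical equation, dE/dt = gain × (target velocity minus eye velocity as seen 100 milliseconds earlier), integrated with simple Euler steps, and the gain values are chosen only for illustration. For this particular equation, tracking is stable only while gain × delay stays below π/2.

```python
def simulate_pursuit(gain, delay_s=0.1, target_velocity=10.0,
                     duration_s=3.0, dt=0.001):
    """Euler simulation of dE/dt = gain * (target - E(t - delay)).

    Returns the retinal image velocity (target minus eye velocity)
    at every time step. All parameter values are illustrative.
    """
    delay_steps = int(round(delay_s / dt))
    steps = int(round(duration_s / dt))
    eye = [0.0] * (steps + 1)
    for k in range(steps):
        # The controller only sees the eye velocity from delay_s ago.
        delayed_eye = eye[k - delay_steps] if k >= delay_steps else 0.0
        slip = target_velocity - delayed_eye
        eye[k + 1] = eye[k] + dt * gain * slip
    return [target_velocity - e for e in eye]

# gain * delay = 0.5: the eye settles onto the target.
stable = simulate_pursuit(gain=5.0)
# gain * delay = 2.0: the same loop breaks into growing oscillations.
unstable = simulate_pursuit(gain=20.0)
```

In the low-gain run the image velocity decays toward zero; in the high-gain run it swings back and forth with increasing amplitude, which is the kind of behavior the real system somehow avoids.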

So "our strategy," said Sejnowski, "was to develop a relatively simple network model that could account for all the data, even the controversial data that seem to be contradictory. The point is that even a simple model can help sort out the complexity." Once built, the model could simulate various conditions the brain systems might experience, including having its connection strengths altered to improve performance. Sejnowski then ran it through its paces. He also subjected the model to adaptation experiments that have been performed on humans and monkeys, and unearthed a number of insights about how the brain may accomplish VOR adaptation (Lisberger and Sejnowski, 1991). "Now I would be the first to say," Sejnowski emphasized, "that this is a very simple model, but it has already taught us a few important lessons about how different behaviors can interact and how changing a system as complex as the brain (for example by making a lesion) can have effects that are not intuitive."

Sejnowski has been very active in the connectionist movement and has been credited with "bringing computer scientists together with neurobiologists" (Barinaga, 1990). With Geoffrey Hinton he created in 1983 one of the first learning networks with interneurons, the so-called hidden layers (Hinton and Sejnowski, 1983). Back propagation is another learning scheme for hidden units that was first introduced in 1986 (Rumelhart et al., 1986), but its use in Sejnowski's 1987 NETtalk system suddenly dramatized its value (Sejnowski and Rosenberg, 1987). NETtalk converts written text to spoken speech, and learns to do so with increasing skill each time it is allowed to practice. Said Koch: "NETtalk was really very very neat. And it galvanized everyone. The phenomena involved supervised learning." To determine how a letter should be pronounced, the network looks at it in the context of the three letters or spaces on either side, and then activates, at first more or less randomly, one of its output nodes, each of which corresponds to the pronunciation of a certain phoneme. "The supervised learning scheme now tells the network whether the output was good or bad, and some of the internal weights or synapses are adjusted accordingly," explained Koch. "As the system goes through this corpus of material over and over again, learning by rote, you can see that through feedback and supervised learning, the network really does improve its performance: while it first reads unintelligible gibberish, at the end of the training period it can read text in an understandable manner, even if the network has never seen the text before. Along the way, and this is fascinating, it makes a lot of the kinds of mistakes that real human children make in similar situations."
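The seven-character context described above (a letter plus the three letters or spaces on either side) can be sketched as a sliding window over the text. This covers only the input side, under the assumption that spaces are used as padding at the ends of the text. The function name is hypothetical, and the network, its phoneme outputs, and the learning rule are not shown.

```python
def context_windows(text, half_width=3, pad=" "):
    """Yield (center_letter, window) pairs.

    Each window holds the center letter plus half_width characters on
    either side; the text is padded with spaces (an assumption) so that
    the first and last letters also get a full window.
    """
    padded = pad * half_width + text + pad * half_width
    width = 2 * half_width + 1
    for i, letter in enumerate(text):
        yield letter, padded[i:i + width]

windows = list(context_windows("the cat"))
# Each window is 7 characters long with the current letter at index 3.
```

A supervised learner would be shown each window together with the correct phoneme for its center letter, and its weights would be adjusted whenever the output was wrong.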

THE LEAP TO PERCEPTION

At the Frontiers symposium, Sejnowski also discussed the results of an experiment by Newsome and co-workers (Salzman et al., 1990), which Sejnowski said he believes is "going to have a great impact on the future of how we understand processing in the cortex." Working with monkeys trained to indicate which direction they perceived a stimulus to be moving, Newsome and his group microstimulated the cortex with an electrical current, causing the monkeys to respond to the external influences on their motion detectors, rather than to what they were "really seeing." The results, said Sejnowski, were "very highly statistically significant. It is one of the first cases I know where with a relatively small outside interference to a few neurons in a perceptual system you can directly bias the response of the whole animal."

Another experiment by Newsome and his colleagues (Newsome et al., 1989) further supports the premise that a certain few motion-detecting cells, when triggered, correlate almost indistinguishably with the animal's overall perception. This result prompted Koch to ask "how, for instance, the animal selects among all MT neurons the proper subset of cells, i.e., only those neurons corresponding to the object out there that the animal is currently paying attention to." The answer, he thinks, could lie in the insights to be gained from more experiments like Newsome's that would link the reactions of single cells to perception.

Considering these experiments along with his own and others, Koch believes they reveal "something about the way the brain processes information in general," not just motion-related phenomena. To be plausible, Koch thinks, models of biological information processing must respect the constraints he found crucial to his own model. First, the algorithm should be computationally simple, said Koch: "Elaborate schemes requiring very accurate components, higher-order derivatives," and the like are unlikely to be implemented in nervous tissue. The brain also seems to like unit/place and population coding, as this approach is robust and comparatively impervious to noise from the components. Also, algorithms need to remain within the time constraints of the system in question: "Since perception can occur within 100 to 200 milliseconds, the appropriate computer algorithm must find a solution equally fast," he said. Finally, individual neurons are "computationally more powerful than the linear-threshold units favored by standard neural network theories," Koch explained, and this power should be manifest in the algorithm.
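For readers unfamiliar with the term, a linear-threshold unit of the kind Koch contrasts with real neurons can be written in a few lines. This is a generic textbook sketch rather than anything taken from Koch's own model, and the weights and threshold below are illustrative choices.

```python
def linear_threshold_unit(inputs, weights, threshold):
    """Return 1 if the weighted sum of the inputs reaches the threshold, else 0."""
    total = sum(w * x for w, x in zip(weights, inputs))
    return 1 if total >= threshold else 0

# With unit weights and a threshold of 2, the unit fires only when
# both inputs are active (a logical AND).
and_outputs = [linear_threshold_unit([a, b], [1.0, 1.0], 2.0)
               for a in (0, 1) for b in (0, 1)]
```

A single unit of this kind can only separate its inputs with one weighted sum and one cutoff; it cannot, for instance, compute exclusive-or on its own, which gives some sense of why a neuron with richer internal dynamics counts as computationally more powerful.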

These insights and many more discussed at the symposium represent the least controversial of what neural networks have to offer, in that they reflect the determination, where possible, to remain valid within the recognized biological constraints of nervous systems. It can be readily seen, however, that they emanate from an experimental realm that is comparatively new to scientific inquiry. Typically, a scientist knows what he or she is after and makes very calculated guesses about how to tease it into a demonstrable, repeatable, verifiable, physical (in short, experimental) form. This classical method of inquiry has served science well, and will continue to do so. But the brain, and how it produces the quality of mind, presents to modern science what most feel is its greatest challenge, and may not yield as readily to such a straightforward approach. While more has been learned about the brain in the last three decades of neuroscience than in all that went before, there is still a vast terra incognita within our heads. People from many disciplines are thinking hard about these questions, employing an armamentarium of scientific instruments and probes to do so. But the commander of this campaign to unravel the mysteries of the human brain is the very object of that quest. The paradox inherent in this situation has been a subject of much concern, as suggested in a definition proposed by Ambrose Bierce (Allman, 1989, p. 5):

MIND, n.—A mysterious form of matter secreted by the brain. Its chief activity consists in the endeavor to ascertain its own nature, the futility of the attempt being due to the fact that it has nothing but itself to know itself with.

At last there may be more than irony with which to confront the paradox. Neural networks do learn. Some would argue that they also think. To say so boldly and categorically embroils one in a polemic, which—considering the awesome implications of the proposition—is perhaps as it should be. But whatever the outcome of that debate, the neural network revolution seems on solid ground. It has already begun to spawn early, admittedly crude, models that in demonstration sometimes remind one of the 2-year-old's proud parents who boasted about how well their progeny was eating, even as the little creature was splashing food all over itself and everyone nearby. And yet, that same rough, unformed collection of human skills will grow—using algorithms of learning and training, some inherent, others largely imposed by society—into what could become an astounding intellect, as its brain uses the successive years to form, to learn, and to work its magic. Give the neural networkers the same time frame, and it seems altogether possible that their network creations will grow with comparable—from here nearly unimaginable—power and creativity. Wrote Koch and Segev, "Although it is difficult to predict how successful these brain models will be, we certainly appear to be in a golden age, on the threshold of understanding our own intelligence" (Koch and Segev, 1989, p. 8).

BIBLIOGRAPHY

Adelson, E.H., and J. Movshon. 1982. Phenomenal coherence of moving visual patterns. Nature 300:523–525.