3

On the Nature of Cybersecurity

Chapter 1 points out that bad things that can happen in cyberspace fall into a number of different categories: cybercrime; losses of privacy; misappropriation of intellectual property such as proprietary software, R&D work, blueprints, trade secrets, and other product information; espionage; disruption of services; destruction of or damage to physical property; and threats to national security. After a brief note about terminology, this chapter addresses how adversarial cyber operations can result in any or all of these outcomes.

3.1 ON THE TERMINOLOGY FOR DISCUSSIONS OF CYBERSECURITY AND PUBLIC POLICY

In developing this report, the committee was faced with an unfortunate lexical reality—there is no consistent or uniform vocabulary (never mind the conceptual basis) for discussions about cybersecurity and public policy. One might expect this to be true across international borders, where it is well known that translations among English, Chinese, German, Russian, Arabic, French, and Hebrew can be problematic. But it is also the case that even within the United States, different communities—and even different individuals within those communities—use terminology whose definitions and usage conventions are somewhat different.

Perhaps it is not entirely surprising that such variation exists. Concerns about cybersecurity have spread rapidly in the past 10 years to many communities, and a uniform lexical or conceptual structure is unlikely to be established under such circumstances. Further, different vocabularies and concepts often reflect differences in intellectual or mission emphasis and orientation that might differentiate various communities.

As an illustration, consider the term “cyberattack.” The term has been used variously to include:

• Any hostile or unfriendly action taken against a computer system or network regardless of purpose or outcome.

• Any hostile or unfriendly action taken against a computer system or network if (and only if) that action is intended to cause a denial of service (discussed below) or damage to or destruction of information stored in or transiting through that system or network. Data exfiltration is not included in this usage. (This particular distinction—between data exfiltration (the essential characteristic of cyber espionage) and other kinds of unfriendly action—has great significance in the context of international law and domestic legal authorities for conducting such actions; these points are discussed further in Section 4.2.3 on domestic and international law.)

• Any hostile or unfriendly action taken against a computer system or network if (and only if) that action is intended to cause damage to or destruction of information stored in or transiting through that system or network and is effected primarily through the direct use of information technology. (This definition rules out the use of sledgehammers against a computer or backhoes against fiber-optic cables, although of course such actions can seriously disrupt services provided by the attacked computer or cables.)
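To make the differences among these three usages concrete, the following Python sketch encodes each one as a predicate over a hypothetical description of a hostile action. The `HostileAction` fields and the `is_cyberattack` function are illustrative assumptions rather than terms used elsewhere in this report. The boolean flags stand in for the intent of the action, which in practice is rarely this easy to establish.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class HostileAction:
    """Hypothetical description of one hostile action against a system or network."""
    intends_denial_of_service: bool
    intends_damage_to_information: bool
    exfiltrates_data: bool
    uses_information_technology: bool  # False for, e.g., a backhoe cutting a fiber cable


def is_cyberattack(action: HostileAction, usage: int) -> bool:
    """Does `action` count as a "cyberattack" under usage 1, 2, or 3 listed above?"""
    if usage == 1:
        # Any hostile action, regardless of purpose or outcome.
        return True
    if usage == 2:
        # Denial of service or damage/destruction; exfiltration alone is excluded.
        return action.intends_denial_of_service or action.intends_damage_to_information
    if usage == 3:
        # Damage/destruction effected primarily through information technology.
        return action.intends_damage_to_information and action.uses_information_technology
    raise ValueError(f"unknown usage: {usage}")


espionage = HostileAction(False, False, True, True)  # copies data, changes nothing
backhoe = HostileAction(True, False, False, False)   # physically cuts service
wiper = HostileAction(False, True, False, True)      # erases files via malicious code

for name, act in [("espionage", espionage), ("backhoe", backhoe), ("wiper", wiper)]:
    print(name, [is_cyberattack(act, u) for u in (1, 2, 3)])
# espionage [True, False, False]
# backhoe [True, True, False]
# wiper [True, True, True]
```

The same espionage action is a "cyberattack" under the first usage and not under the other two, which is exactly the ambiguity that makes context essential.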

Another frequent confusion in the literature or in discussions involves the terms “exploit” and “exploitation.” As described below, an exploitation is an attempt to compromise the confidentiality of data, usually by making a copy of it and conveying that copy into an adversary’s hands. By contrast, an exploit (that is, “exploit” as a noun) is a mechanism used by an adversary to take advantage of a vulnerability. “Exploit” as a verb means “to take advantage of”: “an adversary can exploit vulnerability X” means “an adversary can take advantage of vulnerability X.”

The reader is cautioned that there are many terms in cybersecurity discussions with related but different definitions: some such terms include “compromise,” “penetration,” “breach,” “intrusion,” “exploit,” “attack,” and “hack.” It would be highly desirable for all discussants to standardize on some particular vocabulary, but until that day comes, participants in dialogs about cybersecurity will have to rely on context or otherwise take special care to ensure that they understand a speaker’s or writer’s intent when certain terms are used.

3.2 WHAT IT MEANS TO BE AN ADVERSARY IN CYBERSPACE

In this report, an adversarial (or hostile) cyber operation is one or more unfriendly actions that are taken by an adversary (or equivalently, an intruder) against a computer system or network for the ultimate purpose of conducting a cyber exploitation or a cyberattack. (“Offensive cyber operations” or “offensive operations in cyberspace” are roughly equivalent from an action standpoint but are terms for operations conducted by the good guys, however the good guys may be defined.) “Cyber incident” can be used more or less interchangeably with “hostile cyber operation,” but in this report will usually refer to an adversarial cyber operation conducted sometime in the past.

A hostile or adversarial cyber operation can be an exploitation or an attack.

A cyber exploitation is an action intended to exfiltrate digitally stored information that should be kept away from parties not authorized to access it. To date, the vast majority—nearly all—of actual cyber incidents have been exploitations, and sensitive digitally stored information such as Social Security numbers, medical records, blueprints and other intellectual property, classified information, contract and bid information, and software source code have all been obtained by unauthorized parties.

Exploitations are usually undertaken surreptitiously. The surreptitious nature of an exploitation is one of its key features—a surreptitious exploitation of, say, an individual credit card number is much more effective than a discovered exploitation, because if the exploitation is discovered, the credit card owner can notify the bank and prevent the card’s further use.

One of the largest cyber exploitations ever discovered happened in the winter holiday season of 2013, when the Target retail store chain suffered a data breach in which personal information belonging to 70 million to 110 million people was stolen.1 Such information included names, mailing and e-mail addresses, phone numbers, and credit card numbers. Shortly after the breach occurred, observers noted an order-of-magnitude increase in the number of high-value stolen cards on black market Web sites, from nearly every bank and credit union. The Target Corporation reported a 46 percent drop in income in the fiscal quarter ending February 1, 2014, relative to the comparable period a year earlier, and it incurred $61 million in breach-related expenses (of which insurance covered 72 percent).2 Financial institutions spent an estimated $200 million to replace credit cards after the breach.3

________________

1 Elizabeth A. Harris and Nicole Perlroth, “For Target, the Breach Numbers Grow,” New York Times, January 10, 2014, available at http://www.nytimes.com/2014/01/11/business/target-breach-affected-70-million-customers.html.

Although the vast majority of cyber exploitations target information stored on a computer or network, they can also seek information in the physical vicinity of a computer when the computer has audio and/or video capabilities. In such cases, an intruder can penetrate the computer and activate the on-board camera or microphone without the knowledge of the user, and thus surreptitiously see what is in front of the camera and hear what is going on nearby. For example, in an August 2013 incident, an extortionist assumed control of the Webcam in the personal computer of the new Miss Teen USA and took pictures that he subsequently used to blackmail the victim.4 In this incident, the extortionist was able to prevent the warning light on the camera from turning on.

A cyberattack is an action intended to cause a denial of service or damage to or destruction of information stored in or transiting through an information technology system or network.

A denial-of-service (DOS) attack is intended to render a properly functioning system or network unavailable for normal use. A DOS attack may mean that the e-mail does not go through, or the computer simply freezes, or the response time becomes intolerably long (possibly leading to tangible destruction if, for example, a physical process is being controlled by the system). As a rule, the effects of a DOS attack vanish when the attack ceases. DOS attacks are not uncommon, and have occurred against individual corporations, government agencies (both civilian and military), and nations.

________________

2 Paul Ziobro, “Target Earnings Suffer After Breach,” Wall Street Journal, February 27, 2014, available at http://online.wsj.com/news/articles/SB20001424052702304255604579406694182132568.

3 Saabira Chaudhuri, “Cost of Replacing Credit Cards After Target Breach Estimated at $200 Million,” Wall Street Journal, February 19, 2014, available at http://online.wsj.com/news/articles/SB10001424052702304675504579391080333769014.

4 Nate Anderson, “Webcam Spying Goes Mainstream as Miss Teen USA Describes Hack,” Ars Technica, April 16, 2013, available at http://arstechnica.com/tech-policy/2013/08/webcam-spying-goes-mainstream-as-miss-teen-usa-describes-hack/.

Typically, a DOS attack works by flooding a specific target with bogus requests for service (e.g., requests to display a Web page or to receive and store an e-mail), thereby exhausting the resources available to the target to handle legitimate requests for service and thus blocking others from using those resources. Such an attack is relatively easy to block if these bogus requests for service come from a single source, because the target can simply drop all service requests from that source. A distributed denial-of-service attack can flood the target with multiple requests from many different machines. Since each of these different machines might, in principle, be a legitimate requester of service, dropping all of them runs a higher risk of denying service to legitimate parties. Botnets—collections of victimized computers that are remotely controlled by an adversary—are often used to conduct DOS attacks (Box 3.1).
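A minimal Python sketch of the single-source defense described above, and of why it fails against a distributed attack. It assumes a naive defender that counts requests per source address within one observation window and drops every source above a fixed threshold. The function name, the threshold, and the source labels are hypothetical (203.0.113.7 is an address reserved for documentation).

```python
from collections import Counter
from typing import List, Set, Tuple


def drop_heavy_sources(requests: List[str], max_per_source: int) -> Tuple[Set[str], int]:
    """Naive per-source defense (hypothetical): block any source that sends more
    than `max_per_source` requests in the observation window.

    `requests` holds one source identifier per request.
    Returns (blocked sources, number of requests that still get through).
    """
    counts = Counter(requests)
    blocked = {src for src, n in counts.items() if n > max_per_source}
    admitted = sum(n for src, n in counts.items() if src not in blocked)
    return blocked, admitted


# The same load, 10,000 bogus requests, delivered two ways.
single_source = ["203.0.113.7"] * 10_000
distributed = [f"bot-{i}" for i in range(1_000) for _ in range(10)]

print(drop_heavy_sources(single_source, max_per_source=20))   # ({'203.0.113.7'}, 0)
print(drop_heavy_sources(distributed, max_per_source=20)[1])  # 10000
```

Lowering `max_per_source` far enough to catch each bot would also start dropping legitimate users who happen to send a comparable number of requests, which is the higher risk of denying service to legitimate parties noted above.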

A well-known example of a DOS attack occurred on April 27, 2007, when a series of distributed denial-of-service (DDOS) attacks began on a range of Estonian government Web sites, media sites, and online banking services.5 Attacks were largely conducted using botnets to create network traffic, with the botnets being composed of compromised computers from the United States, Europe, Canada, Brazil, Vietnam, and other countries around the world. The duration and intensity of attacks varied across the Web sites attacked; most attacks lasted 1 minute to 1 hour, and a few lasted up to 10 hours.6 Attacks were stopped when the attackers ceased their efforts rather than being stopped by Estonian defensive measures.7 The Estonian government was quick to claim links between those conducting the attacks and the Russian government,8 although Russian officials denied any involvement.9

A damaging or destructive attack can alter a computer’s programming in such a way that the computer does not later behave as it should. If a physical device (such as a generator) is controlled by the computer, the operation of that device may be compromised. The attack may also alter or erase digitized data, either stored or in transit (i.e., while it is being sent from one point to another). Such an attack may delete data files irretrievably.

Although the preparation for an attack may be surreptitious (so that the victim does not have a chance to prepare for it), the effects of an attack may or may not be concealed. If the intent of an attack is to destroy a generator, an explosion in the generator is obvious (although it may not be traceable to a cyberattack on the controlling computer). But if the intent of an attack is to corrupt vital data, small amounts of corruption may not be visible (and small amounts of corruption continued over time could result in undetectable large-scale corruption).

________________

5 Economist, “A Cyber-Riot,” May 10, 2007; Jaak Aaviksoo, Minister of Defense of Estonia, presentation to Centre for Strategic and International Studies, November 28, 2007.

6 The most detailed measurements on the attacks are from Arbor Networks. See Jose Nazario, “Estonian DDoS Attacks—A Summary to Date,” May 17, 2007, available at http://asert.arbornetworks.com/2007/05/estonian-ddos-attacks-a-summary-to-date/.

7 McAfee Corporation, Cybercrime: The Next Wave, McAfee Virtual Criminology Report, 2007, p. 11, available at http://infovilag.hu/data/files/129623393.pdf.

8 Maria Danilova, “Anti-Estonia Protests Escalate in Moscow,” Washington Post, May 2, 2007, available at http://www.washingtonpost.com/wp-dyn/content/article/2007/05/02/AR2007050200671_2.html. The article quotes both the Estonian president and the Estonian ambassador to Russia as claiming Kremlin involvement.

9 McAfee Corporation, Cybercrime: The Next Wave, 2007, p. 7.

BOX 3.1 Botnets

An attack technology of particular power and significance is the botnet. Botnets are collections of victimized computers that are remotely controlled by the attacker. A victimized computer—an individual bot—is connected to the Internet, usually with an “always-on” broadband connection, and is running software clandestinely introduced by the attacker. The attack value of a botnet arises from the sheer number of computers that an attacker can control—often tens or hundreds of thousands and perhaps as many as a million. (An individual unprotected computer may be part of multiple botnets.)

Since all of these computers are under one party’s control, the botnet can act as a powerful amplifier of an adversary’s actions. In addition, by acting through a botnet, a malevolent actor can be more anonymous (because his actions can be routed through many computers belonging to third parties). The use of botnets can also help to defeat defensive techniques that identify certain computers as sources of hostile traffic—as one bot in the net is identified as a source of hostile traffic, the adversary simply shifts to a second, and a third, and a fourth bot.

An attacker usually builds a botnet by finding a few individual computers to compromise, perhaps using one of the tools described above. The first hostile action that these initial zombies take is to find other machines to compromise—a task that can be undertaken in an automatic manner, and so the size of the botnet can grow quite rapidly.

A botnet controller can communicate with its botnet and still stay in the background, unidentified and far away from any action, while the individual bots—which may belong mostly to innocent parties that may be located anywhere in the world—are the ones that are visible to the party under attack. The botnet controller has great flexibility in the actions it may take—it may direct all of the bots to take the same action, or each of them to take different actions.

Individual bots can probe their immediate environment and take action based on the results of that probe. A bot can pass information it finds back to its controller, or it can take destructive action, which may be triggered at a certain time, or perhaps when the resident bot receives a subsequent communication from the controller. Bots can also obtain new programming from their controllers, giving them great flexibility with respect to the range of harmful tasks they can conduct at any time.

Perhaps the most important point about botnets is the great flexibility they offer to a malevolent actor. Adversaries can obtain botnet services on the open (black) market for hacking services (e.g., the botnets used to attack Estonia in 2007 were apparently rented).1 Although botnets are known to be well suited to distributed denial-of-service attacks, it is safe to say that their full range of utility for adversarial operations in cyberspace has not yet been examined.

_________________

1 Mark Landler and John Markoff, “Digital Fears Emerge After Data Siege in Estonia,” New York Times, May 29, 2007, available at http://www.nytimes.com/2007/05/29/technology/29estonia.html?pagewanted=all&_r=0.
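Box 3.1 notes that newly compromised machines can themselves search for further machines to compromise, so a botnet can grow quickly. The following toy model in Python illustrates that compounding under stated assumptions: every bot recruits a fixed number of new machines per round, and growth stops once the pool of reachable vulnerable machines is exhausted. All parameters and the function name are hypothetical, and real growth is far less regular.

```python
from typing import List


def botnet_size(initial_bots: int, recruits_per_bot: int, rounds: int,
                vulnerable_hosts: int) -> List[int]:
    """Toy model of automated botnet growth (hypothetical parameters).

    Each round, every existing bot compromises `recruits_per_bot` new machines,
    but the botnet can never exceed `vulnerable_hosts` reachable machines.
    Returns the botnet size after each round, starting with round 0.
    """
    sizes = [min(initial_bots, vulnerable_hosts)]
    for _ in range(rounds):
        current = sizes[-1]
        sizes.append(min(current + current * recruits_per_bot, vulnerable_hosts))
    return sizes


sizes = botnet_size(initial_bots=5, recruits_per_bot=2, rounds=12,
                    vulnerable_hosts=1_000_000)
print(sizes[:5])   # [5, 15, 45, 135, 405]
print(sizes[-3:])  # [295245, 885735, 1000000]
```

In this model, five initial machines each recruiting two more per round reach the "hundreds of thousands" range mentioned in Box 3.1 after 10 rounds and hit the assumed ceiling of one million after 12.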

The best known example of a destructive cyberattack is Stuxnet, a hostile cyber operation that targeted the computer-controlled centrifuges of the uranium enrichment facility in Natanz, Iran.10 After taking control of these centrifuges, Stuxnet issued instructions to them to operate in ways that would cause them to self-destruct, unbeknownst to the Iranian centrifuge operators.

3.2.3 An Important Commonality for Exploitation and Attack

An important point about cyber exploitation and cyberattack is that they generally use the same basic technical approaches to penetrate the security of a system or network, even though they have different outcomes. (By definition, the former results in exfiltration of data, whereas the latter results in damage to information or information technology—and whatever else that technology controls.) The reason for this similarity is addressed below in Section 3.4. Also, a single offensive operation can, in principle, involve both an exploitation phase and an attack phase.

3.3 INHERENT VULNERABILITIES OF INFORMATION TECHNOLOGY

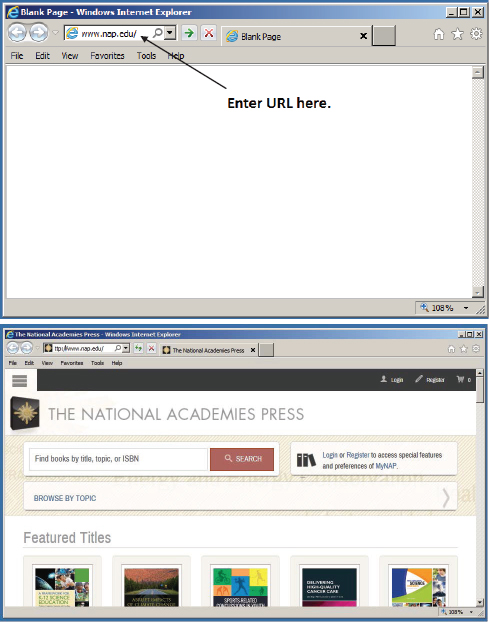

Designing a completely secure, totally unhackable computer is easy—put the computer into a sealed metal box, with no holes in the box for wires and no way to pass information (recognizing that computer programs are also a form of information) outside the box, and the computer system is entirely secure (see the left side of Figure 3.1). Of course, this system—inside the box—is entirely useless as well. Only by removing the box (which serves as an information barrier) can the computer be made useful (right side of Figure 3.1).

A computer can produce useful results only when it is provided with “correct” or “good” information. But what counts as “good” and “bad” information depends on decisions made by fallible human beings—and in particular humans who may be tricked into believing that certain

________________

10 David Kushner, “The Real Story of Stuxnet,” IEEE Spectrum, February 26, 2013, available at http://spectrum.ieee.org/telecom/security/the-real-story-of-stuxnet#.

FIGURE 3.1 A secure but useless computer (left), and an insecure but useful computer (right).

information or programs are good when they are in fact bad. This fact underscores a basic point about most adversarial cyber operations—the key role played by deception. Box 3.2 provides a simple example.

Two other factors compound the inherent vulnerabilities of information technology. First, the costs of an adversarial cyber operation are usually small compared with the costs of defending against it. This asymmetry arises because the victim (the defender) must succeed every time the intruder acts (and may even have to take defensive action long after the intruder’s initial penetration if the intruder has left behind an implant for a future attack). By contrast, the intruder needs to succeed in his efforts only once, and if he pays no penalty for a failed operation, he can continue his efforts until he succeeds or chooses to stop.11
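The asymmetry can be expressed with a simple probability model. Assume, purely for illustration, that each intrusion attempt independently succeeds with the same small probability p. The chance that at least one of n attempts succeeds is 1 - (1 - p)^n, which is also the chance that the defender fails at least once. The Python sketch below evaluates this expression; the independence assumption, the value of p, and the function name are hypothetical.

```python
def intruder_success_probability(p_per_attempt: float, attempts: int) -> float:
    """Probability that at least one of `attempts` independent tries succeeds,
    each with probability `p_per_attempt` (equivalently, the probability that
    the defender fails at least once). Assumes independent, identical attempts."""
    if not 0.0 <= p_per_attempt <= 1.0 or attempts < 0:
        raise ValueError("need 0 <= p <= 1 and attempts >= 0")
    return 1.0 - (1.0 - p_per_attempt) ** attempts


# Hypothetical: each attempt has a 1 percent chance of getting through.
for n in (10, 100, 1000):
    print(f"{n:>5} attempts: {intruder_success_probability(0.01, n):.3f}")
#    10 attempts: 0.096
#   100 attempts: 0.634
#  1000 attempts: 1.000
```

Under these assumptions, a defense that stops 99 percent of individual attempts is all but certain to be breached if the intruder can keep trying. An intruder working to a fixed timetable has a bounded n, which is why the asymmetry shrinks in that case (see footnote 11).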

Second, modern information technology systems are complex entities whose proper (secure) operation requires many actors to have behaved correctly and appropriately and to continue to do so in the future. Each of these actors exerts some control over some aspect of a user’s experience or the configuration or functioning of some part of the system, and a problem with any of them can compromise that experience.

As an example, consider the “simple” task of viewing a Web page—

________________

11 This asymmetry applies primarily when the intruder can choose when to act, that is, when the precise timing of the intrusion’s success does not matter. If the intruder must succeed on a particular timetable, the intruder does not have an infinitely large number of tries to succeed, and the asymmetry between intruder and defender may be reduced significantly.

BOX 3.2 A Simple Example of Deception

HTML (an acronym for Hypertext Markup Language) is a computer language that is used to display Web pages. One feature of this language is that it enables the conversion of text into links to other Web pages. The user sees on the page a certain text string, but the text string conceals a link—clicking on the “click here” text brings the user to the Web site corresponding to the link. (Although the link can be revealed if the user’s pointing device hovers over the text string, many users do not perform such a check.)

But there are no limits on the text string to be displayed to the user, and so it is possible for the user to see on the screen “www.example.com,” but underneath the text is really the link for another Web page, such as www.hackyourcomputer.com. Clicking on the text displayed on the screen takes the user to the bad site rather than the intended site.

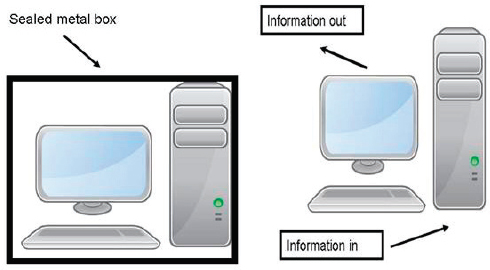

something that many people do every day with ease. The user can type the name of a Web page (called a URL, uniform resource locator) as depicted in the top of Figure 3.2, and the proper Web page appears in a second or two as depicted at the bottom of Figure 3.2. In addition, the user also wants the display of the Web page to be the only thing that happens in response to his request—exfiltrating the user’s credit card numbers to a cyber criminal or destroying the files on the computer’s hard disk are things that the user does not want to happen.
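The deception in Box 3.2 works because an HTML link element carries two independent values: the text that is displayed and the `href` attribute that is followed when the user clicks. The Python sketch below, using only the standard library's `html.parser` and `urllib.parse` modules, extracts both values and flags links whose displayed text looks like a host name that differs from the host in the `href`. The class and helper names, and the "looks like a host name" test (contains a dot, no spaces), are simplifying assumptions; this is an illustration, not a complete phishing detector.

```python
from html.parser import HTMLParser
from typing import List, Optional, Tuple
from urllib.parse import urlsplit


class LinkCollector(HTMLParser):
    """Collect (displayed text, href) pairs for every <a> element in a page."""

    def __init__(self) -> None:
        super().__init__()
        self.links: List[Tuple[str, str]] = []
        self._href: Optional[str] = None
        self._text: List[str] = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            self._href = dict(attrs).get("href") or ""
            self._text = []

    def handle_data(self, data):
        if self._href is not None:  # only record text inside an open <a>
            self._text.append(data)

    def handle_endtag(self, tag):
        if tag == "a" and self._href is not None:
            self.links.append(("".join(self._text).strip(), self._href))
            self._href = None


def host_of(s: str) -> str:
    """Host name in a URL; bare text such as "www.example.com" is treated as a host."""
    return (urlsplit(s if "//" in s else "//" + s).hostname or "").lower()


def deceptive_links(html: str) -> List[Tuple[str, str]]:
    """Links whose displayed text looks like a host name other than the real target."""
    collector = LinkCollector()
    collector.feed(html)
    collector.close()
    return [
        (text, href)
        for text, href in collector.links
        if "." in text and " " not in text and host_of(text) != host_of(href)
    ]


page = (
    '<a href="http://www.hackyourcomputer.com/">www.example.com</a> '
    '<a href="https://www.example.com/docs">www.example.com</a> '
    '<a href="https://www.example.com/">click here</a>'
)
print(deceptive_links(page))
# [('www.example.com', 'http://www.hackyourcomputer.com/')]
```

The browser itself makes no such comparison: it renders the first string and follows the second, which is why the check falls to a user who hovers over the link.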

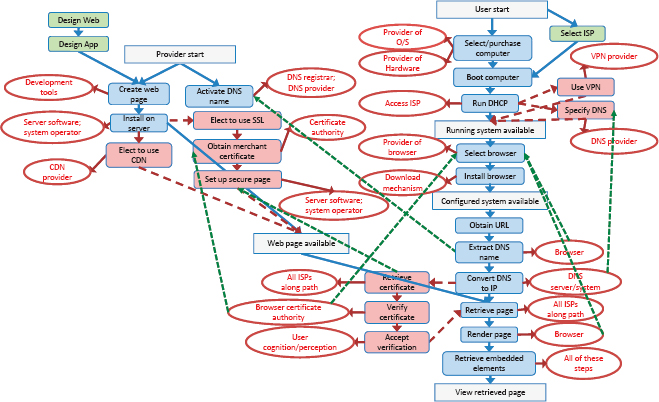

Behind this apparent simplicity is a multitude of steps, as depicted in Figure 3.3. What is immediately clear even without a detailed explanation is that the process involves many actors, and a flawed performance by any of these actors may mean that the requested page does not appear as required.

Inspection of Figure 3.3 with a powerful magnifying lens (not supplied with this report) would reveal that it could be simplified to some extent by considering the display as the result of three interacting parts: preparation of the computer used to display the page, preparation of the Web page that is to be displayed, and the actual retrieval of the Web page from where it is hosted to where it is displayed. Each of these parts is itself composed of actions involving a number of actors. Some of the actors shown in Figure 3.3 include:

• The provider of the hardware and the operating system;

• The delivery service that handles the computer in transit (e.g., UPS/FedEx), which must deliver the box containing the computer from

FIGURE 3.3 Viewing a Web page, behind the scenes. SOURCE: David Clark, “Control Point Analysis,” ECIR Working Paper, 2012, Telecommunications Policy Research Conference, available at http://ecir.mit.edu/index.php/research/working-papers/278control-point-analysis. Reprinted by permission.

the factory to the proper destination without allowing an adversary to tamper with it;

• The Internet service provider that provides Internet service to the location where the user accesses the Web page;

• The party responsible for the Internet browser that the user runs to access the Web page;

• The party or parties responsible for various add-ons that the browser runs (e.g., to display PDF files);

• The developer of the tools for creating Web pages;

• The operator of the server that hosts the Web page to be accessed;

• The provider of software for the host server;

• Providers of the advertisement(s) that might be served up along with the Web page;

• The Domain Name System registrar that registers the name of the Web site where the Web page can be found;

• The DNS provider that translates the name of the Web site to a numerical Internet Protocol address such as 144.171.1.4;

• The certificate authority that attests that the purported operator of the Web site is in fact the properly registered operator; and

• All of the Internet service providers along the connection path between the host and the user.

Each of these actors must carry out correctly the role it plays in the overall process; for example, ISPs must correctly operate the routing protocols if packets are to reach their destination. Moreover, each of these actors could take (or be tricked into taking) one or more actions that thwart the user’s intent in retrieving a given Web page, which is to receive the requested Web page promptly and to have only that task be accomplished, and not have any other unrequested task be accomplished.
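The retrieval part of this process can be sketched as an ordered list of dependencies. The Python function below is purely descriptive and sends no network traffic: it decomposes a URL with the standard `urllib.parse` module and pairs each retrieval step with the category of actor, from the list above, that the step relies on. The step wording and the function name are illustrative assumptions, and the sketch omits the preparation of the computer and of the Web page itself. The final lines check that Python's default TLS context does enforce the certificate-authority step.

```python
import ssl
from typing import List, Tuple
from urllib.parse import urlsplit


def retrieval_dependencies(url: str) -> List[Tuple[str, str]]:
    """Ordered (step, actor category) pairs for retrieving `url`.

    Purely descriptive: nothing here touches the network.
    """
    parts = urlsplit(url)
    host = parts.hostname or ""
    port = parts.port or (443 if parts.scheme == "https" else 80)
    path = parts.path or "/"
    steps = [
        (f"translate {host} into an IP address", "DNS registrar and DNS provider"),
        (f"carry packets to {host}:{port} and back", "ISPs along the connection path"),
    ]
    if parts.scheme == "https":
        steps.append((f"check the certificate presented by {host}", "certificate authority"))
    steps += [
        (f"serve {path}", "server operator and server software provider"),
        ("render the page and any ads or add-on content",
         "browser, add-on, and advertisement providers"),
    ]
    return steps


for step, actor in retrieval_dependencies("https://www.example.com/index.html"):
    print(f"{step:48} <- {actor}")

# Python's default client-side TLS context performs the certificate step:
# it requires a chain to a trusted authority and a matching host name.
ctx = ssl.create_default_context()
print(ctx.verify_mode == ssl.CERT_REQUIRED, ctx.check_hostname)  # True True
```

A failure or deception at any one of these steps (a poisoned DNS answer, a misrouted packet, a wrongly issued certificate) can defeat the user's intent even if every other actor behaves correctly.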

3.4 THE ANATOMY OF ADVERSARIAL ACTIVITIES IN CYBERSPACE

Adversarial operations in cyberspace against a system or network usually require penetration of the system or network’s security to deliver a payload that takes action in accordance with the intruder’s wishes against the target of interest (e.g., against any of the entities shown in ovals in Figure 3.3). The payload is usually computer code, and is also known as malware. (In some cases, the payload could be instructions or commands issued to the computer by a malevolent actor.)

In a noncyber context, an intruder might penetrate a file cabinet. To penetrate the file cabinet, the intruder must first gain access to it—access to a file cabinet located on the International Space Station would pose a problem very different from that posed by the same cabinet located in an office in Washington, D.C. Once standing in front of the file cabinet, the intruder might take advantage of an easily pickable lock on the cabinet—that is, an easily pickable lock is a vulnerability. The payload in this noncyber context reflects the action taken by the intruder after the lock is picked. For example, the intruder can alter some of the information on those papers, perhaps by replacing certain pages with pages of the intruder’s creation (i.e., he alters the data recorded on the pages), he can pour ink over the papers (i.e., he renders the data unavailable to any legitimate user), or he can copy the papers and take the copies away, leaving behind the originals (i.e., he exfiltrates the data on the papers).
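The file-cabinet analogy separates three elements: access, a vulnerability, and a payload. A small Python data structure can capture that separation and show that, under the definitions in Section 3.2, only the payload determines whether an operation is an exploitation or an attack. The `Payload` and `HostileOperation` names are hypothetical.

```python
from dataclasses import dataclass
from enum import Enum


class Payload(Enum):
    """What the intruder does after the lock is picked (file-cabinet analogy)."""
    ALTER = "replace pages with pages of the intruder's creation"
    MAKE_UNAVAILABLE = "pour ink over the papers"
    EXFILTRATE = "copy the papers and leave the originals"


@dataclass(frozen=True)
class HostileOperation:
    access: str         # how the intruder reaches the target
    vulnerability: str  # the weakness taken advantage of
    payload: Payload    # the action taken once the vulnerability is used

    def category(self) -> str:
        # Per Section 3.2: exfiltration is an exploitation; denial of service or
        # damage to information is an attack. Access and vulnerability play no role.
        return "cyber exploitation" if self.payload is Payload.EXFILTRATE else "cyberattack"


for payload in Payload:
    op = HostileOperation("stands in front of the cabinet", "easily pickable lock", payload)
    print(f"{payload.name} -> {op.category()}")
# ALTER -> cyberattack
# MAKE_UNAVAILABLE -> cyberattack
# EXFILTRATE -> cyber exploitation
```

Because the access path and vulnerability are identical in all three cases, the sketch also mirrors the point of Section 3.2.3: exploitation and attack share the same penetration techniques and differ only in what is delivered.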

Access

In a cyber context, an intruder must first penetrate the system or network of interest. The first step is gaining access. An “easy” target is one that requires little preparation by the intruder and to which the intruder can gain access without much difficulty, such as a target that is known to be connected to the Internet. Public Web sites are examples of such targets, as they must, by definition, be connected to the Internet to be useful.

Hard targets are those that require a great deal of preparation on the part of the intruder and where access to the target can be gained only at great effort or may even be impossible for all practical purposes. For example, the avionics of a fighter plane are not likely to be directly connected to the Internet for the foreseeable future, which means that launching a cyberattack against such a plane will require some kind of harder-to-achieve access to introduce a vulnerability that can be used later. As a general rule, sensitive and important computer systems or networks are likely to fall into the category of hard targets.

Access paths to a target include those in the following categories:

• Remote access, in which the intruder is at some distance from the adversary computer or network of interest. The canonical example of remote access is that of an adversary computer attacked through the access path provided by the Internet, but other examples might include accessing an adversary computer through a dial-up modem attached to it or through penetration of the wireless network to which it is connected. Malevolent actors are constantly searching for new computers on the Internet. When they find one, they are often able to penetrate the computer, and in some cases, such penetration takes only a matter of minutes or hours from the moment of initial connection to the Internet, even without its owner taking any other action at all.12

An example of remote access is the use of SQL injection techniques to execute database commands selected by the intruder. Many public-facing Web sites allow users to type data into forms. SQL is a common computer language used for querying and manipulating data in relational databases. If the code that incorporates a user’s input into SQL commands is flawed, it is sometimes possible for the user (the hostile user!) to insert (“inject”) database commands of his own into the input. In a 2009 event, the RockYou company, which developed applications for use on social networking sites, suffered an SQL injection attack that made 32 million user names and passwords available to intruders.13 In 2012, RockYou paid $250,000 in penalties following a settlement with the Federal Trade Commission.14
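The following Python sketch, using the standard `sqlite3` module and an in-memory database, shows the kind of flawed input handling described above next to the usual remedy of parameterized queries. The table, account, and function names are hypothetical; the sketch illustrates the general technique and is not a reconstruction of the RockYou system.

```python
import sqlite3


def make_db() -> sqlite3.Connection:
    """In-memory demo database with one hypothetical account."""
    db = sqlite3.connect(":memory:")
    db.execute("CREATE TABLE users (name TEXT, password TEXT)")
    db.execute("INSERT INTO users VALUES ('alice', 's3cret')")
    return db


def login_flawed(db: sqlite3.Connection, name: str, password: str) -> bool:
    # FLAWED: user input is pasted directly into the SQL text, so input
    # containing quote characters can change the structure of the command.
    query = f"SELECT COUNT(*) FROM users WHERE name = '{name}' AND password = '{password}'"
    return db.execute(query).fetchone()[0] > 0


def login_parameterized(db: sqlite3.Connection, name: str, password: str) -> bool:
    # The ? placeholders pass input as data; it is never parsed as SQL.
    query = "SELECT COUNT(*) FROM users WHERE name = ? AND password = ?"
    return db.execute(query, (name, password)).fetchone()[0] > 0


db = make_db()
injected = "' OR '1'='1"
print(login_flawed(db, "alice", injected))         # True
print(login_parameterized(db, "alice", injected))  # False
```

With the flawed version, the injected text turns the condition into `(name = 'alice' AND password = '') OR '1'='1'`, which is true for every row, so the "password" check passes without the real password.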

• Close access, in which the penetration of a system or network takes place through the local installation of hardware or software functionality by seemingly friendly parties (e.g., covert agents, vendors) in close proximity to the computer or network of interest. Close access is a possibility anywhere in the supply chain of a system that will be deployed (Box 3.3). As a general rule, close access is more expensive, riskier, and harder to conduct than remote access.

One example of a supply chain attack occurred in 2008. According to The Telegraph, criminal gangs in China gained access to the supply chain for a certain line of chip-and-pin credit card readers.15 The gangs modified these readers to surreptitiously relay customer account information (including the security personal identification numbers) to criminal enterprises. The readers were repackaged with virtually no physical traces of tampering and shipped directly to several European countries. Details collected from the cards were used to make duplicate credit cards, which could then be used for normal financial transactions. MasterCard International estimated that thousands of customer accounts were compromised, netting millions of dollars in ill-gotten gains before the breach was discovered.

________________

12 See, for example, Survival Time, available at http://isc.sans.org/survivaltime.html. Also, in a 2008 experiment conducted in Auckland, New Zealand, an unprotected computer was rendered unusable through online attacks. The computer was probed within 30 seconds of its going online, and the first attempt at intrusion occurred within the first 2 minutes. After 100 minutes, the computer was unusable. See “Experiment Highlights Computer Risks,” December 2, 2008, available at http://www.stuff.co.nz/print/4778864a28.html.

13 Angela Moscaritolo, “RockYou Hack Compromises 32 Million Passwords,” SC Magazine, December 15, 2009, available at http://www.scmagazine.com/rockyou-hackcompromises-32-million-passwords/article/159676/.

14 Dan Kaplan, “RockYou to Pay FTC $250K After Breach of 32M Passwords,” SC Magazine, March 27, 2012, available at http://www.scmagazine.com/rockyou-to-pay-ftc-250k-after-breach-of-32m-passwords/article/233992/.

15 Henry Samuel, “Chip and Pin Scam ‘Has Netted Millions from British Shoppers,’” The Telegraph, October 10, 2008, available at http://www.telegraph.co.uk/news/uknews/law-and-order/3173346/Chip-and-pin-scam-has-netted-millions-from-British-shoppers.html.

BOX 3.3 Close Access Through the Information Technology Supply Chain

Systems (and their components) can be penetrated in design, development, testing, production, distribution, installation, configuration, maintenance, and operation. In most cases, the supply chain is only loosely managed, which means that the party supplying the system to the end user may well not have full control over the entire chain. Examples of possible supply-chain penetrations include the following:

• A vendor with an employee loyal to an adversary introduces malicious hardware or code at the factory as part of a system component for which the vendor is a subcontractor in a critical system.

• An adversary intercepts a set of USB flash drives ordered by the victim for distribution at a conference and substitutes a different doctored set for actual delivery to the victims. In addition to the conference proceedings, the adversary places hostile software on the flash drives that the victims install when they plug in the drives.

• An adversary writes an application for a smart device that performs some useful function (e.g., an app that displays a clock on the device’s face) but also includes malware that sends the contact list on the device to the adversary.

• An adversary bribes a shipping clerk to look the other way when the computer is on the loading dock for transport to the victim, opens the box, replaces the video card installed by the vendor with one modified by the intruder, and reseals the box.

A supply chain penetration may be effected late in the chain, for example, against a deployed computer in operation or one that is awaiting delivery on a loading dock. In these cases, such a penetration is by its nature narrowly and specifically targeted, and it is also not scalable, because the number of computers that can be penetrated is proportional to the number of human assets available. In other cases, a supply chain penetration may be effected early in the supply chain (e.g., introducing a vulnerability during development), and high leverage against many different targets might result from such an attack.

• Social access. An intruder can gain access by taking advantage of existing trust relationships between people, because one is usually more likely to trust the intentions of a known or friendly party than those of an unknown one. Pretending to be a friend or colleague of the victim is one example. More broadly, the human beings who install, configure, operate, and use IT systems of interest can often be compromised through recruitment, bribery, blackmail, deception, trickery, or extortion, and these individuals can be of enormous value to the intruder. In some cases, a trusted insider “goes bad” for his or her own reasons and, by virtue of the trust placed in him or her, is able to misuse his or her own credentials for improper purposes; this is the classical “insider threat.” Social engineering is the use of deception and trickery to gain access. It gives the intruder the credentials, and therefore the access privileges, of those the intruder has tricked.

As an example of an adversary taking advantage of social access to penetrate the security of computer systems, consider the case of Edward Snowden, who in June 2013 leaked to the news media classified documents from the National Security Agency and other government agencies in the United States and abroad that described electronic surveillance activities of these agencies around the world. Given the highly classified nature of these documents, many have asked how their security could have been compromised. According to a Reuters news report,16 Snowden was able to persuade two dozen fellow workers at the NSA to provide him with their credentials, telling them that he needed that information in his role as systems administrator.

Sometimes, social engineering is combined with remote access or close access methods. An intruder may make contact through the Internet with someone likely to have privileges on the system or network of interest and then trick that person into taking some action that grants the intruder access to the target. For example, the intruder sends the victim an e-mail with a link to a Web page; when the victim clicks on that link, the Web page may take advantage of a technical vulnerability in the browser to run a hostile program of the intruder’s choosing on the user’s computer, usually without the permission or even the knowledge of the user.

________________

16 Mark Hosenball and Warren Strobel, “Snowden Persuaded Other NSA Workers to Give Up Passwords–Sources,” Reuters, November 7, 2013, available at http://www.reuters.com/article/2013/11/08/net-us-usa-security-snowden-idUSBRE9A703020131108.

Social engineering can be combined with close access techniques in other ways as well. For example, users can sometimes be tricked or persuaded into inserting hostile USB flash drives into the USB ports of their computers. Because some systems support an “auto-run” feature for insertable media (i.e., when the medium is inserted, the system automatically runs a program named in a file called “autorun.inf” on the medium) and that feature is often turned on, a potentially hostile program may be executed as soon as the drive is inserted. Open USB ports can be glued shut, but such a countermeasure also makes it impossible to use the USB ports for any purpose. In one experiment, a red team used inexpensive USB flash drives to penetrate an organization’s security. The red team scattered USB drives in parking lots, smoking areas, and other areas of high traffic. A program on each drive would run if the drive was inserted, and 75 percent of the USB drives distributed were inserted into a computer.17
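On Windows systems, the auto-run behavior described above is driven by a small text file, autorun.inf, at the root of the medium; its [autorun] section can name a program to launch in an open= or shellexecute= entry. The sketch below, assuming that file format, shows how a defender might list what a drive would try to run before the drive is used. The function name autorun_targets is hypothetical, and the sketch only reads the file; it runs nothing.

```python
import configparser

# Entries in an autorun.inf [autorun] section that name a program to launch.
LAUNCH_KEYS = ("open", "shellexecute")


def autorun_targets(inf_text: str) -> list[str]:
    """Return the commands that an autorun.inf file asks the system to launch."""
    # strict=False tolerates duplicate keys, allow_no_value=True tolerates bare
    # lines, and interpolation=None leaves '%' characters in values alone.
    parser = configparser.ConfigParser(
        strict=False, allow_no_value=True, interpolation=None
    )
    parser.read_string(inf_text)
    targets = []
    for section in parser.sections():
        # configparser treats section names case-sensitively, so compare lowercased.
        if section.lower() != "autorun":
            continue
        for key in LAUNCH_KEYS:
            value = parser.get(section, key, fallback=None)
            if value:
                targets.append(value.strip())
    return targets


if __name__ == "__main__":
    sample = "[AutoRun]\nopen=setup.exe\nicon=setup.exe,0\n"
    print(autorun_targets(sample))  # ['setup.exe']
```

A drive whose file names a launch target deserves scrutiny before it is used; an empty result means only that this particular mechanism is not in play.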

Vulnerability

Access is only one aspect of a penetration, which also requires the intruder to take advantage of a vulnerability in the target system or network. Examples of vulnerabilities include an accidentally introduced design or implementation flaw (common), an intentionally introduced design or implementation flaw (less common), and a configuration error in the target, such as a default setting that leaves system protections turned off. Vulnerabilities arise from the characteristics of information technology and information technology systems described above.

An unintentionally introduced flaw or defect (“bug”) may open the door for opportunistic use of the vulnerability by an adversary who learns of its existence. Many vulnerabilities are widely publicized after they are discovered and can then be used by anyone with moderate technical skills until a patch can be developed, disseminated, and installed. Intruders with the time and resources may also discover unintentional defects that they protect as valuable secrets that can be used when necessary. As long as those defects go unaddressed, the vulnerabilities they create can be used by the intruder.

________________

17 See Steve Stasiukonis, “Social Engineering, the USB Way,” Dark Reading, June 7, 2006, available at http://www.darkreading.com/document.asp?doc_id=95556&WT.svl=column1_1.

An intentionally introduced flaw has the same effect as an unintentionally introduced one, except that the adversary does not have to wait to learn of its existence and can take advantage of it as soon as it suits his purposes. For example, so-called back doors are sometimes built into programs by their creators; the purpose of a “back door” is to enable another party to bypass security features that would otherwise keep that other party out of the system or network. An illustration would be a back door in the password manager for a system. Authorized users would have an assigned login name and password that would enable them to do certain things (and only those things) on the system. But if the program’s creator had installed a back door, a knowledgeable intruder (perhaps in cahoots with the program’s creator) could enter a special 40-character password and use any login name and then be able to do anything he wanted on the system.
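The back door in this illustration amounts to one extra branch in the login check. A minimal sketch, with a hypothetical account table and a placeholder 40-character secret (neither comes from any real system), showing why such a branch grants access regardless of the login name:

```python
import hmac

# Hypothetical account table: login name -> password. Plain text is used only to
# keep the sketch short; real systems store salted password hashes instead.
ACCOUNTS = {"alice": "correct horse battery staple"}

# Hypothetical back door: a 40-character secret known only to the program's creator.
BACKDOOR_PASSWORD = "0123456789" * 4


def _same(a: str, b: str) -> bool:
    """Constant-time string comparison."""
    return hmac.compare_digest(a.encode(), b.encode())


def check_login(login: str, password: str) -> bool:
    """Return True if the login attempt should be granted access."""
    # The back door: this branch never looks at the login name, so any name works.
    if _same(password, BACKDOOR_PASSWORD):
        return True
    # The legitimate path: the password must match the one on file for this login.
    expected = ACCOUNTS.get(login)
    return expected is not None and _same(password, expected)


if __name__ == "__main__":
    print(check_login("alice", "correct horse battery staple"))  # True
    print(check_login("mallory", "0123456789" * 4))                # True (back door)
    print(check_login("mallory", "guess"))                         # False
```

Nothing in the program’s normal behavior reveals the extra branch: a tester who tries only legitimate accounts sees a working login check. Finding it requires reading the code or stumbling on the secret.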

One particularly problematic kind of vulnerability is known as a “zero-day vulnerability.” The term refers to a vulnerability for which the responsible party (e.g., the vendor that provides the software) has not provided a fix, often because that party does not yet know of the vulnerability. Thus, an intruder can often take advantage of a zero-day vulnerability before it becomes publicly known. The zero-day vulnerabilities with the most widespread impact are those in a remotely accessible service that runs by default on all versions of a widely used piece of software. Under such circumstances, an intruder could take advantage of the vulnerability in many places nearly simultaneously, with all of the consequences that penetrations on such a scale might imply.

Those who discover such vulnerabilities face the question of what to do with them. A private party may choose to report a vulnerability privately to those responsible for maintaining the system so that it can be repaired; publicize it widely so that corrective actions can be taken; keep it for its own purposes; or sell it to the highest bidder. National governments face a similar choice: keep it for future use in some adversarial or offensive cyber operation conducted for national purposes, or fix or report it to reduce the susceptibility to penetration of the systems in which it is found. National governments, as well as nongovernment entities such as organized crime, participate in markets to acquire zero-day vulnerabilities for future use.

Last, both cyber exploitations and cyberattacks make use of the same penetration approaches and techniques, and thus may look quite similar to the victim, at least until the nature of the malware involved is ascertained.

3.4.2 Cyber Payloads (Malware)

If an intruder is successful at penetrating a system or network, the intruder must decide what to do next. What happens after penetration is determined by the payload.

A payload is most often a “malware” program that is designed to take hostile action against the system to which it has been delivered. In general, these hostile actions can be anything that an adversary able to program the system could do. For example, once malware has entered a system, it can be programmed to reproduce and retransmit itself, destroy or alter files on the system, slow the system down, issue bogus commands to equipment attached to the system, monitor traffic going by, copy and send files to a secret e-mail address, create a vulnerability for future use, and so on. The payload is what determines whether an adversarial cyber operation is an attack or an exploitation.

Malware can have multiple capabilities when inserted into a target system or network; that is, malware can be programmed to do more than one thing. The timing of these actions can also be varied. And if a communications channel to the intruder is available, malware can be remotely updated. Indeed, in some cases, the initially delivered malware consists of nothing more than a mechanism for scanning the penetrated system to determine its technical characteristics and an update mechanism to retrieve the packages best suited to furthering the malware’s operation.

Malware may be programmed to activate either immediately or when some condition is met. In the second case, the malware sits quietly and does nothing harmful most of the time. However, at the right moment, the program activates itself and proceeds to (for example) destroy or corrupt data, disable system defenses, or introduce false message traffic. The “right moment” can be triggered because:

• A certain date and time are reached;

• The malware receives an explicit instruction to execute;

• The traffic monitored by the malware signals the right moment; or

• Something specific happens in the malware’s immediate environment.
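The activation logic just described reduces to checking a set of conditions, any one of which suffices. A minimal sketch, in which the class names, field names, and example values are all hypothetical, that models the four kinds of trigger listed and does nothing except report whether activation would occur:

```python
from dataclasses import dataclass, field
from datetime import datetime
from typing import Optional


@dataclass
class Observations:
    """Hypothetical snapshot of what dormant code can see at a given moment."""
    now: datetime
    commands_received: set[str] = field(default_factory=set)
    traffic_markers_seen: set[str] = field(default_factory=set)
    local_events: set[str] = field(default_factory=set)


@dataclass
class TriggerPolicy:
    """One optional criterion per trigger type; None disables that criterion."""
    activate_at: Optional[datetime] = None  # a certain date and time
    command: Optional[str] = None           # an explicit instruction
    traffic_marker: Optional[str] = None    # a signal in monitored traffic
    local_event: Optional[str] = None       # something in the local environment


def should_activate(policy: TriggerPolicy, seen: Observations) -> bool:
    """Return True if any configured trigger condition is met."""
    return any([
        policy.activate_at is not None and seen.now >= policy.activate_at,
        policy.command is not None and policy.command in seen.commands_received,
        policy.traffic_marker is not None
        and policy.traffic_marker in seen.traffic_markers_seen,
        policy.local_event is not None and policy.local_event in seen.local_events,
    ])


if __name__ == "__main__":
    policy = TriggerPolicy(activate_at=datetime(2030, 1, 1))
    print(should_activate(policy, Observations(now=datetime(2029, 12, 31))))  # False
    print(should_activate(policy, Observations(now=datetime(2030, 1, 1))))    # True
```

Until one of the conditions holds, should_activate returns False and nothing observable happens, which is one reason dormant code of this kind can pass casual testing.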

Malware may also install itself in ways that keep it from being detected. It may delete itself, leaving behind little or no trace that it was ever present. In some cases, malware can remain even after a computer is scanned with anti-malware software or even when the operating system is reinstalled from scratch.

For example, many computers, including desktop and laptop computers in everyday use, run through a particular power-on sequence when their power is turned on. The computer’s power-on sequence loads a small program from a chip inside the computer known as the BIOS (Basic Input-Output System), and then runs the BIOS program. The BIOS program then loads the operating system from another part of the computer, usually its hard drive. Most anti-malware software scans only the operating system on the hard drive, assuming the BIOS chip to be intact. But some malware is designed to modify the program on the BIOS chip, and reinstalling the operating system simply does not touch the (modified) BIOS program. The Chernobyl virus is an example of malware that targets the BIOS,18 and in 1998 it rendered several hundred thousand computers entirely inoperative without physical replacement of the BIOS chip.

________________

18 The Chernobyl virus is further documented in CERT Coordination Center, “CIH/Chernobyl Virus,” CERT® Incident Note IN-99-03, updated April 26, 1999, available at http://www-uxsup.csx.cam.ac.uk/pub/webmirrors/www.cert.org/incident_notes/IN-99-03.html.
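One countermeasure implied by this passage is to check the firmware itself rather than only the operating system. A minimal sketch, assuming the defender can obtain a dump of the BIOS image and has a known-good SHA-256 digest for it from the vendor (both assumptions; reading the chip is platform-specific and not shown). The function names are hypothetical.

```python
import hashlib
import hmac


def firmware_digest(image: bytes) -> str:
    """SHA-256 hex digest of a firmware image."""
    return hashlib.sha256(image).hexdigest()


def firmware_matches(image: bytes, known_good_sha256: str) -> bool:
    """True if the image hashes to the known-good value (compared in constant time)."""
    return hmac.compare_digest(
        firmware_digest(image), known_good_sha256.strip().lower()
    )


def read_image(path: str) -> bytes:
    """Read a firmware dump from disk; producing the dump is not shown."""
    with open(path, "rb") as f:
        return f.read()


if __name__ == "__main__":
    reference = b"\x00" * 1024          # stand-in for a vendor-supplied image
    good_hash = firmware_digest(reference)
    tampered = b"\x01" + reference[1:]  # one modified byte
    print(firmware_matches(reference, good_hash))  # True
    print(firmware_matches(tampered, good_hash))   # False
```

A check of this kind is only as trustworthy as the software that reads the chip; modified firmware could in principle interfere with that software.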

Last, the payload might not take the form of hostile software at all. For example, an intruder might use a particular access path and take advantage of a certain vulnerability to give himself remote access to the target computer such that the intruder has all of the privileges and capabilities that he might have if he were sitting at the keyboard of that computer. He is then in a position to issue to the computer commands of his own choosing, and such commands may well have a harmful effect on the target computer.

3.4.3 Operational Considerations

Sections 3.4.1 and 3.4.2 describe the basic structure of hostile activities in cyberspace. But an intruder must take into account a number of operational considerations if such activities are to be successful:

• Effects prediction and assessment. When planning a hostile operation, an intruder weighs its desirability in part by its expected outcome. When the operation has concluded, the responsible party wants to know whether it was successful. But accurately predicting the outcome of hostile operations, and assessing their effects afterward, are complex and difficult challenges. Damage to a computer, for example, is often invisible to the naked eye.

• Target selection. Which specific computers or networks are to be targeted in a hostile cyber operation? And how would they be identified at a distance? Target identification information can come from a number of sources, including open source collection, automated target selection, and manual exploration of possible targets. A high degree of selectivity in targeting may require large amounts of intelligence information.

• Fragility. A victim that discovers a penetration is likely to fix the vulnerability that was taken advantage of by an intruder. Thus, an intruder must consider the possibility that a particular penetration tool will be usable only once or a few times.

• Rules of engagement. These rules specify what tools may be used to conduct a hostile cyber operation, what their targets may be, what effects may be sought, and who may conduct such operations under what circumstances. Governments in particular expend considerable effort in specifying these rules for a variety of different purposes (e.g., military purposes, intelligence purposes, law enforcement purposes).

• The availability of intelligence. As a general rule, a scarcity of intelligence information regarding possible targets means that any cyber operation launched against them can only be “broad-spectrum” and relatively indiscriminate or blunt. Substantial amounts of intelligence information about targets (and paths to those targets) are required if an operation is intended as a very precise one directed at a particular system. Often, intelligence is gathered in stages, in which an initial exploitation leads to information that can facilitate further exploitation.

3.5 CHARACTERIZING THREATS TO CYBERSECURITY

Malevolent actors in cyberspace span a very broad spectrum, ranging from lone individuals at one extreme to those associated with major nation-states at the other; all pose cybersecurity threats. Organized crime (e.g., drug cartels or extortion rings) and transnational terrorists (and terrorist organizations, some of them state-sponsored) occupy a region between these two extremes, but they are closer to the nation-state than to the lone intruder.

In addition to those who are motivated by pure curiosity, malevolent actors have a range of motivations. Some are motivated by the desire to penetrate or vandalize for the thrill of it, others by the desire to steal or profit from their actions. And still others are motivated by ideological or nationalistic considerations.

The skills of malevolent actors also span a very broad range. Some have only a rudimentary understanding of the underlying technology; they can use tools that others develop to conduct their own operations but in general are not capable of developing new tools. Those with an intermediate level of skill are capable of developing hacking tools on their own.

Those with the most advanced levels of skill (that is, the high-end threat) can identify weaknesses in target systems and networks and develop tools to take advantage of such knowledge. Moreover, they are often supported by large organizations such as nation-states or organized crime syndicates, and may operate in large teams that provide a broad mix of skills, including but by no means limited to computer skills. These organizations provide funding, expertise, and support. When governments are involved, the resources of national intelligence, military, and law enforcement services can be brought to bear. Of significant concern to policy makers is the reality that, against the high-end intruder, efforts oriented toward countering the casual adversary or even the common cyber criminal amount to little more than speed bumps.

The availability of such resources widens the possible target set of high-end adversaries. Low- and mid-level adversaries often benefit from nonselective targeting; that is, they do not care which specific computers they victimize. For example, an adversary may conduct an operation that seeks the credit card numbers of a group of individuals. The operation may not be entirely successful because some of these individuals have defenses in place that thwart the operation on their individual machines. But the operation taken as a whole will obtain some credit card numbers, and an adversary that simply wants credit card numbers without regard for who actually owns those numbers will regard this operation as a success.

However, because of the resources available to them, high-end adversaries may also be able to target a specific computer or user that has enormous value (“the crown jewels”). An adversary engaged in nonselective targeting that is confronted with an adequately defended system simply moves on to another system that is not so well defended. An adversary focused on a specific target, by contrast, has the resources to escalate the operation against that target to a very high degree, perhaps overwhelmingly so. Box 3.4 describes what has become known as the advanced persistent threat.
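The contrast between nonselective and selective targeting can be made concrete with a little arithmetic. A minimal sketch under a deliberately simple assumption: each target independently resists the operation with the same probability. The function names and the 90 percent figure are illustrative, not drawn from any data.

```python
def expected_victims(num_targets: int, defended_fraction: float) -> float:
    """Expected number of successes for a nonselective operation, assuming each
    target independently resists with probability defended_fraction."""
    return num_targets * (1.0 - defended_fraction)


def prob_at_least_one(num_targets: int, defended_fraction: float) -> float:
    """Probability that the operation succeeds against at least one target."""
    return 1.0 - defended_fraction ** num_targets


if __name__ == "__main__":
    # Illustrative numbers only: 1,000 targets, 90 percent of them adequately defended.
    print(round(expected_victims(1000, 0.9)))      # 100
    print(round(prob_at_least_one(1000, 0.9), 6))  # 1.0
    # An adversary who needs one particular target gets only that target's odds.
    print(round(prob_at_least_one(1, 0.9), 6))     # 0.1
```

Under these assumptions the nonselective operation still nets about 100 victims and is all but certain to succeed somewhere, while an adversary who needs one particular target has only that target's 10 percent chance of being undefended, unless it can escalate against that target.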

High-end adversaries, and especially major nation-state adversaries, are also likely to have the resources that allow them to obtain detailed information about the target system, such as knowledge gained by having access to the source code of the software running on the target or the schematics of the target device, or through reverse-engineering. Success in obtaining such information is not guaranteed, of course, but the likelihood of success is clearly an increasing function of the availability of resources. For instance, a country may obtain source code and schematics of a certain vendor’s product because it can require that the vendor make those available to its intelligence agencies as a condition of permitting the vendor to sell products within its borders.

BOX 3.4 The Advanced Persistent Threat

Discussions of high-end cybersecurity threats often make reference to the “advanced persistent threat (APT).” One document suggests that the term was originally coined by the U.S. Air Force in 2006 to refer to “a sophisticated adversary engaged in [cyber] warfare in support of long-term strategic goals.”1 That is, the APT is a party (an actor) that is technologically advanced and persistent (i.e., able and willing to persist in its efforts).

A second usage of the term refers to the character of a cyber intrusion: one that is technologically sophisticated (i.e., advanced) and hard to find and eliminate (i.e., persistent). In this usage, the APT is highly focused on a particularly valuable target. This tight and narrow focus stands in contrast to other cyber threats (e.g., spamming for credit card numbers) that seek targets of opportunity. An APT typically makes use of tools and techniques that are customized to the specific security configuration and posture of its target. Furthermore, it operates in ways that its perpetrators hope minimize the likelihood of detection.

_________________

1 See Fortinet, Inc., “Threats on the Horizon: The Rise of the Advanced Persistent Threat,” Solution Brief, 2013, http://www.fortinet.com/sites/default/files/solutionbrief/threats-on-thehorizon-rise-of-advanced-persistent-threats.pdf.

The high-end adversary is generally indifferent to the form that its path to success takes, as long as that path meets various constraints such as affordability and secrecy. In particular, the high-end adversary will trick or blackmail a trusted insider to do its bidding or infiltrate a target organization with a trained agent rather than crack a security system if the former is easier to do than the latter.

To support this broad range of malevolent actors, there is a thriving and robust underground marketplace for hacking tools and services. Those wishing to conduct an adversarial operation in cyberspace can often purchase the service with nothing more than a credit card (probably a stolen one) or an alternative currency that is harder to trace, such as Bitcoin.19 Design and customization of tools is also available, as are piece parts out of which a malevolent actor can assemble his own adversarial cyber operation. In an environment in which such services can be bought and sold, the universe of possible adversaries expands enormously.

A number of general observations can be made about the various malevolent actors:

• Bad guys who want to have an effect on their targets have some motivation to keep trying, even if their initial efforts are not successful in intruding on a victim’s computer systems or networks.

• Bad guys nearly always make use of deception in some form—they trick the victim into doing something that is contrary to the victim’s interests.

• A would-be bad guy who is induced or persuaded in some way to refrain from intruding on a victim’s computer systems or networks does no harm to those systems or networks, and such an outcome is just as good as thwarting his hostile operation (and may be better if he is persuaded to avoid conducting such operations in the future).

• Cyber bad guys will be with us forever for the same reason that crime will be with us forever: as long as the information stored in, processed by, or carried through a computer system or network has value to third parties, cyber bad guys will have some reason to conduct adversarial operations against a potential victim’s computer systems and networks.

________________

19 Bitcoin is a digital currency that was launched in 2009. See, for example, François R. Velde, “Bitcoin: A Primer,” Chicago Fed Letter, Number 317, December 2013, available at http://www.chicagofed.org/digital_assets/publications/chicago_fed_letter/2013/cfldecember2013_317.pdf.

The process through which information is assembled and interpreted to assess the threats faced by a potential target is known as threat assessment. Threat assessments help those who are defending systems and networks to allocate resources (money, time, effort, personnel) prior to hostile action and to plan what they should do when they are the target of such action. For example, a threat assessment may suggest that more resources should be deployed to combat one particular threat over all others or that a particular strategy would be more effective than others in responding to a given threat.

In general, threat assessments are based on information from multiple sources. As is discussed in Section 4.1.4, successful forensics of actual cyber incidents provide one kind of information, yielding details about the methodology and identity of the intruders, the damage that resulted, and so on. Other sources of useful information may include intercepts of communications and other signals, interviews with those knowledgeable about intruder doctrine or operations, analysis of intruders’ documents, photo reconnaissance, reports from intelligence agents, public writing and speeches by relevant parties, and so on.

A threat assessment sheds light on adversary capabilities and intentions. (“Adversary” in this context can refer to more than one potentially hostile party.) What an adversary is capable of doing depends on the tools available, the skill with which the adversary can use those tools, and the numbers of skilled personnel available to the adversary. What an adversary intends to do depends on the adversary’s motivation, as described above. Motivation and intent are reflected in the adversary’s target set (i.e., what targets the adversary seeks to penetrate) and in what the adversary wishes to do or be able to do once penetration is achieved (e.g., exfiltrate information, destroy data).
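A threat assessment’s output can feed the resource-allocation decisions described above. A minimal sketch, assuming (purely for illustration) that each threat is scored from 0 to 1 on capability and on intent and that a defensive budget is split in proportion to their product; the scores, the weighting rule, and all names are hypothetical, and real assessments are far less mechanical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ThreatEstimate:
    """Hypothetical summary of one adversary produced by a threat assessment."""
    name: str
    capability: float  # 0..1: tools, skill, and skilled personnel available
    intent: float      # 0..1: how strongly the adversary seeks this defender's targets


def allocate(threats: list[ThreatEstimate], budget: float) -> dict[str, float]:
    """Split a budget in proportion to capability * intent (an assumed weighting)."""
    weights = {t.name: t.capability * t.intent for t in threats}
    total = sum(weights.values())
    if total == 0:
        # No threat scores above zero: nothing to allocate against.
        return {name: 0.0 for name in weights}
    return {name: budget * w / total for name, w in weights.items()}


if __name__ == "__main__":
    threats = [
        ThreatEstimate("criminal group", capability=0.5, intent=0.8),
        ThreatEstimate("nation-state", capability=0.9, intent=0.2),
    ]
    for name, share in allocate(threats, 100.0).items():
        print(f"{name}: {share:.1f}")
```

In this example the criminal group receives the larger share, because its lower capability is outweighed by its stronger interest in this particular defender; that is the kind of conclusion a threat assessment may suggest.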