8

Interfacing Diverse Environmental Data—Issues and Recommendations

As described in Chapter 1, addressing many of the questions central to environmental research and assessment, and global change research in particular, requires combining geophysical and ecological data. Although this can be difficult, the success and cost-effectiveness of these research and assessment efforts depend significantly on the degree to which data interfacing issues are explicitly confronted. This chapter presents a working definition of data interfacing and describes in detail the technical and organizational barriers that impede it, including the barriers deriving from characteristics of data, from users' needs, from organizational interactions, and from information systems considerations. Specific recommendations also are provided. The chapter ends with a list of 10 Keys to Success, which are based on the committee's review of the case studies. These fundamental, generalized guiding principles should help practitioners to systematically respond to the challenges identified.

Real-world illustrations of problems and solutions relevant to data interfacing are used as examples throughout this chapter. Some of these are drawn from circumstances or applications that do not directly involve interfacing geophysical and ecological data. Examples of this sort were chosen because they effectively exemplify important elements or principles that are pertinent to such interfacing. Indeed, many of the challenges posed by interfacing these two data types are common to many other situations.

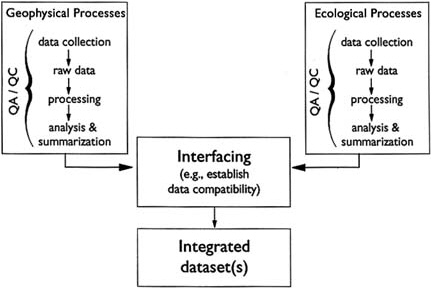

FIGURE 8.1 Generalized representation of the processes involved in interfacing geophysical and ecological data.

THE PROBLEM AND ITS CONTEXT

As defined in Chapter 1, interfacing of geophysical and ecological data is the coordination, combination, or integration of such data for the purposes of modeling, correlation, pattern analysis, hypothesis testing, and field investigation at various scales (Figure 8.1). The data being interfaced can be products of a single, integrated study or can be derived from several studies performed at different times or places. Similarly, the data could have been collected with the interfacing effort in mind, or for other purposes entirely. This deliberately broad definition of interfacing is intended to fit as many situations as possible. As discussed in greater detail below, the specific questions scientists will ask and the ways in which they will therefore endeavor to integrate data are often ill-defined and constantly changing (see Box 8.1). As a result, no single narrowly framed definition and no mechanistic prescription or solution will be of much lasting use to scientists contending with the problems related to interfacing.

At its simplest level, interfacing involves the identification, accessing, and combination of data. However, in practice these seemingly uncomplicated activities can be technically complex, stretching the limits of existing knowledge and the capabilities of available hardware and software systems. In addition, the very act of interfacing frequently requires crossing disciplinary and administrative boundaries, thereby adding another level of complexity to the process. Interfacing therefore can best be understood as occurring in a series of overlapping contexts, both technical and organizational. Effective solutions must address and accommodate all of these relevant contexts.

|

BOX 8.1 Complex Questions Require Data Interfacing

Many fundamental questions about how ecosystems respond to forcing by larger-scale variables can be answered only by analytic techniques that use geophysical and ecological data together. The following examples from our case studies provide some sense of the range of such questions currently being addressed.

|

Interfacing efforts can be confounded by a variety of obstacles (see Mathews, 1983; Henderson-Sellers, 1990). The ones described in this chapter are typical of most situations involving data management and data analysis on complex data sets. However, the challenges facing global change research and other large, interdisciplinary environmental research programs are extreme because of the massive volumes of data, the broad (up to global) geographic scale, the temporal scale, the variety of natural and anthropogenic processes included, the scope of modeling efforts, the numbers of organizations involved, and the evolving nature of the research itself. Consequently, the repercussions of not addressing the barriers described below are correspondingly more significant and more severe than for more traditional single-discipline applications.

ADDRESSING BARRIERS DERIVING FROM THE DATA

Data interfacing efforts must sometimes confront the misperception that once data are in digital form and in a common format, interfacing is simply a matter of merging two or more data sets. As Townshend and Rasool (1993) have pointed out, "collecting data globally does not of itself create global data sets." There is a series of technical pitfalls and obstacles that must be considered and resolved for data interfacing, even on regional scales, to produce scientifically meaningful output. Some of these stem from relatively simple discrepancies among data types and can be dealt with in a straightforward manner. Others, in contrast, reflect fundamental theoretical or "cultural" differences in the ways that ecological and geophysical studies are conceived and carried out. Barriers that arise from these more fundamental differences involve, among other things, the size and complexity of ecological versus geophysical studies, their spatial and temporal scales, the numbers and kinds of variables measured, the role of models in study design and analysis, and traditions of funding and project administration.

Spatial and Temporal Scale

Some of the most apparent barriers revolve around issues of scale. Geophysical studies are more likely to cover continental- and global-scale areas sampled at lower spatial resolution (Rasool and Ojima, 1989; Sellers et al., 1992a). In contrast, ecological studies generally tend to involve ground-based and closely spaced sampling of smaller areas over relatively short time periods. For example, a recent review of about 100 field experiments in community ecology revealed that nearly half were conducted on plots no larger than 1 m in diameter (Kareiva and Anderson, 1988). There is, in fact, only one widely used global data set in the ecological realm, the Global Vegetation Index produced by NOAA at a spatial resolution of 15 to 20 km (Townshend and Rasool, 1993). This stems, in part, from a tradition in ecology of studies on single species and from ecology's roots in natural history studies performed by individual investigators (Worster, 1977). It also reflects an emphasis in the conduct of ecological studies on labor-intensive field and laboratory techniques that preclude sampling over broader spatial scales. For example, in the FIFE study, data on canopy-leaf-area index, green-leaf weight, dead-leaf weight, and litter weight had to be collected by hand from relatively small study plots. This could not be avoided, even though the study was designed from the outset to integrate ecological and geophysical data over larger areas.

Because of such differences in study design, ecological data often must be smoothed or averaged in order to match the coarser spatial and temporal scales characteristic of geophysical data and models. This occurs, for example, when Geographic Information Systems (GIS) are used to merge remotely sensed areal data (usually geophysical) with attribute data (usually ecological) from specific points on the ground (Elston and Buckland, 1993). This kind of averaging is a key step in the latest generation of integrated global climate models (e.g., Wessman, 1992; Baskin, 1993). However, such averaged data may not truly be representative of heterogeneous ecological communities. This is an important shortcoming when heterogeneity is a vital component determining an ecosystem's response to a changed environment. In fact, Holling (1992) points out that spatial heterogeneity, or lumpiness, is of primary interest to ecologists.
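The loss of heterogeneity under averaging can be illustrated with a minimal sketch. It assumes a hypothetical 4 x 4 grid of leaf-area-index values; the numbers and the function name `block_average` are illustrative only and are not drawn from any of the case studies. The grid is averaged into 2 x 2 cells, much as fine-scale plot data might be matched to a coarser geophysical grid.

```python
from statistics import mean, pstdev


def block_average(grid, block):
    """Average a square 2-D grid over non-overlapping block x block windows."""
    n = len(grid)
    if n % block != 0 or any(len(row) != n for row in grid):
        raise ValueError("grid must be square with a side divisible by block")
    coarse = []
    for r in range(0, n, block):
        coarse_row = []
        for c in range(0, n, block):
            cells = [grid[i][j]
                     for i in range(r, r + block)
                     for j in range(c, c + block)]
            coarse_row.append(mean(cells))
        coarse.append(coarse_row)
    return coarse


# Hypothetical fine-scale data: a checkerboard of dense (4.0) and bare (0.0) patches
patchy = [[4.0 if (i + j) % 2 == 0 else 0.0 for j in range(4)] for i in range(4)]
# Hypothetical homogeneous community with the same overall mean
uniform = [[2.0] * 4 for _ in range(4)]

coarse_patchy = block_average(patchy, 2)
coarse_uniform = block_average(uniform, 2)

# Spread of the fine-scale values before averaging
fine_spread = pstdev([v for row in patchy for v in row])

# After averaging, the patchy and uniform communities are indistinguishable
print(coarse_patchy == coarse_uniform, fine_spread)
```

In this contrived case the two communities differ sharply at the plot scale yet yield identical coarse cells, which is the kind of information loss the text cautions against.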

Such differences in spatial scale also are related to the kinds of processes each field considers important, and the range of spatial and temporal scales across which they can be integrated. Most ecological studies thus attempt to focus on well-bounded community types and the actions of individual species or groups of species within these. Even studies that, by ecologists' standards, cover large areas (see Box 8.2) are fairly restricted compared to the global scope of many geophysical investigations. In addition, Wiens (1989) suggests that ecologists have been slower than atmospheric and earth scientists to address issues of scale. These other sciences (e.g., Clark, 1985) have a longer history of linking physical processes from local to global scales. Further, most ecological models function at a single scale (Ustin et al., 1991) or do not explicitly address scale (Wiens, 1989).

There are, of course, exceptions to this generalization about the spatial scale of ecological studies. For example, there is a long-standing tradition in ecology of interest in global patterns of community diversity and the body sizes of individual organisms, and more recent concerns about biodiversity (e.g., May, 1991; Jackson, 1994 a,b) and sustainability of the biosphere (Lubchenco et al., 1991) encompass a global perspective. However, none of these concerns has to date required the interfacing of large amounts of data from different sources.

The differences in spatial scale between geophysical and ecological studies are paralleled by analogous issues of temporal scale. Long-term time series of ecological data are relatively rare. This may make it difficult or impossible to create integrated data sets that focus on long-term changes in coupled ecological-geophysical systems. Where historical ecological data are available, they are more likely to represent data from several studies carried out independently over the period of research interest. Long-term ecological data sets of broad spatial extent are thus more likely to result from the combination of data from several sources. This in turn requires solving quality control, metadata, and data integration issues stemming from methodological differences between the data sets.

|

BOX 8.2 Ecological and Geophysical Scales Differ

While the majority of ecological studies focus on relatively restricted spatial and temporal scales, others have a larger perspective. The committee examined two of these, the NAPAP and CalCOFI programs, as case studies (see Chapters 3 and 7). Other areas of study that attempt to link ecological processes across local to regional scales are described below. Each illustrates ways in which ecological and geophysical data might be interfaced. Even though large by ecologists' standards, they are small in comparison with many geophysical studies.

Ecologists have used a wide variety of paleoecological data to explore the ways in which vegetation communities responded to past climate change, especially during and after the most recent glaciation. Most of these studies have concentrated on regions within Europe and North America (e.g., Davis, 1981; Cole, 1985; Pennington, 1986; Webb, 1987; Foster et al., 1990). A central concern in these studies is to understand how the ecological requirements and characteristics of individual vegetation species contribute to regional patterns of community change over time.

Marine ecologists have expanded their understanding of how intertidal communities are structured by including oceanographic processes in their studies. Connell (1985), Gaines and Roughgarden (1985), and Roughgarden et al. (1985, 1986) showed that the importance of predation and competition for space within the intertidal community depends on the numbers of larvae available for settlement. This in turn depends on regional processes, such as currents and upwelling, that extend far beyond the intertidal zone.

Forest ecologists are attempting to use simulation models that incorporate the birth, growth, and dynamics of individual plants to understand how vegetation would change on regional and global scales in response to climate change (Shugart et al., 1992). Such models include the physiological responses of individual plants to specific environmental variables. They also include somewhat broader-scale changes in community composition in response to physical disturbance as well as to environmental change.

Long-term studies in the Chesapeake Bay have examined how regional land use, hydrology, waste discharge, and natural ecological processes interact to affect important estuarine resources. These studies were based on a systems approach that depended on interfacing data on many different aspects of the estuary (NRC, 1988).

|

In addition to these differences in study design, the behavior of ecological systems can confound the interfacing of geophysical and ecological data. For example, environmentally induced changes in ecological systems often occur with time lags of varying lengths (e.g., Cole, 1985; Lewin, 1985; Pennington, 1986; Davis, 1989; Loehle et al., 1990; Steele, 1991). This can make it difficult to determine which ecological and geophysical data should most appropriately be interfaced. Such time lags may require interfacing data from the same location or region, but from different times. In many cases, such data may be difficult or impossible to find. Research into the long-term response of vegetation to climate change (e.g., Davis, 1981, 1989; Cole, 1985; Pennington, 1986; Webb, 1987; Foster et al., 1990) has also shown that species respond individualistically, that is, that communities do not respond uniformly as entire units. This means that interfacing studies may provide misleading or inconsistent results when using "representative" species as indicators of ecological response to geophysical variables.

Reflecting such differences in temporal scale, the time steps in the models that represent geophysical and ecological systems can be quite distinct. For example, general circulation models recompute winds and temperature every 20 minutes for each grid cell. In contrast, ecological models of vegetation change use monthly to yearly, or even decadal, time steps (Baskin, 1993), depending on the kind of response being modeled.
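As a rough illustration of the mismatch in time steps, the following sketch counts how many 20-minute steps fall within one ecological step and averages a fine-step series onto the coarser step. The 30-day month and the helper names `steps_per_window` and `aggregate` are assumptions made for the example, and simple averaging is only one of several possible ways to bridge the two steps.

```python
from datetime import timedelta
from statistics import mean

GCM_STEP = timedelta(minutes=20)  # time step quoted in the text
ECO_STEP = timedelta(days=30)     # assumption: a "monthly" step of 30 days


def steps_per_window(fine, coarse):
    """Number of fine time steps that fit exactly into one coarse step."""
    count, remainder = divmod(coarse, fine)
    if remainder:
        raise ValueError("coarse step is not a whole multiple of the fine step")
    return count


def aggregate(values, window):
    """Mean of each consecutive, non-overlapping run of `window` values."""
    if window <= 0 or len(values) % window != 0:
        raise ValueError("series length must be a positive multiple of window")
    return [mean(values[i:i + window]) for i in range(0, len(values), window)]


steps_in_month = steps_per_window(GCM_STEP, ECO_STEP)
print(steps_in_month)  # 2160 fine steps per assumed 30-day month
```

Under these assumptions, each monthly value summarizes more than two thousand model steps, so any variability within the month is invisible to the ecological model.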

These scale-related problems stem from the fact that fundamentally different kinds of processes are at work in geophysical and ecological systems. They also arise from the fact that ecological systems can be viewed at many different scales and from many different perspectives. None of these is the only "correct" one (O'Neill, 1988; Wiens, 1989; Levin, 1992), and each is based on different choices about which underlying processes and mechanisms to look at. This complexity, of course, affects choices about what kinds of data should be selected for interfacing. More complex problems that cut across several scales are complicated by the fact that variables and processes may or may not change in concert across scales (Wessman, 1992). Certain kinds of measurements in ecological systems may be correlated at one scale, but appear unrelated or negatively correlated at another (Wiens, 1989). In addition, sampling at intervals that are too widely spaced in space and time often fails to capture important aspects of a system's underlying variability. In such instances, the well-known problem of aliasing can lead even sophisticated analysis approaches to falsely identify trends. Thus the scales at which different kinds of data are collected can constrain or even predetermine the relationships among ecological and geophysical variables. As a result, data collected at one scale cannot necessarily be used to represent processes at another scale (through averaging or subsetting). This means that scientists engaged in data interfacing efforts must exercise extreme care when attempting to integrate data across different scales. Merely merging ecological and geophysical data by rote without seriously considering scale-related issues and their implications could result in spurious relationships and misleading analysis results. 
Unfortunately, there are no well-developed guidelines to assist in such efforts, although hierarchy theory (O'Neill, 1988) is a promising conceptual approach for identifying ecological scales that maximize predictive power.
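The aliasing problem mentioned above can be reproduced with a small, self-contained sketch. It assumes a purely cyclic, trend-free signal with a hypothetical period of 10 time units and compares a least-squares slope fitted to sparse samples (one every 10.5 units) with the slope fitted to dense samples (one every 0.5 units) over the same interval. All numbers are illustrative.

```python
from math import pi, sin

PERIOD = 10.0  # hypothetical cycle length, in arbitrary time units


def signal(t):
    """A purely cyclic process with no underlying trend."""
    return sin(2 * pi * t / PERIOD)


def slope(ts, ys):
    """Ordinary least-squares slope of ys against ts."""
    n = len(ts)
    mean_t = sum(ts) / n
    mean_y = sum(ys) / n
    num = sum((t - mean_t) * (y - mean_y) for t, y in zip(ts, ys))
    den = sum((t - mean_t) ** 2 for t in ts)
    return num / den


# Sparse sampling: interval slightly longer than the cycle itself
sparse_t = [10.5 * k for k in range(6)]
# Dense sampling over the same span (0 to 52.5)
dense_t = [0.5 * k for k in range(106)]

sparse_y = [signal(t) for t in sparse_t]
dense_y = [signal(t) for t in dense_t]

sparse_slope = slope(sparse_t, sparse_y)
dense_slope = slope(dense_t, dense_y)

# The sparse samples rise steadily, suggesting a trend that does not exist
apparent_trend = all(b > a for a, b in zip(sparse_y, sparse_y[1:]))
print(apparent_trend, sparse_slope, dense_slope)
```

The sparse samples increase monotonically even though the underlying process only oscillates, whereas the dense samples yield a much smaller fitted slope.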

Developing an integrated conceptual model of the systems being studied will assist in defining methodological and data management problems particularly with respect to spatial and temporal scales. For example, in the committee's NAPAP case study the decision to measure acid neutralizing capacity (ANC) was based on a model of how lake chemistry works. ANC turned out to be a key parameter in the survey of lake sensitivity.

The committee recommends that careful thought be given, in the planning for interdisciplinary research, to the implications of different inherent spatial and temporal scales and the processes they represent. These should be discussed explicitly in project planning documents. The methods used to accommodate or match inherent scales in different data types in any attempts to facilitate modeling and analysis should be carefully evaluated for their potential to produce artificial patterns and correlations.

Preliminary Data Processing and Statistical Uncertainty

A wide range of models and data processing algorithms typically are used in the development of ecological and geophysical data sets. Such preliminary processing is used for quality control and data cleanup, for data summarization and classification, and for extracting higher-level information from raw data (see Box 8.3). Raw data therefore are rarely used directly, with the result that assumptions (both implicit and explicit), biases, and various kinds of statistical error are unavoidably built into each data set.

|

BOX 8.3 Preliminary Processing Affects Data Compatibility

A wide range of preliminary processing methods are used to convert raw ecological and geophysical data to a usable form. Many of these can significantly affect later efforts to interface disparate data types.

|

These built-in features of the data have two important kinds of implications for data interfacing. First, they mean that data interfacing involves more than just combining the tangible aspects of the data, such as formats and data values. It also necessarily involves identifying, understanding, and accommodating the assumptions, perspectives, value judgments, and decisions inherent in each data set. In simpler terms, all data sets cannot be all things to all people. For example, Townshend and Rasool (1993) list the various data products currently being derived from NOAA's Advanced Very-High Resolution Radiometer (AVHRR) data. Each product responds to the specific needs of a different subset of users, and these products are not readily interchangeable, even though all are derived from the same basic raw data.

Second, all preliminary processing and derivation steps are associated with some kind and amount of statistical error or uncertainty. Data interfacing, whether it involves combining separate estimates of the same variable, different variables, summary statistics, derived spatial data, or data that incorporate subjective judgments, represents another source of statistical error (NRC, 1992). For example, investigators focusing on point-based data in the FIFE program were unprepared to deal with the registration accuracy problem when requesting "their" pixel of AVHRR data. Plus or minus one pixel may mean a 5-km uncertainty in location, whereas the ground-based instruments were sensitive at 100-m scales. A more complicated problem arose when FIFE investigators attempted to associate averaged flux measurements from a 15-km-long aircraft transect with point-based flux measurements collected on the ground. When such sources of uncertainty are not documented and accounted for in the metadata for any given data set, biases can be introduced that affect the outcome of data analyses and the conclusions drawn from them. As a general rule, uncertainty and sensitivity analyses should routinely be accomplished for the outputs of all models using the same data sets.

The committee recommends that the metadata for each data set explicitly describe all preliminary processing associated with that data set, along with its underlying scientific purpose and its effects on the suitability of the data for various purposes. Further, the metadata also should describe and quantify to the extent feasible the statistical uncertainty resulting from each processing step. Planning for studies that involve interfacing should explicitly consider the effects of preliminary processing on the utility of the resultant integrated data set(s).
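One way to picture such metadata is as a record that carries a description and an uncertainty estimate for every processing step. The sketch below is illustrative only: the class names, field names, and uncertainty figures are hypothetical, and combining the step uncertainties in quadrature assumes that the errors are independent, which may not hold for real processing chains.

```python
from dataclasses import dataclass, field
from math import sqrt


@dataclass
class ProcessingStep:
    name: str
    purpose: str                  # the scientific reason for the step
    relative_uncertainty: float   # 1-sigma, as a fraction (hypothetical figures)


@dataclass
class DatasetMetadata:
    title: str
    steps: list = field(default_factory=list)

    def add_step(self, name, purpose, relative_uncertainty):
        """Record one preliminary processing step and its uncertainty."""
        self.steps.append(ProcessingStep(name, purpose, relative_uncertainty))

    def combined_uncertainty(self):
        """Root-sum-square of step uncertainties (assumes independent errors)."""
        return sqrt(sum(s.relative_uncertainty ** 2 for s in self.steps))


meta = DatasetMetadata("Hypothetical vegetation index product")
meta.add_step("calibration", "convert raw counts to reflectance", 0.03)
meta.add_step("compositing", "reduce cloud contamination", 0.04)

print(round(meta.combined_uncertainty(), 4))
```

Keeping the purpose alongside each step lets a later user judge whether the processing suits a use the original investigators did not anticipate.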

Metadata issues also are discussed in more detail later in this chapter.

Data Volume

The sheer quantity of data projected for global change studies can pose significant challenges for nearly every aspect of the data storage, retrieval, and analytical systems currently available. As summarized by Townshend and Rasool (1993), these drastically increased volumes stem from a variety of sources:

- A greater number of different sensing systems.
- An increase in the number of spectral bands and frequencies per sensor system.
- Improved sensor sensitivity.
- Cumulative increases in data volume as the historical record grows and sensor technology continues to advance.
- The proliferation of derived data sets to meet different needs.
- The creation of regional- and global-scale data sets from preexisting, fragmented data.

In many cases, the projected volume of data from new instruments and programs is orders of magnitude higher than that currently produced by existing programs. This can make it impossible or impractical to continue using traditional data management and data interfacing methods. For example, the staff at the Carbon Dioxide Information Analysis Center (CDIAC) at Oak Ridge National Laboratory are concerned that their existing labor-intensive data cleanup and interfacing methods will not be suited to the demands of the new Atmospheric Radiation Measurements (ARM) program. This DOE program will produce approximately 1,000 gigabytes (1 terabyte) of data per year, an amount significantly larger than the 5 gigabytes of data archived by CDIAC. Up to now, two of CDIAC's most popular data sets, Keeling's atmospheric carbon dioxide concentrations from Mauna Loa and Marland's carbon dioxide emission estimates from fossil fuel burning, are only 0.03 and 75.6 megabytes in size, respectively.
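The scale of the change can be made concrete with a short calculation based on the two figures quoted above (about 1,000 gigabytes per year for ARM and 5 gigabytes archived by CDIAC). The assumption of a constant data rate over a 365-day year is made only for illustration.

```python
ARM_GB_PER_YEAR = 1000.0   # projected ARM output, from the text
CDIAC_ARCHIVE_GB = 5.0     # existing CDIAC archive, from the text
DAYS_PER_YEAR = 365        # assumption: constant data rate over a 365-day year

# One year of ARM data relative to the entire existing archive
volume_ratio = ARM_GB_PER_YEAR / CDIAC_ARCHIVE_GB

# Average daily inflow, and the time needed to match the existing archive
gb_per_day = ARM_GB_PER_YEAR / DAYS_PER_YEAR
days_to_match_archive = CDIAC_ARCHIVE_GB / gb_per_day

print(volume_ratio, round(gb_per_day, 2), round(days_to_match_archive, 3))
```

Under these assumptions the new program would deliver the equivalent of the whole existing archive in under two days, which helps explain why labor-intensive cleanup methods were not expected to scale.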

Larger data volumes also can require changes in the relationship between data sources and users. For instance, the FIFE Information System staff considered it unreasonable to respond to open-ended investigator requests such as, "Send me all your level-1 AVHRR-LAC (Local Area Coverage) data," which totaled 1.5 gigabytes on ninety 6,250-bpi 9-track tapes. Instead, user support staff worked with users to refine specific data requests for actual research requirements.

A related barrier stems from the need to provide the research community with information about and ready access to an ever-increasing volume of data. These volumes threaten to overwhelm some of the existing methods for data storage, archival, and retrieval.

The committee recommends that all proposed data management and interfacing methods be weighed carefully in terms of their ability to deal with large volumes of data. Assumptions that existing methods will continue to be suitable should be treated with caution.

Overcoming Data Incompatibilities

Data interfacing is often confounded by differences in the conventions that structure day-to-day practice. Each discipline has its own set of conventions, or language, which cannot easily be forced into a common terminology even when the same quantities are involved. For example, cartographers use "small scale" to refer to a very large area mapped without much detail, while other scientists use the same term to refer simply to a small area. Conversely, cartographers use "large scale" to refer to small areas mapped in great detail. Other scientists use this term to refer to extensive or large areas.

The FIFE and NAPAP studies provide a rich variety of examples of how seemingly innocuous data characteristics can bedevil data interfacing efforts. In the FIFE study, the Information System staff found that the same symbols or terms were used by different disciplines to refer to widely different quantities. An analogous problem arose from the fact that separate groups measured the same variables, but called them by such different names that it would be difficult to combine them without prior knowledge of their respective naming conventions. As another example, all disciplinary groups in the FIFE study measured time, but in ways that made it difficult to combine their respective data. The surface biology group preferred wall clock time for field data collection, while more globally oriented investigators used Greenwich Mean Time as a more universal standard to link satellite and aircraft data collection. Further, because time of day is not traditionally noted with soil moisture measurements, it was difficult to use the soil moisture data with other data, such as those for surface fluxes, with higher temporal resolution. This incompatibility was problematic because diurnal variations in soil moisture can have significant effects on energy and moisture balance calculations. As yet another example, investigators working at a local scale preferred Universal Transverse Mercator coordinates, while latitude and longitude were needed to track satellite and aircraft operations and to link solar position and time in a scientifically consistent way. The surface flux group was focused on circulation modeling with grid cells several kilometers on a side. That group was therefore satisfied with fairly coarse digital elevation data (e.g., 30-m horizontal resolution). In contrast, the hydrological investigators wanted watershed descriptions at 1-m resolution.
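Two of the incompatibilities described above, differing variable names and differing time conventions, lend themselves to a simple harmonization step. The sketch below is hypothetical throughout: the alias table, the record layout, the sample date, and the fixed offset of five hours behind Greenwich Mean Time are assumptions chosen for illustration, not details reported by the FIFE Information System.

```python
from datetime import datetime, timedelta, timezone

# Assumption: field crews recorded wall clock time five hours behind GMT
SITE_TZ = timezone(timedelta(hours=-5), "local")

# Hypothetical crosswalk from discipline-specific names to one shared name
CANONICAL_NAMES = {
    "sm": "soil_moisture",
    "soil_h2o": "soil_moisture",
    "LE": "latent_heat_flux",
    "lambda_E": "latent_heat_flux",
}


def to_utc(wall_clock):
    """Convert a 'YYYY-MM-DD HH:MM' wall clock string to an aware UTC datetime."""
    local = datetime.strptime(wall_clock, "%Y-%m-%d %H:%M").replace(tzinfo=SITE_TZ)
    return local.astimezone(timezone.utc)


def harmonize(record):
    """Return a copy of the record with a canonical name and a UTC timestamp."""
    name = record["variable"]
    if name not in CANONICAL_NAMES:
        # Refuse to guess: an unknown name needs a human decision
        raise KeyError(f"no canonical name registered for {name!r}")
    return {
        "variable": CANONICAL_NAMES[name],
        "time": to_utc(record["time"]),
        "value": record["value"],
    }


sample = harmonize({"variable": "soil_h2o", "time": "1987-08-15 14:30", "value": 0.23})
print(sample["variable"], sample["time"].isoformat())
```

Raising an error for unregistered names, rather than passing them through, reflects the point made in the text that combining such data safely requires prior knowledge of each group's conventions.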

The FIFE study encountered even more severe problems when attempting to incorporate needed data from outside sources. The historical precipitation data, extending back to the 1850s, had no associated locational or methodological documentation. Similarly, historical vegetation cover maps were available, but contained only the names of vegetation classes and not full descriptions of the species present. County soil maps, obtained from the Soil Conservation Service, were difficult to reconcile across county boundaries because different, and poorly documented, classification schemes were used in different counties. Likewise, the aquatic component of NAPAP relied on soil maps and geological data collected by different state agencies; in a number of cases, different data conventions mandated additional field studies to make the data sets compatible. Poor documentation, differences in sampling methods, and inadequate site data for fisheries also hampered NAPAP efforts to integrate these historical data sets from state agencies with water chemistry data collected by the NAPAP agencies. These difficulties, and the high cost of collecting new fisheries data, severely curtailed assessments of acid rain impacts on biotic resources.

Problems of data compatibility due to different methods, different definitions, or a lack of coordination among parties are exacerbated for international data sets. For instance, in the Sahel study, historical crop yield, a crucial biological endpoint, was often poorly defined and monitored in different ways among participating countries. The lack of suitable and consistent ground truthing (i.e., verifying remotely sensed observations by comparing them with reliable in situ observations) of yield data was especially problematic.

These differences between ecological and geophysical studies, and the data they produce, cause significant problems for data interfacing efforts. Creating synoptic ecological data sets that match the broader coverage of geophysical data is time-consuming, costly, and technically demanding. Gathering ecological data over large areas and times is often labor-intensive and difficult. It may be necessary to combine data from several ecological studies, which in turn usually requires extensive data standardization and cross-checking among ecological data sets that were originally collected independently. Some problems, such as differences in spatial registration of mapped data, are potentially resolvable. Others, such as differences from study to study in measurement methods or class limits of key variables, may not be. Resolving these and other problems stemming from differences between geophysical and ecological data requires in-depth understanding of the data's characteristics and of the underlying scientific assumptions they reflect.

Each of the challenges described above gives rise to a corresponding requirement for successfully interfacing ecological and geophysical data. The methods for fulfilling these requirements will differ somewhat depending on whether interfacing is dealing with historical data from disparate studies or is envisioned for future studies that can be planned as integrated wholes. For historical data, requirements may have to be met by "retrofitting" certain aspects of the data. For future studies, challenges can be dealt with in the planning process, thereby avoiding some of the problems inherent in historical data. However, it is very unlikely that future interfacing efforts will occur only in the context of studies planned as fully integrated wholes. Instead, it is most likely that a great deal of interfacing will continue to involve data drawn from separate studies that were not originally envisioned as directly related. In either case, the degree to which these requirements are met will largely determine the success of interfacing efforts.

The committee recommends that efforts to establish data standards focus on a key subset of common parameters whose standardization would most facilitate data interfacing. Where possible, such standardization should be addressed in the initial planning and design phases of interdisciplinary research. Early attention to integrative modeling can help identify key incompatibilities. The data requirements, data characteristics and quality, and scales of measurement and sampling should be well defined at the outset.

In addition, the committee recommends that agencies that perform or support environmental research and assessment generally, and global change research particularly, identify and define key ecological data sets that do not exist but are important to their mission. A careful review should be made of options for finding, rescuing, or creating these crucial data, and funding should be set aside to implement the most feasible option(s).

ADDRESSING BARRIERS DERIVING FROM USERS' NEEDS

There is an extremely wide range of users from different countries, agencies, and scientific disciplines who could potentially use data interfacing in their work. However, in order for them to even conceive of an interfacing effort, they must first know what data are available in their particular discipline and perhaps even in the research community at large. Thus, a major threshold challenge is for data managers to keep a large, diverse, and geographically dispersed user community informed and up to date about data types, characteristics, locations, and retrieval methods, as well as about changes in these. Inevitably, there will be as many ways of perceiving and thinking about the data as there are users. Different users will want to subdivide the same data differently, will be interested in different temporal and spatial scales, will use dissimilar derived variables, and will focus on separate processes (instances of such differences were described above in the section "Addressing Barriers Deriving from the Data"). Data interfacing systems and procedures therefore must have the ability to represent the data in different ways that conform to users' mindsets and research needs. (See also the subsection "Interoperability" in the section "Addressing Barriers Deriving from Information System Considerations," below.) Further, because analytical approaches and methods in climate change research are not standardized, users need the ability to interface data with their own desired analytical tools. Even in the FIFE program, which was designed from its inception as a set of integrated investigations, researchers preferred whenever possible to analyze data on their own systems and with their accustomed tools.

Multidisciplinary problems demand that users retrieve and interface data from studies in which they were not directly involved. For example, in NAPAP, data on aquatic biota in studies of acid rain effects were collected by one group of scientists, while data on precipitation chemistry were collected by another. Similarly, in the FIFE program, modelers used atmospheric as well as point-source vegetation data to develop a picture of how processes at different scales interact. In these multidisciplinary programs, data were collected with the intention of integrating them later with other data types. In contrast, much of the data envisioned for use in global change studies were not originally collected with specific interfacing applications in mind. This situation requires that data be exceptionally well documented in order to be usable by scientists who were not directly involved in their original collection and validation. Working scientists readily recognize the difficulties presented by poorly documented data in their own field. Such difficulties only increase when data being interfaced cut across disciplinary boundaries. In fact, the committee heard researchers in every case study describe the confusion, inefficiency, and technical dead ends that can result from poor documentation. As a result of experiences such as these, many recent reports on data management related to global change research have emphasized the central importance of thorough and readily accessible metadata (e.g., NRC, 1991; OSTP, 1991; CEES, 1992). Providing such metadata can have implications for the design of hardware and software systems to support interfacing (see the subsection "Complex Metadata" in the section "Addressing Barriers Deriving from Information System Considerations," below).
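As an illustration of what "exceptionally well documented" might mean in practice, the following minimal Python sketch defines a documentation record and reports which parts of it have been left empty. The field names are hypothetical and chosen only for illustration; they are not drawn from any of the case studies or from a published metadata standard.

```python
from dataclasses import dataclass, field, fields


@dataclass
class DatasetMetadata:
    """Hypothetical minimal documentation record for one data set."""
    title: str = ""
    collected_by: str = ""
    variables: dict = field(default_factory=dict)  # variable name -> units
    methods: str = ""            # how the measurements were made
    spatial_extent: str = ""     # where the data apply
    temporal_extent: str = ""    # when the data apply
    quality_notes: str = ""      # known problems, corrections, caveats


def missing_fields(record):
    """Return the names of all fields that were left empty."""
    return [f.name for f in fields(record) if not getattr(record, f.name)]


# A data set described only by a title and a variable list is flagged
# as lacking the context an outside scientist would need.
record = DatasetMetadata(
    title="Precipitation chemistry",
    variables={"pH": "pH units"},
)
print(missing_fields(record))
```

A check of this kind cannot judge whether documentation is accurate or sufficient; it can only show where nothing at all has been supplied.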

Most of the case studies the committee examined operated on the assumption that data were a common resource intended to be used in different ways by different researchers. Indeed, this is a fundamental tenet of the global change research effort, where key data sets, such as those from remote sensing, are made available to the research community at large. When the same data are used in more than one way by different investigators, however, multiple and incompatible derivative versions of the original data set are produced. This is because researchers commonly process, subdivide, adjust, summarize, and correct working versions of acquired data sets based on their needs and their interactions with the principal investigators. In fact, where different researchers independently update or correct acquired data, a single, identifiable authoritative version may cease to exist. This happens when no one has the responsibility to ensure that all corrections and updates are collected and embodied in a validated "source" data set. Thus, for instance, an important aspect of CDIAC's role is to collect, document, and incorporate feedback from users into its data packages, such as the one for global production of carbon dioxide. This ensures that the user community can readily identify and obtain authoritative versions of key data sets. In contrast, analysts in the Sahel study, under pressure to produce timely crop estimates, made many undocumented corrections, adjustments, and derivations of original data. Therefore, even though some of the derived data sets that resulted from the Sahel study have been archived by NOAA, it is no longer clear how they were created. Thus, it is extremely difficult to use these data for other purposes or to retrace the data path to an intermediate point.
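The value of documenting each correction or derivation can be sketched in a few lines of Python. In this hypothetical example, every version of a data set records the version it was derived from and what was changed, so that the path back to the source can be retraced. The class, function, and identifiers are assumptions made for illustration and do not describe the procedures of CDIAC, NOAA, or the Sahel study.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Version:
    """One documented version of a data set (hypothetical structure)."""
    version_id: str
    parent_id: Optional[str]  # None marks the authoritative source
    change: str               # what was done to the parent version
    changed_by: str


def trace_to_source(version_id, registry):
    """Walk from a derived version back to the source, newest first."""
    path = []
    current = version_id
    while current is not None:
        version = registry[current]
        path.append(version)
        current = version.parent_id
    return path


registry = {
    "source": Version("source", None, "original submission", "data center"),
    "corrected": Version("corrected", "source",
                         "removed two faulty station records", "analyst A"),
    "summary": Version("summary", "corrected",
                       "aggregated to monthly means", "analyst B"),
}

for step in trace_to_source("summary", registry):
    print(step.version_id, "<-", step.change)
```

With such a record, a user who finds the monthly summary unsuitable can return to the corrected version as an intermediate point rather than starting again from the source.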

Global change research can be an extremely fluid activity. Scientists cannot always define exactly what data they are interested in, how they will interface them, or how they wish to analyze them. Even relatively well-defined programs involve a large component of exploratory data analysis. Researchers experiment with data, mixing and matching different data types to explore a variety of kinds of relationships among data and underlying processes. In addition, the results of one particular line of investigation can lead to another that was not in the original program plan (see Box 8.4). As a result, data interfacing systems and procedures must be exceptionally flexible and responsive to ad hoc retrieval requests, while data formats and transfer mechanisms must be standardized or otherwise made easily convertible (see the subsection "Interoperability" below).

Data interfacing systems and procedures must be adaptable as well over the somewhat longer term. The data, analytical methods, models, motivating questions, and related hardware and software all are changing and evolving rapidly. The committee heard unequivocal statements from participants in every case study that a critically important condition for successful data management and data integration is the direct and continuing involvement of the scientific end users. Ensuring such involvement has been a consistent recommendation as well of recent reports on data management aspects of global change research (e.g., NRC, 1991; OSTP, 1991; CEES, 1992).

The committee urges project scientists and data managers to adopt the view that one of their primary responsibilities is the creation of long-lasting data and information resources for the broad research community. Data management systems and practices, particularly the development of metadata, should be designed to balance the needs of this larger user community with those of project scientists.

BOX 8.4 Users' Needs Can Change Unpredictably

The FIFE study provides a good example of how users, their scientific interests, and their needs for data all shift over time in ways that cannot necessarily be predicted. The overall study was based on a conceptual framework that guided the data collection and helped organize how the data would be used in atmospheric circulation models. However, FIFE was designed as an active experiment. It was not a well-defined monitoring program or a platform with a limited set of instruments. Investigators proposed measurements that had not been done before, built new instruments and modified existing ones, and undertook novel data collection procedures and protocols. When "quick-look" analyses identified aspects of the program that were not working, these were modified. The actual relationships among the measured variables, and how these were affected by sensor characteristics and radiative transfer processes in the vegetation canopy and in the atmosphere, were unknown at the beginning of the program. These became clear only over time as investigators began analyzing their data.

As analyses progressed, investigators' interest in and need for data from other aspects of the study increased. In addition, the investigators themselves changed throughout the course of the experiment as graduate students and postdoctoral researchers moved on and principal investigators changed institutions.

The need for certain data products, such as satellite image data processed to uniform formats, was anticipated in the planning. However, some anticipated needs never materialized. It was expected that many investigators would request AVHRR-GAC (Global Area Coverage at 4- to 8-km resolution) data, and substantial effort was expended to develop such data. However, the subsequent availability of an equivalent AVHRR-LAC (Local Area Coverage) data set at higher, 1-km, resolution absorbed all the anticipated demand. To date, there has not been one request for the GAC data set.

The FIFE data also have been used in many ways not originally conceived. For example, they have been used as a source of test data for developing satellite processing algorithms. They also have been proposed as a test data set for producing data compression algorithms and data visualization tools.

ADDRESSING BARRIERS DERIVING FROM ORGANIZATIONAL INTERACTIONS

Because few existing organizational arrangements reflect the multidisciplinary perspective that motivates the interfacing of geophysical and ecological data, any such interfacing will necessarily have to be implemented across a range of organizations. This in turn will involve making adjustments or accommodations to those aspects of organizations that affect the behavior of individuals, groups, and agencies. These include such things as management structures, organizational history and memory, norms of acceptable behavior, rewards and sanctions (both implicit and explicit), agency missions, and budgets. The committee heard in every case study that such organizational interactions were central to the success or failure of data management and data interfacing efforts. As a result, the committee concludes that organizational and technical considerations interact strongly and should be given equal weight in the design and development of data interfacing systems.

Rewards, Priorities, and Ingrained Attitudes

The reward system among scientists in universities is based largely on the publication of peer-reviewed papers. Within this overall setting, the geophysical sciences have a longer and more established tradition of studies with multiple investigators and complex data sets. In contrast, the ecological sciences are much more oriented toward studies with a single principal investigator. For example, in tenure decisions, many academic ecology departments give much greater weight to sole-author and first-author publications than to those with larger numbers of authors. While the size of the research group thus varies from field to field, the chief priority remains to publish original research in the peer-reviewed literature. Therefore the primary objective of most scientists is to gather, process, analyze, and publish "their" data; only then do they respond to outside demands. But successful data interfacing requires that scientists participate more fully in outside activities (e.g., preparation of documentation, exhaustive quality control, and data submissions to outside agencies) for which they are not directly rewarded.

An analogous reward system influences the behavior of scientists and managers in agencies. Here, rewards stem from furthering the agency mission, as well as from publishing. In most cases, agency missions, and those of the departments or divisions within them, have been narrowly defined to focus on a particular activity, resource, or problem. This approach reflects the classic bureaucratic organizational model in which problems are addressed by compartmentalization, division of labor, and well-defined procedures (Parsons, 1947). In most cases, the additional activities needed to support data interfacing (e.g., preparing documentation, responding to data requests, participating in standardization efforts) do not fit within traditional agency missions. Not only are staff typically not rewarded for such activities, in some circumstances they may be chastised for threatening an agency's highly prized autonomy. Thus, data interfacing efforts must overcome various versions of the "It's not in my job description" problem.

At an extreme, such inappropriate reward systems and the mindset they engender can result in the partial or complete failure of data interfacing efforts. For example, the documentation submitted to the Information System staff in the FIFE study was of variable quality, much of it insufficient to enable other researchers to make use of the data. Some data sets were fully described, but many others were accompanied only by a list of files, variables, and formats. The Information System staff found that many investigators were reluctant to devote the time and effort needed to prepare or review documentation, even when draft documents were prepared by the Information System staff. While most of these problems were overcome by persistence, the inability to develop complete documentation rendered two data sets—micrometeorological data from the Army Corps of Engineers and GOES data processed by Scripps Institution of Oceanography—essentially inaccessible and unusable. Because of problems such as these, the FIFE Information System staff identified the preparation of documentation as the single most demanding and frustrating element of their data interfacing experience.

Overcoming these obstacles sometimes requires accommodating or mimicking the existing reward system. For example, CDIAC often negotiates with data sources to allow them time to analyze their data and publish their results before submitting data for wider distribution. Consequently, the Center sometimes waits for up to 2 years to receive targeted data sets. By listing data sources as primary authors of data packages once they are published and encouraging users of the data to cite data sources in their publications, the Center tries to use the academic reward system as a motivation for data sources to participate actively in the preparation of the data packages. Whatever the means used, successful data interfacing depends on surmounting the ingrained mindsets and priorities fostered in research scientists by the existing organizational reward system.

The committee recommends that professional societies, research institutions, and funding and management agencies reevaluate their reward systems in order to give deserved peer recognition to scientists and data managers for their contributions to interdisciplinary research. Granting and funding agencies, as well as program managers and university administrators, should provide tangible incentives to motivate scientists to participate actively in data management and data interfacing activities. Such incentives should extend to favorable consideration of those activities in performance review, including treating the production of value-added data sets as analogous to scientific publications.

Also, because organizational missions and reward systems inherently reflect a larger policy context, relevant policy issues should be included in the planning for interdisciplinary research. This should be accomplished in part through open communication between project scientists and appropriate policymakers that continues throughout the life of the project. Such communication will help provide a basis for developing cooperative arrangements between collaborating institutions that will provide strong incentives for and reduce barriers to sharing data.

In addition to these academic and organizational reward systems, barriers to data interfacing stem from ingrained attitudes about the relative professional status of different groups. In every case study the committee found that interfacing geophysical and ecological data typically required information management skills that were beyond the capabilities of the average scientist. The lack of these skills is particularly acute in the ecological sciences, where, because studies are generally finer-scale and shorter-term, scientists do not need to confront complex data management issues. Ecologists are more likely to use software packages that combine statistical analysis capabilities with rudimentary data management features. These packages do not typically have the ability to handle complex relational databases or geographically based data, and, if they do, ecologists are less likely than geophysicists to use them. Relatively straightforward data management approaches are usually sufficient for the characteristic ecological study, where data volumes rarely exceed a few hundred megabytes, but not for the gigabyte- and terabyte-sized data sets typical of geophysical studies.

Despite the geophysical science community's greater familiarity with sophisticated hardware and software, however, both communities are relatively unschooled in the information management concepts and system design skills required for successful data interfacing. As a result, the most successful data interfacing efforts in the case studies were those where information management professionals were an integral part of the research team (Kanciruk and Farrell, 1989). Involving such individuals in the effort in turn required surmounting subtle ingrained attitudes that can frustrate productive cooperation between geophysicists and ecologists on the one hand, and information management specialists on the other. Each group is proficient in its own field and relatively naive in the other's area of expertise. All too often, therefore, neither group affords the other the status of equals. There is a tendency among scientists to view information management staff as "technicians" fulfilling a support function that does not warrant equivalent status. Conversely, there is an inclination among information management specialists to view scientists as merely "users," a term that can have pejorative connotations. Successful broad-scale interfacing efforts require strategies that break down such ingrained attitudes and equalize the perceived status between the two groups.

In the NAPAP study, conflicts developed between the data managers and the scientific team. On the one hand, the scientific team felt that the data management team should provide a workable database from which the scientific team could do the actual data analysis and interpretation. On the other hand, the data management team considered themselves scientists as well as data managers and wanted the opportunity to interpret the data also. In the best of all worlds, the data management team and the scientific team should be combined to constitute a single team that can and should jointly prepare and publish interpretative reports.

The committee recommends that in order to help ameliorate some of these difficulties, research universities include courses in their curricula that provide environmental scientists with an in-depth understanding of the rationale for and principles of sound data management. Program managers and data managers, in their interactions with and training of environmental scientists, should emphasize how state-of-the-practice data management can provide immediate and long-lasting benefits to scientists, particularly those engaged in interdisciplinary research. At the same time, data managers need to be a part of the conceptual team from the beginning of a project and have equal status with principal investigators.

Data Ownership and Cooperation

The reward systems and attitudes described above contribute to a distinct sense of possessiveness about data. This is especially acute prior to publication when the fear of being "scooped" is highest. As noted in the previous section, CDIAC's data sources are sometimes unwilling to submit data for wider distribution until they have first published. Existing funding mechanisms also contribute to this sense of ownership of individual data sets. Even in the FIFE study, which was intended from the outset to involve a large amount of data interfacing across studies and data types, contracts were awarded to individual investigators or groups whose proposals were selected. In the Long-Term Ecological Research (LTER) study, data sharing among scientists increased in direct proportion to the amount of use the principal investigators were making of other researchers' data. As a result, scientists have a built-in motivation to think of data as belonging primarily to their particular project and only secondarily to larger data interfacing efforts that cut across projects.

Analogous concerns about data ownership can be even more intractable at the level of agencies or organizations. Here, data are usually viewed as an important organizational or economic resource, and efforts to make them more widely available to "outsiders" can be viewed with suspicion. In some cases, this suspicion stems from legitimate concerns that sensitive information will be misinterpreted or misused, and in others from a fear of being made to look bad. In other instances, organizations request payment or a fee as a return on the investment needed to gather the data in the first place. This request is often made for geophysical data that might be useful for such purposes as mineral prospecting or crop management. There also may be legal or ethical constraints on making data available when they contain proprietary or privileged information, or where their release could violate privacy rights.

The issue of data ownership arose in every case study the committee examined. Data interfacing succeeded only when explicit steps were taken to develop organizational mechanisms that produced countervailing motivations. For instance, both the California Cooperative Oceanic Fisheries Investigation (CalCOFI) and the FIFE programs were designed so that individual scientists need to have access to other kinds of data in order to address the program's core questions. However, data ownership problems cannot always be resolved in favor of complete openness and access to data. In the case of CDIAC's global inventory of carbon dioxide emissions, the Center had to accommodate certain politically motivated restrictions on the ways in which country-by-country population data could be reported.

Considerations of institutional ownership also can prevent data integration. For example, NAPAP relied on historical fisheries data from state agencies and found some cases in which well-documented and well-managed data sets were restricted from outside use.

The committee recommends that in order to encourage interdisciplinary research and to make data available as quickly as possible to all researchers, specific guidelines be established for when and under what conditions data will be made available to users other than those who collected them. Such guidelines are particularly important when data collectors, data managers, and other users are in different organizations. In addition, adequate rewards should be established by the funders of research and publishers to motivate principal investigators to place all data in the public domain.

Managing Organizational Change

Responses to these kinds of organizational barriers, as well as to those deriving from the data, from users' needs, and from system considerations, will necessarily be implemented by managers of and participants in data interfacing efforts. To succeed, they will need the ability to achieve three kinds of changes in existing organizational interactions. First, they must establish relationships and operational procedures across as opposed to strictly within organizations. Second, they must establish reward structures and other mechanisms to induce organizations and the individuals within them to change their behavior. Third, they must establish new functions that support data interfacing, but that are currently beyond the responsibilities of any individual organization.

These changes are analogous to those being implemented across a wide range of industries in the private sector. In these instances, managers are attempting to integrate previously separate activities or functions in order to achieve greater quality, productivity, and flexibility. Both the process of organizational change in industry and its substance are similar to the organizational changes required in data interfacing. A great deal of the learning taking place in these private sector organizations is thus directly relevant to the data interfacing context. There is a large and growing literature, from both corporate and academic perspectives, that presents and analyzes this experience. A thorough review is beyond the scope of this report, but managers of data interfacing efforts would benefit from reading some or all of the following: Davenport (1993), Freudenberg (1992), Katzenbach and Smith (1993), Lincoln (1985), Reason (1990), and Weick (1985).

As might be expected, given the wide range of organizational sizes, structures, histories, and cultures, there is no single formula for successfully resolving the challenges deriving from organizational interactions related to data interfacing. The particulars of successful solutions depend on the context of individual situations. However, several rules of thumb can be inferred from past experience, both within the case studies and in industry at large. Successful management of data interfacing efforts must be based on collaboration and flexibility, and specific attention must be paid to reward structures and motivation. In addition, management must foster productive teamwork between geophysical and ecological scientists, as well as between scientists and information management specialists.

The committee recommends that in the planning of any interdisciplinary research program, as much consideration be given to organizational and institutional issues as to technical issues. Every effort should be made to minimize the likelihood of misunderstanding, conflicts, and rivalries by establishing interorganizational relationships and procedures, creating effective reward structures, and creating new functions that explicitly support data interfacing.

The committee also recommends that the agencies involved in supporting and carrying out interdisciplinary research investigate the possibility of establishing one or more ecosystem data and information analysis centers to facilitate the exchange of data and access to data, help improve and maintain the quality of valuable data sets, and provide value-added services. A model for such a center is the Carbon Dioxide Information Analysis Center (CDIAC) at Oak Ridge National Laboratory. In addition, it would be wise to look closely at the potential synergism between any new ecosystem data and information analysis center and all other existing environmental data centers.

ADDRESSING BARRIERS DERIVING FROM INFORMATION SYSTEM CONSIDERATIONS

Actual interfacing activities will be carried out primarily by means of computerized hardware and software systems, and interfacing capabilities will depend in large part on their characteristics. The range of challenges deriving from users' needs, from the organizations in which they work, and from the data themselves complicates the design, implementation, operation, and maintenance of such systems.

The wide diversity of studies, data types, and researchers is matched by a similar diversity of preferred hardware and software. It is unrealistic to expect that the research community at large, or even a sizable segment of it, could be required or persuaded to use common hardware and software. This inescapable variety is compounded by the rapid rate at which hardware, software, and system concepts are evolving and the fact that they are unlikely to stabilize soon. In this environment of rapidly shifting requirements, preferences, and capabilities, interfacing approaches, and the tools that implement them, should ideally be as independent as possible of specific platforms or systems. At present, however, the lack of true interoperability among different hardware and software components makes it impossible to completely achieve this ideal.

The demands of interfacing will stretch current concepts of system design and development. They will do this in at least four specific ways. First, systems must be developed in the absence of clear-cut, detailed, and stable users' requirements. Second, systems must more fully achieve the kind of interoperability that will give users flexible access to widely dispersed data. Third, metadata must efficiently describe the location and characteristics of a proliferating number of ever-larger data sets. This requires that metadata become more closely integrated with the data they describe. Fourth, users must have the ability to backtrack along the data path when derived data do not meet the needs of a particular interfacing scenario. All four of these challenges, described more fully below, are compounded by the massive amounts of data involved in global change and other complex environmental research programs.

Fuzzy and Shifting Requirements

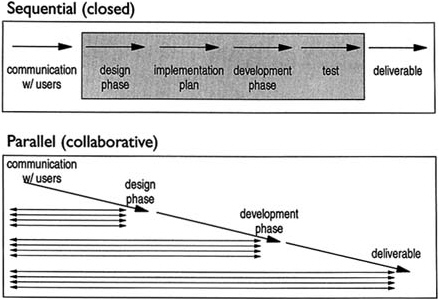

Some aspects of users' needs in global change research help to create exceptional challenges for system designers. In a typical system design scenario, clear definitions of users' requirements provide the basis for the detailed design of the hardware/software system. However, scientists engaged in interdisciplinary environmental research cannot specifically define all the kinds of interfacing they might wish to do in the present, nor how these desires might change in the future. Thus, while users are usually able to define a broad vision (e.g., maximize accessibility, flexibility, and adaptability), they cannot specify detailed and concrete requirements for interfacing (e.g., take exactly these data and do precisely these, and only these, operations on them). The research setting is thus fundamentally different from the setting of many large business systems (e.g., airline reservations and automated teller machine networks) for which requirements can be completely specified. In this kind of environment, as exemplified in the FIFE study described above in Box 8.4, users and information management specialists must interact closely and more continuously in order to respond to shifting needs (Figure 8.2) (Desmedt, 1994a,b).

Incompletely defined and constantly changing users' requirements mean that systems that support interfacing must be designed for flexibility in the present and adaptability in the future. Because such systems can never be actually finished, a fundamental shift in mindset is required on the part of system designers. In fact, striving to freeze users' requirements in order to deliver a stable, completed system can be counterproductive. For example, initial data management and interfacing efforts in the FIFE program were based on a traditional engineering approach in which system designers who lacked the requisite scientific expertise gathered input from users, established a set of users' requirements, and then attempted to develop and deliver a completed system without further interaction with the intended users. Not surprisingly, this design approach failed and had to be replaced with one based more directly on active and ongoing interaction between the information management staff and the research scientists.

FIGURE 8.2 Schematic representation of the difference between classical sequential system design and a more flexible collaborative approach. In the sequential design, information management specialists interact with users only at the beginning and end of the process. In the collaborative process, users and information management specialists work in parallel to contribute their knowledge and insight to the design as it develops (adapted from Strebel et al., 1990).

In the Aquatic Processes and Effects portion of NAPAP, atmospheric and geochemical data collection and management activities were well-defined, well-focused, and consistent through the duration of the project. Thus, data integration went smoothly based on watershed models, and well-documented databases were made available to a wide user population in a timely fashion. In contrast, the technical scope for fisheries assessment was not clearly defined in the early stages of program development and was inadequately represented in new field studies later on in the project. Uncertain and shifting requirements with regard to biological data led to an overreliance on poorly documented historical data, often collected and managed by state agencies. As a result, data integration was difficult and compromised technical rigor in assessing acid rain impacts on fish populations. Also, efforts to maintain and disseminate an integrated fisheries database were abandoned.

The committee recommends that hardware/software system development efforts be based on a model that includes ongoing interaction with users as an integral part of the design process. In addition, system designers should work from the assumption that systems will never be finished, but will continue to evolve along with the data collected and users' needs. Designers therefore should use, to the greatest extent possible, modern database development approaches such as rapid prototyping, modular systems design, and object-oriented programming, which enhance system adaptability.

Interoperability

Poorly defined and changing requirements, along with many of the other challenges to data interfacing described above, could readily be addressed by true interoperability. Interoperability, as defined here, is the ability to readily connect different databases on separate hardware/software systems and perform data retrieval, analyses, and other applications without regard to the boundaries between the systems. Such seamless interoperability is the basis for the concept of "virtual databases" in which users have access to a wide variety of data, regardless of their location (NRC, 1991).

Despite progress toward this goal, anyone who has attempted to functionally connect different hardware and software knows that this can be tremendously demanding, complex, and frustrating. In general, these difficulties derive from two sources. First are hardware- and software-specific differences in matters such as digital communication protocols and ways of structuring and indexing databases. Surmounting these problems requires the development of common digital interface standards, either by the user community or through cooperative efforts of hardware and software vendors. In either case, the task can be arduous and time-consuming. Second are the semantic differences between data from disparate databases. These differences arise from the data themselves, as discussed earlier in this chapter, and include, for example, incompatible scientific naming conventions and fundamental differences in spatial and temporal scale. This second kind of barrier to interoperability can be overcome only by standardization of the key parameters that allow linkages to be created among different data sources. In the FIFE study, for example, the wide assortment of methods for recording time of sampling made it difficult to interface measurements taken at the same time.
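The timing problem noted for FIFE suggests one concrete form of standardization: converting every recorded sampling time to a single common reference before measurements are matched. The Python sketch below is illustrative only. The three input conventions, the function names, and the sample values are assumptions chosen for the example; they are not the formats actually used in FIFE.

```python
from datetime import datetime, timedelta, timezone


def from_local_clock(text: str, utc_offset_hours: float) -> datetime:
    """Hypothetical convention 1: local time 'YYYY-MM-DD HH:MM' with a known UTC offset."""
    local = datetime.strptime(text, "%Y-%m-%d %H:%M")
    zone = timezone(timedelta(hours=utc_offset_hours))
    return local.replace(tzinfo=zone).astimezone(timezone.utc)


def from_day_of_year(year: int, day_of_year: int, hhmm: str) -> datetime:
    """Hypothetical convention 2: year, day of year (1-366), and 'HHMM' already in UTC."""
    start = datetime(year, 1, 1, tzinfo=timezone.utc)
    return start + timedelta(
        days=day_of_year - 1, hours=int(hhmm[:2]), minutes=int(hhmm[2:])
    )


def from_decimal_day(year: int, decimal_day: float) -> datetime:
    """Hypothetical convention 3: decimal day of year in UTC (1.0 is 00:00 on 1 January)."""
    start = datetime(year, 1, 1, tzinfo=timezone.utc)
    return start + timedelta(days=decimal_day - 1.0)


# Three records of the same instant, written under the three conventions.
a = from_local_clock("1987-06-06 07:30", utc_offset_hours=-5)
b = from_day_of_year(1987, 157, "1230")
c = from_decimal_day(1987, 157.520833)

# Once all times share one reference, matching becomes a simple comparison.
tolerance = timedelta(seconds=1)
same_instant = abs(a - b) <= tolerance and abs(c - b) <= tolerance
```

The design choice here is to normalize at the point of ingestion, so that every later comparison works on one representation and the original recording convention needs to be understood only once.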

These two barriers to interoperability must be approached and overcome in the context of a third and related barrier. This is the problem of providing users with flexible access to a diversity of data sources that will be subdivided and recombined in a variety of ways that cannot always be predicted. Although it is advancing rapidly, current technology cannot yet provide users with the flexible access to, retrieval from, and combination of data from widespread sources that they desire.

The committee recommends that program managers, project scientists, and data managers review the interoperability of their hardware, software, and data management technologies to facilitate locating, retrieving, and working with data across several disciplines. However, this effort should be accompanied by parallel attempts to resolve inherent incompatibilities among data types that can thwart interfacing even when state-of-the-art hardware and software systems are seamlessly connected.

Complex Metadata

Many discussions of the data management issues related to global change research have emphasized the importance of accurate and complete metadata (e.g., NRC, 1991; OSTP, 1991; CEES, 1992). Box 8.5 expands on the committee's definition of metadata, which is provided in the Executive Summary. Practicing scientists understand that no data set is perfectly consistent, free of peculiarities, immune to errors, or necessarily suitable for all analytical approaches. They therefore depend on metadata to describe the data, suggest limitations on their use, and warn of potential pitfalls. Metadata become critically important in the interfacing context as scientists work with data that are outside their area of primary expertise and as they use data in ways not originally intended. At present, metadata are typically contained in a document separate from the data themselves. For example, CDIAC provides hard-copy metadata with each of its data packages, and the NASA Global Change Master Directory describes available data sets in on-line metadata. In addition, certain kinds of information about individual data points can be embedded in the data set as codes or flags that, for example, identify data points of questionable quality.

This approach has proved satisfactory for relatively small data sets. However, the committee found widespread concern among data management specialists that the proliferating and ever-larger data sets used in global change research would make this approach unworkable, especially when interfacing different data types. Three principal and related concerns were voiced. First, the volume of some kinds of metadata is likely to increase along with the size of the data sets they describe. This is particularly true of the portion of metadata that refers to the peculiarities of individual data points or groups of data points. It may be unrealistic to expect researchers to be able to effectively assimilate this amount of information. Second, while some of this more specific metadata can be encoded as fields or flags associated with the data themselves, these codes and flags will not be relevant to all interfacing situations. This is because a particular data characteristic will be a greater or a lesser problem depending on the circumstances (see Box 8.6). As a result, researchers who are interfacing large data sets cannot necessarily depend on embedded codes and flags to automatically subdivide, transform, or otherwise operate on the data. Thus, while researchers cannot necessarily depend on automation to solve this data management problem, neither can they be expected to exhaustively examine these detailed metadata manually to evaluate their relevance. Third, there is a large and ever-increasing number of data sets potentially useful in interfacing applications. For researchers to make effective use of this variety, they require a means of identifying, evaluating, accessing, and retrieving relevant data. Metadata play a key role in providing the information necessary for these steps. At present, some of this information is available through a combination of publications (e.g., newsletters and catalogs from CDIAC and the National Geophysical Data Center) and on-line data directories. These avenues are suited to providing summary descriptions of available data sets. However, it will be a challenge to furnish researchers with an efficient source of information about all available relevant data without requiring them to search numerous catalogs and directories.

BOX 8.5 Metadata: The Key to the Lock

Metadata document or describe all the facts, circumstances, and conditions associated with the actual data themselves. In most cases, metadata are the key needed for scientists other than the original investigator(s) to unlock the information contained in the data. This is because they provide insight into not only the raw characteristics of the data, but also constraints on their use and limits on their interpretation. Metadata are thus essential to the process of drawing scientifically defensible conclusions from the data.

The essential components of metadata differ from project to project and data type to data type. However, the key elements include at a minimum those listed here. In addition, the committee found that the most thorough and useful metadata result from the combined efforts of principal investigators, information management specialists, and other potential users not directly involved in collecting the data. Principal investigators are intimately familiar with the data and their quirks and peculiarities. Information management specialists are knowledgeable about ways in which the inherent structure of the data can affect their utility. Finally, other potential users will raise issues and propose applications of the data that would never have been thought of by the principal investigator.

Key elements of metadata should include detailed description of at least the following (adapted from CENR, in press):

BOX 8.6 Metadata in an Interdisciplinary Context

A chronic problem with metadata is the reluctance of researchers to allow their data sets to be freely used by others. Good metadata, of course, are designed to allow this very thing to happen. Therefore, some mechanism needs to be established that encourages the free sharing of data. The H.J. Andrews Experimental Forest LTER study provides an interesting example of how a mechanism for sharing data across discipline boundaries developed on its own. The project started out as a series of related, but independent studies. As the study progressed, however, individual researchers began to realize that they needed data from other projects carried out at the site. As their need for other data increased, the individual scientists began to recognize that they had to make an effort to allow their data to be used by, and made useful to, their associates. This led to greater emphasis on adequately documenting their data sets.
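The second concern raised above, that an embedded code or flag matters more in some interfacing situations than in others, can be made concrete with a small Python sketch. The flag codes, the `Reading` class, and the two example applications are hypothetical and are meant only to show why a single fixed filtering rule cannot serve every use.

```python
from dataclasses import dataclass


# Hypothetical flag codes attached to individual data points:
#   "E" = value was estimated, "C" = coarse spatial resolution, "G" = gap-filled
@dataclass(frozen=True)
class Reading:
    site: str
    value: float
    flags: frozenset


def usable(readings, disqualifying):
    """Keep readings that carry none of the flags that matter for this application."""
    return [r for r in readings if not (r.flags & disqualifying)]


readings = [
    Reading("A", 12.1, frozenset()),
    Reading("B", 11.8, frozenset({"E"})),
    Reading("C", 13.0, frozenset({"C"})),
    Reading("D", 12.4, frozenset({"G", "E"})),
]

# The same flags lead to different subsets depending on the question being asked.
for_trend_analysis = usable(readings, frozenset({"G"}))
for_fine_scale_mapping = usable(readings, frozenset({"C", "E"}))
```

In this sketch the choice of which flags disqualify a data point is supplied by the researcher for each application, which is precisely the judgment that embedded flags alone cannot automate.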

The traditional approach to metadata, in which they are considered as information separate from the data themselves, will not meet the challenges just described. The committee found agreement among a large segment of the data management specialists it consulted that a new conceptual model of metadata is required in which metadata are somehow integral to the data themselves. There was equally wide agreement, however, that no quick fixes to this problem are readily apparent.

The committee recommends that the production of detailed metadata be a mandatory requirement of every study whose data might be used for interdisciplinary research. Metadata should be treated with the seriousness of a peer-reviewed publication and should include, at a minimum, a description of the data themselves, the study design and data collection protocols, any quality control procedures, any preliminary processing, derivation, extrapolation, or estimation procedures, the use of professional judgment, quirks or peculiarities in the data, and an assessment of features of the data that would constrain their use for certain purposes.
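One way a data manager might put this minimum list into practice is to treat it as a checklist that every data set's documentation must satisfy. The Python sketch below is an assumption-laden illustration: the `MetadataRecord` class, its field names, and the sample entries are invented for the example and do not represent any existing metadata standard.

```python
from dataclasses import dataclass, fields


@dataclass
class MetadataRecord:
    """Hypothetical record with one free-text field per recommended element."""
    data_description: str = ""
    study_design_and_protocols: str = ""
    quality_control: str = ""
    processing_and_derivation: str = ""
    professional_judgment: str = ""
    known_peculiarities: str = ""
    use_constraints: str = ""


def missing_elements(record: MetadataRecord) -> list:
    """Return the names of recommended elements that are still empty."""
    return [f.name for f in fields(record) if not getattr(record, f.name).strip()]


draft = MetadataRecord(
    data_description="Daily rainfall totals from 12 gauges",
    quality_control="Range checks and duplicate removal",
)
still_needed = missing_elements(draft)
```

A check of this kind can confirm only that each element has been addressed. Whether the description is accurate and sufficient remains a matter for review by the investigators and other potential users.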

Retracing Data Paths

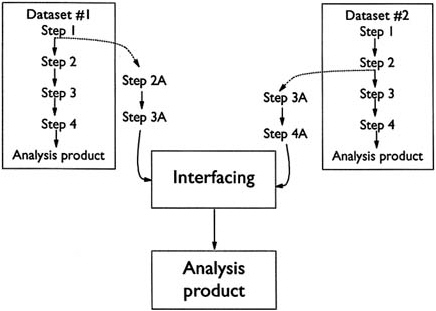

As described above, all data sets reflect a particular set of users' needs and perspectives, which are not necessarily applicable to all situations. Interfacing ecological and geophysical data can therefore require that they be reformatted, resummarized, reclassified, or otherwise adjusted. For example, in the Sahel study, remote sensing and ground-based precipitation data were combined to develop an overall picture of rainfall throughout the study area. In many cases, this can involve backtracking down the data path to an earlier version of the data and then proceeding from there along an alternate path (Figure 8.3). The ability to perform this kind of backtracking requires that detailed information be available about the prior processing steps that were used to create the data set(s) being retrieved for interfacing. Sometimes this can be accomplished with thorough metadata. However, when a large user community is simultaneously using, updating, and modifying a considerable number of data sets (as in the FIFE study), stand-alone documentation is not adequate.

FIGURE 8.3 Schematic representation of how data interfacing can involve data processing steps that would not be needed if data were being analyzed independently. For each unique data set, this different data processing can require back-tracking along the data to different points.

Instead, it is necessary to include this dynamic information in the database system itself by attaching it to each derived data set.

The committee recommends that metadata contain enough information to enable users who are not intimately familiar with the data to backtrack to earlier versions of the data so that they can perform their own processing or derivation as needed. Where stand-alone documentation is not adequate (for large and complex data sets or where multiple users are simultaneously updating and modifying data), data managers should investigate the feasibility of incorporating an audit trail into the data themselves.
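The idea of an audit trail carried with the data can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a description of any system reviewed by the committee: the `Version` class, its method names, and the sample rainfall values are all hypothetical. Each derived data set keeps a link to the version it came from, so a user can walk back along the data path and branch off in a new direction.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Version:
    """Hypothetical data set version that records how it was produced."""
    values: tuple
    step: str                           # description of the processing step
    parent: Optional["Version"] = None  # the version this one was derived from

    def derive(self, step, func):
        """Apply a processing function and attach the step to the new version."""
        return Version(tuple(func(self.values)), step, self)

    def history(self):
        """List processing steps from the original data to this version."""
        node, steps = self, []
        while node is not None:
            steps.append(node.step)
            node = node.parent
        return steps[::-1]

    def backtrack(self, step):
        """Return the earlier version produced by the named step."""
        node = self
        while node is not None:
            if node.step == step:
                return node
            node = node.parent
        raise KeyError(step)


raw = Version((0.0, 1.5, -9.9, 3.0), "raw")
cleaned = raw.derive("remove sentinel values", lambda v: [x for x in v if x >= 0])
total = cleaned.derive("sum", lambda v: [sum(v)])

# A second user needs the maximum rather than the sum, so backtracks and branches.
alternate = total.backtrack("remove sentinel values").derive("maximum", lambda v: [max(v)])
```

Because the trail travels with each derived version, the second user does not need separate documentation to discover which cleaning step preceded the summary.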

Long-term Archiving of Data

An important concern is the stewardship of data sets throughout their life cycle, which for global change data extends over a minimum of decades to centuries and does not necessarily end when the primary users believe they no longer need the data. The committee concludes that far too many environmental research projects give insufficient attention, in either the planning or the implementation stage, to the long-term archiving of their data sets. Data from studies that contribute significantly to our understanding of components and processes of the Earth system must be preserved and made accessible for future potential users of the data. There is a need to create a mindset within the research community that valuable data must have a long-term life that extends far beyond the publication of the principal investigator's analyses.

In this regard, the committee found that there are no well-established and widely accepted protocols to assist scientists in deciding which data should be archived, in what formats they should be stored, and where and how they should be archived to maximize access for potential users. Further, in several cases the committee found little attention given to the long-term maintenance of data sets once they were archived. It is important to note, however, that there do not appear to be any insurmountable technical barriers to keeping all data collected in research projects, even data-intensive ones that involve high-resolution imagery, because advances in data storage and retrieval capabilities have kept pace with the ever-growing volumes of data in all fields of science. Typically, the ensemble of all previous data in any scientific discipline is modest in volume compared with present and anticipated annual volumes. Therefore, the issue is not unmanageable volumes of data; rather, the challenge for long-term retention is maintaining the data sets in accessible, usable form over time.