Introduction

Humankind lives at the bottom of the sea of air, and climate change is perceived by us mainly as a change in the overall conditions of this sea's lower layers. The atmosphere lies at the heart of decade-to-century climate change: It filters the sun's rays as they reach the surface of the earth and as they are reflected again into outer space, and it is the principal medium of exchange of heat, water, trace-gases, and momentum between the other components of the climate system—oceans, land surface, snow, ice masses, and the biosphere. The atmosphere and ocean are intimately coupled within the climate system, and are governed by similar physical laws. But the atmosphere has been explored in greater detail—in terms of available observations and of existing models—than any other component of the climate system. Thus it is natural to review the results of this exploration first, as we begin our examination of climate variability.

Understanding of natural phenomena proceeds through a sequence of observations, experiments, and models. Given the complexity of the climate system, laboratory experiments can reproduce only very incompletely the system's major aspects, and have not been included in the present volume. Atmospheric observations have led, in past centuries, to very simple, purely descriptive models of atmospheric motions. In the second half of this century, advanced computer models of the atmosphere have simultaneously benefited from an increase in the number and quality of observations, and stimulated vast field programs designed to verify model results and yield the new details necessary for improving the models. The separate treatment of observations and models in this chapter and the next is, therefore, only a matter of expository convenience.

The oldest instrumental records of atmospheric temperature—and, to some extent, precipitation—extend about 300 years into the past. The coverage and density of these measurements have grown more or less continuously, with a dramatic increase occurring in the 1940s and 1950s. This permits us to make a fairly informed assessment of past interannual variability, but we have considerably less confidence about interdecadal changes and only little or indirect information on the century-to-century time scale.

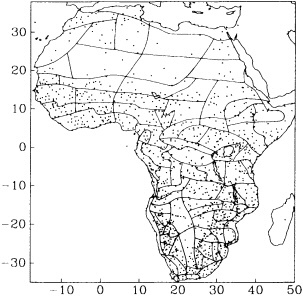

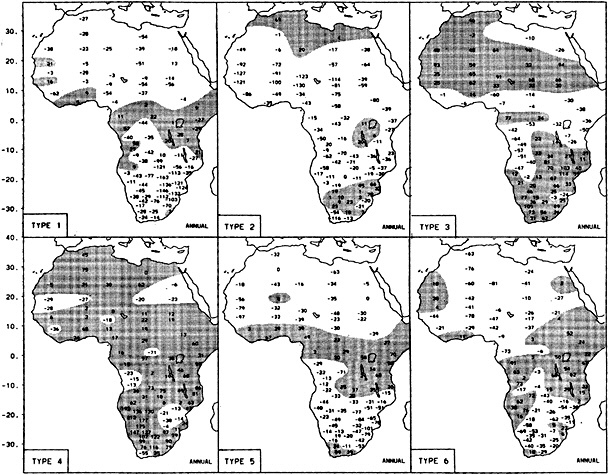

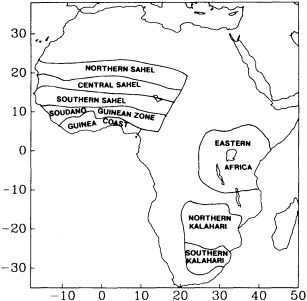

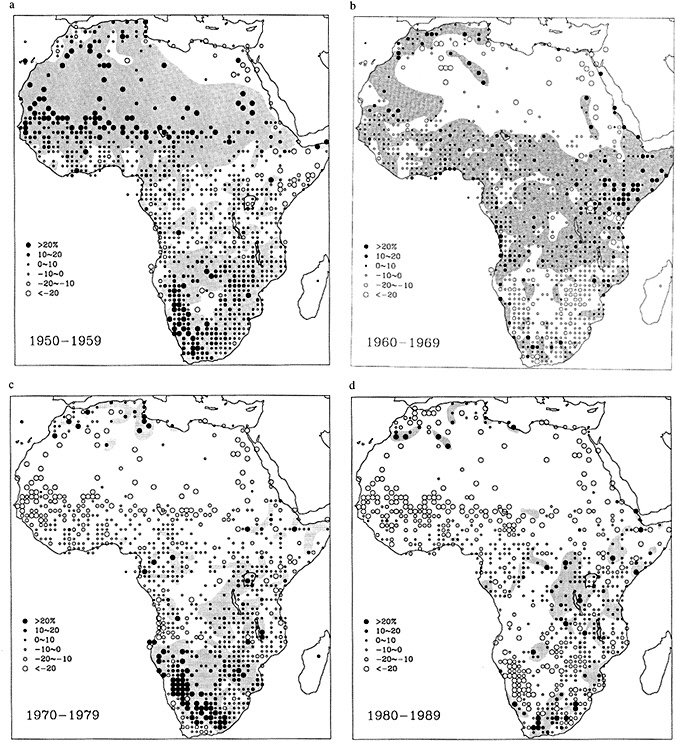

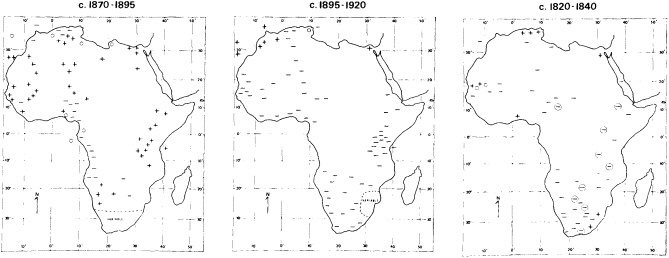

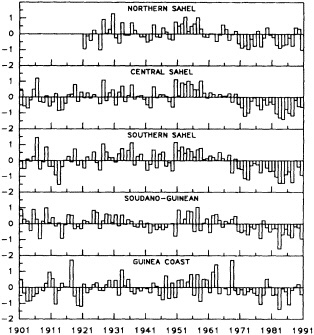

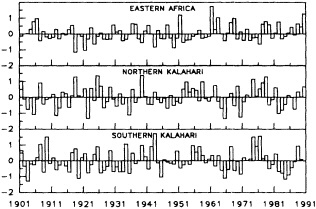

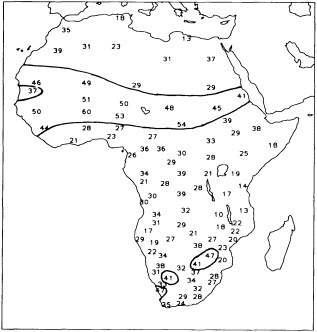

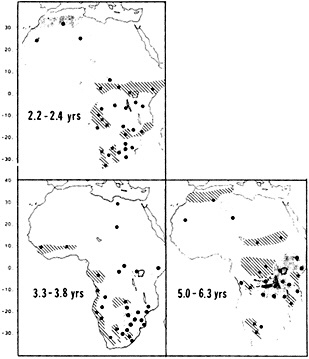

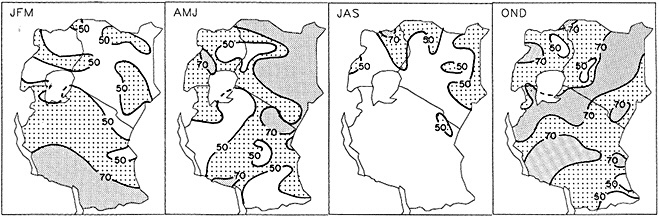

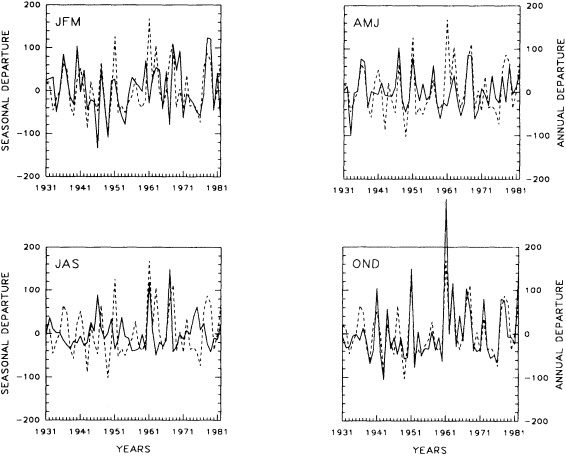

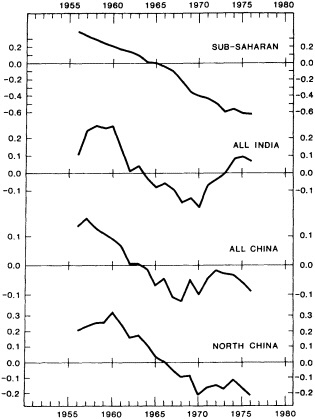

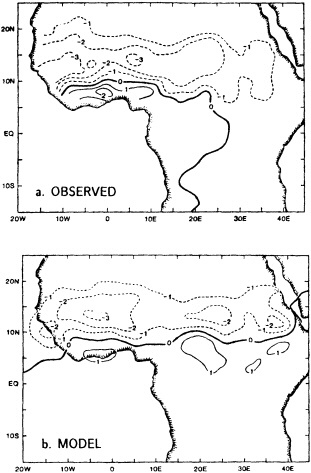

The Atmospheric Observations section below starts with a paper by H.F. Diaz and R.S. Bradley that addresses head-on the question of how different the climate of this century has been from those of previous ones. Proceeding from temperatures (which tend to be more uniform in space and time) to precipitation (which is considerably less so), S.E. Nicholson looks at the socioeconomically critical issue of African rainfall variability on interannual and decadal time scales. J. Shukla provides complementary insight on the initiation and persistence of drought in the Sahel.

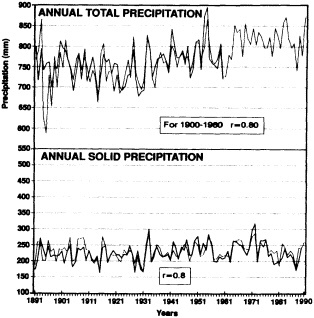

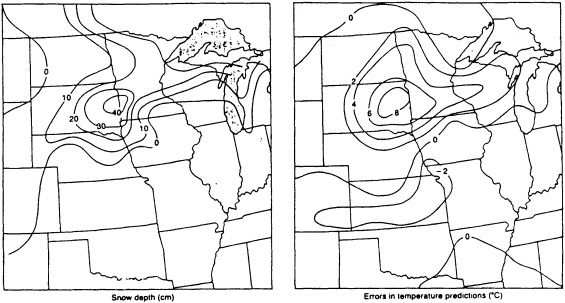

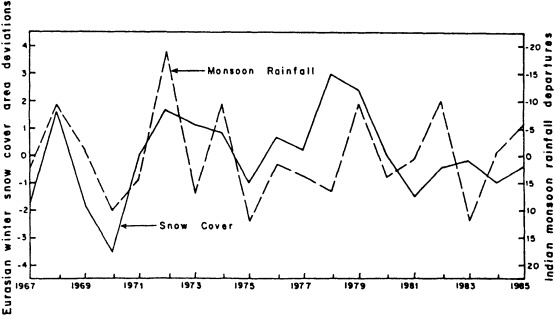

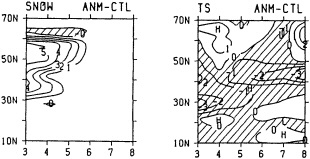

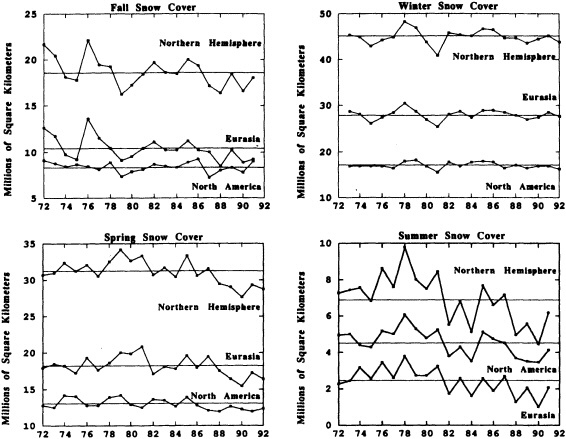

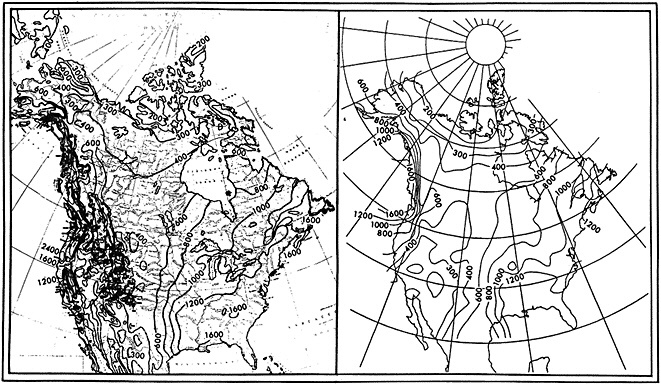

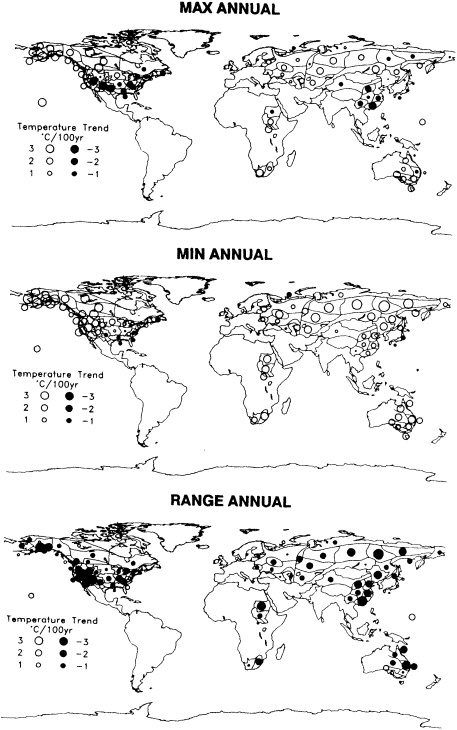

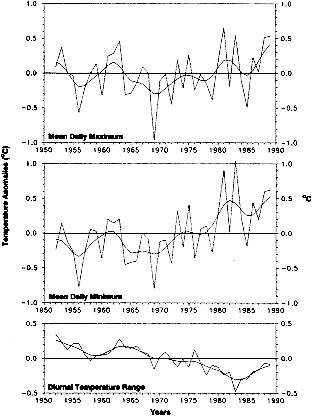

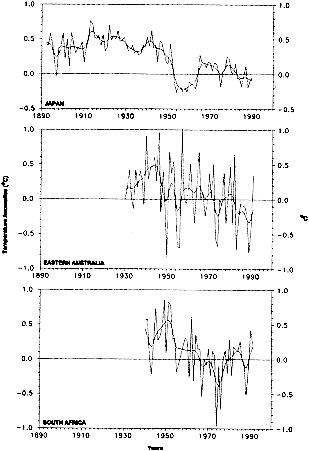

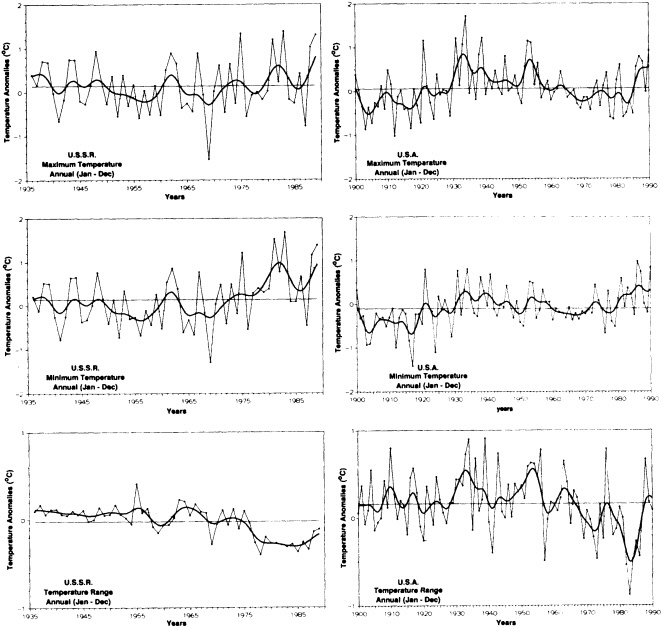

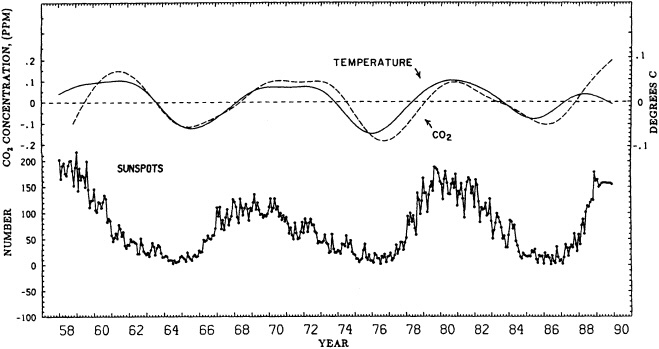

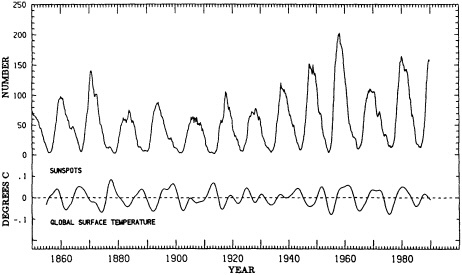

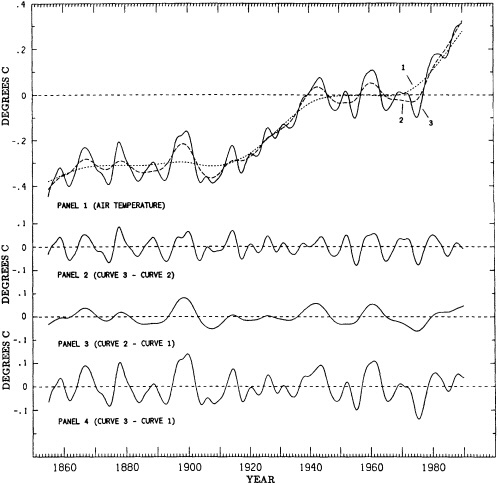

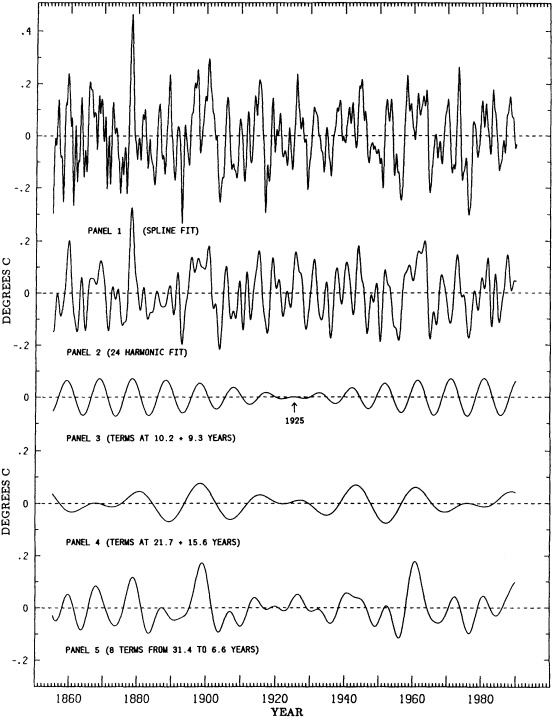

The role of snow cover in the radiation balance at the surface makes it an important player in climate variability; this role is discussed by J.E. Walsh. Variability and trends of both liquid and solid precipitation over North America are reviewed by P.Ya. Groisman and D.R. Easterling. Returning to temperature, a careful study of the difference between trends in daily temperature maxima and minima is presented by T.R. Karl and his colleagues. C.D. Keeling and T.P. Whorf then analyze the decadal oscillations in global temperatures and in atmospheric carbon dioxide. These oscillations are at the heart of understanding natural variability on this time scale; they are also covered later in this section in the essay introducing atmospheric modeling.

The Southern Hemisphere has less instrumental coverage, in space and time, than the Northern Hemisphere. While many of the earlier papers in the section concentrate on the latter, D.J. Karoly describes the observed variability in the atmospheric circulation south of the equator.

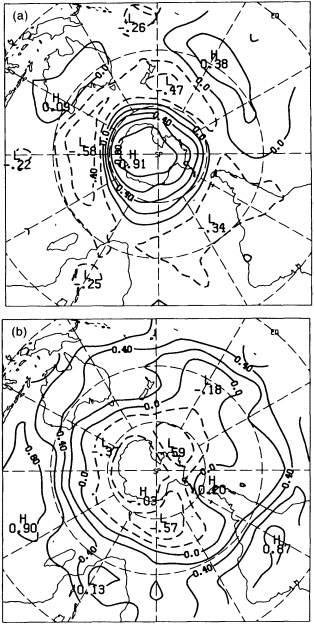

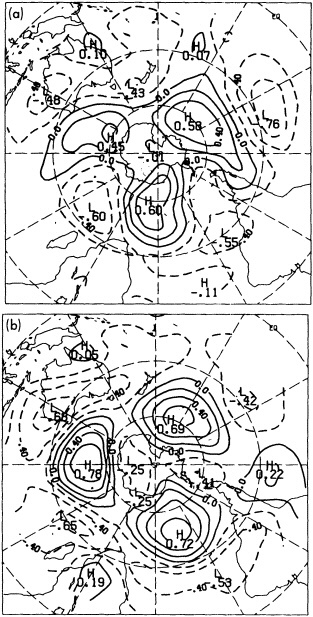

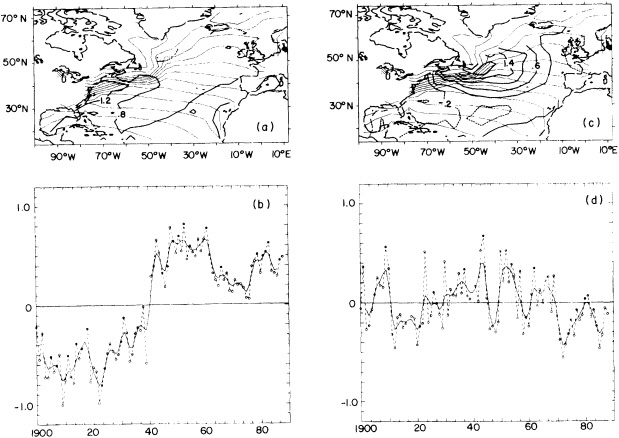

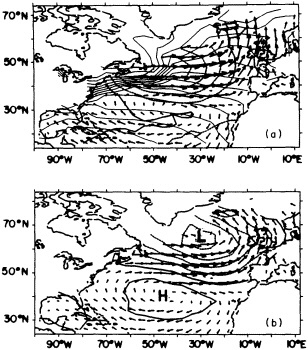

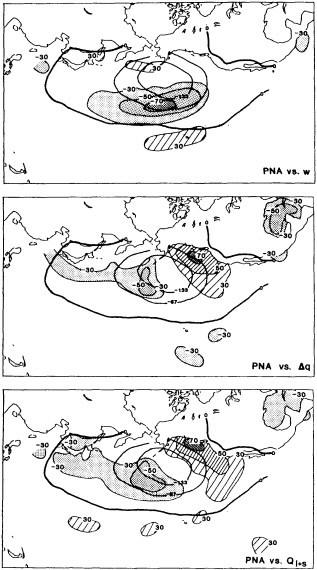

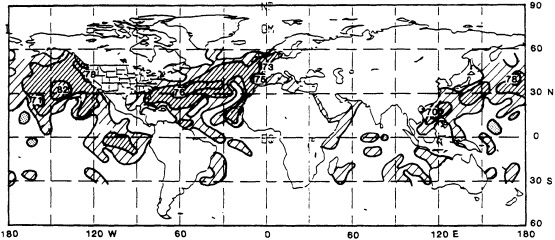

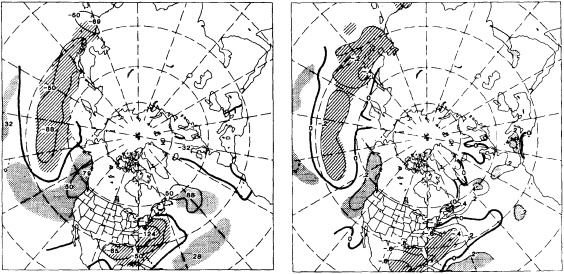

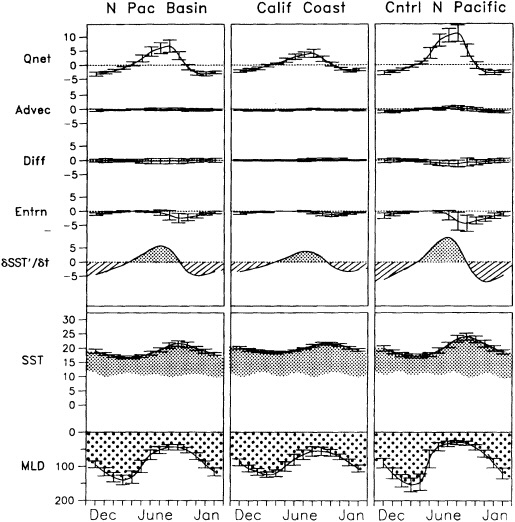

Atmosphere-ocean interaction is involved in the last two papers of this section. C. Deser and M.L. Blackmon review atmospheric climate variations at the surface of the North Atlantic, while D.R. Cayan and his associates study a general-circulation model simulation of the Pacific Ocean, driven by observed surface fluxes.

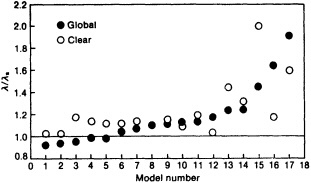

Atmospheric models have a relatively long tradition in the climate community, going back to the 1950s. They span a spectrum, from simple radiative-convective models in one vertical dimension, through energy-balance models in one and two horizontal dimensions, to fully three-dimensional general-circulation models. These models are of interest in their own right as important tools for investigating climate change, either by themselves or coupled to models of other climate subsystems. They also provide an instructive example of the creation of a full suite of models for intercomparison and validation; their development is only now being followed, more or less closely, by the modeling enterprise in oceanography, hydrology, and other disciplines contributing to the climate-change enterprise.

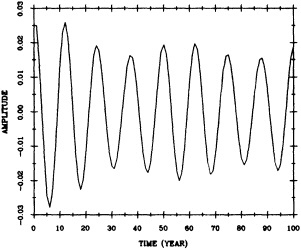

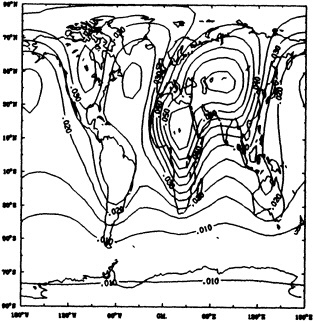

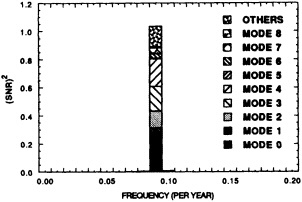

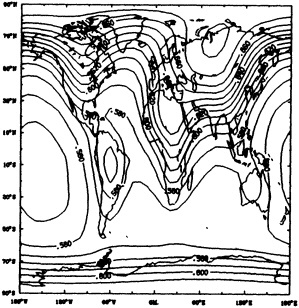

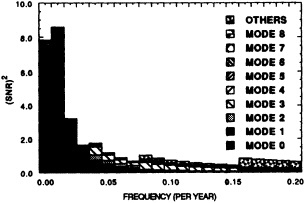

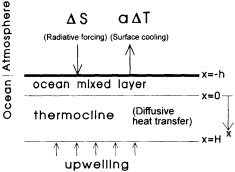

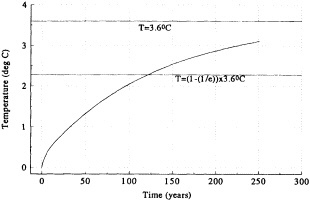

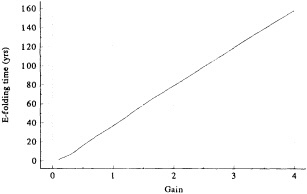

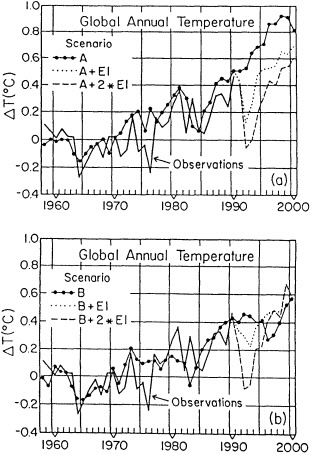

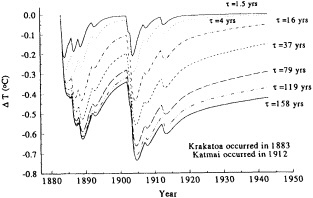

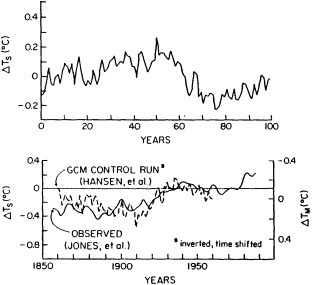

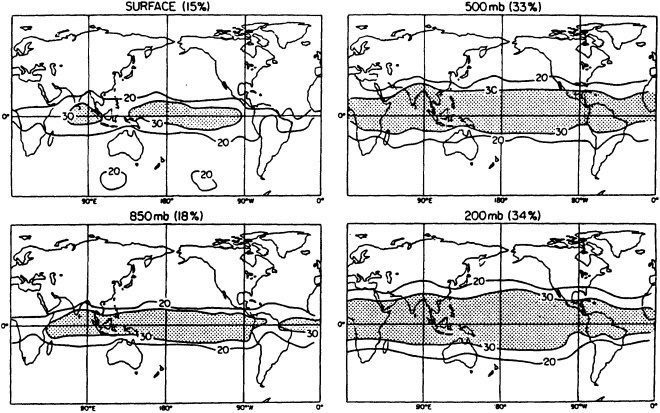

Modeling and observations are closely linked by the reciprocal problems of simulating the observed variability and validating the existing models. The Atmospheric Modeling section thus appropriately begins with T.M.L. Wigley and S.C.B. Raper's paper on modeling and interpreting paleoclimate data, with an emphasis on the greenhouse effect. G.R. North and K.-Y. Kim apply classic time-series analysis techniques to climate-signal detection. R.S. Lindzen then considers a few themes in these two papers from complementary viewpoints.

A number of causes for climate variability on time scales of decades to millennia are reviewed by D. Rind and J.T. Overpeck. They emphasize the modeling approach to a study of these causes, while J.M. Wallace addresses similar issues by analyzing the climate record.

The two sections of this chapter are introduced by essays, one by T.R. Karl and the other by M. Ghil. They provide an overview of the current state of the fields of atmospheric observations and atmospheric modeling, reviewing those topics not covered by the workshop and offering a perspective on the papers included. Following each paper, a commentary by the discussion leader and a condensation of the spirited discussion that took place at the workshop shed additional light on our current knowledge in both areas.

The ocean is examined in Chapter 3, and atmosphere-ocean interaction is explored further in Chapter 4. Also of interest are the proxy records of climate variability, such as tree rings and coral reefs, which are covered in Chapter 5. The sponsoring committee's conclusions, which draw on the material in this chapter and in the other three, appear in Chapter 6.

Atmospheric Observations

THOMAS R. KARL

INTRODUCTION

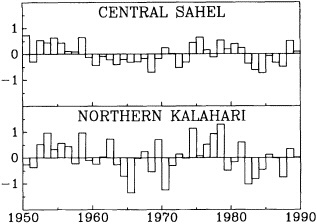

During the 1970s, the 1980s, and into the 1990s atmospheric scientists have accelerated research aimed toward identifying and explaining the presence of decade-to-century scale climate fluctuations. The motivation for special consideration of atmospheric climate fluctuations on these longer scales has its roots in the numerous major climate events whose occurrence is so well documented in the instrumental record. Probably the best-known of these fluctuations occurred about 1970, when rainfall suddenly decreased over the Sahel; the causes of this jump are discussed in this section by Nicholson (1995) and Shukla (1995). Other examples include the Dust Bowl years in North America during the 1930s, the diminished intensity of tropical storms affecting the East Coast of the United States during the 1960s, 1970s, and much of the 1980s, and the wet weather of the 1970s and 1980s over much of the United States.

As access to climate records has broadened during the past few decades, researchers such as Hurst (1957), Mandelbrot and Wallis (1969), Mitchell (1976), Douglas (1982), and Lorenz (1986) have presented evidence suggesting that the notion of a static climate is no longer tenable, even on less-than-geological time scales. We have come to realize that 30 years of data, the length of time that has been used to compute temperature and precipitation "normals" (Court, 1968), is inadequate to define climate. It does not provide us with sufficient information either to minimize adverse climate impacts within such sectors as energy, water supply, transportation, environmental quality, construction, agriculture, etc., or to maximize the availability of the climate-governed resources, such as water and energy, on which both natural and man-made systems depend.

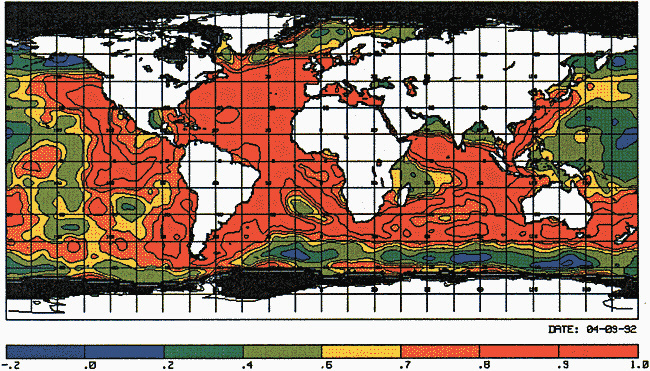

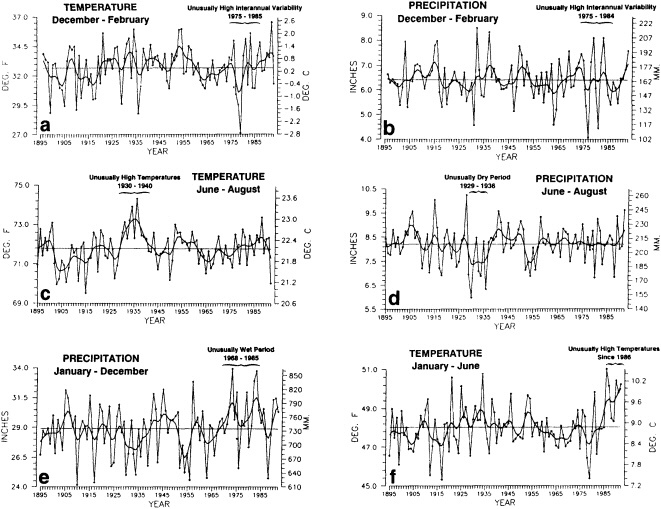

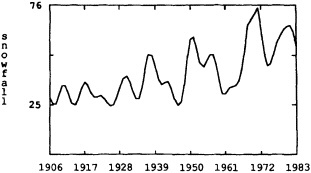

Several climate fluctuations that have affected the United States serve to illustrate this point. Beginning about 1975, the interannual variability of mean winter temperatures and total precipitation averaged across the contiguous United States substantially increased (Figures 1a and 1b); this increase persisted at least through 1985. In contrast, the interannual variability had been very low during the previous 20 years. The mean temperature increased dramatically over the United States during the 1930s, coincident with a large decrease in summer precipitation (Figures 1c and 1d), before returning to more typical conditions. The wetness of the 1970s and the first half of the 1980s, which is clearly evident in Figure 1e, resulted in record-setting high lake levels and caused considerable economic damage and human suffering (Changnon, 1987; Kay and Diaz, 1985). Over the past decade another climate fluctuation has been evident over the United States; as Figure 1f shows, temperatures increased in a jump-like fashion during the early 1980s. Interestingly, the temperature discontinuity that is apparent between January and June is not reflected by the temperatures during the second half of the year.

Due to the limited span of the instrumental climate record, evidence for climate fluctuations on decade-to-century time scales is biased toward higher frequencies. (The situation is much worse in the oceans; as Wunsch (1992) points out, the absence of comprehensive oceanographic data has led many oceanographers to focus on identi-

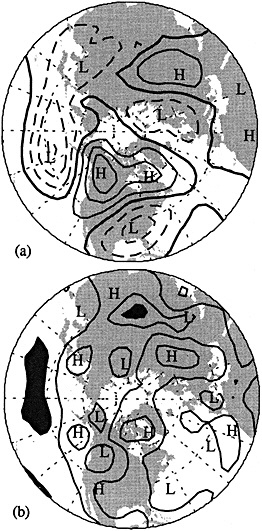

FIGURE 1

Time series of area-averaged seasonal and annual mean temperature and total precipitation for the contiguous United States. The smoothed curve is a nine-point binomial filter. The horizontal line reflects the mean over the period of record.

fying a mean climate state.) Nonetheless, there is evidence that climate fluctuations on longer time scales occur throughout the world (Jones and Briffa, 1995; Diaz and Bradley, 1995). As Karoly (1995) shows, there is a dearth of the data that would permit the identification of decadal-scale circulation variability in the Southern Hemisphere, but analysis of the longer-term surface data available suggests that significant climate fluctuations persist at least through decadal time scales.
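The nine-point binomial filter mentioned in the Figure 1 caption can be sketched as follows. This is a minimal illustration, not the procedure actually used to prepare the figure: the function names are hypothetical, and the treatment of the series ends (reflection about the end points) is an assumption, since the caption does not say how the ends were handled.

```python
from math import comb


def binomial_weights(n_points=9):
    """Return normalized binomial weights (for 9 points: 1, 8, 28, 56, 70, ... over 256)."""
    order = n_points - 1
    total = 2 ** order
    return [comb(order, k) / total for k in range(n_points)]


def binomial_smooth(series, n_points=9):
    """Smooth a sequence of values with a centered binomial filter.

    Assumption: values beyond either end are obtained by reflecting the
    series about its first or last point, so the output has the same length.
    """
    if n_points % 2 == 0:
        raise ValueError("n_points must be odd for a centered filter")
    half = n_points // 2
    n = len(series)
    if n <= half:
        raise ValueError("series is too short for this filter length")
    weights = binomial_weights(n_points)
    smoothed = []
    for i in range(n):
        acc = 0.0
        for k, w in enumerate(weights):
            j = i + k - half
            if j < 0:
                j = -j                 # reflect about the first point
            elif j >= n:
                j = 2 * (n - 1) - j    # reflect about the last point
            acc += w * series[j]
        smoothed.append(acc)
    return smoothed
```

Because the weights sum to one, a constant series is returned unchanged, while year-to-year fluctuations are strongly damped and decadal-scale features are retained.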

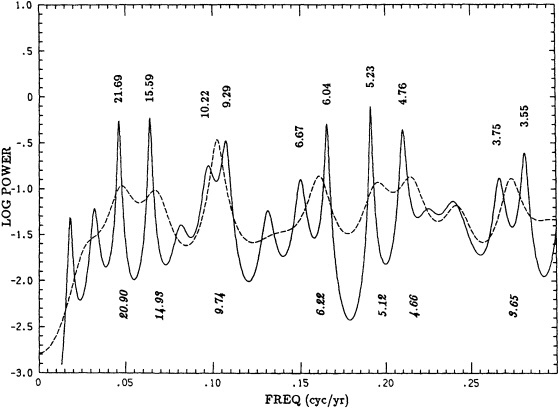

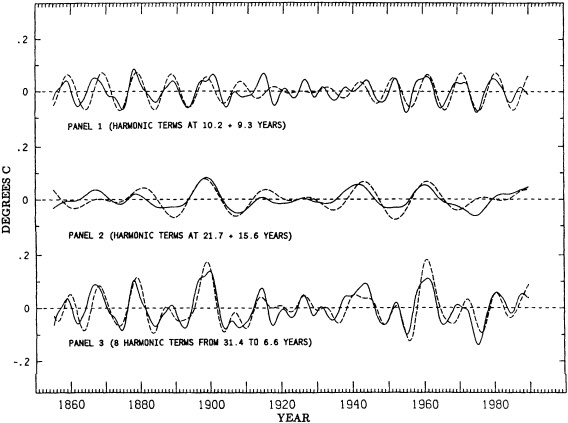

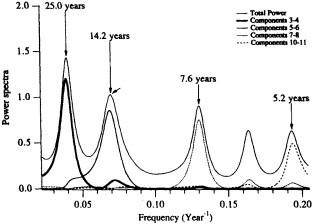

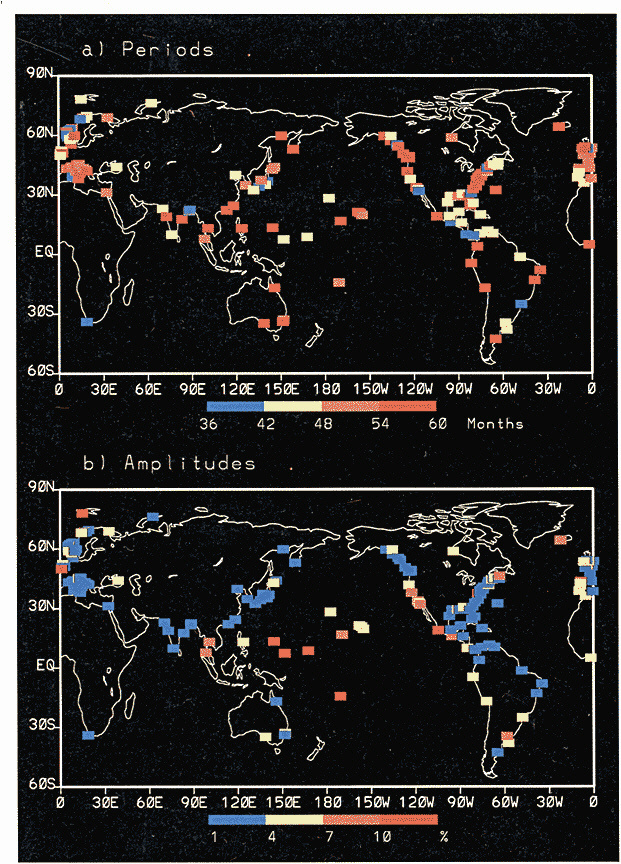

The forcing agents of many climate fluctuations may have their origin in either anthropogenic or natural factors, or both. These fluctuations are important in terms both of their socioeconomic and biophysical effects and of our need to distinguish between natural climate variations and anthropogenic climate changes. In analyzing the climate record, the dangers of "data dredging" must be kept in mind. As access to climate data increases, the chance of finding trends and variations that appear to be significant also increases. For this reason decade-to-century-scale climate fluctuations have been referred to as the "gray area of climate change" (Karl, 1988). For instance, Keeling and Whorf (1995) find evidence for decadal fluctuations in the global temperature record that can be reproduced by assuming the existence of two oscillations with small differences in frequency that beat on time scales of about 100 years. The search for an explanation of this statistical result is a good example of the challenge presented by the existence of these decadal fluctuations.
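Two of the points above lend themselves to simple arithmetic. The sketch below is illustrative only: it assumes independent, trend-free series for the data-dredging calculation, and the periods used for the beat calculation are hypothetical values, not those reported by Keeling and Whorf (1995).

```python
def chance_of_spurious_trend(n_series, alpha=0.05):
    """Probability that at least one of n_series independent, trend-free
    records passes a significance test at level alpha purely by chance."""
    return 1.0 - (1.0 - alpha) ** n_series


def beat_period(period_a, period_b):
    """Period of the amplitude envelope produced by superposing two
    oscillations of equal amplitude: 1 / |1/period_a - 1/period_b|."""
    if period_a == period_b:
        raise ValueError("identical periods do not beat")
    return period_a * period_b / abs(period_a - period_b)


# Examining 20 unrelated records at the 5 percent level gives roughly a
# two-in-three chance of at least one apparently significant result.
p_any = chance_of_spurious_trend(20)

# Hypothetical periods of 9 and 10 years beat on a 90-year time scale.
envelope = beat_period(9.0, 10.0)
```

The first function shows why the number of records examined must be taken into account before a trend is declared significant; the second shows how two closely spaced decadal oscillations can produce a modulation on the century scale.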

IDENTIFYING CLIMATE FLUCTUATIONS

The instrumental record of atmospheric and related land and marine observations is fragmentary until at least the middle of the nineteenth century. Moreover, virtually all of

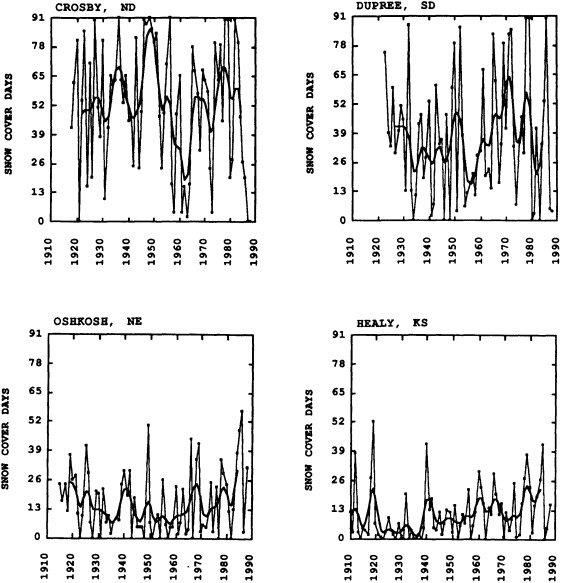

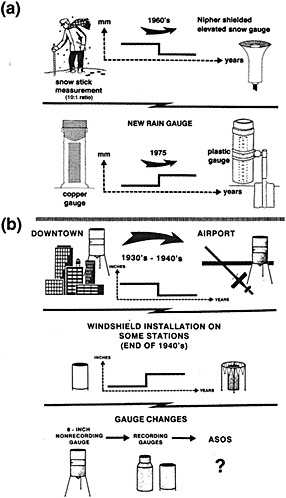

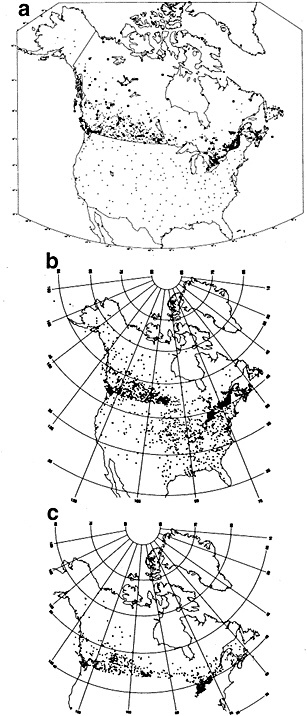

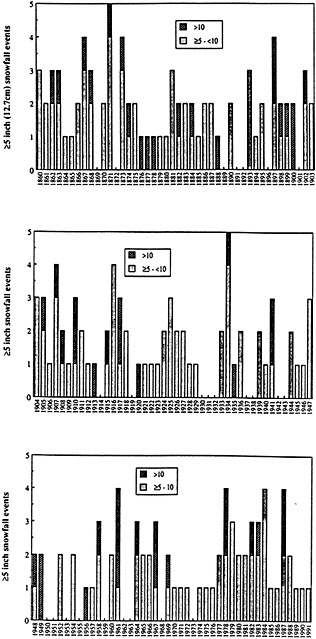

the observations used to study climate fluctuations are derived from observing programs that were developed not for this purpose, but rather to support day-to-day weather forecasting. Since the day-to-day variability of the atmospheric system in much of the world is far larger than the decadal climate variability, identification of multi-decadal climate fluctuations is often hampered by the poor quality and lack of homogeneity of the observations. For example, Groisman and Easterling (1995), in their study of the variability and trends of precipitation over North America, painstakingly document the many discontinuities and false jumps in the climate record that arise from changes in observing practices. These changes, if ignored, can and do overwhelm important long-term precipitation fluctuations.
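To make the idea of a false jump concrete, the sketch below estimates the size of a discontinuity at a documented change in observing practice by comparing a candidate station with a neighboring reference series. It is a deliberately simple illustration under stated assumptions (a known change date, a homogeneous reference, and an additive step); it is not the procedure of Groisman and Easterling (1995), and the function names are hypothetical.

```python
from statistics import mean


def estimate_step(candidate, reference, change_index):
    """Estimate an additive step in `candidate` at `change_index`.

    The step is the change in the mean candidate-minus-reference
    difference between the segments before and after the change.
    """
    if len(candidate) != len(reference):
        raise ValueError("series must have the same length")
    diffs = [c - r for c, r in zip(candidate, reference)]
    before = diffs[:change_index]
    after = diffs[change_index:]
    if not before or not after:
        raise ValueError("change_index must leave data on both sides")
    return mean(after) - mean(before)


def remove_step(candidate, change_index, step):
    """Adjust the earlier segment so that it is consistent with the later one."""
    return [v + step if i < change_index else v
            for i, v in enumerate(candidate)]
```

Differencing against a reference removes the climate signal common to both stations, so that what remains is dominated by the artificial step. In practice, precipitation adjustments are often multiplicative rather than additive, which this sketch does not attempt to handle.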

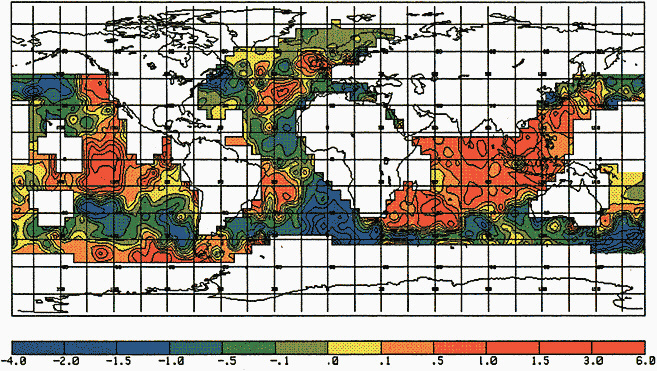

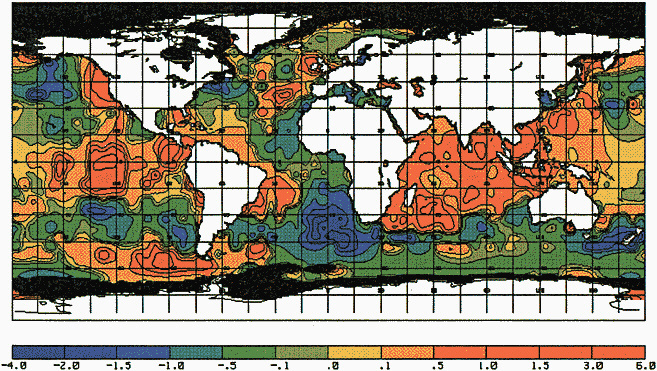

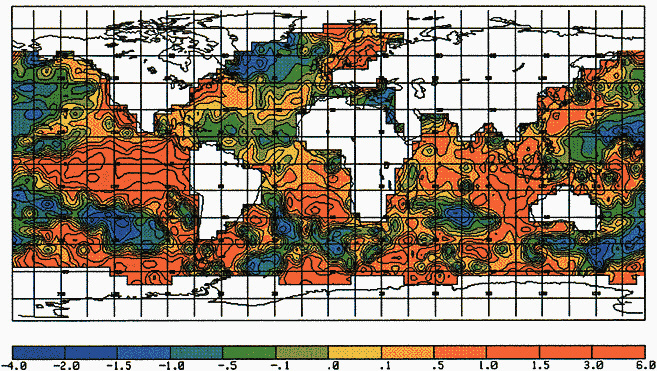

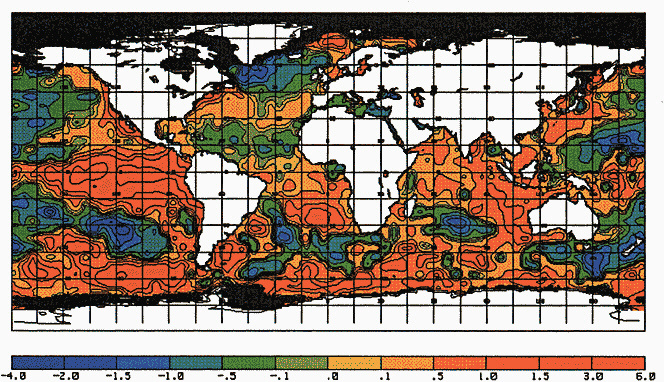

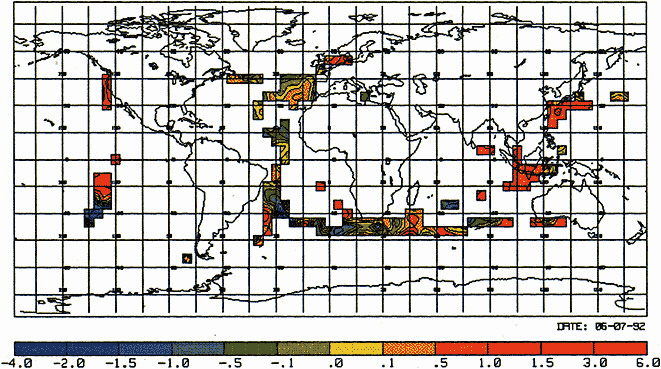

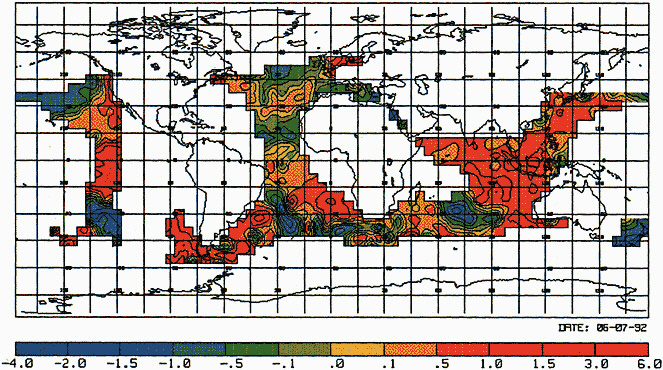

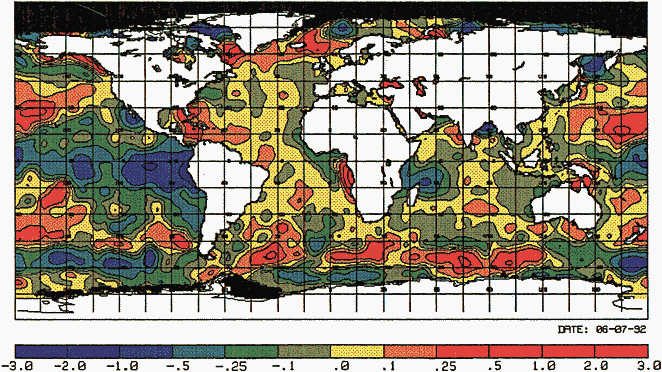

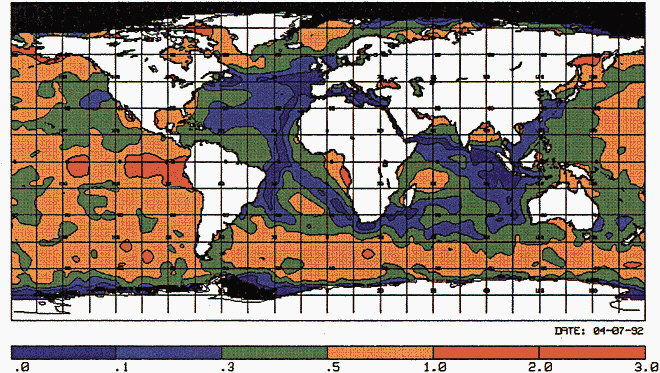

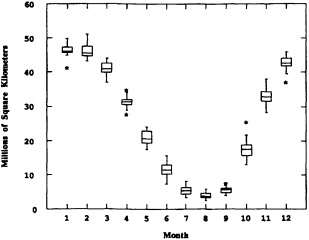

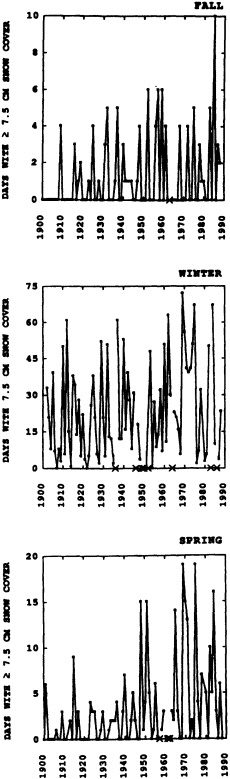

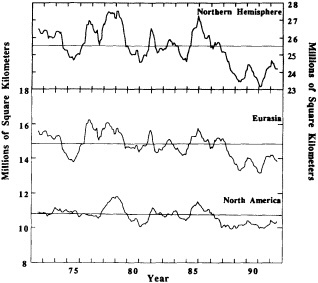

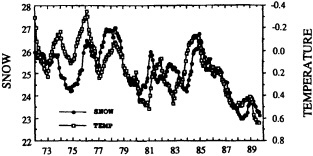

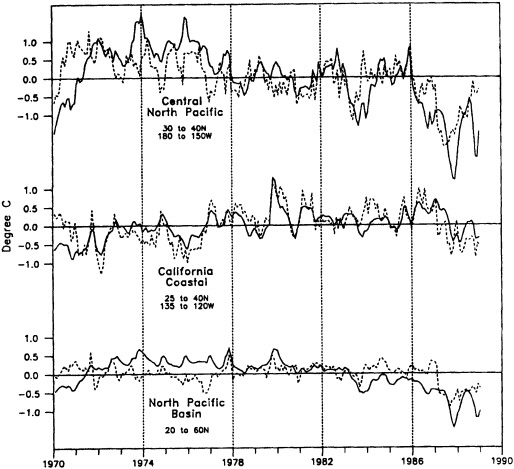

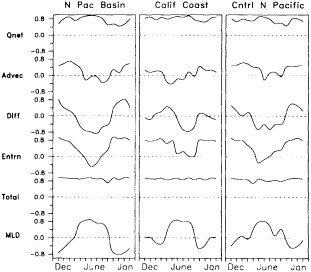

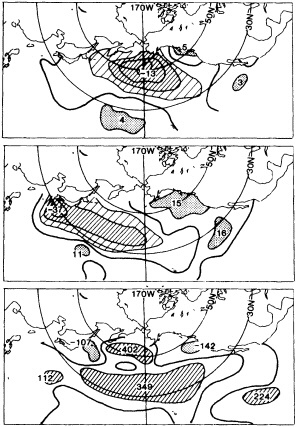

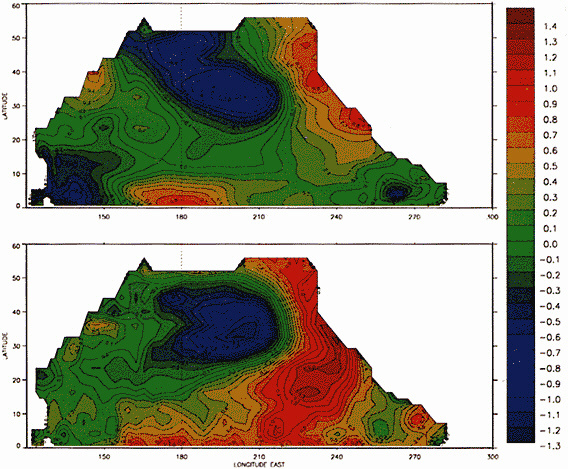

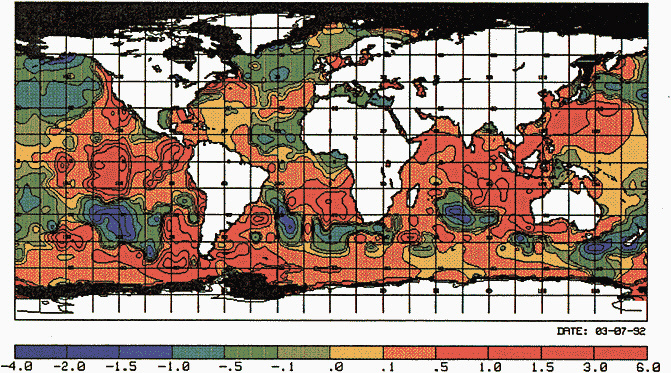

Other atmospheric quantities besides precipitation are also affected by problems in measurement. In this section, Robinson (1995) points out the large disparity in snow-cover extent between a NASA data set using microwave measurements and a NOAA data set using visible imagery. Karl et al. (1995) devote considerable discussion to jumps and trends introduced into the surface temperature record by such factors as urban heat islands, changes in irrigation practices, differences in instrumentation, and station relocations. Cayan et al. (1995) use surface marine observations to calculate the latent and sensible heat fluxes that are used as forcing agents of the sea surface temperature field in the North Pacific. Their analysis includes the basin-wide climate jump that began about 1976-1977. To account for known systematic biases related to trends in the wind field and the sea surface and marine air-temperature and moisture lapse rates (Ward, 1992; Cardone et al., 1990; Wright, 1988; Ramage, 1987), the global trend of each of these quantities is removed. As mentioned in Zebiak's commentary on Cayan's paper, it is unfortunate that such adjustments to the data are necessary.

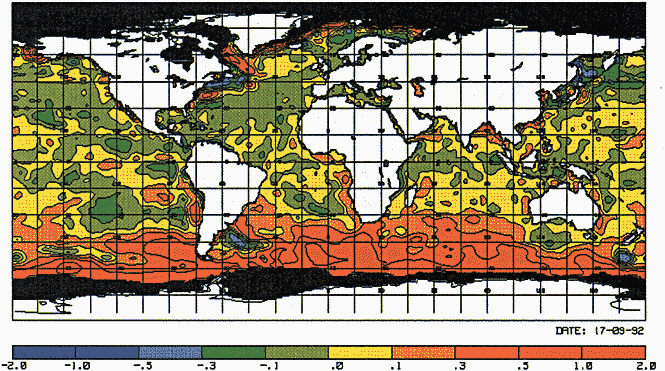

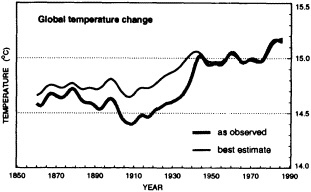

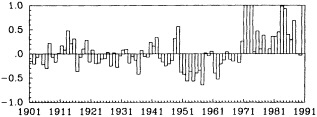

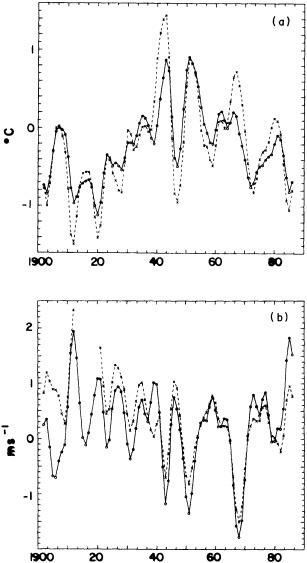

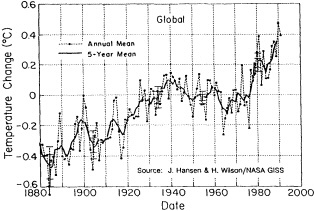

Often the corrections and adjustments that must be applied to a climate record are of such magnitude that it is difficult to be confident that the resulting time series adequately reflects the climate fluctuations. For example, Figure 2 illustrates the significant adjustments required to calculate global temperature fluctuations since the nineteenth century. Through the use of other data bases (e.g., changes in snow cover, alpine glaciers, or sea level) and the isolation of various components of the surface temperature record (e.g., marine air temperature, sea surface temperature, and land temperature), it is possible to gain more confidence in the adjustments applied. Each of the data sets has distinctly different problems related to long-term homogeneity and data quality that require independent adjustment procedures. When these independent data sets provide a physically consistent picture of decade-to-century climate fluctuations, their agreement can be very compelling. In fact, the analysis of quasi-periodic oscillations of surface winds, pressure, and ocean and marine air temperatures

FIGURE 2

Smoothed global surface temperature variations, as derived from the original observations without adjustments for inhomogeneities in the climate record, compared to the same observations after adjustments for inhomogeneities as described by the Intergovernmental Panel on Climate Change (IPCC, 1990, 1992).

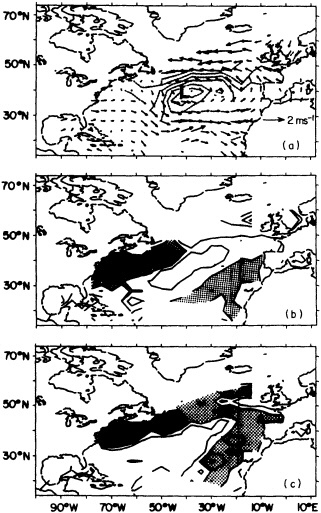

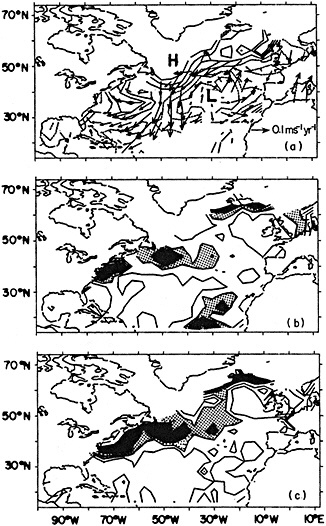

by Deser and Blackmon (1995) relies entirely on physical consistency among independent variables.

In order to improve our ability to discern climate fluctuations on decade-to-century time scales within the existing climate record, some federal agencies such as NSF, DOE, and NOAA are supporting data archeology efforts. Data archeology is the process of seeking out, restoring, correcting, and interpreting data sets. Such efforts are critical to identifying and understanding longer-scale climate fluctuations, since they often turn up information that reveals a pattern not otherwise obvious.

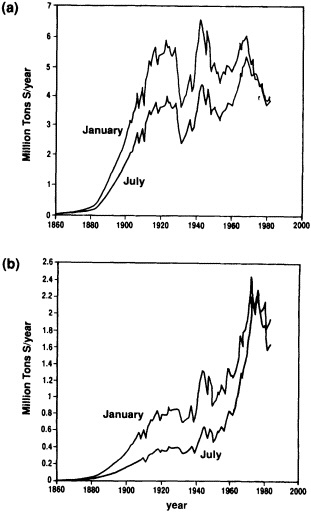

The Comprehensive Ocean-Atmosphere Data Set (COADS), which is just one of several major data-archeology efforts, provides a good example of the type of benefits that can be expected from such efforts. Now, several years after the project's inception (Woodruff et al., 1987), scientists are identifying decadal-scale variations and changes in many climate elements previously thought to be too uncertain to document with any confidence (London et al., 1991; Flohn et al., 1990; Parungo et al., 1994).

Interestingly, up to the present, satellite data have not figured prominently in the analysis of multi-decadal climate variability. Their short observing history and a lack of temporal homogeneity have hampered efforts to use them. Certainly, there are notable exceptions, such as NOAA's snow and sea-ice products and the Spencer and Christy (1990) microwave sounding-unit data. However, major temporal inhomogeneities in data sets, like those identified in the International Satellite Cloud Climatology Project (ISCCP; see Klein and Hartmann, 1993), threaten the usefulness of much of the multi-decadal satellite data. The two major challenges over the next few decades for the United States will be to ensure that global-change satellite-research projects such as EOS provide homogeneous data over their planned lifetimes, and to lay the foundation for converting them into long-term operational observing systems.

Future observations will require more attention to data quality, homogeneity, and continuity if we are to understand the nature of decade-to-century-scale climate variability. Currently under consideration is a Global Climate Observing System (GCOS) that includes observations of terrestrial, atmospheric, and oceanic aspects of climate. Since GCOS would be built around existing observing networks and environmental problems, it is essential that scientists effectively convey long-term climate-monitoring needs to the agencies sponsoring space-based and conventional observing systems. Among the climate-monitoring issues that should be addressed in the near future are stability of network sites, intercomparability of instruments, and increased sampling in data-sparse regions. Two concerns for both present and future observations are the better collection and documentation of metadata on observing instruments and practices as well as processing algorithms, and improved data-archiving practices and data-management systems. All these will increase the value of climate monitoring for decade-to-century-scale research; most essential, of course, is assurance of a long-term commitment to observational objectives.

THE FORCING AGENTS OF CLIMATE FLUCTUATIONS

The climate record provides the key to developing, refining, and verifying our hypotheses regarding the forcing agents responsible for climate fluctuations, be they anthropogenic or natural, internal or external to the climate system, global or regional, or persisting one or many decades. Diaz and Bradley (1995) suggest that the many decade-to-century climate fluctuations evident in both observations and proxy records may indeed have natural origins, since similar fluctuations seem to occur in the climate record both before and after humans became capable of modifying climate.

Often physically based models are the best means of testing our hypotheses about the cause of the fluctuations, as described in the modeling sections of this volume, but in addition they can be effectively used to help discern the physical consistency of apparent climate fluctuations and change. Cayan et al. (1995) demonstrate this approach; they use an ocean general-circulation model to help explain the climate jump over the North Pacific, as documented by Trenberth (1990; Trenberth and Hurrell, 1995, in this volume). Cayan et al. show how the atmosphere and ocean can act together to maintain decadal-scale climate fluctuations on a large spatial scale.

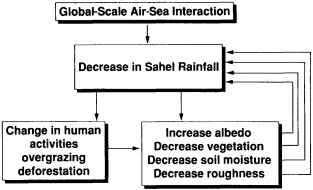

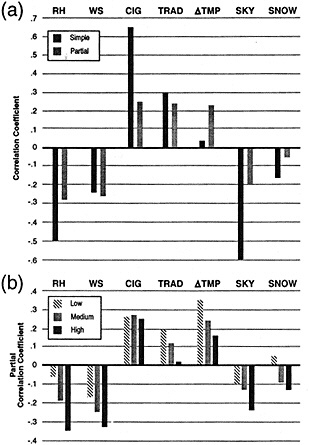

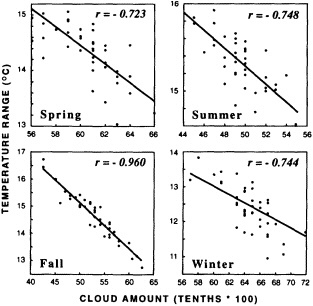

Potentially important factors in explaining climate fluctuations on decade-to-century time scales are land-surface and atmospheric feedback effects. Walsh (1995) provides ample evidence that snow cover has important feedback effects on the climate system on short (daily and interannual) and long (thousands of years) time scales, but on the time scales of interest to us the impact of snow is still not well understood. However, Nicholson (1995) and Shukla (1995) present evidence that the feedback between land-surface characteristics and the atmosphere has led to the prolonged drought in the Sahel. Karl et al. (1995) document an asymmetric increase of the mean maximum and minimum temperatures over many portions of the global land mass. Although they cite a number of potential causes of this multi-decadal trend, such as increases of anthropogenic atmospheric sulfate aerosols (Charlson et al., 1992) and greenhouse gases, empirical evidence suggests that, at least in some regions, observed increases in cloud cover play an important role in modulating the surface temperature. The forcing responsible for the increase in cloud cover remains unknown.

There are many important aspects of climate forcings and associated responses that cannot be covered here. Of particular relevance are the known changes in solar irradiance associated with the sunspot cycle. Recently Friis-Christensen and Lassen (1991) have used the length of the sunspot cycle to explain the decadal fluctuations and trends of land temperatures. Although a linear response of the surface temperature to the sunspot cycle length implies some unlikely responses of the temperature record in the early part of the time series (Kelly and Wigley, 1992), it is clear that changes in solar irradiance must continue to be monitored as a potential source of global climate fluctuations or change.

Recently, Elliott et al. (1991) have found evidence for an increase of tropospheric water vapor since 1973, leading to an enhanced greenhouse effect. However, the many types of observing and data-processing inhomogeneities in the upper-air moisture record make it immensely difficult to separate spurious trends and discontinuities from the true climate signal, even when the signal may be as large as a 10 percent increase in specific humidity.

A critical atmospheric quantity affecting surface temperature is the variability of cloud cover: its type, height, and spatial distribution. Using the ISCCP data base, Hartmann et al. (1992) showed the net forcing of various cloud types as a function of season and latitude. A recent study by Rossow (1995), however, reveals that this data set has serious biases that make it inappropriate for decadal-scale climate assessments. Meanwhile, conventional in situ data, analyzed on a national basis by a number of researchers, show a widespread general increase in total cloud cover over the past several decades (Dutton et al., 1991; Henderson-Sellers, 1990; Karl and Steurer, 1990; London et al., 1991; McGuffie and Henderson-Sellers, 1989; Parungo et al., 1994). Clearly, a much more focused effort is required to better understand decadal and multi-decadal fluctuations of cloud cover, since they often have very important feedback effects on other climate quantities. For instance, cloud feedbacks work differently during the day from the way they do at night. They therefore differ at high and low latitudes, making generalizations speculative.

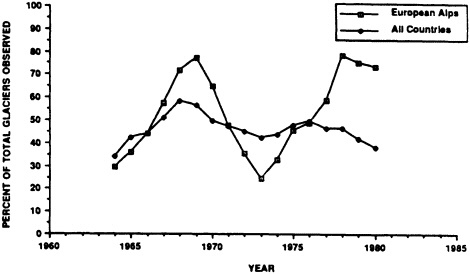

Also, there is no discussion in this volume of the world's glaciers, in spite of their known tendency to fluctuate markedly on decade-to-century time scales. Long-term trends of climate change are integrated by mountain glaciers, and during the past century mountain glaciers all over the world have been declining (IPCC, 1990). The World Glacier Monitoring Service (1993) summarized these changes over the past several decades, and the USGS Satellite Image Atlas of the World discusses observed variations of hundreds of glaciers over the past several centuries.

CONCLUSION AND RECOMMENDATIONS

Identification and explanation of the forcings and feedbacks responsible for decade-to-century-scale climate fluctuations are essential to distinguishing between natural and anthropogenic impacts on the climate system, as well as to developing any predictive skill with respect to these phenomena. Further progress in understanding this gray area of climate change will depend upon our ability to address three topics. First, we must be able to document climate fluctuations without spurious discontinuities and trends. Second, we must ensure that we are adequately monitoring potential forcings and feedbacks internal and external to the climate system, so that we will have the data necessary for testing hypotheses about their operation. Last, data analysts must work closely with modelers (and vice versa) to test the hypotheses we formulate on a variety of climate models, ranging from simple one-dimensional climate models to complex coupled ocean-atmosphere general-circulation models. The re-analysis modeling projects of the United States (Kalnay and Jenne, 1991) and the European community are likely to enhance our confidence in both how and why climate has varied on decade-to-century time scales. (It must be emphasized, however, that the value of any re-analysis effort can be jeopardized by the use of data that are biased or, most troublesome, inhomogeneous in time.) Only by advancing in all three of these areas will we be able to overcome our ignorance about multi-decadal climate fluctuations and make predictions with any degree of assurance.

Documenting Natural Climatic Variations: How Different is the Climate of the Twentieth Century from That of Previous Centuries?

HENRY F. DIAZ AND RAYMOND S. BRADLEY

ABSTRACT

Changes in decadal-mean surface temperature and its variance for different land areas of the Northern Hemisphere are examined. In the last 100 years, changes in surface air temperature have been greatest and most positive in the period since about 1970. Interannual variability, particularly at the largest spatial scales, has also increased, although it differs according to regions. The most unusual decade of the last 100 years in the contiguous United States may have been the 1930s, although that of the 1980s is probably a close second, and in some regions perhaps the most anomalous. Both the 1930s and the 1980s experienced significant warming together with enhanced climatic variability.

To incorporate a longer-term perspective than is obtainable from the modern instrumental record, we used summer temperature reconstructions based on tree-ring records, and on δ18O ratios extracted from different ice-core records. Although none of these records is a simple or even direct temperature proxy, we present them as general indicators of prevailing environmental temperatures. The data were averaged by decades in order to focus on decadal-scale variability. With the exception of the data from tropical ice cores, the proxies indicate that the recent decades were not very unusual, either in regard to the mean or in terms of increased variability. While seasonal and annual temperature changes in the last two decades have been rather large in most areas of the Northern Hemisphere, the available paleoclimate evidence suggests that in many areas there have been decadal periods during the past several centuries in which reconstructed temperatures were comparable to those of the 1970s and 1980s, with climatic variability as large as any recorded in recent decades. Natural variability on decadal time scales is comparatively large, typically about half as large as the interannual variance.
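The decadal averaging described above, and the comparison of decadal with interannual variance, can be sketched as follows. The abstract does not spell out how the variance comparison was computed, so the ratio defined here (variance of the decadal means divided by the variance of the annual values) is an assumption made for illustration, and the function names are hypothetical.

```python
from statistics import mean, variance


def decadal_means(years, values):
    """Average annual values by calendar decade (1930-1939 is keyed as 1930)."""
    groups = {}
    for year, value in zip(years, values):
        groups.setdefault((year // 10) * 10, []).append(value)
    return {decade: mean(vals) for decade, vals in sorted(groups.items())}


def decadal_to_interannual_variance_ratio(years, values):
    """Sample variance of the decadal means divided by the sample
    variance of the annual values (an assumed definition)."""
    means = list(decadal_means(years, values).values())
    if len(means) < 2:
        raise ValueError("at least two decades are needed")
    return variance(means) / variance(values)
```

With this definition, a ratio near 0.5 would correspond to decadal variability about half as large as the interannual variance. For a series with no year-to-year persistence, the expected ratio would instead be close to 0.1, because averaging ten independent values reduces the variance tenfold.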

INTRODUCTION

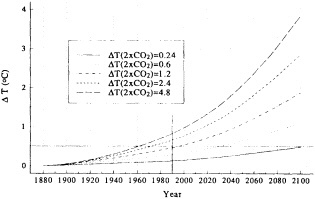

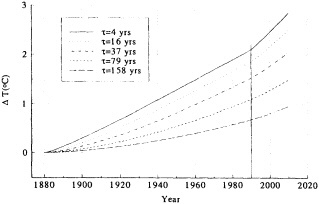

Considerable effort has been expended over the last 25 years to improve our knowledge and understanding of climate processes and mechanisms associated with changes in climate (Saltzman, 1983; Trenberth, 1992). A major impetus for much of the recent climate research has been the global and regional climate projections made with physical climate models (the so-called general-circulation models, or GCMs) for a doubling of carbon dioxide concentration in the atmosphere.

Most assessments of the climatic impact of increases in the "greenhouse" gases (CO2, methane, nitrous oxide, chlorofluorocarbons) have concluded, more or less consistently, that global temperatures should rise by 1.5°C to 4.5°C under a doubling of the most abundant of these greenhouse gases, CO2 (NRC, 1982; Bolin et al., 1986; IPCC, 1990; Schlesinger, 1984, 1991). Such changes, should they occur, will be superimposed on the natural variability of the climate, which is quite large at all time scales (Karl et al., 1989). Depending on a number of assumptions, the amount of global warming that should have been realized by now appears to be more consistent with the lower end of the above estimates (Bloomfield, 1992).

Karl et al. (1991b) performed a variety of tests using output from three different GCMs to ascertain, given their different climate sensitivities, when a statistically significant greenhouse signal might be detected in the central United States. They concluded that it was likely that a greenhouse signal had been masked, to date, by natural climate variability, and that it would likely take another two to four decades before the greenhouse signal in temperature and precipitation might be unambiguously detected in this region.

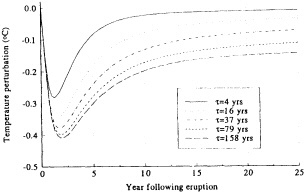

We should note the possibility that other human-induced factors may be acting to counteract the radiative effects of increased greenhouse-gas concentrations in the atmosphere. Recent work has indicated that sulfate aerosols, which act to increase the planetary albedo (Charlson et al., 1992; Hansen and Lacis, 1990; Wigley, 1989, 1991) may have counteracted to some degree the enhanced infrared warming (see Michaels and Stooksbury, 1992). These and other factors, whether anthropogenic in origin or not (e.g., changes in vulcanism), will undoubtedly "muddy" the climate picture and may make it more difficult to identify unequivocally a contemporary greenhouse signal.

In this paper, we consider two aspects of climate-change detection that we feel bear strongly on the issue of natural versus anthropogenic climate variability. One aspect of the problem is, in effect, at what point one rejects the null hypothesis (no climate change) and accepts the premise that the climate of the last few decades belongs to a different sample population. The difficulty there arises because we are evaluating a relatively short observational record with the knowledge that the climate has fluctuated in the past few centuries by an amount that may be of the same magnitude as the fluctuations observed in the recent record (see Bradley and Jones, 1992).

A second aspect of the problem of climate change versus natural variability, which we consider here, is temporal changes in that variability, i.e., in the variance. Changes in climatic variability are important, since they are likely to have a greater effect on a society's ability to mitigate and adapt to climatic changes than a slow alteration in the mean climate patterns. We have examined a variety of observational records of various lengths (typically 100 to 150 years), which are representative of different spatial scales (from hemispheric to regional basins). We will focus on decadal time scales, since one could argue that climatic changes will be better gauged at time scales that to some extent average out the high-frequency climatic "noise" associated with seasonal variability and other air-sea processes operating on annual time scales (e.g., the El Niño/Southern Oscillation system). We compare these instrumental series with a suite of high-resolution climate proxy records (namely, tree rings and oxygen isotopes extracted from ice cores) spanning the last 300 to 800 years. Our aim is to give the reader some feeling for the range of this intermediate-frequency (decade-to-century-scale) climatic variability, as well as some appreciation of the uncertainties inherent in the existing records (instrumental, historical, and paleoenvironmental).

We note that the "fingerprint" climate-change-detection technique (Barnett, 1986; Barnett and Schlesinger, 1987; Barnett et al., 1991) provides a very useful methodology for testing a hypothesis (the GCM climate-change projections) against observations. We will attempt here to highlight some aspects of climatic variability at decadal and longer time scales and will re-emphasize the conclusion of Barnett et al. (1991), who noted that the existence of a high degree of "unexplained" interdecadal climatic variability (see also Ghil and Vautard, 1991, and Karl et al., 1991b) will greatly complicate the detection of a greenhouse climate-change signal.

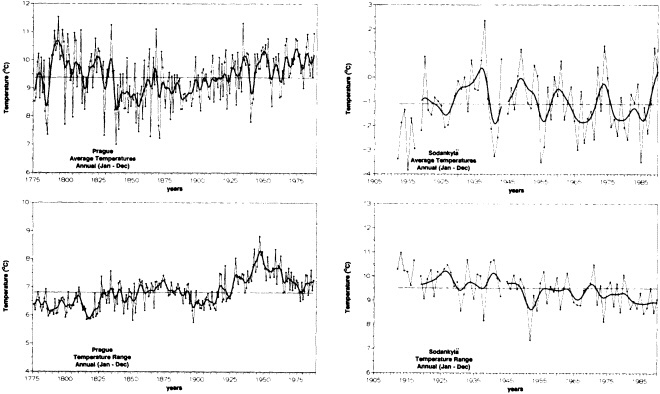

THE MODERN (INSTRUMENTAL) RECORD

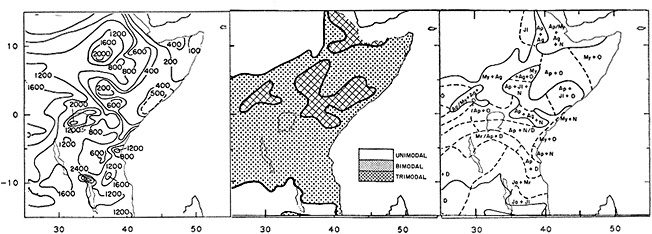

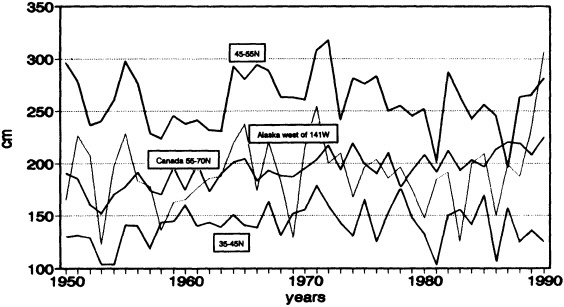

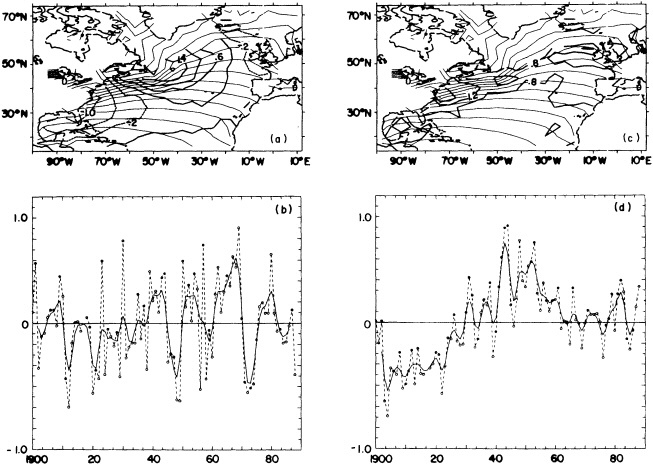

Characteristics of Decadal-Scale Changes of Area-Mean Temperature Indices

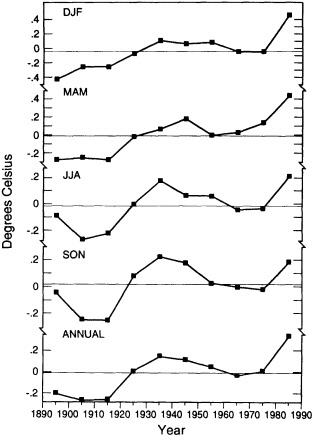

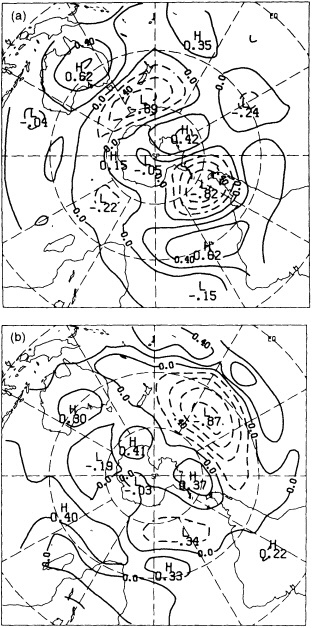

FIGURE 1

Decadal-mean seasonal and annual temperature anomalies (in °C) for land areas of the Northern Hemisphere. Data are from Jones et al. (1986a), with subsequent updates.

The available data suggest that during the past century decadal-scale changes in global-scale mean annual temperature are on the order of 0.1°C to 0.3°C (see Folland et al., 1990). Figure 1 illustrates the annual and seasonal temperature changes for the Northern Hemisphere land areas. The plotted data, from Jones et al. (1986a) with subsequent updates, are consecutive 10-year area-weighted averages from 1891-1900 through 1981-1990 of gridded temperature anomalies referenced to the 1951-1970 period. On this "decadal" time scale, the warming of the 1980s relative to the 1970s (0.32°C for the hemisphere) is comparable to the warming that occurred in the 1920s relative to temperatures in the 1910s (0.26°C). Some seasonal differences can be noted, with northern summer and fall showing the least warming trend and winter and spring showing the greatest warming trend over the last 100 years.
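The decadal averaging behind Figure 1 can be illustrated with a minimal sketch. It assumes a single yearly temperature series rather than the area-weighted gridded fields of Jones et al. (1986a); the function name `decadal_anomalies`, its parameters, and the synthetic input series are all hypothetical and serve only to show the arithmetic (anomalies relative to a 1951-1970 reference mean, then consecutive 10-year means starting with 1891-1900).

```python
from statistics import mean


def decadal_anomalies(years, temps, ref_start=1951, ref_end=1970, first_year=1891):
    """Return consecutive 10-year means of anomalies relative to a reference period.

    Simplification: one series, no area weighting (unlike the gridded data in the text).
    """
    # Reference-period mean used to turn temperatures into anomalies
    ref = mean(t for y, t in zip(years, temps) if ref_start <= y <= ref_end)

    blocks = {}
    for y, t in zip(years, temps):
        if y < first_year:
            continue
        start = first_year + 10 * ((y - first_year) // 10)  # first year of the decade
        blocks.setdefault(start, []).append(t - ref)

    # Keep only complete decades, labelled e.g. "1891-1900"
    return {f"{s}-{s + 9}": mean(v) for s, v in sorted(blocks.items()) if len(v) == 10}


# Synthetic, made-up series (a steady 0.005 °C/yr rise), not observational data
years = list(range(1891, 1991))
temps = [10.0 + 0.005 * (y - 1891) for y in years]
dec = decadal_anomalies(years, temps)
change_1970s_to_1980s = dec["1981-1990"] - dec["1971-1980"]
```

With real data, the decade-to-decade differences computed this way correspond to the kind of comparison made in the text between the 1970s and the 1980s.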

The twentieth century contains many examples of decadal-scale climatic variations of sufficient magnitude to have significantly affected societies. The two-decade-long drought in the African Sahel is perhaps the best-known example. The words "climate change" have become overused, and climatic variations from seasonal to multi-year are often lumped together. Until the mid-1970s, "climate change" was used to refer to climatic changes on time scales of $10^4$ to $10^6$ years (essentially, Milankovitch time scales). Variations of less than 10 years were generally considered to be part of the natural climate noise associated with interannual variations of the various components of the climate system and their nonlinear interactions, whereas variations on time scales of 10 to $10^3$ years are now thought to be strongly driven by ocean-atmosphere heat exchanges resulting from changes in the ocean's thermohaline circulation.

Because of the changes in the earth's radiative balance imposed by human activities during the past century, climatologists have been trying to detect climatic-change signals that hitherto have typically been associated with time scales of millennia. The climate record available for such studies, even with the aid of high-resolution paleoenvironmental records, is sufficient to define only continental to hemispheric changes on decadal time scales, and perhaps regional (less than about $10^5$ km$^2$) changes on century time scales.

In the United States, perhaps the singular climatic event of this century was the severe, widespread, and persistent drought of the 1930s. This event has been widely chronicled in both scientific (e.g., Skaggs, 1975) and popular literature. Other climatic events that persisted for more than one year and affected relatively large areas of the United States were the drought of the 1950s in south central areas of the United States (Chang and Wallace, 1987), and the drought of the 1960s in the Northeast (Namias, 1966). Periodic drought of variable duration has affected all areas of the contiguous United States to varying degrees (Diaz, 1983). Indeed, multiyear climate anomalies are a characteristic feature of the climate of the United States and of other parts of the world (Karl, 1988; Folland et al., 1990).

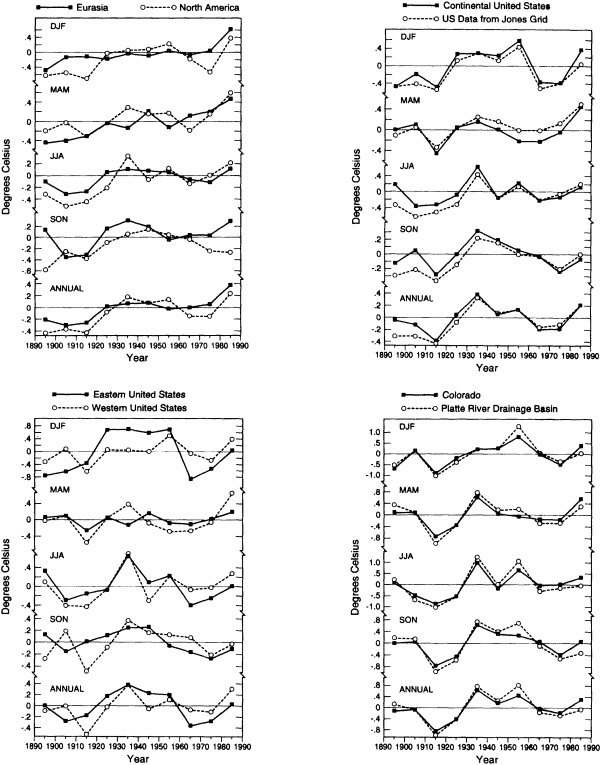

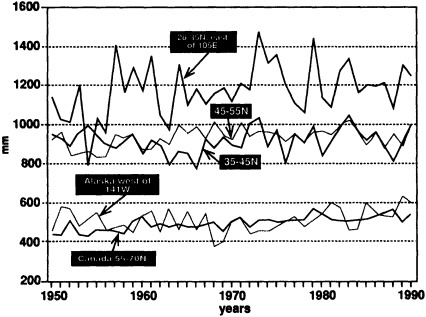

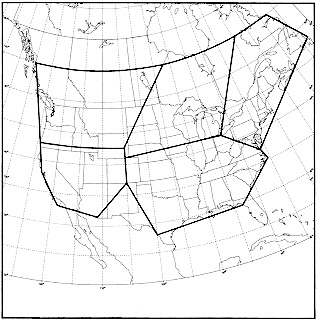

In Figure 2, decadal-mean seasonal (December-February for winter, March-May for spring, etc.) and annual temperature anomalies for various areas of the Northern Hemisphere are examined. Although Eurasia and North America (Figure 2a) have broadly similar seasonal trends over this period, differences can be noted in all seasons as well as in the annual averages. Figure 2b illustrates decadal temperature changes for the contiguous United States for the last century. The data used are from the adjusted divisional values (solid curve) described in Karl et al. (1984b). For comparison, the equivalent Jones et al. (1986a) gridded values are given as the dashed curve in Figure 2b. The decadal averages for the lower 48 states exhibit much smaller long-term trends than those noted for the continental-scale regions. Figures 2c and 2d illustrate the decadal temperature changes recorded in subregions of the United States, namely, the eastern and western halves of the United States (divided roughly along the 100th meridian), the state of Colorado, and a single state climatic division in Colorado where the city of Boulder is located. Again we note regional differences that can depart substantially from the temporal behavior of the larger spatial averages.

FIGURE 2

Decadal-mean seasonal and annual temperature anomalies (in °C). (a) For the Eurasian (solid line) and North American (dashed line) land masses. (b) For the contiguous United States, using data from the National Climatic Data Center described in Karl et al. (1984b) (solid line) and from the gridded temperature anomaly fields of Jones et al. (1986a) (dashed line). (c) For the eastern (solid line) and western (dashed line) United States. (d) For the state of Colorado (solid line) and the Platte River climatic division of Colorado (dashed line).

We have tabulated the mean temperature change from one calendar decade to the next over the 100-year period 1891 to 1990 for each of the above regions. No particular pattern emerges, except that at the largest space scales, the greatest decade-to-decade warming occurs mostly from the 1970s to the 1980s, whereas the time of the largest such changes varies for the smaller regional averages, with most areas of the contiguous United States experiencing their greatest decadal changes during the first half of this century.

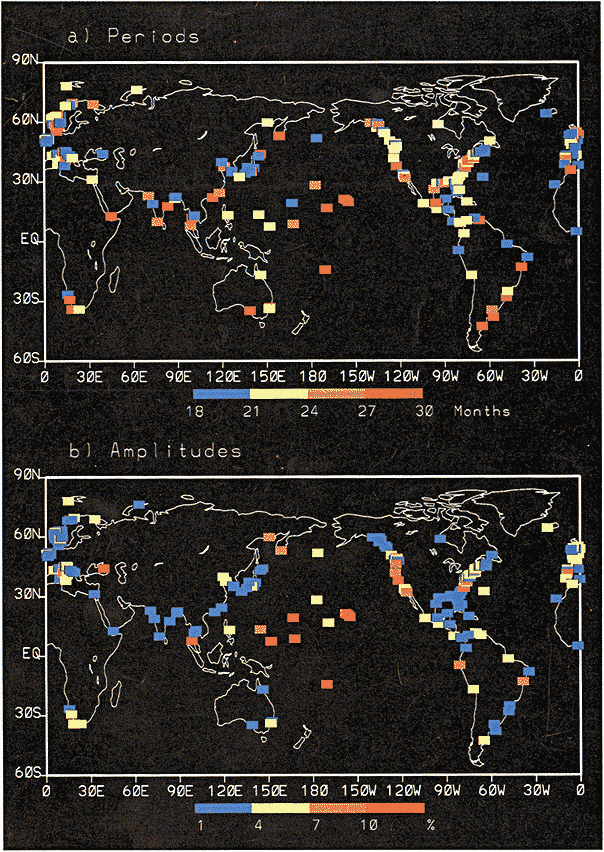

Recent studies have documented some rather pronounced and relatively rapid shifts in the climate system that occurred on decadal time scales. In particular, the atmospheric circulation in the extratropical North Pacific underwent a major shift in the mid-1970s that lasted over 10 years (Trenberth, 1990; Ebbesmeyer et al., 1991). This change was accompanied by a tendency for climate patterns in the tropical Pacific to exhibit conditions reminiscent of El Niño/Southern Oscillation (ENSO) warm-event conditions—weaker trades, warmer equatorial sea surface temperature (SST), anomalous rainfall patterns, etc.—relatively more frequently than during previous decades. Gordon et al. (1992) have also reviewed some of the relatively abrupt changes in water properties and surface climate in the Atlantic Ocean that have occurred in the past several decades, including sudden changes in SST anomalies in the South Atlantic compared to those of the North Atlantic, and the so-called "great salinity anomaly" in the North Atlantic from about the late 1960s to the early 1980s (Dickson et al., 1988). Certainly the sudden decrease of rainfall in the Sahel of Africa, a feature that has lasted for two decades, is a good example of how the climate can undergo significant, sudden, and prolonged change over relatively large spatial scales.

Clearly, increased knowledge of the behavior of climatic variability, from the interannual through decadal and century time scales, is needed to improve our assessments of any future changes in climate from regional to global scales. Indeed, as was noted by Ghil and Vautard (1991), the ability to distinguish a warming trend from natural variability is critical for separating out the greenhouse-gas-induced signal.

As we noted at the outset, an important element of climate that is too often overlooked is the variance or the characteristic variability of climatic means. In determining climate impacts, the importance to society of short- to medium-term climatic instability (i.e., climate fluctuations occurring on interannual to interdecadal time scales) is perhaps equal to or greater than that of slow changes in the background mean. Below we have analyzed some aspects of low-frequency changes in the temperature variance of different regional means.

Changes in Temperature Variability

We have examined contemporaneous variation of two measures of temperature variability: the standard deviation in running 15-year segments, and decadal means of the root-mean-square differences between successive yearly values of seasonal and annual regional temperature anomalies. The latter index is defined as

$$\mathrm{Ind}\,V = \left[ \frac{1}{n-1} \sum_{i=1}^{n-1} \left( x_{i+1} - x_i \right)^2 \right]^{1/2} \tag{1}$$

where the $x_i$ are the $n$ successive yearly values. Since the $x_i$ are deviations from a reference mean and have approximately zero mean,

$$(\mathrm{Ind}\,V)^2 \approx 2 s^2 \left( 1 - r_1 \right)$$

where $s^2$ is the series variance, and $r_1$ is the autocorrelation of the time series at a lag of 1 year. We will use this measure, rather than the standard deviation, as the key index of interannual variability. Note that this index, Ind V, amplifies changes in the high-frequency part of the variance spectrum.
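The relation between the root-mean-square successive-difference index (Ind V), the series variance $s^2$, and the lag-1 autocorrelation $r_1$ can be checked numerically. The sketch below assumes the standard approximation that the mean squared successive difference is close to $2s^2(1 - r_1)$ for a long series; the helper names and the synthetic first-order autoregressive series are illustrative assumptions, not data or code from the study.

```python
import math
import random


def ind_v(x):
    """Root-mean-square difference between successive values of a series."""
    diffs = [b - a for a, b in zip(x, x[1:])]
    return math.sqrt(sum(d * d for d in diffs) / len(diffs))


def variance(x):
    """Population variance of the series."""
    m = sum(x) / len(x)
    return sum((v - m) ** 2 for v in x) / len(x)


def lag1_autocorrelation(x):
    """Sample autocorrelation of the series at a lag of one step."""
    m = sum(x) / len(x)
    num = sum((a - m) * (b - m) for a, b in zip(x, x[1:]))
    den = sum((v - m) ** 2 for v in x)
    return num / den


# Synthetic AR(1) "anomaly" series with persistence 0.5 (made-up, for illustration)
rng = random.Random(0)
x = [0.0]
for _ in range(4999):
    x.append(0.5 * x[-1] + rng.gauss(0.0, 1.0))

lhs = ind_v(x) ** 2                                  # squared index
rhs = 2.0 * variance(x) * (1.0 - lag1_autocorrelation(x))  # 2 s^2 (1 - r1)
relative_gap = abs(lhs - rhs) / rhs
```

Because the index depends on $(1 - r_1)$, a series with strong year-to-year persistence yields a smaller index for the same variance, which is one way to see why the index emphasizes the high-frequency part of the spectrum.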

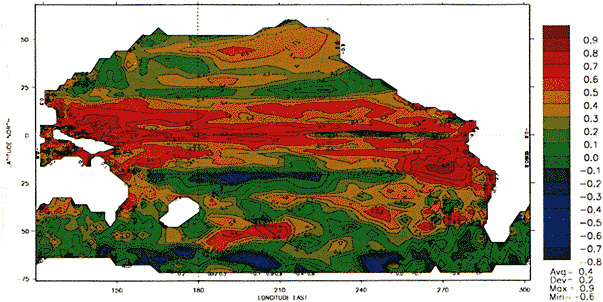

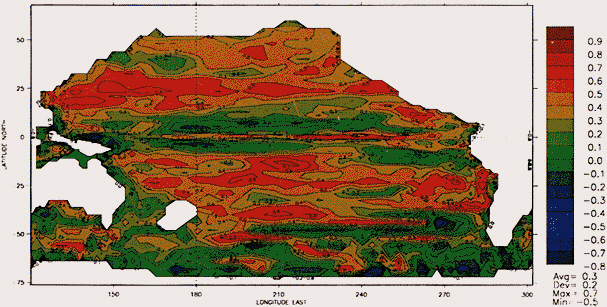

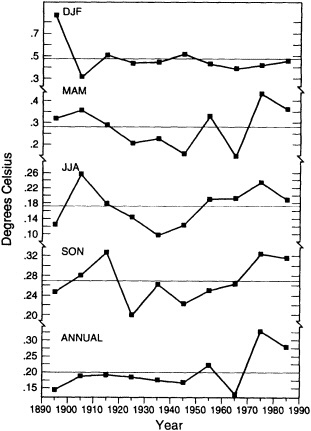

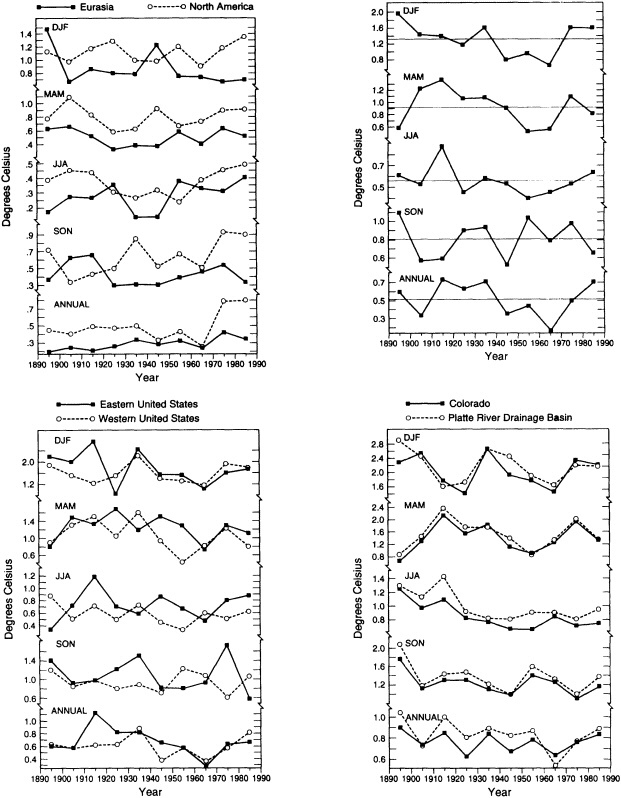

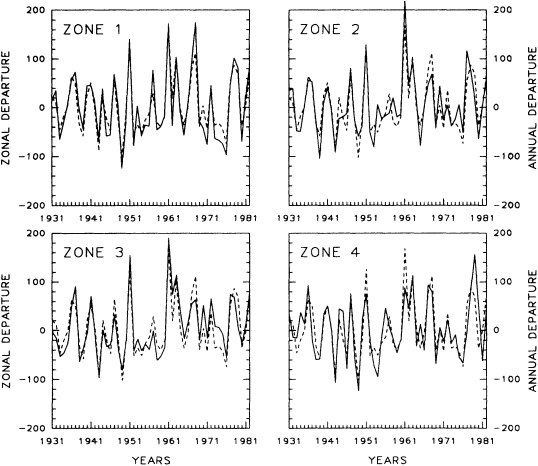

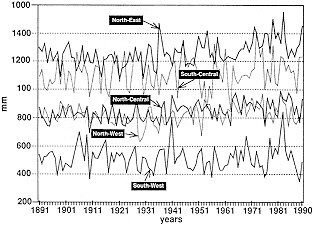

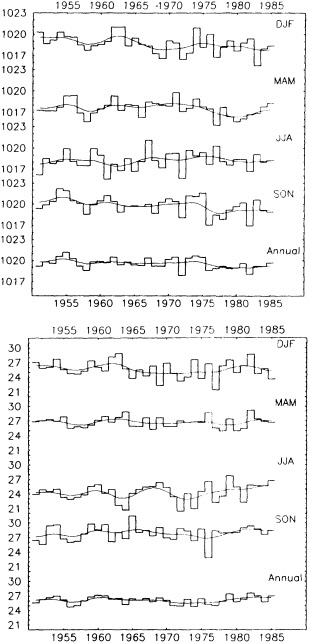

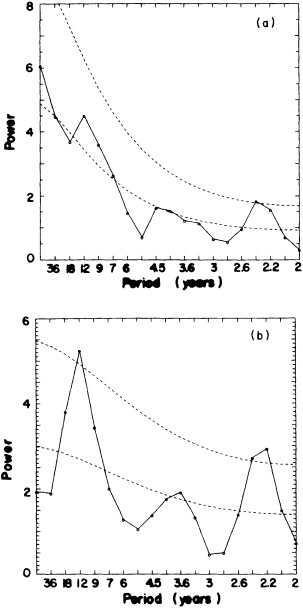

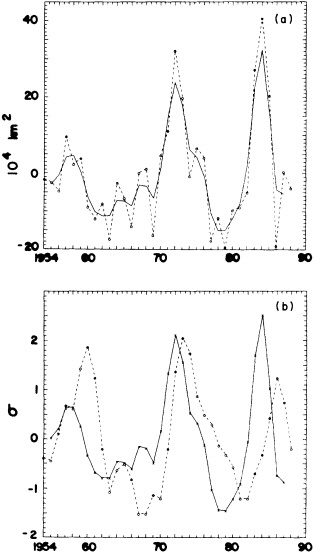

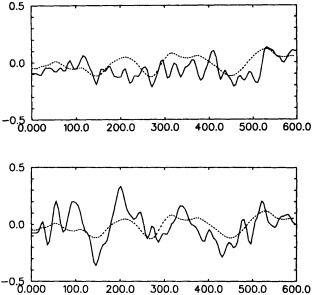

Figures 3 and 4 illustrate the changes in the interannual variability of the regional groupings discussed earlier. The curves differ from one season to another and from one region to another. For the largest continental-sized regions, there appears to be an increase in interannual variability in the last couple of decades. At smaller spatial scales, there does not seem to be a consistent trend; instead, the interan-

FIGURE 3

Index of interannual variability (in °C) for the Northern Hemisphere land areas. Values correspond to decadal means of the root-mean-square difference between successive seasonal and annual temperature anomalies over the last 100 years.

FIGURE 4

Indices of interannual variability, as in Figure 3. (a) For the Eurasian (solid line) and North American (dashed line) landmasses. (b) For the contiguous United States, using data from the National Climatic Data Center. (c) For the eastern (solid line) and western (dashed line) United States. (d) For the state of Colorado (solid line) and the Platte River climatic division of Colorado (dashed line).

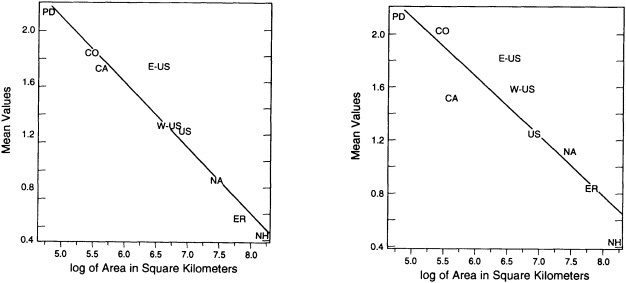

nual variability wanes and waxes over the last 100 years. The corresponding curves for the standard deviation (not shown) reveal similar patterns, with increases in variance at the largest spatial scales with time but no consistent trends in the standard deviation of the smaller regions. As one would expect, there is a well-defined log-linear relationship between the magnitude of the interannual variability and the size of the area comprised by the various indices (see Figure 5). The inherently higher variability at smaller regional scales should be kept in mind when interpreting the paleoclimate record, which is based on limited regional samples.
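A log-linear relationship of the kind shown in Figure 5 can be summarized by an ordinary least-squares fit of variability against the base-10 logarithm of area. The sketch below assumes the form variability = a + b · log10(area); the function name and the five (area, variability) pairs are hypothetical placeholders chosen for illustration, not values read from Figure 5.

```python
import math


def fit_log_linear(areas_km2, variability):
    """Least-squares fit of variability = a + b * log10(area); returns (a, b)."""
    xs = [math.log10(a) for a in areas_km2]
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(variability) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, variability))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    b = sxy / sxx            # change in variability per tenfold increase in area
    a = mean_y - b * mean_x  # intercept at log10(area) = 0
    return a, b


# Hypothetical values only: variability (°C) falling as area (km^2) grows
areas = [1e4, 1e5, 1e6, 1e7, 1e8]
variability = [2.0, 1.6, 1.2, 0.8, 0.4]
intercept, slope = fit_log_linear(areas, variability)
```

A negative slope in such a fit expresses the point made in the text: averages over smaller regions are inherently more variable than continental or hemispheric means.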

The question of whether a warming climate will exhibit increased short-term variability is still open. Previous studies have been generally inconclusive on this subject (van Loon and Williams, 1978; Diaz and Quayle, 1980). Certainly, there is no consistent relationship between interdecadal changes in average temperatures and interdecadal changes in variability. Climatic variability may increase, decrease, or stay the same, in response to an arbitrary change in decadal mean temperatures. On the basis of the above results, we can say that, considering surface temperature variations during the last century, there has been a recent increase in variability at large spatial scales (continental to hemispheric-scale averages). This increase in variability is also concurrent with relatively large temperature increases over those areas. However, this is not the case for earlier warming episodes.

THE PRE-INSTRUMENTAL PERIOD

Prior to the late 1800s, reliable quantitative data on climate variations are sparse, and conclusions about the magnitude of these changes on large spatial scales necessarily contain significant degrees of uncertainty. There is ample evidence that multi-decadal temperature changes occurring over regions the size of Europe and China have been at least of order 1°C (Bradley and Jones, 1992). This suggests that it is quite plausible, if not likely, that temperatures as warm as those prevailing since the end of the Second World War may have been experienced at regional spatial scales of about $10^5$ to $10^6$ km$^2$ for periods of one to several decades during some portions of the past thousand years under what amounts to natural (i.e., with negligible human influences) conditions.

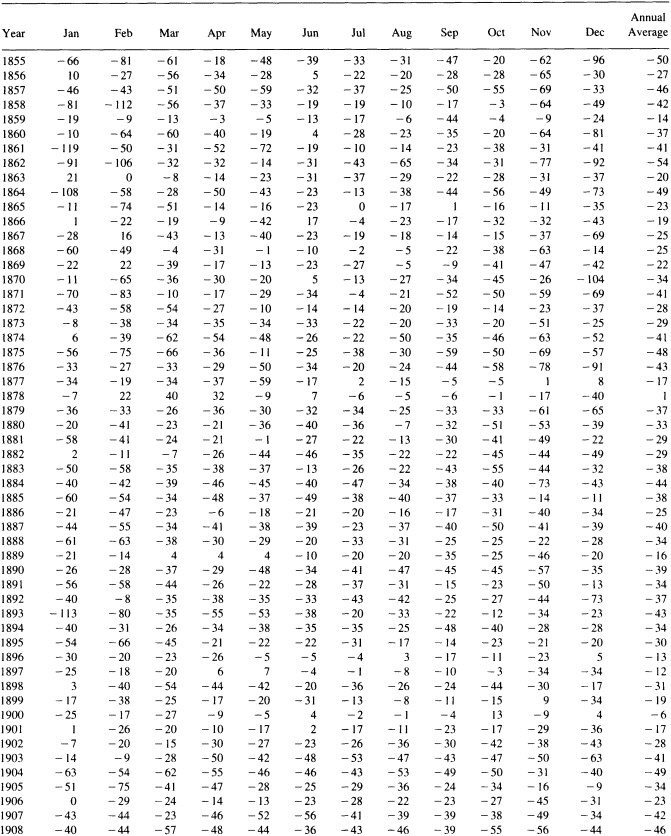

Below we present some examples of long-term variability in a set of climate-sensitive paleotemperature records. The climate indices used here are discussed in several chapters of Bradley and Jones (1992). A listing of them, together with the source references and periods of record, appears in Table 1; each of them has been assigned a number for use in later tables comparing their data. Because of its very long record, the instrumental temperature series for central England (Manley, 1974) is considered in this section for comparison with other paleoclimate indices.

Tree-Ring Indicators

There is an extensive body of work relating climate variations to growth changes in particularly climate-sensitive trees (e.g., Fritts, 1976; Fritts et al., 1979; Hughes et al., 1982; Cook and Kairiukstis, 1990). We have selected a suite of climate-sensitive tree-ring records to study possible changes in climatic variability. Two main considerations were applied in the selection of these indices. First, we chose climate-sensitive tree-ring records that have been thoroughly documented in the refereed scientific literature. Second, we chose the longest of those records in order to maximize the temporal coverage, and tried, as far as possible, to select samples from representative geographical areas.

FIGURE 5

Interannual variability (in °C) as a function of the regional area. Values shown are for northern summer (left panel), and winter (right panel). Area is plotted as log10 values.

TABLE 1 Temperature Indices Used in This Study

| Type of Record | Time Coverage | Reference | Index No.* |
|---|---|---|---|
| Ice-core δ¹⁸O | | | |
| Agassiz Ice Cap (Ellesmere Is.) | A.D. 1349-1977 | Fisher and Koerner (1983) | 1 |
| Devon Island Ice Cap | A.D. 1512-1973 | Koerner (1977) | 2 |
| Camp Century (Greenland) | A.D. 1176-1967 | Johnsen et al. (1972) | 3 |
| Milcent (Greenland) | A.D. 1176-1967 | Hammer et al. (1978) | 4 |
| Quelccaya Ice Cap (Peru) | A.D. 1481-1981 | Thompson et al. (1986) | 14 |
| Dunde Ice Cap (China) | A.D. 1606-1987 | Thompson et al. (1990) | 15 |
| Temperature reconstructions from tree rings | | | |
| Western U.S. (annual) summer | A.D. 1602-1961 | Fritts (1991) | 5 |
| Eastern U.S. (annual) summer | A.D. 1602-1961 | Fritts (1991) | 6 |
| Western U.S. & SW Canada (annual) summer | A.D. 1602-1961 | Fritts (1991) | 7 |
| Northern Treeline (North America) (annual) | A.D. 1601-1974 | D'Arrigo & Jacoby (1992) | 9 |
| U.S. & SW Canada (April-September) | A.D. 1600-1982 | Briffa et al. (1992b) | 8 |
| Northern Scandinavia (April-August) | A.D. 500-1980 | Briffa et al. (1992a) | 12 |
| Northern Urals (June-July) | A.D. 961-1969 | Graybill and Shiyatov (1992) | 13 |
| Tasmania, Australia (November-April) | A.D. 900-1989 | Cook et al. (1992) | 16 |
| Patagonia, Argentina (December-April) | A.D. 1500-1974 | Boninsegna (1992) & Villalba et al. (1989) | 17 |
| Rio Alerce, Argentina (December-February) | A.D. 870-1983 | Villalba (1990) | 18 |
| Instrumental | | | |
| Central England Temperature (annual) | A.D. 1660-1987 | Manley (1974) | |
| Dec-Jan-Feb series | | | 10 |
| June-July-Aug series | | | 11 |

* These numbers are used in text and later tables to identify the various indices.

A number of problems are inherent in any climate reconstruction. These problems are discussed in detail in the original published papers; however, we will briefly highlight here the more critical ones as they may affect the results presented below. Because we have analyzed changes in variability, temporal changes in the composition of the tree-ring network used for the reconstructions will, in general, affect the high-frequency variance, and to some degree the low-frequency variance as well. In interpreting the temperature reconstructions shown here, we have taken into account these potential sources of biases as much as possible.

Regardless of whether one uses the width of the annual growth rings or the maximum latewood density to reconstruct a particular climate variable (generally, growing-season temperature and/or precipitation), a process of standardization is required to account for different rates of tree growth as a function of age. This standardization is achieved by fitting a growth curve to the series of annual values, usually a cubic smoothing spline (see Cook and Kairiukstis, 1990), and taking residuals about the smoothed curve. The degree of smoothing and the functional form of the growth curve partly determine the spectral properties of the residual series. In order to develop a climate reconstruction from these records, it is necessary to formulate a transfer function to convert the tree-growth index into, say, a temperature index. The procedure usually involves a calibration phase, in which a set of regression coefficients is derived that convert the tree-growth parameter into a climatic estimate, and a verification phase (see, e.g., Cook and Kairiukstis, 1990). Verification is usually done against an independent set of predictors. The reader is urged to review the reference sources listed in Table 1 for specific details regarding each paleotemperature reconstruction.
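The standardization, calibration, and verification steps described above can be sketched end to end on synthetic data. This is a deliberately simplified illustration under stated assumptions: a centered moving average stands in for the cubic smoothing spline, a single-predictor linear regression stands in for the transfer function, and verification is done on a withheld half of the record. All function names and all numbers are hypothetical and are not taken from any of the reconstructions in Table 1.

```python
import math
import random


def moving_average(values, window):
    """Centered moving average; the window shrinks near the ends of the series."""
    half = window // 2
    return [
        sum(values[max(0, i - half): i + half + 1])
        / len(values[max(0, i - half): i + half + 1])
        for i in range(len(values))
    ]


def standardize(ring_widths, window=31):
    """Residuals about a smoothed growth curve (stand-in for a smoothing spline)."""
    curve = moving_average(ring_widths, window)
    return [w - c for w, c in zip(ring_widths, curve)]


def linear_fit(x, y):
    """Least-squares intercept and slope of y on x."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    slope = sum((a - mx) * (b - my) for a, b in zip(x, y)) / sum((a - mx) ** 2 for a in x)
    return my - slope * mx, slope


def correlation(x, y):
    """Pearson correlation coefficient."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    return sxy / math.sqrt(sum((a - mx) ** 2 for a in x) * sum((b - my) ** 2 for b in y))


# Synthetic record: age-related growth decline plus a temperature signal and noise
rng = random.Random(1)
n = 200
temp = [rng.gauss(0.0, 1.0) for _ in range(n)]                 # made-up temperature anomalies
age_trend = [2.0 * math.exp(-i / 80.0) + 0.5 for i in range(n)]
widths = [g + 0.1 * t + rng.gauss(0.0, 0.03) for g, t in zip(age_trend, temp)]

index = standardize(widths)                                     # remove the growth curve
half = n // 2
intercept, slope = linear_fit(index[:half], temp[:half])        # calibration period
predicted = [intercept + slope * v for v in index[half:]]       # reconstruct withheld period
r_verify = correlation(predicted, temp[half:])                  # verification statistic
```

The choice of smoothing window plays the role that the spline stiffness plays in practice: a shorter window removes more low-frequency variance from the index along with the age trend, which is the spectral effect noted in the text.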

Another feature of long-term reconstructions from tree rings that should be borne in mind is that the composition of tree-ring samples that make up each yearly value is variable. In general, an index value for a given site is composed of a number of tree samples from several individual trees. These may vary in age, and in some cases may come from both dead and living trees. The number of samples making up a particular ensemble average will vary with time, although this is more generally the case near the beginning and end of a particular chronology. These extraneous factors can introduce "noise" and spurious fluctuations into the reconstructions. Nevertheless, the tree-ring indices considered here, while suffering to varying degrees from the above-named shortcomings, comprise a high-quality set of proxy temperature records that are of value to compare.

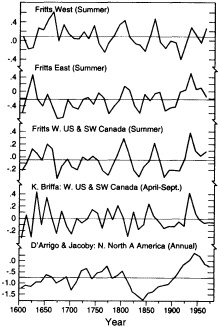

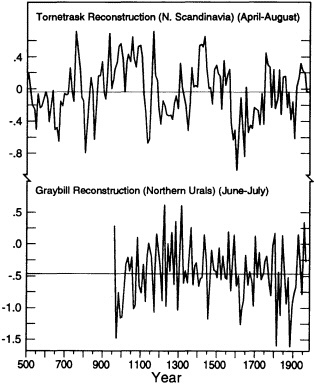

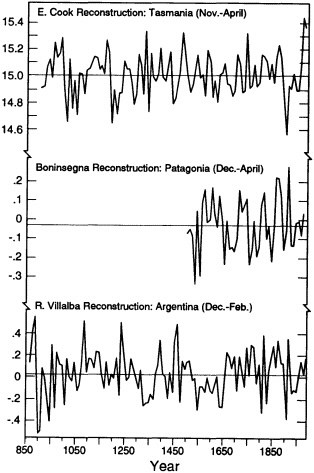

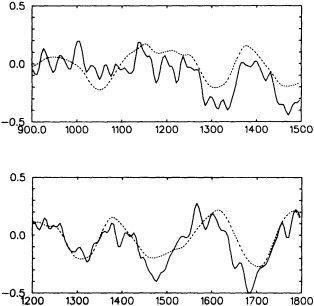

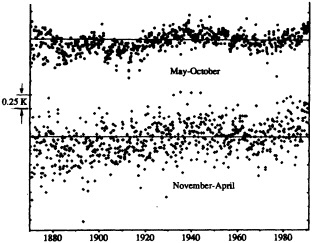

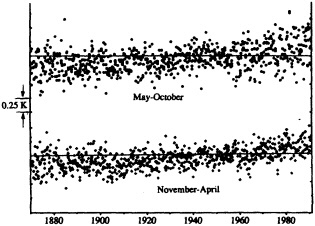

FIGURE 6

Decadal mean values of reconstructed summer-temperature anomalies for various North American regions. The top three graphs are in standardized units, the bottom two are in °C. See Table 1 for source information.

FIGURE 7

Decadal mean values of reconstructed summer temperature anomalies for Scandinavia and the northern Urals. Units in °C.

Figures 6 through 8 show decadal-mean values of various tree-ring reconstructions, generally representing summer (or growing-season) mean temperature. For the most part, these tree-ring reconstructions do not sample the 1980s; nevertheless, it is clear from the degree of interdecadal variability that it would be hard to point to any particular recent period as being unique or exceptional. The November-to-April temperature reconstruction for Tasmania (Cook et al., 1992) does indicate that the last two decades of that record (1970s and 1980s) exhibit significantly greater tree growth (Figure 8) and hence higher reconstructed mean growing-season temperatures than previously, but it would seem premature to conclude that a "climatic change" has taken place in this region of southern Australia. We also note that, unlike the North American and Scandinavian reconstructions, this one is based on tree samples from only one location.

Some unique features are evident for a few of the temperature indices. For example, the curve of D'Arrigo and Jacoby (1992) is a reconstruction of annual mean temperature based on several sites along the northern North America treeline, stretching from Alaska to the Northwest Territories, plus a site located in northern Quebec Province. This reconstruction exhibits a pronounced warming from a very cold period during the mid-1800s to a maximum around 1940. By comparison, the two other high-northern-latitude sites discussed here—the indices from northern Scandinavia and from the northern Urals (Figure 7)—do not exhibit quite the same type of variations. The reader should note, however, that a much longer time period is sampled by these other two reconstructions, and that a seasonal (April to August for the Tornetrask index, and June to July for the

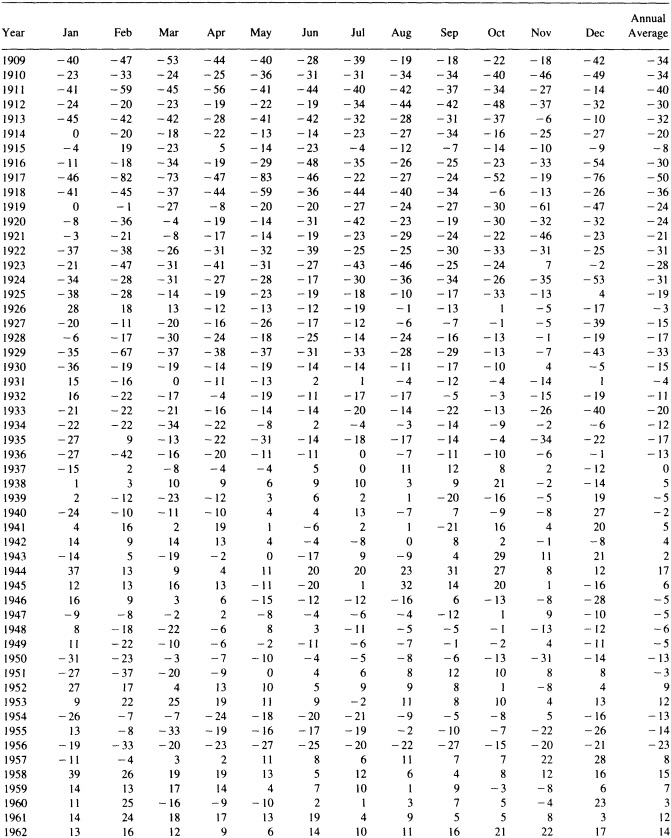

TABLE 2 Century Means of Interannual and Interdecadal Variability for 18 Temperature Indices (index sources identified in Table 1)

|

|

Index |

|||||||||||||||||

|

Century |

1 |

2 |

3 |

4 |

5 |

6 |

7 |

8 |

9 |

10 |

11 |

12 |

13 |

14 |

15 |

16 |

17 |

18 |

|

500s (ann.) |

x |

x |

x |

x |

x |

x |

x |

x |

x |

x |

x |

.84 |

x |

x |

x |

x |

x |

x |

|

(decad.) |

x |

x |

x |

x |

x |

x |

x |

x |

x |

x |

x |

.20 |

x |

x |

x |

x |

x |

x |

|

600s (ann.) |

x |

x |

x |

x |

x |

x |

x |

x |

x |

x |

x |

.79 |

x |

x |

x |

x |

x |

x |

|

(decad.) |

x |

x |

x |

x |

x |

x |

x |

x |

x |

x |

x |

.27 |

x |

x |

x |

x |

x |

x |

|

700s (ann.) |

x |

x |

x |

x |

x |

x |

x |

x |

x |

x |

x |

.64 |

x |

x |

x |

x |

x |

x |

|

(decad.) |

x |

x |

x |

x |

x |

x |

x |

x |

x |

x |

x |

.40 |

x |

x |

x |

x |

x |

x |

|

800s (ann.) |

x |

x |

x |

x |

x |

x |

x |

x |

x |

x |

x |

.63 |

x |

x |

x |

x |

x |

x |

|

(decad.) |

x |

x |

x |

x |

x |

x |

x |

x |

x |

x |

x |

.35 |

x |

x |

x |

x |

x |

x |

|

900s (ann.) |

x |

x |

x |

x |

x |

x |

x |

x |

x |

x |

x |

.70 |

.27 |

x |

x |

,x |

x |

.87 |

|

(decad.) |

x |

x |

x |

x |

x |

x |

x |

x |

x |

x |

x |

.31 |

.18 |

x |

x |

x |

x |

.41 |

|

1000s (ann.) |

x |

x |

x |

x |

x |

x |

x |

x |

x |

x |

x |

.66 |

1.95 |

x |

x |

.53 |

x |

.71 |

|

(decad.) |

x |

x |

x |

x |

x |

x |

x |

x |

x |

x |

x |

.25 |

.53 |

x |

x |

.25 |

x |

.28 |

|

1100s (ann.) |

x |

x |

x |

x |

x |

x |

x |

x |

x |

x |

x |

.69 |

1.70 |

x |

x |

.62 |

x |

.46 |

|

(decad.) |

x |

x |

x |

x |

x |

x |

x |

x |

x |

x |

x |

.43 |

.56 |

x |

x |

.20 |

x |

.14 |

|

1200s (ann.) |

x |

x |

1.36 |

1.25 |

x |

x |

x |

x |

x |

x |

x |

.69 |

1.85 |

x |

x |

.43 |

x |

.59 |

|

(decad.) |

x |

x |

.53 |

.85 |

x |

x |

x |

x |

x |

x |

x |

.22 |

1.02 |

x |

x |

.16 |

x |

.29 |

|

1300s (ann.) |

.93 |

x |

1.10 |

1.36 |

x |

x |

x |

x |

x |

x |

x |

.68 |

2.47 |

x |

x |

.42 |

x |

.68 |

|

(decad.) |

.39 |

x |

.65 |

.68 |

x |

x |

x |

x |

x |

x |

x |

.30 |

.66 |

x |

x |

.30 |

x |

.19 |

|

1400s (ann.) |

.70 |

x |

1.38 |

1.27 |

x |

x |

x |

x |

x |

x |

x |

.68 |

2.02 |

x |

x |

42 |

x |

.65 |

|

(decad.) |

.47 |

x |

.68 |

.33 |

x |

x |

x |

x |

x |

x |

x |

.23 |

.47 |

x |

x |

.18 |

x |

.32 |

|

1500s (ann.} |

.78 |

.59 |

1.13 |

1.18 |

x |

x |

x |

x |

x |

x |

x |

.60 |

1.75 |

1.86 |

x |

.42 |

.96 |

.62 |

|

(decad.) |

.85 |

.69 |

.65 |

.67 |

x |

x |

x |

x |

x |

x |

x |

.37 |

.56 |

.74 |

x |

.17 |

.23 |

.14 |

|

1600s (ann.) |

.89 |

.71 |

1.27 |

1.43 |

.45 |

.32 |

.31 |

.63 |

.22 |

x |

x |

.69 |

1.63 |

1.80 |

1.12 |

.50 |

.56 |

.66 |

|

(decad.) |

.50 |

.64 |

.59 |

.47 |

.30 |

.41 |

.21 |

.42 |

.25 |

x |

x |

.43 |

.53 |

.66 |

.49 |

.14 |

.14 |

.19 |

|

1700s (ann.) |

.84 |

.62 |

1.05 |

1.36 |

.42 |

.31 |

.25 |

.53 |

.23 |

1.81 |

.98 |

.74 |

1.85 |

2.01 |

1.11 |

.49 |

.53 |

.65 |

|

(decad.) |

.96 |

.27 |

.87 |

.54 |

.21 |

.26 |

.14 |

.20 |

.34 |

.77 |

.25 |

.43 |

.40 |

.70 |

.55 |

.22 |

.18 |

.28 |

|

1800s (ann.) |

.97 |

.78 |

1.25 |

1.21 |

.52 |

.35 |

.29 |

.54 |

.21 |

1.89 |

1.18 |

.84 |

1.64 |

1.67 |

1.10 |

.45 |

.53 |

.75 |

|

(decad.) |

1.07 |

.55 |

.62 |

.77 |

.26 |

.29 |

.21 |

.23 |

.26 |

.31 |

.50 |

.35 |

.95 |

.96 |

.29 |

.17 |

.18 |

.38 |

|

1900s (ann.) |

1.51 |

1.02 |

1.38 |

1.67 |

.51 |

.33 |

.29 |

.53 |

.27 |

1.67 |

1.14 |

.71 |

1.78 |

1.24 |

1.28 |

.45 |

.62 |

.76 |

|

(decad.) |

.52 |

.49 |

.72 |

.44 |

.30 |

.33 |

.23 |

.23 |

.27 |

.53 |

.35 |

.27 |

.68 |

1.10 |

.59 |

.21 |

.22 |

0.33 |

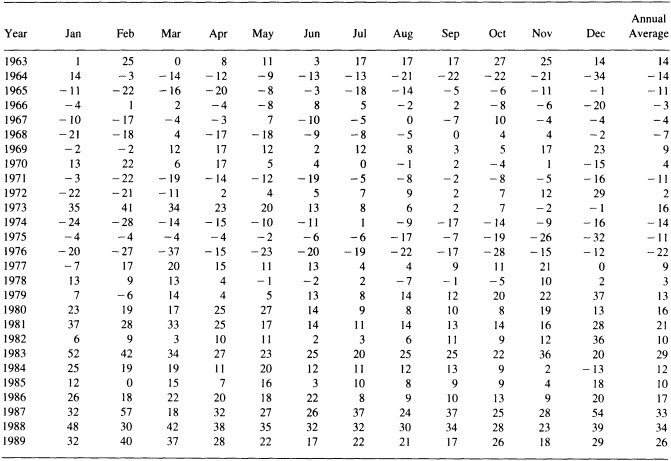

TABLE 3 Long-term Means of Interannual and Interdecadal Variability for 18 Temperature Indices (index sources identified in Table 1)

|

Index |

||||||||||||||||||

|

|

1 |

2 |

3 |

4 |

5 |

6 |

7 |

8 |

9 |

10 |

11 |

12 |

13 |

14 |

15 |

16 |

17 |

18 |

|

LTM (ann) |

.95 |

.75 |

1.24 |

1.33 |

.47 |

.33 |

.29 |

.56 |

.23 |

1.86 |

1.09 |

.71 |

1.91 |

1.74 |

1.15 |

.46 |

.66 |

.68 |

|

LTM (dec) |

.75 |

.55 |

.66 |

.62 |

.27 |

.33 |

.20 |

.29 |

.28 |

.59 |

.41 |

.33 |

.67 |

.82 |

.49 |

.20 |

.19 |

.27 |

|

Ratio (%) |

79 |

74 |

54 |

46 |

56 |

100 |

69 |

51 |

122 |

32 |

38 |

47 |

35 |

47 |

42 |

44 |

29 |

39 |

northern Urals set) interval rather than annual periods is considered.

The two summer temperature reconstructions for South America (Figure 8) are of widely different lengths. A comparison of the overlap period indicates that the Boninsegna series (see Table 1) exhibits a warming trend over the period of record. By contrast, the longer Villalba series exhibits some low-frequency variations but little if any trend over both the full data period and that of the Boninsegna period, beginning about the mid-fifteenth century.

FIGURE 8

Decadal mean values of a reconstruction of summer temperatures for Tasmania, Australia (shown as actual temperatures) and two separate reconstructions for regions in Patagonia, Argentina (shown as anomalies). Units in °C.

Because of the longer record available, a variability index (described in the section on instrumental data above) was computed using the decadal averages, instead of the annual values. Our purpose here is to examine interdecadal temperature variability, with records spanning several centuries, noting that climate-sensitive tree-ring records are particularly useful for studying decadal-scale climatic fluctuations (Fritts, 1991). The changes in reconstructed climatic variability have been summarized on a century-by-century basis in Table 2. For comparison, we also show in Table 3 the mean values of interannual and interdecadal variability for the full-length record. The variability index was calculated by applying equation (1) to both the annual and decadal-average reconstructed series. We fail to see any consistent trends in interdecadal variability associated with these tree-ring temperature reconstructions (see indices 5-9, 12-13, and 16-18 in Table 2). Interdecadal variability is typically about half the interannual values. This implies that substantial low-frequency variance is present in the paleotemperature record, so that recent high values of reconstructed decadal-mean summer temperature may yet represent an oscillation within the range of natural variability.
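The statement that interdecadal variability is typically about half the interannual value can be checked directly against the long-term means in Table 3. The short sketch below transcribes the two LTM rows of that table and recomputes the ratio for each index; the variable names are ours, and because the tabulated means are rounded to two decimals, the recomputed percentages can differ by a point or so from the printed Ratio row.

```python
from statistics import median

# Long-term means from Table 3, indices 1 through 18 (values as printed, rounded)
ltm_ann = [0.95, 0.75, 1.24, 1.33, 0.47, 0.33, 0.29, 0.56, 0.23,
           1.86, 1.09, 0.71, 1.91, 1.74, 1.15, 0.46, 0.66, 0.68]
ltm_dec = [0.75, 0.55, 0.66, 0.62, 0.27, 0.33, 0.20, 0.29, 0.28,
           0.59, 0.41, 0.33, 0.67, 0.82, 0.49, 0.20, 0.19, 0.27]

# Interdecadal variability as a percentage of interannual variability
ratios = [100.0 * dec / ann for ann, dec in zip(ltm_ann, ltm_dec)]

# A typical (median) value across all 18 indices
typical_ratio = median(ratios)

# The same summary restricted to the tree-ring indices cited in the text
tree_ring_indices = [5, 6, 7, 8, 9, 12, 13, 16, 17, 18]
tree_ring_ratios = [ratios[i - 1] for i in tree_ring_indices]
```

The median across all indices falls a little below 50 percent, while individual indices range from under 30 percent to over 100 percent, so "about half" describes the center of a fairly wide spread.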

Oxygen Isotopes

The oxygen-isotope record has not been directly calibrated against temperature, as has been done for the tree-ring record. However, it is well established that the oxygen-isotope ratio (δ¹⁸O) is dependent primarily on the temperature of formation of the precipitation, with increasingly negative δ¹⁸O ratios associated with decreasing temperature. The problems lie in the interpretation of a local ice-core record, not only with respect to local temperature variations, but even in terms of the larger-scale temperature patterns. Several factors control the oxygen-isotope composition of the snow that falls on a given ice body, and temperature is only one of them. The sensitivity of these records to annual temperature variations has been discussed in a number of papers, including the source articles referred to in Table 1. Whether or not these oxygen-isotope records accurately represent a "local" temperature record, we consider them to be sensitive indicators of prevailing climatic conditions within a suitably broad source region. Since our stated purpose is to compare recent changes in a suite of climate-sensitive paleoindicators, we have included them in our comparisons.

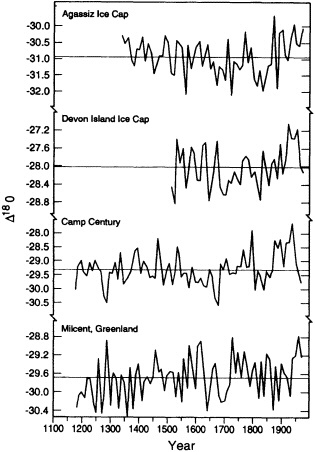

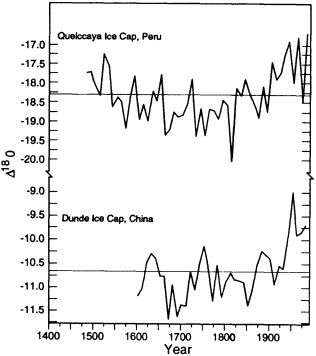

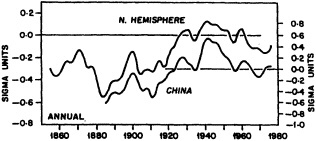

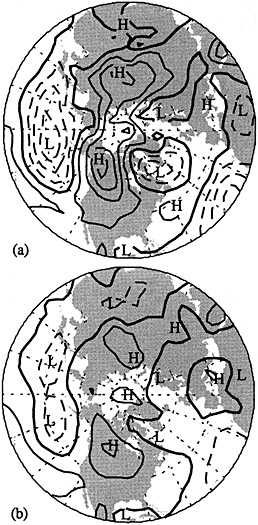

Figures 9 and 10 illustrate the changes in decadal averages of the oxygen-isotope ratio of glacier ice cores for different locations in northern Canada and Greenland, and for two low-latitude, high-elevation glaciers: the Quelccaya ice cap in the Peruvian Andes and the Dunde ice cap in the Tibetan Plateau region of China (see Table 1 for sources). The polar ice d18O record, which ends before 1970, shows relatively less warming (trend toward smaller negative values of d18O) than do the tropical ice cores. Furthermore, the warmest decades in the tropical record are the most recent ones. The relation of this tropical d18O signal to air-temperature changes in the general location of these records may be partially evaluated with independent observations. It is known that significant melting took place at the Quelccaya site during the 1980s (Thompson, personal communication). Increases in tropospheric temperatures and water vapor obtained with tropical radiosondes for the past couple of decades have been documented by Flohn et al. (1992), although the drift toward less negative d18O ratios appears to have begun earlier in the ice-core record than in the instrumental climate record.

FIGURE 9

Decadal-mean values of d18O ratios from different polar-region ice cores. (See text for details.)

Changes in interdecadal variability are summarized in Table 2 (see indices 1-4 and 14-15). There is a suggestion that decade-to-decade changes in d18O ratios have increased in amplitude at most of these sites. In particular, the calculated interdecadal d18O variability at Quelccaya has increased sharply in the last 100 years. This increase is evident even though the greater number of samples in the upper part of the record permitted better averages to be created for the recent decades than for earlier periods. A similar signal is apparent on the Dunde ice cap as well. These changes, however, may reflect a more localized temperature signal, changes in moisture sources for snowfall on the ice caps, or some other unknown cause unrelated to climate. It should also be noted that these two sites are located at very high elevations, and thus differ from most of the other paleoclimate indices, which are derived from locations at much lower elevations.

FIGURE 10

The same as Figure 9, but for two tropical ice cores (Quelccaya ice cap, Peru, and Dunde ice cap, China).

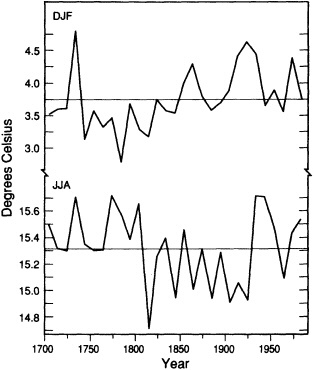

Central England Temperature

Decadal means of winter (December-to-January) and summer (June-to-August) mean temperature for central England (Manley, 1974, and subsequent updates) from 1700 to present are shown in Figure 11. The recent decades do not appear to be exceptional in comparison with the total record. The variability curves (not shown) indicate little overall change, with a tendency for the summer and winter seasons to have opposite changes in interdecadal variability (when one increases, the other tends to decrease). Jones and Bradley (1992, their Figure 13.1) have compared the central England temperature record with 12 other long-period station records in Eurasia and North America. Although there are obvious differences among the various climatic series, the twentieth century does not stand out as being a period of higher decadal-scale temperature variability. Most records (although not all) do exhibit the general warming trend of the past two centuries that is associated with the rebound from the Little Ice Age (see Bradley and Jones, 1992).

FIGURE 11

Decadal-mean values of central England winter and summer temperature (in °C).

SUMMARY AND CONCLUSIONS

The purpose of this study was to evaluate whether the climate in recent decades was "changing," both with regard to its mean value as well as with respect to changes in the variance on different time scales. Our findings are mostly mixed, in that the answer depends on the spatial scales selected for analysis.

For the period encompassing the last 100 years, changes in surface air temperature have been greatest and most positive in the period 1970 to 1990. Interannual variability at the largest spatial scales has also increased, although with seasonal differences. By and large, however, increases in variability at these spatial scales are not unique. When one considers smaller regions (in particular different portions of the contiguous United States) the picture changes, so that the 1980s do not necessarily show up as being a unique period in the instrumental climate history of the country.

In order to have a longer perspective on climatic variations, we utilized data from several paleoclimatic reconstructions of growing-season temperature based on tree-ring records and on d18O ratios extracted from different ice-core records. Data from the latter were also taken as a measure of the prevailing air temperature. Annual values were averaged by calendar decades in order to focus on lower-frequency climatic variability. With the exception of the tropical ice cores, the recent decades were not generally unique, in terms of either the average values or increased variability. We note that tropical sea surface temperatures have been generally above the long-term mean since the mid-1970s. It seems plausible that this recent warmth would be reflected in less negative (less depleted) d18O ratios on the Quelccaya and Dunde ice caps, although more positive d18O ratios were already evident by the 1950s. It should be emphasized again that the exact relationship between actual past climate and these two high-elevation tropical indices is not fully known. Certainly, the period encompassed by the Little Ice Age is reflected in relatively low d18O values, particularly in the Quelccaya record. The development of additional independent ice-core sequences from the Cordillera Blanca in Peru and from extreme western China by L. G. Thompson and colleagues should help in the interpretation of the nature of the climate signals present in these records (e.g., Thompson et al., 1995).
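The calendar-decade averaging mentioned above can be sketched in a few lines of Python. This is only an illustration on synthetic numbers: the function names are hypothetical, and the spread measure (a population standard deviation of the decadal means) is a stand-in chosen for simplicity, not the variability index of equation (1).

```python
from collections import defaultdict
from statistics import mean, pstdev


def decadal_means(years, values):
    """Average annual values by calendar decade (1900-1909 is keyed as 1900)."""
    buckets = defaultdict(list)
    for year, value in zip(years, values):
        buckets[(year // 10) * 10].append(value)
    return {decade: mean(vals) for decade, vals in sorted(buckets.items())}


def spread(series):
    """Population standard deviation; a stand-in measure, NOT equation (1)."""
    return pstdev(series)


# Synthetic annual series (not real data): three decades with a stepwise rise.
years = list(range(1900, 1930))
values = [0.0, 1.0] * 5 + [1.0, 2.0] * 5 + [2.0, 3.0] * 5

dec = decadal_means(years, values)
interannual = spread(values)               # spread of the annual values
interdecadal = spread(list(dec.values()))  # spread of the decadal means
```

Applying the same spread measure to the annual series and to the decadal means gives two numbers that can be compared, in the spirit of the interannual and interdecadal comparison made in the text.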

Do we have an answer to the question posed by the title of this study, namely, whether the climate of the twentieth century is different from that of previous centuries? Obviously, the response is not an unequivocal "yes" or "no." As in most instances when one deals with climatic time series, the answer is "It depends." There appear to be differences in regional responses, and they are evident in both the proxy and the instrumental climate records. Insofar as the United States is concerned, the most unusual decade of the last 100 years may have been the 1930s, although the recent period is probably a close second. We can only say that, for most areas of the coterminous United States, the climate of the most recent decades cannot be considered unique, even in the context of the last century.

Commentary on the Paper of Diaz and Bradley

EUGENE M. RASMUSSON

University of Maryland

When Drs. Diaz and Bradley present a paper, it always provides an enormous amount of information. The central question, in terms of a synthesis of the wealth of information provided in this paper, is the question posed by the title: "How different is the climate of the twentieth century"—more specifically the past few decades—"from that of earlier centuries?" In addressing this question the authors examined temperature, decade-to-decade temperature changes, and changes in interannual and interdecadal variability on various spatial scales.

The problem in any analysis of this type, of course, is that of inadequate data distribution in time and space. Thus, the analysis was largely limited to the land areas of the Northern Hemisphere, where both instrumental and proxy data are most plentiful.

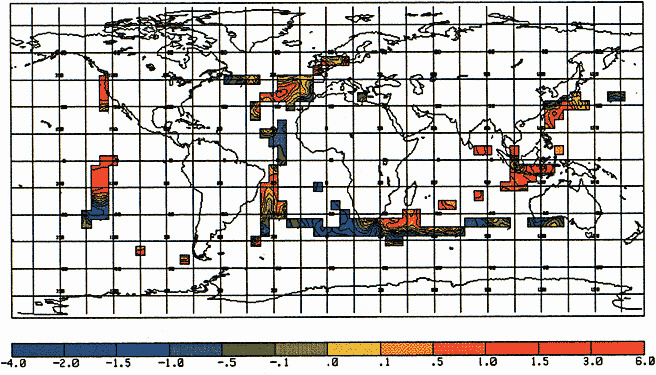

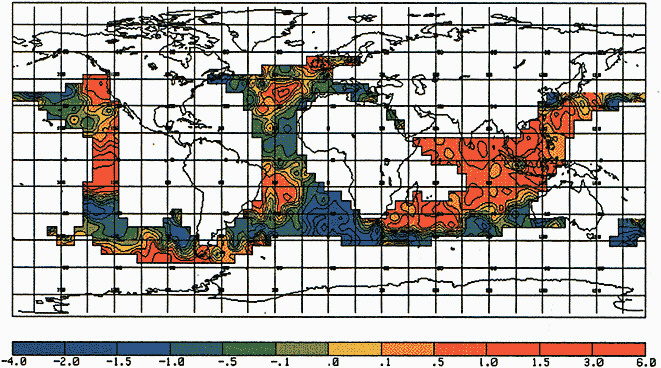

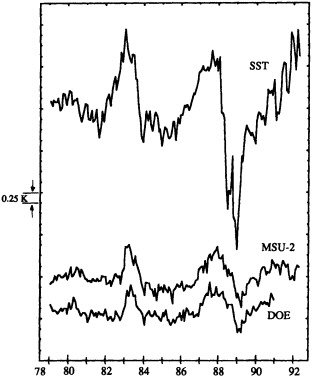

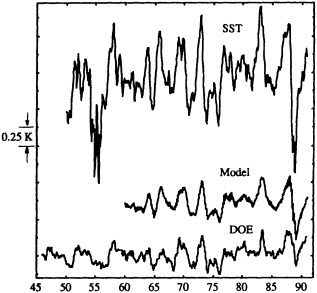

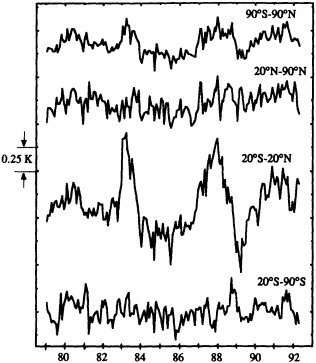

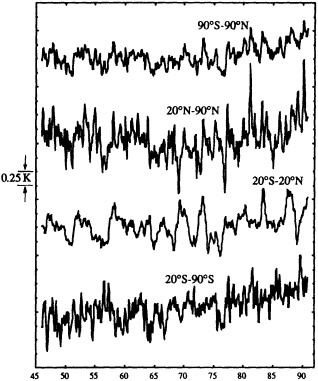

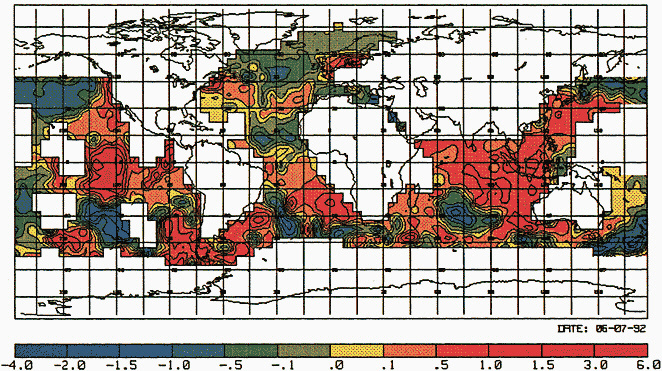

It may be well to note that changes in the spatial distribution of observations from decade to decade may affect both the computed area average and the variability. Figure 10 in my paper, which appears later, illustrates the effects of using different data distributions in synthesizing global averages of SST, where the problem is probably more severe than it is over the land areas.

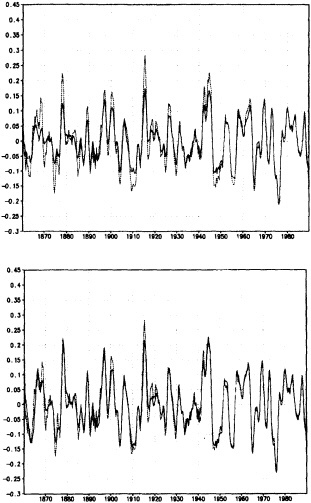

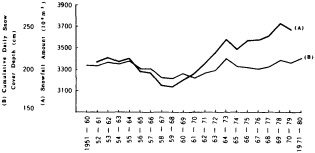

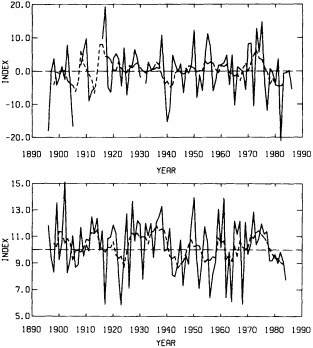

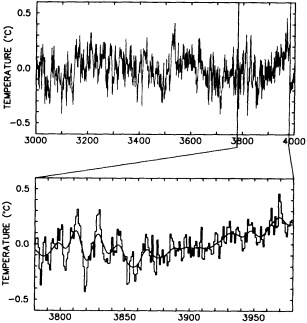

Figure 1 in this commentary also shows estimates of the variability in SST obtained from two different analysis schemes. Two estimates of variability are obtained. One is from the optimal averaging (OA) analysis technique of Vinnikov et al., and I think Dr. Groisman will tell us about that one. Another is derived from the simple box average that has been used—for example, by the U.K. Meteorological Office—in deriving SST area averages. The curves in Figure 10 of my paper show the low-frequency variations, i.e., periods longer than 30 years. There are some differences, but they are not too great, about 0.15 degrees around 1910 and 1920, with the OA staying a little bit closer to the mean for the entire period. Now, if we remove the low-frequency variations so that only the variations on time scales less than 30 years remain (Figure 1 in this commentary), and look at that difference between the two analysis schemes, we see that the level of higher-frequency variability may also depend on the first guess used. The box method and the OA with the previous month as the first guess are similar, but the OA with climatology as a first guess shows less variability during the earlier decades of the series. Thus, relative to the box method, the OA shows an increasing variability with time. This comparison illustrates that one

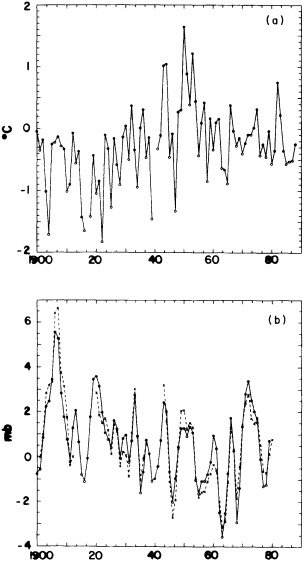

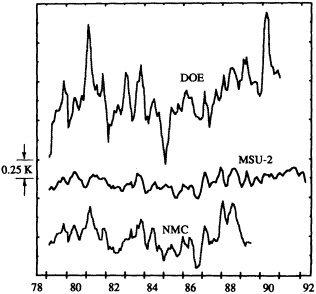

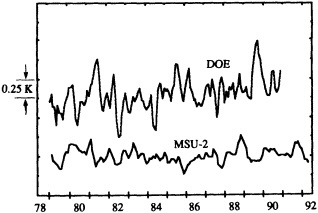

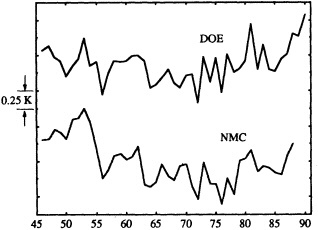

FIGURE 1

Time series of high-frequency variability (periods less than 30 years) of globally averaged sea-surface temperature. Top panel: Dashed curve is obtained by box method (Bottomley et al., 1990). Solid curve is obtained from optimal averaging using climatology as a first guess (Vinnikov et al., 1990). Bottom panel: Curves derived as above except that optimal averaging is obtained by using previous month as the first guess.

must be a bit careful in deriving conclusions about changes in variability from area averages. The estimates of variability may be sensitive to changes in data distribution and the particular analysis scheme used.
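The separation into low-frequency and high-frequency variations discussed above can be illustrated with a very simple filter. The sketch below uses a centered running mean as the low-pass step and takes the residual as the high-frequency part. The filter actually used for the figures is not stated here, so the running mean, the 31-point window, and the function names are all assumptions; a running mean is also not a sharp 30-year cutoff.

```python
def running_mean(series, window):
    """Centered running mean; near the ends the window is truncated."""
    half = window // 2
    smoothed = []
    for i in range(len(series)):
        lo = max(0, i - half)
        hi = min(len(series), i + half + 1)
        smoothed.append(sum(series[lo:hi]) / (hi - lo))
    return smoothed


def split_frequencies(series, window=31):
    """Return (low, high): the smoothed series and the residual about it."""
    low = running_mean(series, window)
    high = [x - l for x, l in zip(series, low)]
    return low, high


# Synthetic annual series (not real data): slow rise plus a 2-year wiggle.
series = [0.01 * t + (0.2 if t % 2 else -0.2) for t in range(100)]
low, high = split_frequencies(series)
```

Running two differently analyzed area-average series through the same split and comparing their residuals is one way to see how much the apparent high-frequency variability depends on the analysis scheme.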

Data limitations become extremely severe when one attempts to quantify natural variability on decade-to-century time scales from proxy records. What information can we really derive regarding the patterns of large-scale variability from widely scattered proxy measurements? I think that further effort is required in two areas to maximize and better quantify the information content of the entire climate data base, both instrumental and proxy. First, we need more studies along the lines of data system tests. More specifically, using the more dense data distribution of recent decades, we should determine the loss of information as the distribution of observations is decreased. This will help establish confidence limits for estimates of large-scale averages based on the sparse distribution of observations and proxy series obtained during earlier decades and centuries.
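A data system test of the kind proposed above can be sketched as a thinning experiment: start from a dense network, repeatedly draw smaller random subsets, and measure how far the subset average departs from the full-network average. The example below is a minimal sketch on synthetic data. The unweighted station average, the random subsampling, and all names are assumptions for illustration; a real test would use observed series, area weighting, and realistic historical station distributions.

```python
import random
from statistics import mean


def network_average(stations):
    """Unweighted average across stations at each time step."""
    return [mean(step) for step in zip(*stations)]


def rms_difference(a, b):
    """Root-mean-square difference between two equal-length series."""
    return (sum((x - y) ** 2 for x, y in zip(a, b)) / len(a)) ** 0.5


def thinning_test(stations, sizes, trials=200, seed=0):
    """Mean RMS error of subset averages relative to the full network."""
    rng = random.Random(seed)
    full = network_average(stations)
    result = {}
    for n in sizes:
        errors = []
        for _ in range(trials):
            subset = rng.sample(stations, n)
            errors.append(rms_difference(network_average(subset), full))
        result[n] = mean(errors)
    return result


# Synthetic network (not real data): a common signal plus station noise.
gen = random.Random(1)
signal = [gen.gauss(0.0, 1.0) for _ in range(50)]
stations = [[s + gen.gauss(0.0, 1.0) for s in signal] for _ in range(40)]

errors = thinning_test(stations, sizes=[40, 20, 5])
```

The growth of the error as the network is thinned gives a rough confidence limit for averages built from the sparser coverage of earlier decades.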

Second, we need to place more emphasis on integration of information from various proxy sources, with the goal of obtaining a better picture of the pattern of global variability during earlier centuries. This is admittedly a formidable task when one is dealing with decade-to-century time scales. I do not believe that the concept of an overall integration of information on decade-to-century variations has yet taken root in the proxy community, but I think it should be strongly encouraged as a long-term goal.

Discussion

GROISMAN: Two quick comments, one for Gene and one for Henry. Gene, I just wanted to note that the SST variability does not reflect conditions over the Arctic. There is about a 25 percent difference in amplitude between SST and marine air temperature over the open ocean, the latter being higher, so there may be other reasons for the difference you cite. Henry, the problem with tree rings is that there are very few 1000-year-old trees, so in a time series you can't resolve the same mean variance.

DIAZ: I used only air temperature over land, no SSTs. Also, I started in 1891, when we had fairly good coverage. The changes in data distribution don't have much influence on the large-scale averages. As for the tree rings, we did try to compensate by looking at decadal means and interdecadal variability, and I don't think the middle section has much of a problem.

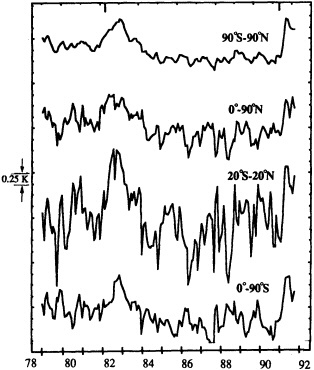

KEELING: I have a figure that is based on the Jones-Wigley temperature record, with a slightly different smoothing. Some of the early variability and the apparent biennial signal are probably the result of sparse data; beginning in 1951 the record is fairly homogeneous. You can see the 1958, 1961, 1963, 1982 El Niños. I'd like to know whether there is some difference in the quantity of data, or in its processing now that satellite data and ground data are being mixed, that has changed data variability in the last decade.

TRENBERTH: Perhaps when you fit the spline you are taking out more of the variance at the end.

GHIL: Henry, I was puzzled by the apparently much higher interdecadal variability in the second of your first two tree-ring figures.

JONES: It's partly due to the indexing procedure that there is more low-frequency variance in the top curve than the bottom one, though some of it could be real.

KARL: It's important to remember that there are many different ways to classify variability. I suspect that I could show more or less of it just by defining it differently. On this particular figure, I think we need to be aware that one is for a longer season than the other, and the shorter period of a time series will give you higher variability.

DIAZ: Another point is that getting longer time series from proxy records may not improve our understanding of climate change mechanisms.