Introduction

Climate studies are motivated by the curiosity we all have about the weather, and our desire to predict it in order to make economic projections. The general public tends to focus on local weather—and thus generally on weather over land rather than over the ocean—as well as on fairly short-scale climate variations, several years rather than decades to millennia. For these reasons it is not always appreciated outside the scientific community that the ocean is an essential component of the coupled climate system. Understanding and modeling the ocean and its coupling to the atmosphere, land, and biosphere is vital. In the realm of fisheries, climate variations in the ocean itself can be seen to have an economic impact; recognizing this, coastal states have supported oceanography.

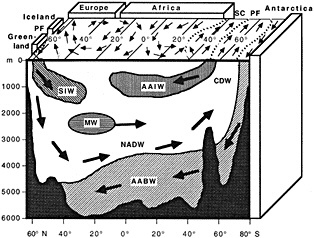

Approaches to studying the ocean's role in climate can be divided into two types: understanding and modeling the ocean as part of the fully coupled climate system, and observing, quantifying, and modeling the dynamics of the ocean itself. Clearly there is great overlap, and a full grasp of the latter is necessary for progress in the former. The ocean's most obvious and direct importance to land-based climate variations lies in the fact that it sets the surface temperature that forces the atmosphere over three-quarters of the planet; distribution of sea ice is important also, since it affects the planetary albedo and the amount of ocean/ atmosphere heat exchange. Predicting the surface temperature of the ocean and the extent of sea ice is not a simple exercise, however: It involves atmospheric forcing, lateral circulation, and vertical overturning. The last is affected by the salinity distribution, and salinity depends on factors that are similar to those influencing the ocean temperature.

Because of its great thermal inertia relative to that of the atmosphere, the ocean has a significant effect on climate. The two most commonly mentioned climate phenomena in which the ocean's role is important are El Niño, which is purely natural variability, and global warming, which is partly anthropogenic. Much progress has been made in observing and modeling both the oceanic and the atmospheric components of El Niño; other obvious climate effects are also strongly tied to the ocean, such as the higher temperatures in northern Europe relative to those of northeastern Canada. The papers in this volume discuss a decadal climate oscillation in the North Atlantic involving both the atmosphere and ocean, and a longer-period oscillation in the North Pacific, neither of which is firmly tied to El Niño. Our understanding of the ocean's role in much longer-scale variations, such as glaciations, has also improved greatly through the use of proxy records and coupled ocean-atmosphere modeling, both of which are represented in this volume. Thus our vocabulary of climate variations, even if limited to those we can quantify today, is already much broader than those that have drawn the most public attention.

In order to dissect the relative roles of the ocean and atmosphere in climate, it is necessary to both observe and model. Modeling is particularly important because of the relative lack of long-term observations. The merchant marine data set is the most comprehensive in time and space, since ship observations have been reported and archived for many years. This data set is limited to the sea surface, however, and is good only in regions of high merchant marine activity. The time series of water-column ocean

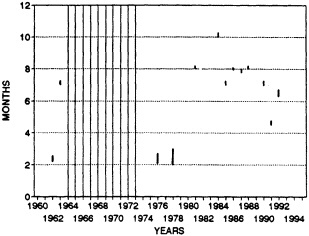

observations reported in this volume are representative of what is available globally; at best they are repeated measurements of temperature and salinity through the water column, collected at regular intervals and at the same location over decades. Such time series are very restricted spatially; they are usually localized to the coastal waters of a state or country that depends on fisheries. The time series that are more widely spatially distributed, such as those from the defunct weather ships, tend to be single points separated by a long distance from the next station. The upper water column has been better measured since the 1970s using ships of opportunity, but only for temperature and not salinity. (Relatively little from this extensive data set is reported in this volume.) Proxy records from sediment cores relate primarily to much longer time scales than the decade-to-century ones that are the focus of this volume, and consist of very few data points. Thus we are operating observationally from an extremely limited data set. In the last few years, attention has been turned to extracting as much information as possible from the data we do have, and progress has been remarkable under the circumstances. Many fine examples of this work are presented in this chapter.

Ocean modeling relevant to climate has progressed greatly in the last decade, due to the growth of computing power, the availability of a community ocean model, and an increased focus on these problems by a small community of modelers. Enormous advances have been made in understanding the El Niño problem in the tropical Pacific. Several modeling-related papers in this volume present new insights into the role of the lateral and overturning modes of ocean circulation in producing climate oscillations.

The current public concern about global warming and other issues related to climate and its prediction has resulted in international plans for greatly enhanced ocean observations in space and time. Major efforts include the placement of monitoring equipment in the tropical Pacific for El Niño prediction, a global ocean-circulation observational experiment designed to enhance our knowledge of the circulation as it exists today and provide better data for modeling, and establishment of global monitoring. Global monitoring should be focused on time series of ocean properties that affect climate and/or reflect climate change; these include temperature and velocity, and, as we are recognizing more and more, salinity. For this monitoring to be effective, measurements must be made in the upper ocean worldwide; at locations likely to be indicative of climate, they should be made throughout the relevant portion of the water column. Perhaps even more important than location, however, will be some assurance that a time series will be maintained indefinitely. If these two conditions can be met, monitoring should be begun as soon as possible. It is therefore critical that as much knowledge as possible be synthesized from currently available data and models, to ensure that the ocean observing systems will be both efficient and comprehensive. The papers in this chapter, which reflect the increasing attention being paid to climate issues by the oceanographic research community, represent a large advance in our knowledge of ocean variability on decade-to-century time scales.

Ocean Observations

MELINDA M. HALL

INTRODUCTION

Natural variability in the ocean has periods ranging from seconds to millennia. Those phenomena that have the most obvious impact on human affairs tend to recur periodically as well: daily, such as the tides; sporadically, such as storm surges or tsunamis; and seasonally, such as the simple warming of coastal waters in summer. Most of these events are predictable to varying degrees. It is now recognized that occurrences of the El Niño-Southern Oscillation (ENSO), a phenomenon of global scale that has tremendous socioeconomic consequences, are quasi-periodic (over a term of several years) and are therefore within the realm of predictability as well. Our understanding of these examples of natural variability, and hence our ability to predict them, are derived from our past experience with them—in other words, repeated observations of the same event—as well as from theoretical models based on ocean physics. Identifying the effects of anthropogenically induced changes in the ocean is a subtle problem, for there are few precedents against which models can be tested. But a prerequisite for prediction in any case is a knowledge of the natural variability inherent in the system, and an understanding of the physics that drives that variability.

The study of natural variability at periods of decades to centuries presents particular challenges. A primary difficulty derives from the fact that the observed variability that we surmise to be associated with climate change is generally smaller in magnitude than variability due to other causes, and is sometimes at the limits of instrumental accuracy. Long time series are therefore required to deconvolve its signal from the much more energetic influences of seasonal and other types of variability. Long in situ records are inherently difficult to obtain, however, due to the hostile nature of the very environment we are trying to observe. Indeed, because oceanographic data will never be quite complete enough to ''solve" the problem, there is a natural interdependency between the observations and modeling efforts. Models can provide globally complete fields, but data will always be required for their initialization, calibration, and validation.

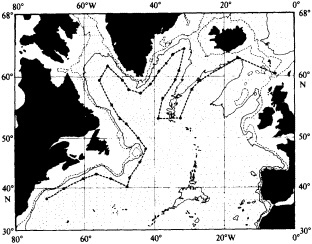

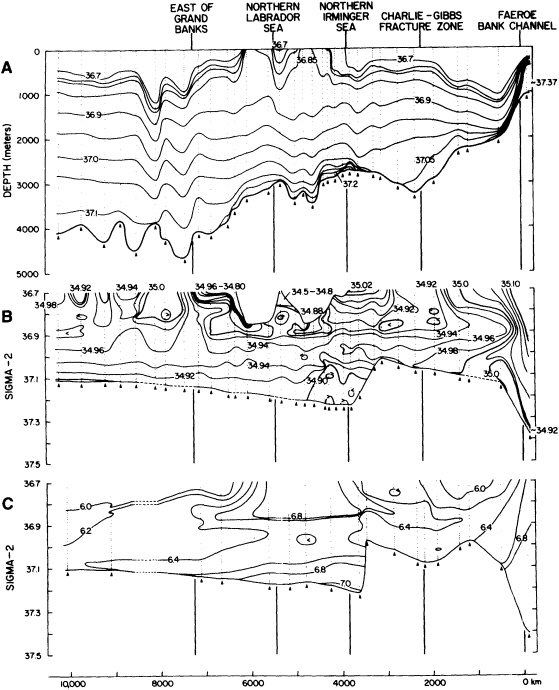

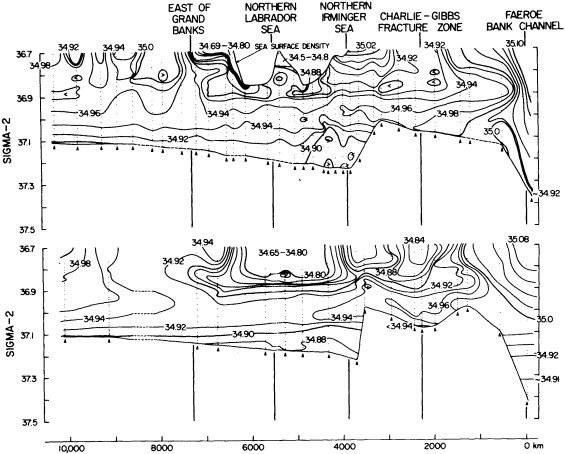

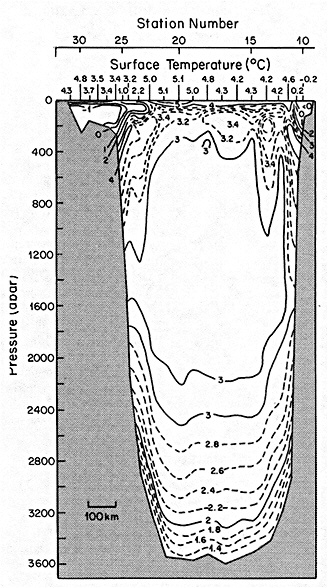

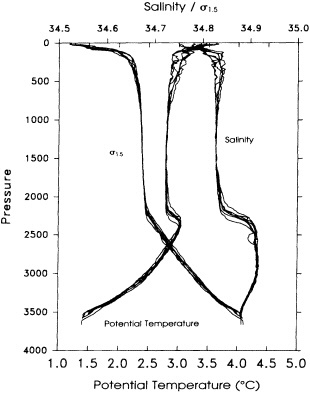

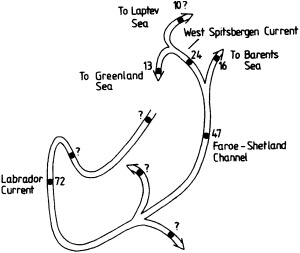

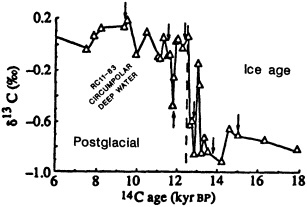

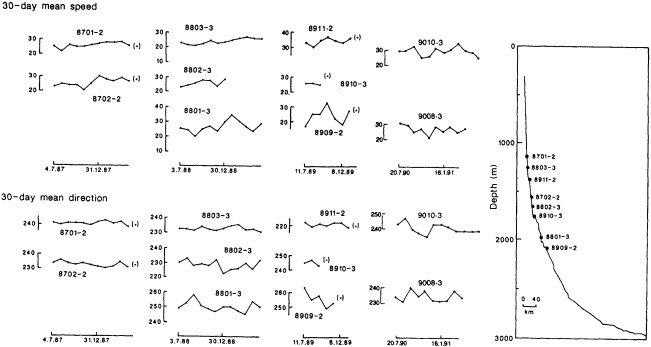

On the other hand, regarding the observational effort, it is important to note that oceanographic variability tied to atmospheric forcing may be much stronger in isolated areas. For example, it is now recognized that the production of deep water in the northern North Atlantic is intimately related to the global climate, and thus changes in its production are either the result of, or harbingers of, more widely spread climate changes. Although it is almost impossible to directly measure the amounts of water convectively overturned each year, much qualitative and some quantitative information regarding production in previous years can be inferred from an examination of the variability of water properties at locations downstream from the source waters, in the deep western boundary current that carries these waters to the mid-ocean. Swift (1995) clearly outlines these arguments; focusing particularly on the deep-water formation in the northern North Atlantic, he provides a good introduction to how one documents, studies, and interprets decadal changes.

Before returning to these ideas in more detail, the reader might find a brief history of ocean observations to be useful.

HISTORY OF OCEAN OBSERVATIONS

Quantitatively useful ocean observations date back only to about the turn of the century, which brought several important advances to the field of oceanography, and might be said to mark the start of a "modern" era. Around this time, empirical formulas were developed relating salinity, chlorinity, and density. These allowed precise salinity measurements to be made, since samples could be titrated to determine clorinity. Coincidentally, although the mathematics governing fluid dynamics had been studied for centuries, general physical theories of ocean circulation also developed with great rapidity beginning around the turn of the century. Many of these advances can be attributed to Scandinavian researchers (for a more detailed history, see the Introduction in Sverdrup et al., 1942).

The oceanographic expedition of the German research vessel Meteor, in 1925-1927, was led by Georg Wüst. It is notable for (at least) two contributions: First, Wüst's careful attention to accuracy and detail rendered the Meteor data useful as a baseline for comparison with later measurements of temperature, salinity, and dissolved oxygen. Second, Wüst conceived of and popularized the "core" method for determining the circulation of water masses. This method is based on the assumption that water parcels acquire their physical characteristics when they are in contact with the atmosphere at the sea surface, and that they retain these characteristics as they sink and flow into the ocean. Thus, Wüst concluded, the large-scale circulation in the ocean is reflected in the patterns of the temperature, salinity, and oxygen distributions. This concept is of fundamental importance to observations of deep-water production and circulation, particularly in recent decades when we have been able to measure many chemical constituents of anthropogenic origin (Schlosser and Smethie, 1995). By the middle of this century, temperature was being determined accurately to within about ±0.02°C and salinity to within about 0.02 permil. The next baseline for observational oceanography was the International Geophysical Year, carried out in the mid- to late 1950s. This coordinated series of expeditions sought to map the physical properties of the entire Atlantic Ocean on a somewhat regular grid, and the resulting data provide the second "snapshot," three decades after Wüst's work, of the North Atlantic temperature and salinity structure. (Fuglister's 1960 atlas presents these data.)

Clearly, because of the limited accuracy of most measurements before 1900, there exist relatively few examples of long time series of measurements useful to the study of climate change. Roughly century-long global or regional records derived from operational measurements of such quantities as sea-surface temperature, sea-ice cover and extent, and sea-level measurements have been accessible to observers much longer than quantitatively useful deep ocean measurements. Decades-long time series of deep-ocean properties do exist, but are generally either mid-ocean and very isolated, or extensive spatially but limited to coastal waters. Fisheries provide strong economic motivation for such programs as the 40-year time series from the CalCOFI hydrographic cruises off the coast of California, or some of the repeated hydrographic data sets maintained for years off the coast of Japan. For several decades, a number of mid-ocean stations were occupied regularly by the ocean weather ships, for the purpose of providing marine weather forecasts.

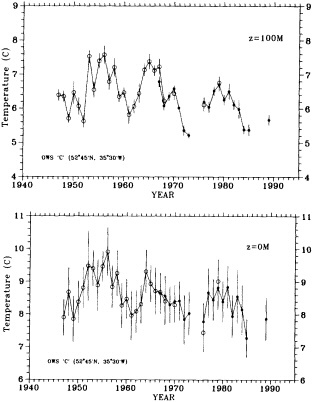

Although long time series collected explicitly for climate studies or other research purposes are virtually nonexistent, an outstanding exception is the time series of temperature and salinity from the Panulirus station, located in deep water just off the coast of Bermuda, which already has contributed to studies looking at long-term variability in properties of the North Atlantic. Finally, there exists a vast archive of expendable bathythermograph (XBT) data collected from merchant ships, which is global in extent but has remarkably dense coverage in the North Pacific, where the NORPAX program is in its third decade. Although the XBT measures temperature as a function of depth to only 400 or 750 m (sometimes 1500 m), prediction of decade-to-century-scale ocean variability will require emphasis on such upper-ocean monitoring.

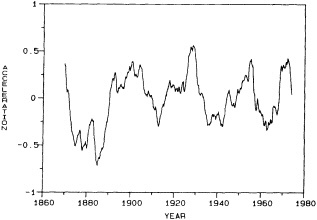

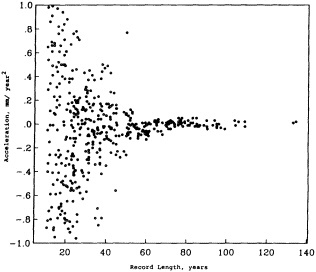

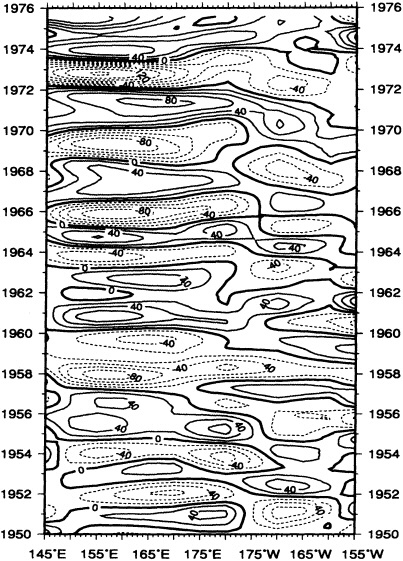

Parker et al. (1995) describe how useful baseline data sets can be constructed from historical records to yield a more comprehensive record of, in this case, monthly sea-ice and sea surface temperature fields dating back to January 1871. Since the resulting time series may exceed a century in length, it can be used for forcing and testing numerical models designed to examine variability at decade-to-century time scales. Mysak et al. (1990), analyzing sea-ice concentration and ice-limit data collected over almost 90 years, have found decadal-scale fluctuations in sea-ice extents, and have related them to other processes in the Arctic in a "negative feedback loop." Mysak (1995) reviews the evidence for such self-sustained climatic oscillations, presents more recent evidence strengthening these conclusions, and suggests links between the Arctic cycle and interdecadal variability at lower latitudes. That some of these observed interdecadal fluctuations are regular implies that they may in fact be predictable. Douglas (1995) reaches somewhat more negative conclusions in analyzing two sets of tide-gauge records (80 years and 141 years long): He finds no statistical evidence for acceleration of global sea-level rise, which is predicted to accompany global warming. A major difficulty is that the interdecadal signal overwhelms any longer-term trend. However, an understanding of the physics involved in the sea-level rise would allow this interdecadal signal to be removed from tide-gauge records, and would

reduce the record length required for detecting acceleration of the rise.

These analyses illustrate both the possibilities and the difficulties involved in dealing with data collected by operational measurements. These should be borne in mind in planning future observing systems, for it is clearly on such operational measurements that we will depend if we are to acquire sufficient data coverage to monitor climate variability.

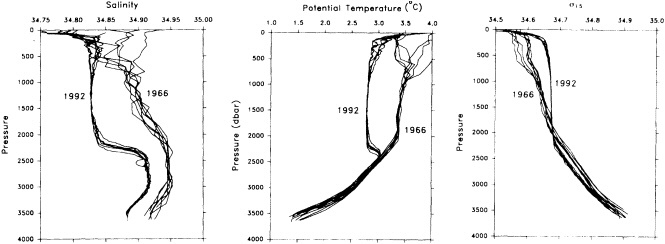

INTO THE PRESENT

The explosion of the electronics industry and the advent of the Space Age with its technological advances have had obvious implications for oceanographic observations. The development of electronic CTD (conductivity-temperature-depth) instruments now allows virtually continuous vertical sampling of the water column, whereas individual bottle samples are typically spaced 10-25 m apart in shallow waters and may be separated by several hundred meters at depth. Temperature is regularly measured to millidegree precision with an accuracy of ±0.002°C, and salinity is measured to a precision of 0.001 permil, with typical accuracies on the order of ± 0.002%. These accuracies are capable of revealing local and regional changes of water-mass properties over time even at depth, where the magnitude of the variability is usually < 0.01°C and 0.02 permil (see, for example, Levitus et al. (1995)). Besides obtaining the necessary accuracy of measurements, it is essential to establish time series of velocity, temperature, and salinity. These goals are being accomplished with the use of new technologies and improvements to existing instruments: continuation, expansion, and extension of XBT collection to high-resolution, deeper sampling; implementation of global arrays of surface drifters and subsurface floats; acoustic tomography; and satellite measurements. In addition, expendable CTDs (XCTDs) are becoming a viable though still expensive means of increasing coverage of salinity as well as temperature observations in thermocline waters.

Both surface drifters and subsurface floats have been in use for decades as a means of measuring absolute water velocities, which cannot be determined from hydrographic data alone. However, recent design improvements have increased their usefulness, as well as their lifetimes. Surface drifters, for example, are now drogued properly and designed for minimal windage to sample surface velocities accurately. Subsurface floats can be programmed to follow an isopycnal surface rather than a constant-pressure surface, and to change their depth periodically to provide vertical sampling; they can also be equipped with temperature and conductivity sensors for sampling hydrographic properties. Floats either transmit their data to acoustic transceivers moored on the ocean bottom, which must then be retrieved, or they surface periodically to telemeter their position and other stored data to a satellite, which transmits the information to a shore-based lab, allowing near-real-time data analysis. Floats can live up to four years, are easily deployed, and are generally considered "expendable."

Acoustic tomography takes advantage of the changing speed of sound in seawater, due to changes in density. At mid-depths a "channeling" effect allows transmitted acoustic signals to travel thousands of kilometers with little attenuation. (Note the summary of Dr. Munk's speech in this section.) Moreover, since density is a strong function of temperature, the measured travel time of an acoustic signal between two transceivers is related to the heat content of the water between them, suggesting the use of large arrays of acoustic transceivers as a potential tool for monitoring long-term changes of heat content at transoceanic scales.

Another technological advance of the past two decades is the development of remote sensing capabilities, that is, observations of the sea surface from instruments mounted on satellites in orbit around the earth. Different frequency bands are exploited to image different aspects of the ocean's surface. For example, infrared (IR) imagery can be used to deduce and map sea surface temperature, but because its ability is limited by the extent of cloud cover over the ocean, it is a more useful tool in subtropical and tropical latitudes than near the poles. On the other hand, microwaves penetrate the cloud cover, and several satellite-borne instruments are based on this frequency band. Radar altimeters can be used to determine the absolute distance between satellite and sea surface; scatterometers yield information on wind speed and direction over the sea surface; and synthetic aperture radar (SAR) can be used to map or image a wide variety of dynamical features at the sea surface and in the upper ocean. Clearly, satellites offer the potential of global coverage in space and more or less continuous temporal coverage of the ocean's surface. However, for future monitoring capability, it is essential that consistency be maintained: Sequential satellite missions must provide continuity in time, and they must sample in overlapping frequency bands—something past measurements have not.

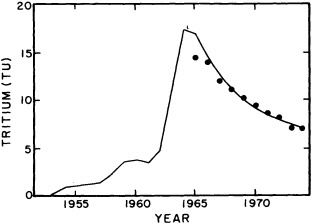

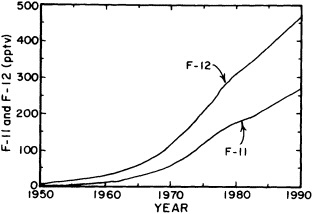

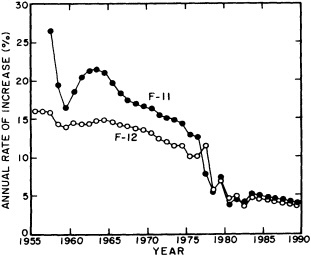

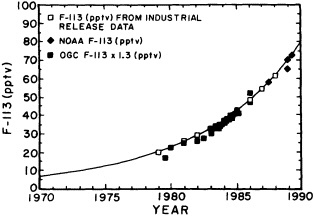

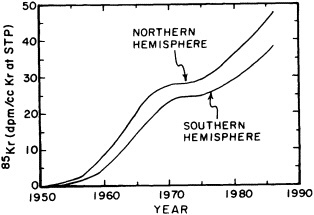

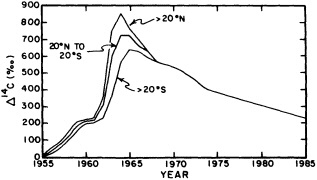

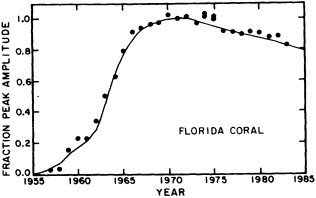

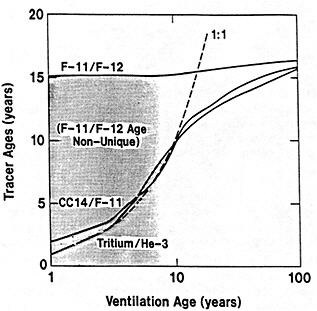

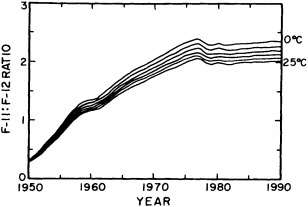

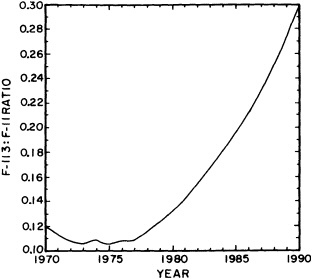

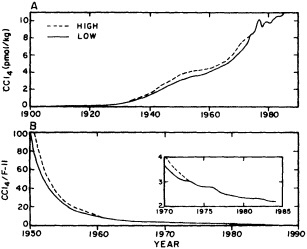

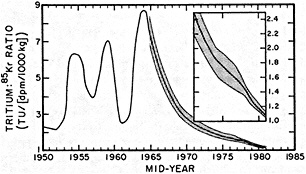

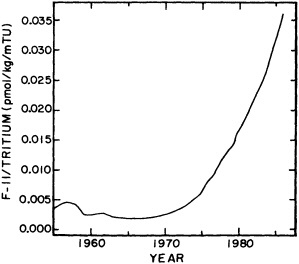

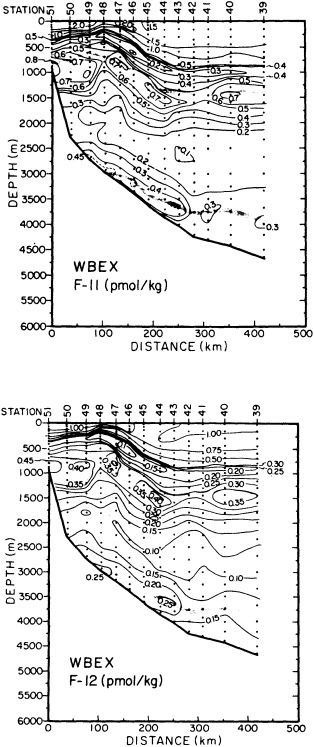

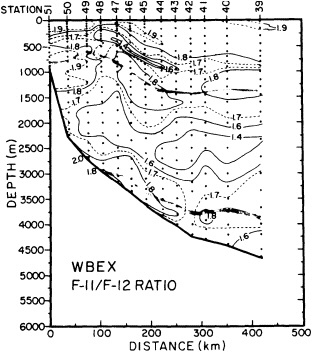

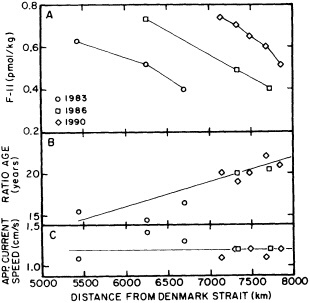

The past several decades also have led to the almost routine sampling of a host of other physical and chemical properties, including concentrations of helium and tritium, halocarbons ("freons"), and radiocarbon, which exist in trace quantities in the ocean. Some occur naturally, and some have been anthropogenically produced; in some cases the anthropogenically induced signal overwhelms an existing natural signal. All of these quantities act as "tracers" of water masses in the Wüstian sense: Once a water parcel has acquired its characteristic value of a tracer from contact with the atmosphere, it retains that value as it sinks and participates in the ocean circulation. Wüst's core method for tracing deep-water flow is thus appropriate, with one fundamentally important difference: Unlike temperature and

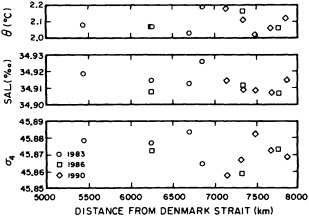

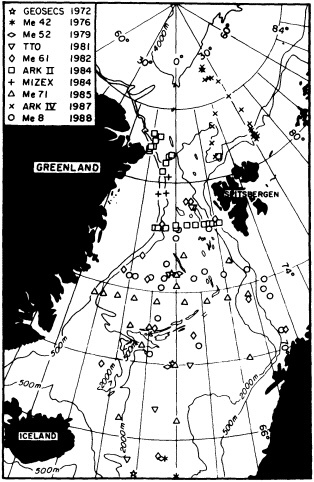

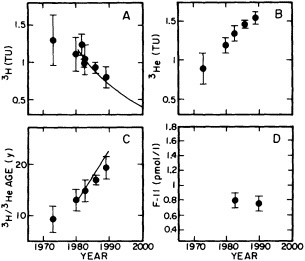

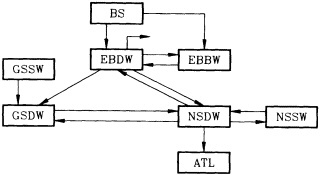

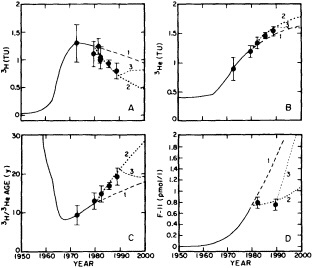

salinity, these trace substances carry time information. Bomb tests of the early 1960s and the ever-growing use of halocarbons in industry since the 1930s are among the sources for these tracers. It is fairly well known at what rate over time they have been injected into the atmosphere, and/or at what rate they decay or are destroyed. Schlosser and Smethie (1995) describe the nature and measurement of these "transient tracers." (Because of the particularly sparse nature of the observations in space and time, they emphasize the need to apply a model for interpreting the data most of the time.) They demonstrate the utility of tracers for studying decadal-scale variability by presenting two specific examples, and suggest that transient tracers, with their unique time-history information, be employed as part of an ongoing climate monitoring system.

TOWARD THE FUTURE

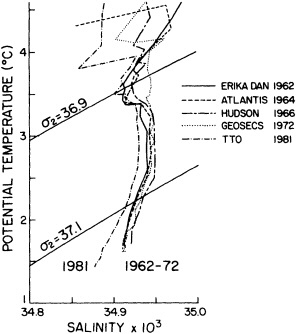

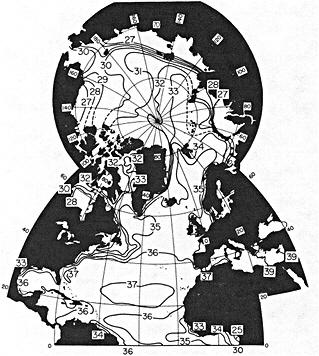

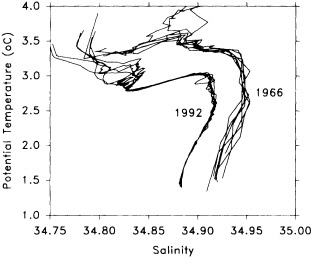

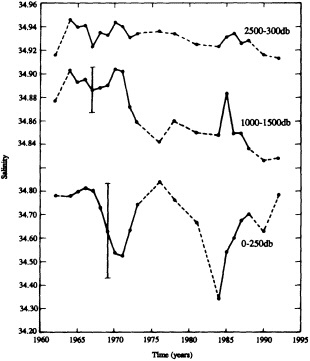

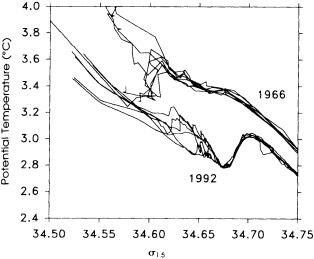

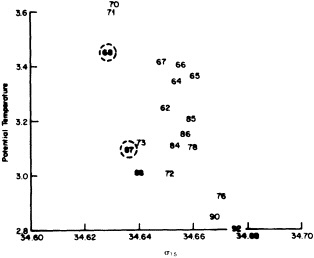

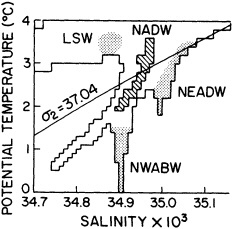

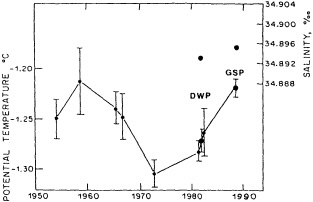

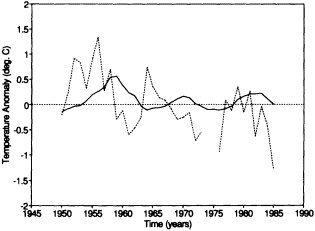

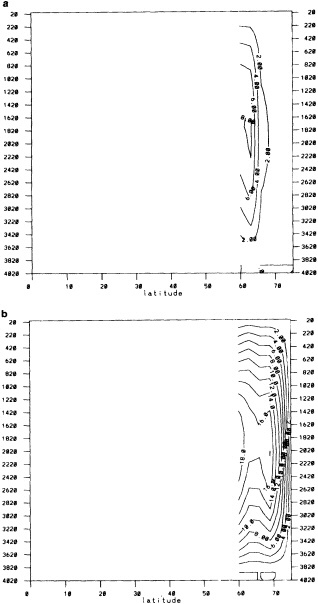

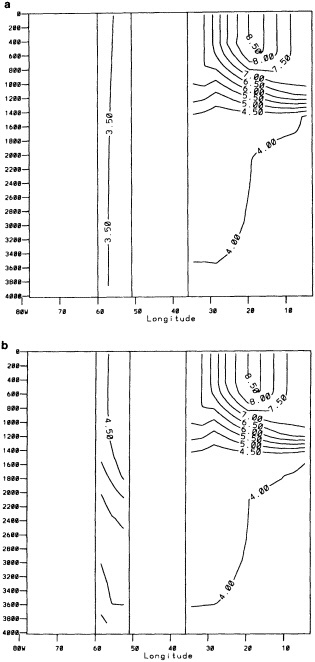

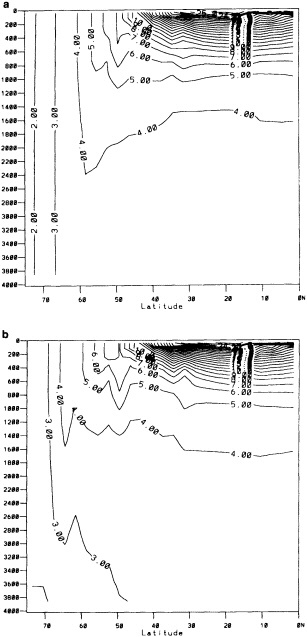

We noted earlier that deep convection occurring in the marginal seas surrounding the North Atlantic provides the sources for waters found in the North Atlantic deep western boundary current. Besides the tracer-based studies presented by Schlosser and Smethie, evidence for interdecadal variability has been documented in temperature and salinity records for other areas of the North Atlantic. Examples are presented by Swift, Lazier, and Dickson in this section. Swift (1995) discusses the freshening in recent decades of both deep and upper waters of the northern North Atlantic, and speculates that it is related to long-term shifts in the wind-driven ocean transport. Lazier (1995) argues that although in a broad sense LSW is characterized by a relative salinity minimum coincident with a relative stratification minimum at depths of 1000 to 2000 m in the ocean, its properties cannot be tracked properly over time by plotting temperature or salinity in the traditional way, on a constant-density surface, since LSW is not necessarily formed at a constant density year after year.

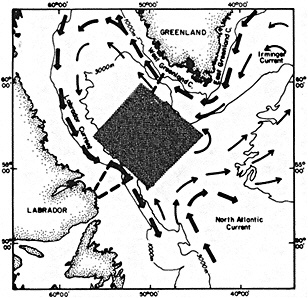

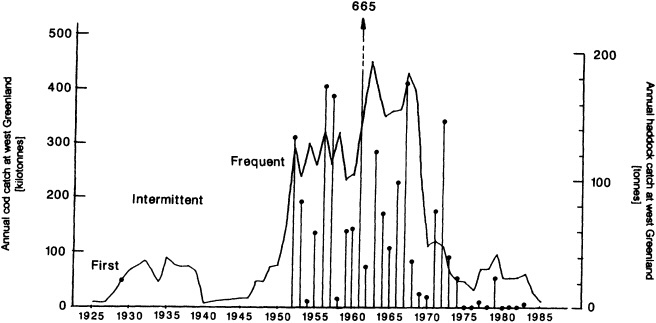

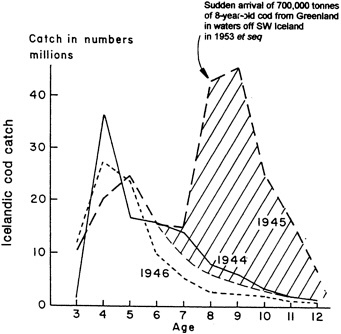

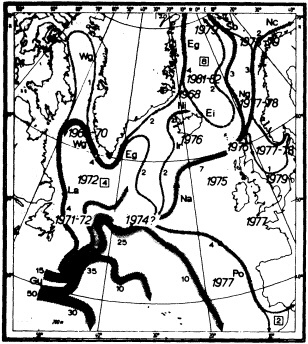

The work by Dickson (1995), who describes interdecadal variability of physical exchanges and transfers in the Irminger Sea and through the Denmark Strait, is a good example of the impact of geographically isolated variability on local, regional, and global scales. Locally, the impact is socioeconomic, affecting nearby cod fisheries. Regionally, the variability is tied in with the Great Salinity Anomaly (Dickson et al., 1988) through anomalous ice and freshwater production and export from the Arctic. Finally, the deep water formed in the Irminger Sea contributes to the total North Atlantic Deep Water production, which in turn is part of the global thermohaline circulation.

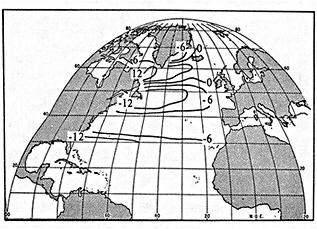

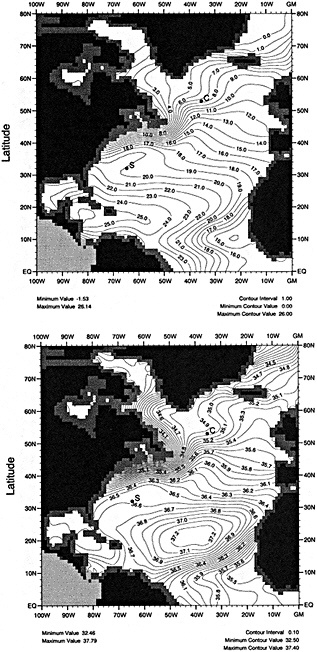

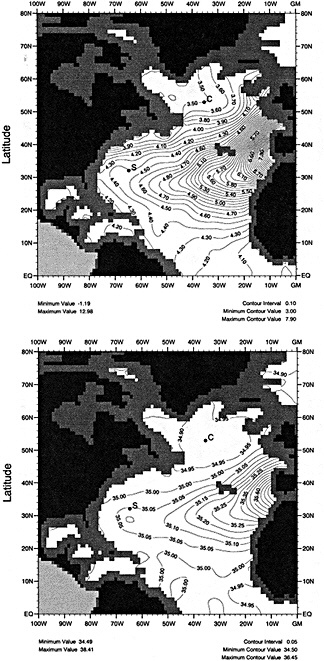

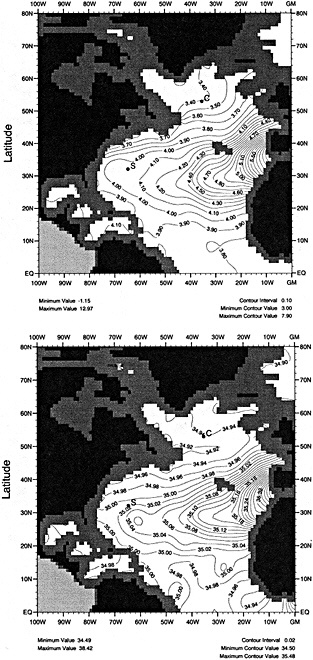

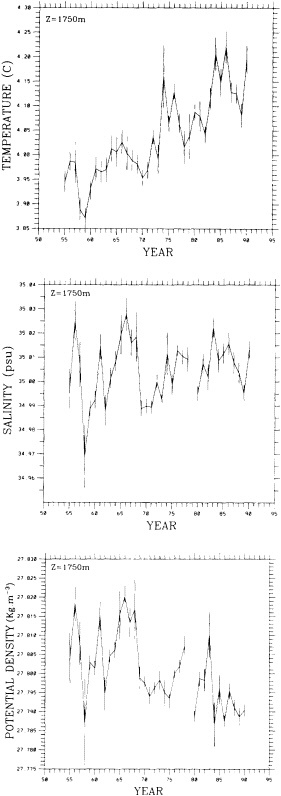

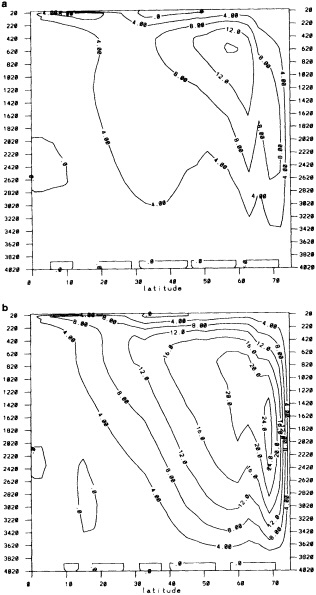

It should be pointed out that interdecadal variability is not limited to marginal seas and boundary currents, although such examples are prominent. Levitus et al. (1995) document interdecadal changes in the temperature and salinity fields in the interior of both the subpolar and subtropical gyres of the North Atlantic, by applying appropriate averaging techniques to the vast but irregular (in space, time, and quality) historical data base of the North Atlantic.

In addition to developing our ability to observe interdecadal changes in pivotal areas likely to be associated with more widespread climate change, we would of course like to be able to predict future changes. This will require continued effort in coupled ocean-atmosphere model development, using historical data for testing and validation purposes, as well as acquisition of real-time data as input for prediction of future climate states. The oceanic community recognizes these issues and has begun to address them in recent years with several programs of broad scope. Among these is the Tropical Ocean and Global Atmosphere (TOGA) Program which was initiated to increase understanding of ENSO events, but has contributed as well to our data base in the Pacific, especially the equatorial Pacific. Recently, TOGA has successfully made the transition from a scientific investigation to an operational monitoring system, maintaining an extensive upper-ocean network in all three oceans, with greatest concentration in the tropical Pacific. Though geographically limited, it might be regarded as a prototypical model for more extensive future systems. At high latitudes, increased effort is now being applied to understanding the complex interactions of the atmosphere/ocean/sea-ice system, as we have come to realize its significant role in determining the thermohaline circulation. This effort includes both more observational work than historically has been possible, and intensive modeling studies by a variety of individuals.

Two programs under way that are more attuned to longer periods of variability are the Atlantic Climate Change Program (ACCP) and the World Ocean Circulation Experiment (WOCE). The first of these seeks to determine the nature of interactions between the meridional circulation of the Atlantic Ocean, sea surface temperature and salinity, and the global atmosphere. Attaining this goal, it is noted, will require documentation of the general characteristics of decadal/century modes of Atlantic variability for model validation. WOCE, which is internationally coordinated and funded, has as its primary scientific objective "to understand the general circulation of the global ocean well enough to be able to model its present state and predict its evolution in relation to long-term changes in the atmosphere" (U.S. WOCE Office, 1989). WOCE includes both observational and modeling components, and also addresses data management issues. The Global Ocean Observing System (GOOS) comprises the operational extensions of programs such as GOOS and ACCP; its design will rely to a large extent on the scientific background provided by the research experiments. This international project, with its large amount of support, will be the vehicle for collecting much long-term data useful

for modeling climate prediction. Numerous other programs that are under way or in the planning stages seek to understand the myriad other processes contributing to climate.

The collection of papers presented in this section demonstrates that the tools exist to observe decade-to-century time scales of variability in the ocean, although there are clearly gaps in our understanding of underlying physical processes. The remaining challenge is to determine how to distribute necessarily limited resources among these different tools, in order to create a viable operational observing network that can be maintained well into the future. This challenge calls for close cooperation between observationalists and modelers, oceanographers and atmospheric scientists, and the academic and political communities.

Marine Surface Data for Analysis of Climatic Fluctuations on Interannual-to-Century Time Scales

DAVID E. PARKER, CHRIS K. FOLLAND, ALISON C. BEVAN, M. NEIL WARD, MICHAEL JACKSON, AND KATHY MASKELL1

ABSTRACT

The sea surface temperature (SST) data base of the Bottomley et al. (1990) Global Ocean Surface Temperature Atlas has recently been augmented with COADS data, better corrections for uninsulated and semi-insulated buckets have been applied, and other improvements have been made. A spatially and temporally interpolated version of the data set has been blended with historical sea-ice data and the 1951 to 1980 Bottomley et al. climatology to give monthly "globally complete" fields since 1871. One version of the data set includes satellite SST data from 1982 onward. To help explore more accurately the relationships between atmospheric circulation, surface climatic parameters, and SST, refined adjustments to marine wind data to compensate for progressive changes in observing practices have been derived using pressure data back to 1949. The improved winds, along with other atmospheric data, can also be used in the verification of numerical model simulations of the atmosphere forced with the new SST and sea-ice data set.

INTRODUCTION

The improvement of the data base of marine meteorological observations is a crucial prerequisite for most studies of climatic variation. Although "frozen-grid" experiments (e.g., Bottomley et al., 1990; Folland et al., 1990; Folland and Parker, 1992) suggest that multidecadal hemispheric and global mean sea surface temperature (SST) anomalies have been estimated with some reliability since at least the late nineteenth century, confidence in these estimates will be improved by any increase in coverage of, and improved analysis of, the data. Better analyses on shorter time scales are certainly needed. Moreover, regional or ocean-basin-scale studies will greatly benefit from improvements to the data. Most important, simulations of recent climate using numerical models require globally complete and, as far as possible, unbiased SST fields as input and, in addition, require reliable and reasonably complete coverage of mean-sea-level pressure and surface winds for use in verification. Satisfactory data bases of these latter parameters are currently lacking, except for short periods of modern surface-pressure data.

In this paper we present a new, "globally complete" monthly analysis of SST and sea ice, known as the Global Sea Ice and Sea Surface Temperature (GISST) data set. GISST 1.0 and 1.1 have already been created; plans for future versions are indicated. We also describe recent improvements in the analysis of trends in marine surface winds.

OUTLINE OF CREATION OF GISST 1.0

GISST 1.0 is a monthly data set that extends from January 1871 to December 1990. We created it in the following stages.

-

The monthly 5° latitude × longitude Meteorological Office Historical Sea Surface Temperature Data Set (MOHSST4, Bottomley et al., 1990) was augmented with 2° latitude × longitude data from the Comprehensive Ocean-Atmosphere Data Set (COADS, Woodruff et al., 1987) after these had been averaged into 5° boxes and subjected to rudimentary extreme-value quality control. The resulting data set is known as MOHSST5. The results were converted to anomalies from the Bottomley et al. (1990) 1951 to 1980 climatology.

-

Improved corrections to compensate for the use of uninsulated and semi-insulated buckets (Folland, 1991; Folland and Parker, 1995) were applied to the data up to 1941.

-

Missing and extreme monthly 5° latitude × longitude area SST anomalies were replaced by the mean of four or more spatially adjacent anomalies if available, or, in their absence, by the mean anomaly of the two adjacent months at the same location if available.

-

The coverage was further enhanced by replacing missing values with weighted SST anomalies from up to 5 months either side of the target month. Weights decreased with elapsed time before or after the target month.

-

Using the Bottomley et al. (1990) globally complete high-resolution 1951 to 1980 climatology, the fields of 5° latitude × longitude SST anomalies output by step (4) were converted to 1° latitude × longitude SST values.

-

Sea-ice extent information from a wide variety of sources was added.

-

SSTs were assigned in a special way to data-void 1° latitude × longitude areas adjacent to ice edges and to any data-void open-water areas that were climatologically ice covered.

-

SSTs were extended into the remaining missing areas using the Laplacian of the 1951 to 1980 climatology (Bottomley et al., 1990; Reynolds, 1988).

-

The resulting 1° latitude × longitude SST analysis was smoothed to retain anomaly variations with about 5° resolution.

TECHNIQUES AND QUALITY CONTROLS USED FOR GISST

The heading numbers below refer to the step numbers in the section above.

1. MOHSST5

-

Any values less than - 1.8°C were set to - 1.8°C. Values for the Caspian Sea were omitted because they, and the Bottomley et al. (1990) climatology there, appear to be unreliable for unknown reasons.

-

The addition of the COADS data resulted in an improvement in seasonal coverage, relative to MOHSST4, approaching 20 percent of the global ocean between the 1870s and World War I, with large improvements in the eastern Pacific. See Figure C3(a) of Folland et al. (1992).

2. Bucket Corrections

The thermodynamic theory is given by Folland (1991) and Folland and Parker (1995). The semiempirical technique used for derivation of the corrections is outlined by Bottomley et al. (1990) and is presented in full by Folland and Parker (1995), whose major differences from Bottomley et al. (1990) include a more rigorous formulation of the heat exchanges affecting wooden buckets and revised estimates of the historical variations of the types of buckets used. In particular, newly uncovered evidence led Folland and Parker to assume, despite considerable uncertainty, that 80 percent of buckets were wooden in 1856 with a linear transition to all-canvas or other uninsulated types in 1920. This brought their estimate of this factor into better agreement with that of Jones et al. (1991), although their estimates of the actual corrections for wooden buckets did not agree because they made different assumptions about the heat transfers involved. The bucket corrections used in the present paper follow Folland et al. (1992) in assuming 100 percent wooden buckets in 1856 and a slightly different specification for these buckets from that used by Folland and Parker (1995) but the resulting corrections generally only differ by a few hundredths of a degree Celsius.

3. Filling and Quality Control

-

Any anomalies exceeding 7°C in magnitude were recorded as missing. This slack criterion allowed anomalies in major El Niño events to be accepted. In future versions of GISST, a geographically varying threshold is to be used.

-

For each 5° latitude × longitude box, the average anomaly for the eight surrounding 5° latitude × longitude boxes was calculated, provided at least four had data. This average was then substituted in the

-

box if the existing anomaly was missing or differed from it by more than 2.25°C. This criterion was chosen empirically following careful tests on monthly 5° latitude × longitude fields taken from a range of years since 1860 and covering the entire annual cycle (Colman, 1992).

-

Next, for each box, the average anomaly for the previous and the subsequent months was calculated, if both were available. This average was then substituted in the box if the existing anomaly was still missing or differed from it by more than 2.25°C.

-

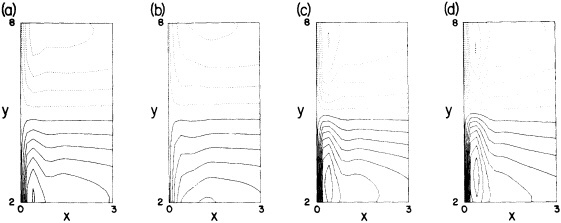

Processes b and c were carried out three times altogether. The substitution of missing data greatly augmented the global coverage in data-sparse years while maintaining spatial coherence (compare Figures 1a and 1b in the color well). The effects in recent years were greatest along the boundaries between well-sampled areas and major data voids, e.g., in the Southern Ocean.

4. Further Enhancement

-

Where data were still missing for a 5° latitude X longitude box, a search was made up to 5 months backward and forward to find the nearest anomalies. If both anomalies were available for months - 1 and + 1, their average was substituted for the missing value. Otherwise, any available anomaly an observed n months before (n negative) or after (n positive) the target month was multiplied by a reduction factor 0.6|n|. The search was continued with increasing |n| until the sum of the reduction factors used (Sdn0.6|n| where dn = 0 for missing data, 1 for available data) reached 0.6; note that both anomalies were used when available from equidistant months. The average of the reduced or "muted" anomalies (p-1Sandn0.6|n|), where p is the number of anomalies used, was substituted for the missing value. The empirically chosen reduction factors are consistent with the global annual average of the monthly lag correlations presented in Bottomley et al. (1990), but no geographical or seasonal variation has been allowed.

-

A further spatial quality control was carried out. This was designed to reduce any grid-scale incoherence introduced by (a) above, especially where anomalies were rapidly changing in time or were much larger than the newly introduced muted anomalies. The procedure corresponded to item (b) in the section on Filling and Quality Control, but as few as two neighboring anomalies were used, and no missing boxes were substituted. If only a single neighboring anomaly was available, the mean of it and the anomaly being checked was used in the same way. Isolated 5° anomalies exceeding ± 2.25°C were reduced to ± 2.25°C.

The step-by-step effects of the "filling" and enhancement stages on sparse data can be seen by comparing Figures 1a, 1b, and 1c for January 1878. For recent years with far more data, the effects were much smaller.

5. Conversion from 5° to 1° Resolution and to Absolute SST Values

This step was an essential preparation for the incorporation of sea-ice fields as well as for the Laplacian interpolation (see below), in which it was necessary to preserve climatological gradients of SST. The 5° resolution monthly anomalies output after the "further enhancement" described in the previous subsection were added to the Bottomley et al. (1990) globally complete 1° resolution monthly climatological SST for 1951 to 1980. This climatology was assigned to 1° boxes in 5° areas without anomalies.

6. Sea Ice

The sources of sea-ice data are listed in Table 1. The NOAA analyses from 1973 onward are largely satellite based (Ropelewski, 1990). Note that published manuscript climatologies were used for earlier times, so that the same calendar-monthly ice cover was used in successive years, as opposed to the use of observed, interannually changing ice cover for more recent times, i.e., 1953 onward for the Arctic and

TABLE 1 Sources of Sea-ice Data

|

a) |

ARCTIC |

|

|

|

Up to 1943 |

German 1919-1943 climatology (Deutsches Hydrographisches Institute, 1950) |

|

|

1944-1952 |

Interpolation to recent climatology (1953-1982) |

|

|

1953-1972 |

Observed data provided by J. Walsh (Walsh, 1978) |

|

|

1973 onward |

Observed data provided by NOAA (Walsh, 1991) |

|

b) |

ANTARCTIC |

|

|

|

Up to 1939 |

German 1929-1939 climatology (Deutsches Hydrographisches Institute, 1950) |

|

|

1940-1946 |

Interpolation to Russian 1947-1962 climatology |

|

|

1947-1962 |

Russian 1947-1962 climatology (Tolstikov, 1966) |

|

|

1963-1972 |

Interpolation to recent climatology (1973-1982) |

|

|

1973 onward |

Observed data provided by NOAA (Walsh, 1991) |

1973 onward for the Antarctic. We fully recognize the very uncertain nature of the earlier climatologies but, in the absence of evidence to the contrary, consider that they provide a better estimate than modern data. The data were interpolated to 1° latitude × longitude resolution. Each oceanic box was designated either "ice" or "water."

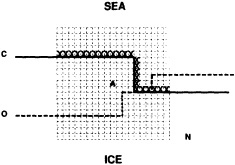

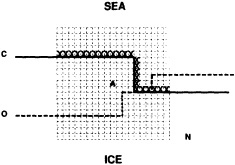

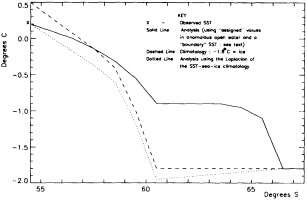

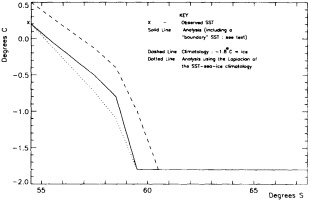

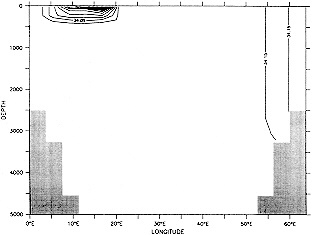

7. Assignment of SSTs near Sea Ice

We chose to produce a globally complete temperature field by Laplacian interpolation, preserving the second derivative of the climatology. To do this, we needed to specify conditions along an external boundary completely enclosing the region to be filled (Figures 2a f). Reynolds and Marsico (1993) used the observed ice limits as their boundary, setting ice points to - 1.8°C. However, this method rarely yields the expected positive anomalies when ice cover is less extensive than its climatological normal. This is because - 1.8°C is also assigned to ice in the climatology, so that the ice edge is given a zero anomaly of SST. Then, since the Laplacian of the SST sea-ice climatology is completely preserved, the computed SST between the retreated ice edge and the climatological ice edge deviates from - 1.8°C only to the extent that the nearest observed SST implies an anomalous gradient of SST. An observed negative SST anomaly thus even yields SSTs below - 1.8°C in the anomalous open-water area (dotted line in Figure 2e). These cold biases also influence the SST analysis between the climatological ice edge and the nearest observations of SST; see also section C3.1.2.2 of Folland et al. (1992). Furthermore, if ice is anomalously advanced toward warmer water, we could expect local SST gradients to be enhanced; thus, an assumption of - 1.8°C at the ice edge and approximate climatological SST gradients seaward, as implied by the Laplacian interpolation, may also yield negative temperature biases (dotted line in Figure 2f). This is particularly to be expected when there are strong gradients very close to the ice edge at or below the 1° latitude × longitude resolution of our analysis, a documented occurrence (Muench et al., 1985).

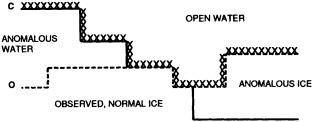

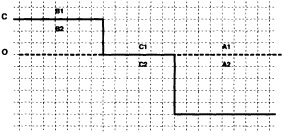

We therefore used specially computed values of SST near ice edges in data-void regions. In a particular year and month any of the following four types of situations might occur (Figure 2a):

-

Ice areas that are also climatologically ice covered. These areas were set to - 1.8°C.

-

Ice areas that are climatologically open water. These areas were also set to - 1.8°C.

-

Open water areas that are climatologically ice covered. Anomalous water areas of 1° were set to the mean of the Bottomley et al. (1990) climatological SSTs for the relevant calendar month for all 1° latitude × longitude areas adjacent to the climatological ice edge in a 19° latitude × longitude area centered on the target area. The representative temperature for target box A in Figure 2b, for example, would be set to the mean of the climatological SSTs in those boxes marked with a cross.

-

Boundary areas. Boundary areas were defined as 1° open-water areas adjacent to (i.e., sharing a side with) any area in the above three categories. Ice areas and anomalous water areas were thus separated from the open sea by a boundary area 1° latitude or longitude wide. (See Figure 2b.) The SST in a boundary area was calculated as the climatological value plus one-half the temperature anomaly in an adjacent anomalous ice or anomalous water square. (See Figures 2c and 2d.) If more than one adjacent area had anomalous conditions, preference was given to the areas lying to the north and south, e.g., case C in Figure 2d. If none of the adjacent areas had anomalous

FIGURE 2a

Calculation of a representative temperature for anomalous water areas.

FIGURE 2b

Designation of areas near ice edge.

|

KEY: |

C |

Climatological ice edge |

|

|

O |

Observed ice edge |

|

|

X |

Boundary areas (see text). |

|

KEY: |

C |

Climatological ice edge |

|

|

O |

Observed ice edge |

|

|

A |

Target area |

|

|

N |

19° latitude x longitude area centered on target area |

|

|

X |

Climatological SSTs for these boxes are used to calculate representative temperature for area A. |

FIGURE 2c

Calculation of boundary temperatures.

|

KEY: |

C |

Climatological ice edge |

|

|

O |

Observed ice edge |

|

|

Al etc. |

1° boxes: see footnotes |

|

FOOTNOTES A. If TA2 = Temperature in 1° box A2 = - 1.8°C for observed ice, and NA1, NA2 = Normals in 1° boxes A1, A2, then TA1 = Temperature in 1° box A1 = NA1 + 0.5 (TA2 - NA2) = NA1 + 0.5 (- 1.8-NA2) B. TB2 = Representative temperature assigned to 1° box B2 (see text and Figure 2b) TB1 = NB1 + 0.5 (TB2 - NB2) = NB1 + 0.5 (TB2 + 1.8) because NB2 = - 1.8°C for climatological ice. C. TC1 = NC1 because the ice edge is at its normal position. |

|

KEY TO AREA DESIGNATIONS: |

|

|

C |

Climatological ice edge |

|

O |

Observed ice edge |

|

I |

Observed ice areas set to - 1.8°C |

|

W |

Anomalous open-water areas: SSTs calculated as in text |

|

B |

Boundary SSTs: see text and Figure 2c |

|

L |

Areas left blank for filling in the Laplacian stage. |

|

FOOTNOTES A. -1.3 + 0.5 (-1.4-(-1.8)) = -1.1°C B. -0.7 + 0.5 (-1.8-(-0.8)) = -1.2°C C. -1.3 + 0.5 (-1.8-(-1.4)) = -1.5°C |

-

using ice anomaly to southward, not to westward conditions, the climatological value alone was used. If an observed SST was found in the boundary area, this was used in preference to the computed value. The SSTs in the boundary areas were then used as the external boundary conditions in the Laplacian interpolation process (Figures 2e and 2f). Reducing the ice-edge anomalies by a half had ensured that the values supplied to the Laplacian process were not extreme and had strengthened the temperature gradient at the edge of anomalously frozen areas (Figure 2f).

8. Laplacian Interpolation

-

The 1° resolution SSTs were first smoothed 1:2:1 east-west, then north-south, simplifying the smoothing to 1:1 for boxes next to land. Anomalous water areas were omitted from this smoothing, as were boundary 1° areas (Figure 2a) next to sea ice or to missing data. In this process 1° boxes adjacent to boxes still containing climatology would have been affected by the climatology, muting their anomalies. They were therefore regarded as data void, along with the boxes containing pure climatology.

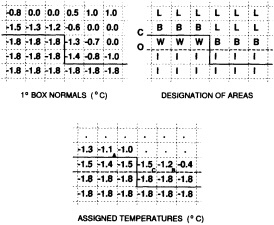

FIGURE 2e

Example of SST analysis near retreated ice edge.

FIGURE 2f

Example of SST analysis near advanced ice edge.

-

Laplacians of the 1951 to 1980 MOHSST4 climatology (Bottomley et al., 1990) were calculated for data-void 1° boxes.

-

SSTs for the data-void 1° boxes were then computed by solving Poisson's equation with the Laplacians as forcing and the values in data-filled boxes as boundary conditions, as has been done by Reynolds (1988) and Bottomley et al. (1990) in constructing their climatology.

9. Final Smoothing

The 1° resolution absolute SST data output by the Laplacian interpolation were converted to anomalies, and the anomalies were smoothed 1:2:4:6:4:2:1 east-west, then north-south. Anomalies rather than actuals were smoothed, to avoid smoothing strong SST gradients. After smoothing, the anomalies were converted back to SSTs. SST gradients were thus retained with approximately 1° resolution, whereas the anomalies, which vary much more slowly geographically, were retained on about their original 5° resolution.

Examples of the final result of this process, in the form of anomalies for January 1878 and January 1983, are shown in Figures 1d and 3b (see color well). These fields (and others not shown) suggest that in future versions of GISST, the anomalies should be smoothed with a spatially lower-pass filter. Even in the 1980s, monthly (as opposed to seasonal) in situ SST anomalies are often unreliable on a 5° space scale (Folland et al., 1993).

INITIAL ASSESSMENT OF GISST 1.0

A sampling experiment was carried out to assess the reliability of the analysis technique when used on sparse data. In it, the complete analysis, including the Laplacian stage, was repeated for each month of the El Niño years 1982 to 1983, but with the basic SST data prior to the ''filling and quality control" stage omitted from 5° boxes where there were no data in the corresponding months of the El Niño years 1877 to 1878. In this experiment, January 1877 corresponds to January 1982, and December 1878 corresponds to December 1983. Also, data for 1981 and 1984 needed for this experiment were reduced to the coverages available in the corresponding months of 1876 and 1879. Figures 4a, b, c, and d, which should be compared with Figures 3a and b, illustrate the results of the experiment for January 1983. Figure 5a shows the two-year mean difference between the reduced-data analysis and GISST 1.0. (All are in the color well.)

Biases are largest in the eastern tropical Pacific, where the analysis of the reduced data base underestimated the strength of the 1982 to 1983 warm El Niño event. This underestimation exceeded 1°C in some places at the peak of the event in late 1982 to early 1983 (compare Figures 3b and 4d). It is likely therefore that GISST 1.0 itself will have underestimated the strength of the 1877 to 1878 El Niño, owing to the sparser data base then. Figure 5b presents the root-mean-square (rms) difference field between the GISST 1.0 and the reduced-data analyses; it is based on 24 monthly values at each location. The rms differences (which include a bias component) are less than 0.5°C over most of the Atlantic and Indian Ocean north of 40°S, but exceed 0.5°C over much of the Pacific and the Southern Ocean, and are over 1°C in the eastern tropical Pacific, where the reduced-data analyses underestimate the El Niño warmth. Cosine-latitude-weighted summary statistics for the globe and for the east tropical Pacific (20°N to 20°S, east of 170°W) are given in Table 2. The global rms difference (which also includes a bias component) is around 0.5°C before and after the El Niño but reaches 0.7°C in early 1983, when the underestimation in the east tropical Pacific averages nearly 0.7°C (compare also Figures 3b and 4d). However, the global field correlations rise from about 0.55 before the event to about 0.7 during the event and maintain this level, possibly because the El Niño intensifies the overall global anomaly pattern signal. During the El Niño the global bias reaches -0.15°C. For the two-year period as a whole, however, the global bias is only -0.03"C, suggesting, in accord with the "frozen grid" analyses in Bottomley et al. (1990) and Folland et al. (1990), that the sparse coverage available in 1877-1878 does not severely prejudice estimation of annual or multi-annual global mean SST anomalies. Similar, but apparently slightly better, results (not shown) were obtained when a corresponding experiment was made using the El Niño years 1972-1973 with the 1877-1878 coverage. The apparent improvement may have resulted from the slightly reduced coverage of observations in 19721973 relative to 1982-1983.

In a further, longer experiment the analysis for 1981 to 1990 was repeated using coverage for 1881 to 1890. Biases (not shown) were within ±0.1°C in most of the Atlantic and the Indian Ocean, and generally exceeded ± 0.3°C only in the Southern Ocean and parts of the North Pacific. Hemispheric and global average biases were within ±0.01°C. Root-mean-square differences generally exceeded 0.5°C only in the latter areas and in parts of the tropical Pacific and the far northern Atlantic. Correlations (Figure 6. again in the color well) based on 120 values at each location, exceeded 0.8 over most of the Atlantic, much of the eastern Pacific, and much of the Indian Ocean. Low correlations over the Southern Ocean and the central and northern Pacific emphasize the need to acquire more historical data for these regions.

These sampling experiments only assess the impact of reduced areal coverage of 5° box SST data. They do not provide any measure of the effects of increased scatter in the individual monthly 5° box values resulting from, for

TABLE 2 Summary Statistics for the Reduced-Sampling Test of GISST 1.0 Analysis

|

|

|

Globe |

|

|

Tropical East Pacific |

|

|

Year |

Month |

rms Difference (°C) |

Field Correlation |

Bias* (°C) |

rms Difference (°C) |

Bias* (°C) |

|

1982 |

1 |

.51 |

.57 |

-.02 |

.55 |

-.17 |

|

1982 |

2 |

.52 |

.57 |

+.01 |

.55 |

+.03 |

|

1982 |

3 |

.55 |

.55 |

-.05 |

.62 |

-.12 |

|

1982 |

4 |

.51 |

.54 |

-.08 |

.52 |

-.17 |

|

1982 |

5 |

.51 |

.57 |

-.06 |

.57 |

-.26 |

|

1982 |

6 |

.51 |

.64 |

+.00 |

.65 |

-.36 |

|

1982 |

7 |

.53 |

.63 |

+.10 |

.49 |

-.13 |

|

1982 |

8 |

.54 |

.56 |

+.06 |

.60 |

-.19 |

|

1982 |

9 |

.55 |

.67 |

+.06 |

.73 |

-.16 |

|

1982 |

10 |

.54 |

.74 |

+.01 |

.76 |

-.35 |

|

1982 |

11 |

.60 |

.70 |

-.06 |

1.09 |

-.50 |

|

1983 |

12 |

.66 |

.67 |

-.12 |

1.24 |

-.59 |

|

1983 |

1 |

.70 |

.67 |

-.14 |

1.19 |

-.63 |

|

1983 |

2 |

.69 |

.65 |

-.15 |

1.11 |

-.68 |

|

1983 |

3 |

.67 |

.65 |

-.09 |

.94 |

-.54 |

|

1983 |

4 |

.58 |

.71 |

-.03 |

.84 |

-.21 |

|

1983 |

5 |

.60 |

.71 |

+.00 |

.77 |

-.16 |

|

1983 |

6 |

.56 |

.78 |

-.09 |

.82 |

-.36 |

|

1983 |

7 |

.57 |

.78 |

-.07 |

.88 |

-.36 |

|

1983 |

8 |

.52 |

.76 |

-.05 |

.79 |

-.22 |

|

1983 |

9 |

.46 |

.72 |

-.03 |

.53 |

-.12 |

|

1983 |

10 |

.49 |

.62 |

-.03 |

.58 |

-.23 |

|

1983 |

11 |

.43 |

.72 |

+.01 |

.54 |

-.06 |

|

1983 |

12 |

.42 |

.74 |

+.02 |

.49 |

-.08 |

|

WHOLE PERIOD |

|

|

-.03 |

|

-.28 |

|

|

* Bias is "reduced-sampling analysis" minus "GISST" |

||||||

example, a smaller number of constituent observations. Also, the reduced detail of the earlier sea-ice data is ignored. To take advantage of satellite data, which are expected to improve the analyses from the 1980s onward especially in the Southern Ocean, the experiments will be repeated using GISST 1.1.

The sampling experiments on GISST 1.0 do suggest that the interpolation techniques have not introduced systematic long-term biases or false trends into the analysis. There may, however, be some systematic uncertainties owing to shortcomings in the bucket corrections resulting from the assumptions made about measurement techniques etc. (Folland and Parker, 1995). On a global average, the uncertainty arising from these is likely to be of the order of ± 0.1°C, especially before 1900 (Folland et al., 1992); but in regions where the corrections themselves are large, e.g., the Gulf Stream and Kuroshio in winter, an uncertainty of at least ±0.25°C may be expected.

A 1951-1980 monthly climatology has been created from GISST 1.0. MOHSST5 is more consistent with this than with the Bottomley et al. (1990) climatology. Relative to the latter, there were persistent anomalies of one sign in MOHSST5 in substantial parts of the Southern Ocean. These persistent anomalies were reduced or eliminated by referencing MOHSST5 to the GISST climatology. We regard the GISST climatology as the more reliable because the additional data input to GISST will have reduced the influence of the highly interpolated Alexander and Mobley (1976) climatology used by Bottomley et al. (1990) in data-sparse areas.

REVISIONS TO GISST

For GISST 1.1 we have incorporated satellite-based SSTs from R. W. Reynolds (NOAA) from 1982 onward. Because of their extensive coverage, they replaced the filling and enhancement processes, and the assignment stage except for 1° boxes adjacent to observed sea ice and Antarctica. They also almost entirely replaced the Bottomley et al. (1990) climatology in the Laplacian stage, resulting in an improved analysis. The Laplacian of the climatology was first used to make a complete field from the satellite data; then the Laplacian of this field was used to make the in situ data field complete. As expected, GISST 1.1 is warmer

in the Southern Ocean than the combined in situ satellite analysis by Reynolds and Marsico (1993), because of our different assignment of SSTs near sea ice. Typical differences are of the order of 0.5°C (see Figure 7 in the color well).

The historical in situ SST data base is to be expanded by incorporating millions of hitherto undigitized observations from the United States, Japan, and possibly the United Kingdom, Russia, and Norway. These data will also be incorporated into COADS, and an optimum data bank will be created by blending COADS and the U.K. Meteorological Office marine data base observation by observation. (See Parker (1992) as well as Komura and Uwai (1992), whose report suggests that there are about 4 million undigitized Japanese marine observations for the period 1890 to 1932.)

In collaboration with J. Walsh (University of Illinois), additional historical sea-ice data have been located and are to be digitized and combined with the Kelly (1979) sea-ice data. It is hoped that these data will be incorporated into later versions of GISST, replacing some of the existing sea-ice data.

Possible major changes to the analysis technique include:

-

Use of characteristic anomaly patterns in the background field for the Laplacian stage. The result may be an improved analysis of El Niño events in data-sparse years.

-

Refinement of the interpolation processes. The use of geographically and seasonally varying lag correlations and of optimum averaging (Gandin, 1963) is being considered.

MARINE WINDS

We are undertaking an extensive study of the dynamical consistency between sea level pressure (SLP) and near-surface wind observations in the COADS dataset. The chief motivation is the suggestion that changes in observational practice on board ships may have introduced a spurious upward trend in reported ship winds (e.g., Ramage, 1987; Cardone et al., 1990). Ward (1992) devised a method for deriving the seasonal mean geostrophic wind from COADS 2° latitude × longitude seasonal mean SLP data. A comparison of the trends in the observed winds and the derived geostrophic winds suggested a spurious upward trend in the magnitude of the observed wind of some 16 percent on average over the period 1949 to 1988. This work is being extended in two major ways:

-

Analysis is being performed on the 10° latitude X longitude (10° box) scale to improve spatial data coverage. The procedure works by first forming a 10° box anomaly data set in the way described in Ward (1992) and then adding the 10° box normal to the 10° box anomaly to get an actual value for the given 10° box.

-

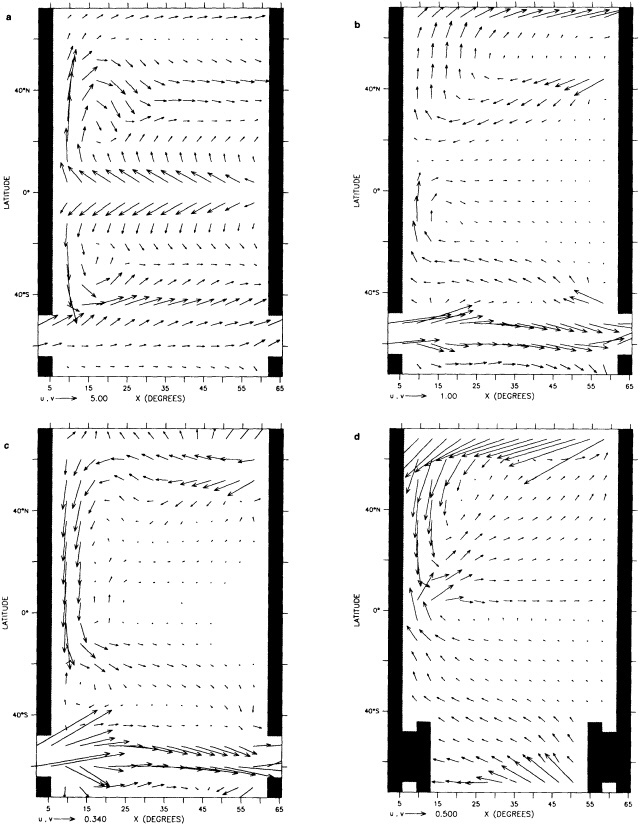

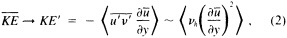

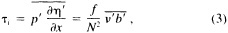

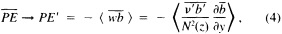

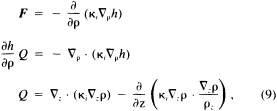

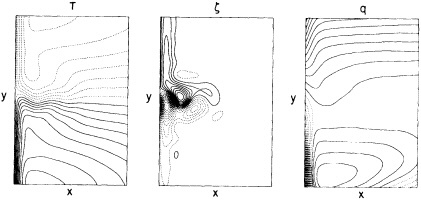

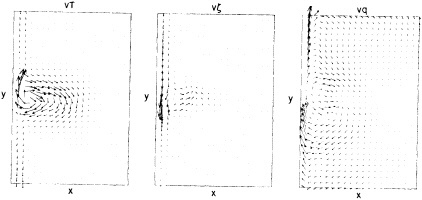

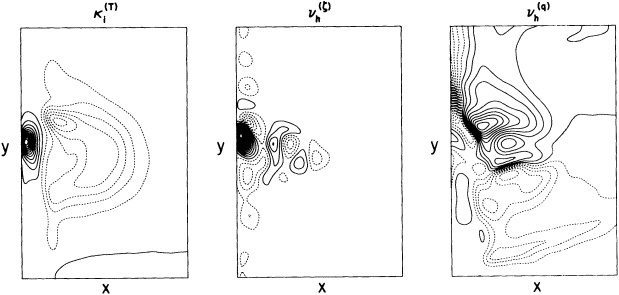

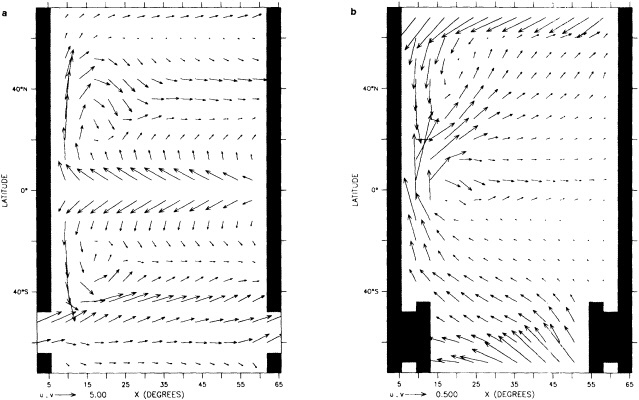

A wind is derived from the 10° box grid of SLP values using a method that performs better in the tropics than does the geostrophic approximation in Ward (1992). The new method assumes a three-way balance of forces between pressure gradient, Coriolis force, and friction. The last is assumed to be linearly related to wind speed (through a constant, called the coefficient of surface resistance) and to directly oppose the wind direction. Such a system is often said to describe "balanced frictional flow," and the horizontal momentum equation can be written (see Gordon and Taylor, 1975):

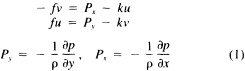

where

and p = surface pressure, ρ = density of air, f = Coriolis parameter, k = coefficient of surface resistance, u,v = zonal, meridional wind, and x,y = zonal, meridional distance.

Such a formulation leads to the following equations for the u and v wind components:

Assumptions have to be made about the value of k. It can be shown that, under the assumptions of this method, k is related to the backing angle (α) of the observed wind from the geostrophic wind:

For the studies described here, the value of k used is varied with latitude from about 2.5 × 10−5 at 50° latitude to 1.5 × 10−5 near the equator. These values are broadly consistent with the backing angle of the mean wind in directionally steady regions as calculated using climatological fields, and they are also consistent with the results of previous studies that have used other methods to estimate k (e.g., Brummer et al., 1974, Hastenrath, 1991).

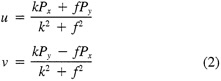

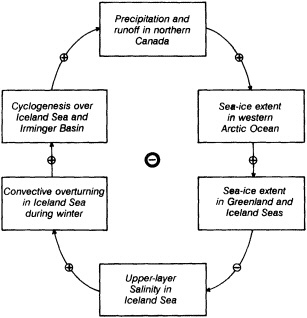

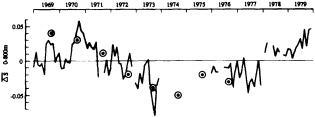

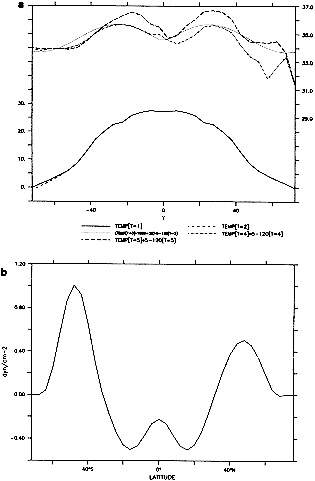

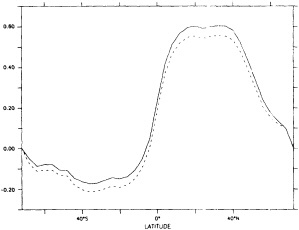

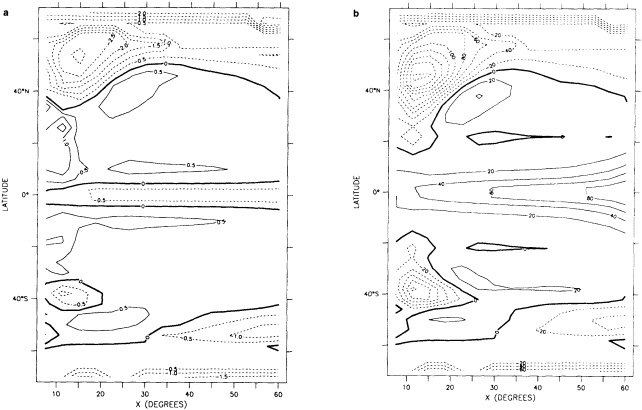

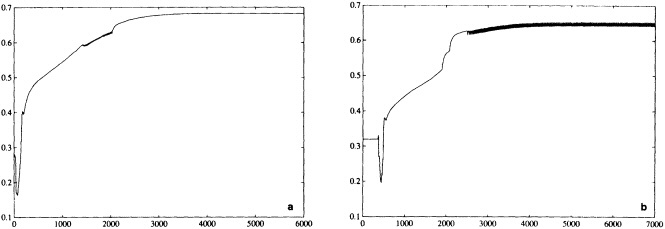

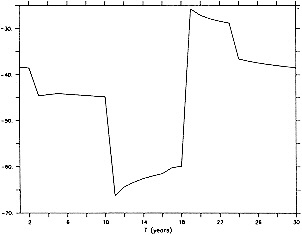

To illustrate a typical result, we show time series of the December to February observed and derived u-wind component for the 10° box centered at 15°N, 45°W (see Figure 8a). The required pressure gradients are estimated as finite differences using the four boxes to the north, south, east, and west. Strictly speaking, the derived wind is applicable to a 20° latitude × 20° longitude region centered at 15°N, 45°W, so the observed zonal wind is calculated as a weighted average of the box centered at 15°N, 45°W (weight = 1.0) and the four surrounding boxes (each with weight = 0.75). The agreement between detrended versions

FIGURE 8

Time series of observed zonal wind anomaly (dotted line) and derived zonal wind anomaly (solid line) based on seasonal mean SLP (see Eq. 2 in text). (a) December to February for 10° latitude × 10° longitude box centered at 15°N, 45°W. (b) As for (a). but March to May centered at 55°N, 15°W. Some statistics for the series are given in Table 3.

of the derived and reported wind is excellent (Table 3, column a). This gives high confidence in the quality of the detrended component of the data and in the method used to derive the wind from the SLP. Good agreement in the detrended component of the derived and observed wind is generally found for most 10° boxes, except for some regions adjacent to the equator that show poor agreement. This is probably because Eq. 1 is less applicable close to the equator, and SLP needs to be resolved on a finer spatial scale.

The quality of the data on the longer time scale is questioned because of the difference in the trends of the two series in Figure 8a. The observed wind shows a stronger trend toward increasing wind strength than does the derived wind (Table 3). Such a result is found in most of the 10° boxes analyzed in each of the four seasons. An average spurious upward trend in the magnitude of the observed wind of about 14 percent over 1949 to 1988 is suggested by the new derived wind series. This is very similar to the value of about 16 percent derived using the different analysis scheme of Ward (1992). However, the better data coverage of the new analysis scheme is leading to the identification of a somewhat different regional pattern of biases from that in Ward (1992), which is being studied further.

One region where Ward's (1992) trend biases were substantially different from the global average was in the North Atlantic near 55°N, where the average spurious wind speed

TABLE 3 Statistics for the Wind Time Series Shown in Figure 8

|

Wind Series |

(a) |

(b) |

|

Observed Trend |

- 0.27 |

+ 0.19 |

|

Derived Trend |

-0.03 |

+ 0.40 |

|

r(O, D) |

+ 0.87 |

+ 0.89 |

|

r(Odt, Ddt) |

+ 0.94 |

+ 0.88 |

|

NOTES: Wind series (a) and (b) are those shown in Figure 8 (a) and (b), respectively. Trends are in 10 × m s-1 yr-1. For series (a), mean zonal wind is negative (i.e., easterly), so negative trend implies strengthening wind. For series (b), mean zonal wind is positive. r(O,D) is the correlation between observed wind and derived wind. r(Odt, Ddt) is the correlation after removing the linear trend from the series. |

||

change over 1949 to 1988 was suggested by the pressure data to be negative. Figure 8(b) illustrates this using the newly derived wind for March to May for the 10° box centered at 55°N, 15°W. The relationship between the observed and the derived winds seems to change around 1962. After about 1962, there is particularly good agreement between the observed and derived winds with no appreciable systematic difference in the two series. However, before about 1962, the year-to-year agreement is less good, and there is an appreciable bias; the derived wind is more easterly than the observed. This bias leads to differing linear trends in the observed and derived winds (Table 3); this difference in trends broadly supports the results of Ward (1992). Similar results are found for other nearby boxes in this and other seasons. The discrepancy before 1962 may be caused by biases in either the SLP or the wind data, or by the method used to derive the wind from the SLP data. These possibilities are to be investigated.

"CLIMATE OF THE TWENTIETH CENTURY" INTEGRATIONS

Atmospheric simulations are being commenced using the climate version of the U.K. Meteorological Office Unified Model forced with GISST 1.1. The simulations will require much-improved surface pressure and marine surface wind data sets for their verification. We anticipate substantial benefits from interactions between data analysis, modeling, and studies of the mechanisms of recent climatic variations.

ACKNOWLEDGMENTS

This work was partially supported by the Commission of the European Communities under Contract EPOC-0003C(MB), and by the U.K. Department of the Environment under Contract PECD/7/12/37.

Commentary on the Paper of Parker et al.

THOMAS L. DELWORTH

NOAA/Geophysical Fluid Dynamics Laboratory

As someone who is mostly familiar with modeling and the efforts that go into it, I am very appreciative of the tremendous effort that goes into constructing observational data sets. The data sets are of critical importance in assessing the ability of models to simulate climate and its variability. I have a few comments and a couple of examples from modeling work to emphasize the importance of the work that Dr. Parker is doing, particularly with regard to the importance of sea ice for low-frequency climate variability.

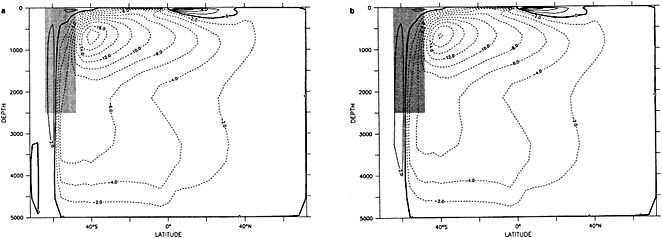

Examination of low-frequency variability contained in a multi-century integration of a coupled ocean-atmosphere model reveals that there are very long time scales associated with a number of oceanic variables in the polar latitudes of the Southern Hemisphere. In particular, there is a tremendous amount of low-frequency variability in the ice thickness, which then has a substantial impact on the model atmospheric variability. Sea ice, in some regions, can virtually disappear for times as long as a decade, with the result that surface air temperatures are much higher during this period. When you look at interdecadal model variability, features such as this are quite striking. It is critical to increase our observational data base for quantities such as sea ice in order to be able to assess whether such model features exist in the real climate system. Some of the data sets produced by Dr. Parker should help with that.

A key point in his manuscript is the theme of attempting to obtain more information from the available observations through the use of empirically motivated assumptions about the spatial and temporal scales of variations in the observed data. It is therefore critical to keep in mind when utilizing this data the assumptions that go into it. Two key assumptions are the degree of persistence in time and the spatial structure of the data. In general, this represents a powerful technique for supplementing existing data sets. There is, however, a potential for this technique to underestimate variability in data-scarce regions. This potential bias must be kept in mind.

An additional point Dr. Parker made is the critical importance of recovering and digitizing existing observations from data-poor regions. Allow me to comment on the importance of this with an additional modeling example. In characterizing low-frequency variability, a simple technique is to compute at each grid point the serial correlation of data time series. This is a measure of the persistence in the data and thus the inherent time scales. The results of such computations using annual mean sea surface temperature computed in a coupled model shows some very intriguing features. The longest time scales of sea surface temperature anomalies appear to be associated with higher latitudes where deepwater formation is occurring in the model. There are also very long time scales in the circum-Antarctic region. These are features that we would like to investigate in the observations, but the limitations in observational data sets make that difficult. For example, computing serial correlations of observed annual mean sea surface temperature from MOHSST4 produces a map with large data-void regions. In particular, the very intriguing model features in variability occur in regions with insufficient observations to assess their validity. Therefore, the technique that Dr. Parker is trying to use to extract more information from the observations available is really a critical one.

The techniques of data analysis described in the manuscript check for physical consistency between independent data. In particular, comparisons were made between derived and observed wind fields, and inconsistencies between the two were noted and studied. This is an important technique, and is an appropriate method to assess what features are real and what features are not.

Finally, as Dr. Parker mentioned, these data sets are of great utility in conducting simulations of the climate of the twentieth century. It would be beneficial to have other variables available in such a format. For example, land-surface processes might have a role in climate variability. One can envision a similar data set of time-varying land-surface characteristics over the twentieth century.

Discussion

RASMUSSON: There's much to be said for working only with real data. I hope you will also make available fields that are real data only.

PARKER: Yes, MOHSST5 is available, and we'd like to improve it by digitizing all the data possible.

KUSHNIR: If you have a good approximation to the covariance matrix structure, you can use that with more confidence than Laplacian methods to fill up missing regions.

PARKER: That would be similar to the eigenvector technique, which I think would be a good one here. We just haven't got that far yet. Also, I think getting more real data is important; for example, our pre-1953 sea ice is all from an old climatology, so the sea-ice anomalies are the same from year to year.

TRENBERTH: The use of covariances in either space or time depends very much on the nature of your signal. For instance, when you put in greenhouse warming a global-scale structure is superimposed on your fields, and larger spatial and temporal correlations are implied than you might otherwise get.

PARKER: Indeed. What would really be nice would be to have designators categorizing the quality of the data for each of the months you're presenting—for instance, with the Air Force's cloud-cover analysis, designating which are real data and which climatology.

KARL: David, when you said you tried to make the frequency of observations consistent with that of the nineteenth century, did you actually reduce the number of observations in a grid box to match the earlier ones?

PARKER: No, we had to use the box values, so we were just testing for errors resulting from the interpolation scheme. Selecting observations would have been a major computational exercise. But we are aware of that problem.

KEELING: When you are adding the COADS data to your own data set, what do you do when you have both? Can you identify the data sources and tell which are original observations?

PARKER: For the moment we are inserting the COADS SST only if the box is missing from our data set. We hope that with Scott Woodruffs help we will be able to blend the two sets, removing duplicates, by 1994. Ultimately we'd like to have everything that can be digitized.

KEELING: How hard would it be, then, to average your data by anything other than month?

PARKER: In our scheme the data are in five-day periods, or pentads, so that you could do half-months or three-month periods. We also have data sets of the bucket corrections we used so that anyone who wants to remove them from the data as they stand can do so.

JONES: We have now digitized the spring and summer months of that Danish sea-ice chart series that runs from the turn of the century to about 1960. I believe John Walsh is working on the winter ones.

WALSH: We're aggregating the narrative reports into a data set for winter. But I'd like to add that no one has yet taken advantage of the spatial character of these reports the way David has with SSTs. There is also a set of reports going back to the turn of the century from an Antarctic ice station, and some sort of spatial extrapolation procedure used on both of these might give us some measure of interannual variation in certain limited regions.

GHIL: In addition to data origin identifiers and such, I would really like to have error bars with your data.

PARKER: Well, there's a first approximation already there—the root-mean-square error fields—though they might be a lower limit, considering Tom's comment about the number of observations per grid box.

GROISMAN: I wanted to mention the dangers of using the German and Russian climatology of sea ice for the 1920s to 1940s, since sea ice was retreating considerably during this period. I hope that some day you will be able to use some Russian arctic observation data now stored in the Arctic Institute-—not digitized, of course.

LEVITUS: I'm happy to say that we at NOAA are making arrangements with the appropriate officials right now to do just that.

Decadal-Scale Variability of Ice Cover and Climate in the Arctic Ocean and Greenland and Iceland Seas

LAWRENCE A. MYSAK1

ABSTRACT

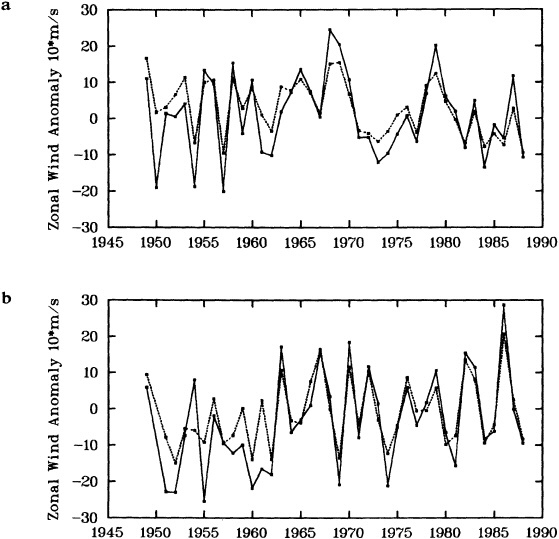

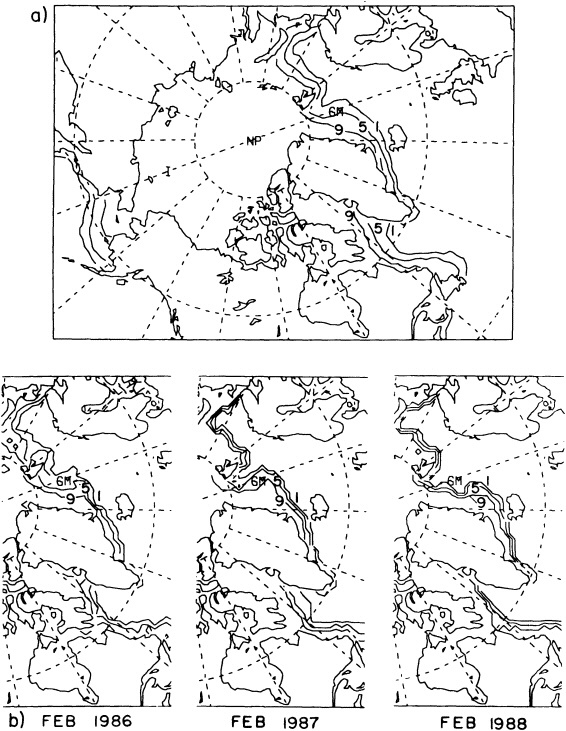

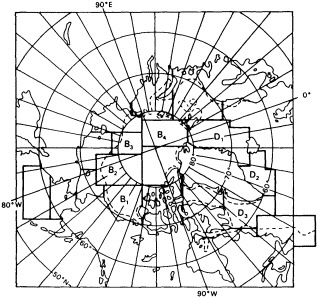

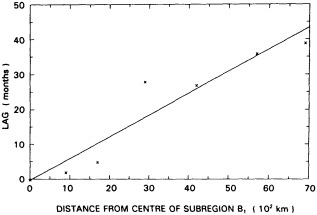

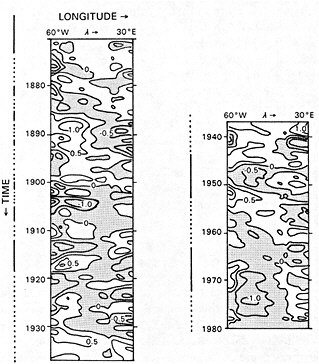

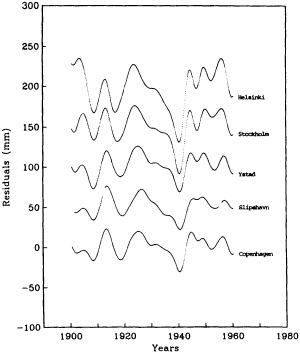

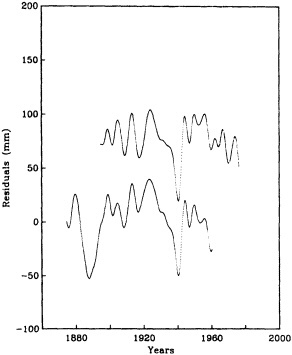

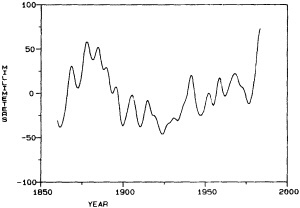

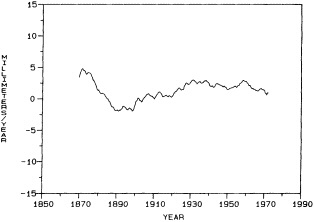

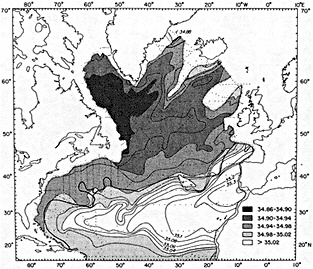

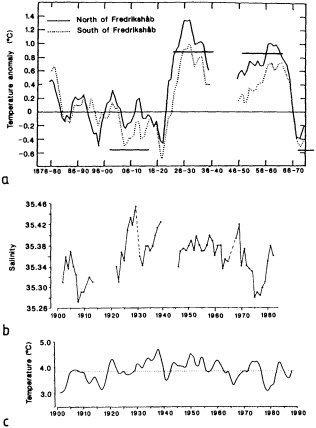

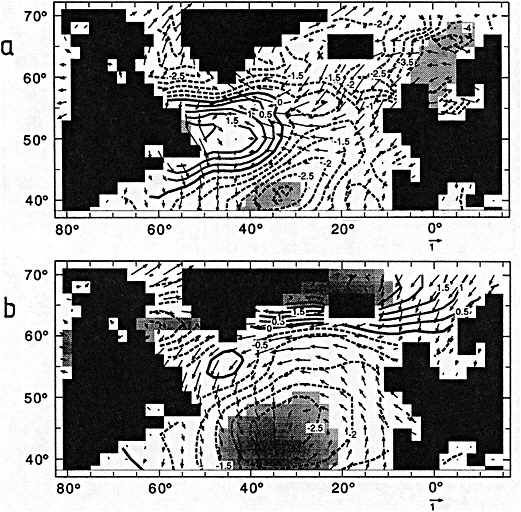

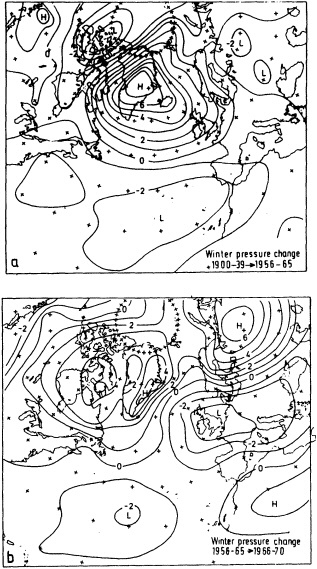

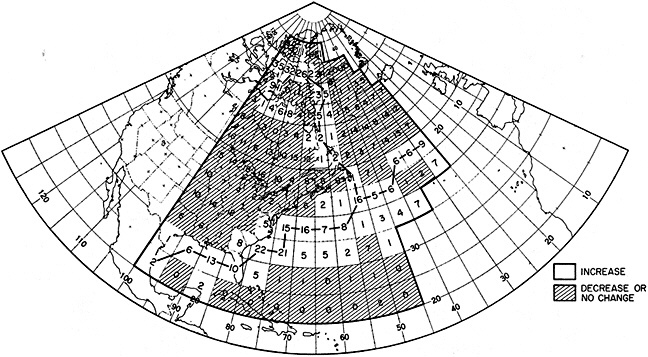

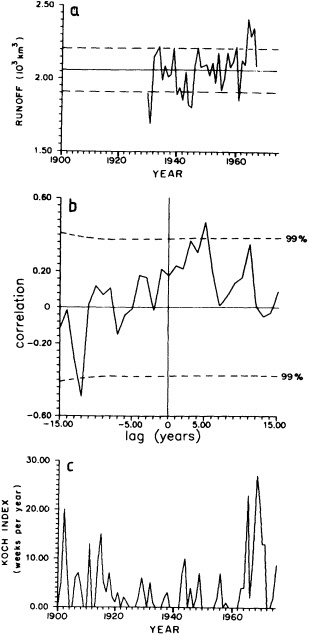

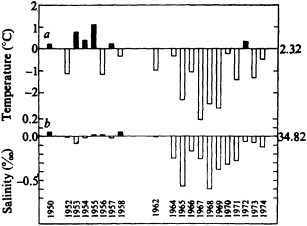

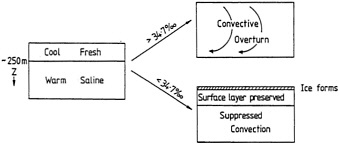

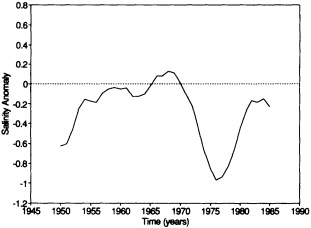

Analyses of 90 years of sea-ice concentration and ice-limit data from the Arctic Ocean and marginal seas reveal the presence of decadal-scale fluctuations in sea-ice extents, especially in the Greenland and Iceland seas. It has recently been proposed (Mysak et al., 1990) that such fluctuations may be part of an interdecadal Arctic climate cycle that can be described in terms of a reversing or negative feedback loop. An important property of this cycle is the suppression, every other decade, of convective overturning in the Iceland Sea for several winters (which occurred, for example, at the time of the "Great Salinity Anomaly" (GSA) in the 1960s, when sea-ice extents were also large). Such anomalies are preceded by increases in runoff and ice production in the western Arctic, which, it is argued, may partly cause such events as the GSA because of ice-anomaly advection from the Arctic into the Greenland Sea and subsequent ice melt in the Iceland Sea to the south. To find further evidence for this Arctic climate cycle, a lagged cross-correlation analysis of regional ice anomalies derived from sea-ice concentration data for the period 1953 to 1988 has been performed by Mysak and Power (1992). They found that both positive and negative ice anomalies in the Beaufort Sea consistently lead those in the Greenland and Iceland seas by 2 to 3 years. Mysak and Power also showed that high Mackenzie River runoffs in the mid-1980s led positive ice and negative salinity anomalies in the Greenland and Iceland seas in the late 1980s, which together resemble another (although weaker) GSA-like event.

A Boolean delay-equation model of this cycle has recently been developed by Darby and Mysak (1993). Among other things, they found that both ice and upper-ocean salinity advections from the western Arctic through to the Greenland and Iceland seas are needed in the model to create the observed structure of the Great Salinity Anomaly in the Iceland Sea.

INTRODUCTION

Over the past two decades there have been many studies of the nature and causes of interannual variability of sea-ice cover in the Arctic Ocean and marginal seas. Observational studies have been carried out by Walsh and Johnson (1979), Mysak and Manak (1989), Parkinson (1991), and others, whereas model simulations of atmospherically forced interannual variability of sea-ice extent and concentration have been performed by, for example, Walsh et al. (1985) and Fleming and Semtner (1991). Mysak and Manak (1989) also noted that the low-pass filtered areal sea-ice anomalies in the Barents and Greenland seas contain well-defined decadal-scale fluctuations, and they hypothesized that some of these might be related to the Great Salinity Anomaly (GSA; see Dickson et al., 1988), a widespread freshening of the surface waters in the subpolar gyre of the North Atlantic during the 1960s and 1970s.