6

Accelerators and Detectors: The Tools of Elementary-Particle Physics

INTRODUCTION

A variety of tools are required to perform the observations that form the basis of our current understanding of elementary-particle physics as developed through the twentieth century and presented in the preceding chapters. This chapter describes the tools that elementary-particle physicists use to observe their world: particle accelerators and particle detectors. For the past 60 years these devices have been the instruments of choice for research on elementary particles. During this period the historic advances in elementary-particle physics have been driven by, and inseparable from, advances in accelerator and detector technologies.

Elementary-particle physics (EPP) is distinguished from other accelerator-based sciences by its reliance on accelerators operating at the highest energies attainable with present technology, the "energy frontier." To sustain continued progress in elementary-particle physics it is necessary to create conditions under which elementary particles (protons, electrons, muons, neutrinos, and their antiparticles) interact at extremely high energies and in quantities sufficient to allow observation of extremely rare processes. Such conditions do not exist naturally on Earth, and cosmic rays of sufficiently high energy are too rare. This need has driven the construction of ever larger accelerator facilities and increasingly large and complex particle detectors.

Note: This chapter contains more technically detailed information than other chapters in the report. The interested reader is encouraged to read the chapter in its entirety. However, the sections "Performance of Existing Accelerators," "Accelerator Facilities Under Construction," and "Particle Detector Topologies" can be omitted without missing the essence of this report.

The two most important properties characterizing the utility of a facility for EPP research are energy and luminosity. Today, physicists are able to produce collisions between elementary particles with energies close to 1 TeV (10¹² electron volts), the energy a singly charged particle would gain from a 10¹² V source. This represents a millionfold increase since the invention of the cyclotron 65 years ago (the difference in scale is shown in Figure 6.1). Until the 1960s, accelerator-based elementary-particle experiments relied exclusively on directing particle beams onto bulk matter, the so-called stationary target configuration. However, over the past 30 years, energy performance has been greatly enhanced by the development of the "particle collider," an accelerator configuration in which particles and/or antiparticles collide head-on. As described in Chapter 2, the collider configuration provides the most efficient mechanism for translating beam energy into collision energy and thus provides the most direct access to the energy frontier.
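The advantage of the collider configuration can be made concrete with standard relativistic kinematics. The following is a minimal sketch rather than anything taken from the report: the function names are illustrative, the proton rest energy is rounded to 0.938 GeV, and the stationary target is assumed to be a single proton at rest.

```python
import math

PROTON_REST_ENERGY_GEV = 0.938  # rounded value, assumed for this sketch


def collider_cm_energy(beam_energy_gev: float) -> float:
    """Center-of-mass energy for two equal-energy beams colliding head-on."""
    return 2.0 * beam_energy_gev


def fixed_target_cm_energy(
    beam_energy_gev: float,
    beam_mass_gev: float = PROTON_REST_ENERGY_GEV,
    target_mass_gev: float = PROTON_REST_ENERGY_GEV,
) -> float:
    """Center-of-mass energy for a beam particle striking a particle at rest.

    Uses s = m_beam^2 + m_target^2 + 2 * E_beam * m_target (units with c = 1).
    """
    s = beam_mass_gev**2 + target_mass_gev**2 + 2.0 * beam_energy_gev * target_mass_gev
    return math.sqrt(s)


if __name__ == "__main__":
    # Tevatron in collider mode: two 900-GeV beams.
    print(collider_cm_energy(900.0))
    # Tevatron in stationary target mode: an 800-GeV proton on a proton at rest.
    print(fixed_target_cm_energy(800.0))
```

Under these assumptions two 900-GeV beams yield 1,800 GeV, whereas an 800-GeV beam on a stationary proton yields only about 39 GeV, which is why the collision energy of a stationary target facility grows only as the square root of the beam energy.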

The luminosity of a facility is a measure of the rate at which particles collide. Luminosity is directly related to the intensity of the particle beam (or beams) employed (and, in a collider, to the size of the spot onto which the beams are focused). Elementary-particle physicists measure luminosity in units of inverse square centimeters times inverse seconds (cm⁻² s⁻¹). This allows one to calculate an event rate by multiplying luminosity by the effective cross-sectional area of the particles that are colliding. Typical luminosities are in the range 10³⁰ to 10³⁵ cm⁻² s⁻¹. Such large luminosities are required because the effective areas of the colliding particles are so small. For example, high-energy electrons have an effective area for producing Z⁰ particles of only about 10⁻³² cm², leading to an interaction rate of one every 10 seconds in a facility operating with a luminosity of 10³¹ cm⁻² s⁻¹.
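The event-rate arithmetic in the paragraph above can be sketched in a few lines. This is an illustrative calculation only; the function names are hypothetical, and the inputs are the Z⁰ example values quoted in the text.

```python
def event_rate_per_second(luminosity_cm2_s: float, cross_section_cm2: float) -> float:
    """Event rate = luminosity (cm^-2 s^-1) times effective area (cm^2)."""
    return luminosity_cm2_s * cross_section_cm2


def seconds_between_events(luminosity_cm2_s: float, cross_section_cm2: float) -> float:
    """Average waiting time between events, the inverse of the event rate."""
    return 1.0 / event_rate_per_second(luminosity_cm2_s, cross_section_cm2)


if __name__ == "__main__":
    luminosity = 1e31      # cm^-2 s^-1, from the text
    z0_area = 1e-32        # cm^2, effective area for Z0 production, from the text
    print(event_rate_per_second(luminosity, z0_area))   # about 0.1 events per second
    print(seconds_between_events(luminosity, z0_area))  # about 10 seconds
```

Because the rate is a simple product, a tenfold increase in luminosity shortens the average wait between events by the same factor.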

The highest luminosities are attained at stationary target facilities where one can use a dense solid as a target. At the Brookhaven Alternating Gradient Synchrotron (AGS), for example, several trillion protons can be made to interact with stationary targets every second. Stationary target facilities are often used to produce intense beams of particles that cannot be accelerated or stored in collider facilities because of their short lifetimes (muons, charged K and π mesons) or their lack of electric charge (neutrons and neutrinos).

Luminosity at collider facilities is much lower due to the low density of the beams relative to ordinary matter. However, for observations undertaken at these machines, the increased operating energy more than compensates for the lower luminosity. At the Fermilab Tevatron, for example, current operations produce about 500,000 annihilations every second between protons and antiprotons in the two countercirculating beams. Experimenters were recently able to identify approximately 100 examples of production of the top quark based on a year of operations at this facility. The observation and measurement of such extremely rare processes require highly sophisticated particle detectors supported by state-of-the-art computer facilities.

Particle beams contain anywhere between 10¹⁰ and 10¹⁴ particles. Although the total mass contained in these beams is minuscule, less than one ten-billionth of a gram, the energy is contained within an incredibly small volume. Beam sizes range from the size of a human hair, in the Fermilab Tevatron, to a hundred times smaller in the Stanford Linear Collider (SLC) at SLAC.

The detectors of elementary-particle physics are used to observe the debris produced in a single collision of two individual particles, an "event." Such events often produce up to a hundred individual particles emanating from a small region surrounding the "interaction point." The job of the detector is to characterize an event in terms of the energy (or momentum) and type of particles produced. This characterization is based on reconstruction of the tracks, or footprints, left by the particles in the detector. After reconstruction of an event, the elementary-particle physicist must interpret the event in terms of the underlying physical processes involved. An example of an event in which a pair of top quarks is produced is shown in Figure 4.6.

FIGURE 6.1 (a) The 11-inch cyclotron in a Berkeley laboratory in the early 1930s, in contrast with (b) a sector of the Tevatron in the mid-1980s. (Courtesy of the Lawrence Berkeley National Laboratory and the Fermi National Accelerator Laboratory.)

Over the preceding decades, the need for ever higher energy has led to the construction of ever larger (and more expensive) facilities. As cost and complexity have increased, the number of such facilities has decreased to the point that today there are nine major elementary-particle physics laboratories in the world. These laboratories and their operating facilities are listed in Table 6.1.

The highest-energy collider operational in the world today is the Fermilab Tevatron, situated in Batavia, Illinois. This facility accelerates protons and antiprotons to 900 GeV (1 GeV = 10⁹ eV) per beam and is based on superconducting magnet technology. The Tevatron supports operations in both collider and stationary target mode and will remain the highest-energy facility in the world until the initiation of operations at the Large Hadron Collider (LHC) facility in Geneva, Switzerland, currently expected in the middle of the next decade. The SLC in Palo Alto, California, is an electron-positron (e⁺e⁻) collider capable of producing collisions at 45 GeV per beam. It is one of two facilities in the world capable of direct production of the Z⁰ boson and the only one with a polarized electron capability. The SLC is the first facility ever built as a "linear collider," the technology that appears to be required for further extension of the energy of electron-based facilities. Other facilities in the United States include the Cornell Electron Storage Ring (CESR), an electron-positron collider operating at approximately 5 GeV per beam for the study of B mesons (the highest-luminosity collider ever built), and the AGS at Brookhaven National Laboratory, a 30-GeV proton facility utilized for a variety of high-precision measurements, with the highest beam intensity available for elementary-particle physics in the world. Major facilities overseas include the Large Electron-Positron collider (LEP) at CERN (the European Laboratory for Particle Physics), the highest-energy e⁺e⁻ collider ever constructed; the electron-proton collider, HERA, at the DESY laboratory in Hamburg; and modest-energy electron colliders in Beijing and Novosibirsk. (The facility at the KEK laboratory in Japan is currently being upgraded, as discussed later in this chapter.)

TABLE 6.1a Elementary-Particle Physics Facilities Operational in the World Today: Collider Facilities

| Laboratory, Facility | Beam Energy (GeV) | Center-of-Mass Energy (GeV) | Particle Types | Luminosity (cm⁻² s⁻¹) | Start of Operations | Location |
|---|---|---|---|---|---|---|
| Fermi National Accelerator Laboratory, Tevatron | 900 | 1,800 | Proton-antiproton | 2 × 10³¹ | 1986 | Batavia, Illinois |
| Stanford Linear Accelerator Center, SLC | 45 | 90 | Electron-positron | 1 × 10³⁰ | 1989 | Palo Alto, California |
| Cornell University, CESR | 5 | 10 | Electron-positron | 4 × 10³² | 1980 | Ithaca, New York |
| CERN, LEP | 91 | 182 | Electron-positron | 3 × 10³¹ | 1989 | Geneva, Switzerland |
| KEK, Tristanᵃ | 32 | 64 | Electron-positron | 1 × 10³¹ | 1986 | Tsukuba, Japan |
| Institute of High Energy Physics, BEPC | 2 | 4 | Electron-positron | 6 × 10³⁰ | 1989 | Beijing, China |
| Budker Institute of Nuclear Physics, VEPP-2M | 0.7 | 1.4 | Electron-positron | 3 × 10³¹ | 1989 | Novosibirsk, Russia |
| DESY, HERA | 30 (electrons), 820 (protons) | 310 | Electron-proton | 3 × 10³⁰ | 1991 | Hamburg, Germany |

ᵃ Operations at Tristan ceased in December 1995.

TABLE 6.1b Elementary-Particle Physics Facilities Operational in the World Today: Stationary Target Facilities

| Laboratory, Facility | Beam Energy (GeV) | Center-of-Mass Energy (GeV) | Particle Type | Beam Intensity (particles/s) | Start of Operations | Location |
|---|---|---|---|---|---|---|
| Fermi National Accelerator Laboratory, Tevatron | 800 | 39 | Proton | 4 × 10¹¹ | 1983 | Batavia, Illinois |
| Brookhaven National Laboratory, AGS | 30 | 8 | Proton or heavy ion | 3 × 10¹³ | 1960 | Upton, New York |
| Stanford Linear Accelerator Center, LINAC | 50 | 10 | Electron | 1 × 10¹³ | 1967 | Palo Alto, California |
| CERN, SPS | 440 | 29 | Proton | 4 × 10¹² | 1976 | Geneva, Switzerland |
| KEK, KEK-PS | 12 | 5 | Proton | 2 × 10¹² | 1976 | Tsukuba, Japan |

PARTICLE ACCELERATORS

The term "particle accelerator" refers generically to a machine in which elementary particles can be accelerated and/or stored. A "collider" is a special kind of accelerator in which particle beams are directed at each other, producing head-on collisions. In all accelerators constructed to date, the accelerated particles are both stable and electrically charged, limiting the possibilities to protons, electrons, and their antiparticles (although ions are often used in applications outside EPP). Fortunately, it is possible to create "particle beams" of a variety of short-lived or neutral particles. Such particle beams are usually produced through interaction of a high-energy proton beam with a stationary target, resulting in a "secondary particle beam," and are a standard feature of proton-based stationary target facilities.

Protons and electrons used in particle accelerators are relatively easy to produce. Protons are extracted from ionized hydrogen gas, whereas electrons are emitted from a cathode either under the influence of heating (as in a television or computer monitor) or under the influence of incident laser light. Antiprotons and antielectrons (also known as positrons) do not occur naturally and so have to be created. Both are produced by directing particle beams onto pieces of ordinary bulk matter. In practice it is much easier to produce positrons than antiprotons because of the much lower mass of the positron. By interacting electrons with lead it is possible to produce approximately one positron for every electron, whereas it takes approximately 60,000 protons interacting in a nickel target to produce a single usable antiproton.

Performance of Existing Accelerators

Modern accelerators used in support of elementary-particle physics research come in two basic types: linear accelerators (linacs for short) and synchrotrons. Both types trace their lineage back to the late 1920s and early 1930s. It is remarkable that although both the size and the technological basis of these machines have changed dramatically over the past 60 years, the basic operating principles remain the same: particle beams are accelerated by electric fields and confined by magnetic fields.

Linear accelerators run in straight lines. The energy of the beam delivered from a linac is simply the product of the average applied electric field and the length. The largest linac built to date is the SLAC linac, capable of accelerating electrons to 50 GeV. This device is roughly 3 km (2 miles) long, having an average accelerating field of about 17 × 10⁶ V/m (see Figure 6.2). Increasing the energy performance in linacs depends on raising the electric field that can be applied to the beam. Accelerating systems currently under development are aimed at doubling to tripling the accelerating fields in these facilities.

Circular accelerators are descendants of the early cyclotrons and utilize magnetic fields to bring particles around a circle so that the accelerating electric field can be applied repeatedly to the beam to increase its energy. In a synchrotron, the recirculation circle is fixed, so the confining magnetic field must be increased in direct proportion to the energy of the beam. In this case the energy of the synchrotron is the product of the number of revolutions a particle makes around the accelerator and the average accelerating voltage seen on each revolution. The Fermilab Tevatron and the Brookhaven AGS are synchrotrons. The Tevatron achieves its operating energy of 900 GeV through the repeated application of an accelerating voltage of 10⁶ V over approximately a million revolutions of the machine.
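The two energy relations described above, field times length for a linac and voltage per turn times number of turns for a synchrotron, can be checked with a short calculation. This is a sketch with illustrative function names; it assumes a singly charged particle, so that a potential of one volt contributes one electron volt, and it uses the rounded figures quoted in the text.

```python
EV_PER_GEV = 1.0e9


def linac_energy_gev(average_field_v_per_m: float, length_m: float) -> float:
    """Energy gained by a singly charged particle: average field times length."""
    return average_field_v_per_m * length_m / EV_PER_GEV


def synchrotron_energy_gev(volts_per_turn: float, turns: float) -> float:
    """Energy gained by a singly charged particle: voltage per turn times turns."""
    return volts_per_turn * turns / EV_PER_GEV


if __name__ == "__main__":
    # SLAC linac: about 17 x 10^6 V/m over roughly 3 km.
    print(linac_energy_gev(17e6, 3000.0))    # about 51 GeV
    # Tevatron: about 10^6 V per turn over approximately a million turns.
    print(synchrotron_energy_gev(1e6, 1e6))  # about 1,000 GeV
```

Both results land close to the quoted operating energies (50 GeV and 900 GeV); the residual differences reflect the rounding of the input figures.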

In principle, the energy could be increased indefinitely in a synchrotron by continued application of the accelerating voltage over many revolutions, but the product of the confining magnetic field and accelerator radius must be high enough to keep the particle beam circulating at the highest energy. The maximum energy attainable in proton synchrotrons has been increased most recently through the application of superconducting magnet technology. This technology has allowed more than a doubling of the magnetic fields that can be produced and hence more than a doubling of the attainable energy for a fixed-circumference ring. The Fermilab Tevatron was the first high-energy accelerator ever built utilizing superconducting magnets. Tevatron magnets operate at 44,000 gauss (G). (By way of reference, the magnetic field of the Earth is about 0.5 G and the largest field achievable in a room-temperature electromagnet is about 20,000 G.) Magnets developed for the canceled Superconducting Super Collider (SSC) project operated at 66,000 G, whereas those under development for the LHC project in Europe are specified at 90,000 G.

In contrast, the energy of electron synchrotrons is limited not by the ability to produce large magnetic fields but by the need to replenish power radiated in the form of synchrotron light. This phenomenon, known as "synchrotron radiation," occurs because high-energy electrons lose energy by radiating light when forced to travel in circles. The effect is so strong that every doubling of the electrons' energy is accompanied by a sixteenfold increase in radiated power.
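The fourth-power energy dependence stated above can be illustrated with a one-line scaling function. This is a minimal sketch: the function name is hypothetical, and it assumes the ring radius and circulating beam current are held fixed while only the beam energy changes.

```python
def radiated_power_factor(energy_factor: float) -> float:
    """Factor by which synchrotron-radiated power grows when the beam
    energy is multiplied by energy_factor (fixed radius and beam current)."""
    return energy_factor ** 4


if __name__ == "__main__":
    print(radiated_power_factor(2.0))  # doubling the energy: 16 times the power
    print(radiated_power_factor(3.0))  # tripling the energy: 81 times the power
```

The steepness of this scaling is what makes each further step in electron energy so costly in a circular machine.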

However, this effect is reduced in direct proportion to the size of the circle traced out by the electron. For an accelerator such as LEP the radiated power is between 10 and 20 MW, about the power consumption of a large town. To keep the power manageable, the radius chosen is 4.2 km, and the magnetic field required to guide a 90-GeV electron beam is a mere 720 G. It is illustrative of the difference between electron and proton synchrotron capabilities that this same tunnel will support a 7,000-GeV proton beam once the LHC accelerator has been installed. LEP is the largest particle accelerator in the world today. The very strong energy dependence of synchrotron-radiated power is reason to believe that it will be difficult to extend further the energy frontier for electrons by utilizing circular accelerators and storage rings. This belief has provided the motivation for construction of a colliding linac, the SLC, as the first example of the technology that will be required to extend the electron energy frontier.
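The guide field quoted for LEP follows from the standard relation between momentum, magnetic field, and bending radius for a singly charged particle, p [GeV/c] ≈ 0.2998 × B [T] × ρ [m]. The sketch below applies it to the figures in the text; the function name is illustrative, and the calculation assumes an idealized ring of uniform field with the full 4.2-km radius.

```python
GEV_PER_TESLA_METER = 0.2998  # p [GeV/c] = 0.2998 * B [T] * rho [m], unit charge
GAUSS_PER_TESLA = 1.0e4


def bending_field_gauss(momentum_gev: float, radius_m: float) -> float:
    """Uniform field needed to hold a singly charged particle of the given
    momentum on a circle of the given radius."""
    field_tesla = momentum_gev / (GEV_PER_TESLA_METER * radius_m)
    return field_tesla * GAUSS_PER_TESLA


if __name__ == "__main__":
    # LEP: 90-GeV electrons on a 4.2-km radius.
    print(bending_field_gauss(90.0, 4200.0))  # a little over 700 G
```

The idealized result is close to the 720 G quoted above. In a real ring the dipole magnets occupy only part of the circumference, so the field inside the magnets is somewhat higher than this ring-averaged estimate.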

It is evident that much higher energies are achievable in proton accelerators than in electron accelerators. This situation is expected to persist into the foreseeable future. As explained in Chapter 2, electron accelerators remain competitive because the electron is a more fundamental particle than the proton. Accompanying luminosities are similar in the two types of accelerators.

Accelerator Facilities Under Construction

Continued pursuit of elementary-particle physics requires access to facilities of continually improving performance. A number of upgrades to existing EPP facilities are currently under way in the United States and abroad, aimed at supporting the research needs of the elementary-particle physics community over the next 10 years. These projects are listed in Table 6.2. Upgrades to existing facilities are highly cost-effective, building on the significant investments in the "base" laboratory. However, it should be noted that each of the projects listed targets increased luminosity; only the LHC provides a significant extension of the energy frontier as well.

The Main Injector project at Fermilab involves construction of a new rapid-cycling 150-GeV proton accelerator that will support an increase in the intensity of the proton and antiproton beams in the Tevatron. The goal is to boost Tevatron collider luminosity by a factor of five beyond current operations. Additionally, a new antiproton storage ring, known as the Recycler, has recently been incorporated into the project. This ring holds the promise of a further increase of a factor between 2 and 10 in luminosity. A secondary benefit of the Main Injector project will be the creation of a new capability for high-intensity stationary target operations at 120 GeV. The Main Injector accelerator is based on conventional, room-temperature magnet technology, whereas the Recycler is designed to utilize permanent magnets, the first large-scale use of permanent magnet technology in a storage ring.

TABLE 6.2a Major Upgrades and New Facilities Under or Approved for Construction: Facilities Upgrades

| Laboratory, Project | Operational Goal | Start of Operations | Location |
|---|---|---|---|
| Fermi National Accelerator Laboratory, Main Injector | Proton-antiproton collisions at 2,000 GeV and 2 × 10³² cm⁻² s⁻¹; 120-GeV protons for stationary target operations | 1999 | Batavia, Illinois |
| Stanford Linear Accelerator Center, PEP-II | Asymmetric electron-positron collisions at 10 GeV and 3 × 10³³ cm⁻² s⁻¹ | 1999 | Palo Alto, California |
| Cornell University, CESR | Symmetric electron-positron collisions at 10 GeV and 2 × 10³³ cm⁻² s⁻¹ | 1998 | Ithaca, New York |
| CERN, LEP-II | Electron-positron collisions at 192 GeV and 1 × 10³² cm⁻² s⁻¹ | 1998 | Geneva, Switzerland |
| KEK, B Factory | Asymmetric electron-positron collisions at 10 GeV and 3 × 10³³ cm⁻² s⁻¹ | 1999 | Tsukuba, Japan |

TABLE 6.2b Major Upgrades and New Facilities Under or Approved for Construction: New Facilities

| Laboratory, Project | Operational Goal | Start of Operations | Location |
|---|---|---|---|
| Brookhaven National Laboratory, RHIC | Polarized proton-proton collisions at 500 GeV and 2 × 10³² cm⁻² s⁻¹ | 2000 | Upton, New York |
| Frascati, DAPHNE | Symmetric electron-positron collisions at 1 GeV and 5 × 10³² cm⁻² s⁻¹ | 1998 | Frascati, Italy |
| CERN, LHC | Proton-proton collisions at 14,000 GeV and 1 × 10³⁴ cm⁻² s⁻¹ | 2005 | Geneva, Switzerland |

The PEP-II project at SLAC is an upgrade of the original Positron-Electron Project (PEP) electron-positron colliding beam facility to support investigation of CP violation in the B-meson system. The facility is based on two rings operating at different energies, roughly 3 and 8 GeV. Technology challenges are related to the very high circulating currents, which require state-of-the-art feedback systems. This facility will also represent the first operation of an e⁺e⁻ colliding beam facility with unequal beam energies. A similar facility is under construction at KEK in Japan.
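For beams of unequal energy colliding head-on, the center-of-mass energy is approximately twice the geometric mean of the two beam energies when the particle rest masses are negligible, as they are for electrons at these energies. The sketch below applies this standard relation to the rounded ring energies quoted above; the function name is illustrative.

```python
import math


def head_on_cm_energy_gev(energy_1_gev: float, energy_2_gev: float) -> float:
    """Center-of-mass energy for two ultrarelativistic beams colliding head-on,
    neglecting rest masses: sqrt(s) = 2 * sqrt(E1 * E2)."""
    return 2.0 * math.sqrt(energy_1_gev * energy_2_gev)


if __name__ == "__main__":
    print(head_on_cm_energy_gev(3.0, 8.0))  # asymmetric rings: just under 10 GeV
    print(head_on_cm_energy_gev(5.0, 5.0))  # symmetric rings at 5 GeV per beam: 10 GeV
```

With the rounded 3- and 8-GeV values the result is just under 10 GeV, comparable to what a symmetric machine such as CESR reaches with 5 GeV per beam.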

Cornell is also upgrading the very successful CESR electron-positron collider to achieve higher luminosity, again for detailed study of the B-meson system. In contrast to the PEP-II facility, the CESR upgrade will continue to utilize equal energy beams circulating in a common storage ring.

A new colliding beam facility, the Relativistic Heavy Ion Collider (RHIC), is under construction at Brookhaven National Laboratory, with operations scheduled to commence in 1999. This facility is being funded as a nuclear physics project and will have as a primary operational mode the collision of heavy ions, up to fully ionized gold (Au⁷⁹⁺). RHIC will also have a capability for producing collisions between polarized protons at 500 GeV in the center of mass. The RHIC facility is based on superconducting magnet technology.

Overseas, a significant upgrade to the energy of the LEP collider is proceeding with a goal of achieving 96 to 100 GeV per beam by 1998 to 1999. However, the major initiative worldwide is the LHC. This facility, with seven times the energy of the Fermilab Tevatron, is approved for construction at CERN. With initiation of operations at the LHC projected for the year 2005, the energy frontier will move from Fermilab in the United States to CERN in Europe. The LHC is designed to collide two countercirculating proton beams at a center-of-mass energy of 14 TeV. The LHC will be constructed within the existing 26-km LEP tunnel and is based on the superconducting magnet technology first developed at the Fermilab Tevatron and improved on at Brookhaven, HERA, and the SSC.

Options for Future Facilities

A variety of projects that could extend the energy frontier up to or beyond the LHC are in various stages of development in the United States and abroad. Possibilities include electron-positron colliders operating in the range of 0.5 to 1.5 TeV, muon colliders operating in the range of 0.5 to 4.0 TeV, and very large hadron colliders with energies in the range of 50 to 100 TeV. Any such facility would be required to support a factor of about 100 increase in luminosity relative to current achievements. These possibilities are summarized in Table 6.3. All facilities listed are likely to have construction costs in excess of $1 billion. Cost efficiencies in manufacturing, installation, and operations are a major concern of the development efforts for each.

TABLE 6.3 Potential Major Facilities to Be Constructed After the Year 2005

| Facility | Particle Types | Energy (center of mass) | Enabling Technologies |
|---|---|---|---|
| Next-generation linear collider | Electron-positron | 0.5-1.5 TeV | Microwave power sources and accelerating structures. Cost-efficient manufacturing |
| Muon collider | Muon-antimuon | 0.5-4.0 TeV | Superconducting magnets and accelerating structures. Beam cooling. High-intensity particle beams and targeting |
| Next-generation large hadron collider | Proton-proton | 50-100 TeV | Superconducting magnets. Cost-efficient manufacturing and tunneling |

Research and development programs aimed at new electron colliders are by far the most advanced of the areas listed above. Because of the relationship between radiated power, energy, and accelerator size, all electron collider designs currently under consideration are based on the linear collider configuration pioneered by SLAC. Most effort to date has been devoted to investigating the 0.5 to 1.0 TeV range. Any facility operating in this range is expected to have a linear extent of 30 to 50 km. Major R&D efforts are currently centered at SLAC in this country, KEK in Japan, DESY in Germany, and CERN in Switzerland. Communication and cooperation among the major development centers have been a significant feature of the R&D program for many years and are expected to lay the groundwork for international cooperation on a next-generation linear collider when and if one is built.

The SLAC and KEK approaches to linear colliders are quite similar: both have developed designs based on room-temperature copper accelerating structures driven by high-efficiency radio-frequency power sources known as klystrons. The DESY approach differs in that it relies on a superconducting accelerating structure, and CERN is exploring accelerating structures driven by a dedicated electron "drive" beam. The warm and superconducting approaches are complementary. Room-temperature designs require very small beam sizes (a few nanometers in height) and high bunch current. Component fabrication and alignment tolerances, precision control of beam trajectories, and removal of optical aberrations to high order are critical in these designs. Many of these tolerances are relaxed in the superconducting design. The trade-off is that superconducting accelerating structures are inherently more expensive and provide a lower accelerating gradient, thus requiring a facility nearly twice as long as one built with room-temperature structures. Proponents of each of these approaches are now pursuing integrated conceptual designs and cost estimates. The expectation is that such designs could be available in the next 3 to 5 years.

A significant effort directed toward developing design concepts for a muon collider has grown over the past several years in the United States. The muon collider is a facility in which muons are produced, accelerated, and collided at very high energies. No such facility has ever been built. The physics research supported by a muon collider would be similar to that supported by an electron collider. The virtue of the muon is that with a mass 200 times that of the electron, synchrotron radiation effects are greatly suppressed, affording the possibility of operations at higher energies. The downside is that muons are not stable: once produced they decay in a few thousandths of a second, leaving little time to accelerate and bring them into collision. A feasibility study has been developed for a facility operating in the 0.5 to 4.0 TeV range. It identifies significant issues that must be solved before a viable design for such a facility can be contemplated. Many of the required developments are symbiotic with R&D efforts on other projects. For example, proton targeting for muon production shares many issues with antiproton or neutron production, whereas the muon-accelerating structures required are very similar to those being developed for the linear collider effort at DESY. With sufficient support, a complete conceptual design for such a facility, if one can be built, could probably be forthcoming in the period 2005 to 2010.
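Both the advantage and the drawback of the muon can be put in rough numbers. The sketch below is illustrative only and is not taken from the feasibility study: the constants are rounded textbook values supplied here as assumptions (muon and electron rest energies, and a muon lifetime at rest of about 2.2 millionths of a second), and the beam energies are chosen to span the 0.5 to 4.0 TeV center-of-mass range mentioned above. It uses two standard results: a moving particle's lifetime is stretched by its Lorentz factor, and synchrotron-radiated power at fixed energy and radius scales as the inverse fourth power of the particle mass.

```python
MUON_REST_ENERGY_GEV = 0.10566       # rounded value, assumed for this sketch
ELECTRON_REST_ENERGY_GEV = 0.000511  # rounded value, assumed for this sketch
MUON_LIFETIME_AT_REST_S = 2.197e-6   # rounded value, assumed for this sketch


def lab_lifetime_s(beam_energy_gev: float) -> float:
    """Mean muon lifetime seen in the laboratory: rest lifetime times the
    Lorentz factor (beam energy divided by rest energy)."""
    lorentz_factor = beam_energy_gev / MUON_REST_ENERGY_GEV
    return lorentz_factor * MUON_LIFETIME_AT_REST_S


def radiation_suppression_factor() -> float:
    """Factor by which synchrotron-radiated power drops for a muon relative
    to an electron of the same energy on the same circle: (m_mu / m_e)^4."""
    return (MUON_REST_ENERGY_GEV / ELECTRON_REST_ENERGY_GEV) ** 4


if __name__ == "__main__":
    print(lab_lifetime_s(250.0))    # 0.5 TeV in the center of mass: about 5 ms
    print(lab_lifetime_s(2000.0))   # 4.0 TeV in the center of mass: about 40 ms
    print(radiation_suppression_factor())  # roughly two billion
```

Under these assumptions the dilated lifetime is measured in thousandths of a second, consistent with the figure in the text, while the radiated power is suppressed by a factor of order a billion relative to electrons.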

Scientists at a number of U.S. laboratories and universities have started to identify requirements for a new proton-proton collider with an energy reach a factor of approximately 10 beyond LHC. Although current hadron collider technology could probably form the basis for such a facility, the SSC experience has convinced those involved that significant reduction of construction and operating costs is absolutely critical. Two approaches are currently under study, one based on relatively low-field and one on very high-field superconducting magnets. Both approaches require advances in superconducting material and magnet technologies, as well as advances in tunneling technologies beyond the current state of the art, in order to achieve desired cost efficiencies. Such superconducting magnet R&D represents a natural outgrowth of existing programs at Fermilab, Brookhaven, and Lawrence Berkeley Laboratory, all of which are currently engaged in R&D in support of the LHC construction. Close cooperation with the domestic and foreign superconducting materials industry is expected to be mutually beneficial throughout this development period. It appears likely that, spurred by recent rapid advances in high-temperature superconducting technologies in industry, the next hadron collider beyond LHC will utilize these new developments. With sufficient developmental support, a viable conceptual design for a proton-proton collider at least seven times more powerful than LHC could probably be developed sometime from 2005 to 2010.

All the efforts described here require significant R&D to move forward. Lead times associated with the design and construction of new accelerators have become long enough that R&D into promising areas is required now if the United States is to be in a position to participate in, or host, a new forefront accelerator facility in the post-LHC era. R&D in support of a second-generation linear collider design is relatively advanced at this time but has to be continued through cost minimization studies and the development of a complete conceptual design. R&D in support of very large hadron colliders and muon colliders is in the early stages of development and has to be nurtured. Significant support will be required in the areas of superconducting materials, superconducting magnet design, superconducting accelerating structures, development of very high intensity proton beams, and ionization cooling if hadron or muon collider concepts are to be developed to a degree that will offer the United States viable choices for continued leadership in elementary-particle physics into the extended future.

DETECTORS IN ELEMENTARY-PARTICLE PHYSICS

The role of the accelerators described earlier is to bring high-energy particles into collision at a well-defined point in space, the interaction point. The interaction point is viewed by a detector whose job is to take a snapshot of the debris produced in the collision. Events are observed at rates up to several million per second in some experiments, and each event can involve the production of hundreds of particles. Of these collisions, only a very few are interesting and represent a phenomenon that has not been frequently observed before.

The frequency of collision and the volume of information required to characterize individual events constrain the methods of detection that can be employed. Many years ago it was possible to perform experiments in which the human played the primary role in the acquisition and storage of data. The physicist could observe, by eye, some flashes of light, indirectly from nuclear disintegrations, and then make some notes in a logbook. In modern detectors, such techniques have, by necessity, given way to a vast array of high technology often involving millions of the most advanced electronic circuits and the highest-performance computers available. The strategies involved in producing the event snapshot are discussed below. In general, the approaches utilized are common to lepton collider, hadron collider, fixed-target, and cosmic-ray experiments.

The snapshot ideally contains sufficient information to characterize any given event. The complete picture may be built up from detection of all the individual elementary particles in the event. These "elementary" particles may be discrete particles or they may be jets of hadronic particles. Sometimes the presence of a particle can be inferred only by invoking rather general laws of physics, such as momentum conservation, to determine what is "missing." An example is the original evidence for the existence of the neutrino.

Most particles produced in an interaction are long-lived, whereas a few may decay before they exit the detector, allowing observation of the decay products as an aid to identification of the parent particle. As an example, hadrons containing b quarks travel just a few millimeters before decaying. The decay vertex can be distinguished from the primary vertex by using very high resolution silicon-based detectors.

A given experiment is a collection of different detectors that, when assembled together about the interaction region, gives a complete picture of an event. Such assemblies are never perfect: any given design has its own strengths and weaknesses. Choices are driven by the physics interests. The individual building blocks can be employed in different environments in different ways, but the components of lepton collider experiments and hadron collider experiments are more remarkable for their commonality than their differences.

Particle Detection

The first step in constructing the event snapshot is to identify the presence of individual particles and to measure their tracks. The basis for detecting the presence of an individual particle is the exploitation of some well-understood physical process, typically the electromagnetic interaction of a charged particle with matter. For example, when Henri Becquerel discovered in 1896 that uranium was radioactive, he saw light traces in photographic paper. The light was produced by the ionization and subsequent de-excitation of some atoms by the passage of charged particles produced in the radioactive decay of uranium. This process is called scintillation and remains a very common detection method. If the same ionization process is induced by the passage of a charged particle through a gas in which there is a strong electric field, the liberated electron is accelerated in the direction of the field, and by collision with another atom, can produce a second ionization. The process can multiply into an avalanche of electrons. The result is an electrical impulse that can be detected.

An early example of the detection of individual particles using the ionization process is the familiar Geiger-Müller tube. A more modern example is the silicon strip detector. The understanding of the beautiful properties of semiconductors is responsible for the revolutionary advances that have made today's electronics industry possible. Over the last 15 years, new multichannel semiconductor detectors have also transformed major high-energy experiments by allowing a tenfold increase in the precision with which particle tracks can be identified.

The basic silicon strip detection device is a silicon diode. The material properties of silicon are such that the passage of a charged particle promotes electrons to the conduction band of the material in a manner analogous to the ionization of a gas. The electrons and their corresponding holes are then free to drift under the influence of an electric field. Thus, in the presence of a modest voltage applied between electrodes on either side of the silicon wafer, an electric pulse appears upon passage of a charged particle. What has made this type of detector so attractive for particle physicists is the ability to implant the appropriate electrodes with a separation of about 50 µm, allowing the position of a charged particle to be determined with a precision of about 10 µm. Figure 6.3 (in color well following p. 112) shows an example of particle tracks emanating from a particle interaction as captured by a silicon detector.

Other particle detection techniques are also frequently employed, two examples being Cerenkov detection and calorimetry. Cerenkov light is a phenomenon rooted in the fact that a particle can travel with a velocity greater than the speed of light in a medium (for example, a gas) that has a refractive index greater than 1. When this happens, the optical equivalent of a sonic boom is produced and light is emitted. Thus, the presence or absence of a light burst when a charged particle traverses a gas indicates whether the velocity of the particle is higher or lower than a certain value. Calorimetric detection methods, on the other hand, rely on attempting to have a particle interact with some material in such a manner that all of its energy is deposited in a measurable way. The generic name for devices utilizing this technique is "calorimeters." Calorimetry has the virtue of working equally well for particles with and without an electric charge.
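The threshold behavior of Cerenkov light can be made concrete with a short calculation. The sketch below assumes the standard condition that light is emitted when the particle speed, as a fraction of the speed of light in vacuum, exceeds 1/n for a medium of refractive index n. The refractive index, the approximate particle masses, and the function names are illustrative choices, not values taken from any particular detector.

```python
import math

def cherenkov_threshold_momentum(mass_gev, refractive_index):
    """Momentum (GeV/c) above which a particle of this mass emits Cerenkov light.

    Light is emitted when beta > 1/n, which is equivalent to
    p > m / sqrt(n**2 - 1) in units where c = 1.
    """
    return mass_gev / math.sqrt(refractive_index**2 - 1.0)

def radiates(momentum_gev, mass_gev, refractive_index):
    """True if a particle with this momentum and mass is above threshold."""
    beta = momentum_gev / math.hypot(momentum_gev, mass_gev)
    return beta > 1.0 / refractive_index

# Approximate masses in GeV/c^2 (rounded, for illustration only).
MASSES = {"electron": 0.000511, "pion": 0.1396, "kaon": 0.4937, "proton": 0.9383}

# Assumed refractive index for a hypothetical gas radiator.
N_GAS = 1.0005

thresholds = {name: cherenkov_threshold_momentum(m, N_GAS)
              for name, m in MASSES.items()}

if __name__ == "__main__":
    for name, p_min in thresholds.items():
        print(f"{name:8s} radiates above {p_min:8.3f} GeV/c")
```

With these assumed numbers, a 10 GeV/c pion is above threshold and a 10 GeV/c kaon is not, so the presence or absence of light separates the two once the momentum is known from tracking.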

Modern particle physics detectors are assembled from a wide array of individual detection elements. The elements each have different purposes and different capabilities. Some measure particle trajectories, others measure velocity, and still others measure total energy. Some can see neutral particles and some cannot. Often, information from a variety of detector elements is used to deduce the identity of a particle. As described below, physicists assemble detectors in a manner that allows for coordinated use of the information collected from all the detection elements to form the most accurate picture of the event possible.

Particle Detector Topologies

The goal of physicists assembling a variety of individual detection elements into an integrated detector is to obtain an accurate snapshot of an event. This usually means measuring the momentum and ascertaining the identity of as many of the particles produced in the event as possible. The individual techniques for particle detection and measurement utilized in modern particle detectors are broadly applicable to all types of experiments. However, the topologies of different detectors often differ considerably. In stationary target experiments, because of the high momentum of the beam particles, the particles produced generally tend to move in a small cone in the direction of the incident beam. Stationary target detectors therefore tend to be restricted to cones about the beam direction. A typical stationary target detector might measure 3 m in diameter and 100 m in length. In collider experiments, by contrast, the two colliding beams are usually of equal energy. Forward and backward tend to be equivalent, so there is a tendency to use a detector that extends approximately the same length in all directions, typically 5 to 10 m. Intermediate between these two extremes are detectors at colliders in which the beams are of unequal energy. There is a desire for coverage of all directions, with a strong emphasis on the direction of the higher-energy beam. Beyond this topological consideration, all detectors are very similar.

One of the striking aspects of a particle physics detector is the range of scales involved. Detectors for new experiments at the LHC at CERN will measure 10 m on a side, a volume of 1,000 m³. Their weight will be tens of thousands of tons. In contrast, embedded in the heart of the detector will be devices 3 × 10⁻⁴ m thick, capable of measuring position with a precision of 1 × 10⁻⁵ m. In distances, the range of scales is 1 million!

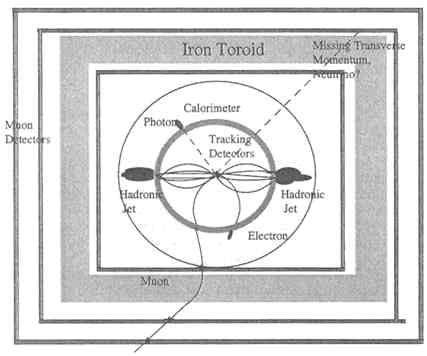

Figure 6.4 shows a typical topology for a detector utilized in a colliding beam experiment. The integration of a variety of individual detection elements into a large detector represents a particular strategy for creating the event snapshot. By starting from the point of interaction and moving outward, it makes sense to determine a particle trajectory before the particle is deflected or absorbed through interaction with dense matter. Nearly all detectors measure particle momenta with tracking chambers immersed in a magnetic field. In the generic design shown, a solenoid magnet is placed so as to enclose the tracking volume inside the particle calorimeters. The calorimeters are near the outside because the particles, with a few exceptions as noted below, are completely absorbed in these devices.

Layer 1: Charged-Particle Tracking Volume

Large arrays of wire or silicon ionization detectors can be assembled to measure the trajectories, or tracks, of individual particles. Trajectories are used to determine the point of origin and momentum of the particles. Closest to the primary interaction point, one usually deploys a series of layers of the highest precision detectors possible. Typically these are silicon strip detectors. These detectors are used to identify unstable particles that travel a short distance from the interaction point before transmuting into different particle types (e.g., B mesons) as shown in Figure 6.3. The precision with which a secondary vertex can be distinguished from the primary vertex is determined by the closeness of the innermost detector to the interaction point and the measurement precision of the detector.

FIGURE 6.4 A generic collider detector with particle signatures superimposed.

Outside the silicon detectors, the remainder of the tracking volume is filled with detectors of moderately high precision immersed in a magnetic field. The goal here is to measure the momentum of individual charged particles by measuring the trajectories as they traverse the magnetic field. Momentum is calculated by using the property that a charged particle travels on a circular trajectory in the presence of a magnetic field. The radius of curvature of the orbit is directly proportional to the momentum of the particle and inversely proportional to the strength of the magnetic field. Unfortunately, the resolution of this technique decreases as the particle momentum increases.
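The relation between curvature and momentum, and the loss of resolution at high momentum, can be illustrated numerically. The sketch below assumes the standard rule of thumb p ≈ 0.2998 · B · R (p in GeV/c, B in tesla, R in meters) for a singly charged particle, where p is the momentum component perpendicular to the field. It also assumes that the curvature is estimated from the sagitta of the track over a chord of fixed length, with a fixed position error. The field, chord length, and error values in the usage lines are hypothetical.

```python
def momentum_from_radius(radius_m, field_tesla, charge=1):
    """Transverse momentum in GeV/c from the radius of curvature."""
    return 0.2998 * abs(charge) * field_tesla * radius_m

def sagitta(momentum_gev, field_tesla, chord_m, charge=1):
    """Sagitta in meters of the track arc over a chord (small-angle approximation)."""
    radius = momentum_gev / (0.2998 * abs(charge) * field_tesla)
    return chord_m**2 / (8.0 * radius)

def relative_momentum_error(momentum_gev, field_tesla, chord_m, sagitta_error_m):
    """Fractional momentum uncertainty for a fixed sagitta measurement error.

    Because the sagitta shrinks as 1/p, the fractional error grows linearly
    with momentum.
    """
    return sagitta_error_m / sagitta(momentum_gev, field_tesla, chord_m)

if __name__ == "__main__":
    # Hypothetical tracker: 2 T field, 1 m chord, 100 micron sagitta error.
    for p in (1.0, 10.0, 100.0):
        err = relative_momentum_error(p, 2.0, 1.0, 100e-6)
        print(f"p = {p:6.1f} GeV/c  ->  dp/p = {err:.4f}")
```

In this simplified picture, a tenfold increase in momentum gives a tenfold larger fractional error, which is the sense in which the resolution of the technique decreases as momentum increases.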

As might be expected, the more precisely the trajectory is measured, the more accurately is the momentum determined. This leads to choices in construction inevitably resulting in trade-offs between resolution and cost. Because of the desire to keep particles following their naturally curved trajectories, every attempt is made to eliminate extraneous material that might deflect the particles, and thereby diminish measurement accuracy, as they traverse this region.

Layer 2: Charged-Particle Identification

The techniques described above allow detection of the presence of individual charged particles and measurement of their momenta and location in space. Complete characterization of the event also requires the ability to determine the identity of the charged particles and to identify the presence of electrically neutral particles. A number of techniques are employed to fulfill this need. In both of the examples presented below, the information obtained from a specific type of particle detector is combined with the momentum information generated by the tracking detectors to lead to information on particle identity.

The speed of a charged particle of a given momentum depends on its mass. The most straightforward method for determining the speed of a particle is to measure the time it takes to traverse a specified distance in the detector. Because of the high velocities involved, measurement of the elapsed time with a precision of a few times 10⁻¹⁰ s is required to generate useful information. Such a technique is referred to as "time of flight" and is useful for particles with momenta up to a few billion electron volts; above this energy, all particles tend to have velocities indistinguishable from the speed of light, independent of their identity. Generation of Cerenkov light can also be used as an aid to identification. The presence or absence of the Cerenkov light signal contains information about the velocity of a particle and hence can help distinguish between particles of different masses such as the electron, muon, or proton. Again, this technique is viable only up to momenta of several billion electron volts.
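A short calculation shows why time of flight loses its power above a few billion electron volts. The sketch below computes the flight-time difference between a pion and a kaon of the same momentum, using β = p / √(p² + m²). The 2 m flight path and the rounded masses are assumptions chosen for illustration.

```python
import math

C_M_PER_NS = 0.299792458  # speed of light in meters per nanosecond

def flight_time_ns(momentum_gev, mass_gev, path_m):
    """Time in ns for a particle to travel path_m at the given momentum."""
    beta = momentum_gev / math.hypot(momentum_gev, mass_gev)
    return path_m / (beta * C_M_PER_NS)

def time_difference_ns(momentum_gev, mass_a_gev, mass_b_gev, path_m):
    """Absolute flight-time difference between two mass hypotheses."""
    return abs(flight_time_ns(momentum_gev, mass_a_gev, path_m)
               - flight_time_ns(momentum_gev, mass_b_gev, path_m))

# Approximate masses in GeV/c^2 and an assumed 2 m flight path.
PION, KAON = 0.1396, 0.4937
PATH_M = 2.0

if __name__ == "__main__":
    for p in (0.5, 1.0, 2.0, 5.0):
        dt = time_difference_ns(p, PION, KAON, PATH_M)
        print(f"p = {p:3.1f} GeV/c  ->  pion/kaon difference = {dt * 1000:7.1f} ps")
```

Under these assumptions the difference is several times 10⁻¹⁰ s at 1 GeV/c but only a few times 10⁻¹¹ s at 5 GeV/c, below the timing precision quoted in the text.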

Layer 3: Calorimetric Energy Measurement

Electrons are very light particles, and their electromagnetic interactions with high atomic number materials, such as lead, result in a shower of electrons, positrons, and photons as first the parent and then its daughter particles emit photons. Photons, in turn, produce pairs of electrons and positrons and so on. This shower development and the manner in which the original energy of the electron is deposited in material provide a characteristic signature of the electron. The total energy deposited in the calorimeter is that of the original particle.

Photons, the particles of light, develop showers in almost exactly the same way as electrons. Thus, their calorimetric signature is the same as that of electrons. However, being electrically neutral, photons can be distinguished from electrons—photons leave no track in the charged particle tracking volume whereas electrons will.

Hadrons (typically pions, kaons, neutrons, and protons) can interact with matter through the strong nuclear interaction. Electrons, positrons, and muons do not possess this capability. Therefore hadrons produce showers with different characteristics. Given the difference in interactions and in the properties of materials, hadronic showers are more extensive than electromagnetic showers both longitudinally and laterally. Thus, the pattern of energy deposition distinguishes them from both electrons and muons. Again, as with an electron, the total energy deposited is that of the original particle.

Calorimeters have several virtues. As mentioned earlier, they are sensitive to both electrically charged and electrically neutral particles. Calorimeters are also a very effective means of identifying and measuring the energy contained in jets associated with quark production as described in Chapter 4. A rather nice feature of the measurements is that, in contrast to tracking measurements, the energy resolution improves as the particle energy increases. Calorimeters are heavy, large, and expensive, often dominating the overall cost of a modern detector.

Layer 4: Muon Identification and Measurement

The massive calorimeter is the end of the line for most particles. All the photons, electrons, and hadrons interact therein, deposit all their energies, and proceed no further. The two particles to which this does not apply are the muon and the neutrino. Muons are often dubbed "heavy electrons." Because of their higher mass, a muon of a given momentum will develop a much weaker electromagnetic shower than the corresponding electron. This occurs to such an extent that muons penetrate matter with relative ease, leaving only small fractions of their energy through ionization and similar processes.

A muon's momentum may have been measured in the inner tracking volume; however, at that point there is no distinction between it and other particles. Muons may be identified by observing particles that penetrate the calorimetry and match one of the tracks in the inner volume. If the determination of inner tracking momentum is adequate, muon detectors are often characterized by multiple layers of measurement separated by further layers of dense material. Sometimes, this material is iron, which may be magnetized in an attempt to enhance identification. In other cases, large magnetic field volumes may be employed, with little interleaved material, in an attempt to improve the momentum measurement of muons in a region free of confusion by other trajectories.

Hermeticity: Neutrino Measurement

Neutrinos are neutral and, as far as determined to date, massless; they interact with matter only through the weak interaction. Experiments have been performed in which beams of billions of neutrinos impinge on detectors weighing many thousands of tons with only one or two interacting in any manner. So if a neutrino is produced in the primary interaction or in subsequent decays, it invariably escapes direct detection. However, the law of conservation of momentum applies to the interactions being observed. Therefore, if all other particles produced in an interaction are measured, the absence or presence of a neutrino (or perhaps other hypothetical penetrating particles) can be inferred by comparing the total momentum of the observed interaction products to the total momentum of the beam and target particles prior to the collision. Of course, if more than one neutrino is produced in an event, the situation is more complicated. The extent to which a detector is capable of determining the existence of an energetic neutrino is therefore controlled by its ability to measure all other particles. This feature is known as "hermeticity": If a detector is hermetic, nothing escapes detection. Since closing the box to make a hermetic detector controls the extent of the detector, it also controls the cost.
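The momentum-balance argument can be sketched in a few lines. The example below subtracts the vector sum of the observed particle momenta from the total initial momentum; any significant remainder is attributed to undetected particles. The event values are invented for illustration, and the function names are hypothetical. A real analysis would also have to account for measurement errors and for particles lost along the beam direction.

```python
import math

def missing_momentum(initial, observed):
    """Return the (px, py, pz) imbalance between initial and observed momenta.

    initial  -- total momentum of the beam and target particles, as (px, py, pz)
    observed -- list of (px, py, pz) tuples for all measured final-state particles
    """
    total = [sum(p[i] for p in observed) for i in range(3)]
    return tuple(initial[i] - total[i] for i in range(3))

def magnitude(vector):
    """Length of a three-vector."""
    return math.sqrt(sum(c * c for c in vector))

# Invented example: head-on collision of equal-energy beams, so the
# total initial momentum is zero. Units are GeV/c.
INITIAL = (0.0, 0.0, 0.0)
OBSERVED = [(20.0, 5.0, 10.0), (-8.0, -15.0, -10.0)]

missing = missing_momentum(INITIAL, OBSERVED)

if __name__ == "__main__":
    print("missing momentum vector:", missing)
    print("magnitude: %.2f GeV/c" % magnitude(missing))
```

Here the observed particles do not balance, and the imbalance points in the direction the unseen particle (or particles) must have taken.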

Triggering

One of the most important jobs performed by the detector is to decide when an interaction is of sufficient interest to record permanently all the information contained in individual sensors and detectors for later examination (i.e., when to hit the shutter release to take a snapshot). This function, referred to as the "trigger," is carried out by a large array of sophisticated electronic circuits and computers that examine the information flowing in and decide whether the event is worthy of further consideration. Most modern elementary-particle experiments rely on hierarchical triggers, in which decisions are made at two or three different levels. Each level provides a go or no-go decision to proceed to additional, more sophisticated processing of information at the next level. Only events satisfying the highest-level trigger are permanently recorded. In this manner, fundamental interaction rates of 1 million per second can be reduced to a more manageable level of 100 events or fewer per second recorded permanently. With a properly designed trigger, the interesting events, which represent new phenomena, survive through the highest trigger level whereas uninteresting events do not.
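The rate reduction achieved by a hierarchical trigger is simple arithmetic, sketched below. The three rejection factors are assumed purely for illustration; only the input rate of 1 million per second and the recorded rate of about 100 per second come from the text.

```python
def trigger_chain(input_rate_hz, rejection_factors):
    """Return the event rate entering and leaving each trigger level.

    rejection_factors -- one number per level; a factor of 100 means that
    the level passes 1 event in 100 on to the next level.
    """
    rates = [float(input_rate_hz)]
    for factor in rejection_factors:
        rates.append(rates[-1] / factor)
    return rates

# Assumed three-level trigger: factors of 100, 10, and 10.
RATES = trigger_chain(1_000_000, [100, 10, 10])

if __name__ == "__main__":
    labels = ["interactions", "after level 1", "after level 2", "recorded"]
    for label, rate in zip(labels, RATES):
        print(f"{label:14s} {rate:12.0f} per second")
```

The overall rejection is the product of the per-level factors, here 10,000, which is why each level can afford progressively slower and more sophisticated processing.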

Challenges for the Next 10 to 20 Years

The needs for detector research and development for the next 10 years or more are dominated by issues involved in experimentation at the LHC and, to a lesser extent, in a possible future lepton collider. In addition to building on the experience gained at existing collider facilities, many of the issues being confronted by LHC detector designers were identified, and in many cases resolved, as a result of R&D programs initiated during the SSC era.

The characteristic physics in which EPP researchers are interested has, as its origin, interactions between the fundamental fermions of the present Standard Model. The interaction probabilities decrease very rapidly as the energy increases, pushing elementary-particle physicists to demand ever higher luminosities at higher-energy accelerators. Unfortunately, the backgrounds associated with uninteresting processes do not decrease nearly as quickly. As a result, ever larger fluxes of particles associated with uninteresting interactions will flood detectors of the future, leading to potential confusion in the search for more interesting, but rare, interactions. The proverbial needle gets smaller, but the haystack gets bigger.

Occupancy

Increased luminosity means that in time and space, particles resulting from interactions are grouped more closely together. If the probability that an individual detector element will have a hit when interrogated reaches about 10%, the utility of the detector becomes problematic. There are two ways to improve the situation:

- Granularity. The occupancy in a detector element can be reduced by reducing the size of the element. This is happening with silicon detectors. At modest luminosities it is possible to work with strip counters. Two stereo views can then provide the relevant two-dimensional information. As luminosities and occupancies increase, the probability of mistaken association increases dramatically. The solution is to decrease the long dimension of the strip. The result is a rectangular pixel with almost equal sides. Development of this new technology depends on providing readout on a much finer scale, that is, on attaching the electronics to detector elements by connection to the surface of the detector rather than the edge.

- Speed. If a detector is sensitive for a certain period of time, it will respond to all the particles traversing it in that time. If interactions occur several times during the sensitive period, the detector sees a corresponding number of background particles as well as the particles of interest. At the LHC, collisions will take place every 25 ns. If a detector is sensitive for, say, 75 ns, it will see an average background from two spurious interactions. Consequently, there are advantages to increasing the speed of response of detectors.
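Both remedies can be put into rough numbers. The sketch below counts how many extra bunch crossings overlap a detector's sensitive window, and estimates occupancy as the probability of at least one hit in an element, assuming hits follow Poisson statistics. The hit density and the strip and pixel dimensions are hypothetical values chosen only to show the trend; the 25 ns spacing and the 75 ns window are the figures used in the text.

```python
import math

def spurious_crossings(sensitive_ns, spacing_ns):
    """Number of extra bunch crossings that overlap the sensitive window."""
    return max(math.ceil(sensitive_ns / spacing_ns) - 1, 0)

def occupancy(hits_per_cm2_per_crossing, element_area_cm2, crossings_integrated):
    """Probability that an element records at least one hit (Poisson assumption)."""
    mean_hits = hits_per_cm2_per_crossing * element_area_cm2 * crossings_integrated
    return 1.0 - math.exp(-mean_hits)

# Assumed values for illustration only.
HIT_DENSITY = 1.0                 # hits per cm^2 per crossing
STRIP_AREA = 50e-4 * 6.0          # 50 micron x 6 cm strip, in cm^2
PIXEL_AREA = 50e-4 * 400e-4       # 50 micron x 400 micron pixel, in cm^2

if __name__ == "__main__":
    extra = spurious_crossings(75, 25)
    crossings = extra + 1
    print("spurious crossings in a 75 ns window:", extra)
    print("strip occupancy: %.4f" % occupancy(HIT_DENSITY, STRIP_AREA, crossings))
    print("pixel occupancy: %.6f" % occupancy(HIT_DENSITY, PIXEL_AREA, crossings))
```

With these assumed inputs the strip sits close to the 10% level at which the text says a detector becomes problematic, while the pixel is lower by more than two orders of magnitude. Shortening the sensitive window to a single crossing reduces both by a further factor of about three.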

Radiation Damage

When a detector or its associated electronics are subjected to high radiation levels, as represented by the passage through or interaction of large numbers of particles with the detector medium itself, there is some probability of a modification to the material of the detector or the electronics. This can lead to a reduction in the signal from the detector or even more dramatic malfunctions. There are many examples.

A straightforward example occurs in the case of plastic scintillators. For the light pulse to exit the scintillator and be detected efficiently, the plastic should be transparent. Exposure to sufficient radiation excites color centers, and many plastics become brown. They thus transmit less light, and if the condition becomes severe enough, they must ultimately be replaced.

Silicon detectors are also limited in their resistance to radiation. These detectors must be carefully designed and the background environment accurately modeled to ensure many years of efficient operations at facilities such as the Tevatron collider or the LHC.

Typically, two avenues may be pursued to mitigate such effects. On the one hand, the materials used can be improved, which is the current situation with respect to silicon devices. Research is being conducted to modify those characteristics of the material that make it sensitive to radiation. As with the original development of silicon detectors, this requires cooperation between the user and the manufacturer. Often the problem involves impurities in the material at the level of parts per million or less. At this level, few manufacturing processes are understood. Furthermore, the materials science of the detector is not entirely understood. The particle physicist then has to grapple with issues of solid-state physics.

The alternative path is to look for new materials. There was hope for a few years that the use of gallium arsenide instead of silicon would be a solution. In terms of neutron damage, gallium arsenide held promise. However, measurements were then made with charged particles, pions, and protons, and gallium arsenide was found to be less resistant than silicon. A possibility currently under investigation is the use of commercial diamond crystals. This also looks promising, but considerable development is required before a viable and affordable solution can be identified.

Opening New Possibilities

Significant R&D efforts are aimed at developing the detector technologies that will be required to support future experimentation at electron, proton, and/or muon facilities. This section has described a few areas in which the needs for future development are accompanied by a sense of the directions that are most fruitfully pursued. However, it should be emphasized that progress is required on a wide range of fronts and breakthroughs can occur any time a physicist is presented with some new possibility, perhaps developed outside the confines of elementary-particle physics, that acts as a stimulus. Research and development of detectors with no apparent immediate application has always been, and will continue to be, a necessary component of particle physics research.