This paper was presented at a colloquium entitled “Neuroimaging of Human Brain Function,” organized by Michael Posner and Marcus E. Raichle, held May 29–31, 1997, sponsored by the National Academy of Sciences at the Arnold and Mabel Beckman Center in Irvine, CA.

Anatomy of word and sentence meaning

MICHAEL I. POSNER* AND ANTONELLA PAVESE

Department of Psychology, University of Oregon, Eugene, OR 97403–1227

ABSTRACT Reading and listening involve complex psychological processes that recruit many brain areas. The anatomy of processing English words has been studied by a variety of imaging methods. Although there is widespread agreement on the general anatomical areas involved in comprehending words, there are still disputes about the computations that go on in these areas. Examination of the time relations (circuitry) among these anatomical areas can aid in understanding their computations. In this paper, we concentrate on tasks that involve obtaining the meaning of a word in isolation or in relation to a sentence. Our current data support a finding in the literature that frontal semantic areas are active well before posterior areas. We use the subject’s attention to amplify relevant brain areas involved either in semantic classification or in judging the relation of the word to a sentence to test the hypothesis that frontal areas are concerned with lexical semantics and posterior areas are more involved in comprehension of propositions that involve several words.

The language section of this colloquium presents papers with images of brain areas active during the processing of visual and auditory words and sentences. Although there is a heavy emphasis on visual words, it is also important to be able to connect the findings on individual word processing with studies of written and spoken sentences and longer passages. To do this, it is necessary to explore the mechanisms that relate individual words to the context of which they are a part.

The findings of imaging studies of language reflect the complex networks that can be activated by tasks involving words. When the words are combined into phrases and sentences, the networks become even more complex. One of the goals of our paper is to suggest productive ways of linking findings at the single word level with those that involve comprehension of continuous passages.

Imaging studies have shown that language tasks can activate many brain areas (1), predominately but not exclusively in the left cerebral hemisphere. However, much greater anatomical specificity can be obtained when specific mental operations are isolated (2, 3). The mental operations related to activation and chunking of visual letters or phonemes into visual or auditory units, often called “word forms,” tend to occur near to the sensory systems involved in language. These same sensory systems are also active during translations of auditory stimuli into visual letters or the reverse (4).

Additional mental operations are involved in relating the sound of two words whether presented aurally or visually, as in rhyming tasks or storing the words in working memory. These operations involve phonological processing that appears to be common to auditory and visual input. Understanding the meaning of words also invokes common systems whether they had been presented visually or aurally. Of course, understanding the meaning of a word can involve sensory specific and phonological processes in addition to those strictly related to word meaning.

In this paper, we are concerned primarily with reading to comprehend the meaning of the word or sentence. We label the mental operations specific to comprehension as “semantic.” Tasks requiring comprehension usually give specific activation of both left frontal and posterior brain areas when the semantic operations are isolated by subtracting away sensory, motor, and other processes. Semantic tasks that have been studied include generating the uses for visual and auditory input (4, 5), detecting targets in one class (e.g., animals) or classifying each word into categories (e.g., manufactured vs. natural) (4).

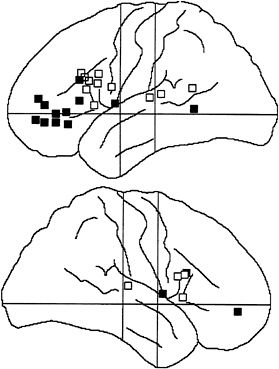

There has been considerable dispute about the role of the left frontal area in processing of visual words. One view (6) is illustrated in Fig. 1, which suggests that, within the left frontal area, more anterior activations (e.g., area 47) seem to be related to tasks that involve semantic classification or generation of a semantic association whereas frontal areas slightly more posterior (e.g., areas 44 and 45) are engaged by phonological tasks such as rhyming (2, 3) or use of verbal working memory. This more posterior frontal area includes the third frontal convolution on the left side (Broca’s area). Although the bulk of studies that try to separate meaning from phonology supports separation of the two within the frontal brain areas, as indicated in Fig. 1, there are also some studies that fail to show such separation (see refs. 7 and 8 for detailed reviews of semantic and phonological anatomy). Fig. 1 also shows that posterior brain areas (Wernicke’s area) are activated during semantic and phonological operations on visual and auditory words.

Recently, studies have been designed to give the time course of these activations by use of scalp recordings from high density electrodes (9, 10). For example, when reading aloud is subtracted from generating the use of a visual word, the resulting difference waves show increased positivity over frontal sites starting at ≈160 ms. Efforts to estimate best fitting dipoles by use of brain electrical source analysis (11) suggest an area of left frontal activation at 200 ms, corresponding roughly to that shown in Fig. 1. Depth recording from area 47 in patients with indwelling electrodes, during the task of distinguishing animate from inanimate words, confirms this time course (12). The difference waves in the generate minus repeat subtraction also show greater positivity for the generate task over left posterior electrodes (Wernicke’s area) starting at ≈600 ms. When a novel association is required after long practice on more usual associations, this left posterior area is joined by a right posterior homologue of Wernicke’s area (9).

© 1998 by The National Academy of Sciences 0027–8424/98/95899–7$2.00/0

PNAS is available online at http://www.pnas.org.

Abbreviations: RT, reaction time; ERP, event-related potentials.

*To whom reprint requests should be addressed. e-mail: mposner@oregon.uoregon.edu.

FIG. 1. Summary of frontal and posterior cortical activations found in various positron emission tomography, functional MRI, and cellular studies of semantic (filled squares) and phonological (open squares) processing of visual and auditory words. The more anterior of the left frontal areas seems to be largely related to meaning of words, and the more posterior frontal area is more related to sound. Distinctions within the right hemisphere and posterior areas are less clear.

It is not yet known how the frontal and posterior areas share semantic processing during reading and listening. However, one clue comes from the study of eye movements during skilled reading (13). Skilled readers fixate on a word for only ≈300 ms, and the length and even the direction of the saccade after this fixation are influenced by the meaning of the word currently fixated (14). The time course of fixations during skilled reading suggests that frontal areas are activated in time to influence saccades to the next word but that the posterior activity is too late to play this role. Previous analysis of semantic processing, such as the N400 (15), has involved components that are also too late to influence the saccade during normal skilled reading.

Because the distance of the saccade during skilled reading reflects the meaning of the lexical item currently fixated, it is necessary that at least some of the brain areas reflecting semantic processing occur in time to influence the saccade. Areas involved in chunking visual letters into a unit (visual word form) and those related to attention as well as the anterior semantic areas are active early enough to influence saccades (3). Partly for this reason, we have suggested that the left anterior area that is active during the processing of single words reflects the meaning of the single word (lexical semantics), and the posterior area is involved in relating the current word to other words within the same phrase or sentence (sentential or propositional semantics).

The distinction between lexical and propositional semantics is a common one in linguistics (16). Many psycholinguistic studies (17, 18) also draw on this or similar distinctions. It is clear that the meaning of each individual lexical item taken in isolation gives little that would serve as a reliable cue to the overall meaning of a passage. If even giving a highly familiar use of a word requires activation of left posterior areas, as the positron-emission tomography studies argue (5), this left posterior area also must be important to obtaining the overall meaning of passages that rely on integrating many words. Most psycholinguistic studies draw heavily on working memory to perform this role (19). Thus, it may be of importance that the portion of working memory involved in the storage of verbal items lies in a brain area near Wernicke’s area (ref. 6; see also ref. 25).

For all of these reasons, we tried to test the specific hypothesis that frontal areas will be more important in obtaining information about the meaning of a lexical item and posterior areas will be more important in determining whether the item fits a sentence frame. Our basic approach was to have each subject perform these two tasks on the same lexical items in separate sessions. We then compared the electrical activity generated during the two tasks to determine whether the lexical task produces increased activity in the front of the head while the sentence task does so in posterior regions.

A secondary hypothesis refers to the ability of subjects to give priority voluntarily to the lexical or sentence level computation. Our basic idea (4) is that the subject can increase the activation in any brain area by giving attention to that area. Accordingly, in one session, we trained subjects to press a key if “the word is manufactured and fits the sentence,” and in another session, we asked them to press a key if the word “fits the sentence and is manufactured.” These two conjunctions are identical in terms of their elements, but they are to be performed with opposite priorities. Our general view is that attention reenters the same anatomical areas at which the computation is made originally and serves to increase neuronal activity within that area.

In accord with this view, we expect that, in the front of the head, we will see more activity early in processing under the instruction to give priority to the lexical computation, and late in processing, there will be more frontal activity when the sentence element has been given priority. At posterior sites, the effect of instruction will be reversed.

METHODS

Twelve right-handed native English speakers (six women) participated in the main experiment. Handedness of participants was assessed by the Edinburgh Handedness Questionnaire (20, 21). Their ages ranged between 19 and 30 years and averaged 21.9 years.

EEG was recorded from the scalp by using the 128-channel Geodesic Sensor Net (Electrical Geodesics, Eugene, OR) (22). The recorded EEG was amplified with a 0.1- to 50-Hz bandpass, 3-dB attenuation, and 60-Hz notch filter, digitized at 250 Hz with a 12-bit A/D converter, and stored on a magnetic disk. Each EEG epoch lasted 2 s and began with a 195-ms pre-stimulus baseline before the onset of the word stimulus. All recordings were referenced to Cz. ERPs were re-referenced against an average reference and averaged for each condition and for each subject after automatic exclusion of trials containing eye blinks and movements. A grand average across subjects was computed; difference waves as well as statistical (t test) values comparing different tasks were interpolated onto the head surface for each 4-ms sample (these methods are described further in refs. 9 and 10).
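The re-referencing and difference-wave steps described above can be sketched as follows. This is a minimal illustration in plain Python and not the authors' analysis pipeline: the function names and the list-of-lists data layout are assumptions made for the example, and only the 250-Hz sampling rate and the 2-s epoch length are taken from the text.

```python
SAMPLING_RATE_HZ = 250   # digitization rate stated in the Methods
EPOCH_DURATION_S = 2.0   # epoch length stated in the Methods


def sample_period_ms(rate_hz):
    """Time between successive samples, in milliseconds."""
    return 1000.0 / rate_hz


def samples_per_epoch(rate_hz, duration_s):
    """Number of samples contained in one epoch."""
    return int(round(rate_hz * duration_s))


def average_reference(epoch):
    """Re-reference one epoch to the average of all channels.

    `epoch` is a list of channels (hypothetical layout), each a list of
    voltages with one value per sample. At every sample, the mean across
    channels is subtracted from each channel.
    """
    n_channels = len(epoch)
    n_samples = len(epoch[0])
    means = [sum(ch[t] for ch in epoch) / n_channels for t in range(n_samples)]
    return [[ch[t] - means[t] for t in range(n_samples)] for ch in epoch]


def difference_wave(erp_a, erp_b):
    """Channel-by-channel, sample-by-sample difference (condition A minus B)."""
    return [[a - b for a, b in zip(ch_a, ch_b)]
            for ch_a, ch_b in zip(erp_a, erp_b)]
```

At 250 Hz, `sample_period_ms` gives the 4-ms sample spacing mentioned above, and a 2-s epoch contains 500 samples. After average referencing, the values across channels sum to zero at every sample, so a positive difference at one site is always relative to the mean of all recorded sites.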

The experimental trials began with the presentation of a sentence with a missing word (e.g., “He ate his food with his _____”). The sentence was displayed until the subject pressed a key, and then a fixation cross appeared in the center of the screen for a variable interval of 1,800–2,800 ms. After the fixation cross, a word (e.g., “fork”) was presented for 150 ms and then replaced again by the fixation stimulus. After a 1,200-ms interval, a question mark, which served as a response cue, was displayed for 150 ms. To exclude motor artifacts, participants were instructed to respond only after the question mark was presented, and anticipation trials were excluded from the analysis. Participants responded by pressing one of two keys. The correspondence between keys and responses (“yes” and “no”) was counterbalanced across subjects.

The same sequence of events was used to perform three different tasks. In the single lexical task, subjects were asked to ignore the sentence and to decide whether the word represented a natural or a manufactured object; for half of the participants, the “yes” category was “natural objects,” and for the other half, the “yes” category was “manufactured objects.” In the single sentence task, participants were instructed to press the “yes” key if the word fit the previously presented sentence and the “no” key if the word did not fit the sentence. In the conjunction task, participants were asked to respond “yes” only if the word was a member of the “yes” category AND it fit the sentence.

Subjects participated in two sessions. In session A, they performed the single lexical task, followed by the conjunction task in which the lexical element was given priority. In session B, subjects performed the single sentence task, followed by the conjunction task in which the sentence element was given priority. The order of the two sessions was counterbalanced between subjects. The conjunction tasks performed in the two sessions differed in two respects: (i) In session A, the conjunction task followed extended practice with the single lexical task whereas in session B it followed extended practice with the single sentence task; (ii) in session A, the instructions asked the subjects to first perform the lexical decision and then the sentence decision whereas the opposite was required in session B. It was hoped that these manipulations would modify the priority of the two decisions: In session A, the lexical decision had priority over the sentence decision (conjunction task-lexical first) whereas in session B the sentence decision had priority (conjunction task-sentence first).

Each task consisted of four presentations of 50 sentences (200 trials overall), each followed by one of four possible words. For example, the sentence “He ate his food with his _____” was presented four times in each task, followed by the word “fork” (manufactured-fits the sentence), “fingers” (natural-fits the sentence), “tub” (manufactured-does not fit the sentence), or “bush” (natural-does not fit the sentence).
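The 2 × 2 stimulus design and the response rule for each of the three tasks can be written out explicitly. The sketch below is illustrative only: the function and variable names are hypothetical, the example item is the one quoted in the text, and the `yes_category` default is an arbitrary choice (in the experiment it was counterbalanced across participants).

```python
CATEGORIES = ("manufactured", "natural")
FITS = (True, False)

# The example item quoted in the text: one sentence frame, four probe words.
EXAMPLE_ITEM = {
    "sentence": "He ate his food with his _____",
    "words": {
        ("manufactured", True): "fork",
        ("natural", True): "fingers",
        ("manufactured", False): "tub",
        ("natural", False): "bush",
    },
}


def correct_response(task, category, fits, yes_category="manufactured"):
    """Return the correct answer ("yes" or "no") for one probe word.

    task: "lexical", "sentence", or "conjunction".
    category: "manufactured" or "natural".
    fits: True if the word fits the sentence frame.
    """
    in_yes_category = category == yes_category
    if task == "lexical":
        return "yes" if in_yes_category else "no"
    if task == "sentence":
        return "yes" if fits else "no"
    if task == "conjunction":
        return "yes" if (in_yes_category and fits) else "no"
    raise ValueError(f"unknown task: {task!r}")


def trials_per_task(n_sentences=50):
    """Each sentence is paired once with each of the four word types."""
    return n_sentences * len(CATEGORIES) * len(FITS)
```

With 50 sentences, `trials_per_task()` reproduces the 200 trials per task. Note that only one of the four word types (e.g., “fork” when the “yes” category is manufactured objects) requires a “yes” response in the conjunction task, whereas half of the words do in each single task.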

An additional eight subjects (four women; age between 19 and 29 years, average 21 years) were run in a purely behavioral study of reaction time in the same experiment. The only difference in this study was that subjects responded as quickly as possible after the word rather than waiting for a response cue to appear. No EEG was recorded in these sessions, and the reaction time to respond to the probe word was the dependent measure.

RESULTS

Behavioral Study. Medians of the response times (RTs) were computed for each subject and each condition of the behavioral study. The single lexical task was slightly faster than the single sentence task (727 and 739 ms, respectively), but this difference was not significant {t test [t(7)=–0.216], P>0.4}. Mean RTs across subjects were 733 ms for the single tasks and 794 ms for the conjunction tasks. These two conditions did not significantly differ from each other [t(7)=–1.529, P>0.15]. Although the lack of significance of this effect may have been because of the small sample size, it is worth noting that the conjunction task was always the second task of the session and was performed after extensive practice with the single task on the same stimulus material. It is therefore likely that a practice effect was responsible for the relatively small increase in RTs in the conjunction tasks when compared with the much simpler single tasks.

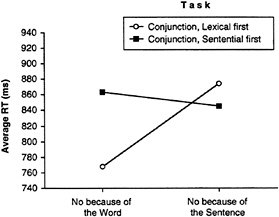

An important prediction is that RTs should reflect the priority of the two decisions in the conjunction task. If our manipulation was successful in determining the order of the lexical and of the sentence decision, we should find a particular pattern of RTs in the “no” responses. When the word is not a member of the appropriate category (e.g., manufactured) and it does fit the sentence, subjects can correctly respond “no” after they make the semantic classification on the lexical item. On the other hand, when the word is a member of the appropriate category and it does not fit the sentence, they can respond “no” after they make the sentence decision. In these two conditions, RTs should therefore reflect the priority of the two tasks. When subjects respond “no” because the word does not belong to the specified lexical category, they should be faster when priority is given to the lexical task than when priority is given to the sentence task. However, when subjects respond “no” because the word does not fit the sentence, they should be faster when priority is given to the sentence task than when priority is given to the lexical task.
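The logic of this prediction can be illustrated with a toy model. The sketch assumes, as a simplification that goes beyond what the text states, that the two decisions are made strictly one after the other and that a “no” is issued as soon as one decision fails. The function name and the decision durations are hypothetical placeholders and are not estimates from the data.

```python
def time_to_no(priority, fails_lexical, fails_sentence,
               lexical_ms=300.0, sentence_ms=300.0):
    """Time at which a "no" can first be issued under a serial model.

    priority: "lexical" or "sentence", the decision that is made first.
    fails_lexical: True if the word is not in the "yes" category.
    fails_sentence: True if the word does not fit the sentence.
    lexical_ms, sentence_ms: hypothetical decision durations.
    Returns None when both decisions pass (a "yes" trial).
    """
    if priority not in ("lexical", "sentence"):
        raise ValueError(f"unknown priority: {priority!r}")
    order = ["lexical", "sentence"] if priority == "lexical" else ["sentence", "lexical"]
    durations = {"lexical": lexical_ms, "sentence": sentence_ms}
    fails = {"lexical": fails_lexical, "sentence": fails_sentence}

    elapsed = 0.0
    for decision in order:
        elapsed += durations[decision]
        if fails[decision]:
            return elapsed  # the first failed decision permits a "no"
    return None
```

Under these assumptions, a “no” caused by the word category is available after one decision when the lexical element has priority but only after two decisions when the sentence element has priority, and the reverse holds for a “no” caused by the sentence. This is the cross-over pattern that the prediction describes.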

To verify this prediction, we carried out a repeated measures ANOVA with two factors: type of conjunction task (lexical first vs. sentence first) and response (“no” because of the word vs. “no” because of the sentence). The main effects of task and response were not significant (Fs<1). However, the interaction task by response was significant [F(1, 7)=6.148, P<0.05] (see Fig. 2).
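For a 2 × 2 design in which both factors vary within subjects, the interaction F statistic is equal to the square of a one-sample t statistic computed on each subject's difference of differences, with degrees of freedom (1, n − 1). The sketch below shows that computation in plain Python. It is not the authors' analysis code, the function name and the tuple layout are assumptions, and no values from the study are reproduced.

```python
from math import sqrt
from statistics import mean, stdev


def interaction_f(cells):
    """Interaction F for a 2 x 2 fully within-subject design.

    cells: one tuple per subject (hypothetical layout), holding the RTs for
        (lexical first, "no" because of the word),
        (lexical first, "no" because of the sentence),
        (sentence first, "no" because of the word),
        (sentence first, "no" because of the sentence).
    Returns (F, (df_numerator, df_denominator)).
    """
    # Interaction contrast: difference of differences for each subject.
    contrasts = [(a - b) - (c - d) for a, b, c, d in cells]
    n = len(contrasts)
    # One-sample t test of the contrast against zero.
    t = mean(contrasts) / (stdev(contrasts) / sqrt(n))
    return t * t, (1, n - 1)
```

With the eight subjects of the behavioral study, the degrees of freedom returned are (1, 7), matching those reported for the interaction.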

As predicted, when the lexical decision had the priority in the conjunction task, subjects were faster to respond “no” when the word was not a member of the targeted lexical category than when the word did not fit the sentence. The opposite was true when the sentence decision had the priority. This result suggests that the priority of the two decisions was manipulated effectively in the two conjunction tasks.

Event-Related Potentials (ERP) Study. The ERP analysis focuses on the anatomical regions that have been associated previously with written word processing during semantic tasks. As outlined in Fig. 1, left frontal regions and left parieto-temporal regions have been found active in positron emission tomography and functional MRI studies of use generation and semantic classification. As discussed in the introduction, ERP and depth recording studies suggest that the frontal activation occurs at ≈200 ms and that the posterior regions are activated much later.

FIG. 2. RT to reject a word in the conjunction task as a function of the priority given to lexical or sentence semantics. Only data from “no” responses are presented. The cross-over interaction indicates that the instruction, and training, to give priority to a given element was effective in changing performance.

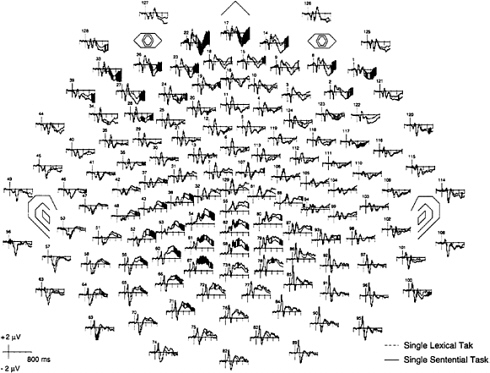

FIG. 3. Grand-average data from the 128 electrodes of the event-related potentials for a lexical task (classifying words into one of two categories) and a sentence task (deciding whether the word fits the sentence frame). The time of presentation of the stimulus word is indicated by the vertical line at the left of each tracing. Baseline before the stimulus was 200 ms. The nose, eyes, and ears are indicated on the chart. Black areas indicate significant differences between the two conditions (P<0.05) with the Wilcoxon signed rank test.

Fig. 3 shows the grand-averaged ERPs of the 128 channels in the semantic classification compared with the sentence task. Important differences occur in left frontal channels during the first positive component starting ≈160 ms after input. In these frontal channels, the lexical task is more positive and thus further from the baseline than the sentence task. We interpreted this larger amplitude as reflecting greater activation of the frontal area in the lexical task. This difference can be seen as early as 200 ms after presentation. A much larger difference is found in posterior channels at ≈400 ms after input.

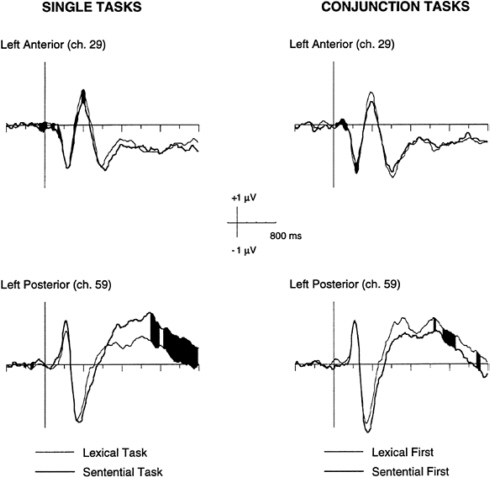

To examine these differences in more detail, each of the four tasks was shown for channels 29 (between F7 and F3) and 59 (between T5 and P3), which are representative channels for the frontal and posterior areas as illustrated in Fig. 4.

The single lexical task was more positive than the single sentence task in the frontal channel, starting at ≈120 ms and continuing until ≈500 ms. Because this difference was during a positive excursion of the waveform, the greater positivity in it indicates that there was more electrical activity during the lexical task. In the posterior channel, however, the sentence task was more positive than the lexical task, starting at 350 ms, significant by 550 ms, and continuing to the end of the 800-ms epoch. Because both tasks produced a positive wave during this time, the sentence task showed more activity than the lexical task. This pattern would fit with the early involvement of the left frontal area in the lexical task and the later involvement of the left posterior area in the sentential task.

In the conjunction task, the frontal channel showed a larger activation in the lexical first condition from ≈100 ms, which suggested a stronger activation of the frontal region in which the lexical category was computed when the conjunction was done with priority given to the lexical category element. We also would have expected that the sentence priority condition would have shown a late enhancement in this channel reflecting the delayed processing of the lexical component. There is no evidence favoring this idea. Because this effect was expected to be quite late, variability in exactly when the second element of the conjunction is processed may make it hard to see in time-locked averages.

In the posterior channel, the sentence first condition showed enhanced negativity at ≈200 ms. However, the lexical priority condition was generally much more positive than the sentence priority condition after ≈400 ms. Indeed, the lexical priority condition looked much like the single sentence task during this portion of the epoch. Both of these findings in the posterior channels fit with the idea that this area is involved in computing information related to the sentence. The sentence priority condition activated this sentence computation relatively early whereas the lexical priority condition activated it relatively late.

FIG. 4. Representative frontal (ch 29) and posterior (ch 59) channels selected to indicate differences between ERPs to lexical and sentence component tasks and lexical and sentence conjunctions. Black areas indicate significant differences between conditions (P<0.05) with the Wilcoxon signed rank test. Although the differences for the frontal channels are small, they are replicated at many frontal electrode sites and for both single and conjunction tasks and are confirmed by the t test (see Fig. 5).

The overall pattern of results generally supports the hypothesis that left anterior regions reflect decisions based on the word categorization and the posterior area reflects decisions related to the sentence.

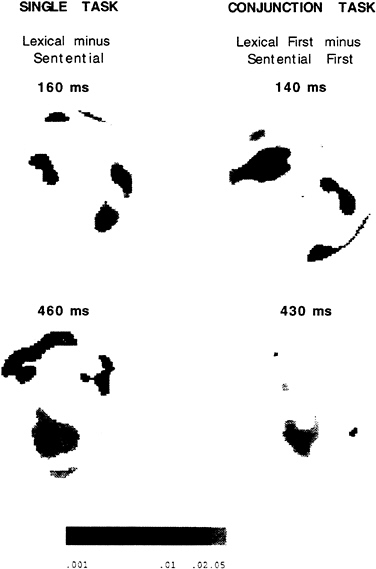

A map of the significant t tests was computed on the difference waves of the average ERP of the four tasks. The results of this computation are plotted in a spherical interpolation in Fig. 5. The significance of the t values is plotted as increasing with the darkness of the area.

The comparison between the single lexical task and the single sentence task revealed a significant difference in a left anterior area at ≈160 ms. In this interval, the single lexical task’s waveform had a larger amplitude than that of the single sentence task. At 460 ms, both left anterior and left posterior areas showed a significant difference. In the left anterior regions, the single lexical task was more positive than the sentence task whereas in the posterior regions the single sentential task was more positive than the lexical task. These differences correspond to the results suggested before from the waveform analysis.

In the conjunction task, a left anterior difference between the lexical first and the sentential first tasks reached significance slightly earlier (140 ms) than in the single task. This difference indicates a larger amplitude in the waveform associated with the lexical first condition, which reflects greater positivity of the lexical computation in frontal electrodes when this element of the conjunction is given priority. Another significant difference was present in a posterior region at ≈430 ms. This difference occurred when the lexical element was given priority and indicates a greater late positivity than when the sentence element was given priority. In the conjunction tasks, both groups of differences appeared earlier than in the single tasks. A possible explanation is the practice effect already mentioned in the discussion of the RT data.

FIG. 5. T-maps of significant differences between lexical and sentence components and the two forms of conjunctions at one early and one late time interval after the probe word.

A number of other findings are present in these data that are less related to the main theme of this paper. A large late negativity appeared when words that fit the sentence frame were subtracted from those that did not. This is clearly related to the so-called N400 and represents a semantic incongruity effect. Although this effect was broadly distributed, it appears to have been strong over right frontal electrodes as has been reported often in the literature (15).

DISCUSSION

The study of almost all cognitive tasks by positron emission tomography and functional MRI has produced complex networks of active brain areas. This is true even when subtraction is used to eliminate as many of the mental operations involved in the task as possible. One example of this complexity is the brain areas involved in comprehending the meanings of words and sentences. Two of the most prominent areas that have been related by many studies of comprehension are left frontal and left temporo-parietal areas (see refs. 2 and 6; Fig. 1).

Studies of the time course of these mental operations reveal some important constraints on the computations they perform. The frontal area is active at ≈200 ms whereas the posterior becomes active later. Because saccades have been shown to reflect access to lexical semantics and take place by 300 ms, only the frontal area is active in time to influence saccades in skilled reading. During skilled reading, only one or two words are comprehended in any fixation. Thus, the time course of comprehension of a word when it appears by itself must be well within 300 ms if the data from word reading are to be appropriate to the study of skilled reading of continuous text. This constraint led us to the hypothesis that the frontal area represents the meaning of the current word.

Our study represents a test of the hypothesis that the frontal area is involved in lexical semantics and the posterior area is involved in integration of words into propositions. The data from the single task condition fit well with the temporal and spatial locations defined by this hypothesis. Despite this support from the data, it may be surprising that frontal areas are involved in semantic processing because the classical lesion literature argues that semantic functions involve Wernicke’s area. Lesions in Wernicke’s area do produce a semantic aphasia in which sentences are uttered with fluent form but do not make sense. However, studies of single word processing, which prime a meaning and then have subjects respond to a target, have shown greater impairment of priming from frontal than from posterior lesions (23). Thus, the lesion data also provide some support for the involvement of frontal structures in lexical meaning and posterior structures in sentence processing.

Our data also suggest that the meaning of the lexical item is active by ≈200 ms after input. Psycholinguistic studies of the enhancement and suppression of appropriate and inappropriate meanings of ambiguous words suggest that the appropriate and inappropriate meanings are both active at ≈200 ms but that at 700 ms only the appropriate meaning remains active, suggesting a suppression of the inappropriate meaning by the context of the sentence (24). The time course of these behavioral studies fits quite well with what has been suggested by our ERP studies.

Another source of support for the relation of the frontal area to processing individual words and the posterior area to combining words is found in studies of verbal working memory (25). These studies suggest that frontal areas (e.g., areas 44 and 45) are involved in the rehearsal of items in working memory and that posterior areas close to Wernicke’s area are involved in the storage of verbal items. Such a specialization suggests that the sound and meaning of individual words are looked up and that the individual words are subject to rehearsal within the frontal systems. The posterior system has the capability of holding several of these words in an active state while their overall meaning is integrated. Also in support of this view is the finding that Wernicke’s area shows systematic increases in blood flow with the difficulty of processing a sentence (26).

The conjunction of a lexical category and a fit to a sentence frame is a very unusual task to perform. Subjects have to organize the two components, and it is quite effortful to carry out the instruction. Yet, as far as we can tell from our data, the anatomical areas that carry out the component computations remain the same as in the individual tasks. Thus, when subjects have to rely on an arbitrary ordering of the task components, they use the same anatomical areas as would normally be required by these components. However, the ERP data from the two conjunction conditions are quite different. This is rather remarkable because the two conditions involve exactly the same component computations. The results we have obtained are best explained by the view that the subjects use attention to amplify signals carrying out the selected computation and in this way establish a priority that allows one of the component computations to be started first. Such a mechanism would be consistent with the many studies showing that attention serves to increase blood flow and scalp electrical activity (4). Defining a target in terms of a conjunction of anatomical areas is a powerful method to use the subjects’ attention to test hypotheses about the function of brain areas. Our results fit well with the idea that frontal areas are most important for the classification of the input item and posterior areas serve mainly to integrate that word with the context arising from the sentence.

We are grateful to Y. G. Abdullaev for help in preparing Fig. 1. This paper was supported by a grant from the Office of Naval Research N00014–96–0273 and by the James S. McDonnell and Pew Memorial Trusts through a grant to the Center for the Cognitive Neuroscience of Attention.

1. Cabeza, R. & Nyberg, L. (1997) J. Cognit. Neurosci. 9, 1–26.

2. Demb, J.B. & Gabrieli, G.D. (in press) in Converging Methods for Understanding Reading and Dyslexia, eds. Klein, R. & McMullen, P. (MIT Press, Cambridge, MA).

3. Posner, M.I., McCandliss, B.D., Abdullaev, Y.G. & Sereno, S. in Normal Reading and Dyslexia, ed. Everatt, J. (Routledge, London), in press.

4. Posner, M.I. & Raichle, M.E. (1994) Images of Mind (Scientific American Library, New York).

5. Raichle, M.E., Fiez, J.A., Videen, T.O., MacLeod, A.M.K., Pardo, J.V. & Petersen, S.E. (1994) Cereb. Cortex 4, 8–26.

6. Fiez, J.A. (1997) Hum. Brain Mapp. 5, 79–83.

7. Gabrieli, J.D.E., Poldrack, R.A. & Desmond, J.E. (1998) Proc. Natl. Acad. Sci. USA 95, 3, 906–913.

8. Fiez, J.A. & Petersen, S.E. (1998) Proc. Natl. Acad. Sci. USA 95, 3, 914–921.

9. Abdullaev, Y.G. & Posner, M.I. (1997) Psychol. Sci. 8, 56–59.

10. Snyder, A.Z., Abdullaev, Y.G., Posner, M.I. & Raichle, M.E. (1995) Proc. Natl. Acad. Sci. USA 92, 1689–1693.

11. Scherg, M. & Berg, P. (1993) Brain Electrical Source Analysis (NeuroScan, Herndon, VA), Ver. 2.0.

12. Abdullaev, Y.G. & Bechtereva, N.P. (1993) Int. J. Psychophysiol. 14, 167–177.

13. Rayner, K. & Pollatsek, A. (1989) The Psychology of Reading (Prentice-Hall, Englewood Cliffs, NJ).

14. Sereno, S.C., Pacht, J.M. & Rayner, K. (1992) Psychol. Sci. 3, 296–300.

15. Kutas, M. & Van Petten, C.K. (1994) in Handbook of Psycholinguistics, ed. Gernsbacher, M.A. (Academic, San Diego), pp. 83–143.

16. Givón, T. (1995) Functionalism and Grammar (Benjamins, Amsterdam).

17. Kellas, G., Paul, S.T., Martin, M. & Simpson, G.B. (1991) in Understanding Word and Sentence, ed. Simpson, G.B. (North-Holland, Amsterdam), pp. 47–71.

18. Van Petten, C. (1993) Lang. Cognit. Processes 8, 485–531.

19. Carpenter, P.A., Miyake, A. & Just, M.A. (1995) Annu. Rev. Psychol. 46, 91–100.

20. Oldfield, R.C. (1971) Neuropsychologia 9, 97–113.

21. Raczkowski, D., Kalat, J.W. & Nebes, R. (1974) Neuropsychologia 12, 43–47.

22. Tucker, D.M. (1993) Electroencephalograph. Clin. Neurophysiol. 87, 154–163.

23. Milberg, W., Blumstein, S.E., Katz, D., Gershber, F. & Brown, T. (1995) J. Cognit. Neurosci. 7, 33–50.

24. Gernsbacher, M.A. & Faust, M. (1991) in Understanding Word and Sentence, ed. Simpson, G.B. (North-Holland, Amsterdam), pp. 97–128.

25. Awh, E., Jonides, J., Smith, E.E., Schumacher, E.H., Koeppe, R.A. & Katz, S. (1996) Psychol. Sci. 7, 25–31.

26. Just, M.A., Carpenter, P.A., Keller, T.A., Eddy, W.F. & Thulborn, K.R. (1996) Science 274, 114–116.