This paper was presented at a colloquium entitled “Neuroimaging of Human Brain Function,” organized by Michael Posner and Marcus E. Raichle, held May 29–31, 1997, sponsored by the National Academy of Sciences at the Arnold and Mabel Beckman Center in Irvine, CA.

Cerebral organization for language in deaf and hearing subjects: Biological constraints and effects of experience

HELEN J. NEVILLE*†, DAPHNE BAVELIER‡, DAVID CORINA§, JOSEF RAUSCHECKER‡, AVI KARNI¶, ANIL LALWANI∥**, ALLEN BRAUN∥, VINCE CLARK∥, PETER JEZZARD∥, AND ROBERT TURNER††

*University of Oregon, Eugene, OR 97403–1227; ‡Georgetown University Medical Center, Washington, DC 20007; §University of Washington, Seattle, WA 98195; ¶Weizmann Institute of Science, Rehovot, 76100 Israel; ∥National Institutes of Health, Bethesda, MD 20892; ††Institute of Neurology, Queen Square, London WC1N 3BG, United Kingdom; and **University of California, San Francisco, CA 94143

ABSTRACT Cerebral organization during sentence processing in English and in American Sign Language (ASL) was characterized by employing functional magnetic resonance imaging (fMRI) at 4 T. Effects of deafness, age of language acquisition, and bilingualism were assessed by comparing results from (i) normally hearing, monolingual, native speakers of English, (ii) congenitally, genetically deaf, native signers of ASL who learned English late and through the visual modality, and (iii) normally hearing bilinguals who were native signers of ASL and speakers of English. All groups, hearing and deaf, processing their native language, English or ASL, displayed strong and repeated activation within classical language areas of the left hemisphere. Deaf subjects reading English did not display activation in these regions. These results suggest that the early acquisition of a natural language is important in the expression of the strong bias for these areas to mediate language, independently of the form of the language. In addition, native signers, hearing and deaf, displayed extensive activation of homologous areas within the right hemisphere, indicating that the specific processing requirements of the language also in part determine the organization of the language systems of the brain.

The development and accessibility of neuroimaging techniques continue to permit detailed characterization of the neural systems active during perception and cognition in the intact human brain. Research along these lines reveals the adult human brain as a highly differentiated amalgam of systems and subsystems, each specialized to process specific and different kinds of information. A central issue is how these highly specialized systems arise in human development, the degree to which they are biologically constrained, and the extent to which they depend on and can be modified by input from the environment. Extensive research at many levels of analysis has documented that, within the domain of sensory processing, strong biases constrain development, but many aspects of sensory organization can adapt and reorganize after both increases and decreases in sensory input (1–13). For example, in humans who have sustained auditory deprivation since birth some aspects of visual processing are unchanged whereas the processing of motion is enhanced and reorganized (10, 14, 15).

It is likely that the development of the neural systems important for higher cognitive functions, including language, is also guided by strong biases but that some of these are modifiable by experience, within limits. Several lines of evidence suggest that cerebral organization for a language depends on the age of acquisition of the language, the ultimate proficiency in the language, whether an individual learned more than one language, and the degree of similarity between the languages learned (16–21). Behavioral studies suggest that early exposure to language is necessary for complete linguistic proficiency, and several different types of evidence raise the hypothesis that early exposure to a language may be necessary for the hallmark specialization of the left hemisphere for language (19, 22–24). Little is known about which aspect(s) of early language experience may be important in this development. It has been proposed that the demands of processing rapidly changing acoustic spectra are a key factor, whereas other evidence suggests that the grammatical recoding of information may be central in the differentiation of the left hemisphere for language (25–27). The effects of language structure and modality on neural development can provide evidence on these proposals.

Comparison of cerebral organization in native speakers who are and are not also native users of American Sign Language (ASL) and of native signers who are and are not also native users of English permits a unique perspective on these issues. Studies of the effects of brain damage in early learners of ASL suggest that sound-based processing of language may not be necessary for the specialization of the left hemisphere (28–30). Electrophysiological and lesion-based studies provide limited and apparently contradictory evidence on the proposal that the right hemisphere may play a role in processing ASL (28–32). Different lines of evidence suggest that areas within the right hemisphere may be included in the language system when the perception and/or production of the language depend on spatial contrasts. Additionally, several studies suggest that bilingualism is associated with a different cerebral organization than monolingualism, but there is very little evidence on the effects of sign/spoken bilingualism on the development of the language systems of the brain.

To address these issues we employed fMRI to compare cerebral organization in three groups of individuals with different language experience. (i) Normally hearing, monolingual, native speakers of English who did not know any ASL. (ii) Congenitally, genetically deaf individuals who learned English late and imperfectly and without auditory input. The deaf subjects’ native language was ASL, a language that makes use of spatial location and motion of the hands in grammatical encoding of linguistic information (33). (iii) A group of normally hearing, bilingual subjects who were born to deaf parents and who acquired both ASL and English as native languages (hearing native signers).

© 1998 by The National Academy of Sciences 0027–8424/98/95922–8$2.00/0

PNAS is available online at http://www.pnas.org.

METHODS

Subjects. All subjects were right-handed, healthy adults (see Table 1).

Experimental Design/Stimulus Material. Each population was scanned by using functional magnetic resonance imaging while processing sentences in English and in ASL. The English runs consisted of alternating blocks of simple declarative sentences (read silently) and consonant strings, all presented one word/string at a time (600 msec/item) in the center of a screen at the foot of the magnet. The ASL runs consisted of film of a native deaf signer producing sentences in ASL or nonsign gestures that were physically similar to ASL signs. The material was presented in four different runs (two of English and two of ASL—presentation counterbalanced across subjects). Each run consisted of four cycles of alternating 32-sec blocks of sentences (English or ASL) and baseline (consonant strings or nonsigns). None of the stimuli were repeated. Subjects had a practice run of ASL and of English to become familiar with the task and the nature of the stimuli.

Behavioral Tests. At the end of each run, subjects answered yes/no recognition questions, indicating whether or not specific sentences and nonword/nonsign strings had been presented, to ensure attention to the experimental stimuli (see Table 1). ANOVAs were performed on the percent-correct recognition. Deaf subjects also took 10 subtests of the Grammaticality Judgment Test (34) to assess knowledge of English grammar (see Table 1).

MR Scans. Gradient-echo echo-planar images were obtained by using a 4-T whole body MR system, fitted with a removable z-axis head gradient coil (35). Eight parasagittal slices, positioned from the lateral surface of the brain to a depth of 40 mm, were collected (TR=4 sec, TE=28 ms, resolution 2.5 mm×2.5 mm×5 mm, 64 time points per image). For each of the subjects, only one hemisphere was imaged in a given session because a 20-cm diameter transmit/receive radio-frequency surface coil was used to minimize rf interaction with the head gradient coil. The surface coil had a region of high sensitivity that was limited to a single hemisphere.
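The block timing given under Experimental Design and the acquisition parameters above are mutually consistent, which can be checked with a short sketch. The Python below builds an idealized on/off reference for one run from the stated values (four cycles, 32-sec blocks, TR=4 sec). The function and variable names are illustrative, the assumption that each cycle starts with the sentence block is ours (the text does not state the order), and hemodynamic delay is ignored.

```python
# Timing values are taken from the Methods text; names are illustrative only.
TR_S = 4       # repetition time in seconds
BLOCK_S = 32   # duration of each sentence or baseline block in seconds
CYCLES = 4     # sentence/baseline cycles per run


def boxcar_reference(tr_s=TR_S, block_s=BLOCK_S, cycles=CYCLES, task_first=True):
    """Return one 0/1 value per acquired volume (1 = sentence block).

    task_first=True is an assumption; the block order is not given in the text.
    """
    vols_per_block = block_s // tr_s
    first, second = (1, 0) if task_first else (0, 1)
    one_cycle = [first] * vols_per_block + [second] * vols_per_block
    return one_cycle * cycles


reference = boxcar_reference()
run_length_s = len(reference) * TR_S
print(len(reference), run_length_s)  # prints "64 256"
```

Four cycles of two 32-sec blocks give a 256-sec run, and sampling every 4 sec yields the 64 time points per image reported above.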

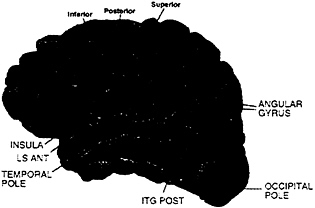

MR Analysis. Subjects were asked to participate in two separate sessions (one for each hemisphere). However, this was not always possible, leading to the following numbers of subjects: (i) hearing, eight subjects on both left and right hemispheres; (ii) deaf, seven subjects on both left and right hemispheres, plus four subjects left hemisphere only and five subjects right hemisphere only; (iii) hearing native signers, six subjects on both left and right hemispheres, plus three subjects left hemisphere only and four subjects right hemisphere only. Between-subject analyses were performed by considering left and right hemisphere data from all three groups as a between-subject variable. Individual data sets were first checked for artifacts (runs with visible motion and/or signal loss were discarded from the analysis, resulting in the loss of the data from four hearing native signers: two left hemisphere on English, one left hemisphere on ASL, and one right hemisphere on English). A cross-correlation thresholding method was used to determine active voxels (36) (r≥0.5, effective df=35, alpha=.001). MR structural images were divided into 31 anatomical regions according to the Rademacher et al. (37) division of the lateral surface of the brain (see Fig. 1); between-subject analyses were performed on these predetermined anatomical regions. Activation measurements were made on the following two variables for each region and run: (i) the mean percent change of the activation for active voxels in a region and (ii) the mean spatial extent of the activation in the region (corrected for size of the region). In addition, a region was not considered further unless at least 30% of runs displayed activation. Multivariate analysis was used to take into account each of these different aspects of the activation. The analyses relied on Hotelling’s T² statistic (38), a natural generalization of Student’s t-statistic, and were performed by using BMDP statistical software. In all analyses, the log transforms of the percent change and spatial extent were used as dependent variables, and data sets were used as independent variables. Activation within a region was assessed by testing the null hypothesis that the level of activation in the region was zero. Comparisons across hemispheres and/or languages were performed by entering these factors as treatments.
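The voxel selection and the two regional measures described above can be illustrated with a minimal Python sketch. It is not the authors’ implementation: the boxcar reference, the way percent change is computed (task mean relative to baseline mean), and the use of the fraction of active voxels as the size-corrected spatial extent are all assumptions made for illustration, as are the function names and the toy data. Only the threshold r≥0.5, the effective df of 35, and the 30% criterion come from the text.

```python
import math

R_THRESHOLD = 0.5        # correlation threshold stated in the text
MIN_RUN_FRACTION = 0.30  # a region is kept only if >= 30% of runs show activation


def pearson_r(x, y):
    """Pearson correlation of two equal-length sequences (0.0 if either is constant)."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    if sxx == 0 or syy == 0:
        return 0.0
    return sxy / math.sqrt(sxx * syy)


def r_to_t(r, df):
    """Convert a correlation coefficient to the equivalent t value."""
    return r * math.sqrt(df / (1 - r * r))


def region_measures(voxels, reference):
    """Return (mean percent change of active voxels, fraction of voxels active).

    Both definitions are assumptions; the text does not give the exact formulas.
    """
    changes = []
    for ts in voxels:
        if pearson_r(ts, reference) >= R_THRESHOLD:
            task = [v for v, ref in zip(ts, reference) if ref == 1]
            base = [v for v, ref in zip(ts, reference) if ref == 0]
            base_mean = sum(base) / len(base)
            changes.append(100 * (sum(task) / len(task) - base_mean) / base_mean)
    extent = len(changes) / len(voxels)
    mean_change = sum(changes) / len(changes) if changes else 0.0
    return mean_change, extent


def region_is_retained(extents_per_run):
    """Apply the 30%-of-runs criterion to per-run spatial extents."""
    active_runs = sum(1 for e in extents_per_run if e > 0)
    return active_runs / len(extents_per_run) >= MIN_RUN_FRACTION


# Toy data: one voxel that follows the task with a 2% signal increase, one flat voxel.
reference = ([1] * 8 + [0] * 8) * 4
voxels = [[102 if ref else 100 for ref in reference], [100] * len(reference)]
mean_change, extent = region_measures(voxels, reference)
print(mean_change, extent)             # prints "2.0 0.5"
print(round(r_to_t(0.5, 35), 2))       # prints "3.42"
```

Under these assumptions, r=0.5 with 35 effective degrees of freedom corresponds to t≈3.42, which is in the range expected for the stated alpha of .001.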

RESULTS

The behavioral data confirmed that subjects were attending to the stimuli and were better at recognizing sentences than nonsense strings [stimulus effect F(1,55)=156, P<.0001]. All groups performed equally well in remembering both the (simple, declarative) English sentences and the consonant strings [group effect not significant (NS)]. Hearing subjects who did not know ASL performed at chance in recognizing ASL sentences and nonsigns, unlike the two native signer groups [group effect, F(2,55)=41, P<.0001]. Deaf and hearing signers performed equally well on ASL stimuli (group effect NS) (Table 1).

English. When normally hearing subjects read English sentences they displayed robust activation within the standard language areas of the left hemisphere, including inferior frontal (Broca’s) area, Wernicke’s area [superior temporal sulcus (STS) posterior], and the angular gyrus. Additionally, the dorsolateral prefrontal cortex (DLPC), inferior precentral cortex, and anterior and middle STS were active, in agreement with recent studies indicating a role for these areas in language processing and memory (39–42). Within the right hemisphere there was only weak and variable activation (less than 50% of runs) reflecting the ubiquitous left hemisphere asymmetry described by over a century of language research (Fig. 1a; Table 2). In contrast to the hearing subjects, deaf subjects did not display left hemisphere dominance when reading English

Table 1. Demographic and behavioral data for the three subject groups

|

|

Hearing |

Deaf |

Hearing native signers |

|

English exposure |

Birth |

School age |

Birth |

|

English proficiency |

Native |

Moderate* |

Native |

|

ASL exposure |

None |

Birth |

Birth |

|

ASL proficiency |

None |

Native |

Native |

|

Hearing |

Normal |

Profound deafness |

Normal |

|

Mean age |

26 |

23 |

35 |

|

Handedness |

Right |

Right |

Right |

|

Performance on fMRI task, percent correct |

|||

|

English |

|

||

|

Sentences |

85 |

85 |

80 |

|

Consonant strings |

52 |

56 |

55 |

|

ASL |

|

||

|

Sentences |

56 |

92 |

92 |

|

Nonsigns |

51 |

62 |

60 |

|

Numbers of subjects |

|

||

|

English |

|

||

|

Left hemisphere |

8 |

11 |

7 |

|

Right hemisphere |

8 |

12 |

9 |

|

ASL |

|

||

|

Left hemisphere |

8 |

11 |

8 |

|

Right hemisphere |

8 |

12 |

10 |

|

* Range of errors on different subtests of Grammaticality Test of English: 6–26%. |

|||

FIG. 1. Cortical areas displaying activation (P<.005) for English sentences (vs. nonwords) for each subject group.

(Fig. 1b; Table 2). In particular, none of the standard language structures within the left hemisphere displayed reliable and asymmetrical activation (all less than 50% of runs). In addition, unlike hearing subjects, deaf subjects displayed robust activation of middle and posterior temporal-parietal structures within the right hemisphere [see Table 2 and Fig. 1b;

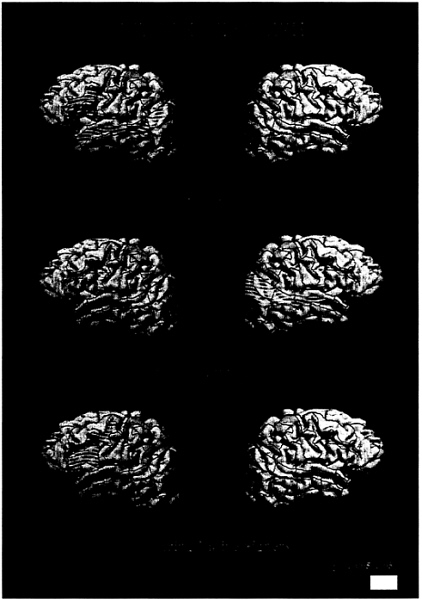

FIG. 2. Cortical areas displaying activation (P<.005) for ASL sentences (vs. nonsigns) for each subject group

group (deaf/hearing)×hemisphere effect, Wernicke’s (STS post) P<.01; angular gyrus P<.01, angular occipital sulcus P<.008]. There are several aspects of these deaf subjects’ different experiences with English that might have accounted for this departure from the pattern seen in normally hearing subjects. First, the possibility that learning ASL as a native

Table 2. Significance levels for English (sentences vs. consonant strings) for the left hemisphere (left), right hemisphere (right), and the hemisphere asymmetry (hemi) for each group studied.

|

|

WRITTEN ENGLISH CONDITION |

||||||||

|

|

Hearing |

Deaf |

Hearing native signers |

||||||

|

AREAS |

Left |

Right |

Hemi |

Left |

Right |

Hemi |

Left |

Right |

Hemi |

|

Frontal |

|||||||||

|

Middle frontal gyrus |

NS |

NS |

NS |

NS |

NS |

NS |

NS |

NS |

NS |

|

Frontal pole |

NS |

NS |

NS |

NS |

NS |

NS |

NS |

NS |

NS |

|

Frontal orbital cortex |

NS |

NS |

.043 |

NS |

NS |

NS |

NS |

NS |

NS |

|

Dorsolateral prefrontal cortex |

.005 |

NS |

.024 |

.038 |

NS |

NS |

.0005 |

NS |

.0000 |

|

Broca’s* |

.0004 |

.039 |

.011 |

.038 |

.030 |

NS |

.0009 |

NS |

.0005 |

|

Precentral sulcus, inf. |

.002 |

.048 |

.038 |

.003 |

.004 |

NS |

.007 |

NS |

NS |

|

Precentral sulcus, post. |

.007 |

NS |

.012 |

.020 |

NS |

NS |

.004 |

NS |

NS |

|

Precentral sulcus, sup. |

.010 |

NS |

.011 |

.007 |

.046 |

.041 |

.020 |

NS |

NS |

|

Central sulcus |

.035 |

NS |

NS |

NS |

NS |

NS |

.018 |

NS |

.017 |

|

Temporal |

|||||||||

|

Temporal Pole |

.021 |

NS |

NS |

NS |

NS |

NS |

NS |

NS |

NS |

|

Superior temp. sulcus, ant. |

.001 |

.047 |

.007 |

NS |

NS |

NS |

NS |

NS |

NS |

|

Superior temp. sulcus, mid. |

.0007 |

.010 |

.005 |

.017 |

.001 |

NS |

NS |

NS |

NS |

|

Superior temp. sulcus, post. |

.0000 |

NS |

.0001 |

.019 |

.0009 |

NS |

.019 |

NS |

.032 |

|

Sylvian fissure, ant. |

.044 |

NS |

NS |

.013 |

NS |

NS |

NS |

NS |

.015 |

|

Sylvian fissure, mid. |

.015 |

NS |

NS |

.022 |

NS |

.030 |

NS |

NS |

NS |

|

Temporoparietal |

|||||||||

|

Supramarginal gyrus |

.007 |

NS |

NS |

NS |

NS |

NS |

NS |

NS |

.023 |

|

Angular gyrus |

.003 |

NS |

.0002 |

NS |

.005 |

NS |

.042 |

NS |

.010 |

|

Anterior occipital sulcus |

.039 |

NS |

NS |

.044 |

.001 |

NS |

.028 |

NS |

.005 |

|

Intraparietal sulcus |

NS |

NS |

NS |

.044 |

.020 |

NS |

.044 |

NS |

NS |

|

Postcentral sulcus |

NS |

NS |

NS |

NS |

NS |

NS |

NS |

NS |

NS |

|

NS, not significant (P>.05). The cortical areas were defined relative to sulcal anatomy. *Pars frontalis, opercularis, triangularis. |

|||||||||

language contributed to the activation within the right hemisphere was assessed by observing the results displayed by the bilingual, hearing native signers. As seen in Fig. 1c and Table 2, these subjects did not display reliable right hemisphere activation, but, as for the normally hearing, monolingual subjects, they displayed a clear left hemisphere lateralization of the activation and a reliable recruitment of anterior left hemisphere language areas [group (hearing/hearing native signers) all areas NS]. Posterior language areas within the left hemisphere were more weakly activated but were significantly asymmetrical (left>right, Table 2). Thus, the lack of left hemisphere asymmetry and the presence of right hemisphere activation when deaf subjects read English was probably not due to the acquisition of ASL as a first language, because the hearing native signers did not display this pattern. The results for ASL clarify the interpretation of these results.

ASL. Next, by observing the pattern of activation during sentence processing in ASL, we evaluated the roles of other factors that have been implicated in establishing and/or modulating language specialization within the left hemisphere, including the acquisition of an aural/oral language that requires the processing of rapid shifts of auditory temporal information, the acquisition of a natural (grammatical) language early in development, and the structure and modality of the language(s) acquired.

As seen in Fig. 2a and Table 3, hearing subjects who did not know ASL did not display any difference in activation between meaningful and nonmeaningful signs (consistent with their behavioral data).

Would sentence processing within a language that relies on the perception of spatial layout, hand shape, and motion recruit classical language areas within the left hemisphere? As seen in Fig. 2b and Table 3, when processing ASL deaf subjects displayed significant left hemisphere activation within Broca’s area and Wernicke’s area. In addition, activation within DLPC, the inferior precentral sulcus, and anterior STS was similar to that observed in hearing subjects when processing English (Fig. 2b; Table 3). This result suggests that acquisition of a spoken language is not necessary to establish specialized language systems within the left hemisphere. Remarkably, processing of ASL sentences in deaf subjects also strongly recruited the right hemisphere, including the entire extent of the superior temporal lobe, the angular region, and inferior prefrontal cortex (see Fig. 2b and Table 3). Is this striking right hemisphere activation during ASL sentence processing attributable to auditory deprivation or to the acquisition of a language that depends on visual/spatial contrasts? As seen in Fig. 2c and Table 3, when processing ASL, hearing native signers also displayed right hemisphere activation similar to that of the deaf subjects (all group effects NS). Thus, the activation of the right hemisphere when processing sentences in ASL appears to be a consequence of the temporal coincidence between language information and visuospatial decoding. In addition, within the left hemisphere, robust activation of Broca’s area, DLPC, precentral sulcus, Wernicke’s area, and the angular gyrus also was observed in these hearing native signers (Fig. 2c; Table 3).

Table 3. Significance levels for ASL (sentences vs. nonsigns) for the left hemisphere (Left), right hemisphere (Right), and the hemisphere asymmetry (Hemi) for each of the groups studied

| Areas | Hearing: Left | Hearing: Right | Hearing: Hemi | Deaf: Left | Deaf: Right | Deaf: Hemi | Hearing native signers: Left | Hearing native signers: Right | Hearing native signers: Hemi |
|---|---|---|---|---|---|---|---|---|---|
| Frontal | | | | | | | | | |
| Middle frontal gyrus | NS | NS | NS | .013 | NS | NS | NS | NS | NS |
| Frontal pole | NS | NS | NS | NS | .016 | NS | NS | .013 | NS |
| Frontal orbital cortex | NS | NS | NS | .025 | NS | NS | .028 | .025 | NS |
| Dorsolateral prefrontal cortex | NS | NS | NS | .001 | .001 | NS | .001 | NS | NS |
| Broca’s* | NS | NS | NS | .004 | .0004 | NS | .0008 | .038 | .001 |
| Precentral sulcus, inf. | NS | NS | NS | .005 | .004 | NS | .0000 | .017 | .007 |
| Precentral sulcus, post. | NS | NS | NS | .001 | .016 | NS | .0003 | .003 | NS |
| Precentral sulcus, sup. | NS | NS | NS | .0001 | .007 | .030 | .002 | .014 | .005 |
| Central sulcus | NS | NS | NS | .033 | .031 | NS | .010 | NS | NS |
| Temporal | | | | | | | | | |
| Temporal pole | NS | NS | NS | .002 | .0001 | NS | .020 | .011 | NS |
| Superior temp. sulcus, ant. | NS | NS | NS | .004 | .0002 | NS | .002 | .0001 | NS |
| Superior temp. sulcus, mid. | NS | NS | NS | .010 | .0000 | NS | .0004 | .0000 | NS |
| Superior temp. sulcus, post. | NS | NS | NS | .002 | .0000 | NS | .0003 | .0002 | NS |
| Sylvian fissure, ant. | NS | NS | NS | .005 | .033 | NS | .004 | NS | NS |
| Sylvian fissure, mid. | NS | NS | NS | .017 | NS | NS | .044 | NS | NS |
| Temporoparietal | | | | | | | | | |
| Supramarginal gyrus | NS | NS | NS | NS | NS | NS | .001 | .044 | .006 |
| Angular gyrus | NS | NS | NS | .020 | .0002 | NS | .0006 | .0002 | NS |
| Anterior occipital sulcus | NS | NS | NS | NS | .003 | NS | .006 | .003 | NS |
| Intraparietal sulcus | NS | NS | NS | NS | NS | NS | .013 | NS | NS |
| Postcentral sulcus | NS | NS | NS | NS | .037 | NS | NS | NS | NS |

The cortical areas were defined relative to sulcal anatomy. NS, not significant (P>.05). *Pars frontalis, opercularis, triangularis.

DISCUSSION

In summary, all groups processing their native language (i.e., hearing subjects processing English, deaf subjects processing ASL, hearing native signers processing English, and hearing native signers processing ASL) displayed significant activation within left hemisphere structures classically linked to language processing. These results imply that there are strong biological constraints that render these particular brain areas well designed to process linguistic information independently of the structure or the modality of the language. This effect was more robust for anterior than for posterior regions and suggests that language experience may more strongly influence the development of posterior language areas. Elucidation of the functional organization and the functional significance of the language-invariant areas identified by the type of neuroimaging technique used in the present study (i.e., blood oxygenation level-dependent contrast) requires further research. However, event-related brain potential (ERP) studies comparing the timing and activation patterns of sentence processing in English and ASL suggest that similar neural events with similar timing and functional organization occur within the left hemisphere of native speakers and signers (31). The ERP research, in line with the present study, also points to extensive right hemisphere activation in early learners of ASL and supports the proposal that activation within parietooccipital and anterior frontal areas of the right hemisphere may be specifically linked to the linguistic use of space. Although fMRI and ERP results suggest both hemispheres are active during ASL processing in neurologically intact native signers, lesion evidence suggests that the contribution of the right hemisphere may not be as central as that of the left hemisphere because lasting impairments of sign production and perception have been reported after left hemisphere lesions but fewer deficits have been reported after right hemisphere damage (28, 32). If upheld in future studies of congenitally deaf native signers who sustain neurological damage, such results would suggest that studies of the effects of lesions on behavior and neuroimaging studies of neurologically intact subjects provide different types of information. The former point to areas that may be necessary and sufficient for particular types of processing whereas the latter index areas that participate in processing in the neurologically intact individual.

Recent lesion studies and neuroimaging studies of normal adults reading English have identified additional language-relevant structures beyond Broca’s and Wernicke’s areas, including the left DLPC and the entire extent of the left superior temporal gyrus (39–46). In the present study these areas were active when normal hearing subjects read English and when hearing and deaf native signers viewed ASL. These results suggest that these newly identified language areas may, like the classical language areas, mediate language independently of the sensory modality and structure of the language.

The results showing a lack of left hemisphere asymmetry when deaf subjects read English are consistent with previous behavioral and electrophysiological studies (23, 24). Previous studies of deaf subjects and hearing bilingual subjects who perform differently on tests of English grammar suggest this effect may be linked to the age of acquisition and/or the degree to which grammatical skills have been acquired in English (19, 24). The strong right hemisphere activation in deaf subjects reading English may be interpreted in light of behavioral studies that report many deaf individuals rely on visual-form information when reading and encoding written English (47). In the present study, as in previous studies, the right hemisphere effect was variable in extent and was observed in about 70% of deaf subjects.

The hearing native signers were true bilinguals, native users of both English and ASL from infancy. Their results are striking because they displayed, within the same experimental session, a strongly left-lateralized pattern of activation for reading English but robust and repeated activation of both the left and the right hemispheres for sentence processing in ASL. The activation patterns of these subjects, although similar, were not identical to either those of the monolingual hearing subjects reading English or to the congenitally deaf subjects viewing ASL. For example, when reading English, both hearing groups displayed strong left hemisphere asymmetries over inferior frontal regions. However, the hearing native signers did not display as robust activation over temporal brain regions. It may be that anterior regions perform similar processing on language input independently of the form and structure of the language whereas posterior regions may be organized to process language primarily of one form (e.g., manual/spatial or oral/aural). Further research characterizing cerebral organization during language acquisition will contribute to an understanding of this effect. When processing ASL, both deaf and hearing native signers displayed significant activation of both left and right frontal and temporal regions. However, whereas the activations were uniformly bilateral or larger in the right hemisphere for the deaf subjects, over anterior areas they tended to be larger from the left hemisphere in the hearing native signers. This pattern suggests that the early acquisition of oral/aural language influences the organization of anterior areas for ASL.

The hearing native signers (bilinguals) displayed considerable individual differences during sentence processing of both English and ASL. These results are reminiscent of recent reports of a high degree of variability from individual to individual and area to area of language activation in hearing, speaking bilinguals who learned their second language after the age of 7 years (20, 21). Thus, these data are consistent with the proposal that in addition to individual differences in the age of acquisition, proficiency, and learning and/or biological substrates, the structure and modality of the first and second languages also determine cerebral organization in the bilingual.

In summary, classical language areas within the left hemisphere were recruited in all groups (hearing or deaf) when processing their native language (ASL or English). In contrast, deaf subjects reading English did not display activation in these areas. These results suggest that the early acquisition of a fully grammatical, natural language is important in the specialization of these systems and support the hypothesis that the delayed and/or imperfect acquisition of a language leads to an anomalous pattern of brain organization for that language.‡‡ Furthermore, the activation of right hemisphere areas when hearing and deaf native signers process sentences in ASL, but not when native speakers process English, implies that the specific nature and structure of ASL result in the recruitment of the right hemisphere into the language system. This study highlights the presence of strong biases that render regions of the left hemisphere well suited to process a natural language independently of the form of the language, and reveals that the specific structural processing requirements of the language also in part determine the final form of the language systems of the brain.

‡‡ The deaf subjects in this study scored moderately on tests of English grammar (Table 1). However, deaf subjects who fully acquire the grammar of English (i.e., score ≥95% on tests) display evidence of left hemisphere specialization for English (48).

We thank Dr. Robert Balaban of the National Heart, Lung, and Blood Institute at the National Institutes of Health for providing access to the MRI system, students and staff at Gallaudet University for participation in these studies, Drs. S. Padmanhaban and A. Prinster and C. Hutton, T. Mitchell, A. Newman, and A. Tomann for help with these studies, Dr. J. Haxby for lending us equipment, and M. Baker, C. Vande Voorde, and L. Heidenreich for help with the manuscript. This research was supported by grants from the National Institute on Deafness and Communication Disorders, the Human Frontiers Grant, and the J. S. McDonnell Foundation.

1. Rice, C. (1979) Res. Bull. Am. Found. Blind 22, 1–22.

2. Hyvarinen, J. (1982) The Parietal Cortex of Monkey and Man (Springer, New York).

3. Merzenich, M.M. & Jenkins, W.M. (1993) J. Hand Ther. 6, 89–104.

4. Recanzone, G.H., Schreiner, C.E. & Merzenich, M.M. (1993) J. Neurosci. 13, 87–103.

5. Uhl, F., Kretschmer, T., Linginger, G., Goldenberg, G., Lang, W., Oder, W. & Deecke, L. (1994) Electroenceph. Clin. Neurophys. 91, 249–255.

6. Kujala, T., Alho, K., Kekoni, J., Hämäläinen, H., Reinikainen, K., Salonen, O., Standerskjöld-Nordenstam, C.-G. & Näätänen, R. (1995) Exp. Brain Res. 104, 519–526.

7. Rauschecker, J.P. (1995) Trends Neurosci. 18, 36–43.

8. Kaas, J.H. (1995) in The Cognitive Neurosciences, ed. Gazzaniga, M.S. (MIT Press, Cambridge, MA).

9. Karni, A., Meyer, G., Jezzard, P., Adams, M.M., Turner, R. & Ungerleider, L.G. (1995) Nature (London) 377, 155–158.

10. Neville, H.J. (1995) in The Cognitive Neurosciences, ed. Gazzaniga, M.S. (MIT Press, Cambridge, MA), pp. 219–231.

11. Sadato, N., Pascual-Leone, A., Grafman, J., Ibanez, V., Deiber, M.-P., Dold, G. & Hallett, M. (1996) Nature (London) 380, 526–528.

12. Röder, B., Rösler, F., Hennighausen, E. & Naecker, F. (1996) Cog. Brain Res. 4, 77–93.

13. Röder, B., Teder-Salejarvi, W., Sterr, A., Rösler, F., Hillyard, S.A. & Neville, H.J. (1997) Soc. Neurosci. 23, 1590.

14. Neville, H.J. & Bavelier, D. (1998) Proc. NIMH Conf. Adv. Res. Dev. Plasticity, in press.

15. Mitchell, T.V., Armstrong, B.A., Hillyard, S.A. & Neville, H.J. (1997) Soc. Neurosci. 23, 1585.

16. Newport, E. (1990) Cognit. Sci. 14, 11–28.

17. Mayberry, R. (1993) J. Speech Hearing Res. 36, 1258–1270.

18. Paradis, M. (1995) Aspects of Bilingual Aphasia (Pergamon, Oxford).

19. Weber-Fox, C. & Neville, H.J. (1996) J. Cognit. Neurosci. 8, 231–256.

20. Kim, K.H.S., Relkin, N.R., Lee, K.M. & Hirsch, J. (1997) Nature (London) 388, 171–174.

21. Dehaene, S., Dupoux, E., Mehler, J., Cohen, L., Paulesu, E., Perani, D., van de Moortele, P., Lehéricy, S. & Le Bihan, D. (1998) Neuroreport, in press.

22. Lenneberg, E. (1967) Biological Foundations of Language (Wiley, New York).

23. Neville, H.J., Kutas, M. & Schmidt, A. (1982) Brain Lang. 16, 316–337.

24. Neville, H.J., Mills, D.L. & Lawson, D.S. (1992) Cereb. Cortex 2, 244–258.

25. Liberman, A. (1974) in The Neurosciences Third Study Program, eds. Schmitt, F.O. & Worden, F.G. (MIT Press, Cambridge, MA), pp. 43–56.

26. Corina, D.P., Vaid, J. & Bellugi, U. (1992) Science 255, 1258–1260.

27. Tallal, P., Miller, S. & Fitch, R.H. (1993) Ann. N. Y. Acad. Sci. 682, 27–47.

28. Poizner, H., Klima, E.S. & Bellugi, U. (1987) What the Hands Reveal about the Brain (MIT Press, Cambridge, MA).

29. Söderfeldt, B., Rönnberg, J. & Risberg, J. (1994) Brain Lang. 46, 59–68.

30. Hickok, G., Bellugi, U. & Klima, E.S. (1996) Nature (London) 381, 699–702.

31. Neville, H.J., Coffey, S.A., Lawson, D., Fischer, A., Emmorey, K. & Bellugi, U. (1997) Brain Lang. 57, 285–308.

32. Corina, D.P. (1997) in Aphasia in Atypical Populations, ed. Coppens, P. (Erlbaum, Hillsdale, NJ).

33. Klima, E. & Bellugi, U. (1979) The Signs of Language (Harvard Univ. Press, Cambridge, MA).

34. Linebarger, M.C., Schwartz, M.F. & Saffran, E.M. (1983) Cognition 13, 361–397.

35. Turner, R., Jezzard, P., Wen, H., Kwong, K.K., Le Bihan, D., Zeffiro, T. & Balaban, R.S. (1993) Magn. Reson. Med. 29, 277–279.

36. Bandettini, P.A., Jesmanowicz, A., Wong, E.C. & Hyde, J.S. (1993) Magn. Reson. Med. 30, 161–173.

37. Rademacher, J., Galaburda, A.M., Kennedy, D.N., Filipek, P.A. & Caviness, V.S. (1992) J. Cognit. Neurosci. 4, 352–374.

38. Srivastava, M.S. & Carter, E.M. (1983) An Introduction to Applied Multivariate Statistics (North-Holland, Amsterdam).

39. Mazoyer, B., Tzourio, N., Frak, V., Syrota, A., Murayama, N., Levrier, O., Salamon, G., Dehaene, S., Cohen, L. & Mehler, J. (1993) J. Cognit. Neurosci. 5, 467–479.

40. Demonet, J.-F., Wise, R. & Frackowiak, R.S.J. (1993) Human Brain Map. 1, 39–47.

41. Cohen, J.D., Perlstein, W.M., Braver, T.S., Nystrom, L.E., Noll, D.C., Jonides, J. & Smith, E.E. (1997) Nature (London) 386, 604–608.

42. Dronkers, N.F., Wilkins, D.P., Van Valin, R.D., Redfern, B.B. & Jaeger, J.J. (1994) Brain Lang. 47, 461–463.

43. Petersen, S.E. & Fiez, J.A. (1993) Annu. Rev. Neurosci. 16, 509–530.

44. Dronkers, N.F. & Pinker, S. (1998) in Principles of Neural Science, 4th Ed., in press.

45. Nobre, A.C. & McCarthy, G. (1995) J. Neurosci. 15, 1090–1099.

46. Bavelier, D., Corina, D.P., Jezzard, P., Padmanhaban, S., Clark, V.P., Karni, A., Prinster, A., Braun, A., Lalwani, A., Rauschecker, J., Turner, R. & Neville, H.J. (1997) J. Cognit. Neurosci. 9, 664–686.

47. Conrad, R. (1977) Br. J. Educ. Psychol. 47, 138–148.

48. Neville, H.J. (1991) in Brain Maturation and Cognitive Development: Comparative and Cross-Cultural Perspectives, eds. Gibson, K.R. & Petersen, A.C. (Aldine de Gruyter, Hawthorne, NY), pp. 355–380.