2

Information for Decision Making

Data Needs and Resources

Individuals, corporations, and government officials can be called on at any time to make decisions related to natural disaster mitigation, preparedness, response, and recovery. In making these decisions they use whatever information resources are available to them. In the absence of a global or national disaster information network, they draw on a wide variety of alternative information resources. Some organizations have developed their own internal information networks, while others rely on a variety of external information sources. This complexity presents a formidable challenge in analyzing information uses and needs.

Disaster information is needed by decision makers at many different levels and different scales. Officials in municipalities and counties need information that is sufficiently detailed to be useful in all aspects of disaster management—information such as land ownership; detailed hazard and risk maps; and the locations of utility lines, disaster response teams, and emergency supplies. State and federal officials need regional and nationwide data for program design, planning, and response to major disasters. Private corporations, such as utilities, insurance companies, manufacturing companies, and land corporations, as well as such organizations as the American Red Cross, need information on both the local and the national levels for planning, preparedness, response, and recovery. Some of the types of information needed for disaster planning, preparedness, response, and recovery are listed in Table 2-1.

TABLE 2-1 Information Resources for Decision Making

| Category | Information types |
| --- | --- |
| Base Data | Topography; Political boundaries; Public land survey system; Geographic names; Demography; Land ownership/use; Critical facilities |
| Scientific Data | Hydrography/hydrology (surface and subsurface flows and levels); Ocean levels and tides; Soils; Geology: rock types/ages/properties/structure; Meteorology and climatology; Archeology; Seismology: active faults, seismicity, seismic wave propagation, ground motion; Volcanology; Wildlife: habitat, spawning areas, breeding grounds |
| Engineering Data | Control structures: locks, dams, levees; Pump stations; Building inventories/codes; Offshore facilities; Transportation, bridges, tunnels; Utility infrastructure, pipelines, power lines; Critical facilities; Communication systems |
| Economic Data | Financial; Insurance: holdings, losses; Exposure |
| Environmental Data | Threatened and endangered species; Hazardous sites; Water quality; Critical facilities |
| Response Data | Evacuation routes; Management plans; Aircraft routes; Personnel deployment; Equipment deployment; Warning system; Shelters; Monitoring system; Loss estimates |

Disaster information is gathered and held by many different organizations at different scales and levels of detail. For example, individuals hold plans, specifications, and as-built drawings of buildings. Companies hold information at different scales on their facilities and systems. Municipalities have maps showing properties, locations of roads, power lines, and sources of emergency supplies. Counties and states have data on infrastructure, hazardous waste sites, and fault lines. The federal government holds background data on cartography and demographics and gathers real-time data on precipitation, stream flow, and seismic activity, as listed in Table 2-2. This diversity of interests argues for federal leadership in establishing a national process for better dissemination of disaster information.

Case Studies in Disaster Decision Making

The information-gathering process for disaster management is so complex and varied that it almost defies generalization. Instead of attempting a comprehensive analysis of this process, the use of disaster information in decision-making is illustrated here through case studies. These case studies show how information resources currently serve decision makers and indicate the strengths and weaknesses of current disaster information systems.

Tornado

At 8:03 p.m. CDT on Saturday, July 13, 1998, a tornado touched down in western Oklahoma City, Oklahoma. For the next 10 minutes it hopscotched its way eastward through the northern suburbs of the city. Fortunately, the tornado was relatively weak, and preliminary damage surveys classified it as an F-2 on a scale of 0 to 5. A trained media storm spotter measured winds at 105 mph at one location.

Numerical weather forecasts produced by the National Weather Service (NWS) three days prior to the Oklahoma City tornado indicated the potential for severe weather in the Southern Great Plains during the evening hours of July 13. Such computer-generated numerical products are available on the World Wide Web and through private distributors of the NWS “Family of Services.” Private entities, such as the Weather Channel, newspapers, and the broadcast media, distribute this information without charge, in the form of forecasts for specific cities and in graphical formats (maps). Many companies and organizations with sensitivity to severe weather (such as railroads, tourism, and utilities) contract with commercial weather providers for value-added services tailored to their specific needs. A three- to five-day forecast is used to make decisions regarding anticipated staffing levels, possible overtime pay, equipment availability, communications checks, and inventories.

The “severe weather outlook” issued by the NWS Storm Prediction Center at 12:00 p.m. on July 12 placed Oklahoma City in an area of “slight risk” for the following day. Updates on July 13 at 12:00 a.m., 5:00 a.m., and 10:00 a.m. kept Oklahoma City under the “slight risk” threat. The city was included in a “tornado watch” issued by the Storm Prediction Center at 4:01 p.m., fully four hours in advance of touchdown. These “outlooks” and “watches” are available via the World Wide Web and NWS's Family of Services. Also, watches are broadcast on the National Oceanic and Atmospheric Administration's (NOAA) Weather Radio Network. Inclusion in an outlook or tornado watch area triggers many activities throughout local governments and industries. Examples include staffing emergency management centers, dispersing storm spotters, alerting repair crews, tying down light aircraft, and monitoring weather radars owned by television stations.

The NWS's Oklahoma City Forecast Office issued a tornado warning on July 13 at 7:42 p.m. for the county containing Oklahoma City. Tornado warnings are automatically disseminated to local media and local emergency management agencies and broadcast on NOAA Weather Radio. This 21-minute lead time is considerably better than the national average of 12 minutes, an average that was itself made possible by the NWS's recent modernization and restructuring. Even 12 minutes allows people to seek shelter in the safest places in their homes, schools, and businesses and to take action to protect some property.
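The lead times quoted above follow directly from the clock times given in the case study. A minimal sketch of that arithmetic is shown below; the variable and function names are illustrative assumptions and are not part of any NWS product.

```python
from datetime import datetime

# Clock times reported in the case study (local time, July 13, 1998).
TOUCHDOWN = datetime(1998, 7, 13, 20, 3)         # 8:03 p.m. touchdown
TORNADO_WATCH = datetime(1998, 7, 13, 16, 1)     # 4:01 p.m. tornado watch
TORNADO_WARNING = datetime(1998, 7, 13, 19, 42)  # 7:42 p.m. tornado warning
NATIONAL_AVERAGE_MIN = 12                        # national average warning lead time


def lead_time_minutes(issued: datetime, event: datetime) -> int:
    """Whole minutes between the issuance of a product and the event."""
    return int((event - issued).total_seconds() // 60)


watch_lead = lead_time_minutes(TORNADO_WATCH, TOUCHDOWN)
warning_lead = lead_time_minutes(TORNADO_WARNING, TOUCHDOWN)

print(f"Watch lead time:   {watch_lead} min")
print(f"Warning lead time: {warning_lead} min")
print(f"Margin over national average: {warning_lead - NATIONAL_AVERAGE_MIN} min")
```

The watch was issued 242 minutes (just over four hours) before touchdown, and the warning 21 minutes before, which is 9 minutes more than the national average cited in the text.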

No deaths were reported in the aftermath of the Oklahoma City tornado of July 13, 1998. Preliminary estimates of insured losses were placed at $25 million. There were injuries requiring medical attention, including 17 at an amusement park near Interstate 35 that was still operating its rides and attractions when it took a direct hit from the tornado. The Oklahoma City media broadcast weather information, including the location of the tornado, to the general public. The path of the storm as reported by NWS Doppler radar, the media, and emergency services was crucial to getting aid and medical assistance to the affected areas and to preventing looting.

Tsunami

On October 4, 1994, at 06:23 PDT, a magnitude 8.2 earthquake occurred along the Kuril Islands trench subduction zone, about 150 kilometers off the east coast of Hokkaido, Japan. The Pacific Tsunami Center in Hilo, Hawaii, confirmed that a tsunami had been generated by the earthquake and that destructive tsunami waves had struck northern Japan and the Kuril Islands. Wave heights of 1.3 to 3.5 meters had been reported in northern Japan.

At 09:01 PDT, NOAA issued a tsunami warning for all coastal areas and islands in the Pacific. The warning included estimates of the arrival time of the initial wave at various locations around the Pacific Ocean. NOAA advised that tsunami wave heights could not be predicted and that the tsunami threat could result in a series of waves lasting several hours after the arrival of the initial one. NOAA further advised that, if no major (or damaging) waves were recorded for two hours after the estimated time of initial arrival, local authorities could assume the tsunami threat had passed. They stated that the “all clear” determination had to be made by local authorities. The warning concluded that bulletins would be issued hourly or sooner if conditions warranted and that the tsunami warning would remain in effect until further notice.

TABLE 2-2 Examples of Major Types of Information Held and Being Gathered by Federal Agencies

Agency/Bureau^a

| Data Type | NOAA | USGS | Other DOI | DOA | Census Bureau |
| --- | --- | --- | --- | --- | --- |
| Base cartographic | X | X |  |  | X |
| Land-use/land cover/vegetation type | X | X | X | X |  |
| Ecological |  | X | X | X |  |
| Seismic |  | X | X |  |  |
| Hydrological | X | X | X | X |  |
| Oceanographic | X | X |  |  |  |
| Threatened and endangered species |  | X | X | X |  |
| Geology and soils |  | X | X | X |  |
| Meteorological | X |  |  |  |  |
| Hazardous sites |  |  | X | X |  |
| Nuclear waste sites |  |  |  |  |  |
| Demographic |  |  |  |  | X |
| Fire fuel type |  |  | X | X |  |
| Land ownership |  |  | X | X |  |
| Aircraft routes | X |  |  |  |  |
| River flow and stage | X | X |  |  |  |
| Snow pack |  |  |  | X |  |
| Ground deformation |  | X |  |  |  |

a Abbreviations: NOAA, National Oceanic and Atmospheric Administration; USGS, U.S. Geological Survey; DOI, U.S. Department of the Interior; DOA, U.S. Department of Agriculture; EPA, U.S. Environmental Protection Agency; NRC, U.S. Nuclear Regulatory Commission; FAA, Federal Aviation Administration; FEMA, Federal Emergency Management Agency; NASA, National Aeronautics and Space Administration; DOD, U.S. Department of Defense; DOE, U.S. Department of Energy.

Decision makers at Pacific Gas and Electric's (PG&E) Diablo Canyon Power Plant were concerned about the safety of offshore divers who were conducting maintenance operations on the cooling-water intake structure at the plant. In addition, the sea level often drops significantly as a large tsunami approaches the coastline; if the drop were large enough and long enough, there could be a loss of cooling water. Although the plant has emergency cooling water in reservoirs onshore, those responsible for emergency response at the plant were apprehensive.

|

EPA |

NRC |

FAA |

FEMA |

NASA |

DOD |

DOE |

|

|

|

|

|

|

X |

|

|

X |

|

|

|

X |

X |

|

|

|

X |

|

X |

|

|

|

|

X |

|

|

X |

|

|

|

|

|

|

|

|

|

X |

|

|

X |

|

|

|

|

|

|

|

|

|

X |

X |

|

|

|

|

X |

|

|

X |

|

X |

|

|

|

X |

|

|

|

X |

X |

|

|

|

X |

|

|

X |

|

|

|

|

|

|

X |

|

|

|

Aviation Administration; FEMA, Federal Emergency Management Agency; NASA, National Aeronautics and Space Administration; DOD, U.S. Department of Defense; DOE, U.S. Department of Energy. |

||||||

At 10:00 PDT, with the tsunami about six hours away, PG&E decision makers called on their geosciences department for advice. It was determined that there was no immediate concern for the safety of the divers but that it would be prudent to keep a close watch on the situation as it developed. An important indicator of the potential danger of the approaching tsunami would be the wave's characteristics as it arrived at Hilo, Hawaii. Once the wave characteristics of the tsunami at Hilo were known, a confident judgment could be made about the likelihood of the tsunami wave being destructive when it struck the California coastline about two hours later.

When the tsunami arrived at Hilo about 8 hours after the earthquake occurred, the waves were 0.5 meters high. Given other nondestructive wave heights elsewhere as the tsunami traveled across the Pacific, NOAA canceled the tsunami warning at 14:50 PDT. The cancellation notice remarked that no destructive Pacific-wide tsunami wave threat existed; however, it continued to caution against local conditions, which could cause variations in tsunami wave action.
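The timeline in this case study can be laid out from the clock times and approximate travel times given in the text. The sketch below is only a rough reconstruction: the "about 8 hours" and "about two hours" figures are treated as exact for the sake of the arithmetic, and all names are illustrative assumptions.

```python
from datetime import datetime, timedelta

# Clock times (PDT, October 4, 1994) taken from the case study.
EARTHQUAKE = datetime(1994, 10, 4, 6, 23)
WARNING_ISSUED = datetime(1994, 10, 4, 9, 1)
WARNING_CANCELED = datetime(1994, 10, 4, 14, 50)

# Approximate travel times stated in the text, treated here as exact.
TRAVEL_TO_HILO = timedelta(hours=8)       # "about 8 hours after the earthquake"
HILO_TO_CALIFORNIA = timedelta(hours=2)   # "about two hours later"

hilo_arrival = EARTHQUAKE + TRAVEL_TO_HILO
california_arrival = hilo_arrival + HILO_TO_CALIFORNIA


def minutes_between(start: datetime, end: datetime) -> int:
    """Whole minutes elapsed from start to end."""
    return int((end - start).total_seconds() // 60)


issue_delay = minutes_between(EARTHQUAKE, WARNING_ISSUED)
notice_before_california = minutes_between(WARNING_ISSUED, california_arrival)
cancel_before_california = minutes_between(WARNING_CANCELED, california_arrival)

print(f"Estimated arrival at Hilo:       {hilo_arrival:%H:%M} PDT")
print(f"Estimated arrival at California: {california_arrival:%H:%M} PDT")
print(f"Warning issued {issue_delay} min after the earthquake")
print(f"Warning gave about {notice_before_california} min of notice for California")
print(f"Cancellation came about {cancel_before_california} min before the estimated arrival")
```

Under these assumptions the wave would reach Hilo at about 14:23 PDT and California at about 16:23 PDT, so the cancellation at 14:50 PDT left roughly an hour and a half before the estimated arrival on the California coast.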

With somewhat less than two hours until the time the wave was predicted to reach the California coast, NOAA faxed to PG&E the data from tide-gauge recordings. Officials there had already made a conservative decision to remove their divers from the water in case the approaching tsunami posed a threat to them.

The response of local authorities varied. Some ignored the warning, while others passed it on to the public to let people decide what, if anything, they wanted to do. Some believed evacuation of coastal areas should be ordered immediately. This resulted in some corporate decision makers wondering if they should take precautionary actions or simply ignore the warning.

Although the tsunami warning alerted individuals and organizations to the potential for a destructive tsunami along the Pacific Coast of California, it resulted in considerable confusion. Because of the lack of more timely and user-focused information, valuable response time was lost. NOAA's responsibility was only to issue the warning and to provide periodic updates as the tide-gauge data were received. It was the responsibility of local authorities to decide what actions needed to be taken to protect the well-being of potentially exposed populations along low-lying coastal areas. The warning revealed a general lack of understanding with regard to what should be done to prepare for the arrival of tsunami waves, but even knowledgeable people such as PG&E geoscientists were unable to use the available information effectively.

Volcanic Eruption

On Tuesday afternoon, August 25, 1992, Mt. Spurr in Alaska erupted and sent an ash plume 16 kilometers (10 miles) into the air. A few hours later the skies over Anchorage, located 130 kilometers (80 miles) east of Mt. Spurr, became blackened, and gray “snow” fell over the city. The airborne particles and fallen ash forced the temporary closure of the Anchorage Airport, which served as the principal airport for transporting ARCO and British Petroleum workers to the North Slope of Alaska.

Prompt response by ARCO to an eruption warning issued by the Alaska Volcanic Observatory resulted in the following actions: relocation from Anchorage to Fairbanks of two Boeing 727 airplanes used to transport workers and supplies from Anchorage to the North Slope, establishment of interim North Slope ARCO transport operations out of Fairbanks, provision of bus transportation for flight crews and passengers between Anchorage and Fairbanks, and curtailment of ARCO night flights in the region. These actions remained in effect for several weeks until it was safe to resume normal ARCO flight operations from the Anchorage airport. The actions taken enabled vital oil field operations to continue without interruption and greatly reduced the danger to personnel and support equipment. Not all operations were so fortunate. Some commercial aircraft had engine damage and were stranded in Anchorage until the airport became functional again and the airborne particles had dissipated.

Flood

The July 31, 1976, Big Thompson Canyon flash flood occurred with little warning and claimed 145 lives. In response to this disaster, officials of the city of Boulder, Colorado, became concerned about what might happen if a similar storm should occur over Boulder Creek just 50 kilometers farther south. Researchers studied the Big Thompson flood and determined the feasibility of implementing an early flood detection and warning system for the city of Boulder. By January 1978 an agreement was reached to design and install an automated flood detection network of approximately 20 real-time self-reporting rain and stream gages. This system became one of the earliest ALERT (Automated Local Evaluation in Real-Time) systems implemented in the United States. A comprehensive flood warning plan also was developed for Boulder Creek that incorporated the early detection and notification components into a warning decision process. Local officials are committed to updating and exercising this plan annually.
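The detection step of such a network can be pictured as a simple rule applied to incoming gauge reports. The sketch below is a hypothetical illustration only: the source does not describe the Boulder Creek system's logic, and the one-hour window, the 25 mm alarm level, the gauge names, and the function name are all assumptions made for the example.

```python
from collections import defaultdict
from datetime import datetime, timedelta

# Hypothetical values for illustration; the source gives no thresholds.
WINDOW = timedelta(minutes=60)   # look-back period for rainfall accumulation
ALARM_MM = 25.0                  # accumulation that would trigger an alarm


def gauges_in_alarm(reports, now):
    """Return {gauge_id: total_mm} for gauges at or above ALARM_MM.

    reports: iterable of (gauge_id, timestamp, rainfall_mm) increments,
    as a self-reporting rain gauge might transmit them.
    Only reports within WINDOW of `now` are counted.
    """
    totals = defaultdict(float)
    for gauge_id, stamp, rainfall_mm in reports:
        if now - WINDOW < stamp <= now:
            totals[gauge_id] += rainfall_mm
    return {gauge: total for gauge, total in totals.items() if total >= ALARM_MM}


# Made-up reports from two made-up gauges at an arbitrary time.
now = datetime(2000, 1, 1, 21, 0)
reports = [
    ("gauge-A", now - timedelta(minutes=50), 10.0),
    ("gauge-A", now - timedelta(minutes=20), 18.0),
    ("gauge-B", now - timedelta(minutes=30), 5.0),
    ("gauge-A", now - timedelta(minutes=90), 40.0),  # older than the window, ignored
]

alarms = gauges_in_alarm(reports, now)
print(alarms)
```

In a warning plan of the kind described above, a result like this would feed a human decision process rather than trigger a public warning automatically.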

The Boulder Creek system was initially operated and maintained by the Boulder County Sheriff's Department. Through its first decade of operation, Sheriff's Department officials were the principal users of the data and remote access capabilities were very limited. This situation changed dramatically after the development of the personal computer. Today with Internet use commonplace, the demands for accurate real-time data are rapidly increasing. The gaging network has been expanded to include more than 130 stations monitoring many other drainage basins and streams in the six-county Denver metropolitan area, and data collection, analysis, and display functions have been integrated into an areawide system. Greatly improved graphical displays and telecommunications capabilities have made remote access to this system very popular.

Wildland Fire

More lightning strikes occur in Florida than in any other state in the nation. With the very wet winter and very dry spring of 1998, it was not surprising that Florida had one of the worst fire seasons in recent history. The unusually dry conditions and lack of fuel treatment for the past several years (prescribed fires to reduce overgrowth of brush and grasses) allowed a buildup of fuel ready for high-intensity fires.

As multiple fires broke out in northeastern Florida, local fire-fighting organizations were stretched beyond their capabilities and requested assistance from sister agencies throughout the state and from federal agencies in the surrounding states. The National Interagency Coordination Center located at the National Interagency Fire Center in Boise, Idaho, provides national wildland fire coordination of limited resources and support for fires anywhere in and outside the country. Resources such as infrared scanning aircraft were moved to Florida to meet the needs of local fire-fighting organizations. This was possible because of the great amount of information that is shared among the agencies. Standards presently exist for training, experience, management, and technical information interpretation that assure coordinated and consistent emergency response independent of geographical region.

Communities of 1,000 or more people were established in two to three days to take care of the needs of the fire fighters. This included food, showers, transportation, and many other resources. Locations of the camps and staging areas were based on the latest knowledge of which direction the fire was expected to travel. This information is derived from intelligence reports from all available sources, especially weather forecasts. The safety of fire fighters is paramount when they are trying to protect private citizens and also maintain a safe environment for themselves when off duty.

As the fires burned throughout May and June, a massive work force formed behind the scenes to provide intelligence and support for the fire operations. Fire behavior specialists, meteorologists, and fire managers used the available information to identify the areas most severely impacted by drought and the highest fuel loading buildups to project fire spread in threatened areas. Meteorologists downlinked weather data and modeled local weather conditions right at the fire site. Field observers scouted the line and constantly reported fire and fuel information. Data from infrared scanning aircraft, satellites, and ground-based sensors helped decision makers predict the fire spread (movement) and smoke impacts and allowed them to evacuate homeowners from areas of immediate danger. Data were also used to predict indices such as flame height and resistance to control, which in turn were used to determine the crews, equipment, supplies, and aircraft that needed to be deployed in the region. Tactical decisions were made based on the latest information received from aerial or ground-based data collection.

The greatest risk from wildfires can be in the wildland-urban interface areas, where rural lands meet the outskirts of urban lands. As the number of people in these areas grows, so will the frequency and severity of fires. (Photo courtesy of the U.S. Forest Service.)

Earthquake

Millions of people viewing television coverage of the third game of the World Series in 1989 were instantly aware that a major earthquake had struck San Francisco. Graphic images of shaking at Candlestick Park, plumes of smoke from fires in the Marina District, and collapse of both the San Francisco-Oakland Bay Bridge and the Cypress Freeway viaduct bore witness to the widespread effects of the Loma Prieta earthquake on San Francisco and Oakland. Dramatic television images created an impression that the most significant damage was in the bay area. What was unknown at the time was that the smaller communities of Santa Cruz and Watsonville, about 70 miles south of San Francisco and much closer to the epicenter of the earthquake, experienced devastating damage to their core downtown areas. Even emergency response officials in those communities assumed they were better off than the bay area. By then, needed emergency resources had bypassed these communities en route to the bay area. It was not until the next day that information became available indicating the extent of damage to such communities as Santa Cruz and Watsonville. The response of emergency personnel and equipment and relief resources to such regions was much slower than needed.

To prevent situations like this in the future, a new state-of-the-art satellite communications system was installed, a new emergency management structure was created, and now an earthquake monitoring and information system is being installed in Southern California. This new system, called TriNet, is intended to provide accurate information on the intensity and geographic distribution of shaking within minutes of the occurrence of a major earthquake. It uses sensors placed in a spatial array on the ground and a sophisticated communications system to relay ground-shaking information to a central processing site for integration and dissemination. This information will guide the gathering of disaster intelligence and the deployment of emergency response resources to the regions where damage is most likely to have occurred. The result will be reduced response time and more accurate deployment of critical resources. This will reduce both the number of casualties and the level of property damage caused by fires and aftershocks. The first phase of the system will be deployed in the southern portion of the state and will be completed in 2002. Planning has begun to expand the system to all of California and to facilitate the distribution of information to a wider range of stakeholders.

Hurricane

In September 1988 Hurricane Gilbert moved into the Gulf of Mexico after leaving behind considerable destruction across Jamaica and Cancun, Mexico. By Thursday, September 15, it was a very dangerous storm with sustained winds of 120 mph. The official National Hurricane Center forecast called for the storm to make landfall somewhere near the Texas-Mexico border, but because of the uncertainties inherent in the forecast, residents of the Texas coast south of Corpus Christi were advised to remain alert for a change in track.

Throughout the day the Galveston city manager, who was also the city's emergency management coordinator, relied on the NWS forecasts, which indicated that the area with the highest probability of landfall was well south of Galveston. Consequently, there would be no need to issue an evacuation notice for Galveston. However, early in the afternoon, the port of Galveston received a forecast from a private meteorological company stating that it thought Hurricane Gilbert would make landfall between Galveston and Corpus Christi during early afternoon on Friday. This forecast was passed to the mayor and the emergency management coordinator. During the afternoon a Galveston radio station carried the same private weather service forecast predicting landfall much farther north than predicted by the National Hurricane Center.

At 4 p.m. the city of La Marque (10 miles north of Galveston on the mainland) recommended evacuation of its citizens. At 6 p.m. the emergency coordinator received a message over the Texas Law Enforcement Telecommunications System that described a scenario of a landfall 40 miles south of Galveston. Although in retrospect this was a worst-case scenario and not a forecast, the confusion factor from the various forecasts was now high enough that at 8 p.m. the emergency coordinator issued a recommendation for total evacuation of Galveston Island. While it is, of course, always better to be safe than sorry, this evacuation proved to be unnecessary and costly as on Friday, September 16, Gilbert came ashore in Mexico, south of Brownsville, Texas.

Observations

The above case studies, although not illustrative of all situations that might be encountered, indicate how information can be and is used to mitigate the effects of natural disasters in a variety of settings. The following observations may be made from the case studies presented and from others:

- Timely and accurate information can significantly reduce loss of life and financial impacts from a natural disaster.

- For most natural disasters, time is of the essence in the delivery of information for the purpose of decision making.

- Disaster information must be in a form that is readily understandable by the potential user.

- When conflicting sources of information exist in an emergency situation, the potential for incorrect decisions is increased, with costly or even life-threatening consequences.

- Acting on incorrect information can be as costly as not acting on correct information.

- Decision makers use disaster information in a variety of different ways, and for optimum benefit this information must be readily adaptable to the decision-making process.

- Information systems can be used to predict the physical processes of disasters as well as their effects.

- Location and intensity information can be used to deploy vital personnel and resources to counter the effects of natural disasters.

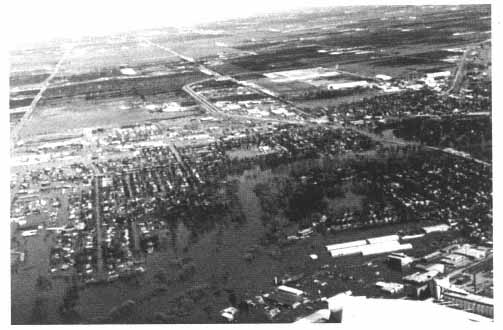

Aerial view of flooding in Grand Forks, North Dakota, during spring of 1997. (Photo courtesy of U.S. Geological Survey.)


- Private organizations can provide a useful function in the dissemination of disaster information, especially in delivering such information to the general public.

- Private for-profit organizations assist in delivering disaster information, and it is likely that this will continue to be the case in the future.

- Government agencies possess much of the basic data that are needed for an effective disaster information system, but data from private sources are also valuable.

- The needs of disaster information users vary greatly. For some, highly processed data are most useful, while for others raw data are more useful.

- It is important that users of disaster information be adequately trained.

- As atmospheric and oceanographic conditions know no national boundaries, global disaster information is needed for natural disasters.

- In some cases, early and progressive information can be used to optimize the deployment of personnel and resources in a potential high-hazard area prior to the actual occurrence of the effects of a natural disaster there.

- Information products could benefit greatly from user input in defining process requirements for hazard-specific situations at the national (or global) scale.