2

Data Sources

A variety of data types can be used in estimating flood quantiles or exceedance probabilities for the American River. These include systematic streamflow and precipitation data, historical and paleoflood data, and regional hydrometeorological information on extreme events. Flood frequency analysis traditionally has been based on systematic streamflow or precipitation records, where use of the latter requires the application of precipitation runoff modeling. Modern statistical innovations have enabled the use of historical and paleohydrologic data in flood frequency analysis. These data can provide information about extreme flooding over much longer time frames than systematic records, and thus could increase the accuracy of the frequency analysis (Cohn and Stedinger, 1987). Regional studies of maximum precipitation and flood discharges can provide information about extreme floods that can be used to check the accuracy of estimated flood distributions, particularly when these distributions are extrapolated (i.e., applied to flood magnitudes much larger than observed in the systematic record). This chapter begins with a general description of the various sources of data used in flood frequency analysis. It then focuses on specific data relevant to the American River watershed, and potential limitations of these data.

General Description Of Flood Frequency Data

Systematic Streamflow Data

The U.S. Geological Survey (USGS) has primary responsibility for operating a streamflow-gaging-station network in the United States. Systematic streamflow gaging by the USGS began in the late 1800s (Mason and Weiger, 1995). As of 1994, the USGS network consisted of 10,240 stations (Gilbert, 1995) and accounted for more than 85% of the nation's stream-gaging stations. (About 3,000 of these stations employed crest-stage gages that provide only peak flow information.) Historical records of daily streamflow and peak flows for almost 20,000 USGS stations are available for various periods of record. The data are published in annual USGS water data reports for each state and are available on the Internet (http://water.usgs.gov), through USGS data bases, and on compact disc (CD-ROM) from private vendors.

The accuracy of flood discharge data depends on whether large floods are directly measured by current meter surveys or are estimated by rating-curve extension or indirect measurement techniques (Rantz and others, 1982). Jarrett (1987) stresses the need to assess the reliability of extreme flood data, particularly data collected before 1950.

Flood frequency analysis of systematic flood data typically assumes that the data are independent and identically distributed in time (i.e., temporally uncorrelated and stationary). Local human activities, such as land use changes or reservoir construction, or climate change (regional or global) can make this assumption untenable. There are relatively few gaged streams on watersheds that have not been affected to some degree by human activities. At the same time, there are relatively few cases where human impacts on flood magnitude and frequency have been carefully documented. Lins and Slack (1999) evaluated flood data from watersheds that are considered to be relatively unimpacted by local human activities and did not find compelling evidence of climate-induced non-stationarity for floods. Note, however, that it is very difficult to detect climate-induced non-stationarity in flood data because of the high variability (Jarrett, 1994).

Precipitation Data

The National Weather Service is responsible for maintaining a network of meteorological stations in the United States. The current network includes about 300 primary stations staffed by paid technicians and over 8,000 cooperative stations operated primarily by volunteers (NRC, 1998b). As of 1975 there were about 3,500 non-recording precipitation gages with records of 50 years or more (Chang, 1981). Precipitation data are published in Climatological Data and Hourly Precipitation Data by the National Oceanic and Atmospheric Administration (NOAA). Digital records can be obtained from the National Climatic Data Center, from regional climatic centers, and various vendors. Note that many digital records do not include data collected prior to 1940.

Another source of extreme precipitation data for the United States is a catalog of extreme storms maintained by the U.S. Army Corps of Engineers, the U.S. Bureau of Reclamation, and the National Weather Service. This catalog includes information on over 300 extreme storms including the 1862 storm in California and the Pacific Northwest. For each storm in the catalog, official climatic data as well as rainfall bucket survey data (if available) were compiled and storm characteristics analyzed. It should be noted that extreme storms and floods are not as well documented today as they were in the past, in spite of technological improvements that greatly facilitate such documentation.

Precipitation data are subject to large errors. The most serious problem is the undermeasurement at all operational precipitation gages by amounts that depend primarily on the type of gage (including wind shield), exposure, wind speed, and whether the precipitation is rain or snow. Precipitation measurements during snowfalls are particularly biased. For example, during a snowfall a wind-shielded gage typically undermeasures precipitation by about 40% in a wind of 25 km/hr (Larson and Peck, 1974). In the case of a systematic rainfall record, the problem may be exacerbated if the location and type of precipitation gage are changed during the period of record. This can lead to inconsistent data, which may appear to indicate climatic non-stationarity (Potter, 1979).

Historical Flood Data

Historical data are episodic observations of flood stage or conditions that were made before systematic data were collected (Jarrett, 1991). In the United States, historical data typically are available for 100 to 200 years (Thomas, 1987). In Egypt and China, historical data are available for several thousands of years (Baker, 1987; Pang, 1987). Historical data are obtained from a variety of sources, including newspapers, human observers, diaries, historical museums, and libraries. Historical descriptions of storms and floods are typically qualitative, and sometimes exaggerated and contradictory; hence, they require careful review (Engstrom, 1996; Pruess, 1996). Historical floods were generally recorded because they disrupted people's lives. The threshold of perception typically depends on the location of people, buildings, and economic activity, and may change in time and with observers (Stedinger and Cohn, 1986).

In statistical terms, historical (and paleoflood) data are usually treated as censored samples. An important type of historical data is knowledge of a level that has not been exceeded at a given location over a known period of time, which USGS has compiled and annotated at many gaging stations.
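One common way to express this censored-sample treatment is as a likelihood. The form below is a sketch under assumptions that are not stated in the text: annual peaks are independent with density $f$ and cumulative distribution function $F$ (parameters $\theta$), $x_1, \ldots, x_s$ are the $s$ systematic peaks, $T$ is the perception threshold, $h$ is the length of the historical period in years, and $y_1, \ldots, y_k$ are the $k$ historical peaks known to have exceeded $T$.

```latex
L(\theta) \;=\; \prod_{i=1}^{s} f(x_i;\theta)
\;\times\; \binom{h}{k}\, F(T;\theta)^{\,h-k} \prod_{j=1}^{k} f(y_j;\theta)
```

When $k = 0$, the historical term reduces to $F(T;\theta)^{h}$, which is the contribution of knowing only that the level $T$ was not exceeded in $h$ years.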

There are three potential problems with the use of historical data in flood frequency analysis. First, estimates of peak flood discharges associated with historical stage information are subject to error; such errors can be reduced, however, by careful hydraulic analysis, and their impact on flood frequency analysis can be minimized by explicitly accounting for them in the analysis. Second, the most serious error (and one that cannot be statistically accounted for) is an erroneous conclusion that a given level has not been exceeded over a known period of time. Such an error is less likely to happen in heavily populated areas. Finally, as in the case of systematic data, the use of historical data is conditioned on the assumption of stationarity. This can be problematic because of the hydrologic impacts which occurred during the early history of the United States and because of the scarcity of systematic data with which to assess these impacts.

Paleoflood Data

Paleoflood hydrology is the study of ancient flood events, which occurred prior to the time of human observation or direct measurement (Baker, 1987). Paleoflood data provide a perspective on long-term hydrologic and climatic variability that can be useful in flood project design and management. Paleoflood data complement short-term systematic and historical records. They provide information at ungaged locations, provide likely upper limits of the largest floods that have occurred in a river basin, and potentially decrease the uncertainty in estimates of the magnitude and frequency of large floods (Baker, 1987; Costa, 1987; Enzel et al., 1993; Jarrett, 1991; Kochel and Baker, 1982; Patton, 1987; Stedinger and Baker, 1987; Xu and Ye, 1987).

Paleoflood hydrology primarily is concerned with determining the magnitude and frequency of individual paleofloods (Baker, 1987; Baker et al., 1988; Costa, 1987; Gregory, 1983; Hupp, 1988; Jarrett, 1991; Kochel and Baker, 1982; Stedinger and Baker, 1987). Although most paleoflood studies involve prehistoric floods, the methodology is applicable to historic or modern floods (Baker, 1987). Two approaches are in current use. The geomorphic approach is based on the sizes of flood transported boulders (Costa, 1983; Gregory, 1983; Williams, 1984; Stedinger and Baker, 1987). The hydraulic approach, which is more commonly used today, is based on paleostage indicators that provide indirect evidence of the maximum stages in a flood (Baker, 1987; Hupp, 1987; Jarrett and Malde, 1987).

There are many kinds of paleostage indicators, including evidence of vegetation damage, accumulations of woody debris, and sedimentologic evidence. The latter includes erosional and depositional flood features along the margins of flow in a channel (Figure 2.1). Slack-water deposits of sand-sized particles (Figure 2.2) and bouldery flood bar deposits commonly are used as paleostage indicators. The strategy of a paleoflood investigation is to visit the places where evidence of out-of-bank flooding is most likely to be preserved. The types of sites where flood deposits commonly are found include: (1) locations of rapid energy dissipation where flood transported sediments would be deposited, such as tributary junctions, reaches of decreased channel gradient, abrupt channel expansions, or reaches of increased flow depth; (2) locations along the sides of valleys in wide, expanding reaches where fine-grained sediments or slack-water deposits would likely be deposited; (3) ponded areas upstream from channel contractions; and (4) locations downstream from moraines across valley floors where large floods would likely deposit sediments eroded from the moraines. Lack of evidence of extraordinary floods may be as important as tangible onsite evidence of flooding (Jarrett and Costa, 1988; Levish et al., 1994). Knowledge of the nonoccurrence of floods for long periods of time has great potential value in improving flood frequency estimates (Stedinger and Cohn, 1986). The actual value depends on the correctness of the assumed probability distribution and of the assumption that flood flows are independent and identically distributed. Paleoflood evidence is generally relatively easy to recognize and long lasting (e.g., Figure 2.3) because of the quantity, morphology, structure, and size distribution of sediments deposited by floods.

Once paleostages have been estimated, a hydraulic analysis must be conducted to estimate the corresponding discharges. The step backwater method (Chow, 1959) is a commonly used and reliable method for discharge estimation in which a one-dimensional gradually varied flow analysis is used to calculate water-surface elevations as a function of discharge. For a given site, the discharge that produces the observed paleostage elevations is selected as the peak discharge. The analysis readily allows for evaluation of critical assumptions, such as choice of roughness coefficients, and for estimation of uncertainties. For complex channel reaches, two-dimensional hydraulic models are coming into use (Stockstill and Berger, 1994; Miller, 1994).

A third step in the analysis of a paleoflood is dating of the event. A commonly used and relatively accurate dating technique is radiocarbon dating (Baker, 1987; Kochel and Baker, 1982, 1988), by which absolute ages are determined from laboratory measurements of the ratio of radioactive carbon-14 to stable carbon-12 in a sample of organic carbon. Typical sources of organic carbon include wood, charcoal, leaves, humus in soils, and bone. A recent advance in radiocarbon dating based on the use of tandem accelerator mass spectrometry has resulted in more accurate age estimates and requires a smaller sample of organic carbon (Kochel and Baker, 1988). Using this approach, samples having an age of 10,000 years or less generally can be dated with an uncertainty of less than 100 years. When flood-scarred or -damaged trees are present, dendrochronological methods can be used to date floods. In some cases, these dates are accurate to the year and even the season.

Figure 2.1

Diagrammatic section across a stream channel showing a flood stage and various flood features.

Source: Jarrett, 1991.

The use of paleoflood information in flood frequency analysis is subject to errors in the estimation of discharge peak and age, errors in field interpretations, and questions of hydrologic stationarity. Errors in the estimation of peak discharges and ages can be controlled by careful hydraulic and laboratory analysis. Furthermore, these errors can be quantified and incorporated into the flood frequency analysis. Qualified paleohydrologists can avoid errors in field interpretations by collecting information at several sites to provide internal checks. Note, however, that there are no universally accepted methods for quality assurance and control in the practice of paleohydrology.

The most problematic issue regarding the use of paleoflood information in flood frequency analysis is the statistical nature of climatic variability. Flood frequency analysis is traditionally based on the assumption that flood magnitudes are independent and identically distributed in time. As previously discussed, changes in watershed vegetative cover due to humans or natural disturbances (fires, blowdowns, etc.) can change the probability distribution of floods, and invalidate the assumption that floods are identically distributed in time. Climatic variability can also pose a problem. Over decades it may be a useful approximation to assume that climate and flood magnitudes are independent and identically distributed in time. Over thousands of years, such an approximation may not be warranted.

Several recent papers have provided evidence that flood magnitudes are not independent and identically distributed over the last 5,000 to 10,000 years. Based on a 7,000-year record of overbank floods for upper Mississippi River tributaries, Knox (1993, p. 430) concludes: "During a warmer, drier period between about 3,300 and 5,000 years ago, the largest, extremely rare floods were relatively small-the size of floods that now occur once every 50 years. After ~3,300 years ago, when the climate became cooler and wetter, an abrupt shift in flood behavior occurred, with frequent floods of a size that now recur only once every 500 years or more. Still larger floods occurred between about A.D. 1250 and 1450, during the transition from the medieval warm interval to the cooler Little Ice Age. All of these changes were apparently associated with changes in mean annual temperature of only about 1-2°C and changes in mean annual precipitation of ≤10-20%." Knox's evidence suggests that during the past 7,000 years, floods on upper Mississippi River tributaries have not behaved as independent and identically distributed random variables.

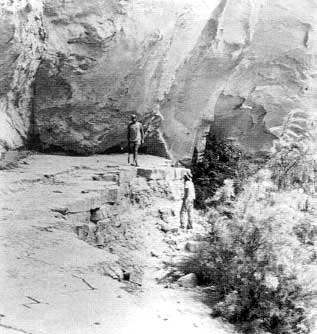

Figure 2.2

An ideal channel for studying slack-water deposits—the Escalante River in Utah. The person on the left is standing on a typical sequence of slack-water deposits, which were deposited where the flow velocity decreased in the canyon of the Escalante River.

Source: Robert H. Webb, U.S. Geological Survey.

Figure 2.3

View upstream toward the canyon of the Snake River in Idaho at river mile 462. The surface of the basalt bench in the foreground, which is about 450 feet above the river (elevation 2,750 feet), was scoured by the Bonneville Flood about 15,000 years ago. The light colored mound in the lee of the bench in the upper left of the photograph is a grass-covered gravel bar deposited by the flood, which demonstrated the preservation potential of paleostage indicators.

Source: Reprinted, with permission, from the Geological Society of America, 1987. © 1987 The Geological Society of America.

Ely et al. (1993) used paleoflood data from 19 rivers in Arizona and southern Utah to conclude that "the largest floods in the region cluster into distinct time intervals that coincide with periods of cool, moist climate and frequent El Niño events. The floods were most numerous from 4,800 to 3,600 years before the present (B.P.), around 1,000 B.P., and 500 years B.P., but decreased markedly from 3,600 to 2,200 and 800 to 600 B.P." Figure 2 in Ely et al. (1993) indicates that about 70% of the extreme floods represented in the documented paleoflood record of the last 5,000 years occurred during the last 600 years. Unless the paleoflood record is grossly incomplete, this suggests that extreme floods in Arizona and southern Utah do not behave as independent and identically distributed random variables.

The committee is interested in the use of flood frequency analysis to predict and mitigate future flood risk. If floods are not well modeled as independent and identically distributed over the time period for which paleoflood information is available, use of this information in conventional flood frequency analysis may lead to biased estimates of future flood risk. For example, based on the data of Ely et al. (1993), conventional use of a 5,000-year paleoflood record from Arizona and southern Utah would result in underprediction of the future risk of extreme floods there. Estimation of the bias in this case or in any other case requires specification of a model of floods that accounts for climatic variability. As discussed in Chapter 4, we do not at this time have sufficient understanding of climate dynamics to confidently specify such a model.

Regional Analyses of Hydrometeorologic Extremes

Regional analyses of hydrologic extremes are based on the concept of substituting space for time. The idea is that a rare event that occurs in one part of a large homogeneous region could occur at other locations. Regional methods allow the analyst to make use of such rare events. Two kinds of regional analyses are at issue in the American River—the envelope curve of maximum observed flood discharges and the probable maximum flood (PMF).

A flood envelope curve is a mathematical expression that provides an upper bound of observed maximum instantaneous peak discharges for some region as a function of drainage area. Envelope curves have long been used in flood hydrology (Crippen and Bue, 1977; Costa, 1987), and were particularly useful before alternative methods of regional flood analysis were developed. A recent innovation is the incorporation of peak discharges estimated from paleoflood data (Enzel et al., 1993). A flood envelope curve is useful for "displaying and summarizing data on actual occurrences of extreme floods" (IACWD, 1986, p. 71). However, the envelope curve itself offers no means of estimating flood exceedance probabilities. Estimation of such probabilities requires the use of a statistical framework, which in turn requires careful evaluation of regional homogeneity and spatial correlation.
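As a minimal illustration of the envelope idea, the sketch below assumes a power-law form Q = c * A^b with an exponent b chosen by the analyst, and finds the smallest coefficient c that bounds every observed peak. The functional form, the exponent, and the data are hypothetical and are not taken from any published envelope curve.

```python
def envelope_coefficient(areas_sq_mi, peaks_cfs, exponent):
    """Smallest c such that c * A**exponent >= Q for every (A, Q) pair."""
    return max(q / (a ** exponent) for a, q in zip(areas_sq_mi, peaks_cfs))


def envelope_discharge(area_sq_mi, coefficient, exponent):
    """Envelope (upper-bound) peak discharge for a given drainage area."""
    return coefficient * area_sq_mi ** exponent


# Hypothetical regional maxima: drainage area (sq mi) and peak discharge (cfs).
areas = [10.0, 100.0, 500.0, 2000.0]
peaks = [3000.0, 20000.0, 60000.0, 150000.0]
b = 0.6  # assumed exponent, for illustration only

c = envelope_coefficient(areas, peaks, b)
bounds = [envelope_discharge(a, c, b) for a in areas]
for a, q, qmax in zip(areas, peaks, bounds):
    print(f"A = {a:7.0f} sq mi  observed = {q:9.0f} cfs  envelope = {qmax:9.0f} cfs")
```

The curve only summarizes what has been observed; as the text notes, it carries no exceedance probability by itself.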

For more than 50 years, the PMF has been used in the design of hydraulic features of high-hazard dams. The PMF is defined as "the maximum runoff condition resulting from the most severe combination of hydrologic and meteorologic conditions that are considered reasonably possible for the drainage basin under study" (Cudworth, 1987, p. 114). PMF estimates are derived from estimates of the probable maximum precipitation (PMP), which is the estimated upper limit of precipitation for a basin. In the United States, the estimation of PMP is based on data contained in the previously mentioned extreme storm catalog. The standard approach for estimating PMF includes (1) estimating the PMP for the basin; (2) deducting appropriate precipitation losses to estimate the excess rainfall available for runoff; (3) converting rainfall excess into a flood hydrograph; and (4) adding interflow and snowmelt hydrograph components to obtain the final PMF hydrograph (Cudworth, 1987). The PMF method is widely used for assessing maximum flood potential at a site. Although the concept of maximum limits for floods is widely accepted, methods used to estimate these limits are subject to large uncertainties. Over the years, estimates of PMP and PMF have typically increased. Furthermore, it is possible for a computed PMF to be exceeded at a given site. In a study of 61 watersheds, Bullard (1986) found that there had been nine rain flood events that had produced peaks greater than or equal to 80% of the PMF peaks estimated by methods of the U.S. Bureau of Reclamation (USBR), and two events that produced peaks greater than or equal to 90% of the USBR-estimated PMF.

American River Data

The USACE based its American River flood frequency analysis solely on daily discharge data from the USGS gaging station at Fair Oaks (USGS station #11446500, drainage area 1,888 square miles) corrected for storage in upstream reservoirs. The validity of these data, particularly the data collected since the completion of Folsom Dam, has been questioned by various observers. In addition, critics have suggested that the frequency analysis should include the use of historical and paleoflood data and have argued that the results of the USACE flood frequency analysis are inconsistent with an existing flood envelope curve for California and with current estimates of PMF. These issues are explored in the remainder of this chapter.

Homogeneity of the Systematic Flood Record

The most important data set for use in flood frequency analysis for the American River is the set of annual maximum rain flood discharges for various durations. We focus on the three-day rain flood discharges, because three days is the most critical duration for designing and evaluating flood mitigation strategies for Sacramento. The systematic maximum three-day rain flood series covers the period 1905-1998 (Figure 2.4), and is based on the USGS station at Fair Oaks corrected for storage in upstream reservoirs. Figure 2.4 shows that the five largest three-day discharges in the series occur after 1950, as well as 10 of the top 13 discharges. Completion of the Folsom Dam in 1956 raises the question of whether the apparent increase in the frequency of large flood discharges is an artifact of the corrections for Folsom storage.

Figure 2.4

Annual maximum unregulated three-day rain flood flows for the American River at Fair Oaks for the period 1905-1998. Note that the five largest flows in the series occur after 1950, as do 10 of the top 13 flows.

Source: USGS gage record at Fair Oaks, corrected by the USACE for storage in upstream reservoirs.

The USACE estimated unregulated discharge on a daily basis using a simple mass balance, as follows. If $Q_{u,t}$ is estimated unregulated discharge for day $t$; $Q_{g,t}$ is gaged discharge at Fair Oaks (below Folsom); $\Delta S_{f,t}$ is daily change in storage in Folsom Reservoir; and $\Delta S_{j,t}$ is daily change in storage in the five upstream reservoirs ($j = 1, \ldots, 5$), then

(1)

The lagging of storage changes in upstream reservoirs is intended to reflect flood wave travel times. Five significant upstream reservoirs have combined storage in excess of 700,000 acre-feet and collectively account for 90% of upper basin storage. Sources of error potentially associated with this procedure include errors in gaged discharge ($Q_{g,t}$), errors in stage measurement ($\Delta S_{f,t}$), errors in the stage-storage rating curve, and errors in flood wave travel times.
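A daily mass balance consistent with the definitions and the lagging described here can be sketched as follows. The lag $\tau_j$ (in days) applied to each upstream reservoir is an assumed symbol, since the text says only that upstream storage changes are lagged to reflect flood wave travel times. The sketch also assumes that all terms are expressed in the same units (for example, cfs-days per day).

```latex
Q_{u,t} \;=\; Q_{g,t} \;+\; \Delta S_{f,t} \;+\; \sum_{j=1}^{5} \Delta S_{j,\,t-\tau_j}
```

Each term maps to one of the listed error sources: $Q_{g,t}$ to gaging error, $\Delta S_{f,t}$ to stage measurement and rating curve error, and the lagged sum to error in travel times.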

The question of bias in flood magnitude estimates following dam closure was raised by Robert Meyer of the USGS at the July 1998 workshop hosted by the committee in Sacramento (Meyer, personal communication, 1998). Meyer presented results of double mass curve and regression analyses based on regional streamflow and precipitation data that suggested that the American River flood record may be non-homogeneous.

These observations, particularly given the concentration of large events in the latter (post-dam) portion of the record, prompted further investigation into the homogeneity of the American River flood record. The results of several independent analyses, summarized below, provide convincing evidence that the apparent shift in flood behavior commencing in the 1950s is most likely not, or at least not primarily, an artifact of the methods used to estimate unregulated flood discharges in the post-Folsom Dam period. These analyses include David Goldman's (1998) double mass curve analysis, analysis of discharge records in surrounding basins, order-of-magnitude estimates of the impact of storage measurement errors, and statistical analysis of long-term precipitation and temperature records from stations in or surrounding the American River basin.

Goldman (1998) constructed an alternative double mass curve comparing American River flood flows at Folsom with flood flows at North Fork Dam on the American, where discharge is uncontrolled. The curve (Figure 2.5) is linear, suggesting causes other than methodological error for Meyer's results. A similar analysis was performed by the committee using annual three-day flood volume data from six surrounding basins of comparable drainage area: the Feather (2 sites), Yuba, Mokelumne, Stanislaus, Tuolumne, and Merced Rivers. Like the American, these basins were substantially regulated at some point in their periods of record, although dams were constructed at different times. Visual inspection confirms a high degree of similarity in the time series of three-day flood volumes across the seven basins, with larger events concentrated in the post-1950 portion of respective records. The bivariate test for statistically significant shift in mean (Maronna and Yohai, 1978; Potter, 1981) was applied using the American flood series as test series and the mean of surrounding stations as regional series. (This test is similar in concept to double mass curve analysis, except that it provides an explicit measure of statistical significance.) The only statistically significant (P<0.05) shift in American mean flood magnitudes relative to regional values occurred in or around 1918, well before the period of regulation on the American.
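The double mass curve idea can be sketched in a few lines: accumulate the test series and a reference series, pair the running totals, and compare slopes over different parts of the record. This illustrates the double mass curve only, not the bivariate test, and the data and function names are hypothetical.

```python
from itertools import accumulate


def double_mass_curve(test_series, reference_series):
    """Return (cumulative reference, cumulative test) pairs."""
    if len(test_series) != len(reference_series):
        raise ValueError("series must have the same length")
    return list(zip(accumulate(reference_series), accumulate(test_series)))


def slope_between(points, start, end):
    """Slope of the double mass curve between two point indices."""
    (x0, y0), (x1, y1) = points[start], points[end]
    return (y1 - y0) / (x1 - x0)


# Hypothetical annual flood volumes; the test series is a constant
# multiple of the reference, so the curve should be a straight line.
reference = [10.0, 12.0, 8.0, 11.0, 9.0, 10.0]
test = [20.0, 24.0, 16.0, 22.0, 18.0, 20.0]

curve = double_mass_curve(test, reference)
early_slope = slope_between(curve, 0, 2)
late_slope = slope_between(curve, 3, 5)
print(f"early slope = {early_slope:.3f}, late slope = {late_slope:.3f}")
```

A persistent change in slope between the early and late parts of the record would suggest that one series has shifted relative to the other; a constant slope, as in Figure 2.5, would not.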

Order-of-magnitude estimates of potential error related to stage measurement also point to other factors responsible for increased flood magnitudes in the more recent period. At capacity, Folsom Reservoir contains approximately 1,000,000 acre-feet of storage and covers approximately 12,000 acres (NRC, 1995). It is assumed that daily changes in storage ($\Delta S_{f,t}$) are obtained directly from stage measurements applied to a rating curve. For a hypothetical stage measurement error of 1.0 foot at maximum stage (storage), or approximately 0.4% relative to the 260-ft maximum depth of pool, the corresponding error in storage is 12,000 acre-feet, or 6,050 cfs-days. In comparing this number to the 1986 peak one- and three-day maxima of 171,000 and 166,000 cfs (daily mean), the resulting relative errors are 3.54% and 1.21%, respectively. For any stage below that design capacity, the corresponding percentages would be lower. These are presumably within the range of gaging error, particularly when gage measurements represent extrapolations of the rating curve well beyond any discharges measured by current meter, as would be the case for an extreme flood.
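The arithmetic in this paragraph can be checked with a short script. The conversion factors (43,560 square feet per acre and 86,400 seconds per day) are standard. Dividing by three times the three-day mean flow is an inferred reading of the text, chosen because it reproduces the stated 1.21%. Variable names are illustrative.

```python
SQ_FT_PER_ACRE = 43_560
SECONDS_PER_DAY = 86_400

surface_area_acres = 12_000      # reservoir surface area at capacity
stage_error_ft = 1.0             # hypothetical stage measurement error
max_pool_depth_ft = 260.0

# A 1-ft stage error over 12,000 acres is 12,000 acre-feet of storage.
storage_error_acre_ft = surface_area_acres * stage_error_ft
storage_error_cfs_days = storage_error_acre_ft * SQ_FT_PER_ACRE / SECONDS_PER_DAY

one_day_mean_cfs = 171_000       # 1986 one-day maximum (daily mean)
three_day_mean_cfs = 166_000     # 1986 three-day maximum (daily mean)

relative_stage_error = stage_error_ft / max_pool_depth_ft
rel_err_one_day = storage_error_cfs_days / one_day_mean_cfs
# Assumption: the three-day volume is 3 days times the three-day mean flow.
rel_err_three_day = storage_error_cfs_days / (3 * three_day_mean_cfs)

print(f"stage error relative to pool depth: {relative_stage_error:.2%}")
print(f"storage error: {storage_error_cfs_days:,.0f} cfs-days")
print(f"one-day relative error: {rel_err_one_day:.2%}")
print(f"three-day relative error: {rel_err_three_day:.2%}")
```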

Figure 2.5

Cumulative peak annual inflows to North Fork Dam (North Fork of the American River) vs. cumulative peak annual inflow to Folsom Dam (1942-1996).

A more detailed analysis of flood record homogeneity was conducted with detailed precipitation and temperature records of duration comparable to the American River flood discharge series. The American River basin and surrounding areas contain a number of National Weather Service and cooperative meteorological stations active since the late 19th century or early 20th century. To construct a series of estimated basin average precipitation suitable for evaluating the homogeneity of American River flood records, daily data were assembled for the stations shown in Table 2.1.

Daily precipitation and temperature data in electronic format were obtained from the Western Regional Climate Center (WRCC) for the period 1949-1997 for Represa, Auburn, Placerville, and Lake Spaulding; and for the period 1931-1997 for Nevada City and Lake Tahoe. Original data for the period 1900 (or earliest available year) to 1930 were obtained on microfiche from the National Climatic Data Center (NCDC) and digitized by Charles Rodgers (consultant to the committee). A system of cross checks allowed reasonable quality control, and the digitized daily precipitation data are judged to be as accurate as the printed sources from which they were taken. Since coverage for some early years was absent at key stations (e.g., Lake Spaulding) and many early records were of poor quality, a continuous set of daily precipitation records judged to be of acceptable quality could be assembled only for the water year 1915 through water year 1997. This is sufficient, however, to support an analysis based on 41 years of pre-regulation (1915-1955) and 42 years of post-regulation (1956-1997) precipitation and flood discharge data.

The bivariate test and several regression models were used to determine the homogeneity of the American River three-day discharge series relative to a series of estimated concurrent maximum three-day basin average precipitation. The latter series was constructed in two steps. First, we computed the weighted average of the daily precipitation amounts from the gages for each day of the seven-day period ending with the end of the discharge event. We then selected the maximum three-day precipitation total for each discharge event. The weights used to construct the basin average (Table 2.1) were derived from elevation-area data from the American River supplied by Robert Collins of the USACE Sacramento District. Figure 2.6 shows the time series of basin average precipitation. Note the apparent increase in large events since 1950, consistent with the observed increase in three-day maximum discharges.
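The two-step construction can be sketched as follows, using the gage weights from Table 2.1. The seven days of precipitation are hypothetical, and every gage is given the same values so that the result is easy to check by hand.

```python
# Gage weights from Table 2.1 (they sum to 1.0).
WEIGHTS = {
    "Represa": 0.040,
    "Auburn": 0.093,
    "Placerville": 0.090,
    "Nevada City": 0.204,
    "Lake Spaulding": 0.261,
    "Tahoe City": 0.312,
}


def basin_average(daily_by_gage):
    """Step 1: weighted basin-average precipitation for each day."""
    n_days = len(next(iter(daily_by_gage.values())))
    return [
        sum(WEIGHTS[gage] * daily_by_gage[gage][day] for gage in WEIGHTS)
        for day in range(n_days)
    ]


def max_three_day_total(daily_basin_avg):
    """Step 2: largest total over any three consecutive days."""
    return max(
        sum(daily_basin_avg[i:i + 3]) for i in range(len(daily_basin_avg) - 2)
    )


# Hypothetical seven-day window ending with the end of a discharge event
# (inches per day); identical at every gage for illustration only.
week = [0.0, 0.5, 2.0, 3.0, 1.5, 0.2, 0.0]
daily_by_gage = {gage: list(week) for gage in WEIGHTS}

basin_daily = basin_average(daily_by_gage)
event_precip = max_three_day_total(basin_daily)
print(f"maximum three-day basin average precipitation: {event_precip:.2f} in")
```

Because the weights sum to one, identical gage records return the same daily values as the basin average, so the maximum three-day total here is simply the largest three-day run in the hypothetical week.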

In applying the bivariate test, the test series was the American River three-day flood volume and the regional series was the three-day basin average precipitation. The test was applied to the logarithms of the respective series, since both series are positively skewed in real space but approximately normal in log space. The test detected no significant shifts in mean, which can be interpreted as evidence that the behavior of American River floods, during the period 1956-1997 in particular, did not depart systematically from the pattern of precipitation in the catchment.

As an additional test of record homogeneity, a family of regression models was estimated to predict three-day American River flood volumes using basin-weighted mean precipitation, temperature, and a variety of additional variables as potential predictors. The procedure used was to specify the best model, defined as the model that explained the greatest percentage of interannual variation in American River flood volumes in a physically plausible manner, and then add to that model an indicator ("dummy") variable having a value of 1 for the period 1956-1997 and 0 otherwise. If the value of the estimated linear coefficient on this indicator, equal to the intercept shift or shift in mean during this period, is statistically significant, it would be interpreted as evidence for a shift in hydrologic regime.

TABLE 2.1 Weather Stations Used to Estimate Basin Average Precipitation

| Gauge | Location | Elevation (ft MSL) | Weight^a |
|---|---|---|---|
| Represa | Near Folsom Dam | 295 | 0.040 |
| Auburn | On North Fork near Auburn damsite | 1,295 | 0.093 |
| Placerville | On South Fork | 1,890 | 0.090 |
| Nevada City | North catchment divide | 2,600 | 0.204 |
| Lake Spaulding | North catchment divide | 5,153 | 0.261 |
| Tahoe City | East of divide by Lake Tahoe | 6,230 | 0.312 |

^a Used to estimate basin average precipitation.

The basic model estimation results can be interpreted to indicate that variation in three-day event precipitation accounts for 75% of the interannual variation in three-day flood volumes. The addition of three-day storm temperature at higher elevations increases explanatory power by an additional 8.3% to 83.3%. Addition of variables for date of occurrence and antecedent precipitation does not significantly improve the model. When the period indicator is added to the model, explanatory power is increased by only 0.3%, and the t statistic for the period indicator in this equation, which has already accounted for the influence of precipitation and temperature, has a P value somewhat above 0.10. This suggests that any residual variation in flood volume magnitudes "explained" by period effects in a linear model already accounting for the influences of event precipitation and temperature, albeit crudely specified, is not statistically significant.
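The indicator-variable check can be sketched with ordinary least squares. The example below is a minimal illustration, not the committee's model: the data are synthetic, the predictors (log precipitation and storm temperature) and their coefficients are assumptions made here, and the function name is hypothetical.

```python
import numpy as np


def fit_with_period_indicator(predictors, response, years, start=1956, end=1997):
    """OLS fit of response on predictors plus a 0/1 period indicator.

    Returns (coefficient on the indicator, its t statistic).
    """
    years = np.asarray(years)
    indicator = ((years >= start) & (years <= end)).astype(float)
    X = np.column_stack(
        [np.ones(len(years)), np.asarray(predictors, dtype=float), indicator]
    )
    y = np.asarray(response, dtype=float)
    beta, *_ = np.linalg.lstsq(X, y, rcond=None)
    resid = y - X @ beta
    dof = len(y) - X.shape[1]                 # residual degrees of freedom
    sigma2 = resid @ resid / dof              # residual variance estimate
    cov = sigma2 * np.linalg.inv(X.T @ X)     # coefficient covariance matrix
    return beta[-1], beta[-1] / np.sqrt(cov[-1, -1])


# Synthetic record for water years 1915-1997 with NO built-in period shift.
rng = np.random.default_rng(0)
years = np.arange(1915, 1998)
log_precip = rng.normal(2.0, 0.4, years.size)
temp = rng.normal(35.0, 4.0, years.size)
log_volume = 1.0 + 1.2 * log_precip + 0.03 * temp + rng.normal(0.0, 0.2, years.size)

shift, t_stat = fit_with_period_indicator(
    np.column_stack([log_precip, temp]), log_volume, years
)
print(f"estimated intercept shift: {shift:.3f}, t statistic: {t_stat:.2f}")
```

A small t statistic on the indicator, after precipitation and temperature have been accounted for, corresponds to the result reported above: no statistically significant residual period effect.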

Historical Activities in the American River Basin

Before considering the historical and paleoflood data for the American River, it is instructive first to review the history of land use practices in the American River basin. Of particular importance are the activities associated with gold mining in the region, which began with the discovery of gold in 1848. Although the impacts of these activities on flood hydrology are not well known, it is important to consider them when evaluating the relevance of historical and paleoflood data.

Initial gold mining activities involved small placer claims along Sierra streams and probably had relatively minor effects. Hydraulic mining began in California in 1853, and by the mid-1860s giant hydraulic mines were in place. These mines had enormous impacts on the streams, particularly with respect to sediment loads. Hydraulic mining peaked in the late 1870s, and ended in 1884 due to federal legislation.

Gilbert (1917) estimated that hydraulic mining in the Feather, Yuba, Bear, and American River basins yielded nearly 1.1 billion cubic meters of debris, primarily mud, sand, and gravel. This enormous sediment load and the absence of any environmental controls led to severe aggradation of the streams draining the mines, with amounts ranging from several to as much as 30 meters (Gilbert, 1917). The first major flood to transport this sediment occurred in water year 1862. Transport continued in subsequent floods. The sediment moved primarily in pulses during winter floods, with amounts gradually decreasing after the curtailment of hydraulic mining. By 1988, American River channels near the mining district were largely free of mining sediments, except terrace sediment, and appeared to be at or near their pre-mined grade (James, 1988). NRC (1995) and James (1997) concluded that the lower American River reaches still have substantial mining sediment remaining and that cyclical patterns of aggradation and degradation occur, but that the net trend appears to be slow channel degradation and increasing channel flow capacity.

Vast areas of Sierra forests were cut in the mid-1800s to support the mining industry (Beesley, 1996). Lumber was needed for fuel and construction of camps, towns, water flumes, mining structures, tunnels, and railroads. In the 1840s, the estimated lumber production in California was about 20 million board feet per year. In less than 30 years, annual lumber production increased to nearly 700 million board feet, primarily to support activities related to gold mining (Mount, 1995). Based on data from various sources, Beesley (1996) concluded that about one-third of the trees (primarily yellow and sugar pine) in the mining area in the Sierra Nevada had been harvested by about 1885. In the early 1900s, logging diminished, enabling the forest ecosystem to substantially recover, although pine was replaced primarily by white fir (Beesley, 1996). As a result of the rapid population growth of California after World War II, timber harvesting rapidly increased, reaching 6 billion board feet per year by 1960.

Grazing of domestic livestock, primarily sheep and cattle, has probably affected a larger proportion of the Sierra Nevada than any other human activity (Menke et al., 1995). Grazing was minimal prior to about 1860, then increased dramatically until the early 1900s. The effects of unmanaged grazing included increases in runoff and sediment yields and localized gully formation (Gilbert, 1917). With the advent of regulation by the U.S. Forest Service in 1905, better management practices were instituted, reducing the overall watershed impacts (Beesley, 1996).

Wildfire can produce extensive changes in streamflow and sediment yield (Florsheim et al., 1991; Meyer et al., 1995; Weise and Martin, 1995). Hydrophobic conditions often develop after a wildfire, as combustion of vegetation and organic matter produces aliphatic hydrocarbons that move as vapor through the soil and substantially reduce infiltration. Hydrophobic soils, decreased vegetation cover, and reduced surface storage following wildfire dramatically increase the potential for extreme flooding and soil erosion. Favorable runoff conditions may remain for several years to decades until burned areas sufficiently recover to pre-burn conditions (Evanstad and Rasely, 1995). Native Americans, who have inhabited California for at least 10,000 years, modified the Sierra Nevada landscape by burning and various agricultural practices (Anderson and Moratto, 1996). In the late 1950s, after more than a half century of active fire suppression, greater emphasis was placed on prescribed burning to reduce the buildup of fuelwood and hence decrease the potential of catastrophic fires (Weise and Martin, 1995). It is not known whether the hydrologic effects of prescribed burns are the same as those of wildfires.

Levees were built in the Sacramento area to aid in draining wetlands for agriculture and for protection from floods on the American and Sacramento Rivers. As noted in Chapter 1, the first levees were built following the flood of 1850. These levees failed in the 1852 flood, and were subsequently rebuilt to higher levels (Woodward and Smith, 1977). Following the disastrous flooding in 1861-1862, substantial efforts were directed towards major levee projects. Unfortunately, as a result of aggradation from mining sediments, the height of flood waters for a given discharge progressively increased. This led to levee failures during moderate floods, requiring additional levee improvements.

Most rivers in the Sierra Nevada have surface water impoundments for multi-use purposes to help support the rapid population growth in California, particularly after World War II. These impoundments can dramatically affect streamflows, reducing flood flows and increasing low flows. As a result of the substantial impact of impoundments on flood flows, it is necessary to correct measured streamflows to establish unregulated conditions, as discussed previously in this chapter.

What are the implications of these various human activities with respect to the use of historical and paleoflood data for flood frequency estimation on the American River? The most obvious implication is that the enormous amount of mining sediment in the American River during the latter part of the 19th century makes it very difficult to accurately estimate historical flood discharges during that period, precisely the period when historical information is available. There is also the possibility that the net effect of human activity has been to increase the flood response of the American River. With the available information it is not possible to quantify this potential effect.

Historical Data

Reliable observations of historical floods on the American River began in 1848 with the discovery of gold at Sutter's Mill. Major floods damaged Sacramento in 1850, 1862, 1867, 1881, 1891, and 1907 (the systematic flood record begins in 1905). Of these, the flood of 1862 clearly had the largest peak discharge, although the maximum stage of the 1867 flood on the lower American River may have been higher as a result of channel aggradation (McGlashan and Briggs, 1939).

The winter of 1861-1862 was extremely wet with few interruptions of the heavy rains from early November 1861 to mid-January 1862. The culminating event was a warm storm in January that had a three-day precipitation of 12.2 inches at Nevada City, the only station in the upper American River basin having records (Weaver, 1962). This was exceeded at this site by only the February 1986 storm (15 inches) and the January 1997 storm (12.7 inches). Flooding was extreme on all rivers from the Klamath south to San Diego (Hoyt and Langbein, 1955; McGlashan and Briggs, 1939). Lynch (1931) concluded that the flood of 1862 was probably the largest in California since the settlement of the Spanish missions in 1769; he had little information for northern California. McGlashan and Briggs (1939) indicated that the floods of 1861-1862 appear to have been the largest in California since at least the early 19th century. The flood is described as covering the entire Sacramento valley with a vast inland sea (Guinn, 1907) except Marysville Buttes (Ellis, 1939). According to Engstrom (1996) the inland sea or lake ranged from 250 to 300 miles long and from 20 to 60 miles wide. Sacramento was submerged and almost ruined by the floods (Guinn, 1907). Bossen (1941) estimated the peak flow on the American River at Fair Oaks to be 265,000 cfs.

The utility of the historical record from about 1848 to 1907 (and perhaps even part of the early systematic gaged record) is questionable because of unknown cumulative effects of land-use changes associated with gold mining. The largest peak flood (1862) in the systematic and historic period occurred during the period of maximum watershed disturbance. Limited precipitation data available for Sacramento and Nevada City during the winter of 1861-1862 suggest that the rainfall and snowmelt contributing to the peak discharge were comparable to those of the record storms in 1986 and 1997. The estimated peak flood discharge in 1862 was only slightly larger than the floods in 1986 and 1997, suggesting that even with the extensive basin disturbance in the last half of the nineteenth century, basin response may not have been much different from today. One possible explanation is that snowpack covering disturbed surfaces may have masked the potential increase in runoff from mining and vegetation removal. It is also possible that the estimated peak discharge of the 1862 event is low. In any case, it is prudent to cautiously incorporate the historical data in the flood frequency analysis.

Paleoflood Data

As this report was being prepared, the U.S. Bureau of Reclamation (USBR) was concluding a comprehensive paleoflood investigation of the American River and nearby basins. The primary objective of the USBR study was to characterize the probabilities of flood magnitudes greater than those contained in the historical record for use in risk assessment of Folsom Dam. Summarized below are some of the major findings of the paleoflood study provided by Dean Ostenaa (U.S. Bureau of Reclamation, written communication, 1998).

The American River, both upstream and downstream from Folsom Dam, is flanked by a distinct series of stream terraces. These terraces represent abandoned floodplains whose surface morphology and underlying soils accurately record the time since the last major flood. The main objective of the USBR study was to identify and assign ages to terrace surfaces adjacent to the river that serve as limits or paleohydrologic bounds for the stage, and therefore discharge, of past large floods over particular time intervals.

Paleohydrologic records were developed at 12 sites along the American, Cosumnes, Mokelumne, and Stanislaus Rivers. Despite the extensive mining activity locally along these rivers, the geologic record of floods remains intact and hydraulic conditions are definable in localized reaches conducive to paleoflood reconstructions. Chronology for paleohydrologic bounds was established by 60 radiocarbon ages, 21 archaeological sites, published soil surveys, and 39 soil/stratigraphic sections. Paleohydrologic discharge estimates were established by a variety of hydraulic modeling techniques. For some sites, discharge estimates were obtained by comparison to measured and estimated discharges at nearby gaging stations. For other sites, detailed topographic surveys provided the basis for two-dimensional flow modeling of study reaches up to 12 miles in length. Paleoflood sites were located in bedrock-controlled reaches; channel geometry for the reach near Fair Oaks, which has changed substantially in the 20th century, was reconstructed from topographic surveys made in 1907.

USBR study results indicate that the flood experience in the American River over the last 50 years is not anomalous. Floods of a magnitude similar to the January 1997 flood have occurred during the past few hundred to several thousand years. Geomorphic and stratigraphic evidence also indicates that there have been floods somewhat larger than the January 1997 flood, but there is no evidence of floods with peak discharges substantially larger than that of January 1997. Peak stage indicators consisting of fine-grained flood sediments, which included mining debris, were used to estimate the peak stage of the largest flood, probably the flood of 1862. The estimated stage was slightly higher than the 1997 peak stage. The peak estimated discharge at Fair Oaks was 260,000 cfs, which is close to the estimate of Bossen (1941). Paleoflood data for the lower American River indicate that a peak discharge of about 300,000 cfs to 400,000 cfs has not been exceeded in the past 1,500 to 3,500 years. These results are consistent with paleoflood data at sites upstream of Folsom Dam and at sites on other rivers in the region.

The quality of the USBR data and analysis is excellent. The committee finds no reasons to disagree with the paleoflood information that the USBR has assembled. As discussed previously, the committee has serious doubts about the assumption that flood magnitudes have been completely independent and identically distributed in time during the period represented by the paleoflood information. Although paleoflood chronologies have not been well documented in the Sierra Nevada (the USBR study is the first systematic attempt to document paleofloods in the region), other paleoclimatic studies have indicated systematic variations in climate there that are consistent with regional and global patterns. For example, paleoecological data (Woolfender, 1995) indicate that the Sierras experienced persistent above-average temperatures during the Medieval Warm Period (approximately A.D. 950-1350) and persistent below-average temperatures during the Little Ice Age (about A.D. 1350-1850). During the latter period, the Sierras experienced multiple advances of alpine glaciers and a decrease in the number of fire events (Birman, 1964; Burke and Birkland, 1983; Curry, 1969; Gillespie, 1982; Scuderi, 1984, 1987; Swetnam, 1993). Given that extreme floods on the American River occur in winter storms that mainly produce rain rather than snow, it is possible that the frequency of extreme floods would have been lower during the Little Ice Age.

The key issue regarding the usefulness of any data on past floods to a particular planning or design problem is the information the data provide on the potential for flooding during the planning horizon. If floods can be assumed to be independent and identically distributed in time, then all past information is equally relevant to estimating the likelihood of future floods. If this assumption cannot be made, the relative usefulness of particular data on past floods depends on the actual distribution of floods in time, the age of the data, the length of the planning horizon, and the exceedance probabilities of interest. If we had a correct mathematical model of the variation of floods in time (i.e., an alternative to the independent and identically distributed model), we could estimate the parameters of that model to appropriately weight data from past floods. Unfortunately, we only have a very general understanding of how floods vary in time, and must rely heavily on judgment. Where paleoflood information is inconsistent with modern flood data (i.e., a systematic flood record), the judgment might be not to use the paleoflood data in the flood frequency analysis. As we will see in Chapter 3, the American River provides such a case.

Even if American River floods are assumed to be independent and identically distributed, the nature of the USBR paleoflood data somewhat limits its utility for flood planning and management. In particular, these data consist of levels (and hence flows) that have not been exceeded in the last 1,500 to 3,500 years. There is little direct information about the magnitude and frequency of the smaller floods that are of most interest to flood management in Sacramento: floods that occur every 100 to 200 years. While it is true that the use of non-exceedance data in a flood frequency analysis can improve the estimation of the exceedance probabilities of smaller flows, the value of the data critically depends on whether the assumed frequency distribution is correct for flows up to the non-exceedance flow. As we shall see in Chapter 3, although our "best" log-Pearson type III model of the American River 3-day flows provides a good fit to the systematic and historic data, it does not appear to provide an adequate model for significantly larger flows.
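A short calculation shows why a non-exceedance bound says little directly about 100- to 200-year floods. Under the independent and identically distributed assumption, the probability that a threshold with annual exceedance probability p goes unexceeded for n years is (1 - p)^n. The sketch below simply tabulates this expression; the particular values of p are chosen here for illustration and are not the committee's estimates.

```python
def prob_not_exceeded(annual_exceedance_prob, years):
    """P(threshold is never exceeded in `years` years), assuming i.i.d. annual floods."""
    return (1.0 - annual_exceedance_prob) ** years


# Illustrative annual exceedance probabilities and the two record lengths
# quoted for the paleohydrologic bound (1,500 and 3,500 years).
for p in (0.01, 0.005, 0.001, 0.0002):
    for n in (1500, 3500):
        print(f"p = {p:<7g} n = {n}: {prob_not_exceeded(p, n):.3g}")
```

For p near 0.01 or 0.005 the probability of no exceedance over such long periods is essentially zero, so a bound that has held for 1,500 to 3,500 years must correspond to a much rarer flow. Its influence on estimates for the 100- to 200-year range therefore comes only through the assumed shape of the frequency distribution.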

Clearly there are potential problems associated with the use of the USBR paleoflood information to estimate exceedance probabilities and flood quantiles for the American River. Consequently, the committee decided not to use this information in estimating its recommended flood frequency relationship for the American River.

Envelope Curves

Meyer (1994) developed an envelope curve for peak flood discharges in California based on the highest recorded peak discharges from 1,296 gaging stations (Figure 2.7). For drainage areas greater than 1 square mile, the envelope curve is defined by

where A is the drainage area in square miles and Q is the envelope discharge in cfs. For the American River at Fair Oaks the value of Q is 267,000 cfs.

For several reasons, Meyer's envelope curve is of limited usefulness in estimating the flood frequency distribution for the American River. One potential problem is that the curve does not include data from floods that occurred during periods of regulated flow. Note that most of the larger Sierra Nevada streams have been regulated during the period since 1950, when floods have been most severe on the American River and neighboring rivers.

Figure 2.7 Selected peak discharges and regional envelope curve. Source: Meyer, 1994.

Other problems result from spatial correlation and heterogeneity. Floods on large rivers in California are highly correlated in space, making it difficult to estimate probabilities of exceeding envelope discharges. For example, the annual flood discharges of the seven Sierra Nevada rivers used in Chapter 3 to compute a regional skew (Table 3.2) have an average pairwise cross correlation of 0.87. Furthermore, California streams and rivers are highly heterogeneous with respect to flood magnitudes. Basins with the same drainage area are likely to vary in the magnitude of the floods they produce. For example, four floods on the American River (Table 2.2) have peak discharges within about 10% of the envelope discharge of 267,000 cfs; of these, the peak discharge of the 1997 flood was estimated to be about 10% larger. Given the precision of flood peak estimates, these four observations essentially lie on Meyer's envelope curve, indicating that the curve does not provide an upper bound for American River floods. This combination of strong heterogeneity and spatial correlation makes it difficult to estimate probabilities of exceeding envelope discharges.
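An average pairwise cross correlation of the kind quoted above can be computed as the mean of the off-diagonal entries of the correlation matrix. The sketch below uses synthetic annual flood series for seven hypothetical rivers that share a common regional signal; it does not reproduce the Table 3.2 data or the 0.87 value.

```python
import numpy as np


def mean_pairwise_correlation(series):
    """Average of the off-diagonal correlations; `series` has one row per river."""
    corr = np.corrcoef(np.asarray(series, dtype=float))
    upper = np.triu_indices_from(corr, k=1)   # each pair counted once
    return corr[upper].mean()


# Synthetic log flood series: a shared regional signal plus river-specific noise.
rng = np.random.default_rng(1)
regional = rng.normal(0.0, 1.0, 60)
rivers = [regional + rng.normal(0.0, 0.4, 60) for _ in range(7)]

print(f"average pairwise correlation: {mean_pairwise_correlation(rivers):.2f}")
```

When the shared signal dominates, the rivers behave almost as a single sample, which is why many gaging stations in a region provide far less independent information about envelope exceedance than their number suggests.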

TABLE 2.2 Maximum Peak Discharges on the American River (Unregulated Conditions at Fair Oaks)

| Year | Discharge (cfs) | Source |
|---|---|---|
| 1862 | 265,000 | Bossen, 1941 |
| 1963 | 240,000 | 1987 Folsom Control Manual |
| 1964 | 260,000 | 1987 Folsom Water Control Manual |
| 1997 | 295,000^a | Roos, 1999^a |

^a Estimated at Folsom Dam. Source: Maury Roos, memorandum to Kenneth Potter dated February 16, 1999.
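The comparison between the Table 2.2 peaks and the envelope discharge at Fair Oaks can be checked with simple ratios. The values below are taken from the table and the text; only the variable names are introduced here.

```python
ENVELOPE_CFS = 267_000  # envelope discharge at Fair Oaks, from the text

# Peak discharges from Table 2.2 (cfs, unregulated conditions at Fair Oaks).
peaks = {1862: 265_000, 1963: 240_000, 1964: 260_000, 1997: 295_000}

ratios = {year: q / ENVELOPE_CFS for year, q in peaks.items()}
for year, ratio in ratios.items():
    print(f"{year}: {ratio:.3f} of the envelope discharge ({(ratio - 1) * 100:+.1f}%)")
```

All four ratios fall within roughly 10% of 1.0, with the 1997 estimate on the high side, which is the basis for the statement that these floods essentially lie on the envelope curve.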

Probable Maximum Flood

In October 1996, the U.S. Bureau of Reclamation, in consultation with the USACE Sacramento District, used HMR 58 to estimate a mean probable maximum storm amount for the American River basin of 29.62 inches (Pick, 1996; NWS, in press). Using loss rates based on saturated soil for unfrozen ground and snow cover for frozen ground, the USBR calculated one- and three-day probable maximum flood discharges of 575,000 and 401,000 cfs, respectively, for regulated conditions upstream. Due to the combined volume of upstream storage and likely extent of occupied storage at flood time, the equivalent unregulated volumes were expected to exceed regulated values by only a few percentage points. In 1997, following the January 1 flood, USACE Sacramento District re-estimated the probable maximum flood for the basin by applying loss rates equivalent to those observed for this large event (0.7 inches loss of 11.8 inches total) to the probable maximum storm derived in 1996. The resulting three-day runoff was 29.07 inches and the maximum three-day average flow was 485,000 cfs.
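The relationship between a runoff depth and an average flow can be sketched as a unit conversion. The drainage area is not stated in this section, so the value used below (1,875 square miles) is an assumption made purely for illustration, as are the function and constant names.

```python
SQFT_PER_SQMI = 5280.0 ** 2
SECONDS_PER_DAY = 86_400.0


def mean_flow_cfs(runoff_inches, area_sq_mi, days):
    """Average flow (cfs) that delivers `runoff_inches` over `area_sq_mi` in `days`."""
    volume_cuft = (runoff_inches / 12.0) * area_sq_mi * SQFT_PER_SQMI
    return volume_cuft / (days * SECONDS_PER_DAY)


# Loss fraction observed in the January 1997 event (0.7 of 11.8 inches), from the text.
loss_fraction = 0.7 / 11.8

# ASSUMPTION: drainage area in square miles; not given in this section.
ASSUMED_AREA_SQ_MI = 1875.0

flow = mean_flow_cfs(29.07, ASSUMED_AREA_SQ_MI, days=3)
print(f"loss fraction: {loss_fraction:.1%}")
print(f"three-day average flow: {flow:,.0f} cfs")
```

With this assumed area, the three-day runoff of 29.07 inches converts to an average flow close to the 485,000 cfs reported above; the exact figure depends on the drainage area actually used in the USACE analysis.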

PMF estimates for the American River provide some information about the upper tail of the flood distribution. Although in theory the PMF is the maximum flood that can be expected at a site, the PMF concept is largely empirical, and hence a PMF estimate should be thought of as a very large flood discharge that is highly unlikely to be exceeded. While the committee is unable to specify the distribution of the likely values of the exceedance probability of a PMF for the American River, empirical data suggest that the exceedance probability should probably be smaller than 1 × 10⁻⁴ and almost surely smaller than 1 × 10⁻³. In the case of the American River, the committee decided to use the two PMF estimates as likely upper bounds on the flood quantile associated with a probability of 1 × 10⁻³.

Summary

A variety of data are available for use in flood frequency analysis on the American River. Based on the committee's consideration of these data, it has concluded the following:

- The peak discharge of the 1862 flood (estimated to be 265,000 cfs) is likely the largest on the American River since 1848 (and perhaps since the beginning of the 19th century). This historical information should be used in American River frequency analysis, although there are questions about its accuracy and about its relevance given the potential hydrologic impacts of hydraulic gold mining.

- Although the quality of the paleoflood information developed by the U.S. Bureau of Reclamation is excellent, it has two problems. First, explicit use of this information in flood frequency analysis requires either the assumption that floods are independent and identically distributed in time or the use of a particular alternative model in which they are not. Existing paleoclimatic data call the assumption into question, but are not yet of sufficient quality to allow development of such models. Second, the USBR paleoflood information does not include any information about paleofloods of the magnitudes of greatest interest: discharges with exceedance probabilities from 0.5 up to and beyond 0.002. For these reasons, the use of the USBR paleoflood information was approached with caution.

- Meyer's envelope curve of maximum flood discharges is not especially useful to American River frequency analysis.

- The two most recent PMF estimates for the American River at Folsom Dam represent reasonable upper bounds on the three-day flood quantile associated with a probability of at most 1 × 10⁻³.