4

Climate and Floods: Role of Non-Stationarity

Flood frequency analysis, as traditionally practiced, assumes that annual maximum floods conform to a stationary, independent, identically distributed random process. This assumption is at odds with the recognition that climate naturally varies at all scales, and that climate may additionally be responding to human activities, such as changes over the past century in atmospheric composition or in global land use patterns, which have changed the climate forcing and perhaps the hydroclimatic response on regional scales in recent decades. Porporato and Ridolfi (1998) demonstrate that estimated flood exceedance probability can increase quite rapidly with time even in the presence of rather mild rising trends in the annual maximum flood. As mentioned in Chapter 2, Knox (1993) makes the same point. Thus, it is important to acknowledge that non-stationarities are likely to be present in the records and to discuss potential sources of such trends or non-stationarities.
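The effect of a mild rising trend on exceedance probability can be illustrated with a minimal sketch. It assumes, purely for illustration, that the logarithm of the annual maximum flood is normally distributed with a fixed standard deviation and a mean that rises linearly in time. The function name and all parameter values are hypothetical and are not taken from this report or from the studies cited above.

```python
from statistics import NormalDist


def exceedance_over_time(mu0, sigma, trend_per_year, years, return_period=100.0):
    """Annual probability of exceeding the year-0 design flood in each later year.

    Assumes log(flood) ~ Normal(mu0 + trend_per_year * t, sigma).
    All inputs are hypothetical illustration values.
    """
    # Log of the flood whose exceedance probability is 1/return_period at t = 0.
    design_log_q = NormalDist(mu0, sigma).inv_cdf(1.0 - 1.0 / return_period)
    probs = []
    for t in range(years + 1):
        dist_t = NormalDist(mu0 + trend_per_year * t, sigma)
        probs.append(1.0 - dist_t.cdf(design_log_q))
    return probs


# Hypothetical case: the log-mean rises by 0.5% of one standard deviation per year.
probs = exceedance_over_time(mu0=10.0, sigma=1.0, trend_per_year=0.005, years=50)
print(f"year 0: {probs[0]:.4f}   year 50: {probs[50]:.4f}")
```

Under these assumptions the flood that was the 100-year event at the start of the record is exceeded with steadily increasing annual probability, even though the trend in the mean is small relative to the year-to-year spread.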

There is considerable evidence of regime-like or quasi-periodic climate behavior and of systematic trends in key climate variables over the last century and longer (see NRC, 1998a for one overview). The unambiguous attribution of cause for such non-stationarities in a finite record is difficult, given the rather rich, nonlinear dynamics of the climate system. Even with stationary underlying dynamics (i.e., no change in the governing equations or parameters), finite sample statistics of a nonlinear dynamical system can be non-stationary as the system evolves from one regime to another. The nature of the nonlinear oscillations of the system as well as regime probabilities and its mean state may change as the external forcings (e.g., solar radiation or greenhouse gases) are changed.

The stationarity assumption in flood frequency analysis has persisted because of (a) short historical records that limit a formal analysis of non-stationarities, (b) the lack of a formal framework for analyzing non-stationary flood processes and the associated annual risk, and (c) institutional adherence to engineering practice guidelines. As record lengths have increased, trends in floods and other processes have been observed. The ongoing global climate change debate and identification of interannual and decadal ocean-atmosphere oscillations (e.g., El Niño Southern Oscillation), and their teleconnections to continental hydroclimate, have led to increased awareness of this issue.

Cyclical or monotonic non-stationarities pose a serious challenge to flood frequency and risk analysis and flood control design and practice. If cyclical or regime-like variations arise due to the natural dynamics of the climate system, a relatively short historical record may not be representative of the succeeding design period. Further, by the time one recognizes that the project operation period has been different from the period of record used for design, the climate system may be ready to switch regimes again. Thus, it is unclear whether the full record, the first half or the last half of the record, or some other suitably selected portion is most useful for future decisions without a better understanding and prediction of the climate regimes. This is one issue faced in an analysis of the American River and Sacramento flood protection question. In addition, if a monotonic trend in floods is indicated in a reasonably long record and the possibility of global climate change effects is considered, projections of future flood potential are still unclear. For one, the effects of global climate changes may be more in the variability of the process than in the mean, and may translate into an increased probability of recurrence of certain regimes of climate more than others. No means for believable projection of such changes has yet emerged. Deterministic coupled ocean-atmosphere general circulation models do not yet adequately reproduce observed low frequency climatic patterns or watershed scale precipitation, and hence their utility for answering this question is limited.

The thrust of these comments is that the uncertainty associated with the flood frequency estimates presented in Chapter 3 is likely to be considerably greater than that indicated by the statistical estimates. Some of the non-stationarities of the American River flood records and related hydroclimatic records are documented in this chapter.

General Meteorological Features Of Major Floods

The utility of connecting atmospheric circulation patterns to flood events is now well established. Hirschboeck (1987a,b, 1988) demonstrated that catastrophic floods as well as trends in floods may be best understood in terms of large-scale and regional circulation pattern anomalies. She also proposed mixture estimation methods for flood frequency estimation conditional on the frequency of atmospheric circulation patterns. Time constraints precluded such an analysis for the American River basin. The discussion here is similarly motivated in that an explanation for changes in the flood frequency of the American River is sought in terms of associated changes in the large-scale atmospheric circulation patterns.

In the ensuing discussion, the term "annual" will refer to a winter-centered 12-month period, such as the water year (Oct-Sept) or the period July-June. Similarly, "winter" will in general refer to the entire cool portion of the year, not just December-February as in much meteorological literature.

Central California has a modified Mediterranean climate. Precipitation usually builds to a maximum in winter and subsides to nearly nothing in the summer, so that there are essentially two seasons rather than the traditional four. Consequently, major Sierra Nevada flooding occurs predominantly in the middle of the wet season and rarely in the summer months. Heavy snowmelt years can bring streams to slightly over their banks in late spring, but this type of flooding is not catastrophic. The 10 largest annual maximum floods in the 1905-1997 period on the American River in the Fair Oaks record occurred between late November and early March. The 10 smallest annual maximum floods occurred between March and July or in December, reflecting lower winter precipitation and colder winter temperatures in those years.

The Sierra Nevada floods of winter are produced by strong onshore atmospheric flow patterns containing numerous embedded disturbances. The flow generally has a southwest to northeast orientation, typically tapping deeply into tropical and subtropical moisture. This orientation is nearly perpendicular to the elevation contours east of Sacramento, where the terrain steadily ramps up from sea level to about 7,500 feet at pass level, and near 10,000 feet at the peaks. Vertical velocities caused by the forced ascent of the rapidly moving air are quite large. The associated cooling and moisture condensation proceeds at a high rate. High freezing levels and warm temperatures cause rain at higher elevations, often to near the tops of the mountains. Snowmelt is often a contributing factor, but rain is the main ingredient. Antecedent conditions (degree and depth of low elevation snowpack, soil moisture prior to the first snowfall) can be significant. Large floods begin when such an atmospheric flow regime persists with little deviation for two to three days. Precipitation rates can be so high that in some situations, even a difference of an hour or two in duration can make a critical difference in the size and shape of a flood pulse. Descriptions of one such event (the 1996-1997 New Year's Flood) are given in Redmond and Pulwarty (1997), and of the response in California Flood Emergency Action Team (1997).

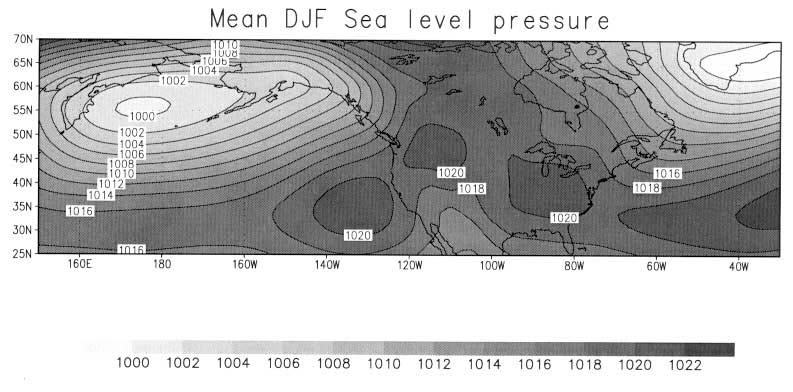

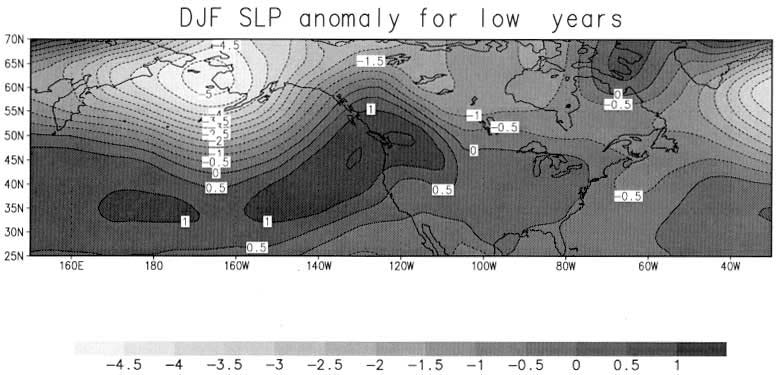

An examination of the large scale atmospheric conditions associated with the December-January-February (DJF) circulation for different types of years is instructive. The mean DJF sea level pressure map (Figure 4.1a) shows a deep low pressure center located in the central North Pacific, and two broad high pressure centers located over the southeastern Pacific and over the Great Basin and northern Rockies. The corresponding mean atmospheric flow will be counterclockwise around the low pressure center and clockwise about the high pressure centers. The presence of the two high pressure centers in the mean DJF pattern reflects a climatological tendency for deflection of storms to the typically wetter, more northerly portions of the western United States.

Winter precipitation is brought to the Sierra Nevada by transient systems, typically 20-25 each year, that are coupled to the jet stream. On occasion, these midlatitude systems entrain moisture from the subtropics, and even the tropics, and deliver even more precipitation than the average system. The strength and position of the mean upper air flow, and of the disturbances which both feed from and feed back into the jet stream (and which are influenced in part by access to heat and moisture at lower latitudes), are linked to the pattern of sea surface temperatures in the tropical and extratropical Pacific Ocean.

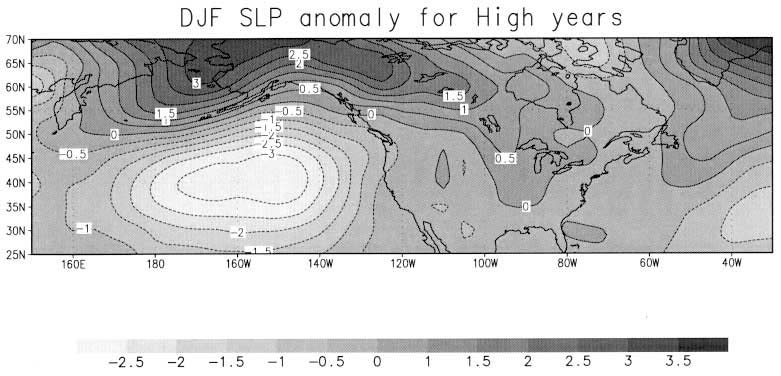

Figure 4.1

DJF sea level pressure (mb) patterns for (a) the 1900-1997 period, (b) the years with the 10 largest annual maximum floods (ending year of winter 1907, 1928, 1951, 1956, 1963, 1965, 1980, 1982, 1986, and 1997), and (c) the years with the 10 smallest annual maximum floods (ending year of winter 1912, 1913, 1931, 1933, 1961, 1966, 1977, 1988, 1990, and 1994). The mean sea level pressure is plotted in (a) and anomalies from this climatology are plotted in (b) and (c).

A composite of the average anomaly (departure from the full record) DJF sea level pressure for the 10 years with the largest annual maximum American River floods is shown in Figure 4.1b. The largest negative anomaly is found considerably to the southeast of the area with climatologically lowest pressure (the "Aleutian Low" in Figure 4.1a). This implies a slight filling and shifting of the Aleutian Low to the southeast. Of note is that pressures near California are only slightly lower, and that most of the change in pressure is well away from the mainland, so that an increased east-west surface pressure gradient exists. The upper air pattern (not shown) is an accentuated version of the surface pattern. An enhanced south to north flow component is noted, well offshore, turning east at higher latitudes, with "landfall" over the Pacific Northwest. During flood episodes within these winters, periods that may only last 5-10 days, this pattern shifts closer to the coast and becomes temporarily greatly accentuated, and delivers abundant moisture to the "favored" site. This is consistent with the observation that winters with California floods do not appear to be otherwise particularly wet (see below).
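The compositing behind Figure 4.1b can be sketched for a single grid point as follows. The sketch takes the anomaly to be the mean over the selected years minus the mean over the full record, as the text describes; the function name and the pressure values are hypothetical and serve only to show the arithmetic. For a gridded field the same calculation would be repeated at every grid point.

```python
from statistics import mean


def composite_anomaly(values_by_year, selected_years):
    """Mean over the selected years minus the mean over the full record."""
    climatology = mean(values_by_year.values())
    composite = mean(values_by_year[year] for year in selected_years)
    return composite - climatology


# Hypothetical DJF sea level pressure (mb) at one grid point, keyed by
# ending year of winter. These numbers are invented for illustration.
slp = {1950: 1012.0, 1951: 1008.0, 1952: 1013.0, 1953: 1011.0, 1954: 1006.0}
flood_years = [1951, 1954]  # hypothetical "large flood" winters

anomaly = composite_anomaly(slp, flood_years)
print(f"composite anomaly: {anomaly:+.1f} mb")
```

A negative value indicates lower than average pressure at that grid point in the selected winters.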

A similar compositing was performed for the 10 years with the smallest annual maximum rain-fed floods, and results are shown in Figure 4.1c. In this case, the anomaly is positive over a broad area nearly coincident with the band of climatological high pressure, extending at its eastern end over the northern west coast and the northern Rockies. This even more strongly entrenched high pressure constitutes a pattern known as "blocking," in which the prevailing upper flow shunts storms far to the north, more toward the Alaska Panhandle. Such patterns are very persistent and hard to dislodge. In these circumstances, very few frontal systems are able to penetrate through to central California.

Consequently, in terms of flood potential and changes in flood frequency in the American River region, one needs to understand changes in the low frequency variability of the associated atmospheric flow patterns. These patterns are in turn related to oceanic temperature and ultimately oceanic circulation patterns, which are also related to atmospheric circulation patterns in an endlessly circular fashion, and hence to low frequency variability in ocean-atmosphere interactions such as the El Niño/Southern Oscillation and the Pacific Decadal Oscillation. These low frequency forcings and global climate change issues are discussed further in a later section.

Large floods need not reflect the character of the entire winter. Notably, the floods in December 1955 and February 1986 occurred in what would have otherwise been dry years, and the 1996-1997 July-June total would have been just slightly above average. After a second smaller storm later in January, the next four months were the driest in records spanning 150 years. The discussion of the average DJF sea level pressure patterns is consequently useful only because it addresses changes in the probabilities of flood causing events.

Observed Climate And Streamflow Variability

Non-Stationarity of American River Floods

Figure 4.2

Trends in the 100-year, three-day annual maximum flow (cfs) at the American River at Fair Oaks gage computed using a log normal distribution with 51-year (heavy solid) and 21-year (dashed) moving windows. The 100-year event (204,674 cfs) estimated using the log normal distribution with the 1905-1997 record is shown by the solid horizontal line.

Since 1950, there have been seven annual maximum floods on the American River that have equaled or exceeded the largest previous flood in the systematic record. The estimated frequency of exceedance of extreme floods has correspondingly increased. The 100-year event for the three-day annual maximum flood for the American River at Fair Oaks, estimated using the log normal distribution from 21- or 51-year moving windows, shows a near monotonic increase over the period of record (Figure 4.2). A moving window analysis of the mean and standard deviation of the three-day annual maximum flood reveals that the trend in the 100-year flood is primarily due to the trend in the standard deviation (toward increasing variance) of the annual floods. For the associated precipitation record, whereas year-to-year variability recently has increased greatly, trends in total precipitation (for seasons or for the wettest episodes) are barely discernible. Similar conclusions are reached for the one-day and five-day annual streamflow maxima.
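A moving-window estimate of the 100-year flood of the kind plotted in Figure 4.2 can be sketched as below. The text does not state how the log normal distribution was fitted or how the windows were positioned, so this sketch assumes a two-parameter log normal fitted by the sample mean and standard deviation of the log flows, and windows centered on each year. The function names and the synthetic flows are hypothetical.

```python
import math
import random
from statistics import NormalDist, mean, stdev


def lognormal_quantile(flows, return_period=100.0):
    """T-year flow from a log normal fit by moments of log(flow) (assumed method)."""
    logs = [math.log(q) for q in flows]
    z = NormalDist().inv_cdf(1.0 - 1.0 / return_period)
    return math.exp(mean(logs) + z * stdev(logs))


def moving_window_quantiles(years, flows, window=21, return_period=100.0):
    """(center year, T-year flow) for every full centered window of records."""
    if window % 2 == 0 or window < 3:
        raise ValueError("window must be an odd number of years, at least 3")
    half = window // 2
    return [
        (years[i], lognormal_quantile(flows[i - half : i + half + 1], return_period))
        for i in range(half, len(flows) - half)
    ]


# Synthetic record (not observed data): constant log-mean, spread growing in time.
rng = random.Random(0)
years = list(range(1905, 1998))
flows = [math.exp(10.0 + (0.4 + 0.006 * i) * rng.gauss(0.0, 1.0))
         for i in range(len(years))]

estimates = moving_window_quantiles(years, flows, window=21)
print(estimates[0], estimates[-1])
```

Because the quantile is exp(mean + z * standard deviation) in log space, a rising standard deviation alone is enough to raise the estimated 100-year flow, which is the behavior the moving window analysis attributes to the Fair Oaks record.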

A perspective for the American River basin flood trends is next developed through a review of climatic trends in nearby basins and in the United States. Even under a scenario of global climate change, given our understanding of climate dynamics, there is no expectation that the trends in floods or precipitation would be geographically similar.

Trends in Systematic Records of Other Nearby Basins

Precipitation records for locations in and near the American River basin show the latter half of the 20th century slightly wetter than the first half. The number of significantly wet years, however, shows a considerable difference between the earlier and later parts of the record. For example, at Placerville (elevation 1,700 ft, 124-year average is 39.92 inches) in the 22 years from 1874 through 1895, 5 years exceeded 55 inches (1 in 4.4 years); then just one year in the 55 years from 1896 through 1950 (1 in 55); and 6 years in the 47 years from 1951 through 1997 (1 in 7.8 years). At higher elevation Bowman Lake (5,390 ft, 98-year average 66.44 inches), just north of the North Fork basin, in the 51 available years from 1898 through 1950, 5 years exceeded 87 inches (1 in 10.2); followed by 11 cases in the remaining 47 years from 1951 through 1997 (1 in 4.3). Similarly, the later years also show more cases of dry winters, so that in general the number of extreme wet or dry years is increasing. This pattern for the 20th century is similar to that just over the mountain crest at Tahoe City (elevation 6,230 feet), a station with an excellent record in the adjoining Truckee/Tahoe basin just east of the American River. For the winter months of October through March, this site has only 1 year that exceeds 40 inches from 1910-1950 (1 in 41), compared with 11 years from 1951-1998 (1 in 4.4). The decadal trends in this series are illustrated in Figure 4.3 using a 10 year running mean of the winter precipitation.
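The "1 in N years" figures quoted above are simply the number of available years in a period divided by the number of years exceeding the threshold. A minimal sketch of that bookkeeping follows; the function name and the small sample of annual totals are hypothetical, while the (years, exceedances) pairs in the final loop are the Placerville counts quoted in the text.

```python
def recurrence_interval(annual_totals, threshold, first_year, last_year):
    """Return (exceedances, available years, average years per exceedance).

    Years absent from annual_totals are skipped, so the year count
    reflects available records only.
    """
    in_period = [v for y, v in annual_totals.items() if first_year <= y <= last_year]
    n_exceed = sum(1 for v in in_period if v > threshold)
    n_years = len(in_period)
    interval = n_years / n_exceed if n_exceed else float("inf")
    return n_exceed, n_years, interval


# Hypothetical annual totals (inches), for illustration only.
totals = {1951: 60.0, 1952: 40.0, 1953: 58.0, 1954: 35.0}
print(recurrence_interval(totals, threshold=55.0, first_year=1951, last_year=1954))

# Arithmetic check of the Placerville counts quoted in the text.
for n_years, n_exceed in [(22, 5), (55, 1), (47, 6)]:
    print(f"{n_exceed} in {n_years} years: 1 in {n_years / n_exceed:.1f}")
```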

The temporal history of multi-day precipitation extremes is perhaps of more direct interest in the flood context. The time series of maximum 10-day precipitation for each water year is shown in Figure 4.4 for Lake Spaulding (elevation 6,160 feet). Though only a slight upward trend exists overall, the number of instances of very wet episodes increases during the latter half of the 20th century. For example, there are two years where the 10-day maximum exceeded 22 inches in the 48 available winters from 1898 through 1950 (1 in 24), and 9 in the 47 years from 1950-1951 through 1996-1997 (1 in 5.2). A similar increase from the first to the second half of the century is seen in three-day amounts exceeding 14 inches. The three-day annual maximum basin precipitation for the American River basin estimated using the precipitation stations at Represa, Auburn, Placerville, Nevada, Spaulding, and Tahoe shows similar trends. Its correlation with the three-day annual maximum flow at the American River at Fair Oaks gage is 0.88. This suggests that the non-stationarity in the American River flood series is of climatic origin and is unlikely to be caused by errors in the allocation of Folsom flood storage to the peak flows.

The California Department of Water Resources uses an eight station index to help track precipitation over a larger area, the Sacramento River basin. These eight stations are in basins starting just north of the North Fork of the American, extending to just west of Shasta Dam. The index consists of a simple arithmetic average of their daily precipitation. Because the generating mechanisms for winter precipitation function on large scales, correlation deteriorates slowly with distance. Water year correlation between the eight station and Placerville precipitation for data in a recent period (1931-1992) is 0.91, 0.92 with Bowman Lake, 0.96 with Lake Spaulding, and 0.86 with Tahoe City. The eight station index begins in 1921, but shows the same increase in high precipitation years beginning in mid-century, as well as an increase in the number of low precipitation years. For example, July-June 8-Station precipitation totals of 70 inches or more occur twice in the 29 winters from 1922-1923 through 1950-1951 (1 per 14.5 years), and then 10 times in the next 47 years through 1997-1998 (1 per 4.7 years). Since the 1930s, there is no overall trend in the eight station mean. Of particular note, the last 20 years have brought the driest and the wettest individual years in the record, and also the driest and wettest four-year running averages.
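The index construction described above, a simple arithmetic average of daily precipitation across stations, can be sketched as follows. The station names and values are hypothetical, and the sketch assumes complete records of equal length; how missing days are treated in the actual index is not stated here.

```python
def station_index(daily_by_station):
    """Day-by-day arithmetic average of precipitation across stations.

    daily_by_station maps a station name to a list of daily totals.
    All lists must have the same length (no missing days assumed).
    """
    series = list(daily_by_station.values())
    n_days = len(series[0])
    if any(len(s) != n_days for s in series):
        raise ValueError("all stations must cover the same days")
    return [sum(s[d] for s in series) / len(series) for d in range(n_days)]


# Three hypothetical stations over four days (inches).
daily = {
    "station_a": [0.0, 1.2, 3.0, 0.3],
    "station_b": [0.0, 0.9, 2.4, 0.0],
    "station_c": [0.3, 1.5, 3.6, 0.0],
}
index = station_index(daily)
print(index, "total:", sum(index))
```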

The conditions that produce floods on the west slopes of the Sierra Nevada also cause heavy runoff on the east slopes, which drain into the elevated playas of the western Great Basin (Pupacko, 1993). In a sense, west-side precipitation "spills over" to the narrower band of steep slopes on the east side. Thus, major Sierra floods usually occur on both sides of the crest at the same time, and evidence from the east-facing basins is relevant to west-facing basins. Major floods on the Truckee River in Reno, for instance, coincide with those on the American River, the adjoining basin to the west of Lake Tahoe (Rigby et al., 1998). Flood series from the Truckee (Garcia, 1997; Hess and Williams, 1997; Rigby et al., 1998), Carson (Thomas and Williams, 1997), and Walker (Thomas and Hess, 1997) Rivers also show general accord.

The increased variability and the increase in the number of extremes in the latter half of the century are consistent with corresponding trends in the American River floods.

Trends in California

Over the State of California as a whole, both measures (the number of significantly wet years and the magnitude of the largest multi-day wet events during each year) appear to have increased considerably during the second half of the century. Goodridge (1998) identified a fixed set of 95 long-term records distributed around the state. His analysis shows that there are no cases where the 95-station annual average exceeds 33 inches in the 40 winters from 1898 through 1937 (0 per 40), and 2 cases in the 53 years from 1898-1950 (1 per 26 years), followed by 8 cases in the 48 years from 1951 through 1998 (1 per 6 years). Because the station set is fixed, this is not a result of wetter sites being used for later years. Although stations in many parts of the state exhibit this behavior, some areas show opposing effects. The biggest and most regionally consistent effects are seen in the central parts of the state at all altitudes.

Goodridge (1997a, 1998) also performed similar analyses of maximum n-day precipitation for each water year, averaged over all stations for each year, for fixed sets of stations with digitized daily data. An 83-station set shows five cases (years) with an average 10-day maximum of at least 8.5 inches in the 53 years from 1898 through 1950 (1 per 10.6 years), and nine such cases in the next 47 years from 1951 through 1997 (1 per 5.2 years).

U.S. Trends

On the national scale (lower 48 state average), there is little evidence for an increase of precipitation over the last century (see, for example, NCDC Climate Variations Bulletin, http://www.ncdc.noaa.gov/pub/data/cvb/cvb1297.pdf). This search has been conducted with multistation aggregates averaged over climate divisions (California has seven). Using these divisions, Karl et al. (1996) show a downward trend in the state over the last century. The station mixture that forms the aggregate changes with time. Conversely, an analysis with a set of 95 fixed stations in California (Goodridge, 1998) showed a slight rise in annual precipitation from 1898 through 1997. A slightly longer record (Goodridge, 1997b) of 76 fixed stations showed no trend from 1883 through 1995.

Karl et al. (1996) and Karl and Knight (1998) used daily records from an area with relatively good records, the continental U.S., to uncover evidence of more extreme events and, in particular, greater numbers of heavy precipitation days in more recent decades. Karl et al. (1995) found that the fraction of the total precipitation contributed by daily amounts of 2 inches or more in the United States had increased during the 20th century. Using an updated procedure on a 1 x 1 degree grid, Karl and Knight (1998) showed that the contribution of 2-inch daily precipitation amounts increased from 9% of the annual U.S. total in 1910 to 11% of the annual total in 1995. Using a set of 182 daily climate records, Karl and Knight (1998) looked at trends in the contribution by decile to the annual total, across the United States and in regional blocks of the country. They found that the upper 10 percent of daily precipitation amounts (for only those days with precipitation, rainless days excluded) contributed about 36% of the annual total in 1910, a fraction which rose to 40% in 1996. This approach tries to overcome the noisiness of precipitation records with large sample sizes. These analyses require long time series of homogeneous daily data, a situation difficult to find in many countries. For the United States as a whole, the study by Karl and Knight further shows little evidence of a stepped increase in the fractional contribution by heavier events at any point during the 20th century.
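The upper-tail contribution statistic described above can be sketched for a single year of daily data. The exact percentile definition used by Karl and Knight is not given here, so the sketch assumes a simple rank-based cut: rainless days are dropped, the wet days are ranked, and the share of the total contributed by the wettest 10 percent of them is returned. The function name and the sample values are hypothetical.

```python
def upper_tail_fraction(daily_precip, tail=0.10):
    """Share of total precipitation from the wettest `tail` fraction of wet days."""
    wet = sorted((p for p in daily_precip if p > 0), reverse=True)
    if not wet:
        return 0.0
    # Rank-based cut (an assumption); always keep at least one day.
    n_top = max(1, round(tail * len(wet)))
    return sum(wet[:n_top]) / sum(wet)


# Hypothetical record: ten wet days, one of them much heavier than the rest.
sample = [0.0, 0.0, 1.0, 1.0, 1.0, 1.0, 1.0, 1.0, 1.0, 1.0, 1.0, 11.0]
print(f"upper 10% share: {upper_tail_fraction(sample):.2f}")
```

Computing this share year by year and examining its trend is one way to look for a shift toward heavier events without relying on the annual total itself.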

A finer breakdown shows the growth in the upper 5th percentile to be even greater. A regional block of California and Nevada stations shows that the frequency of precipitation in this upper 5% increased more than in any other region of the country. The behavior noted by Karl is consistent with the expectation of more rain per rainy day, as climate models indicate for a warmer globe (see below).

Relation of American River to Trends in Hemispheric Circulation

A space-time-frequency domain analysis of hemispheric pressure and temperature data was performed by Mann and Park (1996). Gridded (5 degree by 5 degree) monthly records of Northern Hemisphere sea level pressure (SLP) (Trenberth and Paulino, 1980) and surface temperature (Jones and Briffa, 1992; Jones, 1994) for the period 1899-1996 were used to identify and reconstruct space and time patterns of quasi-oscillatory, large-scale climate patterns at quasi-biennial (approximately 2.2 years), ENSO (approximately 3-6 years), decadal (approximately 10 years), interdecadal (approximately 16 years) and secular (greater than 20 years) frequency bands using a 40 year moving window multi-taper method/singular value decomposition (MTM-SVD). These patterns are identified as space-time oscillations in SLP and surface temperature in these frequency bands. The frequency bands indicated were identified through a Monte Carlo statistical significance test for the fractional variance explained across all the series analyzed. The simultaneous analysis of these data sets helps one identify dynamically consistent, space-and-time coherent patterns of low frequency climate evolution. The hemispheric space-time oscillations identified can be projected as a time series to any of the 5x5 degree grid points for each frequency band.

The projections of the five quasi-oscillatory SLP and surface temperature space-time patterns for the frequency bands indicated above were evaluated at the grid points in the vicinity of the American River stream gage at Fair Oaks. The spatial averages of the projections for the four closest grid points are shown in Figure 4.5a. The low frequency SLP and surface temperature projections are obtained from the MTM-SVD analysis by summing over the reconstructions for the secular, interdecadal, decadal, ENSO, and quasi-biennial bands at the closest grid point. Note the secular trend for a shift to a lower SLP and warmer temperature at the American River region since about 1940. The spectral coherence between the American River annual maximum flood and the projected low frequency SLP and temperature is significant for the frequency bands where there is spectral power in the climate signal (Figure 4.5b). The spectral coherence analysis estimates the correlation between two time series as a function of frequency. The low frequency projections of the SLP and surface temperature series were first converted to annual time series by picking off the value of the projection for the month of the annual maximum flood in a given year. The spectral coherence between these annual climatic time series and the annual maximum flood series was then computed. Here, the correlation is statistically significant for the frequency bands where the climatic series have statistical power. Thus, the non-stationarity in the frequency and timing of the American River floods is likely due to interannual, decadal, and centennial scale variability in the hemispheric climate. The latter may be related to either natural dynamics or human enhanced global climate changes, or both.
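Spectral coherence, the frequency-by-frequency correlation between two series, can be illustrated with a minimal sketch. The estimator used for Figure 4.5b is not described in the text; this sketch substitutes a basic segment-averaged (Welch-style) magnitude-squared coherence without tapering, which is an assumption chosen for brevity. The function names and the synthetic series are hypothetical.

```python
import cmath
import math
import random


def dft(x):
    """Discrete Fourier transform at frequencies k/n for k = 0 .. n//2."""
    n = len(x)
    return [sum(x[t] * cmath.exp(-2j * math.pi * k * t / n) for t in range(n))
            for k in range(n // 2 + 1)]


def coherence(x, y, seg_len):
    """Magnitude-squared coherence as a list of (cycles per sample, value).

    Spectra are averaged over non-overlapping segments; at least two
    segments are needed, since a single segment always gives 1.
    """
    if len(x) != len(y):
        raise ValueError("series must have equal length")
    n_seg = len(x) // seg_len
    if n_seg < 2:
        raise ValueError("need at least two segments")
    n_freq = seg_len // 2 + 1
    sxx, syy, sxy = [0.0] * n_freq, [0.0] * n_freq, [0j] * n_freq
    for s in range(n_seg):
        xs = x[s * seg_len:(s + 1) * seg_len]
        ys = y[s * seg_len:(s + 1) * seg_len]
        mx, my = sum(xs) / seg_len, sum(ys) / seg_len
        fx, fy = dft([v - mx for v in xs]), dft([v - my for v in ys])
        for k in range(n_freq):
            sxx[k] += abs(fx[k]) ** 2
            syy[k] += abs(fy[k]) ** 2
            sxy[k] += fx[k] * fy[k].conjugate()
    out = []
    for k in range(1, n_freq):  # skip k = 0, removed with the segment mean
        denom = sxx[k] * syy[k]
        out.append((k / seg_len, abs(sxy[k]) ** 2 / denom if denom > 0 else 0.0))
    return out


# Synthetic annual series sharing an 8-year cycle plus independent noise.
rng = random.Random(0)
a = [math.sin(2 * math.pi * t / 8) + 0.3 * rng.gauss(0, 1) for t in range(96)]
b = [math.sin(2 * math.pi * t / 8 + 1.0) + 0.3 * rng.gauss(0, 1) for t in range(96)]
for freq, coh in coherence(a, b, seg_len=16):
    print(f"{freq:.4f} cycles/yr  coherence {coh:.2f}")
```

Values near 1 at a given frequency indicate that the two series vary together at that time scale regardless of phase lag; significance thresholds, which depend on the number of segments, are not computed in this sketch.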

Figure 4.5

(a) The secular and low frequency components of SLP and temperature at the grid point nearest the American River from MTM-SVD. Note the secular trend towards warmer temperatures and lower pressure in the region, post-1940, coincident with the increased flood incidence and shift in flood timing. (b) Spectral coherence of the annual maximum flood series with the low frequency projections of SLP and temperature for the flood month. The correlations are significant in the quasi-biennial, ENSO, decadal, and secular bands. Hence, the large-scale, low-frequency climate patterns that affect the regional pressure and temperature fields are likely responsible for the non-stationarity in the annual maximum flood process.

Changes in Seasonality

In addition to the changes in precipitation and floods, changes in the seasonality of these fields have also been noted. Wang and Mayer (1995) present data on changes in the seasonality of flooding for the United States. They constructed regional time series of the percentage of annual floods occurring in each of the four seasons for the period 1912 through 1984. For the western United States, they noted a sharp increase in the percentage of floods occurring in the winter since about 1950. Similar trends in the date of the annual maximum flow of the American River can be seen in Figure 4.6. A secular trend is pronounced since 1950, with significant superimposed decadal variability throughout the record. These changes are synchronous with the apparent non-stationarity in the American River flood record. In the context of Wang and Mayer's analysis, it appears that the apparent non-stationarity in the American River flood record is part of a climatic phenomenon occurring over a large region.

Many investigators have noted similar trends in the timing of West Coast streamflow. In an analysis of streamflow data (corrected for human impacts) from the major streams draining the west side of the Sierra Nevada mountains, Roos (1987) and Aguado et al. (1992) observed a decreasing trend in the percentage of annual runoff occurring in the period of April-July. Studies of trends in streamflow by other investigators, including Wahl (1991), Pupacko (1993), Danard and Murty (1994), and Dettinger and Cayan (1995), provide evidence that the change in seasonal runoff observed in the Sierra Nevada streams is in fact a regional phenomenon.

The physical cause for these trends in streamflow has also been the subject of inquiry. Aguado et al. (1992) focused on the potential role of variations in rainfall and temperature in the timing of Sierra Nevada runoff. They conclude that the more frequent occurrence of high autumn precipitation, particularly in November, has contributed to the increase in November-December-January fractional flows and the decrease in May-June-July fractional flows. They speculate that the trends represent normal climatic fluctuations rather than a signal of anthropogenic warming. Pupacko (1993) considered temporal streamflow patterns for the North Fork of the American River for the period 1939 through 1989. He noted an increase in November through March runoff beginning in 1965. He speculated that since 1965 more precipitation is falling as rain and less water is being stored as winter snowpack. This shift in the seasonality of precipitation is consistent with the findings of Rajagopalan and Lall (1995). They observed that the wet season, defined in terms of either the frequency or magnitude of precipitation, may have moved forward by as much as 30 days at some locations in the Great Basin.

Dettinger and Cayan (1995) found that trends in runoff and snowmelt since the late 1940s in northern and central California are most pronounced in moderate-altitude basins, which are sensitive to changes in mean winter temperatures. Such basins have broad areas in which winter temperatures are near enough to freezing that small increases result initially in the formation of less snow and eventually in early snowmelt. A declining fraction of the annual runoff has come in April-June. They noted that weather stations in central California, including the central Sierra Nevada, have shown trends toward warmer winters since the 1940s. A series of regression analyses indicate that the observed decadal-scale winter temperature trends can explain the runoff-timing trends.

Dettinger and Cayan (1995) argue that earlier snowmelt in California may be caused by a trend toward warmer winters in California and a concurrent, long-term fluctuation in winter atmospheric circulations over the North Pacific Ocean and North America. The fluctuation began to affect California in the 1940s, when the region of strongest low-frequency variation of winter circulations shifted to a part of the central North Pacific Ocean that is strongly linked to California temperatures through the Pacific-North American teleconnection pattern (Leathers et al., 1991). Since the late 1940s, winter wind fields have been displaced progressively southward over the central North Pacific and northward over the west coast of North America. These shifts in atmospheric circulation are associated with concurrent shifts in both West Coast air temperatures and North Pacific sea surface temperatures, and with earlier snowmelt and increased spring moisture fluxes in the American River basin.

The investigations into the changing seasonality of flow, temperature, and precipitation were recently augmented by an analysis of trends in snow water equivalent (SWE) in the Sierra Nevada by different elevation zones and months. Johnson (1998) analyzed comprehensive snow course data collected over the last 60 years and concludes that several basins in the region have experienced lower snow water equivalents and earlier snowmelt below an elevation of 2,400 m. There is higher variability in the SWE trends at higher elevations; however, the general trend is for increased SWE and earlier melt.

Trends in Longer Proxy Records

Given indications of low-frequency climatic variability at interannual, decadal, and century scales, insights from long climate indicators are of interest. Long records of hydroclimatic variables in the western United States based on tree ring reconstructions have shown significant interdecadal variations in recent centuries. Although growth is a complicated function of several climatic elements, tree ring reconstructions can provide estimates of annual precipitation, and perhaps even flood occurrence. Earle (1993) reconstructed annual streamflow using tree ring chronologies for several major rivers in California, including the American River. He found that significant prolonged periods of high and low flows have occurred during the last 440 years and that the first half of the century (1917-1950) was the driest in the reconstructed record for California rivers. Scuderi (1993) described a 2,000-year reconstruction of seasonal (June-January) temperatures in the Sierra Nevada. He found evidence of a strong 125-year cycle in temperatures (with a peak in the cycle in the late-1900s) that may be related to solar activity.

Meko et al. (1998) have recently used a variety of tree ring series from much of the Central Valley, the central and southern Sierra Nevada, southern Oregon, and far western Nevada to reconstruct year-by-year estimates of the Four Rivers Index (Sacramento, Feather, Yuba, and American streamflow). Statistical models that consistently account for 60-65% of the modern variance can be extended over the last 500 to 700 years, and less accurate models (because there are fewer long tree ring series) can be used back to 700 A.D. The method by which the growth curve is removed (young trees have wider rings) can potentially influence the reconstruction of low-frequency (several decades) variability. However, individual year-to-year variations, of greatest interest here, are hardly affected at all.

Of most interest in the present context are individual wet years (wet enough to have contained a major flood episode). Wet years do not necessarily contain a flood, but dry years probably do not. As previously noted, major Sierra Nevada floods seem to occur in years with modest annual runoff, and seldom or never in the years with the highest annual runoff. The latter years are characterized by very heavy snowpack and extended periods with large volumes of snowmelt-driven runoff. This will be discussed further below under ENSO.

The tree ring reconstructed annual Four Rivers Index for 700-1961 A.D. is presented in Figure 4.7a. Using a 51-year moving window and assuming a log normal distribution, the 0.99 quantile was estimated for the index and is shown in Figure 4.7b. Even though the tree ring flow reconstruction process removes some of the low-frequency variability in the original record, there is evidence of protracted low-frequency flow regimes (both high and low) in these reconstructions as seen through the 51-year moving window.
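The moving-window quantile calculation described above can be sketched as follows. This is a minimal illustration, not the procedure actually used to produce Figure 4.7b: the function name, the method-of-moments fit to the log flows, the use of non-centered consecutive windows, and the synthetic input series are all assumptions made for the example.

```python
import math
import random
from statistics import NormalDist, mean, stdev


def moving_lognormal_quantile(flows, window=51, prob=0.99):
    """Estimate the `prob` quantile in each consecutive window of `flows`,
    assuming the values in a window follow a log-normal distribution.

    Hypothetical helper: the log-normal is fitted by the sample mean and
    standard deviation of the log flows (an assumed fitting method).
    """
    if window < 2 or window > len(flows):
        raise ValueError("window must be between 2 and len(flows)")
    z = NormalDist().inv_cdf(prob)          # standard normal quantile
    logs = [math.log(q) for q in flows]     # flows must be positive
    quantiles = []
    for start in range(len(logs) - window + 1):
        chunk = logs[start:start + window]
        mu = mean(chunk)                    # mean of log flows
        sigma = stdev(chunk)                # std. dev. of log flows
        quantiles.append(math.exp(mu + z * sigma))
    return quantiles


# Synthetic stand-in for a reconstructed index (NOT the Four Rivers data).
rng = random.Random(0)
synthetic_index = [math.exp(rng.gauss(2.8, 0.5)) for _ in range(200)]
q99 = moving_lognormal_quantile(synthetic_index)
print(len(q99), round(min(q99), 1), round(max(q99), 1))
```

Even for a synthetic series drawn from a single fixed distribution, the windowed 0.99 quantile wanders from window to window because of sampling variability, which is worth keeping in mind when interpreting apparent regimes in a plot of this kind.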

Sources of Sierra Nevada Climate Variability

There are many possible physical sources of low-frequency variability of central Sierra Nevada climate behavior. These include quasi-periodic modes of ocean-atmosphere circulation, such as ENSO, as well as considerations related to global climate change due to increases in greenhouse gases and land use. Their importance lies in the way frequencies of atmospheric circulation patterns (e.g., see Figure 4.1 presented earlier) responsible for large winter floods are modified over decadal time scales. Some of them remain plausible but primarily speculative; others we can say more about. These will be addressed next.

El Niño / Southern Oscillation (ENSO)

In the interval between the 1982-1983 El Niño and the build-up of the equally large 1997-1998 El Niño, a great deal was learned about California climate and its relation to El Niño.

El Niño is one of the two major phases of a more complicated irregular cycle, typically lasting three to seven years, during which ocean temperatures within a few degrees of the equator between South America and the International Date Line become warmer than average. The other major phase, La Niña, is characterized by cooler than average ocean temperatures in the same region. Strictly speaking, the terms El Niño and La Niña refer to the oceanic temperatures.

Another signal is seen in the overlying atmosphere. When mid-ocean temperatures are high (El Niño), the surface atmospheric pressure near Easter Island and Tahiti is a bit lower than usual and near Indonesia and northern Australia is a bit higher than usual. At monthly time scales, the atmospheric pressure over large areas centered on these two regions varies in a strongly out-of-phase sense, a nearly global phenomenon known since the 1920s and earlier as the Southern Oscillation. Because this atmospheric oscillation is strongly linked to oscillations in the ocean temperatures in the El Niño region, the two terms are often merged together as El Niño/Southern Oscillation or ENSO.

Western U.S. and California Climate Relations to ENSO

Studies have shown significant connections between the state of ENSO and the winter climate of the western states (e.g., Schoner and Nicholson, 1989; Redmond and Koch, 1991; Kahya and Dracup, 1993; Dracup and Kahya, 1994; Cayan et al., forthcoming). Wetter winters are more likely during El Niño winters in the southern West, including southern California, but there are exceptions. Drier winters are more likely in the Pacific Northwest with El Niño. Generally opposite patterns are seen with La Niña. The nodal line dividing the two behaviors extends from about San Francisco to Cheyenne, Wyoming. Thus, there is little relation between central Sierra Nevada winter precipitation (Oct-Mar) and ENSO. There are roughly equal numbers of dry and wet El Niño and La Niña winters.

In terms of the asymmetries in the California climate response to El Niño and La Niña there appear to be two exceptions to the overall picture outlined above. The first is that during larger El Niños, the nodal dividing line is found more to the north than during normal El Niños, and the central Sierra region experiences wetter winters. However, these winters tend to be long, cool, and snowy, with few instances of elevated freezing levels. A second exception is associated with the direction of upper air movement and an associated east-west contrast (at the latitude of the American River basin) in precipitation response. In El Niño years the upper air flow extends from the central Pacific north of Hawaii directly to California. This trajectory can deposit abundant snow on the Sierra Nevada without causing large floods and typically brings heavy precipitation to the low-lying coastal mountains, in the form of rain, thus making flooding of coastal streams more likely.

In this regard, it is particularly notable that none of the major floods in the Sierra Nevada over the last century has occurred during El Niño winters. The largest flood in an El Niño year ranks 10th among all floods since 1933 (see Figure 4.8, scatterplot of SOI versus American River floods).

La Niña winters are characterized by a flow regime with greater alternation of north-south movement and more storm systems affecting the Pacific Northwest and northern California. There is strong asymmetry in the response of the overlying atmosphere to tropical sea surface temperatures, and thus in cloudiness, precipitation, and heating of the atmosphere, and thus in teleconnections to and influences upon the mid-latitude jet stream. The convection and moisture sources in the western tropical Pacific are unusually active, and the interaction with equatorial excursions of the jet stream provides a western Pacific connection to the flow that eventually impinges on California. On occasion, southwesterly flows associated with embedded storm systems will tap deep into tropical low latitudes and their abundant moisture. A persistent flow, such as this, of a few days' duration is sufficient to bring very heavy precipitation, warm temperatures, and floods to the Sierra Nevada. These situations are not common, and as a rule, flood peaks (highest three-day runoff) in La Niña winters tend to be lower than average on streams such as the American River. However, on the American four of the top five three-day flow events in the last 65 years have occurred during modest to strong La Niña winters (refer again to Figure 4.8). As mentioned before, aside from their flood periods, many of the big flood years are relatively lackluster, even dry; so their water year flows are large but not exceptional. La Niña winters appear to have the interesting property that peak flows are likely to be lower than average, but carry an increased risk of producing some of the highest flows in the record. Strong evidence is emerging that heavy West Coast precipitation episodes are related to the so-called Madden-Julian Oscillations ("MJO") seen in the vicinity of Indonesia (Mo, 1999; Mo and Higgins, 1998a, 1998b; Ye and Cho, 1999).

Regimes of El Niño/Southern Oscillation

To the extent there may be a relation between floods and any of the phases of ENSO, such as La Niña, periods of extended predominance of one or the other ENSO phase could affect the frequency of Sierra Nevada floods. The record of ENSO does indeed show such behavior, most notably the period since 1976, when a major shift occurred in the Pacific climate (see Ebbesmeyer et al., 1991; Trenberth and Hurrell, 1994). Since that time El Niño years (negative Southern Oscillation Index) have occurred at a much higher frequency than earlier this century, about nine times in 20 years or one year in 2.2, compared with a historical frequency of about one year in 3.7. La Niña has been notably scarce since this 1976 shift, with one appearance in 1988-1989, a weak episode in 1996-1997, and a significant episode in the winter of 1998-1999. These ENSO non-stationarities are also discussed by Trenberth and Hoar (1996, 1997), Harrison and Larkin (1997), and Rajagopalan et al. (1997). Trenberth and Hurrell (1994) argue that the duration of the 1991-1995 El Niño event and the increase in the frequency of El Niño events are likely to be an indicator of global climate change. Rajagopalan et al. (1997) use non-homogeneous Markov chains to argue that these changes can be explained as natural long-term variations of the ENSO cycle and may not be dissimilar to the nature of ENSO activity in the late 1800s and early 1900s. Lall et al. (1998) used a wavelet analysis of the NIÑO3 sea surface temperature index to show that there have been several systematic variations in the dominant return period of ENSO between 1856 and 1997. They also analyzed a 1,000-year sequence from the Cane-Zebiak ENSO model with stationary forcings and found that El Niño frequencies in this deterministic stationary model varied dramatically at century time scales.

Several papers (Enfield, 1992; Diaz and Pulwarty, 1992; Lough, 1992; Thompson et al., 1992; and Michaelson and Thompson, 1992) in the volume edited by Diaz and Markgraf (1992) examine the history and statistics of ENSO, by a variety of means, which collectively show intermittency, regime-like behavior, and general non-stationarity on scales ranging from decades to centuries. Mann et al. (1998) have attempted to put the recent El Niños in context by reconstructing their occurrence using proxy records extending back over six centuries. The commentary in studies such as this has tended to focus more on El Niño than La Niña, but the data in some cases do portray both phases.
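As a rough check on the El Niño frequencies quoted in this section (about nine El Niño years in 20 since 1976, against a historical rate of about one year in 3.7), the following sketch computes how surprising such a run would be under a deliberately naive model. The assumption that each year is an independent draw at the historical rate is made only for illustration; it is exactly the kind of assumption that the regime-like behavior discussed here calls into question, and it is not the non-homogeneous Markov chain approach of Rajagopalan et al. (1997). The function name is hypothetical.

```python
from math import comb


def prob_at_least(k, n, p):
    """P(X >= k) for X ~ Binomial(n, p): the chance of k or more events
    in n independent years, each with event probability p."""
    return sum(comb(n, j) * p**j * (1 - p) ** (n - j) for j in range(k, n + 1))


historical_rate = 1 / 3.7   # about one El Niño year in 3.7 (from the text)
recent_years = 20           # length of the post-1976 period (from the text)
recent_events = 9           # El Niño years in that period (from the text)

# Recurrence interval implied by the recent counts: 20 / 9, about 2.2 years.
recent_interval = recent_years / recent_events

# Tail probability under the naive independent-years assumption.
p_tail = prob_at_least(recent_events, recent_years, historical_rate)

print(f"recent interval: one year in {recent_interval:.1f}")
print(f"P(at least {recent_events} in {recent_years} years): {p_tail:.3f}")
```

A small tail probability here says only that the recent cluster is unusual for an independent, constant-rate process. It cannot distinguish a forced change from a natural regime of the kind seen in long stationary model runs.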

Pacific Decadal Oscillation

The Pacific Decadal Oscillation as a Potential Modulator of ENSO Effects

Mantua et al. (1997) and Hare et al. (in press) have identified a pattern of variability in the Pacific basin and the overlying atmosphere having characteristic time scales of 20-30 years, which they called the Pacific Decadal Oscillation (PDO). The pattern resembles the interannual-to-ENSO time scale variability pattern, but clearly separates out using singular value decomposition of the time histories of a set of fields of oceanic and atmospheric variables. This pattern expresses itself most clearly in the North Pacific, and thus is also referred to by some as the North Pacific Oscillation. One prominent aspect of the PDO is an out-of-phase relationship between the Pacific Northwest of the U.S. and the northern Gulf of Alaska. Streamflow, temperatures, and salmon abundance are clearly linked to this mode of variability over this century (Hare et al., in press).

Mann and Park (1994; 1996) also identified a 16 to 20 year oscillation related to the North Pacific, which appears to correspond to one identified by Latif and Barnett (1994) using a coupled ocean-atmosphere model. Latif and Barnett's postulated mechanism is that self-sustained oscillations at interdecadal time scales can be set up through the influence of the subtropical ocean gyre on SST anomalies in the North Pacific and a subsequent delayed response of wind stress that spins down the gyre. This mechanism provides a potential for understanding and predicting interdecadal fluctuations in climate and flow in the western United States. Indeed, Lall and Mann (1995) and Mann et al. (1995) show that a projection of this mode on to the Great Basin explains a significant fraction of the interannual variation in the Great Salt Lake and is tied to its major highs and lows. The connection of the interdecadal mode identified by these authors to the more diffuse decadal variability identified as the PDO is not clear.

The primary importance of the PDO and other extratropical interdecadal North Pacific climate patterns is that they may modulate the mean position of the jet stream and also of the tropical interaction with the jet stream. Potential ENSO effects could be enhanced or reduced depending on the phase of the longer period North Pacific oscillation. An understanding of these issues would help (a) by allowing proper adaptation to ENSO events at interannual time scales and (b) by providing an understanding of interdecadal tendencies for increased or decreased flood potential. If the PDO is also shown to be associated with the regimes of frequent and stronger or infrequent and weaker El Niño events, additional understanding of regimes of wet and dry periods will result. Finally, an understanding of internal dynamic modes of the climate system with interdecadal time scales and their impacts on floods is essential if the potential effects of secular global climate change are to be sorted out from the last century of record.

Regimes in ENSO Resulting from PDO Decadal Modulation

One of two ways that the PDO could be relevant to central Sierra floods is through its possible modulation of the relationship between ENSO and the winter climate of the western United States. Gershunov and Barnett (1998) and Gershunov et al. (1998) have indicated that this may very well be the case. During one phase, lasting a few decades, the strength and robustness of the connection appears to be greater than during the opposite phase. That is, whether or how La Niña or El Niño affects the West Coast would, if these findings bear up, depend on the phase of the PDO or of the Mann and Park/Latif and Barnett interdecadal oscillation or, more generally, on the state of North Pacific sea surface temperatures. In a very interesting paper, McCabe and Dettinger (1998) have recently examined the temporal characteristics of the relationships reported by Redmond and Koch (1991). They find that the relationship between the Southern Oscillation Index (SOI) and western winter climate has varied considerably over the past 100 years. Currently the relationship is quite good, but earlier in the 20th century it was much weaker. They also note that the relationship between Pacific equatorial atmospheric pressure behavior (expressed by the SOI) and Pacific equatorial ocean behavior (expressed by sea surface temperatures, SSTs) has similarly varied quite considerably this century. Of relevance to the American River and California, it is likely not a mere coincidence that the SOI-SST relationship was rather weak until about 1950, when it became the much stronger relationship to which we have grown accustomed. McCabe and Dettinger also find a strong modulation of the SOI-SST correlation and of the correlation between the SOI and western climate by the Pacific Decadal Oscillation. This lends further support to the idea that large-scale changes in Pacific Basin climate behavior, and in its relation to Pacific Rim locations, took place about 1950. These findings are quite intriguing. Of particular note, if this is related to "regime" behavior rather than secular global change trends (below), then the possibility exists to return to a prior regime, i.e., the one that existed during the first part of this century.

Other Potential PDO Effects Not Involving ENSO

The second way that the PDO could be relevant to central Sierra floods could be by modulating other connections, not related to ENSO, between the North Pacific and the Sierra Nevada. By contrast with the tropics, the ocean and the atmosphere drive each other more equally in the higher latitudes, on scales of a few weeks, and it is nearly impossible to say anything specific about the implications for the Sierra Nevada. Because ENSO accounts for only a modest fraction of the year-to-year climate variability in the West, there must be other sources of variability, and the conditions in and over the Gulf of Alaska would be a strong candidate for an additional influence. Much more remains to be learned about potential connections there. It seems almost certain that any such connection would involve the deep ocean.

Other Potential Natural Influences on California

The earth's climate system is extremely complicated. In the fullest sense, climatic behavior at any given location and over any significant span of time (e.g., a few decades) is determined by processes involving the earth's biological organisms, its frozen water, volcanic activity, astronomical factors, solar output, radiatively active components in the atmosphere, ocean behavior from top to bottom, as well as a host of positive and negative feedbacks involving clouds, precipitation, adiabatic heating and cooling, flow dynamics, and more, with numerous thresholds at which subcomponent behavior changes radically (e.g., freezing, convection), all interacting in highly nonlinear ways. For an engaging popular discussion of this subject, see, for example, Bak (1996). In such a system it would not be surprising if internal feedbacks operating through a multiplicity of links could contribute to the variability observed at any one point of interest. In fact, the absence of variations resulting from internal dynamical processes would be a major surprise. A consideration of the variety of external forcings interacting with a variety of complicated internal interactions and feedbacks led Bryson (1997) to state unequivocally that "the history of climate is a non-stationary time series."

A frequently cited example of a "remote" and large-scale influence is the thermohaline circulation of the world's oceans. Temperature and salinity both affect the density of sea water, spatial and temporal variations of which produce horizontal and vertical accelerations and motion at all depths. These factors, in concert with fluxes of heat, moisture, and momentum across the water-atmosphere interface, affect the circulation of the atmosphere (e.g., Cayan and Peterson, 1989; Cayan, 1992). Because of the small speeds and large time constants involved, oceanic influences on climate can have time scales from days to about a millennium. Manabe and Stouffer (1996, 1997) have used the results of very long simulations to argue that General Circulation Model (GCM) runs of nearly a thousand years are needed to properly understand the role of natural variations and internal feedbacks affecting ocean circulation, and thus by implication effects on terrestrial climates. Broecker's notion (1987, 1991) of a global linkage among the world's oceans driven by temperature and salinity differences (an aspect of the thermohaline circulation dubbed the "conveyor belt") has attracted wide attention. Though the ocean is regarded as slow and ponderous, gradual processes could bring conditions to near thresholds, where behavior changes suddenly. Ice cores from Greenland (e.g., Mayewski et al. 1993a, 1993b) are showing that major circulation changes in, for example, Gulf Stream position may occur in less than a decade; perhaps in just a few years atmospheric adjustments would be seen over the entire hemisphere.

Global Change Issues

To this point only the natural variations in climate have been addressed. During the last century the human population has increased to the point where its activities can significantly alter the flow of radiation in the atmosphere. Although much of the focus has been on temperature, the realization has been slowly growing that other significant climatic adjustments to the altered radiation regime may be expressed in the hydrologic cycle. A general conclusion is that global evaporation and precipitation will proceed more energetically and that water will cycle through the system faster. This implies that large precipitation rates will be more common. Climate change, whatever the cause, almost never affects all locations and seasons equally, and these details cannot be resolved at the current level of understanding.

Hydrologic modeling studies for California by Lettenmaier and Gan (1990), Lettenmaier and Sheer (1991), and Tsuang and Dracup (1991), all indicate that similar temperature increases (e.g., those predicted by GCMs under global change scenarios) would cause changes in streamflow timing and increased flooding, primarily due to increases in the rain-to-snow precipitation ratios. These conclusions are clearly of concern in light of the changes in seasonality and extreme floods noted earlier. A brief perspective on the global climate change debate is provided here.

All the various natural mechanisms that can potentially cause climate fluctuations on annual to century scales are considered to be capable of producing both positive and negative contributions to climate forcing at one time or another. With respect to human-induced changes in climate forcings, especially the radiative forcings associated with atmospheric composition changes, a widely held view is that such temporal trends are unidirectional and unlikely to change course in a century or two. Partly on the evidence of modeling experiments, it is likewise widely held that a steadily increasing forcing will also lead to a steadily increasing response. Of course, in finite physical systems, no component can increase forever without limit, but it can appear to do so within a limited range of forcing. Unfortunately, modeling experiments pertaining to global climate change do not have a realistic representation of known low-frequency ocean-atmosphere interactions and their treatment of the hydrologic cycle is also relatively primitive. Given the importance of water vapor as a greenhouse gas and also its role in the atmospheric energy balance, a better understanding of the radiative nature of clouds and the movement, organization, and precipitation of atmospheric water vapor is needed.

It is possible that the response will be stepped, as a series of plateaus; or will have different seasonal signs that are influenced by the background state; or sometimes will even be in this direction, sometimes in that, as planetary adjustments in the mass fields and flow of the two major fluids—water and air—take place. In light of the possibility that long term natural variations in climate occur, the global climate change response in this area may occur as a change in the frequency distribution, strength, and recurrence of these regimes. Such changes will of necessity be at longer time scales than the recent record. Thus, an unambiguous detection of global change and its impacts is unlikely unless the changes are altogether dramatic. Definitive answers to these questions are not expected anytime soon.

Essentially, the problem facing flood managers, engineers, and everyday citizens in this situation amounts to making a forecast for the next several decades of what the flood statistics will be and then acting on that forecast. Aside from recently introduced human-induced or human-enhanced factors, the remaining natural mechanisms for climate change have been operating all along, have been "seen" before, and have been either directly measured or otherwise recorded in the proxy evidence. The human factors are new, may have unidirectional effects, and may carry system behavior beyond bounds it has not exceeded for some time.

When humans look at time series, there is a universal tendency to extrapolate any type of trend discerned in the latest points linearly out into the future. In a natural system it is widely realized that eventually this expectation will prove incorrect. With global climate change there is a possibility that, within the useful lifetime of a prediction (say, a century or so, by which time the entire matter of how humans interact with rivers will almost certainly have been completely re-thought), this linear extrapolation might be correct. If this logic is correct, flood frequency curves may edge closer to or enter territory not seen during Sacramento's history. Moreover, there still remains the possibility that natural variations of larger amplitude, not observed during the few centuries of recorded settlement, could also occur (or recur). Of the various mechanisms for climate change facing us in the near term, the human-induced global changes appear to have the greatest likelihood of taking us to this point.

Just as a cautionary note, it is worth pointing out that like carbon dioxide, the optically active gas methane—which contributes about 15% of the enhanced greenhouse effect—was also expected to continue to rise steadily in concentration well into the next century; however, in a major surprise, the concentration began to increase less rapidly in the early 1990s, and by 1996 had essentially leveled off (Dlugokencky et al., 1998). This holds important lessons about how we should regard even our "safe" assumptions.

It is also worth noting that for short time periods—a few decades or centuries—naturally occurring fluctuations would masquerade as "trends," especially with the short records we possess. When we are sensitized to the prospect that our activities may lead to global or regional climatic changes, we are more likely to find such trends, and to interpret them as evidence of the hypothesized effect. The hard question, one very difficult to answer, is "what would the natural system have done otherwise?" We are a long way from answering this. In climate change research, this problem is known as the attribution problem, in contrast to the other two main pieces of the puzzle: the detection problem and the prediction problem.

In addition to greenhouse gas concerns, a body of literature is emerging (see, for example, Chase et al., 1996; Pielke, 1991, 1998, In press, and references therein) showing that land use changes—on local, regional, and global scales—are a significant factor in causing actual and potential climate change—again at local, regional, and global scales. Changes in land use modify flows of energy in substantial ways. The climate system adjusts to these energy flow changes by changing its circulation patterns. The atmospheric adjustments are both local and remote. This area of climate change research is beginning to receive a substantial amount of attention.

Recent climate modeling experiments by these investigators (e.g., Chase et al., 1996) show that the observed changes in land use around the earth during this century (with no change in greenhouse gases) are sufficient by themselves to produce regional circulation and surface temperature responses of the same magnitude as the changes that have been projected to result from greenhouse gas increases. In such regions as western North America, worldwide land use changes in these preliminary experiments lead to temperature increases on a par with those observed in the Sierra Nevada winter over the last several decades.

There is no simple pattern to global land use changes over the last 100 years, and the patterns of land use change are themselves changing. Although it is not clear whether the earth as a whole will warm or cool from such changes, the way the climate response (temperature, precipitation, and snowfall) is distributed in space and by season and altitude could be very complex. Because of the highly nonlinear nature of this system, climate changes that result from land use changes will not necessarily have to exhibit monotonic trends. Model performance will need to improve still further before specific results can be accepted without question. For now, the conclusion that land use effects can rival other sources of variability is sufficient.

Unfortunately, these long-term trends in land use are taking place while greenhouse gases and atmospheric sulfate aerosol emissions are also changing. There is as yet no way to separate out their effects, and it is not clear if additive (linear) approaches are even appropriate (see, for example, Hanson et al., 1997; Hanson et al., 1998). These instructive and sobering studies have increasingly led to a reluctant acceptance of the possibility that our ability to provide useful climate change predictions may stay barely ahead of the actual progress of time, if at all.

Summary

Non-stationarity in the flood process can come from naturally structured, low-frequency climate variability; from human changes to the watershed (e.g., hydraulic mining, subsidence, urbanization, land use, and vegetative cover); or from watershed influences on the large-scale climate system (likely minimal in this case). There is evidence of significant changes in land use and surface attributes of the American River basin over the last two centuries. Trends are evident in basin land use and surface attributes, as well as precipitation and other climatic elements, particularly the incidence of extremes. A context for understanding these trends in terms of climatic mechanisms has been provided above. Global climate change concerns related to greenhouse gas emissions over the last century may also be considered as a plausible factor in changing flood frequencies. The contribution of structured, oscillatory, interannual- to millennial-scale climate variability to changing flood potential in the region is also of considerable interest. The latter may represent the behavior of a nonlinear dynamical system that exhibits unstable oscillations or close returns of a trajectory that appears periodic. Such a system would have stationary dynamics, but a finite period of record may exhibit apparent non-stationarity in terms of the statistics.

Key implications of these observations are:

(1) Given trends, persistence, or memory in the system, the true variability of the flood process could be substantially higher than that estimated from a finite period of record. In other words, the uncertainty in the estimate of the T-year flood is higher than that indicated by a method that considers the n years of record to represent a stationary, independent identically distributed stochastic process. The latter is the standard assumption for flood frequency analysis.

(2)

Record high and low floods are likely to be clustered over extended periods of years if the underlying climate system is slowly oscillating. Thus, the pre- and post-1950 segments of the American River flood record are plausible members of trajectories of the same stationary dynamical system. As the underlying climate state changes slowly, the flood potential, as well as the timing and causative mechanisms of floods, undergoes systematic structural changes. This leads to the question of whether a single probability distribution is an appropriate descriptor of the flood process, whether the frequency curve should be bent at one end, and whether low and high floods should be modeled by the same distribution. Censoring, mixed distribution models, and non-parametric flood frequency estimators are commonly offered as solutions to this problem. However, all these methods assume that the underlying process is independent and identically distributed. Consequently, the resulting flood frequency estimates will be reasonable only if the flood record extends over an adequate number of the underlying cycles and if the planning horizon extends indefinitely into the future.

(3)

Unless the quasi-oscillatory climate behavior is predictable over the next 5 to 30 years, and unless that information is used to modify the underlying flood frequency curve, the independent, identically distributed procedures used may lead to an apparent bias in the flood frequency curve, as seen in the pre- and post-1950 periods for the American River. Unfortunately, neither the understanding of the complexity of the underlying dynamical system nor the technology for such interannual- to century-scale predictions (see, however, Rajagopalan et al. [1998] and Lall et al. [1996]) is currently available. Consequently, the risk of being wrong about the estimate of the flood frequency curve remains higher than anticipated by the standard analyses.

(4)

The use of paleodata spanning centuries or millennia is often offered as a tool for improving flood frequency estimates in conjunction with a probable maximum flood analysis. Such information, if untainted by anthropogenic effects and derived accurately, is potentially very useful for refining the flood frequency estimate for "steady state" future conditions. This may or may not be reasonable, as our understanding of cyclic climate variations at century to millennial time scales is still very much in its infancy. In a Bulletin 17-B setting, where a guideline for steady state flood prediction is of interest, the recent few centuries of reconstructed data are likely to be useful, at least for providing a context for interpreting the flood record of the American River over this century.
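Implication (1) can be illustrated with a small calculation. The sketch below assumes, purely for illustration, that year-to-year persistence follows a stationary first-order autoregressive, AR(1), process with lag-one correlation `rho`; the text above does not specify a persistence model, and the function names are hypothetical. It computes the factor by which the variance of the sample mean of n correlated years exceeds the value sigma^2/n implied by the independence assumption, together with the corresponding number of effectively independent years. A similar inflation would be expected for quantile estimates such as the T-year flood, although the exact factor would differ.

```python
def variance_inflation(n, rho):
    """Ratio Var(sample mean under AR(1)) / (sigma^2 / n) for n years of record."""
    # Exact for a stationary AR(1) process:
    # 1 + 2 * sum_{k=1}^{n-1} (1 - k/n) * rho**k
    return 1.0 + 2.0 * sum((1.0 - k / n) * rho ** k for k in range(1, n))


def effective_years(n, rho):
    """Number of independent years carrying the same information about the mean."""
    return n / variance_inflation(n, rho)


if __name__ == "__main__":
    # Assumed lag-one correlations for illustration; these are not estimates.
    for rho in (0.0, 0.2, 0.5):
        print(rho,
              round(variance_inflation(90, rho), 2),
              round(effective_years(90, rho), 1))
```

With `rho = 0` the factor is exactly 1, which recovers the standard assumption; any positive persistence reduces the effective length of the record.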

In summary, the committee's understanding of climate variability suggests that (a) the uncertainty of the flood frequency estimates is higher than indicated by the usual statistical criteria; (b) climatic regime shifts may, slowly or abruptly, significantly affect the local flood frequency curve for protracted periods; and (c) at this time, given the limited understanding of the low-frequency climate-flood connection, the traditional independent, identically distributed approach to flood frequency estimation is recommended, with the strong caution that the application of such a curve is likely to lead to significant biases or variability over any period of time. A more conservative design criterion, as well as adaptive flood control measures in addition to structural flood control, may therefore be appropriate.

Recognition of the non-stationary nature of climate dynamics should motivate society to replace the existing static flood risk framework with a dynamic one. The existing static flood risk paradigm considers the estimation of a single flood frequency distribution from all available historical, regional, and paleoflood data and the application of the estimated distribution for an indefinite future period. A dynamic risk paradigm would call for the evaluation of potential flood risk over the duration of project operation, and/or a regular flood frequency updating procedure. Adaptive flood control and design strategies would be favored under this paradigm. Given the usual paucity of flood data, the interest in extreme (1% annual risk) floods, the limited ability to forecast climate statistics into future planning periods, and the weak understanding of the connection between slowly-varying climate factors and the at-site or regional flood process, it is beyond our ability at the present time to implement practical dynamic flood risk models. However, research in various areas is needed to address these important issues. New diagnostic, prognostic and decision frameworks need to be developed.
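The difference between the static and dynamic paradigms can be made concrete with the standard expression for the chance of at least one exceedance during a planning period. The sketch below assumes that floods in different years are independent once the annual exceedance probability for each year is given. The 3 percent per year growth in that probability is an invented illustration, not an estimate for the American River, and `horizon_risk` is a hypothetical name.

```python
def horizon_risk(annual_probs):
    """Probability of at least one exceedance over the years in annual_probs."""
    no_exceedance = 1.0
    for p in annual_probs:
        no_exceedance *= 1.0 - p  # assumes independence between years given p
    return 1.0 - no_exceedance


if __name__ == "__main__":
    years = 50
    static = [0.01] * years                             # fixed 1% annual risk
    dynamic = [0.01 * 1.03 ** t for t in range(years)]  # assumed 3%/yr growth in risk
    print(f"static:  {horizon_risk(static):.3f}")
    print(f"dynamic: {horizon_risk(dynamic):.3f}")
```

Under a fixed 1 percent annual risk, the chance of at least one exceedance in 50 years is roughly 0.39. Any upward drift in the annual probability raises the figure for the same planning period, which is why the dynamic paradigm evaluates risk over the duration of project operation rather than from a single fixed curve.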

First, investigations of the nature of flood risk variations in the historical record and their connections to low-frequency climate variability are needed to establish the nature and sensitivity of the at-site or regional flood process to key climate indicators or factors, to provide a context for understanding the apparent changes in flood risk as seen in the American River, and to assess the need to consider climate-induced flood non-stationarity in the decision process. Given the potential for anthropogenic climate change, and ongoing research on its prognostication, it is important to assess the specific ocean-atmosphere state variables that are useful predictors of flood risk, and their spatial signature in the regional flood process. A causal, hypothesis-testing framework may be useful for such analyses. Identification of the sensitivity of flood risk to identifiable, changing (and predictable) climate indicators will inform decisions on whether a dynamic risk framework is worthwhile. Such analyses also have implications for changing regional flood frequency estimation methods. At-site flood records used for regional frequency curve estimation can often have widely varying periods of record. Recognition of quasi-periodic and monotonic trends in climate factors influencing floods argues for stratifying these records by "climate epoch" prior to the estimation of regional frequency curves. An examination of the spatial structure of the regional flood risk relative to the climate state may also be useful. For small basins where flood risk is determined by local thunderstorms, regional information for several decades could be quite useful. For large basins (such as the American River basin) where flood risk is determined by very large regional storms, regional information extracted using traditional methods may be of limited value.
These large regional storms have preferred tracks that can be related to seasonality and to identifiable slowly varying ocean temperature (and associated atmospheric) conditions. There may hence be prospects for relating low-frequency climate variability to regional storm frequency and severity and hence to floods. Conditioning the basin and regional flood process on ocean-atmosphere teleconnections using the century-long records available may be more fruitful in this context than "pooling" available regional flood data. A statistical characterization of the connections between ocean-atmosphere variability at interannual to decadal time scales and the frequency of the annual maximum flood in the region is needed. This relationship, coupled with "beliefs" as to scenarios for future climate derived from an analysis of the historical and paleoflood record and coupled general circulation models of the climate system, may be useful for assessing scenarios for future flood risk. A framework for formally conducting such analyses to better estimate potentially changing flood frequency distributions and their uncertainty is needed.

Second, a framework is needed for decision analysis on flood management that explicitly considers the dynamic risk and its estimation uncertainty. Clearly, such a framework needs to consider both the length of the planning period over which the projected flood risk will be used and the reliability with which the risk can be estimated from available information. Such a framework may be developed considering a "bias-variance" tradeoff or considering related explicit economic consequences. Consider first a monotonic trend in the annual maximum flood. In this case, one may be tempted to use the last 10 years of record to estimate the 100-year flood for the next 10 years (the planning period). One would reduce bias, but there would be tremendous uncertainty in risk estimates because the record is so short. If instead one had employed a 200-year period of record to project the flood risk over the next 10 years, then the bias in flood risk is likely to be larger, while the variance of flood risk estimators should be reduced. The magnitude of the expected shift (i.e., the projected bias) in the estimated 100-year flood over the next 10 years, and its economic consequences, relative to the increased uncertainty in the estimate of this flood, would determine whether the shorter record is used. This answer may well be different if a 50-year planning period were considered. The bias would be larger, as would the uncertainty associated with projecting the monotonic trend into the future. This situation is complicated if quasi-periodic climate variations are considered. For instance, if a 20-year periodic climate variation were considered, using the last 10 years of record to project flood risk for the next 10 would increase both the bias (as one goes from the high to the low phase of the oscillation) and the variance of the estimated flood risk.
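The tradeoff described above can be sketched with a deliberately simplified calculation. The example assumes a linear trend of `slope` units per year in the mean annual maximum flood, with independent year-to-year noise of standard deviation `sigma`, and it estimates the average level over the next `horizon` years by the plain mean of the last `window` years. These assumptions, the parameter values, and the function names are illustrative only; an actual analysis would target a flood quantile such as the 100-year flood rather than the mean.

```python
def window_mse(window, horizon, slope, sigma):
    """Bias, variance and mean squared error of the last-`window`-years mean."""
    # The window is centred (window - 1)/2 years before the present and the
    # planning period (horizon + 1)/2 years after it, so the estimate lags
    # the target by (window + horizon)/2 years of trend.
    bias = -slope * (window + horizon) / 2.0
    variance = sigma ** 2 / window
    return bias, variance, bias ** 2 + variance


def best_window(horizon, slope, sigma, max_window):
    """Record length (in years) with the smallest mean squared error."""
    return min(range(1, max_window + 1),
               key=lambda m: window_mse(m, horizon, slope, sigma)[2])


if __name__ == "__main__":
    # Assumed values: trend of 1 unit/yr, noise s.d. of 30 units, 200 yr of record.
    for horizon in (10, 50):
        m = best_window(horizon, slope=1.0, sigma=30.0, max_window=200)
        print(horizon, m, window_mse(m, horizon, 1.0, 30.0))
```

Under this simplified criterion, a longer window always lowers the variance but raises the bias whenever the trend is nonzero, and with no trend (`slope = 0`) the full record is preferred. A decision framework of the kind called for here would replace mean squared error with the relevant economic consequences.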
Explicitly conditioning the flood risk estimate on climate state has an effect similar to the selection of a subset of years of the record, as discussed above. The use of such a conditional probability statement would attach higher weights to floods in years with a climate state similar to the one projected and lower weights to other floods. This reduces the effective sample size used for flood risk estimation. Thus, the required "conditional risk estimation" framework must consider the length of record, the length of the planning period, the nature of the climatic non-stationarity, and the causal relations between the climatic factors and the floods. The utility of paleoflood and proxy climate data could be evaluated in the same framework.
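The reduction in effective sample size under conditioning can be sketched as follows. The example assumes a Gaussian kernel on a single scalar climate index and uses Kish's approximation, (sum of weights)^2 / (sum of squared weights), for the effective sample size. Neither choice comes from the text above, and the index values, flood values, and function names are invented for illustration.

```python
import math


def kernel_weights(index_by_year, projected_index, bandwidth):
    """Gaussian-kernel weights: larger for years whose index is near the projection."""
    return [math.exp(-0.5 * ((x - projected_index) / bandwidth) ** 2)
            for x in index_by_year]


def effective_sample_size(weights):
    """Kish's approximation; equals len(weights) when all weights are equal."""
    total = sum(weights)
    return total ** 2 / sum(w ** 2 for w in weights)


def weighted_exceedance_prob(floods, weights, threshold):
    """Weighted fraction of years whose annual maximum flood exceeds `threshold`."""
    return sum(w for q, w in zip(floods, weights) if q > threshold) / sum(weights)


if __name__ == "__main__":
    # Invented data: a standardized climate index and annual maximum floods.
    index = [-1.2, -0.8, -0.3, 0.0, 0.4, 0.9, 1.1, 1.5]
    floods = [40.0, 55.0, 60.0, 70.0, 90.0, 120.0, 150.0, 180.0]
    w = kernel_weights(index, projected_index=1.0, bandwidth=0.5)
    print(effective_sample_size(w), weighted_exceedance_prob(floods, w, 100.0))
```

A narrower bandwidth ties the estimate more closely to the projected climate state but leaves fewer effective years, which is the same bias-variance tension discussed for the choice of record length.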