3

Dimensions of Relevance

The previous chapter outlined areas in which SRS may improve its operations and its processes for ensuring the continuing relevance of its data and analyses. This chapter expands the discussion on ensuring relevance by focusing on three key dimensions—appropriateness of concepts and their measurement, ability to link data, and data currency. Specific substantive areas in which the relevance of SRS data could be improved are discussed in chapters 4 and 5.

Relevance of data has many dimensions and each of these should be considered in determining whether a statistical agency is meeting the needs of its constituents (NRC 1997a). For purposes of this study, we focus on three aspects of data collection that influence the relevance and analytic value of SRS data:

Appropriateness of concepts and their measurement. A statistical agency should, working with data users, define the concepts that it will measure in order to meet their information needs. These concepts and how to measure them should be continuously reviewed and revised as issues change, and as analysis reveals weaknesses in current measures and suggests alternate measures that might better capture information of use to constituents. Issues of measurement include the ability to quantify these concepts in a meaningful way, the ability to reliably collect data, and the level of detail that both meets user needs and is cost-effective.

Ability to link data. The ability to link data collected through various instruments within a data collection program and to link that program's data to external data sources increases the breadth and depth of data, and thereby, the ability of analysts to use them to address current issues.

Data currency. Whether data reflect current conditions depends on several factors. The first of these is the periodicity of each survey. Data that are collected biennially, for example, are expected to have a shelf life twice that of annually collected data. The second of these is the timeliness with which collected data are released for public use. Timeliness is measured, in the case of SRS, as the time that elapses between the reference date in each survey and the date at which survey data are released. The third factor is the rate at which trends in the field surveyed actually change, and sometimes more importantly, are perceived to change.

A statistical agency may best influence current and future policy debates by improving its operations across all three of these dimensions of relevance. This chapter discusses each of these in turn.
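As a minimal illustration of the timeliness measure described under data currency above (the time elapsed between a survey's reference date and the date its data are released), the calculation can be sketched as follows. The function name and the dates are hypothetical and chosen only for illustration; they are not actual SRS schedules.

```python
from datetime import date


def release_lag_days(reference_date: date, release_date: date) -> int:
    """Return the days elapsed between a survey's reference date and its release date."""
    if release_date < reference_date:
        raise ValueError("release date precedes reference date")
    return (release_date - reference_date).days


# Hypothetical dates for illustration only; not actual SRS schedules.
example_lag = release_lag_days(date(1997, 1, 1), date(1998, 7, 1))
print(example_lag)
```

A shorter lag indicates more timely release. Periodicity and the pace of change in the field surveyed, the other two factors named above, are not captured by this single number.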

Relevance of Data Concepts

First, the concepts measured by a statistical agency must be appropriate and up-to-date. Statistical agencies are charged with providing data and analyses on subject areas relevant to policymakers, researchers, and others with a stake in the targeted policy issues. Agencies must identify the appropriate set of concepts that capture important trends and issues in that subject area and then establish appropriate measures for the concepts, which they apply through their data collection and acquisition activities. These measures should be established at a level of detail that allows data analysis to provide answers to the questions data users are concerned about.

Statistical agencies must establish means for monitoring and updating the set of concepts they measure and their data collection and acquisition activities as their subject areas change. An important source of information for statistical agencies on the continuing relevance of concepts in their specific subject areas is their community of data users. As the Committee on National Statistics has advised, a statistical agency's mission "should include responsibility for assessing the needs for information of its constituents" in order to ensure that the data and information it provides continue to be relevant over time (NRC 1992).

SRS Data Users

While SRS data are widely used, the recent evolution of the SRS portfolio described in the previous chapter has not been sufficient to allow the division to provide all the data needed by national policymakers as they deliberate questions about resources in a fast-changing world. The science and engineering enterprise has changed substantially in the last two decades. With the exception of the three personnel surveys and some aspects of the Survey of Industrial Research and Development, however, SRS data collection activities have changed little during this time. As a result, policymakers, planners, and researchers have confronted new resource issues for which data do not currently exist.

In planning its program and identifying emerging issues of import, a statistical agency "should work closely with policy analysts in its department, other appropriate agencies in the executive branch, relevant committees and staff of the Congress, and appropriate nongovernmental groups" (NRC 1992). For SRS, these constituents include policymakers in the Executive Office of the President, Congressional committees and staff, the National Science Board, and officials in federal agencies. They include policymakers at the state and local level who play a role in education and technology-based economic development. They include those who seek to influence or inform policy, such as the National Academy of Sciences, professional associations, and think tanks. SRS data users also include academic administrators in the nation's colleges and universities and planners and policymakers in industry who have much at stake in federal policies and programs. Academic researchers seeking to understand scientific processes and explore relevant science and technology policy issues are also important data users. The media are also a constituency as they serve as one means for disseminating data and issue-oriented analysis to the SRS audience. Finally, students and faculty are SRS customers and the more informed their career and mentoring decisions, the more effective the science and engineering enterprise.

Use of SRS Data

SRS has served these constituents reasonably well. Many among the people interviewed for this study believed SRS is already doing an excellent job. One individual remarked that overall, SRS data are the "gold standard" for data related to science and technology, and noted that coverage of issues is very good. Another interviewee said that SRS data are very helpful for U.S. science and technology issues: "Their domestic data are essential; they are the official statistics. Their international publications are also useful. The data in such publications as Science and Engineering Indicators, National Patterns of R&D Resources, and Federal Funds are quite detailed and very useful."

While use of data is not an indicator of satisfaction with those data, the widespread use of SRS data is notable. The National Science Board, for example, relies heavily on SRS data as can be seen in Science and Engineering Indicators. SRS data are widely used by officials in the U.S. Office of Management and Budget (OMB), the White House Office of Science and Technology Policy (OSTP), and the Congressional Research Service (CRS) to analyze research budgets and science and technology policy issues. These officials are not necessarily intense SRS data users, but when they do use SRS data it tends to be for important federal science and technology policy issues. These officials rely mainly on published materials, particularly Science and Engineering Indicators and SRS publications on research and development funding. University administrators are also perennial users of SRS data. Graduate deans widely use data from the Survey of Earned Doctorates and other administrators use SRS data on university R&D and academic facilities. In SRS surveys for which university administrators are respondents, SRS obtains very high response rates—around 95 percent—because the data are, in turn, used by these administrators.

Besides this, SRS data show up in reports prepared by various other organizations. The American Association for the Advancement of Science (AAAS) primarily uses data from the U.S. Office of Management and Budget and from federal agencies in its annual report on federal research and development spending in the President's budget. However, these data are supplemented by SRS data for almost one-third (5 of 17) of its budget overview tables (AAAS 1999). In a recent report on the "new economy" prepared by the Progressive Policy Institute, five of thirty-nine indicators drew on SRS data from either Science and Engineering Indicators or National Patterns of R&D Resources compared to three drawn from the Economic Report of the President and seven drawn from Bureau of Labor Statistics data (Atkinson and Court 1998). Likewise, the Committee for Economic Development recently released a report, America's Basic Research, in which almost 90 percent of the data in the report's tables and figures were SRS data drawn from either SRS publications or the NSB's Science and Engineering Indicators (Committee for Economic Development 1998).

Substantial Room for Improvement

Still, national policymakers face new issues about the allocation of scarce federal resources in support of changing science and engineering. Examples of questions for which additional data may be useful follow:

- Now that the majority of scientists and engineers work outside of academia, how do they use their training in non-academic careers? Are there changes in Ph.D. training that could improve their productivity and job satisfaction in these areas?
- Which fields in which industries are generating professional opportunities for new degree holders?
- How are recent Ph.D.s faring in the job market and are they, as a group, experiencing higher levels of unemployment or underemployment than other professionals are?
- How are graduate students supported financially throughout their studies and how does the packaging of support at various stages in their training affect their success?
- How does innovation occur in a firm, and how do firms translate research findings into commercial products?
- What is the return to government supported R&D, and how does it compare to returns on private investments?
- How has industry reshaped research and development in the private sector?
- Is the United States investing adequately in specific critical technologies?
- What forms have government-university-industry partnerships taken? Which forms have been most successful?
- What are appropriate costs associated with science and engineering facilities at major U.S. research universities?
- What are the locational arrangements among science and engineering endeavors and how are they changing at the regional, national, and international levels?
- How does the globalization of science and engineering affect the R&D enterprise and the labor market for scientists and engineers?

To provide data and analysis that will meet some of the unaddressed information needs of its customers, SRS already plans to tackle two major areas where more information is needed on emerging policy issues. First, to address issues regarding the graduate school experience, SRS is now in the planning stages for a new Longitudinal Survey of Beginning Graduate Students. SRS is initiating this survey to "improve our abilities to assess graduate education by studying the consequences of NSF support and other funding mechanisms on persistence and timeliness attributes of degree attainment, transition from education to work, and subsequent employment experiences and career outcomes." Second, in order to provide more information on the innovation process in the United States, SRS is developing a Survey of Industrial Innovation. Recognizing that "innovation and technological change play a pivotal role in the performance of U.S. firms," SRS is launching this survey "to be in the position to provide policymakers with systematic data on the processes of technological innovation" (NSF 1998a).

SRS data need to be reviewed and updated so that they better address issues such as those listed above. The three personnel surveys in the Scientists and Engineers Statistical Data System (SESTAT) were substantially revised in 1993, and the Survey of Industrial Research and Development (RD-1) significantly increased its sample size and added a substantial number of firms in the service sector to the sampling frame about the same time. Most SRS surveys, however, have changed little in recent years. To ensure the relevance of its information, SRS requires an ongoing process that, through interaction with policymakers, planners, and researchers, continually renews the concepts it seeks to measure and the data collection and acquisition activities that provide those measures. A statistical agency must carry out user needs assessments on a regular basis in order to ensure that the data it provides are currently relevant. A proactive agency, however, will actively engage its constituents in a variety of ways to review and revise its data collection portfolio on an ongoing basis, maintaining important data series, yet also addressing newly emergent data needs.

Dialogue and Renewal

There has been very little systematic study on the ways statistical agencies can best engage their data users to review and update the concepts they measure. We have, however, identified a set of activities that we believe will orient SRS's processes toward continuous renewal of the concepts it seeks to measure and of its data collection and acquisition activities. First, SRS needs to organize an ongoing dialogue with its customers through advisory panels, workshops, and customer surveys. Second, SRS should obtain feedback on its data and products through outreach activities, purposeful dissemination, and enhanced customer service. Third, the analysis of its microdata—by its own staff and by external researchers—provides SRS essential opportunities for understanding its data and gathering information that will yield strategies for improving data. Fourth, SRS should create small, targeted pilot surveys on new topics using statistically valid sampling methods to determine whether they should be incorporated into the SRS portfolio. SRS should utilize the information obtained in these ways to review and revise existing surveys or enhance the data they provide through temporary survey supplements, quick response panels, or tightly-focused qualitative analyses.

Organizing a Dialogue with Data Users and Policymakers

One of the principal means for generating feedback from customers on the issues they face and the way they address them with SRS data is to establish advisory panels (called "special emphasis panels") of users, policy analysts, and data providers for each existing SRS survey. Many statistical agencies have advisory committees for their surveys, though their use varies. For example, the U.S. Census Bureau has advisory committees that focus on specific survey activities (e.g., the 2000 Census, the Survey of Income and Program Participation) as well as on a broad range of programs (e.g., the Advisory Committee of Professional Associations). By contrast, the Energy Information Administration and the Bureau of Justice Statistics each have an advisory committee for the entire agency appointed by the American Statistical Association.

For SRS, these advisory panels would seek to keep staff abreast of relevant policy issues, identify new topics for surveys, recommend survey changes, and suggest new areas for analysis. Advisory panels can also help make tradeoffs between survey questions and identify which data are practical to collect. A number of SRS surveys already have advisory panels in place. For surveys that do not, SRS should establish them. The committee is especially concerned that an advisory panel be established for the Survey of Industrial Research and Development (RD-1) to deliberate emerging issues further and to provide suggestions for revising the survey instrument in light of these issues. Also, the 1989 panel on the SRS personnel data system recommended creation of an advisory panel for what is now the SESTAT system. As SESTAT is revised for the next decade, we recommend that the Special Emphasis Panel of the Doctorate Data Project play this role, in addition to providing advice on the Survey of Earned Doctorates and the Survey of Doctorate Recipients as it does now.

Holding conferences or workshops on important science and engineering resources issues or data collection and usage issues is a second means for interacting with data users. SRS periodically holds conferences and workshops that provide useful opportunities for interchange. Examples include the series of workshops SRS has held with professional societies on issues related to education and the workforce, the 1997 NRC workshop funded by SRS on Industrial Research and Innovation Indicators for Public Policy, and the recent Workshop on Federal Research and Development that brought together agency staff who provide and use data from the Survey of Federal Funds for R&D. These have been useful events and a set of such workshops should be planned on an ongoing basis across all SRS data collection efforts to provide interaction with data providers, data users, and policymakers and to suggest ways to keep data collection focused on data elements that address important policy issues.

SRS customer surveys obtain user judgments about data relevance and quality. In a survey of SRS customers undertaken in 1996 (with an 84 percent response rate), 38 percent of respondents rated the relevance of SRS data on the topics of most interest to them as "excellent." Another 50 percent rated them as "good." In order to mandate improvement in the relevance of SRS data, NSF has required SRS (under the Government Performance and Results Act) to increase the percentage of customers rating SRS data relevance as "excellent" to 45 percent and increase the overall percentage who rate SRS data "good" or "excellent" to 90 percent.
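The arithmetic behind these targets can be laid out in a short sketch. The percentages are those reported above for the 1996 survey and the GPRA goals; the function and variable names are hypothetical and serve only to make the comparison explicit.

```python
def gap_to_target(current_pct: int, target_pct: int) -> int:
    """Percentage points still needed to reach a target (0 if already met)."""
    return max(target_pct - current_pct, 0)


# 1996 customer survey results, as reported in the text.
excellent_1996 = 38
good_1996 = 50
good_or_excellent_1996 = excellent_1996 + good_1996

# GPRA goals, as reported in the text.
excellent_target = 45
good_or_excellent_target = 90

excellent_gap = gap_to_target(excellent_1996, excellent_target)
combined_gap = gap_to_target(good_or_excellent_1996, good_or_excellent_target)
print(excellent_gap, combined_gap)
```

On these figures, most of the required improvement lies in moving respondents from "good" to "excellent" rather than in raising the combined share.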

SRS may take other steps to improve its customer surveys and the accuracy of its results. First, SRS may better specify the universe of SRS data users it uses to draw the sample for its customer surveys. In 1996, the sampling frame for the survey was 1,200 individuals who indicated that their principal professional interest was science and technology policy in a survey conducted by the AAAS. While these individuals are likely or potential data users, they do not cover the entire universe of such users, which includes federal program administrators, educators, students and faculty, academic researchers, and others, and these individuals should be included in the sampling frame. (It should be noted that a change in the sample would make the resultant data non-comparable to those derived from the 1996 customer survey, and NSF's GPRA goals for SRS of 45 percent "excellent" and 90 percent "good" to "excellent" may therefore be of questionable value.) Second, the survey may be enhanced by adding questions about the role data from specific surveys play in the work of those who use them. In this way, customer surveys may provide SRS further insight into exactly which kinds of data its users need. An investigation of how data are used should provide an opportunity for dialogue that will improve the data for the end user.

Feedback through Outreach, Dissemination, and Customer Service

In spite of public relations and information dissemination efforts, according to one person interviewed, "SRS still isn't known well by the communities who should know it." SRS has made a significant effort to establish an accessible web site that allows interactive access to data by a wide audience. SRS also has a loyal following for data from many of its surveys, such as the Survey of Earned Doctorates, which annually generates a long list of data requests from repeat as well as one-time data users. Many of those interviewed for this study, however, felt that many people who could use SRS data are not aware of this resource. SRS could increase its visibility by appearing at a larger number of public events, by more purposefully disseminating its data and reports, and by improving customer service.

Outreach to current and potential data user communities provides another source of interaction that informs SRS's data collection, acquisition, and analysis. SRS has engaged certain groups already. For example, the series of workshops with professional societies on graduate education and labor market issues has provided SRS an opportunity to inform users in these organizations about SRS data. The workshops allow for dialogue about current issues, and establish working relationships that help fill data gaps on such topics as underemployment for recent Ph.D.s. Similarly, SRS recently received input on the Survey of Industrial Research and Development (RD-1) from the American Economic Association Advisory Panel to the U.S. Census Bureau. While in the case of RD-1 input from an even more focused group, such as an advisory committee, is required, these linkages can be extended to other surveys. They can be augmented as well by having an SRS presence, an exhibit booth for example, at the annual meeting or conference of important constituent groups like the American Association for the Advancement of Science (AAAS), the American Economic Association (AEA), and the Council of Graduate Schools (CGS).

The data and report dissemination process provides another opportunity for meaningful interaction with customers. SRS publications appear to be distributed in an ad hoc manner. Those interested in reports contact NSF and are placed on subscription lists. SRS should examine these lists and take action to ensure that they include important constituents in the policy arena, whether or not they have requested certain publications. Some customers do not know that certain publications exist. For example, many graduate deans who regularly receive data on new doctorate degree recipients do not receive data and publications on new enrollment in graduate programs by field or on academic R&D funding that powerfully affects both graduate education and human resources needs. In addition, many SRS data users do not realize that publication of a data brief coincides with the official release of a data set. SRS should find ways to make this more transparent and to apprise its data users when data sets are made public. A short statement attached to a data brief indicating that data from a certain survey are now available to the public would help to accomplish this.

Another practical strategy for improving dissemination involves the SRS web site. A number of people interviewed for this study, including several high-volume web site users, commented that while the SRS web site is among the best hosted by a federal statistical agency, the functionality of the data sets on the web site needs to be more user-friendly.

Opportunities in Analysis to Improve Data

The analysis of its data provides SRS with additional opportunities for interacting with data users and policymakers and gathering information that will yield strategies for improving data. As is well known in the federal statistical community, a statistical agency enhances its understanding of the uses and limits of its own data by using them. Use of the data to support analyses of current issues suggests opportunities for improving existing data or augmenting them through new surveys since analysis helps to identify areas where information is incomplete and new data are needed to tackle an issue. SRS conducts a substantial amount of data analysis. SRS prepares special publications, including the influential Science and Engineering Indicators under the guidance of the National Science Board. SRS also prepares a series of Issues Briefs on current topics in science and engineering resources. Still, many SRS data sets are underutilized, to the detriment of both the user community and the process of understanding and improving SRS's data. One of our interviewees argued, "NSF has neither fully exploited its data nor sufficiently encouraged use of the data by outside analysts."

Comments by a number of other interviewees supported the claim that SRS data are underutilized and many urged that SRS institute a program that would increase use of its data by increasing access to microdata for, or providing funding to, external analysts with expertise in science and technology resources issues. Examples of the kinds of SRS data that are underutilized as perceived by interviewees include the National Survey of College Graduates (NSCG) and the Survey of Doctorate Recipients (SDR). SRS has done little comparative analysis using the available NSCG data on the non-science and engineering respondents to the survey. Also, SRS has done very little, if any, longitudinal analysis of the SDR or other surveys in its personnel system.

Increasing access to microdata is a priority item for SRS. SRS should revise its policies to increase access by researchers to microdata that are essential to analyzing the details of certain issues. Many of SRS's rich databases are not fully utilized because of the limits placed on access to detailed data due to SRS's overly restrictive interpretation of federal privacy laws. Given proper procedures, the security and confidentiality of the data may be maintained even while the number of analysts accessing microdata is increased to include a small number of external analysts.

In addition, SRS should develop a grant program to support external researchers with expertise in science and technology resources issues. We applaud the new SRS program providing young researchers training on the use of its databases. Scholarships were offered to these young researchers so that they could attend a weeklong training session. This is a step toward developing a new cohort of researchers interested in analyzing ever-complex science and engineering resources issues. Beyond this, SRS should also develop a program that provides grants to external researchers in support of analyses that utilize SRS data. SRS has the authority to administer an external grant program for analytical research, but has not issued a general announcement for one since the mid-1980s because of budget constraints. This program provided grants ranging from about $50,000 to $150,000 for external research using SRS data. Under this program, SRS requested proposals on specific topics, which provided an opportunity to obtain assessments on a variety of current issues. Moreover, according to one interviewee, these externally funded studies often anticipated issues that would otherwise have taken two to three additional years to surface. In this sense, these grants not only inform current issues but also provide what some have termed "exploratory indicators." In essence, these studies used SRS data to help identify emerging issues and to define the kinds of data that will be needed to address these issues. We would like to see a new grants program continue this.

SRS should also develop a means for bringing researchers into SRS to work on science resources issues, perhaps for six to twenty-four months. The development and funding of these programs and the resultant familiarization of these analysts with SRS data will strengthen SRS's programs substantively and methodologically. Independent external researchers may explore science and engineering resources issues in new ways or tackle issues that SRS staff may not have the time to explore. This research would broaden the range of insights developed from SRS data. Moreover, independent researchers—as do SRS analysts—would uncover methodological or data quality issues in SRS data sets, thereby helping to improve the data as well. A variety of issues needs to be addressed in developing programs such as these. For example, should a researcher who comes to work at the SRS study issues defined by SRS, independently defined issues, or some mixture of the two? Would analyses result in SRS publications or independent publications? Certainly details such as these will have to be addressed, but the experiences of these researchers, even if few in number, would contribute to SRS's mission by increasing the flow of ideas in and out of the organization. NSF has standards and procedures for bringing visiting personnel into other programs that might be adapted for SRS. Statistical agencies like the Census Bureau also have regular visitor programs that could serve as models.

Dissemination of analyses provides an opportunity for additional feedback from data users and policymakers. It is difficult for a statistical agency to know a priori all of the issues that matter to its customers since important issues are ever changing. Analyzing data on subjects that are known to be important allows for additional opportunity to interact with data users and policymakers to generate additional feedback that may identify new issues for analysis or new data products that serve customer needs.

Using Feedback to Revise Data Collection and Acquisition

SRS should approach each of its data collection efforts as an opportunity to provide important time-series data as well as data on current issues. Many analysts find time series valuable, and SRS should collect data so as to allow time series analysis in each of its substantive areas. Structural changes in the science and engineering enterprise, however, also require changes from time-to-time in the questions posed and response categories offered in data collection efforts. The feedback generated through dialogue, outreach, dissemination, and analysis should be used to address these structural changes by revising—and occasionally overhauling—data collection activities to meet current data needs.

SRS should establish a periodic review process for each survey to ensure that it addresses the current structure of the science and engineering enterprise based on the feedback obtained through the means described above. Staff responsible for each survey should be required to periodically submit a plan to the survey's advisory committee, and to draw on its guidance, for dropping obsolete questions and adding new ones as needed to keep the data collection effort current.

To tackle new dimensions of the science and engineering enterprise that may substantially change data, SRS has to consider the difficult tradeoffs between maintaining time series and changing data to reflect new realities. The Committee on National Statistics has said that a federal statistical agency must "be alert to changes in the economy or in society that may call for changes in concepts or methods used in particular data sets. Often the need for change conflicts with the need for comparability with past data series and this issue may dominate consideration of proposals for change" (NRC 1992).

The assumption should be that it is better to maintain than disrupt a time series, but when reality has changed substantially or it is determined that current approaches to data collection do not capture the sources and uses of science and engineering resources, it is better to start a new time series that provides relevant information. Changes to the personnel surveys in the SESTAT system and to RD-1 earlier in the 1990s, for example, did result in discontinuities in time series. However, the need to better expand and integrate survey instruments, in the case of the three personnel surveys, and the need to improve sample design, in the case of RD-1, compelled a break with the past in these instances. To strike the appropriate balance between maintaining and disrupting time series in any given situation, SRS should draw on the advice of its data users through its survey advisory committees to establish what action would best meet their needs.

When structural changes like these are not the issue, there are ways to address current issues without disrupting time series. First, to address important issues that may not require permanent changes in a survey instrument, SRS should continue to use special survey supplements to obtain needed data. Some SRS surveys already use special supplements. For example, the 1995 Survey of Doctorate Recipients (SDR) included a work history module. Other surveys, such as the Survey of Earned Doctorates (SED), have never used such modules even though they could be employed profitably to obtain data that policymakers desire. Second, SRS may respond even more quickly to important short-term issues by using quick-response panels to answer questions that require a quick turnaround rather than changing an existing survey instrument. SRS had an Industrial Panel on Science and Technology and a Higher Education Panel that it used in the 1970s and 1980s to answer questions quickly when needed and without substantial cost.

SRS often used these panels, for example, to gather time-sensitive information in response to questions posed by the U.S. Congress and OMB. Examples of the issues previously examined through quick-response panels include the impact of the R&D tax credit and foreign support of research conducted on U.S. campuses.

SRS should also consider qualitative techniques in expanding its repertoire of methods for information collection. Quantitative data cannot answer every question, or at least, not in the most cost-effective manner. Just as SRS can use quick-response panels to explore an issue broadly, it could profitably use case studies as a means for exploring particular issues that require in-depth investigation or for which data are not obtainable. Case studies would be appropriate when understanding an issue or phenomenon requires more detail than a survey could cost-effectively provide. SRS could also use focus groups, site visits, and interviews as means for collecting information. SRS already uses focus groups as a mechanism for pretesting new questions and response categories on its surveys. However, these techniques could also be used to collect primary information as part of a quick-response activity or a case study.

Data Linkages

The second aspect of data relevance that federal statistical agencies should focus on is the ability to link their data sets internally and to data collected by other sources. The Committee on National Statistics asserts that an effective statistical agency promotes data linkages in order to enhance the value of data sets for analyzing current policy, program, and research issues (NRC 1992). Science and technology policy analysts and researchers interviewed for this study also expressed a desire for more cross-survey analysis to provide better interpretations of the changing components of the science and engineering enterprise and how they interrelate. They have suggested that integrating data sets within SRS and better coordination of SRS data collection with that of other organizations will facilitate these kinds of analyses.

Integrating SRS Data Sets

SRS's portfolio of data collection activities has grown over the past half century as individual surveys have been established to provide information on specific pieces of the science and engineering enterprise. SRS has only recently begun to manage its surveys as components of a more integrated data system and would increase the depth and validity of its data by pursuing this further. A number of interviewees for this study claimed there are many "walls" in SRS among staff and between surveys, a suggestion borne out by differences among survey instruments and in survey results. SRS should facilitate greater interaction among staff to promote information sharing, to examine how SRS surveys could be better coordinated, to seek practical means for linking data sets, and to surface opportunities for cross-survey analysis.

WebCASPAR and SESTAT, discussed in the previous chapter, provide examples of ways in which SRS has integrated its data and could do so in the future. WebCASPAR draws on data from SRS and the National Center for Education Statistics to provide profiles of academic institutions. SESTAT, which will be discussed further in Chapter 4, combines data from the National Survey of College Graduates, the Survey of Recent College Graduates, and the Survey of Doctorate Recipients to provide an integrated data system on the population of scientists and engineers at the bachelor level and above in the United States.

On the whole, however, SRS surveys have tended to operate relatively independently of each other. SRS could implement changes to make data elements more consistent across surveys. SRS should find ways to link data sets beyond SESTAT and WebCASPAR to facilitate deeper analysis of the rich data sets it has created and maintained.

First, SRS should improve comparability of data derived from its surveys within each of its statistical programs. For example, the Survey of Earned Doctorates and the Survey of Doctorate Recipients both ask Ph.D. recipients questions on such subjects as type of employer and disability status. However, the two surveys ask these questions in different ways and with different response categories. Because these are the two national surveys of Ph.D.s, their content could be better coordinated to link the status of Ph.D.s at degree receipt (the point at which the SED is administered) with the career paths of Ph.D.s, the subject of the SDR.

Second, SRS should continue its investigations of discrepancies in the results it obtains on similar questions from different surveys in order to assess and improve the validity of its data. SRS has been investigating several recently identified data discrepancies in its R&D statistics program. For example, the Survey of Federal Funds for Research and Development reports federal obligations of $31.4 billion in 1997 for research and development performed by industry, while the Survey of Industrial Research and Development reports that the federal government was the source of just $23.9 billion in industry R&D expenditures (NSF 1999b, NSF 1999h). Similarly, the Survey of Federal Funds for Research and Development reports federal obligations of $12.6 billion in 1997 for research and development performed by the nation's colleges and universities, while the Survey of Research and Development Expenditures at Universities and Colleges reports that the federal government was the source of $14.5 billion in academic R&D expenditures (NSF 1999b, NSF 1999h). This discrepancy and some of its analytical consequences will be discussed further in Chapter 5.

In the 18 months or so that these discrepancies have been evident, SRS has undertaken a number of activities (e.g., workshops with data providers in federal agencies and academic institutions) to understand the nature of these discrepancies, the extent to which some differences might be expected and can be explained, and the extent to which some differences need to be addressed through changes in either questionnaires or data collection.

SRS has noted that the disparity in the results of the Survey of Federal Funds for Research and Development and the Survey of Industrial Research and Development can be accounted for by a discrepancy between what the Department of Defense (DOD) reports as the R&D funding it provides to industry and what industry reports as the R&D funding it receives from DOD. Thus, SRS has been able to suggest some possible reasons for the disparity: firms may not count as their own R&D those projects funded by federal agencies that they subcontract to nonindustrial organizations; DOD may classify some technical services contracts with industry as R&D that firms do not classify as such; there are differences between federal obligations and actual expenditures from year to year, though these should be small; and there may be differences between what is reported as R&D by a firm as opposed to what might more accurately be reported as R&D by a firm's business unit. SRS has discovered that other advanced industrial countries with significant defense programs experience similar accounting problems.

SRS has begun an investigation into the discrepancy between the Federal Funds Survey and the Survey of Research and Development Expenditures at Universities and Colleges. SRS has found that at least $350 million of the $1.9 billion difference—almost one-fifth—is due to double counting that occurs when one university subcontracts R&D to another and they both count it as R&D expenditures.
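The arithmetic behind the two discrepancies can be laid out explicitly. The following is a minimal sketch that uses only the 1997 dollar figures quoted above; the variable names are illustrative and are not drawn from any SRS data file.

```python
# Figures quoted in the text, in billions of dollars, for 1997.
federal_obligations_to_industry = 31.4   # Survey of Federal Funds for R&D
industry_reported_federal = 23.9         # Survey of Industrial R&D
federal_obligations_to_academia = 12.6   # Survey of Federal Funds for R&D
academia_reported_federal = 14.5         # Survey of R&D Expenditures at Universities and Colleges
double_counted_subcontracts = 0.35       # "at least $350 million"

# Industry: federal agencies report more than firms report receiving.
industry_gap = round(federal_obligations_to_industry - industry_reported_federal, 1)

# Academia: universities report more than federal agencies report obligating.
academic_gap = round(academia_reported_federal - federal_obligations_to_academia, 1)

# Share of the academic gap attributed to double-counted subcontracts.
explained_share = double_counted_subcontracts / academic_gap

print(f"Industry gap: ${industry_gap} billion")
print(f"Academic gap: ${academic_gap} billion")
print(f"Share explained by double counting: {explained_share:.1%}")
```

Note that the two gaps run in opposite directions, which is one reason a single explanation is unlikely to account for both.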

Another potential source of the difference may occur when federal agencies obligate funds to states or industry, which then pass funds on to universities. The federal government counts these funds as obligated to states or industry, as appropriate, and not as R&D funding to universities. The universities, however, knowing the original source of these funds, report them as federally-funded R&D expenditures.

SRS should continue these investigations and take steps in the near term to explain these differences to data users and consider implementing questionnaire or data collection changes that will help resolve the discrepancies. We additionally recommend that SRS consider commissioning a further study that would examine in greater detail the possibility of better integrating the SRS R&D surveys. Such a study might design an "R&D data system" analogous to the SESTAT data system in the Human Resources Statistics program. The resulting data system would ideally account for related structural changes in the nation's research and development system, such as intra- and inter-sectoral R&D partnerships.

Third, SRS should structure its surveys so they may also be directly linked one to another. There is great potential utility in such linkages, including those between human resources and R&D investment data sets. The most critical obstacle to meaningful linkage of SRS data sets, other than non-comparability in questions and response categories, has been the lack of a uniform science and engineering field taxonomy across SRS surveys. As can be seen in Table 3-1, for example, the biological sciences are described differently across SRS graduate education and personnel surveys. Each of the SED, the GSPSE, and the NSCG uses a different taxonomy for science and engineering field of degree. To cite another example, there are differences in field classifications among R&D funding and performance surveys. The life sciences in the Survey of Scientific and Engineering Expenditures at Universities and Colleges are disaggregated into agricultural, biological, medical, and other life sciences. In the Survey of Federal Support to Universities, Colleges, and Non-Profit Institutions, however, the life sciences are disaggregated into these fields as well as a fifth, environmental biology. SRS has contracted with SRI International to review its taxonomy. The SRI analysis should be taken seriously as a step toward standardizing taxonomies across data collection activities.

Field taxonomies not only require standardization so that different surveys may be better linked; they also require a means for keeping them current on an ongoing basis so that data may continue to be relevant to policymakers. It has been known for some time that SRS taxonomies become outdated and need constant revision. An internal 1994 SRS report on customer views of SRS products stated:

Many customers, especially those at NSF, said that some science and engineering taxonomies used in SRS surveys were out-dated and/or not at the level of detail necessary to provide the information they need (NSF 1994).

Care should be taken to preserve time series when possible, but taxonomic changes are required in order to ensure that the data are providing accurate measures of current science and engineering R&D funding and performance, especially in emerging fields.

Table 3-1 Biological Science Fine Field Categories in the 1997 Survey of Earned Doctorates (SED), the 1997 Survey of Graduate Students and Postdoctorates in Science and Engineering (GSPSE), and the 1997 National Survey of College Graduates (NSCG).

| SED Biological Science Fields | GSPSE Biological Science Fields | NSCG Biological Science Fields |
|---|---|---|
| Biochemistry | Biochemistry | Biochemistry and Biophysics |
| Biomedical Sciences | | |
| Biophysics | Biophysics | |
| Biotechnology Research | | |
| Bacteriology | | |
| Plant Genetics | Botany | Botany |
| Plant Pathology | | |
| Plant Physiology | | |
| Botany, Other | | |
| Anatomy | Anatomy | |
| Biometrics & Biostatistics | Biometry and Epidemiology | |
| Cell Biology | Cell and Molecular Biology | Cell and Molecular Biology |
| Ecology | Ecology | Ecology |
| Developmental Biology/Embryology | | |
| Endocrinology | | |
| Entomology | Entomology and Parasitology | |
| Biological Immunology | | |
| Molecular Biology | | |
| Microbiology | Microbiology, Immunology, and Virology | Microbiology |
| Neuroscience | | |
| Nutritional Sciences | Nutrition | Nutritional Sciences |
| Parasitology | | |
| Toxicology | | |
| Genetics, Human & Animal | Genetics | Genetics, Animal and Plant |
| Pathology, Human & Animal | Pathology | |
| Pharmacology, Human & Animal | Pharmacology | Pharmacology, Human & Animal |
| Physiology, Human & Animal | Physiology | Physiology, Human & Animal |
| Zoology, other | Zoology | Zoology, General |
| Biological Sciences, General | Biology, General | Biology, General |
| Biological Sciences, Other | Biology, not elsewhere classified | Other Biological Sciences |
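One way to picture what a standardized taxonomy would make possible is a crosswalk that maps each survey's field names to a shared code. The sketch below is illustrative only: the survey field names are taken from Table 3-1, but the common codes (such as `BIOCHEM_BIOPHYS`) and the function name are hypothetical and do not represent any taxonomy adopted by SRS or proposed by SRI International.

```python
# Hypothetical crosswalk from survey-specific field names (Table 3-1)
# to invented common codes. Only a few fields are shown.
CROSSWALK = {
    "SED": {
        "Biochemistry": "BIOCHEM_BIOPHYS",
        "Biophysics": "BIOCHEM_BIOPHYS",
        "Cell Biology": "CELL_MOLECULAR",
        "Molecular Biology": "CELL_MOLECULAR",
        "Ecology": "ECOLOGY",
    },
    "GSPSE": {
        "Biochemistry": "BIOCHEM_BIOPHYS",
        "Biophysics": "BIOCHEM_BIOPHYS",
        "Cell and Molecular Biology": "CELL_MOLECULAR",
        "Ecology": "ECOLOGY",
    },
    "NSCG": {
        "Biochemistry and Biophysics": "BIOCHEM_BIOPHYS",
        "Cell and Molecular Biology": "CELL_MOLECULAR",
        "Ecology": "ECOLOGY",
    },
}


def to_common_field(survey: str, field: str) -> str:
    """Return the hypothetical common code, or 'UNMAPPED' if none is defined."""
    return CROSSWALK.get(survey, {}).get(field, "UNMAPPED")


# Fields that differ in name across surveys resolve to the same code.
print(to_common_field("SED", "Biophysics"))
print(to_common_field("NSCG", "Biochemistry and Biophysics"))
```

A crosswalk of this kind collapses finer categories into the coarsest one any survey uses, so detail available in the SED (for example, separate Biochemistry and Biophysics fields) is lost in the linked data. That tradeoff is one reason a shared taxonomy at the point of collection is preferable to after-the-fact mapping.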

Coordination and Linking with External Agencies and Organizations

While it is a high priority for SRS to focus on better data integration among its own surveys, there are opportunities for SRS to improve the coordination of, and create linkages between, its survey data and those of other federal agencies and non-governmental organizations.

First, SRS could help coordinate data collection by professional associations, colleges and universities, and other countries to improve data availability and comparability.

-

As will be further discussed in Chapter 4, SRS needs to continue to improve its data on the transition of new Ph.D.s to employment. SRS should continue its productive work with others to obtain data on the job market experience of new Ph.D.s, especially those without definite commitments for study or work at the time of degree, during the months immediately following graduation. SRS has interacted extensively and productively with professional societies and the Commission on Professionals in Science and Technology (CPST) in obtaining data on the job market experiences of new Ph.D.s. This interaction should be continued and strengthened. SRS could provide these associations with a framework for collecting these data in a standardized way and technical assistance on statistical methods.

-

SRS should also explore how it might work closely with colleges and universities to assist them with the development of standardized data sets on the placement of their recent graduates. In its recent report on graduate education, the Association of American Universities (AAU) urged colleges and universities to maintain comprehensive data on completion rates, time-to-degree, and job placement for each of their graduate programs. The report specifically recommends that institutions track their graduates at least until first professional employment beyond postdoctoral appointments. Such data would allow better tracking of alumni for purposes of understanding career paths and the effectiveness of university programs in providing the knowledge and skills that graduates need in the labor market. The AAU also suggests that institutions provide student applicants with this information (AAU 1998). As research universities implement this recommendation, SRS could help them standardize their data collection efforts; locally collected data could then potentially be aggregated in a meaningful way at the national level.

Second, while meeting requirements for maintaining confidentiality, SRS should seek to link its data with those of other federal agencies and private firms to further enhance their analytic value:

-

SRS should seek ways to link its graduate education and personnel data with data collected by the other relevant data sources such as the National Center for Education Statistics and the Educational Testing Service (ETS). For example, researchers would like to be able to link student scores on the Graduate Record Examination (GRE) to data from the Survey of Earned Doctorates and the Survey of Doctorate Recipients to examine further the predictive power of the GRE with regard to career outcomes.

-

SRS data could be linked to an array of other education, career, and productivity data. SRS should find efficient means for linking its graduate education, personnel, and R&D funding and performance data with public data on federal agency awards (fellowships, traineeships, associateships, research grants) and patents and with private data sets on publications. For example, graduate students can accurately identify the type but not the source of their funding, particularly when funding originates from federal agencies and flows through institutions. Creating linkages between federal data sets on awards and federal data sets on degree recipients would substantially improve the available data on federal support for graduate students. In theory, linking SRS data to other federal data sets, particularly those that include name and Social Security Number as identifiers, should be routine, though in some cases data sets on awards will need to be augmented to facilitate the linking process. Linking personnel data with publication and patent data is more difficult, but SRS should also seek ways to make such linkages easier. SRS asked for data on the number of articles, other publications, patent applications, and patent awards in a special module for the 1995 NSCG and 1995 SDR. If these data points were collected on a continuous basis, they would provide benchmarks against which researchers could measure their ability to link SRS with publication and patent databases.

-

SRS should also investigate the opportunities made available by adding an indicator for metropolitan statistical area to each of its R&D funding and performance surveys. Currently, SRS R&D surveys collect data on the state in which the respondent is located, which provides an approximation of the state distribution of R&D performance. (Actual location of performance may differ from location of respondent when laboratories or business units are geographically dispersed.) These data are widely requested by federal and state officials and by researchers who are interested in the locational dimensions of R&D spending.

However, the data on state distribution of R&D spending do not offer a precise measure of the regional economic impact of R&D expenditures, since regional economies often include areas in more than one state and there may be more than one regional economy within a state. These data users, particularly researchers, would benefit from a metropolitan indicator as an added data point for analysis and also as a means for linking these data with other economic and demographic data available at the metropolitan level.
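The kind of linkage between degree-recipient records and federal award records described above can be sketched in a few lines. This is a hedged illustration, not a description of any SRS procedure: the records, field names, and agency labels are invented, and the use of a keyed hash in place of the raw identifier is an assumption introduced here to gesture at the confidentiality requirement, not a method named in this report.

```python
import hashlib
import hmac

# Hypothetical secret held by the linking agency (an assumption for this sketch).
SECRET_KEY = b"hypothetical-linkage-key"


def link_key(identifier: str) -> str:
    """Derive a keyed hash so raw identifiers never appear in the linked output."""
    return hmac.new(SECRET_KEY, identifier.encode("utf-8"), hashlib.sha256).hexdigest()


# Invented example records; identifiers are placeholders, not real numbers.
degree_records = [
    {"id": "000-00-0001", "field": "Biochemistry", "degree_year": 1997},
    {"id": "000-00-0002", "field": "Ecology", "degree_year": 1997},
]
award_records = [
    {"id": "000-00-0001", "award_type": "fellowship", "agency": "Agency A"},
    {"id": "000-00-0003", "award_type": "traineeship", "agency": "Agency B"},
]


def link(degrees, awards):
    """Attach each degree recipient's awards, matched on the hashed identifier."""
    awards_by_key = {}
    for award in awards:
        awards_by_key.setdefault(link_key(award["id"]), []).append(award)

    linked = []
    for degree in degrees:
        matches = awards_by_key.get(link_key(degree["id"]), [])
        linked.append({
            "field": degree["field"],
            "degree_year": degree["degree_year"],
            "awards": [(a["award_type"], a["agency"]) for a in matches],
        })
    return linked


linked = link(degree_records, award_records)
for row in linked:
    print(row)
```

In this sketch the linked output records the source agency of each award, which is the item the text notes that graduate students themselves often cannot report accurately.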

Finally, SRS interprets federal privacy laws in a way that restricts the agency's ability to provide data to external researchers and specifically to facilitate data linkages. SRS does provide data to external researchers under licensing agreements. However, we believe that SRS is overly restrictive in its interpretation of privacy laws, and thus limits both the use and usefulness of the data it provides to these researchers. With proper licensing agreements and oversight, SRS should be able to better support the data linking activities of its data users.

Data Currency

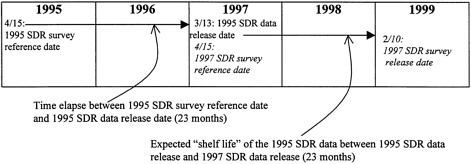

A third dimension of relevance for statistical agencies relates to whether data collected by such agencies are current, or are perceived to be, during their "shelf life," the period of time from the date they are made public until the date when data from the next survey cycle are released. Using the Survey of Doctorate Recipients (SDR) as an example, Figure 3-1 illustrates how survey periodicity and data processing time affect data currency. The survey reference date for the 1995 SDR was April 15, 1995. Surveys were sent to respondents in May 1995, data were collected throughout 1995 and early 1996, and file preparation was completed toward the end of

Figure 3-1 Survey Reference Date and Data Release Date for the 1995 and 1997 Cycles of the Survey of Doctorate Recipients

1996. NSF released the data set on March 13, 1997. Thus, approximately 23 months elapsed between the survey reference date and the data release date. Given the two-year periodicity of the survey, then, the 1995 data were the most recent data available on the population of Ph.D. scientists and engineers until February 1999, when NSF released the 1997 SDR data by posting a limited set of early release tables on their web site.

At issue is whether the 1995 SDR data were accepted by their audience as "current" for the entire length of their expected "shelf life," i.e., the 23 months from March 1997 to February 1999. Experiences of SDR analysts suggest that by mid-1998, some already questioned the validity of analyses based on 1995 SDR data. For example, when the NRC's Trends in the Early Careers of Life Scientists (NRC 1998c) was released, its detractors argued that the labor market for scientists and engineers had already changed and that the 1995 data used in the analysis did not reflect these changes. Even if, in fact, the situation in 1998 was much the same as in 1995, the lack of more recent data allowed those who wanted to substitute anecdote for data in policy debates to do just that. To make matters worse, the 1997 SDR had already been administered, but its data had not yet been released. Thus, the period of data currency did not extend throughout the expected shelf life of the data.
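The release lag and shelf life discussed above follow directly from the dates given for the 1995 SDR. The sketch below reproduces that arithmetic. The helper name `months_between` is illustrative, and because the text gives only the month of the 1997 SDR release, the day used for that date is an assumption.

```python
from datetime import date


def months_between(start: date, end: date) -> int:
    """Calendar months from start to end, ignoring the day of the month."""
    return (end.year - start.year) * 12 + (end.month - start.month)


reference_1995 = date(1995, 4, 15)  # survey reference date for the 1995 SDR
release_1995 = date(1997, 3, 13)    # 1995 SDR data released
release_1997 = date(1999, 2, 1)     # 1997 SDR early release; day is assumed

release_lag = months_between(reference_1995, release_1995)
shelf_life = months_between(release_1995, release_1997)
age_at_end_of_shelf_life = months_between(reference_1995, release_1997)

print(f"Release lag: {release_lag} months")
print(f"Shelf life: {shelf_life} months")
print(f"Age of data when superseded: {age_at_end_of_shelf_life} months")
```

Measured this way, the 1995 data were already about 23 months old on the day they were released and about 46 months old when the next cycle replaced them, which helps explain why their currency was being questioned by mid-1998.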

In the case of the SDR, a shorter time period between survey cycles could increase the percentage of its expected shelf life that its data are current. However, shortening periodicity increases survey costs, potentially doubling them if the periodicity is cut in half from a biennial to an annual cycle. By contrast, SRS has considered lengthening the interval between SDR cycles from two to three years to cut costs. One might argue that the labor market for Ph.D. scientists and engineers does not change substantially in two years and that three years is sufficient to capture important trends. Such a periodicity change would save money, then, while not affecting data accuracy. However, such a move would substantially decrease the percentage of the shelf life period that SDR data are current, and therefore, would reduce their overall relevance and usefulness to analysts.

Data currency also depends on how long it takes SRS to administer a survey (including survey follow-up), process survey data, and release them for public use. This interval is measured, in the case of SRS, as the time that elapses between the reference date in each survey and the date at which survey data are released. The timeliness with which SRS releases its data has been a subject of comment for some time. A 1994 customer survey conducted by SRS concluded that "timeliness of SRS data was a major concern of several interviewees; it was not considered a problem by others." In summarizing the findings of a 1996 customer survey, then-SRS deputy director Alan Tupek concluded, "when interest in a topic is high, currency/timeliness is the major area of concern. I conclude that we should continue to place priority on improving timeliness" (NSF 1997). Timeliness has also been a concern of external analysts. The Committee on Science, Engineering, and Public Policy (COSEPUP) of the National Academies found in utilizing data from the Survey of Earned Doctorates and the Survey of Doctorate Recipients that the data were not sufficiently current to provide timely measures of the rapidly changing environment for the education and labor market for scientists and engineers. COSEPUP concluded "The National Science Foundation should continue to improve the coverage, timeliness, and clarity of analysis of the data on the education and employment of scientists and engineers in order to support better national decision-making about human resources in science and technology" (NAS 1995).

The relevance, and therefore, the use of SRS data will increase as the data are delivered in a more timely fashion. The NSF GPRA Performance Plan for FY1999 requires SRS to improve its timeliness by decreasing by 10 percent from the current average of 540 days, the time interval between the reference period (the time to which the data refer) and the data release date for each survey. Table 3-2 provides information on SRS's record for data releases. There are several options for decreasing the time between reference and release dates. Some options focus on the data collection phase; other options focus on the data processing and analysis phase.

-

Reduce the time for data collection by tightening the schedule for the initial and follow-up phases of survey response. At present, many SRS surveys are conducted by mail with computer assisted telephone follow-up. Minimizing the time for each of these steps, consonant with maintaining adequate response rates, could potentially save weeks or months in the schedule. As an example, the methodological report for the 1995 Survey of Doctorate Recipients states that a paper questionnaire was sent to sample members in May 1995, a second questionnaire was sent by priority mail in July, and computer-assisted telephone interviewing (CATI) was used for follow-up between October 1995 and February 1996. It seems worthwhile to explore the extent to which the data collection period for the SDR could be reduced from 9 months to 6 months or even less. Analysis of mailback rates by week would be helpful in this regard. If, for example, the response to the first mail questionnaire dropped off substantially after 3 or 4 weeks, it would make sense to mail the second questionnaire in June rather than July. Similarly, it might be possible to begin the CATI operation as early as August and complete it within 2 or 3 months.

-

Explore further the use of Internet technology as a survey mechanism that could speed up response. It would be useful to conduct research to determine if respondents to such surveys as the SDR would respond more willingly and faster via the Internet.

-

Explore the use of motivational material in the survey mailings that emphasizes not only the need for a high response rate, but also the need for a timely response. In addition, for longitudinal surveys, such as the SDR, or the large businesses that are regularly included in RD-1, early results might be mailed to respondents to thank them for their timely response.

Table 3-2 Time Elapsed in Months Between Reference Month and Data Release Month for SRS Surveys (data displayed by calendar year of reference month)

| Survey | 1993 | 1994 | 1995 | 1996 | 1997 |
|---|---|---|---|---|---|
| National Survey of College Graduates* | 25 | n/a | 25 | n/a | 16 |
| National Survey of Recent College Graduates* | 21 | n/a | 19 | n/a | 21 |
| Survey of Doctorate Recipients* | 23 | n/a | 23 | n/a | 22 |
| Survey of Graduate Students and Postdoctorates in Science and Engineering | 18 | 18 | 16 | 16 | 13 |
| Survey of Earned Doctorates | 16 | 17 | 11 | 17 | 18 |
| Survey of Scientific and Engineering R&D Facilities at Colleges and Universities* | — | n/a | 12 | n/a | 17 |
| Survey of Research and Development in Industry | 19 | 17 | 23 | 15 | 13 |
| Survey of Research and Development Expenditures at Universities and Colleges | 15 | 16 | 14 | 15 | 13 |
| Survey of Federal Support to Universities, Colleges, and Nonprofit Institutions | 20 | 21 | 20 | 20 | 16 |
| Survey of Federal Funds for Research and Development | 20 | 21 | 20 | 19 | 14 |

* Biennial surveys: n/a means not applicable

-

Release data for key indicators early. For many key business statistics (e.g., gross domestic product figures), preliminary results are released as soon as possible, followed by revisions at a later date. Such a strategy might be explored for some SRS statistics. The preliminary results could be based on early survey responses, on data for a sub-sample, or on simpler data editing and imputation procedures than would ultimately be used for the final estimates. Alternatively, final estimates for key indicators could be released early by putting the processing, analysis, and data review for them on a fast track separate from the other data collected in a survey. (As an example, the monthly unemployment figures from the Current Population Survey are released within a few weeks of data collection, while other data in the survey, such as income statistics, are released on a slower schedule.) Central to this strategy is the identification of statistics from a survey that are most important for key users. Strategies to permit their early processing and release then need to be devised. Such strategies may include an emphasis on obtaining the responses for these indicators in the follow-up of non-respondents.
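The analysis of mailback rates by week suggested in the first option above could take a very simple form. The sketch below is a minimal illustration under stated assumptions: the weekly counts are invented, not SDR data, and the rule used to define a "substantial" drop-off (returns falling below one quarter of the peak week) is a hypothetical threshold chosen only for demonstration.

```python
# Invented weekly counts of questionnaires returned after the first mailing.
weekly_returns = [1200, 2100, 1500, 600, 250, 120, 80, 60]


def dropoff_week(returns, fraction_of_peak=0.25):
    """Return the first week (1-based) after the peak week in which returns
    fall below the given fraction of the peak, or None if that never happens."""
    peak = max(returns)
    peak_index = returns.index(peak)
    for index in range(peak_index + 1, len(returns)):
        if returns[index] < fraction_of_peak * peak:
            return index + 1
    return None


week = dropoff_week(weekly_returns)
print(f"Response to the first mailing drops off in week {week}")
```

With counts like these, the drop-off would fall about a month after the first mailing, which is the kind of evidence that could support sending the second questionnaire earlier than the schedule used for the 1995 SDR.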

Research will be required to determine which of these or other options is feasible and cost-effective for each of the SRS surveys. Given the interest in timely data for many important policy issues related to science and engineering resources, such research is a high priority. In addition to the options outlined above, quick-response surveys are another way to provide timely data on topics of current interest that may not yet be covered in existing surveys.
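As a rough gauge of how far each survey would have to move, the GPRA timeliness goal cited earlier in this section can be set against the 1997 column of Table 3-2. The sketch below is illustrative and rests on two assumptions that are not in the source: days are converted to months using an average month length of 365.25/12 days, and the 10 percent goal is applied to each survey individually rather than to the average across surveys.

```python
BASELINE_DAYS = 540      # current average cited in the GPRA Performance Plan
REDUCTION_PERCENT = 10   # required decrease

DAYS_PER_MONTH = 365.25 / 12  # assumed conversion factor

target_days = BASELINE_DAYS * (100 - REDUCTION_PERCENT) // 100
target_months = target_days / DAYS_PER_MONTH

# Months between reference month and release month, 1997 column of Table 3-2.
lag_1997_months = {
    "National Survey of College Graduates": 16,
    "National Survey of Recent College Graduates": 21,
    "Survey of Doctorate Recipients": 22,
    "Survey of Graduate Students and Postdoctorates in Science and Engineering": 13,
    "Survey of Earned Doctorates": 18,
    "Survey of Scientific and Engineering R&D Facilities at Colleges and Universities": 17,
    "Survey of Research and Development in Industry": 13,
    "Survey of Research and Development Expenditures at Universities and Colleges": 13,
    "Survey of Federal Support to Universities, Colleges, and Nonprofit Institutions": 16,
    "Survey of Federal Funds for Research and Development": 14,
}

# Surveys whose 1997 lag exceeds the target under the assumptions above.
over_target = sorted(
    name for name, lag in lag_1997_months.items() if lag > target_months
)

print(f"Target: {target_days} days (about {target_months:.1f} months)")
for name in over_target:
    print(f"Over target: {name} ({lag_1997_months[name]} months)")
```

Because Table 3-2 reports whole months, surveys at 16 months sit almost exactly at the converted target, so their classification here depends on the assumed conversion factor.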