1

Introduction

“May your wildest dreams come true” is an old adage brought to mind by the phenomenal advances in computing and communications technology and their deployment in a widening array of business, government, commercial, and social applications. The underlying industrial base for computing and communications, the information technology (IT) industries, has grown rapidly, creating jobs, improving the standard of living, and fueling the nation's transition to an information economy. Since 1992, firms that produce computers, semiconductors, software, and communications equipment and provide computing and communications services have contributed one-third of the nation's economic growth, and in 1998 they employed 5.2 million workers at wages 85 percent higher than the private-sector average (U.S. Department of Commerce, 2000). Companies throughout the economy are using IT to compete in global markets, and IT promises to transform the way people work, play, live, and learn. The nation's dependence on the vitality of the technology base for IT was underscored in the late 1990s by such voices as the chairman of the Federal Reserve, the director of the National Science Foundation, and the President of the United States. This technology base and tomorrow's information economy depend, in turn, on continued research on IT.

The role of research in driving innovation and social transformations is often difficult to see. The seemingly endless introduction of new goods and services by entrepreneurs and established corporations obscures the fundamental science and engineering bases underlying innovation. It also creates the appearance of a self-sustaining process. If businesses are growing and new products are proliferating, why should national leaders be concerned about IT research? This question remains central to contemporary political debate about federal budgets for IT research, despite recent increases in funding. The difficulty of explaining and justifying federal IT research spending influenced the evolution and eventual transformation of the first large federal IT research initiative, the High Performance Computing and Communications Initiative (HPCCI);1 it enlarged the scope of the President's Information Technology Advisory Committee (PITAC) and the associated federal proposals for new and larger research programs, notably the 1999 Information Technology for the Twenty-First Century (IT2) initiative, and shaped the reports that came out of them;2 and it continues to color the annual budget debates about the level and distribution of IT research funds.

An enduring lack of understanding of the nature of both IT research and industrial innovation in IT makes debates about federal programs in this area unusually contentious. Experts from industry and academia, individually and as participants in groups such as Computer Science and Telecommunications Board (CSTB) committees, PITAC, and professional organizations, have asserted publicly that both government and industry are underinvesting in IT research, especially fundamental research. Calls for increased funding have met with skepticism from those who are critical of the rationale for it, who are uncertain about the nature of IT research (which is apparently less comprehensible than, for example, classical scientific research), and who question why it should be expensive (a concern that reflects a limited understanding of software research). Unless these criticisms and questions can be answered, technological progress may be stymied by a lack of needed research funding.

The nation's increasing reliance on IT demands a reexamination of the IT research base. Both the substance of the research and how it is carried out are at issue. As for substance, the potential is mounting for problems to arise and for opportunities to be lost as a result of deficiencies in the technologies already being distributed quickly and widely into the economy. Society is becoming dependent on information systems that are fragile, and companies striving to be competitive in the short term sacrifice opportunities for IT innovations that depend on sustained or less-constrained exploration, raising questions about long-term prospects. Research is needed to address a host of new problems—many arising as a consequence of interactions among a large and growing number of individual components—as well as long-standing problems that are becoming more prominent and, once a technology is in use, more difficult to manage.

Procedurally, the situation challenges the confederation of government, industry, and academia that drives IT research. The give-and-take among these parties was healthy for several decades, as is clear from today's commercial and societal successes with IT, but recently it has become weaker. In addition, industry has faced increasing pressures to streamline research and development (R&D) as a result of waves of structural change in the IT industries in the 1980s and 1990s.3 These conditions discourage investments of time and money in research, instead favoring the creative exploitation of existing science and technology in the guise of new products. Today's IT industry is thriving because it is leveraging a rich base of historical investments in research (see Box 1.1); emblematic of the practice is the now-familiar story of the Internet's roots in government-sponsored academic research (see CSTB, 1999a). But where, today, is the base for a thriving industry tomorrow?

This report examines the approaches to sustaining IT research, including institutional support mechanisms for the nation's IT research base. The definition of IT research is broad, encompassing work that advances computing and communications technologies as well as systems that combine those technologies to serve a range of social needs. The report does not attempt to develop a detailed research agenda (that information can be gleaned from other CSTB reports and assorted government documents). Rather, it addresses four main topics:

- Levels of funding for IT research: Are government and industrial sponsors providing sufficient funding for research to keep up with the fast pace of innovation and the explosive growth of the IT marketplace?

- The scope of IT research: Is the scope broad enough to address the range of challenges to IT systems as they are increasingly integrated into business, societal, and government applications?

- The constituencies supporting IT research: Is the base of organizations supporting IT research broad enough to ensure sufficient financial and intellectual contributions needed to advance the field?

- Mechanisms for supporting research: Are existing structures for funding and conducting IT research adequate to address future challenges? Are new mechanisms needed?

This report offers recommendations in each of these areas, with the objective of ensuring that the investments in IT research will propel the nation through the Information Age.

WHY FOCUS ON INFORMATION TECHNOLOGY?

BOX 1.1 Research and Innovation in IT: A Historical Perspective

Many of the information technologies commonly used today have roots in research conducted decades ago. That research was often supported by a combination of government agencies and private firms. Federal funding for computing research began in earnest immediately following World War II and supported the development of many of the nation's earliest computers. Beginning in the late 1960s, federal support for long-term fundamental research created a growing knowledge base for exploitation by innovators and entrepreneurs. Industry also funded such research and brought the new technologies to the marketplace. The following list presents some of the better-known examples of current technologies that leverage historical investments in research:

SOURCE: Computer Science and Telecommunications Board (1999a).

Few in the IT industry, or elsewhere, foresaw the dramatic progress in IT that has occurred over the last few decades. Fewer still can read a newspaper today without coming across comments, articles, or special sections that remark on trends in IT and their social and economic impacts. Dramatic advances in computer processing speeds, communications bandwidth, and storage capacities are almost clichés. A popular yardstick is Moore's law: for the last 40 years, computing capability per dollar has doubled every 18 to 24 months, equivalent to a 100-fold improvement every 10 to 13 years, reflected in both rapidly increasing performance and declining price. These performance gains, the associated development of software applications, and falling prices for IT relative to its capabilities have propelled IT into new markets. For instance, controllers are now embedded within products such as cellular telephones and automobile transmissions, and complex information systems are used to manage air traffic, book air travel reservations, and process electronic commerce transactions. Research has borne fruit in a cumulative manner, transforming the IT baseline. The early emphasis on computation, combined with the advent, later on, of mass storage devices, focused attention on the capture, storage, and retrieval of massive amounts of data. Likewise, early developments in networking led to ever more sophisticated communications and to distributed processing. With each advance came a dramatic expansion in the uses of computing. The expansion in the use of communications has been more recent; the combined progress in each field has stimulated applications of increasing scope and sophistication.
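The Moore's law figures quoted in this section (a doubling every 18 to 24 months, equivalent to a 100-fold improvement every 10 to 13 years) can be checked with a few lines of arithmetic. The following is only a minimal sketch; the function name `years_for_improvement` is a hypothetical helper introduced for illustration and does not come from the report.

```python
import math


def years_for_improvement(factor: float, doubling_months: float) -> float:
    """Years needed for a `factor`-fold gain if capability per dollar
    doubles every `doubling_months` months (illustrative helper)."""
    doublings_needed = math.log2(factor)  # e.g. about 6.64 doublings for 100x
    return doublings_needed * doubling_months / 12.0


if __name__ == "__main__":
    # The two doubling periods quoted in the text: 18 and 24 months.
    for months in (18, 24):
        years = years_for_improvement(100, months)
        print(f"doubling every {months} months: 100-fold in about {years:.1f} years")
```

Under these assumptions the two doubling periods bracket the quoted range: roughly 10 years at the 18-month rate and a little over 13 years at the 24-month rate.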

To a technologist, IT is being applied to increasingly complex systems, thanks to past advances in microprocessors, algorithms, packet networking, memory, and information storage and retrieval. To a layperson, IT is becoming infrastructure, an enabler of more and more of what people do, even in situations in which the use of a “computer” is neither obvious nor intentional. The Internet, because of its pervasiveness and intrinsic ability to connect many elements, epitomizes these advances, as does the proliferation of personal computers for applications such as electronic banking and home shopping and the now-routine use of cellular telephones, especially those capable of Internet access. All of this is happening because decades of research led to proven concepts and technologies, lowering risks enough to enable commercialization.

The lesson of the recent growth of the Internet, and the World Wide Web that rides atop it, is that technical success can generate new challenges: in short, IT is neither stable nor static. What will tomorrow's Web be like? No one knows for sure, but today's research and experimentation hint at advances in the design and implementation of virtual reality systems, which enable telepresence and other blends of real and virtual environments; advances in integrating computing and biology that enable novel approaches to computation, such as through molecular or chemical processes; leaps in the capacity to collect, store, and retrieve both previously generated and new information; and capabilities surpassing those of humans for seeing, hearing, and speaking (see Box 1.2). The integration of such specific advances into applications and systems that are more complex than today's state of the art can only be speculated on. What is not speculation is the fact that today's society depends greatly on IT, and tomorrow's will do so even more. The Internet of today is a beginning, not an end point: it may be thought of as infrastructure, but it is far from offering the stability and predictability associated with the traditional infrastructures of the physical world.

WHAT IS INFORMATION TECHNOLOGY RESEARCH?

The Many Faces of Information Technology Research

IT research takes many forms. It consists of both theoretical and experimental work, and it combines elements of science and engineering. Some IT research lays out principles or constraints that apply to all computing and communications systems; examples include theorems that show the limitations of computation (what can and cannot be computed by a digital computer within a reasonable time) or the fundamental limits on capacities of communications channels. Other research investigates different classes of IT systems, such as user interfaces, the Web, or electronic mail (e-mail). Still other research deals with issues of broad applicability driven by specific needs. For example, today's high-level programming languages (such as Java and C) were made possible by research that uncovered techniques for converting the high-level statements into machine code for execution on a computer. The design of the languages themselves is a research topic: how best to capture a programmer's intentions in a way that can be converted to efficient machine code. Efforts to solve this problem, as is often the case in IT research, will require invention and design as well as the classical scientific techniques of analysis and measurement. The same is true of efforts to develop specific and practical modulation and coding algorithms that approach the fundamental limits of communication on some channels. The rise of digital communication, associated with computer technology, has led to the irreversible melding of what were once the separate fields of communications and computers, with data forming an increasing share of what is being transmitted over the digitally modulated fiber-optic cables spanning the nation and the world.

Experimental work plays an important role in IT research. One modality of research is the design experiment, in which a new technique is proposed, a provisional design is posited, and a research prototype is built in order to evaluate the strengths and weaknesses of the design.

BOX 1.2 Some Research Goals for Information Technology

Despite the incredible progress made in information technology over the past 50 years, the field is far from mature. A number of compelling goals will drive research. The following is just a partial list of possible research goals articulated by Jim Gray, a leading IT researcher and recipient of the Turing Award, the top prize in computer science. They are envisioned as well-defined goals that can stimulate considerable research.

SOURCE: Gray (1999).

Although much of the effect of a design can be anticipated using analytic techniques, many of its subtle aspects are uncovered only when the prototype is studied. Some of the most important strides in IT have been made through such experimental research. Time-sharing, for example, evolved in a series of experimental systems that explored different parts of the technology. How are a computer's resources to be shared among several customers? How do we ensure equitable sharing of resources? How do we insulate each user's program from the programs of others? What resources should be shared as a convenience to the customers (e.g., computer files)? How can the system be designed so it's easy to write computer programs that can be time-shared? What kinds of commands does a user need to learn to operate the system? Although some of these trade-offs may succumb to analysis, others (notably those involving the user's evaluation and preferences) can be evaluated only through experiment.

Ideas for IT research can be gleaned both from the research community itself and from applications of IT systems. The Web, initiated by physicists to support collaboration among researchers, illustrates how people who use IT can be the source of important innovations. The Web was not invented from scratch; rather, it integrated developments in information retrieval, networking, and software that had been accumulating over decades in many segments of the IT research community (Schatz, 1997; Schatz and Hardin, 1994). It also reflects a fundamental body of technology that is conducive to innovation and change (CSTB, 1994). Thus, it advanced the integration of computing, communications, and information. The Web also embodies the need for additional science and technology to accommodate the burgeoning scale and diversity of IT users and uses: it became a catalyst for the Internet by enhancing the ease of use and usefulness of the Internet, it has grown and evolved far beyond the expectations of its inventors, and it has stimulated new lines of research aimed at improving and better using the Internet in numerous arenas, from education to crisis management.

Progress in IT can come from research in many different disciplines. For example, work on the physics of silicon can be considered IT research if it is driven by problems related to computer chips; the work of electrical engineers is considered IT research if it focuses on communications or semiconductor devices; anthropologists and other social scientists studying the uses of new technology can be doing IT research if their work informs the development and deployment of new IT applications; and computer scientists and computer engineers address a widening range of issues, from generating fundamental principles for the behavior of information in systems to developing new concepts for systems. Thus, IT research combines science and engineering, even though the popular (and even professional) association of IT with systems leads many people to concentrate on the engineering aspects. Fine distinctions between the science and engineering aspects may be unproductive: computer science is special because of how it combines the two, and the evolution of both is key to the well-being of IT research. Because of its emphasis on IT systems in the service of society, this report emphasizes the engineering perspective, but takes an even broader view of the field that includes the interaction between IT systems and their end users.4

A Classification of Information Technology Research

Distinguishing different types of research is problematic and politicized; it feeds enduring science policy debates that can seem to confuse the issues, but it remains important for diagnosing what needs to be done and how that might differ from what is being done. A variety of terms have been used to distinguish between different types of scientific and technological research. The most widely used distinction is between basic and applied research. In this classification, which is used by federal statistical agencies, basic research is defined as work motivated by a desire to better understand fundamental aspects of phenomena without specific applications in mind; it is often called curiosity-driven research. Applied research is defined as work performed to gain the understanding needed to meet a particular need; it is often called problem-oriented research. Although useful in some respects, this distinction tends to place utility and understanding at the extremes of a one-dimensional research spectrum.

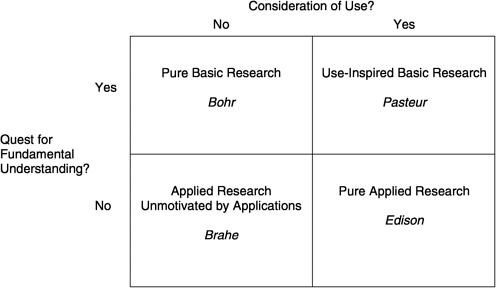

Another, more useful classification, developed by Donald Stokes, overcomes these limitations by explicitly separating the usefulness of research results from the degree to which the research seeks fundamental understanding (Stokes, 1997). It classifies research along two dimensions: whether use is considered, and whether or not the research pursues fundamental understanding (Figure 1.1). Stokes distinguishes four types of research: (1) pure basic research performed with the goal of fundamental understanding, without any thought of practical use (exemplified by Niels Bohr's research on atomic structure); (2) use-inspired basic research that pursues fundamental understanding but is motivated by a particular question or application (exemplified by Louis Pasteur's research on the biological bases of fermentation and disease, and by the fundamental work done for the Manhattan Project); (3) pure applied research that is motivated by use but does not seek fundamental understanding (exemplified by Thomas Alva Edison's inventive work); and (4) applied research that is not motivated by a particular application (such as the development of taxonomies for birds and plants, or Tycho Brahe's work to document the position of the planets, which later informed Kepler's developments of laws about planetary motion). In contrast to the basic/applied research

FIGURE 1.1 Stokes' quadrant model of research. SOURCE: Stokes (1997).

dichotomy, this taxonomy explicitly recognizes the category of research that is simultaneously inspired by use and seeks fundamental knowledge. This category of research—“Pasteur's quadrant” in Stokes' formulation—is especially important in IT.
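The two-dimensional classification described above can be written as a small lookup on two yes/no questions. This is only an illustrative sketch: the function name `stokes_quadrant` and the label strings are assumptions made for this example, not terminology fixed by Stokes (1997), who left the fourth quadrant unnamed.

```python
def stokes_quadrant(seeks_understanding: bool, considers_use: bool) -> str:
    """Map the two dimensions of the quadrant model to a label.

    Labels are illustrative; the exemplars in parentheses are the
    ones cited in the surrounding text.
    """
    if seeks_understanding and considers_use:
        return "use-inspired basic research (Pasteur)"
    if seeks_understanding:
        return "pure basic research (Bohr)"
    if considers_use:
        return "pure applied research (Edison)"
    # Neither dimension applies, e.g. taxonomies or Brahe's observations.
    return "unnamed quadrant (neither understanding nor use)"


if __name__ == "__main__":
    for understanding in (True, False):
        for use in (True, False):
            print(understanding, use, "->", stokes_quadrant(understanding, use))
```

The point of the sketch is that the two questions are independent, whereas the basic/applied dichotomy collapses them onto a single axis.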

A considerable amount of basic IT has been developed as a result of Pasteur-style research that focuses on understanding the fundamental principles of information representation and behavior, addresses widespread and enduring problems, and yields broad capabilities rather than a specific product or system (e.g., better ways to specify, build, and maintain software of all sorts). Operating systems stem from research into how multiple tasks can share a single computer. Communications and networking research seeks better ways to overcome constraints on communication, such as the nature (“quality”) of service needed for delivering real-time video or audio. Today's reduced-instruction-set computing (RISC) microprocessors are based on research that showed how to increase performance by optimizing the speed of the processor instructions that are used most frequently in actual computer programs. Speech recognition and machine vision technologies have matured through research into the machine collection of information from the physical world and its interpretation, which has to be quick and accurate to be useful.

Pasteur-style research in IT sometimes aims at solving new problems that arise in older areas. The Internet satisfied an older research goal of carrying many types of data traffic over a single network, and it generates new research problems associated with multimedia (including audio and video), congestion control, quality of service, and new communication paradigms such as broadcast and multicast. In wireless communications, rapidly increasing demands for service stimulate research into smart antenna systems and multiuser detection to achieve dramatic increases in capacity. More generally, IT researchers are still struggling to find the best ways to tell computers what to do—that is, to write correct software efficiently. They are also still struggling to find the best hardware designs that can scale up to many thousands of processors harnessed to a single computation. These are difficult research problems that endure.

Pasteur-style research tends to have long time horizons. It involves a cycle in which novel designs are worked out, implemented, and evaluated in use. The cycle is often long because each individual stage may require that new techniques be developed. For example, new programming language designs require developing techniques for translating programs into machine language. Implementations of the language have to be complete, robust, and widely available before widespread use begins; evaluation requires that a number of programmers learn the new language, apply it to a range of systems, and accumulate evidence about the value of the language; only some languages will survive these processes. Previous reports on retrospective assessments of IT have demonstrated how much of IT research has yielded results that became evident only after periods of time measurable in decades, a reality that may seem counterintuitive—the new cliché of “Internet time” has not erased the inherent lags in creating and leveraging new scientific and engineering knowledge (see CSTB, 1995, 1999a). A long-term perspective also fosters recognition of the key role of unexpected research results, which lay the foundations for new technologies, products, and entire industries.

In the IT sector, applied research differs from Pasteur-style research only in degree: the focus is sufficiently narrow that results usually apply only to specific applications, products, or systems. Applied IT research tends to be short term, with clear paths to the transfer of research results into production. In one example from industry, a research project investigated how to obtain maximum data rates from a specific disk drive attached to a specific computer that was to be used to transmit digital video data over a network in real time. Unlike conventional disk-driver software, which sacrifices performance to ensure that there are no errors in the data read from the disk, this application emphasized speed above all else. This investigation was (arguably) research because it was not known beforehand what data rates could be achieved or how best to control the disk drive. But the research targeted a particular product, and the results were unlikely to apply to a broad class of settings.

Sometimes, what is intended to be applied research achieves far-reaching results more characteristic of Pasteur-style research. For example, researchers at Carnegie Mellon University who were investigating ways to improve scheduling on a particular factory shop floor devised a new type of optimization algorithm, called constrained optimization, that was able to solve more complex problems than could previous algorithms. The new algorithm went far beyond solving a shop-floor problem: it had applications in many other domains. In fact, its use for optimizing the assignment of payload to transport aircraft during Operation Desert Storm saved millions of dollars in transportation costs. This result epitomizes the benefits that can emerge from research on specific problems that also attempts to arrive at broad-based solutions.

Both basic and applied research differ from development. Development exploits the knowledge generated by research into scientific and technological phenomena, creating specific goods, processes, or services. In general, Internet start-ups, electronic commerce (e-commerce) technology, and the growing variety of information appliances are creatures of development. Although useful knowledge is often created in the process of developing new goods and services (as well as in manufacturing and selling them) and development generates new questions for research to address, research has the primary aim of creating scientific and technical knowledge, and in the process it serves to train people, who go on to generate (and apply) more knowledge. Unless the research base is replenished, development (and innovation) will eventually slow. The distinction between research and development and the relationship of one to the other are often obscured by glib references to R&D; tallies of industrial investment in R&D, which tends to favor development, can produce large numbers without, however, yielding research value. Part of the misunderstanding lies in the available data, part in interpretation of the data.

THE CHANGING ENVIRONMENT FOR INFORMATION TECHNOLOGY RESEARCH

The 1990s witnessed the rise of a new environment for IT research investments. The structure of the IT industry has changed, and the nature of IT applications has greatly expanded. A review of the changes that have taken place underscores why now is an appropriate time to examine the structures and mechanisms used to fund and conduct research, to ensure that they will help the nation reach its IT-related objectives.

Changing Industrial Structure

The IT industry appears to be growing faster than the research base that has supported it, raising questions about the future in an arena where ideas and talent, or intellectual capital, are the most critical assets. Most obvious is the influx of smaller firms that leverage fundamental research undertaken in universities (and elsewhere). Also obvious is the relative decline of several large U.S. industrial laboratories as sources of IT research. Players present in the early 1990s, such as Digital Equipment Corporation, Control Data Corporation, Cray Research, and even Apple Computer, are no longer contributing to the research base. Enduring players, such as IBM Corporation and AT&T, have reorganized and now focus their research more narrowly than they did in the 1970s and 1980s. Newer companies, such as Microsoft Corporation, and foreign corporations, such as NEC and Mitsubishi, launched U.S. research laboratories in the late 1980s and 1990s; the impact of these new efforts remains to be seen—what is known is that they have attracted leading researchers from academia.5 Industrial research relevant to IT has grown, both in absolute terms and as a share of all such research, but that growth is dwarfed by the IT industry's growth. In this environment, any research investment can have great leverage and influence developments across a broad spectrum of industry and society.

Why does change in the IT industry matter? One might expect, after all, that as an industry matures, R&D spending would decline as a proportion of revenues; such a decline could also come from expanding sales of a stable product—one for which the R&D has been more or less completed. An industry whose history is measured in decades cannot, however, be called mature. Indeed, the evidence of the 1990s points to rejuvenation rather than senescence—the rapid growth of the Internet and its associated business activity, for example, are new phenomena, and that activity will shape yet other phenomena through cumulative experimentation with the network-based interactions of people and systems. Information technology is neither mature nor stable, and the structure and competitive conduct of the industry continue to change.

Measuring the IT research effort is difficult; the most common yardstick is funding. Several factors contribute to concerns about the sufficiency of IT research funding. First, attempts to reduce federal budget deficits and trim defense spending (which historically supported a significant portion of computing and electrical engineering research) constrained federal funding for university research in the early 1990s, affecting career decisions and research output in ways that are beginning to have an impact now. Efforts to enhance accountability in federal government operations and spending also led to increasing support for near-term, mission-oriented research by federal agencies, at the expense of more fundamental long-term research.6 Yet, as the Congressional Budget Office (CBO) observed, federal funding for long-term research “may have a disproportionately large effect on the direction that information technology takes in the long run” (Webre, 1999). Indeed many argue that the true value of the early research investments in IT by agencies such as the Defense Advanced Research Projects Agency (DARPA) and the National Science Foundation (NSF) has been support for the creation of technologies and capabilities not known before (CSTB, 1999a). External validation of these claims may be gauged not only from industry growth, which lags research investments, but also in flows of venture capital funds, which, according to the CBO, “raise the efficiency of existing R&D by raising the rate at which ideas developed in the laboratory are brought to market” (Webre, 1999). Realizing the future potential of IT therefore depends on continued federal support for fundamental research.

Second, the private sector also faces growing disincentives to investing in long-term research. Increased competition has forced many blue-chip firms to restructure their IT research and development programs to concentrate on problems with greater market relevance. The most obvious examples are the reduction in, and reorientation of, research and development investments by historical industry leaders IBM and AT&T. At the same time, a broader move toward a more horizontal, layered industry structure has resulted in the transfer of research and development to the suppliers of IT components, whether microprocessors, disk drives, or software. The associated specialization may militate against sufficient progress in systems research, which unifies efforts across the IT industries (see Box 1.3 for a description of component research vs. systems research). Meanwhile, new entrants into the marketplace, and the new mix of products, feature players without a research history. New software enterprises and Web-based ventures can be started with a few highly talented people and a little capital. The venture capital industry is ready and willing to provide the additional funding needed if the start-up appears to be on its way to success.

Third, Moore's law has the effect of driving IT companies to offer the very latest technology continually. To do otherwise would mean losing out to competitors. There is an unusually high obsolescence factor driven by the rapid advances in IT. Any company attempting to offer 2-year-old versions of its systems would, in effect, be offering technology only half as cost-effective as the latest technology allows. In other words, the penalty for being late to market is to lag the performance of more rapidly developed competitive products. Abbreviated product development cycles accelerate the pace of research—if researchers can produce tangible results faster, then products can change faster. If research is done at all, it

BOX 1.3 Components, Systems, and Applications

Even in fields traditionally associated with information technology (IT), the methods and subjects of research can vary. These problems can be viewed on three levels:

The distinction between components, systems, and applications is not absolute but depends on the perspective from which they are viewed. A personal computer (PC), for example, may be considered an application by a microprocessor designer, a system by a PC designer, and a component by a network designer. As IT has become more integral to everyday life and work, the set of research problems associated with systems and applications has grown. These problems result from success in building components, such as the PC and the Internet, which have in turn created opportunities for new systems and applications.

is often impossible to follow the traditional open research model, with results broadly available for review. As suggested earlier in this chapter, research investments that are coupled more closely to market objectives tend to refine and exploit existing knowledge rather than lay the groundwork for the more radical innovations upon which the industry's future success will surely rely. The shortcomings of this mode of operation are obvious in security, where, for example, the cycle of iterative product release, public announcement of product flaws, and product fixes has become the norm (CSTB, 1999b), and in usability, where, for example, the lack of time for studying how real people with differing abilities use systems and what they need and want from the systems continues to constrain ease of use (CSTB, 1997). The situation is compounded by long-standing difficulties in improving productivity in the development of quality software; progress in software engineering continues to be elusive (CSTB, 1999b).

Expanding Applications of Information Technology

The second major change affecting IT research is the expanding role of IT systems in a number of important social applications of IT that support groups of people performing tasks related to government, industry, business, and commerce and in which the technology and larger organizational or social processes are inseparable. It is no exaggeration to say that, today, virtually every facet of government, industry, and commerce is touched by IT, and some depend heavily on it. Many crucial organizational and societal systems operate at a scale and complexity that would simply not be feasible without the assistance of IT. These applications tend to be implemented in large-scale systems, that is, IT systems that contain many hardware and software components that interact with each other in complex ways. Many social applications of IT are characterized by the deep interrelationship of the IT with nontechnological elements, such as people, workers, groups of people, students, and organizations of workers. For example, data storage has become a foundation of organizational processes that involve people and material as well as data. Networking has integrated computing into many human interactions, supporting group activities and collaboration: for example, collaboration on the design of a complex vehicle by manufacturers, suppliers, and major customers who share in the development and modification of plans and specifications or the enhancement of retail product and pricing strategies and customer service by capturing information about customer buying behavior.

Newfound applications of IT represent a significant step forward in the evolution of computing, much of which has been shaped by the needs of and conditions in the scientific and engineering communities.7 The runaway success of the Internet has vastly expanded the range and sophistication of applications, making the issues surrounding large-scale system design, which were for a long time open issues, more critical and immediate. For example, growing numbers of IT applications are spanning different organizations and administrative domains, incorporating not only multiple users but also multiple organizations (with different preferences, procedures, capabilities, and so on) into a single application. As a result, it is becoming much less appropriate to design applications with the assumption that they will be implemented, deployed, operated, and maintained in a coordinated fashion under central control.8

Increasing complexity and sophistication are predictable trends. They are standard phenomena in technologically advanced industries, in which productivity gains, fundamental innovations, and difficult, if less fundamental, research problems continue for many years.9 A corollary is the generation of research problems, discussed in the subsequent chapters, that arise from technical complexity. New IT systems are especially complex because of their large size, the number of interacting components, and their intimate involvement with people who are themselves complex components. The performance of these cannot be fully modeled, so it is difficult to understand how they work before they are put to actual use. Their intended applications and design continue to evolve, and they are increasingly embedded in real-world systems. This situation stands in contrast to the large-scale systems of the past, such as the telephone system, which, because it was so regulated and standardized, did not have to address heterogeneity to the same degree as do today's large-scale IT systems.

The shortcomings in the current state of technology supporting social applications of IT are painfully evident. Engineers are building IT systems that venture beyond the state of knowledge, much as designers of the Tacoma Narrows bridge ventured too far into lightweight suspension bridge design.10 Today's news reports of system outages in electronic trading, Internet access, and telephony signal that users expect IT systems to have characteristics of reliability and availability that parallel those of physical infrastructures, such as roads, bridges, and power supplies. Aggravating the situation is the distribution of the underlying computing and networking infrastructure across multiple organizations, with components designed by multiple equipment and software vendors. A low level of coordination is possible, but the virtual distributed computing infrastructure cannot be designed or even “tuned” in the same way as earlier generations of computing infrastructure were, when everything was typically under the control of a single organization and its hand-picked vendors. As a result, today's infrastructure forms a rather shaky substrate for the distributed social applications of IT that depend on it.

One manifestation of this reality is vulnerability to various security threats, unpredictable emergent behaviors, and breakdowns. It is difficult to predict or control the performance of large-scale systems or the environment in which they operate. Challenges include constant change in both functional requirements and regulatory constraints, as well as in the underlying infrastructure itself, all of which have the effect of compounding the vulnerability and performance issues. The responses to these challenges are generally ad hoc and not based on any fundamental understanding that could inspire confidence in the methodologies and outcomes. Indeed, the many shortcomings and even failures of past efforts suggest that confidence would not be justified.

Many system problems have been evident for decades, but the broadening deployment of IT and growing dependence on it mean that the payoff from finally resolving them will be greater than ever. Enduring difficulties include achieving parallelism in systems, reusing system components, enhancing ease of use and trustworthiness, and supporting a larger scale. Some other difficulties are becoming more compelling: for example, achieving adaptability (the ability to evolve over time) or maintaining availability of use in the face of predictable and unpredictable problems.11 The need for progress on these problems has become acute.12 Major users of large-scale systems, networks, and applications are severely limited in their ability to develop new IT systems by high development costs, uncertain outcomes, and the need to maintain existing operations even though a new system could reduce operational costs, speed product innovation, and improve the services they provide to their customers.

The problems associated with large-scale systems are much the same as those associated with the large, monolithic systems created by organizations like the Federal Aviation Administration (FAA), the Internal Revenue Service (IRS), and large private companies. They could become characteristics of systems used for health care, education, manufacturing, and other social applications that have become widespread.13 The continued development of computing systems embedded in other devices and systems promises to exacerbate these problems. Microprocessors are being incorporated into an increasing array of devices, from automobile transmissions and coffeemakers to a range of electronic measuring devices, such as thermostats, pollution detectors, cameras, microphones, and medical devices (Business Week Online, 1999b). They are also entering a range of information appliances that can be used for playing music, reading electronic books, sending and reading e-mail, and browsing the Web. Such devices are expected to become more numerous than stand-alone computers and will be able to share information across the Internet and other information infrastructures. Some believe that, within 10 years, discrete microprocessors will be knitted together into ad hoc distributed computers whose terminals are laptops, cell phones, or handheld devices.

In considering the social applications of IT, two distinct but related categories of research are relevant. The first is systems research, which has long been pursued in the computing community but takes on added significance in light of the broadening range of functions supported by large-scale systems. In fact, the definition of systems research has changed. In the past, it meant primarily computer architecture, operating systems, and related computer science subjects. Research in these subjects continues, driven by the need for highly scalable systems, around-the-clock availability, and the like. However, the greatest impact of systems and opportunities for them transcend the technology per se: they lie in the social applications of IT. There is a huge demand for research in e-commerce systems and technologies, content management and analysis, community and collaboration systems, and so forth. There is a broad spectrum of social applications of IT, ranging from those that serve primarily a single user operating in a larger context (as in home banking applications that are used by a single user but connect into the bank's financial system) through those that serve groups of users in a time-limited activity, through those that serve the needs of organizations, through those that serve groups of organizations (in commerce or tax collection, for example), to those that serve individual users interacting with groups of organizations (as in online shopping). Systems research will aim at new levels of automation and integration of activities within and among organizations. It will couple IT more closely than ever to complex social and business processes, making IT more truly an information infrastructure. But such coupling also complicates the pursuit of success.

The second category of research focuses on IT embedded in a social context. This research addresses the technology itself, asking how IT can be changed to provide greater benefits to users and end-user organizations through social applications. This perspective is the opposite of the more conventional perspective on the impact of technology, which emphasizes how people must change to accommodate IT: the question is how technology can be changed to accommodate people and organizations.

One characteristic of IT is its extreme malleability. At the level of software and applications, there are few physical limits to be concerned about, and the inherent flexibility of the technology enables it to adjust rather unconstrainedly to application needs, at least in theory. There are, however, serious obstacles to carrying out such adjustments. These include the practical difficulties of changing existing approaches and infrastructure, known by economists as “path-dependent effects” and “lock-in.” But even if these economic obstacles could be overcome, there is simply a lack of fundamental understanding of how the technology could be made to better serve the social applications of IT. Furthermore, there are serious gaps in the methodologies for translating contextual application requirements into concrete architectures and specifications for a software implementation (as well as gaps in methods for modifying the context to take maximum advantage of IT). The implication is that trial and error is inevitable, as are false starts and dead ends.

Technical and organizational factors are intertwined, adding to the scientific and engineering challenges. For example, despite its leveraging of work on constrained optimization in the Gulf War, cited above, the United States experienced notable logistical failures in that war: some 40 percent of the sea containers that arrived dockside at the Saudi disembarkation points had to be physically opened and inspected for want of documentation of their contents and its disposition, and it took at least twice as long as the Department of Defense's worst-case projections to mobilize the necessary warfighting materiel in Saudi Arabia and other key points and nearly four times as long to get all that materiel to where it was supposed to go. These represent failures in information systems and reflect underinvestments in areas such as sealift, containerization, and logistics, none of which is as intriguing as, say, smart bombs. They underscore the importance of human and organizational factors in harnessing IT and demonstrate why more research needs to be done on the blend of factors. Although in practice IT has always been shaped by the environment in which it is used, IT research needs to focus more on the overall system and the operational context in which such systems will be used.

To date, IT research has not emphasized systems and applications to nearly the extent called for by the virtual explosion of sophisticated social applications of IT as a result of the Internet. This is not to say there has been a complete void. Indeed, there has been a substantial effort, but it appears to have been inadequate when weighed against the significant challenges and tremendous opportunities. There is a critical need for more fundamental understanding, given the rapidly expanding deployment of applications that support organizational missions and the interaction of many entities (such as businesses, universities, and government) as well as of society in general. This is an area in which opportunities abound for substantial advances in technology, with the goal of making applications much more effective and improving their development, administration, operation, and maintenance in terms of effectiveness, reliability, trustworthiness, and cost.

IMPLICATIONS FOR INFORMATION TECHNOLOGY RESEARCH

The challenges posed by large-scale IT systems and the social applications of IT can be addressed effectively only if the IT research base is expanded. Past research in IT has tended to focus on areas such as the following:

- Fundamental understanding of the limitations on computation and communications;

- Underlying technologies (such as integrated circuits), design techniques and tools (such as compilers), components (such as microprocessors), and computer systems (such as parallel and networked computers); and

- Applications and usability for isolated users, improvements in the human-computer interface, and applications that serve an isolated user.

This technology base must be carried forward; continuing substantial progress will be needed to support and maintain the progress in larger domains. As the importance of networking increases, research in associated challenges (such as distributed file systems and transaction and multicast protocols) is expanding. A number of engaging social applications (such as e-mail, newsgroups, chat rooms, multiplayer games, and remote learning) have emerged from the research community. As is true for other relatively immature suites of technologies, much of the research was begun in academia, and large segments of both the research agenda and its commercial applications have migrated to industry.

Past research in technology emphasized underlying technologies and components, in part because these were necessary ingredients for getting started and in part because it was challenging to obtain the functionality and performance required of even the most basic applications. Today, the challenge is almost the reverse. In the wake of several decades of exponential advances in the capabilities of both electronics and fiber optics, it can be argued that the technologies are beginning to outstrip society's understanding of how to use them effectively. Increasingly, the challenge can be stated as follows: Wonderful technologies are now in our grasp; how can they be put to good use? This is not to minimize either the importance or the challenges of advancing the core technologies, because such advances are critical to progress in applications built on these technologies. Rather, the point is that the existing research agenda needs to accommodate a major push into the uses of technology.

The idea of an expanded IT research agenda is not entirely new. A study committee convened by CSTB in the early 1990s observed both that intellectually substantive and challenging computing problems can and do arise in the context of problem domains outside computer science and engineering per se and that computing research can be framed within the discipline's own intellectual traditions in a manner that is directly applicable to other problem domains (CSTB, 1992). The committee viewed computing research as an engine of progress and conceptual change in other problem domains, even as these domains contribute to the identification of new areas of inquiry within computer science and engineering. Its recommendations sought to sustain the core effort in computer science and engineering (similar to “components” research as defined in this report) while simultaneously broadening the field to explore intellectual opportunities available at the intersection of computer science and engineering and other problem domains (see Box 1.4). Efforts over the past decade have shown both the virtue and the difficulty of including more application-inspired work under the umbrella of IT research, but the need for such work continues to outstrip efforts to produce it.

As the emphasis shifts from applications serving solitary users and single departments to those serving large groups of users, entire enterprises, and critical societal infrastructures, the issues related to this embedding become more interesting and challenging. In particular, research is needed in two areas in addition to IT components: (1) the technical

BOX 1.4 Echoes of CSTB's Computing the Future

In the early 1990s, the CSTB Committee to Assess the Scope and Direction of Computer Science and Technology was charged to assess how best to organize the conduct of research and teaching in computer science and engineering (CS&E) in the future. In considering appropriate responses, the committee formulated a set of priorities, the first two of which are relevant to this report:

Priority 1: Sustain the core effort in CS&E, i.e., the effort that creates the theoretical and experimental science base on which computing applications build. This core effort has been deep, rich, and intellectually productive and has been indispensable for its impact on practice in the last couple of decades.

Priority 2: Broaden the field. Given the solid CS&E core and the many intellectual opportunities available at the intersection of CS&E and other problem domains, the committee believes that academic CS&E is well positioned to broaden its self-concept. Such broadening will also result in new insights with wide applicability, thereby enriching the core. Furthermore, given the pressing economic and social needs of the nation and the changing environment for industry and academia, the committee believes academic CS&E must broaden its self-concept or risk becoming increasingly irrelevant to computing practice.

These priorities led the committee to offer a set of recommendations that, while linked to particular programs of the time, have continued relevance today and foreshadow the recommendations made in Chapter 5 of this report.

Recommendation 1: The High Performance Computing and Communications (HPCC) Program should be fully supported throughout the planned 5-year program. The HPCC program is of utmost importance for three reasons. The first is that high-performance computing and communications are essential to the nation's future economic strength and competitiveness, especially in light of the growing need and demand for ever more advanced computing tools in all sectors of society. The second reason is that the program is framed in the context of scientific and engineering grand challenges. Thus the program is a strong signal to the CS&E [computer science and engineering] community that good CS&E research can flourish in an application context and that the demand for interdisciplinary and applications-oriented CS&E research is on the rise. And finally, a fully funded HPCC Program will have a major impact on relieving the funding stress affecting the academic CS&E community. . . .

Recommendation 2: The federal government should initiate an effort to support interdisciplinary and applications-oriented CS&E research in academia that is related to the missions of the mission-oriented federal agencies and departments that are not now major participants in the HPCC [High Performance Computing and Communications] Program. Collectively, this effort would cost an additional $100 million per fiscal year in steady state above amounts currently planned. Many federal agencies are not currently participating in the HPCC Program, despite the utility of computing to their missions, and they should be brought into the program. Those agencies that support substantial research efforts, though not in CS&E, should support interdisciplinary CS&E research, i.e., CS&E research undertaken jointly with research in other fields. Problems in these other fields often include an important computational component whose effectiveness could be enhanced substantially by the active involvement of researchers working at the cutting edge of CS&E research. Those agencies that do not now support substantial research efforts of any kind, i.e., operationally oriented agencies, should consider supporting applications-oriented CS&E research because of the potential that the efficiency of their operations would be substantially improved by some research advance that could deliver a better technology for their purposes. Such research could also have considerable “spin-off” benefit to the private sector as well.

Recommendation 3: Academic CS&E should broaden its research horizons, embracing as legitimate and cogent not just research in core areas (where it has been and continues to be strong) but also research in problem domains that derive from nonroutine computer applications in other fields and areas or from technology-transfer activities. The academic CS&E community should regard as scholarship any activity that results in significant new knowledge and demonstrable intellectual achievement, without regard for whether that activity is related to a particular application or whether it falls into the traditional categories of basic research, applied research, or development. Chapter 5 describes appropriate actions to implement this recommendation.

Recommendation 4: Universities should support CS&E as a laboratory discipline (i.e., one with both theoretical and experimental components). CS&E departments need adequate research and teaching laboratory space, staff support (e.g., technicians, programmers, staff scientists); funding for hardware and software acquisition, maintenance, and upgrade (especially important on systems that retain their cutting edge for just a few years); and network connections. New faculty should be capitalized at levels comparable to those in other scientific or engineering disciplines.

SOURCE: Excerpts from Computer Science and Telecommunications Board (1992), pp. 5-8.

challenges, such as trustworthiness, scalability, and location transparency, associated with large-scale systems and (2) challenges that surround the molding of embedded IT within its application context, that is, within the social applications of IT. The second challenge can be considered only partly a technical one, arising as it does from the context of IT. These are difficult problems to characterize, let alone solve.

An analogy to the health sciences may be helpful in understanding the relationship between the more traditional components-oriented research and the additional work that is needed on large-scale systems and on the social applications of IT. One way to classify the health sciences is to divide them into three related and overlapping sets of disciplines, each with its own research base:

- The biological sciences, which examine the basic scientific processes that underlie much of the progress in medicine;

- Physiology, anatomy, and pathology, which focus on understanding how biological systems, such as organisms composed of interacting organs, work and how their components can be manipulated to provide medical benefits; and

- Clinical medicine and epidemiology. The former focuses on treating human diseases, drawing on the other disciplines but also working closely and empirically with patients; the latter focuses on the relationship between environments and health.

Pursuing this analogy, the component- and technology-oriented research that has so far dominated IT research is similar to research in the biological sciences: it develops the basic understanding and techniques that are invaluable in constructing working systems. Research related to large-scale systems that use IT is analogous to physiology: it attempts to understand the interactions among components and the development of systems. Interdisciplinary research involving the social sciences, business, and law, in collaboration with technologists and addressing many of the social and organizational challenges posed by the social applications of IT, is analogous to medicine and epidemiology. This research is focused on applications and is justified by the direct impact it can have on them and society as a whole.

As this report argues, research in components, systems, and applications is needed to ensure the development of fundamental understanding that will allow IT systems to evolve to meet society's growing needs. This is not research directed at finding a more effective way to use IT in a narrow application domain; rather, it is research directed at revolutionizing the understanding of how distributed computing environments with decentralized design and operations can offer predictable, reproducible performance and capability and controlled vulnerability. It is also directed at revolutionizing the ability to accommodate rapid change in both the context and requirements of the infrastructure and applications. It is directed at fundamentally revamping methodologies for capturing application requirements and transforming them into working applications. It is directed at revolutionizing the organizational processes and group dynamics that form the application context in ways that can most effectively leverage IT. This is long-term research that is extremely challenging.

IMPLICATIONS FOR THE RESEARCH ENTERPRISE

The trends in IT suggest that the nation needs to reinvent IT research and develop new structures to support, conduct, and manage it. The history of U.S. support for IT research established enduring principles for research policy: support for long-term fundamental research; support for the development of large systems that bring together researchers from different disciplines and institutions to work on common problems; and work that builds on innovations pioneered in industrial laboratories (CSTB, 1999a).

As IT permeates many more real-world applications, additional constituencies need to be brought into the research process as both funders and performers of IT research. This is necessary not only to broaden the funding base to include those who directly benefit from the fruits of the research, but also to obtain input and guidance. An understanding of business practices and processes is needed to support the evolution of e-commerce; insight from the social sciences is needed to build IT systems that are truly user-friendly and that help people work better together. No one truly understands where new applications such as e-commerce, electronic publishing, or electronic collaboration are headed, but business development and research together can promote their arrival at desirable destinations.

Many challenges will require the participation and insight of the end user and the service provider communities. They have a large stake in seeing these problems addressed, and they stand to benefit most directly from the solutions. Similarly, systems integrators would benefit from an improved understanding of systems and applications because they would become more competitive in the marketplace and be better able to meet their estimates of project cost and time. Unlike vendors of component technologies, systems integrators and end users deal with entire information systems and therefore have unique perspectives on the problems encountered in developing systems and the feasibility of proposed solutions. Many of the end-user organizations, however, have no tradition of conducting IT research (or technological research of any kind, in fact), and they are not necessarily capable of doing so effectively; they depend on vendors for their technology. Even so, their involvement in the research process is critical. Vendors of equipment and software have neither the requisite experience and expertise nor the financial incentives to invest heavily in research on the challenges facing end-user organizations, especially the challenges associated with the social applications of IT.14 Of course, they listen to their customers as they refine their products and strategies, but those interactions are superficial compared with the demands of the new systems and applications. Finding suitable mechanisms for the participation of end users and service providers, and engaging them productively, will be a big challenge for the future of IT research.

Past attempts at public-private partnerships, as in the emerging arena of critical infrastructure protection, show that it is not easy to get the public and private sectors to interact for the purpose of improving the research base and implementation of systems. The federal government has a responsibility to address the public interest in critical infrastructure, whereas the private sector owns and develops that infrastructure, and conflicting objectives and time horizons have confounded joint exploration. As a user of IT, the government could play an important role. Whereas historically it had limited and often separate programs to support research and acquire systems for its own use, the government is now becoming a consumer of IT on a very large scale. Just as IT and the widespread access to it provided by the Web have enabled businesses to reinvent themselves, IT could dramatically improve operations and reduce the costs of applications in public health, air traffic control, and social security; government agencies, like private-sector organizations, are turning increasingly to commercial, off-the-shelf technology.

Universities will play a critical role in expanding the IT research agenda. The university setting continues to be the most hospitable for higher-risk research projects in which the outcomes are very uncertain. Universities can play an important role in establishing new research programs for large-scale systems and social applications, assuming that they can overcome long-standing institutional and cultural barriers to the needed cross-disciplinary research. Preserving the university as a base for research and the education that goes with it would ensure a workforce capable of designing, developing, and operating increasingly sophisticated IT systems. A booming IT marketplace and the lure of large salaries in industry heighten the impact of federal funding decisions on the individual decisions that shape the university environment: as the key funders of university research, federal programs send important signals to faculty and students.

The current concerns in IT differ from the competitiveness concerns of the 1980s: the all-pervasiveness of IT in everyday life raises new questions of how to get from here to there—how to realize the exciting possibilities, not merely how to get there first. A vital and relevant IT research program is more important than ever, given the complexity of the issues at hand and the need to provide solid underpinnings for the rapidly changing IT marketplace.

ORGANIZATION OF THIS REPORT

The remainder of this report elaborates on the themes introduced in this chapter. Chapter 2 examines trends in support for IT research by government, industry, and universities. It reviews statistics on funding for R&D and describes the linkages between existing funding patterns and the trends outlined above. Chapter 3 examines challenges in large-scale systems research that are not new but that have taken on renewed importance as social applications of computing and communications emerge. It identifies existing shortfalls in the research community's understanding of large-scale systems and suggests avenues for further investigation. Chapter 4 examines IT systems that are embedded in a social context, outlining the research problems that result from the integration of IT into a range of social applications and discussing the value of interdisciplinary research in this area. It identifies areas in which interdisciplinary research drawing on the social sciences is needed to complement the strictly technical research being conducted by IT researchers; outlines different mechanisms for pursuing an expanded IT research agenda that considers systems and applications as well as components; and discusses ways in which government, industry, and universities need to alter their organization and management of IT research to ensure that mechanisms exist for conducting the kinds of research that will be needed for the future. Chapter 5 presents the committee's recommendations for strengthening the resource base in IT. It outlines actions that government, universities, and industry should take to advance a broader research agenda for IT.

BIBLIOGRAPHY

Buderi, Robert. 1998. “Bell Labs Is Dead: Long Live Bell Labs,” Technology Review 101(5):50-57.

Business Week Online. 1999a. “Q&A: An Internet Pioneer Moves Toward Nomadic Computing,” August 30.

Business Week Online. 1999b. “The Earth Will Don an Electronic Skin,” August 30.

Carey, John. 1997. “What Price Science?” Business Week, May 26. Available online at <www.businessweek.com/1997/21/b3528136.htm>.

Committee for Economic Development (CED). 1998. America's Basic Research: Prosperity Through Discovery. Committee for Economic Development, New York.

Computer Science and Telecommunications Board (CSTB), National Research Council. 1992. Computing the Future. National Academy Press, Washington, D.C.

Computer Science and Telecommunications Board (CSTB), National Research Council. 1994. Realizing the Information Future: The Internet and Beyond. National Academy Press, Washington, D.C.

Computer Science and Telecommunications Board (CSTB), National Research Council. 1995. Evolving the High-Performance Computing and Communications Initiative to Support the Nation's Information Infrastructure. National Academy Press, Washington, D.C.

Computer Science and Telecommunications Board (CSTB), National Research Council. 1997. More Than Screen Deep. National Academy Press, Washington, D.C.

Computer Science and Telecommunications Board (CSTB), National Research Council. 1999a. Funding a Revolution: Government Support for Computing Research. National Academy Press, Washington, D.C.

Computer Science and Telecommunications Board (CSTB), National Research Council. 1999b. Trust in Cyberspace. National Academy Press, Washington, D.C.

Council on Competitiveness. 1996. Endless Frontier, Limited Resources: U.S. R&D Policy for Competitiveness. Council on Competitiveness, Washington, D.C., April.

Council on Competitiveness. 1998. Going Global: The New Shape of American Innovation. Council on Competitiveness, Washington, D.C., September.

Critical Infrastructure Assurance Office (CIAO). 2000a. Defending America's Cyberspace: National Plan for Information Systems Protection, Version 1.0, An Invitation to a Dialogue. The White House, Washington, D.C., January. Available online at <http://www.ciao.gov/National_Plan/national_plan%20_final.pdf>.

Critical Infrastructure Assurance Office (CIAO). 2000b. Practices for Securing Critical Information Assets. CIAO, Washington, D.C., January. Available online at <http://www.ciao.gov/CIAO_Document_Library/Practices_For_Securing_Critical_Information_Assets.pdf>.

David, Paul. 1990. “The Dynamo and the Computer: An Historical Perspective on the Modern Productivity Paradox,” The American Economic Review 80(2):355-361, May.

Gray, Jim. 1999. What's Next: A Dozen Information-Technology Research Goals. Technical report MS-TR-99-50, Microsoft Research, Redmond, Washington, June. Available online at <http://www.research.microsoft.com/~Gray/>.

Leibovich, Mark. 1999. “Microsoft Skims Off Academia's Best for Research Center,” Washington Post, April 5, p. A1.

President's Commission on Critical Infrastructure Protection (PCCIP). 1997. Critical Foundations: Protecting America's Infrastructures, Washington, D.C., October. Available online at <http://www.ciao.gov/CIAO_Document_Library/PCCIP_Report.pdf>.

President's Information Technology Advisory Committee (PITAC). 1999. Information Technology Research: Investing in Our Future. National Coordination Office for Computing, Information, and Communications, Arlington, Va., February. Available online at <http://www.ccic.gov/ac/report/>.

Schatz, Bruce R. 1997. “Information Retrieval in Digital Libraries: Bringing Search to the Net,” Science 275(5298):327-334, January 17.

Schatz, Bruce R., and Joseph B. Hardin. 1994. “NCSA Mosaic and the World Wide Web: Global hypermedia protocols for the Internet,” Science 265(5174):895-901, August 12.

Stokes, Donald E. 1997. Pasteur's Quadrant: Basic Science and Technological Innovation. Brookings Institution Press, Washington, D.C.

Uchitelle, Louis. 1996. “Basic Research Is Losing Out As Companies Stress Results,” New York Times, pp. A1 and D6, October 8.

U.S. Department of Commerce, Economics and Statistics Administration. 2000. Digital Economy 2000. U.S. Department of Commerce, Washington, D.C., June.

Webre, Philip. 1999. “Current Investments in Innovation,” Congressional Budget Office, Washington, D.C., April.

Ziegler, Bart. 1997. “Lab Experiment: Gerstner Slashed R&D by $1 Billion: For IBM, It May Be a Good Thing, Latest Breakthrough Shows,” Wall Street Journal, October 6, p. A1.

NOTES

1. For a discussion of the influence that debates over federal research policy had on the High Performance Computing and Communications Initiative (HPCCI), see CSTB (1995).

2. The recommendations of PITAC regarding federal funding for IT research can be found in PITAC (1999). Additional information on the Clinton Administration's IT2 initiative is available online at <http://www.ccic.gov/it2/>.

3. Numerous press accounts from the mid- and late 1990s reported on the reorientation of research labs in the IT industry and resulting concerns about long-term, fundamental research. See, for example, Uchitelle (1996), Carey (1997), Ziegler (1997), and Buderi (1998).

4. A new CSTB study on the fundamentals of computer science will emphasize the science aspects, including the interaction of computer science with other sciences. Additional information on this project is available on the CSTB Web site at <www.cstb.org>.

5. Microsoft, in particular, has been noted for tapping top academic talent for its research lab. See Leibovich (1999).

6. The Government Performance and Results Act of 1993, for example, called for explicit attempts to measure the results of government programs, including research programs. The act therefore added to the pressure for near-term and mission-oriented work.

7. In scientific computing, IT is used to model, emulate, and simulate various scientific and technical processes. Scientific computing has received considerable attention from the IT research community from the earliest days, for several reasons. First, IT researchers are technically oriented and can appreciate and understand the issues related to scientific computing. Second, scientific computing has been critical to the scientific and engineering enterprise and to major government programs such as nuclear energy and weapons and the military and so has been admirably supported by the government. Scientific computing continues to be important to the future of computing, science, many fields of engineering, and the military enterprise and should not be deemphasized.

Because it has been strongly supported by funding agencies and the research community, scientific computing is an inspirational example of the interrelationship and synergy between application and technology. Scientific computing applications have been a major driver of high-performance computing technologies and parallel programming techniques. In turn, scientific computing has influenced engineering and science, providing not only substantial benefits but also approaches to problem solving —such as the characteristics of numerical algorithms that yield to parallelism. The very methodologies of science have been substantially affected in ways that make scientific progress more rapid as well as cost effective. Scientific computing has benefited greatly from its long-term association with the research environment.

Scientific computing also demonstrates the importance of long-term and fundamental research on technology in application contexts. There are fundamental gaps in understanding and unexploited opportunities that can be addressed by taking a long-term perspective, one that is not generally pursued by industrial organizations with their more short-term profit motivation. Much of the benefit of research outcomes will accrue not to individual private firms but to industry, government, and society in general. The government is a major user and developer of scientific computing applications and, in many ways, its challenges are much greater than those of the private sector. If government-funded research in any facet of technology is justified, then surely research in the more effective application of technology to the needs of government and its citizens is even more strongly justified.

8. As recalled recently by Leonard Kleinrock: “In the early days of ARPA [the Advanced Research Projects Agency], even when we had only three machines, we were able to uncover logical errors in the routing protocol, or the flow-control protocol, that would cause network failures. Those errors are hard to detect ahead of time, especially as the systems get more complex. It's easy to detect the cause of a massive crash or degradation. But complex systems have latent failure modes that we have not yet excited. There is always potential for deadlocks, crashes, degradations, errant behavior. . . . As systems get more complex, they crash in incredible and glorious ways. What you want to have is a self-repairing mode [so that if] one part fails, other parts continue to function” (Business Week Online, 1999a).

9. A considerable economics literature exists on the increasing complexity of technologies as they evolve over time. See, for example, David (1990).

10. The first Tacoma Narrows suspension bridge, near the city of Tacoma, Washington, collapsed as a result of wind-induced vibrations on November 7, 1940, just 4 months after it was opened to traffic. The collapse was attributed to the bridge's structure, which caught the wind instead of letting it pass through. A windstorm caused the bridge to undergo a series of undulations, which were caught on film before its collapse, earning the bridge the nickname “Galloping Gertie.” Video footage of the bridge's collapse is available online at <http://mecad.uta.edu/~bpwang/me5311/1999/lecture1/intro25.htm>.

11. This material derives from a set of briefing slides entitled “Computer Systems Research: Past and Present” prepared by Butler Lampson from Microsoft Research. The slides are available online at <http://www.research.microsoft.com/lampson/Slides/ComputerSystemsResearchAbstract.htm>.