9

Technology to Support Learning

Attempts to use computer technologies to enhance learning began with the efforts of pioneers such as Atkinson and Suppes (e.g., Atkinson, 1968; Suppes and Morningstar, 1968). The presence of computer technology in schools has increased dramatically since that time, and predictions are that this trend will continue to accelerate (U.S. Department of Education, 1994). The romanticized view of technology is that its mere presence in schools will enhance student learning and achievement. In contrast is the view that money spent on technology, and time spent by students using technology, are money and time wasted (see Education Policy Network, 1997). Several groups have reviewed the literature on technology and learning and concluded that it has great potential to enhance student achievement and teacher learning, but only if it is used appropriately (e.g., Cognition and Technology Group at Vanderbilt, 1996; President’s Committee of Advisors on Science and Technology, 1997; Dede, 1998).

What is now known about learning provides important guidelines for uses of technology that can help students and teachers develop the competencies needed for the twenty-first century. The new technologies provide opportunities for creating learning environments that extend the possibilities of “old” but still useful technologies (books; blackboards; and linear, one-way communication media, such as radio and television shows) as well as offering new possibilities. Technologies do not guarantee effective learning, however. Inappropriate uses of technology can hinder learning, as when students spend most of their time picking fonts and colors for multimedia reports instead of planning, writing, and revising their ideas. And everyone knows how much time students can waste surfing the Internet. Yet many aspects of technology make it easier to create environments that fit the principles of learning discussed throughout this volume.

Because many new technologies are interactive (Greenfield and Cocking, 1996), it is now easier to create environments in which students can learn by doing, receive feedback, and continually refine their understanding and build new knowledge (Barron et al., 1998; Bereiter and Scardamalia, 1993; Hmelo and Williams, 1998; Kafai, 1995; Schwartz et al., 1999). The new technologies can also help people visualize difficult-to-understand concepts, such as differentiating heat from temperature (Linn et al., 1996). Students can work with visualization and modeling software that is similar to the tools used in nonschool environments, increasing their understanding and the likelihood of transfer from school to nonschool settings (see Chapter 3). These technologies also provide access to a vast array of information, including digital libraries, data for analysis, and other people who provide information, feedback, and inspiration. They can enhance the learning of teachers and administrators, as well as that of students, and increase connections between schools and the communities, including homes.

In this chapter we explore how new technologies can be used in five ways:

- bringing exciting curricula based on real-world problems into the classroom;

- providing scaffolds and tools to enhance learning;

- giving students and teachers more opportunities for feedback, reflection, and revision;

- building local and global communities that include teachers, administrators, students, parents, practicing scientists, and other interested people; and

- expanding opportunities for teacher learning.

NEW CURRICULA

An important use of technology is its capacity to create new opportunities for curriculum and instruction by bringing real-world problems into the classroom for students to explore and solve; see Box 9.1. Technology can help to create an active environment in which students not only solve problems, but also find their own problems. This approach to learning is very different from that of typical school classrooms, in which students spend most of their time learning facts from a lecture or text and doing the problems at the end of the chapter.

Learning through real-world contexts is not a new idea. For a long time, schools have made sporadic efforts to give students concrete experiences through field trips, laboratories, and work-study programs. But these activities have seldom been at the heart of academic instruction, and they have not been easily incorporated into schools because of logistical constraints and the amount of subject material to be covered. Technology offers powerful tools for addressing these constraints, from video-based problems and computer simulations to electronic communications systems that connect classrooms with communities of practitioners in science, mathematics, and other fields (Barron et al., 1995).

|

BOX 9.1 Bringing Real-World Problems to Classrooms Children in a Tennessee middle-school math class have just seen a video adventure from the Jasper Woodbury series about how architects work to solve community problems, such as designing safe places for children to play. The video ends with this challenge to the class to design a neighborhood playground: Narrator: Trenton Sand and Lumber is donating 32 cubic feet of sand for the sandbox and is sending over the wood and fine gravel. Christina and Marcus just have to let them know exactly how much they’ll need. Lee’s Fence Company is donating 280 feet of fence. Rodriguez Hardware is contributing a sliding surface, which they’ll cut to any length, and swings for physically challenged children. The employees of Rodriguez want to get involved, so they’re going to put up the fence and help build the playground equipment. And Christina and Marcus are getting their first jobs as architects, starting the same place Gloria did 20 years ago, designing a playground. Students in the classroom help Christina and Marcus by designing swingsets, slides, and sandboxes, and then building models of their playground. As they work through this problem, they confront various issues of arithmetic, geometry, measurement, and other subjects. How do you draw to scale? How do you measure angles? How much pea gravel do we need? What are the safety requirements? Assessments of students’ learning showed impressive gains in their understanding of these and other geometry concepts (e.g., Cognition and Technology Group at Vanderbilt, 1997). In addition, students improved their abilities to work with one another and to communicate their design ideas to real audiences (often composed of interested adults). One year after engaging in these activities, students remembered them vividly and talked about them with pride (e.g., Barron et al., 1998). |

A number of video- and computer-based learning programs are now in use, with many different purposes. The Voyage of the Mimi, developed by Bank Street College, was one of the earliest attempts to use video and computer technology to introduce students to real-life problems (e.g., Char and Hawkins, 1987): students “go to sea” and solve problems in the context of learning about whales and the Mayan culture of the Yucatan. More recent series include the Jasper Woodbury Problem Solving Series (Cognition and Technology Group at Vanderbilt, 1997), 12 interactive video environments that present students with challenges that require them to understand and apply important concepts in mathematics; see the example in Box 9.2. Students who work with the series have shown gains in mathematical problem solving, communication abilities, and attitudes toward mathematics (e.g., Barron et al., 1998; Crews et al., 1997; Cognition and Technology Group at Vanderbilt, 1992, 1993, 1994, 1997; Vye et al., 1998).

New learning programs are not restricted to mathematics and science. Problem-solving environments have also been developed that help students better understand workplaces. For example, in a banking simulation, students assume roles, such as the vice president of a bank, and learn about the knowledge and skills needed to perform various duties (Classroom Inc., 1996).

The interactivity of these technology environments is a very important feature for learning. Interactivity makes it easy for students to revisit specific parts of the environments to explore them more fully, to test ideas, and to receive feedback. Noninteractive environments, like linear videotapes, are much less effective for creating contexts that students can explore and reexamine, both individually and collaboratively.

Another way to bring real-world problems into the classroom is by connecting students with working scientists (Cohen, 1997). In many of these student-scientist partnerships, students collect data that are used to understand global issues; a growing number of them involve students from geographically dispersed schools who interact through the Internet. For example, Global Lab supports an international community of student researchers from more than 200 schools in 30 countries who construct new knowledge about their local and global environments (Tinker and Berenfeld, 1993, 1994). Global Lab classrooms select aspects of their local environments to study. Using shared tools, curricula, and methodologies, students map, describe, and monitor their sites, collect and share data, and situate their local findings into a broader, global context. After participating in a set of 15 skill-building activities during their first semester, Global Lab students begin advanced research studies in such areas as air and water pollution, background radiation, biodiversity, and ozone depletion. The global perspective helps learners identify environmental phenomena that can be observed around the world, including a decrease in tropospheric ozone levels in places where vegetation is abundant, a dramatic rise of indoor carbon dioxide levels by the end of the school day, and the substantial accumulation of nitrates in certain vegetables. Once participants see significant patterns in their data, this “telecollaborative” community of students, teachers, and scientists tackles the most rigorous aspects of science—designing experiments, conducting peer reviews, and publishing their findings.

|

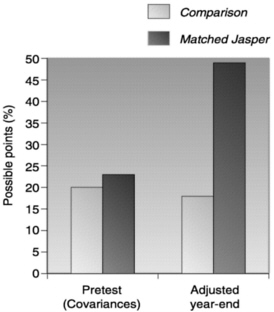

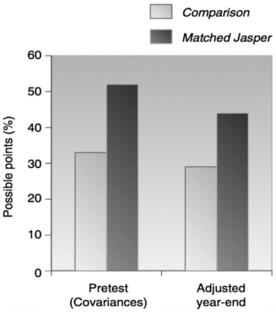

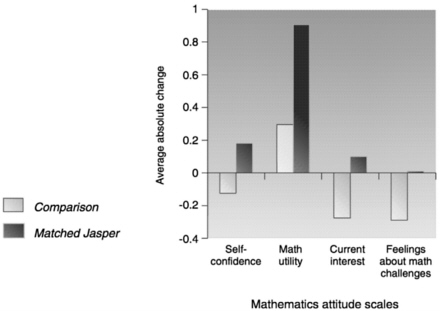

BOX 9.2 Problem Solving and Attitudes Students in classrooms in nine states received opportunities to solve four Jasper adventures distributed throughout the year. The average total time spent solving Jasper adventures ranged from 3 to 4 weeks. The students were compared with non-Jasper comparison classes on standardized mathematics test scores, problems requiring complex problem solving, and attitudes toward mathematics and complex challenges. With no losses in standardized test scores, both boys and girls in the Jasper classrooms showed better complex problem solving and had more positive attitudes toward mathematics and complex challenges (see Cognition and Technology Group at Vanderbilt, 1992; Pellegrino et al., 1991). The graphs show scores for Jasper and comparison students on questions that asked them to (a) identify the key data and steps needed to solve complex problems, (b) evaluate possible solutions to these problems, and (c) indicate their self-confidence with respect to mathematics, their belief in the utility of mathematics, their current interest in mathematics, and their feelings about complex math challenges. Figure 9.1 shows positive attitude changes from the beginning to the end of the school year for students in the interactive video challenge series, with negative changes falling below the midline of the graph, as shown for most of the students in the comparison groups. Figures 9.2 and 9.3 show gains in Jasper students’ planning skills and comprehension on the problem-solving challenges. Clearly, the interactive video materials had positive effects on children’s problem solving and comprehension. FIGURE 9.1 Changes in attitude. |

Similar approaches have been used in astronomy, ornithology, language arts, and other fields (Bonney and Dhondt, 1997; Riel, 1992; University of California Regents, 1997). These collaborative experiences help students understand complex systems and concepts, such as multiple causes and interactions among different variables. Since the ultimate goal of education is to prepare students to become competent adults and lifelong learners, there is a strong argument for electronically linking students not just with their peers, but also with practicing professionals. Increasingly scientists and other professionals are establishing electronic “collaboratories” (Lederberg and Uncapher, 1989), through which they define and conduct their work (e.g., Finholt and Sproull, 1990; Galegher et al., 1990). This trend provides both a justification and a medium for establishing virtual communities for learning purposes.

Through Project GLOBE (Global Learning and Observations to Benefit the Environment), thousands of students in grades kindergarten through 12 (K–12) from over 2,000 schools in more than 34 countries are gathering data about their local environments (Lawless and Coppola, 1996). Students collect data in five different earth science areas, including atmosphere, hydrology, and land cover, using protocols specified by principal investigators from major research institutions. Students submit their data through the Internet to a GLOBE data archive, which both the scientists and the students use to perform their analyses. A set of visualization tools provided on the GLOBE World Wide Web site enables students to see how their own data fit with those collected elsewhere. Students in GLOBE classrooms demonstrate higher knowledge and skill levels on assessments of environmental science methods and data interpretation than their peers who have not participated in the program (Means et al., 1997).

Emerging technologies and new ideas about teaching are being combined to reshape precollege science education in the Learning Through Collaborative Visualization (CoVis) Project (Pea, 1993a; Pea et al., 1997). Over wideband networks, middle and high school students from more than 40 schools collaborate with other students at remote locations. Thousands of participating students study atmospheric and environmental sciences, including topics in meteorology and climatology, through project-based activities. Through these networks, students also communicate with “telementors” (university researchers and other experts). Using scientific visualization software, specially modified for learning, students have access to the same research tools and datasets that scientists use.

In one 5-week activity, “Student Conference on Global Warming,” supported by curriculum units, learner-centered scientific visualization tools and data, and assessment rubrics available through the CoVis GeoSciences web server, students across schools and states evaluate the evidence for global warming and consider possible trends and consequences (Gordin et al., 1996). Learners are first acquainted with natural variation in climatic temperature, human-caused increases in atmospheric carbon dioxide, and uses of spreadsheets and scientific visualization tools for inquiry. These staging activities specify themes for open-ended collaborative learning projects to follow. A general framework lays out typical questions and data useful for investigating the potential impact of global warming on a country, or a country’s potential impact on global warming; within it, students specialize by selecting a country, its specific data, and the particular issue for their project focus (e.g., rise in carbon-dioxide emissions due to recent growth, deforestation, flooding due to rising sea levels). Students then investigate either a global issue or the point of view of a single country. The results of their investigations are shared in project reports within and across schools, and participants consider current results of international policy in light of their project findings.

Working with practitioners and distant peers on projects with meaning beyond the school classroom is a great motivator for K–12 students. Students are not only enthusiastic about what they are doing, they also produce some impressive intellectual achievements when they can interact with meteorologists, geologists, astronomers, teachers, or computer scientists (Means et al., 1996; O’Neill et al., 1996; O’Neill, 1996; Wagner, 1996).

SCAFFOLDS AND TOOLS

Many technologies function as scaffolds and tools to help students solve problems. This was foreseen long ago: in a prescient 1945 essay in the Atlantic Monthly, Vannevar Bush, science adviser to President Roosevelt, depicted the computer as a general-purpose symbolic system that could serve clerical and other supportive research functions in the sciences, in work, and for learning, thus freeing the human mind to pursue its creative capacities.

In the first generation of computer-based technologies for classroom use, this tool function took the rather elementary form of electronic “flashcards” that students used to practice discrete skills. As applications have spilled over from other sectors of society, computer-based learning tools have become more sophisticated (Atkinson, 1968; Suppes and Morningstar, 1968). They now include calculators, spreadsheets, graphing programs, function probes (e.g., Roschelle and Kaput, 1996), “mathematical supposers” for making and checking conjectures (e.g., Schwartz, 1994), and modeling programs for creating and testing models of complex phenomena (Jackson et al., 1996). In the Middle School Mathematics Through Applications Projects (MMAP), developed at the Institute for Research on Learning, innovative software tools are used for exploring concepts in algebra through such problems as designing insulation for arctic dwellings (Goldman and Moschkovich, 1995). In the Little Planet Literacy Series, computer software helps to move students through the phases of becoming better writers (Cognition and Technology Group at Vanderbilt, 1998a, b). Engaging video-based adventures encourage kindergarten, first-, and second-grade students to write books to solve challenges posed at the end of the adventures. In one of the challenges, students need to write a book in order to save the creatures on the Little Planet from falling prey to the wiles of an evil character named Wongo.

The challenge for education is to design technologies for learning that draw both from knowledge about human cognition and from practical applications of how technology can facilitate complex tasks in the workplace. These designs use technologies to scaffold thinking and activity, much as training wheels allow young bike riders to practice cycling when they would fall without support. Like training wheels, computer scaffolding enables learners to do more advanced activities and to engage in more advanced thinking and problem solving than they could without such help. Cognitive technologies were first used to help students learn mathematics (Pea, 1985) and writing (Pea and Kurland, 1987); a decade later, a multitude of projects use cognitive scaffolds to promote complex thinking, design, and learning in the sciences, mathematics, and writing.

The Belvedere system, for example, is designed to teach science-related public policy issues to high school students who lack deep knowledge of many science domains, have difficulty zeroing in on the key issues in a complex scientific debate, and have trouble recognizing abstract relationships that are implicit in scientific theories and arguments (Suthers et al., 1995). Belvedere uses graphics with specialized boxes to represent different types of relationships among ideas that provide scaffolding to support students’ reasoning about science-related issues. As students use boxes and links within Belvedere to represent their understanding of an issue, an online adviser gives hints to help them improve the coverage, consistency, and evidence for their arguments (Paolucci et al., 1996).

Scaffolded experiences can be structured in different ways. Some research educators advocate an apprenticeship model, whereby an expert practitioner first models the activity while the learner observes, then scaffolds the learner (with advice and examples), then guides the learner in practice, and gradually tapers off support and guidance until the apprentice can do it alone (Collins et al., 1989). Others argue that the goal of enabling a solo approach is unrealistic and overrestrictive since adults often need to use tools or other people to accomplish their work (Pea, 1993b; Resnick, 1987). Some even contend that well-designed technological tools that support complex activities create a truly human-machine symbiosis and may reorganize components of human activity into different structures than they had in pretechnological designs (Pea, 1985). Although there are varying views on the exact goals and on how to assess the benefits of scaffolding technologies, there is agreement that the new tools make it possible for people to perform and learn in far more complex ways than ever before.

In many fields, experts are using new technologies to represent data in new ways—for example, as three-dimensional virtual models of the surface of Venus or of a molecular structure, either of which can be electronically created and viewed from any angle. Geographical information systems, to take another example, use color scales to visually represent such variables as temperature or rainfall on a map. With these tools, scientists can discern patterns more quickly and detect relationships not previously noticed (e.g., Brodie et al., 1992; Kaufmann and Smarr, 1993).

Some scholars assert that simulations and computer-based models are the most powerful resources for the advancement and application of mathematics and science since the origins of mathematical modeling during the Renaissance (Glass and Mackey, 1988; Haken, 1981). The move from a static model in an inert medium, like a drawing, to dynamic models in interactive media that provide visualization and analytic tools is profoundly changing the nature of inquiry in mathematics and science. Students can visualize alternative interpretations as they build models that can be rotated in ways that introduce different perspectives on the problems. These changes affect the kinds of phenomena that can be considered and the nature of argumentation and acceptable evidence (Bachelard, 1984; Holland, 1995).

The same kinds of computer-based visualization and analysis tools that scientists use to detect patterns and understand data are now being adapted for student use. With probes attached to microcomputers, for example, students can do real-time graphing of such variables as acceleration, light, and sound (Friedler et al., 1990; Linn, 1991; Nemirovsky et al., 1995; Thornton and Sokoloff, 1998). The ability of the human mind to quickly process and remember visual information suggests that concrete graphics and other visual representations of information can help people learn (Gordin and Pea, 1995), as well as help scientists in their work (Miller, 1986).

A variety of scientific visualization environments for precollege students and teachers have been developed by the CoVis Project (Pea, 1993a; Pea et al., 1997). Classrooms can collect and analyze real-time weather data (Fishman and D’Amico, 1994; University of Illinois, Urbana-Champaign, 1997) or 25 years of Northern Hemisphere climate data (Gordin et al., 1994). Or they can investigate the global greenhouse effect (Gordin et al., 1996). As described above, students with new technological tools can communicate across a network, work with datasets, develop scientific models, and conduct collaborative investigations into meaningful science issues.

Since the late 1980s, cognitive scientists, educators, and technologists have suggested that learners might develop a deeper understanding of phenomena in the physical and social worlds if they could build and manipulate models of these phenomena (e.g., Roberts and Barclay, 1988). These speculations are now being tested in classrooms with technology-based modeling tools. For example, the STELLA modeling environment, which grew out of research on systems dynamics at the Massachusetts Institute of Technology (Forrester, 1991), has been widely used for instruction at both the undergraduate and precollege level, in fields as diverse as population ecology and history (Clauset et al., 1987; Coon, 1988; Mintz, 1993; Steed, 1992; Mandinach, 1989; Mandinach et al., 1988).

The educational software and exploration and discovery activities developed for the GenScope Project use simulations to teach core topics in genetics as part of precollege biology. The simulations move students through a hierarchy of six key genetic concepts: DNA, cell, chromosome, organism, pedigree, and population (Neumann and Horwitz, 1997). GenScope also uses an innovative hypermodel that allows students to retrieve real-world data to build models of the underlying physical process. Evaluations of the program among high school students in urban Boston found that students not only were enthusiastic about learning this complex subject, but had also made significant conceptual developments.

Students are using interactive computer microworlds to study force and motion in the Newtonian world of mechanics (Hestenes, 1992; White, 1993). Through these microworlds, learners acquire hands-on and minds-on experience and, thus, a deeper understanding of science. Sixth graders who use computer-based learning tools develop a better conceptual understanding of acceleration and velocity than many 12th-grade physics students (White, 1993); see Box 9.3. In another project, middle school students employ easy-to-use computer-based tools (Model-It) to build qualitative models of systems, such as the water quality and algae levels in a local stream. Students can insert data they have collected into the model, observe outcomes, and generate “what-if” scenarios to get a better understanding of the interrelationships among key variables (Jackson et al., 1996).

In general, technology-based tools can enhance student performance when they are integrated into the curriculum and used in accordance with knowledge about learning (e.g., see especially White and Frederiksen, 1998). But the mere existence of these tools in the classroom provides no guarantee that student learning will improve; they have to be part of a coherent education approach.

FEEDBACK, REFLECTION, AND REVISION

Technology can make it easier for teachers to give students feedback about their thinking and for students to revise their work. Initially, teachers working with the Jasper Woodbury playground adventure (described above) had trouble finding time to give students feedback about their playground

|

BOX9.3 The Use of ThinkerTools in Physics Instruction The ThinkerTools Inquiry Curriculum uses an innovative software tool that allows experimenters to perform physics experiments under a variety of conditions and compare the results with experiments performed with actual objects. The curriculum emphasizes a metacognitive approach to instruction (see Chapters 2, 3, and 4) by using an inquiry cycle that helps students see where they are in the inquiry process, plus processes called reflective assessment in which students reflect on their own and each others’ inquiries. Experiments conducted with typical seventh-, eighth-, and ninth-grade students in urban, public middle schools revealed that the software modeling tools made the difficult subject of physics understandable as well as interesting to a wide range of students. Students not only learned about physics, but also about processes of inquiry. We found that, regardless of their lower grade levels (7–9) and their lower pretest scores, students who had participated in ThinkerTools outperformed high school physics students (grades 11–12) on qualitative problems in which they were asked to apply the basic principles of Newtonian mechanics to real-world situations. In general, this inquiry-oriented, model-based, constructivist approach to science education appears to make science interesting and accessible to a wider range of students than is possible with traditional approaches (White and Fredericksen, 1998:90–91). |

designs, but a simple computer interface cut in half the time it took teachers to provide feedback (see, e.g., Cognition and Technology Group at Vanderbilt, 1997). An interactive Jasper Adventuremaker software program allows students to suggest solutions to a Jasper adventure, then see simulations of the effects of their solutions. The simulations had a clear impact on the quality of the solutions that students generated subsequently (Crews et al., 1997). Opportunities to interact with working scientists, as discussed above, also provide rich experiences for learning from feedback and revision (White and Fredericksen, 1994). The SMART (Special Multimedia Arenas for Refining Thinking) Challenge Series provides multiple technological resources for feedback and revision. SMART has been tested in various contexts, including the Jasper challenge. When its formative assessment resources are added to these curricula, students achieve at higher levels than without them (e.g. Barron et al., 1998; Cognition and Technology Group at Vanderbilt, 1994,

|

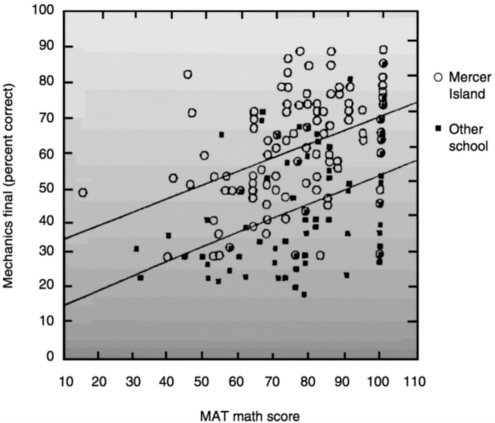

BOX 9.4 A Program for Diagnosing Preconceptions in Physics A computer-based DIAGNOSER program has helped teachers increase student achievement in high school physics (Hunt and Minstrell, 1994). The program assesses students’ beliefs (preconceptions) about various physical phenomena— beliefs that often fit their everyday experiences but are not consistent with physicists’ views of the world (see Chapters 2, 3, 6, and 7). Given particular beliefs, sets of activities are recommended that help students reinterpret phenomena from a physicist’s perspective. Teachers incorporate information from the diagnoser to guide how they teach. Data from experimental and comparison classrooms on students’ understanding of important concepts in physics show strong superiority for those in the experimental groups; see the graph below.  FIGURE 9.1 Mercer Island versus comparable school mechanics vinal and MAT math scores. SOURCE: Hunt and Minstrell (1994). |

1997; Vye et al., 1998). Another way of using technology to support formative assessment is described in Box 9.4.

Classroom communication technologies, such as Classtalk, can promote more active learning in large lecture classes and, if used appropriately, highlight the reasoning processes that students use to solve problems (see Chapter 7). This technology allows an instructor to prepare and display problems that the class works on collaboratively. Students enter answers (individually or as a group) via palm-held input devices, and the technology collects, stores, and displays histograms (bar graphs of how many students preferred each problem solution) of the class responses. This kind of tool can provide useful feedback to students and the teacher on how well the students understand the concepts being covered and whether they can apply them in novel contexts (Mestre et al., 1997).
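The collect-and-display step described above can be illustrated with a minimal sketch. It is not Classtalk's actual software or interface; the function names, the answer choices, and the sample responses are all hypothetical, and the only assumption is that each student submits one answer label per problem.

```python
from collections import Counter


def tally_responses(responses, choices):
    """Count how many students picked each answer choice.

    Choices that nobody selected are kept with a count of zero so the
    histogram always shows every option.
    """
    counts = Counter(responses)
    return {choice: counts.get(choice, 0) for choice in choices}


def render_histogram(tally):
    """Return a text bar chart with one line per answer choice."""
    lines = []
    for choice, count in tally.items():
        lines.append(f"{choice}: {'#' * count} ({count})")
    return "\n".join(lines)


# Hypothetical responses from seven students to one multiple-choice problem.
responses = ["A", "C", "C", "B", "C", "A", "C"]
tally = tally_responses(responses, choices=["A", "B", "C", "D"])
print(render_histogram(tally))
```

A display like this shows only how the class's answers are distributed; the reasoning behind each answer still has to come out in the discussion that follows.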

Like other technologies, however, Classtalk does not guarantee effective learning. The visual histograms are intended to promote two-way communication in large lecture classes: as a springboard for class discussions in which students justify the procedures they used to arrive at their answers, listen critically to the arguments of others, and refute them or offer other reasoning strategies. But the technology could be used in ways that have nothing to do with this goal. If, for example, a teacher used Classtalk merely as an efficient device to take attendance or administer conventional quizzes, it would not enhance two-way communication or make students’ reasoning more visible. With such a use, the opportunity to expose students to varying perspectives on problem solving and the various arguments for different problem solutions would be lost. Thus, effective use of technology involves many teacher decisions and direct forms of teacher involvement.

Peers can serve as excellent sources of feedback. Over the last decade, there have been some very successful and influential demonstrations of how computer networks can support groups of students actively engaged in learning and reflection. Computer-Supported Intentional Learning Environments (CSILE) provide opportunities for students to collaborate on learning activities by working through a communal database that has text and graphics capabilities (Scardamalia et al., 1989; Scardamalia and Bereiter, 1991, 1993; Scardamalia et al., 1994). Within this networked multimedia environment (now distributed as Knowledge Forum), students create “notes” that contain an idea or piece of information about the topic they are studying. These notes are labeled by category, such as question or new learning, so that other students can search them and comment on them; see Box 9.5. With support from the instructor, these processes engage students in dialogues that integrate information and contributions from various sources to produce knowledge. CSILE also includes guidelines for formulating and testing conjectures and prototheories. CSILE has been used in elementary, secondary, and postgraduate classrooms for science, history, and social studies. Students in

|

BOX 9.5 Slaminan Number System

An example of how technology-supported conversations can help students refine each other’s thinking comes from an urban elementary classroom. Students worked in small groups to design different aspects of a hypothetical culture of rain forest dwellers (Means et al., 1995). The group that was charged with developing a number system for the hypothetical culture posted the following entry:

This is the slaminan’s number system. It is a base 10 number system too. It has a pattern to it. The number of lines increase up to five then it goes upside down all the way to 10.

Another student group in the same classroom reviewed this CSILE posting and displayed impressive analytic skills (as well as good social skills) in a response pointing out the need to extend the system:

We all like the number system but we want to know how the number 0 looks like, and you can do more numbers not just 10 like we have right now.

Many students in this classroom speak a language other than English in their homes. CSILE provides opportunities to express their ideas in English and to receive feedback from their peers. |

CSILE classes do better on standardized tests and portfolio entries and show greater depth in their explanations than students in classes without CSILE (see, e.g., Scardamalia and Bereiter, 1993). Furthermore, students at all ability levels participate effectively: in fact, in classrooms using the technology in the most collaborative fashion, CSILE’s positive effects were particularly strong for lower- and middle-ability groups (Bryson and Scardamalia, 1991).

As one of its many uses to support learning, the Internet is increasingly being used as a forum for students to give feedback to each other. In the GLOBE Project (described above), students inspect each other’s data on the project web site and sometimes find readings they believe may be in error. Students use the electronic messaging system to query the schools that report suspicious data about the circumstances under which they made their measurements; for another kind of use, see Box 9.6.

An added advantage of networked technologies for communication is that they help make thinking visible. This core feature of the cognitive apprenticeship model of instruction (Collins, 1990) is exemplified in a broad range of instructional programs and has a technological manifestation, as well (see, e.g., Collins, 1990; Collins and Brown, 1988; Collins et al., 1989). By prompting learners to articulate the steps taken during their thinking processes, the software creates a record of thought that learners can use to reflect on their work and teachers can use to assess student progress. Several projects expressly include software designed to make learners’ thinking visible. In CSILE, for example, as students develop their communal hypermedia database with text and graphics, teachers can use the database as a record of students’ thoughts and electronic conversations over time. Teachers can browse the database to review both their students’ emerging understanding of key concepts and their interaction skills (Means and Olson, 1995b).

The CoVis Project developed a networked hypermedia database, the collaboratory notebook, for a similar purpose. The collaboratory notebook is divided into electronic workspaces, called notebooks, that can be used by students working together on a specific investigation (Edelson et al., 1995). The notebook provides options for making different kinds of pages—questions, conjectures, evidence for, evidence against, plans, steps in plans, information, and commentary. Using the hypermedia system, students can pose a question, then link it to competing conjectures about the questions posed by different students (perhaps from different sites) and to a plan for investigating the question. Images and documents can be electronically “attached” to pages. Using the notebook shortened the time between students’ preparation of their laboratory notes and the receipt of feedback from their teachers (Edelson et al., 1995). Similar functions are provided by SpeakEasy, a software tool used to structure and support dialogues among engineering students and their instructors (Hoadley and Bell, 1996).

Sophisticated tutoring environments that pose problems are also now available and give students feedback on the basis of how experts reason and organize their knowledge in physics, chemistry, algebra, computer programming, history, and economics (see Chapter 2). With this increased understanding of expert reasoning has come an interest in testing theories of expert reasoning by translating them into computer programs and in using computer-based expert systems as part of a larger program to teach novices. Combining an expert model with a student model (the system’s representation of the student’s level of knowledge) and a pedagogical model that drives the system has produced intelligent tutoring systems, which seek to combine the advantages of customized one-on-one tutoring with insights from cognitive research about expert performance, learning processes, and naive reasoning (Lesgold et al., 1990; Merrill et al., 1992).
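The three-part architecture described above (expert model, student model, pedagogical model) can be sketched in a few lines. This is a toy illustration under stated assumptions, not the design of any system cited here: the one-step linear equation domain, the moving-average mastery update, the thresholds, and all names are hypothetical.

```python
from dataclasses import dataclass


def expert_solve(a, b, c):
    """Expert model: solve a*x + b = c for x (assumes a != 0)."""
    return (c - b) / a


@dataclass
class StudentModel:
    """The system's running estimate of the student's mastery, from 0.0 to 1.0."""
    mastery: float = 0.5
    rate: float = 0.2  # assumed update step, chosen only for illustration

    def update(self, correct):
        # Move the estimate a fraction of the way toward 1.0 or 0.0.
        target = 1.0 if correct else 0.0
        self.mastery += self.rate * (target - self.mastery)


def pedagogical_choice(student):
    """Pedagogical model: pick the tutor's next move from the mastery estimate."""
    if student.mastery < 0.4:
        return "show worked example"
    if student.mastery < 0.8:
        return "give hint and similar problem"
    return "advance to harder problem"


def tutor_step(student, problem, answer):
    """Check an answer against the expert model, update the student model,
    and return (was_correct, next_action)."""
    a, b, c = problem
    correct = abs(answer - expert_solve(a, b, c)) < 1e-9
    student.update(correct)
    return correct, pedagogical_choice(student)


# Illustrative use: the student answers x = 4 for 2x + 3 = 11.
student = StudentModel()
print(tutor_step(student, (2, 3, 11), 4.0))
```

Keeping the three models separate means that the domain knowledge, the estimate of the learner, and the teaching policy can each be revised independently, which is one plausible reading of why the text treats them as distinct components.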

A variety of computer-based cognitive tutors have been developed for algebra, geometry, and LISP programming (Anderson et al., 1995). These cognitive tutors have resulted in a complex profile of achievement gains for the students, depending on the nature of the tutor and the way it is inte-

|

BOX 9.6 Monsters, Mondrian, and Me

As part of the Challenge 2000 Multimedia Project, elementary teachers Lucinda Surber, Cathy Chowenhill, and Page McDonald teamed up to design and execute an extended collaboration between fourth-grade classes at two elementary schools. In a unit they called “Monsters, Mondrian, and Me,” students were directed to describe a picture so well in an e-mail message that their counterparts in the other classroom could reproduce it. The project illustrates how telecommunication can both demonstrate the need for clear, precise writing and provide a forum for feedback from peers.

During the Monster phase of the project, students in the two classes worked in pairs first to invent and draw monsters (such as “Voyager 999,” “Fat Belly,” and “Bug Eyes”) and then to compose paragraphs describing the content of their drawings (e.g., “Under his body he has four purple legs with three toes on each one”). Their goal was to provide a complete and clear enough description that students in the other class could reproduce the monster without ever having seen it. The descriptive paragraphs were exchanged through electronic mail, and the matched student pairs made drawings based on their understanding of the descriptions. The final step of this phase involved the exchange of the “second-generation drawings” so that the students who had composed the descriptive paragraphs could reflect on their writing, seeing where ambiguity or incomplete specification led to a different interpretation on the part of their readers.

The students executed the same steps of writing, exchange of paragraphs, drawing, and reflection in the Mondrian stage, this time starting with the art of abstract expressionists such as Mondrian, Klee, and Rothko.

In the Me stage, students studied self-portraits of famous painters and then produced portraits of themselves, which they attempted to describe with enough detail so that their distant partners could produce portraits matching their own. |

grated into the classroom (Anderson et al., 1990, 1995); see Boxes 9.7 and 9.8.

Another example of the tutoring approach is the Sherlock Project, a computer-based environment for teaching electronics troubleshooting to Air Force technicians who work on a complex system involving thousands of parts (e.g., Derry and Lesgold, 1997; Gabrys et al., 1993). A simulation of this complex system was combined with an expert system or coach that offered advice when learners reached impasses in their troubleshooting attempts; and with reflection tools that allowed users to replay their performance and try out possible improvements. In several field tests of techni-

|

By giving students a distant audience for their writing (their partners at the other school), the project made it necessary for students to say everything in writing, without the gestures and oral communication that could supplement written messages within their own classroom. The pictures that their partners created on the basis of their written descriptions gave these young authors tangible feedback regarding the completeness and clarity of their writing. The students’ reflections revealed developing insights into the multiple potential sources for miscommunication:

Maybe you skipped over another part, or maybe it was too hard to understand.

The only thing that made it not exactly perfect was our mistake…. We said, “Each square is down a bit.” What we should have said was, “Each square is all the way inside the one before it,” or something like that.

I think I could have been more clear on the mouth. I should have said that it was closed. I described it [as if it were] open by telling you I had no braces or retainers.

The electronic technologies that students used in this project were quite simple (word processors, e-mail, scanners). The project’s sophistication lies more in its structure, which required students to focus on issues of audience understanding and to make translations across different media (words and pictures), potentially increasing their understanding of the strengths and weaknesses of each. The students’ artwork, descriptive paragraphs, and reflections are available on a project website at http://www.barron.palo-alto.ca.us/hoover/mmm/mmm.html. |

cians as they performed the hardest real-world troubleshooting tasks, 20 to 25 hours of Sherlock training was the equivalent of about 4 years of on-the-job experience. Not surprisingly, Sherlock has been deployed at several U.S. Air Force bases. Two of the crucial properties of Sherlock are modeled on successful informal learning: learners successfully complete every problem they start, with the amount of coaching decreasing as their skill increases; and learners replay and reflect on their performance, highlighting areas where they could improve, much as a football player might review a game film.

|

BOX 9.7 Learning with the Geometry Tutor

When the Geometry Tutor was placed in classes in a large urban high school, students moved through the geometry proofs more quickly than expected by either the teachers or the tutor developers. Average, below-average, and underachieving high-ability students with little confidence in their math skills benefited most from the tutor (Wertheimer, 1990). Students in classes using the tutor showed higher motivation by starting work much more quickly—often coming early to class to get started—and taking more responsibility for their own progress. Teachers started spending more of their time assisting individual students who asked for help and giving greater weight to effort in assigning student grades (Schofield, 1995). |

It is noteworthy that students can use these tutors in groups as well as alone. In many settings, students work together on tutors and discuss issues and possible answers with others in their class.

CONNECTING CLASSROOMS TO COMMUNITY

It is easy to forget that student achievement in school also depends on what happens outside of school. Bringing students and teachers into contact with the broader community can enhance their learning. In the previous chapter, we discussed learning through contacts with the broader community. Universities and businesses, for example, have helped communities upgrade the quality of teaching in schools. Engineers and scientists who work in industry often play a mentoring role with teachers (e.g., University of California-Irvine Science Education Program).

Modern technologies can help make connections between students’ in-school and out-of-school activities. For example, the “transparent school” (Bauch, 1997) uses telephones and answering machines to help parents understand the daily assignments in classrooms. Teachers need only a few minutes per day to dictate assignments into an answering machine. Parents can call at their convenience and retrieve the daily assignments, thus becoming informed of what their children are doing in school. Contrary to some expectations, low-income parents are as likely to call the answering machines as are parents of higher socioeconomic status.

The Internet can also help link parents with their children’s schools. School calendars, assignments, and other types of information can be posted on a school’s Internet site. School sites can also be used to inform the community of what a school is doing and how community members can help. For example, the American Schools Directory (www.asd.com), which has created Internet pages for each of the 106,000 public and private K–12 schools in the country,

|

BOX 9.8 Intelligent Tutoring in High School Algebra

A large-scale experiment evaluated the benefits of introducing an intelligent algebra tutoring system into an urban high school setting (Koedinger et al., 1997). An important feature of the project was a collaborative, client-centered design that coordinated the tutoring system with the teachers’ goals and expertise. The collaboration produced the PUMP (Pittsburgh urban mathematics program) curriculum, which focuses on mathematical analyses of real-world situations, on the use of computational tools, and on making algebra accessible to all students. An intelligent tutor, PAT (for PUMP Algebra Tutor), supported this curriculum. Researchers compared achievement levels of ninth-grade students in the tutored classrooms (experimental group) with achievement in more traditional algebra classrooms. The results demonstrated strong benefits from the use of PUMP and PAT, which is currently used in 70 schools nationwide. |

includes a “Wish List” on which schools post requests for various kinds of help. In addition, the ASD provides free e-mail for every student and teacher in the country.

Several projects are exploring the factors required to create effective electronic communities. For example, we noted above that students can learn more when they are able to interact with working scientists, authors, and other practicing professionals. An early review of six different electronic communities, which included teacher and student networks and a group of university researchers, looked at how successful these communities were in relation to their size and location, how they organized themselves, what opportunities and obligations for response were built into the network, and how they evaluated their work (Riel and Levin, 1990). Across the six groups, three factors were associated with successful network-based communities: an emphasis on group rather than one-to-one communication; well-articulated goals or tasks; and explicit efforts to facilitate group interaction and establish new social norms.

To make the most of the opportunities for conversation and learning available through these kinds of networks, students, teachers, and mentors must be willing to assume new or untraditional roles. For example, a major purpose of the Kids as Global Scientists (KGS) research project (a worldwide cluster of students, scientist mentors, technology experts, and experts in pedagogy) is to identify key components that make these communities successful (Songer, 1993). In the most effective interactions, a social glue develops between partners over time. Initially, the project builds relationships by engaging people across locations in organized dialogues and multimedia introductions; later, the group establishes guidelines and scaffolds activities to help all participants understand their new responsibilities. Students pose questions about weather and other natural phenomena and refine and respond to questions posed by themselves and others. This dialogue-based approach to learning creates a rich intellectual context, with ample opportunities for participants to improve their understanding and become more personally involved in explaining scientific phenomena.

TEACHER LEARNING

The introduction of new technologies to classrooms has offered new insights about the roles of teachers in promoting learning (McDonald and Naso, 1986; Watts, 1985). Technology can give teachers license to experiment and tinker (Means and Olson, 1995a; U.S. Congress, Office of Technology Assessment, 1995). It can stimulate teachers to think about the processes of learning, whether through a fresh study of their own subject or a fresh perspective on students’ learning. It softens the barrier between what students do and what teachers do.

When teachers learn to use a new technology in their classrooms, they model the learning process for students; at the same time, they gain new insights on teaching by watching their students learn. Moreover, the transfer of the teaching role from teacher to student often occurs spontaneously during efforts to use computers in classrooms. Some children develop a profound involvement with some aspect of the technology or the software, spend considerable time on it, and know more than anyone else in the group, including their teachers. Often both teachers and students are novices, and the creation of knowledge is a genuinely cooperative endeavor. Epistemological authority (teachers possessing knowledge and students receiving knowledge) is redefined, which in turn redefines social authority and personal responsibility (Kaput, 1987; Pollak, 1986; Skovsmose, 1985). Cooperation creates a setting in which novices can contribute what they are able and learn from the contributions of those more expert than they. Collaboratively, the group, with its variety of expertise, engagement, and goals, gets the job done (Brown and Campione, 1987:17). This devolution of authority and move toward cooperative participation results directly from, and contributes to, an intense cognitive motivation.

As teachers learn to use technology, their own learning has implications for the ways in which they assist students to learn more generally (McDonald and Naso, 1986):

- They must be partners in innovation; a critical partnership is needed among teachers, administrators, students, parents, community, university, and the computer industry.

- They need time to learn: time to reflect, absorb discoveries, and adapt practices.

- They need collegial advisers rather than supervisors; advising is a partnership.

Internet-based communities of teachers are becoming an increasingly important tool for overcoming teachers’ sense of isolation. They also provide avenues for geographically dispersed teachers who are participating in the same kinds of innovations to exchange information and offer support to each other (see Chapter 8). Examples of these communities include the LabNet Project, which involves over 1,000 physics teachers (Ruopp et al., 1993); Bank Street College’s Mathematics Learning project; the QUILL network for Alaskan teachers of writing (Rubin, 1992); and the HumBio Project, in which teachers are developing biology curricula over the network (Keating, 1997; Keating and Rosenquist, 1998). WEBCSILE, an Internet version of the CSILE program described above, is being used to help create teacher communities.

The World Wide Web provides another venue for teachers to communicate with an audience outside their own institutions. At the University of Illinois, James Levin asks his education graduate students to develop web pages with their evaluations of education resources on the web, along with hot links to those web resources they consider most valuable. Many students not only put up these web pages, but also revise and maintain them after the course is over. Some receive tens of thousands of hits on their web sites each month (Levin et al., 1994; Levin and Waugh, 1998).

While e-mail, listservs, and websites have enabled members of teacher communities to exchange information and to stay in touch, they represent only part of technology’s full potential to support real communities of practice (Schlager and Schank, 1997). Teacher communities of practice need chances for planned interactions, tools for joint review and annotation of education resources, and opportunities for on-line collaborative design activities. In general, teacher communities need environments that generate the social glue that Songer found so important in the Kids as Global Scientists community.

The Teacher Professional Development Institute (TAPPED IN), a multiuser virtual environment, integrates synchronous (“live”) and asynchronous (such as e-mail) communication. Users can store and share documents and interact with virtual objects in an electronic environment patterned after a typical conference center. Teachers can log into TAPPED IN to discuss issues, create and share resources, hold workshops, engage in mentoring, and conduct collaborative inquiries with the help of virtual versions of such familiar tools as books, whiteboards, file cabinets, notepads, and bulletin boards. Teachers can wander among the public “rooms,” exploring the resources in each and engaging in spontaneous live conversations with others exploring the same resources. More than a dozen major teacher professional development organizations have set up facilities within TAPPED IN.

In addition to supporting teachers’ ongoing communication and professional development, technology is used in preservice seminars for teachers. A challenge in providing professional development for new teachers is allowing them adequate time to observe accomplished teachers and to try their own wings in classrooms, where innumerable decisions must be made in the course of the day and opportunities for reflection are few. Prospective teachers generally have limited exposure to classrooms before they begin student teaching, and teacher trainers tend to have limited time to spend in classes with them, observing and critiquing their work. Technology can help overcome these constraints by capturing the complexity of classroom interactions in multiple media. For example, student teachers can replay videos of classroom events to learn to read subtle classroom clues and see important features that escaped them on first viewing.

Databases have been established to assist teachers in a number of subject areas. One is a video archive of mathematics lessons from third- and fifth-grade classes, taught by experts Magdalene Lampert and Deborah Ball (1998). The lessons model inquiry-oriented teaching, with students working to solve problems and reason and engaging in lively discussions about the mathematics underlying their solutions. The videotapes allow student teachers to stop at any point in the action and discuss nuances of teacher performance with their fellow students and instructors. Teachers’ annotations and an archive of student work associated with the lessons further enrich the resource.

A multimedia database of video clips of expert teachers using a range of instructional and classroom management strategies has been established by Indiana University and the North Central Regional Educational Laboratory (Duffy, 1997). Each lesson comes with such materials as the teacher’s lesson plan, commentary by outside experts, and related research articles. Another technological resource is a set of video-based cases (on videodisc and CD-ROM) for teaching reading that shows prospective teachers a variety of approaches to reading instruction. The program also includes information about the school and community setting, the philosophy of the school principals, a glimpse of what the teachers did before school started, and records of the students’ work as they progress throughout the year (e.g., Kinzer et al., 1992; Risko and Kinzer, 1998).

A different approach is shown in interactive multimedia databases illustrating mathematics and science teaching, developed at Vanderbilt University. Two of the segments, for example, provide edited videotapes of the same teacher teaching two second-grade science lessons. In one lesson, the teacher and students discuss concepts of insulation presented in a textbook chapter; in the second lesson, the teacher leads the students in a hands-on investigation of the amount of insulation provided by cups made of different materials. On the surface, the teacher appears enthusiastic and articulate in both lessons and the students are well behaved. Repeated viewings of the tapes, however, reveal that the students’ ability to repeat the correct words in the first lesson may mask some enduring misconceptions. The misconceptions are much more obvious in the context of the second lesson (Barron and Goldman, 1994).

In yet another use of technology to support preservice teacher preparation, education majors at the University of Illinois who were enrolled in lower-division science courses such as biology were electronically linked to K–12 classrooms to answer student questions about the subject area. The undergraduates helped the K–12 students explore the science. More important, the education majors had a window into the kinds of questions that elementary or high school students ask in the subject domain, thus motivating them to get more out of their university science courses (Levin et al., 1994).

CONCLUSION

Technology has become an important instrument in education. Computer-based technologies hold great promise both for increasing access to knowledge and as a means of promoting learning. The public imagination has been captured by the capacity of information technologies to centralize and organize large bodies of knowledge; people are excited by the prospect of information networks, such as the Internet, linking students around the globe into communities of learners.

What has not yet been fully understood is that computer-based technologies can be powerful pedagogical tools—not just rich sources of information, but also extensions of human capabilities and contexts for social interactions supporting learning. The process of using technology to improve learning is never solely a technical matter, concerned only with properties of educational hardware and software. Like a textbook or any other cultural object, technology resources for education—whether a software science simulation or an interactive reading exercise—function in a social environment, mediated by learning conversations with peers and teachers.

Just as important as questions about learning and the developmental appropriateness of the products for children are issues that affect those who will use them as tools to promote learning, namely, teachers. In thinking about technology, the framework of creating learning environments that are learner, knowledge, assessment, and community centered is also useful. There are many ways that technology can be used to help create such environments, both for teachers and for the students whom they teach. Many issues arise in considering how to educate teachers to use new technologies effectively. What do they need to know about learning processes? About the technology? What kinds of training are most effective for helping teachers use high-quality instructional programs? What is the best way to use technology to facilitate teacher learning?

Good educational software and teacher-support tools, developed with a full understanding of principles of learning, have not yet become the norm. Software developers are generally driven more by the game and play market than by the learning potential of their products. The software publishing industry, learning experts, and education policy planners, in partnership, need to take on the challenge of exploiting the promise of computer-based technologies for improving learning. Much remains to be learned about using technology’s potential: to make this happen, learning research will need to become the constant companion of software development.